1. Introduction

Modern machinery and equipment are widely used in industrial production. Their structures are sophisticated and complex, and they usually operate in high-intensity working environments. Rotating machinery plays an essential role in modern mechanical equipment, yet it is vulnerable to damage, which significantly affects the stability of the entire system. Fault diagnosis of rotating machinery is therefore vital in modern industry. To obtain better diagnosis results, it is critical to extract significant features. Traditional data-driven fault diagnosis methods extract features manually from raw signals, namely handcrafted features [1,2,3]. These handcrafted features can be generated from the time domain, frequency domain, time-frequency domain or other signal processing methods, and are classified by pattern recognition algorithms such as Support Vector Machine (SVM) [4,5], k-Nearest Neighbors (k-NN) [6], Decision Tree (DT) [7,8] and so on. However, handcrafted features require considerable experience and professional knowledge, and different problems may require different feature extraction methods. Besides, selecting among the many alternative features is also tricky and time-consuming.

In recent years, deep learning has been applied in fault diagnosis [9,10,11]. Compared with traditional machine learning, it has a powerful ability to learn features from large amounts of data [12]. It can automatically mine useful features from signals, and regularization terms can be added for feature selection. Besides, deep learning can achieve end-to-end learning that combines feature extraction and classification. In traditional methods, the feature extractor and the classifier are decoupled and independent of each other, whereas in deep learning they are trained jointly, so the extracted features are specific to the diagnostic task [13].

While deep learning has achieved good performance in fault diagnosis, two problems need to be solved: (a) Existing deep learning models require a lot of labeled data. However, sensors of industrial devices produce a lot of unlabeled data in a short time, and labeling data is very time-consuming and labor-intensive [14]. (b) Operating conditions of actual industrial equipment often change, which results in different distributions of the collected datasets [15]. A model trained on one specific dataset will generalize poorly to another dataset with a different distribution.

To solve the above problems, transfer learning, a branch of machine learning, has been employed in fault diagnosis [

16]. In transfer learning, the domain has a lot of labeled data and knowledge is called the

source domain, and the

target domain is the object that we want to transfer knowledge to [

17,

18]. Based on whether the source domain dataset has labels, transfer learning is divided into three categories: supervised transfer learning, semi-supervised transfer learning and unsupervised transfer learning [

17]. In this paper, we focus on unsupervised transfer learning. a widely used method to solve unsupervised transfer learning is

domain adaptation, which is to learn common feature expressions between two domains to achieve feature adaptation [

19,

20]. Domain adaptation has been proven effective in fault diagnosis and has become one of the research hot spots in fault diagnosis [

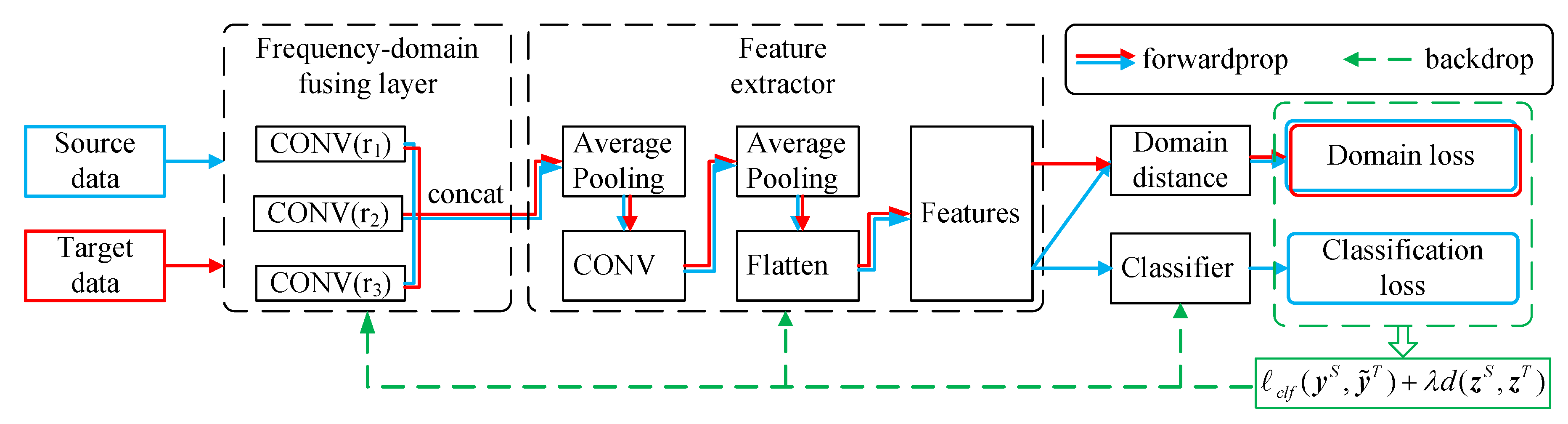

16]. However, exciting domain adaptation methods for fault diagnosis extract features on a single scale, and do not consider network design from the perspective of frequency-domain. In this paper, amplitude-frequency characteristics (AFC) curve is utilized to describe the frequency domain characteristics of convolution kernels for the first time. Inspired by the discovery that convolution kernels of different scales filter signals of different frequency bands, we propose a unified CNN architecture to improve the effect of domain adaptation for fault diagnosis, named Frequency-domain Fusing CNN (FFCNN). Since a large kernel will increase the number of the networks’ parameters, we use dilated convolution [

21,

22,

23] to expand the receptive field of convolution kernel without increasing the number of parameters. FFCNN concatenates several convolution kernels with different dilation rates in the first layer, which will extract features at different scales of the original signals. Then these features are fused for domain adaptation.

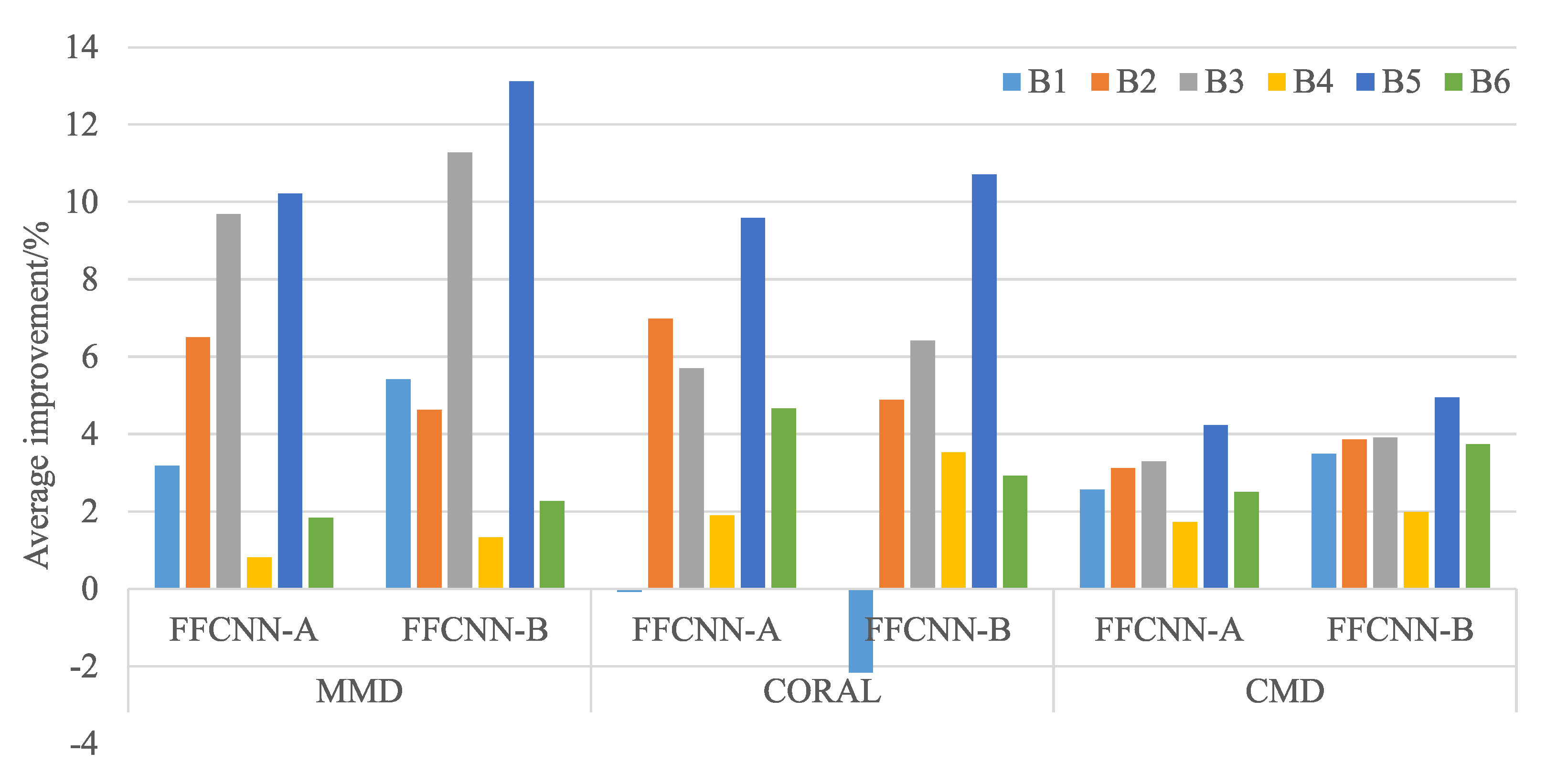

While some papers have proposed similar network architectures of multi-scale convolution [24,25,26,27], our approach differs from theirs in the following respects: (a) Most existing papers focus on general classification problems, but we have verified the effectiveness of the multi-scale structure in domain adaptation; (b) Most methods do not clarify the physical meaning of multi-scale convolution, but our method is driven by the frequency-domain characteristics of convolution kernels, which have a clear physical meaning. Compared with previous domain adaptation methods for fault diagnosis, our proposed method is unified and suitable for different domain adaptation losses. In consequence, the contributions of this paper are summarized as follows:

We design the network architecture for fault diagnosis from the perspective of frequency-domain characteristics of convolution kernels. The motivation for network design has a clear physical meaning.

For the first time, we use the amplitude-frequency characteristic curve to describe the frequency domain characteristic of the convolution kernels. This provides a new idea for analyzing the physical meaning of the convolution kernels.

The proposed FFCNN is suitable for various domain adaptation loss functions, and can significantly improve the performance of domain adaptation for fault diagnosis without increasing the complexity of the networks.

Dilated convolution is used in domain adaptation and fault diagnosis. Dilated convolution can enlarge the receptive field without increasing the number of parameters.

The rest of this paper is organized as follows. In Section 2, related work on deep learning methods and domain adaptation methods is introduced. Section 3 introduces some background knowledge, including domain adaptation, CNN, and dilated convolution. Section 4 gives the motivation of our proposed method. Section 5 details the proposed FFCNN and the training process. Section 6 studies two cases and provides in-depth analysis from different perspectives. Some usage suggestions, existing problems and future research directions are given in Section 7. Finally, the conclusions are drawn in Section 8. The symbols used in this paper are listed in Abbreviations.

2. Related Work

Deep learning for fault diagnosis. A variety of deep learning methods have been successfully applied in fault diagnosis in recent years. Jia et al. [28] proposed a Local Connection Network (LCN) constructed by a normalized sparse Autoencoder (NSAE), named NSAE-LCN. This method overcomes two shortcomings of traditional methods: (a) they may learn similar features in feature extraction; (b) the learned features are shift-variant, which leads to the misclassification of fault types. Yu et al. [29] proposed component-selective Stacked Denoising Autoencoders (SDAE) to extract effective fault features from vibration signals. Correlation learning is then used to fine-tune the SDAE to construct component classifiers. Finally, a selective ensemble is built from these SDAEs for gearbox fault diagnosis. Besides autoencoders, CNN is also a widely used deep learning method. Jing et al. [30] developed a 1-D CNN to extract features directly from frequency data of vibration signals. The results showed that the proposed CNN method can extract more effective features than manual feature extraction. Huang et al. [27] developed an improved CNN that uses a new layer before the convolutional layer to construct new signals with more distinguishable information. The new signals are obtained by concatenating the signals convolved by kernels of different lengths. Generative adversarial networks (GAN) and Capsule Networks (CN) are among the latest research results of deep learning. Han et al. [31] used adversarial learning as a regularization in CNN. The adversarial learning framework can make the feature representation robust, boost the generalization ability of the trained model, and avoid overfitting even with a small amount of labeled data. Chen et al. [32] proposed a novel method called deep capsule network with stochastic delta rule (DCN-SDR). The effective features are extracted from raw temporal signals, and the capsule layers preserve the multi-dimensional features to improve the representation capacity of the model.

Domain adaptation for fault diagnosis. Domain adaptation methods can use unlabeled data for transfer learning. In the work of Li et al. [33], the multi-kernel maximum mean discrepancies (MMD) are minimized to adapt the learned features in multiple layers between two domains. This method can learn domain-invariant features and significantly improve the performance of cross-domain testing. Han et al. [34] proposed an intelligent domain adaptation framework for fault diagnosis, the deep transfer network (DTN). DTN extends marginal distribution adaptation to joint distribution adaptation, guaranteeing a more accurate distribution matching. Wang et al. [35] applied adversarial learning to domain adaptation and proposed Domain-Adversarial Neural Networks (DANN). In addition, they offered a unified experimental protocol for a fair comparison between domain adaptation methods for fault diagnosis. Guo et al. [36] proposed an intelligent method named deep convolutional transfer learning network (DCTLN), which consists of condition recognition and domain adaptation. The condition recognition module is a 1-D CNN that learns features and recognizes the health conditions of machines. The domain adaptation module maximizes domain recognition errors and minimizes the probability distribution distance to help the 1-D CNN learn domain-invariant features. Li et al. [37] proposed a weakly supervised transfer learning method with domain adversarial training. This method aims to improve the diagnostic performance on the target domain by transferring knowledge from multiple different but related source domains.

3. Background

3.1. Transfer Learning and Domain Adaptation

We consider a deep learning classification task $\mathcal{T}$, where $X$ is the dataset sampled from input space $\mathcal{X}$ and $Y$ is the labels of the dataset from label space $\mathcal{Y}$. These elements form a specific domain $\mathcal{D}$. We need to learn a feature extractor $G_f: X \rightarrow Z$ and a classifier $G_y: Z \rightarrow Y$, where Z is the learned feature representation. Given two domains with different distributions, named source domain $\mathcal{D}_S$ and target domain $\mathcal{D}_T$, transfer learning aims to improve the performance on the target domain using the knowledge of the source domain, where $\mathcal{D}_S \neq \mathcal{D}_T$ or $\mathcal{T}_S \neq \mathcal{T}_T$.

From the perspective of input spaces and label spaces, transfer learning can be divided into the following two types:

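Homogeneous transfer learning. The input spaces of the source domain and target domain are similar and the label spaces are the same, expressed as $\mathcal{X}_S \cap \mathcal{X}_T \neq \emptyset$ and $\mathcal{Y}_S = \mathcal{Y}_T$.

Heterogeneous transfer learning. Both the input spaces and the label spaces may be different, expressed as $\mathcal{X}_S \cap \mathcal{X}_T = \emptyset$ or $\mathcal{Y}_S \neq \mathcal{Y}_T$.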

Besides, according to whether the target domain contains labels, transfer learning can also be divided into the following three types:

Supervised transfer learning. All data in the target domain have labels.

Semi-supervised transfer learning. Only part of the data in the target domain have labels.

Unsupervised transfer learning. All data in the target domain have no labels.

Most of the research in recent years has focused on unsupervised homogeneous transfer learning [38], which is also the direction of our work. Domain adaptation is a common method to solve unsupervised homogeneous transfer learning. Given source domain $\mathcal{D}_S$ and target domain $\mathcal{D}_T$, a labeled source dataset $(X_S, Y_S)$ is sampled from $\mathcal{D}_S$, and an unlabeled target dataset $X_T$ is sampled from $\mathcal{D}_T$. A domain adaptation problem aims to train a common feature extractor $G_f$ over $X_S$ and $X_T$, and a classifier $G_y$ learned from $(X_S, Y_S)$ with a low target risk [39]:

$$R_{\mathcal{D}_T}(G_y \circ G_f) = \Pr_{(x, y) \sim \mathcal{D}_T}\big[G_y(G_f(x)) \neq y\big]$$

To adapt the feature spaces of the source domain and target domain, a specific criterion is chosen for measuring the discrepancy between the learned features $Z_S = G_f(X_S)$ and $Z_T = G_f(X_T)$, which is regarded as a loss function.
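The discrepancy criterion is left abstract at this point. As one illustration, the maximum mean discrepancy (MMD) mentioned in Section 2 can be estimated from two batches of features. The following is a minimal sketch, not the implementation used in this paper: the single Gaussian kernel, the `bandwidth` value, the function names, and the toy feature vectors are all assumptions made for illustration.

```python
import math

def gaussian_kernel(u, v, bandwidth=1.0):
    """RBF kernel between two feature vectors given as lists of floats."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(u, v))
    return math.exp(-sq_dist / (2.0 * bandwidth ** 2))

def mmd_squared(source_feats, target_feats, bandwidth=1.0):
    """Biased estimate of the squared MMD between two feature sets."""
    def mean_kernel(xs, ys):
        total = sum(gaussian_kernel(x, y, bandwidth) for x in xs for y in ys)
        return total / (len(xs) * len(ys))
    return (mean_kernel(source_feats, source_feats)
            + mean_kernel(target_feats, target_feats)
            - 2.0 * mean_kernel(source_feats, target_feats))

# Hypothetical 2-D features: one target batch close to the source, one shifted.
z_source = [[0.0, 0.1], [0.2, -0.1], [0.1, 0.0]]
z_target_near = [[0.1, 0.1], [0.0, -0.1], [0.2, 0.0]]
z_target_far = [[2.0, 2.1], [2.2, 1.9], [2.1, 2.0]]

print("near:", mmd_squared(z_source, z_target_near))
print("far: ", mmd_squared(z_source, z_target_far))
```

In a domain adaptation setting, a value of this kind would be added to the classification loss so that the feature extractor is pushed toward features whose source and target distributions are close.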

3.2. Convolutional Neural Network

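In this paper, a one-dimensional convolutional neural network is built to extract features and classify fault types. A typical CNN consists of convolution layers, pooling layers and a fully-connected layer. Let $x^{l-1} \in \mathbb{R}^{N \times M}$ be the output of the $(l-1)$-th layer containing source domain data and target domain data, where N is the number of channels and M is the dimension of the feature maps. The kernel of the $l$-th convolution layer is $k^{l} \in \mathbb{R}^{C \times N \times H}$ and the bias is $b^{l} \in \mathbb{R}^{C}$, where C is the number of channels in the output feature maps and H is the kernel size. The output of the $l$-th layer is then obtained as follows [13]:

$$x^{l}_{c}(j) = \sigma\left(\sum_{n=1}^{N} \left(k^{l}_{c,n} * x^{l-1}_{n}\right)(j) + b^{l}_{c}\right), \qquad \left(k^{l}_{c,n} * x^{l-1}_{n}\right)(j) = \sum_{h=1}^{H} k^{l}_{c,n}(h)\, x^{l-1}_{n}\big((j-1)s + h - p\big) \tag{1}$$

where $\sigma$ is the activation function, * is the convolution operation, s is the stride step, and p is the padding size used to keep the input and output dimensions consistent (entries of $x^{l-1}_{n}$ with indices outside $1, \dots, M$ are taken as zero). After the convolution layer, a down-sampling layer (e.g., max pooling) is connected to reduce the number of parameters and avoid overfitting [13]:

$$\hat{x}^{l}_{c}(j) = \max_{1 \le i \le L} x^{l}_{c}\big((j-1)s + i\big)$$

where s is the pooling step, and L is the pooling size. The convolution layer and pooling layer are repeated several times to deepen the network. Then the feature maps are flattened into one dimension and connected to a fully-connected layer. Finally, the softmax layer outputs the predicted classification probability:

$$\hat{y}_{i,k} = \frac{\exp(z_{i,k})}{\sum_{k'=1}^{K} \exp(z_{i,k'})}$$

where $z_{i,k}$ is the output of the fully-connected layer for class k of the $i$-th sample and K is the number of classes. The classification loss used to measure the discrepancy between predictions and labels can be expressed by cross-entropy:

$$\mathcal{L}_{c} = -\frac{1}{n}\sum_{i=1}^{n}\sum_{k=1}^{K} \mathbb{1}[y_i = k]\, \log \hat{y}_{i,k}$$

where $y_i$ is the real label of the $i$-th sample and n is the number of labeled samples. The objective of the classification task is to optimize the loss function to reduce the classification risk.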
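The softmax output and the cross-entropy loss described above can be sketched in a few lines. This is an illustrative sketch only: the function names, the integer label encoding, and the toy scores are assumptions, and subtracting the maximum score before exponentiation is a common numerical-stability choice rather than something the text specifies.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of class scores."""
    shift = max(logits)
    exps = [math.exp(v - shift) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(batch_logits, labels):
    """Mean cross-entropy for integer class labels (0-based class indices)."""
    loss = 0.0
    for logits, label in zip(batch_logits, labels):
        probs = softmax(logits)
        loss -= math.log(probs[label])
    return loss / len(labels)

# Hypothetical scores of the fully-connected layer for two samples, three classes.
scores = [[2.0, 0.5, -1.0], [0.1, 0.2, 3.0]]
true_labels = [0, 2]

print(softmax(scores[0]))
print(cross_entropy(scores, true_labels))
```

The loss shrinks as the probability assigned to the true class approaches 1, which is what minimizing the classification risk amounts to here.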

3.3. Dilated Convolution

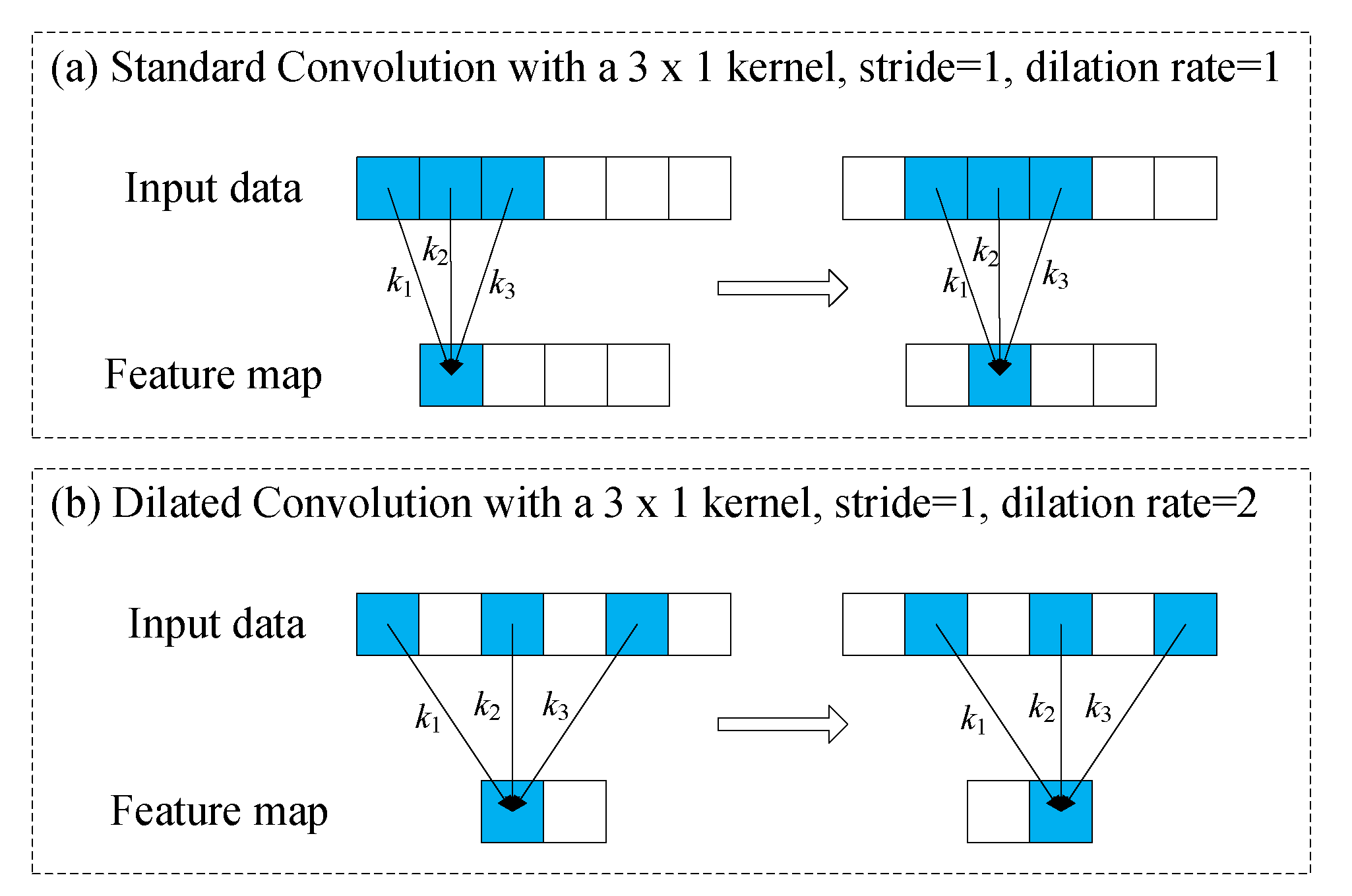

To explain dilated convolution, we compare it with a standard convolution as shown in Figure 1. We assume that the input data $x = [x_1, x_2, \dots, x_6]$ is six-dimensional, the kernel is $k = [k_1, \dots, k_H]$, and the stride is 1. According to Equation (1), the output is $y = [y_1, y_2, \dots]$ in Figure 1a, where

$$y_j = \sum_{h=1}^{H} k_h\, x_{j+h-1}$$

In the standard convolution, adjacent elements of the input data are multiplied by the kernel and summed, and the operation is repeated by sliding s strides to the end of the input data. The dimension of the output is $\left\lfloor (M + 2p - H)/s \right\rfloor + 1$.

In dilated convolution, we denote by r the dilation rate. Unlike standard convolution, the elements multiplied and added with the kernel are separated by $r - 1$ elements in dilated convolution. In Figure 1b, the dilation rate is 2, and the output becomes $y' = [y'_1, y'_2, \dots]$ [21], where

$$y'_j = \sum_{h=1}^{H} k_h\, x_{j+2(h-1)}$$

Dilated convolution is equivalent to expanding the kernel size, that is, expanding the receptive field, and the equivalent kernel size is [40]:

$$H' = H + (H - 1)(r - 1)$$

So the dimension of the output $y'$ becomes:

$$\left\lfloor \frac{M + 2p - H - (H - 1)(r - 1)}{s} \right\rfloor + 1$$

The standard convolution is the dilated convolution of $r = 1$.
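The comparison between standard and dilated convolution can be checked numerically. The sketch below is a plain-Python illustration under stated assumptions: the function name `dilated_conv1d`, the kernel of size 3, and the numeric values are hypothetical, and the bias and activation of a real layer are omitted.

```python
def dilated_conv1d(x, kernel, dilation=1, stride=1, padding=0):
    """1-D convolution as used in CNN layers (no kernel flip, no bias)."""
    padded = [0.0] * padding + list(x) + [0.0] * padding
    span = dilation * (len(kernel) - 1) + 1        # equivalent kernel size
    out_len = (len(padded) - span) // stride + 1   # output dimension
    out = []
    for j in range(out_len):
        start = j * stride
        # Kernel taps are applied to inputs that are `dilation` positions apart.
        out.append(sum(k * padded[start + h * dilation]
                       for h, k in enumerate(kernel)))
    return out

x = [1, 2, 3, 4, 5, 6]   # six-dimensional input
k = [1, 2, 3]            # assumed kernel of size 3

print(dilated_conv1d(x, k, dilation=1))  # [14, 20, 26, 32]
print(dilated_conv1d(x, k, dilation=2))  # [22, 28]
```

With dilation rate 2 the same three weights cover five input positions, so the output shrinks from four elements to two while the number of parameters is unchanged.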

4. Motivation

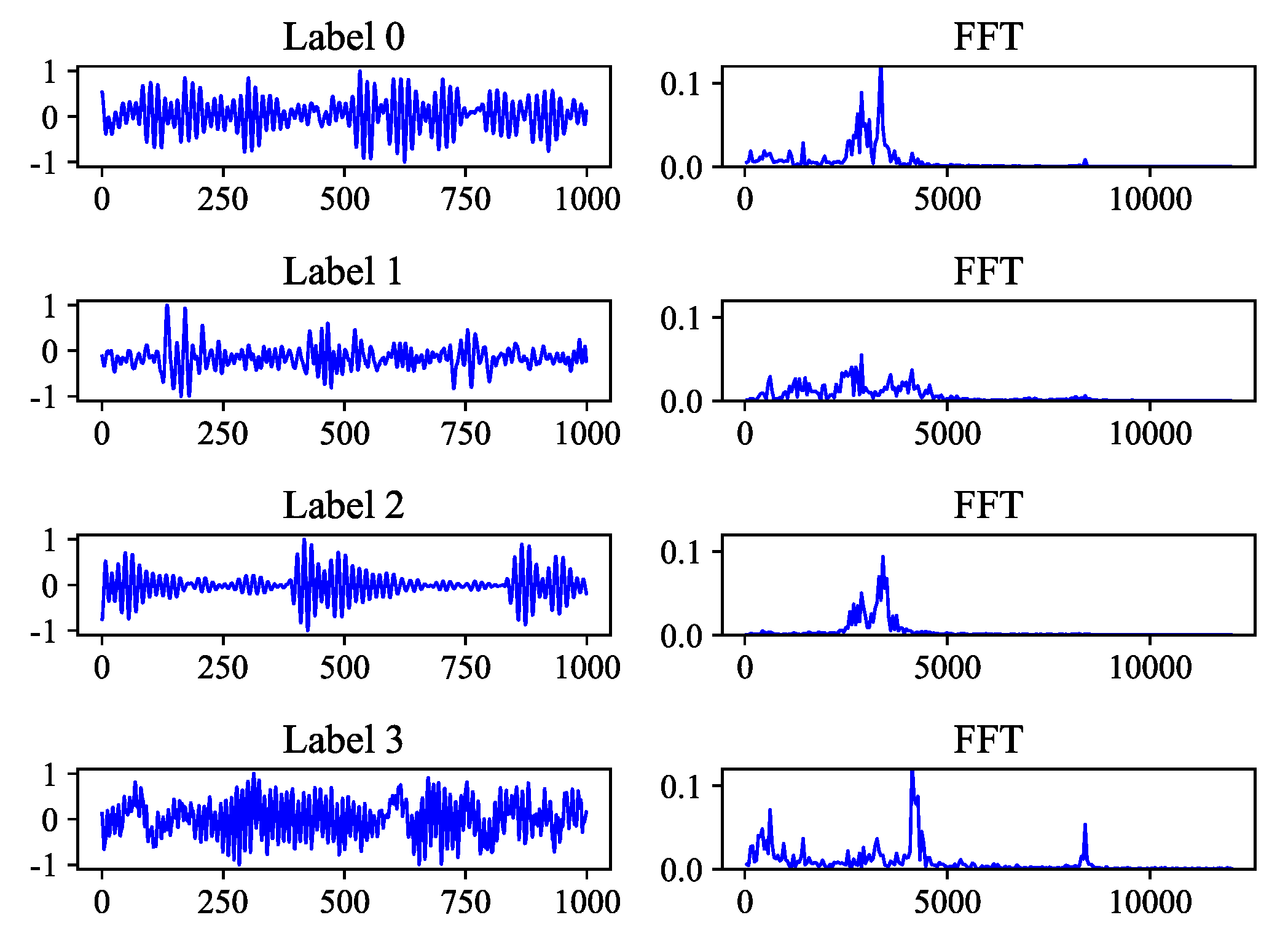

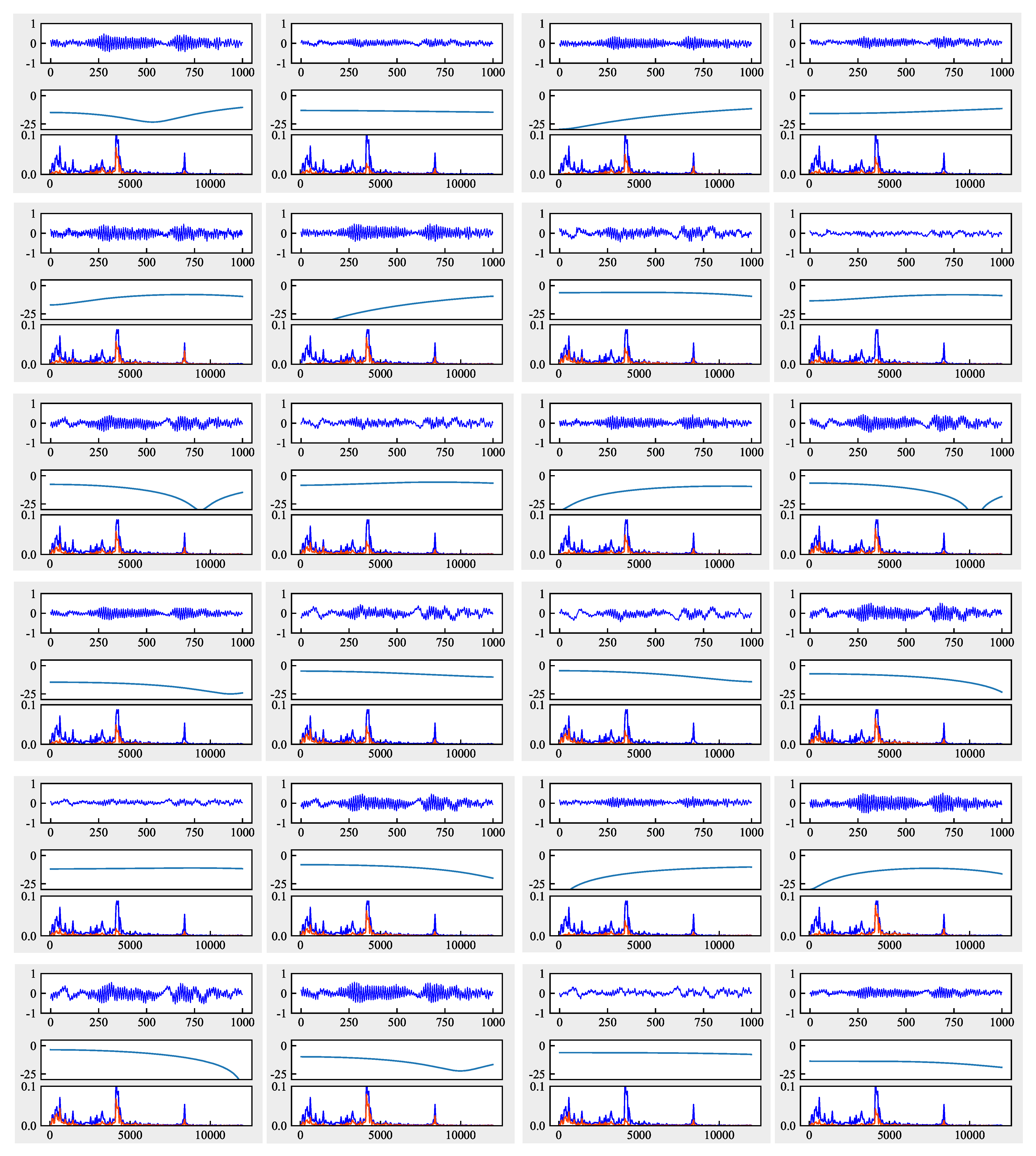

The vibration signal is a time-domain signal, and most deep learning methods are designed from the perspective of the time domain. But a vibration signal can be decomposed into a series of sine waves with different frequencies, phases, and amplitudes, which form the frequency-domain representation of the signal. The vibration modes of different fault types are different, and so are their FFT spectrograms, as shown in Figure 2. Signals of different fault types have different dominant frequency bands, which means that useful information is contained in different frequency bands. Traditional methods usually use signal processing techniques to extract features in the time domain and frequency domain. The commonly used CNN can automatically extract features from the original signals and learn related fault modes based on the labeled data. But what exactly does the learned convolution kernel mean? Here we can regard the first layer of convolution kernels as a preprocessing of the original signals. To observe the frequency-domain characteristics of the convolution kernels, we can draw the amplitude-frequency characteristics (AFC) curve of the kernels. Next, the principle of AFC is explained.

Let the input signal be $x(t)$, let the output signal after a convolutional kernel be $y(t)$, and regard the convolution operation as a function $y = g(x)$. To get the AFC curve of $g$, we take a series of sinusoidal signals $x_i(t)$ with different frequencies $f_i$. For each signal, the length is $T$:

$$x_i(t) = A_{x_i} \sin(2\pi f_i t), \qquad 0 \le t \le T$$

Then a series of corresponding outputs $y_i(t) = g(x_i(t))$ is obtained. The amplitude ratio of the output signal to the input signal is calculated, and 20 times its base-10 logarithm is taken:

$$A(f_i) = 20 \log_{10} \frac{A_{y_i}}{A_{x_i}}$$

where $A_{y_i}$ is the amplitude of the output signal and $A_{x_i}$ is the amplitude of the input signal. So we get a set of pairs $\{(f_i, A(f_i))\}$. With $f_i$ from low to high as the horizontal axis and $A(f_i)$ as the vertical axis, we get the AFC curve. The AFC curve shows the ability of a convolution kernel to suppress signals in various frequency bands. In general, the amplitude of a signal that passes through the filter decreases, so $A(f_i)$ is negative. If the value $A(f_i)$ is very small, the filter suppresses the signal $x_i$ with frequency $f_i$. Otherwise, the filter does not suppress the signal $x_i$.
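The AFC procedure above can be reproduced for any fixed kernel. The following sketch is an illustration under stated assumptions rather than the code behind the figures in this paper: the sampling rate, the signal length, the peak-value amplitude estimate, and the moving-average kernel are all hypothetical choices. Test frequencies are assumed to lie strictly between 0 and half the sampling rate.

```python
import math

def conv_valid(signal, kernel):
    """Slide the kernel over the signal without padding (stride 1)."""
    h = len(kernel)
    return [sum(kernel[i] * signal[j + i] for i in range(h))
            for j in range(len(signal) - h + 1)]

def afc_curve(kernel, freqs_hz, sample_rate_hz=1000.0, length=1000):
    """Gain in dB at each test frequency: 20 * log10(A_out / A_in)."""
    gains_db = []
    for f in freqs_hz:
        x = [math.sin(2.0 * math.pi * f * t / sample_rate_hz)
             for t in range(length)]
        y = conv_valid(x, kernel)
        a_in = max(abs(v) for v in x)    # amplitude estimated by peak value
        a_out = max(abs(v) for v in y)
        # Floor the output amplitude so a fully suppressed tone stays finite.
        gains_db.append(20.0 * math.log10(max(a_out, 1e-12) / a_in))
    return gains_db

# A 4-tap moving average stands in for a learned kernel (assumption).
kernel = [0.25, 0.25, 0.25, 0.25]
freqs = [10.0, 100.0, 250.0, 400.0]
for f, g in zip(freqs, afc_curve(kernel, freqs)):
    print(f"{f:6.1f} Hz  {g:9.2f} dB")
```

For this kernel the gain is close to 0 dB at low frequencies and drops sharply at 250 Hz, where four consecutive samples of the sinusoid cancel, which is the kind of suppression band the AFC curve is meant to reveal.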

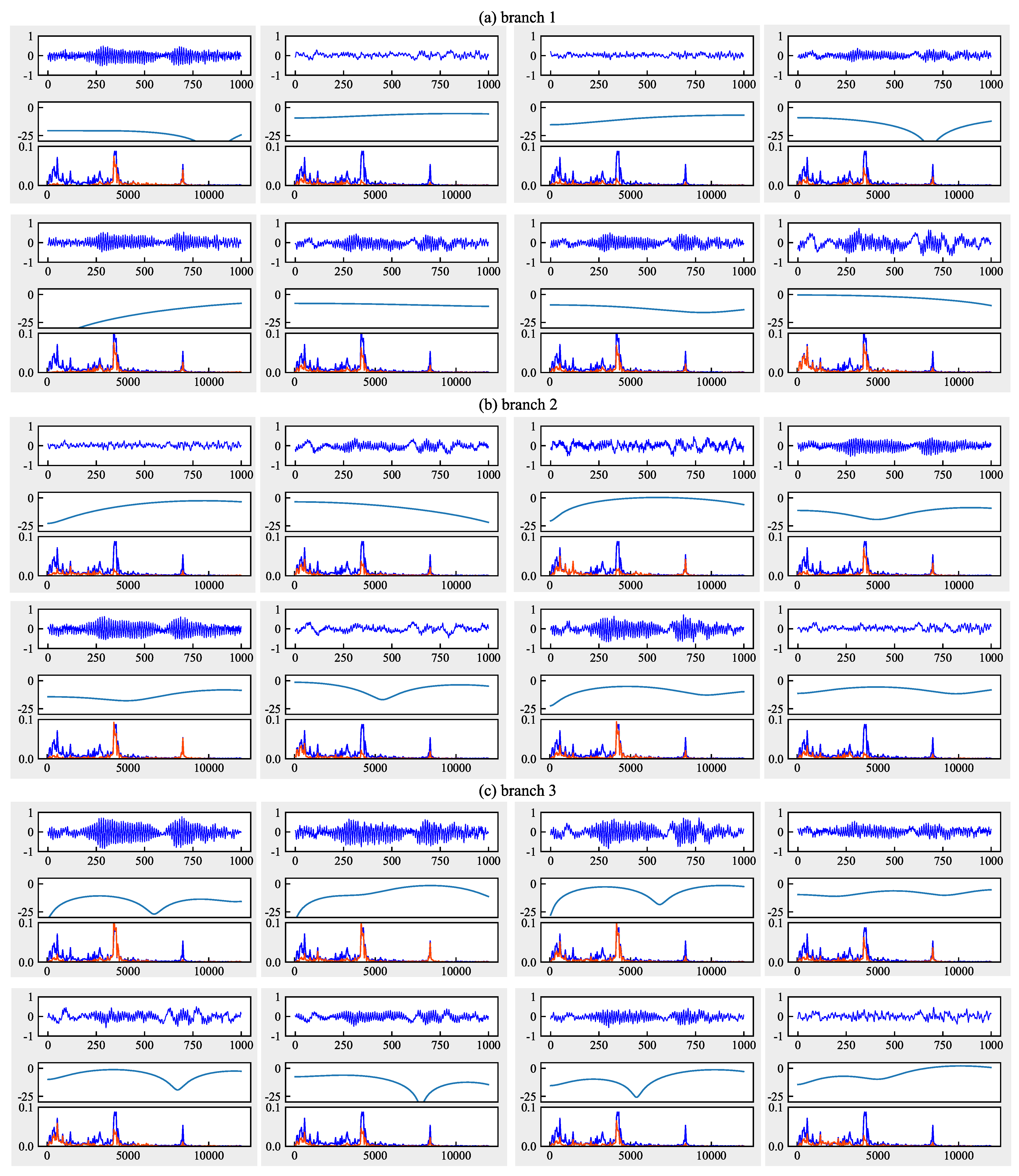

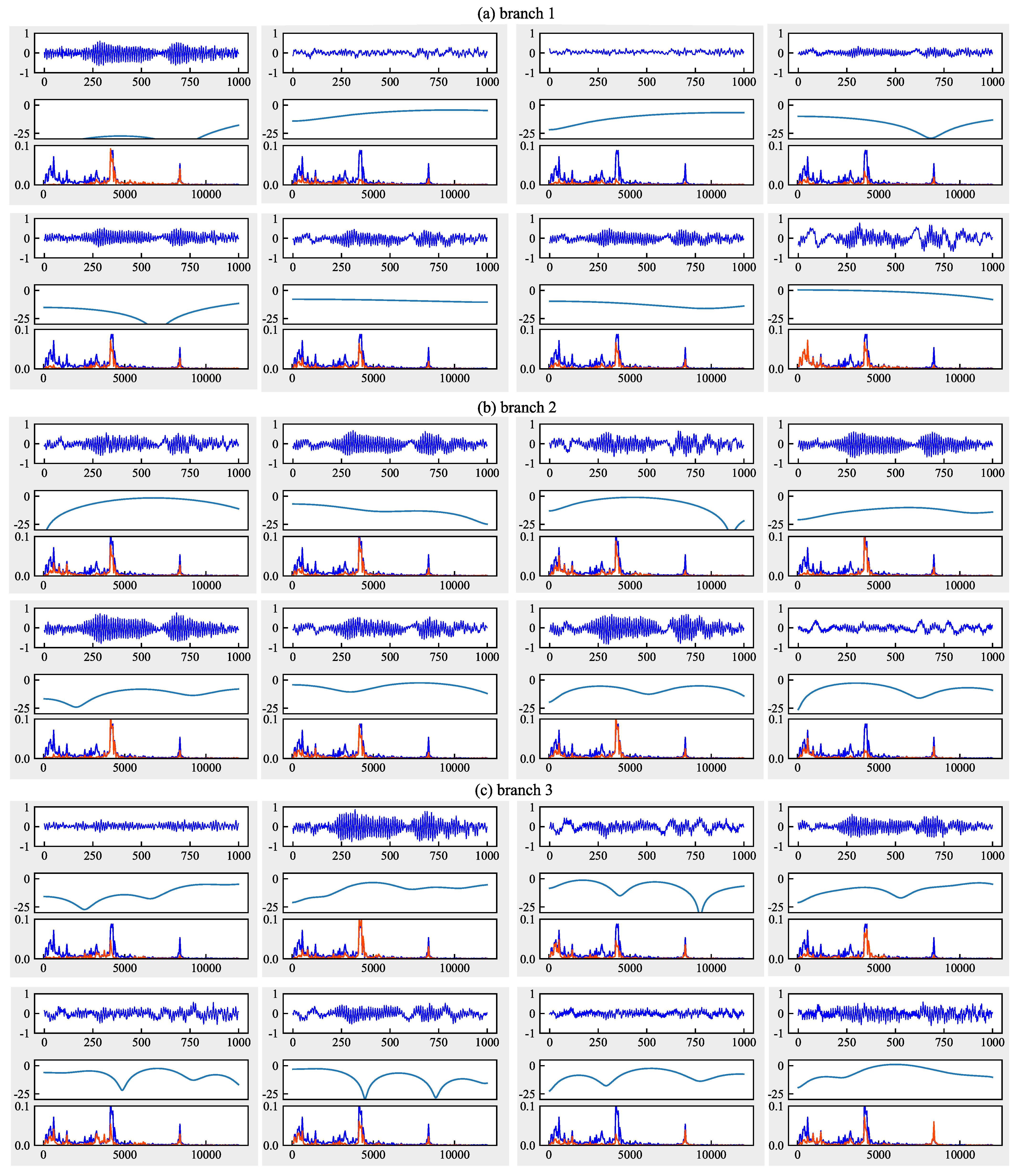

To explore the meaning of the convolution kernel from a frequency domain perspective, we trained four CNN with different kernel sizes (kernel size is 15, dilation rates are 1, 2, 3, and 5). The output of signal after the first convolution layer, AFC curve of one of the convolution kernels and FFT spectrogram of output are drawn in

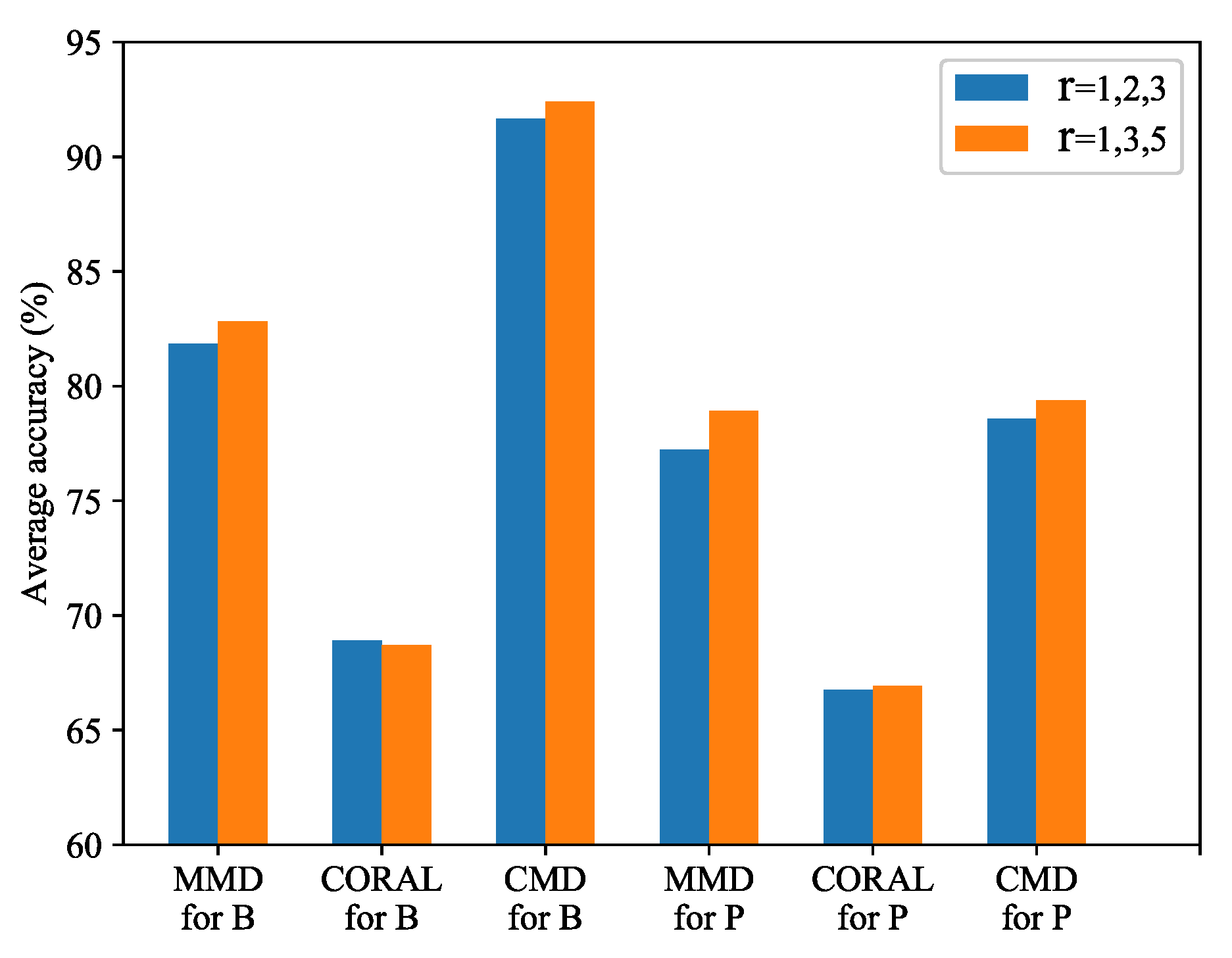

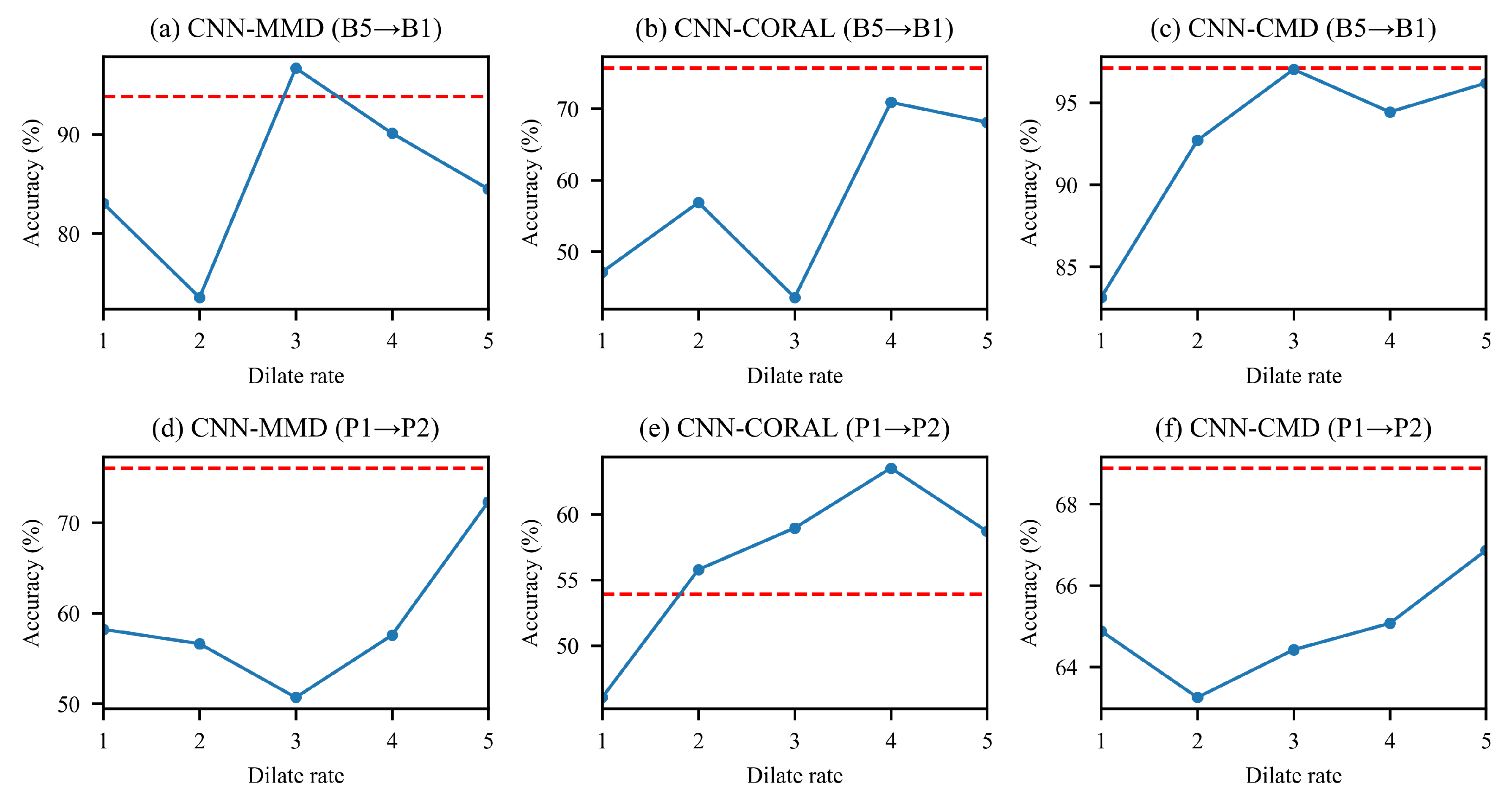

Figure 3. As we can see that the convolution kernels can be regarded as a series of filters, which can filter out signals of different frequency bands. Observing these AFC curves, we can get the following points:

The convolution kernels can be regarded as a series of filters, which can suppress signals in certain frequency bands.

Different dilation rates have different AFC curves. Dilated convolution kernels have multiple suppression bands, and kernels with higher dilation rates have more suppression bands.

The above findings motivate us to design the network architecture from the perspective of the frequency domain. We replace the first layer of the CNN with a fusion of convolution kernels at multiple scales, so that the input signal is preprocessed in multiple frequency bands before entering the next stage of feature extraction. Compared with a single-scale CNN, the improved CNN can extract richer frequency-domain information, which improves its feature extraction ability.
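The idea of a multi-scale first layer can be sketched as several dilated convolution branches applied to the same input and stacked as channels. The code below is only a toy illustration: the function names, the fixed difference kernel, the zero padding that keeps every branch at the input length, and the dilation rates 1, 2 and 3 are assumptions, whereas in a trained network the kernels are learned.

```python
def dilated_branch(x, kernel, dilation):
    """One branch: stride-1 dilated convolution, zero-padded so the output
    keeps the input length (an odd kernel size is assumed)."""
    pad = dilation * (len(kernel) - 1) // 2
    padded = [0.0] * pad + list(x) + [0.0] * pad
    return [sum(k * padded[j + h * dilation] for h, k in enumerate(kernel))
            for j in range(len(x))]

def multi_scale_first_layer(x, kernels, dilations):
    """Stack branch outputs as channels (concatenation along the channel axis)."""
    return [dilated_branch(x, k, r) for k, r in zip(kernels, dilations)]

signal = [float(v) for v in range(8)]   # hypothetical input signal
diff_kernel = [-1.0, 0.0, 1.0]          # same toy kernel for every branch
features = multi_scale_first_layer(signal,
                                   [diff_kernel, diff_kernel, diff_kernel],
                                   [1, 2, 3])

for rate, channel in zip([1, 2, 3], features):
    print(rate, channel)
```

Because every branch returns a sequence of the same length, the channels can be fused directly and passed to the following layers, and each branch contributes the same number of parameters regardless of its dilation rate.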