Individual’s Social Perception of Virtual Avatars Embodied with Their Habitual Facial Expressions and Facial Appearance

Abstract

1. Introduction

1.1. Habitual Facial Expressions and Facial Appearance

1.2. Virtual Avatar

1.3. Research Goal

2. Methods

2.1. Participants

2.2. Materials

2.2.1. Video Stimulus

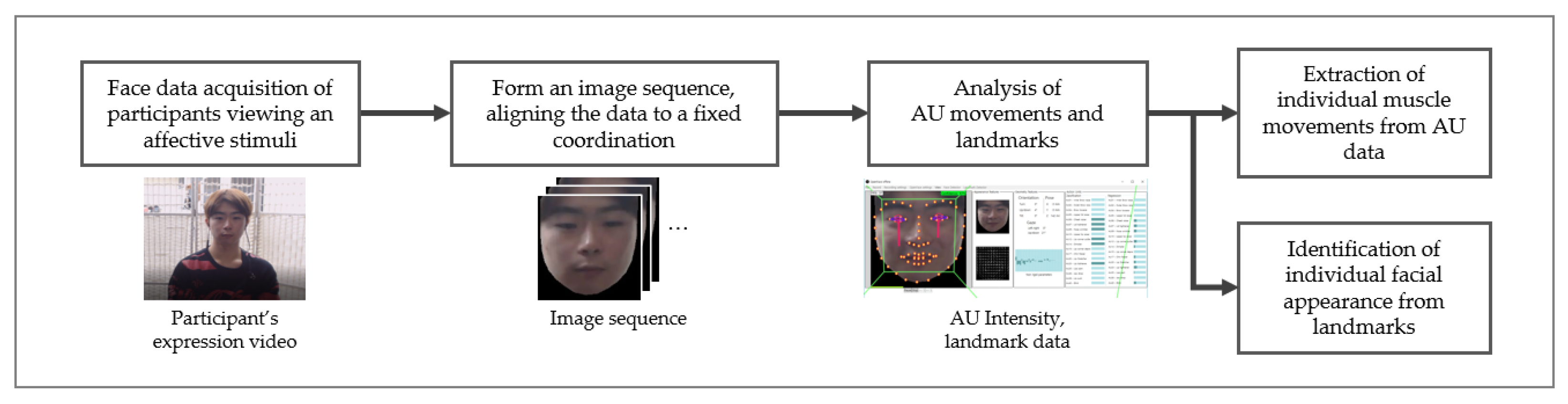

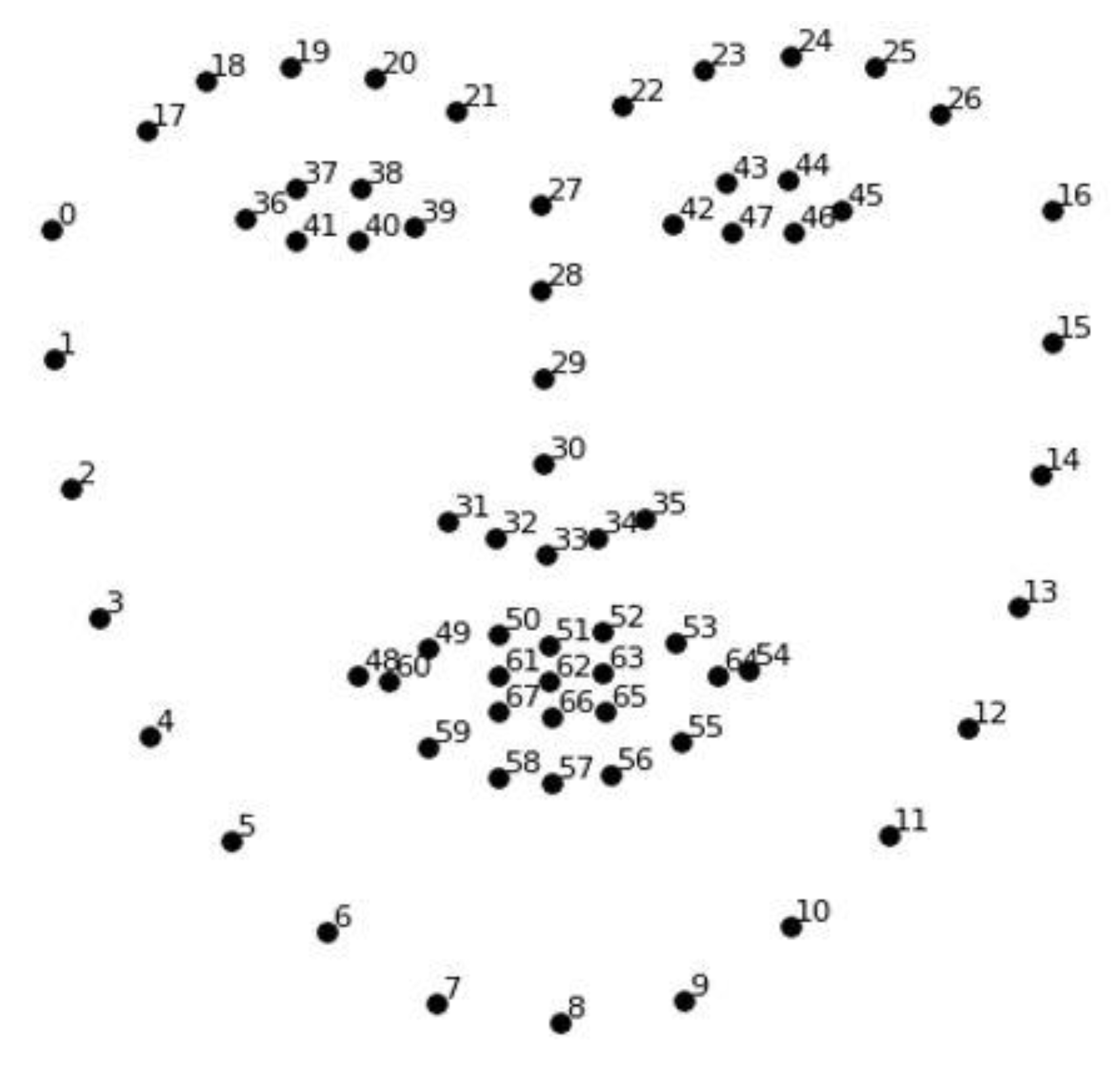

2.2.2. Video Analysis

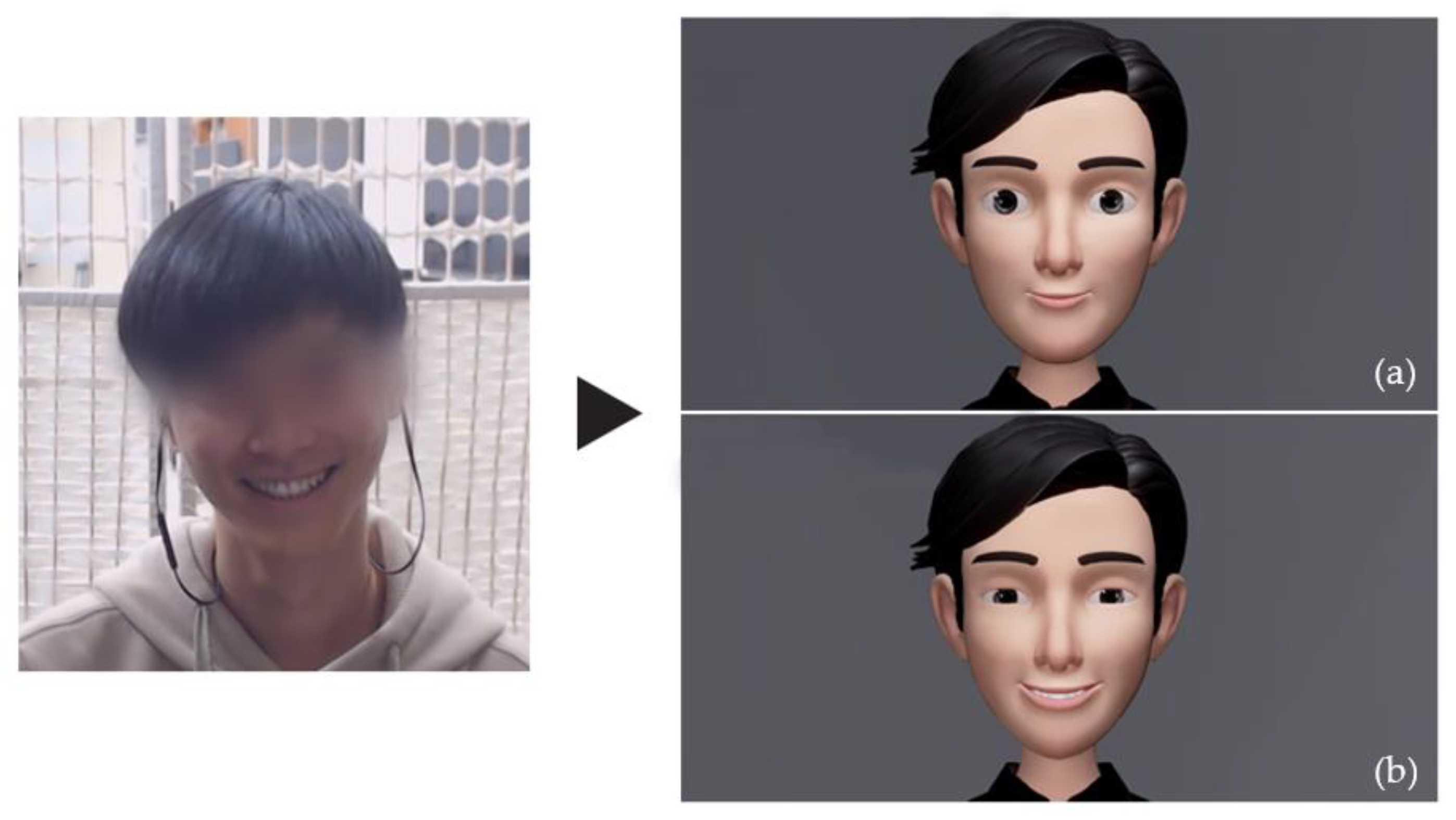

2.2.3. Virtual Avatar

2.2.4. Subjective Appraisal of Social Constructs

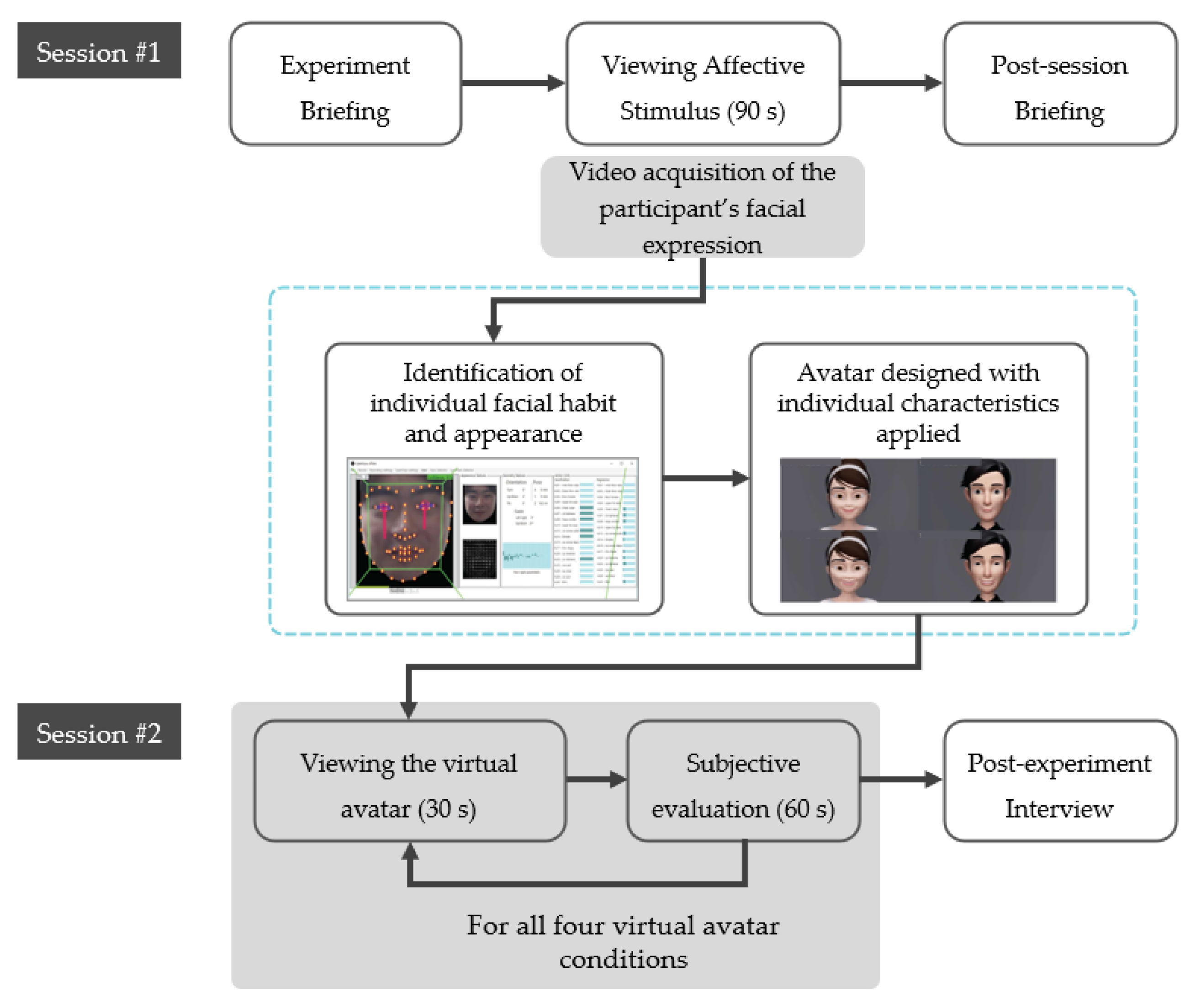

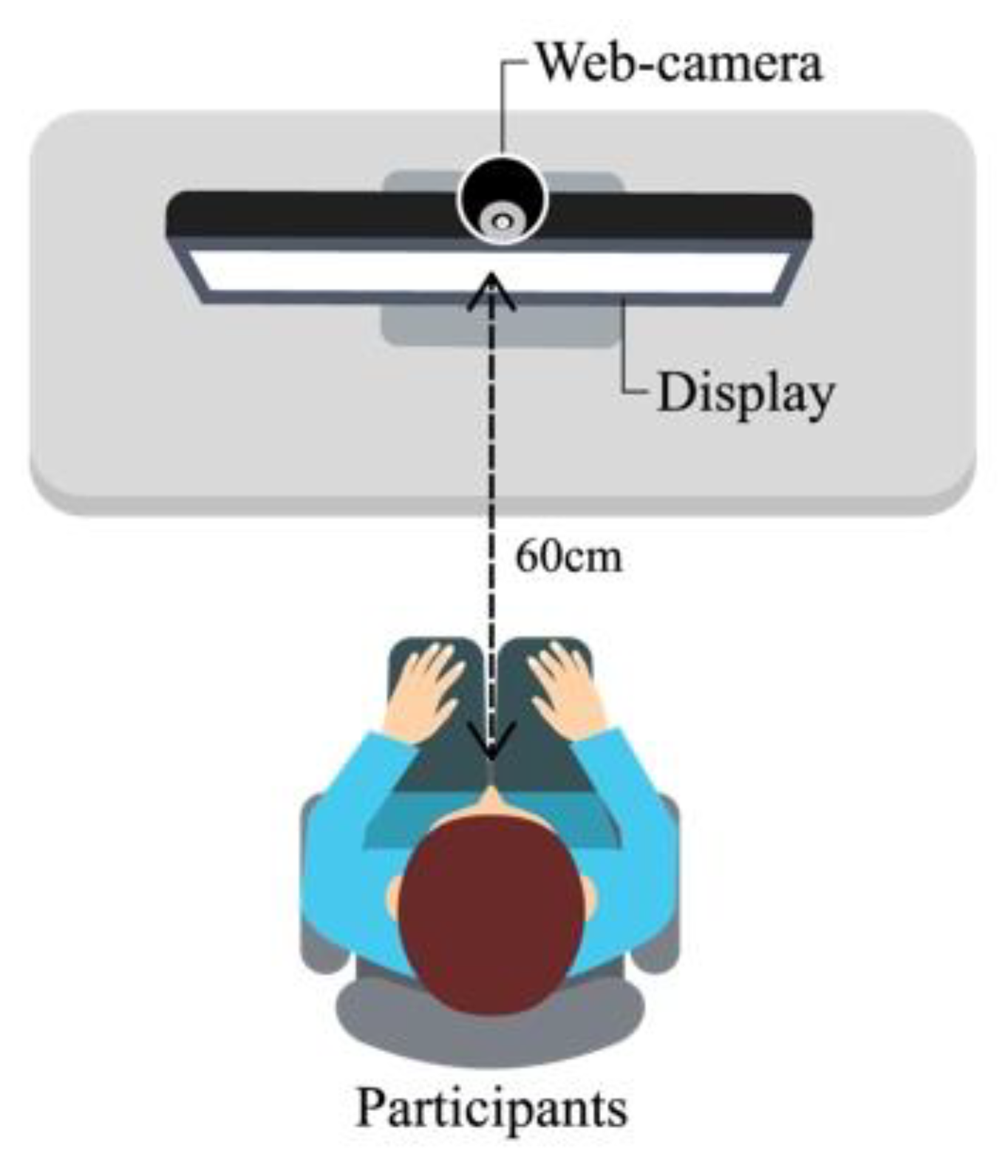

2.3. Procedure

- (1) An avatar with both the habitual facial expression and appearance applied;
- (2) An avatar with only the facial appearance applied;
- (3) An avatar with only the habitual facial expression applied;
- (4) A baseline avatar with none of the individual data applied.
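The four avatar conditions listed above read as the full crossing of two binary factors (habitual facial expression applied or not, facial appearance applied or not). A minimal sketch of that crossing is shown here; the class name `AvatarCondition`, its field names, and the labels are illustrative assumptions, not identifiers from the study.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class AvatarCondition:
    """One cell of the two-factor design (hypothetical naming)."""
    habitual_expression: bool  # participant's habitual facial expression applied?
    facial_appearance: bool    # participant's facial appearance applied?

    @property
    def label(self) -> str:
        if self.habitual_expression and self.facial_appearance:
            return "expression + appearance"
        if self.facial_appearance:
            return "appearance only"
        if self.habitual_expression:
            return "expression only"
        return "baseline"


# Appearance is the outer loop and expression the inner loop, so the
# resulting order matches the numbering (1) to (4) in the list above.
CONDITIONS = [
    AvatarCondition(habitual_expression=e, facial_appearance=a)
    for a, e in product([True, False], repeat=2)
]

for number, condition in enumerate(CONDITIONS, start=1):
    print(f"({number}) {condition.label}")
```

Representing each condition as a pair of booleans, rather than as four unrelated labels, keeps the two factors separable when the ratings are later grouped by factor.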

2.4. Statistical Analysis

3. Results

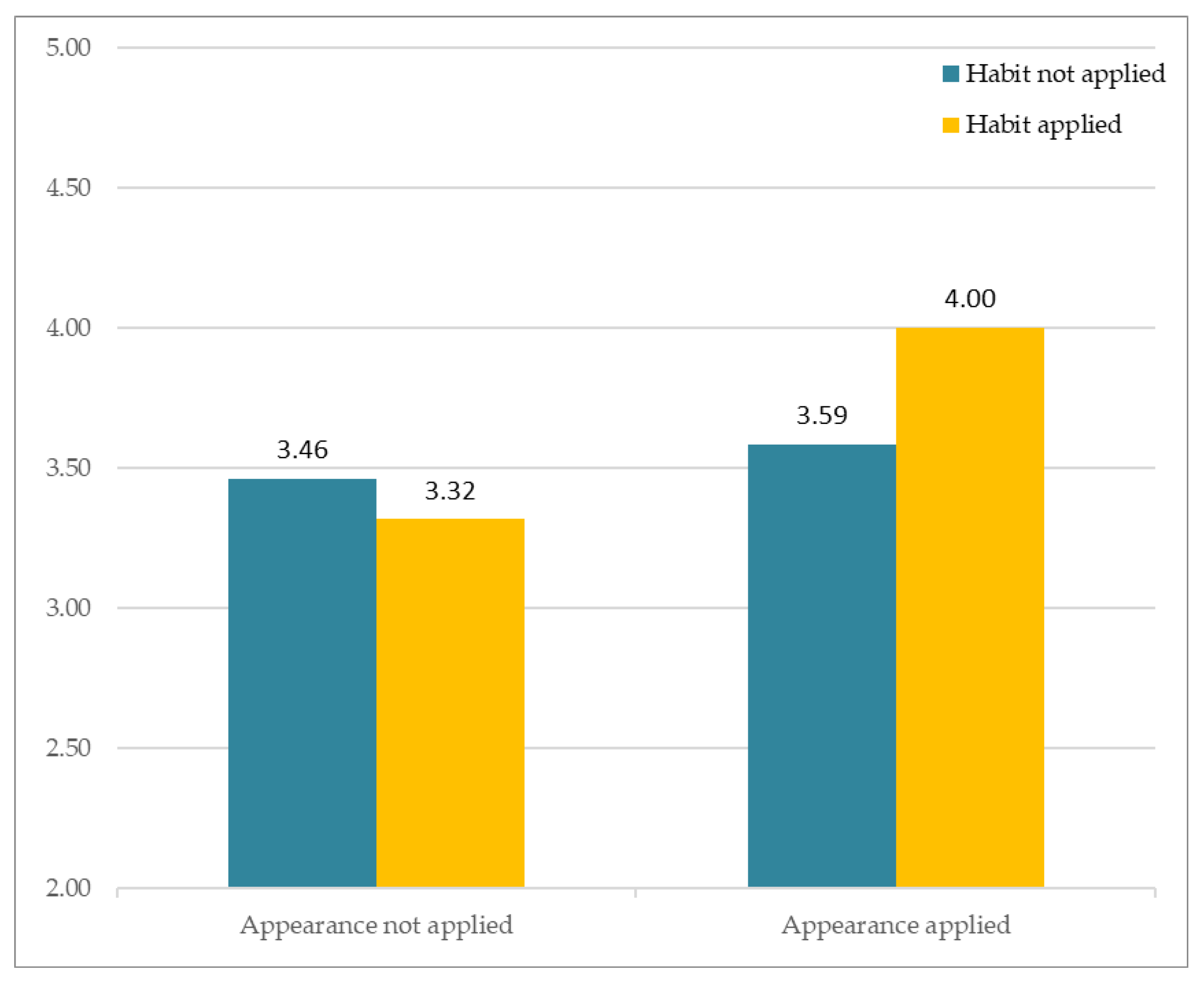

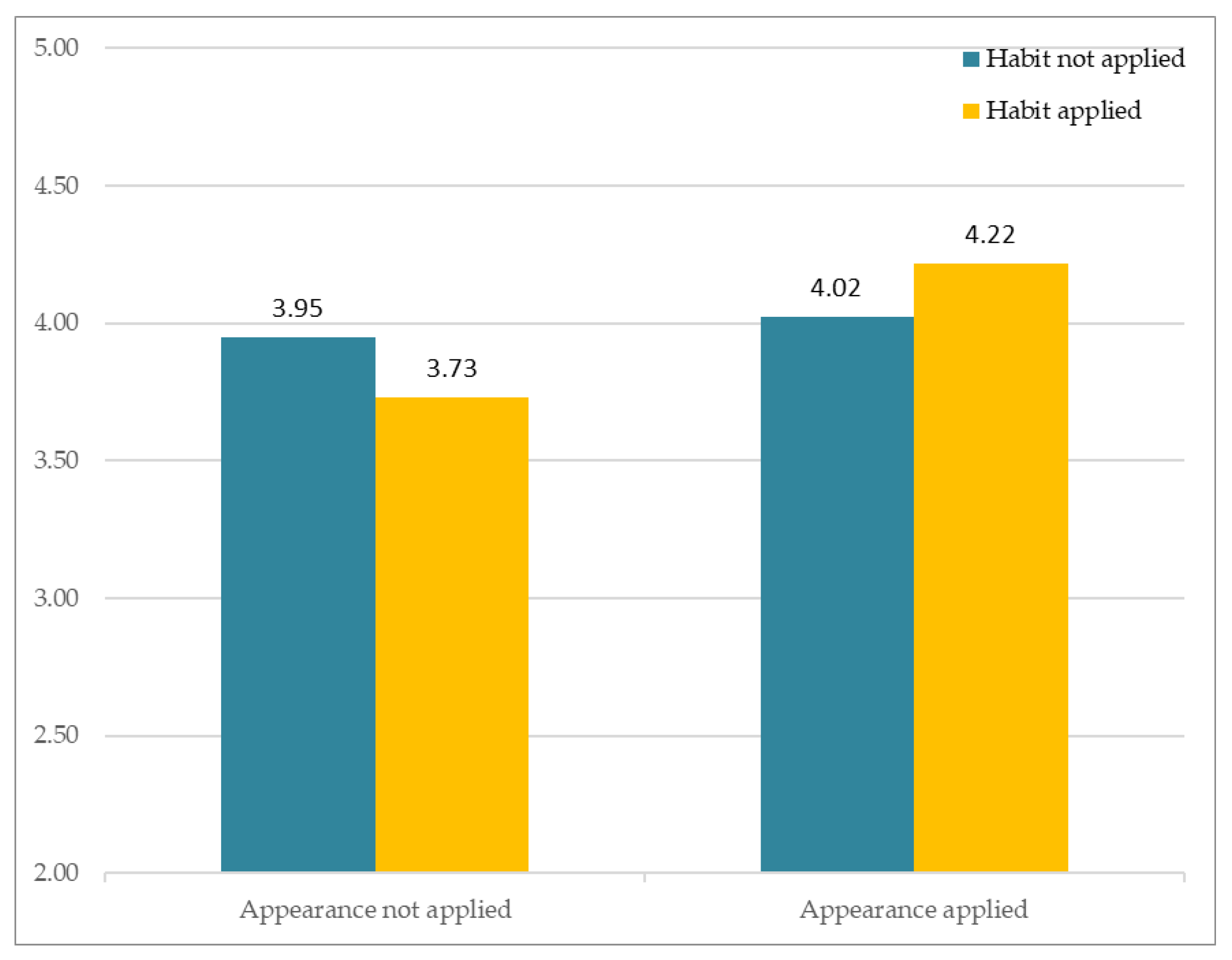

3.1. Similarity

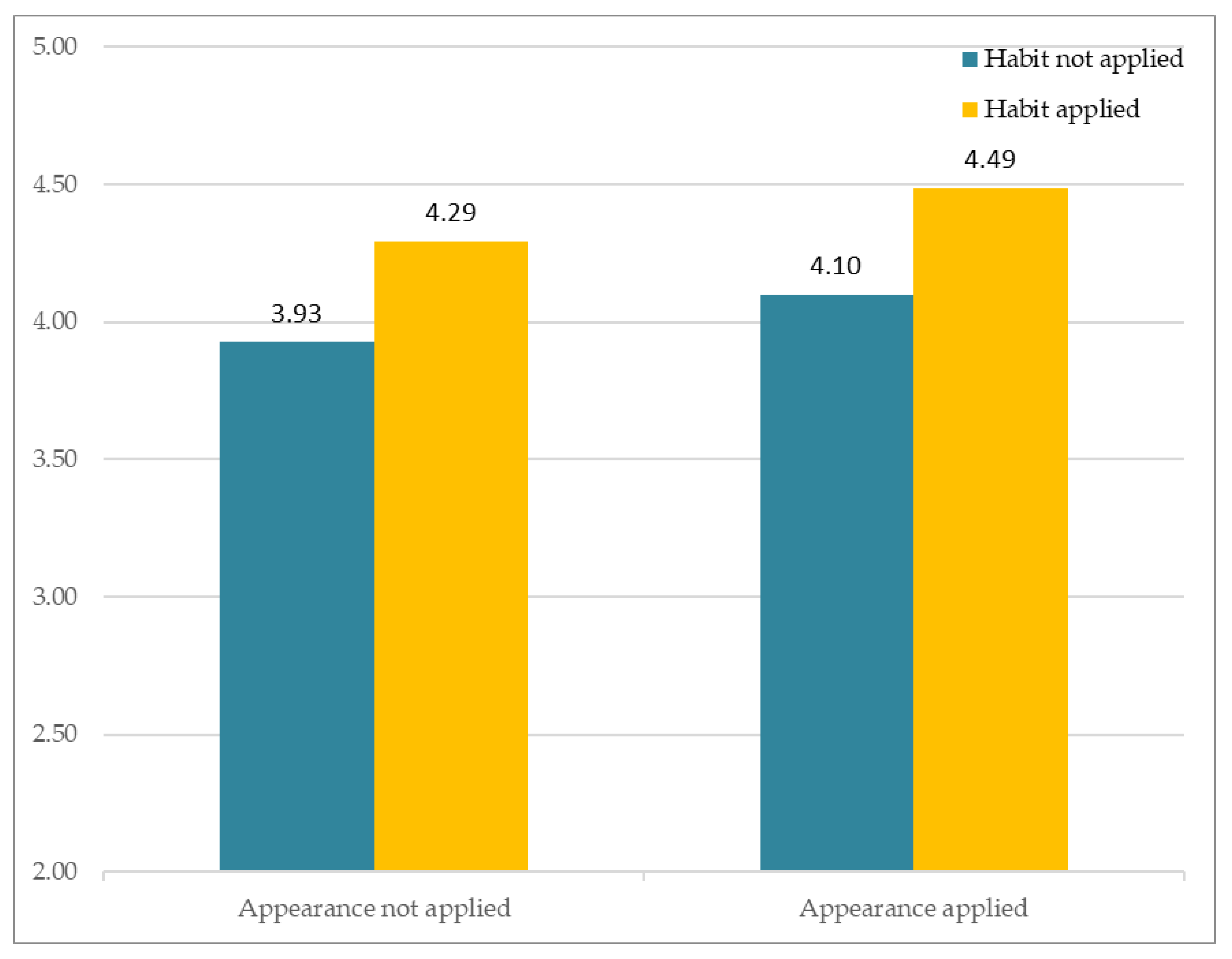

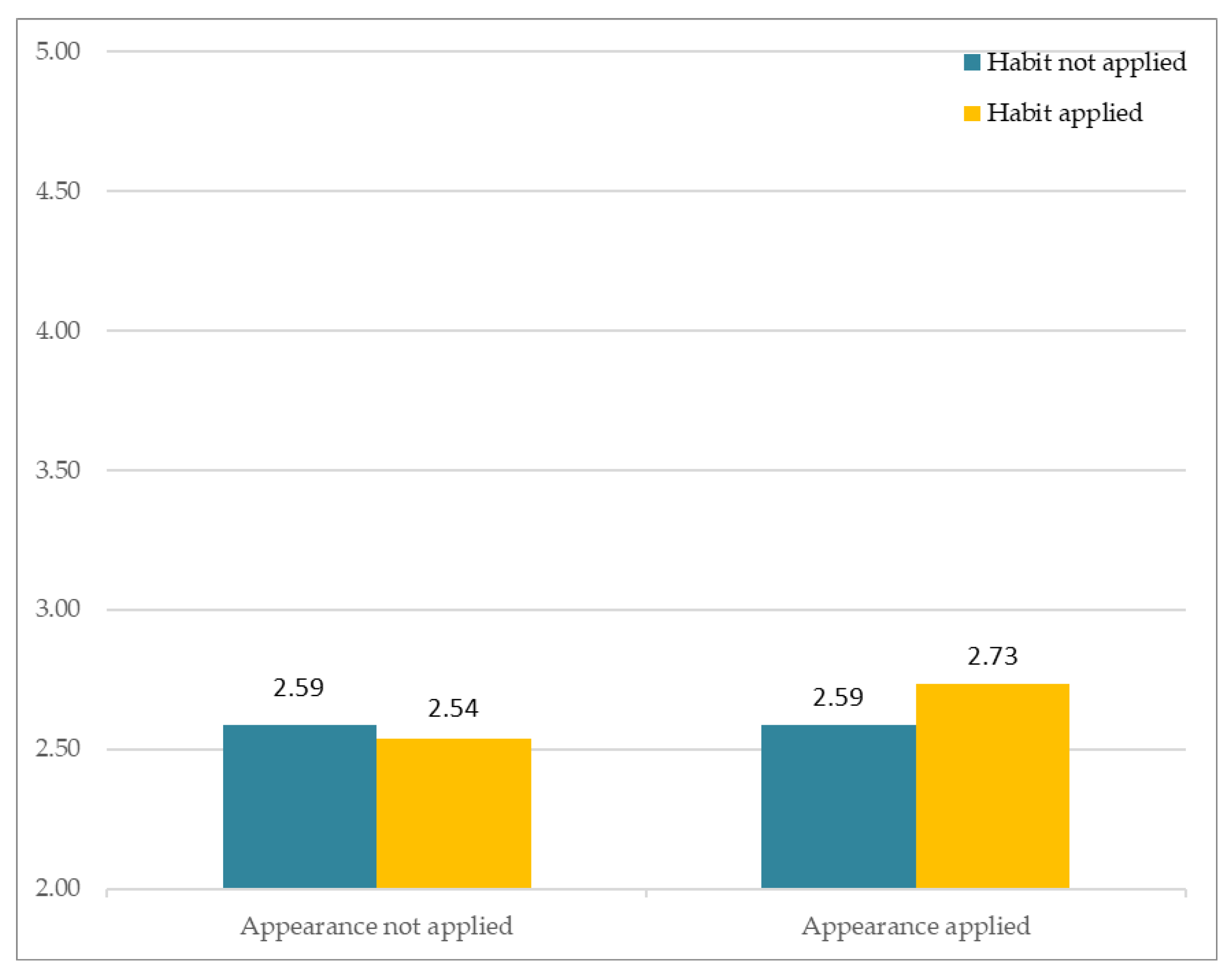

3.2. Familiarity

3.3. Attraction

3.4. Liking

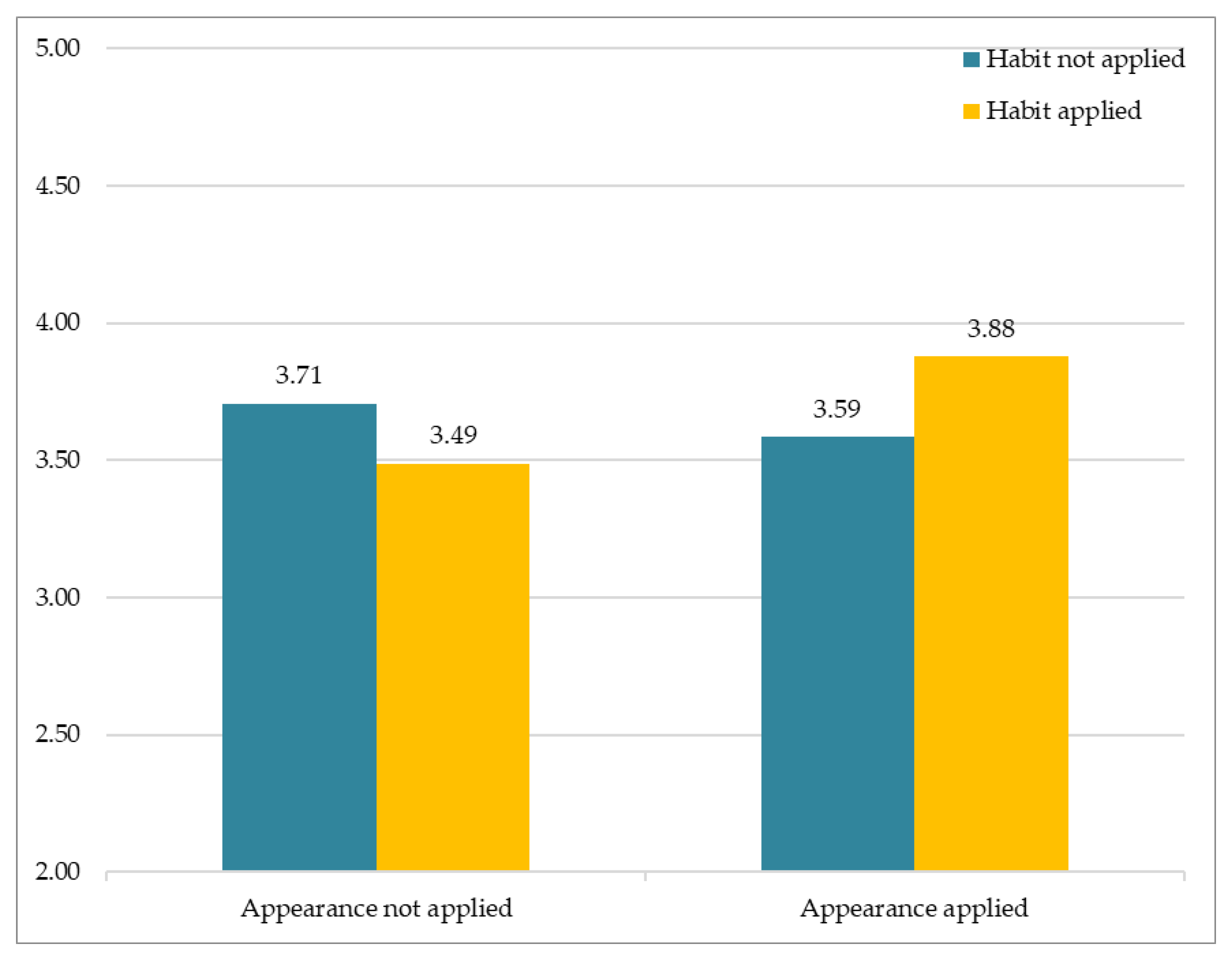

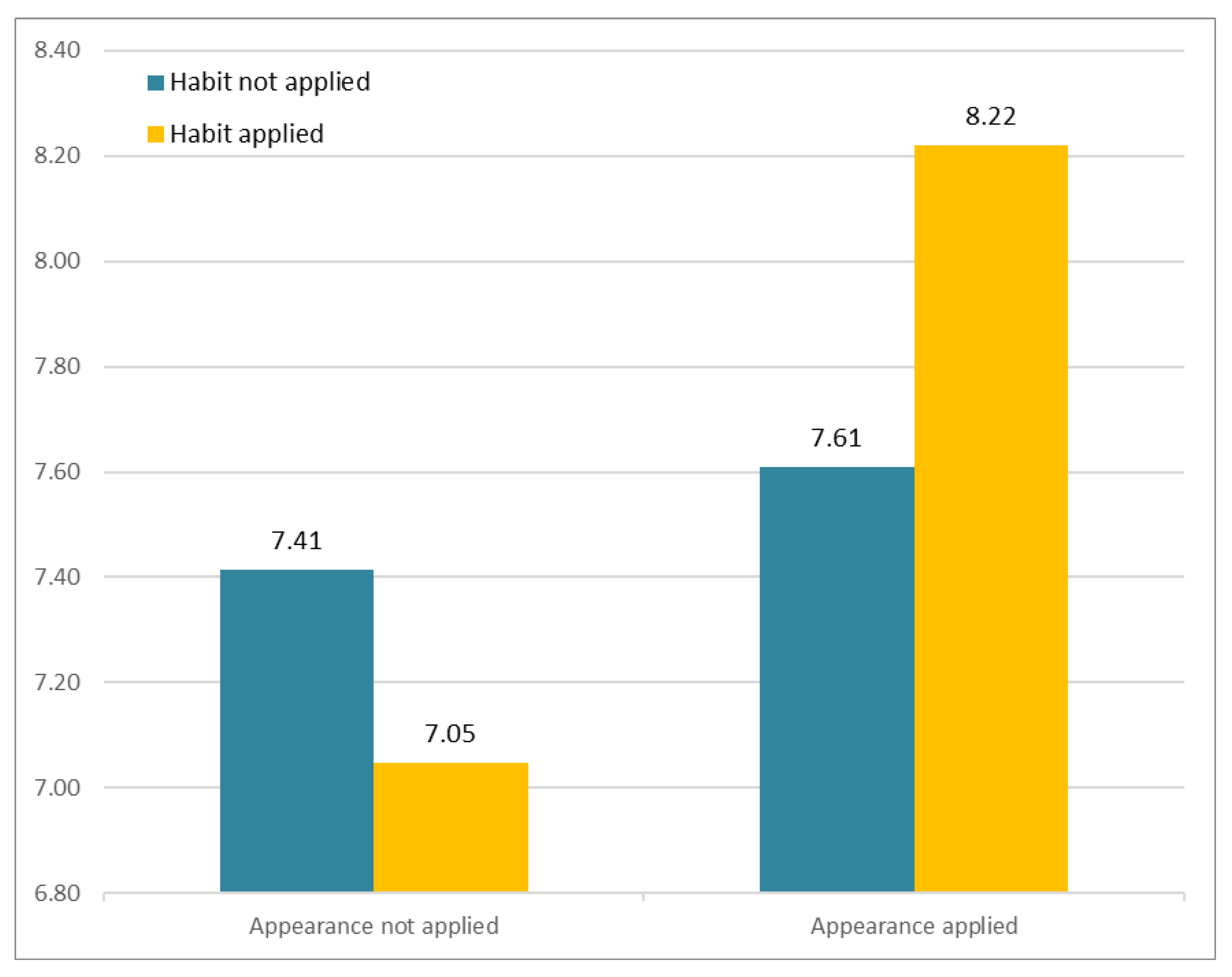

3.5. Involvement

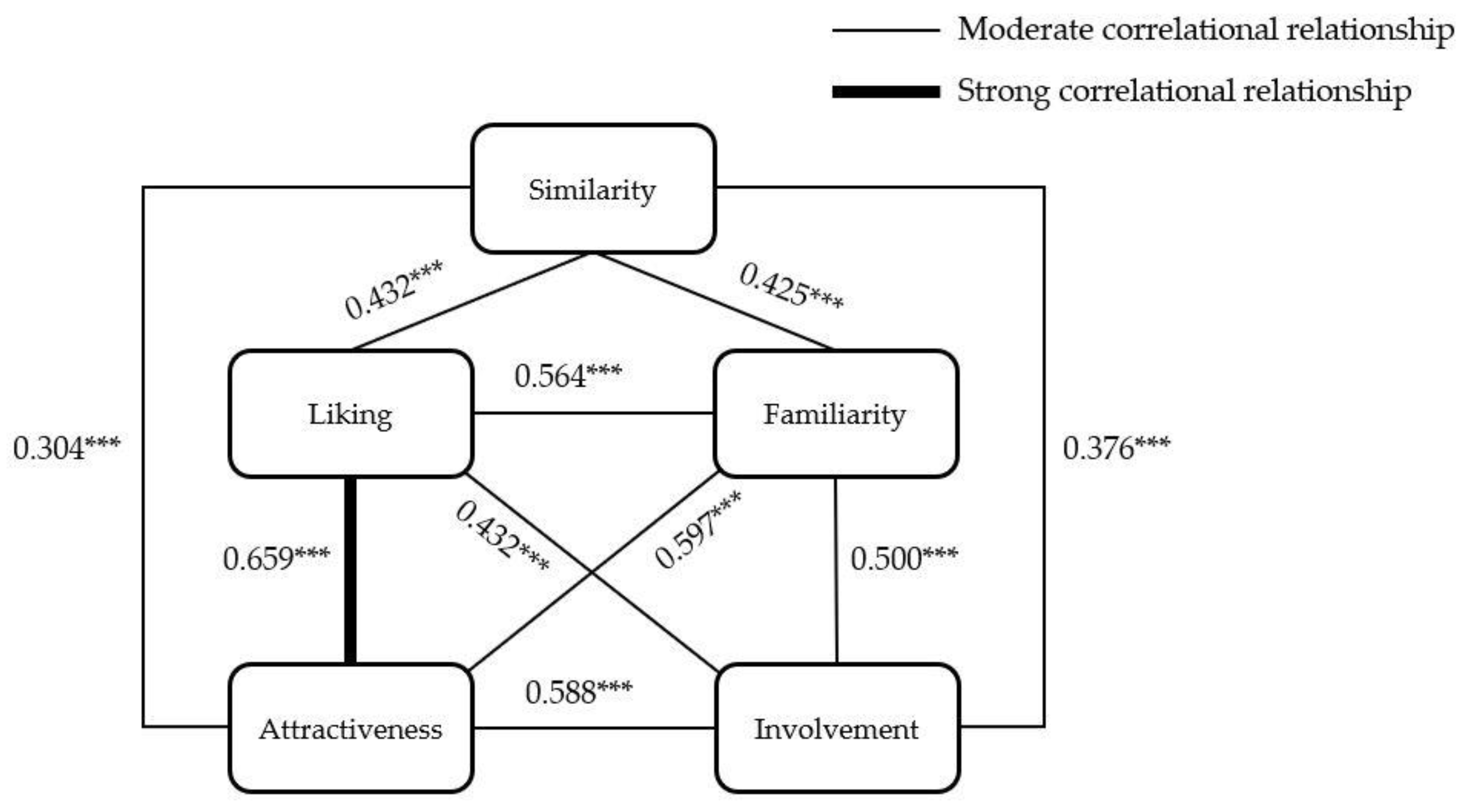

3.6. The Correlations between Social Perceptions

3.7. Data Categorization

4. Discussion and Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Conflicts of Interest


| Research Hypothesis | Statement |
|---|---|
| H1 | A virtual avatar that displays the participant’s habitual expressions will elicit the following perceived social constructs more than a virtual avatar that does not: perceived similarity, perceived familiarity, perceived attraction, perceived liking, and perceived involvement. |
| H2 | A virtual avatar that has a similar facial appearance to the participant will elicit the following perceived social constructs more than a virtual avatar that does not: perceived similarity, perceived familiarity, perceived attraction, perceived liking, and perceived involvement. |
| H3 | There is an interaction between the participant’s habitual expressions and facial appearance. |
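H1 and H2 correspond to the two main effects of a two-factor design, and H3 to their interaction. As a rough illustration of what those three quantities mean at the level of cell means, the sketch below computes descriptive contrasts from four condition means. The function name and the input values are hypothetical, and this is not the significance test used in the study, which this excerpt does not describe.

```python
def factorial_contrasts(both, appearance_only, expression_only, baseline):
    """Descriptive main-effect and interaction contrasts from four cell means."""
    # Main effect of expression (H1): cells with expression minus cells without.
    expression_effect = ((both + expression_only) - (appearance_only + baseline)) / 2
    # Main effect of appearance (H2): cells with appearance minus cells without.
    appearance_effect = ((both + appearance_only) - (expression_only + baseline)) / 2
    # Interaction (H3): does the expression effect differ with vs. without appearance?
    interaction = (both - appearance_only) - (expression_only - baseline)
    return {
        "expression": expression_effect,
        "appearance": appearance_effect,
        "interaction": interaction,
    }


# Hypothetical mean ratings, chosen only to make the arithmetic easy to follow.
contrasts = factorial_contrasts(
    both=5.0, appearance_only=4.0, expression_only=3.0, baseline=2.0
)
print(contrasts)
```

An interaction contrast of zero means the two factors combine additively; a non-zero value means the effect of one factor depends on the level of the other.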

| Blend Shape | Description | Muscular Basis |
|---|---|---|
| AU1 | Inner brow raiser | Frontalis, Pars medialis |
| AU2 | Outer brow raiser | Frontalis, Pars lateralis |
| AU4 | Brow lowerer | Depressor glabellae, Depressor supercilii, Corrugator supercilii |
| AU5 | Upper lid raiser | Levator palpebrae superioris |
| AU6 | Cheek raiser | Orbicularis oculi, Pars orbitalis |
| AU7 | Lid tightener | Orbicularis oculi, Pars palpebralis |
| AU9 | Nose wrinkler | Levator labii superioris alaeque nasi |
| AU10 | Upper lip raiser | Levator labii superioris, Caput infraorbitalis |
| AU12 | Lip corner puller | Zygomaticus major |
| AU14 | Dimpler | Buccinator |
| AU15 | Lip corner depressor | Depressor anguli oris (Triangularis) |
| AU17 | Chin raiser | Mentalis |
| AU20 | Lip stretcher | Risorius |
| AU23 | Lip tightener | Orbicularis oris |
| AU25 | Lips part | Depressor labii, Relaxation of mentalis (AU17), Orbicularis oris |
| AU26 | Jaw drop | Masseter, Temporal and Internal pterygoid relaxed |
| AU28 | Lip suck | Orbicularis oris |
| AU45 | Blink | Relaxation of levator palpebrae and contraction of orbicularis oculi, Pars palpebralis |
| Shape1 | Expansion of the lower jaw bone | Mandible ramus extension |
| Shape2 | Contraction of the lower jaw bone | Mandible ramus compression |
| Shape3 | Expansion of the lower jaw | Chin extension |
| Shape4 | Contraction of the lower jaw | Chin compression |
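To make the link between action units and blend shapes concrete, the sketch below averages per-frame AU intensities and rescales them to blend-shape weights. Everything numeric here is an assumption rather than something stated in the text: the 0 to 5 intensity scale, the 0 to 100 weight scale, the use of a simple mean as the "habitual" summary, and the sample frame values. The function names are hypothetical as well.

```python
from statistics import mean

AU_MAX_INTENSITY = 5.0    # assumed upper bound of the AU intensity scale
BLEND_MAX_WEIGHT = 100.0  # assumed upper bound of a blend-shape weight


def habitual_intensity(frames):
    """Average each AU's intensity across frames (hypothetical summary)."""
    au_names = frames[0].keys()
    return {au: mean(frame[au] for frame in frames) for au in au_names}


def to_blend_weights(intensities):
    """Clamp each intensity to the assumed AU range and rescale linearly."""
    weights = {}
    for au, value in intensities.items():
        clamped = min(max(value, 0.0), AU_MAX_INTENSITY)
        weights[au] = clamped / AU_MAX_INTENSITY * BLEND_MAX_WEIGHT
    return weights


# Made-up per-frame intensities for two of the action units in the table.
frames = [
    {"AU6": 1.0, "AU12": 2.0},
    {"AU6": 2.0, "AU12": 3.0},
]
weights = to_blend_weights(habitual_intensity(frames))
print(weights)
```

The clamp guards against out-of-range detector output; a linear rescale is the simplest choice and would need to be replaced if the rig's blend shapes respond non-linearly.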

| Social Construct | Operational Definition |
|---|---|
| Similarity | The degree to which the participant believes the virtual avatar’s appearance is similar to themselves. |
| Familiarity | The degree to which the participant is familiar with the virtual avatar’s appearance. |
| Attraction | The degree to which the participant is attracted to the virtual avatar. |
| Liking | The degree to which the participant likes or dislikes the virtual avatar. |
| Involvement | The degree to which the participant relates to or empathizes with the virtual avatar. |

| | Similarity | Familiarity | Attraction | Liking | Involvement |
|---|---|---|---|---|---|
| Similarity | | 0.425 *** | 0.304 *** | 0.432 *** | 0.376 *** |
| Familiarity | | | 0.597 *** | 0.564 *** | 0.500 *** |
| Attraction | | | | 0.659 *** | 0.588 *** |
| Liking | | | | | 0.499 *** |
| Involvement | | | | | |
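An upper-triangular table like the one above can be produced by correlating every pair of construct ratings once. The sketch below assumes Pearson's r, which this excerpt does not confirm as the coefficient used, and runs on made-up ratings that are not the study's data. The function names are hypothetical.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / sqrt(var_x * var_y)


def upper_triangle(ratings):
    """Correlate each unordered pair of constructs exactly once."""
    names = list(ratings)
    return {
        (names[i], names[j]): pearson_r(ratings[names[i]], ratings[names[j]])
        for i in range(len(names))
        for j in range(i + 1, len(names))
    }


# Hypothetical ratings, one value per observation; NOT data from the study.
ratings = {
    "Similarity": [2, 4, 3, 5, 1, 4],
    "Familiarity": [3, 4, 2, 5, 2, 3],
    "Attraction": [1, 3, 3, 4, 2, 5],
    "Liking": [2, 5, 3, 4, 1, 4],
    "Involvement": [3, 3, 2, 5, 1, 4],
}

for (row, col), r in upper_triangle(ratings).items():
    print(f"{row:<12} {col:<12} {r:.3f}")
```

Five constructs give ten unique pairs, which matches the ten coefficients reported in the table.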

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Park, S.; Kim, S.P.; Whang, M. Individual’s Social Perception of Virtual Avatars Embodied with Their Habitual Facial Expressions and Facial Appearance. Sensors 2021, 21, 5986. https://doi.org/10.3390/s21175986