Automated Loss-of-Balance Event Identification in Older Adults at Risk of Falls during Real-World Walking Using Wearable Inertial Measurement Units

Abstract

1. Introduction

2. Materials and Methods

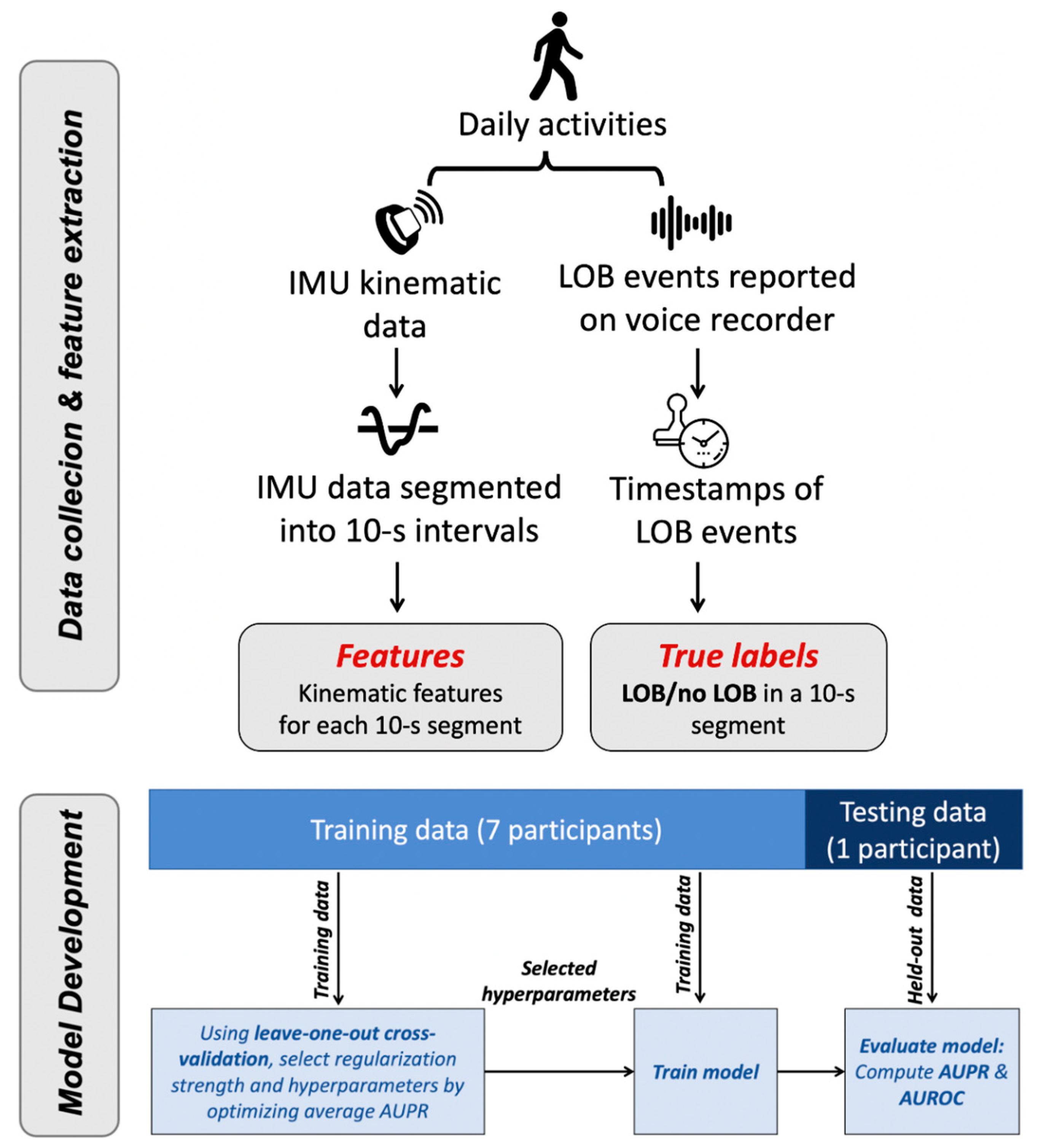

2.1. Overview

2.2. Data Collection

2.2.1. Study Cohort

2.2.2. Outcome

2.3. Data Segmentation and Processing

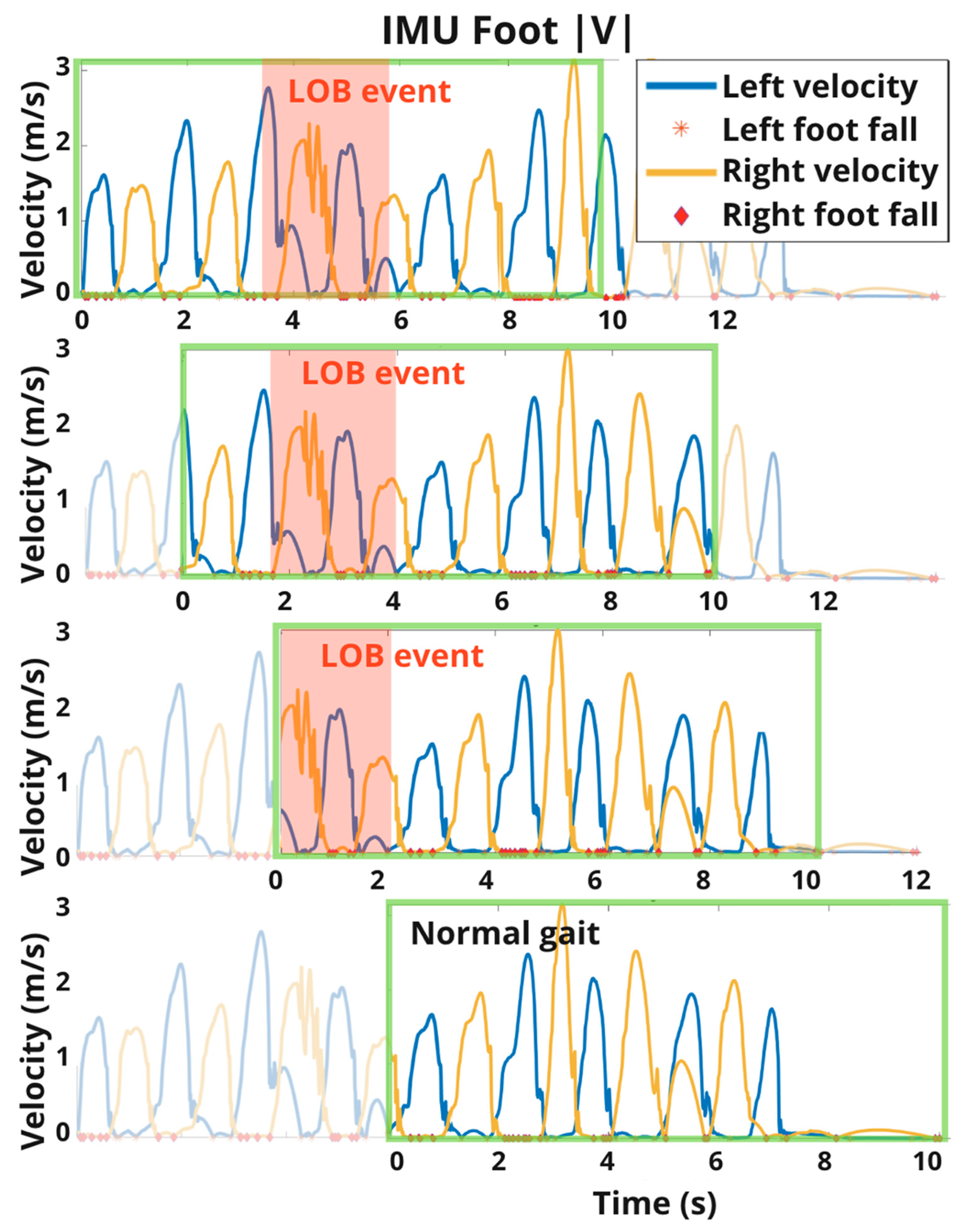

2.3.1. Data Segmentation

2.3.2. Extraction of IMU-Derived Features (Inputs)

2.3.3. Extraction of LOB Labels (Outputs)

2.4. Loss-of-Balance Classification Task

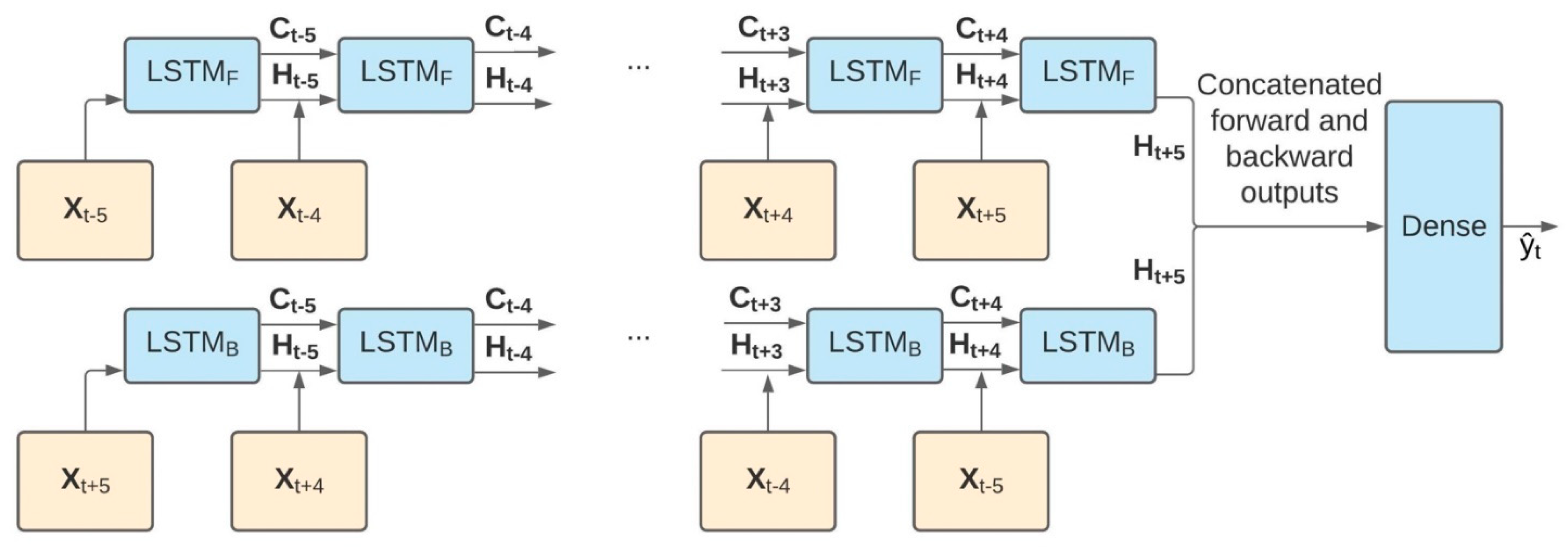

2.4.1. Model Architecture

2.4.2. Training Details

2.4.3. Evaluation Details

2.4.4. Feature Importance

3. Results

4. Discussion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Feature | Definition | Variables |
|---|---|---|
| Gait | Binary value (=1 if at least 5 strides of length >0.1 m) | IS GAIT (Binary) |
| Walked distance (m) | Total distance traveled in the horizontal plane | GAIT DISTANCE (Total) |
| Stride length (m) | Distance traveled in the horizontal plane between two consecutive footfalls of the same foot | SL MAX (maximum), SL MIN (minimum), SL MEAN (mean), SL MEDIAN (median), SL IQR (interquartile range), SL VAR (variance), SL RMS (root mean square) |
| Stride time (s) | Time elapsed between two consecutive footfalls of the same foot | ST MAX, ST MIN, ST MEAN, ST MEDIAN, ST IQR, ST VAR, ST RMS |
| Foot velocity (m/s) | Magnitude of foot velocities for both feet (left and right) | RF MAX VEL, RF MEAN VEL, LF MAX VEL, LF MEAN VEL |
| Peak/Swing foot velocity (m/s) | Peak foot velocity magnitude for each foot (left and right) at every stride corresponding to swing phase | RS MAX VEL, RS MEAN VEL, RS MIN VEL, LS MAX VEL, LS MEAN VEL, LS MIN VEL |
| Trunk angles (deg) | Angular sway in the pitch and roll directions | TRUNK RMS PITCH, TRUNK RMS ROLL |
| Trunk angular velocities (deg/s) | Angular velocities in the pitch and roll directions | TW RMS PITCH, TW RMS ROLL, TW RANGE PITCH, TW RANGE ROLL |
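
The stride-length variables in the table above can be sketched in a few lines. This is a minimal illustration using only the Python standard library, not the authors' implementation. The function name `stride_length_features`, the choice of population variance, the "inclusive" quartile method for the IQR, and computing the statistics over all strides in a segment are assumptions, since the table does not specify them. The gait thresholds (at least 5 strides longer than 0.1 m) come from the table.

```python
import math
import statistics

GAIT_MIN_STRIDES = 5       # from the table: at least 5 strides ...
GAIT_MIN_LENGTH_M = 0.1    # ... each longer than 0.1 m


def stride_length_features(stride_lengths_m):
    """Summarize the stride lengths (in meters) of one data segment.

    Hypothetical helper: the variance convention and quartile method
    are assumptions, not taken from the article.
    """
    strides = list(stride_lengths_m)
    long_strides = [s for s in strides if s > GAIT_MIN_LENGTH_M]
    features = {"IS GAIT": 1 if len(long_strides) >= GAIT_MIN_STRIDES else 0}
    if len(strides) < 2:
        # Too few strides for quartiles or variance; report only the gait flag.
        return features

    q1, _, q3 = statistics.quantiles(strides, n=4, method="inclusive")
    features.update({
        "SL MAX": max(strides),
        "SL MIN": min(strides),
        "SL MEAN": statistics.fmean(strides),
        "SL MEDIAN": statistics.median(strides),
        "SL IQR": q3 - q1,
        "SL VAR": statistics.pvariance(strides),  # assumed: population variance
        "SL RMS": math.sqrt(statistics.fmean([s * s for s in strides])),
    })
    return features


if __name__ == "__main__":
    print(stride_length_features([1.0, 1.2, 0.8, 1.0, 1.0]))
```

The same summary could be applied to stride times to obtain the ST variables, with the gait flag still derived from stride lengths.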

| Participant ID | Number of Days with Observed LOB Events | Number of Reported LOB Events |
|---|---|---|
| S 1 | 10 | 23 |
| S 2 | 5 | 8 |
| S 3 | 1 | 1 |
| S 4 | 1 | 2 |
| S 5 | 5 | 18 |
| S 6 | 3 | 3 |
| S 7 | 2 | 2 |
| S 8 | 4 | 5 |
| Total | 31 | 62 |

| Participant ID | Logistic Regression Model AUROC (95% CI) | BiLSTM Model AUROC (95% CI) |
|---|---|---|
| S 1 | 0.815 (0.659, 0.929) | 0.911 (0.887, 0.938) |
| S 2 | 0.802 (0.621, 0.937) | 0.902 (0.878, 0.927) |
| S 3 | 0.788 (0.627, 0.918) | 0.948 (0.916, 0.970) |
| S 4 | 0.808 (0.657, 0.938) | 0.942 (0.907, 0.977) |
| S 5 | 0.735 (0.575, 0.920) | 0.874 (0.843, 0.915) |
| S 6 | 0.816 (0.615, 0.934) | 0.906 (0.842, 0.950) |
| S 7 | 0.738 (0.550, 0.908) | 0.892 (0.859, 0.927) |
| S 8 | 0.778 (0.599, 0.936) | 0.946 (0.935, 0.958) |

| Participant ID | Incidence Rate | Logistic Regression Model AUPR (95% CI) | BiLSTM Model AUPR (95% CI) |
|---|---|---|---|
| S 1 | 0.066% | 0.006 (0.002, 0.009) | 0.004 (0.003, 0.006) |
| S 2 | 0.061% | 0.004 (0.001, 0.008) | 0.004 (0.002, 0.009) |
| S 3 | 0.037% | 0.004 (0.001, 0.008) | 0.004 (0.002, 0.0057) |
| S 4 | 0.131% | 0.004 (0.001, 0.008) | 0.030 (0.007, 0.101) |
| S 5 | 0.161% | 0.003 (0.001, 0.006) | 0.005 (0.003, 0.006) |
| S 6 | 0.036% | 0.005 (0.002, 0.009) | 0.011 (0.002, 0.041) |
| S 7 | 0.028% | 0.003 (0.001, 0.006) | 0.005 (0.003, 0.008) |
| S 8 | 0.037% | 0.004 (0.001, 0.008) | 0.005 (0.003, 0.010) |
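
Because LOB events are rare (incidence rates of roughly 0.03% to 0.16% in the table above), AUROC and AUPR answer different questions: a classifier that ranks segments at random has an expected AUROC of 0.5, whereas its expected AUPR is approximately the incidence rate. A minimal sketch of both metrics follows, using only the Python standard library. The function names, the pairwise (Mann-Whitney) formulation of AUROC, and the use of average precision as the AUPR estimate are assumptions for illustration; the estimator and confidence-interval procedure used in the article are not shown in this section.

```python
def auroc(labels, scores):
    """AUROC as the probability that a random positive segment outscores
    a random negative one (ties count as half). Illustrative helper."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative label")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def average_precision(labels, scores):
    """Average precision: mean of the precision values at the rank of each
    positive segment. Assumed here as the AUPR estimate; tied scores are
    ordered arbitrarily by the sort."""
    n_pos = sum(1 for y in labels if y == 1)
    if n_pos == 0:
        raise ValueError("need at least one positive label")
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits = 0
    total = 0.0
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            total += hits / rank
    return total / n_pos


if __name__ == "__main__":
    y = [0, 0, 1, 0, 1]               # 1 = segment contains an LOB event
    s = [0.1, 0.4, 0.35, 0.8, 0.9]    # hypothetical model scores
    print(auroc(y, s), average_precision(y, s))
```

The quadratic pairwise loop is kept for clarity; a rank-based computation would be preferable for the number of segments in a multi-day recording.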

| Participant ID | Overall Data Reduction (95% CI) | Sensitivity (95% CI) | Precision (95% CI) |
|---|---|---|---|
| S 1 | 91.1% (89.7, 92.0) | 87.0% (82.3, 90.9) | 0.46% (0.37, 0.56) |
| S 2 | 68.0% (54.0, 72.6) | 98.8% (95.8, 100) | 0.18% (0.13, 0.23) |
| S 3 | 78.5% (62.0, 83.7) | 100% (100, 100) | 0.16% (0.08, 0.28) |
| S 4 | 84.7% (74.5, 86.9) | 98.0% (93.4, 100) | 0.81% (0.43, 1.21) |
| S 5 | 67.2% (52.3, 77.7) | 98.7% (96.6, 99.7) | 0.52% (0.44, 0.63) |
| S 6 | 85.5% (78.6, 89.6) | 89.5% (83.1, 95.9) | 0.21% (0.11, 0.31) |
| S 7 | 70.9% (55.8, 80.7) | 100% (100, 100) | 0.31% (0.23, 0.39) |
| S 8 | 91.0% (87.8, 92.9) | 98.1% (93.2, 100) | 0.39% (0.28, 0.54) |
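
The three columns of the table above can be related through a simple count of flagged segments. The sketch below is illustrative only and rests on assumptions not confirmed by this section: that "overall data reduction" means the fraction of segments the model does not flag for review, and that sensitivity and precision are computed per segment. The function name `screening_metrics` is hypothetical.

```python
def screening_metrics(labels, flagged):
    """Data reduction, sensitivity, and precision for a flag-for-review rule.

    labels[i]  = 1 if segment i contains a reported LOB event, else 0
    flagged[i] = 1 if the model flags segment i for review, else 0
    Assumption: data reduction = share of segments that are NOT flagged.
    """
    if len(labels) != len(flagged) or not labels:
        raise ValueError("labels and flagged must be non-empty and equal length")
    tp = sum(1 for y, f in zip(labels, flagged) if y == 1 and f == 1)
    fp = sum(1 for y, f in zip(labels, flagged) if y == 0 and f == 1)
    fn = sum(1 for y, f in zip(labels, flagged) if y == 1 and f == 0)
    n_flagged = tp + fp
    return {
        "data_reduction": 1.0 - n_flagged / len(labels),
        "sensitivity": tp / (tp + fn) if (tp + fn) else float("nan"),
        "precision": tp / n_flagged if n_flagged else float("nan"),
    }


if __name__ == "__main__":
    labels = [0, 0, 0, 0, 0, 0, 0, 0, 1, 1]    # toy data, not from the study
    flagged = [0, 0, 0, 0, 0, 0, 1, 1, 1, 0]
    print(screening_metrics(labels, flagged))
```

Under these definitions, high sensitivity can coexist with precision below 1% when events are very rare, because even a small flagged fraction of the data contains far more non-event segments than events.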

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Citation: Hauth, J.; Jabri, S.; Kamran, F.; Feleke, E.W.; Nigusie, K.; Ojeda, L.V.; Handelzalts, S.; Nyquist, L.; Alexander, N.B.; Huan, X.; Wiens, J.; Sienko, K.H. Automated Loss-of-Balance Event Identification in Older Adults at Risk of Falls during Real-World Walking Using Wearable Inertial Measurement Units. Sensors 2021, 21, 4661. https://doi.org/10.3390/s21144661