Abstract

Greenhouses are now widely used for plant growth, and environmental parameters can be controlled in a modern greenhouse to maximize crop yield. To control these environmental parameters optimally, one indispensable requirement is to accurately predict crop yields for given environmental parameter settings. In addition, crop yield forecasting plays an important role in greenhouse farming planning and management, as it allows cultivators and farmers to make informed management and financial decisions. In this work, we have developed a new greenhouse crop yield prediction technique by combining two state-of-the-art networks for temporal sequence processing: the temporal convolutional network (TCN) and the recurrent neural network (RNN). Comprehensive evaluations of the proposed algorithm have been made on multiple datasets obtained from multiple real greenhouse sites for tomato growing. Based on a statistical analysis of the root mean square errors (RMSEs) between the predicted and actual crop yields, it is shown that the proposed approach achieves more accurate yield prediction than both traditional machine learning methods and other classical deep neural networks. Moreover, the experimental study also shows that historical yield information is the most important factor for accurately predicting future crop yields.

1. Introduction

Compared with field growing, greenhouse growing is currently preferred by many crop growers. Growing crops in a greenhouse can extend their growing season, protect them against temperature and weather changes, and thus provide a safe growing environment. Moreover, environmental parameters (e.g., humidity, temperature, radiation, carbon dioxide, etc.) [1,2] can also be controlled in a modern greenhouse to ensure that crops grow under the most appropriate environmental conditions.

Crop yield forecasting plays an important role in greenhouse farming planning and management, and optimal control of environmental parameters relies on it to maximize crop yield. Cultivators and farmers can use yield predictions to make informed management and financial decisions. However, accurate yield prediction is an extremely challenging task. Many factors influence crop yield in a greenhouse, such as radiation, carbon dioxide concentration, temperature, quality of crop seeds, soil quality and fertilization, and disease occurrence (as shown in [3,4,5]). It is not straightforward to construct an explicit model that reflects the relationship between such a variety of factors and crop yield.

In this work, we propose a deep neural network-based greenhouse crop yield prediction method, by combining two state-of-the-art networks for temporal sequence processing: recurrent neural network (RNN) and temporal convolutional network (TCN). The proposed deep neural network is developed for predicting future crop yields in a greenhouse based on a sequence of historical greenhouse input parameters (e.g., temperature, humidity, carbon dioxide, radiation) as well as yield information. According to the experimental evaluations of multiple datasets collected from multiple greenhouses in different time periods, it is shown that the RNN+TCN-based deep learning approach achieves more accurate yield prediction results with smaller root mean square errors (RMSEs), compared with both classical machine learning and other popular deep learning-based counterparts.

2. Literature Works

Although there is much research on crop yield prediction for field farming, relatively few works focus on greenhouse crop yield forecasting. Approaches that have been developed for greenhouse crop yield forecasting fall into two main categories: the explanatory biophysical model-based approach and the data-driven/machine learning model-based approach.

Explanatory biophysical model-based approach: Based on a series of ordinary differential equations (ODEs) reflecting a dynamic process, the explanatory model describes the relationship between some environmental factors and crop growth or morphological development. Different biophysical models have been applied for crop growth modelling which can thus be used for yield forecasting, based on greenhouse environmental parameters.

The Tomgro model, proposed by Jones et al. in [6], models tomato growth and fruit yield with respect to dynamically changing temperature, solar radiation, and CO₂ concentration inside a greenhouse. A more complex biophysical model, Tomsim, is proposed in [7]; it contains multiple sub-modules for modelling different aspects of tomato growth (photosynthesis, dry matter production, truss appearance rate, fruit growth period, dry matter partitioning, etc.). A crop yield model that describes the effects of greenhouse climate on yield based on ODEs was described and validated in [4]. This yield model was validated for four temperature regimes. Results demonstrated that the tomato yield was simulated accurately for both near-optimal and non-optimal temperature conditions in the Netherlands and southern Spain, respectively, with varying light and CO₂ concentrations. An integrated yield prediction model [8], which integrates the Tomgro model [6] and the Vanthoor model [4], is applied to predict the crop yield in greenhouses based on controllable greenhouse environmental parameters. Different biophysical models, including the Vanthoor model [4], the Tomsim model [7], the Greenhouse Technology applications (GTa) model, the model proposed in [9], and their combined version, were compared in [10]. The experimental studies show that the combined model can outperform the original models, with smaller root mean square errors (RMSEs) for yield prediction. The biophysical models proposed in [11,12] describe the effects of electrical conductivity, nitrogen, phosphorus, potassium, and light quality on dry matter yield and photosynthesis of greenhouse tomatoes and cucumbers, respectively.

The explanatory model is practical to reflect the actual growth process of crops, which is bio-physically meaningful and explainable. However, the aforementioned explanatory models suffer from the following two main limitations:

- (i) There are many intrinsic model parameters associated with a biophysical model and the performance of an explanatory model is highly sensitive to its model parameters (as shown in [13]). Moreover, the model parameter setting suitable for predicting greenhouse crop yield in one region may not be workable for other regions [13].

- (ii) In addition, most biophysical models are also not universal and restricted to model the growth for a specific type of plant. For example, the Tomgro model [6] and Tomsim model [7] can only be used to model/predict the growth/yield of tomatoes.

Due to the limitations of the explanatory biophysical model-based approaches, in this work we turn to the other category of approach, the machine learning model-based approach, for greenhouse crop yield prediction. More details of the machine learning model-based approach are given below.

Machine learning model-based approach: Data-driven/machine learning approaches have also been applied to greenhouse crop yield forecasting in many studies, which treat the crop yield as a complex, nonlinear function of the greenhouse environmental variables and historical crop yield information. In particular, linear and polynomial regression models are used in [14] to predict strawberry growth and fruit yield from environmental data such as average daily air temperature (ADAT), relative humidity (RH), soil moisture content (SMC), and so on. However, the assumption of a linear or polynomial relationship between crop yield and environmental factors is not always valid. Partial least squares regression (PLSR) has been applied in [15] to model the yield of snap bean based on data collected from hyperspectral sensing. Neural networks have also been widely applied to greenhouse crop yield prediction. For example, an artificial neural network (ANN) has been applied in [16] for weekly crop yield prediction, while in [17] an ANN has been applied to predict pepper fruit yield based on factors such as fruit water content, days to flowering initiation, and so on. An Evolving Fuzzy Neural Network (EFuNN) was proposed in [18] for automatic tomato yield prediction, given different environmental variables inside the greenhouse, namely temperature, CO₂, vapour pressure deficit (VPD), and radiation, as well as past yield. A Dynamic Artificial Neural Network (DANN) [19] was implemented to predict tomato yields based on a series of predictors such as CO₂ fixation, transpiration, and solar radiation, as well as past yield. The findings show that the most important environmental variable for yield prediction was CO₂ fixation, and the least important was transpiration.
Although ANN-based approaches have been widely applied to greenhouse crop yield prediction tasks, as in [16,17,18,19], their performance is highly sensitive to the choice of network architecture and hyper-parameter settings. Furthermore, there is a lack of studies on optimally designing the network architecture and tuning the network hyper-parameters for greenhouse crop yield prediction.

The aforementioned works focus on using classical machine learning approaches for greenhouse crop yield prediction. Given a certain amount of training data, classical machine learning models (such as linear/polynomial regression models, artificial neural network model, etc.) are constructed to predict greenhouse crop yields based on certain factors (such as environmental and past yield information). However, these works suffer from limitations due to the adoptions of simple and ‘shallow’ classical machine learning models, for example:

- (i) Features extracted from data for building the classical machine learning models may not be optimal and most representative, thus deteriorating the performance for yield prediction (as shown by our experiment, in most cases, the classical machine learning models perform worse than the deep learning-based ones).

- (ii) The classical machine learning models cannot effectively handle data with either high volume or high complexity.

Deep learning is a very popular machine learning technique and it has been successfully applied in a variety of applications (e.g., image classification, computer vision, natural language processing, etc.) [20]. Recently, deep learning has also been applied to crop yield prediction in the outdoor environment. For example, in [21], a recurrent neural network deep learning algorithm built on the Q-Learning reinforcement learning algorithm is used to predict the crop yield. The results show that the proposed model outperforms the existing models, with high accuracy for crop yield prediction. CNN and LSTM are combined in [22] for both end-of-season and in-season county-level soybean yield prediction, based on remote sensing data in the outdoor environment. Compared with the outdoor application scenarios, there are very few works on applying deep learning to indoor greenhouse crop yield prediction. Some related works can be found in [5,23], in which the researchers adopted the recurrent neural network (RNN) model with long short-term memory (LSTM) units for tomato and ficus yield prediction. The evaluation results show that the deep learning-based approaches adopted in [5,23] outperform traditional machine learning algorithms, with more accurate prediction results and lower root mean square errors (RMSEs).

3. Methodology

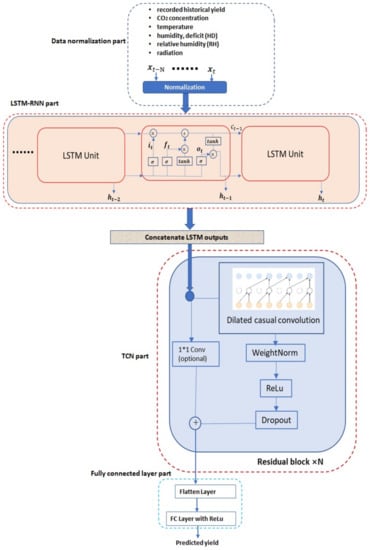

In this work, a novel deep neural network (DNN)-based methodology is proposed to predict the future crop yield based on historical yields and greenhouse environmental parameters (e.g., CO₂ concentration, temperature, humidity, radiation, etc.). The proposed method is based on the hierarchical integration of the recurrent neural network (RNN) and the temporal convolutional network (TCN), which are both current state-of-the-art DNN architectures for temporal sequence processing. A diagram of the proposed methodology is shown in Figure 1, from which we can see that it contains four main parts: the normalization part, the recurrent neural network part, the temporal convolutional network part and the final fully connected layer part. The different parts are introduced in the next few sections.

Figure 1.

Proposed DNN architecture.

3.1. Input Data Normalization

A temporal sequence of data containing both historical yield and environmental information is exploited to predict the future crop yield after a certain period. As shown in Figure 1, a temporal sequence of length N, denoted as $x_1, \ldots, x_N$, is taken as the network input. Each element $x_t$ of the temporal sequence is a vector containing the following factors recorded at time instance t: recorded yield information (g/m²), CO₂ concentration (ppm) in the greenhouse, greenhouse temperature (°C), humidity deficit (g/kg), relative humidity (percentage) and radiation (W/m²).

Before being fed into the network, each factor (e.g., historical yield, CO₂ concentration, temperature, radiation, etc.) is first normalized to the range [0, 1] by the following equation:

$$\hat{x}_t^{i} = \frac{x_t^{i} - x_{\min}^{i}}{x_{\max}^{i} - x_{\min}^{i}} \qquad (1)$$

where $x_t^{i}$ represents the i-th factor at time step t, and $x_{\max}^{i}$ and $x_{\min}^{i}$ represent the corresponding maximum and minimum values of that factor. After applying Equation (1), each factor is normalized to a range between 0 and 1.
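As an illustration of the min-max normalization described above, the following is a minimal pure-Python sketch. The function name `min_max_normalize`, the sample readings, and the handling of a constant factor are assumptions made for this example; they are not taken from the original implementation.

```python
from typing import List


def min_max_normalize(sequence: List[List[float]]) -> List[List[float]]:
    """Scale every factor (column) of a temporal sequence into [0, 1].

    `sequence[t][i]` is the i-th factor at time step t.
    """
    n_factors = len(sequence[0])
    # Per-factor minimum and maximum over the whole recording.
    mins = [min(row[i] for row in sequence) for i in range(n_factors)]
    maxs = [max(row[i] for row in sequence) for i in range(n_factors)]

    normalized = []
    for row in sequence:
        scaled = []
        for i, value in enumerate(row):
            span = maxs[i] - mins[i]
            # Assumption: a constant factor (span == 0) is mapped to 0.0
            # to avoid division by zero; the text does not cover this case.
            scaled.append((value - mins[i]) / span if span else 0.0)
        normalized.append(scaled)
    return normalized


# Hypothetical readings: [yield, CO2 (ppm), temperature (deg C)]
readings = [
    [100.0, 400.0, 18.0],
    [150.0, 600.0, 22.0],
    [200.0, 500.0, 20.0],
]
normalized = min_max_normalize(readings)
print(normalized[0])  # [0.0, 0.0, 0.0]
print(normalized[1])  # [0.5, 1.0, 1.0]
```

When a separate test set is used, a common choice is to take the minimum and maximum from the training data only and reuse them for the test data; whether that was done here is not stated.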

3.2. Recurrent Neural Network

The normalized temporal data sequence is then fed into a recurrent neural network. As in [20], RNN has been widely applied for processing sequence data. It can both capture temporal dependencies between data samples in a sequence and extract the most representative features for that sequence to perform a variety of tasks (e.g., sequence classification, temporal data predictions, etc.). In our work, the RNN is firstly applied to extract representative features from input normalized temporal sequence data for further processing.

The traditional RNN suffers from both vanishing and exploding gradients [20], which limits its application, especially to long sequential data. Currently, the most popular way to overcome these limitations is to adopt an architecture incorporating long short-term memory (LSTM) units, known as LSTM–RNN [24]. As shown in Figure 1, the LSTM–RNN consists of multiple LSTM units. A series of arithmetic operations is associated with each LSTM unit, detailed as follows:

$$
\begin{aligned}
f_t &= \sigma\left(W_f\,[h_{t-1}, x_t] + b_f\right) \\
i_t &= \sigma\left(W_i\,[h_{t-1}, x_t] + b_i\right) \\
\tilde{c}_t &= \tanh\left(W_c\,[h_{t-1}, x_t] + b_c\right) \\
c_t &= f_t \odot c_{t-1} + i_t \odot \tilde{c}_t \\
o_t &= \sigma\left(W_o\,[h_{t-1}, x_t] + b_o\right) \\
h_t &= o_t \odot \tanh(c_t)
\end{aligned}
$$

where $x_t$, $o_t$ and $h_t$ represent the LSTM input, LSTM output and LSTM state associated with the data sample at time instance t. $c_t$ is the LSTM cell value representing encoded historical information obtained from previous data samples before t, while $f_t$, $i_t$ and $\tilde{c}_t$ are the forget gate, input gate and candidate cell value. $\sigma$ and $\tanh$ represent the sigmoid and tanh functions. The other parameters ($W_f$, $W_i$, $W_c$, $W_o$ and $b_f$, $b_i$, $b_c$, $b_o$) represent weights and biases.

Given the normalized input temporal sequence shown in Figure 1, representative features are extracted by the LSTM–RNN as its sequence of states, which is then fed into the next component, the temporal convolutional network (TCN), for further processing.
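To make the LSTM processing step more concrete, here is a minimal pure-Python sketch of one standard LSTM cell update applied along a sequence. It follows the commonly used LSTM formulation (forget, input and output gates plus a candidate cell value), which may differ in notation or detail from the variant used in this work. The helper `make_params` and its constant weights are hypothetical and serve only to make the example runnable.

```python
import math
from typing import Dict, List, Tuple

Vector = List[float]
Matrix = List[List[float]]
Params = Dict[str, Tuple[Matrix, Vector]]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def affine(weights: Matrix, bias: Vector, vec: Vector) -> Vector:
    """Return weights @ vec + bias for plain Python lists."""
    return [sum(w * v for w, v in zip(row, vec)) + b
            for row, b in zip(weights, bias)]


def lstm_step(x_t: Vector, h_prev: Vector, c_prev: Vector,
              params: Params) -> Tuple[Vector, Vector]:
    """One standard LSTM update; returns the new state h_t and cell value c_t."""
    z = h_prev + x_t  # list concatenation [h_{t-1}, x_t]
    f = [sigmoid(a) for a in affine(*params["forget"], z)]
    i = [sigmoid(a) for a in affine(*params["input"], z)]
    g = [math.tanh(a) for a in affine(*params["candidate"], z)]
    o = [sigmoid(a) for a in affine(*params["output"], z)]
    c_t = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c_prev, i, g)]
    h_t = [ok * math.tanh(ck) for ok, ck in zip(o, c_t)]
    return h_t, c_t


def run_lstm(sequence: List[Vector], hidden_size: int,
             params: Params) -> List[Vector]:
    """Feed a sequence through the cell and collect the state at every step."""
    h = [0.0] * hidden_size
    c = [0.0] * hidden_size
    states = []
    for x_t in sequence:
        h, c = lstm_step(x_t, h, c, params)
        states.append(h)
    return states


def make_params(hidden_size: int, input_size: int, value: float) -> Params:
    """Hypothetical helper: every weight equals `value`, every bias is 0."""
    width = hidden_size + input_size

    def block() -> Tuple[Matrix, Vector]:
        return ([[value] * width for _ in range(hidden_size)],
                [0.0] * hidden_size)

    return {name: block()
            for name in ("forget", "input", "candidate", "output")}


# One week of made-up, already normalized readings with three factors each.
week = [[0.2, 0.4, 0.6]] * 7
states = run_lstm(week, hidden_size=2, params=make_params(2, 3, 0.1))
print(len(states), len(states[0]))  # 7 2
```

The list of per-step states returned by `run_lstm` plays the role of the feature sequence that is passed on to the TCN component.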

3.3. Temporal Convolutional Network

The temporal convolutional network (TCN) component used in this work, as proposed in [25], applies a hierarchy of temporal convolutions across its input sequence, thus effectively extracting representative features at different temporal scales. As shown in Figure 1, the dilated TCN component consists of multiple residual blocks, and each residual block consists of multiple dilated causal convolution layers. Dilated causal temporal convolution operations are performed in the dilated convolution layers. In particular, the t-th output in the l-th layer of the j-th block (denoted as $S_t^{(j,l)}$) is calculated from the previous layer by the following operation:

$$S_t^{(j,l)} = f\left(W^{(1)} S_{t-s}^{(j,l-1)} + W^{(2)} S_t^{(j,l-1)} + b\right)$$

where $f$ represents the activation function (such as ReLU, as shown in Figure 1), $W^{(1)}$ and $W^{(2)}$ represent weights, b is the bias value and s is the dilation rate. During the training of the dilated convolution layers, weight normalization [26] can be performed on the layer weights to help speed up the convergence of training. Moreover, a certain percentage of the weights of the dilated convolution layers can be dropped out during training to improve generalization performance.

An additional 1D convolution operation is performed within each residual block to adjust the dimension of the residual block input to match that of the dilated causal convolution layer output, so that the two can be added together. The output of one residual block is fed as the input to the next block, and the final output is obtained from the last residual block. This final output of the TCN is then flattened and fed into a fully connected (FC) layer to produce the final yield prediction.
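The dilated causal convolution and the residual connection can be sketched in plain Python as below. This is a simplified illustration under stated assumptions: kernel size 2, left zero padding, and a ReLU after every layer. Weight normalization and dropout are omitted, and the function names and toy weights are invented for the example rather than taken from the original implementation.

```python
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]
Layer = Tuple[Matrix, Matrix, Vector, int]  # (w_past, w_now, bias, dilation)


def matvec(weights: Matrix, vec: Vector) -> Vector:
    return [sum(w * v for w, v in zip(row, vec)) for row in weights]


def relu(vec: Vector) -> Vector:
    return [max(0.0, v) for v in vec]


def dilated_causal_conv(seq: List[Vector], w_past: Matrix, w_now: Matrix,
                        bias: Vector, dilation: int) -> List[Vector]:
    """Kernel-size-2 dilated causal convolution followed by ReLU.

    Output t only sees inputs t and t - dilation; positions before the
    start of the sequence are treated as zeros (left zero padding).
    """
    zeros = [0.0] * len(seq[0])
    out = []
    for t, x_now in enumerate(seq):
        x_past = seq[t - dilation] if t - dilation >= 0 else zeros
        pre = [p + n + b for p, n, b in
               zip(matvec(w_past, x_past), matvec(w_now, x_now), bias)]
        out.append(relu(pre))
    return out


def residual_block(seq: List[Vector], layers: List[Layer],
                   w_skip: Matrix) -> List[Vector]:
    """Stack dilated causal layers, then add a 1x1-convolved copy of the input."""
    hidden = seq
    for w_past, w_now, bias, dilation in layers:
        hidden = dilated_causal_conv(hidden, w_past, w_now, bias, dilation)
    # The 1x1 convolution maps the block input to the layer output dimension.
    skip = [matvec(w_skip, x) for x in seq]
    return [[h + s for h, s in zip(h_t, s_t)]
            for h_t, s_t in zip(hidden, skip)]


# Toy single-channel sequence with all weights set to 1 and zero bias.
seq = [[1.0], [2.0], [3.0], [4.0]]
one = [[1.0]]
print(dilated_causal_conv(seq, one, one, [0.0], dilation=1))
# [[1.0], [3.0], [5.0], [7.0]]
print(residual_block(seq, [(one, one, [0.0], 1)], one))
# [[2.0], [5.0], [8.0], [11.0]]
```

Because each output depends only on the current and earlier inputs, changing the last element of `seq` leaves all earlier outputs unchanged, which is the causality property the TCN relies on.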

3.4. Fully Connected Layer

The output of the TCN part is flattened into a vector, which is then fed into a fully connected layer for the final yield prediction, as shown in Figure 1. In particular, the fully connected layer has one output with a ReLU activation function.
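A minimal sketch of this last step, assuming the TCN output is a list of per-time-step feature vectors, is given below. The function name and the toy weights are illustrative only.

```python
from typing import List


def fully_connected_relu(tcn_output: List[List[float]],
                         weights: List[float], bias: float) -> float:
    """Flatten a (time x channels) output and apply one ReLU output unit."""
    flat = [value for step in tcn_output for value in step]  # flatten
    pre_activation = sum(w * v for w, v in zip(weights, flat)) + bias
    return max(0.0, pre_activation)  # ReLU keeps the prediction non-negative


# Toy TCN output with two time steps and two channels.
prediction = fully_connected_relu([[1.0, 2.0], [3.0, 4.0]], [0.5] * 4, 0.0)
print(prediction)  # 5.0
```

Since the inputs are normalized to [0, 1] and yields cannot be negative, a ReLU on the single output is a natural fit for this regression target.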

In summary, the proposed work investigates the combination of two state-of-the-art deep neural networks for temporal sequence processing, LSTM–RNN and TCN, for greenhouse crop yield prediction. Given an input temporal sequence containing both historical yields and environmental parameters over a certain period, an LSTM–RNN is first applied to pre-process the original input and extract representative feature sequences, which are then further processed by a sequence of residual blocks in the TCN to generate the final features used for future yield prediction. Compared with the deep learning methodology for greenhouse crop yield prediction that exploits solely the LSTM–RNN, as in [5], our work adds a TCN layer on top of the LSTM–RNN layer to better exploit the LSTM–RNN output and extract more representative features for more accurate crop yield prediction. As validated by the experimental studies, the combination of RNN and TCN achieves better performance than using solely RNN [5] or TCN for greenhouse crop yield prediction.

4. Experimental Studies

The experimental studies of the proposed DNN based crop yield prediction approach are presented in this section.

4.1. Datasets Descriptions

Three datasets are collected from a tomato-growing site in Newcastle, UK, which contain recorded environmental parameters and crop yield information in different greenhouses during different time periods. The details of these datasets are described in the following Table 1:

Table 1.

Datasets descriptions.

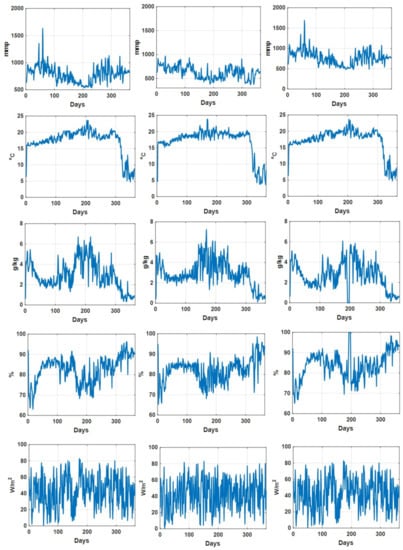

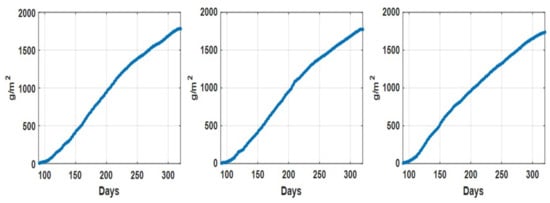

As an illustration, the daily recorded environmental parameters (CO₂ concentration, temperature, humidity deficit, relative humidity, and radiation) for all three datasets are shown in Figure 2. In addition, the descriptive statistics of the environmental parameters for the different datasets are summarized in Table 2. We can see that for Dataset 2, the descriptive statistics (min, max, median, and mean values) of the recorded CO₂ concentration are comparatively lower than those in the other two datasets, while the descriptive statistics of the other environmental parameters are quite consistent across the three datasets. Figure 3 shows the accumulated tomato yield recorded over a one-year period for the three datasets. We can see that the recorded accumulated fruit yields follow similar patterns (because they come from one grower at one particular site).

Figure 2.

Daily recorded CO₂ concentration (ppm), temperature (°C), humidity deficit (g/kg), relative humidity (percentage) and radiation (W/m²) associated with dataset 1 (left column), dataset 2 (middle column) and dataset 3 (right column).

Table 2.

Descriptive statistics of greenhouse environmental parameters associated with different datasets.

Figure 3.

Accumulated tomato fruit yield (g/m²) recorded for dataset 1 (left), dataset 2 (middle) and dataset 3 (right).

4.2. Experimental Design

Based on the temporal recordings of environment and yield information for every dataset, the sliding window method (with step 1) is applied to generate data samples, each containing the environmental parameters and yield information recorded during one week as well as the associated future crop yield one week later. These data samples are used to train and test a network that predicts the crop yield after one week given the environmental and yield information collected during the preceding week. The generated data samples are split into training and testing datasets in a 70%/30% proportion. The training dataset is used to train the network, which is then evaluated on the held-out testing dataset. The Adam optimizer [27] is applied for network training to minimize the mean square error (MSE) loss defined below:

$$\mathrm{MSE} = \frac{1}{N_{\mathrm{train}}} \sum_{i=1}^{N_{\mathrm{train}}} \left(y_i - \hat{y}_i\right)^2$$

where $N_{\mathrm{train}}$ is the training sample size, while $y_i$ and $\hat{y}_i$ represent the ground truth and predicted yield values in the training dataset, respectively.

Furthermore, we use the root mean square error (RMSE), defined below, to evaluate the network performance on a testing dataset:

$$\mathrm{RMSE} = \sqrt{\frac{1}{N_{\mathrm{test}}} \sum_{i=1}^{N_{\mathrm{test}}} \left(y_i - \hat{y}_i\right)^2}$$

where $N_{\mathrm{test}}$ is the sample size of the test dataset, while $y_i$ and $\hat{y}_i$ represent the ground truth and predicted values of the test samples, respectively.
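The sample generation, the 70/30 split and the RMSE metric can be sketched as follows. Several details are assumptions made for the example and are not stated in the text: records are taken to be daily (so one week is 7 steps), the target is the yield 7 steps after the end of the window, and the split is chronological rather than random. All function names are illustrative.

```python
import math
from typing import List, Tuple

Record = List[float]                 # one time step: [yield, other factors...]
Sample = Tuple[List[Record], float]  # (one-week input window, future yield)


def make_samples(records: List[Record], window: int = 7, horizon: int = 7,
                 yield_index: int = 0) -> List[Sample]:
    """Slide a window with step 1 and pair it with the yield `horizon` steps later."""
    samples = []
    last_start = len(records) - window - horizon
    for start in range(last_start + 1):
        inputs = records[start:start + window]
        target = records[start + window + horizon - 1][yield_index]
        samples.append((inputs, target))
    return samples


def split_samples(samples: List[Sample],
                  train_percent: int = 70) -> Tuple[List[Sample], List[Sample]]:
    """Assumption: chronological split, first 70% for training, rest for testing."""
    cut = len(samples) * train_percent // 100
    return samples[:cut], samples[cut:]


def rmse(y_true: List[float], y_pred: List[float]) -> float:
    """Root mean square error between ground truth and predictions."""
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared) / len(squared))


# Thirty made-up daily records: [accumulated yield, temperature].
records = [[float(day), 20.0] for day in range(30)]
samples = make_samples(records)
train, test = split_samples(samples)
print(len(samples), len(train), len(test))  # 17 11 6
print(rmse([0.0, 0.0], [3.0, 3.0]))         # 3.0
```

The MSE training loss is simply the square of this RMSE computed over the training samples, so the same helper can be reused when monitoring training.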

4.3. Network Performance

To evaluate the performance of the proposed deep neural network for greenhouse crop yield prediction, we first need to identify the optimal network architecture. As mentioned in the previous section, our DNN is composed of two components: LSTM–RNN and TCN. The LSTM–RNN component consists of multiple LSTM units, while the TCN component contains residual blocks, each containing three dilated convolutional layers. Each convolutional layer contains multiple convolutional filters with kernel size 2 and dilation rate 1, as in [25]. We first evaluated network architectures with different numbers of LSTM units and convolutional filters. Specifically, each network architecture is trained and tested multiple times on the training/testing split of the data samples of every dataset, and the mean and standard deviation of the resulting RMSEs are calculated. The means and standard deviations of the RMSEs for the different network architectures on all three datasets, as well as their averages (calculated as the average of the RMSE means and standard deviations obtained from the three datasets), are summarized in Table 3. From Table 3, we can see that, overall, adding more LSTM units yields smaller RMSEs (compare the average RMSEs across the LSTM unit numbers). However, there is no obvious relationship between the network performance and the number of filters. The optimal performance is obtained with 200 LSTM units and 250 filters, which give the smallest average mean RMSE (bolded in the table).

Table 3.

Obtained mean and standard deviation of RMSEs with different LSTM unit numbers and convolutional filter numbers for three datasets.
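The aggregation used for this kind of architecture comparison can be sketched with the Python standard library as below. The RMSE numbers are made up for illustration and are not results from this study. Using the population standard deviation (`pstdev`) is also an assumption, since the text does not say which estimator was used.

```python
from statistics import mean, pstdev
from typing import Dict, List, Tuple

Runs = Dict[str, List[float]]  # dataset name -> RMSEs from repeated runs


def summarize_runs(rmse_runs: Runs) -> Dict[str, Tuple[float, float]]:
    """Return (mean, std) of the RMSEs per dataset plus their average."""
    summary = {name: (mean(values), pstdev(values))
               for name, values in rmse_runs.items()}
    # Average row: mean of the per-dataset means and of the per-dataset stds.
    avg_mean = mean([m for m, _ in summary.values()])
    avg_std = mean([s for _, s in summary.values()])
    summary["average"] = (avg_mean, avg_std)
    return summary


def best_architecture(results: Dict[Tuple[int, int], Runs]) -> Tuple[int, int]:
    """Pick the (LSTM units, filters) pair with the smallest average mean RMSE."""
    return min(results,
               key=lambda cfg: summarize_runs(results[cfg])["average"][0])


# Made-up RMSEs for two hypothetical architectures, NOT values from Table 3.
results = {
    (100, 250): {"dataset 1": [12.0, 14.0], "dataset 2": [11.0, 13.0]},
    (200, 250): {"dataset 1": [10.0, 12.0], "dataset 2": [9.0, 11.0]},
}
print(best_architecture(results))  # (200, 250)
```

Repeating each train/test run several times and reporting both mean and standard deviation separates a genuinely better architecture from one that benefited from a favourable random initialization.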

Moreover, we have also tested whether adding more LSTM layers or residual blocks can further improve the performance, with the results summarized in Table 4. From Table 4, we can see that adding more LSTM layers to the LSTM–RNN or more residual blocks to the TCN deteriorates the performance in the majority of cases (with only a very marginal reduction in mean RMSE for Dataset 2 when adding one LSTM layer). Adding more LSTM layers or residual blocks therefore brings no extra benefit, which we attribute to over-fitting.

Table 4.

Mean and standard deviation of RMSEs for different LSTM layers and residual block numbers on different datasets.

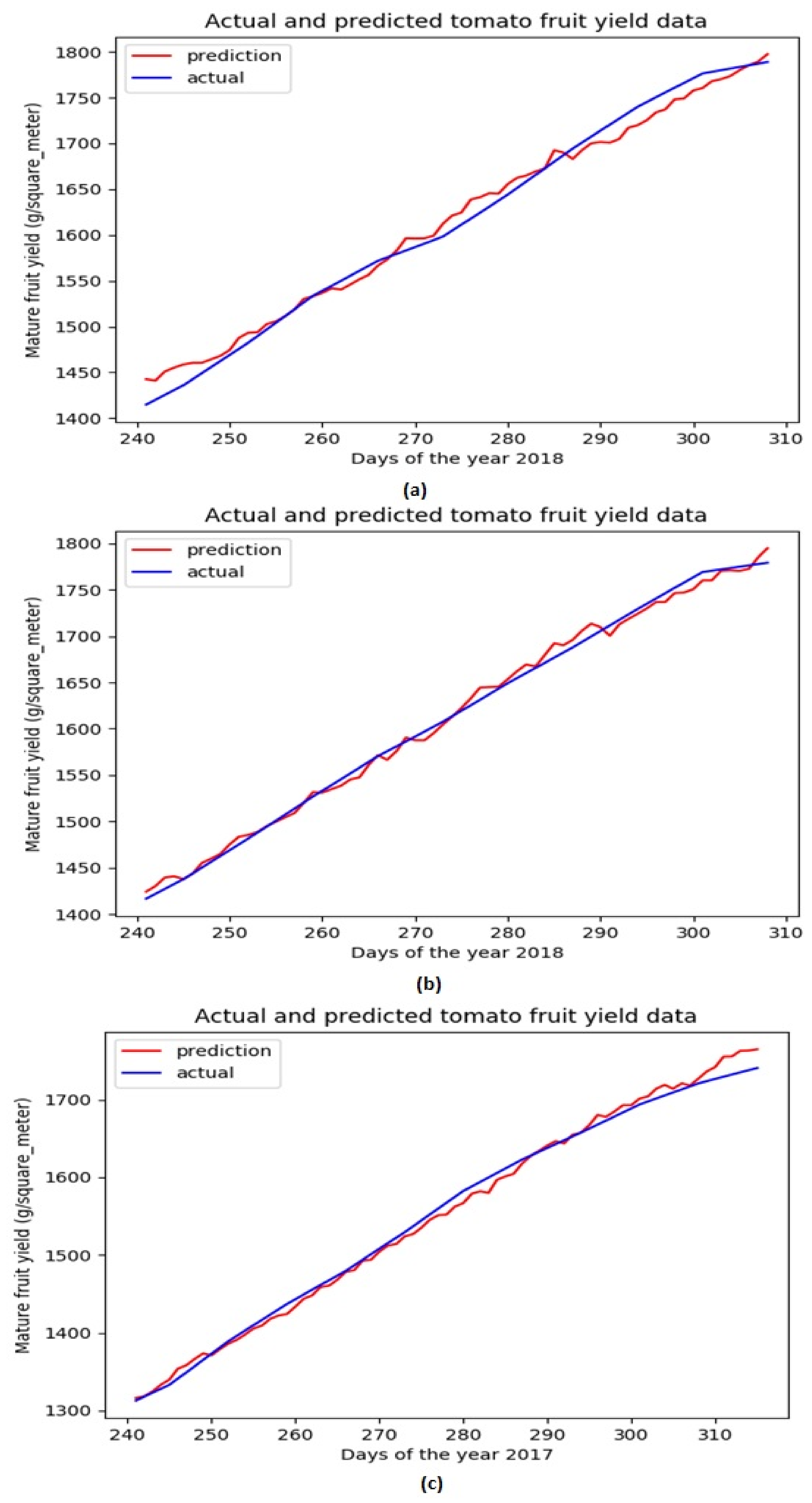

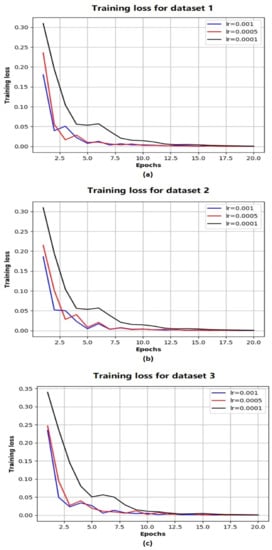

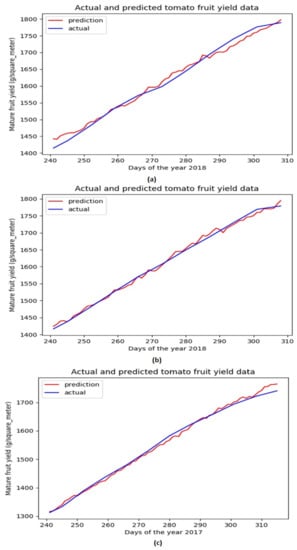

Based on the above evaluations, the network architecture used in this study has one LSTM layer and one TCN block, with 250 filters in the TCN block and 200 LSTM units. Figure 4 shows the evolution of the MSE loss with respect to the training epoch when training our network model with this architecture on the three training datasets. As the epoch increases, the MSE losses converge towards 0 in all scenarios, and convergence is fastest with the largest learning rate. Figure 5 compares the ground truth accumulated yields with those predicted by our trained network during different time periods. The predicted tomato fruit yield values almost coincide with the ground truth values in all three scenarios.

Figure 4.

The evolution of MSE losses over training epochs for Dataset 1 (a), Dataset 2 (b) and Dataset 3 (c).

Figure 5.

Ground truth tomato fruit yield values and predicted ones for the testing datasets associated with Dataset 1 (a), Dataset 2 (b) and Dataset 3 (c).

Moreover, we have evaluated the importance of each factor for future yield prediction. In particular, we excluded one factor at a time from the network input and then trained and tested the network model on the three datasets multiple times. The resulting averaged RMSE mean and standard deviation values are summarized in Table 5. The error obtained by excluding the historical yield information is clearly much larger than the errors obtained by excluding any other factor. Based on the results in Table 5, we can conclude that historical yield information plays the most important role in future yield prediction.

Table 5.

Statistical metrics (mean and standard deviation) of RMSEs (g/m²) obtained by excluding certain input factors.
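The leave-one-factor-out procedure behind this table can be sketched as follows. The factor identifiers, the function names and `stand_in_scorer` are hypothetical. In a real run the scorer would train the network on the reduced inputs and return its test RMSE, whereas the placeholder here only reports how many factors remain.

```python
from typing import Callable, Dict, List

Sequence = List[List[float]]  # sequence[t][i]: i-th factor at time step t

# Factor order follows the input description; the identifiers are illustrative.
FACTORS = ["yield", "co2", "temperature", "humidity_deficit",
           "relative_humidity", "radiation"]


def drop_factor(sequence: Sequence, index: int) -> Sequence:
    """Return a copy of the sequence without the factor at `index`."""
    return [row[:index] + row[index + 1:] for row in sequence]


def ablation_study(sequence: Sequence,
                   train_and_score: Callable[[Sequence], float],
                   factors: List[str] = FACTORS) -> Dict[str, float]:
    """Exclude one factor at a time and record the score of each reduced model."""
    scores = {}
    for index, name in enumerate(factors):
        scores[name] = train_and_score(drop_factor(sequence, index))
    return scores


def stand_in_scorer(reduced: Sequence) -> float:
    """Placeholder for training and testing; returns the remaining factor count."""
    return float(len(reduced[0]))


# Two made-up time steps with all six factors.
sequence = [[100.0, 400.0, 18.0, 3.0, 80.0, 150.0],
            [120.0, 420.0, 19.0, 3.5, 78.0, 160.0]]
scores = ablation_study(sequence, stand_in_scorer)
# With real RMSEs, the factor whose removal hurts most is the most important.
most_important = max(scores, key=scores.get)
```

With real RMSE scores, ranking the factors by the error obtained after their removal gives the importance ordering discussed above.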

4.4. Comparison Studies

We have compared our DNN method with other methods, including both traditional machine learning methods (linear regression (LR), random forest (RF), support vector regression (SVR), decision tree (DT), gradient boosting regression (GBR), multi-layer artificial neural network (MLANN)) and other deep learning methods (single/multiple layer(s) LSTM–RNN [5], LSTM–RNN with attention mechanism [23] and TCN with single/multiple residual blocks). The comparison results are summarized in Table 6. From Table 6, we can see that the majority of deep learning-based models (multiple-layer LSTM–RNN, LSTM–RNN with attention, TCN with multiple blocks, and ours) outperform the classical machine learning models, with smaller mean RMSEs for all three datasets, which shows the advantage of adopting deep learning for greenhouse crop yield prediction. Furthermore, among the deep learning models, the model proposed in this work achieves the best performance, with the smallest mean RMSEs for all three datasets.

Table 6.

Statistical metrics (mean and standard deviation) of RMSEs (g/m²) obtained by different methodologies for three datasets.

5. Conclusions

In this work, we have proposed a new methodology for greenhouse crop yield prediction by integrating two state-of-the-art DNN architectures used for temporal sequence processing: RNN and TCN. Given an input temporal sequence containing historical yield and environmental information, an LSTM–RNN is first applied to pre-process the original inputs and extract representative features, which are then further processed by a sequence of residual blocks in the TCN module. The features finally extracted by the TCN are then fed into a fully connected network for future crop yield prediction. Comprehensive evaluations through statistical analysis of the RMSEs obtained on multiple datasets have shown that:

- (i) The proposed approach can be applied for accurate greenhouse crop yield prediction, based on both historical environmental and yield information.

- (ii) The proposed approach can achieve much more accurate prediction than other counterparts of both traditional machine learning and deep learning methods.

Furthermore, it is also shown in the experimental study that the historical yield information is the most important factor for accurately predicting future crop yields.

With respect to future work, to further validate the general effectiveness of the proposed model, we will evaluate it on more datasets collected from different growers at different sites. Moreover, we will also test the performance of the model on yield prediction for other popular greenhouse crops. More advanced network architectures will also be considered; for example, the LSTM encoder–decoder component in [23] could be incorporated into the current model. Finally, we will also investigate combining the developed machine learning-based model with a biophysical model to achieve more accurate and robust crop yield prediction within a multi-model framework.

Author Contributions

Conceptualization, L.G. and M.Y.; methodology, L.G.; validation, L.G. and M.Y.; formal analysis, L.G. and M.Y.; writing—original draft preparation, L.G.; writing—review and editing, M.Y.; visualization, L.G. and M.Y.; supervision, S.J., V.C. and S.P. All authors have read and agreed to the published version of the manuscript.

Funding

This research was supported as part of SMARTGREEN, an Interreg project supported by the North Sea Programme of the European Regional Development Fund of the European Union.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

Restrictions apply to the availability of these data. The data were obtained from a third-party collaborator of the SMARTGREEN project and are available with permission.

Acknowledgments

This research was supported as part of SMARTGREEN, an Interreg project supported by the North Sea Programme of the European Regional Development Fund of the European Union.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Vanthoor, B.; Stanghellini, C.; Van Henten, E.; De Visser, P. A methodology for model-based greenhouse design: Part 1, description and validation of a tomato yield model. Biosyst. Eng. 2011, 110, 363–377. [Google Scholar] [CrossRef]

- Ponce, P.; Molina, A.; Cepeda, P.; Lugo, E.; MacCleery, B. Greenhouse Design and Control; CRC Press: Boca Raton, FL, USA, 2014. [Google Scholar]

- Hoogenboom, G. Contribution of agrometeorology to the simulation of crop production and its applications. Agric. For. Meteorol. 2000, 103, 137–157. [Google Scholar] [CrossRef]

- Vanthoor, B.; De Visser, P.; Stanghellini, C.; Van Henten, E. A methodology for model-based greenhouse design: Part 2, description and validation of a tomato yield model. Biosyst. Eng. 2011, 110, 378–395. [Google Scholar] [CrossRef]

- Alhnait, B.; Pearson, S.; Leontidis, G.; Kollias, S. Using deep learning to predict plant growth and yield in greenhouse environments. arXiv 2019, arXiv:1907.00624. [Google Scholar]

- Jones, J.; Dayan, E.; Allen, L.; VanKeulen, H.; Challa, H. A dynamic tomato growth and yield model (tomgro). Trans. ASAE 1998, 34, 663–672. [Google Scholar] [CrossRef]

- Heuvelink, E. Evaluation of a dynamic simulation model for tomato crop growth and development. Ann. Bot. 1999, 83, 413–422. [Google Scholar] [CrossRef] [Green Version]

- Lin, D.; Wei, R.; Xu, L. An integrated yield prediction model for greenhouse tomato. Agronomy 2019, 9, 873.

- Seginer, I.; Gary, C.; Tchamitchian, M. Optimal temperature regimes for a greenhouse crop with a carbohydrate pool: A modelling study. Sci. Hortic. 1994, 60, 55–80.

- Kuijpers, W.; Molengraft, M.; Mourik, S.; Ooster, A.; Hemming, S.; Henten, E. Model selection with a common structure: Tomato crop growth models. Biosyst. Eng. 2019, 187, 247–257.

- Ni, J.; Mao, H. Dynamic simulation of leaf area and dry matter production of greenhouse cucumber under different electrical conductivity. Trans. Chin. Soc. Agric. Eng. 2011, 27, 105–109.

- Ni, J.; Liu, Y.; Mao, H.; Zhang, X. Effects of different fruit number and distance between sink and source on dry matter partitioning of greenhouse tomato. J. Drain. Irrig. Mach. Eng. 2019, 47, 346–351.

- Vazquez-Cruz, M.; Guzman-Cruz, R.; Lopez-Cruz, I.; Cornejo-Perez, O.; Torres-Pacheco, I.; Guevara-Gonzalez, R. Global sensitivity analysis by means of EFAST and Sobol’ methods and calibration of reduced state-variable TOMGRO model using genetic algorithms. Comput. Electron. Agric. 2014, 100, 1–12.

- Sim, H.; Kim, D.; Ahn, M.; Ahn, S.; Kim, S. Prediction of strawberry growth and fruit yield based on environmental and growth data in a greenhouse for soil cultivation with applied autonomous facilities. Hortic. Sci. Technol. 2020, 38, 840–849.

- Hassanzadeh, A.; Aardt, J.; Murphy, S.; Pethybridge, S. Yield modeling of snap bean based on hyperspectral sensing: A greenhouse study. J. Appl. Remote Sens. 2020, 14, 024519.

- Ehret, D.; Hill, B.; Helmer, T.; Edwards, D. Neural network modeling of greenhouse tomato yield, growth and water use from automated crop monitoring data. Comput. Electron. Agric. 2011, 79, 82–89.

- Gholipoor, M.; Nadali, F. Fruit yield prediction of pepper using artificial neural network. Sci. Hortic. 2019, 250, 249–253.

- Qaddoum, K.; Hines, E.; Iliescu, D. Yield prediction for tomato greenhouse using EFUNN. ISRN Artif. Intell. 2013, 2013, 430986.

- Salazar, R.; López, I.; Rojano, A.; Schmidt, U.; Dannehl, D. Tomato yield prediction in a semi-closed greenhouse. In Proceedings of the International Horticultural Congress on Horticulture: Sustaining Lives, Livelihoods and Landscapes (IHC2014): International Symposium on Innovation and New Technologies in Protected Cropping, Brisbane, Australia, 18–22 August 2014.

- Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, MA, USA, 2016.

- Elavarasan, D.; Vincent, P. Crop yield prediction using deep reinforcement learning model for sustainable agrarian application. IEEE Access 2020, 8, 86886–86901.

- Sun, J.; Di, L.; Sun, Z.; Shen, Y.; Lai, Z. County-level soybean yield prediction using deep CNN-LSTM model. Sensors 2019, 19, 4363.

- Alhnaity, B.; Kollias, S.; Leontidis, G.; Shouyong, J.; Schamp, B.; Pearson, S. An autoencoder wavelet based deep neural network with attention mechanism for multi-step prediction of plant growth. Inf. Sci. 2021, 560, 35–50.

- Sherstinsky, A. Fundamentals of recurrent neural network (RNN) and long short-term memory (LSTM) network. Phys. D Nonlinear Phenom. 2020, 404, 132306.

- Lea, C.; Flynn, M.; Vidal, R.; Reiter, A. Temporal convolutional networks for action segmentation and detection. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017.

- Salimans, T.; Kingma, D. Weight normalization: A simple reparameterization to accelerate training of deep neural networks. In Proceedings of the 30th International Conference on Neural Information Processing Systems, Barcelona, Spain, 4–9 December 2016.

- Kingma, D.; Ba, J. Adam: A method for stochastic optimization. In Proceedings of the 3rd International Conference for Learning Representations, San Diego, CA, USA, 7–9 May 2015.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).