1. Introduction

The rapidly growing number of IoT devices is paving the way for peer-to-peer (P2P) architectures to dominate IoT platforms, avoiding the connectivity and latency issues usually associated with centralized cloud service architectures [1]. Although the cloud model is easy to manage and economical to scale, its requirement for uninterrupted internet connectivity is too costly and burdensome for many IoT devices. In contrast, P2P architecture supports emerging IoT applications such as proximity sharing, where colocated devices cooperate in real time to complete a task or share a resource. In this case, however, devices (peers) need to discover each other, trust each other, and then establish a connection [2].

To illustrate the important role of trustworthy communication between P2P IoT devices, consider a smart home where IoT devices provide functions that make users’ daily routines more convenient. A smart door lock is a smart home IoT device that has seen snowballing adoption in recent years. One example is a lock that communicates with another device that includes an identity verification mechanism, such as a mobile phone. The smart lock identifies a user as the homeowner and unlocks the door automatically as they approach. When the homeowner leaves, the smart lock automatically locks the door behind them. This is an idealized case where both devices are trustworthy and the user has immutable control over them. Problems arise if a malicious actor takes control of one or more of these devices. This could occur through any of the vulnerabilities common to IoT devices, such as poor configuration or default passwords. For instance, if a malicious actor were to compromise the user identification mechanism, the smart lock could give them control over who enters or leaves the home, enabling intruders to access the house and lock residents out of it [3]. In this context, the user identity is the resource, the smartphone is the resource provider, and the smart lock is the recipient. After each locking or unlocking event, the smart lock sends a confirmation message asking the user whether they are aware of the event. Feedback about the transaction is sourced from multiple channels (e.g., phone and email), which limits the impact of bad transactions.

Peers in a P2P network vary greatly in their behavior, from newcomers to pretrusted, good and malicious peers. Newcomers are peers who have just joined the network. Their behaviors are revealed gradually once they start communicating with other peers. Pretrusted peers are usually assigned by the founders of the network; thus, they always provide trusted resources and honest feedback on the quality of the resources they receive. Good peers normally provide good resources unless fooled by malicious peers, and they always give honest feedback [4]. However, malicious peers exploit the vulnerability of P2P networks to distribute bad resources [5,6] and dishonest feedback [7]. Malicious peers are usually further classified into four categories. First, pure malicious peers provide bad resources and dishonest feedback on other peers [8,9]. Second, camouflaged malicious (disguised malicious) peers are inconsistent in their behavior. They normally provide bad resources, but they also offer good resources to enhance their rating and delude other peers [10]. In addition, they always give negative feedback on other peers [11]. Third, feedback-skewing peers (malicious spies) provide good resources but lie in their feedback on other peers [12]. Fourth, malignant providers provide bad resources to other peers; however, they do not lie in their feedback. Malicious peers work individually or assemble in groups [13,14,15]. Accordingly, malicious behavior can be categorized according to two strategies: isolated and collective. Isolated malicious peers act independently of each other, while collective malicious peers cooperate in groups to harm other peers. Collective peers, however, never harm members of their own group with bad resources or negative feedback.
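The peer models described above can be summarized as a small lookup table. The following is a minimal sketch for illustration only; the class name, field names, and dictionary keys are hypothetical and do not come from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PeerModel:
    """Hypothetical summary of one behavioral model from the taxonomy above."""
    name: str
    mostly_good_resources: bool  # does the peer normally provide good resources?
    honest_feedback: bool        # does the peer give honest feedback on others?
    inconsistent: bool = False   # True for disguised peers, who mix good and bad resources


# One entry per model named in the text (newcomers and pretrusted peers omitted).
PEER_MODELS = {
    "good": PeerModel("good", True, True),
    "pure_malicious": PeerModel("pure_malicious", False, False),
    "disguised_malicious": PeerModel("disguised_malicious", False, False, inconsistent=True),
    "feedback_skewing": PeerModel("feedback_skewing", True, False),
    "malignant_provider": PeerModel("malignant_provider", False, True),
}


def is_malicious(model: PeerModel) -> bool:
    """A peer counts as malicious if it fails on resources, on feedback, or on both."""
    return not (model.mostly_good_resources and model.honest_feedback)


if __name__ == "__main__":
    for model in PEER_MODELS.values():
        print(f"{model.name:22s} malicious={is_malicious(model)}")
```

The isolated and collective strategies are orthogonal to this table: any of the four malicious models can act alone or as part of a group.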

Trust management is about developing strategies for establishing dependable interactions between peers and predicting the likelihood of a peer behaving honestly. It focuses on how to create a trustworthy system from trustworthy distributed components [16,17], which is paramount, especially for newly emerging forms of distributed systems [16], such as cloud computing [18,19], fog computing [20] and IoT [1,6]. Trust management systems monitor peers’ conduct and allow them to give feedback on their previous transactions; thus, good and bad peers can be identified. This binary classification of peers is the approach followed by most previous studies [12,21,22,23], disregarding the fact that peers vary greatly in their behavior between the two extremes. Additionally, existing trust management systems have considered neither predicting the specific peer model nor identifying other members of a peer’s malicious group. To bridge this gap, this paper proposes Trutect, a trust management system that uses the power of neural networks to detect malicious peers and identify their specific model and other group members, if any. The proposed system is thoroughly evaluated and benchmarked against rival trust management systems, including EigenTrust [24] and InterTrust [25].

Among the main contributions of this paper are the following:

A trust management system that exploits the power of neural networks to identify the specific peer model and its group members.

A large dataset of behavioral models in P2P networks that can be used to advance research in the field.

A well-controlled evaluation framework to study the performance of a trust management system.

2. Literature Review

Owing to their rapid adoption, IoT technologies are becoming an attractive target for cybercriminals, who exploit the lack of security functions and capabilities to compromise IoT devices. Therefore, a plethora of research is emerging to elucidate the security attacks and the different security and trust management mechanisms involved in IoT applications [26,27].

In identifying malicious peers, trust and reputation management systems follow different approaches [25], such as trust vectors [24], subjective logic [25] and machine learning [8]. EigenTrust [24] is one of the most commonly used trust management algorithms based on a normalized trust vector. Despite its widespread use, EigenTrust suffers from drawbacks, mainly related to the ability of a peer to easily manipulate the ratings it provides on other peers. Additionally, its heavy reliance on pretrusted peers makes them focal points of failure. In [28], an enhancement of the EigenTrust algorithm that ensures a peer cannot manipulate its recommendation is proposed; hence, this approach is called nonmanipulatable EigenTrust. In [29], another reputation scheme based on EigenTrust was introduced, in which the requester selects a provider peer using the roulette wheel selection algorithm to reduce the reliance on pretrusted peers. For the same reason, [30] introduced the concept of honest peers, i.e., peers with a high reputation value who can be targeted by new peers instead of pretrusted peers.
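To make the normalized trust vector idea concrete, the following is a minimal sketch of the basic, centralized EigenTrust iteration as it is commonly described: local ratings are clipped at zero and row-normalized, and the global trust vector is obtained by repeatedly mixing propagated trust with the pretrusted distribution. The damping weight `alpha = 0.15`, the tolerance, and the example ratings are illustrative assumptions, not values taken from this paper.

```python
def normalize_local_trust(s, p):
    """Row-normalize clipped local ratings; peers with no positive ratings fall back to p."""
    c = []
    for row in s:
        clipped = [max(v, 0.0) for v in row]
        total = sum(clipped)
        c.append([v / total for v in clipped] if total > 0 else list(p))
    return c


def eigentrust(s, pretrusted, alpha=0.15, tol=1e-9, max_iter=1000):
    """Return a global trust vector for local rating matrix s (s[i][j]: i's rating of j)."""
    n = len(s)
    # Pretrusted distribution: uniform over the pretrusted peer indices.
    p = [1.0 / len(pretrusted) if i in pretrusted else 0.0 for i in range(n)]
    c = normalize_local_trust(s, p)
    t = list(p)
    for _ in range(max_iter):
        # t_new[j] = (1 - alpha) * sum_i c[i][j] * t[i] + alpha * p[j]
        t_new = [
            (1 - alpha) * sum(c[i][j] * t[i] for i in range(n)) + alpha * p[j]
            for j in range(n)
        ]
        if sum(abs(a - b) for a, b in zip(t_new, t)) < tol:
            return t_new
        t = t_new
    return t


if __name__ == "__main__":
    # Hypothetical ratings: peer 2 never receives a positive rating from anyone.
    ratings = [
        [0, 4, 0],
        [3, 0, 0],
        [5, 2, 0],
    ]
    print(eigentrust(ratings, pretrusted={0}))
```

The sketch also shows why pretrusted peers are focal points: both the starting vector and the `alpha * p[j]` term depend entirely on them.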

Among the early trust management systems is the trust network algorithm using subjective logic (TNA-SL) [31]. Trust in TNA-SL is calculated as the opinion of a peer on another peer based on four components: belief, disbelief, uncertainty and a base rate. Opinions provide accurate trust information about peers; however, the main drawback of TNA-SL is its exponential running time complexity due to the lengthy matrix chain multiplication process for transitive trust calculations. Thus, InterTrust [25] was proposed to overcome this issue. InterTrust maintains the advantages of the original TNA-SL while being scalable and lightweight with low computational overhead by using more scalable data structures and reducing the need for matrix chain multiplications.
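As a rough illustration of the four-component opinion described above, the sketch below builds an opinion from counts of positive and negative interactions. The evidence mapping (with a prior weight of 2) and the expected-value formula, belief plus base rate times uncertainty, are the forms commonly used in the subjective logic literature; they are assumed here and may differ from the exact formulation used in TNA-SL or InterTrust. All names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Opinion:
    belief: float
    disbelief: float
    uncertainty: float
    base_rate: float = 0.5  # assumed default prior

    def expected_trust(self) -> float:
        """Probability expectation: belief plus the base-rate share of uncertainty."""
        return self.belief + self.base_rate * self.uncertainty


def opinion_from_evidence(positive: int, negative: int, base_rate: float = 0.5) -> Opinion:
    """Map interaction counts to an opinion; belief, disbelief and uncertainty sum to 1."""
    total = positive + negative + 2  # 2 is the assumed non-informative prior weight
    return Opinion(positive / total, negative / total, 2 / total, base_rate)


if __name__ == "__main__":
    op = opinion_from_evidence(positive=8, negative=2)
    print(op, op.expected_trust())
```

With no evidence at all, uncertainty is 1 and the expected trust equals the base rate, which is one way such models handle newcomers.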

Machine learning can help with the prediction and classification of peers’ trustworthiness in either a static or a dynamic manner [32]. In static approaches, the peers’ extracted features are selected offline to produce one final model, thereby decreasing the computational overhead. In contrast, dynamic approaches extract peers’ features online while the application is running, which incrementally updates the model based on past data and offers the ability to detect malicious peers based on new behavioral information. However, these advantages usually result in high computational overheads.

A static generic machine learning-based trust framework for open systems using linear discriminant analysis (LDA) [33] and decision trees (DTs) [34] was presented in [35]. In this approach, an agent’s past behavior is not considered when determining whether to interact with the agent; instead, the false positive rate, false negative rate, and overall falseness are used. A trust management system for the IoT based on machine learning and the elastic slide window technique was proposed in [6]. The main goal was to identify on-off attackers based on static analysis.

Another static approach using machine learning for the problem of trust prediction in social networks was presented in [7]. The study uses recommender systems to predict the trustworthiness of each peer. In [36], a trust-based recommendation system that assesses the trustworthiness of a friend recommendation while preserving users’ privacy in an online social network is introduced. Friends’ features were statically analyzed, and recommendations about peer trustworthiness were derived using the K-nearest neighbors algorithm (KNN).

The work in [37] enables the prediction results to be integrated with an existing trust model. It was applied to an online web service that helps customers book hotels. Features related to the application domain were selected and statically analyzed by several supervised algorithms, such as experience-based Bayesian, regression, and decision tree algorithms. A reputation system for P2P networks using a support vector machine (SVM) algorithm was built in [22]. The system dynamically collects information on the number of good transactions in each single time slot. The system outperformed the other system even more at very high imbalance ratios because the overall accuracy increased, and the system tended to classify almost all nodes as malicious.

All previously mentioned works are static and focus on the binary classification of peers’ trustworthiness, which means that a peer is either good or bad. In contrast, D-Trust [38] presents a dynamic multilevel social recommendation approach. D-Trust creates a trust-user-item network topology based on dynamic user rating scores. This topology uses a deep neural network, focuses on positive links and eliminates negative links. Another dynamic neural network-based multilevel reputation model for distributed systems was proposed in [15]. It dynamically analyzes global reputation values to find the peer with the highest reputation. In [39], a deep neural network was used to build trustworthy communications in vehicular ad hoc networks (VANETs). The trust model evaluates neighbors’ behavior while forwarding routing information using a software-defined trust-based dueling deep reinforcement learning approach. Additionally, a multilevel trust management framework was proposed in [14]. This approach uses dynamic analysis with an SVM to classify interactions as trustworthy, neutrally trusted, or untrustworthy.

Based on the above surveyed work, trust and reputation management systems usually follow a binary classification approach to identify a peer as either good or bad. Few studies [14,15,38] have considered the multiclassification of peers. However, these works have considered neither predicting the specific peer model nor identifying other members of a malicious group. Therefore, this paper proposes Trutect to bridge these gaps.

3. System Design

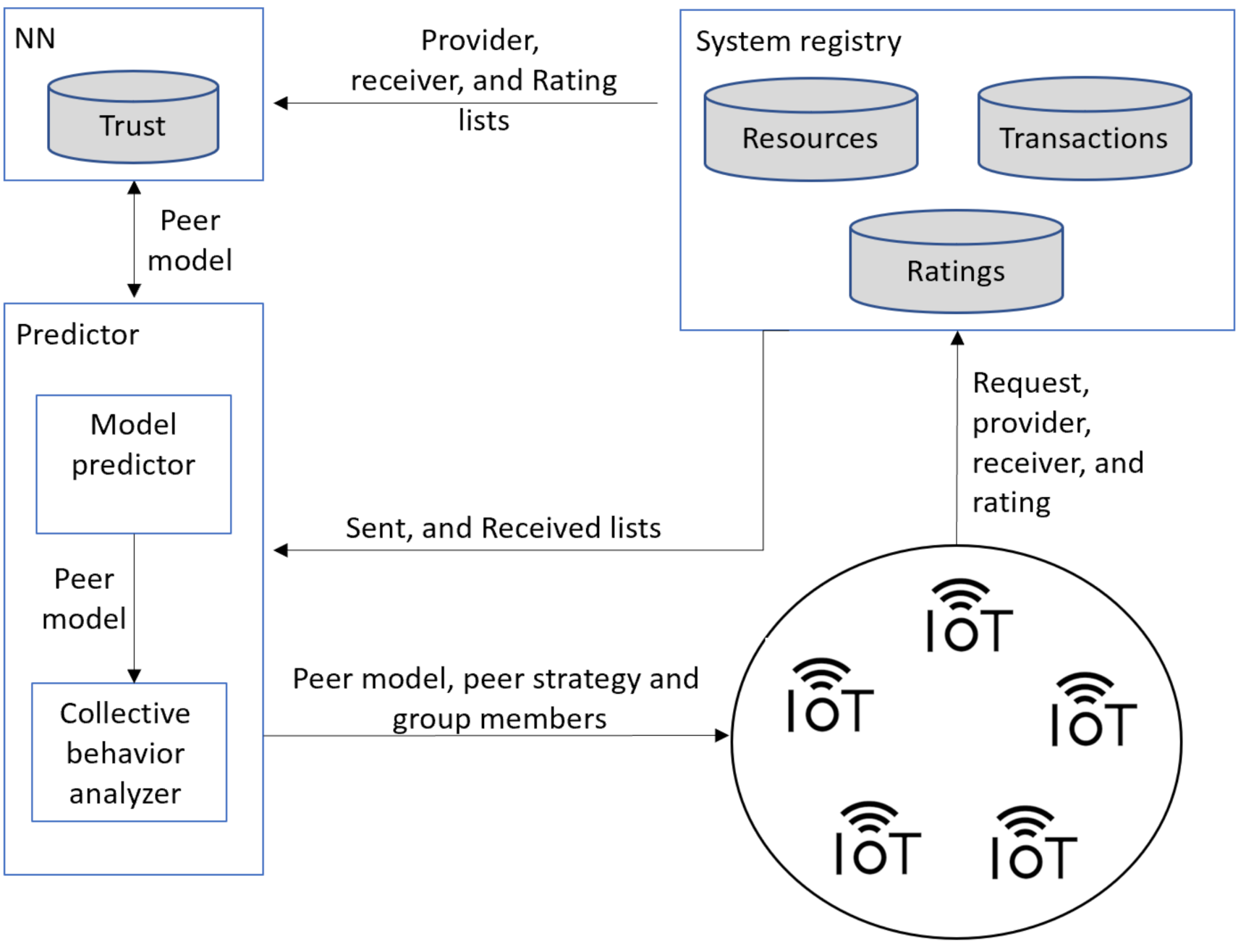

As shown in Figure 1, the Trutect trust management system consists of three main components that can run on the cloud or in a fog gateway device. Alternatively, each component can be placed in a separate fog gateway device. In this case, however, these devices are best placed in close vicinity to each other to reduce communication delays and possible communication problems.

Registry manager: The system registry manager is a centralized component responsible for administering and updating three lists: the resource, transaction, and rating lists. The resource list maintains the resources in the system and information on their owners. The transaction list contains the resource requests together with the receiver and provider of each. Finally, the rating list includes the sent and received sublists for each peer. The sent list of a peer logs, for each transaction in which the peer was the provider, the resource sent, the receiver ID, and the rating received for the transaction. The received list of a peer stores information about transactions in which the peer was the resource receiver, including the provider and the rating the peer gave to the transaction. The system registry manager updates the rating list after each transaction.
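The three lists maintained by the registry manager could be represented roughly as follows. This is a sketch under assumptions: the class and field names are hypothetical, ratings are assumed to be +1 or -1, and the paper does not specify the actual storage layout.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Transaction:
    resource_id: int
    provider_id: int
    receiver_id: int
    rating: Optional[int] = None  # assumed encoding: +1 positive, -1 negative, None unrated


@dataclass
class Registry:
    resources: dict = field(default_factory=dict)     # resource_id -> list of owner peer IDs
    transactions: list = field(default_factory=list)  # every request with provider and receiver
    sent: dict = field(default_factory=dict)          # peer_id -> transactions where peer provided
    received: dict = field(default_factory=dict)      # peer_id -> transactions where peer received

    def add_resource(self, resource_id: int, owner_id: int) -> None:
        self.resources.setdefault(resource_id, []).append(owner_id)

    def record(self, tx: Transaction) -> None:
        """Log a transaction in the transaction list and in both rating sublists."""
        self.transactions.append(tx)
        self.sent.setdefault(tx.provider_id, []).append(tx)
        self.received.setdefault(tx.receiver_id, []).append(tx)

    def rate(self, tx: Transaction, rating: int) -> None:
        """Store the receiver's rating; the same object is visible from both sublists."""
        tx.rating = rating


if __name__ == "__main__":
    registry = Registry()
    registry.add_resource(resource_id=7, owner_id=1)
    tx = Transaction(resource_id=7, provider_id=1, receiver_id=2)
    registry.record(tx)
    registry.rate(tx, +1)
    print(registry.sent[1], registry.received[2])
```

Keeping one shared `Transaction` object per exchange means a rating update is reflected in the provider's sent list and the receiver's received list at the same time.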

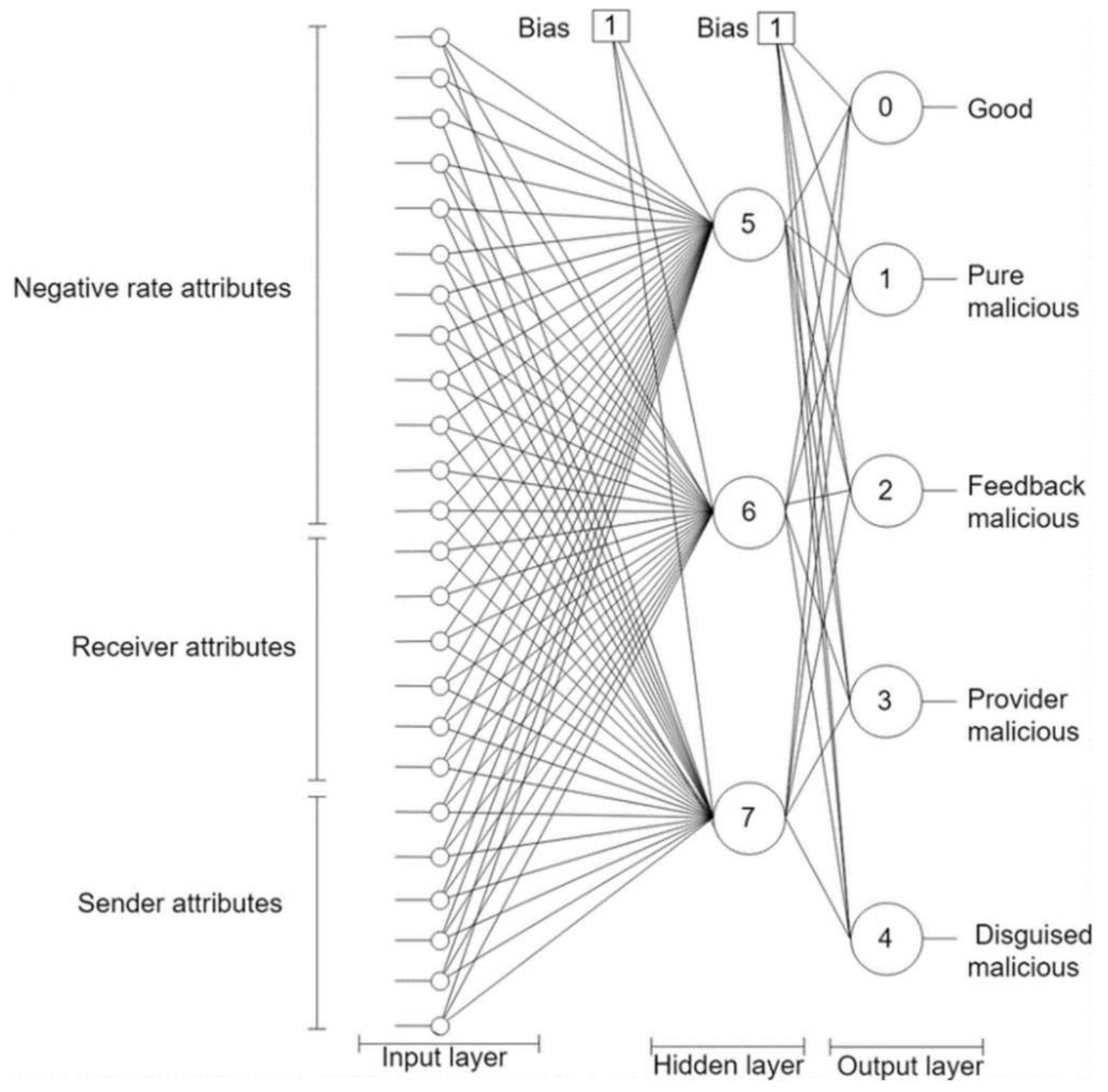

NN component: This component employs an NN classifier to learn peers’ models (see Appendix A). It has twenty-five input nodes and five output nodes. The network weight is one and its depth is two as depicted in Figure 2. The NN is trained offline on a trust dataset that was constructed by simulating a P2P network using QTM [11]. The model simulated 100,000 transactions over 100,000 resources owned by 5000 peers. The peers included 1000 good peers (of whom 5% were pretrusted peers), 1000 pure malicious peers, 1000 feedback-skewing peers, 1000 malignant peers, and 1000 disguised malicious peers. Fifty percent of the malicious peers of each type are isolated, and 50% are in groups.
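To show the shape of such a classifier, the sketch below runs a forward pass through an untrained network with twenty-five inputs and five outputs. Only those two sizes come from the text. The hidden layer sizes, the ReLU and softmax activations, the random weights, and the order of the output labels are all assumptions made for illustration; the actual architecture is the one given in Figure 2 and Appendix A.

```python
import math
import random

N_INPUTS, N_OUTPUTS = 25, 5  # sizes stated in the text
HIDDEN_SIZES = (16, 16)      # hypothetical: hidden layer sizes are not given here
LABELS = ["good", "pure_malicious", "feedback_skewing",
          "malignant", "disguised_malicious"]  # assumed output order


def init_layers(sizes, seed=0):
    """Create (weights, biases) per layer with small random weights (untrained)."""
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(sizes, sizes[1:]):
        weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
        layers.append((weights, [0.0] * n_out))
    return layers


def forward(layers, x):
    """Dense layers with ReLU on hidden layers and softmax on the output layer."""
    for k, (weights, biases) in enumerate(layers):
        z = [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, biases)]
        if k < len(layers) - 1:
            x = [max(0.0, v) for v in z]
        else:
            m = max(z)  # subtract the maximum for numerical stability
            exps = [math.exp(v - m) for v in z]
            total = sum(exps)
            x = [e / total for e in exps]
    return x


if __name__ == "__main__":
    layers = init_layers((N_INPUTS, *HIDDEN_SIZES, N_OUTPUTS))
    features = [0.1] * N_INPUTS  # placeholder feature vector for one provider
    probs = forward(layers, features)
    print(LABELS[probs.index(max(probs))], probs)
```

In a trained model, the twenty-five features would be derived from a provider's sent and received lists, and the largest output probability would give the predicted peer model.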

Predictor: The predictor contains two main parts: the peer model predictor and the collective behavior analyzer. The peer model predictor feeds the provider information, based on the sent and received lists, into the trained NN, which predicts the peer model. If the peer model is good, then the requester peer is signaled to approve the peer as a candidate provider. Otherwise, if the peer is not an isolated malicious peer, the collective behavior analyzer studies the peer model to identify its group members. Finally, all transaction information is logged in the system registry and sent to the system administrator upon request.

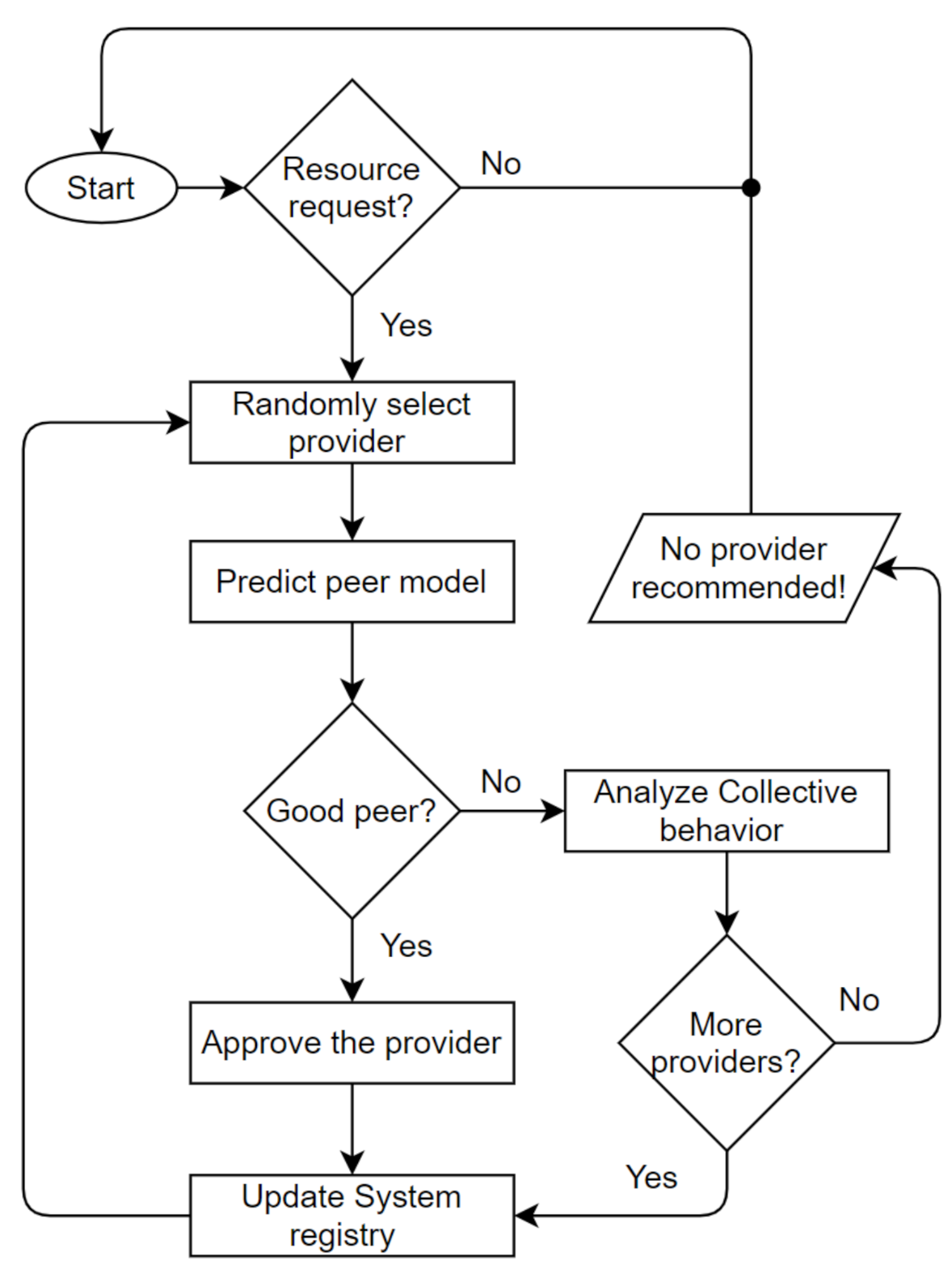

The Trutect logic flow is diagrammed in Figure 3. Once a resource request arrives, the system randomly selects a provider of this resource from the resource list. Then, the NN predictor module determines, based on the sent and received lists of the provider, whether it is a good, pure malicious, feedback-skewing, malignant or disguised malicious peer. If the peer is good, the resource provider is approved, and the receiver rates the interaction as either positive or negative. Otherwise, if the provider is malicious, the peer model is analyzed by the collective behavior analyzer, as shown in Figure 4, to determine which strategy the malicious peer is following, isolated or collective, and its group members, if any. To this end, the peer’s previous interactions and ratings are analyzed based on the sent and received lists. The generated information, which includes the malicious peer strategy and the detected group members, is then sent to the system administrator, who decides whether to proceed with resource sharing, issue a warning, or look for another provider.
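The request-handling flow described above can be sketched as follows. The classifier and the collective behavior analyzer are passed in as callables because their internals are defined elsewhere (Figure 4 and Appendix A); the function name, the dictionary layout of the result, and the convention that an empty group means an isolated peer are assumptions for illustration.

```python
import random


def handle_request(resource_id, resource_list, classify, find_group, rng=random):
    """Pick a random provider, classify it, and analyze its group if it is malicious.

    resource_list: dict mapping resource_id -> list of provider peer IDs
    classify:      callable(peer_id) -> peer model label (stands in for the trained NN)
    find_group:    callable(peer_id) -> set of suspected group members (analyzer stand-in)
    """
    provider = rng.choice(resource_list[resource_id])
    model = classify(provider)
    if model == "good":
        # Provider approved; the receiver would rate the interaction afterwards.
        return {"provider": provider, "model": model, "approved": True}
    group = find_group(provider)
    strategy = "collective" if group else "isolated"  # assumed convention
    # Report for the system administrator, who decides how to proceed.
    return {
        "provider": provider,
        "model": model,
        "approved": False,
        "strategy": strategy,
        "group": sorted(group),
    }


if __name__ == "__main__":
    resources = {7: [1], 8: [2]}
    labels = {1: "good", 2: "pure_malicious"}
    groups = {2: {3, 4}}
    for rid in resources:
        print(handle_request(rid, resources, labels.get, lambda p: groups.get(p, set())))
```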

4. Evaluation Methodology

A strictly controlled empirical evaluation framework was followed to evaluate the proposed algorithms. To allow for full control of the experimental parameters, we employed the renowned open-source simulator QTM [11]. The simulator imitates an assortment of network configurations and malicious peers’ behavioral models.

A P2P resource-sharing application was considered, although the system can be easily applied to other P2P applications. The following four context parameters were controlled to simulate representative samples of a P2P network:

Percentages of malicious peers: Five different percentages of malicious peers were studied to test the robustness of the system: 15%, 30%, 45%, 60%, and 75%.

Number of transactions: To simulate system performance under different loads, five values of the number of transactions were examined: 1000, 1500, 2000, 2500, and 3000 transactions.

Malicious peer model: Four different types of malicious behavior (pure malicious, malignant providers, feedback-skewing, and disguised malicious) were imitated in each scenario to embody a real environment.

Malicious strategies: Two malicious strategies, collective and isolated, were implemented in each scenario to represent real environments.

The number of peers and number of resources were fixed at 256 and 1000, respectively. Pretrusted peers were 5% of the total peers. For collective malicious groups, the number and size of groups were randomized. For simplicity, we considered a “closed world” network model where the peers within a network are static; they do not join and leave the network.
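The controlled parameters above can be written down as a small configuration grid. The sketch below assumes that every malicious percentage is combined with every transaction count, which the text does not state explicitly, and it rounds fractional peer counts to the nearest integer, which is also an assumption. All names are hypothetical.

```python
from itertools import product

MALICIOUS_PERCENTAGES = (15, 30, 45, 60, 75)
TRANSACTION_COUNTS = (1000, 1500, 2000, 2500, 3000)
N_PEERS = 256
N_RESOURCES = 1000
PRETRUSTED_FRACTION = 0.05


def scenarios():
    """Yield one configuration per (malicious percentage, transaction count) pair."""
    for pct, n_tx in product(MALICIOUS_PERCENTAGES, TRANSACTION_COUNTS):
        yield {
            "n_peers": N_PEERS,
            "n_resources": N_RESOURCES,
            "malicious_pct": pct,
            "n_malicious": round(N_PEERS * pct / 100),             # rounding is an assumption
            "n_pretrusted": round(N_PEERS * PRETRUSTED_FRACTION),  # rounding is an assumption
            "n_transactions": n_tx,
        }


if __name__ == "__main__":
    for config in scenarios():
        print(config)
```

Under these assumptions the grid contains 25 configurations, each of which would be run once per benchmarked algorithm.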

Two state-of-the-art algorithms, namely, EigenTrust [24] and InterTrust [25], and a reference case with no trust algorithm (none) were employed to benchmark the proposed algorithm performance.

Three performance metrics are used to assess the algorithm performance: the success rate, the running time, and the accuracy, as described below.

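The success rate is represented in Equation (1) as the number of good resources received by good peers over the number of transactions attempted by good peers:

$$\text{Success rate} = \frac{\text{number of good resources received by good peers}}{\text{number of transactions attempted by good peers}} \tag{1}$$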

The running time is defined as the total time of the algorithm’s execution in seconds. It is the time from when the system calls the main function of an algorithm until control returns to the caller. Because the running time measure is sensitive to the hardware, all of the experiments were conducted on the same computer, with an Intel Core i7 CPU running at 1.1 GHz, 2 GB of RAM and a 200 GB hard disk.

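The classification accuracy is represented by the number of correctly classified sample cases over the number of all sample cases, as shown in Equation (2):

$$\text{Accuracy} = \frac{\text{number of correctly classified sample cases}}{\text{number of all sample cases}} \tag{2}$$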
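The three metrics can be computed with a few helper functions. This is a minimal sketch: the function names are hypothetical, returning 0.0 for an empty denominator is an assumed convention, and `time.perf_counter` is used here as one reasonable way to measure the time between calling a function and control returning to the caller.

```python
import time


def success_rate(good_received: int, attempted: int) -> float:
    """Good resources received by good peers over transactions attempted by good peers."""
    return good_received / attempted if attempted else 0.0


def accuracy(predicted, actual) -> float:
    """Correctly classified sample cases over all sample cases."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    if not actual:
        return 0.0
    return sum(p == a for p, a in zip(predicted, actual)) / len(actual)


def timed(fn, *args, **kwargs):
    """Run fn and return (result, elapsed seconds from call until control returns)."""
    start = time.perf_counter()
    result = fn(*args, **kwargs)
    return result, time.perf_counter() - start


if __name__ == "__main__":
    print(success_rate(good_received=45, attempted=60))
    print(accuracy(["good", "pure", "good"], ["good", "pure", "disguised"]))
    print(timed(sum, range(1_000_000)))
```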

6. Conclusions

Many studies have considered discerning malicious peers, but none have focused on identifying the specific type and group members of a malicious peer. Trutect is proposed in this paper to bridge this gap. It exploits the power of neural networks to build models of each peer and classify them based on their behavior and communication patterns.

Trutect’s performance was evaluated against rival trust management systems in terms of success rate, running time, and accuracy. Trutect achieved the highest success rate because it reveals not only malicious peers but also their group members, which makes it easier to identify good providers across the whole network after a few rounds of transactions. Trutect also shows a significant improvement in running time as the malicious peer percentage increases. Overall, Trutect performed well in identifying malicious peer models and group members. In addition, a larger number of transactions and a higher malicious peer percentage had clear positive effects on the accuracy of identifying collective group members in particular. In summary, Trutect is effective, especially on poisoned and busy networks with large percentages of malicious peers and large numbers of transactions.

This research opens several interesting directions for future work. For example, the accuracy of identifying disguised malicious peers might be improved by constructing a separate dataset with only good and disguised malicious peers. Additionally, Trutect’s performance at detecting malicious peers could be improved by considering dynamic learning, where the system incrementally learns from newly classified instances. Future work could also consider Sybil attackers, who constantly change their identity to erase their bad histories.