Real-Time Plane Detection with Consistency from Point Cloud Sequences

Abstract

1. Introduction

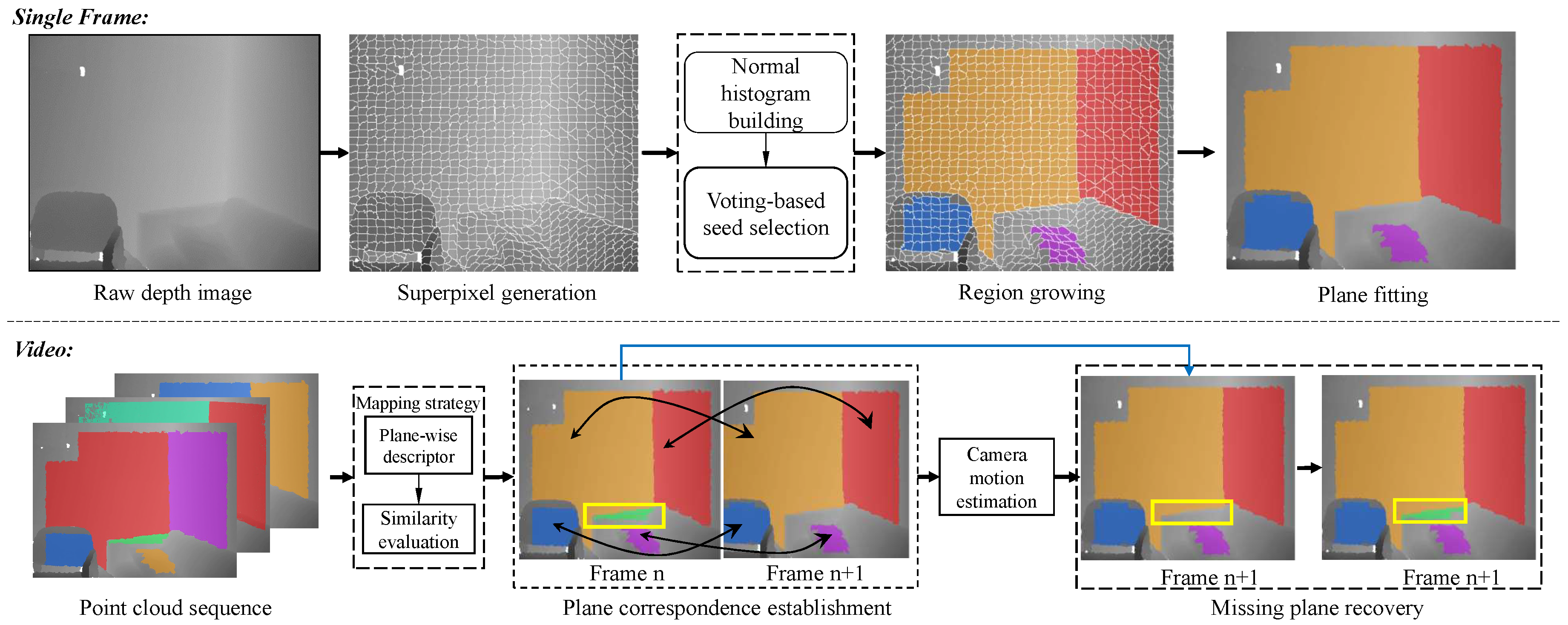

- We introduce a real-time algorithm for extracting planes from consecutive raw 3D point clouds collected by RGB-D sensors.

- We propose a superpixel-based plane detection method that yields smooth and accurate plane boundaries.

- We present a strategy for recovering undetected planes using information from the corresponding planes in adjacent frames.

2. Related Work

2.1. Patch Segmentation

2.2. Plane Detection

3. Method

3.1. Plane Detection in Single Frame

- is unlabeled;

- the normal angle difference between and is less than a given threshold , which is set as by default in our experiments; and,

- the distance from ’s centroid to the ’s fitting plane is less than , where is the total number of 3D points in the current merged superpixels and l is the merge distance threshold.
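The three merge conditions above can be sketched as a single predicate. This is a minimal illustration, not the authors' implementation: the names `Superpixel`, `Plane`, and `can_merge` are hypothetical, the normal of the candidate is assumed to be compared against the normal of the fitted plane of the merged region, and the distance bound (which the text defines from the point count and the merge distance threshold l) is passed in as a precomputed value because its exact form is not reproduced here. The numeric thresholds in the usage lines are placeholders, not the paper's defaults.

```python
import math
from dataclasses import dataclass
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Superpixel:
    centroid: Vec3               # 3D centroid of the superpixel's points
    normal: Vec3                 # unit normal, assumed consistently oriented
    label: Optional[int] = None  # None means "unlabeled"


@dataclass
class Plane:
    normal: Vec3   # unit normal n of the fitted plane
    offset: float  # d in the plane equation n . x + d = 0


def dot(a: Vec3, b: Vec3) -> float:
    return sum(x * y for x, y in zip(a, b))


def normal_angle_deg(a: Vec3, b: Vec3) -> float:
    """Angle between two unit normals, in degrees."""
    cosine = max(-1.0, min(1.0, dot(a, b)))  # clamp against rounding error
    return math.degrees(math.acos(cosine))


def point_plane_distance(p: Vec3, plane: Plane) -> float:
    """Unsigned distance from point p to the plane."""
    return abs(dot(plane.normal, p) + plane.offset)


def can_merge(candidate: Superpixel, region_plane: Plane,
              angle_thresh_deg: float, dist_bound: float) -> bool:
    """Return True only if all three merge conditions hold.

    dist_bound is assumed to be computed by the caller from the number of
    points in the merged region and the merge distance threshold l.
    """
    if candidate.label is not None:  # condition 1: must be unlabeled
        return False
    if normal_angle_deg(candidate.normal, region_plane.normal) >= angle_thresh_deg:
        return False                 # condition 2: normals must agree
    # condition 3: centroid must lie close to the region's fitted plane
    return point_plane_distance(candidate.centroid, region_plane) < dist_bound


# Usage with placeholder thresholds (10 degrees, 0.05 distance units).
region = Plane(normal=(0.0, 0.0, 1.0), offset=-1.0)  # the plane z = 1
near = Superpixel(centroid=(0.2, 0.1, 1.01), normal=(0.0, 0.0, 1.0))
print(can_merge(near, region, angle_thresh_deg=10.0, dist_bound=0.05))
```

Testing the cheap label check first avoids the trigonometric and distance computations for superpixels that already belong to a plane.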

3.2. Plane Correspondence Establishment

- the Euclidean distance of descriptors and is smaller than the given threshold ;

- there are no other planes in Frame whose descriptor is closer to the plane ; and,

- if descriptor of plane is the smallest one to more than one plane in Frame , would be assigned the label of the plane whose descriptor is the closest to it.
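The correspondence rules above amount to a thresholded nearest-neighbor match with a tie-break. The sketch below is one possible reading, not the authors' code: `match_planes` is a hypothetical name, descriptors are assumed to be fixed-length tuples of floats (their actual contents are not specified here), and when several planes in the current frame pick the same plane in the previous frame, only the closest one keeps the label.

```python
import math
from typing import Dict, Hashable, Sequence, Tuple


def match_planes(prev: Dict[int, Sequence[float]],
                 curr: Dict[Hashable, Sequence[float]],
                 max_dist: float) -> Dict[Hashable, int]:
    """Map plane ids in the current frame to labels from the previous frame.

    prev: label -> descriptor for planes in the previous frame.
    curr: plane id -> descriptor for planes in the current frame.
    max_dist: descriptor distance threshold (illustrative parameter).
    """
    best: Dict[int, Tuple[float, Hashable]] = {}  # label -> (distance, plane id)
    for plane_id, desc in curr.items():
        if not prev:
            break
        # Rule 2: take the previous-frame plane with the closest descriptor.
        label = min(prev, key=lambda lab: math.dist(prev[lab], desc))
        distance = math.dist(prev[label], desc)
        # Rule 1: reject matches at or beyond the distance threshold.
        if distance >= max_dist:
            continue
        # Rule 3: if the label is contested, the closest plane keeps it.
        if label not in best or distance < best[label][0]:
            best[label] = (distance, plane_id)
    return {plane_id: label for label, (_, plane_id) in best.items()}


# Usage with made-up 4D descriptors and a placeholder threshold.
previous = {0: (0.0, 0.0, 1.0, 1.0), 1: (1.0, 0.0, 0.0, 2.0)}
current = {
    "a": (0.0, 0.0, 1.0, 1.02),  # closest to label 0
    "b": (0.0, 0.0, 1.0, 1.20),  # also nearest to label 0, but farther than "a"
    "c": (1.0, 0.0, 0.0, 2.01),  # closest to label 1
    "d": (0.0, 1.0, 0.0, 5.00),  # too far from everything
}
print(match_planes(previous, current, max_dist=0.5))
```

Planes left unmatched (here `"b"` and `"d"`) would need fresh labels in a full pipeline; that step is outside this sketch.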

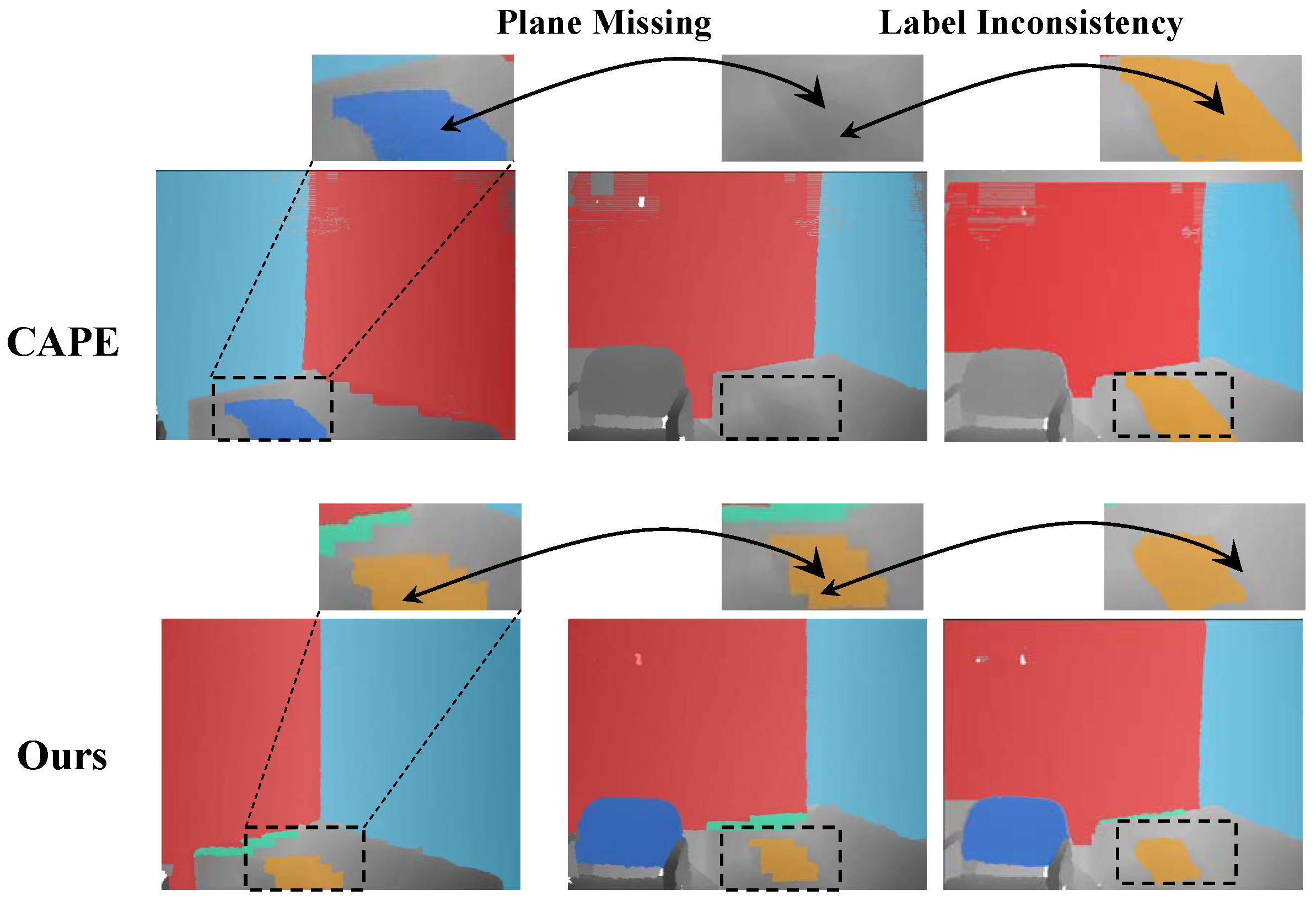

3.3. Undetected Plane Recovery

4. Experiments and Results

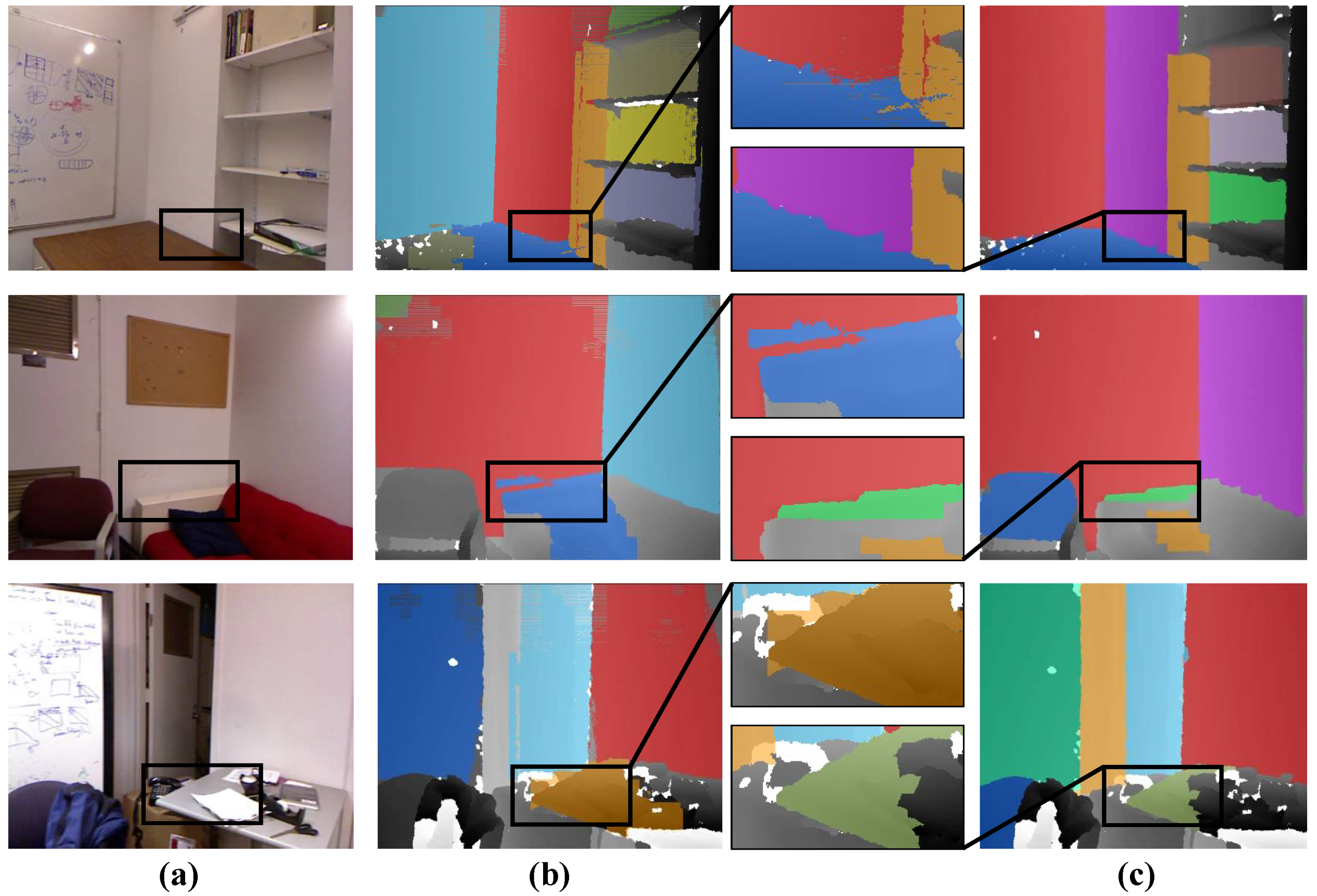

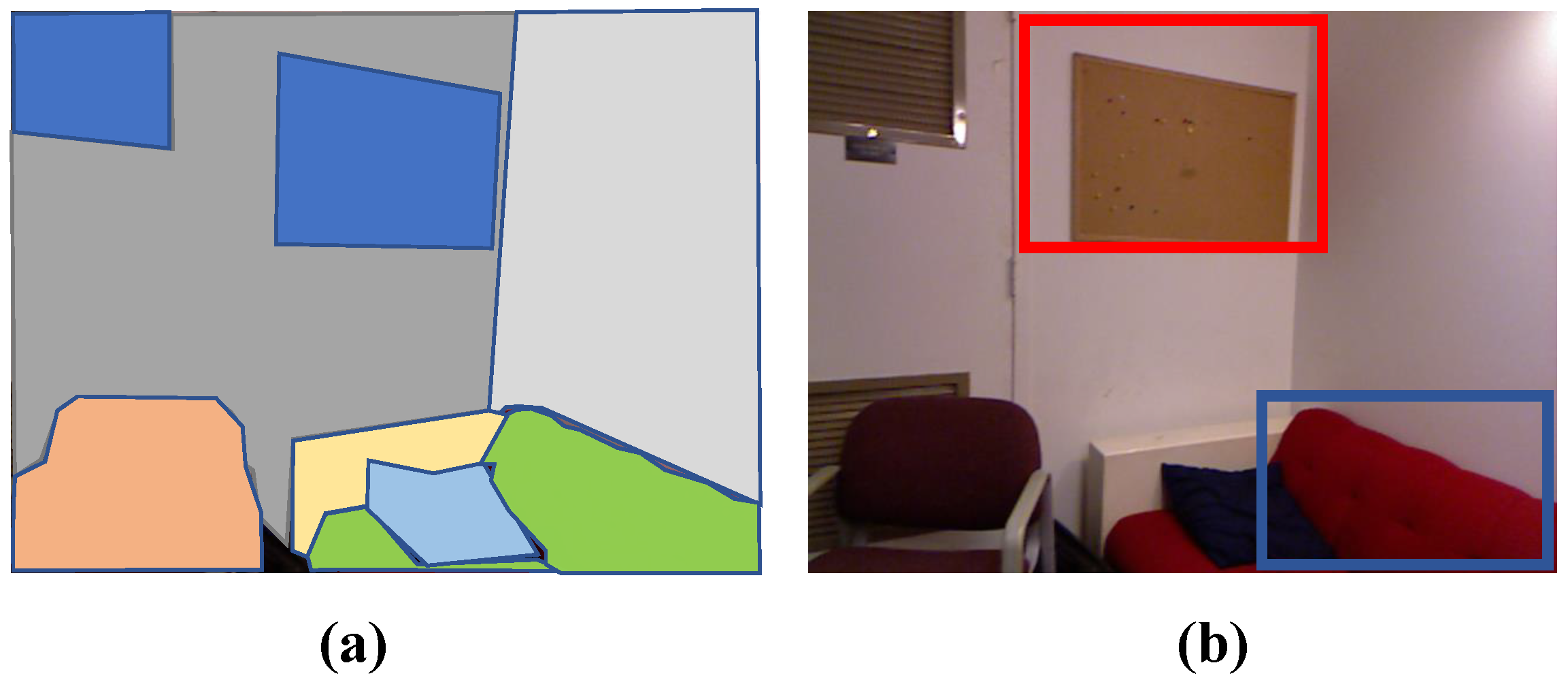

4.1. Experiment #1: Plane Detection in Single Frame

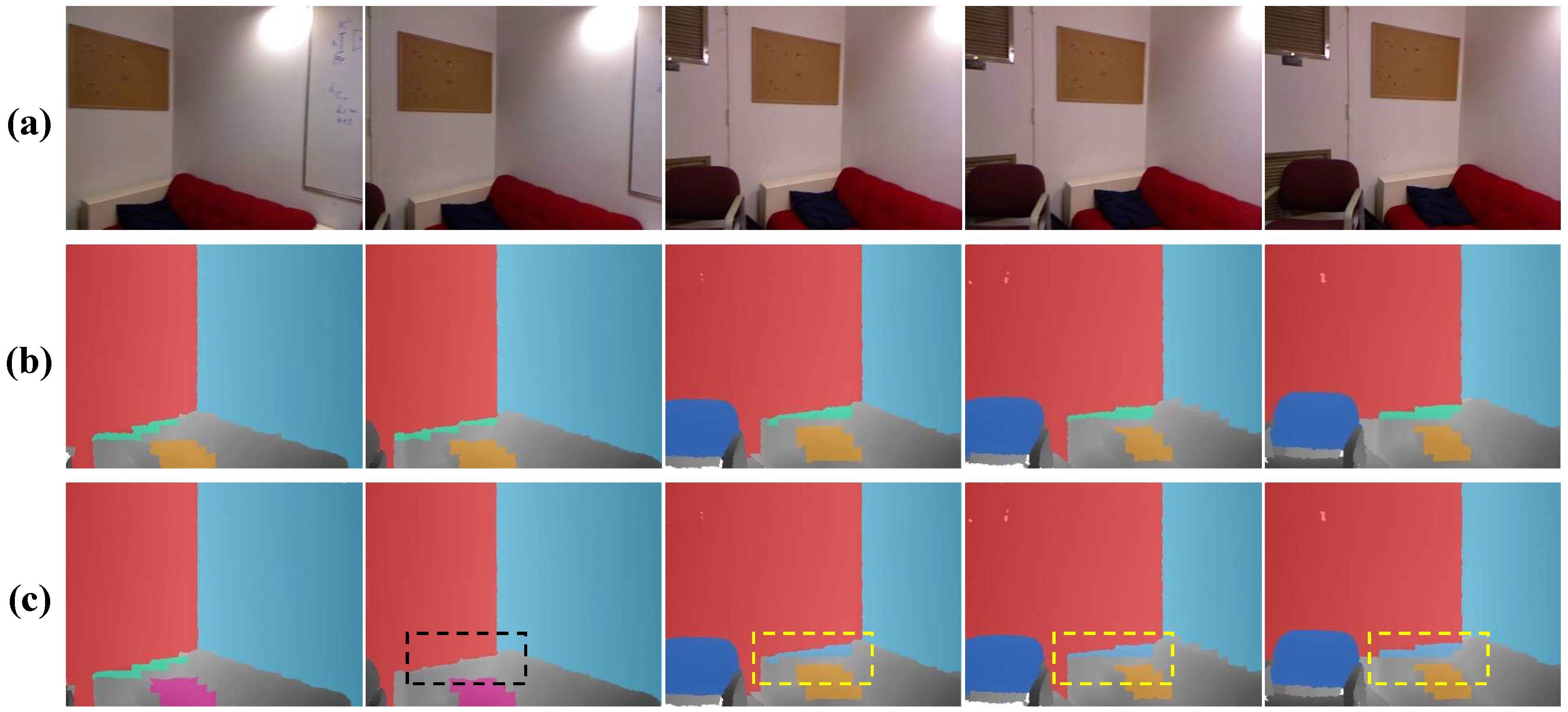

4.2. Experiment #2: Plane Detection in Frame Sequences

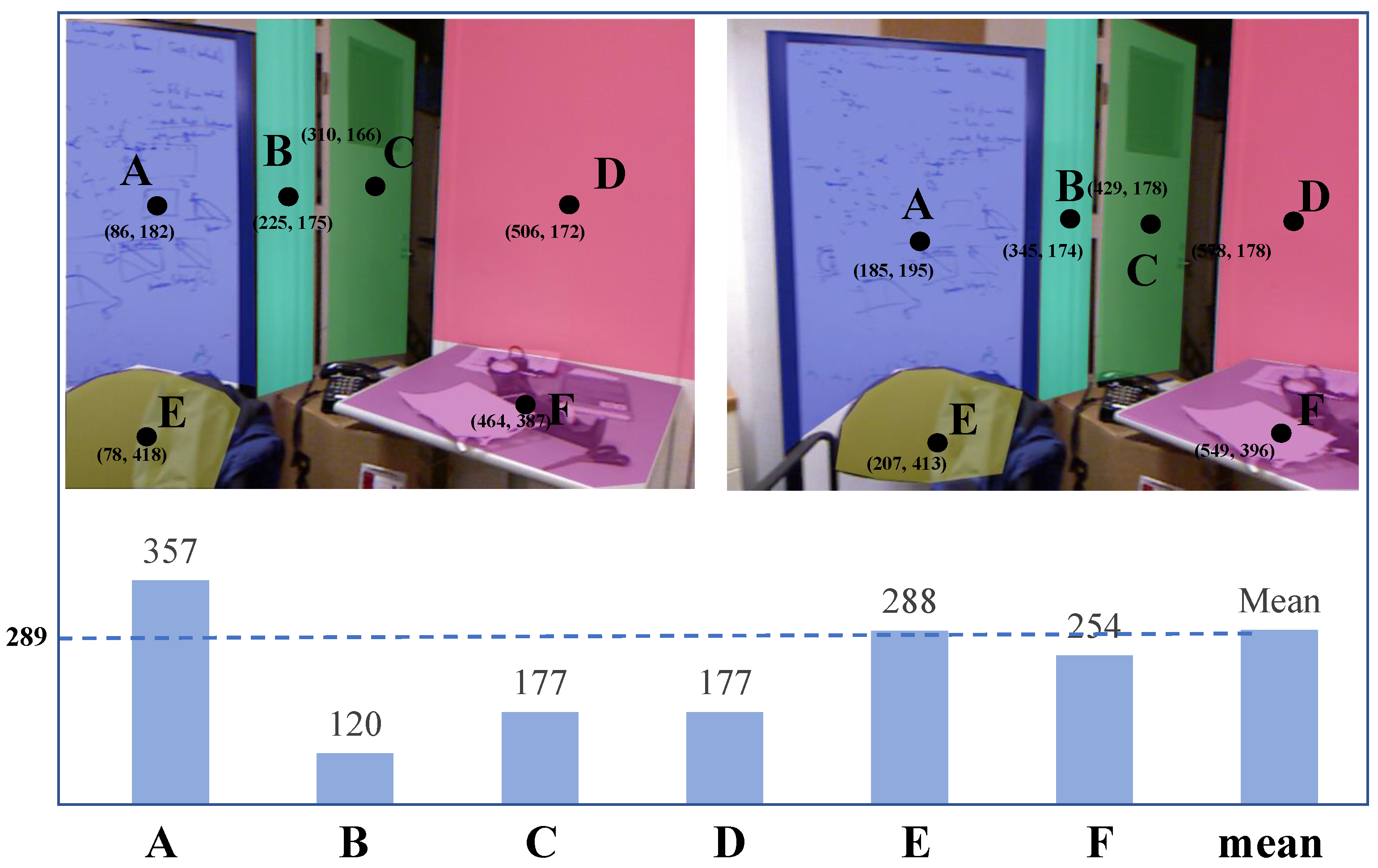

4.3. Experiment #3: Ablative Analysis

4.4. Limitation

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Czerniawski, T.; Nahangi, M.; Walbridge, S.; Haas, C. Automated removal of planar clutter from 3D point clouds for improving industrial object recognition. In Proceedings of the International Symposium on Automation and Robotics in Construction, Auburn, AL, USA, 18–21 July 2016; Volume 33, p. 1.

2. Landau, Y.; Ben-Moshe, B. STEPS: An Indoor Navigation Framework for Mobile Devices. Sensors 2020, 20, 3929.

3. Czerniawski, T.; Sankaran, B.; Nahangi, M.; Haas, C.; Leite, F. 6D DBSCAN-based segmentation of building point clouds for planar object classification. Autom. Constr. 2018, 88, 44–58.

4. Yin, H.; Ma, Z.; Zhong, M.; Wu, K.; Wei, Y.; Guo, J.; Huang, B. SLAM-Based Self-Calibration of a Binocular Stereo Vision Rig in Real-Time. Sensors 2020, 20, 621.

5. Liu, Q.; Wang, Z.; Wang, H. SD-VIS: A Fast and Accurate Semi-Direct Monocular Visual-Inertial Simultaneous Localization and Mapping (SLAM). Sensors 2020, 20, 1511.

6. Ataer-Cansizoglu, E.; Taguchi, Y.; Ramalingam, S.; Garaas, T. Tracking an RGB-D camera using points and planes. In Proceedings of the IEEE International Conference on Computer Vision Workshops, Sydney, Australia, 2–8 December 2013; pp. 51–58.

7. Kaess, M. Simultaneous localization and mapping with infinite planes. In Proceedings of the 2015 IEEE International Conference on Robotics and Automation (ICRA), Seattle, WA, USA, 26–30 May 2015; pp. 4605–4611.

8. Uygur, I.; Miyagusuku, R.; Pathak, S.; Moro, A.; Yamashita, A.; Asama, H. Robust and Efficient Indoor Localization Using Sparse Semantic Information from a Spherical Camera. Sensors 2020, 20, 4128.

9. Newcombe, R.A.; Izadi, S.; Hilliges, O.; Molyneaux, D.; Kim, D.; Davison, A.J.; Kohli, P.; Shotton, J.; Hodges, S.; Fitzgibbon, A.W. Kinectfusion: Real-time dense surface mapping and tracking. In Proceedings of the 2011 10th IEEE International Symposium on Mixed and Augmented Reality (ISMAR), Basel, Switzerland, 26–29 October 2011; Volume 11, pp. 127–136.

10. Dai, A.; Nießner, M.; Zollhöfer, M.; Izadi, S.; Theobalt, C. Bundlefusion: Real-time globally consistent 3d reconstruction using on-the-fly surface reintegration. ACM Trans. Graph. (ToG) 2017, 36, 24.

11. Liu, Y.; Zhang, H.; Huang, C. A Novel RGB-D SLAM Algorithm Based on Cloud Robotics. Sensors 2019, 19, 5288.

12. Feng, C.; Taguchi, Y.; Kamat, V.R. Fast plane extraction in organized point clouds using agglomerative hierarchical clustering. In Proceedings of the 2014 IEEE International Conference on Robotics and Automation (ICRA), Hong Kong, China, 31 May–7 June 2014; pp. 6218–6225.

13. Liu, C.; Kim, K.; Gu, J.; Furukawa, Y.; Kautz, J. PlaneRCNN: 3D plane detection and reconstruction from a single image. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 4450–4459.

14. Vera, E.; Lucio, D.; Fernandes, L.A.; Velho, L. Hough Transform for real-time plane detection in depth images. Pattern Recognit. Lett. 2018, 103, 8–15.

15. Jin, Z.; Tillo, T.; Zou, W.; Zhao, Y.; Li, X. Robust plane detection using depth information from a consumer depth camera. IEEE Trans. Circuits Syst. Video Technol. 2017, 29, 447–460.

16. Salas-Moreno, R.F.; Glocken, B.; Kelly, P.H.; Davison, A.J. Dense planar SLAM. In Proceedings of the 2014 IEEE International Symposium on Mixed and Augmented Reality (ISMAR), Munich, Germany, 10–12 September 2014; pp. 157–164.

17. Ma, L.; Kerl, C.; Stückler, J.; Cremers, D. CPA-SLAM: Consistent plane-model alignment for direct RGB-D SLAM. In Proceedings of the 2016 IEEE International Conference on Robotics and Automation (ICRA), Stockholm, Sweden, 16–21 May 2016; pp. 1285–1291.

18. Proença, P.F.; Gao, Y. Probabilistic combination of noisy points and planes for RGB-D odometry. In Annual Conference Towards Autonomous Robotic Systems; Springer: Berlin/Heidelberg, Germany, 2017; pp. 340–350.

19. Proença, P.F.; Gao, Y. Fast cylinder and plane extraction from depth cameras for visual odometry. In Proceedings of the 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, Spain, 1–5 October 2018; pp. 6813–6820.

20. Li, C.; Yu, L.; Fei, S. Real-time 3D motion tracking and reconstruction system using camera and IMU sensors. IEEE Sens. J. 2019, 19, 6460–6466.

21. Pollefeys, M.; Nistér, D.; Frahm, J.M.; Akbarzadeh, A.; Mordohai, P.; Clipp, B.; Engels, C.; Gallup, D.; Kim, S.J.; Merrell, P.; et al. Detailed real-time urban 3d reconstruction from video. Int. J. Comput. Vis. 2008, 78, 143–167.

22. Mouragnon, E.; Lhuillier, M.; Dhome, M.; Dekeyser, F.; Sayd, P. Real time localization and 3d reconstruction. In Proceedings of the 2006 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR’06), New York, NY, USA, 17–22 June 2006; Volume 1, pp. 363–370.

23. Ren, X.; Malik, J. Learning a classification model for segmentation. In Proceedings of the Ninth IEEE International Conference on Computer Vision, Nice, France, 13–16 October 2003; p. 10.

24. Moore, A.P.; Prince, S.J.; Warrell, J.; Mohammed, U.; Jones, G. Superpixel lattices. In Proceedings of the 2008 IEEE Conference on Computer Vision and Pattern Recognition, Anchorage, AK, USA, 23–28 June 2008; pp. 1–8.

25. Veksler, O.; Boykov, Y.; Mehrani, P. Superpixels and supervoxels in an energy optimization framework. In European Conference on Computer Vision; Springer: Berlin/Heidelberg, Germany, 2010; pp. 211–224.

26. Weikersdorfer, D.; Gossow, D.; Beetz, M. Depth-adaptive superpixels. In Proceedings of the 21st International Conference on Pattern Recognition (ICPR2012), Tsukuba, Japan, 11–15 November 2012; pp. 2087–2090.

27. Zhou, Y.; Ju, L.; Wang, S. Multiscale superpixels and supervoxels based on hierarchical edge-weighted centroidal voronoi tessellation. IEEE Trans. Image Process. 2015, 24, 3834–3845.

28. Picciau, G.; Simari, P.; Iuricich, F.; De Floriani, L. Supertetras: A Superpixel Analog for Tetrahedral Mesh Oversegmentation. In International Conference on Image Analysis and Processing; Springer: Berlin/Heidelberg, Germany, 2015; pp. 375–386.

29. Song, S.; Lee, H.; Jo, S. Boundary-enhanced supervoxel segmentation for sparse outdoor LiDAR data. Electron. Lett. 2014, 50, 1917–1919.

30. Lin, Y.; Wang, C.; Chen, B.; Zai, D.; Li, J. Facet segmentation-based line segment extraction for large-scale point clouds. IEEE Trans. Geosci. Remote Sens. 2017, 55, 4839–4854.

31. Lin, Y.; Wang, C.; Zhai, D.; Li, W.; Li, J. Toward better boundary preserved supervoxel segmentation for 3D point clouds. ISPRS J. Photogramm. Remote Sens. 2018, 143, 39–47.

32. Yang, M.Y.; Förstner, W. Plane detection in point cloud data. In Proceedings of the 2nd International Conference on Machine Control Guidance, Bonn, Germany, 9–11 March 2010; Volume 1, pp. 95–104.

33. Taguchi, Y.; Jian, Y.D.; Ramalingam, S.; Feng, C. Point-plane SLAM for hand-held 3D sensors. In Proceedings of the 2013 IEEE International Conference on Robotics and Automation, Karlsruhe, Germany, 6–10 May 2013; pp. 5182–5189.

34. Schnabel, R.; Wahl, R.; Klein, R. Efficient RANSAC for point-cloud shape detection. In Computer Graphics Forum; Wiley Online Library: Oxford, UK, 2007; Volume 26, pp. 214–226.

35. Bostanci, E.; Kanwal, N.; Clark, A.F. Extracting planar features from Kinect sensor. In Proceedings of the 2012 4th Computer Science and Electronic Engineering Conference (CEEC), Colchester, UK, 12–13 September 2012; pp. 111–116.

36. Biswas, J.; Veloso, M. Depth camera based indoor mobile robot localization and navigation. In Proceedings of the 2012 IEEE International Conference on Robotics and Automation, Saint Paul, MN, USA, 14–18 May 2012; pp. 1697–1702.

37. Lee, T.K.; Lim, S.; Lee, S.; An, S.; Oh, S.Y. Indoor mapping using planes extracted from noisy RGB-D sensors. In Proceedings of the 2012 IEEE/RSJ International Conference on Intelligent Robots and Systems, Vilamoura, Portugal, 7–12 October 2012; pp. 1727–1733.

38. Hough, P.V. Method and Means for Recognizing Complex Patterns. U.S. Patent 3,069,654, 18 December 1962.

39. Rabbani, T.; Van Den Heuvel, F. Efficient hough transform for automatic detection of cylinders in point clouds. ISPRS WG III/3 III/4 2005, 3, 60–65.

40. Nguyen, H.H.; Kim, J.; Lee, Y.; Ahmed, N.; Lee, S. Accurate and fast extraction of planar surface patches from 3D point cloud. In Proceedings of the 7th International Conference on Ubiquitous Information Management and Communication, Kota Kinabalu, Malaysia, 17–19 January 2013; ACM: New York, NY, USA, 2013; p. 84.

41. Hulik, R.; Spanel, M.; Smrz, P.; Materna, Z. Continuous plane detection in point-cloud data based on 3D Hough Transform. J. Vis. Commun. Image Represent. 2014, 25, 86–97.

42. Borrmann, D.; Elseberg, J.; Lingemann, K.; Nüchter, A. The 3d hough transform for plane detection in point clouds: A review and a new accumulator design. 3D Res. 2011, 2, 3.

43. Limberger, F.A.; Oliveira, M.M. Real-time detection of planar regions in unorganized point clouds. Pattern Recognit. 2015, 48, 2043–2053.

44. Poppinga, J.; Vaskevicius, N.; Birk, A.; Pathak, K. Fast plane detection and polygonalization in noisy 3D range images. In Proceedings of the 2008 IEEE/RSJ International Conference on Intelligent Robots and Systems, Nice, France, 22–26 September 2008; pp. 3378–3383.

45. Holz, D.; Behnke, S. Fast range image segmentation and smoothing using approximate surface reconstruction and region growing. In Intelligent Autonomous Systems 12; Springer: Berlin/Heidelberg, Germany, 2013; pp. 61–73.

46. Holz, D.; Holzer, S.; Rusu, R.B.; Behnke, S. Real-time plane segmentation using RGB-D cameras. In Robot Soccer World Cup; Springer: Berlin/Heidelberg, Germany, 2011; pp. 306–317.

47. Trevor, A.J.; Gedikli, S.; Rusu, R.B.; Christensen, H.I. Efficient organized point cloud segmentation with connected components. Semant. Percept. Mapping Explor. 2013. Available online: https://cs.gmu.edu/~kosecka/ICRA2013/spme13_trevor.pdf (accessed on 27 December 2020).

48. Silberman, N.; Hoiem, D.; Kohli, P.; Fergus, R. Indoor Segmentation and Support Inference from RGBD Images. In Proceedings of the European Conference on Computer Vision (ECCV), Florence, Italy, 7–13 October 2012.

| Scene | Metric | CAPE [19] | DPD [15] | Feng et al. [12] | Ours |
|---|---|---|---|---|---|
| Scene1 | SE | 65.62 | 91.89 | 71.40 | 90.57 |
| Scene1 | SP | 89.55 | 93.83 | 94.85 | 92.98 |
| Scene1 | CDR | 62.50 | 100.00 | 75.00 | 100.00 |
| Scene2 | SE | 55.86 | 93.81 | 68.52 | 90.89 |
| Scene2 | SP | 93.45 | 97.58 | 94.85 | 96.83 |
| Scene2 | CDR | 33.33 | 100.00 | 66.67 | 100.00 |

| Data Sets | CAPE [19] | DPD [15] | Feng et al. [12] | Ours |
|---|---|---|---|---|
| NYU dataset | 3 ms | - | 7000 ms | 14 ms |
| SR4000 dataset | 1 ms | 43.17 s | 532 ms | 2 ms |

| Scene | Total Planes | CAPE [19] PFF | CAPE [19] PMF | CAPE+ [18] PFF | CAPE+ [18] PMF | Ours PFF | Ours PMF |
|---|---|---|---|---|---|---|---|
| scene 1 | 350 | 186 | 23 | 25 | 23 | 7 | 5 |
| scene 2 | 165 | 43 | 5 | 5 | 5 | 0 | 0 |
| scene 3 | 276 | 122 | 17 | 18 | 17 | 3 | 2 |

| Scene | Total Planes | CAPE+ PFF | CAPE+ PMF | Ours PFF | Ours PMF |
|---|---|---|---|---|---|
| scene 1 | 350 | 9 | 5 | 7 | 5 |
| scene 2 | 165 | 0 | 0 | 0 | 0 |
| scene 3 | 276 | 2 | 2 | 3 | 2 |

| Superpixel Size | SE | SP |
|---|---|---|
| 8 × 10 | 91.33 | 94.17 |
| 20 × 15 | 90.57 | 92.98 |
| 40 × 36 | 88.26 | 90.44 |


© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Xu, J.; Xie, Q.; Chen, H.; Wang, J. Real-Time Plane Detection with Consistency from Point Cloud Sequences. Sensors 2021, 21, 140. https://doi.org/10.3390/s21010140
