Classification of VLF/LF Lightning Signals Using Sensors and Deep Learning Methods

Abstract

1. Introduction

Novelty and Contributions

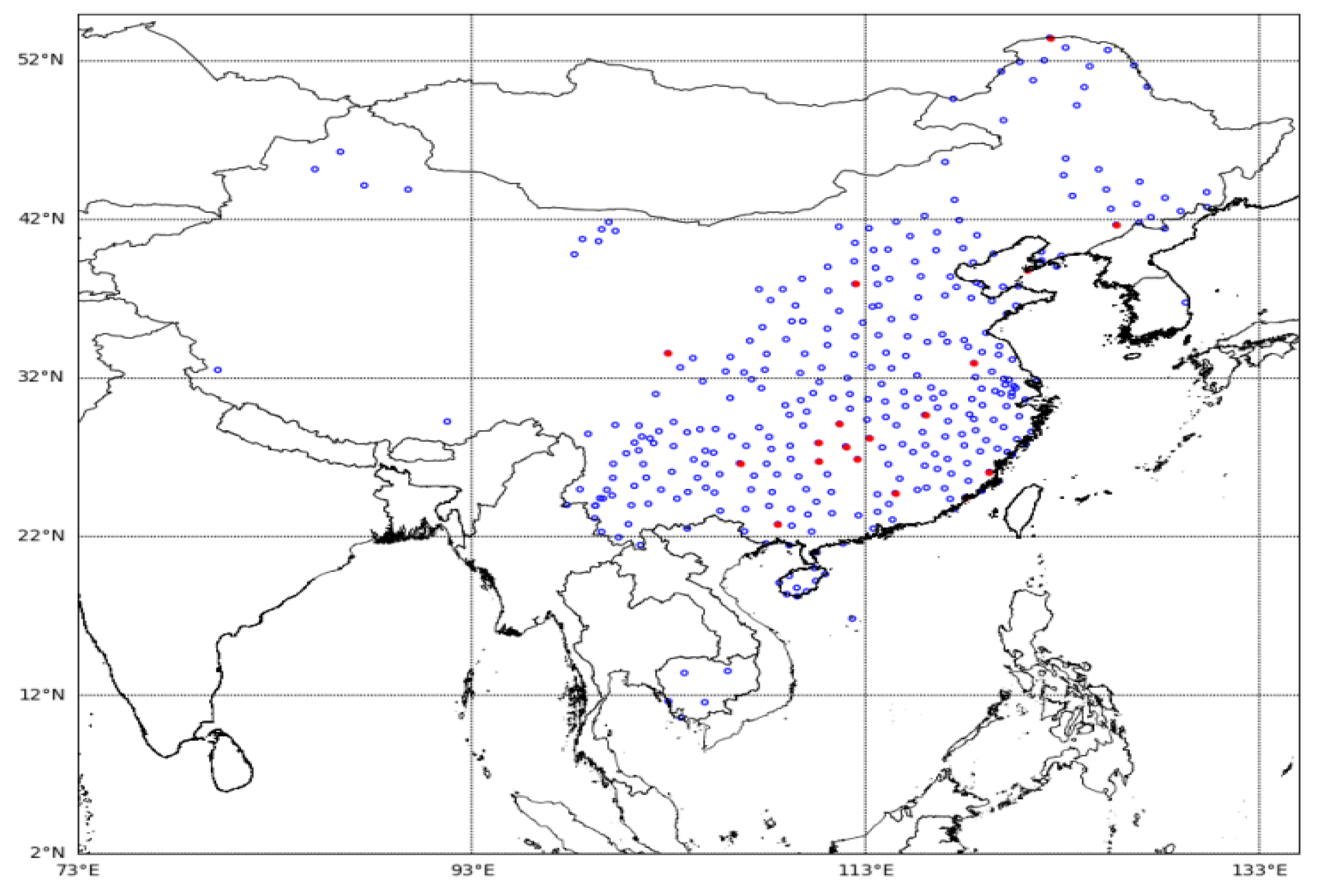

- Using the lightning waveforms and lightning location data from different regions collected by the three-dimensional lightning positioning network of the Institute of Electrical Engineering of the Chinese Academy of Sciences, a lightning waveform database was constructed for deep learning testing, training, and verification. The collected lightning signal is an electric field signal in the VLF/LF band.

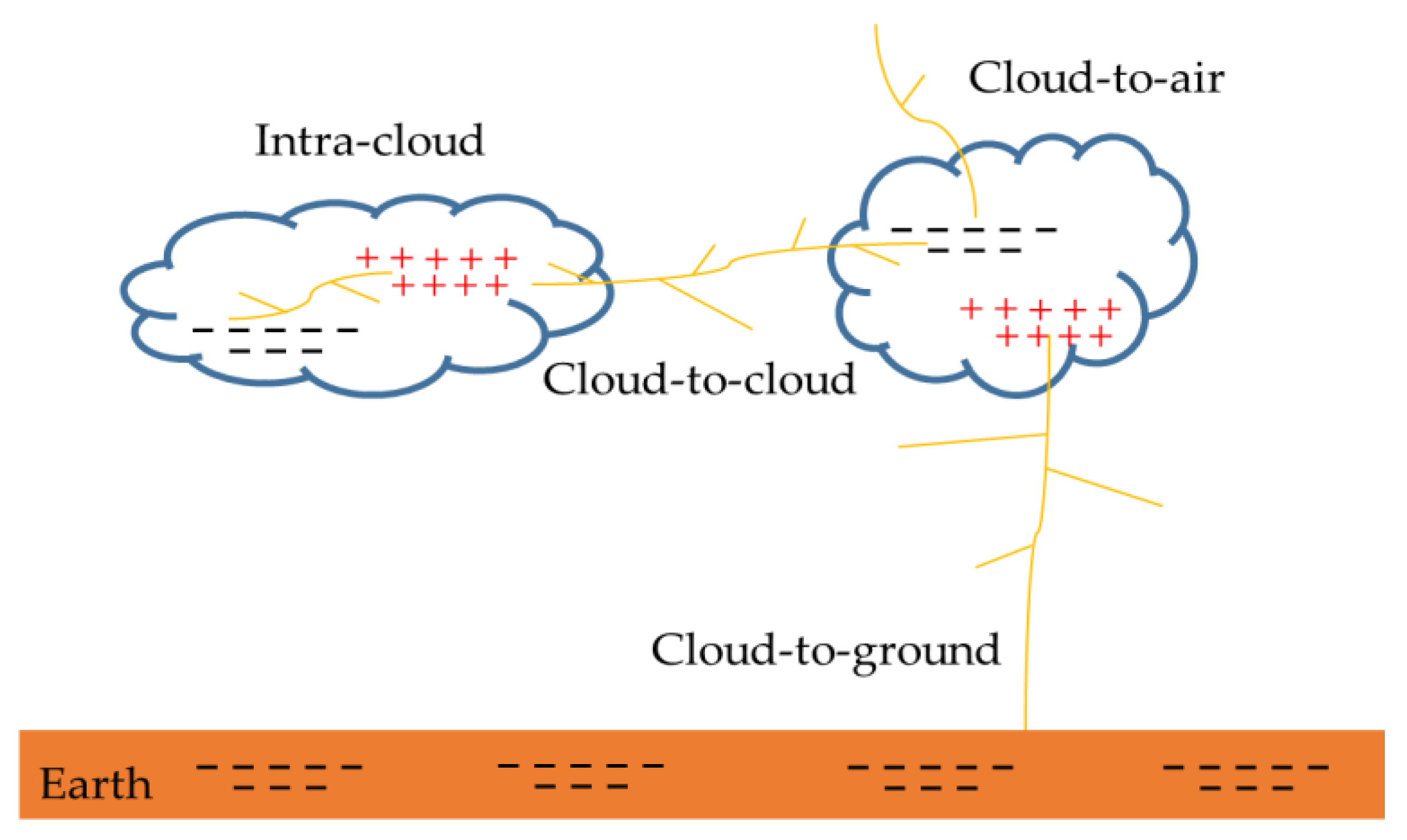

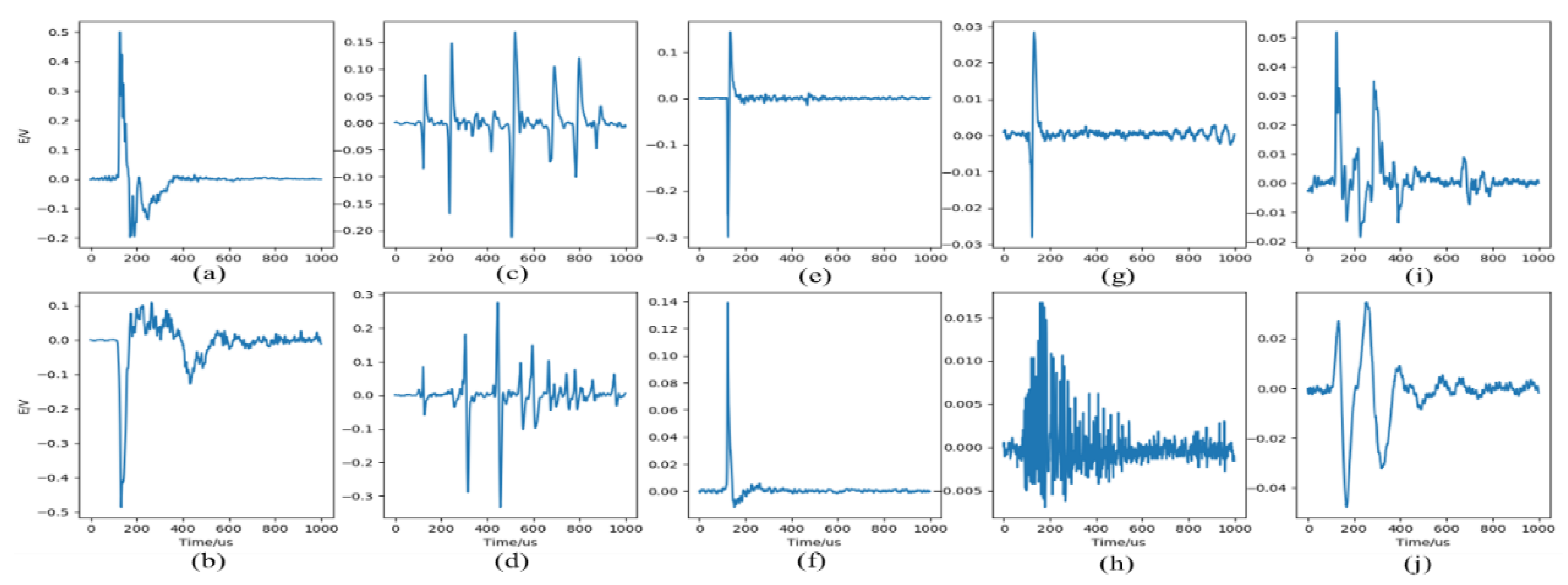

- Using the expert classification method, we labeled the waveforms in the lightning waveform database, which currently contains 10 types of lightning. Note that the database covers lightning signals received from different distances and is not limited to the basic types of lightning described earlier. This paper mainly analyzes the feasibility and effectiveness of deep learning methods for lightning signal classification.

- A lightning classification algorithm based on deep learning was proposed to replace the statistical method. The input of the algorithm is a fixed-length lightning signal, and the output is a lightning type number.

- The classification algorithm was applied to a thunderstorm process to test it in a practical setting. The model recognized the lightning recorded during this thunderstorm with an accuracy of 97.55%.
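The input and output convention described above (a fixed-length signal in, a type number out) can be sketched as follows. This is a minimal illustration, not the authors' implementation: the length of 1000 samples is taken from the model input shape (1000,1), while `classify`, `score_fn`, `dummy_scores`, and the zero-based type numbering are hypothetical names and choices introduced here.

```python
import math

INPUT_LENGTH = 1000  # samples per waveform, matching the (1000,1) input shape
NUM_TYPES = 10       # lightning types in the database


def softmax(scores):
    """Convert raw class scores to probabilities (numerically stable form)."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def classify(waveform, score_fn):
    """Return (type_number, probability) for one fixed-length waveform.

    `score_fn` is a hypothetical stand-in for the trained network: it maps
    a list of INPUT_LENGTH samples to NUM_TYPES raw scores.
    """
    if len(waveform) != INPUT_LENGTH:
        raise ValueError(f"expected {INPUT_LENGTH} samples, got {len(waveform)}")
    probs = softmax(score_fn(waveform))
    if len(probs) != NUM_TYPES:
        raise ValueError(f"expected {NUM_TYPES} scores, got {len(probs)}")
    # Zero-based type number (an assumption made for this sketch).
    type_number = max(range(NUM_TYPES), key=lambda i: probs[i])
    return type_number, probs[type_number]


def dummy_scores(waveform):
    """Toy scorer for illustration only: favors type 3 regardless of input."""
    return [0.0, 0.0, 0.0, 2.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]


type_number, probability = classify([0.0] * INPUT_LENGTH, dummy_scores)
print(type_number, round(probability, 3))
```

Rejecting waveforms of the wrong length, rather than silently padding or cropping them, keeps any resampling decision explicit in the preprocessing stage.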

2. Dataset and Preprocessing

2.1. Lightning Dataset

2.2. Pre-Processing

- (1)

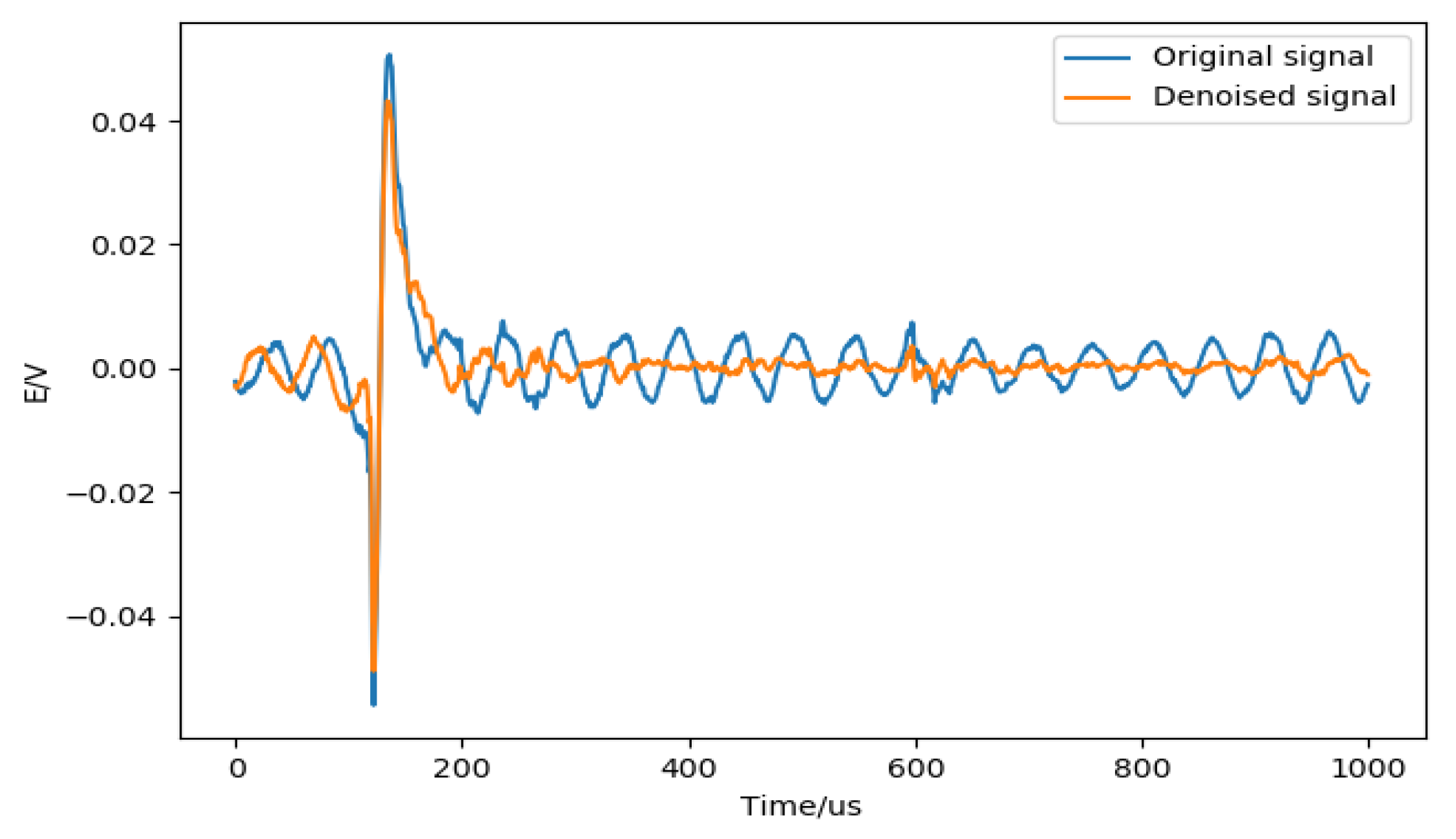

- Digital filter to reduce noise. The zero-phase digital filtering [27] method is used to remove the high-frequency noise contained in the signal. Zero-phase filtering reduces noise in the signal and preserves the lightning complex at the same time it occurs in the original. Conventional filtering reduces noise in the signal but delays the lightning complex. Figure 4 shows an example of suppressing signal noise by zero-phase digital filtering.

- (2) Data standardization (normalization) [28]. Data standardization scales the data to a specific interval according to a given algorithm, removing the units so that the data become dimensionless values. Standardization makes features of different dimensions numerically comparable, which can greatly improve the accuracy of the classifier and speed up convergence. The most typical form is normalization, in which the data are uniformly mapped to [0,1]. Data normalization methods include min-max normalization, log conversion, arctangent conversion, z-score normalization, and fuzzy quantization.
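The two preprocessing steps above can be illustrated with a small pure-Python sketch. It is not the filter used in the paper: the single-pole low-pass filter, its coefficient `alpha`, and the synthetic Gaussian pulse are assumptions chosen only to show that a forward pass followed by a backward pass leaves the peak where it was, while a single causal pass delays it. In practice a library routine such as SciPy's `scipy.signal.filtfilt` would normally be used for forward-backward filtering.

```python
import math


def single_pole_lowpass(x, alpha):
    """One causal pass of y[n] = alpha * x[n] + (1 - alpha) * y[n-1]."""
    y = []
    prev = x[0]  # start from the first sample to limit the start-up transient
    for sample in x:
        prev = alpha * sample + (1.0 - alpha) * prev
        y.append(prev)
    return y


def zero_phase_lowpass(x, alpha):
    """Filter forward, then filter the reversed result and reverse it back."""
    forward = single_pole_lowpass(x, alpha)
    backward = single_pole_lowpass(forward[::-1], alpha)
    return backward[::-1]


def min_max_normalize(x):
    """Map the samples linearly onto [0, 1]."""
    lo, hi = min(x), max(x)
    if hi == lo:
        return [0.0] * len(x)
    return [(v - lo) / (hi - lo) for v in x]


def z_score_normalize(x):
    """Shift to zero mean and scale to unit (population) standard deviation."""
    mean = sum(x) / len(x)
    std = math.sqrt(sum((v - mean) ** 2 for v in x) / len(x))
    if std == 0.0:
        return [0.0] * len(x)
    return [(v - mean) / std for v in x]


def argmax(x):
    """Index of the first largest sample."""
    return max(range(len(x)), key=lambda i: x[i])


# Synthetic symmetric pulse centered at sample 100 (illustration only).
pulse = [math.exp(-((n - 100) / 10.0) ** 2) for n in range(200)]
causal = single_pole_lowpass(pulse, alpha=0.2)
zero_phase = zero_phase_lowpass(pulse, alpha=0.2)

print("peak positions:", argmax(pulse), argmax(causal), argmax(zero_phase))
normalized = min_max_normalize(zero_phase)
print("range after min-max:", min(normalized), max(normalized))
```

The backward pass cancels the phase shift of the forward pass, which is why the zero-phase output peaks at the same sample as the input pulse.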

3. Methods

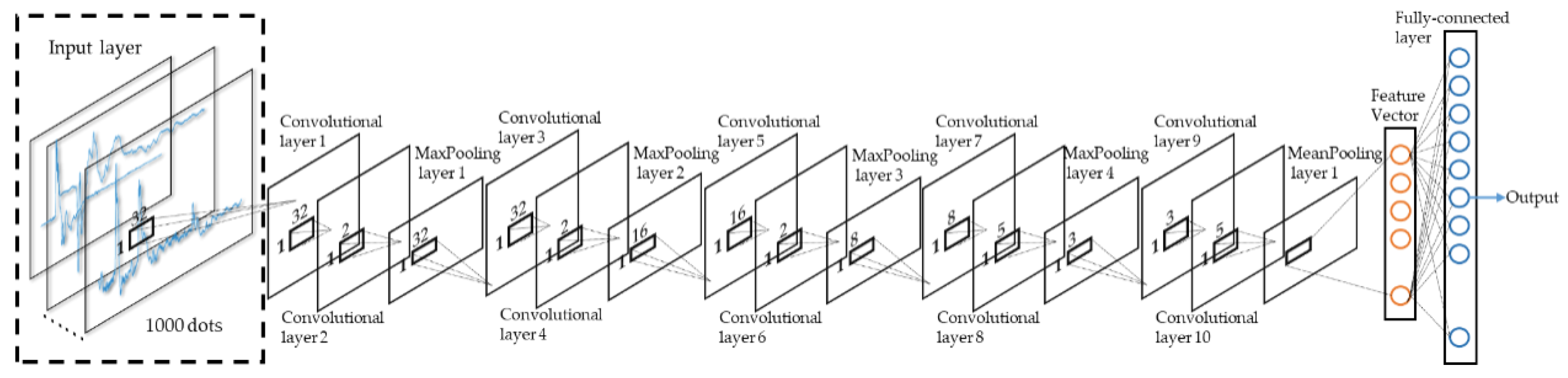

3.1. Independent Feature Branch

3.1.1. 1-D Convolution Layer

3.1.2. 1-D Pooling Layer

3.1.3. Fully-Connected Layer

3.2. Parameter Optimization

4. Result

4.1. Model Structure and Parameters

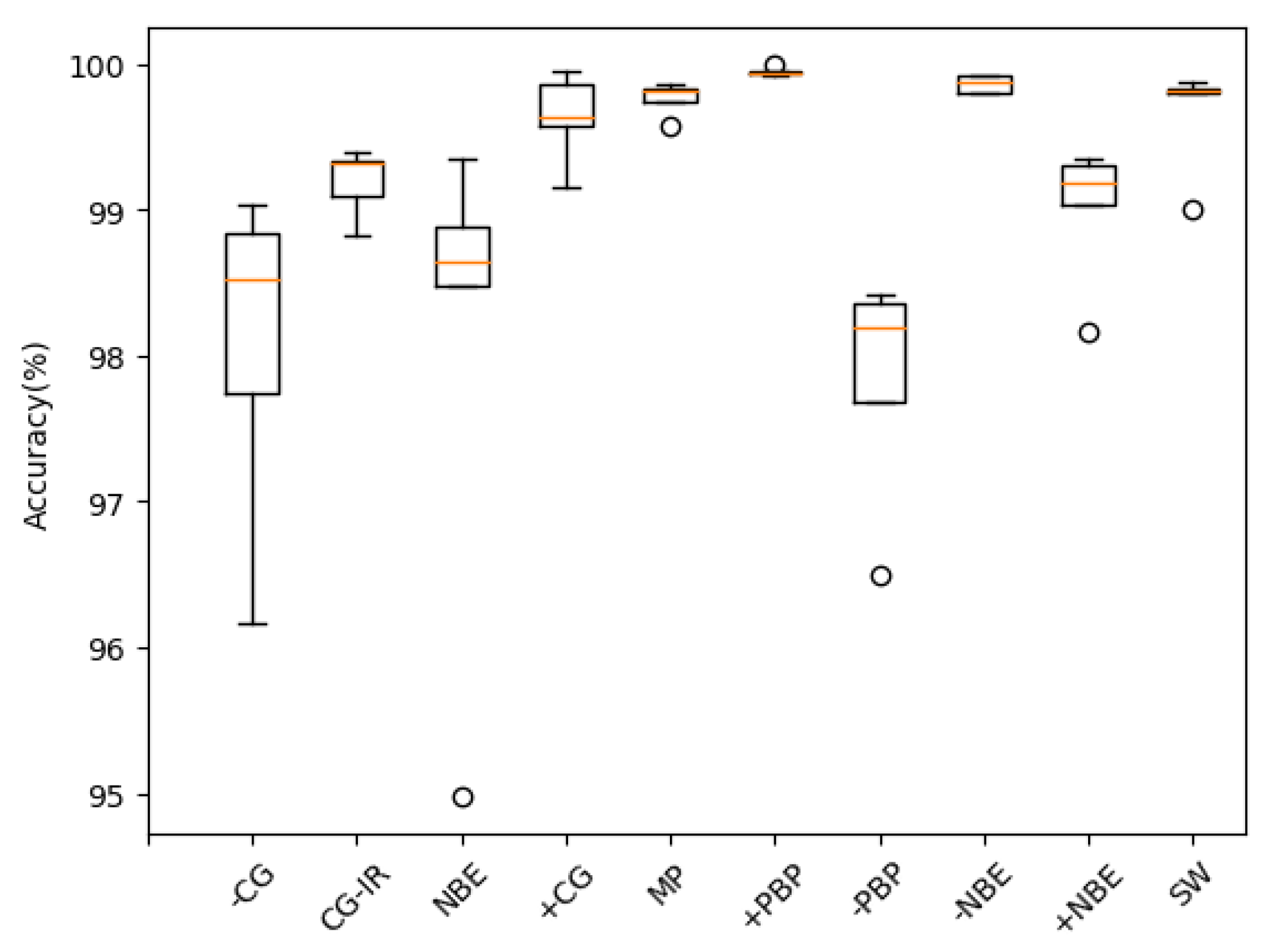

4.2. Train and Evaluate

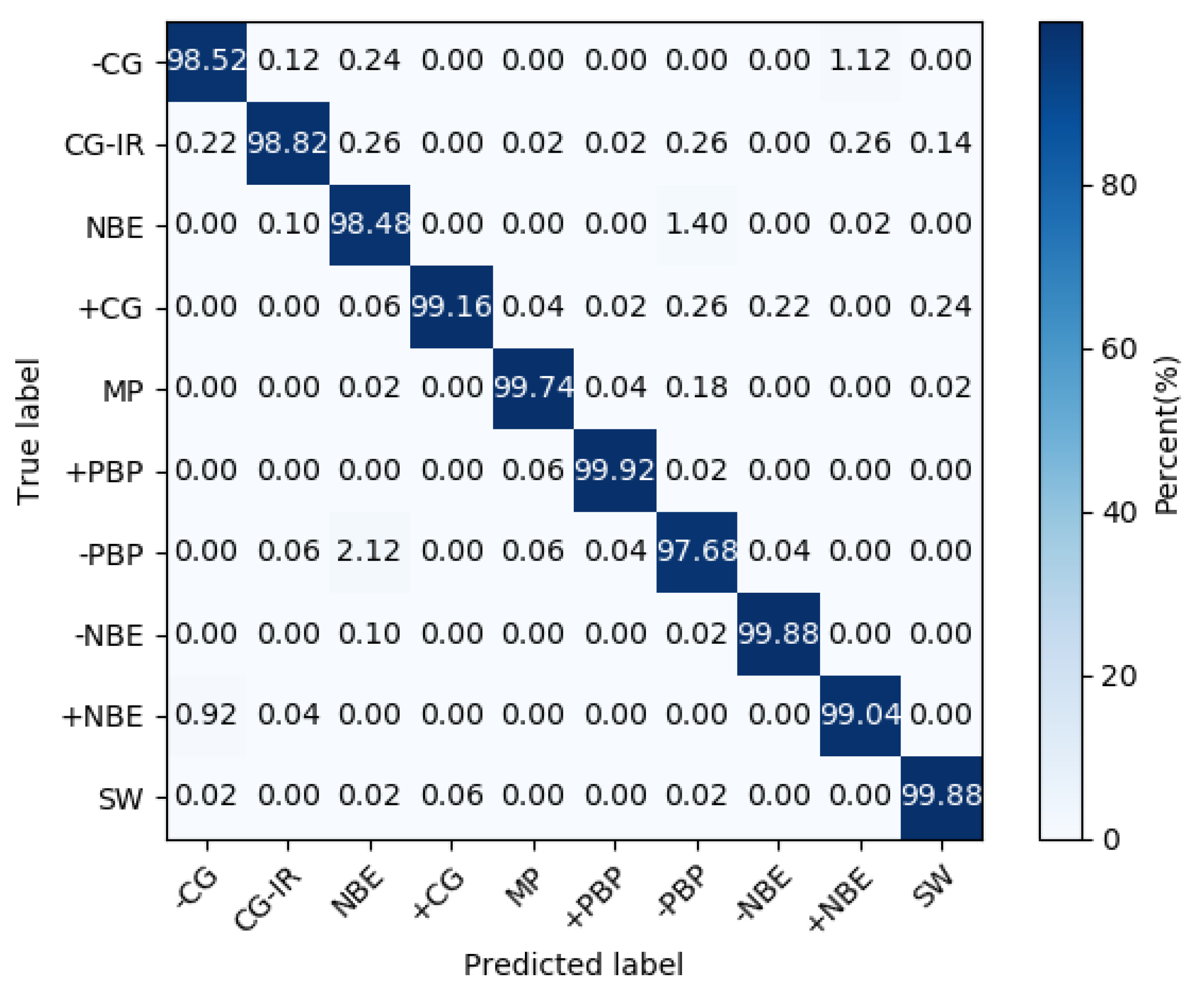

4.3. Model Test

- (1) The +CG occurrence probability was significantly lower than that of the other classes, which resulted in fewer samples in this class.

- (2) When manually verifying the classification accuracy of the model, we found a few samples whose waveforms differed significantly from those in the data set. This was also a reason why the recognition accuracy for the +CG type was significantly reduced.

5. Discussion

5.1. Methods Comparison

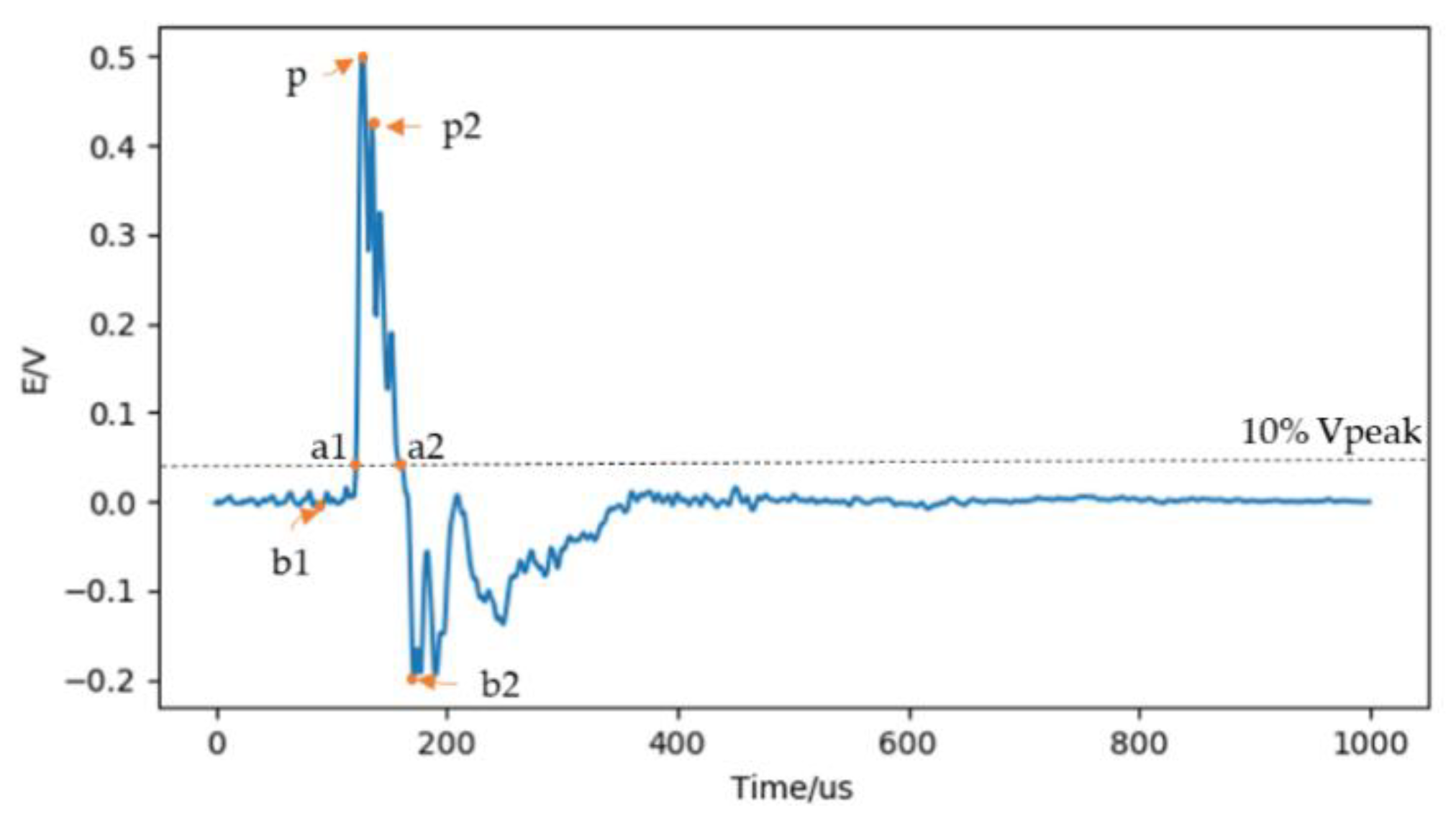

- (1) Pulse rise time. The time elapsed from the 10% peak of the waveform to the peak of the waveform.

- (2) Pulse fall time. The time elapsed from the peak of the waveform to the 10% peak of the waveform.

- (3) Pulse width. The time elapsed from the 10% peak of the rising edge to the 10% peak of the falling edge.

- (4) Forward peak-to-peak ratio. The ratio of the initial negative peak to the maximum peak.

- (5) Backward peak-to-peak ratio. The ratio of the following negative peak to the maximum peak.

- (6) Sub-peak ratio. The ratio of the maximum peak to the secondary peak.

- (7) Signal-to-noise ratio (SNR). The ratio of the average power of the signal within 20 before and after the peak point to the average power of the signal at other times. The definition uses the amplitude of the Nth point, and P denotes the point with the largest amplitude.

- (8) Pre-SNR. The ratio of the average power of the signal within 20 before and after the peak point to the average power of the signal before the peak point.

- (9) Post-SNR. The ratio of the average power of the signal within 20 before and after the peak point to the average power of the signal after the peak point.
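Several of the features listed above can be computed directly from a sampled waveform. The sketch below is one possible reading of the definitions, not the authors' code: it assumes a positive main peak, treats the window of "20" as 20 samples on each side of the peak, takes the pre- and post-SNR noise regions to be the samples outside that window, and uses a synthetic test pulse. The names `pulse_features`, `half_window`, and `demo_pulse` are hypothetical.

```python
def mean_power(samples):
    """Average of the squared amplitudes (0.0 for an empty segment)."""
    return sum(v * v for v in samples) / len(samples) if samples else 0.0


def power_ratio(signal_power, noise_power):
    """Power ratio, reported as infinity when the noise power is zero."""
    return signal_power / noise_power if noise_power > 0.0 else float("inf")


def pulse_features(x, dt=1.0, half_window=20):
    """Rise time, fall time, width, and SNR variants for a positive pulse.

    x is a list of samples, dt the sample spacing, and half_window the
    number of samples on each side of the peak counted as signal
    (assumed here to be the "20" of the definitions).
    """
    peak = max(range(len(x)), key=lambda i: x[i])
    threshold = 0.1 * x[peak]  # the 10% peak level

    # Walk outward from the peak while samples stay at or above 10% of it.
    start = peak
    while start > 0 and x[start - 1] >= threshold:
        start -= 1
    end = peak
    while end < len(x) - 1 and x[end + 1] >= threshold:
        end += 1

    lo = max(0, peak - half_window)
    hi = min(len(x), peak + half_window + 1)
    signal_power = mean_power(x[lo:hi])

    return {
        "rise_time": (peak - start) * dt,
        "fall_time": (end - peak) * dt,
        "pulse_width": (end - start) * dt,
        "snr": power_ratio(signal_power, mean_power(x[:lo] + x[hi:])),
        "pre_snr": power_ratio(signal_power, mean_power(x[:lo])),
        "post_snr": power_ratio(signal_power, mean_power(x[hi:])),
    }


def demo_pulse(n):
    """Synthetic pulse: fast rise over samples 90-100, slower fall to 120."""
    if 90 <= n <= 100:
        return ((n - 90) / 10.0) ** 2
    if 100 < n <= 120:
        return ((120 - n) / 20.0) ** 2
    return 0.0


# A constant floor of 0.01 stands in for background noise (illustration only).
signal = [max(demo_pulse(n), 0.01) for n in range(200)]
features = pulse_features(signal)
for name, value in features.items():
    print(name, value)
```

A statistical classifier of the kind compared in this section would then apply thresholds to such features, whereas the deep learning model works on the waveform samples themselves.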

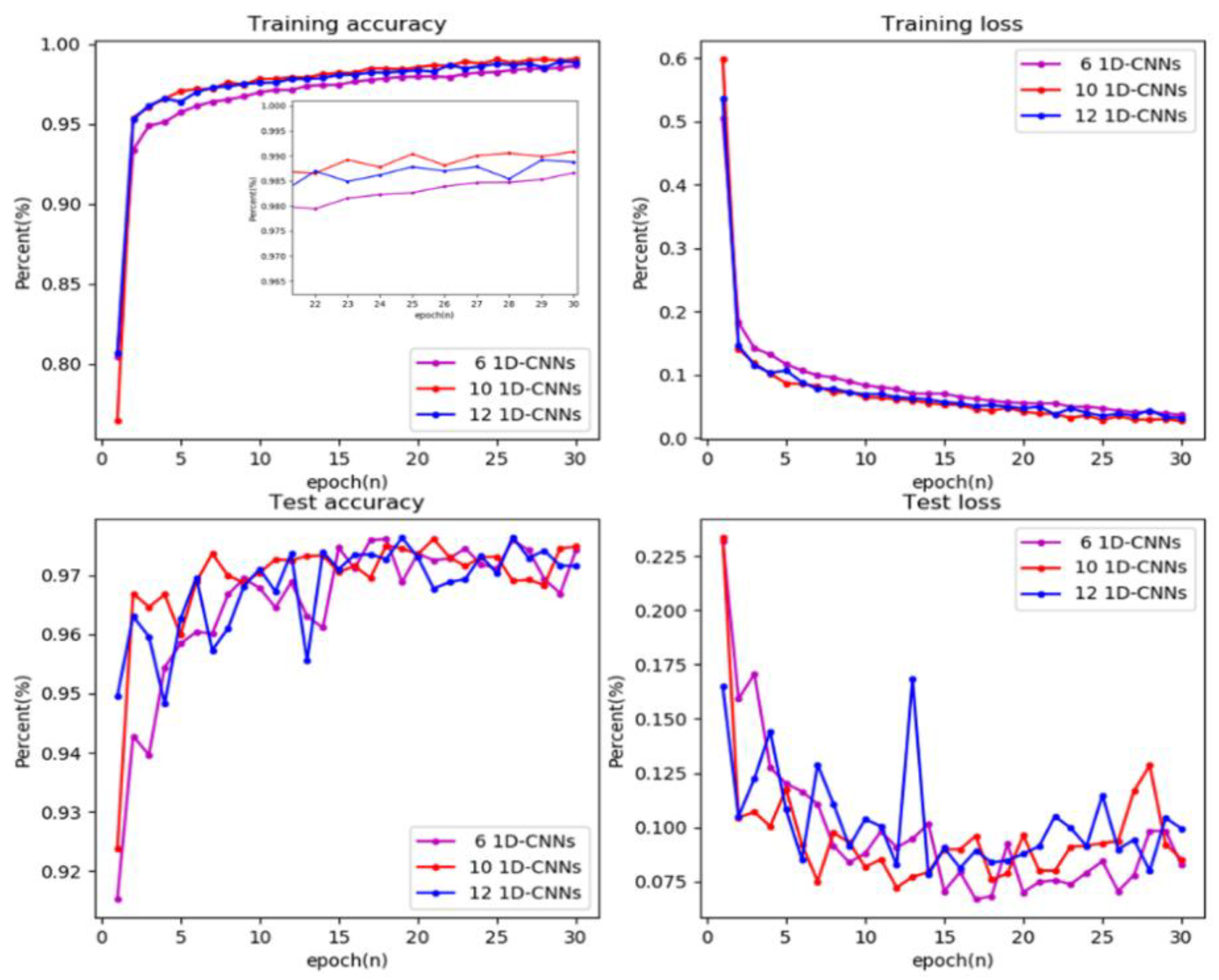

5.2. Model Structure Analysis

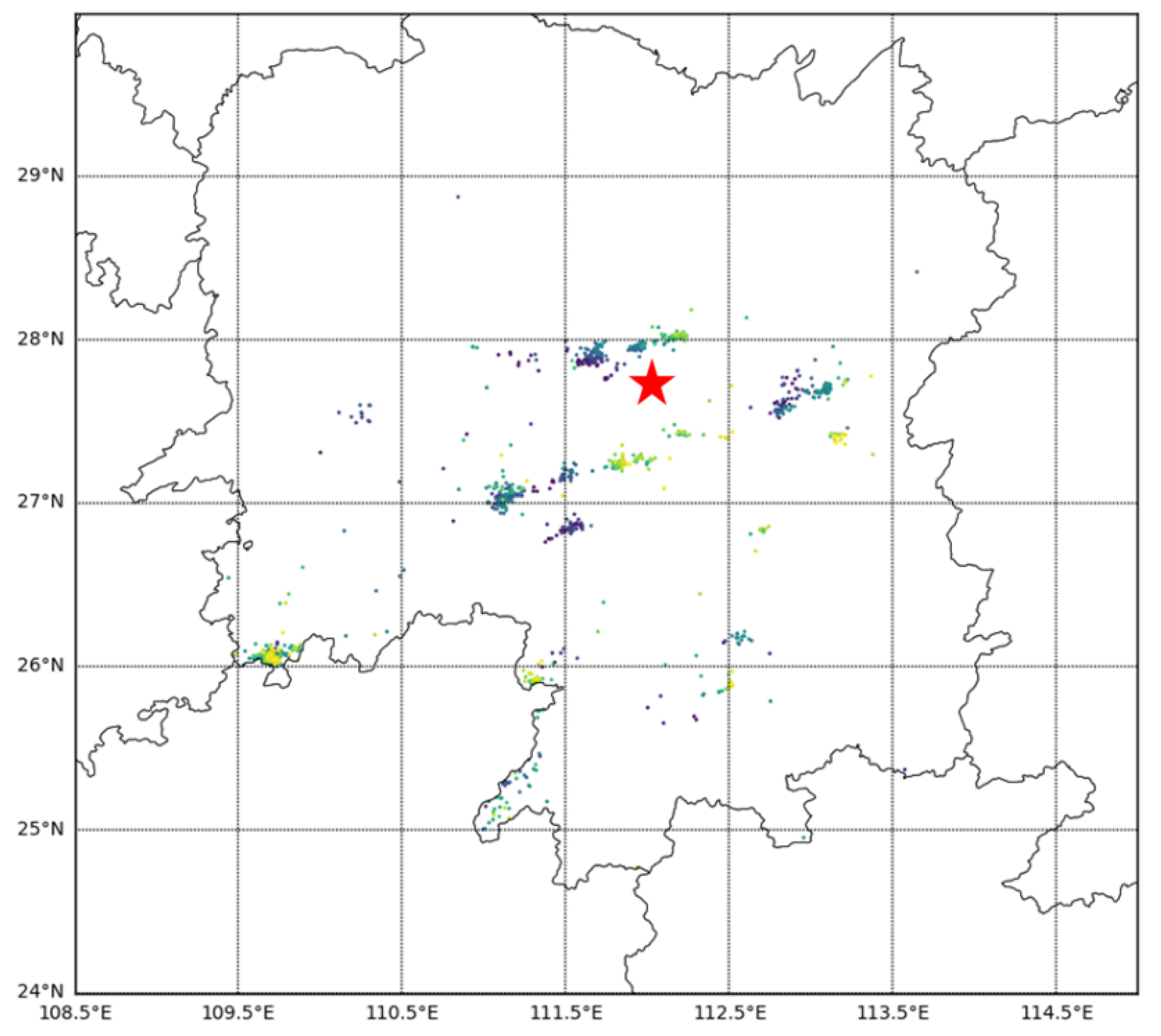

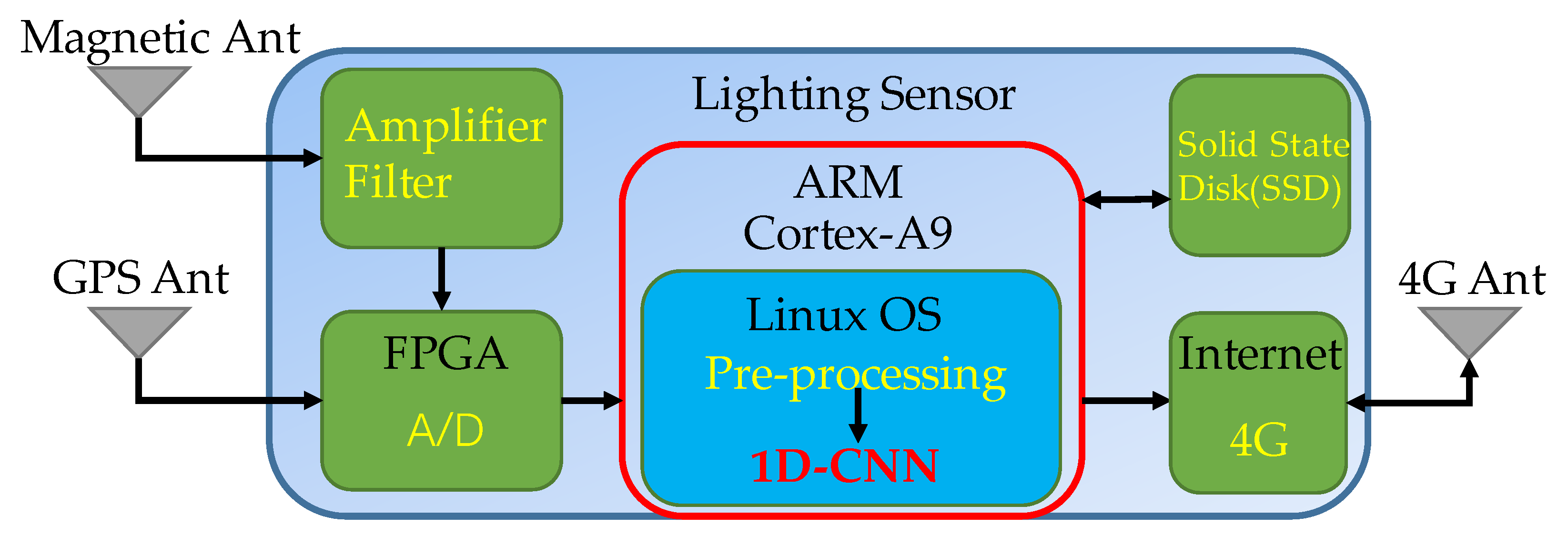

5.3. Real-Time Analysis

6. Conclusions

Supplementary Materials

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

1. Rakov, V.; Uman, M.; Raizer, Y. Lightning: Physics and Effects. Phys. Today 2004, 57, 63–64.

2. Rodger, C.J.; Brundell, J.B.; Hutchins, M.; Holzworth, R.H. The world wide lightning location network (WWLLN): Update of status and applications. In Proceedings of the 2014 XXXIth URSI General Assembly and Scientific Symposium (URSI GASS), Beijing, China, 16–23 August 2014; pp. 1–2.

3. Rakov, V.A. Lightning phenomenology and parameters important for lightning protection. In Proceedings of the IX International Symposium on Lightning Protection, Foz do Iguaçu, Brazil, 26–30 November 2007.

4. Akinyemi, M.; Boyo, A.; Emetere, M.; Usikalu, M.; Olawole, O. Lightning a Fundamental of Atmospheric Electricity. IERI Procedia 2014, 9, 47–52.

5. Dwyer, J.; Uman, M. The physics of lightning. Phys. Rep. 2013, 534.

6. Le Vine, D.M. Sources of the strongest RF radiation from lightning. J. Geophys. Res. 1980, 85.

7. Willett, J.C.; Bailey, J.C.; Krider, E.P. A class of unusual lightning electric field waveforms with very strong high-frequency radiation. J. Geophys. Res. Atmos. 1989, 94, 16255–16267.

8. Rison, W.; Krehbiel, P.R.; Stock, M.G.; Edens, H.E.; Shao, X.M.; Thomas, R.J.; Stanley, M.A.; Zhang, Y. Observations of narrow bipolar events reveal how lightning is initiated in thunderstorms. Nat. Commun. 2016, 7, 10721.

9. Rison, W.; Thomas, R.J.; Krehbiel, P.R.; Hamlin, T.; Harlin, J. A GPS-based three-dimensional lightning mapping system: Initial observations in central New Mexico. Geophys. Res. Lett. 1999, 26, 3573–3576.

10. Thomas, R.J.; Krehbiel, P.R.; Rison, W.; Hamlin, T.; Boccippio, D.J.; Goodman, S.J.; Christian, H.J. Comparison of ground-based 3-dimensional lightning mapping observations with satellite-based LIS observations in Oklahoma. Geophys. Res. Lett. 2000, 27, 1703–1706.

11. Lyu, F.; Cummer, S.A.; Solanki, R.; Weinert, J.; McTague, L.; Katko, A.; Barrett, J.; Zigoneanu, L.; Xie, Y.; Wang, W. A low-frequency near-field interferometric-TOA 3-D Lightning Mapping Array. Geophys. Res. Lett. 2014, 41, 7777–7784.

12. Wu, T.; Wang, D.; Takagi, N. Lightning Mapping With an Array of Fast Antennas. Geophys. Res. Lett. 2018, 45, 3698–3705.

13. Smith, D.A. The Los Alamos Sferic Array: A research tool for lightning investigations. J. Geophys. Res. 2002, 107.

14. Cooray, V.; Fernando, M.; Gomes, C.; Sorenssen, T. The Fine Structure of Positive Lightning Return-Stroke Radiation Fields. IEEE Trans. Electromagn. Compat. 2004, 46, 87–95.

15. Dowden, R.L.; Brundell, J.B.; Rodger, C.J. VLF lightning location by time of group arrival (TOGA) at multiple sites. J. Atmos. Sol.-Terr. Phys. 2002, 64, 817–830.

16. LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444.

17. Cho, H.; Yoon, S.M. Divide and Conquer-Based 1D CNN Human Activity Recognition Using Test Data Sharpening. Sensors 2018, 18, 1055.

18. Liu, W.; Zhang, M.; Zhang, Y.; Liao, Y.; Huang, Q.; Chang, S.; Wang, H.; He, J. Real-Time Multilead Convolutional Neural Network for Myocardial Infarction Detection. IEEE J. Biomed. Health Inform. 2018, 22, 1434–1444.

19. Hannun, A.Y.; Rajpurkar, P.; Haghpanahi, M.; Tison, G.H.; Bourn, C.; Turakhia, M.P.; Ng, A.Y. Cardiologist-level arrhythmia detection and classification in ambulatory electrocardiograms using a deep neural network. Nat. Med. 2019, 25, 65–69.

20. Liu, W.; Wang, F.; Huang, Q.; Chang, S.; Wang, H.; He, J. MFB-CBRNN: A hybrid network for MI detection using 12-lead ECGs. IEEE J. Biomed. Health Inform. 2019.

21. Ali, Z.; Jiao, L.; Baker, T.; Abbas, G.; Abbas, Z.H.; Khaf, S. A Deep Learning Approach for Energy Efficient Computational Offloading in Mobile Edge Computing. IEEE Access 2019, 7, 149623–149633.

22. Alloghani, M.; Baker, T.; Al-Jumeily, D.; Hussain, A.; Mustafina, J.; Aljaaf, A.J. Prospects of Machine and Deep Learning in Analysis of Vital Signs for the Improvement of Healthcare Services. In Nature-Inspired Computation in Data Mining and Machine Learning; Yang, X.-S., He, X.-S., Eds.; Springer International Publishing: Cham, Switzerland, 2020.

23. Samaras, S.; Diamantidou, E.; Ataloglou, D.; Sakellariou, N.; Vafeiadis, A.; Magoulianitis, V.; Lalas, A.; Dimou, A.; Zarpalas, D.; Votis, K.; et al. Deep Learning on Multi Sensor Data for Counter UAV Applications-A Systematic Review. Sensors 2019, 19, 4837.

24. Rathore, H.; Al-Ali, A.K.; Mohamed, A.; Du, X.; Guizani, M. A Novel Deep Learning Strategy for Classifying Different Attack Patterns for Deep Brain Implants. IEEE Access 2019, 7, 24154–24164.

25. Li, D.; Wang, R.; Xie, C.; Liu, L.; Zhang, J.; Li, R.; Wang, F.; Zhou, M.; Liu, W. A Recognition Method for Rice Plant Diseases and Pests Video Detection Based on Deep Convolutional Neural Network. Sensors 2020, 20, 578.

26. Mahmood, A.; Ospina, A.G.; Bennamoun, M.; An, S.; Sohel, F.; Boussaid, F.; Hovey, R.; Fisher, R.B.; Kendrick, G.A. Automatic Hierarchical Classification of Kelps Using Deep Residual Features. Sensors 2020, 20, 447.

27. Gustafsson, F. Determining the initial states in forward-backward filtering. IEEE Trans. Signal Process. 1996, 44, 988–992.

28. Ioffe, S.; Szegedy, C. Batch Normalization: Accelerating Deep Network Training by Reducing Internal Covariate Shift. arXiv 2015, arXiv:1502.03167.

29. Krizhevsky, A.; Sutskever, I.; Hinton, G. ImageNet Classification with Deep Convolutional Neural Networks. Neural Inf. Process. Syst. 2012, 25.

30. Zhang, J.; Huang, Q.; Wu, H.; Liu, Y. A Shallow Network with Combined Pooling for Fast Traffic Sign Recognition. Information 2017, 8, 45.

31. Kingma, D.; Ba, J. Adam: A Method for Stochastic Optimization. arXiv 2014, arXiv:1412.6980.

32. Duchi, J.; Hazan, E.; Singer, Y. Adaptive Subgradient Methods for Online Learning and Stochastic Optimization. J. Mach. Learn. Res. 2011, 12, 2121–2159.

33. Cai, L. Ground-based VLF/LF Three Dimensional Total Lightning Location Technology. Ph.D. Thesis, Wuhan University, Wuhan, China, 2013.

| No. | Layer | Filter Number | Kernel Size | Pooling Window Size | Padding | Stride | Activation Function | Output Shape |
|---|---|---|---|---|---|---|---|---|
| 1 | Input | / | / | / | / | / | / | (1000,1) |
| 2 | 1D-Conv | 16 | 32 | / | √ | / | ReLU | (1000,16) |
| 3 | 1D-Conv | 16 | 32 | / | √ | / | ReLU | (1000,16) |
| 4 | Max-Pooling | / | / | 2 | × | 2 | / | (500,16) |
| 5 | 1D-Conv | 32 | 32 | / | √ | / | ReLU | (500,32) |
| 6 | 1D-Conv | 32 | 32 | / | √ | / | ReLU | (500,32) |
| 7 | Max-Pooling | / | / | 2 | × | 2 | / | (250,32) |
| 8 | 1D-Conv | 64 | 16 | / | √ | / | ReLU | (250,64) |
| 9 | 1D-Conv | 64 | 16 | / | √ | / | ReLU | (250,64) |
| 10 | Max-Pooling | / | / | 2 | × | 2 | / | (125,64) |
| 11 | 1D-Conv | 128 | 8 | / | √ | / | ReLU | (125,128) |
| 12 | 1D-Conv | 128 | 8 | / | √ | / | ReLU | (125,128) |
| 13 | Max-Pooling | / | / | 5 | × | 5 | / | (25,128) |
| 14 | 1D-Conv | 256 | 3 | / | √ | / | ReLU | (25,256) |
| 15 | 1D-Conv | 256 | 3 | / | √ | / | ReLU | (25,256) |
| 16 | Mean-Pooling | / | / | 5 | × | 5 | / | 256 |
| 17 | Dense | / | / | / | / | / | Softmax | 10 |
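The output shapes in the table can be checked with a short shape trace. The sketch below is only a consistency check, not the model itself. It assumes that the √ in the Padding column means length-preserving ("same") padding with a convolution stride of 1, and that each pooling layer uses non-overlapping windows; `LAYERS` and `trace_shapes` are hypothetical names. The final mean-pooling row is left out of the trace: a window of 5 over 25 positions would leave 5 positions, whereas the table reports a flat output of 256, so the table alone does not settle how that layer reduces the sequence.

```python
# (kind, filter number, kernel or pooling window size), copied from rows 2 to 15.
LAYERS = [
    ("conv", 16, 32), ("conv", 16, 32), ("pool", None, 2),
    ("conv", 32, 32), ("conv", 32, 32), ("pool", None, 2),
    ("conv", 64, 16), ("conv", 64, 16), ("pool", None, 2),
    ("conv", 128, 8), ("conv", 128, 8), ("pool", None, 5),
    ("conv", 256, 3), ("conv", 256, 3),
]


def trace_shapes(length, channels, layers):
    """Return the (length, channels) output shape after every layer."""
    shapes = []
    for kind, filters, size in layers:
        if kind == "conv":
            # Assumed "same" padding and stride 1: the length is unchanged
            # and the channel count becomes the number of filters.
            channels = filters
        elif kind == "pool":
            # Window equal to stride: the length is divided by the window size.
            length //= size
        else:
            raise ValueError(f"unknown layer kind: {kind}")
        shapes.append((length, channels))
    return shapes


shapes = trace_shapes(length=1000, channels=1, layers=LAYERS)
for row_number, shape in enumerate(shapes, start=2):
    print(row_number, shape)
```

Under these assumptions the trace reproduces the Output Shape column for rows 2 to 15, ending at (25,256).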

| K | −CG (%) | CG-IR (%) | NBR (%) | +CG (%) | MP (%) | +PBP (%) | −PBP (%) | −NBE (%) | +NBE (%) | SW (%) | Ave (%) |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | 98.84 | 99.40 | 98.88 | 99.64 | 99.58 | 100.00 | 96.50 | 99.80 | 98.16 | 99.82 | 99.06 |
| 2 | 96.16 | 99.10 | 94.98 | 99.86 | 99.86 | 99.94 | 98.20 | 99.80 | 99.30 | 99.00 | 98.62 |
| 3 | 99.04 | 99.34 | 99.36 | 99.96 | 99.82 | 99.94 | 98.36 | 99.92 | 99.18 | 99.84 | 99.47 |
| 4 | 97.74 | 99.32 | 98.64 | 99.58 | 99.84 | 99.96 | 98.42 | 99.92 | 99.36 | 99.80 | 99.26 |
| 5 | 98.52 | 98.82 | 98.48 | 99.16 | 99.74 | 99.92 | 97.68 | 99.88 | 99.04 | 99.88 | 99.11 |
| Ave | 98.06 | 99.20 | 98.07 | 99.64 | 99.77 | 99.95 | 97.83 | 99.86 | 99.01 | 99.67 | 99.11 |

| | −CG | CG-IR | NBE | +CG | MP | +PBP | −PBP | −NBE | +NBE | SW | Total |
|---|---|---|---|---|---|---|---|---|---|---|---|
| TP | 1321 | 1852 | 1180 | 179 | 260 | 417 | 830 | 729 | 298 | 2345 | 9411 |
| NP | 23 | 28 | 84 | 32 | 8 | 4 | 32 | 3 | 0 | 22 | 236 |
| Acc(%) | 98.29 | 98.51 | 93.35 | 84.83 | 97.01 | 99.05 | 96.29 | 99.59 | 100.00 | 99.07 | 97.55 |
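The accuracy row of this table is consistent with Acc = TP / (TP + NP). The check below assumes that TP counts the correctly recognized samples of each type and NP the misrecognized ones, an interpretation inferred from the numbers rather than stated in the table; the variable names are hypothetical.

```python
CLASSES = ["-CG", "CG-IR", "NBE", "+CG", "MP", "+PBP", "-PBP", "-NBE", "+NBE", "SW"]
TP = [1321, 1852, 1180, 179, 260, 417, 830, 729, 298, 2345]  # TP row of the table
NP = [23, 28, 84, 32, 8, 4, 32, 3, 0, 22]                    # NP row of the table


def accuracy_percent(correct, wrong):
    """Share of correctly recognized samples, in percent."""
    return 100.0 * correct / (correct + wrong)


per_class = {
    name: round(accuracy_percent(tp, n_wrong), 2)
    for name, tp, n_wrong in zip(CLASSES, TP, NP)
}
# Overall accuracy pools all samples instead of averaging the class values.
overall = round(accuracy_percent(sum(TP), sum(NP)), 2)

for name, acc in per_class.items():
    print(f"{name:>6}: {acc:.2f}%")
print(f"overall: {overall:.2f}%")
```

Because the overall figure pools all samples, the small +CG class (211 samples) lowers it far less than it would lower an unweighted mean of the per-class accuracies.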

| Type | CC | CG | +NBE/−NBE | Other | Acc (%) |
|---|---|---|---|---|---|
| −CG | 2232 | 2752 | 0 | 16 | 55.04 |
| CG-IR | 4196 | 582 | 0 | 222 | 11.64 |
| NBE | 4049 | 1 | 0 | 950 | 80.98 |
| +CG | 0 | 3436 | 0 | 1564 | 68.72 |
| MP | 522 | 3 | 0 | 4475 | 10.44 |
| +PBP | 1202 | 0 | 2 | 3796 | 24.04 |
| −PBP | 3507 | 1 | 1 | 1491 | 70.14 |
| −NBE | 0 | 4 | 3206 | 1790 | 64.12 |
| +NBE | 0 | 904 | 3953 | 143 | 79.06 |
| SW | 4620 | 66 | 0 | 314 | 92.40 |
| Average | | | | | 55.66 |
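The Acc column of this table can be reproduced from the counts, which also shows how the fine-grained types relate to the coarse output categories of the statistical method. In the sketch below, the `EXPECTED` mapping is an assumption: for each type it names the column whose count reproduces the reported accuracy, and it is not taken from the text. `ROWS`, `COLUMNS`, and `EXPECTED` are hypothetical names.

```python
COLUMNS = ("CC", "CG", "NBE", "Other")  # "NBE" stands for the +NBE/−NBE column

# Counts per type, in the column order above, copied from the table.
ROWS = {
    "-CG": (2232, 2752, 0, 16),
    "CG-IR": (4196, 582, 0, 222),
    "NBE": (4049, 1, 0, 950),
    "+CG": (0, 3436, 0, 1564),
    "MP": (522, 3, 0, 4475),
    "+PBP": (1202, 0, 2, 3796),
    "-PBP": (3507, 1, 1, 1491),
    "-NBE": (0, 4, 3206, 1790),
    "+NBE": (0, 904, 3953, 143),
    "SW": (4620, 66, 0, 314),
}

# Assumed correct category per type, inferred from the reported accuracies.
EXPECTED = {
    "-CG": "CG", "CG-IR": "CG", "NBE": "CC", "+CG": "CG", "MP": "CC",
    "+PBP": "CC", "-PBP": "CC", "-NBE": "NBE", "+NBE": "NBE", "SW": "CC",
}


def row_accuracy(type_name):
    """Percentage of a type's samples that fall in its expected category."""
    counts = ROWS[type_name]
    correct = counts[COLUMNS.index(EXPECTED[type_name])]
    return 100.0 * correct / sum(counts)


accuracies = {name: round(row_accuracy(name), 2) for name in ROWS}
average = round(sum(row_accuracy(name) for name in ROWS) / len(ROWS), 2)

for name, acc in accuracies.items():
    print(f"{name:>6}: {acc:.2f}%")
print(f"average: {average:.2f}%")
```

Every row sums to 5000 samples, so the average here is a plain mean over the ten types.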

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Wang, J.; Huang, Q.; Ma, Q.; Chang, S.; He, J.; Wang, H.; Zhou, X.; Xiao, F.; Gao, C. Classification of VLF/LF Lightning Signals Using Sensors and Deep Learning Methods. Sensors 2020, 20, 1030. https://doi.org/10.3390/s20041030
