1. Introduction

As one of the most important means of obtaining 3D point clouds, laser scanning has developed rapidly. Scanned data have been widely used in recent decades in areas such as autonomous driving [1], high-precision maps [2], virtual reality (VR) and augmented reality (AR) [3,4]. However, limited by the scanning conditions, the scanned objects are often seriously incomplete. Various factors may influence LiDAR point densities and spatial distributions; for example, Balsa-Barreiro et al. [5,6] analyse variations in point density across different land covers with an airborne oscillating mirror laser scanner.

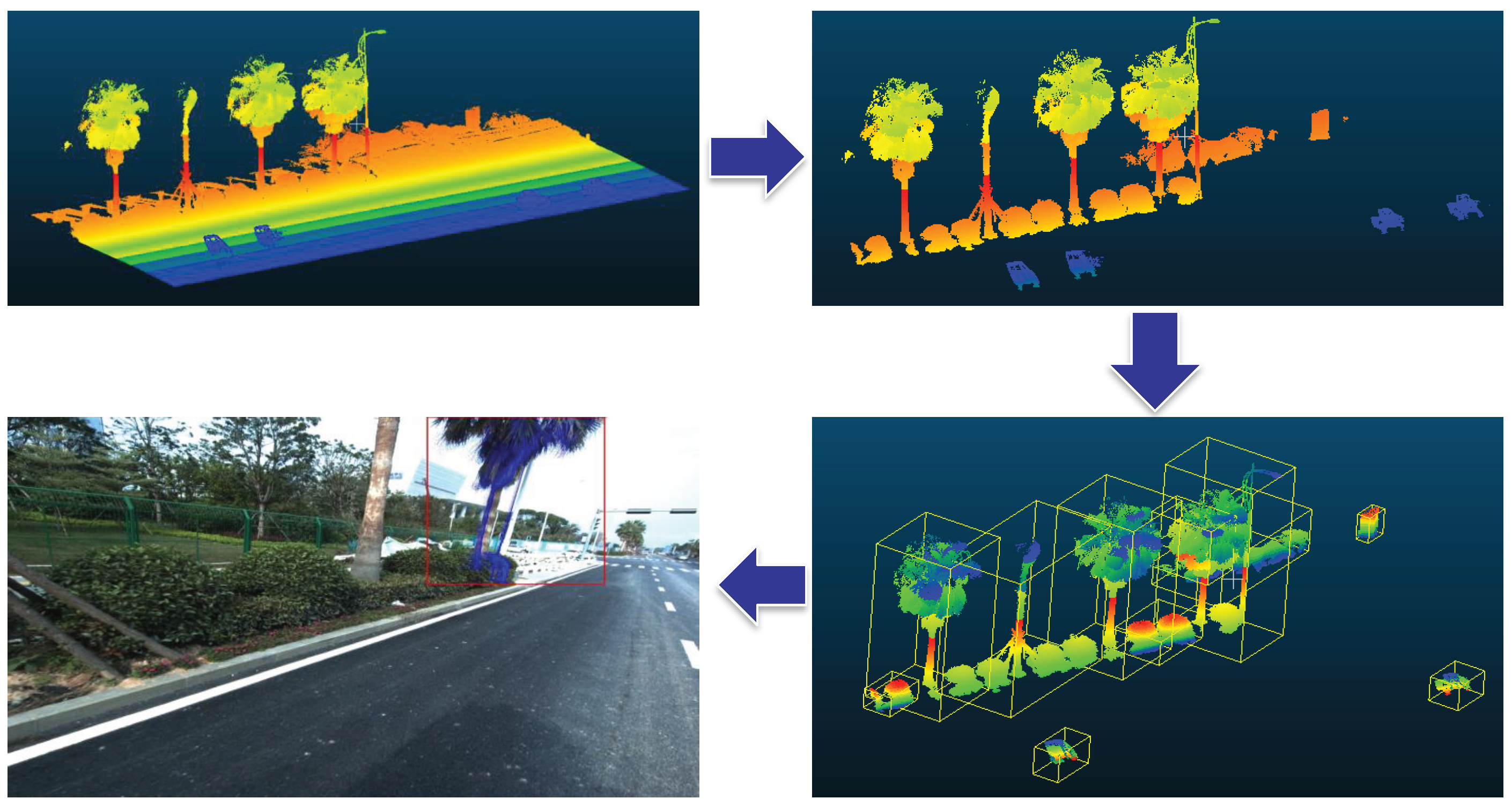

Figure 1 shows an example of a parking lot (acquired by the mobile scanning system RIEGL VMX-450), where most of the cars are incomplete due to occlusion. This is a common yet challenging problem in the completion of point cloud objects.

Previous completion methods usually focus on filling in small parts, where the basic structure is relatively complete. A. Ley et al. [7] propose a simple convex optimization formulation that exploits geometric constraints, which has been demonstrated for denoising point clouds and filling in small holes on E-SAR data. Z. Cai et al. [8] propose an occluded boundary detection method based on last-echo information, but it is only suitable for small-footprint LiDAR point clouds [9]. For airborne laser scanning systems, the data are severely affected by occlusion in trees. G. Zhou et al. [10,11] and J. Zhang et al. [12] fuse LiDAR and aerial imagery, to extract buildings or for other applications, in order to eliminate the influence of occlusion. H. Wang et al. [13] utilize the Hough Forest framework for object detection and, to deal with occlusion from adjacent objects, propose distance-weighted voting. Some methods detect symmetries and use this prior knowledge to fill in missing parts [14,15], but these methods may fail when the data are not symmetrical. We have also noticed that photogrammetry, in addition to allowing LiDAR point clouds to be completed, provides more detailed information on surface textures and colors in some cases [16,17].

However, most of these methods rely on hand-designed feature descriptors or rules and are limited to small-scale completion. In practice, objects often suffer from serious incompleteness, which causes traditional methods to fail in most cases and thus calls for learning-based frameworks. As far as we know, no work in remote sensing has yet used a deep-learning-based method to complete point cloud objects.

The completion of large missing parts is essentially a generation problem, and some recent generative methods provide useful inspiration. ShapeNet [18], a large-scale CAD dataset, has promoted the development of 3D generative methods, which can be roughly divided into voxel-based and point-cloud-based methods. J. Wu et al. [19] propose 3D Generative Adversarial Networks (3D-GANs) to predict voxelized 3D models and achieve superior results compared to other unsupervised methods. H. Fan et al. [20] focus on generating point clouds from a single image and propose the point-cloud-generative network (PSGN). They use the Chamfer Distance (CD) to calculate the distance between the generated model and the ground truth. X. Yan et al. [21] utilize projection maps to obtain the 3D spatial distribution. M. Tatarchenko et al. [22] propose the octree generating network (OGN), which has achieved state-of-the-art results among the voxel-based methods. An exception is the recent work of C.-H. Lin et al. [23], which produces dense multi-view projected point clouds rather than spatial 3D models directly.

Because point clouds are irregular and unordered, they are difficult to process within deep-learning frameworks. To address this problem, C. R. Qi et al. [24] propose PointNet, a foundational work for point cloud classification and segmentation. The network is further improved in their follow-up work, PointNet++ [25], which learns local features with increasing contextual scales through a hierarchical architecture.

In order to reconstruct objects with large missing parts, and inspired by the above point cloud generative networks, we propose the Point Cloud Completion Network (PCCNet), which is the first image-guided deep-learning-based framework for completing scanned objects; it uses single-view 2D images to generate complete point cloud models. To jointly consider the 2D and 3D information, an attention-based module is designed to fuse the 2D and 3D features adaptively, and the decoder then learns to construct the whole model. Furthermore, a projection supervision scheme is used so that the reconstruction results are spatially consistent across multi-view observations.

Figure 2 gives an overview of our method: (a) and (b) are the inputs of PCCNet, (c) is the output of PCCNet (an intermediate result), and (d) is the final result after alignment with the scanned point clouds by the Iterative Closest Point (ICP) algorithm [26].

2. Network Architecture

In this section, we introduce the network framework, which completes 3D object models based on a real image. Our algorithm involves three steps: (1) We obtain the training and testing data (see the supplementary material). (2) Taking the image and point cloud pairs as input, the network is trained to generate the corresponding point clouds. (3) The generated point clouds are aligned with the initial point clouds to obtain complete 3D models.

2.1. Problem Statement

Our goal is to generate complete 3D point clouds from an original image. An object is represented as a set of N unordered 3D points, where N is the number of points. To balance a faithful representation of the 3D models against the computational burden, N is set to 1024. Points are sampled on the surfaces of CAD models in ShapeNet.
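The sampling step can be illustrated with a minimal NumPy sketch. It assumes a triangle mesh given as vertex and face arrays and uses area-weighted triangle selection with uniform barycentric coordinates; the function name `sample_surface` and this particular sampling scheme are illustrative assumptions, not necessarily the procedure used by the authors.

```python
import numpy as np

def sample_surface(vertices, faces, n_points=1024, rng=None):
    """Sample n_points uniformly on a triangle mesh (hypothetical helper)."""
    rng = np.random.default_rng() if rng is None else rng
    tri = vertices[faces]                                   # (F, 3, 3)
    # Triangle areas decide how often each face is chosen.
    cross = np.cross(tri[:, 1] - tri[:, 0], tri[:, 2] - tri[:, 0])
    areas = 0.5 * np.linalg.norm(cross, axis=1)
    idx = rng.choice(len(faces), size=n_points, p=areas / areas.sum())
    # Uniform barycentric coordinates inside each chosen triangle.
    r1 = np.sqrt(rng.random(n_points))
    r2 = rng.random(n_points)
    a, b, c = 1.0 - r1, r1 * (1.0 - r2), r1 * r2
    t = tri[idx]
    return a[:, None] * t[:, 0] + b[:, None] * t[:, 1] + c[:, None] * t[:, 2]

# Toy mesh: a unit square in the z = 0 plane, split into two triangles.
square_vertices = np.array([[0, 0, 0], [1, 0, 0], [1, 1, 0], [0, 1, 0]], dtype=float)
square_faces = np.array([[0, 1, 2], [0, 2, 3]])
square_points = sample_surface(square_vertices, square_faces, 1024,
                               np.random.default_rng(0))
```

Area weighting keeps the point density roughly even across large and small faces, which matters for CAD meshes whose triangles vary widely in size.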

The network learns a mapping from a 2D image I and the incomplete model T to the corresponding complete model; the mapping is determined by the learned network parameters. For evaluation, a given incomplete model is paired with its image to form the input.

Then, in the merging phase, the aligned generated point clouds are combined with the initial point clouds.
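As a compact summary of the two steps in this subsection, the mapping and the merging can be written as below. The notation is assumed for illustration only: $f_{\theta}$ stands for the network with parameters $\theta$, $P_{g}$ for the generated point cloud, $\tilde{P}_{g}$ for its aligned version, $P_{0}$ for the initial point cloud and $P$ for the merged result. Writing the merge as a set union is also an assumption.

```latex
P_{g} = f_{\theta}(T, I), \qquad P = \tilde{P}_{g} \cup P_{0}
```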

2.2. PCCNet Architecture

To complete shapes with large holes, we propose a novel network to generate point clouds, as shown in Figure 3. Unlike conventional reconstruction networks, our network takes two inputs: the incomplete 3D shape and its corresponding image. In the training phase, the point clouds are obtained in two stages.

First, in the encoding phase, we use a 2D encoder to extract the image feature and a 3D encoder to obtain the features of the incomplete shape. Then, we design an attention-based module to fuse the 2D and 3D features, which learns to adjust the weights of the two parts adaptively. After fusing the two features, the network has a view of the whole object that draws not only on the 2D appearance but also on spatial and geometric information. Next, a decoder composed of several convolutional and deconvolutional layers learns to map the fused features to complete point clouds. The output is the generated point cloud, represented as a matrix.

Specifically, the architecture is as follows. An image and an incomplete shape with 1024 points make up the input pair, which is fed into the 2D-3D encoder. The 2D encoder contains five convolutional layers with ReLU activations and produces a 2048-dimensional feature map of the image. For the 3D encoder, we adopt the basic structure of PointNet++: three set abstraction levels, each including sampling, grouping and PointNet layers, extract the 3D information and yield a 1024-dimensional feature of the 3D part. To jointly consider the 2D and 3D information, an attention-based fusion module fuses the 2D and 3D features. First, the concatenated features of the two encoders are fed into a fully connected layer and a sigmoid layer to form two weights between 0 and 1, which represent the relative significance of the two features. Then, two fully connected layers learn to further integrate them, and the result is reshaped into a feature map with 8 channels to fit the decoder.
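The attention-based fusion can be sketched in NumPy as below. Only the 2048 and 1024 feature sizes and the layer sequence (one fully connected layer plus sigmoid for the weights, then two fully connected layers) come from the text. The hidden and output sizes, the random initialisation, the ReLU between the two fully connected layers and the choice to multiply each feature by its weight are assumptions made for illustration.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def init_params(dim_2d=2048, dim_3d=1024, hidden=256, out=128, seed=0):
    """Random parameters; hidden and out sizes are hypothetical."""
    rng = np.random.default_rng(seed)
    d = dim_2d + dim_3d
    return {
        "w_att": rng.normal(0, 0.01, (2, d)), "b_att": np.zeros(2),
        "w1": rng.normal(0, 0.01, (hidden, d)), "b1": np.zeros(hidden),
        "w2": rng.normal(0, 0.01, (out, hidden)), "b2": np.zeros(out),
    }

def attention_fuse(feat_2d, feat_3d, params):
    """Fuse a 2D and a 3D feature vector using two learned scalar weights."""
    concat = np.concatenate([feat_2d, feat_3d])              # (2048 + 1024,)
    # Weighted branch: fully connected layer + sigmoid -> two weights in (0, 1).
    weights = sigmoid(params["w_att"] @ concat + params["b_att"])
    # Assumed use of the weights: scale each modality by its own weight.
    weighted = np.concatenate([weights[0] * feat_2d, weights[1] * feat_3d])
    # Two fully connected layers integrate the weighted features.
    hidden = np.maximum(0.0, params["w1"] @ weighted + params["b1"])
    fused = params["w2"] @ hidden + params["b2"]
    return weights, fused

fusion_params = init_params()
feature_rng = np.random.default_rng(1)
fusion_weights, fused_feature = attention_fuse(
    feature_rng.normal(size=2048), feature_rng.normal(size=1024), fusion_params)
```

Removing the weighted branch and feeding the plain concatenation into the two fully connected layers corresponds to the ablation discussed in Section 3.2.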

Inspired by the single-view generative networks, the decoder contains four convolutional layers, one deconvolutional layer and two fully connected layers, which recover the 3D distribution from the feature space. To preserve more fine-grained structures from the initial 3D models, a skip connection from the third set abstraction level is added, similar to the structure of U-Net [27]. After the last fully connected layer, the map is reshaped to a 1024 × 3 matrix.

2.3. Loss Function

Inspired by the single-view generative networks, we use the Chamfer Distance (CD) as the criterion for measuring the distance between two models, here the generated model and the ground truth. For each point p in one model, CD takes the distance to its nearest point q in the other model, and it does so in both directions. CD can be computed efficiently, and the overall distance is the mean over all points in the two shapes. Both prior single-view generative work and our experiments confirm that CD provides a good measurement of spatial distance. Additionally, we add a projection loss to train the network. At each iteration, the generated point clouds and the ground truth are rotated by the same random transformation. They are then projected onto a 2D image. For every projected point, the pixel it falls on and its three neighboring pixels are labeled as foreground (white).
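The Chamfer Distance described above can be sketched in NumPy as follows. The use of squared Euclidean distances and of a per-direction mean are assumptions consistent with the description that the overall distance is the mean over all points; the function name is illustrative.

```python
import numpy as np

def chamfer_distance(pred, gt):
    """Symmetric Chamfer Distance between point sets pred (N, 3) and gt (M, 3)."""
    diff = pred[:, None, :] - gt[None, :, :]
    sq_dist = np.sum(diff ** 2, axis=-1)          # (N, M) squared distances
    pred_to_gt = sq_dist.min(axis=1).mean()       # nearest ground-truth point per prediction
    gt_to_pred = sq_dist.min(axis=0).mean()       # nearest prediction per ground-truth point
    return float(pred_to_gt + gt_to_pred)

cd_rng = np.random.default_rng(0)
cloud_a = cd_rng.random((1024, 3))
cloud_b = cd_rng.random((1024, 3))
cd_ab = chamfer_distance(cloud_a, cloud_b)
```

The pairwise matrix needs N × M entries, which is inexpensive for 1024 points per shape.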

To ensure multi-view consistency while capturing fine-grained parts, we adopt the projection as an additional supervision signal. Note that a recent work [23] generates multi-view projections directly and is designed for dense point cloud generation. In contrast, PCCNet targets real images and measures the discrepancy between projections. The projection loss is the per-pixel discrepancy between the two projected images of the generated model and the ground truth, evaluated at each pixel location i.
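The projection supervision can be illustrated with the NumPy sketch below. It assumes an orthographic projection, a 64 × 64 image, a 2 × 2 block as the pixel plus its three neighbors, and a mean absolute difference as the per-pixel discrepancy. None of these specifics are stated in the text, and this version is not differentiable, so it only illustrates the measurement, not the training code.

```python
import numpy as np

def random_rotation(rng):
    """Random 3D rotation matrix obtained from a QR decomposition."""
    q, r = np.linalg.qr(rng.normal(size=(3, 3)))
    q = q * np.sign(np.diag(r))
    if np.linalg.det(q) < 0:          # force a proper rotation (det = +1)
        q[:, 0] = -q[:, 0]
    return q

def project(points, rotation, size=64):
    """Rotate points in [-0.5, 0.5]^3 and rasterise them into a binary image."""
    rotated = points @ rotation.T
    # A rotated unit cube stays within radius sqrt(3)/2, so this maps x, y to [0, 1].
    uv = (rotated[:, :2] / np.sqrt(3.0) + 0.5) * (size - 1)
    uv = np.clip(np.floor(uv).astype(int), 0, size - 2)
    image = np.zeros((size, size), dtype=np.float32)
    for du in (0, 1):                 # the hit pixel and its three neighbors
        for dv in (0, 1):
            image[uv[:, 1] + dv, uv[:, 0] + du] = 1.0
    return image

def projection_loss(pred, gt, rng, size=64):
    """Mean per-pixel discrepancy under one shared random rotation."""
    rot = random_rotation(rng)
    return float(np.mean(np.abs(project(pred, rot, size) - project(gt, rot, size))))

proj_rng = np.random.default_rng(0)
proj_pred = proj_rng.random((1024, 3)) - 0.5
proj_gt = proj_rng.random((1024, 3)) - 0.5
proj_value = projection_loss(proj_pred, proj_gt, proj_rng)
```

Drawing a new rotation at every iteration exposes the network to many viewpoints over the course of training, which is how the scheme encourages multi-view consistency.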

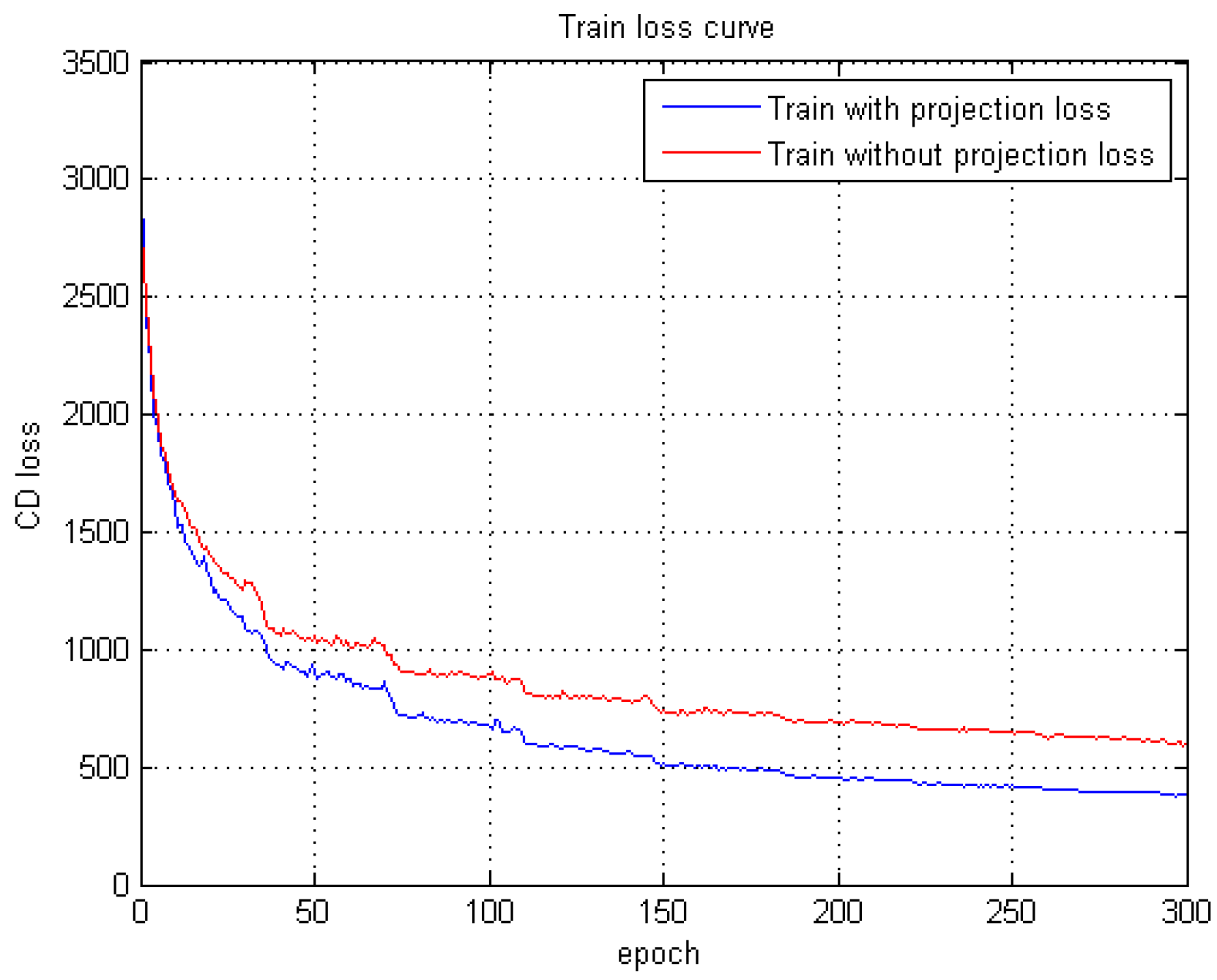

Experiments comparing training with and without the projection loss show that it improves both training speed and accuracy (Section 3.2). The total objective function combines the CD loss and the projection loss.
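For reference, one plausible way to write the loss terms of this subsection is given below. The notation is assumed: $S_1$ and $S_2$ are the generated model and the ground truth, $x_i$ and $y_i$ are the pixels at location $i$ in the two projected images, $\Omega$ is the set of pixel locations, and $\lambda$ is a hypothetical weighting factor. The squared norm in the CD term and the absolute difference in the projection term are assumptions rather than choices confirmed by the text.

```latex
\begin{aligned}
L_{CD}(S_1, S_2) &= \frac{1}{|S_1|} \sum_{p \in S_1} \min_{q \in S_2} \lVert p - q \rVert_2^2
                  + \frac{1}{|S_2|} \sum_{q \in S_2} \min_{p \in S_1} \lVert p - q \rVert_2^2, \\
L_{proj} &= \frac{1}{|\Omega|} \sum_{i \in \Omega} \lvert x_i - y_i \rvert, \\
L &= L_{CD} + \lambda \, L_{proj}.
\end{aligned}
```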

3. Experiment

In this section, we provide implementation details of the proposed PCCNet along with the employed datasets. To evaluate the capability of 3D reconstruction, PCCNet is first compared with two single-view reconstruction methods. It is then compared with a state-of-the-art MLS completion approach.

3.1. Dataset and Implementation Details

Dataset. Our network is trained on ShapeNetCore55, which covers 55 common object categories with approximately 51,300 unique 3D models. To construct the image and point cloud pairs for training, we render CAD models with complex backgrounds from one fixed viewpoint (looking down at 20 degrees) to mimic real images. At the same time, we sample the CAD surfaces to obtain point clouds. All of the sampled point clouds are normalized into a 1 m cube and centered at the origin. We split the dataset into training and testing sets at a ratio of 4:1.
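The normalization step can be sketched as follows. The sketch assumes that the bounding-box center is moved to the origin and that a single uniform scale makes the longest side equal to 1, so that the object's proportions are preserved; the text does not state which centering or scaling convention was used.

```python
import numpy as np

def normalize_to_unit_cube(points):
    """Center a point cloud at the origin and scale it to fit a cube of side 1."""
    mins, maxs = points.min(axis=0), points.max(axis=0)
    center = (mins + maxs) / 2.0              # bounding-box center (assumed convention)
    extent = float((maxs - mins).max())       # longest side of the bounding box
    if extent == 0.0:                         # degenerate cloud: only center it
        return points - center
    return (points - center) / extent

norm_rng = np.random.default_rng(0)
raw_cloud = norm_rng.random((1024, 3)) * np.array([2.0, 5.0, 1.0]) + 3.0
unit_cloud = normalize_to_unit_cube(raw_cloud)
```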

In the testing phase, point clouds are generated from real street photos together with scanned point clouds acquired by a RIEGL VMX-450 MLS system. However, the MLS data suffer from incomplete scanning, especially at the back of objects. We first briefly introduce the MLS system and then, to give a clear view of our method, describe how the training data are produced.

MLS system. The system consists of five main parts, as shown in Figure 4: a mobile laser scanning system, an optical camera system, a global positioning system, an inertial navigation system and a Distance Measurement Indicator (DMI). The core device is the mobile laser scanning system, i.e., a RIEGL VMX-450 MLS system, which provides low-noise, gapless scan lines at a measurement rate of 550,000 pts/s and a scan rate of up to 200 lines/s. Meanwhile, to form the picture-point-cloud pairs, the optical camera system, which contains four optical digital cameras to capture the surrounding environment, takes photos at the same time. The other three systems support the scanning procedure.

Preparation of the training and testing data. The data consist of two parts: ShapeNet data for training and MLS data for testing. ShapeNet contains a large number of mesh models; we first sample points on the surfaces of these meshes to obtain the complete models. To form incomplete models, we select random planes through the centers of the models and cut away one half. The paired images are rendered with randomly selected background images. The procedure for making the ShapeNet training data is shown in Figure 5a. For the MLS data, as shown in Figure 6, the first step is to remove the ground and extract individual MLS objects. Then, based on the recorded parameters of each image and the 3D-2D projection relationships, we obtain accurate image and point cloud pairs. Because of the one-to-many mapping between 3D models and 2D images, the MLS pairs for testing are obtained through careful selection.
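The construction of incomplete training models by cutting with a random plane can be sketched as below. The sketch assumes that the model center is the centroid of the sampled points and that the plane normal is drawn uniformly at random; the function name `cut_half` is hypothetical.

```python
import numpy as np

def cut_half(points, rng):
    """Keep only the points on one side of a random plane through the centroid."""
    normal = rng.normal(size=3)
    normal /= np.linalg.norm(normal)          # random unit normal of the cutting plane
    center = points.mean(axis=0)              # assumed definition of the model center
    side = (points - center) @ normal         # signed distance of each point to the plane
    return points[side >= 0.0], normal, center

cut_rng = np.random.default_rng(0)
full_cloud = cut_rng.random((1024, 3)) - 0.5
half_cloud, cut_normal, cut_center = cut_half(full_cloud, cut_rng)
```

Because the kept part usually has fewer than 1024 points, a pipeline that needs a fixed input size would have to resample it; how that was handled is not described in the text.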

Implementation details. The network is implemented in the TensorFlow framework and trained with the Adam optimizer [28]. We run the code on a server with two Titan X GPUs. The network is trained from scratch for 300 epochs in total with a batch size of 50. The learning rate decays automatically, following the setting of PointNet++. The size of the input pictures is

, and each generated point cloud contains 1024 points. For the 2D encoder, the kernel size of the convolutional layers is

with no padding. The parameters of the 3D encoder are derived from PointNet++: the numbers of sampled points are 512 and 128, and each local group has 64 points, with ball radii of 0.35 and 0.45. As for the decoder, the kernel size of the deconvolutional layer is

. Besides, we set ReLU as the activation function.

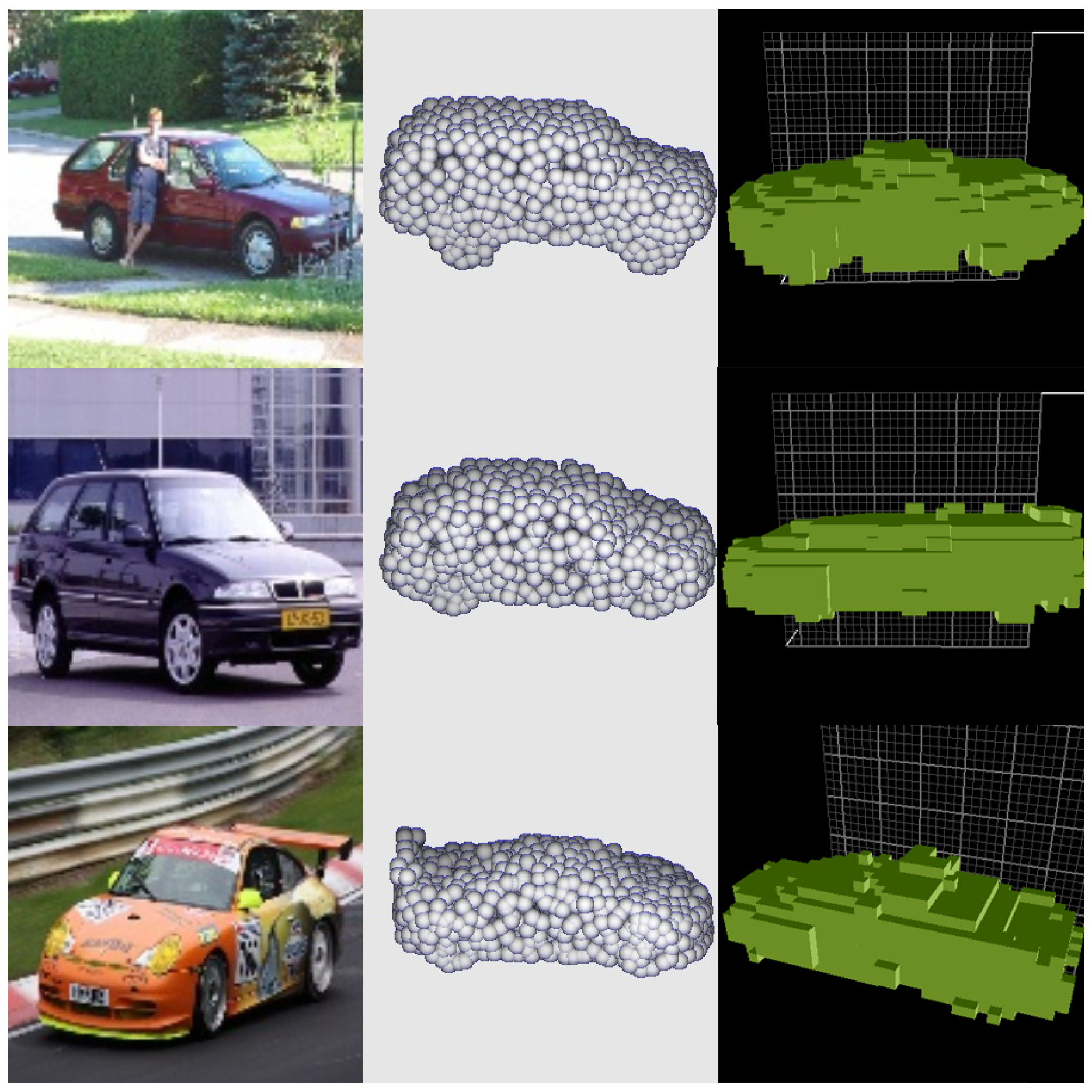

3.2. Evaluation of the Proposed PCCNet

Reconstruction performance of PCCNet. To give an intuitive understanding of PCCNet, we select five categories from ShapeNetCore55 for training and testing. To simulate the real environment, the CAD data are composited with several real scenes. Figure 7 shows four selected cars; it can be seen that the point clouds generated by PCCNet share a similar distribution with the ground truth.

To measure the contribution of the attention-based fusion module, we remove the weighted branch (a fully connected layer and a sigmoid layer) and keep the two fully connected layers. The results are reported in a separate column of Table 1 and Table 2. Compared with the complete structure, this ablated variant has lower accuracy.

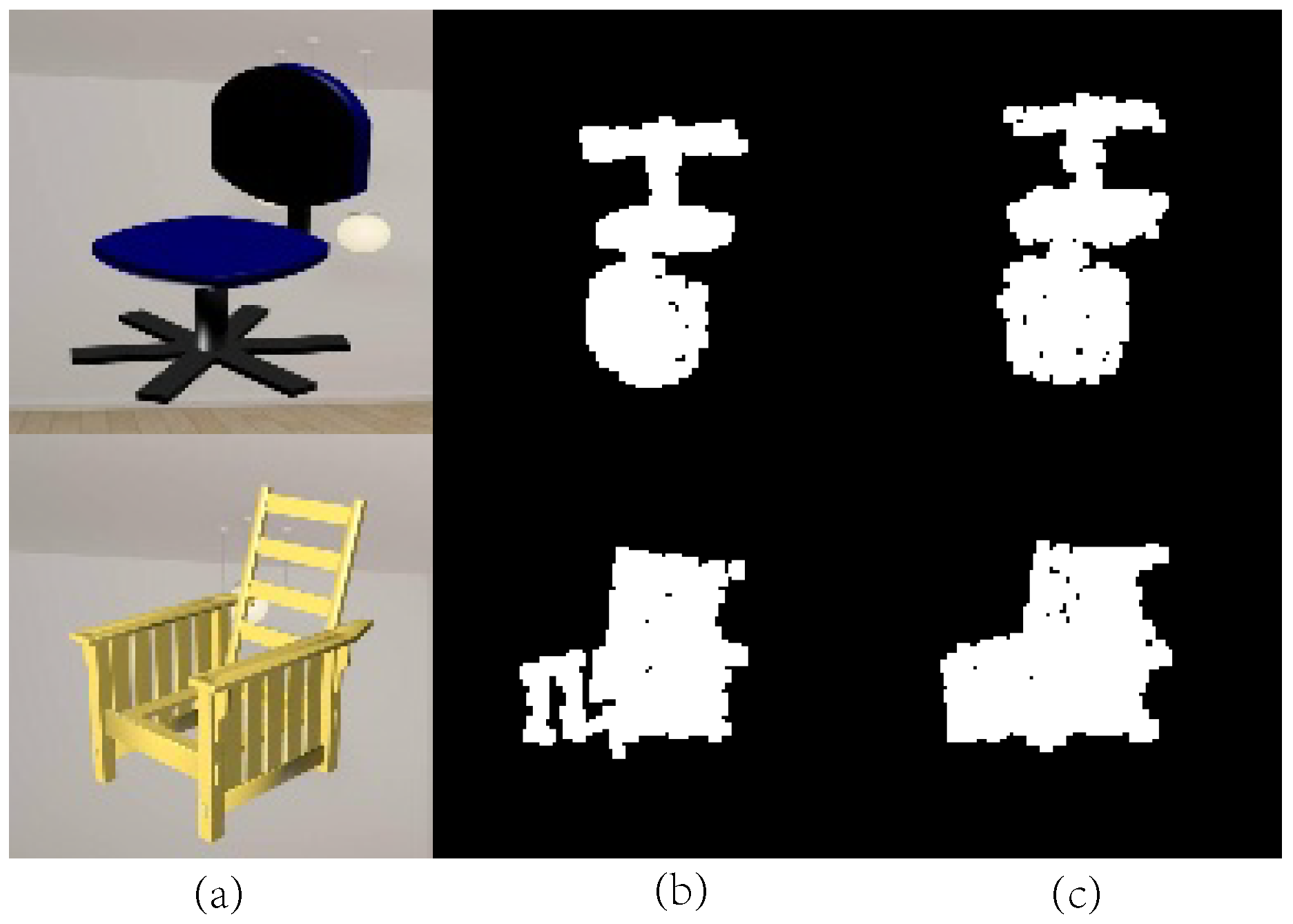

The function of projection is to delineate the outline of an object. Compared with volumetric methods [29], which cannot delineate some fine-grained parts, our method can exhibit more detailed parts, thus accelerating training and improving its quality. Figure 8 shows two samples of the projection results with some fine-grained parts. The training comparison is shown in Figure 9: after adding the projection loss, the CD loss decreases faster and higher accuracy is achieved.

Comparisons with state-of-the-art generative networks. To evaluate the reconstruction capability, PCCNet is compared with PSGN [20] and OGN [22], reported as state-of-the-art 3D object generation networks. The comparison between PCCNet and OGN is measured by Intersection over Union (IoU), which is widely adopted by voxel-based methods, while the comparison between PCCNet and PSGN is measured by CD, which is widely used by point-cloud-based methods. Comparisons with both networks are shown in Figure 10 and Figure 11, following their original settings and displays, which demonstrate that our generated 3D models are more similar to the ground truth and more complete.
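To make the voxel-based metric concrete, the sketch below voxelizes two point clouds and computes their Intersection over Union. The grid resolution of 32 and the assumption that points lie in a unit cube centered at the origin are illustrative; the evaluation in the paper follows the original settings of the compared methods, which are not reproduced here.

```python
import numpy as np

def voxelize(points, resolution=32):
    """Occupancy grid for points assumed to lie in [-0.5, 0.5]^3."""
    idx = np.floor((points + 0.5) * resolution).astype(int)
    idx = np.clip(idx, 0, resolution - 1)     # points on the upper boundary go to the last cell
    grid = np.zeros((resolution,) * 3, dtype=bool)
    grid[idx[:, 0], idx[:, 1], idx[:, 2]] = True
    return grid

def voxel_iou(grid_a, grid_b):
    """Intersection over Union of two boolean occupancy grids."""
    union = np.logical_or(grid_a, grid_b).sum()
    if union == 0:                            # two empty grids are treated as identical
        return 1.0
    return float(np.logical_and(grid_a, grid_b).sum() / union)

iou_rng = np.random.default_rng(0)
iou_cloud = iou_rng.random((1024, 3)) - 0.5
iou_grid = voxelize(iou_cloud)
```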

Statistics of the reconstruction accuracy on five categories are shown in Table 1 and Table 2. In the two tables, separate columns report PCCNet with and without the projection loss, and a further column reports PCCNet without the weighted fusion module. From the results, we can see that all three PCCNet variants achieve higher accuracy than the state-of-the-art generative methods on images with complex backgrounds. Besides, the variant with the projection loss performs better than the variant without it, since multi-view consistency is considered.

3.3. Comparison with Traditional Point Completion Works

Due to the scanning conditions, objects in scanned point clouds often suffer from severe incompleteness. Because traditional point completion methods require roughly complete models, they may fail in cases where large structures are missing. In contrast, our proposed data-driven completion framework provides a beneficial solution for object completion in such extreme cases.

Specifically, using the network pre-trained on ShapeNet, real street images and incomplete object models are fed into the network to generate a complete model. Then, using the Iterative Closest Point (ICP) registration method [26] provided in the Point Cloud Library (PCL), the generated point clouds are aligned with the initial point clouds, followed by merging and normalizing to form complete models.
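The align-then-merge step can be illustrated with a small point-to-point ICP written in NumPy. This is a stand-in for illustration only: the paper uses the ICP implementation from PCL, whereas the sketch below uses brute-force nearest neighbors and the SVD-based (Kabsch) rigid fit, with a fixed iteration count chosen arbitrarily.

```python
import numpy as np

def best_rigid_transform(src, dst):
    """Least-squares rotation r and translation t such that r @ src_i + t ~ dst_i."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    h = (src - src_c).T @ (dst - dst_c)           # 3 x 3 cross-covariance
    u, _, vt = np.linalg.svd(h)
    d = np.sign(np.linalg.det(vt.T @ u.T))        # guard against reflections
    r = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    return r, dst_c - r @ src_c

def mean_nn_sq_dist(source, target):
    """Mean squared distance from each source point to its nearest target point."""
    d2 = ((source[:, None, :] - target[None, :, :]) ** 2).sum(axis=-1)
    return float(d2.min(axis=1).mean())

def icp(source, target, iterations=30):
    """Align source (N, 3) to target (M, 3) with point-to-point ICP."""
    aligned = source.copy()
    for _ in range(iterations):
        d2 = ((aligned[:, None, :] - target[None, :, :]) ** 2).sum(axis=-1)
        matches = target[d2.argmin(axis=1)]       # current nearest-neighbor pairs
        r, t = best_rigid_transform(aligned, matches)
        aligned = aligned @ r.T + t
    return aligned

def merge(aligned_generated, initial):
    """Stack the aligned generated points with the initial scanned points."""
    return np.vstack([aligned_generated, initial])

# Toy example: the "generated" cloud is a slightly rotated and shifted copy.
icp_rng = np.random.default_rng(0)
scan = icp_rng.random((200, 3)) - 0.5
angle = np.deg2rad(5.0)
rot_z = np.array([[np.cos(angle), -np.sin(angle), 0.0],
                  [np.sin(angle), np.cos(angle), 0.0],
                  [0.0, 0.0, 1.0]])
generated = scan @ rot_z.T + np.array([0.02, 0.01, -0.015])
error_before = mean_nn_sq_dist(generated, scan)
aligned_cloud = icp(generated, scan)
error_after = mean_nn_sq_dist(aligned_cloud, scan)
merged_cloud = merge(aligned_cloud, scan)
```

ICP only refines an alignment that is already roughly correct, so it relies on the generated model being in approximately the same pose as the scanned points.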

The experimental results are shown in Figure 12 and Figure 13. Three different kinds of cars have different qualities in the MLS point clouds. Among them, the white Porsche has relatively dense and intact scanned structures at the front but lacks 3D structures at the back. The Toyota in the middle row is the most incomplete, missing more than three quarters of the entire model. Figure 12a,b show the original street images and the incomplete scanned models that form the input pairs. Figure 12c displays the results of PCCNet; it can be seen that no matter how large the missing parts are, our method produces entire models that are almost identical to the actual 3D structures. We compare against the traditional completion method [8], which represents the state of the art in MLS completion. As shown in Figure 12d, under the same conditions, the method [8] fails to complete the large holes or fills in the wrong places.

Limited by the categories of ShapeNet models, in this paper we only train and test on cars, as shown in Figure 13. It can be seen that our data-driven method can produce complete models for largely incomplete shapes, where previous feature-based methods are likely to fail. We are confident that our method is also suitable for other categories.