Robust Visual Tracking Using Structural Patch Response Map Fusion Based on Complementary Correlation Filter and Color Histogram

Abstract

1. Introduction

2. Related Work

2.1. Correlation Filter-Based Tracking

2.2. Color Histogram-Based Tracking

2.3. Part-Based Tracking

2.4. Sparse-Based Tracking and Deep Learning-Based Tracking

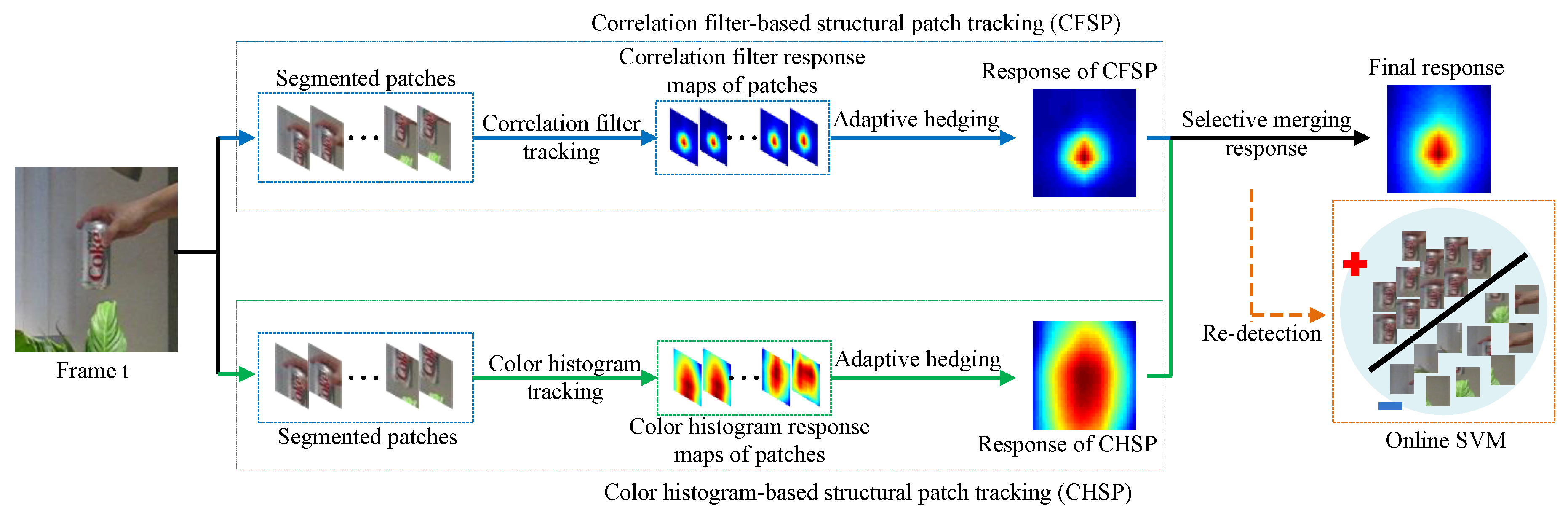

3. Proposed Algorithm

3.1. Overview

3.2. Adaptive Hedge Algorithm

3.3. Correlation Filter-Based Structural Patch Tracking (CFSP)

Algorithm 1: Correlation filter-based structural patch tracking

Inputs: current weight distribution; estimated target position in the previous frame.

Output: updated weight distribution; the response map in the current frame.

Repeat:

1. compute the correlation filter response of each patch using Equation (11);
2. compute the fused response map using Equation (12);
3. compute the similarity and displacement difference of each patch using Equations (13)–(16);
4. compute the loss of each patch tracker using Equation (17);
5. update the stability models using Equations (4) and (5);
6. measure each patch tracker's stability using Equation (6);
7. update the regret of each patch using Equations (1), (2), (7), and (8);
8. update the weight distribution for each patch tracker using Equation (9).
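The fuse-then-reweight loop of Algorithm 1 can be illustrated with a small sketch. Equations (9) and (12) are not reproduced in this text, so the code below makes two loud assumptions: the fused map is taken to be a weighted sum of the per-patch response maps, and the weight update uses the classic exponential-weights Hedge rule as a stand-in for the adaptive Hedge rule of Section 3.2. The names `fuse_responses`, `peak_location`, `hedge_update` and the learning rate `eta` are hypothetical.

```python
import math


def fuse_responses(responses, weights):
    """Weighted sum of equally sized per-patch response maps (assumed fusion rule)."""
    rows, cols = len(responses[0]), len(responses[0][0])
    fused = [[0.0] * cols for _ in range(rows)]
    for response, weight in zip(responses, weights):
        for r in range(rows):
            for c in range(cols):
                fused[r][c] += weight * response[r][c]
    return fused


def peak_location(response):
    """Return (row, col) of the first maximum in a response map."""
    best_value, best_pos = response[0][0], (0, 0)
    for r, row in enumerate(response):
        for c, value in enumerate(row):
            if value > best_value:
                best_value, best_pos = value, (r, c)
    return best_pos


def hedge_update(weights, losses, eta=1.0):
    """Classic exponential-weights Hedge step, NOT the paper's adaptive rule.

    Patches with a larger loss are down-weighted; the result is renormalised
    so that the weights again form a distribution.
    """
    scaled = [w * math.exp(-eta * loss) for w, loss in zip(weights, losses)]
    total = sum(scaled)
    return [s / total for s in scaled]
```

With two patches weighted 0.75 and 0.25, the fused peak follows the more trusted patch, and a patch that incurs a higher loss receives a smaller weight in the next frame. The stability and regret terms of steps 5 to 7 are deliberately left out of this sketch.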

3.4. Color Histogram-Based Structural Patch Tracking (CHSP)

Algorithm 2: Color histogram-based structural patch tracking

Inputs: current weight distribution; estimated target position in the previous frame.

Output: updated weight distribution; the response map in the current frame.

Repeat:

1. compute the color histogram response of each patch using Equation (22);
2. compute the response map using Equation (23);
3. compute the discrimination and displacement difference of each patch using Equations (24)–(28) and (30);
4. compute the loss of each patch tracker using Equation (29);
5. update the stability models using Equations (4) and (5);
6. measure each patch tracker's stability using Equation (6);
7. update the regret of each patch using Equations (1), (2), (7), and (8);
8. update the weight distribution for each patch tracker using Equation (9).
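Step 1 of Algorithm 2 relies on a per-patch color histogram response. Equation (22) is not reproduced in this text, so the following is only a minimal sketch of one common way to obtain such a response: a per-pixel foreground likelihood computed as the ratio of foreground to foreground-plus-background histogram counts. The bin layout, the neutral value 0.5 for unseen colors, and all function names are assumptions made for illustration.

```python
def bin_index(pixel, bins_per_channel=8):
    """Quantise an 8-bit (r, g, b) pixel into a coarse colour bin."""
    step = 256 // bins_per_channel
    r, g, b = pixel
    return (r // step, g // step, b // step)


def build_histogram(pixels, bins_per_channel=8):
    """Count how many pixels fall into each colour bin."""
    hist = {}
    for pixel in pixels:
        key = bin_index(pixel, bins_per_channel)
        hist[key] = hist.get(key, 0) + 1
    return hist


def foreground_likelihood(pixel, fg_hist, bg_hist, bins_per_channel=8):
    """Ratio fg / (fg + bg) for the pixel's bin (assumed response model)."""
    key = bin_index(pixel, bins_per_channel)
    fg = fg_hist.get(key, 0)
    bg = bg_hist.get(key, 0)
    if fg + bg == 0:
        return 0.5  # colour never observed: no evidence either way
    return fg / (fg + bg)
```

Evaluating `foreground_likelihood` at every pixel of a search region gives a likelihood map; averaging it over a patch-sized window would then yield a patch response that can be compared across candidate positions.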

3.5. Response Maps Fusion between CFSP and CHSP

Algorithm 3: Complementary structural patches response fusion tracking (CSPRF)

Inputs: the response maps of CFSP and CHSP; estimated target position in the previous frame.

Output: estimated current target position.

Repeat:

1. obtain the confidence scores of CFSP and CHSP using the SVM classifier on their tracking results;
2. if Cchsp ≥ Tchsp then
3. set […] and compute the current target position using Equation (31);
4. else if […] then
5. set […] and compute the current target position using Equation (31);
6. else
7. use the online SVM classifier to draw dense candidates around […] and obtain the detection scores of all candidate samples;
8. if […] then
9. set the current target position to […];
10. else
11. set […] and compute the current target position using Equation (31);
12. end
13. end
14. end
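The branching in Algorithm 3 can be summarised as a small decision function. Only the first test (the CHSP confidence against its threshold) is legible in the listing above; the second and third tests, and every name below, are assumptions chosen for illustration: the sketch supposes that the second branch checks the CFSP confidence against its own threshold and that the re-detection result is accepted when its best SVM score reaches a threshold `t_detect`. The function only returns which branch fires and does not attempt to reproduce Equation (31).

```python
def select_branch(c_cfsp, c_chsp, t_cfsp, t_chsp, detect_score=None, t_detect=0.0):
    """Return a label for the branch of the fusion logic that would fire.

    c_cfsp, c_chsp : SVM confidence scores of the two component trackers.
    t_cfsp, t_chsp : their thresholds (t_cfsp is an assumed counterpart).
    detect_score   : best score of the SVM re-detection, if it was run.
    t_detect       : assumed acceptance threshold for the re-detection.
    """
    if c_chsp >= t_chsp:
        return "fuse_chsp_confident"   # steps 2-3
    if c_cfsp >= t_cfsp:               # assumed condition for step 4
        return "fuse_cfsp_confident"   # step 5
    if detect_score is not None and detect_score >= t_detect:  # assumed, step 8
        return "use_detection"         # step 9
    return "fuse_fallback"             # step 11
```

Keeping the decision separate from the fusion itself makes it easy to log which branch was taken per frame, which helps when tuning the thresholds.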

3.6. Update Scheme

3.7. Scale Estimation

4. Experimental Results

4.1. Experimental Setup

4.2. Implementation Details

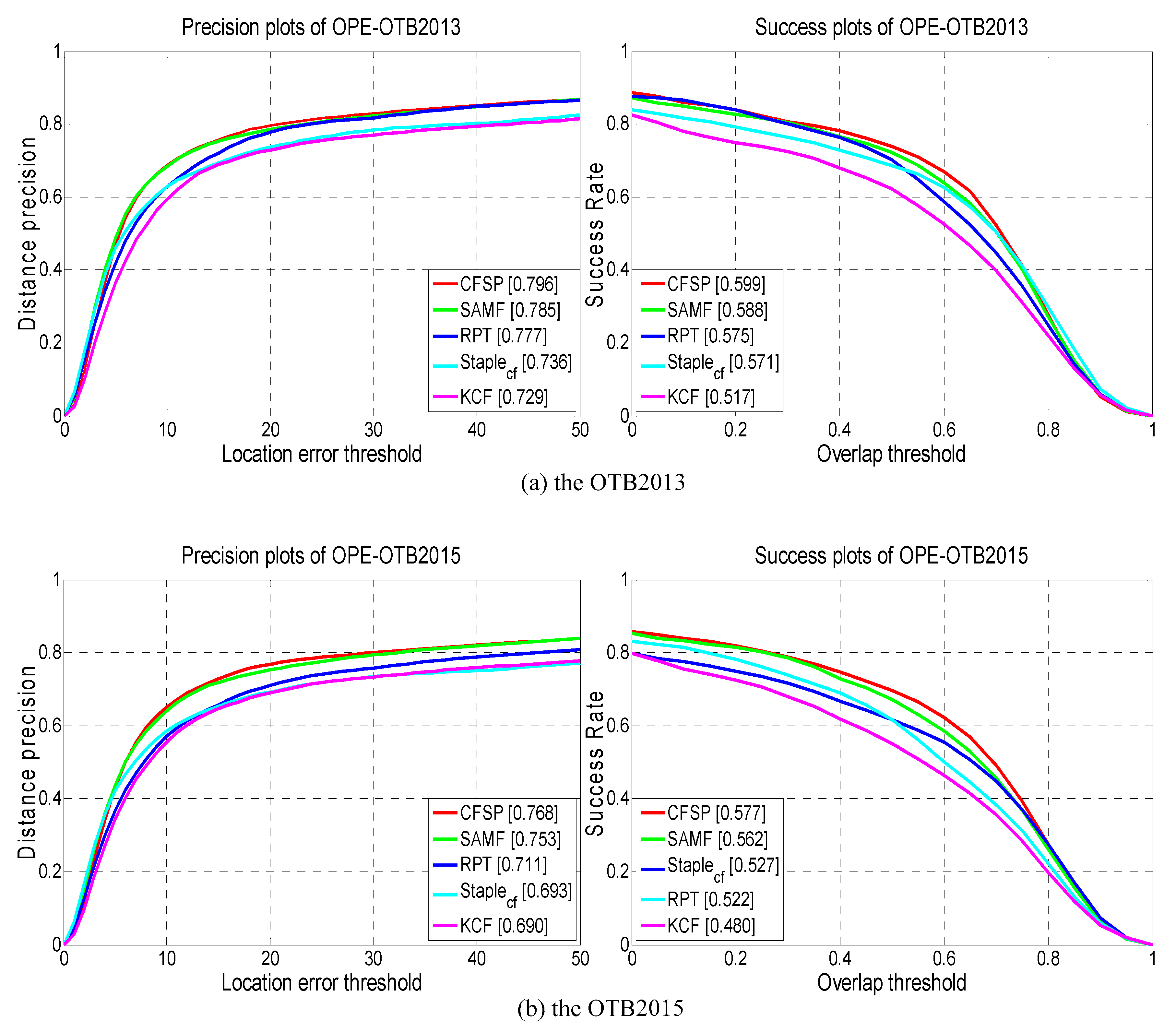

4.3. Performance Evaluation of the CSPRF Tracker on OTB2013 and OTB2015

4.3.1. Quantitative Evaluation

4.3.2. Attribute-Based Evaluation

4.3.3. Qualitative Evaluation

4.4. Performance Comparison of LGCmF with CSPRF

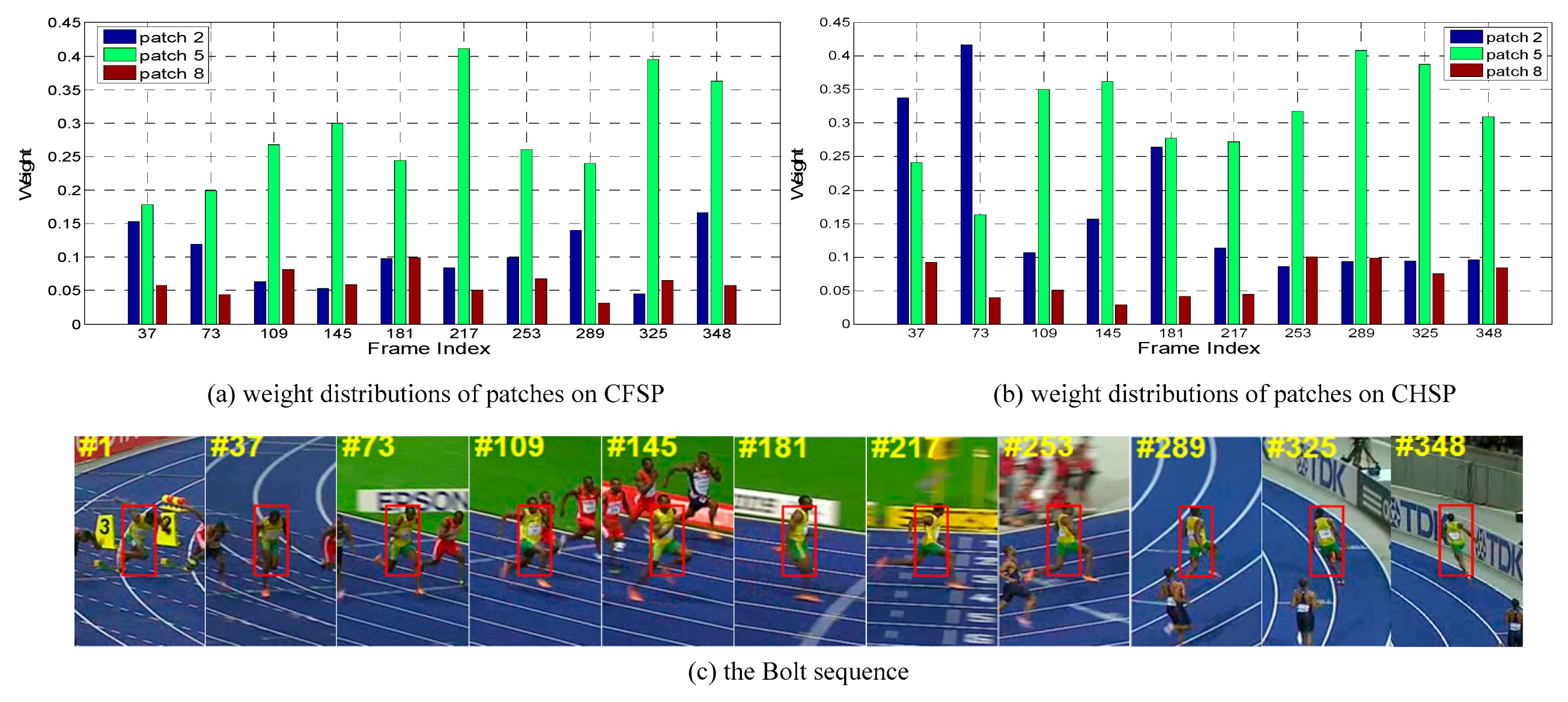

4.5. Performance Evaluation of Component Trackers CFSP, CHSP

4.6. Performance Evaluation of the CSPRF Tracker on VOT2016

5. Conclusions

Author Contributions

Funding

Conflicts of Interest


| Metric | Dataset | CSPRF | LCT+ | DSST | Staple_CA | Staple | SAMF | SRDCF | RPT | KCF | CSR-DCF | MEEM |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Mean OS (%) | I | 81.4 | 81.2 | 67.3 | 76.1 | 74.2 | 72.2 | 78.1 | 70.2 | 62.1 | 75.6 | 70.8 |
| Mean OS (%) | II | 75.4 | 70.1 | 61.3 | 72.8 | 70.4 | 67.0 | 71.2 | 61.6 | 55.1 | 71.2 | 62.2 |
| Median OS (%) | I | 82.5 | 82.3 | 68.0 | 77.2 | 75.1 | 73.4 | 78.8 | 71.9 | 63.7 | 76.9 | 72.9 |
| Median OS (%) | II | 76.6 | 71.3 | 62.2 | 74.5 | 71.8 | 68.7 | 72.3 | 63.6 | 56.9 | 72.3 | 64.5 |
| Median DP (%) | I | 89.1 | 86.1 | 75.1 | 85.0 | 80.2 | 80.6 | 82.7 | 80.5 | 75.5 | 83.0 | 86.7 |
| Median DP (%) | II | 85.6 | 78.2 | 69.8 | 82.7 | 80.4 | 77.6 | 78.3 | 74.0 | 71.7 | 81.5 | 81.0 |
| Median CLE (pixel) | I | 6.39 | 7.23 | 12.2 | 7.27 | 8.42 | 8.72 | 4.82 | 8.26 | 11.4 | 7.98 | 7.50 |
| Median CLE (pixel) | II | 7.10 | 9.13 | 13.1 | 7.09 | 8.35 | 9.43 | 7.75 | 11.3 | 14.7 | 8.50 | 9.92 |
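The OS, DP and CLE figures reported here are not defined in this text. As a hedged illustration, the sketch below follows the conventions commonly used with the OTB benchmarks: CLE is the Euclidean distance between predicted and ground-truth box centers, DP is the share of frames whose CLE is at most 20 pixels, and OS is the share of frames whose intersection-over-union exceeds 0.5. The thresholds, the `(x, y, w, h)` box layout and the function names are assumptions, not values taken from the paper.

```python
import math


def center_error(box_a, box_b):
    """Euclidean distance between the centers of two (x, y, w, h) boxes."""
    ax, ay = box_a[0] + box_a[2] / 2.0, box_a[1] + box_a[3] / 2.0
    bx, by = box_b[0] + box_b[2] / 2.0, box_b[1] + box_b[3] / 2.0
    return math.hypot(ax - bx, ay - by)


def overlap(box_a, box_b):
    """Intersection-over-union of two (x, y, w, h) boxes."""
    x1, y1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    x2 = min(box_a[0] + box_a[2], box_b[0] + box_b[2])
    y2 = min(box_a[1] + box_a[3], box_b[1] + box_b[3])
    inter = max(0.0, x2 - x1) * max(0.0, y2 - y1)
    union = box_a[2] * box_a[3] + box_b[2] * box_b[3] - inter
    return inter / union if union > 0 else 0.0


def distance_precision(predicted, truth, threshold=20.0):
    """Fraction of frames with center error <= threshold (assumed 20 px)."""
    hits = sum(center_error(p, t) <= threshold for p, t in zip(predicted, truth))
    return hits / len(truth)


def overlap_success(predicted, truth, threshold=0.5):
    """Fraction of frames with IoU > threshold (assumed 0.5)."""
    hits = sum(overlap(p, t) > threshold for p, t in zip(predicted, truth))
    return hits / len(truth)
```

Multiplying the two fractions by 100 gives percentages comparable in form to the DP and OS columns; per-sequence values would then be averaged or their median taken across a benchmark.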

| Attribute | CSPRF | LCT+ | DSST | Staple_CA | Staple | SAMF | SRDCF | RPT | KCF | CSR-DCF | MEEM |
|---|---|---|---|---|---|---|---|---|---|---|---|
| IV (25) | 84.5 | 79.2 | 72.4 | 80.1 | 74.2 | 70.6 | 71.3 | 74.1 | 70.7 | 71.3 | 76.9 |
| SV (28) | 84.4 | 75.7 | 71.5 | 80.5 | 73.6 | 73.0 | 77.1 | 74.0 | 65.5 | 70.0 | 70.0 |
| OCC (29) | 87.0 | 84.6 | 70.0 | 80.6 | 78.3 | 84.5 | 81.2 | 73.5 | 73.1 | 79.0 | 81.6 |
| DEF (19) | 90.1 | 87.0 | 66.3 | 83.9 | 78.8 | 81.9 | 79.5 | 72.8 | 74.6 | 82.6 | 84.6 |
| MB (12) | 71.1 | 66.5 | 54.0 | 78.5 | 70.8 | 61.3 | 72.9 | 72.6 | 60.5 | 72.4 | 71.3 |
| FM (17) | 71.6 | 66.3 | 51.8 | 76.6 | 66.1 | 65.4 | 73.0 | 67.7 | 57.0 | 73.2 | 74.1 |
| IPR (31) | 82.8 | 80.2 | 75.2 | 83.9 | 78.8 | 72.2 | 75.0 | 77.7 | 70.8 | 74.6 | 80.9 |
| OPR (39) | 87.6 | 84.9 | 72.0 | 82.3 | 77.4 | 77.8 | 78.7 | 77.0 | 71.5 | 78.5 | 84.9 |
| OV (6) | 76.6 | 72.8 | 51.4 | 69.7 | 65.0 | 63.5 | 70.6 | 67.8 | 64.8 | 66.2 | 74.4 |
| BC (21) | 84.0 | 79.3 | 69.2 | 79.0 | 74.9 | 71.7 | 72.7 | 78.4 | 72.3 | 78.8 | 79.8 |
| LR (4) | 80.4 | 71.7 | 69.0 | 97.2 | 69.5 | 65.0 | 76.9 | 78.1 | 62.9 | 65.3 | 98.7 |

| Tracker | Mean DP (%) | Mean OS (%) | Median DP (%) | Median OS (%) | Median CLE | AUC |
|---|---|---|---|---|---|---|
| LGCmF | 80.6 | 72.2 | 82.4 | 74.1 | 8.35 | 59.8 |
| CSPRF | 83.9 | 75.4 | 85.6 | 76.6 | 7.10 | 61.7 |

| Metric | CSPRF | CSR-DCF | DAT | DSST | HCF | KCF | SRDCF | Staple | STRCF |
|---|---|---|---|---|---|---|---|---|---|
| EAO | 0.307 | 0.332 | 0.217 | 0.181 | 0.220 | 0.194 | 0.246 | 0.295 | 0.252 |
| Accuracy | 0.53 | 0.52 | 0.47 | 0.53 | 0.45 | 0.49 | 0.53 | 0.54 | 0.51 |
| Robustness | 0.97 | 0.90 | 1.72 | 2.52 | 1.42 | 2.03 | 1.5 | 1.35 | 1.35 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Hao, Z.; Liu, G.; Gao, J.; Zhang, H. Robust Visual Tracking Using Structural Patch Response Map Fusion Based on Complementary Correlation Filter and Color Histogram. Sensors 2019, 19, 4178. https://doi.org/10.3390/s19194178
