Use of Computing Devices as Sensors to Measure Their Impact on Primary and Secondary Students’ Performance

Abstract

1. Introduction

2. Material and Methods

2.1. Research Problem

- RQ1. Are there differences in the number of passed subjects between students who connect to the LMS and those who do not connect?

- RQ2. Are there differences in the number of failed subjects between students who connect to the LMS and those who do not connect?

- RQ3. For those students who do connect to the LMS, are there differences in the number of passed subjects depending on the computing device being used?

- RQ4. For those students who do connect to the LMS, are there differences in the number of failed subjects depending on the computing device being used?

- RQ5. For those students who do connect to the LMS, are there differences in the number of passed subjects depending on the operating system being used?

- RQ6. For those students who do connect to the LMS, are there differences in the number of failed subjects depending on the operating system being used?

- RQ7. Is it possible to generate a predictive model on the number of passed subjects taking into account the data from the computing device (e.g., type of device and OS)?

2.2. Constructing the Experiment

2.3. Conducting the Experiment

3. Results

3.1. Descriptive Statistics

3.2. Inferential Statistics

3.3. Predicting the Number of Passed Subjects Based on Computing Device Data

- Gender

- Educational stage

- Type of device

- Type of operating system

- Total number of connections during the academic year

- Average number of connections per week

- Maximum number of connections per week

- Minimum number of connections per week

- Most frequent connection day

- Most frequent connection time slot

- Number of passed subjects
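As a rough illustration, the attributes listed above could be held as one record per student, with the number of passed subjects as the prediction target. The sketch below is only an assumption about how such a record might look: the class name, field names, types, and sample values are hypothetical and do not come from the study's data set.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class StudentRecord:
    """One row of the modelling data set (hypothetical field names)."""
    gender: str
    educational_stage: str
    device_type: str
    operating_system: str
    total_connections: int            # over the academic year
    avg_connections_per_week: float
    max_connections_per_week: int
    min_connections_per_week: int
    most_frequent_day: str
    most_frequent_time_slot: str
    passed_subjects: int              # target attribute


def split_features_target(record):
    """Separate the ten input attributes from the target attribute."""
    features = asdict(record)
    target = features.pop("passed_subjects")
    return features, target


# Made-up example values, for illustration only.
example = StudentRecord(
    gender="F", educational_stage="Secondary", device_type="Tablet",
    operating_system="iOS", total_connections=120,
    avg_connections_per_week=3.5, max_connections_per_week=9,
    min_connections_per_week=0, most_frequent_day="Monday",
    most_frequent_time_slot="16:00-18:00", passed_subjects=11,
)
features, target = split_features_target(example)
```

Keeping the target in the same record as the inputs mirrors the list above, where the number of passed subjects appears as the last attribute.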

4. Discussion

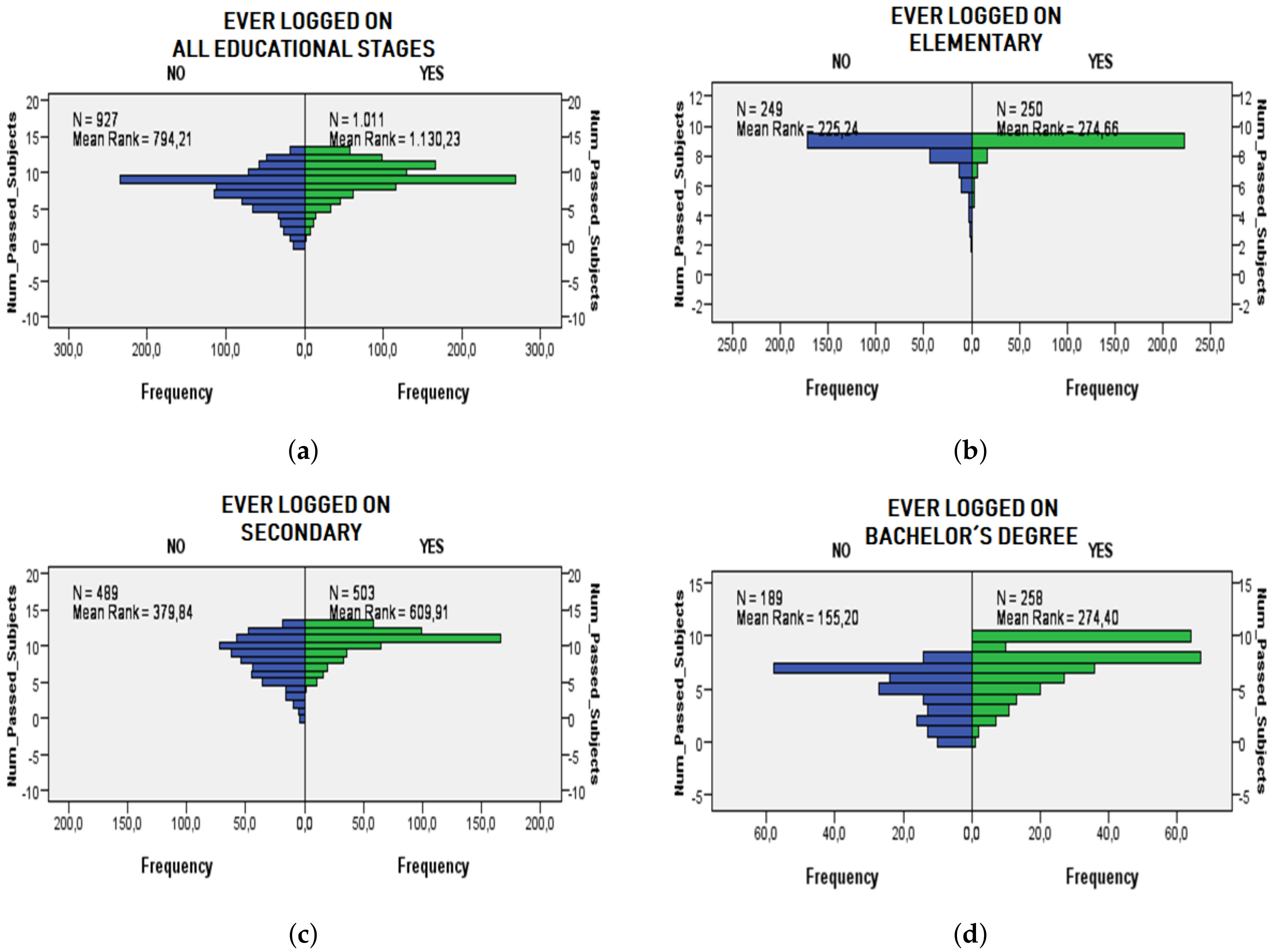

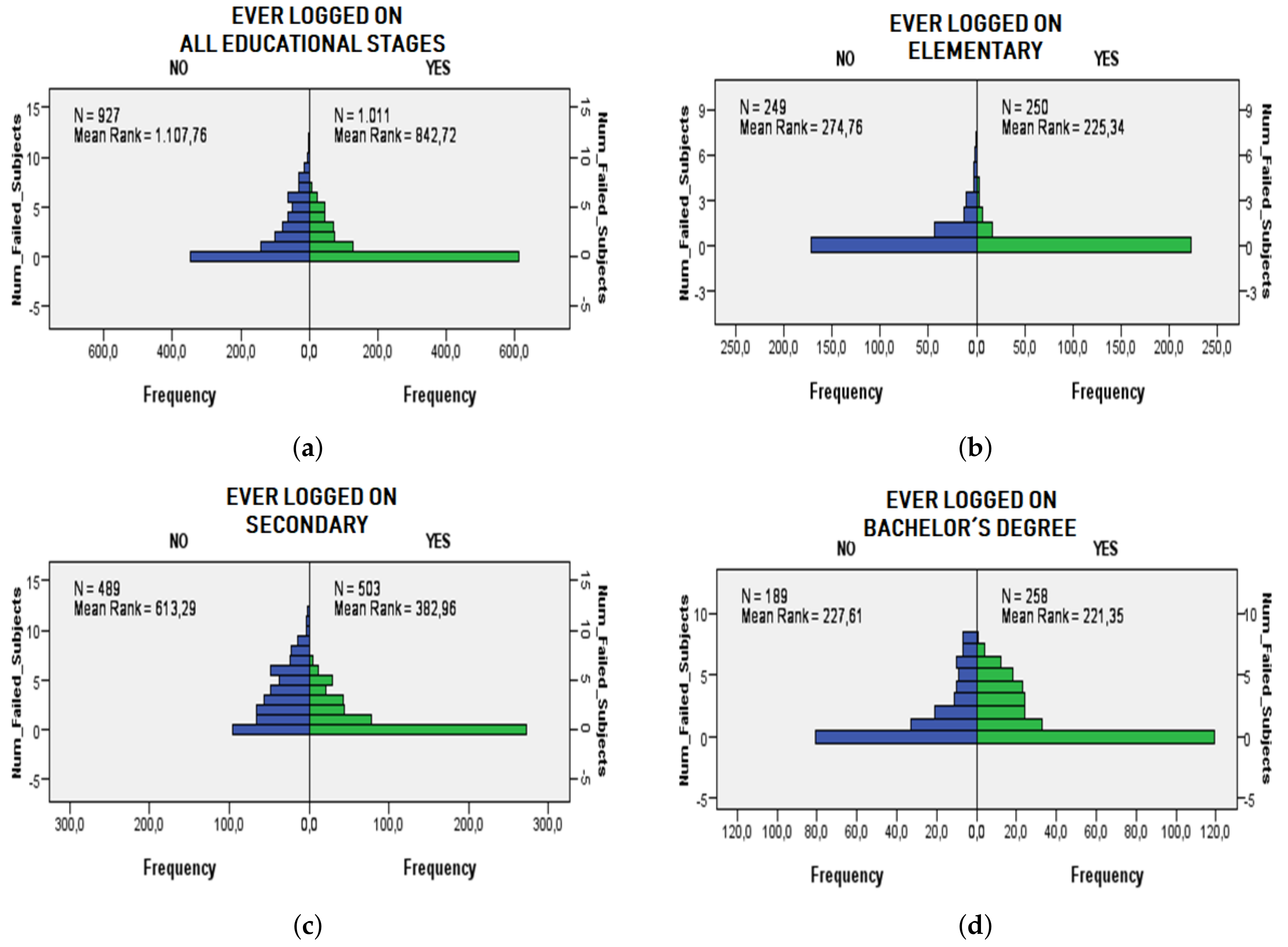

- RQ1. The number of subjects a student passes differs significantly depending on whether the student has ever logged into the LMS. The values in Table 4, together with the statistical test, show that students who have logged into the LMS at least once pass more subjects. This conclusion holds for each educational stage considered separately.

- RQ2. The number of subjects a student fails differs significantly depending on whether the student has ever logged into the LMS. The values in Table 4, together with the statistical test, show that students who have logged into the LMS at least once fail fewer subjects. This conclusion holds for each educational stage considered separately, except for the bachelor’s degree stage, where there is no significant evidence of a relationship between having accessed the LMS and the number of failed subjects.

- RQ3. The number of subjects a student passes differs significantly by the type of device used to log into the LMS. Specifically, according to the statistical test and Table 5, students who use tablets pass the most subjects, followed by students who use PCs and, finally, students who use smartphones.

- RQ4. The number of subjects a student fails differs significantly by the type of device used to log into the LMS. According to the statistical test and Table 5, students who use tablets fail the fewest subjects, followed by students who use PCs and, finally, students who use smartphones.

- RQ5. The number of subjects a student passes differs significantly by the operating system used to log into the LMS. According to the statistical test and Table 6, students who use macOS and Chrome OS pass the most subjects, followed by students who use iOS, then students who use Windows, and finally students who use Android.

- RQ6. The number of subjects a student fails differs significantly by the operating system used to log into the LMS. In this case, students using macOS, iOS and Chrome OS fail a similar number of subjects, with no significant differences among them. This number is lower than that of students using Windows and Android.

- RQ7. The initial rule model created with the M5Rules algorithm is robust: five randomized repetitions gave similar results with a low standard deviation. Although the results can be improved, the model has an average error of almost 2 subjects when predicting the number of passed subjects, and the correlation between the input attributes and the target attribute is 72% on average. Some variables, such as the time slot and the most frequent day of connection, were not included in this initial model. These variables will be analyzed in a more complex model that may provide better-fitting results.
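The evaluation described under RQ7 (repeat the experiment five times at random, then report the mean and standard deviation of the correlation coefficient, MAE and RMSE) can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' pipeline: a one-variable least-squares line stands in for M5Rules, the 70/30 random split is assumed because the text does not say how the repetitions were randomized, and the data are synthetic.

```python
import math
import random
import statistics


def fit_line(xs, ys):
    """Least-squares fit of y = a + b*x (placeholder model, NOT M5Rules)."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return my - slope * mx, slope


def pearson(a, b):
    """Pearson correlation coefficient between two equal-length lists."""
    ma, mb = statistics.fmean(a), statistics.fmean(b)
    num = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    den = math.sqrt(sum((x - ma) ** 2 for x in a) * sum((y - mb) ** 2 for y in b))
    return num / den


def evaluate_once(xs, ys, rng, test_fraction=0.3):
    """One random train/test split (assumed protocol); returns CC, MAE, RMSE."""
    idx = list(range(len(xs)))
    rng.shuffle(idx)
    cut = int(len(idx) * (1 - test_fraction))
    train, test = idx[:cut], idx[cut:]
    a, b = fit_line([xs[i] for i in train], [ys[i] for i in train])
    actual = [ys[i] for i in test]
    predicted = [a + b * xs[i] for i in test]
    errors = [p - t for p, t in zip(predicted, actual)]
    return {
        "CC": pearson(predicted, actual),
        "MAE": statistics.fmean([abs(e) for e in errors]),
        "RMSE": math.sqrt(statistics.fmean([e * e for e in errors])),
    }


def evaluate_repeated(xs, ys, repeats=5, seed=0):
    """Mean and sample SD of each metric over `repeats` random splits."""
    rng = random.Random(seed)
    runs = [evaluate_once(xs, ys, rng) for _ in range(repeats)]
    return {
        metric: (statistics.fmean([r[metric] for r in runs]),
                 statistics.stdev([r[metric] for r in runs]))
        for metric in runs[0]
    }


# Synthetic data: weekly connections vs. passed subjects (made up).
data_rng = random.Random(42)
weekly = [data_rng.uniform(0, 20) for _ in range(200)]
passed = [min(13.0, max(0.0, 5 + 0.3 * w + data_rng.gauss(0, 1.5))) for w in weekly]
summary = evaluate_repeated(weekly, passed)
for metric, (mean, sd) in summary.items():
    print(f"{metric}: mean={mean:.4f} sd={sd:.4f}")
```

A small standard deviation across the five runs is what the bullet above describes as robustness; it says the scores are stable across random repetitions, not that the error itself is small.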

5. Conclusions and Future Work

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Educational Stage | N of Students | Gender | Ever Logged on |
|---|---|---|---|
| Elementary | 499 | M: 262 (52.5%) F: 237 (47.5%) | Y: 250 (50%) N: 249 (50%) |
| Secondary | 992 | M: 463 (46.7%) F: 529 (53.3%) | Y: 503 (51%) N: 489 (49%) |
| Bachelor’s degree | 447 | M: 156 (34.9%) F: 291 (65.1%) | Y: 258 (58.8%) N: 189 (41.2%) |

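| Educational Stage | Device | OS | N of Students |
|---|---|---|---|
| Bachelor’s degree | Computer | Android | 2 |
| Bachelor’s degree | Computer | iOS | 12 |
| Bachelor’s degree | Computer | macOS | 29 |
| Bachelor’s degree | Computer | Windows | 88 |
| Bachelor’s degree | Smartphone | Android | 30 |
| Bachelor’s degree | Smartphone | iOS | 42 |
| Bachelor’s degree | Tablet | Android | 2 |
| Bachelor’s degree | Tablet | iOS | 51 |
| Bachelor’s degree | Phablets | Android | 2 |
| Secondary | Computer | Chrome OS | 17 |
| Secondary | Computer | iOS | 3 |
| Secondary | Computer | macOS | 32 |
| Secondary | Computer | Windows | 30 |
| Secondary | Smartphone | Android | 17 |
| Secondary | Smartphone | iOS | 13 |
| Secondary | Tablet | Android | 11 |
| Secondary | Tablet | iOS | 378 |
| Secondary | Phablets | Android | 2 |
| Elementary | Computer | macOS | 1 |
| Elementary | Computer | Windows | 15 |
| Elementary | Smartphone | Android | 8 |
| Elementary | Smartphone | iOS | 2 |
| Elementary | Tablet | Android | 2 |
| Elementary | Tablet | iOS | 221 |
| Elementary | Phablets | Android | 1 |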

| Ever Logged on | Attributes | Max | Mean | SD |
|---|---|---|---|---|
| YES | Num_Failed_Subjects | 8 | 1.1 | 1.75 |
| YES | Num_Passed_Subjects | 13 | 9.27 | 2.26 |
| NO | Num_Failed_Subjects | 12 | 2.29 | 2.64 |
| NO | Num_Passed_Subjects | 13 | 7.65 | 2.8 |

| Educational Stage | Ever Logged on | Attributes | Max | Mean | SD |
|---|---|---|---|---|---|
| Bachelor’s degree | YES | Num_Failed_Subjects | 8 | 1.72 | 2.064 |
| Bachelor’s degree | YES | Num_Passed_Subjects | 10 | 7.29 | 2.298 |
| Bachelor’s degree | NO | Num_Failed_Subjects | 8 | 1.89 | 2.390 |
| Bachelor’s degree | NO | Num_Passed_Subjects | 8 | 4.96 | 2.363 |
| Secondary | YES | Num_Failed_Subjects | 7 | 1.24 | 1.766 |
| Secondary | YES | Num_Passed_Subjects | 13 | 10.51 | 1.910 |
| Secondary | NO | Num_Failed_Subjects | 12 | 3.30 | 2.790 |
| Secondary | NO | Num_Passed_Subjects | 13 | 8.31 | 2.910 |
| Elementary | YES | Num_Failed_Subjects | 4 | 0.20 | 0.651 |
| Elementary | YES | Num_Passed_Subjects | 9 | 8.80 | 0.651 |
| Elementary | NO | Num_Failed_Subjects | 7 | 0.60 | 1.188 |
| Elementary | NO | Num_Passed_Subjects | 9 | 8.40 | 1.188 |
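The per-stage means of passed subjects can be cross-checked against the overall means reported for all stages together by weighting each stage mean with the number of students who did or did not log on. The short sketch below does that arithmetic with figures copied from the tables in this article; the variable and function names are illustrative only.

```python
# (number of students, mean passed subjects) per stage and log-on status,
# copied from the sample table and the per-stage table in this article.
stage_stats = {
    "Bachelor's degree": {"YES": (258, 7.29), "NO": (189, 4.96)},
    "Secondary": {"YES": (503, 10.51), "NO": (489, 8.31)},
    "Elementary": {"YES": (250, 8.80), "NO": (249, 8.40)},
}


def pooled_mean(group):
    """Student-weighted mean of passed subjects across the three stages."""
    pairs = [stats[group] for stats in stage_stats.values()]
    total_students = sum(n for n, _ in pairs)
    return sum(n * mean for n, mean in pairs) / total_students


def mean_gap(stage):
    """Difference in mean passed subjects: logged on minus never logged on."""
    return stage_stats[stage]["YES"][1] - stage_stats[stage]["NO"][1]


print(round(pooled_mean("YES"), 2))  # 9.27, the overall mean for YES
print(round(pooled_mean("NO"), 2))   # 7.65, the overall mean for NO
for stage in stage_stats:
    print(stage, round(mean_gap(stage), 2))
```

The pooled values reproduce the overall means of 9.27 and 7.65, and the per-stage gaps (about 2.3, 2.2 and 0.4 subjects) show that the difference is much smaller in the elementary stage than in the other two.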

| Educational Stage | Device | N of Students (%) | Attributes | Max | Mean | SD |
|---|---|---|---|---|---|---|
| Bachelor’s degree | Computer | 131 (50.8%) | Num_Failed_Subjects | 7 | 1.51 | 1.951 |
| Bachelor’s degree | Computer | 131 (50.8%) | Num_Passed_Subjects | 10 | 7.42 | 2.201 |
| Bachelor’s degree | Smartphone | 72 (27.9%) | Num_Failed_Subjects | 8 | 2.39 | 2.323 |
| Bachelor’s degree | Smartphone | 72 (27.9%) | Num_Passed_Subjects | 10 | 6.53 | 2.584 |
| Bachelor’s degree | Tablet | 53 (20.5%) | Num_Failed_Subjects | 5 | 1.30 | 1.761 |
| Bachelor’s degree | Tablet | 53 (20.5%) | Num_Passed_Subjects | 10 | 8.02 | 1.855 |
| Bachelor’s degree | Phablets | 2 (0.8%) | Num_Failed_Subjects | 4 | 2.00 | 2.828 |
| Bachelor’s degree | Phablets | 2 (0.8%) | Num_Passed_Subjects | 8 | 7.00 | 1.414 |
| Secondary | Computer | 82 (16.3%) | Num_Failed_Subjects | 7 | 1.55 | 2.044 |
| Secondary | Computer | 82 (16.3%) | Num_Passed_Subjects | 13 | 10.82 | 2.363 |
| Secondary | Smartphone | 30 (6.0%) | Num_Failed_Subjects | 7 | 2.80 | 2.265 |
| Secondary | Smartphone | 30 (6.0%) | Num_Passed_Subjects | 12 | 8.50 | 2.271 |
| Secondary | Tablet | 389 (77.3%) | Num_Failed_Subjects | 6 | 1.06 | 1.592 |
| Secondary | Tablet | 389 (77.3%) | Num_Passed_Subjects | 13 | 10.60 | 1.681 |
| Secondary | Phablets | 2 (0.4%) | Num_Failed_Subjects | 2 | 1.00 | 1.414 |
| Secondary | Phablets | 2 (0.4%) | Num_Passed_Subjects | 11 | 11.00 | 0.0 |
| Elementary | Computer | 16 (6.4%) | Num_Failed_Subjects | 4 | 0.31 | 1.014 |
| Elementary | Computer | 16 (6.4%) | Num_Passed_Subjects | 9 | 8.69 | 1.014 |
| Elementary | Smartphone | 10 (4.0%) | Num_Failed_Subjects | 0 | 0.0 | 0.0 |
| Elementary | Smartphone | 10 (4.0%) | Num_Passed_Subjects | 9 | 9.00 | 0.0 |
| Elementary | Tablet | 223 (89.2%) | Num_Failed_Subjects | 4 | 0.2 | 0.634 |
| Elementary | Tablet | 223 (89.2%) | Num_Passed_Subjects | 9 | 8.80 | 0.634 |
| Elementary | Phablets | 1 (0.4%) | Num_Failed_Subjects | 0 | 0.0 | 0.0 |
| Elementary | Phablets | 1 (0.4%) | Num_Passed_Subjects | 9 | 9.00 | 0.0 |

| Educational Stage | OS | N of Students (%) | Attributes | Max | Mean | SD |
|---|---|---|---|---|---|---|
| Bachelor’s degree | Android | 36 (14.0%) | Num_Failed_Subjects | 8 | 2.19 | 2.505 |
| Bachelor’s degree | Android | 36 (14.0%) | Num_Passed_Subjects | 10 | 6.86 | 2.587 |
| Bachelor’s degree | iOS | 105 (40.7%) | Num_Failed_Subjects | 6 | 1.61 | 1.959 |
| Bachelor’s degree | iOS | 105 (40.7%) | Num_Passed_Subjects | 10 | 7.42 | 2.227 |
| Bachelor’s degree | macOS | 29 (11.2%) | Num_Failed_Subjects | 6 | 1.14 | 1.726 |
| Bachelor’s degree | macOS | 29 (11.2%) | Num_Passed_Subjects | 10 | 7.83 | 2.172 |
| Bachelor’s degree | Windows | 88 (34.1%) | Num_Failed_Subjects | 7 | 1.84 | 2.067 |
| Bachelor’s degree | Windows | 88 (34.1%) | Num_Passed_Subjects | 10 | 7.14 | 2.290 |
| Secondary | Android | 30 (6.0%) | Num_Failed_Subjects | 6 | 2.27 | 2.116 |
| Secondary | Android | 30 (6.0%) | Num_Passed_Subjects | 12 | 9.07 | 2.149 |
| Secondary | Chrome OS | 17 (3.4%) | Num_Failed_Subjects | 5 | 1.71 | 1.724 |
| Secondary | Chrome OS | 17 (3.4%) | Num_Passed_Subjects | 13 | 11.29 | 1.724 |
| Secondary | iOS | 394 (78.3%) | Num_Failed_Subjects | 7 | 1.11 | 1.649 |
| Secondary | iOS | 394 (78.3%) | Num_Passed_Subjects | 13 | 10.55 | 1.747 |
| Secondary | macOS | 32 (6.4%) | Num_Failed_Subjects | 5 | 0.69 | 1.378 |
| Secondary | macOS | 32 (6.4%) | Num_Passed_Subjects | 13 | 12.13 | 1.581 |
| Secondary | Windows | 30 (6.0%) | Num_Failed_Subjects | 7 | 2.27 | 2.477 |
| Secondary | Windows | 30 (6.0%) | Num_Passed_Subjects | 13 | 9.40 | 2.486 |
| Elementary | Android | 11 (4.4%) | Num_Failed_Subjects | 3 | 0.27 | 0.905 |
| Elementary | Android | 11 (4.4%) | Num_Passed_Subjects | 9 | 8.73 | 0.905 |
| Elementary | iOS | 223 (89.2%) | Num_Failed_Subjects | 4 | 0.18 | 0.606 |
| Elementary | iOS | 223 (89.2%) | Num_Passed_Subjects | 9 | 8.82 | 0.606 |
| Elementary | macOS | 1 (0.4%) | Num_Failed_Subjects | 0 | 0.0 | 0.0 |
| Elementary | macOS | 1 (0.4%) | Num_Passed_Subjects | 9 | 9.00 | 0.0 |
| Elementary | Windows | 15 (6.0%) | Num_Failed_Subjects | 4 | 0.33 | 1.047 |
| Elementary | Windows | 15 (6.0%) | Num_Passed_Subjects | 9 | 8.67 | 1.047 |

| Device 1 - Device 2 | Test Statistic | Std. Error | Std. Test Statistic | p-Value | Adj. p-Value |
|---|---|---|---|---|---|
| Smartphone - Computer | 153.14 | 33.19 | 4.61 | 0.00 | 0.00 |
| Smartphone - Tablet | −281.443 | 29.40 | −9.57 | 0.00 | 0.00 |
| Computer - Tablet | −128.29 | 22.05 | −5.81 | 0.00 | 0.00 |
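The column definitions are not given in this section. A plausible reading, and only an assumption here, is that the standardized test statistic is the test statistic divided by its standard error, as is common for rank-based pairwise comparisons. The sketch below checks that reading against the three rows of the table above, allowing for rounding in the published values.

```python
# (test statistic, standard error, reported standardized statistic),
# copied from the pairwise device comparison table above.
rows = {
    "Smartphone - Computer": (153.14, 33.19, 4.61),
    "Smartphone - Tablet": (-281.443, 29.40, -9.57),
    "Computer - Tablet": (-128.29, 22.05, -5.81),
}


def standardized(statistic, std_error):
    """Assumed relation: standardized statistic = statistic / standard error."""
    return statistic / std_error


def max_discrepancy(table):
    """Largest absolute gap between recomputed and reported values."""
    return max(abs(standardized(s, se) - reported)
               for s, se, reported in table.values())


for pair, (s, se, reported) in rows.items():
    print(f"{pair}: recomputed {standardized(s, se):.2f}, reported {reported}")
```

All three recomputed values agree with the reported column to within about 0.01, which is consistent with the assumed relation once rounding of the inputs is taken into account.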

| Device 1 - Device 2 | Test Statistic | Std. Error | Std. Test Statistic | p-Value | Adj. p-Value |
|---|---|---|---|---|---|
| Tablet - Computer | 95.41 | 19.685 | 4.84 | 0.00 | 0.00 |
| Tablet - Smartphone | 195.34 | 26.24 | 7.44 | 0.00 | 0.00 |
| Computer - Smartphone | −99.93 | 29.62 | −3.37 | 0.01 | 0.04 |

| OS 1 - OS 2 | Test Statistic | Std. Error | Std. Test Statistic | p-Value | Adj. p-Value |
|---|---|---|---|---|---|
| Windows - iOS | 207.11 | 27.16 | 7.62 | 0.00 | 0.00 |
| Windows - macOS | 272.01 | 44.26 | 6.14 | 0.00 | 0.00 |
| Windows - Chrome OS | 439.01 | 74.14 | 5.92 | 0.00 | 0.00 |
| Android - iOS | −184.96 | 34.51 | −5.36 | 0.00 | 0.00 |
| Android - macOS | −249.86 | 49.11 | −5.08 | 0.00 | 0.00 |
| Android - Chrome OS | −416.85 | 77.13 | −5.41 | 0.00 | 0.00 |
| iOS - Chrome OS | 231.899 | 70.63 | 3.28 | 0.01 | 0.10 |

| OS 1 - OS 2 | Test Statistic | Std. Error | Std. Test Statistic | p-Value | Adj. p-Value |
|---|---|---|---|---|---|
| iOS - Windows | −120.72 | 24.24 | −4.98 | 0.00 | 0.00 |
| iOS - Android | 130.697 | 30.80 | 4.24 | 0.00 | 0.00 |
| macOS - Windows | −114.68 | 39.51 | −2.90 | 0.04 | 0.04 |
| macOS - Android | 124.66 | 43.85 | 2.84 | 0.04 | 0.05 |

| | CC | MAE | RMSE |
|---|---|---|---|
| Mean | 0.7279 | 1.3718 | 1.86967 |
| SD | 0.0116 | 0.0311 | 0.0446 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Fernández-Soriano, F.L.; López, B.; Martínez-España, R.; Muñoz, A.; Cantabella, M. Use of Computing Devices as Sensors to Measure Their Impact on Primary and Secondary Students’ Performance. Sensors 2019, 19, 3226. https://doi.org/10.3390/s19143226