Combining Inter-Subject Modeling with a Subject-Based Data Transformation to Improve Affect Recognition from EEG Signals

Abstract

1. Introduction

2. Related Previous Work

2.1. Modeling Approaches

2.2. Public Databases

3. Data Analysis

3.1. Problem Formulation

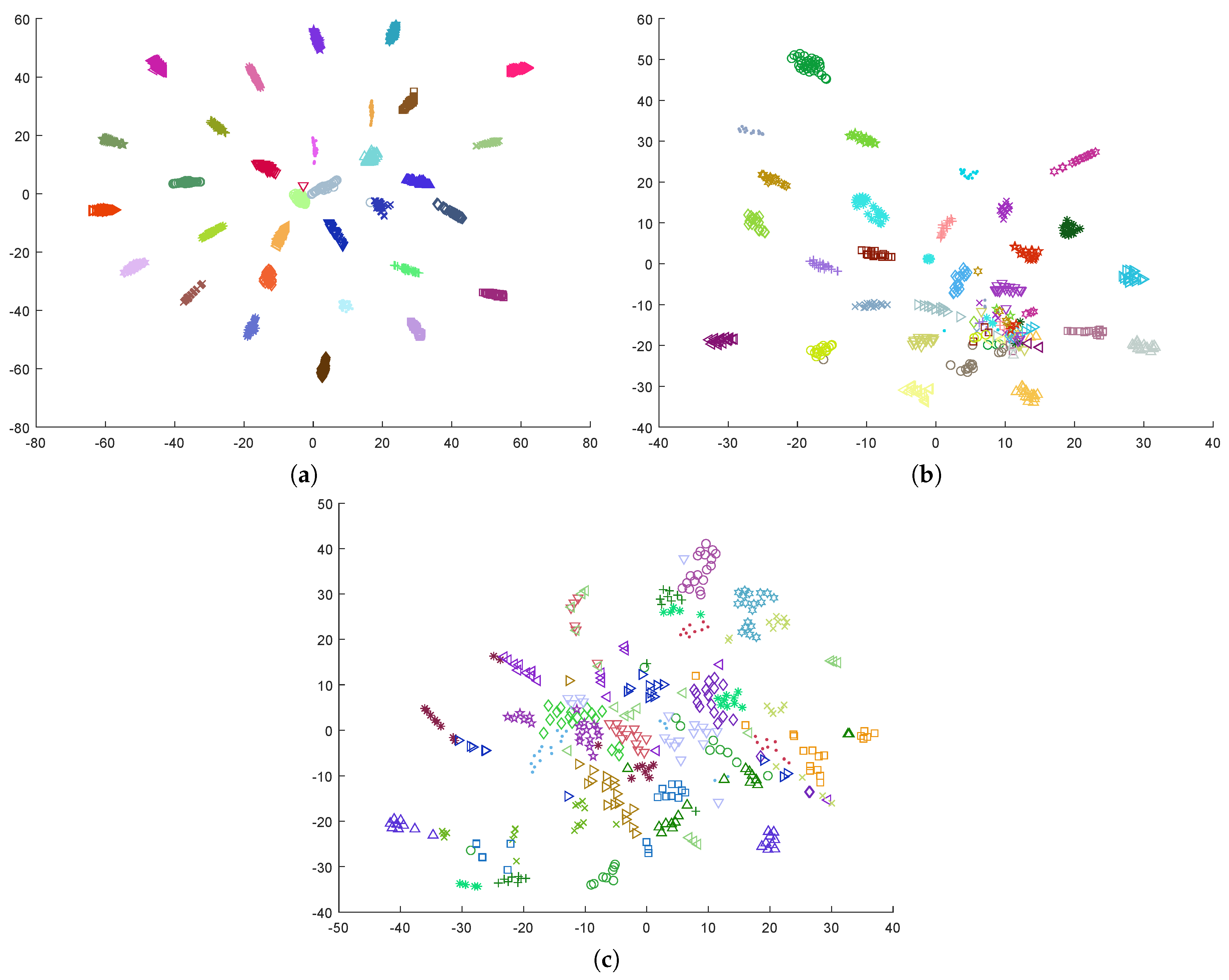

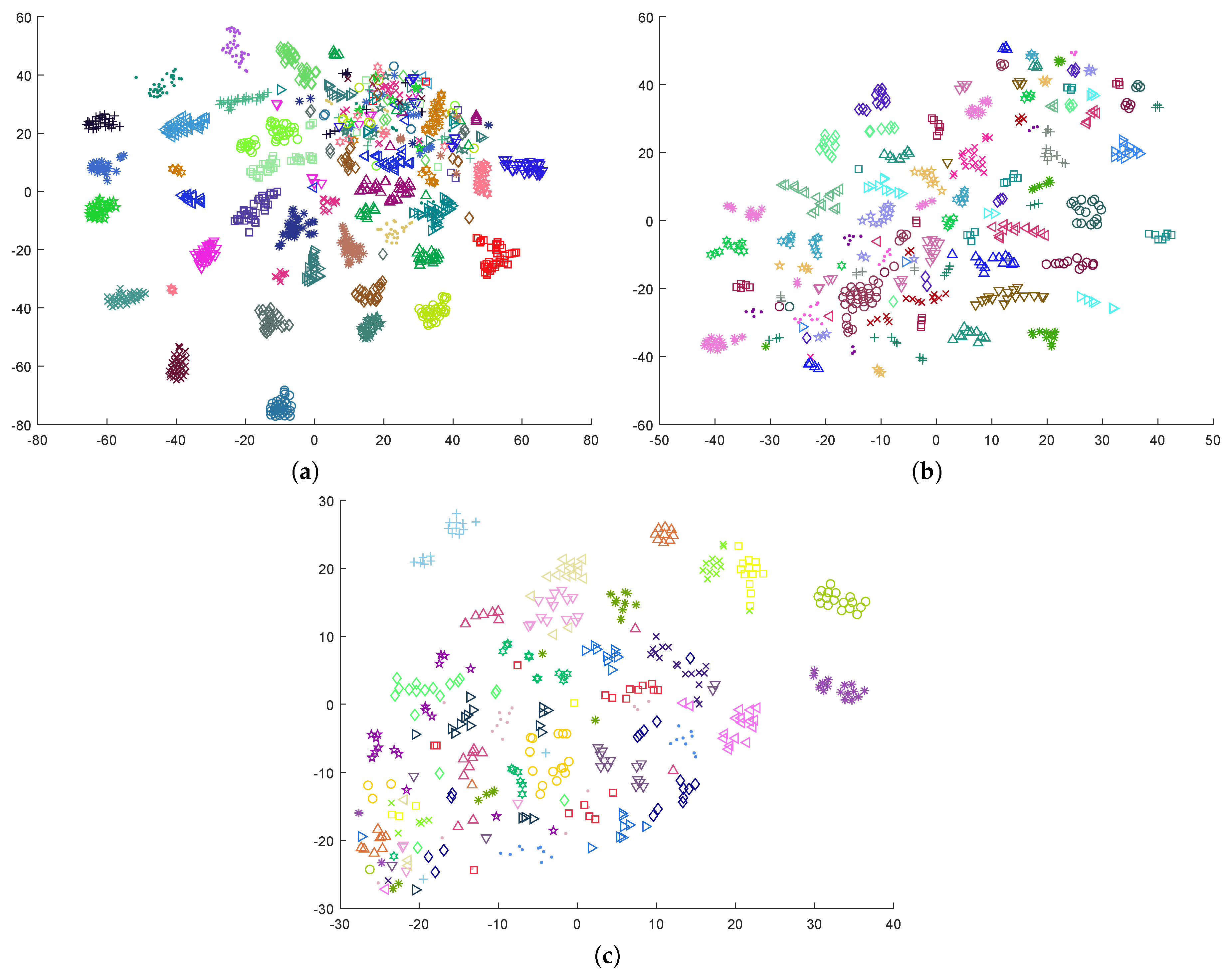

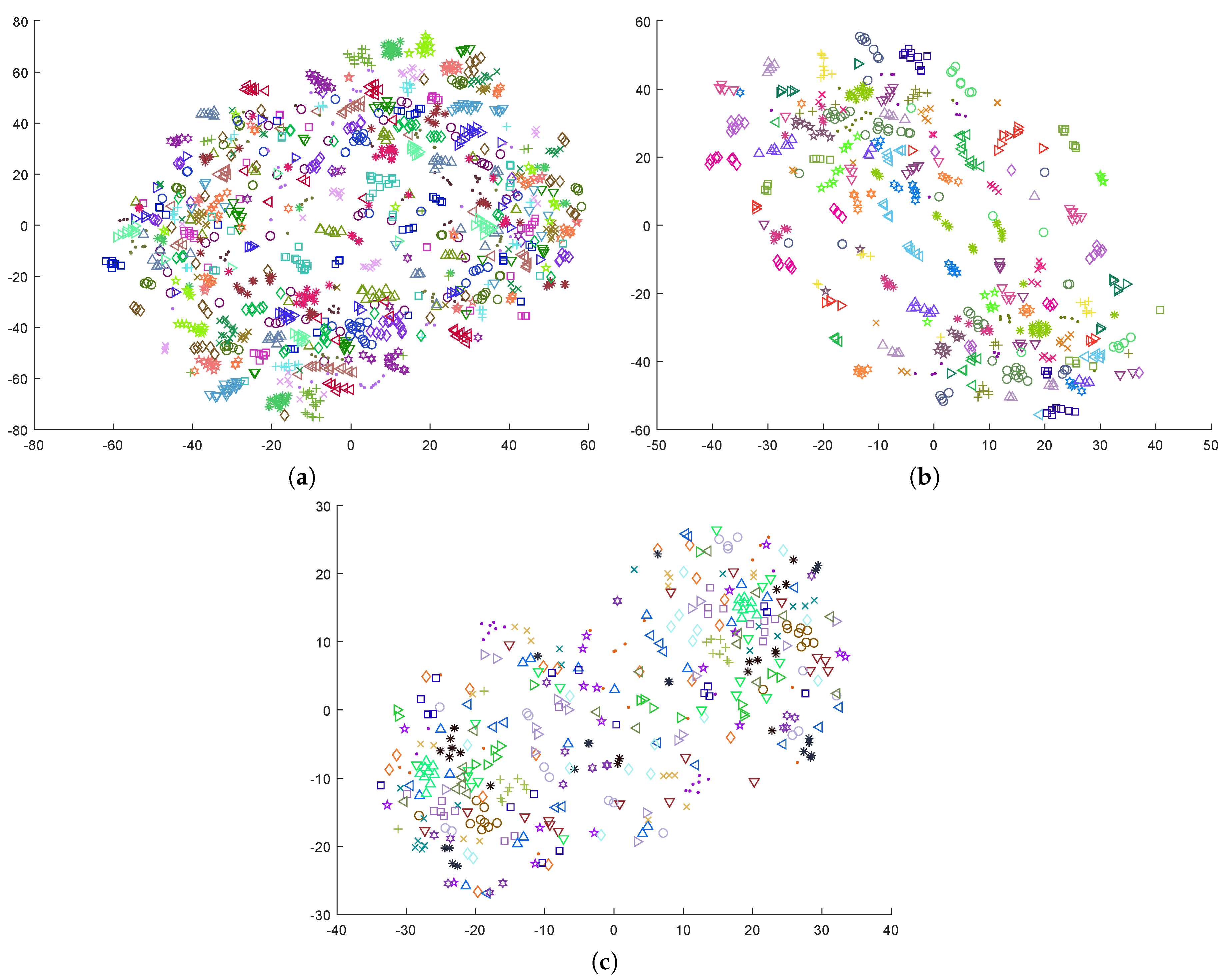

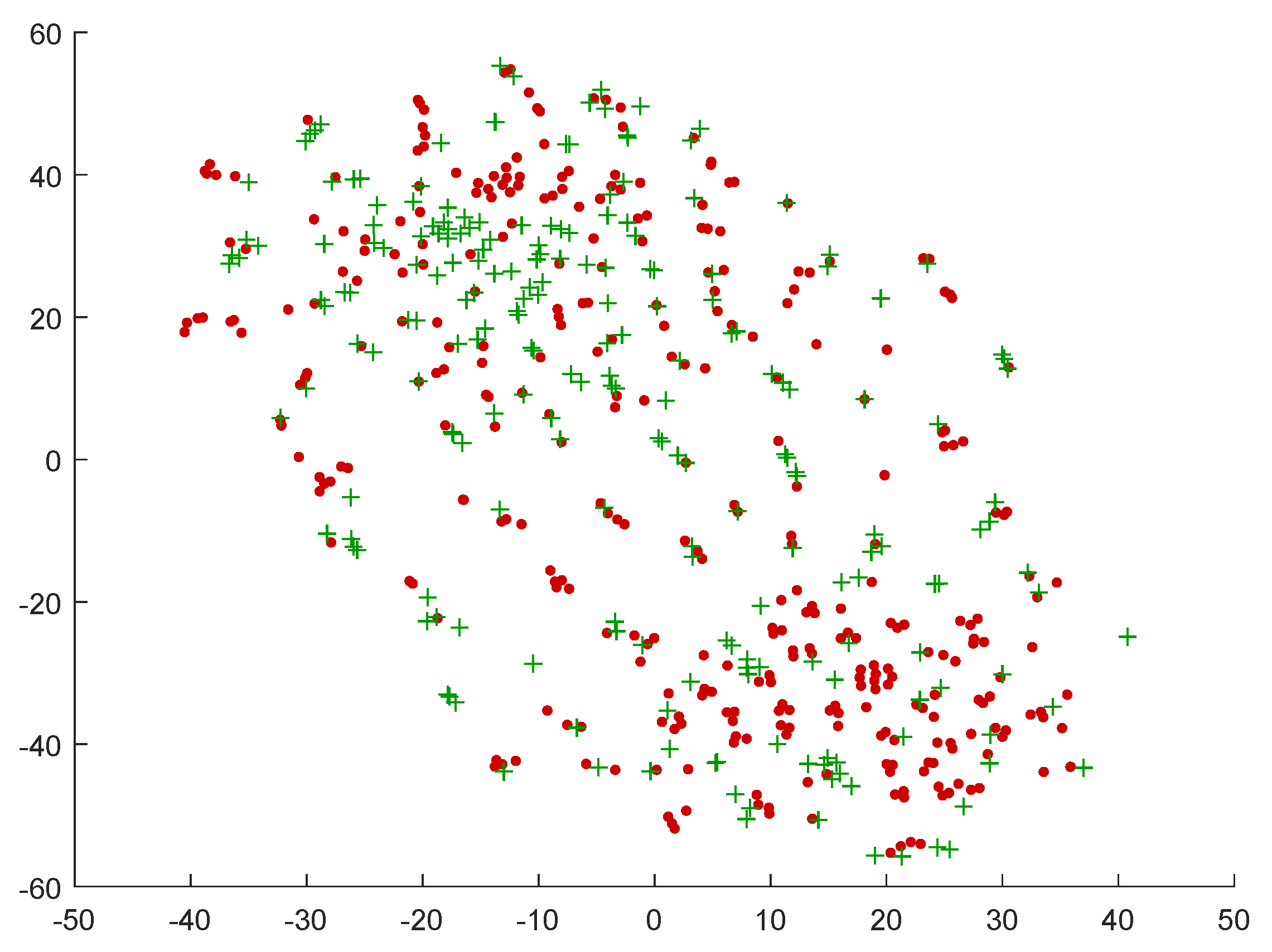

3.2. Topological Structure of the Data

4. Proposed Approach

4.1. Typical Data Transformations

4.2. Nonlinear Data Transformation

5. Experimental Results

5.1. Improvement on Classification Accuracy

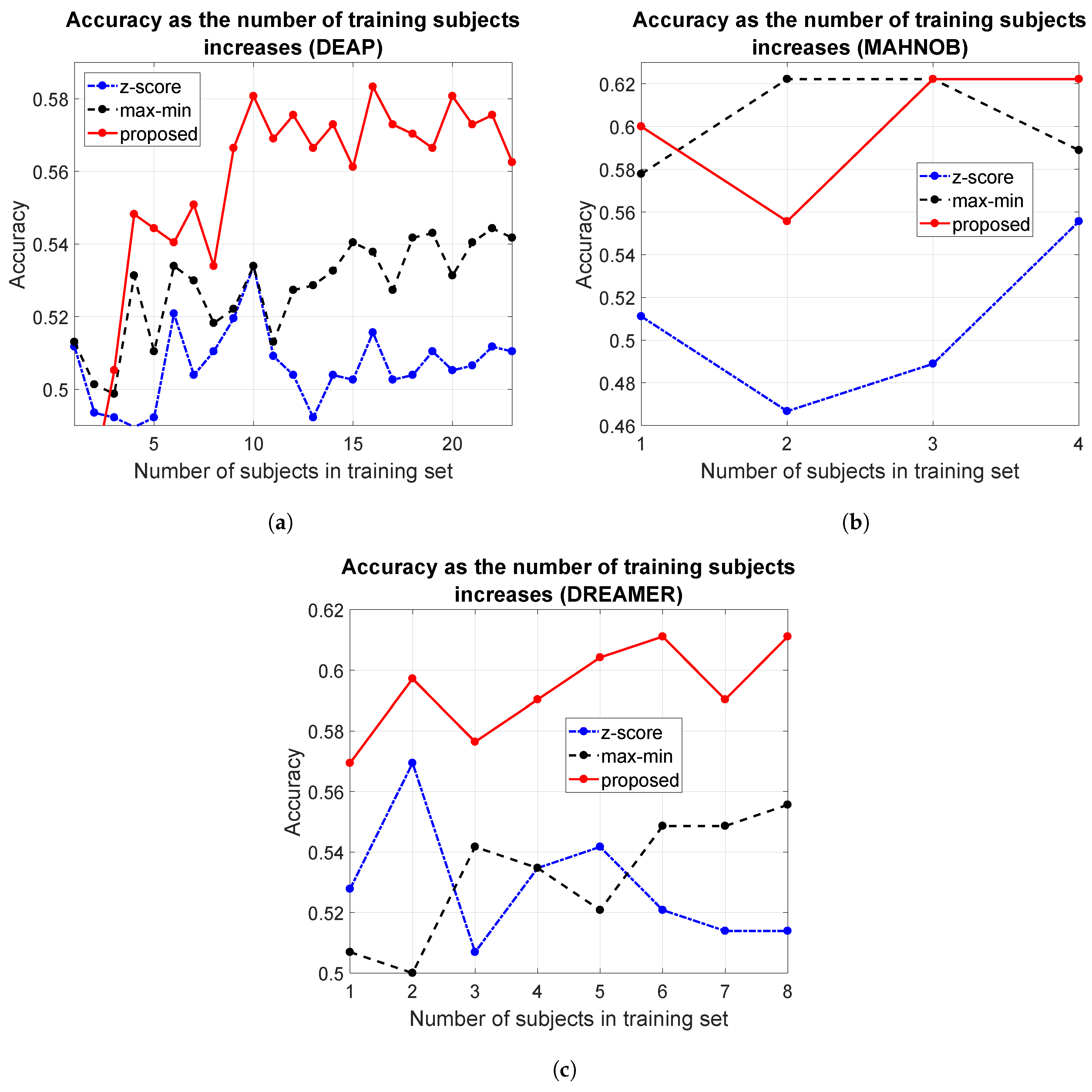

5.2. Scalability

6. Conclusions

Author Contributions

Funding

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| DEAP | Database for Emotion Analysis using Physiological Signals |
| EEG | Electroencephalography |
| fMRI | Functional Magnetic Resonance Imaging |
| LDA | Linear Discriminant Analysis |
| PSD | Power Spectral Density |
| RBF | Radial Basis Function |
| SVM | Support Vector Machine |
| t-SNE | t-Distributed Stochastic Neighbor Embedding |


| Database | Subjects | Videos | Stimuli | Duration | Device | Channels | Sampling Frequency | Features |
|---|---|---|---|---|---|---|---|---|
| DEAP | 32 | 40 | Music videos | 60 s | Biosemi Active II | 32 | 512 Hz * | 230 |
| MAHNOB | 27 | 20 | Excerpts from movies | 34.9–117 s (M = 81 s) | Biosemi Active II | 32 | 512 Hz * | 230 |
| DREAMER | 23 | 18 | Music videos | 65–393 s (M = 199 s) | Emotiv EPOC | 14 | 128 Hz | 105 |

| Database | Valence: Number of Subjects | Valence: Samples per Subject | Arousal: Number of Subjects | Arousal: Samples per Subject |
|---|---|---|---|---|
| DEAP | 24 | 32 | 16 | 32 |
| MAHNOB | 5 | 18 | 10 | 18 |
| DREAMER | 9 | 16 | 7 | 14 |

| Database | Transformation | Valence: SVM Cubic | Valence: SVM Radial | Valence: Naive Bayes | Arousal: SVM Cubic | Arousal: SVM Radial | Arousal: Naive Bayes |
|---|---|---|---|---|---|---|---|
| DEAP | z-score | 0.51 | 0.50 | 0.51 | 0.52 | 0.50 | 0.50 |
| | proposed | 0.54 | 0.58 | 0.57 | 0.54 | 0.56 | 0.55 |
| MAHNOB | z-score | 0.50 | 0.56 | 0.56 | 0.55 | 0.52 | 0.57 |
| | proposed | 0.51 | 0.65 | 0.65 | 0.59 | 0.61 | 0.62 |
| DREAMER | z-score | 0.50 | 0.52 | 0.51 | 0.55 | 0.53 | 0.50 |
| | proposed | 0.54 | 0.59 | 0.59 | 0.58 | 0.57 | 0.57 |
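
The "z-score" rows in the table above refer to a standardization baseline. As a minimal sketch, assuming the z-score is computed per feature within each subject (the table itself does not spell this out), the transformation could look as follows. The function name `zscore_per_subject` and the toy data are hypothetical and only serve the illustration; this is not the proposed nonlinear transformation.

```python
from statistics import mean, pstdev


def zscore_per_subject(features, subject_ids):
    """Standardize each feature column separately within each subject."""
    # Group row indices by the subject they belong to.
    rows_by_subject = {}
    for row_index, subject in enumerate(subject_ids):
        rows_by_subject.setdefault(subject, []).append(row_index)

    n_features = len(features[0])
    transformed = [[0.0] * n_features for _ in features]
    for rows in rows_by_subject.values():
        for col in range(n_features):
            values = [features[r][col] for r in rows]
            mu = mean(values)
            sigma = pstdev(values)  # population standard deviation
            for r in rows:
                # Constant features map to 0 to avoid dividing by zero.
                if sigma > 0:
                    transformed[r][col] = (features[r][col] - mu) / sigma
                else:
                    transformed[r][col] = 0.0
    return transformed


# Toy data (assumed): two subjects whose features live on different scales.
features = [[1.0, 10.0], [3.0, 30.0], [100.0, 5.0], [300.0, 5.0]]
subject_ids = ["s1", "s1", "s2", "s2"]
normalized = zscore_per_subject(features, subject_ids)
```

After the transformation, both toy subjects occupy the same range for the first feature even though their raw values differ by two orders of magnitude, which is the motivation for subject-wise scaling before training an inter-subject model.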

| Database | Statistic | Valence: SVM Cubic | Valence: SVM Radial | Valence: Naive Bayes | Arousal: SVM Cubic | Arousal: SVM Radial | Arousal: Naive Bayes |
|---|---|---|---|---|---|---|---|
| DEAP | pairwise comparisons | 480 | 480 | 480 | 320 | 320 | 320 |
| | average rank z-score | 1.65 | 1.73 | 1.71 | 1.57 | 1.78 | 1.78 |
| | average rank proposed | 1.35 | 1.27 | 1.29 | 1.43 | 1.22 | 1.22 |
| | p-value | <10 | <10 | <10 | 0.01 | <10 | <10 |
| MAHNOB | pairwise comparisons | 100 | 100 | 100 | 200 | 200 | 200 |
| | average rank z-score | 1.61 | 1.82 | 1.84 | 1.58 | 1.84 | 1.70 |
| | average rank proposed | 1.39 | 1.18 | 1.16 | 1.42 | 1.16 | 1.30 |
| | p-value | 0.02 | <10 | <10 | 0.02 | <10 | <10 |
| DREAMER | pairwise comparisons | 180 | 180 | 180 | 140 | 140 | 140 |
| | average rank z-score | 1.66 | 1.81 | 1.82 | 1.54 | 1.66 | 1.73 |
| | average rank proposed | 1.34 | 1.19 | 1.18 | 1.46 | 1.34 | 1.27 |
| | p-value | <10 | <10 | <10 | 0.31 | <10 | <10 |
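
In every column of the table above, the two average ranks add up to 3 (for example, 1.65 + 1.35), which is what one would expect when two methods are ranked 1 and 2 in each pairwise comparison. The following sketch shows how such average ranks could be obtained from paired accuracies, assuming the better method receives rank 1 and ties share rank 1.5. The helper `average_ranks` and the accuracy values are hypothetical, and the sketch does not reproduce the statistical test behind the reported p-values.

```python
def average_ranks(scores_a, scores_b):
    """Average rank of two methods over paired comparisons (rank 1 = better)."""
    if len(scores_a) != len(scores_b) or not scores_a:
        raise ValueError("need two non-empty score lists of equal length")
    total_a = total_b = 0.0
    for a, b in zip(scores_a, scores_b):
        if a > b:
            rank_a, rank_b = 1.0, 2.0
        elif a < b:
            rank_a, rank_b = 2.0, 1.0
        else:
            # Tied comparisons share the mean of ranks 1 and 2.
            rank_a = rank_b = 1.5
        total_a += rank_a
        total_b += rank_b
    n = len(scores_a)
    return total_a / n, total_b / n


# Toy accuracies (assumed) for four pairwise comparisons.
zscore_acc = [0.50, 0.55, 0.60, 0.52]
proposed_acc = [0.58, 0.55, 0.57, 0.61]
rank_zscore, rank_proposed = average_ranks(zscore_acc, proposed_acc)
```

A lower average rank indicates the method that wins more of the pairwise comparisons; here the toy "proposed" scores win two comparisons, lose one, and tie one.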

| Database | Valence: SVM Cubic | Valence: SVM Radial | Valence: Naive Bayes | Arousal: SVM Cubic | Arousal: SVM Radial | Arousal: Naive Bayes |
|---|---|---|---|---|---|---|
| DEAP | 0.62 | 0.64 | 0.61 | 0.55 | 0.54 | 0.59 |
| MAHNOB | 0.59 | 0.59 | 0.58 | 0.56 | 0.66 | 0.62 |
| DREAMER | 0.50 | 0.52 | 0.46 | 0.49 | 0.51 | 0.51 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Arevalillo-Herráez, M.; Cobos, M.; Roger, S.; García-Pineda, M. Combining Inter-Subject Modeling with a Subject-Based Data Transformation to Improve Affect Recognition from EEG Signals. Sensors 2019, 19, 2999. https://doi.org/10.3390/s19132999
