1. Introduction

Synthetic aperture radar (SAR) systems provide fine-resolution images regardless of the weather conditions. Therefore, they are widely used in environmental monitoring, military reconnaissance, and other fields [

1,

2,

3]. Nevertheless, coherent imaging commonly causes multiplicative speckle noise in SAR images, which limits the analysis and interpretation of the scene and reduces the visualization of the images [

4,

5,

6]. To avoid these problems, the speckle noise should be reduced as far as possible while retaining the structural features and texture information in SAR images [

7,

8,

9,

10,

11].

In recent work, much attention was devoted to spatial-domain adaptive filtering based on Bayesian statistical estimation. Techniques such as linear minimum mean-square error (LMMSE)-based filtering [

12] and maximum posterior probability (MAP)-based filtering [

13] were introduced at this time. LMMSE filtering is performed by Lee [

14], Frost [

15], and Kuan filters [

16], whereas MAP filtering [

17] employs Gaussian and gamma filters. Although filters are easily implemented and have low computational complexity, they lose much of the texture information [

18]. Despeckling methods in the wavelet domain have also been proposed. Initially, multiplicative noise was converted to additive noise through homomorphic filtering. Later, the normal inverse Gaussian model was introduced [

19], which removes speckle noise without the shortcomings of spatial filter wave technology and has set a new direction for SAR image processing. Transform-domain-based methods better preserve the edges than spatial-domain filters, but tend to generate artifacts. Another important property of SAR images is nonlocal self-similarity, which is easily incorporated in SAR image despeckling. For example, nonlocal means (NLM) filtering [

20] exploits self-similarity for image denoising. In an NLM filter, the resemblance between image patches is measured by the Euclidian distance and weighted averaging is performed in nonlocal regions. Inspired by the success of the NLM denoising filter, the authors of [

21] developed the probabilistic patch-based (PPB) algorithm, in which the similarity between image patches in the SAR image is determined by the noise distribution rather than the traditional Euclidian distance.

In recent years, the continuous development of sparse theory has sparked interest in image denoising methods based on sparse representation [

22,

23,

24,

25,

26]. In these methods, the most critical steps are selecting the appropriate dictionary, constructing the sparse model, and estimating the sparse coefficient. The earliest sparse representation methods, such as the discrete cosine transform and wavelet transform, relied on fixed, over-complete dictionaries. However, fixed dictionaries lack sufficient flexibility and self-adaptability for disparate image structures and the exact expression of signals. A more flexible dictionary would better characterize the prior information of the images. Recently, image patches have been identified as a flexible sparse target. Unlike the whole image, a patch is low-dimensional and overlapped patches provide opportunities for adaptively learning a dictionary or transforming the image into a sparse representation. Sparse dictionaries of training image patches extracted from images are known to ensure sparse representation coefficients for specific image structures [

27,

28]. The famous K singular value decomposition (K-SVD) algorithm [

29] assumes that each patch has a sparse representation in a global over-complete dictionary. Each atom in the dictionary is then determined by minimizing the fitting error of the represented patches under the sparsity constraint. Considering the similarity and geometric direction of image patches, the authors of [

30] classified image patches and learned an orthogonal dictionary for each type of image patch. As this method sparingly represents the types of image patches, it achieves faster dictionary learning, sparser coding, and better image reconstruction quality than the K-SVD algorithm. Unlike fixed dictionaries, a learned dictionary can adapt to the structural features of images and effectively obtains the sparse representations of images.

In recent works, the sparsity and self-similarity of images are often combined to improve the representation performance. The BM3D algorithm [

31] combines similar image patches with three-dimensional (3D) transformation to enhance the sparsity level of 3D groups. For this purpose, BM3D performs a 3D wavelet transform on the groups and estimates the real-image wavelet coefficients by wiener filtering, which effectively removes Gaussian white noise from the images. The SAR-BM3D algorithm [

32] extends the BM3D algorithm to SAR images. This algorithm replaces the wiener filter with a local LMMSE estimator that more accurately estimates the wavelet coefficients of the image, and hence reduces the speckle effect on SAR images. The fast adaptive nonlocal SAR despeckling (FANS) algorithm [

33] is a faster and spatially-adaptive version of SAR-BM3D, which ensures a better speckle rejection in homogeneous areas. Dong et al. [

34] proposed a nonlocal centralized sparse representation model based on the traditional sparse representation model, which estimates the sparse coefficients by nonlocal averaging of the image patches. As the image patches are used for constructing the coefficient correction terms in the sparse representation, the sparse coefficient is close to the real-image coefficient. To estimate the real image, some researchers exploit the low-rank property of similar image patch sets [

35] and apply a soft thresholding that handles the singular values of similar noisy image patch sets.

As is well known, image regions differ in structure, so the sparsities of various image regions differ in the transformation domain. For this reason, imposing constant constraints on the coefficients is unlikely to fully utilize the prior information. To characterize the structural differences in the image, weighted processing of the image coefficients is required. The authors of [

36] proposed a weighted nuclear norm minimization (WNNM) algorithm. The standard nuclear norm minimization (NNM) algorithm treats all singular values equally and shrinks them with the same threshold. However, the equalization process of NNM ignores the differences between image singular values in collections of similar image patches with different image prior information. The WNNM algorithm treats different singular values by assigning them with different weights. The weighting fully utilizes the prior information in different singular values, thus improving the flexibility of the kernel parameter constraint. Inspired by the Schatten

p-norm and WNNM, the authors of [

37] developed the weighted Schatten

p-norm minimization model, which replaces the nuclear norm in the standard NNM model with the Schatten

p-norm. They also recommended a weight parameter for the Schatten

p-norm that obtains a new low-rank regular term. The problem is then solved by a generalized threshold contraction method. The trilateral weighted sparse coding model [

38] has achieved good results in practical image denoising. This model accurately locates different noise intensities in different image regions, introduces three weight matrices into the model, and characterizes the statistics of realistic noise and image priors with data fidelity term and regularization term of the sparse coding framework. The high significance of the weights ensures that the estimated sparse coding coefficients approach the real-image coefficients.

Inspired by the above weighting idea, we propose a novel SAR image despeckling model based on dictionary learning and multi-weighted sparse coding. In the proposed multi-weighted sparse coding (MWSC), the efficient representation capacity of the orthogonal dictionary is integrated with the strong sparsity constraint of the multi-weighting model. This work makes three main contributions to the existing literature. First, we establish our general analysis dictionary learning and MWSC-based SAR image despeckling model. The proposed model continuously and efficiently trains the orthogonal dictionaries through SVD. Second, we introduce a weight matrix for nonuniform regularization, which explicitly and reasonably characterizes the diverse nonlocal characteristic of a group rather than performing uniform nonlocal regularizations. Another weight matrix is introduced to the data-fidelity term for characterizing the noise property. Third, we design an alternative minimization solution step for simultaneous orthogonal dictionary learning, weight parameter update, nonconvex sparse coding, and image reconstruction. As demonstrated in subsequent experimental results, the orthogonal dictionary learning and weight constraints both contribute to the final performance improvements. In terms of speckle noise removal and preservation of details, the MWSC recovered higher-quality images than other SAR image despeckling methods.

2. Traditional Sparse Coding Model

Speckles in SAR images can be characterized by the following multiplicative noise model [

39]:

where

is the observed SAR image,

is the real value of the SAR image, and

is the fully developed speckle noise. First, the multiplicative noise model must be transformed into an additive noise model. To this end, the SAR image is preprocessed as

where

is the observed additive noise, which depends on the signal. In sparse representation theory, the sparse coding problem of each patch

over dictionary

is formulated as

where

is the data-fidelity term,

is the image patch, and

is a defined operator that extracts patch

from

.

is the regularization term denoting the image prior, and

is the regularization parameter used for balancing the relationship between the sparse approximation error and the sparse constraint. The sparse coding process of each patch

attempts to find a sparse coefficient

over a given dictionary

. Therefore, most of the entries in the vector

are zero or close to zero. Once

is computed, the latent clean patch matrix

is estimated as

. The clean image

to be reconstructed is then sparsely represented by a set of sparse codes {

} formulated as follows:

3. The Proposed SAR Despeckling Algorithm

3.1. Weighted Sparse Representation Model

As the observed image

is seriously damaged by speckle noise, the image patch-based sparse coding method exploits only the local sparsity, which is insufficient for estimating the sparse coefficients

from

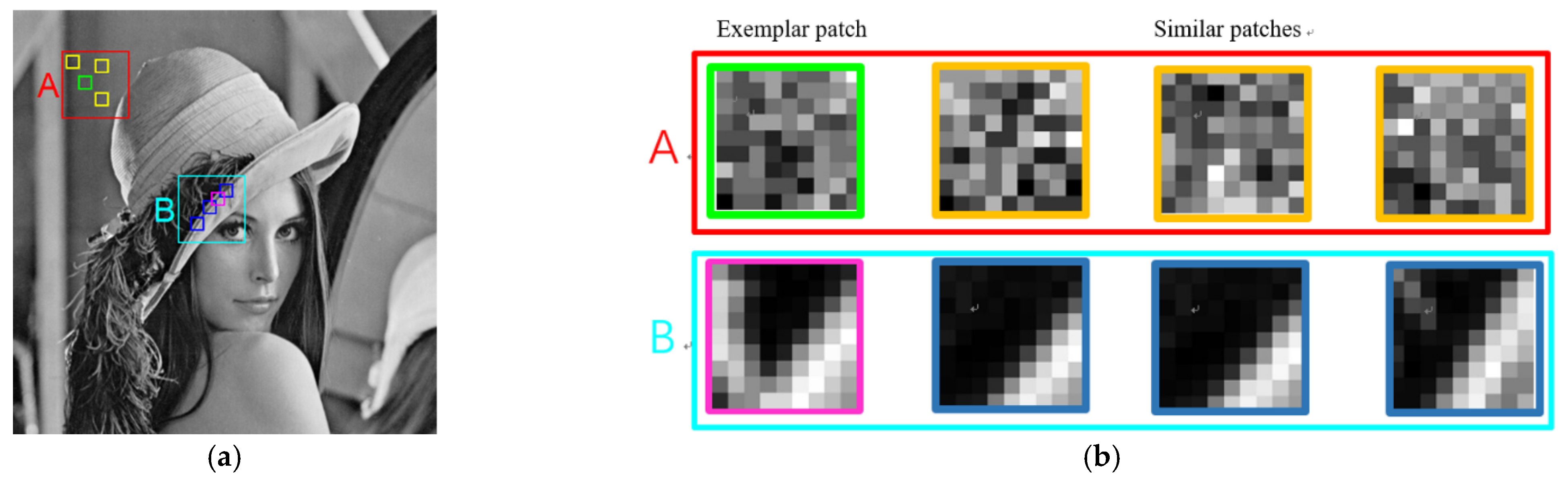

. The correlation among image patches is another important prior information of the image. To exploit this prior, we construct and utilize groups of patches (rather than a single patch) as the basic unit of sparse representation. To retain the correlation in the group, regularizing its group sparsity is an obvious solution; however, the group sparsity may not be sufficiently guaranteed for all groups, because the levels of similarity widely vary in different image regions. This variability is demonstrated on the “Lena” image in

Figure 1. Two groups, labeled “A” and “B” in

Figure 1, are found in the homogeneous region and a heterogeneous region, respectively. The enlarged patches in

Figure 1b clarify the similarity differences between the groups “A” and “B.” In the current group-based sparse coding approaches, nonlocal similarity is enforced by uniform nonlocal regularizations with the same penalty parameter, such as the norm. If the same constraints are imposed on each image patch, the structural features of the image cannot be fully captured and utilized, and some details and texture information are inevitably lost from the image. In addition, the data-fidelity term in the sparse coding model ensures the authenticity of the sparse coefficient in the sparse representation process and conforms to the image despeckling process. In reality, the additive noise

depends on the signals in the SAR image and its level varies in different local patches. Therefore, in the actual SAR image despeckling process, the important work of the data-fidelity term

is varying the local noise intensity.

To rectify the problems in traditional patch-based sparse representation, we build groups of similar patches and simultaneously exploit the local sparsity and the nonlocal self-similarity of SAR images in a unified framework. We first divide the image

into

overlapped patches, denoting each patch by a vector

, i.e.,

. For each target image patch

in the

training window, we search for

best matched patches. Finally, all patches (the target image patch and all similar image patches) are stacked into a matrix

representing the group. This step requires a suitable metric for measuring the similarity between noisy patches. Here, the Euclidean distance is selected as the similarity metric between the target image patch

and the candidate patch

in the training window. The Euclidean metric is given by

The group

containing the searched similar patches is then represented as

where

is an operator that extracts the

j-th similar patch

from

, and

is the number of similar patches in group

. As all image patches in

share a similar underlying structure, the column vectors of

are strongly correlated. The sparse coefficients of the internal patches of group

are collected into a coefficient matrix denoted by

C = [

c1,

c2,…,

cN].

The current group-based SAR image despeckling approaches impose the same constraint on each group. Because they ignore the varying local noise intensity, they cannot fully capture and utilize the nonlocal characteristics of the image. To improve the accuracy of

, we analyze its probability statistics. More specifically, we apply the maximum a posterior (MAP) estimation derived from Gaussian Naïve Bayesian formula to the coefficient matrix

:

In Equation (7),

is the data-fidelity term. Its form depends on the statistical characteristics of the image noise that penalizes the differences between group

and the coefficient matrix

. As the noise intensity varies in different regions of the SAR image, we assume that the additive noise

follows a Gaussian distribution of noise standard deviations. Hence, the data-fidelity term

is defined as

where

is the noise standard deviation of the additive noise in image patch

.

By the group property, all image patches in

share a similar underlying structure. Therefore, their coefficient vectors under one dictionary should share the same sparse profile. Specifically, the elements in one row of

have the same magnitude. We thus assume that each row of

is independent of all other rows and that its scale parameters follow a Laplace distribution. Therefore, the prior term in Equation (7) is defined as

where

is denotes the

l-th row vector of

and

are the scale parameters of

. Substituting Equations (8) and (9) into Equation (7), the MAP estimation model of coefficient matrix

is obtained as

where

and

are two diagonal matrices. The design of

and

to characterize the varying statistics of realistic noise and the sparsity prior of SAR images is described in

Section 3.2.

Unlike the traditional sparse representation model, we modify the data-fidelity term and regularization term with the weight matrices and , respectively. The weight matrix characterizes the residual in the data-fidelity term . When the diagonal element is large, the speckle noise intensity is high and a stronger constraint is imposed on the weighted residual term. Conversely, when the speckle noise intensity is low, the constraint on the residual term is weakened. By introducing the weight matrix into the data-fidelity term, we capture and utilize the realistic noise properties in different patches. Meanwhile, in the regularization term reflects the importance of each element in . Specifically, one weight in is assigned to the elements in one row of to enforce the same sparse profile among similar patches, whereas the elements of in different rows are assigned different weights that change the strengths of the sparsity constraints. Overall, the weighted sparse constraint model not only reflects the varying local noise intensity, but also simultaneously utilizes the patch-level sparsity and relevance among patches, which effectively improves the adaptability of the model to real SAR images and the accuracy of the coefficient matrix .

3.2. Adaptive Matrix Parameter Learning

Because the noise intensity varies in different image regions, the weight matrix

must learn to adaptively describe the statistical properties of the noise. In the actual speckle-reduction process, the weight matrix

is updated through iterative regularization. During the

k-th iteration, the parameter

in weight

is updated as

where

is the noise benchmark parameter,

is a control factor, which is tuned according to the intensity of noise. In general, a smaller

is set for low noise level and a larger

is set for high noise level.

is the

j-th image patch extracted from the original observation image

, and

is the

j-th image patch extracted from the input image

during the

k-th iteration of iterative regularization (see

Section 3.4. for details).

An effective regularization term that describes the image priors is required for a high-quality recovered image. In the proposed model given by Equation (10), the combinational regularization term is precisely controlled by a weight matrix describing the importance of the different elements of the coefficient matrix. In our method, the weight matrix of the regularization term is learned from for adaptively adjusting the weights of the coefficients. Clearly, is individual to group and effectively captures the specific structural information of that group.

Fixed dictionaries are unlikely to effectively express the signals in a sparse representation, but a trained dictionary is expected to enhance the sparsity of coefficients. The dictionary learning method in the traditional sparse coding model (Equation (3)) jointly optimizes the dictionary and the coefficient vector . The dictionary learned by this method adapts to a given whole image, not merely a group. When the same dictionary is shared by all image patches, it may not adapt to specific local structures in the image. Instead of learning a redundant dictionary for the whole image, we directly learn an individual dictionary for each group , which covers all patches in that group. The model also imposes a tight frame constraint , which not only restrains the correlations among dictionary atoms, but also simplifies the complexity of the sparse decomposition operations.

Finally, the complete group-based dictionary learning and MWSC model is defined as follows:

where

,

and

are matrices to be solved.

3.3. Model Optimization

To facilitate the solution of Equation (12), we define an auxiliary variable

. Equation (12) is then rewritten as

Equation (13) includes three unknown variables. Because Equation (13) is nonconvex, it is solved by an alternative minimization method. In each iteration, Equation (13) is decomposed into three subproblems: , and for learning the orthogonal dictionary , updating the weight , and sparse coding of , respectively.

Given

and

, the subproblem of Equation (13) with respect to

is

As

is an orthogonal dictionary, the subproblem of

can be rewritten as

The objective function Equation (15) is separable into the diagonal elements of

and its minimization problem can be consequently decoupled into multiple independent scalar optimizations of the form

where

and

represent the

l-th rows of the matrices

and

, respectively. Equation (16) is a quadratic minimization problem, so its diagonal element

is solved as

Estimating each l-th diagonal element of by Equation (17), the solution of is obtained.

Given

and

, the subproblem of Equation (13) with respect to

is

Equation (18) is a Frobenius norm minimization problem with an orthogonal constraint, which can be solved by SVD of

as

where

and

are the matrices of the left and right singular vectors, respectively, and

is the singular value matrix. By SVD, Equation (19) is solved as

Clearly, the dictionary is self-adaptive to each group and requires only one SVD for each group, which is computationally efficient.

Given

and

, the subproblem of Equation (13) with respect to

A is

As

is orthogonal, the first Frobenius norm term in Equation (21) is rewritten as

. The minimization of Equation (21) is separable into the elements of the matrices

and

as

where

is the

j-th column vector of matrix

. The minimization of Equation (22) can be further decoupled into multiple independent scalar optimizations of the form

where

is the

l-th element of

and

is the

l-th column of dictionary

. Equation (23) can be efficiently and accurately solved by soft thresholding, that is

where

is a symbolic function. The soft-thresholding approach provides an explicit solution to the original Equation (21).

3.4. SAR Image Despeckling

Equations (14), (18) and (21) are solved until the stopping criterion is satisfied. The clean group

is then estimated using the solved

,

and

as

. Based on the estimated clean group

, the SAR image reconstruction model is constructed as

where

is a regularization parameter and

is the

j-th column of the estimated clean group

. Equation (25) is a least squares problem that is minimized as follows:

where

is a diagonal matrix whose diagonal elements are the numbers of the corresponding pixels extracted from all similar patches. The inverse of the large-scale matrix

is easily obtained. Solving Equation (26) yields the reconstructed SAR image.

To further improve the speckle-reduction performance of the proposed model, we repeatedly remove speckles using an iterative regularization technique that filters the noise back to the denoised image as the new input image:

where

is the number of iterations and

is the relaxation parameter. The above despeckling process is repeated on the input image

to reconstruct the SAR image

. This procedure is repeated several times until the number of iterations reaches the threshold M, and the final despeckled SAR image is output from the model.

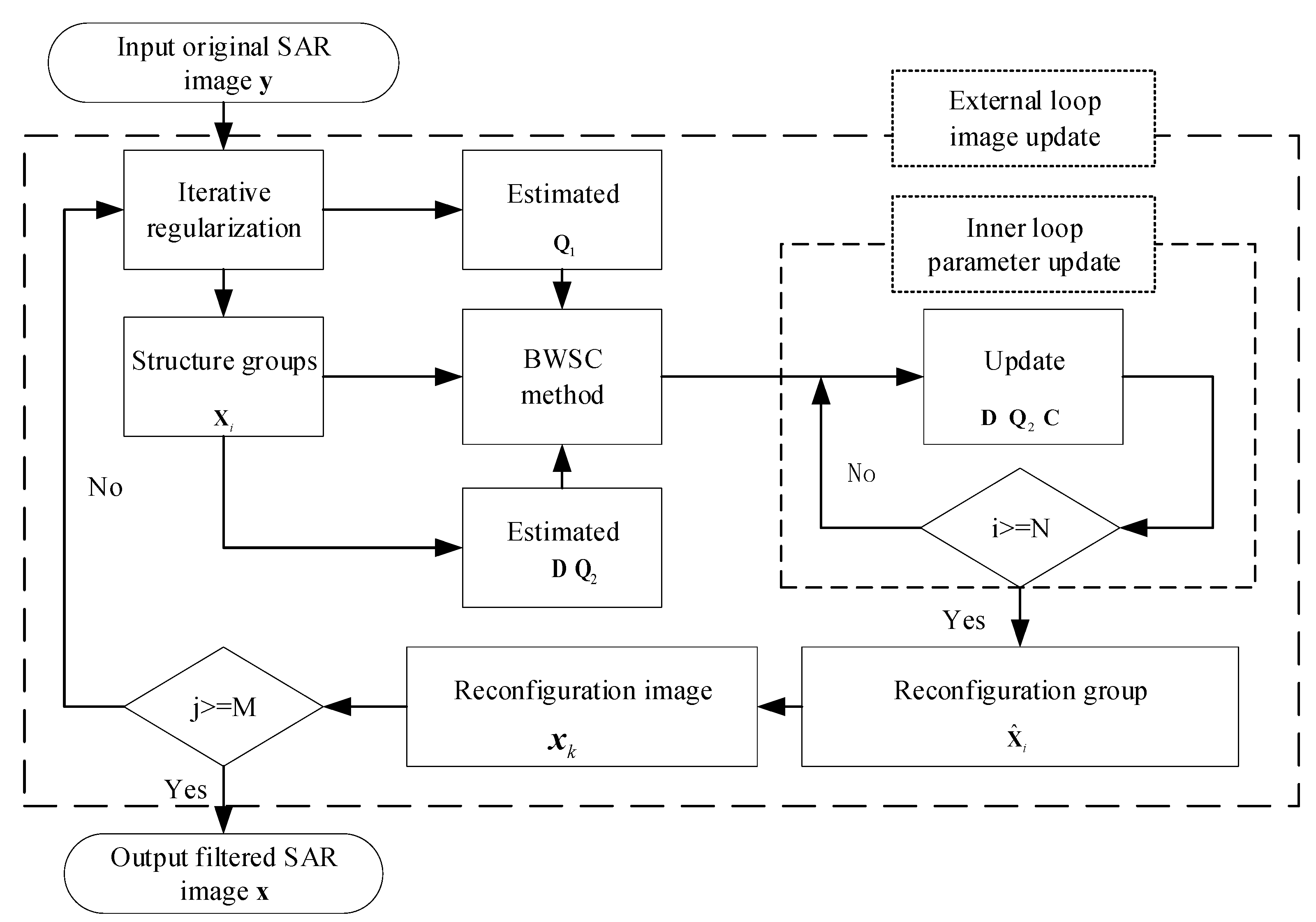

Figure 2 is a flowchart of the MWSC algorithm for suppressing speckle noise in SAR images.

4. Experimental Results and Analysis

In this section, we experimentally verify the performance of the proposed MWSC approach for SAR image despeckling. The despeckling performance was compared with those of Gamma-MAP filters, the Log-KSVD method, the PPB method, the POTDF method, the FANS method, and the SAR-BM3D method. PPB and SAR-BM3D are well-known classical algorithms for processing SAR images. The executable codes of the competing methods were downloaded from the authors’ website (

http://www.grip.unina.it/research/80-sar-despeckling.html) (16 October 2021).

To investigate the impacts of patch size and overlapping factor, despeckling experiments on three test images are separately conducted under various patch sizes and overlapping factors.

Figure 3 shows the curves of PSNR versus patch size and overlapping factor. From

Figure 3a, we have the following observations. As the patch size increases, the PSNR first increases and then drops. This is because using a large patch size allows more robust discrimination between noisy patches. However, using a too large patch will prevent the algorithm from finding enough similar patches. From

Figure 3b, it is concluded that the performance of our proposed algorithm is not quite sensitive to overlapping factor since all the curves are almost flat. The highest performance for each test image is achieved with the overlapping factor in the range [

2,

5]. Based on the above analysis, in the proposed MWSC algorithm, we set the size of image patch as 8 × 8 and overlapping factor is empirically set as 4. Besides, the range

of the training window for searching similar patches is set as 30 × 30. The number of image patches in one group is set as 32 and the size of the learning dictionary is set as 64 × 64.

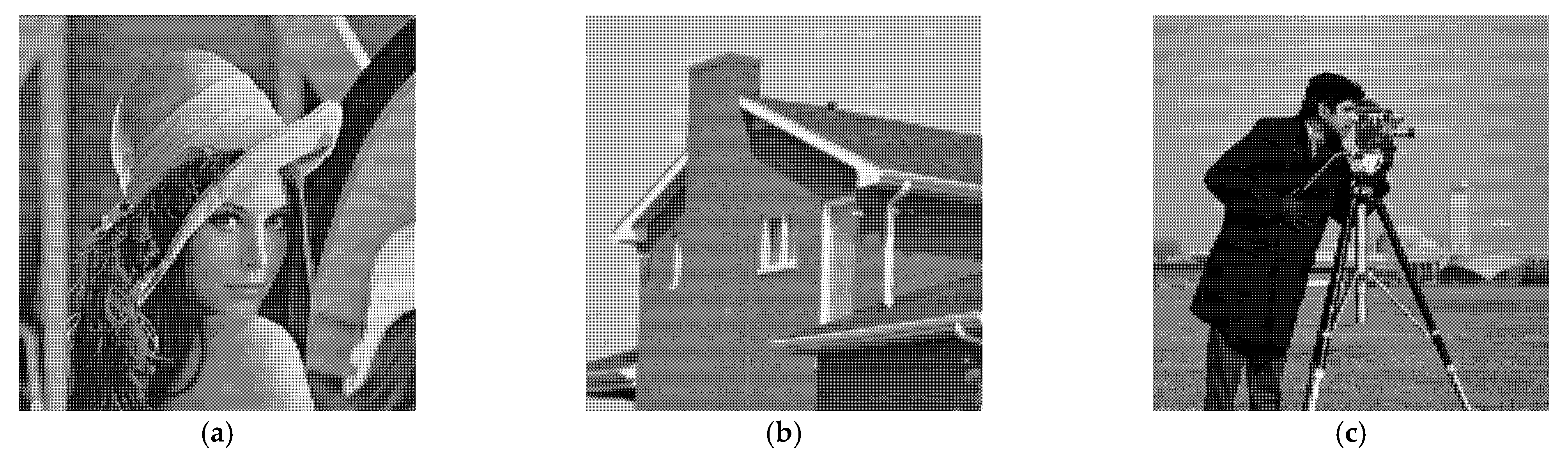

As noise-free signals are lacking in real SAR images, we simulated a SAR image for the experiment based on an optical image. In our simulated test, we chose the “House” and “Cameraman” images of size 256 × 256 and the “Lena” image of size 512 × 512. The despeckling performance was quantitatively evaluated by two performance measures: the peak-signal-to-noise ratio (PSNR) and the structural similarity index (SSIM), which evaluate the speckle-suppression capability of the algorithm and the structural similarity of the recovered image to the original image, respectively.

On real SAR images, the despeckling performance was evaluated by the equivalent number of looks (ENL) and the overall edge preservation index (EPI). The ENL is a widely used index that measures the degree of speckle suppression in a homogeneous region. A large ENL denotes a strong speckle-suppression ability. Meanwhile, the EPI reflects the degree of image-detail preservation. A large EPI indicates a strong detail-preservation ability.

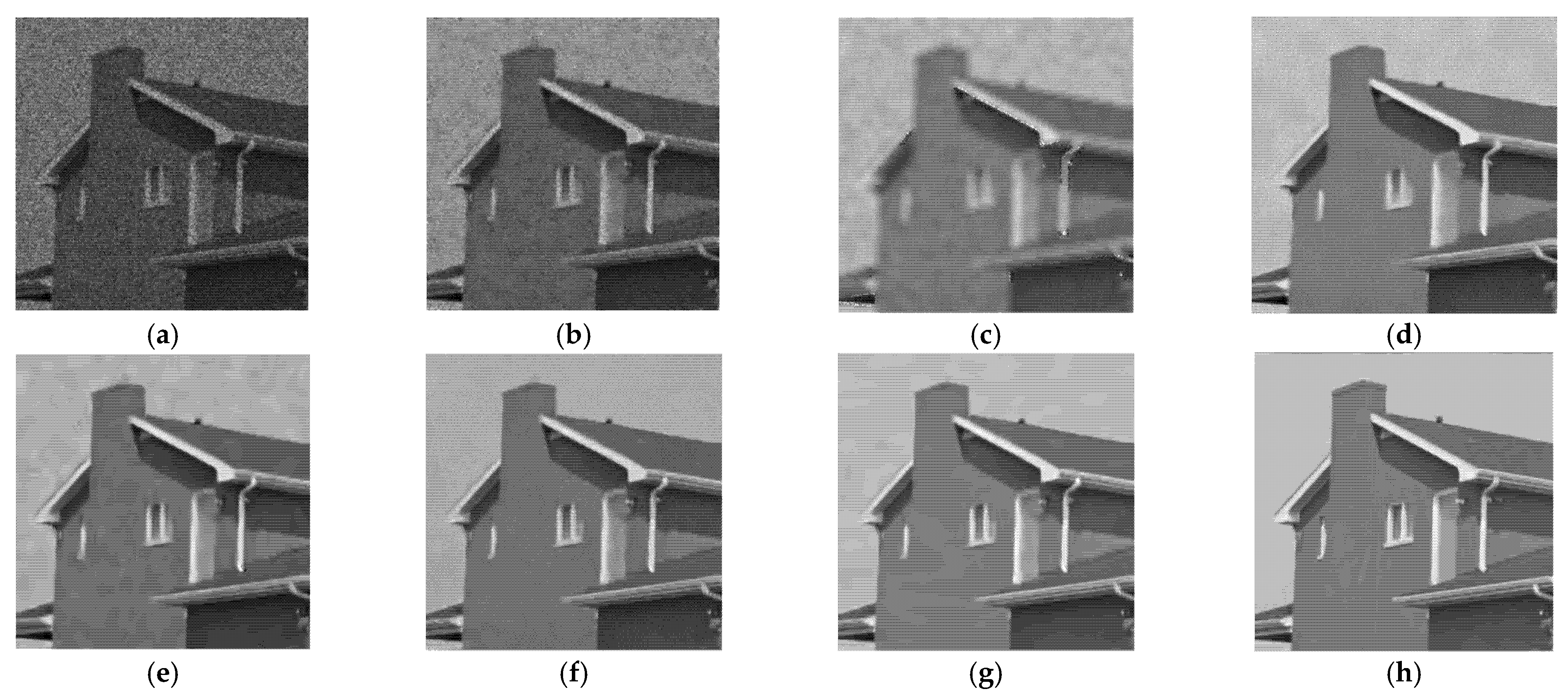

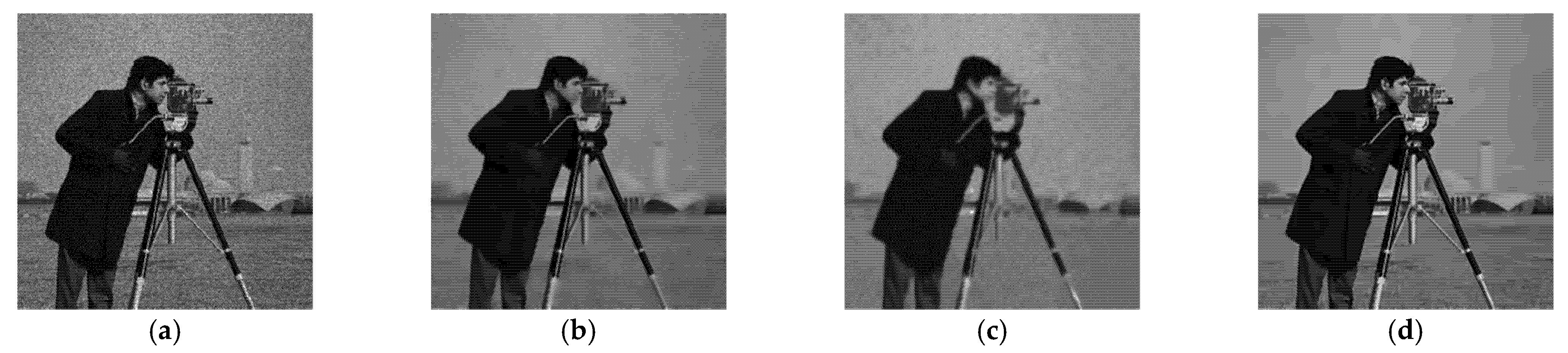

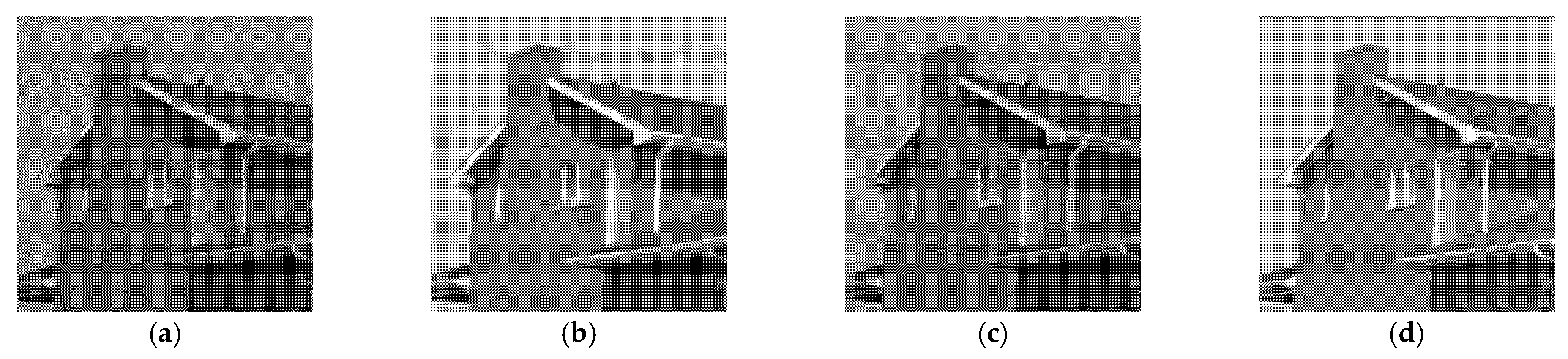

4.1. Despeckling Results of Simulated SAR Images

We first validated the proposed method on simulated SAR images generated by adding different levels of gamma speckle noise (

L = 4 or 16) to the natural images “House,” “Cameraman”, and “Lena”. The number of looks

L represents the speckle noise level, where smaller

L denotes a greater noise intensity. The original images are shown in

Figure 4.

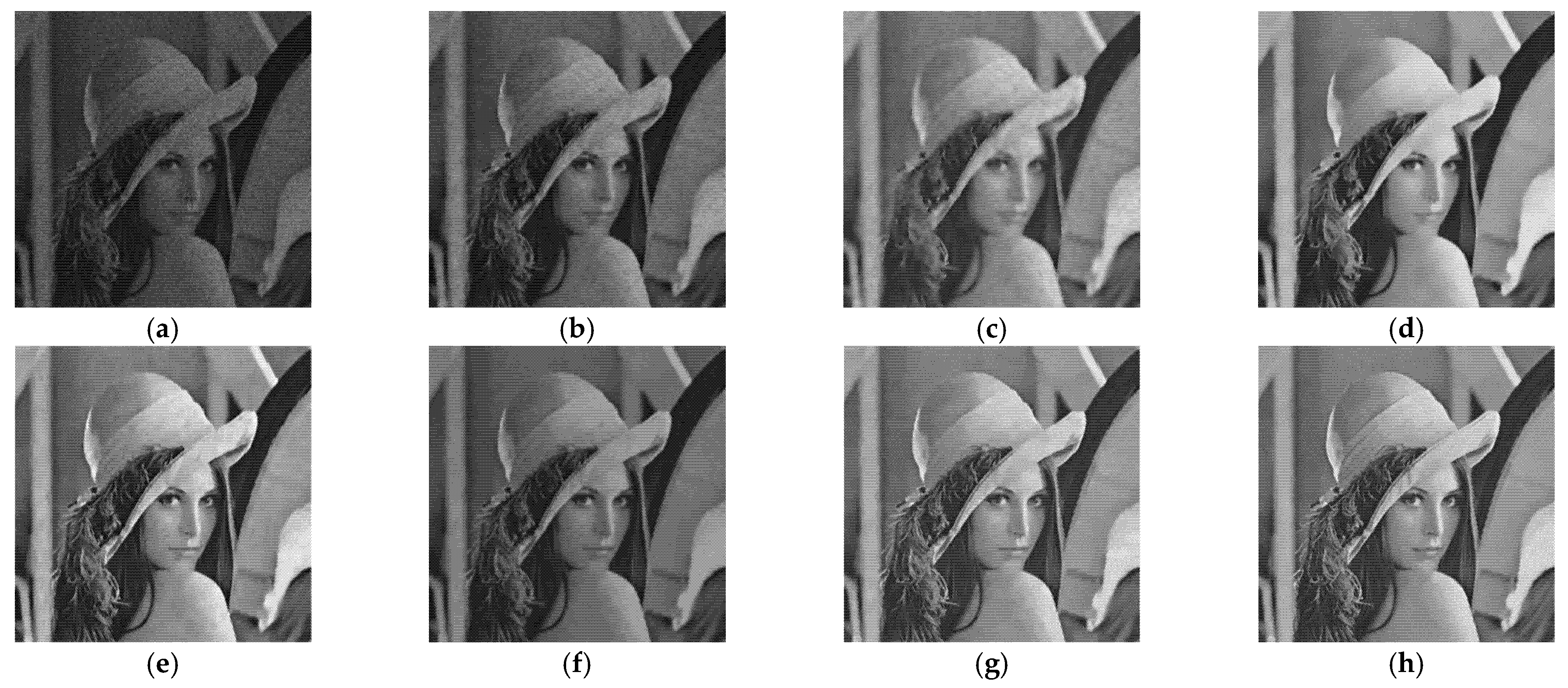

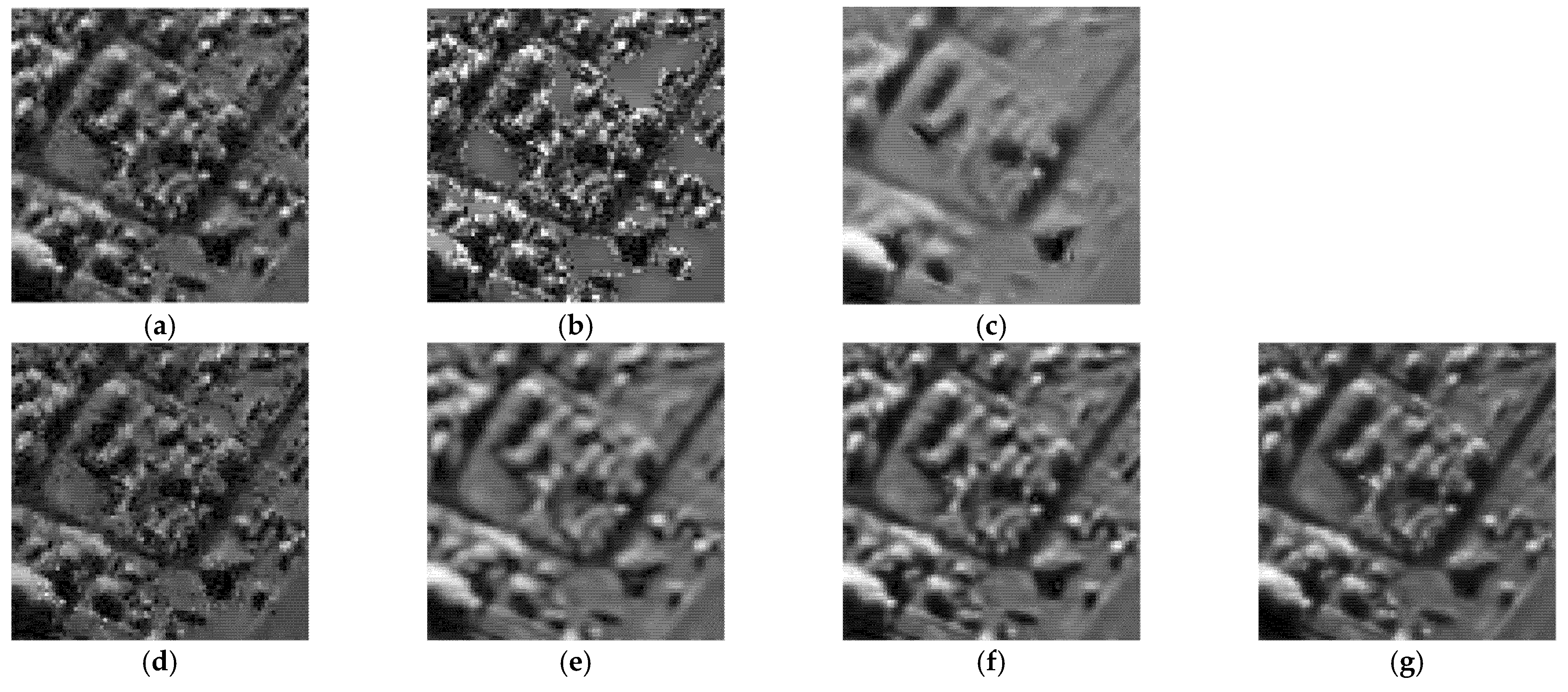

Figure 5,

Figure 6,

Figure 7 and

Figure 8 show the images obtained by the different despeckling methods on the simulated optical images contaminated by speckle. All methods achieved satisfactory despeckling performance, although Gamma-MAP introduced some obvious artifacts that severely degraded the image quality of Lena (

Figure 5c,

Figure 6c,

Figure 7c and

Figure 8c). The Log-KSVD method left significant noise residues in the smoothed areas (

Figure 5b and

Figure 6b). Although PPB provided a much smoother result than Log-KSVD and Gamma-MAP, it blurred some important details, such as the hat texture in

Figure 6d and the window edge in

Figure 7d. POTDF keeps working very well on the heterogeneous region while unsatisfactory on the homogeneous region (

Figure 7e). The SAR-BM3D method well preserved the texture information of the image and reasonably suppressed the speckle noise, but some residual noise appeared in the despeckled image of Lena (

Figure 6f). Likewise, FANS produces a smoother output, but many wavelet-related artifacts appear. We observe that the proposed MWSC model adequately suppressed speckle noise and better restored the edges and textures of the original images than the competing methods.

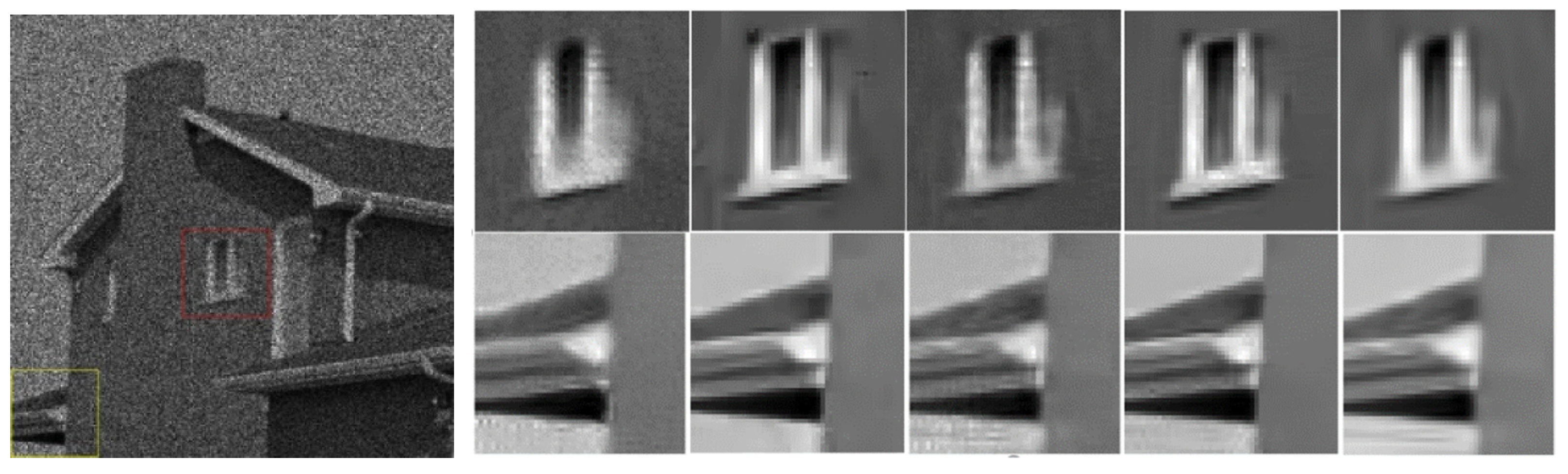

To demonstrate the processing results of each algorithm on image details and point targets, we enlarged some local details in

Figure 5 and

Figure 7. The enlargements are shown in

Figure 9 and

Figure 10, respectively. Here we compared only the results of PPB, POTDF, SAR-BM3D, FANS and MWSC, the results of the Log-KSVD and Gamma-MAP algorithms were omitted because their visual effects were poor. Visible speckle noise persisted in the House image despeckled by PPB (

Figure 10). The POTDF tended to produce an unfavorable oversmoothing effect. On the other hand, SAR-BM3D, FANS, and the proposed MWSC method best preserved the image details, but MWSC better suppressed the speckle noise than the SAR-BM3D and FANS. For example, significant speckle noise appeared in the enlarged local details of House obtained by SAR-BM3D and FANS (

Figure 10). Besides eliminating the speckle noise, the proposed MWSC algorithm better preserved the sharper edges and finer details than the other algorithms, and thereby obtained much clearer and better visual results. The corresponding PSNR and SSIM evaluations of the different algorithms on the three synthetic images are displayed in

Table 1. The MWSC algorithm achieved the highest PSNR and SSIM results in most cases (highlighted in bold font), consistent with the visual inspections.

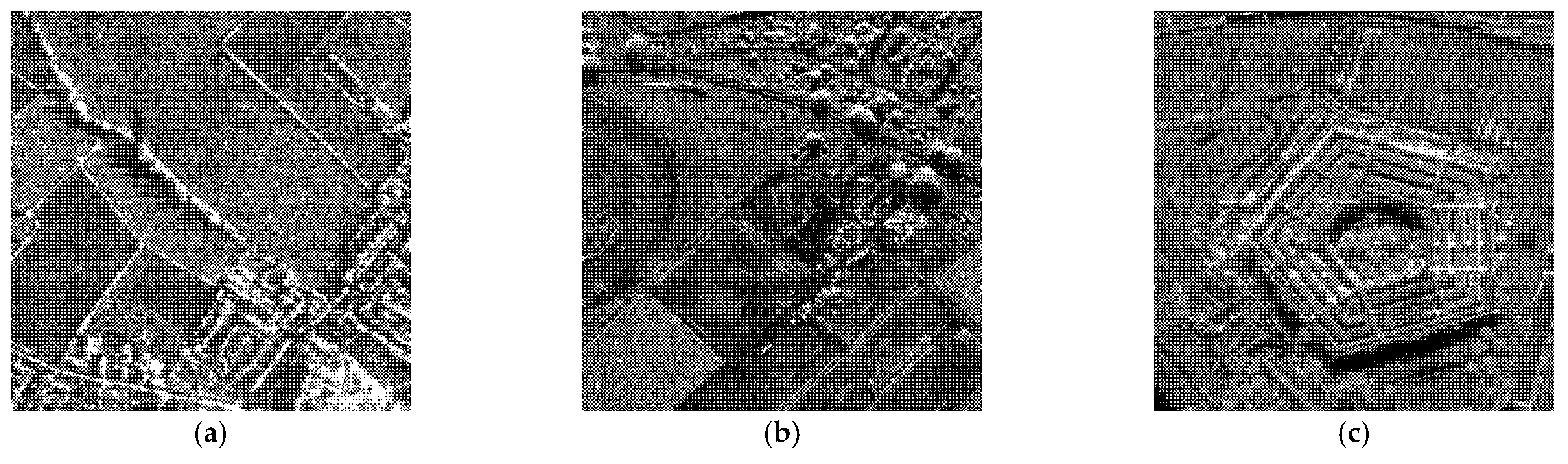

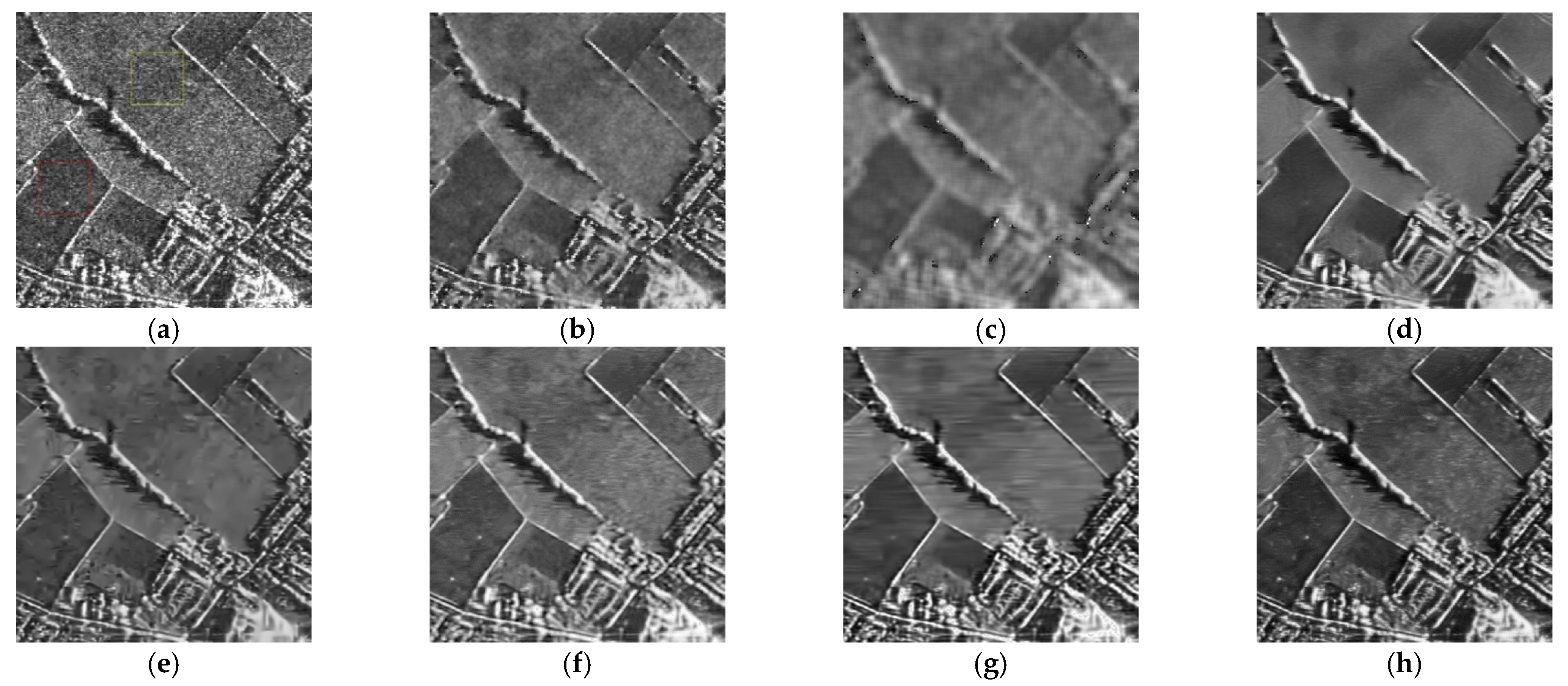

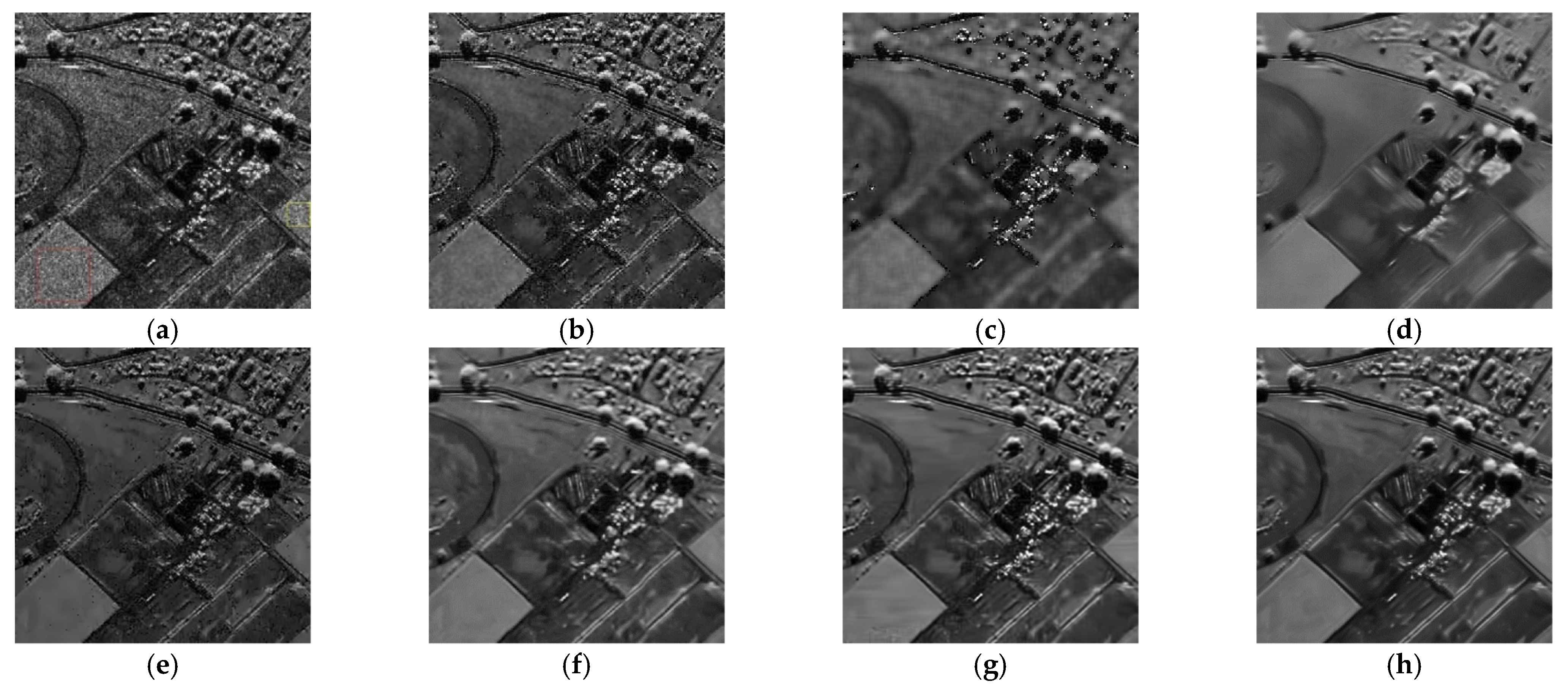

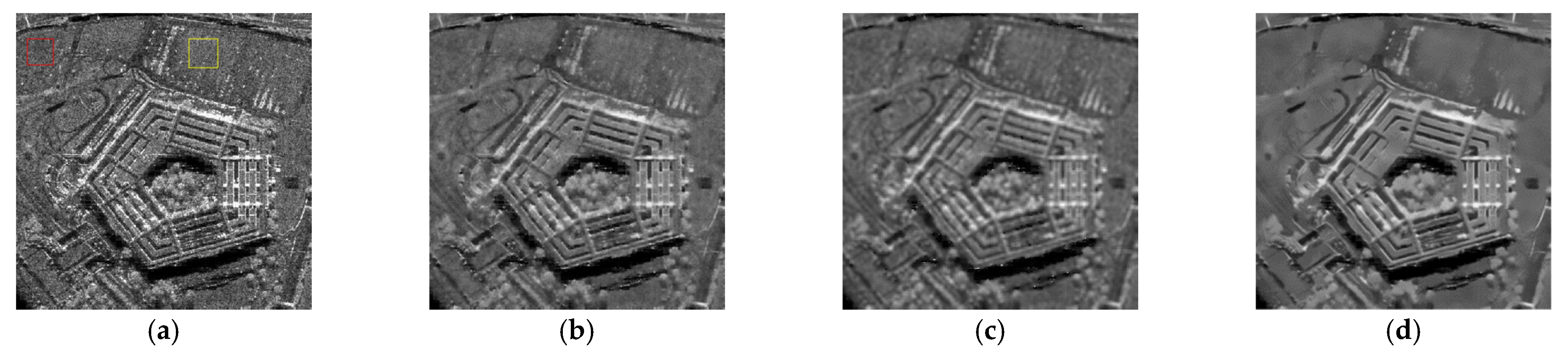

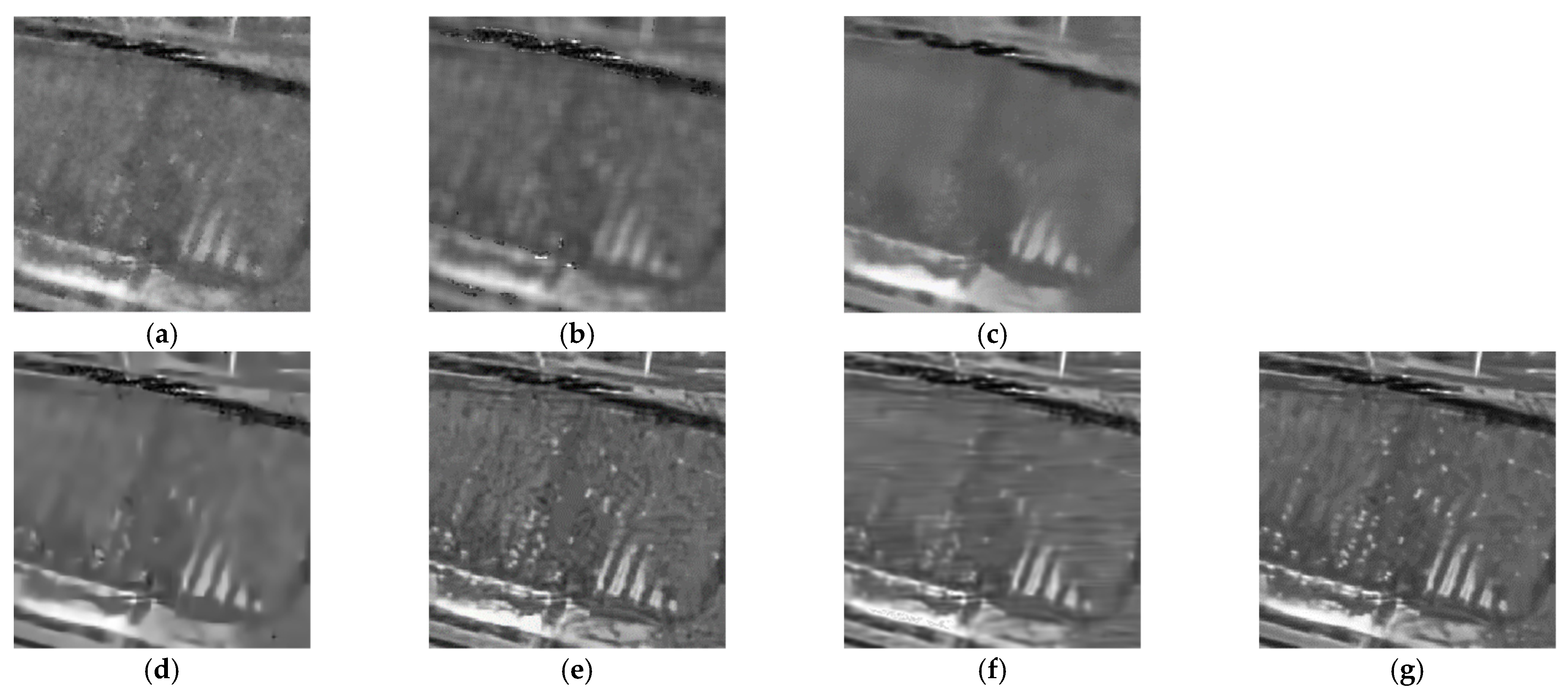

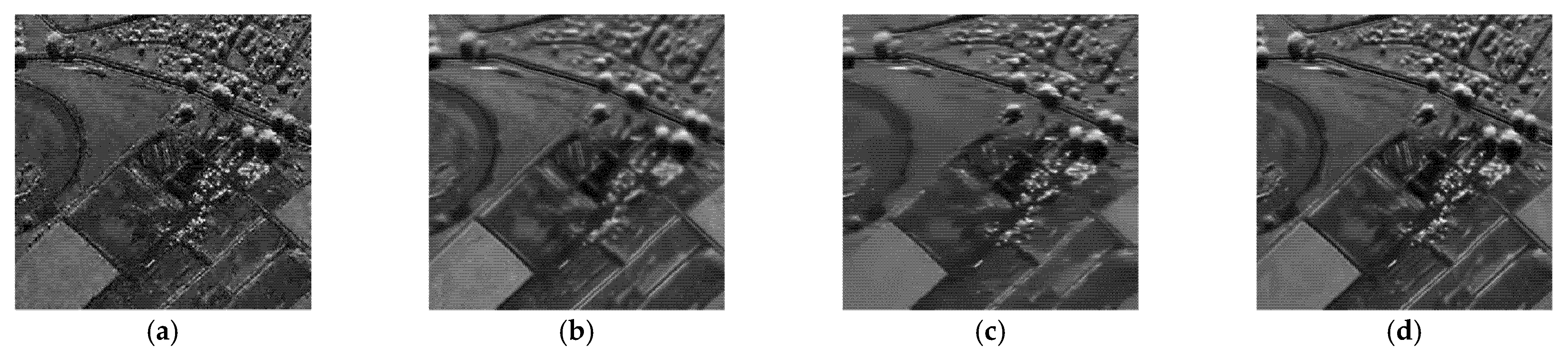

4.2. Despeckling Results of Real SAR Images

In this experiment, the despeckling performances of the proposed and existing algorithms were tested on real SAR images (see

Figure 11). The despeckled results of the real SAR images obtained by the compared methods are shown in

Figure 12,

Figure 13 and

Figure 14.

As shown in

Figure 12,

Figure 13 and

Figure 14, the proposed MWSC outperformed its competitors on the real SAR images. The Gamma-MAP algorithm eliminated less speckle noise than other methods and introduced serious aliasing artifacts. It also blurred edges of the details, as shown in

Figure 12c and

Figure 13c.The Log-KSVD algorithm slightly improved the despeckling effect but retained some obvious speckle noise (see

Figure 12b and

Figure 13b). Although PPB effectively suppressed the speckle noise from the SAR images, it blurred some edges and strong targets

Figure 12d and

Figure 14d). From

Figure 12e and

Figure 13e, we can find that POTDF still remains much noise in both homogeneous and heterogeneous areas. SAR-BM3D and FANS preserve all structures very well, with an accuracy comparable to that of MWSC. The MWSC, however, succeed in removing speckle in both homogeneous and heterogeneous areas, providing a sharper result and contributing to a better perceived quality. To more intuitively compare the detailed processing of the various algorithms,

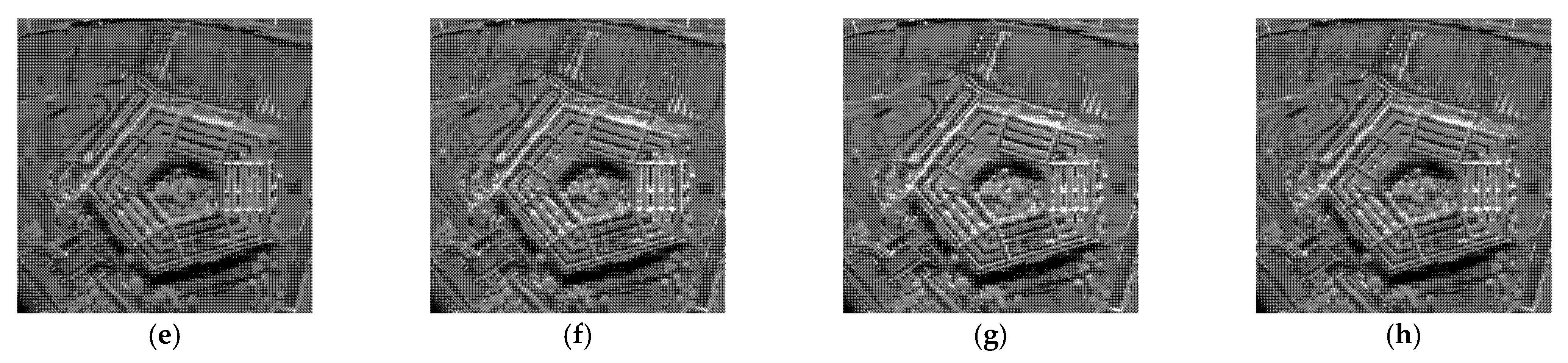

Figure 15 and

Figure 16 present local enlargements of the despeckled images obtained by the despeckling methods. Again, the proposed MWSC method achieved a higher visual quality than the other despeckling methods.

To quantitatively evaluate the despeckling results, the corresponding ENLs and EPIs of the three real images are provided in

Table 2, with the best values highlighted in bold. The ENL values were calculated in homogeneous regions of the real SAR images. Regions 1 and 2 in

Table 2 correspond to the red and yellow regions, respectively, in the SAR images (such as the

Figure 12a). PPB and the proposed MWSC achieved higher ENL than the other despeckling methods, affirming that the PPB and MWSC algorithms have stronger speckle-reduction ability in the homogeneous regions than the remaining algorithms. This conclusion is consistent with the above visual inspection. Meanwhile, SAR-BM3D, FANS, and MWSC obtained higher EPI than PPB, Gamma-MAP, Log-KSVD and POTDF, confirming the superior performance of SAR-BM3D, FANS and MWSC in image-detail retention. In short, the proposed MWSC provided the best compromise between speckle reduction and detail preservation.

4.3. Impacts of Weights and Dictionaries on Performance

To better understand the roles of the weights and and the learning dictionary in the MWSC model, we compared the performances of the proposed MWSC and several MWSC-based benchmark methods. We first replaced the weight with the unit diagonal matrix, forming the QWSC benchmark model. Replacing the learning dictionary with the wavelet dictionary, we then formed the DWSC benchmark model.

Figure 17 and

Figure 18 present the despeckled images obtained by the different despeckling methods. Log-KSVD retained a large amount of speckle noise in the “House” image (

Figure 17a). QWSC improved the visual effect of “House” because the weights

improve the sparse representation capability of the QWSC algorithm and further enhance the speckle-suppression performance (

Figure 17b). MWSC achieved a higher visual effect than QWSC because the weights

effectively capture and utilize the statistical properties of the noise (

Figure 18b,d). Although the result of DWSC exhibited no obvious speckle noise, a small amount of detailed information was lost (

Figure 18c). Obviously, the learning dictionary in the proposed model adapted to different structures of the image to improve the speckle-reduction performance of the model.

Table 3 and

Table 4 give the numerical evaluation results of the different algorithms on the “House” and real SAR2 images, respectively, with the best results marked in bold. As shown in

Table 3, MWSC achieved higher PSNR and SSIM values than the benchmark algorithms on “House,” confirming that weight

, weight

, and dictionary learning all improved the PSNR and SSIM values of the recovered images to different degrees. Meanwhile, the ENL and EPI values in

Table 4 clarify that the proposed BWCS algorithm well balanced the speckle noise suppression with detail preservation in the real SAR image, outperforming the Log-KSVD algorithm and the benchmark algorithms.

This comprehensive analysis demonstrates that introducing weight improves the noise-statistical properties of SAR images, while introducing weight captures the sparse a priori properties of SAR images. The learning dictionary is more adaptable to different image structures than the fixed dictionary, thereby enhancing the signal sparsity and achieving competitive SAR image-despeckling performance.