1. Introduction

Human collectives are examples of a specific subclass of complex adaptive system, the sub-system components of which—individual humans—are themselves highly autonomous complex adaptive systems. Consider that, subjectively, we perceive ourselves to be autonomous individuals at the same time that we actively participate in collectives. Families, organizations, sports teams, and polities exert agency over our individual behavior [

1,

2] and are even capable, under certain conditions, of intelligence that cannot be explained by aggregation of individual intelligence [

3,

4]. To date, however, formal models of collective intelligence have lacked a plausible mathematical description of the functional relationship between individual and collective behavior.

In this paper, we use the Active Inference Framework (AIF) to develop a clearer understanding of the relationship between patterns of individual interaction and collective intelligence in systems composed of highly autonomous subsystems, or “agents”. We adopt a definition of collective intelligence established within organizational psychology, as groups of individuals capable of acting collectively in ways that seem intelligent and that cannot be explained by individual intelligence [

5] (p.3). As we outline below, collective intelligence can be operationalized under AIF as a composite system’s ability to minimize free energy or perform approximate Bayesian inference at the collective level. To demonstrate the formal relationship between local-scale agent interaction and collective behavior, we develop a computational model that simulates the behavior of two autonomous agents in state space. In contrast to typical agent-based models, in which agents behave according to more rudimentary decision-making algorithms (e.g., from game theory; see [

6]), we model our agents as self-organizing systems whose actions are themselves dictated by the directive of free energy minimization relative to the “local” degrees of freedom accessible to them, including those that specify their embedding in the larger system [

7,

8,

9]. We demonstrate that AIF may be particularly useful for elucidating mechanisms and dynamics of systems composed of highly autonomous interacting agents, of which human collectives are a prominent instance. But the universality of our formal computational approach makes our model relevant to collective intelligence in any composite system.

1.1. Motivation: The “Missing Link” between Individual-Level and System-Level Accounts of Human Collective Intelligence

Existing formal accounts of collective intelligence are predicated on composite systems whose sub-system components are subject to vastly fewer degrees of freedom than individuals in human collectives. Unlike ants in a colony or neurons in a brain, which appear to rely on comparatively rudimentary autoregulatory mechanisms to sustain participation in collective ensembles [

10,

11], human agents participate in collectives by leveraging an array of phylogenetic (evolutionarily) and ontogenetic (developmental) mechanisms and socio-culturally constructed regularities or affordances (e.g., language) [

12,

13,

14]. Human agents’ cognitive abilities and sociocultural niches create avenues for active participation in functional collective behavior (e.g., the pursuit of shared goals), as well as avenues to shirk global constraints in the pursuit of local (individual) goals. Mathematical models for collective intelligence of this subclass of system must not only seek to account for richer complexity of agent behavior at each scale of the system (particularly at the individual level), but also the relationship between local scale interaction between individual agents and global scale behavior of the collective.

Existing research of human collective intelligence is limited precisely by a lack of alignment between these two scales of analysis. On the one hand, accounts of local-scale interactions from behavioral science and psychology tend to construe individual humans as goal-directed individuals endowed with discrete cognitive mechanisms (specifically social perceptiveness or Theory of Mind and shared intentionality; see [

15,

16]) that allow individuals to establish and maintain adaptive connections with other individuals in service of shared goals [

3,

4,

5,

17,

18,

19] (Riedl and colleagues [

19] report a recent analysis of 1356 groups that found social perceptiveness and group interaction processes to be strong predictors of collective intelligence measured by a psychometric test.). Researchers conjecture that these mechanisms allow collectives to derive and utilize more performance-relevant information from the environment than could be derived by an aggregation of the same individuals acting without such connections (for example, by facilitating an adaptive, system-wide balance between cognitive efficiency and diversity; see [

4]). Empirical substantiation of such claims has proven difficult, however. Most investigations rely heavily on laboratory-derived summaries or “snapshots” of individual and collective behavior that flatten the complexity of local scale interactions [

20] and make it difficult to examine causal relationships between individual scale mechanisms and collective behavior as they typically unfold in real world settings [

21,

22].

Accounts of global-scale (collective) behavior, by contrast, tend to adopt system-based (rather than agent-based) perspectives that render collectives as random dynamical systems in phase space, or equivalent formulations [

23,

24,

25,

26]. Only rarely deployed to assess the construct of human collective intelligence specifically (e.g., [

27]), these approaches have been fruitful for identifying gross properties of phase-space dynamics (such as synchrony, metastability, or symmetry breaking) that correlate with collective intelligence or collective performance, more generally construed [

28,

29,

30,

31,

32]. However, on their own, such analyses are limited in their ability to generate testable predictions for multiscale behavior, such as how global-scale dynamics (rendered in phase-space) translate to specific local-scale interactions between individuals (in state-space), or how local-scale interactions between individuals translate to evolution and change in collective global-scale dynamics [

26].

In sum, the substantive differences between these two analytical perspectives (individual and collective) on collective intelligence in human systems make it difficult to develop a formal description of how local-scale interactions between autonomous individual agents relate to global-scale collective behavior and vice versa. Most urgent for the development of a formal model of collective intelligence in this subclass of system, therefore, is a common mathematical framework capable of operating between individual-level cognitive mechanisms and system-level dynamics of the collective [

4].

1.2. The Free Energy Principle and an Active Inference Formulation of Collective Intelligence

FEP has recently emerged as a candidate for this type of common mathematical framework for multiscale behavioral processes [

33,

34,

35]. FEP is a mathematical formulation of how adaptive systems resist a natural tendency to disorder [

33,

36]. FEP states that any non-equilibrium steady state system self organizes as such by minimizing variational free energy in its exchanges with the environment [

37]. The key trick of FEP is that the principle of free energy minimization can be neatly translated into an agent-based process theory, AIF, of approximate Bayesian inference [

38] and applied to any self-organizing biological system at any scale [

39]. The upshot is that, in theory, any AIF agent at one spatio-temporal scale could be simultaneously composed of nested AIF agents at the scale below, and a constituent of a larger AIF agent at the scale above it [

40,

41,

42]. In effect, AIF allows you to pick a composite system or agent A that you want to understand, and it will be generally true both that: A is an approximate, global minimizer of free energy at the scale at which that agent reliably persists; and A is composed of subsystems {A_i} that are approximate, local minimizers of free energy (which is composed of the remainder of A). Thus, under AIF, collective intelligence can conceivably be modelled as a case of individual AIF agents that interact within—or indeed, interact to produce—a superordinate AIF agent at the scale of the collective [

9,

43]. In this way, AIF provides a framework within which a multiscale model of collective intelligence could be developed. The aim of this paper is to propose a provisional AIF model of collective intelligence that can depict the relationship between local-scale interactions and collective behavior.

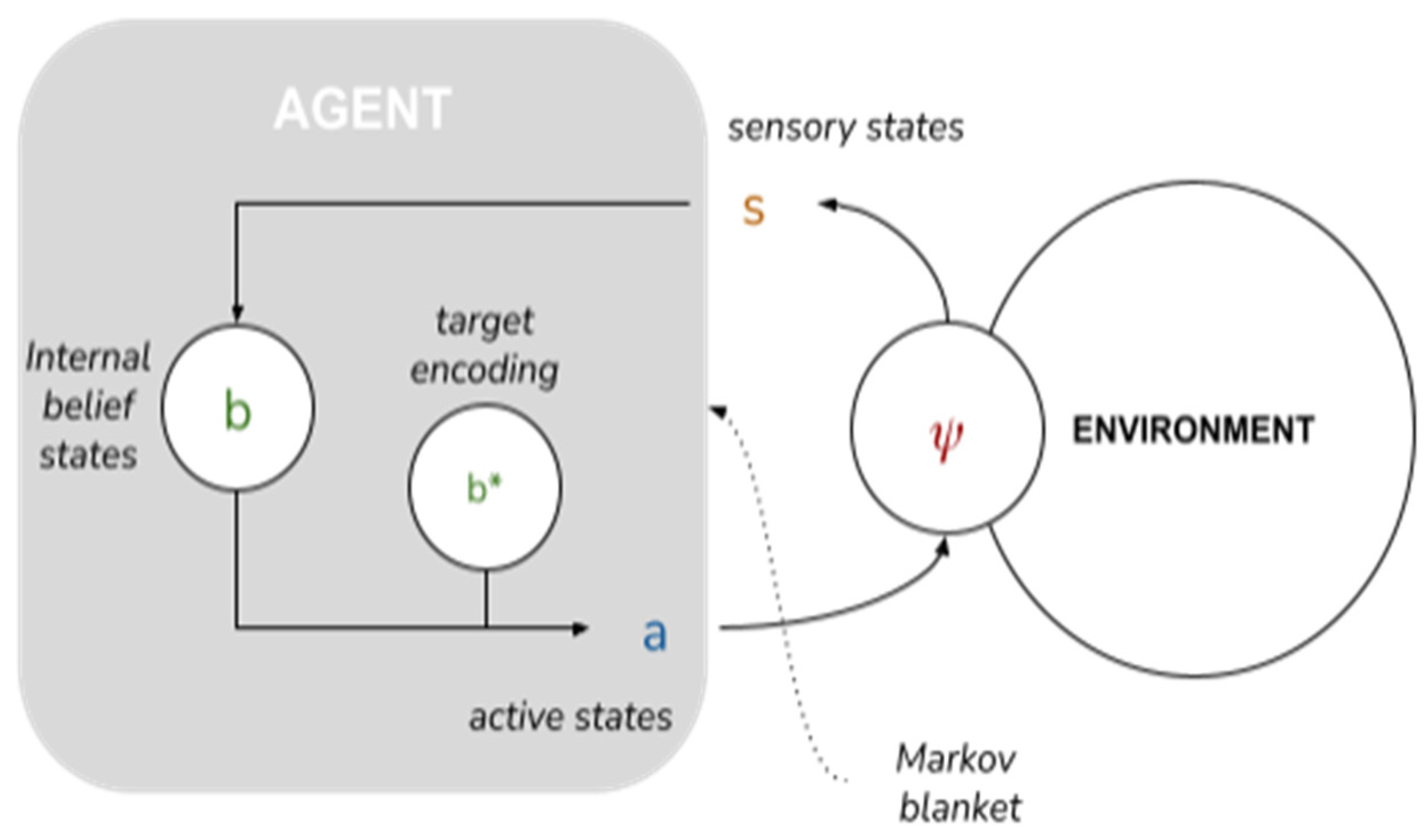

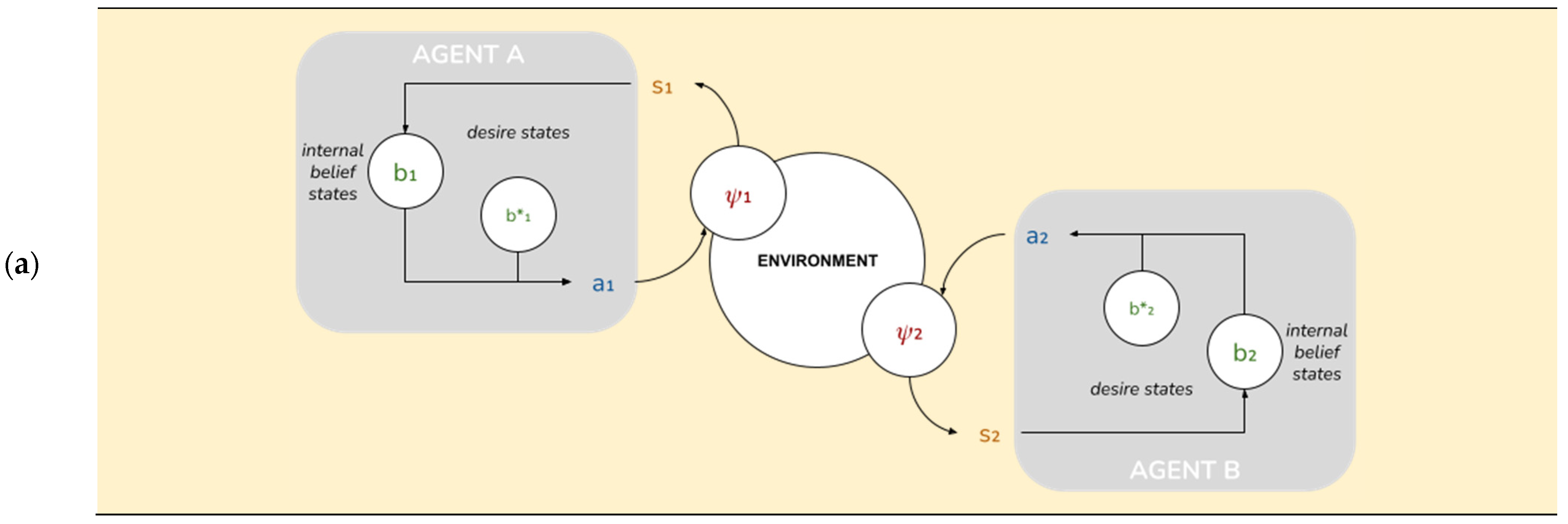

An AIF model of collective intelligence begins with the depiction of a minimal AIF agent. Specifically, an AIF agent denotes any set of states enclosed by a “Markov blanket”—a statistical partition between a system’s internal states and external states [

44]—that infers beliefs about the causes of (hidden) external states by developing a probabilistic

generative model of external states [

37]. A Markov blanket is composed of sensory states and active states that mediate the relationship between a system’s internal states and external states: external states (

) act on sensory states (

), which influence, but are not influenced by internal states (

). Internal states couple back through active states (

), which influence but are not influenced by external states. Through conjugated repertoires of perception and action, the agent embodies and refines (learns) a generative model of its environment [

45] and the environment embodies and refines its model of the agent (akin to a circular process of environmental niche construction; see [

12]).

Having established the notion of an AIF agent, the next step in developing an AIF model of collective intelligence is to consider the existence of multiple nested AIF agents across individual and collective scales of organization. Existing multiscale treatments of AIF provide a clear account of “downward reaching” causation, whereby superordinate AIF agents like brains or multicellular organisms systematically determine [

46] the behavior of subordinate AIF agents (neurons or cells), limiting their behavioral degrees of freedom [

9,

40,

47,

48]. Consistent with this account of downward-reaching causation, existing toy models that simulate the emergence of collective behavior under AIF do so by simply using the statistical constraints from one scale to drive behavior at another, e.g., by explicitly endowing AIF agents with a genetic prior for functional specialization within a superordinate system [

9] or by constructing a scenario in which the emergence of a superordinate agent at the global scale is predestined by limiting an agent’s model of the environment to sensory evidence generated by a counterpart agent [

7,

8].

While perhaps useful for depicting the behavior of cells within multicellular organisms [

9] or exact behavioral synchronization between two or more agents [

7,

8], these existing AIF models are less well-suited to explain collective intelligence in human systems, for two reasons. First, humans are relatively autonomous individual agents whose statistical boundaries for self-evidencing appear to be transient, distributed, and multiple [

49,

50,

51,

52]. Therefore, human collective intelligence cannot be explained simply by the way in which global-level system regularities constrain individual interaction from the “top-down”. Second, the behavior of the collective in these toy models reflects the instructions or constraints supplied exogenously by the “designer” of the system, not a causal consequence of individual agents’ autonomous problem-solving enabled by AIF. In this sense, extant models of AIF for collectives bear a closer resemblance to Searle’s [

53] “Chinese Room Argument” than to what we would recognize as emergent collective intelligence.

In sum, currently missing from AIF models of composite systems are specifications for how a system’s emergent global-level cognitive capabilities causally relate to individual agents’ emergent cognitive capabilities, and how local-scale interactions between individual AIF agents give rise,

endogenously, to a superordinate AIF agent that exhibits (collective) intelligence [

43]. Specifically, existing approaches lack a description of the key cognitive mechanisms of AIF agents that might provide a functional “missing link” for collective intelligence. In this paper, we initiate this line of inquiry by exploring whether some basic information-theoretic capabilities of individual AIF agents, motivated by analogies with human social capabilities, create opportunities for collective intelligence at the global scale.

1.3. Our Approach

To operationalize AIF in a way that is useful for investigating this question, we begin by examining what minimal features of autonomous individual AIF agents are required to achieve collective intelligence, operationalized as active inference at the level of the global-scale system. We conjecture that very generic information theoretic patterns of an environment in which individual AIF agents exploit other AIF agents as affordances of free energy minimization should support the emergence of collective intelligence. Importantly, we expect that these patterns emerge under very general assumptions and from the dynamics of AIF itself—without the need for exogenously imposed fitness or incentive structures on local-scale behavior, contra extant computational models of collective intelligence (that rely on cost or utility functions; e.g., [

54,

55]) or other common approaches to reinforcement learning (that rely on exogenous parameters of the Bellman equation; see [

56,

57]).

To justify our modelling approach, we draw upon recent research that systematically maps the complex adaptive learning process of AIF agents to empirical social scientific evidence for cognitive mechanisms that support adaptive human social behavior. In line with this research, we posit a series of stepwise progressions or “hops” in the individual cognitive ability of any AIF agent in an environment populated by other self-similar AIF agents. These hops represent evolutionarily plausible “adaptive priors” [

42] (p.109) that would likely guide action-perception cycles of AIF agents in a collective toward unsurprising states:

Baseline AIF—AIF agents, to persist as such, will minimize immediate free energy by accurately sensing and acting on salient affordances of the environment. This will require a general ability for “perceptiveness” of the (physical) environment.

Folk Psychology—AIF agents in an environment populated by other AIF agents would fare better by minimizing free energy not only relative to their physical environment, but also to the “social environment” composed of their peers [

13]. The most parsimonious way for AIF agents to derive information from other agents would be to (i) assume that other agents are self-similar, or are “creatures like me” [

58], and (ii) differentiate other-generated information by calculating how it diverges from self-generated information (akin to a process of “alterity” or self-other distinction). This ability aligns with the notion of a “folk psychological theory of society”, in which humans deploy a combination of phylogenetic and ontogenetic modules to process social information [

59,

60].

Theory of Mind—AIF agents that develop “social perceptiveness” or an ability to accurately infer beliefs and intentions of other agents will likely outperform agents with less social perceptiveness. Social perceptiveness, also commonly known in cognitive psychology as “Theory of Mind”, would minimally require cognitive architecture for encoding the internal belief states of other agents as a source of self-inference (for game-theoretical simulations of this proposal, see [

61,

62]). As discussed above, experimental evidence suggests that social perceptiveness or Theory of Mind (measured using the “Reading the Mind in the Eyes” test; see [

63]) is a significant predictor of human collective intelligence in a range of in-person and on-line collaborative tasks [

4].

Goal Alignment—It is possible to imagine scenarios in which the effectiveness of Theory of Mind would be limited, such as situations of high informational uncertainty (in which other agents hold multiple or unclear goals), or in environments populated by more agents than would be computationally tractable for a single AIF agent to actively theorize [

64]. AIF agents capable of transitioning from merely encoding internal belief states of other AIF agents to recognizing shared goals and actively aligning goals with other AIF agents would likely enjoy considerable coordination benefits and (computational) efficiencies [

16,

65] that would also likely translate to collective-level performance [

55,

66].

Shared Norms—Acquisition of capacities to engage directly with the reified signal of sharedness (a.k.a., “norms”) between agents as a stand-in for (or in addition to) bottom-up discovery of mutually viable shared goals would also likely confer efficiencies to individuals and collectives [

12]. Humans appear unique in their ability to leverage densely packaged socio-cultural installed affordances to cue regimes of perception and action that establish and stabilize adaptive collective behavior without the need for energetically expensive parsing of bottom-up sensory signals (a process recently described as “Thinking through Other Minds”; see [

14]).

The clear resonance between generic information-theoretic patterns of basic AIF agents and empirical evidence of human social behavior is remarkable, and gives credence to the extension of seemingly human-specific notions such as “alterity”, “shared goals”, “alignment”, “intention”, and “meaning” to a wider spectrum of bio-cognitive agents [

67]. In effect, the universality of FEP—a principle that can be applied to any biological system at any scale—makes it possible to strip-down the complex and emergent behavioral phenomenon of collective intelligence to basic operating mechanisms, and to clearly inspect how local-scale capabilities of individual AIF agents might enable global-scale state optimization of a composite system.

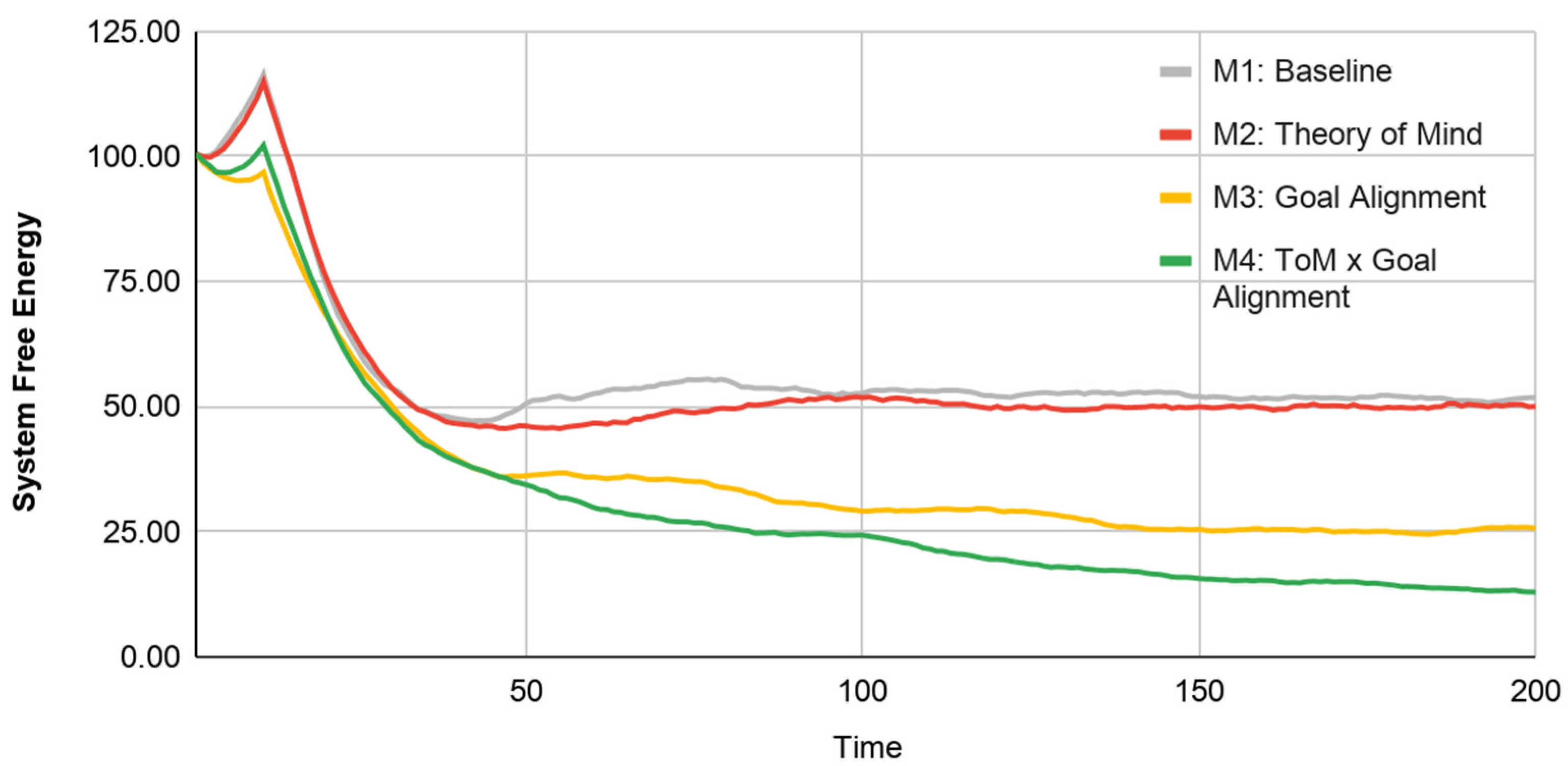

In the following section we use AIF to model the relationship between a selection of these hops in cognitive ability and collective intelligence. We construct a simple 1D search task based on [

68], in which two AIF agents interact as they pursue individual and shared goals. We endow AIF agents with two key cognitive abilities—Theory of Mind and Goal Alignment—and vary these abilities systematically in four simulations that follow a 2 × 2 (Theory of Mind × Goal Alignment) progression: Model 1 (Baseline AIF, no social interaction), Model 2 (Theory of Mind without Goal Alignment), Model 3 (Goal Alignment without Theory of Mind), and Model 4 (Theory of Mind with Goal Alignment). We use a measure of free energy to operationalize performance at the local (individual) and global (collective) scales of the system [

69]. While our goals in this paper are exploratory (these models and simulations are designed to be generative, not to test hypotheses), we do generally expect that increases in sophistication of cognitive abilities at the level of individual agents will correspond with an increase in local- and global-scale performance. Indeed, illustrative results of model simulations (

Section 3) show that each hop in cognitive ability improves global system performance, particularly in cases of alignment between local and global optima.

2. Materials and Methods

2.1. Paradigm and Set-Up

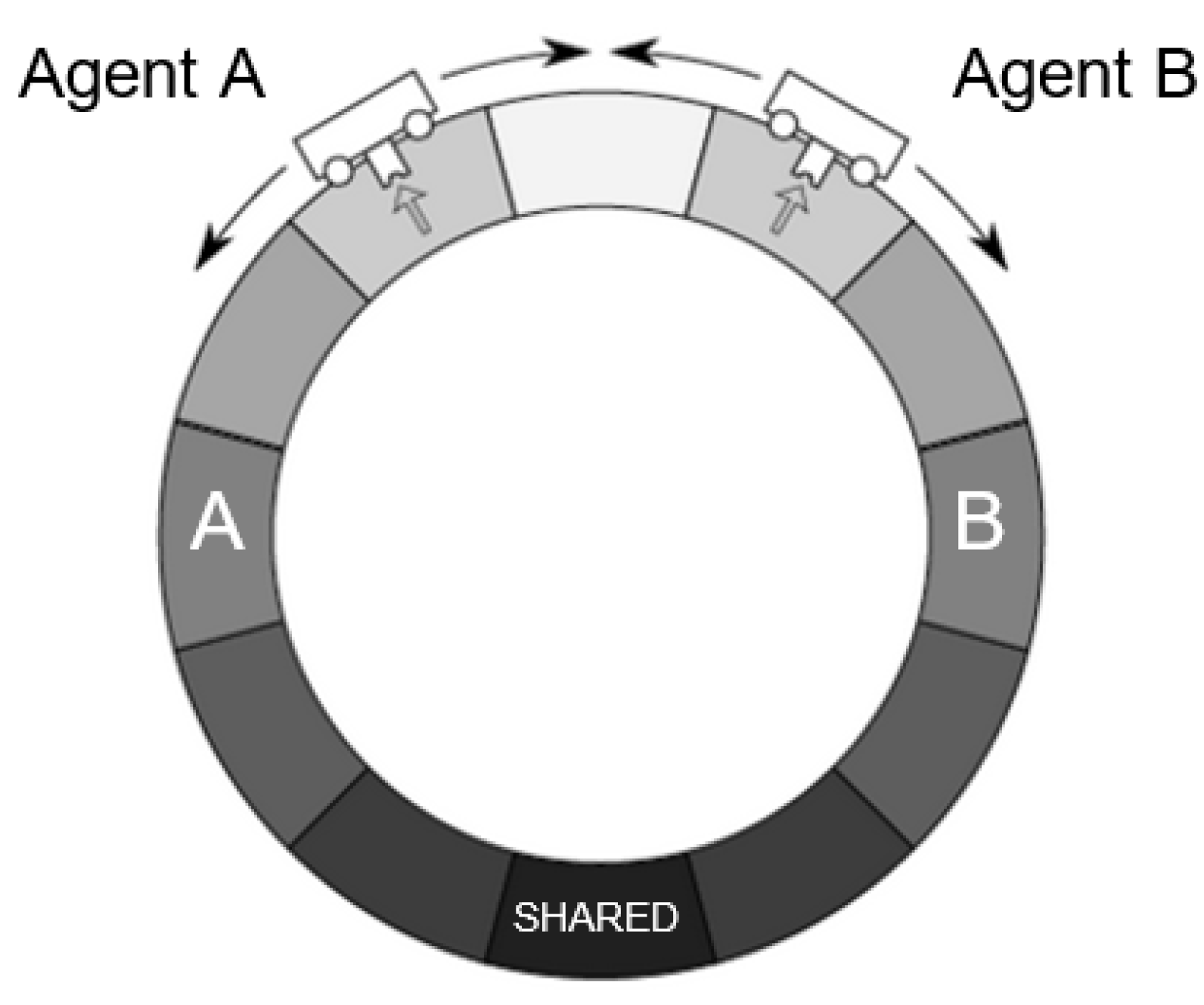

Our AIF model builds upon the work of McGregor and colleagues, who develop a minimal AIF agent that behaves in a discrete one-dimensional time world [

68]. In this set-up, a single agent senses a chemical concentration in the environment and acts on the environment by moving one of two ways until it arrives at its desired state, the position in which it believes the chemical concentration to be highest, denoting a food source. We adapt this paradigm by modelling two AIF agents (Agent A and Agent B) that occupy the same world and interact according to parameters described below (see

Figure 1). The McGregor et al. paradigm and AIF model is attractive for its computational implementability and tractability as a simple AIF agent with minimum viable complexity. It is also accessible and reproducible; whereas most existing agent-based implementations of AIF are implemented in MATLAB, using the SPM codebase (e.g., [

57]), an implementation of the McGregor et al. AIF model is widely available in the open-source programming language Python, using only standard open source numerical computing libraries [

70]. For a comprehensive mathematical guide to FEP and a simple agent-based model implementing perception and action under AIF, see [

36].

We extend the work of McGregor and colleagues to allow for interactions not only between an agent and the “physical” environment, but also between an agent and its “social” environment (i.e., its partner). Accordingly, we make minor simplifications to the McGregor et al. model that are intended to reduce the number of independent parameters and make interpretation of phenomena more straightforward (alterations to the McGregor et al. model are noted throughout).

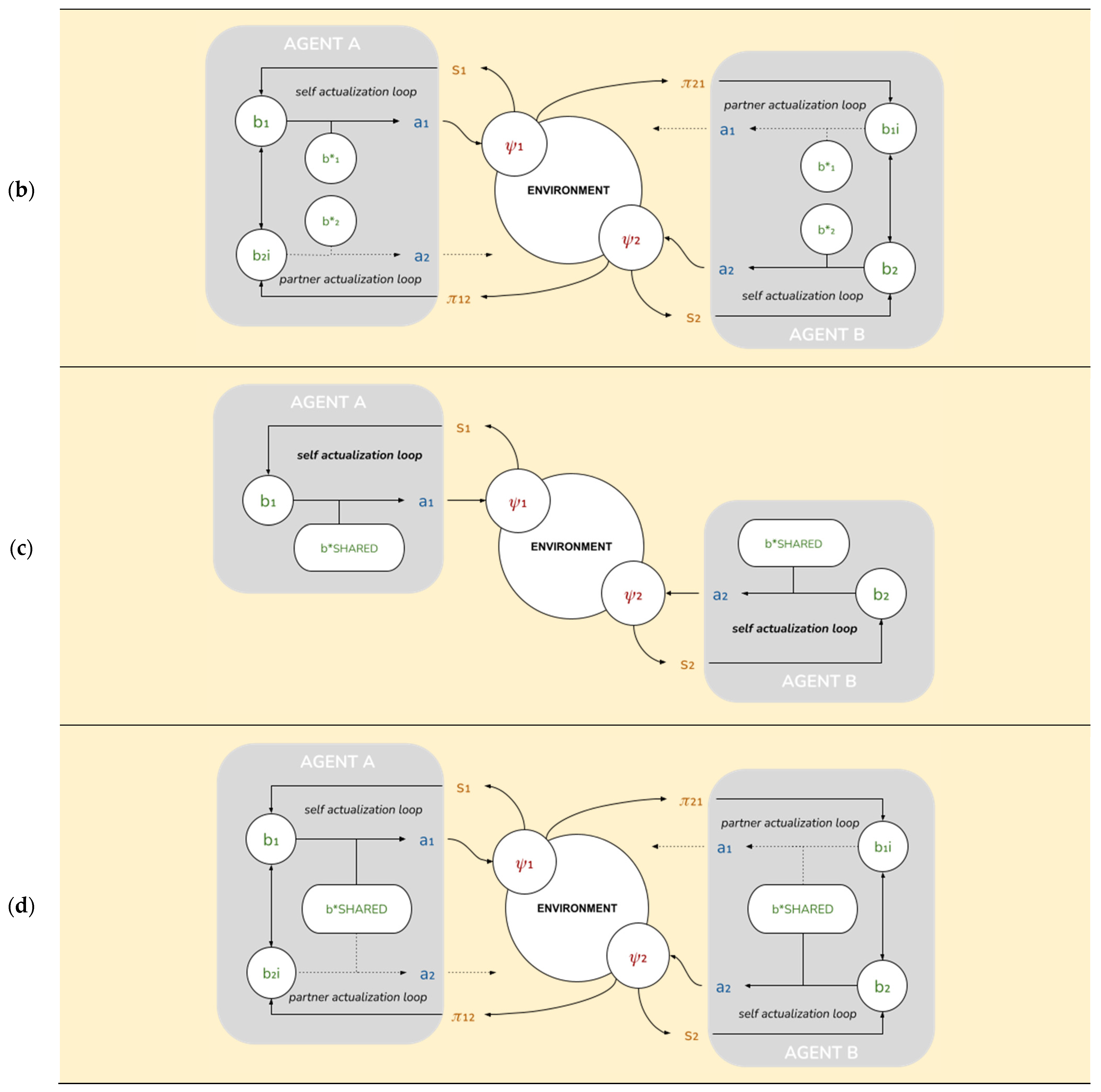

2.2. Conceptual Outline of AIF Model

Our model consists of two agents. Descriptively, one can think of these as simple automata, each inhabiting a discrete “cell” in a one-dimensional circular environment where there are predefined targets (food sources). As agents aren’t endowed with a frame of reference, an agent’s main cognitive challenge is to situate itself in the environment (i.e., to infer its own position). Both agents have the following capabilities:

Physical capabilities:

“Chemical sensors” able to pick up a 1-bit chemical signal from the food source at each time step;

“Actuators” that allow agents to “move” one cell at each time step;

“Position and motion sensors” that allow agents to detect each other’s position and motion.

Cognitive capabilities:

Beliefs about their own current position; we construe this as a “self-actualization loop” or Sense->Understand->Act cycle: (1) sense environment; (2) optimize belief distribution relative to sensory inputs (by minimizing free energy given by an adequate generative model); and (3) act to reduce FE relative to desired beliefs, under the same generative model.

Desires (also described as “desired beliefs”) about their own position relative to their prescribed target positions;

Ability to select the actions that will best “satisfy” their desires;

“Theory of Mind”: they possess beliefs about their partner’s position, knowledge of their partner’s desires, and therefore, the ability to imagine the actions that their partners are expected to take. We implement this as a “partner-actualization loop” that is formally identical to the self-actualization loop above;

“Goal Alignment”: the ability to alter their own desires to make them more compatible with their partner’s.

2.3. Model Preliminaries

Throughout, we use the following shorthand:

for any superscript index, where . This converts a belief represented as a vector in ℝN to the equivalent probability distribution over [0..N-1]; the offset is for numerical stability. We choose to convert back by , to enforce .

Beliefs are also implicitly constrained to , for numerical stability. This means .

when necessary, to disambiguate between it and .

to denote shifting a vector.

to denote “re-ranging” a probability distribution, squishing its range from [0,1] to [].

All arithmetic in the space of positions ( or ) and actions () is considered to be mod N.

2.4. State Space

These capabilities are implemented as follows. Each agent A

i is represented by a tuple A

i = (ψ

i, s

i, b

i, a

i). In what follows we’ll omit the indices except where there is a relevant difference between agents. These tuples form the relevant state space (see

Figure 2):

ψ ∈ [0..N-1] is the agent’s external state, its position in a circular environment with period N. Crucially, the agent doesn’t have direct access to its external state, but only to limited information about the environment afforded through the sensory state below.

s = (sown ∈ {0, 1}, Δ ∈ [0..N-1], app ∈ {−1, 0, 1}) is the agent’s sensory state. sown is a one-bit sensory input from the environment; Δ is the perceived difference between the agent’s own position and its partner’s; app is the partner’s last action.

b = (bown ∈ BN, b*own ∈ BN, bpartner ∈ BN, b*partner ∈ BN) is the agent’s internal or “belief” state. bown and b*own are, respectively, its actual and desired beliefs about its own position; equivalently, bpartner and b*partner are its actual and desired beliefs about its partner’s position.

a = (aown ∈ {−1, 0, 1}, apartner ∈ {−1, 0, 1}) is the partner’s action state: aown is its own action; apartner is the action it expects from the partner.

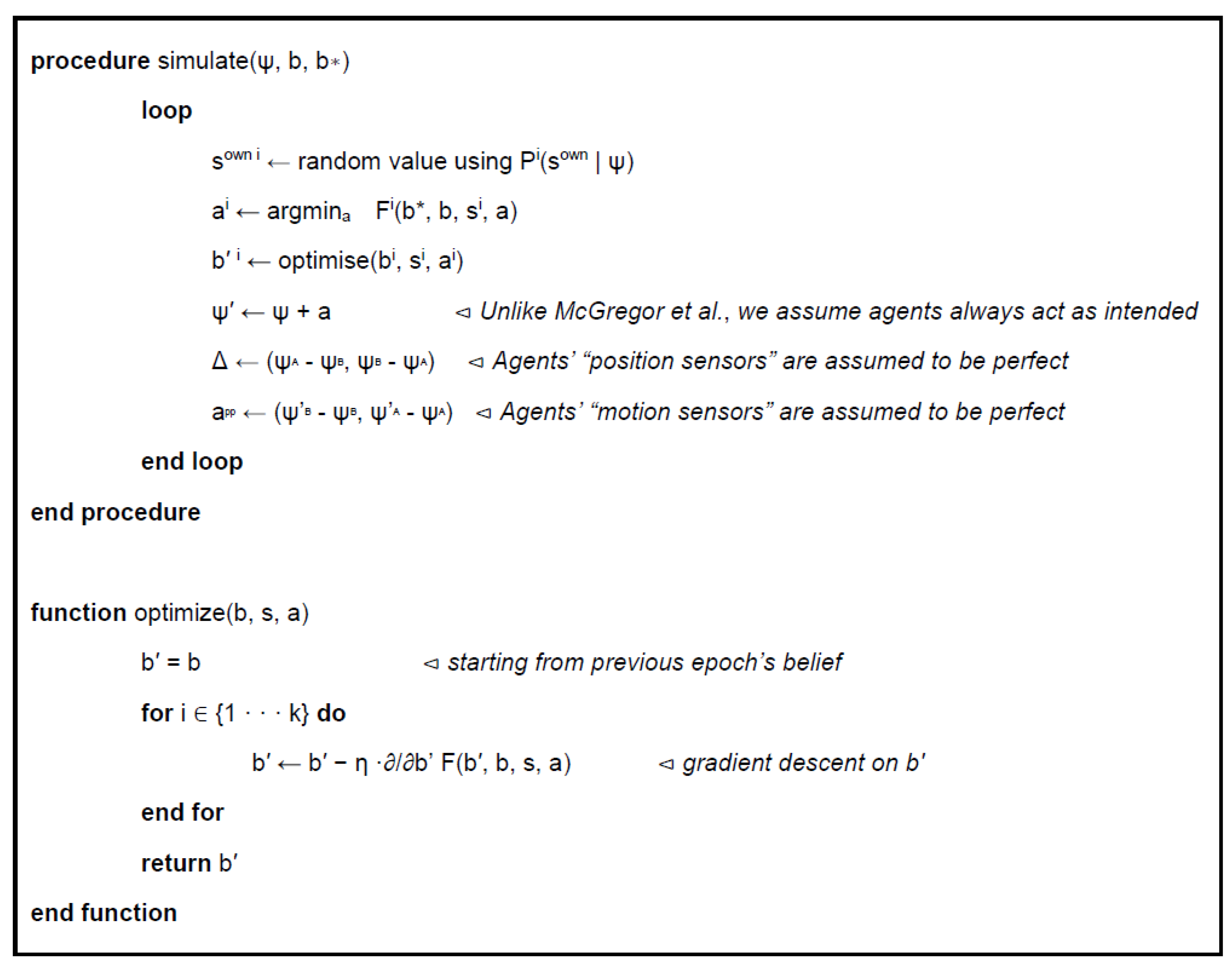

2.5. Agent Evolution

These states evolve according to a discrete-time free energy minimization procedure, extended from McGregor et al. (

Figure 3). At each time step, each agent selects the action that will minimize the free energy relative to its target encoding (achieved by explicit computation of F for each of the 3 possible actions), and then updates its beliefs to best match the current sensory state (achieved by gradient descent on

b’).

2.6. Sensory Model

Let us recapitulate McGregor et al’s definition of the free energy for a single-agent model:

where

q(b) = softmax(

b) is the “variational (probability) density” encoded by

b, and

p(ψ′,

s|b,

a) is the “generative (probability) density” representing the agent’s mental model of the world [

37].

is the Kullback–Leibler (KL) divergence or relative entropy between the variational and generative densities [

71].

To respect the causal relationships prescribed by the Markov blanket (see

Figure 2), the generative density may be decomposed as:

where the three terms within the summation are arbitrary functions of their variables. In the single-agent model, where the only source of information is the environment, we follow McGregor’s model, in a slightly simplified form:

: the agent’s actions are always assumed to have the intended effect, being the discrete Kronecker delta.

: the agent assumes the probability of s = 1 (sensoria triggered) is higher for regions near the “center” of the environment. This is identical to the real “physical” probability of chemical signals, meaning the agent’s generative distribution is correct.

, in agreement with the definition of b as encoding the belief distribution over ψ.

From list item 1 directly above, this generative density can also be read as a simple Bayesian updating plus a change of indexes to reflect the effects of the action: or even more simply, .

In our model, both agents implement their own copies of the generative density above (we leave it to the reader to add “▯own” indices where appropriate). The parameter k, denoting the maximum sensory probability, is assumed agent-specific; we naturally identify it with an agent’s “perceptiveness”. ω and , on the other hand, are environmental parameters.

2.7. Partner Model

In addition to the sensory model, we will define a new generative density implementing the agent’s inference of its partner’s behavior, or “Theory of Mind” (ToM; see Figure 6b). An agent with a sensory and partner model will adopt the following form:

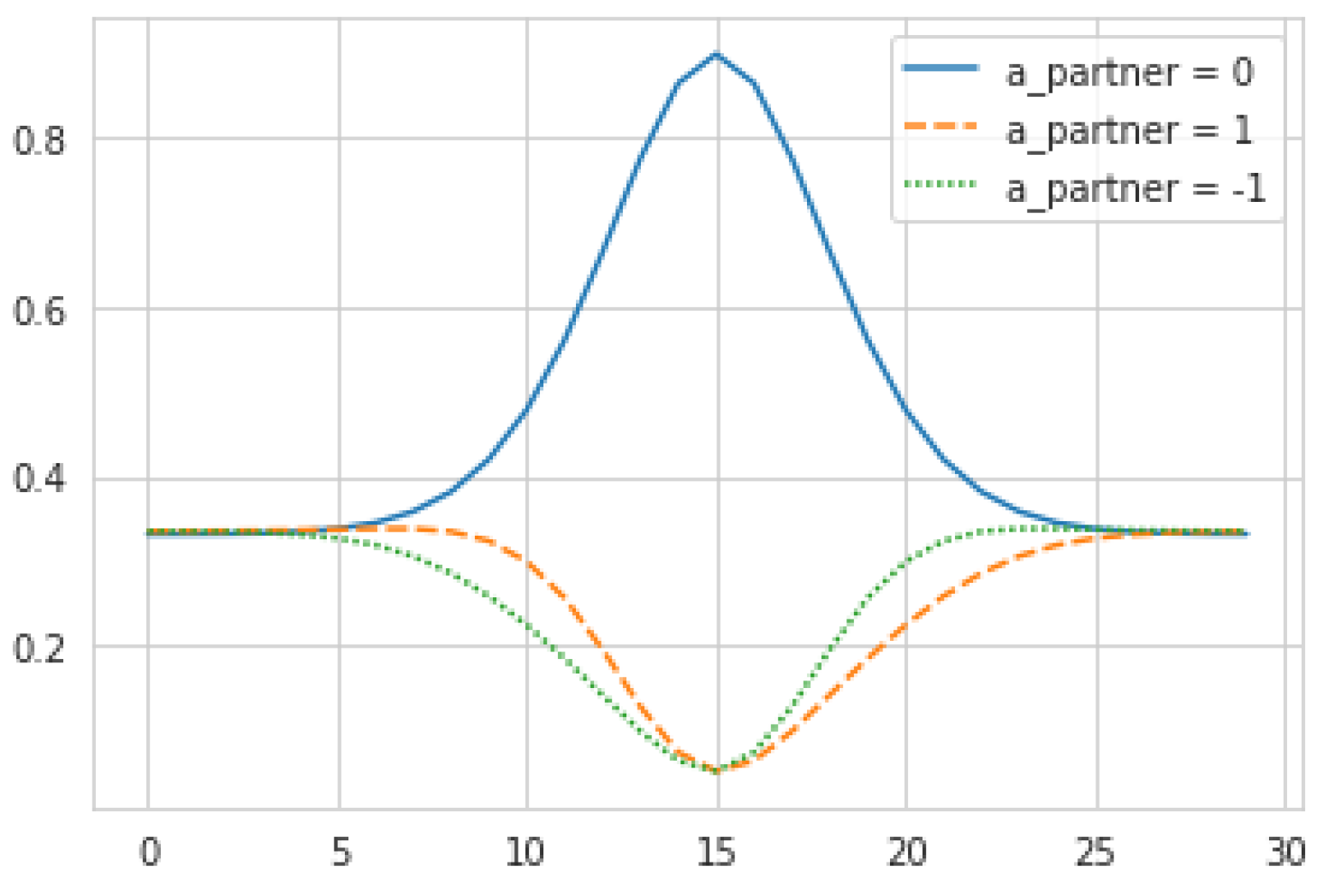

The first three terms on the right-hand side correspond to mechanistic models of the evolution of the variables ’, Δ, app, whereas the last one, , defines the “prior” and is analogous to in the sensory model. To fully specify this density, we define these models as follows:

describes the expected results of the partner’s observed action upon its inferred position. The Kronecker delta implies that the partner’s actions are always effective, matching item #1 from

Section 2.6.

: the agent (correctly) believes that is a deterministic function of the two positions, and therefore the probability of observing a given , given the partner’s position , is equal to the probability the agent ascribes to itself being in the corresponding position .

the agent determines its belief in the partner’s previous action by “backtracking” to its previous state

, and leveraging the following model of the partner’s next action:

This equation seems complex but its output and mechanical interpretation are quite simple (see

Figure 4). To justify it, note that the agent must produce probabilities of the partner’s actions without knowing their

actual internal states at that time, but only their targets

. To do so, the agent assumes that the partner will act mechanistically according to those desires, i.e., the higher a partner’s desire for its current location, the more likely it is to stay put. To eliminate spurious dependence on absolute values of

, we set

to be proportional to

. The constant of proportionality

corresponds to the maximum probability of the partner standing still, when

achieves its global maxima. This leaves the remaining probability mass to be allocated across the other actions

, which we do by assuming the probability of moving in a given direction is proportional to the desires in the adjacent locations. For the purpose of this study,

is held constant at 0.9.

The combination of these three models results in a generative density has the same form as the original generative density from the baseline sensory model,

. This is consistent with our modeling decision to make the “other-evidencing loop” functionally identical to the “self-actualization loop”, as discussed above (

Section 2.2).

As before, each agent implements its own copy of the partner model. is assumed equal for both agents; they have the same capability to interpret the partner’s actions.

2.8. Agent-Level Free Energy

We are finally ready to define the free energy for our individual-level model. For each agent:

Where:

is the sensory model (outlined above in

Section 2.6).

is the partner model (outlined above in

Section 2.7).

The “reranging” function,

, serves to moderate the influence of the partner model on the agent’s own beliefs, and vice-versa.

is an agent-specific parameter, which, as we will see in

Section 2.9, is identified with each agent’s degree of “alterity”.

The right-hand side of each KL divergence (i.e., the products of generative densities) is implicitly constrained to , to ensure the resulting beliefs remain within their range . This is interpreted as preventing overconfidence and is implemented as a simple maximum.

We interpret Equation (4) as follows: The agent’s sensory and partner models jointly constrain its beliefs both about its own position and its partner’s position. Thus, at each step, the agent: (a) refines its beliefs about both positions, in order to best fit the evidence provided by all of its inputs (i.e., its “chemical” sensor for the physical environment and “position and motion” sensor for its partner); and (b) selects the “best” next pair of actions (for self and partner), i.e., that which minimizes the “difference” (the KL divergence) between its present beliefs and the desired beliefs (For reasons of numerical stability, we follow McGregor et al. in implementing (b) before (a): The agent chooses the next actions based on current beliefs, then updates beliefs for the next time-step, based on the expected effects of those actions [

68] (pp. 6–7)).

2.9. Theory of Mind

In this section we motivate the parameterization of an agent’s Theory of Mind ability with or simply, its degree of alterity.

Note that when considered as a discrete-time dynamical system evolution, the process of refining beliefs about own and partner positions in the environment (step (a) in

Section 2.8 above) potentially involves multiple recursive dependencies: the updated variational densities

and

both depend on the previous

(via both

and

), as well as on the previous

(via

). This is by design: the dependencies ensure that

and

are consistent with each other, as well as with their counterparts across time steps. However, too much of a good thing can be a problem. If left unconstrained,

and

can easily evolve towards spurious fixed points (Kronecker deltas), which can be interpreted as overfitting on prematurely established priors (In this case, it could be possible to observe scenarios such as

“the blind leading the blind” in which a weak agent fixates on the movement trajectory of a strong agent who is overconfident about its final destination.). On the other hand, if

were to depend only on

, it would eliminate the spurious fixed points: without the crossed dependence, the first term of the partner model (

Section 2.7) only has fixed points at

, meaning that the agent has achieved a local desire optimum. Effectively, this “shuts down” the agent’s ability to use the partner’s information to shape its own beliefs, or its theory of mind, making it equivalent to MacGregor’s original model.

Thus, there would appear to be no universal “best” value for an agent’s Theory of Mind; an appropriate level of Theory of Mind would depend on a trade-off between the risk of overfitting and that of discarding valid evidence from the partner. The appropriate level of Theory of Mind would also depend on the agent’s other capabilities (in this case, its perceptiveness, k).

This motivates the operationalization of as a parameter for the intensity to which Theory of Mind shapes the agent’s beliefs. can be understood simply as an agent’s degree of alterity, or propensity to see the “other” as an agent like itself. In simulations with values of close to 0, we expect the partner’s behavior to be dominated by its own “chemical” sensory input. Increasing , we expect to see an agent’s behavior being more heavily influenced by inputs from its partner, driving to become sharper as soon as does so. Past a certain threshold, this could spill over into premature overfitting.

Finally, note the

in the second term of agent-level free energy (Equation (4)). This represents the notion that the agent is using “second-order theory of mind” or thinking about what its partner might be thinking about it (First-order ToM involves thinking about what some-one else is thinking or feeling; second-order ToM involves thinking about what someone is thinking or feeling about what someone else is thinking or feeling [

72]). Here,

comes in as “my model of my partner’s model of my behavior”. It seems appropriate for the agent to believe the partner to possess the same level of alterity as itself; we then represent this as applying the rearranging function (the “squishing” of the probability distribution) twice,

2.10. Goal Alignment

In this section we motivate the parameterization of the degree of goal alignment between agents.

Recall that is an arbitrary (exogenous) real vector; the implied desire distribution can have multiple maxima, leading to a generally challenging optimization task for the agent. Theory of Mind can help, but it can also make matters worse: if also has multiple peaks, the partner’s behavior can easily become ambiguous, i.e., it could appear coherent with multiple distinct positions. This ambiguity can easily lead the agent astray.

This problem is reduced if the agents can

align goals with each other, that is, avoid pursuing targets that are not shared between them. We implement this as:

where

is a parameter representing the degree of alignment between this specific agent pair, and we assume each agent has knowledge of what goals are shared vs private to itself or its partner. That is, with

, the agent is equally interested in its private goals and in the shared ones (and assumes the same for the partner); with

, the agent is solely interested in the shared goals (and assumes the same for the partner).

This operation may seem quite artificial, especially as it implies a “leap of faith” on the part of the agent to effectively change its expectations about the partner’s behavior (Equation (6)). However, if we accept this assumption, we see that the task is made easier: in the general case, alignment reduces the agent-specific goal ambiguity, leading to better ability to focus and less positional ambiguity coming from the partner. Of course, one can construct examples where alignment does not help or even hurts; for instance, if both agents share all of their peaks, alignment not only will not help reduce ambiguity, but it can make the peaks sharper and hard to find. And as we will see, in the context of the system-level model, alignment becomes a natural capability.

In the present paper, for simplicity, we assume agents’ shared goals are assigned exogenously. In light of the system-level model (

Section 2.11), however, it is easy to see that such shared goals have a natural connection with the global optimum states. In this context, one can expect shared goals to emerge endogenously from the agents’ interaction with their social environment over the “long run”. This will be explored in future work.

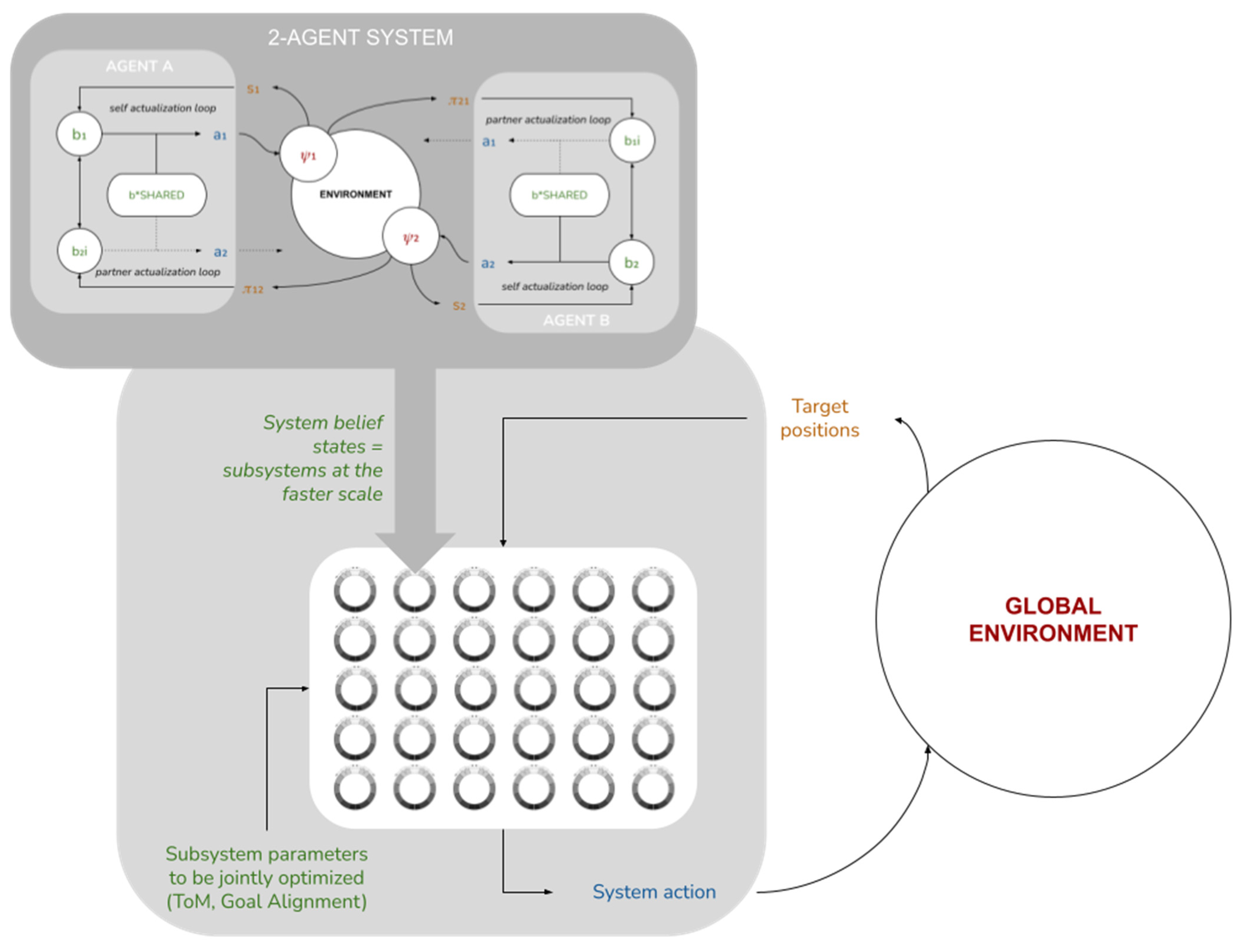

2.11. System-Level Free Energy

Up until now, we have restricted ourselves to discussing our model at the level of individual agents and their local-scale interactions. We now take a higher vantage point and consider the implications of these local-scale interactions for global-scale system performance. We posit an ensemble of M identical copies of the two-agent subsystem above (i.e., 2

M), each in its own independent environment, also assumed to be identical except for the position of the food source (see

Figure 5).

From this vantage point, each of the 2M agents is now a “point particle”, described only by its position ψi. The tuple is then the set of internal states of the system as a whole.

We will now assume that this set of internal states interacts with a global environment

. We reinterpret the “food sources” as sensory states:

, where each

is assumed to correlate with

through some sensory mechanism. We further assume the system is capable to act back on the environment through some active mechanism

. This provides us with a complete system-level Markov blanket (

Figure 5b), for which we can define a system-level free energy as

where

, the system’s “variational density”, is simply the empirical distribution of the various agents’ positions.

In this paper, we will not cover the “active” part of active inference at the global level—namely, the system action remains undefined. We will instead consider a single system-level inference step, corresponding to fixed values of ,. As we can see from the formulation above, this corresponds to optimizing given —that is, to the aggregate behavior of the 2M agents’ over an entire run of the model at the individual level.

This in turn motivates defining the system’s generative density as : given a set of internal states (agent positions), the system “expects” it to have been produced by the agents moving towards the corresponding sensory states (food source). Thus, to the extent that the agents perform their local active inference tasks well, the system performs approximate Bayesian inference over this generative density, and we can evaluate the degree to which this inference is effective, by evaluating whether, and how quickly, is minimized. We return to the topic of system-level (active) inference in the discussion.

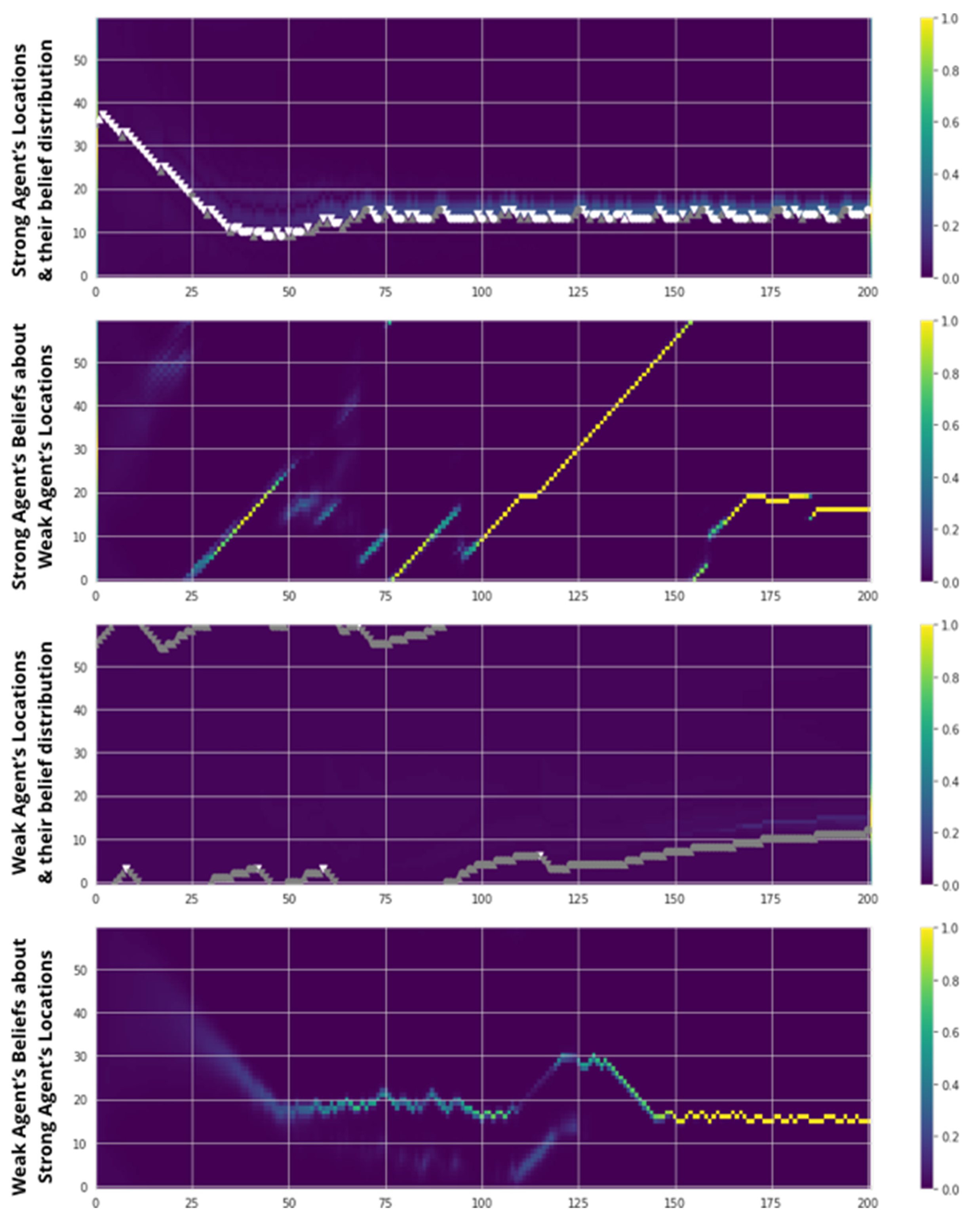

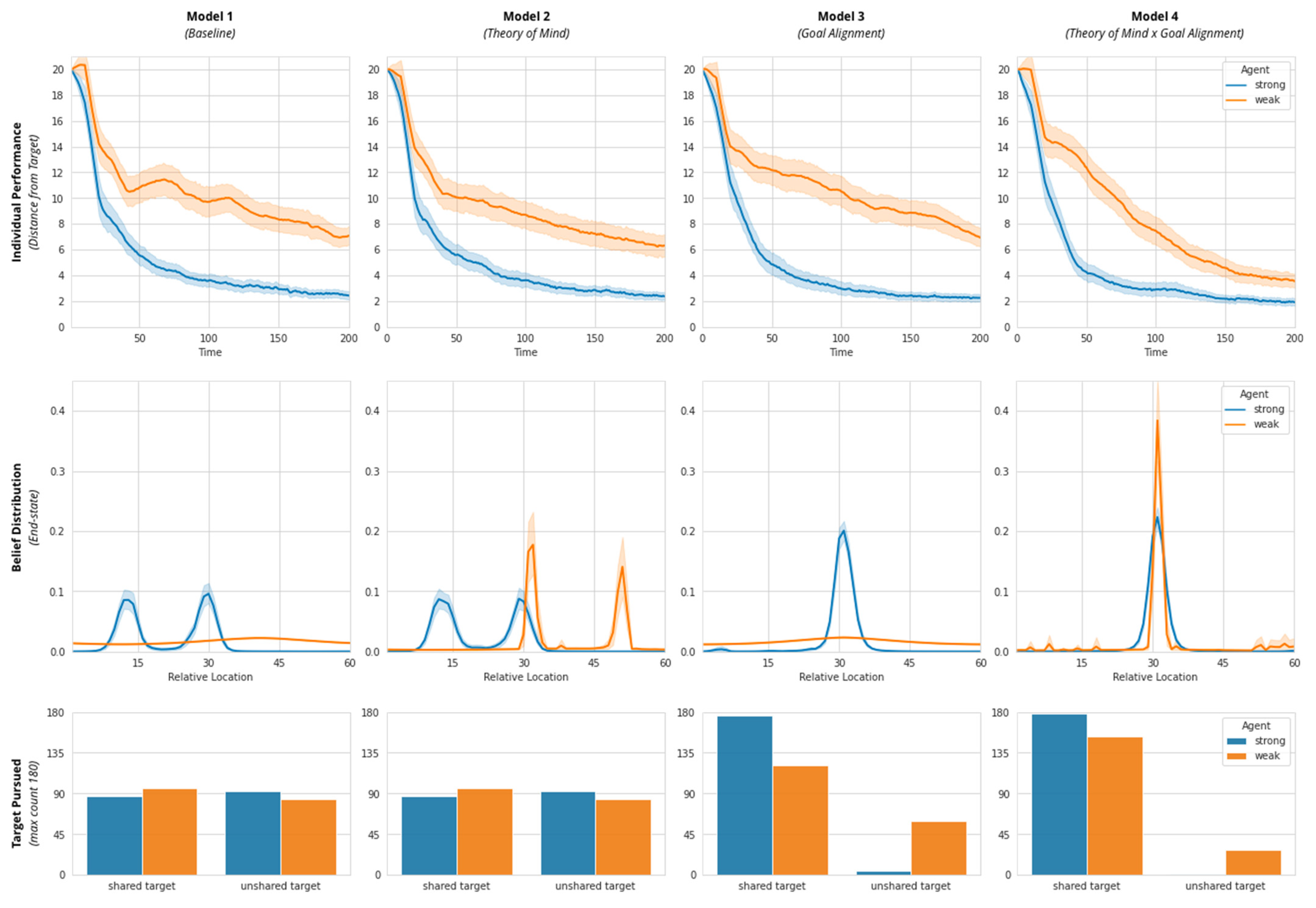

2.12. Simulations

We have thus defined this system at two altitudes, enabling us to perform simulations at the agent level and analyze their implied performance at the system level (as measured by system-level free energy). We can now use this framework to analyze the extent to which the two novel agent-level cognitive capabilities we introduced (“Theory of Mind” and “Goal Alignment”) increase the system’s ability to perform approximate inference at local and global scales. To explore the effects of agent-level cognitive capabilities on collective performance, we create four experimental conditions according to a 2 × 2 (Theory of Mind × Goal Alignment) matrix: Model 1 (Baseline), Model 2 (Theory of Mind), Model 3 (Goal Alignment), and Model 4 (Theory of Mind and Goal Alignment; see

Table 1).

Throughout, we use the same two agents, Agent A and Agent B. To establish meaningful variation in agent performance at the individual-scale, we parameterize an agent’s perceptiveness to the physical environment (i.e., to the reliability of the information derived from its “chemical sensors”), by assigning one agent with “strong” perceptiveness (Agent A—Strong;) and the other agent with “weak” perceptiveness (Agent B—Weak).

We assign each agent with two targets, one shared (Shared Target) and one unshared (individual target or Target A and Target B). Accordingly, we assume each agent’s desire distributions have both a shared peak (corresponding to a Shared Target) and an unshared peak (corresponding to Target A or Target B). Throughout, we measure both the collective performance (system-level free energy), as well as individual performance (distance from their closest target). In addition, we also capture their end-state desire distribution.

We implement simulations in Python (V3.7) using Google Colab (V1.0.0). As noted above, our implementation draws upon and extends an existing AIF model implementation developed in Python (V2.7) by van Shaik [

70]. To ensure that the agent behavior is not an artefact of their specific location in the environment, we run 180 runs for each simulation for each experimental condition by randomizing their starting locations throughout the environment. The environment size was held constant at 60 cells. To ensure that the agent behavior is not an artefact of initial conditions, we perform 180 runs for each simulation for each experimental condition by uniformly distributing their starting locations throughout the environment (three times per location), while preserving the distance between starting locations and target. This uniform distribution of initial conditions across the environment also corresponds to the “worst-case scenario” in terms of system-level specification of sensory inputs for a two-agent system, discussed in

Section 2.11.

2.13. Model Parameters

Our four models were created by setting physical perceptiveness for the strong and weak agent and varying their ability to exhibit social perceptiveness and align goals. The parameter settings are summarized at the individual agent level as follows (see

Figure 6 and

Table 2):

Model 1 contains a self-actualization loop driven by physical perceptiveness. Physical perceptiveness (individual skill parameter; range [0.01, 0.99]) is varied such that Agent A is endowed with strong perceptiveness (0.99) and Agent B is endowed with weak perceptiveness (0.05).

Model 2 is made up of a self-actualization loop and a partner-actualization loop (instantiating ToM). The other-actualization loop is implemented by setting the value of alterity (ToM or social perceptiveness parameter; range [0.01, 0.99]) as 0.20 for the weak agent and 0 for the strong agent. This parameterization helps the weak agent use social information to navigate the physical environment. These two loops implement a single (non-separable) free energy functional: The weak agent’s inferences from their stronger partner’s behavior serve to refine its beliefs about its position in the environment.

Model 3 entails a self-actualization loop (but no partner-actualization loop) as well as enforces the pursuit of a common goal (set alignment = 1) by fully suppressing their unshared goals (alignment parameter; range [0,1]). In this simplified implementation, we assume that goal alignment is a relational/dyadic property such that both partners exhibit the same level of alignment towards each other. This is akin to partners fully exploring each other’s targets and agreeing to pursue their common goal. Setting alignment lower than 1 will increase the relative weighting of unshared goals and cause them to compete with their shared goals.

Model 4 includes both cognitive features: self- and partner-actualization loops for the weak agent (instantiating ToM; alterity = 0.2) and complete goal alignment between agents.

4. Discussion

A formal understanding of collective intelligence in complex adaptive systems requires a formal description, within a single multiscale framework, of how the behavior of a composite system and its subsystem components co-inform each other to produce behavior that cannot be explained at any single scale of analysis. In this paper we make a contribution toward this type of formal grasp of collective intelligence, by using AIF to posit a computational model that connects individual-level constraints and capabilities of autonomous agents to collective-level behavior. Specifically, we provide an explicit, fully specified two-scale system where free energy minimization occurs at both scales, and where the aggregate behavior of agents at the faster/smaller scale can be rigorously identified with the belief-optimization (a.k.a. “inference”) step at the slower/bigger scale. We introduce social cognitive capabilities at the agent level (Theory of Mind and Goal Alignment), which we implement directly through AIF. Further, illustrative results of this novel approach suggest that such capabilities of individual agents are directly associated with improvements in the system’s ability to perform approximate Bayesian inference or minimize variational free energy. Significantly, improvements in global-scale inference are greatest when local-scale performance optima of individuals align with the system’s global expected state (e.g., Model 4). Crucially, all of this occurs “bottom-up”, in the sense that our model does not provide exogenous constraints or incentives for agents to behave in any specific way; the system-level inference emerges as a product of self-organizing AIF agents endowed with simple social cognitive mechanisms. The operation of these mechanisms improves agent-level outcomes by enhancing agents’ ability to minimize free energy in an environment populated by other agents like it.

Of course, our account does not preclude or dismiss the operation of “top-down” dynamics, or the use of exogenous incentives or constraints to engineer specific types of individual and collective behavior. Rather, our approach provides a principled and mechanistic account of bio-cognitive systems in which “bottom-up” and “top-down” mechanisms may meaningfully interplay to inform accounts of behavior such as collective intelligence [

4]. Our results suggest that models such as these may help establish a mechanistic understanding of how collective intelligence evolves and operates in real-life systems, and provides a plausible lower bound for the kind of agent-level cognitive capabilities that are required to successfully implement collective intelligence in such systems.

4.1. We Demonstrate AIF as a Viable Mathematical Framework for Modelling Collective Intelligence as a Multiscale Phenomenon

This work demonstrates the viability of AIF as a mathematical language that can integrate across scales of a composite bio-cognitive system to predict behavior. Existing multiscale formulations of AIF [

39,

40], while more immediately useful for understanding the behavior of docile subsystem components like cells in a multicellular organism or neurons in the brain, do not yet offer clear predictions about the behavior of collectives composed of highly autonomous AIF agents that engage in reciprocal self-evidencing with each other as well as with the physical (non-social) environment [

43]. What’s more, existing toy simulations of multiscale AIF engineer collective behavior as a predestination—either as a prior in an agent’s generative model [

9], or by default of an environment that consists solely of other agents [

7,

8]. We build upon these accounts by using AIF to first posit the minimal information-theoretical patterns (or “adaptive priors”; see [

42]) that would likely emerge at the level of the individual agent to allow that agent to persist and flourish in an environment populated by other AIF agents [

58]. We then examine the relationship between these local-scale patterns and collective behavior as a process of Bayesian inference across multiple scales. Our models show that collective intelligence can emerge endogenously in a simple goal-directed task from interaction between agents endowed with suitably sophisticated cognitive abilities (and without the need for exogenous manipulation or incentivization).

Key to our proposal is the suggestion that collective intelligence can be understood as a dynamical process of (active) inference at the global-scale of a composite system. We operationalize self-organization of the collective as a process of free energy minimization or approximate Bayesian inference based on sensory (but not active) states (for a previous attempt to operationalize collective behavior as both active and sensory inference, see [

69]). In a series of four models, we demonstrate the responsiveness of this system-level measure to learning effects over time; the progression of each Model exhibits a pattern akin to a gradient descent on free energy, evoking the notion that a system that performs (active) Bayesian inference. Further, stepwise increases in cognitive sophistication at the individual level show a clear reduction in free energy, particularly between Model 1 (Baseline) and Model 4 (Theory of Mind x Goal Alignment). These illustrative results suggest a formal, causal link between behavioral processes across multiple scales of a complex adaptive system.

Going further, we can imagine an extension of this model where the collective system interacts with a non-trivial environment, but at a slower time scale, such that a complete simulation run of all 2M agents corresponds to a single belief optimization step for the whole system, after which it acts on the environment and receives sensory information from it (manifested, for example, as changes in the agents’ food sources). In this extended model (see

Figure 10), and if the agent-specific parameters (alterity/Theory of Mind (α), and Goal Alignment (γ)) could be made endogenous (either via selective mechanisms via some other learning mechanisms; see [

48,

73]) we would expect to see the system finding (non-zero) values of these parameters that optimize its free energy minimization. For example, it is likely that a system would select for higher values of γ (Goal Alignment) when both agents’ end-state beliefs and actual target locations mutually cohere, or higher values of α for agents with weaker perceptiveness. Interestingly, this would show that degrees of Theory of Mind and Goal Alignment are capabilities that would be selected for or boosted at these longer time scales, providing empirical support for the heuristic arguments made for their existence in our model and in human collective intelligence research more generally [

4].

4.2. AIF Sheds Light on Dynamical Operation of Mechanisms That Underwrite Collective Intelligence

In this way, AIF offers a paradigm through which to move beyond the methodological constraints associated with experimental analyses of the relationship between local interactions and collective behavior [

21]. Even our very rudimentary 2-Agent AIF model proposed here offers insight into the dynamic operation and function of individual cognitive mechanisms for individual and collective level behavior. In distinct contrast to laboratory paradigms that usually rely on low-dimensional behavioral “snapshots” or summaries of behavior to verify linearly causal predictions about individual and collective phenomena, our computational model can be used to explore the effects of fine-grained, agent- and collective-level variations in cognitive ability on individual and collective behavior in real time.

For example, by parameterizing key cognitive abilities (Theory of Mind and Goal Alignment), our model shows that it is not necessarily a case of “more is better” when it comes to cognitive mechanisms underlying adaptive social behavior and collective intelligence. If an agent’s level of social perceptiveness (Theory of Mind) were too low, it is likely that agents would miss vital performance-relevant information about the environment populated by other agents; if an agent’s Theory of Mind were too high, it may instead over-index on partner belief states as an affordance for own beliefs (a scenario of “blind leading the blind”). We show that canonical cognitive abilities such as Theory of Mind and Goal Alignment can function across multiple scales to stabilize and reduce the computational uncertainty of an environment made up of other AIF agents, but only when these abilities are optimally tuned to a “goldilocks” level that is suitable to performance in that specific environment.

The essence of this proposal is captured by empirical research of attentional processes of human agents that engage in sophisticated joint action [

74,

75]. For instance, athletes in novice basketball teams are found to devote more attentional resources to tracking and monitoring their own teammates, while expert teams spend less time attending to each other and more time instead attending to the socio-technical task environment [

76]. Viewed from the perspective of AIF, in both novice and expert teams alike, agents likely differentially deploy physical and social perceptiveness at levels that make sense for pursuing collective performance in a given situation; novices may stand to gain more from attending to (and therefore learning from) their teammates (recall our Agent B in Model 2 who leverages Theory of Mind to overcome weak physical perceptiveness, for example); while experts might stand to gain more from down-regulating social perceptiveness and redirecting limited attentional resources to physical perception of the task or (adversarial) social environment [

77,

78].

As evidence in organizational psychology and management suggests, (and outlined in the introduction), it is likely that social perceptiveness may indeed be an important factor (among many) that underwrites collective intelligence. But this may be especially the case in the context of unacquainted teams of “WEIRD” experimental subjects [

79] who coordinate for a limited number of hours in a contrived laboratory setting [

3]. If the experimental task were to be translated to a real-world performance setting (e.g., one involving high-stakes or elite performance requirements), or if that same team of experimental subjects were to persist over time beyond the lab in a randomly fluctuating environment, it is conceivable that a premium for social perceptiveness may give way to demands for other types of abilities needed to continue to gain performance-relevant information from the task environment (e.g., through physical perceptiveness of the task environment). Viewed from this perspective, the true “special sauce” of collective intelligence (and individual intelligence, for that matter; see [

80]) may turn out not to be one or other discrete or reified individual or team level ability per se (e.g., social perceptiveness), but instead a collective ability to nimbly adjust the volumes of multiple parameters to foster specific information-theoretic patterns conducive to minimizing free energy across multiple scales and over specific, performance-relevant time periods.

In this spirit, the computational approach we adopt here under AIF affords a dynamical and situational perspective on team performance that may offer important insights into long-standing and nascent hypotheses concerning the causal mechanisms of collective intelligence. For instance, our model is well positioned to investigate the long-proposed (but hitherto unsubstantiated) claim that successful team performance, and by extension, collective intelligence, depends on balancing a tradeoff between cognitive diversity and cognitive efficiency [

4] (p. 421). Likewise, our approach could help elucidate mechanisms and dynamics through which memory, attention, and reasoning capabilities become distributed through a collective, and the conditions in which these “transactive” processes [

81] facilitate emergence of intelligent behavior [

77,

82,

83]. In either case, our model would simply require specification with the appropriate individual-level cognitive abilities or priors. For example, to better understand the causal relationship between transactive knowledge systems and collective intelligence, our model could leverage recent empirical research that observes a connection between individual agents’ metacognitive abilities (e.g., perception of others’ skills, focus, and goals), the formation of transactive knowledge systems, and a collective’s ability to adapt to a changing task environment [

83]. On an important and related note to these opportunities for future research, efforts to simulate human collective intelligence should strive to develop models composed of two or more agents to better mimic human-like coordination dynamics [

50,

84].

4.3. Increases in System Performance Correspond with Alignment between an Agent’s Local and Global Optima

A key insight from our models, and worthy of further investigation, is that the greatest improvement in collective intelligence (Model 4; measured by global-scale inference) occurs when local-scale performance optima of individuals align with the system’s global expected state. This effect can be understood as individuals jointly implementing approximate Bayesian inference of the system’s expectations. In effect, our model suggests that multi-scale alignment between lower- and higher-order states may contribute to the emergence of collective intelligence.

Alignment between local and global states might sound like an obvious prerequisite for collective intelligence, particularly for more docile AIF agents such as neurons or cells (it is near impossible to imagine a scenario in which a neuron or cell could meaningfully persist without being spatially aligned with a superordinate agent; see [

9]). But our model exemplifies a more subtle form of alignment, based on a loose coupling between scales through a system’s generative model (

Section 2.11), enabling the extension of this idea to scenarios where the local and global optimizations may be taking place in arbitrarily distinct and abstract state spaces [

49,

51]. By now it is well understood in brain and behavioral sciences that coordinated human behavior relies for its stability and efficacy on an intricate web of biologically evolved physiological and cognitive mechanisms [

85,

86], as well as culturally evolved affordances of language, norms, and institutions [

87]. But precisely how these various mechanisms and affordances—particularly those that are separated across scales—coordinate in real or evolutionary time to enable human collective phenomena remains poorly understood [

39,

73,

88].

Computational models, such as the one we have presented here, that are capable of formally representing multiscale alignment may help reorganize and clarify causal relationships between the various hypothesized physiological, cognitive, and cultural mechanisms hypothesized to underpin human collective behavior [

14]. For example, a computational model such as the one proposed here could conceivably be adapted to help more systematically test the burgeoning hypothesis that coordination between basal physiological, metabolic and homeostatic processes at one scale of organization and linguistically mediated processes of interaction and exchange at another scale determine fundamental dynamics of individual and collective behavior [

88,

89,

90].

Future research should aspire to examine causal connections between a fuller range of meaningful scales of behavior. In the case of human collectives, meaningful scales of behavior could extend from the basal mechanisms of physiological energy, movement, and emotional regulation on the micro scale [

91,

92], to linguistically- (and now digitally-) mediated social informational systems at the meso scale [

93] to global socio-ecological systems at the macro scale [

94,

95,

96,

97]. As we have demonstrated here, the key requirement for the development of such multiscale models under AIF is faithful construction of the appropriate generative models at each scale. These models provide the mechanistic “missing links” between AIF and the phenomena to be explained—a task that will require tremendously innovative and intelligent collective behavior on the part of a diverse range of agents.

The patterns that crop up again and again in successful space are there because they are in fundamental accord with characteristics of the human creature. They allow him to function as a human. They emphasize his essence—he is at once an individual and a member of a group. They deny neither his individuality nor his inclination to bond into teams. They let him be what he is.

- DeMarco and Lister [

98] (1987, p.90)