2. Notation

A parameterized curve in

is a continuous function

where

is a closed interval of the real line. The length of

is given by

Let

be a sequence of data, where

stands for the

-ball centered in

with radius

. Let

be a grid over

, i.e.,

where

is a lattice in

with spacing

. Let

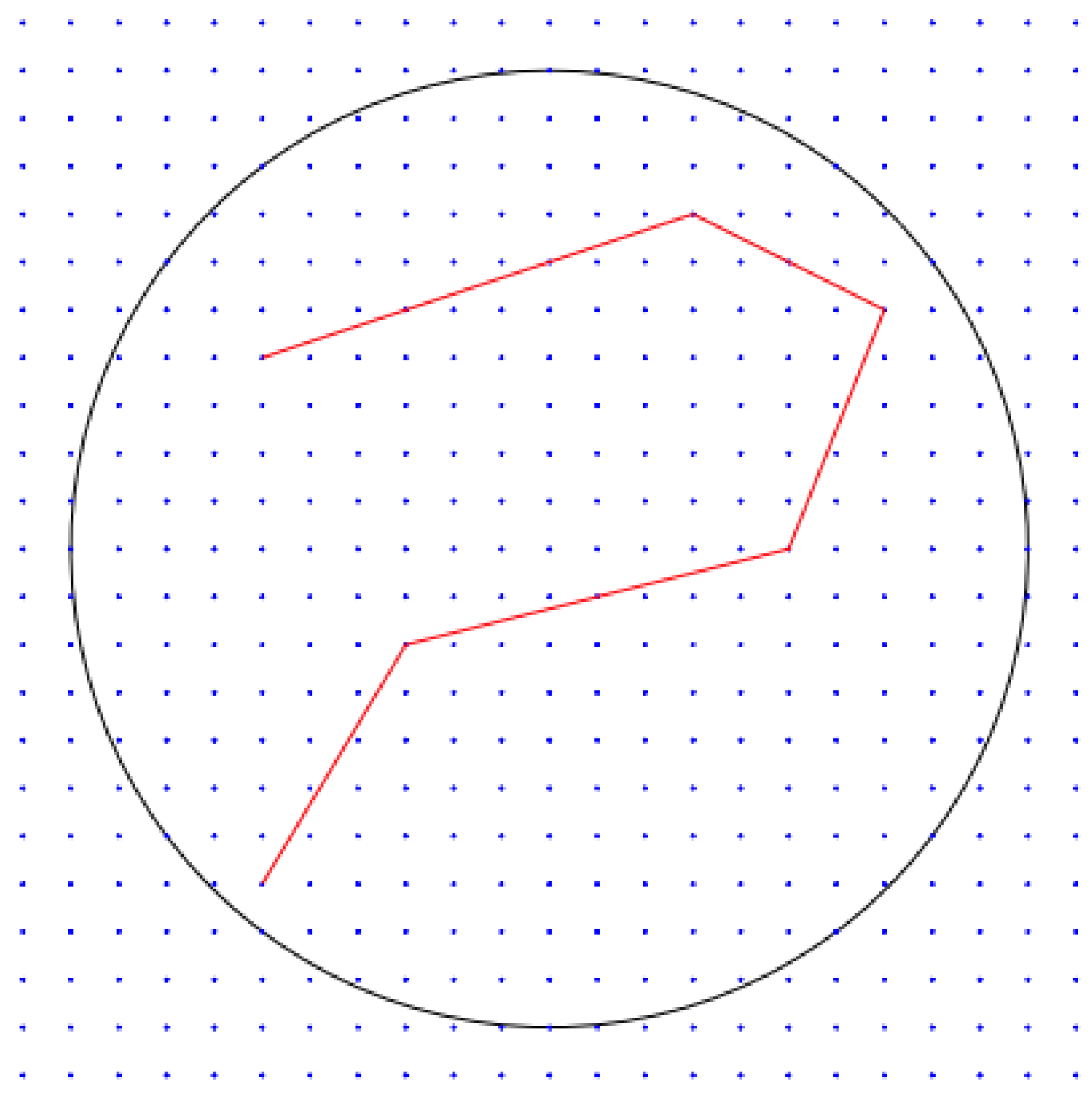

and define for each

the collection

of polygonal lines

with

k segments whose vertices are in

and such that

. Denote by

all polygonal lines with a number of segments

, whose vertices are in

and whose length is at most

L. Finally, let

denote the number of segments of

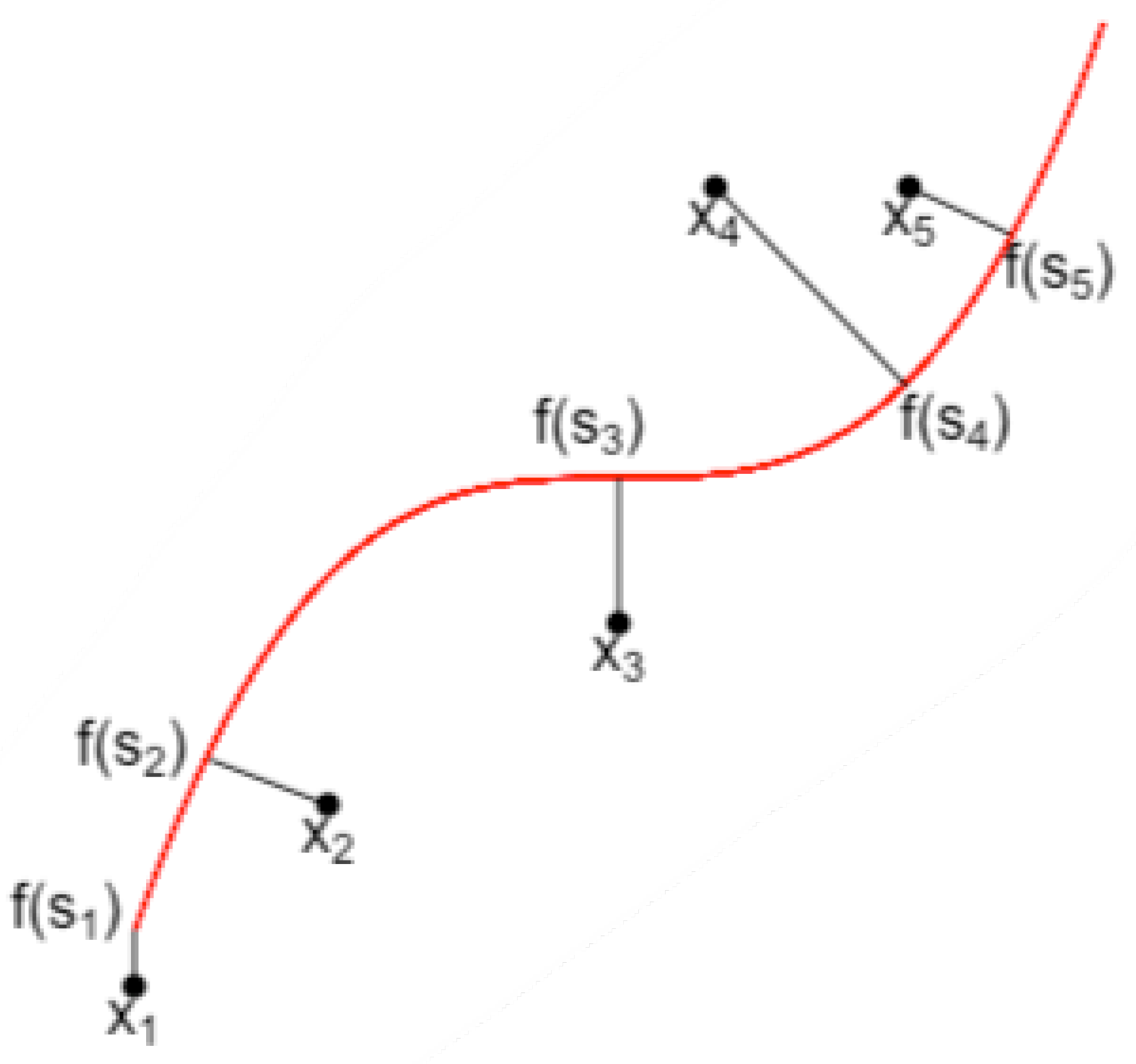

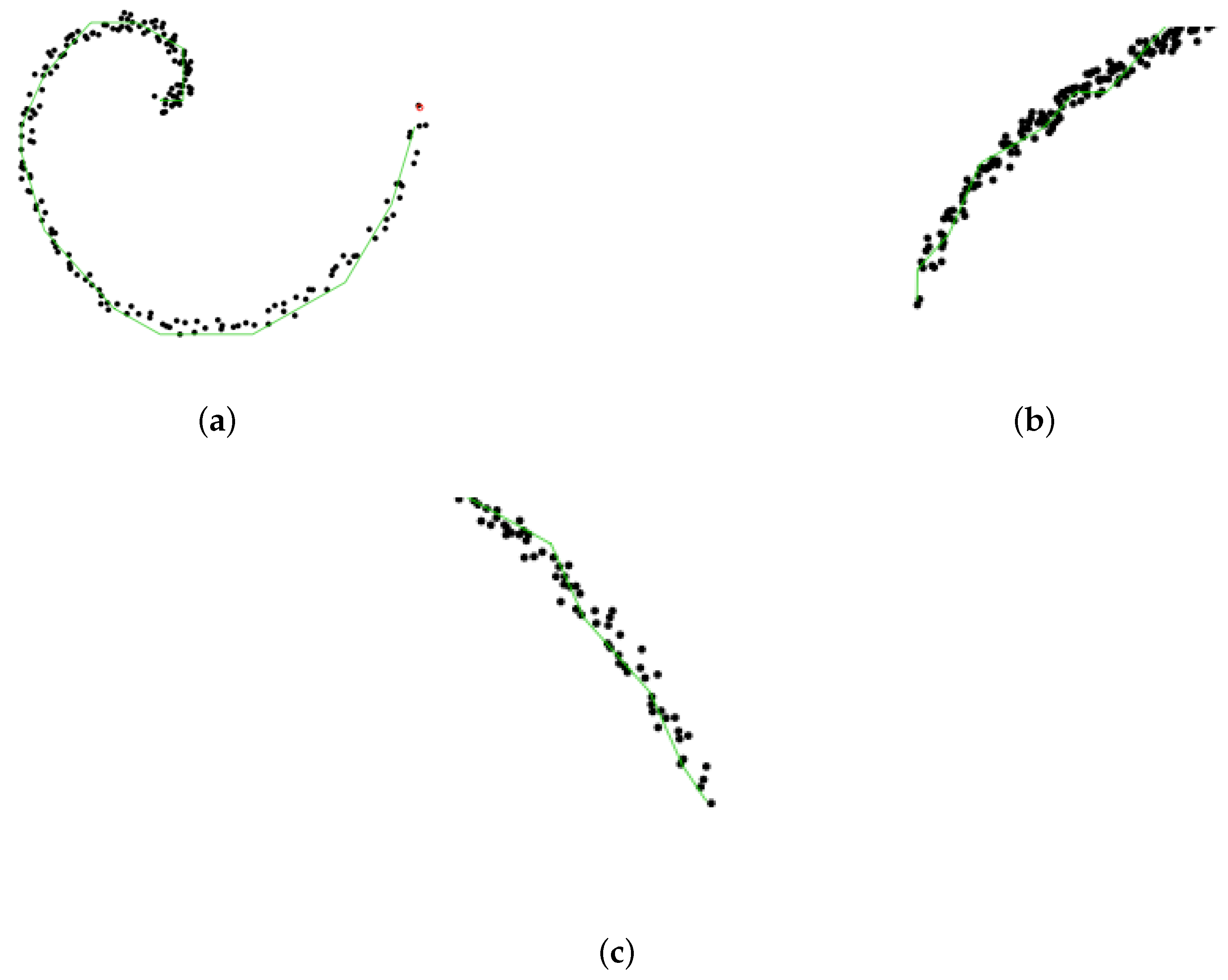

. This strategy is illustrated by

Figure 4.

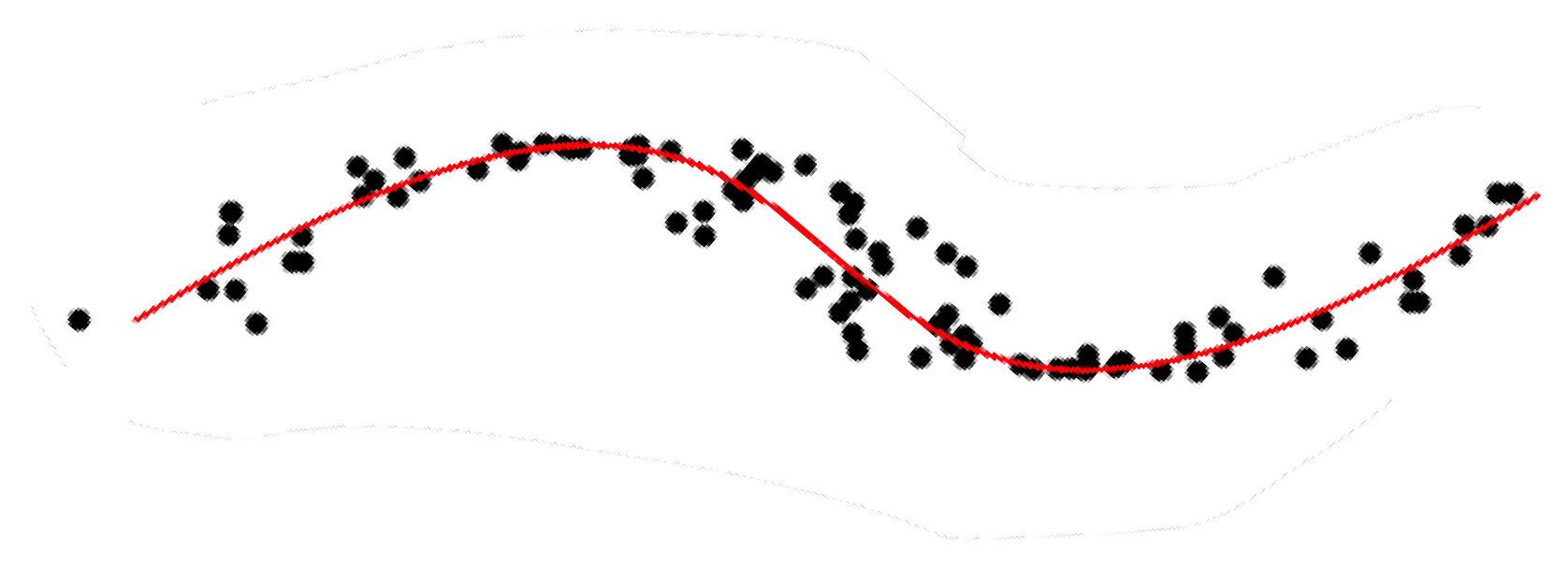

Our goal is to learn a time-dependent polygonal line which passes through the “middle” of data and gives a summary of all available observations

(denoted by

hereafter) before time

t. Our output at time

t is a polygonal line

depending on past information

and past predictions

. When

is revealed, the instantaneous loss at time

t is computed as

In what follows, we investigate regret bounds for the cumulative loss based on (

2). Given a measurable space

(embedded with its Borel

-algebra), we let

denote the set of probability distributions on

, and for some reference measure

, we let

be the set of probability distributions absolutely continuous with respect to

.

For any

, let

denote a probability distribution on

. We define the

prior on

as

where

and

.

We adopt a quasi-Bayesian-flavored procedure: consider the Gibbs quasi-posterior (note that this is not a proper posterior in all generality, hence the term “quasi”):

where

as advocated by [

32,

35] who then considered realizations from this quasi-posterior. In the present paper, we will rather focus on a quantity linked to the mode of this quasi-posterior. Indeed, the mode of the quasi-posterior

is

where

(i) is a cumulative loss term,

(ii) is a term controlling the variance of the prediction

to past predictions

, and

(iii) can be regarded as a penalty function on the complexity of

if

is well chosen. This mode hence has a similar flavor to follow the best expert or follow the perturbed leader in the setting of prediction with experts (see [

22,

36], Chapters 3 and 4) if we consider each

as an expert which always delivers constant advice. These remarks yield Algorithm 1.

| Algorithm 1 Sequentially learning principal curves. |

- 1:

Input parameters: and penalty function - 2:

Initialization: For each , draw and - 3:

For - 4:

Get the data - 5:

where , . - 6:

End for

|

3. Regret Bounds for Sequential Learning of Principal Curves

We now present our main theoretical results.

Theorem 1. For any sequence , and any penalty function , let . Let ; then the procedure described in Algorithm 1 satisfieswhere and The expectation of the cumulative loss of polygonal lines

is upper-bounded by the smallest penalized cumulative loss over all

up to a multiplicative term

, which can be made arbitrarily close to 1 by choosing a small enough

. However, this will lead to both a large

in

and a large

. In addition, another important issue is the choice of the penalty function

h. For each

,

should be large enough to ensure a small

, but not too large to avoid overpenalization and a larger value for

. We therefore set

for each

with

k segments (where

denotes the cardinality of a set

M) since it leads to

The penalty function

satisfies (

3), where

are constants depending on

R,

d,

,

p (this is proven in Lemma 3, in

Section 6). We therefore obtain the following corollary.

Corollary 1. Under the assumptions of Theorem 1, letThenwhere . Proof. Note that

and we conclude by setting

□

Sadly, Corollary 1 is not of much practical use since the optimal value for

depends on

which is obviously unknown, even more so at time

. We therefore provide an adaptive refinement of Algorithm 1 in the following Algorithm 2.

| Algorithm 2 Sequentially and adaptively learning principal curves. |

- 1:

Input parameters: , , , h and - 2:

Initialization: For each , draw , and - 3:

For - 4:

Compute - 5:

Get data and compute - 6:

- 7:

End for

|

Theorem 2. For any sequence , let where , , are constants depending on . Let andwhere and . Then the procedure described in Algorithm 2 satisfies The message of this regret bound is that the expected cumulative loss of polygonal lines

is upper-bounded by the minimal cumulative loss over all

, up to an additive term which is sublinear in

T. The actual magnitude of this remainder term is

. When

L is fixed, the number

k of segments is a measure of complexity of the retained polygonal line. This bound therefore yields the same magnitude as (

1), which is the most refined bound in the literature so far ([

18] where the optimal values for

k and

L were obtained in a model selection fashion).

4. Implementation

The argument of the infimum in Algorithm 2 is taken over

which has a cardinality of order

, making any greedy search largely time-consuming. We instead turn to the following strategy: Given a polygonal line

with

segments, we consider, with a certain proportion, the availability of

within a neighborhood

(see the formal definition below) of

. This consideration is well suited for the principal curves setting, since if observation

is close to

, one can expect that the polygonal line which well fits observations

lies in a neighborhood of

. In addition, if each polygonal line

is regarded as an action, we no longer assume that all actions are available at all times, and allow the set of available actions to vary at each time. This is a model known as “sleeping experts (or actions)” in prior work [

37,

38]. In this setting, defining the regret with respect to the best action in the whole set of actions in hindsight remains difficult, since that action might sometimes be unavailable. Hence, it is natural to define the regret with respect to the best ranking of all actions in the hindsight according to their losses or rewards, and at each round one chooses among the available actions by selecting the one which ranks the highest. Ref. [

38] introduced this notion of regret and studied both the full-information (best action) and partial-information (multi-armed bandit) settings with stochastic and adversarial rewards and adversarial action availability. They pointed out that the

EXP4 algorithm [

37] attains the optimal regret in the adversarial rewards case but has a runtime exponential in the number of all actions. Ref. [

39] considered full and partial information with stochastic action availability and proposed an algorithm that runs in polynomial time. In what follows, we materialize our implementation by resorting to “sleeping experts”, i.e., a special set of available actions that adapts to the setting of principal curves.

Let

denote an ordering of

actions, and

a subset of the available actions at round

t. We let

denote the highest ranked action in

. In addition, for any action

we define the reward

of

at round

by

It is clear that

. The convention from losses to gains is done in order to facilitate the subsequent performance analysis. The reward of an ordering

is the cumulative reward of the selected action at each time:

and the reward of the best ordering is

(respectively,

when

is stochastic).

Our procedure starts with a partition step which aims at identifying the “relevant” neighborhood of an observation with respect to a given polygonal line, and then proceeds with the definition of the neighborhood of an action . We then provide the full implementation and prove a regret bound.

Partition. For any polygonal line

with

k segments, we denote by

its vertices and by

the line segments connecting

and

. In the sequel, we use

to represent the polygonal line formed by connecting consecutive vertices in

if no confusion arises. Let

and

be the Voronoi partitions of

with respect to

, i.e., regions consisting of all points closer to vertex

or segment

.

Figure 5 shows an example of Voronoi partition with respect to

with three segments.

Neighborhood. For any

, we define the neighborhood

with respect to

as the union of all Voronoi partitions whose closure intersects with two vertices connecting the projection

of

x to

. For example, for the point

x in

Figure 5, its neighborhood

is the union of

and

. In addition, let

be the set of observations

belonging to

and

be its average. Let

denote the diameter of set

. We finally define the local grid

of

at time

t as

We can finally proceed to the definition of the neighborhood

of

. Assume

has

vertices

, where vertices of

belong to

while those of

and

do not. The neighborhood

consists of

sharing vertices

and

with

, but can be equipped with different vertices

in

; i.e.,

where

and

m is given by

In Algorithm 3, we initiate the principal curve

as the first component line segment whose vertices are the two farthest projections of data

(

can be set to 20 in practice) on the first component line. The reward of

at round

t in this setting is therefore

. Algorithm 3 has an exploration phase (when

) and an exploitation phase (

). In the exploration phase, it is allowed to observe rewards of all actions and to choose an optimal perturbed action from the set

of all actions. In the exploitation phase, only rewards of a part of actions can be accessed and rewards of others are estimated by a constant, and we update our action from the neighborhood

of the previous action

. This local update (or search) greatly reduces computation complexity since

when

p is large. In addition, this local search will be enough to account for the case when

locates in

. The parameter

needs to be carefully calibrated since it should not be too large to ensure that the condition

is non-empty; otherwise, all rewards are estimated by the same constant and thus lead to the same descending ordering of tuples for both

and

. Therefore, we may face the risk of having

in the neighborhood of

even if we are in the exploration phase at time

. Conversely, very small

could result in large bias for the estimation

of

. Note that the exploitation phase is close yet different to the label efficient prediction ([

40], Remark 1.1) since we allow an action at time

t to be different from the previous one. Ref. [

41] proposed the

geometric resampling method to estimate the conditional probability

since this quantity often does not have an explicit form. However, due to the simple exponential distribution of

chosen in our case, an explicit form of

is straightforward.

| Algorithm 3 A locally greedy algorithm for sequentially learning principal curves. |

- 1:

Input parameters: , , , , , and any penalty function h - 2:

Initialization: Given , obtain as the first principal component - 3:

For - 4:

Draw and . - 5:

Let

i.e., sorting all in descending order according to their perturbed cumulative reward till . - 6:

If , set and and observe - 7:

- 8:

If , set , and observe - 9:

where denotes all the randomness before time t and . In particular, when , we set for all , and . - 10:

End for

|

Theorem 3. Assume that , and let , , , andThen the procedure described in Algorithm 3 satisfies the regret bound The proof of Theorem 3 is presented in

Section 6. The regret is upper bounded by a term of order

, sublinear in

T. The term

is the price to pay for the local search (with a proportion

) of polygonal line

in the neighborhood of the previous

. If

, we would have that

, and the last two terms in the first inequality of Theorem 3 would vanish; hence, the upper bound reduces to Theorem 2. In addition, our algorithm achieves an order that is smaller (from the perspective of both the number

of all actions and the total rounds

T) than [

39] since at each time, the availability of actions for our algorithm can be either the whole action set or a neighborhood of the previous action while [

39] consider at each time only partial and independent stochastic available set of actions generated from a predefined distribution.

5. Numerical Experiments

We illustrate the performance of Algorithm 3 on synthetic and real-life data. Our implementation (hereafter denoted by

slpc—Sequential Learning of Principal Curves) is conducted with the R language and thus our most natural competitors are the R package

princurve, which is the algorithm from [

10], and

incremental, which is the algorithm from SCMS [

23]. We let

,

,

. The spacing

of the lattice is adjusted with respect to data scale.

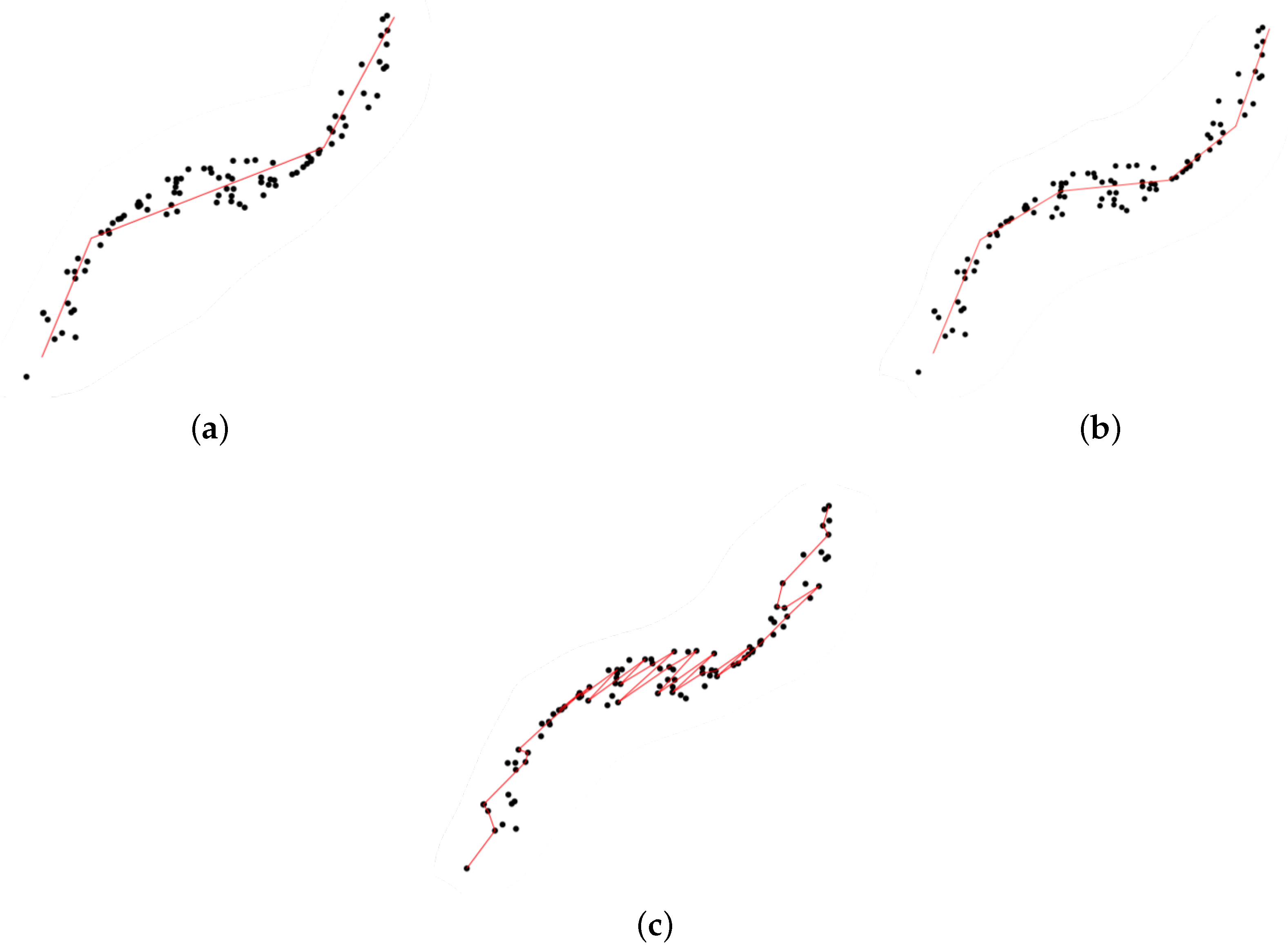

Synthetic data We generate a dataset

uniformly along the curve

,

.

Table 1 shows the regret (first row) for

the ground truth (sum of squared distances of all points to the true curve),

princurve and incremental SCMS (sum of squared distances between observation and fitted princurve on observations ),

slpc (regret being equal to in both cases).

The mean computation time with different values for the time horizons T are also reported.

Table 1 demonstrates the advantages of our method

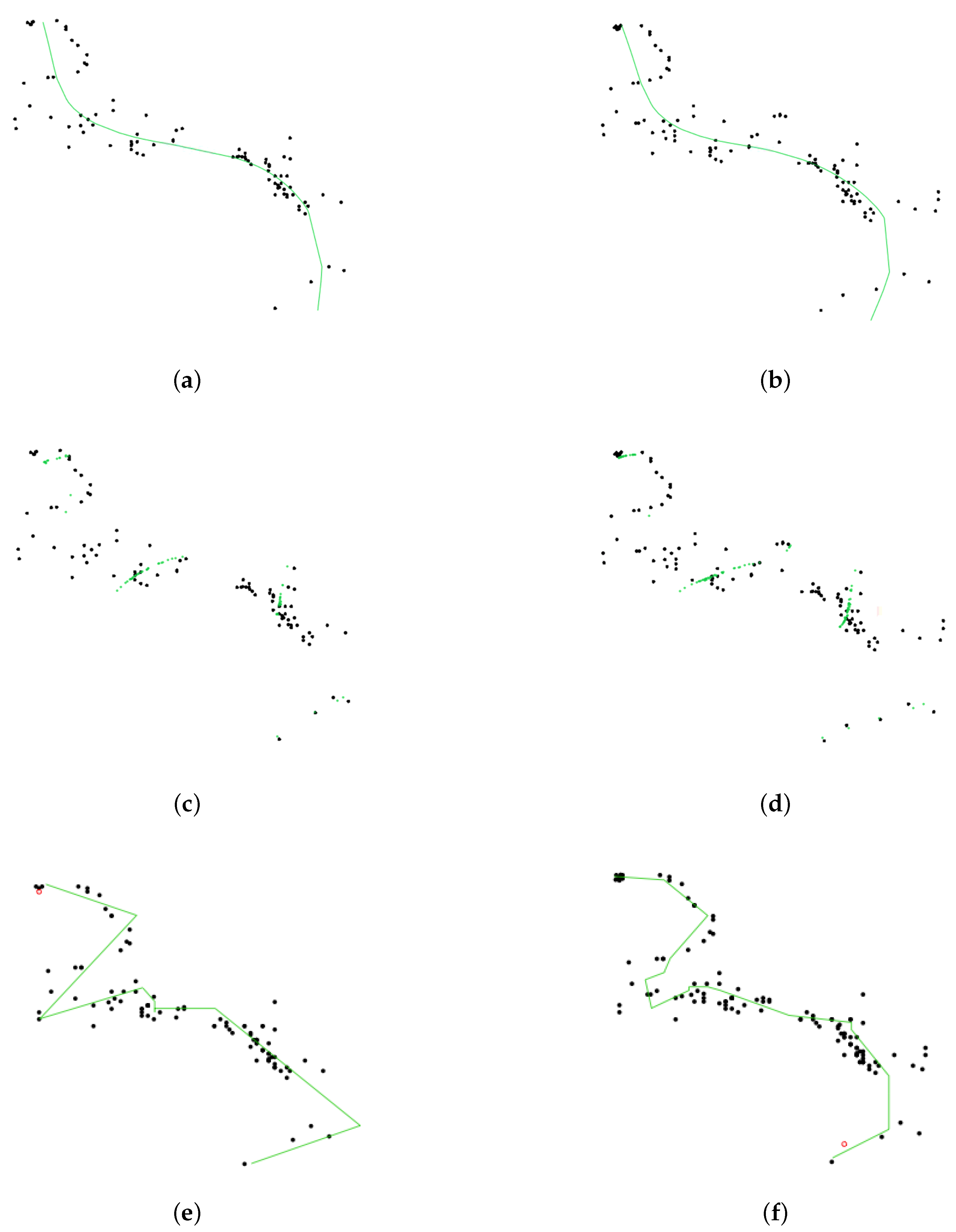

slpc, as it achieved the optimal tradeoff between performance (in terms of regret) and runtime. Although

princurve outperformed the other two algorithms in terms of computation time, it yielded the largest regret, since it outputs a curve which does not pass in “the middle of data” but rather bends towards the curvature of the data cloud, as shown in

Figure 6 where the predicted principal curves

for

princurve,

incremental SCMS and

slpc are presented.

incremental SCMS and

slpc both yielded satisfactory results, although the mean computation time of

splc was significantly smaller than that of

incremental SCMS (the reason being that eigenvectors of the Hessian of PDF need to be computed in

incremental SCMS).

Figure 7 showed, respectively, the estimation of the regret of

slpc and its per-round value (i.e., the cumulative loss divided by the number of rounds) both with respect to the round

t. The jumps in the per-round curve occurred at the beginning, due to the initialization from a first principal component and to the collection of new data. When data accumulates, the vanishing pattern of the per-round curve illustrates that the regret is sublinear in

t, which matches our aforementioned theoretical results.

In addition, to better illustrate the way

slpc works between two epochs,

Figure 8 focuses on the impact of collecting a new data point on the principal curve. We see that only a local vertex is impacted, whereas the rest of the principal curve remains unaltered. This cutdown in algorithmic complexity is one the key assets of

slpc.

Synthetic data in high dimension. We also apply our algorithm on a dataset

in higher dimension. It is generated uniformly along a parametric curve whose coordinates are

where

t takes 100 equidistant values in

. To the best of our knowledge, [

10,

16,

18] only tested their algorithm on 2-dimensional data. This example aims at illustrating that our algorithm also works on higher dimensional data.

Table 2 shows the regret for the ground truth,

princurve and

slpc.

In addition,

Figure 9 shows the behaviour of

slpc (green) on each dimension.

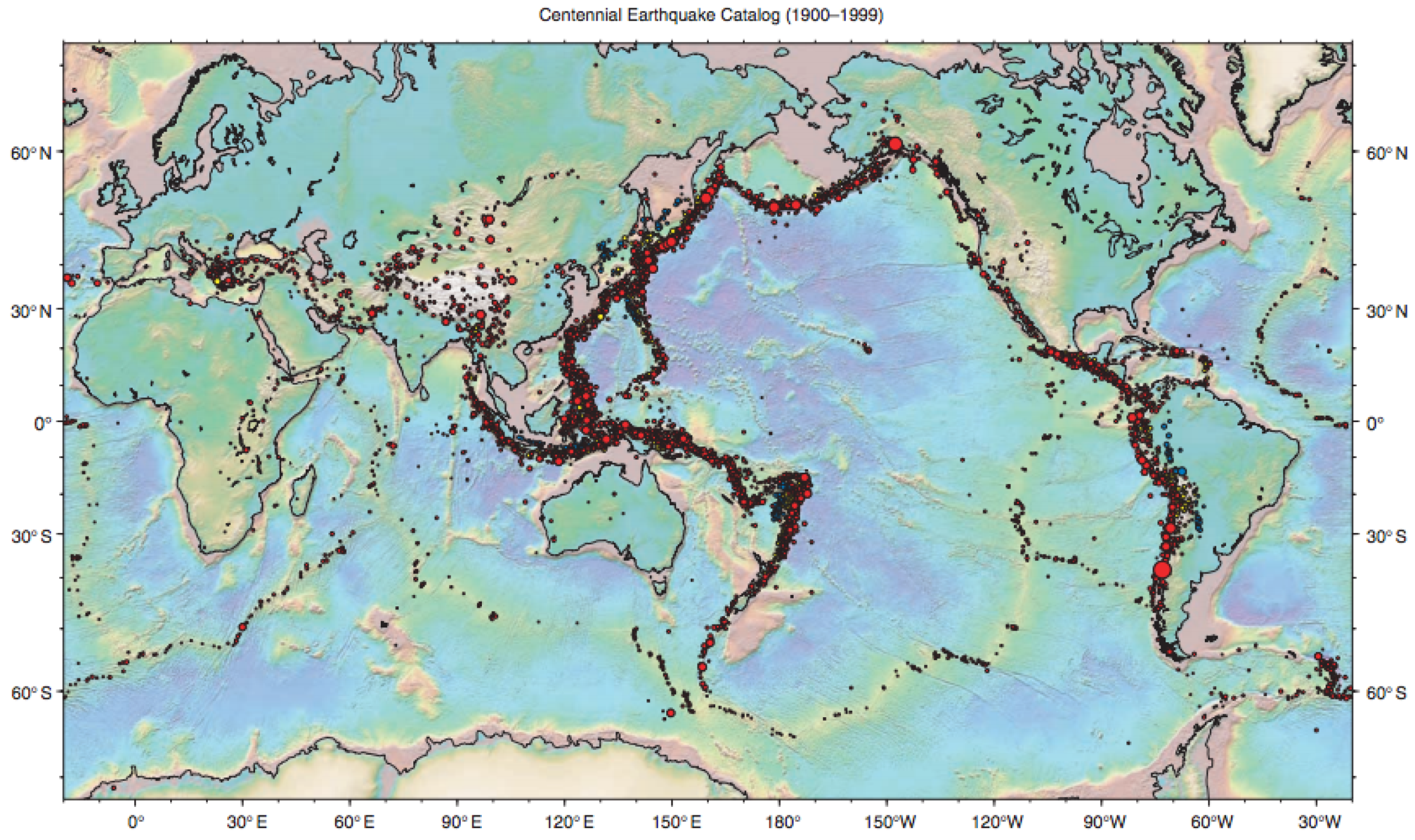

Seismic data. Seismic data spanning long periods of time are essential for a thorough understanding of earthquakes. The “Centennial Earthquake Catalog” [

42] aims at providing a realistic picture of the seismicity distribution on Earth. It consists in a global catalog of locations and magnitudes of instrumentally recorded earthquakes from 1900 to 2008. We focus on a particularly representative seismic active zone (a lithospheric border close to Australia) whose longitude is between E

to E

and latitude between S

to N

, with

seismic recordings. As shown in

Figure 10,

slpc recovers nicely the tectonic plate boundary, but both

princurve and

incremental SCMS with well-calibrated bandwidth fail to do so.

Lastly, since no ground truth is available, we used the coefficient to assess the performance (residuals are replaced by the squared distance between data points and their projections onto the principal curve). The average over 10 trials was 0.990.

Back to Seismic Data.Figure 11 was taken from the USGS website (

https://earthquake.usgs.gov/data/centennial/) and gives the global locations of earthquakes for the period 1900–1999. The seismic data (latitude, longitude, magnitude of earthquakes, etc.) used in the present paper may be downloaded from this website.

Daily Commute Data. The identification of segments of personal daily commuting trajectories can help taxi or bus companies to optimize their fleets and increase frequencies on segments with high commuting activity. Sequential principal curves appear to be an ideal tool to address this learning problem: we tested our algorithm on trajectory data from the University of Illinois at Chicago (

https://www.cs.uic.edu/~boxu/mp2p/gps_data.html). The data were obtained from the GPS reading systems carried by two of the laboratory members during their daily commute for 6 months in the Cook county and the Dupage county of Illinois.

Figure 12 presents the learning curves yielded by

princurve and

slpc on geolocalization data for the first person, on May 30. A particularly remarkable asset of

slpc is that abrupt curvature in the data sequence was perfectly captured, whereas

princurve does not enjoy the same flexibility. Again, we used the

coefficient to assess the performance (where residuals are replaced by the squared distances between data points and their projections onto the principal curve). The average over 10 trials was 0.998.

6. Proofs

This section contains the proof of Theorem 2 (note that Theorem 1 is a straightforward consequence, with

,

) and the proof of Theorem 3 (which involves intermediary lemmas). Let us first define for each

the following forecaster sequence

Note that

is an “illegal” forecaster since it peeks into the future. In addition, denote by

the polygonal line in

which minimizes the cumulative loss in the first

T rounds plus a penalty term.

is deterministic, and

is a random quantity (since it depends on

,

drawn from

). If several

attain the infimum, we chose

as the one having the smallest complexity. We now enunciate the first (out of three) intermediary technical result.

Lemma 1. For any sequence in , Proof. Proof by induction on

T. Clearly (

5) holds for

. Assume that (

5) holds for

:

Adding

to both sides of the above inequality concludes the proof. □

By (

5) and the definition of

, for

, we have

-almost surely that

where

by convention. The second and third inequality is due to respectively the definition of

and

. Hence

where the second inequality is due to

and

for

since

is decreasing in

t in Theorem 2. In addition, for

, one has

Hence, for any

where

. Therefore, we have

We thus obtain

Next, we control the regret of Algorithm 2.

Lemma 2. Assume that is sampled from the symmetric exponential distribution in , i.e., . Assume that , and define . Then for any sequence , , Proof. Let us denote by

the instantaneous loss suffered by the polygonal line

when

is obtained. We have

where the inequality is due to the fact that

holds uniformly for any

and

. Finally, summing on

t on both sides and using the elementary inequality

if

concludes the proof. □

Lemma 3. For , we control the cardinality of set aswhere denotes the volume of the unit ball in . Proof. First, let

denote the set of polygonal lines with

k segments and whose vertices are in

. Notice that

is different from

and that

Hence

where the second inequality is a consequence to the elementary inequality

combined with Lemma 2 in [

16]. □

We now have all the ingredients to prove Theorem 1 and Theorem 2.

First, combining (

6) and (

7) yields that

Assume that

,

and

for

, then

and moreover

where

and the second inequality is obtained with Lemma 1. By setting

we obtain

where

. This proves Theorem 1.

Finally, assume that

Since

for any

, we have

which concludes the proof of Theorem 2.

Lemma 4. Using Algorithm 3, if , , and for all , where is the cardinality of , then we have Proof. First notice that

if

, and that for

where

denotes the complement of set

. The first inequality above is due to the assumption that for all

, we have

. For

, the above inequality is trivial since

by its definition. Hence, for

, one has

Summing on both sides of inequality (

8) over

t terminates the proof of Lemma 4. □

Lemma 5. Let . If , then we have Proof. By the definition of

in Algorithm 3, for any

and

, we have

where in the second inequality we use that

for all

and

t, and that

when

. The rest of the proof is similar to those of Lemmas 1 and 2. In fact, if we define by

, then one can easily observe the following relation when

(similar relation in the case that

= 0)

Then applying Lemmas 1 and 2 on this newly defined sequence

leads to the result of Lemma 5. □

The proof of the upcoming Lemma 6 requires the following submartingale inequality: let

be a sequence of random variable adapted to random events

such that for

, the following three conditions hold:

Then for any

,

The proof can be found in Chung and Lu [

43] (Theorem 7.3).

Lemma 6. Assume that and , then we have Proof. First, we have almost surely that

Denote by

. Since

and

uniformly for any

and

t, we have uniformly that

, satisfying the first condition.

For the second condition, if

, then

Similarly, for

, one can have

. Moreover, for the third condition, since

then

Setting

leads to

Hence the following inequality holds with probability

Finally, noticing that

almost surely, we terminate the proof of Lemma 6. □

Proof of Theorem 3.

Assume that

,

and let

With those values, the assumptions of Lemmas 4, 5 and 6 are satisfied. Combining their results lead to the following

where the second inequality is due to the fact that the cardinality

is upper bounded by

for

. In addition, using the definition of

that

terminates the proof of Theorem 3. □