1. Introduction

With the increasing ability to collect and store data in large scales, we are facing the opportunities and challenges to analyze data with a large number of covariates per observation, the so-called high-dimensional problem. When this situation arises, variable selection is one of the most commonly used techniques, especially in radiological and genetic research, due to the nature of high-dimensional data extracted from imaging scans and gene sequencing. In the context of regression, when the number of covariates is greater than the sample size, the parameter estimation problem becomes ill posed, and variable selection is usually the first step for dimension reduction.

A good amount of work has recently been done for variable selection from both frequentist and Bayesian perspectives. On the frequentist side, extensive studies on variable selection have emerged ever since the appearance of least absolute shrinkage and selection operator (Lasso) [

1]. Other penalization approaches for sparse model selection including smoothly clipped absolute deviation (SCAD) [

2], minimum concave penalty (MCP) [

3] and many variations have also been introduced. Most of these methods are first considered in the context of linear regression and then extended to generalized linear models. Because all the methods share the basic desire of shrinkage toward sparse models, it has been understood that most of these frequentist methods can be interpreted from a Bayesian perspective and many analogous Bayesian methods have also been proposed. See for example [

4,

5,

6] that discuss the connection between penalized likelihood-based methods and Bayesian approaches. These Bayesian methods employed local priors, which still preserve positive values at null parameter values, to achieve desirable shrinkage.

In this paper, we are interested in nonlocal densities [

7] that are identically zero whenever a model parameter is equal to its null value. Compared to local priors, nonlocal prior distributions have relatively appealing properties for Bayesian model selection. In particular, nonlocal priors discard spurious covariates faster as the sample size grows, while preserving exponential learning rates to detect nontrivial coefficients [

7]. Johnson and Rossell [

8] and Shin et al. [

9] study the behavior of nonlocal densities for variable selection in a linear regression setting. When the number of covariates is much smaller than the sample size, [

10] establish the posterior convergence rate for nonlocal priors in a logistic regression model and suggest a Metropolis–Hastings algorithm for computation.

To the best of our knowledge, a rigorous investigation of high-dimensional posterior consistency properties for nonlocal priors has not been undertaken in the context of generalized linear regression. Although [

11] investigated the model selection consistency of nonlocal priors in generalized linear models, they assumed a fixed dimension

p. Motivated by this gap, our first goal was to examine the model selection property for nonlocal priors, particularly, the product moment (pMOM) prior [

8] in a high-dimensional generalized linear model. It is known that the computation problem can arise for Bayesian approaches due to the non-conjugate structure in generalized linear regression. Hence, our second goal was to develop efficient algorithms for exploring the massive posterior space. These were challenging goals of course, as the posterior distributions are not available in closed form for this type of nonlocal priors.

As the main contributions of this paper, we first establish model selection consistency for generalized linear models with pMOM prior on regression coefficients (Theorems 1–3) when the number of covariates grows at a sub-exponential rate of the sample size. Next,

n terms of computation, we first obtain the posteriors via Laplace approximation and then implement an efficient shotgun stochastic search (SSS) algorithm for exploring the sparsity pattern of the regression coefficients. In particular, the SSS-based methods have been shown to significantly reduce the computational time compared with standard Markov chain Monte Carlo (MCMC) algorithms in various settings [

9,

12,

13]. We demonstrate that our model can outperform existing state-of-the-art methods including both penalized likelihood and Bayesian approaches in different settings. Finally, the proposed method is applied to a functional Magnetic Resonance Imaging (fMRI) data set for identifying alternative brain activities and for predicting Parkinson’s disease.

The rest of paper is organized as follows.

Section 2 provides background material regarding generalized linear models and revisits the pMOM distribution. We detail strong selection consistency results in

Section 3, and proofs are provided in the

Appendix A. The posterior computation algorithm is described in

Section 4, and we show the performance of the proposed method and compare it with other competitors through simulation studies in

Section 5. In

Section 6, we conduct a real data analysis for predicting Parkinson’s disease and show our method yields better prediction performance compared with other contenders. To conclude our paper, a discussion is given in

Section 7.

2. Preliminaries

2.1. Model Specification for Logistic Regression

We first describe the framework for Bayesian variable selection in logistic regression followed by our hierarchical model specification. Let

be the binary response vector and

be the design matrix. Without loss of generality, we assume that the columns of

are standardized to have zero mean and unit variance. Let

denote the

ith row vector of

that contains the covariates for the

ith subject. Let

be the

vector of regression coefficients. We first consider the following standard logistic regression model:

We will work in a scenario where the dimension of predictors, p grows with the sample size n. Thus, we consider the number of predictors is function of n, i.e., , but we denote it as p for notational simplicity.

Our goal is variable selection, i.e., the correct identification of all non-zero regression coefficients. In light of that, we denote a model by if and only if all the nonzero elements of are and denote , where is the cardinality of . For any matrix A, let denote the submatrix of containing the columns of indexed by model . In particular, for , we denote as the subvector of containing the entries of corresponding to model .

The class of pMOM densities [

8] can be used for model selection through the following hierarchical model

Here

is a

nonsingular matrix,

r is a positive integer referred to as the order of the density and

is the normalizing constant independent of the positive constant

. Please note that prior (

2) is obtained as the product of the density of multivariate normal distribution and even powers of parameters,

. This results in

at

, which is desirable because (

2) is a prior for the nonzero elements of

. Some standard regularity assumptions on the hyperparameters will be provided later in

Section 3. In (

3),

is a positive integer restricting the size of the largest model, and a uniform prior is placed on the model space restricting our analysis to models having size less than or equal to

. Similar structure has also been considered in [

5,

9,

14]. An alternative is to use a complexity prior [

15] that takes the form of

for some positive constants

. The essence is to force the estimated model to be sparse by penalizing dense models. As noted in [

9], the model selection consistency result based on the nonlocal priors derives strength directly from the marginal likelihood and does not require strong penalty over model size. This is indeed reflected in the simulation studies in [

14], where the authors compare the model selection performance under uniform prior and complexity prior. The result under uniform prior is much better than that under complexity prior, as the complexity prior always tends to prefer the sparse models.

By the hierarchical model (

1) to (

3) and Bayes’ rule, the resulting posterior probability for model

is denoted by,

where

is the marginal density of

, and

is the marginal density of

under model

given by

where

is the log likelihood function. In particular, these posterior probabilities can be used to select a model by computing the posterior mode defined by

Of course, the closed form of these posterior probabilities cannot be obtained due to not only the nature of logistic regression but also the structure of nonlocal prior. Therefore, special efforts need to be devoted to both consistency analysis and computational strategy as we shall see in the following sections.

2.2. Extension to Generalized Linear Model

We can easily extend our previous discussion on logistic regression to a generalized linear model (GLM) [

16]. Given predictors

and an outcome

for

, a probability density function (or probability mass function) of a generalized linear model has the following form of the exponential family

in which

is a continuously differentiable function with respect to

with nonzero derivative,

is also a continuously differentiable function of

,

is some constant function of

y, and

is also known as the natural parameter that relates the response to the predictors through the linear function

. The mean function is

, where

is the inverse of some chosen link function.

The class of pMOM densities specified in (

2) can still be used for model selection in this generalized setting by noting that the log likelihood function in (

5) and (

6) now takes the general form of

After obtaining the posterior probabilities in (

4) with the log likelihood substituted as (

8), we can select a model by computing the posterior mode. In

Section 4, we will adopt some search algorithm that use these posterior probabilities to target the mode in a more efficient way.

3. Main Results

In this section, we show that the proposed Bayesian model enjoys desirable theoretical properties. Let

be the true model, which means that the nonzero locations of the true coefficient vector are

. We consider

to be a fixed vector. Let

be the true coefficient vector and

be the vector of the true nonzero coefficients. In the following analysis, we will focus on logistic regression, but our argument can be easily extended to any other GLM as well. In particular,

as the negative Hessian of

, where

,

and

In the rest of the paper, we denote

and

for simplicity.

Before we establish our main results, the following notations are needed for stating our assumptions. For any , and mean the maximum and minimum of a and b, respectively. For any sequences and , we denote , or equivalently , if there exists a constant such that for all large n. We denote , or equivalently , if as . Without loss of generality, if and there exist constants such that , we denote . For a given vector , the vector -norm is denoted as . For any real symmetric matrix , and are the maximum and minimum eigenvalue of , respectively. To attain desirable asymptotic properties of our posterior, we assume the following conditions:

Condition (A1) and for some .

Condition (A1) ensures our proposed method can accommodate high dimensions where the number of predictors grows at a sub-exponential rate of

n. Condition (A1) also specifies the parameter

in the uniform prior (

3) that restricts our analysis on a set of

reasonably large models. Similar assumptions restricting the model size have been commonly assumed in the sparse estimation literature [

4,

5,

9,

17].

Condition (A2) For some constant

and

,

and

for any integer

. Furthermore,

.

Condition (A2) gives lower and upper bounds of

and

, respectively, where

is a large model satisfying

. The lower bound condition can be regarded as a restricted eigenvalue condition for

-sparse vectors. Restricted eigenvalue conditions are routinely assumed in high-dimensional theory to guarantee some level of curvature of the objective function and are satisfied with high probability for sub-Gaussian design matrices [

5]. Similar conditions have also been used in the linear regression literature [

18,

19,

20]. The last assumption in Condition (A2) says that the magnitude of true signals is bounded above

up to some constant, which allows the magnitude of signals to increase to infinity.

Condition (A3) For some constant

,

Condition (A3) gives a lower bound for nonzero signals, which is called the

beta-min condition. In general, this type of condition is necessary for catching every nonzero signal. Please note that due to Conditions (A1) and (A2), the right-hand side of (

9) decreases to zero as

. Thus, it allows the smallest nonzero coefficients to tend to zero as we observe more data.

Condition (A4) For some small constant

, the hyperparameters

and

r satisfy

Condition (A4) suggests appropriate conditions for the hyperparameter

in (

2). A similar assumption has also been considered in [

9]. The scale parameter

in the nonlocal prior density reflects the dispersion of the nonlocal prior density around zero, and implicitly determines the size of the regression coefficients that will be shrunk to zero [

8,

9]. For the below theoretical results, we assume that

for simplicity, but our results are still valid for other choices of

as long as

and

.

Theorem 1 (No super set).

Under conditions (A1)

, (A2)

and (A4)

, Theorem 1 says that, asymptotically, our posterior will not overfit the model, i.e., not include unnecessarily many variables. Of course, it does not guarantee that the posterior will concentrate on the true model. To capture every significant variable, we require the magnitudes of nonzero entries in not to be too small. Theorem 2 shows that with an appropriate lower bound specified in Condition (A3), the true model will be the mode of the posterior.

Theorem 2 (Posterior ratio consistency).

Under conditions (A1)

–(A4)

with for some small constant , Posterior ratio consistency is a useful property especially when we are interested in the point estimation with the posterior mode, but does not provide how large probability the posterior puts on the true model. In the following theorem, we state that our posterior achieves strong selection consistency. By strong selection consistency, we mean that the posterior probability assigned to the true model t converges to 1. Since strong selection consistency implies posterior ratio consistency, it requires a slightly stronger condition on the lower bound for the magnitudes of nonzero entries in , i.e., a larger value of , compared to that in Theorem 2.

Theorem 3 (Strong selection consistency).

Under conditions (A1)

–(A4)

with for some small constant , the following holds: 4. Computational Strategy

In this section, we describe how to approximate the marginal density of the data and to conduct the model selection procedure. The integral formulation in (

4) leads to the posterior probabilities not available in closed form. Hence, we use Laplace approximation to compute

and

. A similar approach to compute posterior probabilities has been used in [

8,

9,

10].

Please note that for any model

, when

, the normalization constant

in (

2) is given by

. Let

For any model

, the Laplace approximation of

is given by

where

obtained via the optimization function optim in R using a quasi-Newton method and

is a

symmetric matrix which can be calculated as:

The above Laplace approximation can be used to compute the log of the posterior probability ratio between any given model and true model , and select a model with the highest probability.

We then adopt the shotgun stochastic search (SSS) algorithm [

9,

12] to efficiently navigate through the massive model space and identify the global maxima. Using the Laplace approximations of the marginal probabilities in (

11), the SSS method aims at exploring “interesting” regions of the resulting high-dimensional model spaces and quickly identifies regions of high posterior probability over models. Let

containing all the neighbors of model

, in which

,

and

. The SSS procedure is described in Algorithm 1.

| Algorithm 1 Shotgun Stochastic Search (SSS) |

Choose an initial model

for to do

Compute for all

Sample , and , from , and with probabilities proportional to

Sample the next model from with probability proportional to

end for |

5. Simulation Studies

In this section, we demonstrate the performance of the proposed method in various settings. Let be the design matrix whose first columns correspond to the active covariates for which we have nonzero coefficients, while the rest correspond to the inactive ones with zero coefficients. In all the simulation settings, we generate for under the following two different cases of :

Case 1: Isotropic design, where , i.e., no correlation imposed between different covariates.

Case 2: Autoregressive correlated design, where for all . The correlations among different covariates are set to different values.

Following the simulation settings in [

9,

10], we consider the following two designs, each with the same sample size

and number of predictors being either

or 150:

We investigate the following two settings for the true coefficient vector to include different combinations of small and large signals.

Setting 1: All the entries of are set to 3.

Setting 2: All the entries of are generated from .

Finally, for given

and

, we sample

from the following logistic model as in (

1)

We will refer to our proposed method as “nonlocal” and its performance will then be compared with other existing methods including Spike and Slab prior-based model selection [

21], empirical Bayesian LASSO (EBLasso) [

22], Lasso [

23] and SCAD [

24]. The tuning parameters in the regularization approaches are chosen by 5-fold cross-validation. Spike and slab prior method is implemented via the BoomSpikeSlab package in R. For the nonlocal prior, the hyperparameters are set at

,

and we tune

for four different values of

. We choose the optimal

by the mean squared prediction error through 5-fold cross-validation. Please note that this implies that

is data-dependent and the resulting procedure is similar to an empirical-Bayesian approach in the high-dimensional Bayesian literature given the prior knowledge about the sparse true model [

13]. For the SSS procedure, the initial model was set by randomly taking three coefficients to be active and the remaining to be inactive. The detailed steps for our method are coded in R and publicly available at

https://github.com/xuan-cao/Nonlocal-Logistic-Selection. In particular, the stochastic search is implemented via the SSS function in the R package BayesS5.

To evaluate the performance of variable selection, the precision, sensitivity, specificity, Matthews correlation coefficient (MCC) [

25] and mean-squared prediction error (MSPE) are reported at

Table 1,

Table 2,

Table 3 and

Table 4, where each simulation setting is repeated for 20 times. The criteria are defined as

where

TP,

TN,

FP and

FN are true positive, true negative, false positive and false negative, respectively. Here we denote

, where

is the estimated coefficient based on each method. For Bayesian methods, the usual GLM estimates based on the selected support are used as

. We generated test samples

with

to calculate the MSPE.

Based on the above simulation results, we notice that under the first isotropic covariance case, the nonlocal-based approach overall works better than other methods especially in the strong signal setting (i.e., Setting 1), where our method is able to consistently achieve perfect estimation accuracy. This is because as signal strength gets stronger, the consistency conditions of our method are easier to satisfy which leads to better performance. When the covariance is autoregressive, our method suffers from lower sensitivity compared with the frequentist approaches in high-dimensional design (

Table 4), but still has higher precision, specificity and MCC. The poor precision of the regularization methods has also been discussed in previous literature in the sense that selection of the regularization parameter using cross-validation is optimal with respect to prediction but tends to include too many noise predictors [

26]. Again we observe under the autoregressive design, the performance of our method improves as the true signals strengthen. To sum up, the above simulation studies indicate that the proposed method can perform well under a variety of configurations with different data generation mechanisms.

7. Conclusions

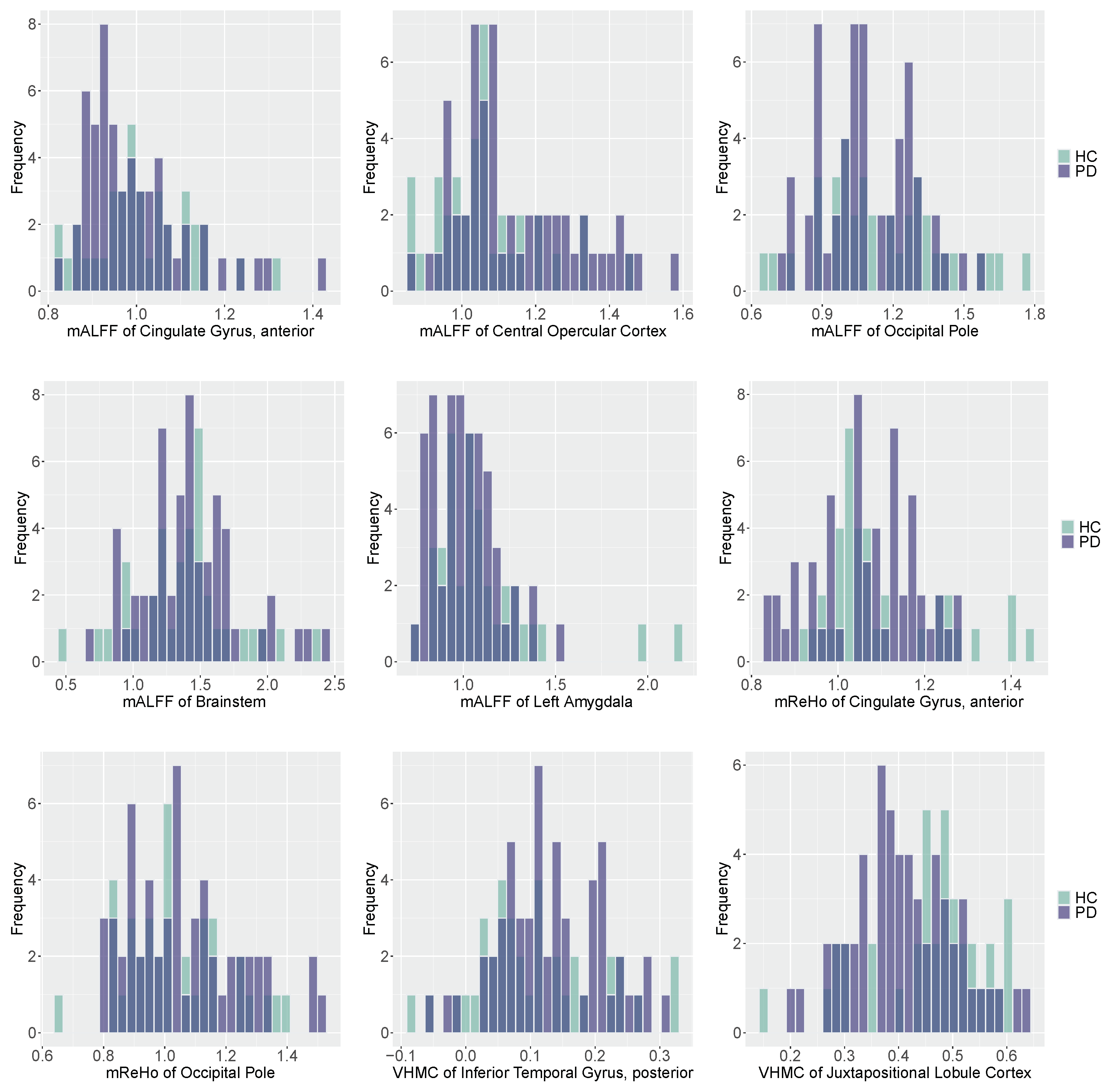

In this paper, we propose a Bayesian hierarchical model with a pMOM prior specification over regression coefficients to perform variable selection in high-dimensional generalized linear models. The model selection consistency of our method is established under mild conditions and the shotgun stochastic search algorithm can be used for the implementation of our proposed approach. Our simulation and real data studies indicate that the proposed method has better performance for variable selection compared to a variety of state-of-the-art competing methods. In the fMRI data analysis, our method is able to identify abnormal functional brain activities for PD that occur in the regions of interest including cingulate gyrus, central opercular cortex, occipital pole, brainstem, left amygdala, occipital pole, inferior temporal gyrus, and juxtapositional lobule cortex. These findings suggest disease-related alterations of functional activities that provide physicians sufficient information to get involved with early diagnosis and treatment. Our findings are also coherent with the alternative functional features in cortical regions, brainstem, and limbic regions discovered in previous studies [

32,

33,

34,

35].

Our fMRI study certainly has limitations. First, we would like to note that fMRI data are typically treated as spatio-temporal objects and a generalized linear model with spatially varying coefficients can be implemented for brain decoding [

36]. However, in our application, for each subject, a total of 135 fMRI scans were obtained, each with the dimension of

. If we take each voxel as a covariate to perform the whole-brain functional analysis, it would be computationally challenging and impractical given the extremely high dimension. Hence, we adopt the radiomics approach to extract three different types of features that can summarize the functional activity of the brain, and take these radiomic features as covariates in our generalized linear model. For future studies, we will focus on several regions of interest rather than the entire brain and take the spatio-temporal dependency among voxels into consideration.

Second, although ReHo, ALFF, and VHMC are different types of radiomic features that quantify the functional activity of the brain, it is definitely possible that in some regions, three measures are highly correlated with each other. Our current theoretical and computational strategy can accommodate a reasonable amount of correlations among covariates, but might not work in the presence of high correlation structure. For future studies, we will first carefully examine the potential correlations among features and might only retain one feature for each region if significant correlations are detected.

One possible extension of our methodology is to address the potential misspecification of the hyperparameter

. The scale parameter

is of particular importance in the sense that it can reflect the dispersion of the nonlocal density around zero, and implicitly determine the size of the regression coefficients that will be shrunk to zero [

8]. Cao et al. [

14] investigated the model selection consistency for the hyper-pMOM priors in linear regression setting, where an additional inverse-gamma prior is placed over

. Wu et al. [

11] proved the model selection consistency using hyper-pMOM prior in generalized linear models, but assumed a fixed dimension

p. For future study, we will consider this fully Bayesian approach to carefully examine the theoretical and empirical properties for such hyper-pMOM prior in the context of high-dimensional generalized linear regression. We can also extend our method to develop a Bayesian approach for growth models in the context of non-linear regression [

37], where the log-transformation is typically used to recover the additive structure. However, then the model does not fall into the category of GLMs, which is beyond the current setting in this paper. Therefore, we leave it as a future work.