A Denoising Method for Fiber Optic Gyroscope Based on Variational Mode Decomposition and Beetle Swarm Antenna Search Algorithm

Abstract

1. Introduction

2. Theoretical Background

2.1. Beetle Swarm Antenna Search Algorithm

| Algorithm 1 BSAS algorithm |

| Input: Define the fitness function . Set the optimization variable x to be optimized and determine its dimension n. |

| Output: and . |

| 1: Initialize: |

| Initialize the position of beetle group and probability constant ; |

| Initialize the search step size and decay coefficient ; |

| Initialize probability constant and set parameter ; |

| Initialize the sensing distance and decay coefficient ; |

| Initialize the maximum number of iterations ; |

| Set initial optimization results for and . |

| 2: if then |

| 3: Generate random directions for each beetle by Equation (1). |

| 4: Calculate the antennae position and of each beetle by Equation (2). |

| 5: Calculate the position of each beetle by Equation (4), and calculate the fitness function value . |

| 6: Compare all and in this iteration. |

| 7: if ∃ then |

| 8: if then |

| 9: = |

| 10: = |

| 11: else |

| 12: = |

| 13: = |

| 14: end if |

| 15: else |

| 16: if then |

| 17: Search step is updated by Equation (5). |

| 18: Sensing distance is updated by Equation (3). |

| 19: else |

| 20: |

| 21: end if |

| 22: end if |

| 23: |

| 24: end if |
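
The search loop in Algorithm 1 can be illustrated with a minimal Python sketch. It is a simplified variant, not the authors' implementation: the probability-constant branches are omitted, the update rules are the commonly used beetle antennae search forms rather than Equations (1) to (5) of the paper, and all names and default values (`bsas_minimize`, `n_beetles`, `step_decay`, `sense_decay`, the `0.01` offset) are assumptions made for illustration.

```python
import math
import random


def bsas_minimize(f, x0, n_beetles=5, step=1.0, step_decay=0.95,
                  sense=2.0, sense_decay=0.95, max_iter=200, seed=0):
    """Simplified beetle swarm antennae search that minimises f (illustrative)."""
    rng = random.Random(seed)
    x_best = list(x0)
    f_best = f(x_best)
    n = len(x_best)
    for _ in range(max_iter):
        improved = False
        cand_x, cand_f = x_best, f_best
        for _ in range(n_beetles):
            # Random unit direction for this beetle.
            b = [rng.gauss(0.0, 1.0) for _ in range(n)]
            norm = math.sqrt(sum(v * v for v in b)) or 1.0
            b = [v / norm for v in b]
            # Right and left antenna positions around the current best point.
            x_r = [x + sense * v for x, v in zip(x_best, b)]
            x_l = [x - sense * v for x, v in zip(x_best, b)]
            # Move away from the antenna that senses the larger (worse) fitness.
            s = f(x_r) - f(x_l)
            sign = (s > 0) - (s < 0)
            x_new = [x - step * v * sign for x, v in zip(x_best, b)]
            f_new = f(x_new)
            if f_new < cand_f:
                cand_x, cand_f, improved = x_new, f_new, True
        if improved:
            # At least one beetle beat the current best: accept the best of them.
            x_best, f_best = cand_x, cand_f
        else:
            # No beetle improved: shrink the step and the sensing distance.
            step *= step_decay
            sense = sense * sense_decay + 0.01
    return x_best, f_best


def sphere(x):
    """Toy fitness function used only to exercise the search."""
    return sum(v * v for v in x)


x_opt, f_opt = bsas_minimize(sphere, [3.0, -2.0])
```

Because a candidate is accepted only when it improves on the current best, the returned fitness never exceeds the fitness of the starting point.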

2.2. Variational Mode Decomposition Algorithm

| Algorithm 2 VMD algorithm |

3. Methodology

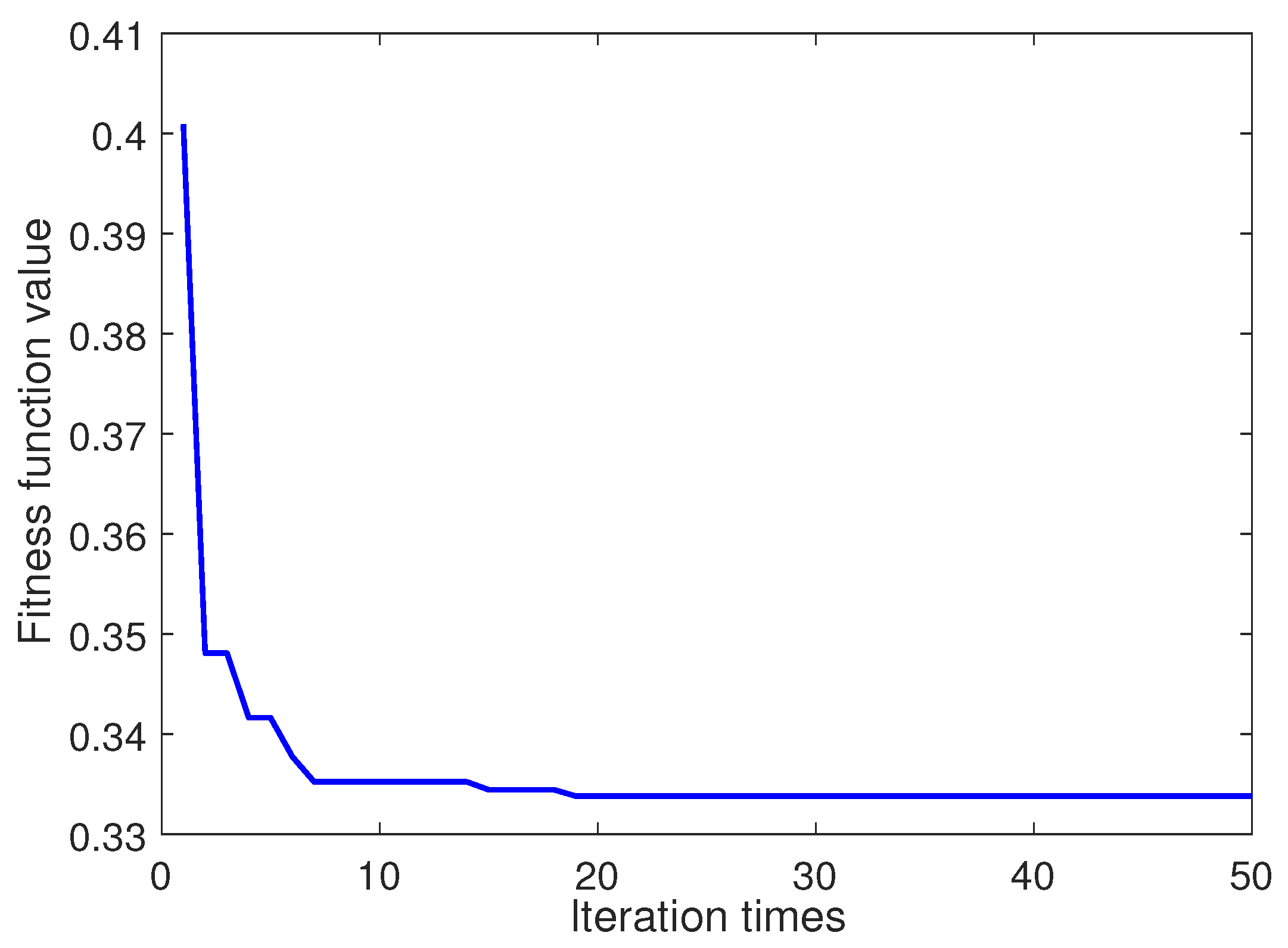

3.1. VMD Parameter Optimization

3.2. Fitness Function Based on Permutation Entropy
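
As a point of reference for this section, the following is a minimal sketch of permutation entropy in the sense of Bandt and Pompe. It shows only how the entropy of a single series is obtained; how the entropies of the decomposed modes are combined into the fitness value is not reproduced here. The function name and the defaults for the embedding dimension `m` and the delay `tau` are assumptions.

```python
import math
from collections import Counter


def permutation_entropy(x, m=3, tau=1, normalize=True):
    """Permutation entropy of the sequence x (illustrative sketch)."""
    n_patterns = len(x) - (m - 1) * tau
    if n_patterns <= 0:
        raise ValueError("series too short for the chosen m and tau")
    counts = Counter()
    for i in range(n_patterns):
        window = [x[i + j * tau] for j in range(m)]
        # Ordinal pattern: the index order that sorts the window
        # (sorted() is stable, so ties are broken by position).
        pattern = tuple(sorted(range(m), key=window.__getitem__))
        counts[pattern] += 1
    probs = [c / n_patterns for c in counts.values()]
    h = -sum(p * math.log(p) for p in probs)
    # Dividing by log(m!) maps the entropy into [0, 1].
    return h / math.log(math.factorial(m)) if normalize else h
```

A monotonic series contains a single ordinal pattern and therefore has zero permutation entropy, while a noisy series approaches the normalised maximum of 1.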

3.3. Selecting Relevant Modes

3.4. Proposed Methodology

- Step 1:

- The parameters of the BSAS algorithm are initialized. At the same time, the parameters in permutation entropy are initialized too.

- Step 2:

- Firstly, the VMD algorithm is used to decompose the original signal. After that, the fitness function value based on permutation entropy is calculated, and the parameters K and are optimized by using the BSAS algorithm.

- Step 3:

- Determine whether the termination condition is met. If it is, optimal parameter combination is saved, otherwise step 2 is repeated.

- Step 4:

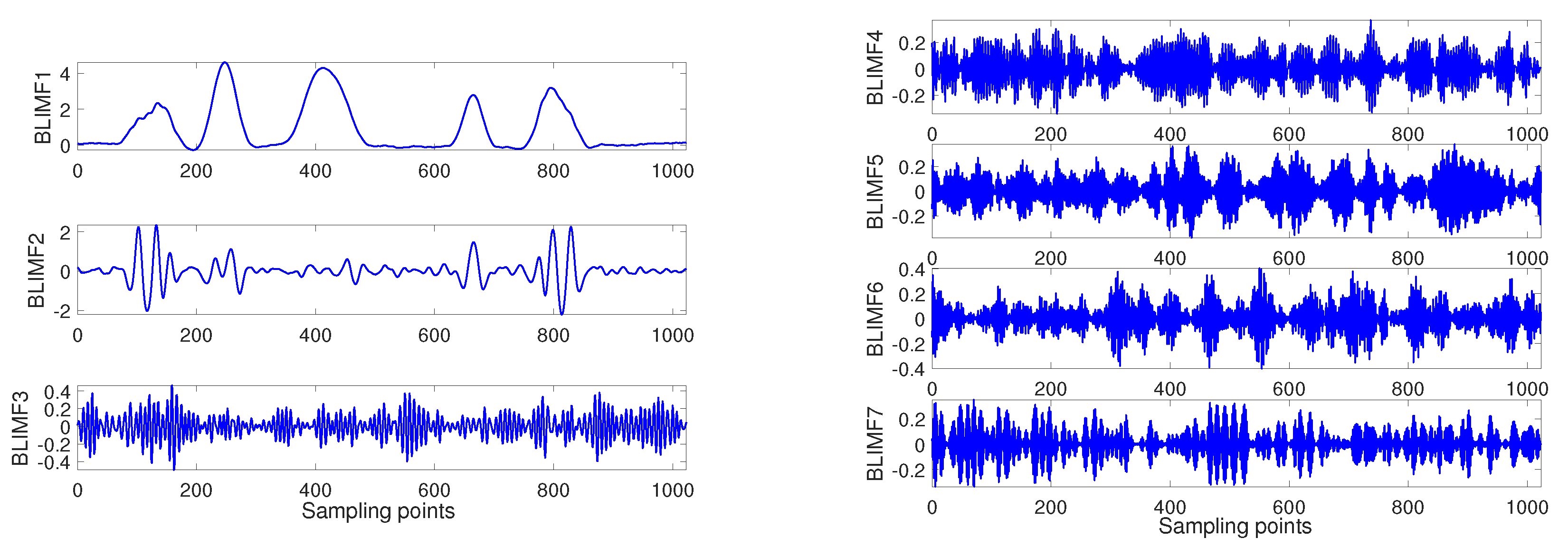

- The original signal is decomposed by using the VMD algorithm based on optimal parameter combination.

- Step 5:

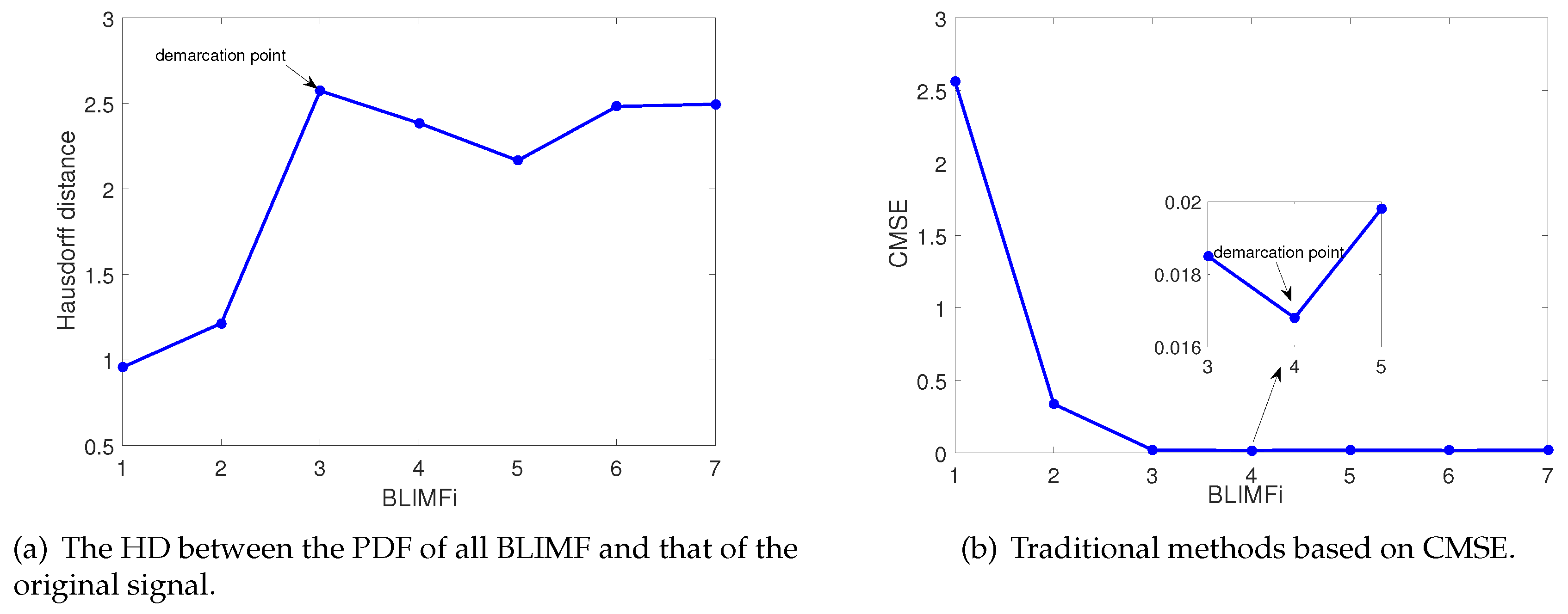

- The HD between the PDF of all BLIMF and that of the original signal is calculated to determine the demarcation point.

- Step 6:

- According to the demarcation point, the selected BLIMF components are retained as the relevant modes, and the unselected BLIMF components are removed.

- Step 7:

- The relevant modes are reconstructed, and finally the denoised signal is obtained.
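
Steps 5 to 7 can be sketched as follows, under loudly stated assumptions: the PDFs are estimated with plain histograms, the HD is taken between the two sets of PDF values, the demarcation point is placed at the largest jump between consecutive HD values, and the modes before that point are the ones retained. None of these choices is confirmed by the text above, and all function names are hypothetical.

```python
def histogram_pdf(x, bins, lo, hi):
    """Normalised histogram of x on [lo, hi], used as a crude PDF estimate."""
    width = (hi - lo) / bins or 1.0
    counts = [0] * bins
    for v in x:
        k = min(int((v - lo) / width), bins - 1)
        counts[max(k, 0)] += 1
    return [c / (len(x) * width) for c in counts]


def hausdorff(a, b):
    """Hausdorff distance between two finite sets of real numbers."""
    d_ab = max(min(abs(p - q) for q in b) for p in a)
    d_ba = max(min(abs(p - q) for p in a) for q in b)
    return max(d_ab, d_ba)


def select_and_reconstruct(signal, modes, bins=50):
    """Return (denoised signal, demarcation index, HD per mode).

    Needs at least two modes. Assumed rule: the demarcation point is the
    largest jump between consecutive HD values, and modes before it are kept.
    """
    lo = min(min(signal), *(min(m) for m in modes))
    hi = max(max(signal), *(max(m) for m in modes))
    pdf_signal = histogram_pdf(signal, bins, lo, hi)
    hd = [hausdorff(histogram_pdf(m, bins, lo, hi), pdf_signal) for m in modes]
    jumps = [abs(hd[i + 1] - hd[i]) for i in range(len(hd) - 1)]
    k = jumps.index(max(jumps)) + 1
    kept = modes[:k]
    # Reconstruction: sample-wise sum of the retained modes.
    denoised = [sum(vals) for vals in zip(*kept)]
    return denoised, k, hd
```

The `modes` argument stands for the BLIMF components returned by whatever VMD implementation is used, each as a list with the same length as `signal`.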

4. Simulation and Analysis

4.1. Simulation Environment

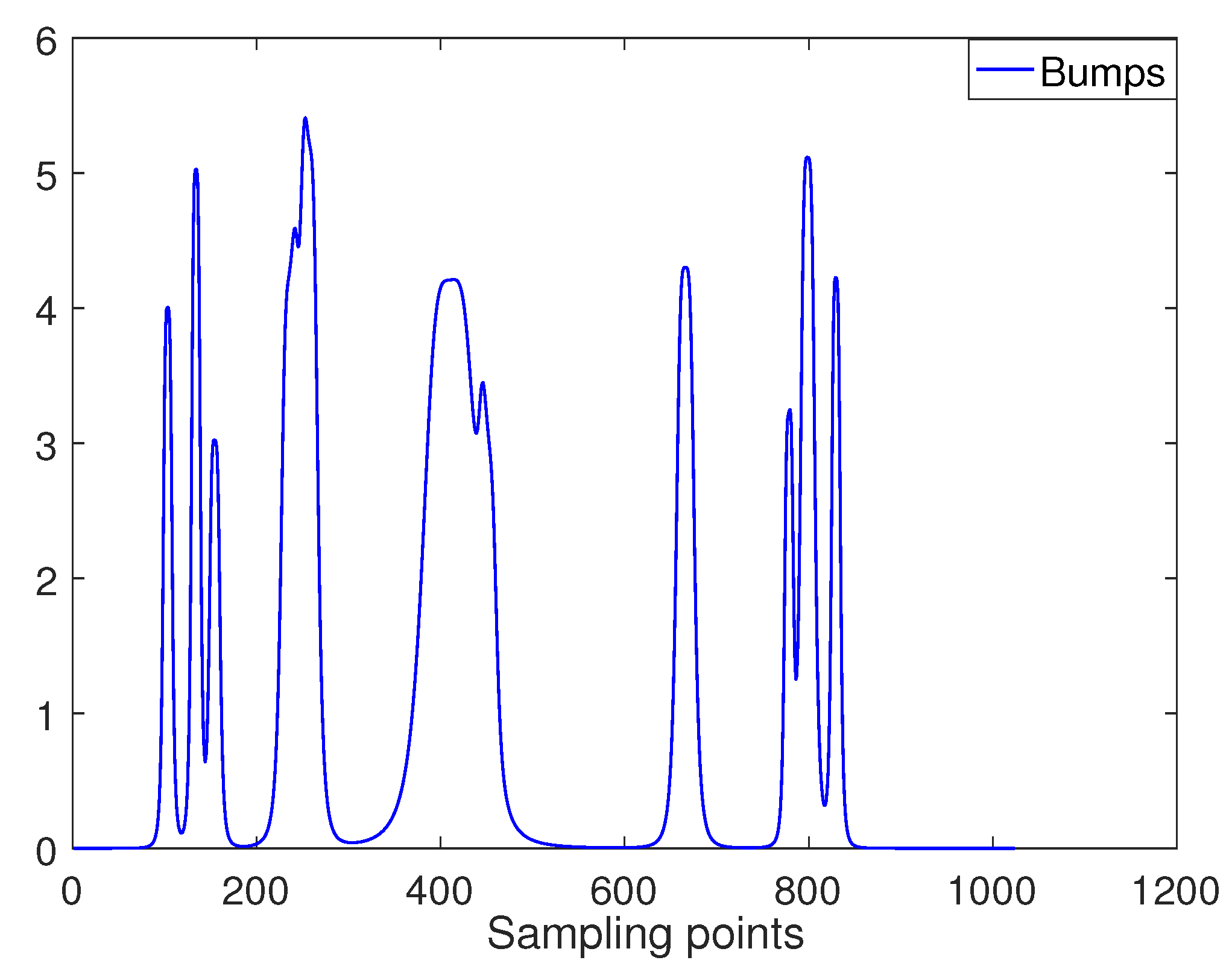

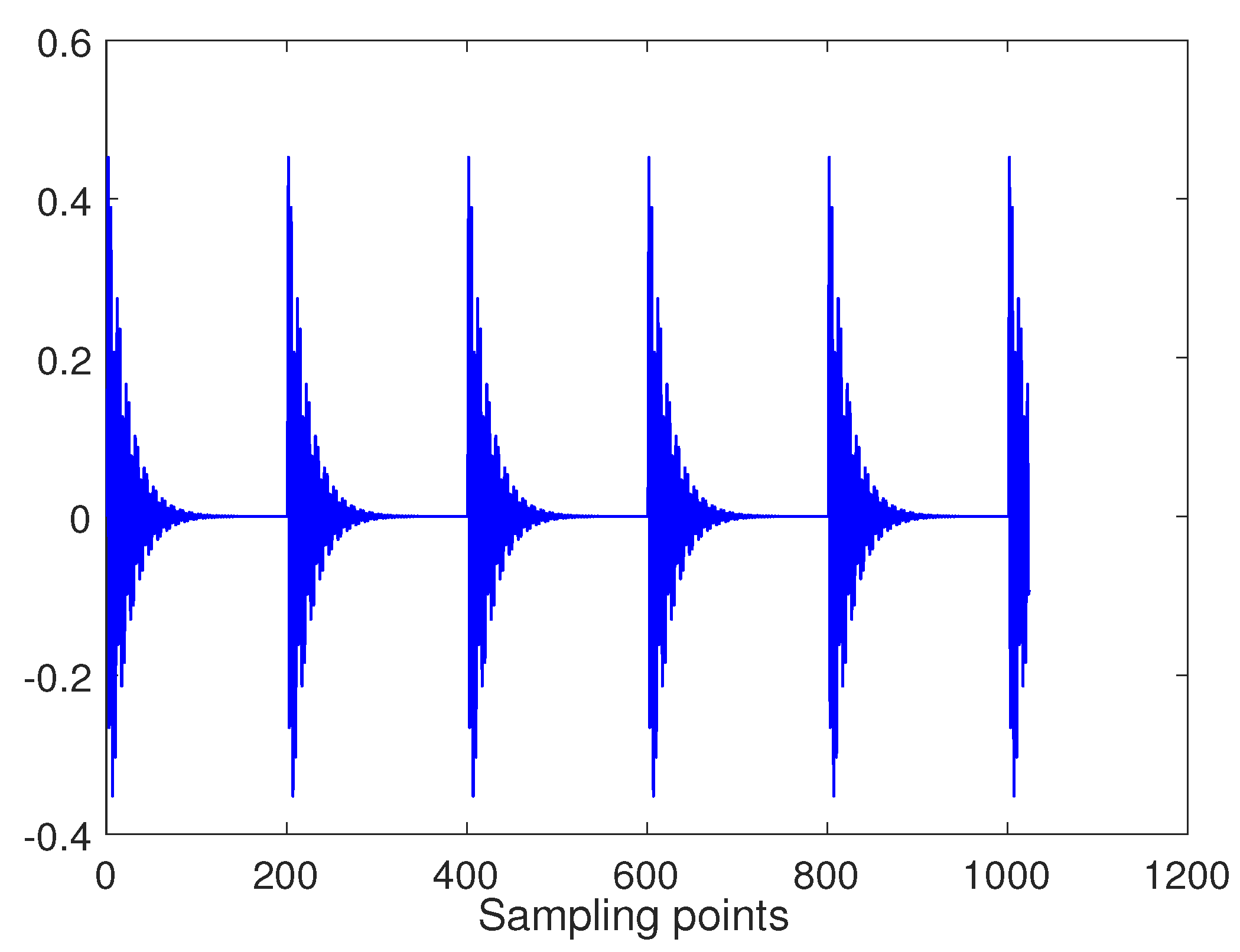

4.2. Simulation Results and Analysis

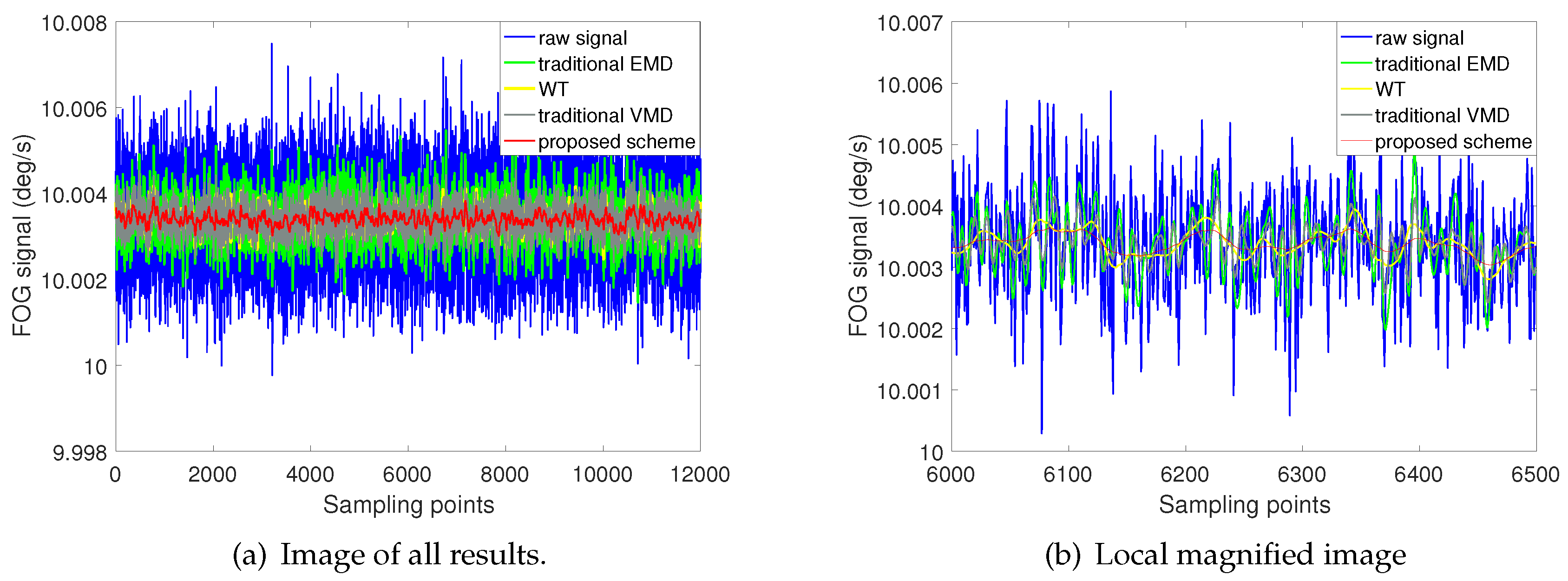

5. Experimental Analysis

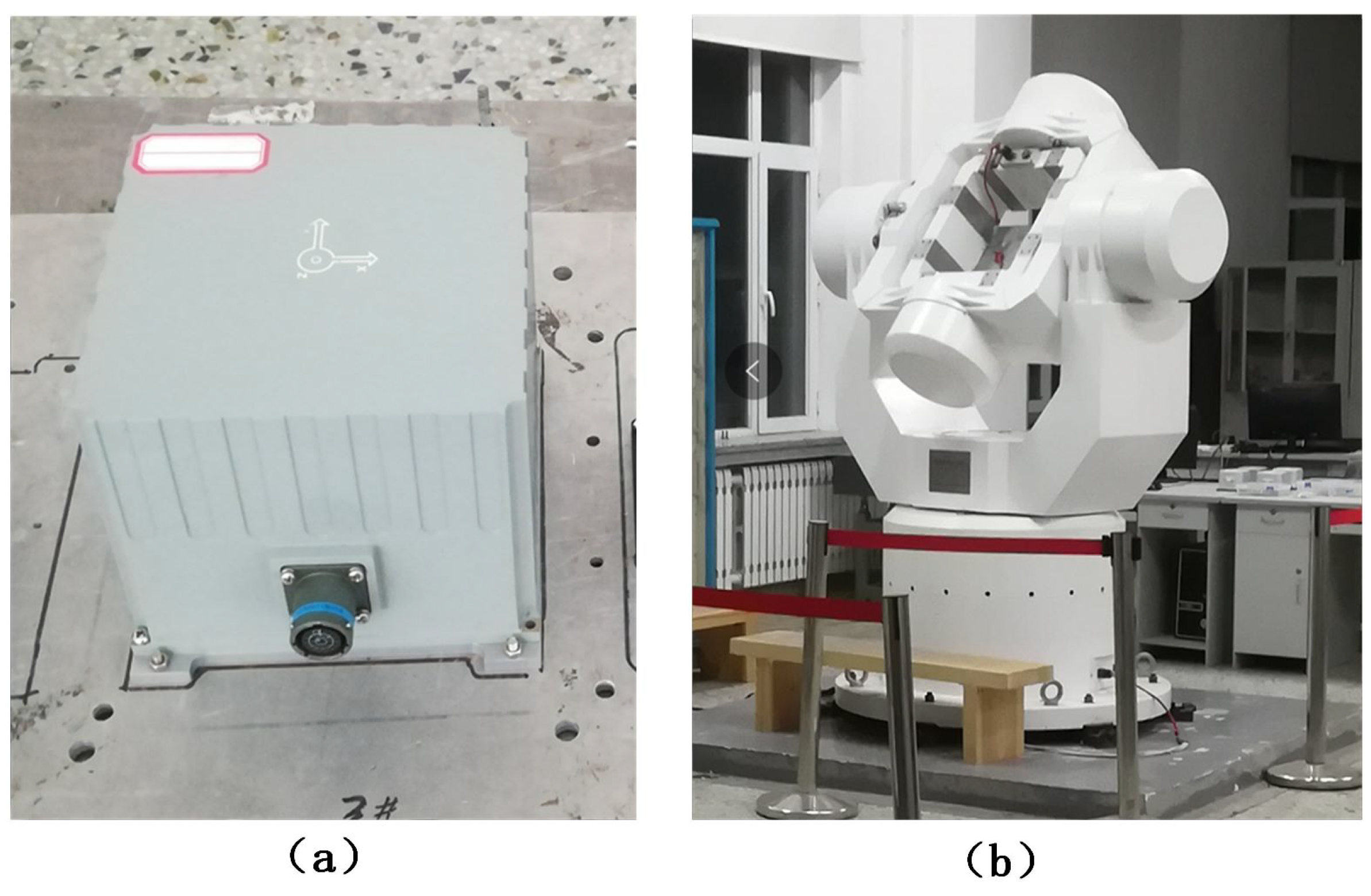

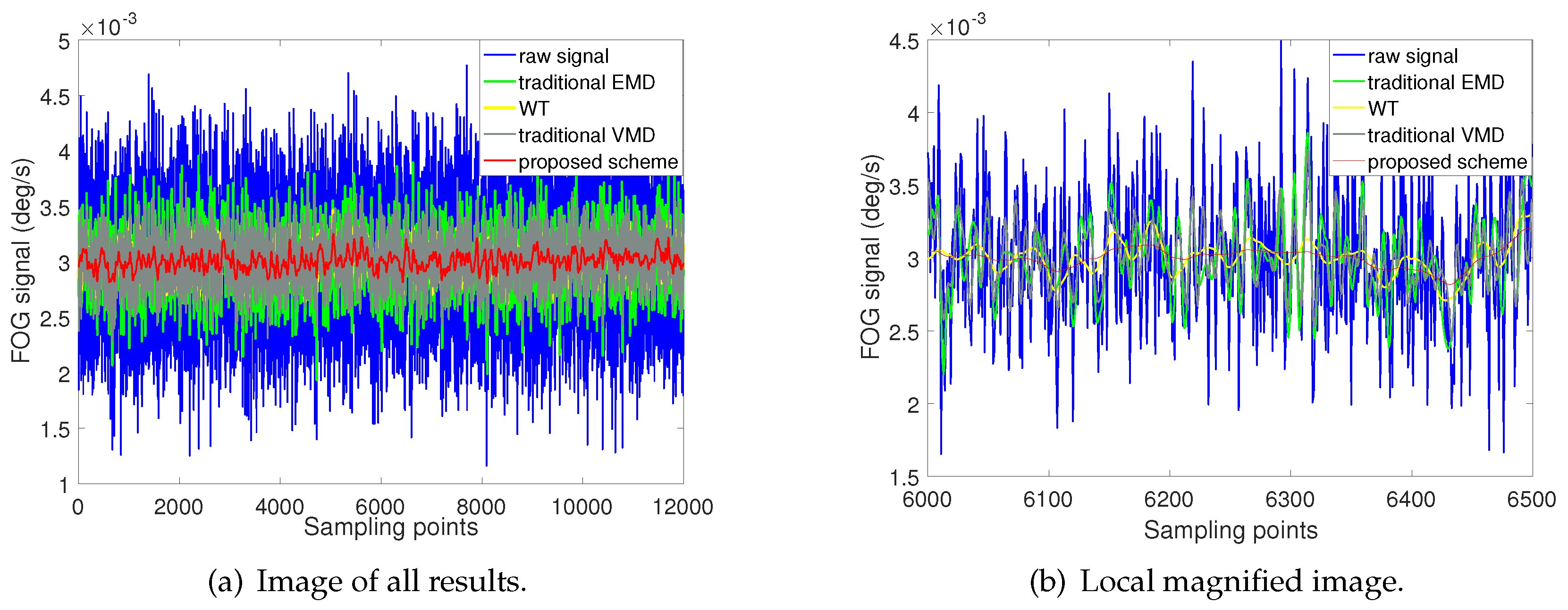

5.1. Static Test Experiment

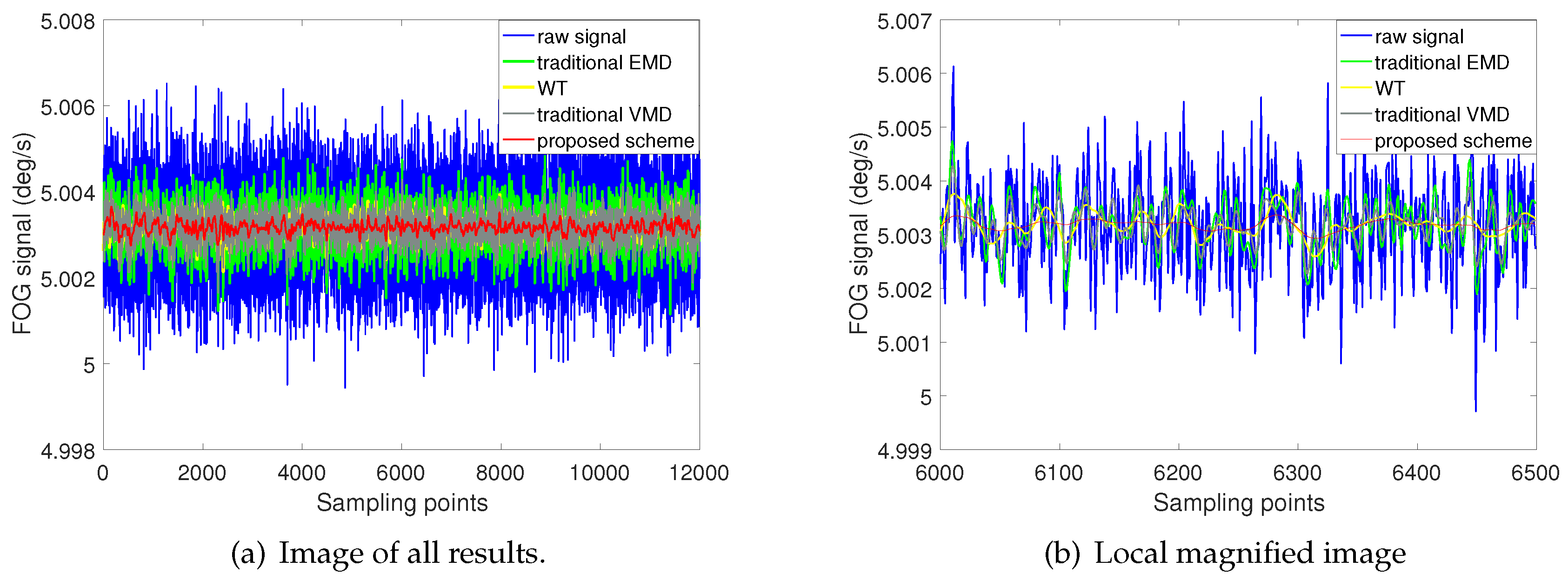

5.2. Dynamic Rotation Test Experiment

6. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Method | Signal-to-Noise Ratio, SNR (dB) | Root Mean Square Error (RMSE) |
|---|---|---|
| Proposed method | 18.3232 | 0.2183 |
| Traditional VMD | 17.2877 | 0.2459 |
| Traditional EMD | 15.2305 | 0.3116 |
| Wavelet transform | 17.3686 | 0.2436 |
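
The two metrics in the table above can be computed as in the sketch below when a clean reference signal is available, as is the case in a simulation. The definitions used are the usual ones (SNR as the ratio of reference power to residual power in decibels, RMSE as the root of the mean squared residual); that the paper uses exactly these forms is an assumption, and the function names are hypothetical.

```python
import math


def rmse(reference, estimate):
    """Root mean square error between a clean reference and a denoised estimate."""
    sq = sum((r - e) ** 2 for r, e in zip(reference, estimate))
    return math.sqrt(sq / len(reference))


def snr_db(reference, estimate):
    """Signal-to-noise ratio in dB: reference power over residual power."""
    signal_power = sum(r * r for r in reference)
    noise_power = sum((r - e) ** 2 for r, e in zip(reference, estimate))
    return 10.0 * math.log10(signal_power / noise_power)
```

A better denoising result gives a higher SNR and a lower RMSE, which is the direction in which the rows of the table are compared.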

| Parameter Item | Parameter Value |
|---|---|
| FOG dynamic range (°/s) | |
| FOG bias stability (°/h) | 0.03 |
| FOG random bias () | 0.003 |

| Method | Noise Intensity (NI) | Root Mean Square Error (RMSE) |
|---|---|---|
| Proposed method | 7.3872 | 1.2939 |
| Traditional VMD | 1.9982 | 2.2630 |
| Traditional EMD | 2.6109 | 2.8105 |
| Wavelet transform | 1.2901 | 1.6716 |

| Rotational Speed | Proposed Method NI | Proposed Method RMSE | Traditional VMD NI | Traditional VMD RMSE | Traditional EMD NI | Traditional EMD RMSE | Wavelet Transform NI | Wavelet Transform RMSE |
|---|---|---|---|---|---|---|---|---|
| 5°/s | 1.2759 | 1.4448 | 3.2013 | 3.2723 | 4.8796 | 4.9211 | 2.3837 | 2.4785 |
| 10°/s | 1.3437 | 1.8412 | 3.2248 | 3.4618 | 4.9692 | 5.1202 | 2.3852 | 2.6971 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Wang, P.; Gao, Y.; Wu, M.; Zhang, F.; Li, G.; Qin, C. A Denoising Method for Fiber Optic Gyroscope Based on Variational Mode Decomposition and Beetle Swarm Antenna Search Algorithm. Entropy 2020, 22, 765. https://doi.org/10.3390/e22070765
