Partial Information Decomposition and the Information Delta: A Geometric Unification Disentangling Non-Pairwise Information

Abstract

1. Introduction

2. Background

2.1. Interaction Information and Multi-Information

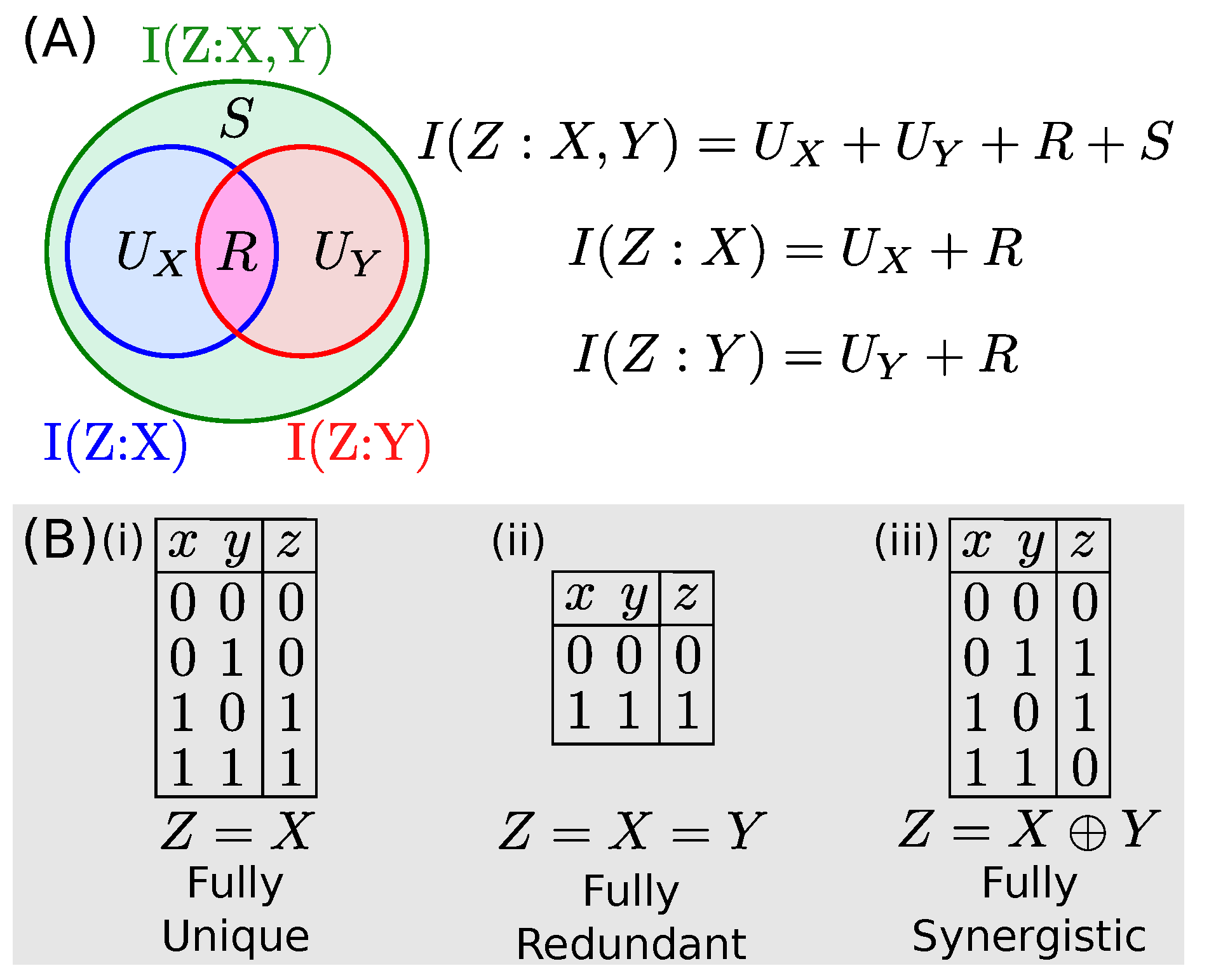

2.2. Information Decomposition

2.3. Solution from Bertschinger et al.

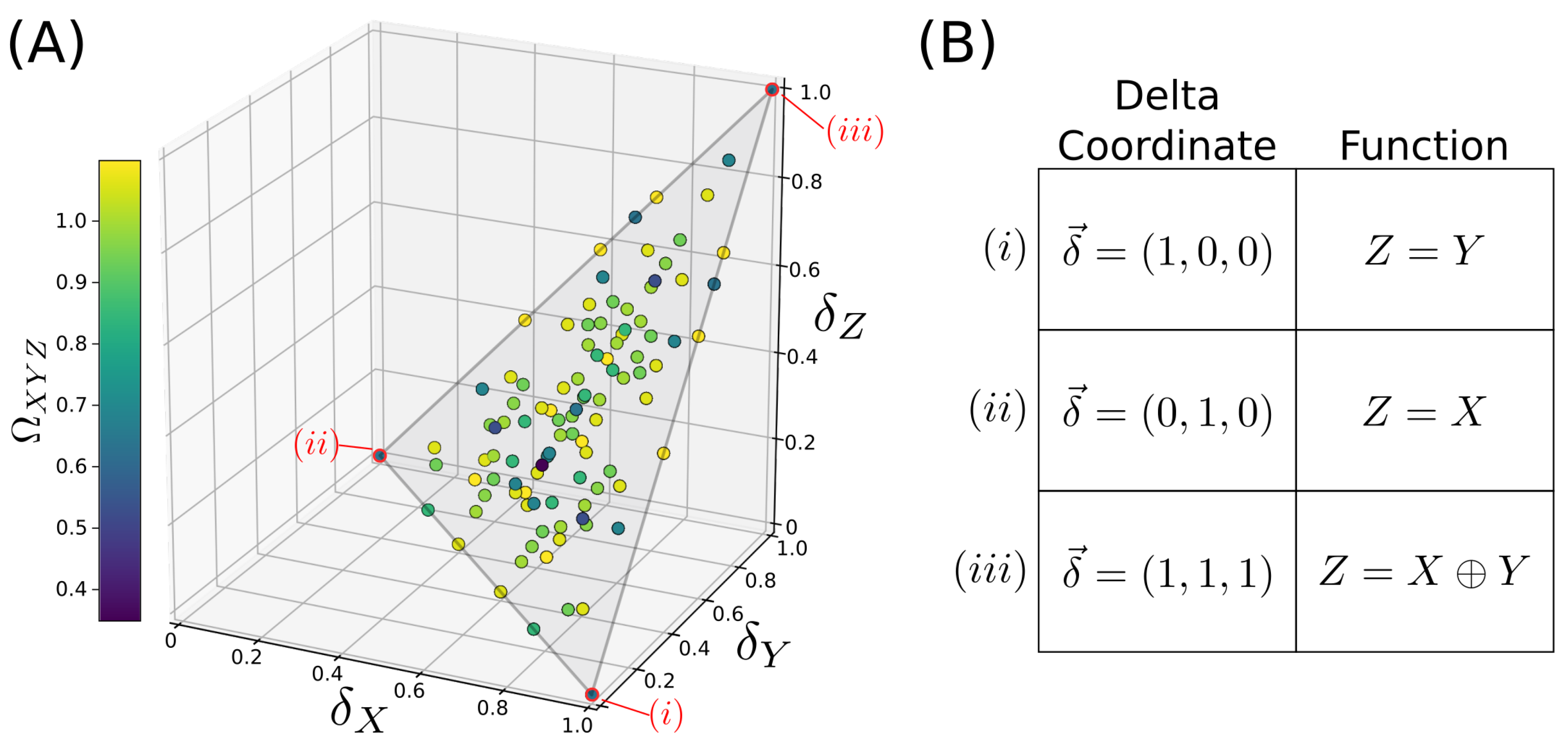

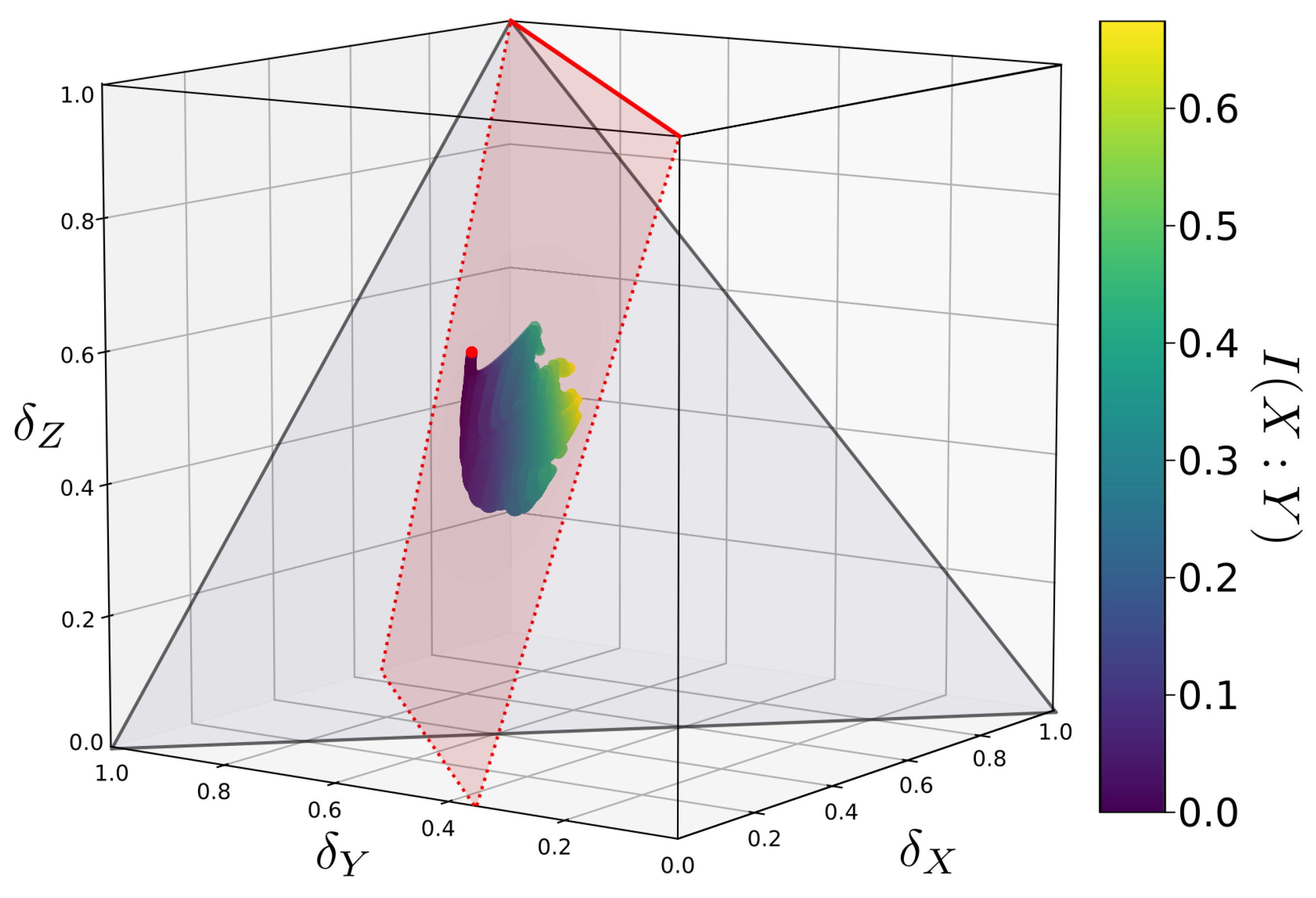

2.4. Information Deltas and Their Geometry

3. PID Mapped into Information Deltas

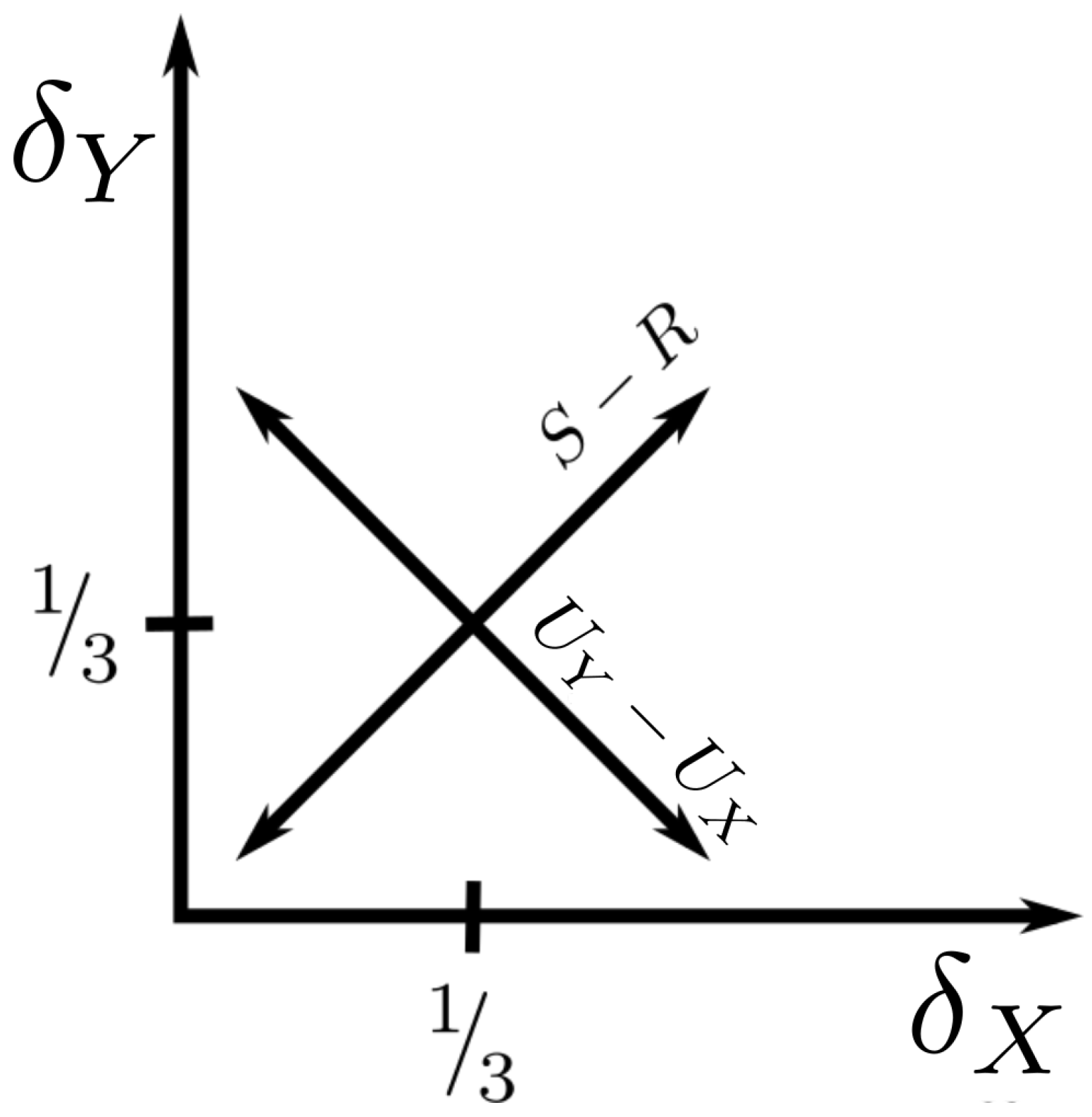

3.1. Information Decomposition in Terms of Deltas

3.2. Relationship between Diagonal and Interaction Information

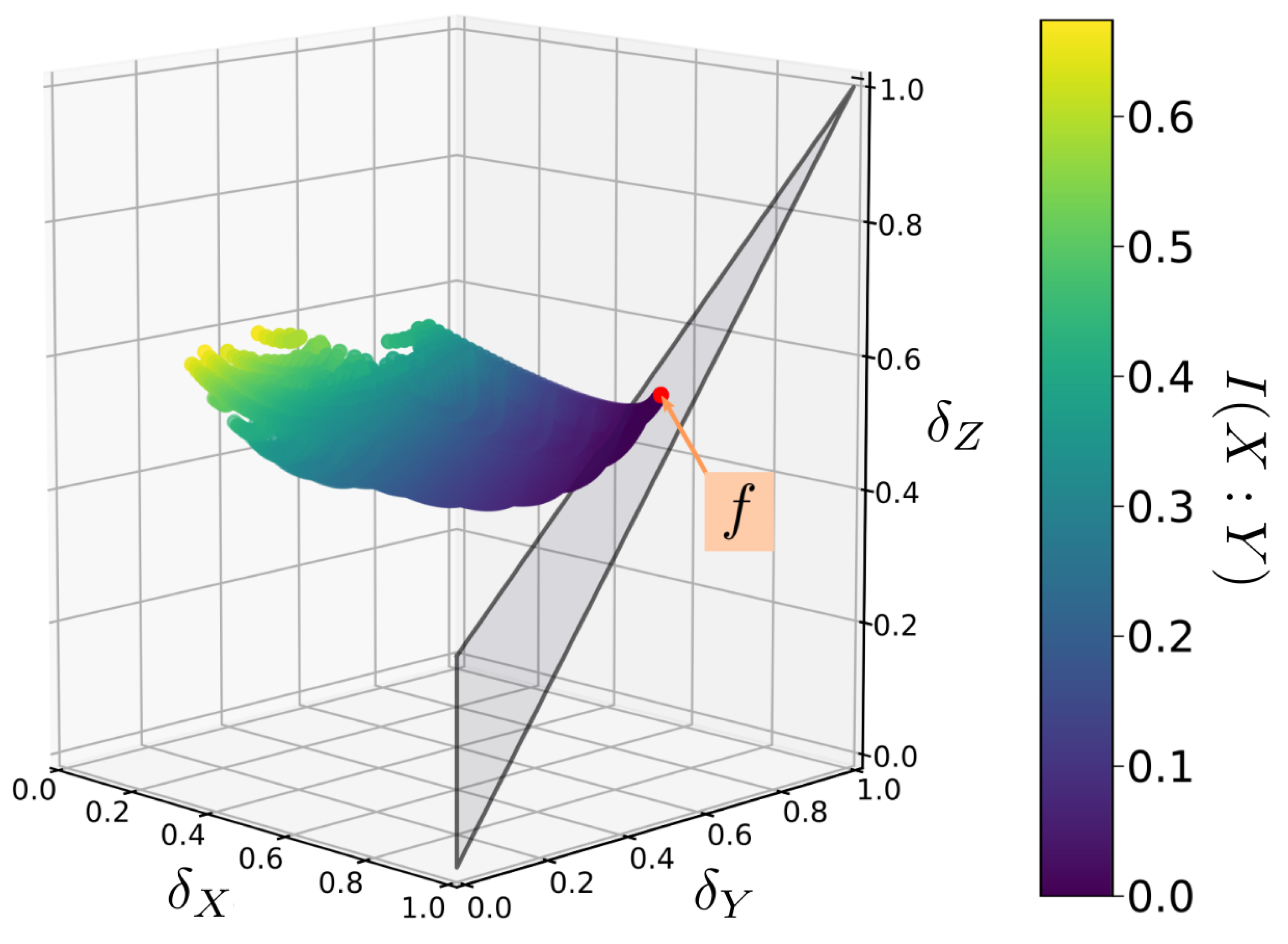

3.3. The Function Plane

4. Solving the PID on the Function Plane

4.1. Transforming Probability Tensors within Q

4.2. δ-Coordinates in Q Are Always Restricted to a Plane

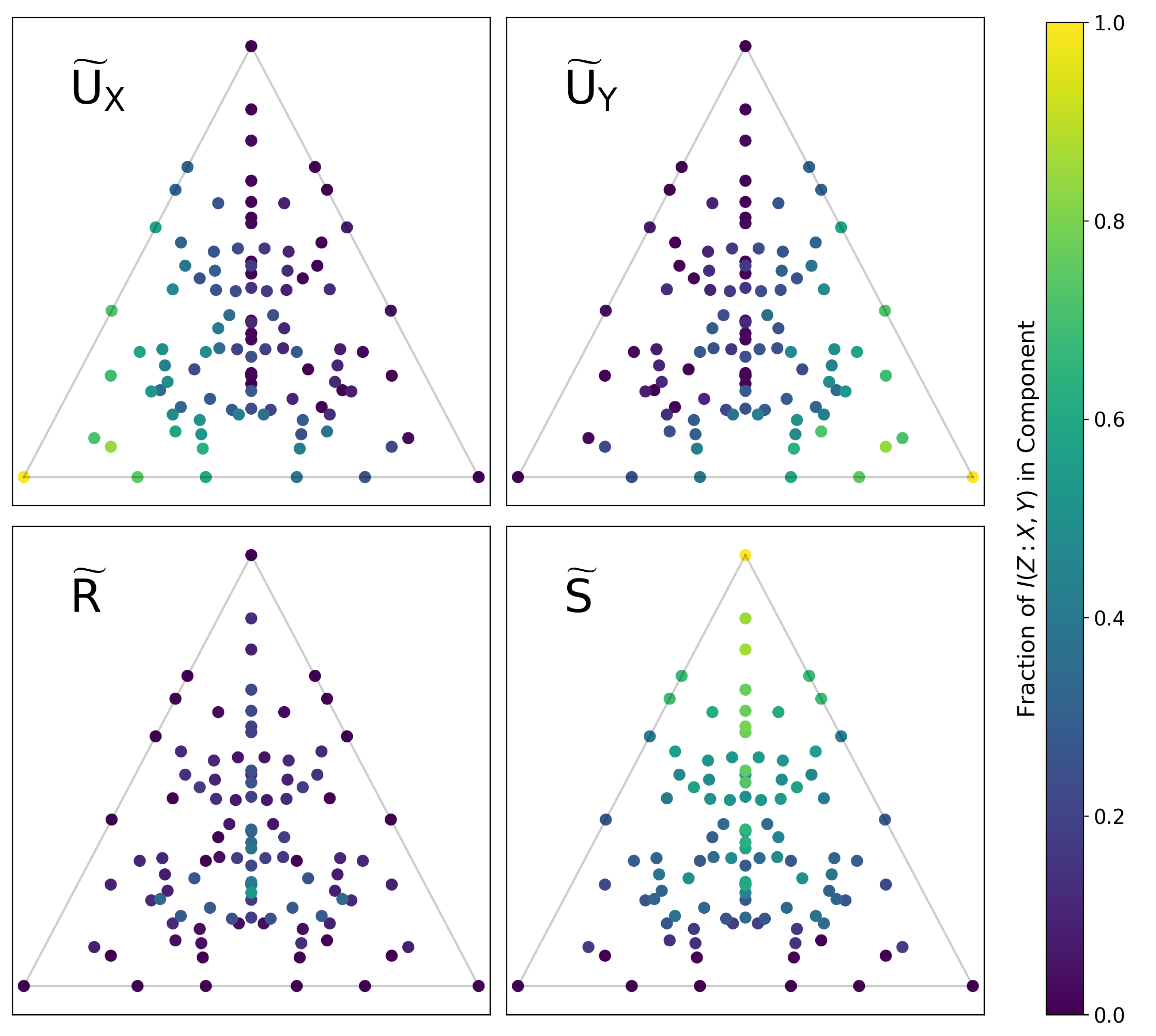

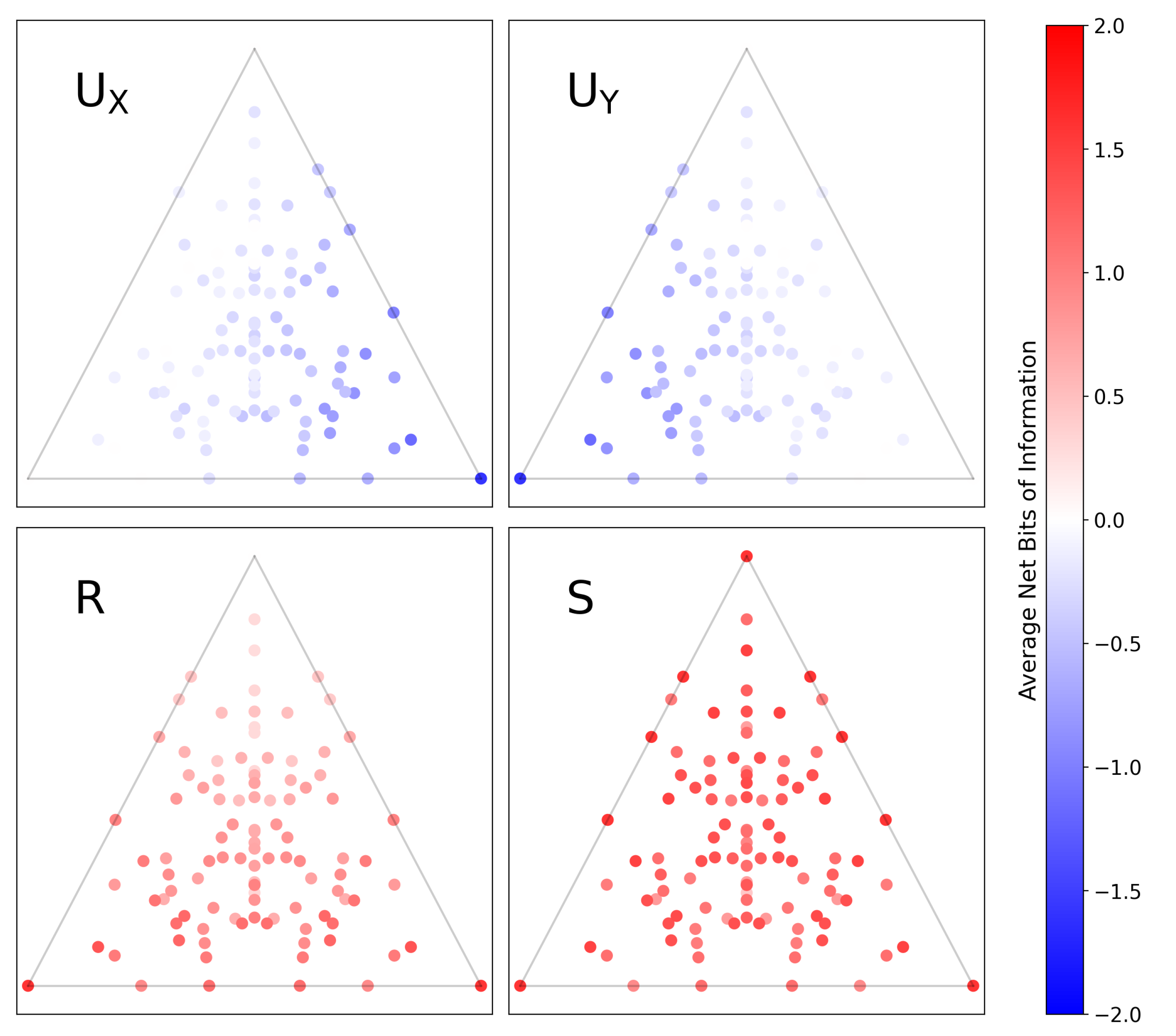

4.3. PID Calculation for All Functions

4.4. Alternate Solutions: Pointwise PID

5. Conclusions

- Construct a library (set) of distributions covering all functions. Specifically, record the δ-coordinates spanned by each distribution (e.g., as plotted in Figure 4) along with the corresponding function and its PID component values.

- For a set of variables in data for which we wish to find the decomposition, compute its δ-coordinates and then match them to the closest entry in the library. This immediately yields the corresponding function and PID components.
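The matching step in the second item can be sketched as a nearest-neighbour lookup. This is only a minimal illustration under stated assumptions: the Euclidean metric, the helper name `closest_entry`, the dictionary layout, the labels `f_a` and `f_b`, and every number in the toy library are hypothetical placeholders, not values or conventions taken from this work. Computing the δ-coordinates themselves is not shown.

```python
import math

def closest_entry(query, library):
    """Return (entry, distance) for the library entry nearest to `query`.

    Each entry stores several sampled coordinate points, since a
    distribution spans a set of coordinates rather than a single point.
    The distance to an entry is the distance to its nearest sampled point.
    Euclidean distance is an assumption; the text only says "closest".
    """
    best_entry, best_dist = None, math.inf
    for entry in library:
        dist = min(math.dist(query, point) for point in entry["coords"])
        if dist < best_dist:
            best_entry, best_dist = entry, dist
    return best_entry, best_dist

# Toy library: all labels, coordinates, and PID values are made-up placeholders.
library = [
    {
        "function": "f_a",
        "coords": [(0.0, 0.0, 1.0), (0.1, 0.1, 0.9)],
        "pid": {"R": 0.0, "U_X": 0.0, "U_Y": 0.0, "S": 1.0},
    },
    {
        "function": "f_b",
        "coords": [(1.0, 0.0, 0.0)],
        "pid": {"R": 1.0, "U_X": 0.0, "U_Y": 0.0, "S": 0.0},
    },
]

# Coordinates computed from data (placeholder values here).
entry, dist = closest_entry((0.05, 0.1, 0.95), library)
print(entry["function"], entry["pid"], dist)
```

In practice one would likely also inspect the returned distance, since a large value suggests that no function in the library describes the data well.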

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| PID | Partial Information Decomposition |
| II | Interaction Information |
| CI | Co-Information |
| $U_X$ | Unique Information in X |
| $U_Y$ | Unique Information in Y |
| R | Redundant Information |
| S | Synergistic Information |
| PPID | Pointwise Partial Information Decomposition |


Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Kunert-Graf, J.; Sakhanenko, N.; Galas, D. Partial Information Decomposition and the Information Delta: A Geometric Unification Disentangling Non-Pairwise Information. Entropy 2020, 22, 1333. https://doi.org/10.3390/e22121333
