1. Introduction

Let

be a nonempty finite set containing

n elements and

be a probability vector parameterized by some vector

for an integer

. For instance, the set

can be the real interval

or the set of Hermitian semi-definite positive matrices as it is the case for the simulation of entanglement. The probability vector

p defines a random variable

X such that

for

. To sample exactly the probability vector

p means to produce an output

x such that

. The problem of sampling probability distributions has been studied and is still studied extensively within different random and computational models. Here, we are interested in sampling

exactly a discrete distribution whose defining parameters are distributed among

m different parties. The

’s for

are stored in

m different locations where the

ith party holds

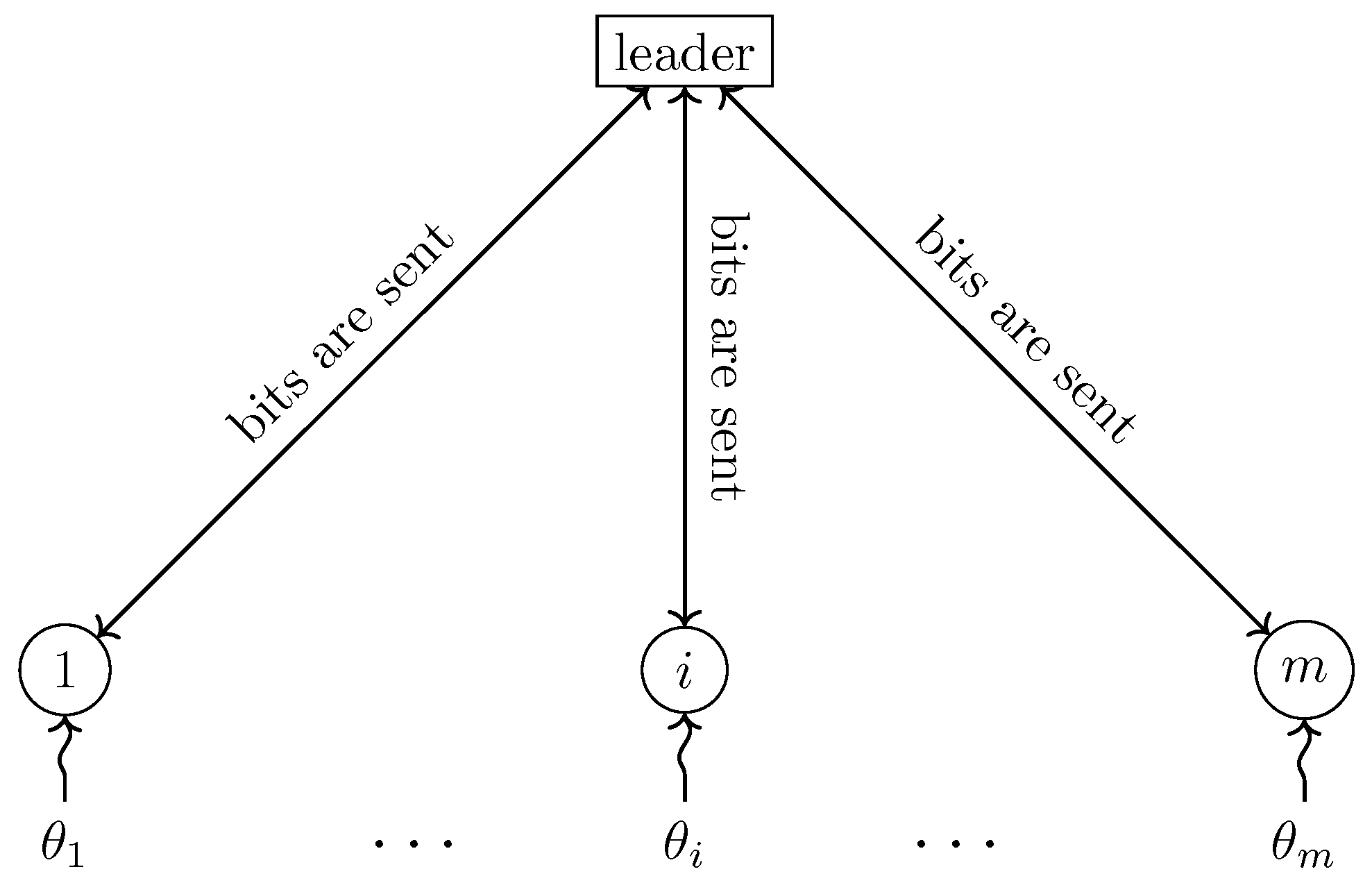

. In general, any communication topology between the parties would be allowed, but, in this work, we concentrate for simplicity on a model in which we add a designated party known as the

leader, whereas the

m other parties are known as the

custodians because each of them is sole keeper of the corresponding parameter

—hence there are

parties in total. The leader communicates in both directions with the custodians, who do not communicate among themselves. Allowing inter-custodian communication would not improve the communication efficiency of our scheme and can, at best, halve the number of bits communicated in any protocol. However, it could dramatically improve the sampling

time in a realistic model in which each party is limited to sending and receiving a fixed number of bits at any given time step, as demonstrated in our previous work [

1] concerning a special case of the problem considered here. The communication scheme is illustrated in

Figure 1.

It may seem paradoxical that the leader can sample

exactly the probability vector

p with a

finite expected number of bits sent by the custodians, who may hold

continuous parameters that define

p. However, this counterintuitive possibility has been known to be achievable for more than a quarter-century in earlier work on the simulation of quantum entanglement by classical communication, starting with Refs. [

2,

3,

4,

5,

6,

7], continuing with Refs. [

8,

9,

10,

11,

12,

13,

14], etc. for the bipartite case and Refs. [

15,

16,

17], etc. for the multipartite case, and culminating with our own Ref. [

1].

Our protocol to sample remotely a given probability vector is presented in

Section 2. For this purpose, the von Neumann rejection algorithm [

18] is modified to produce an output

with exact probability

using mere approximations of those probabilities, which are computed based on partial knowledge of the parameters transmitted on demand by the custodians to the leader. For the sake of simplicity, and to concentrate on the new techniques, we assume initially that algebraic operations on real numbers can be carried out with infinite precision and that continuous random variables can be sampled. Later, in

Section 4, we build on techniques developed in Ref. [

1] to obtain exact sampling in a realistic scenario in which all computations are performed with finite precision and the only source of randomness comes from flipping independent fair coins.

In the intervening

Section 3, we study our motivating application of remote sampling, which is the simulation of quantum entanglement using classical resources and classical communication. Readers who may not be interested in quantum information can still benefit from

Section 2 and most of

Section 4, which make no reference to quantum theory in order to explain our general remote sampling strategies. A special case of remote sampling has been used by the authors [

1], in which the aim was to sample a specific probability distribution appearing often in quantum information science, namely the

m-partite Greenberger–Horne–Zeilinger (GHZ) distribution [

19]. More generally, consider a quantum system of dimension

represented by a density matrix

known by the leader (surprisingly, the custodians have no need to know

). Suppose that there are

m generalized measurements (

povms) acting on quantum systems of dimensions

whose possible outcomes lie in sets

of cardinality

, respectively. Each custodian knows one and only one of the

povms and nothing else about the others. The leader does not know initially any information about any of the

povms. Suppose in addition that the leader can generate independent identically distributed uniform random variables on the real interval

. We show how to generate a random vector

sampled from the exact joint probability distribution that would be obtained if each custodian

i had the

ith share of

(of dimension

) and measured it according to the

ith

povm, producing outcome

. This task is defined formally in

Section 3, where we prove that the total expected number of bits transmitted between the leader and the custodians using remote sampling is

provided all the

’s and

’s are bounded by some constant. The exact formula, involving

m as well as the

’s and

’s, is given as Equation (

14) in

Section 3, where

d and

n denote the product of the

’s and the

’s, respectively. In

Section 4, we obtain the same asymptotic result in the more realistic scenario in which the only source of randomness comes from independent identically distributed uniform random

bits. This result subsumes that of Ref. [

1] since all

’s and

’s are equal to 2 for projective measurements on individual qubits of the

m-partite GHZ state.

2. Remote Sampling

As explained in the Introduction, we show how to sample remotely a discrete probability vector . The task of sampling is carried by a leader ignorant of some parameters that come in the definition of the probability vector, where each is known by the ith custodian only, with whom the leader can communicate. We strive to minimize the amount of communication required to achieve this task.

To solve our conundrum, we modify the von Neumann rejection algorithm [

18,

20]. Before explaining those modifications, let us review the original algorithm. Let

be a probability vector that we know how to sample on the same set

, and let

be such that

for all

. The classical von Neumann rejection algorithm is shown as Algorithm 1. It is well known that the expected number of times round the

repeat loop is exactly

C.

| Algorithm 1 Original von Neumann rejection algorithm |

- 1:

repeat - 2:

Sample X according to - 3:

Sample U uniformly on - 4:

if then - 5:

return X {X is accepted} - 6:

end if - 7:

end repeat

|

If only partial knowledge about the parameters defining

p is known, it would seem that the condition in line 4 cannot be decided. Nevertheless, the strategy is to build a sequence of increasingly accurate approximations that converge to the left and right sides of the test. As explained below, the number of bits transmitted depends on the number of bits needed to compute

q, and on the accuracy in

p required to accept or reject. This task can be achieved either in the

random bit model, in which only i.i.d. random bits are generated, or in the less realistic

uniform model, in which uniform continuous random variables are needed. The random bit model was originally suggested by von Neumann [

18], but only later given this name and formalized by Knuth and Yao [

21]. In this section, we concentrate for simplicity on the uniform model, leaving the more practical random bit model for

Section 4.

Definition 1. A t-bit approximation of a real number x is any such that . A special case of t-bit approximation is the t-bit truncation , where is equal to , 0 or depending on the sign of x. If is a complex number, where , then a t-bit approximation (resp. truncation) of α is any , where and are t-bit approximations (resp. truncations) of a and b, respectively.

Note that we assume without loss of generality that approximations of probabilities are always constrained to be real numbers between 0 and 1, which can be enforced by snapping any out-of-bound approximation (even if it is a complex number) to the closest valid value.

Consider an integer to be determined later. Our strategy is for the leader to compute the probability vector defined below, based on -bit approximations of the probabilities for each . For this purpose, the leader receives sufficient information from the custodians to build the entire vector q at the outset of the protocol. This makes q the “easy-to-sample” distribution required in von Neumann’s technique, which is easy not from a computational viewpoint, but in the sense that no further communication is required for the leader to sample it as many times as needed.

Let

and

Noting that

, these

define a proper probability vector

. Using the definition of a

t-bit approximation and the definition of

from Equation (

2), we have that

Taking the sum over the possible values for

x and recalling that set

is of cardinality

n,

Consider any sampled according to q and U sampled uniformly in as in lines 2 and 3 of Algorithm 1. Should x be accepted because , this can be certified by any t-bit approximation of such that for some positive integer t since . Conversely, any integer t such that certifies that x should be rejected because it implies that since . On the other hand, no decision can be made concerning versus if . It follows that one can modify Algorithm 1 above into Algorithm 2 below, in which a sufficiently precise approximation of suffices to make the correct decision to accept or reject an x sampled according to distribution q. A well-chosen value of must be input into this algorithm, as discussed later.

| Algorithm 2 Modified rejection algorithm—Protocol for the leader |

| Input: Value of |

- 1:

Compute for each {The leader needs information from the custodians in order to compute these approximations} - 2:

Compute C and as per Equations ( 1) and ( 2) - 3:

Sample X according to q - 4:

Sample U uniformly on - 5:

for ∞ do - 6:

if then - 7:

return X {X is accepted} - 8:

else if then - 9:

go to line 3 {X is rejected} - 10:

else - 11:

Continue the for loop {We cannot decide whether to accept or reject because ; communication may be required in order for the leader to compute ; it could be that bits previously communicated to compute can be reused.} - 12:

end if - 13:

end for

|

Theorem 1. Algorithm 2 is correct, i.e., it terminates and returns with probability . Furthermore, let T be the random variable that denotes the value of variable t upon termination of any instance of theforloop, whether the loop terminates in rejection or acceptation. Then, Proof. Consider any

and

. To reach

, it must be that

. Noting that

according to Equation (

2), the probability that

when

is therefore upper-bounded as follows:

The last inequality uses the fact that

It follows that the probability that more turns round the for loop are required decreases exponentially with each new turn once , which suffices to guarantee termination of the for loop with probability 1. Termination of the algorithm itself comes from the fact that the choice of X and U in lines 3 and 4 leads to acceptance at line 7—and therefore termination—with probability , as demonstrated by von Neumann in the analysis of his rejection algorithm.

The fact that is returned with probability is an immediate consequence of the correctness of the von Neumann rejection algorithm since our adaptation of this method to handle the fact that only approximations of are available does not change the decision to accept or reject any given candidate sampled according to q.

In order to bound the expectation of

T, we note that

when

since we start the

for loop at

. We can also use vacuous

rather than the worse-than-vacuous upper bound of 2 given by Equation (

5) in the case

. Therefore,

It remains to note that, since for all , it follows that without condition. □

Let S be the random variable that represents the number of times variable X is sampled according to q at line 3, and let be the random variable that represents the value of variable T upon termination of the ith instance of the for loop starting at line 5, for . The random variables are independently and identically distributed as the random variable T in Theorem 1 and the expected value of S is C. Let be the random variables chosen at successive passes at line 3, so that are rejected, whereas is returned as the final result of the algorithm.

To analyse the communication complexity of Algorithm 2, we introduce function

for each

and

, which denotes the

incremental number of bits that the leader must receive from the custodians in order to compute

, taking account of the information that may already be available if he had previously computed

. For completeness, we include in

the cost of the communication required for the leader to request more information from the custodians. We also introduce function

for

, which denotes the number of bits that the leader must receive from the custodians in order to compute

for all

in a “simultaneous” manner. Note that it could be much less expensive to compute those

n values than

n times the cost of computing any single one of them because some of the parameters held by the custodians may be relevant to more than one of the

’s. The total number of bits communicated in order to implement Algorithm 2 is therefore given by random variable

For simplicity, let us define function

. We then have

whose expectation, according to Wald’s identity, is

Assuming the value of

is upper-bounded by some

,

because

and using Equations (

4) and (

3).

Depending on the specific application, which determines

and function

, Equation (

7) is key to a trade-off that can lead to an optimal choice of

since a larger

decreases

but is likely to increase

. The value of

may play a rôle in the balance. The next section, in which we consider the simulation of quantum entanglement by classical communication, gives an example of this trade-off in action.

3. Simulation of Quantum Entanglement Based on Remote Sampling

Before introducing the simulation of entanglement, let us establish some notation and mention the mathematical objects that we shall need. It is assumed that the reader is familiar with linear algebra, in particular the notion of a semi-definite positive matrix, Hermitian matrix, trace of a matrix, tensor product, etc. For a discussion about the probabilistic and statistical nature of quantum theory, see Ref. [

22]. For convenience, we use

to denote the set

for any integer

n.

Consider integers

, all greater than or equal to 2. Define

and

. Let

be a

density matrix. Recall that any density matrix is Hermitian, semi-definite positive and unit-trace, which implies that its diagonal elements are real numbers between 0 and 1. For each

and

, let

be a

Hermitian semi-definite positive matrix such that

where

is the

identity matrix. In other words, each set

is a

povm (positive-operator valued measure) [

22].

As introduced in

Section 1, we consider one

leader and

m custodians. The leader knows density matrix

and the

ith custodian knows the

ith

povm, meaning that he knows the matrices

for all

. If a physical system of dimension

d in state

were shared between the custodians, in the sense that the

ith custodian had possession of the

ith subsystem of dimension

, each custodian could perform locally his assigned

povm and output the outcome, an integer between 1 and

. The joint output would belong to

, a set of cardinality

n, sampled according to the probability distribution stipulated by the laws of quantum theory, which we review below.

Our task is to sample

with the exact same probability distribution even though there is no physical system in state

available to the custodians, and in fact all parties considered are purely classical! We know from Bell’s Theorem [

23] that this task is impossible in general without communication, even when

, and our goal is to minimize the amount of communication required to achieve it. Special cases of this problem have been studied extensively for expected [

1,

2,

4,

5,

6], etc. and worst-case [

3,

8], etc. communication complexity, but here we solve it in its essentially most general setting, albeit only in the expected sense. For this purpose, the leader will centralize the operations while requesting as little information as possible from the custodians on their assigned

povms. Once the leader has successfully sampled

, he transmits each

to the

ith custodian, who can then output it as would have been the case had quantum measurements actually taken place.

We now review the probability distribution

that we need to sample, according to quantum theory. For each vector

, let

be the

tensor product of matrices

for each

:

The set

forms a global

povm of dimension

d, which applied to density matrix

defines a joint probability vector on

. The probability

of obtaining any

is given by

For a matrix A of size and any pair of indices r and c between 0 and , we use to denote the entry of A located in the row and column. Matrix indices start at 0 rather than 1 to facilitate Fact 2 below. We now state various facts for which we provide cursory justifications since they follow from elementary linear algebra and quantum theory, or they are lifted from previous work.

Fact 1. For all

, we have

when

is defined according to Equation (

10); furthermore,

. This is obvious because quantum theory tells us that Equation (

10) defines a probability distribution over all possible outcomes

, as sampled by the joint measurement. Naturally, this statement could also be proven from Equations (

8) and (

10) using elementary linear algebra.

Fact 2. For each

, matrix

is the tensor product of

m matrices as given in Equation (

9). Therefore, each entry

is the product of

m entries of the

’s. Specifically, consider any indices

r and

c between 0 and

and let

and

be the indices between 0 and

, for each

, such that

The

’s and

’s are uniquely defined by the principle of mixed-radix numeration. We have

Fact 3. Let

M be a Hermitian semi-definite positive matrix. Every entry

of the matrix satisfies

This follows from the fact that all principal submatrices of any Hermitian semi-definite positive matrix are semi-definite positive [

24] (Observation 7.1.2, page 430). In particular, the principal submatrix

is semi-definite positive, and therefore it has nonnegative determinant:

by virtue of

M being Hermitian, where

denotes the complex conjugate of

α.

Fact 4. The norm of any entry of a density matrix ρ is less than or equal to 1. This follows directly from Fact 3 since density matrices are Hermitian semi-definite positive, and from the fact that diagonal entries of density matrices, such as and , are real values between 0 and 1.

Fact 5. Given any povm , we have that

for all ℓ and i, and

for all ℓ, i and j.

The first statement follows from the fact that is the identity matrix by definition of povms, and therefore for all i, and the fact that each because each is semi-definite positive. The second statement follows from the first by applying Fact 3.

Fact 6. (This is a special case of Theorem 1 from Ref. [

1], with

). Let

be an integer and consider any two real numbers

a and

b. If

and

are arbitrary

k-bit approximations of

a and

b, respectively, then

is a

-bit approximation of

. If, in addition,

a and

b are known to lie in interval

, which can also be assumed without loss of generality concerning

and

since otherwise they can be safely pushed back to the appropriate frontier of this interval, then

is a

-bit approximation of

.

Fact 7. Let

be an integer and consider any two

complex numbers

α and

β. If

and

are arbitrary

k-bit approximations of

α and

β, respectively, then

is a

-bit approximation of

. If, in addition,

and the real and imaginary parts of

α and

β are known to lie in interval

, which can also be assumed without loss of generality concerning

and

, then

is a

-bit approximation of

. This is a direct consequence of Fact 6 in the case of addition. In the case of multiplication, consider

,

,

and

, so that

By the multiplicative part of Fact 6,

,

,

and

are

-bit approximations of

,

,

and

, respectively; and then by the additive part of the same fact (which obviously applies equally well to subtraction),

and

are

-bit approximations of

and

, respectively.

Fact 8 (This is Corollary 2 from Ref. [

1]). Let

and

be integers and let

and

be real numbers and their

k-bit approximations, respectively, all in interval

. Then,

is a

-bit approximation of

.

Fact 9. Let

and

be integers and let

and

be complex numbers and their

k-bit approximations, respectively. Provided it is known that

for each

, a

-bit approximation of

can be computed from knowledge of the

’s. The proof of this fact follows essentially the same template as Fact 8, except that

two bits of precision may be lost at each level up the binary tree introduced in Ref. [

1], due to the difference between Facts 6 and 7. A subtlety occurs in the need for Fact 7 to apply that the real and imaginary parts of all the complex numbers under consideration must lie in interval

. This is automatic for the exact values since the

’s are upper-bounded in norm by 1 and the product of such-bounded complex numbers is also upper-bounded in norm by 1, which implies that their real and imaginary parts lie in interval

. For the approximations, however, we cannot force their

norm to be bounded by 1 because we need the approximations to be rational for communication purposes. Fortunately, we can force the real and imaginary parts of all approximations computed at each level up the binary tree to lie in interval

because we know that they approximate such-bounded numbers. Note that the product of two complex numbers whose real and imaginary parts lie in interval

, such as

and

, may not have this property, even if they are

k-bit approximations of numbers bounded in norm by 1.

Fact 10. Let and be integers and let and be complex numbers and their k-bit approximations, respectively, without any restriction on their norm. Then is a -bit approximation of . Again, this follows the same proof template as Fact 8, substituting multiplication of real numbers by addition of complex numbers, which allows us to drop any condition on the size of the numbers considered.

Fact 11. Consider any

and any positive integer

t. In order to compute a

t-bit approximation of

, it suffices to have

-bit approximations of each entry of the

matrices for all

. This is because

by virtue of Fact 2. Every term of the double sum in Equation (

11) involves a product of

m entries, one per

povm element, and therefore incurs a loss of at most

bits of precision by Fact 9, whose condition holds thanks to Fact 5. An additional bit of precision may be lost in the multiplication by

, even though that value is available with arbitrary precision (and is upper-bounded by 1 in norm by Fact 4) because of the additions involved in multiplying complex numbers. Then, we have to add

terms, which incurs an additional loss of at most

bits of precision by Fact 10. In total,

-bit approximations of the

’s will result in a

t-bit approximation of

.

Fact 12. The leader can compute

for any specific

and integer

t if he receives a total of

bits from the custodians. This is because the

ith custodian has the description of matrix

of size

, which is defined by exactly

real numbers since the matrix is Hermitian. By virtue of Fact 11, it is sufficient for the leader to have

-bit approximations for all those

numbers. Since each one of them lies in interval [−1, 1] by Fact 5, well-chosen

k-bit approximations (for instance

k-bit truncations) can be conveyed by the transmission of

bits, one of which carries the sign.

Note that the t-bit approximation of computed according to Fact 12, say , may very well have a nonzero imaginary part b, albeit necessarily between and . Since must be a real number between 0 and 1, the leader sets , taking no account of b, although a paranoid leader may wish to test that indeed and raise an alarm in case it is not (which of course is mathematically impossible unless the custodians are not given proper povms, unless they misbehave, or unless a computation or communication error has occurred).

Fact 13. For any

t, the leader can compute

for each and every

if he receives

bits from the custodians. This is because it suffices for each custodian

i to send to the leader

-bit approximations of all

real numbers that define the entire

ith

povm, i.e., all the matrices

for

. This is a nice example of the fact that it may be much less expensive for the leader to compute at once

for all

, rather than computing them one by one independently, which would cost

bits of communication by applying

n times Fact 12.

After all these preliminaries, we are now ready to adapt the general template of Algorithm 2 to our entanglement-simulation conundrum, yielding Algorithm 3. We postpone the choice of until after the communication complexity analysis of this new algorithm.

| Algorithm 3 Protocol for simulating arbitrary entanglement subjected to arbitrary measurements |

- 1:

Each custodian sends his value of to the leader, who computes - 2:

The leader chooses and informs the custodians of its value - 3:

Each custodian sends to the leader -bit truncations of the real and imaginary parts of the entries defining matrix for each - 4:

The leader computes for every , using Fact 13 - 5:

The leader computes C and as per Equations ( 1) and ( 2) - 6:

- 7:

repeat - 8:

- 9:

The leader samples according to q - 10:

The leader informs each custodian of the value of - 11:

The leader samples U uniformly on - 12:

- 13:

repeat - 14:

if then - 15:

{X is accepted} - 16:

else if then - 17:

{X is rejected} - 18:

else - 19:

The leader asks each custodian for one more bit in the truncation of the real and imaginary parts of the entries defining matrix ; - 20:

Using this information, the leader updates into ; - 21:

- 22:

end if - 23:

until accept or reject - 24:

until accept - 25:

The leader requests each custodian to output his

|

To analyse the expected number of bits of communication required by this algorithm, we apply Equation (

7) from

Section 2 after defining explicitly the cost parameters

for the initial computation of

for all

at lines 3 and 4, and

for the upgrade from a specific

to

at lines 19 and 20. For simplicity, we shall ignore the negligible amount of communication entailed at line 1 (which is

bits), line 2 (

bits), line 10 (also

bits, but repeated

times) and line 25 (m bits) because they are not taken into account in Equation (

7) since they are absent from Algorithm 2. If we counted it all, this would add

bits to Equation (

13) below, which would be less than

bits added to Equation (

14), with no effect at all on Equation (

15).

According to Fact 13,

The cost of line 19 is very modest because we use

truncations rather than general approximations in line 3 for the leader to compute

for all

. Indeed, it suffices to obtain a single additional bit of precision in the real and imaginary parts of each entry defining matrix

from each custodian

. The cost of this update is simply

bits of communication, where the addition of

m is to account for the leader needing to request new bits from the custodians. This is a nice example of what we meant by “it could be that bits previously communicated can be reused” in line 11 of Algorithm 2.

Putting it all together in Equation (

7), the total expected number of bits communicated in Algorithm 3 in order to sample exactly according to the quantum probability distribution is

We are finally in a position to choose the value of parameter

. First note that

. Therefore, any constant choice of

will entail an expected amount of communication that is exponential in

m because of the right-hand term in Equation (

13). At the other extreme, choosing

would also entail an expected amount of communication that is exponential in

m, this time because of the left-hand term in Equation (

13). A good compromise is to choose

, which results in

according to Equation (

3), because in that case

and therefore

so that Equation (

13) becomes

In case all the

’s and

’s are upper-bounded by some constant

, we have that

, hence

, similarly

, and also

. It follows that

which is on the order of

, thus matching with our most general method the result that was already known for the very specific case of simulating the quantum

m-partite GHZ distribution [

1].

4. Practical Implementation Using a Source of Discrete Randomness

In practice, we cannot work with continuous random variables since our computers have finite storage capacities and finite precision arithmetic. Furthermore, the generation of uniform continuous random variables does not make sense computationally speaking and we must adapt Algorithms 2 and 3 to work in a finite world.

For this purpose, recall that

U is a uniform continuous random variable on

used in all the algorithms seen so far. For each

, let

denote the

ith bit in the binary expansion of

U, so that

We acknowledge the fact that the

’s are not uniquely defined in case

for integers

and

, but we only mention this phenomenon to ignore it since it occurs with probability 0 when

U is uniformly distributed on

. We denote the

t-bit truncation of

U by

:

For all

, we have that

We modify Algorithm 2 into Algorithm 4 as follows, leaving to the reader the corresponding modification of Algorithm 3, thus yielding a practical protocol for the simulation of general entanglement under arbitrary measurements.

| Algorithm 4 Modified rejection algorithm with discrete randomness source—Protocol for the leader |

| Input: Value of |

- 1:

Compute for each {The leader needs information from the custodians in order to compute these approximations} - 2:

Compute C and as per Equations ( 1) and ( 2) - 3:

Sample X according to q - 4:

- 5:

fortodo - 6:

Generate i.i.d. unbiased bit - 7:

- 8:

end for - 9:

for ∞ do - 10:

Generate i.i.d. unbiased bit - 11:

- 12:

if then - 13:

return X {X is accepted} - 14:

else if then - 15:

go to line 3 {X is rejected} - 16:

else - 17:

Continue the for loop {We cannot decide to accept or reject because ; communication may be required in order for the leader to compute ; it could be that bits previously communicated to compute can be reused.} - 18:

end if - 19:

end for

|

Theorem 2. Algorithm 4 is correct, i.e., it terminates and returns with probability . Furthermore, let T be the random variable that denotes the value of variable t upon termination of any instance of theforloop that starts at line 9, whether it terminates in rejection or acceptation. Then, Proof. This is very similar to the proof of Theorem 1, so let us concentrate on the differences. First note that it follows from Equation (

16) and the fact that

that

and

Therefore, whenever

X is accepted at line 13 (resp. rejected at line 15), it would also have been accepted (resp. rejected) in the original von Neumann algorithm, which shows sampling correctness. Conversely, whenever we reach a value of

such that

and

, we do not have enough information to decide whether to accept or reject, and therefore we reach line 17, causing

t to increase. This happens precisely when

To obtain an upper bound on

, we mimic the proof of Theorem 1, but in the discrete rather than continuous regime. In particular, for any

and

,

To understand Equation (

17), think of

as an integer chosen randomly and uniformly between 0 and

. The probability that it falls within some real interval

for

is equal to

times the number of integers between 0 and

in that interval, the latter being upper-bounded by the number of unrestricted integers in that interval, which is at most

.

Noting how similar Equation (

18) is to the corresponding Equation (

5) in the analysis of Algorithm 2, it is not surprising that the expected value of

T will be similar as well. Indeed, continuing as in the proof of Theorem 1, without belabouring the details,

We conclude that

without condition since Equation (

19) does not depend on

x. □

The similarity between Theorems 1 and 2 means that there is no significant additional cost in the amount of communication required to achieve remote sampling in the random bit model. i.e., if we consider a realistic scenario in which the only source of randomness comes from i.i.d. unbiased bits, compared to an unrealistic scenario in which continuous random variables can be drawn. For instance, the reasoning that led to Equation (

7) applies

mutatis mutandis to conclude that the expected number

Z of bits that needs to be communicated to achieve remote sampling in the random bit model is

where

and

have the same meaning as in

Section 2.

If we use the random bit approach for the general simulation of quantum entanglement studied in

Section 3, choosing

again, Equation (

14) becomes

which reduces to the identical

in case all the

’s and

’s are upper-bounded by some constant

, which again is on the order of

.

In addition to communication complexity, another natural efficiency measure in the random bit model concerns the

expected number of random bits that needs to be drawn in order to achieve sampling. Randomness is needed in lines 3, 6 and 10 of Algorithm 4. A single random bit is required each time lines 6 and 10 are entered, but line 3 calls for sampling

X according to distribution

q. Let

be the random variable that represents the number of random bits needed on the

ith passage through line 3. For this purpose, we use the algorithm introduced by Donald Knuth and Andrew Chi-Chih Yao [

21], which enables sampling within any finite discrete probability distribution in the random bit model by using an expectation of no more than two random bits in addition to the Shannon binary entropy of the distribution. Since each such sampling is independent from the others, it follows that

is independently and identically distributed as a random variable

V such that

where

denotes the binary entropy of

q, which is never more than the base-two logarithm of the number of atoms in the distribution, here

n.

Let

R be the random variable that represents the number of random bits drawn when running Algorithm 4. Reusing the notation of

Section 2, let

S be the random variable that represents the number of times variable

X is sampled at line 3 and let

be the random variable that represents the value of variable

T upon termination of the

ith instance of the

for loop starting at line 9, for

. The random variables

are independently and identically distributed as the random variable

T in Theorem 2 and the expected value of

S is

C. Since one new random bit is generated precisely each time variable

t is increased by 1 in any pass through either

for loops (line 5 or 9), we simply have

By virtue of Equations (

3) and (

21), Theorem 2, and using Wald’s identity again, we conclude that:

Taking

again, remote sampling can be completed using an expected number of random bit in

, with a hidden multiplicative constant no larger than 6. The hidden constant can be reduced arbitrarily close to 2 by taking

for an arbitrarily large constant

a. Whenever target distribution

p has close to full entropy, this is only twice the optimal number of random bits that would be required according to the Knuth–Yao lower bound [

21] in the usual case when full knowledge of

p is available in a central place rather than having to perform remote sampling. Note, however, that, if our primary consideration is to optimize communication for the classical simulation of entanglement, as in

Section 3, choosing

would be a better idea because the summation in the left-hand term of Equation (

13) dominates that of the right-hand term. For this inconsequential optimization,

a does not have to be a constant, but it should not exceed

, where

is our usual upper bound on the number of possible outcomes for each participant (if it exists), lest the right-hand term of Equation (

13) overtake the left-hand term. Provided

exists, the expected number of random bits that needs to be drawn is linear in the number of participants.

The need for continuous random variables was not the only unrealistic assumption in Algorithms 1–3. We had also assumed implicitly that custodians know their private parameters precisely (and that the leader knows exactly each entry of density matrix

in

Section 3). This could be reasonable in some situations, but it could also be that some of those parameters are transcendental numbers or the result of applying transcendental functions to other parameters, for example

. More interestingly, it could be that the actual parameters are spoon-fed to the custodians by

examiners, who want to test the custodians’ ability to respond appropriately to unpredictable inputs. However, all we need is for the custodians to be able to obtain their own parameters with arbitrary precision, so that they can provide that information to the leader upon request. For example, if a parameter is

and the leader requests a

k-bit approximation of that parameter, the custodian can compute some number

such that

and provide it to the leader. For communication efficiency purposes, it is best if

itself requires only

k bits to be communicated, or perhaps one more (for the sign) in case the parameter is constrained to be between

and 1. It is even better if the custodian can supply a

k-bit

truncation because this enables the possibility to upgrade it to a

-bit truncation by the transmission of a single bit upon request from the leader, as we have done explicitly for the simulation of entanglement at line 19 of Algorithm 3 in

Section 3.

Nevertheless, it may be impossible for the custodians to compute truncations of their own parameters in some cases, even when they can compute arbitrarily precise approximations. Consider for instance a situation in which one parameter held by a custodian is

for some angle

for which he can only obtain arbitrarily precise truncations. Unbeknownst to the custodian,

and therefore

. No matter how many bits the custodian obtains in the truncation of

, however, he can never decide whether

or

. In the first case,

and therefore the 1-bit truncation of

x should be 0, whereas in the second (correct) case,

and therefore the 1-bit truncation of

x is

(or

in binary). Thus, the custodian will be unable to respond if the leader asks him for a 1-bit truncation of

x, no matter how much time he spends on the task. In this example, by contrast, the custodian can supply the leader with arbitrarily precise

approximations of

x from appropriate approximations of

. Should a situation like this occur, for instance in the simulation of entanglement, there would be two solutions. The first one is for the custodian to transmit increasingly precise truncations of

to the leader and let

him compute the cosine on it. This approach is only valid if it is known at the outset that the custodian’s parameter will be of that form, which was essentially the solution taken in our earlier work on the simulation of the quantum

m-partite GHZ distribution [

1]. The more general solution is to modify the protocol and declare that custodians can send arbitrary approximations to the leader rather than truncations. The consequence on Algorithm 3 is that line 19 would become much more expensive since each custodian

i would have to transmit a fresh one-bit-better approximation for the real and imaginary parts of the

entries defining matrix

. As a result, efficiency parameter

in Equation (

6) would become

which should be compared with the much smaller (constant) value of

given in Equation (

12) when truncations of the parameters are available. Nevertheless, taking

again, this increase in

would make no significant difference in the total number of bits transmitted for the simulation of entanglement because it would increase only the right-hand term in Equations (

14) and (

20), but not enough to make it dominate the left-hand term. All counted, we still have an expected number of bits transmitted that is upper-bounded by

whenever all the

’s and

’s are upper-bounded by some constant

, which again is on the order of

.

5. Discussion and Open Problems

We have introduced and studied the general problem of sampling a discrete probability distribution characterized by parameters that are scattered in remote locations. Our main goal was to minimize the amount of communication required to solve this conundrum. We considered both the unrealistic model in which arithmetic can be carried out with infinite precision and continuous random variables can be sampled exactly, and the more reasonable

random bit model studied by Knuth and Yao [

21], in which all arithmetic is carried out with finite precision and the only source of randomness comes from independent tosses of a fair coin. For a small increase in the amount of communication, we can fine-tune our technique to require only twice the number of random bits that would be provably required in the standard context in which all the parameters defining the probability distribution would be available in a single location, provided the entropy of the distribution is close to maximal.

When our framework is applied to the problem of simulating quantum entanglement with classical communication in its essentially most general form, we find that an expected number of

bits of communication suffices when there are

m participants and each one of them (in the simulated world) is given an arbitrary quantum system of bounded dimension and asked to perform an arbitrary generalized measurement (

povm) with a bounded number of possible outcomes. This result generalizes and supersedes the best approach previously known in the context of multi-party entanglement, which was for the simulation of the

m-partite GHZ state under projective measurements [

1]. Our technique also applies without the boundedness condition on the dimension of individual systems and the number of possible outcomes per party, provided those parameters remain finite.

It would be preferable if we could eliminate the dependency of the expected number of bits of communication on the number of possible measurement outcomes. Is perfect simulation possible at all when that number is infinite, regardless of communication efficiency, a scenario in which our approach cannot be applied? In the bipartite case, Serge Massar, Dave Bacon, Nicolas Cerf, and Richard Cleve proved that classical communication can serve to simulate the effect of arbitrary measurements on maximally entangled states in a way that does not require any bounds on the number of possible outcomes [

6]. More specifically, they showed that arbitrary

povms on systems of

n Bell states can be simulated with an expectation of

bits of communication. However, their approach exploits the equivalence of this problem with a variant known as

classical teleportation [

5], in which one party has full knowledge of the quantum state and the other has full knowledge of the measurement to be applied to that state. Unfortunately, the equivalence between those two problems breaks down in a multipartite scenario and there is no obvious way to extend the approach. We leave as an open question the possibility of a simulation protocol in which the expected amount of communication would only depend on the number of participants and the dimension of their simulated quantum systems.

Our work leaves several additional important questions open. Recall that our approach provides a bounded amount on the

expected communication required to perform exact remote sampling. The most challenging open question is to determine if it is possible to achieve the same goal with a guaranteed bounded amount of communication

in the worst case. If possible, this would certainly require the participants to share ahead of time the realization of random variables, possibly even continuous ones. Furthermore, a radically different approach would be needed since we had based ours on the von Neumann rejection algorithm, which has intrinsically no worst-case upper bound on its performance. This task may seem hopeless, but it has been shown to be possible for special cases of entanglement simulation in which the remote parameters are taken from a continuum of possibilities [

3,

8], despite earlier “proofs” that it is impossible [

2].

A much easier task would be to consider other communication models, in which communication is no longer restricted to being between a single leader and various custodians. Would there be an advantage in communicating through the edges of a complete graph? Obviously, the maximum possible savings in terms of communication would be a factor of 2 since any time one participant wants to send a bit to some other participant, he can do so via the leader. However, if we care not only about the total number of bits communicated, but also the

time it takes to complete the protocol in a realistic model in which each party is limited to sending and receiving a fixed number of bits at any given time step, parallelizing communication could become valuable. We had already shown in Ref. [

1] that a parallel model of communication can dramatically improve the time needed to sample the

m-partite GHZ distribution. Can this approach be generalized to arbitrary remote sampling settings?

Finally, we would like to see applications for remote sampling outside the realm of quantum information science.