1. Introduction

Information-related measures are useful tools for multi-variable data analysis, as measures of dependence among variables, and as descriptions of order and disorder in biological and physical systems. The mathematical relationships among these measures are therefore of significant inherent interest. The description of order and disorder in physical, chemical and biological systems is fundamental. It plays a central role not only in the physics and chemistry of condensed matter, but also in systems with biological levels of complexity, including interactions of genes, macromolecules, cells and networks of neurons; it is, however, certainly not well understood. Mathematical descriptions of the underlying order, and of transitions between states of order, are still far from satisfactory and are a subject of much current research (for example [1,2]). The difficulty arises in several forms, but the dominant contributors are the number and high degree of effective interactions among components, and their non-linearity. There have been many efforts to define information-based measures as a language for describing the order and disorder of systems and the transfer of information. Negative entropy, joint entropies, multi-information and various manifestations of Kullback–Leibler (K–L) divergence are among the key concepts. Interaction information is one of these. It is an entropy-based measure for multiple variables introduced by McGill in 1954 [3] as a generalization of mutual information. It has been used effectively in a number of theoretical developments and applications of information-based analysis [4,5,6,7], and has several interesting properties, including symmetry under permutation of variables. This symmetry is shared with joint entropies and multi-information, though the interpretation of interaction information as a measure of information in the usual sense is ambiguous, since it can take negative values. In previous work we have proposed complexity and dependence measures related to this quantity [8,9]. Here we focus on elucidating the character and source of some of the mathematical properties that relate these measures. The formalism presented here can be viewed as a unification of a wide range of information-related measures, in the sense that it makes the relations between them explicit.

This paper is structured as follows. We first review definitions and preliminaries relevant to information measures, lattices and Möbius inversion. In the next section we define operators that map the functions on the lattice into one another, expressing the Möbius inversions as operator equations. We then determine the products of the operators and, completing the set of operators with a lattice complement operator, we show that together they form a group that is isomorphic to the symmetric group $S_3$. In the next section we express previous results in defining dependency and complexity measures in terms of the operator formalism, and illustrate relationships between many commonly used information measures, such as interaction information and multi-information. We derive a number of new relations using this formalism, and point out the relationship between multi-information and certain maximum entropy limits. This suggests a wide range of maximum entropy criteria in the relationships inherent in the operator algebra, which are not further explored here. The next section focuses on the relations between these functions and the probability distributions underlying the symmetries. We then illustrate an operator equation expressing our dependence measure in terms of conditional log likelihood functions. Finally, we define a generalized form of the fundamental inversion relation, and show how these operators on functions can be additively decomposed in a variety of ways.

2. Preliminaries

We review briefly the elements of information theory and lattices that are relevant to this paper, and clarify some notational conventions used.

2.1. Information Theory

Consider a set of $n$ discrete variables $\nu = \{X_1, X_2, \ldots, X_n\}$, denoted simply as $\nu$ if there is no ambiguity. We use $\nu - X_i$ to denote the set $\nu$ without variable $X_i$. $p(\nu)$ denotes a joint probability distribution over $\nu$, and $p(X_i \mid \nu - X_i)$ denotes a conditional probability distribution.

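The marginal entropy of a single variable $X_i$ is defined as $H(X_i) = -\sum_{x_i} p(x_i) \log p(x_i)$. Similarly, given a set of variables $\tau = \{X_1, \ldots, X_m\}$, the joint entropy is defined as $H(\tau) = -\sum_{x} p(x) \log p(x)$, where $x$ traverses all possible states of $\tau$. We write $H(X_i \mid \tau - X_i)$ to denote the conditional entropy of $X_i$ on the rest of the variables $\tau - X_i$, obtained by using the conditional distribution and averaging with respect to the marginal. The difference in joint entropy of sets of variables with and without $X_i$ is called the differential entropy:

$$\Delta_{X_i} H(\tau) = H(\tau) - H(\tau - X_i) = H(X_i \mid \tau - X_i). \qquad (1)$$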

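The mutual information measuring the mutual dependence between two variables $X_1$ and $X_2$ is defined as

$$I(X_1; X_2) = \sum_{x_1, x_2} p(x_1, x_2) \log \frac{p(x_1, x_2)}{p(x_1)\, p(x_2)}. \qquad (2)$$

Equivalently, the mutual information can be expressed via marginal and joint entropies:

$$I(X_1; X_2) = H(X_1) + H(X_2) - H(X_1, X_2). \qquad (3)$$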

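Similar to Equation (3), given three variables $X_1$, $X_2$, and $X_3$, the conditional mutual information can be defined as

$$I(X_1; X_2 \mid X_3) = H(X_1, X_3) + H(X_2, X_3) - H(X_1, X_2, X_3) - H(X_3). \qquad (4)$$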

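A generalization of mutual information to more than two variables is called interaction information [3]. For three variables it is defined as the difference between the mutual information without and with knowledge of the third variable:

$$I(X_1; X_2; X_3) = I(X_1; X_2) - I(X_1; X_2 \mid X_3). \qquad (5)$$

When expressed entirely in terms of entropies we have

$$I(X_1; X_2; X_3) = H(X_1) + H(X_2) + H(X_3) - H(X_1, X_2) - H(X_1, X_3) - H(X_2, X_3) + H(X_1, X_2, X_3). \qquad (6)$$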

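Consider the interaction information for a set of $n$ variables,

$$I(\nu) = -\sum_{\tau \subseteq \nu} (-1)^{|\tau|} H(\tau). \qquad (7)$$

The interaction information $I(\nu)$ for a set of $n$ variables obeys a recursion relation that parallels that for the joint entropy of sets of variables, $H(\nu)$, which is derived in turn directly from the probability chain rule:

$$H(\nu) = H(\nu - X_n) + H(X_n \mid \nu - X_n), \qquad I(\nu) = I(\nu - X_n) - I(\nu - X_n \mid X_n), \qquad (8)$$

where the second terms on the right are conditionals. These two information functions are known to be related by Möbius inversion [4,5,6,7].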

Given Equation (7), we define the differential interaction information, $\Delta_{X_n} I(\nu)$, as the difference between values of successive interaction informations arising from adding a variable:

$$\Delta_{X_n} I(\nu) = I(\nu) - I(\nu - X_n) = -I(\nu - X_n \mid X_n). \qquad (9)$$

The last equality in Equation (9) comes from the recursive relation for the interaction information, Equation (8). The differential interaction information is based on providing the target variable $X_n$ to be added to the set of $n - 1$ variables, and is therefore asymmetric. If we multiply the differential interaction informations for all possible choices of the target variable, the resulting measure is symmetric, and we call it a symmetric delta,

$$\bar{\Delta}(\nu) = \prod_{i=1}^{n} \Delta_{X_i} I(\nu). \qquad (10)$$

There is another measure of multivariable dependence called multi-information, or total correlation [10], which is defined as the difference between the sum of single entropies for each variable of a set and the joint entropy for the entire set:

$$\Omega(\nu) = \sum_{i=1}^{n} H(X_i) - H(\nu). \qquad (11)$$

Multi-information is frequently used because it is always non-negative and is zero if and only if all the variables are mutually independent. We can think of it as a kind of conglomerate of dependencies among members of the set $\nu$. At the two-variable level, multi-information, the Kullback–Leibler divergence between the joint distribution and the product of the marginals, and interaction information are all identical, and equal to mutual information. There is an inherent duality between the marginal entropy functions and the interaction information functions based on Möbius inversion, which we will show in detail in Section 3. Bell described an elegantly symmetric form of the inversion and identified the source of this duality in the lattice associated with the variables [4]. The duality is based on the inclusion lattice of the set of variables. We start with this symmetric inversion relation and extend it to an algebra of operators on these lattices. We will first define the lattice and other relevant concepts from lattice theory before discussing Möbius inversion further.
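As a quick numerical illustration of the two-variable coincidence stated above, the following Python sketch computes the mutual information from Equation (2) (which is itself the K–L divergence between the joint distribution and the product of the marginals), from entropies as in Equation (3), as multi-information with $n = 2$, and as interaction information using the subset sum $-\sum_{\tau}(-1)^{|\tau|} H(\tau)$ in Bell's sign convention. The binary variables, the random distribution and the helper names are illustrative assumptions, not part of the original analysis.

```python
import random
from itertools import combinations
from math import isclose, log2

def random_joint(seed=0):
    """A strictly positive joint distribution of two binary variables (illustrative data)."""
    rng = random.Random(seed)
    w = {(a, b): rng.random() + 0.01 for a in (0, 1) for b in (0, 1)}
    z = sum(w.values())
    return {k: v / z for k, v in w.items()}

def entropy(p, idx):
    """Entropy in bits of the marginal of p on the variable positions in idx."""
    marg = {}
    for x, px in p.items():
        key = tuple(x[i] for i in idx)
        marg[key] = marg.get(key, 0.0) + px
    return -sum(q * log2(q) for q in marg.values() if q > 0)

def two_variable_measures(p):
    h1, h2, h12 = entropy(p, (0,)), entropy(p, (1,)), entropy(p, (0, 1))
    p1 = {a: sum(v for x, v in p.items() if x[0] == a) for a in (0, 1)}
    p2 = {b: sum(v for x, v in p.items() if x[1] == b) for b in (0, 1)}
    return {
        # Eq. (2), which is also the K-L divergence D(p(x1, x2) || p(x1) p(x2)).
        "kl": sum(v * log2(v / (p1[a] * p2[b])) for (a, b), v in p.items() if v > 0),
        # Eq. (3): marginal and joint entropies.
        "mi": h1 + h2 - h12,
        # Multi-information (sum of single entropies minus joint entropy), n = 2.
        "multi": (h1 + h2) - h12,
        # Interaction information: -sum over subsets tau of (-1)^|tau| H(tau).
        "interaction": -sum((-1) ** r * entropy(p, t)
                            for r in range(3) for t in combinations((0, 1), r)),
    }

print(two_variable_measures(random_joint(seed=1)))
```

Because every quantity is computed from the same marginals, the four values agree up to floating-point rounding, and for a product distribution they all vanish.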

2.2. Lattice Theory

We review here some definitions from lattice theory that we will use [11]. We say that a set $P$ is a poset (a partially ordered set) if there is a partial order defined on it, $(P, \le)$. A partial order ($\le$) is a binary relation that is reflexive, antisymmetric, and transitive. We write $a \le b$ to denote the partial order between elements $a$ and $b$ of a poset. Note also that the inverse of a partial order is a partial order. A chain of a poset $P$ is a subset $C \subseteq P$ such that for any two elements $a, b \in C$ either $a \le b$ or $b \le a$. Similarly, a path of length $k$ is a subset $\{a_0, a_1, \ldots, a_k\} \subseteq P$ such that for any $0 \le i < k$ either $a_i \le a_{i+1}$ or $a_{i+1} \le a_i$. Note that any chain is a path, but not the other way around, since $a_i$ and $a_j$ of a path need not be ordered if $|i - j| > 1$.

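Let $S$ be a subset of a poset $P$. The minimum of $S$, if it exists, is an element $s_{\min} \in S$ such that $s_{\min} \le s$ for any $s \in S$. Similarly, the maximum of $S$, if it exists, is an element $s_{\max} \in S$ such that $s \le s_{\max}$ for any $s \in S$. A poset $P$ has a top element $\top$ (a greatest element) iff $\top \in P$ and $a \le \top$ for any $a \in P$. Similarly, a poset $P$ has a bottom element $\bot$ (a least element) iff $\bot \in P$ and $\bot \le a$ for any $a \in P$.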

The dual of a poset $(P, \le)$ is $(P, \ge)$, where $\ge$ is the inverse partial order of $\le$. For any statement based on the partial order $\le$ and true about all posets, the dual statement (based on the inverse partial order $\ge$) is also true about all posets.

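For a poset $P$ we call $D \subseteq P$ a down-set (or an ideal) iff, for any $d \in D$ and $a \in P$, $a \le d$ implies $a \in D$. Dually, we call $U \subseteq P$ an up-set (or a filter) iff, for any $u \in U$ and $a \in P$, $u \le a$ implies $a \in U$. Note that a set $D$ is a down-set of $P$ iff its set complement $P \setminus D$ is an up-set of $P$.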

Given a subset $S$ of a poset $P$, $u \in P$ is an upper bound of $S$ iff $s \le u$ for any $s \in S$. Dually, $l \in P$ is a lower bound of $S$ iff $l \le s$ for any $s \in S$. The join of $S$, if it exists, is the least upper bound of $S$, that is, the least of the upper bounds of $S$. Dually, the meet of $S$, if it exists, is the greatest lower bound of $S$.

A poset in which every two elements have a unique join and a unique meet is called a lattice. A lattice that contains a top element $\top$ and a bottom element $\bot$, such that $\bot \le a \le \top$ for every element $a$ of the lattice, is called a bounded lattice. An inclusion lattice (also called a subset lattice) is a typical example of a lattice: it is defined on all subsets of a given set $S$, ordered by subset inclusion $\subseteq$, with join and meet given by union and intersection. If a set $S$ is finite, then its corresponding inclusion lattice is bounded, where the top element is $S$ itself and the bottom element is the empty set.
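The down-set/up-set remark and the description of the inclusion lattice can be checked by brute force on a small example. This Python sketch enumerates every family of elements of the inclusion lattice of $\{1, 2, 3\}$, confirms that a family is a down-set exactly when its complement is an up-set, and confirms that union and intersection give the join and meet. The helper names are hypothetical; the count of 20 down-sets is the Dedekind number for a three-element set.

```python
from itertools import combinations

def powerset(items):
    """All subsets of items, as frozensets."""
    items = list(items)
    return [frozenset(c) for r in range(len(items) + 1) for c in combinations(items, r)]

def is_down_set(D, P):
    """Every element of P lying below a member of D is itself in D."""
    return all(a in D for d in D for a in P if a <= d)

def is_up_set(U, P):
    """Every element of P lying above a member of U is itself in U."""
    return all(a in U for u in U for a in P if u <= a)

# Inclusion lattice of {1, 2, 3}, ordered by subset inclusion (<= on frozensets).
P = powerset([1, 2, 3])
all_P = frozenset(P)

# Check the complement property for all 2^8 = 256 families of lattice elements.
families = powerset(P)
complement_ok = all(is_down_set(D, P) == is_up_set(all_P - D, P) for D in families)
n_down_sets = sum(is_down_set(D, P) for D in families)

def join_is_union(a, b):
    """a | b is an upper bound of {a, b} and lies below every other upper bound."""
    ub = [c for c in P if a <= c and b <= c]
    return (a | b) in ub and all((a | b) <= c for c in ub)

def meet_is_intersection(a, b):
    """a & b is a lower bound of {a, b} and lies above every other lower bound."""
    lb = [c for c in P if c <= a and c <= b]
    return (a & b) in lb and all(c <= (a & b) for c in lb)

lattice_ok = all(join_is_union(a, b) and meet_is_intersection(a, b) for a in P for b in P)
print(complement_ok, n_down_sets, lattice_ok)
```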

3. Möbius Dualities

Many applications make use of the relations among information theoretic quantities like joint entropies and interaction information that are formed by what can be called Möbius duality [4]. Restricting ourselves to functions on subset lattices, we note that a function on a lattice is a mapping of each lattice element (subset of variables) to the reals. The Möbius function for this lattice, in the symmetric form used here, is

$$\mu(\tau) = (-1)^{|\tau|},$$

where $\tau$ is a subset of $\nu$ and $|\tau|$ is the cardinality of the subset.

3.1. Möbius Inversion

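Consider a set of $n$ variables $\nu$ and define $g(\nu)$, the dual of $f(\nu)$ for the set of variables:

$$g(\nu) = -\sum_{\tau \subseteq \nu} (-1)^{|\tau|} f(\tau). \qquad (12a)$$

Note that the function $g$ is the interaction information if $f$ is the entropy function $H$, adopting the sign convention of [4]. It can easily be shown that the symmetric relation holds:

$$f(\nu) = -\sum_{\tau \subseteq \nu} (-1)^{|\tau|} g(\tau). \qquad (12b)$$

(Substituting Equation (12a) into the right-hand side gives $\sum_{\eta \subseteq \nu} (-1)^{|\eta|} f(\eta) \sum_{\eta \subseteq \tau \subseteq \nu} (-1)^{|\tau|}$, and the inner sum vanishes unless $\eta = \nu$.) The relations defined in Equation (12a,b) represent a symmetric form of Möbius inversion, and the functions $f$ and $g$ can be called Möbius duals, and inversions of one another.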
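A minimal sketch of the symmetric inversion, assuming the sign convention $g(\nu) = -\sum_{\tau \subseteq \nu} (-1)^{|\tau|} f(\tau)$ for Equation (12a): applying the same sum twice should return the original function, and with $f = H$ the result at the full set should match the three-variable entropy expansion of the interaction information. The random three-variable binary distribution and the names `subsets`, `mobius_dual` and `entropies` are illustrative assumptions.

```python
import random
from itertools import combinations, product
from math import isclose, log2

def subsets(s):
    """All subsets of s: the down-set of s in the inclusion lattice."""
    s = sorted(s)
    return [frozenset(c) for r in range(len(s) + 1) for c in combinations(s, r)]

def mobius_dual(f):
    """Eq. (12a) at every lattice element: g(s) = -sum_{t subset of s} (-1)^{|t|} f(t)."""
    return {s: -sum((-1) ** len(t) * f[t] for t in subsets(s)) for s in f}

def entropies(p, n):
    """Joint entropy H(tau), in bits, for every subset tau of the n variables."""
    H = {}
    for tau in subsets(range(n)):
        marg = {}
        for x, px in p.items():
            key = tuple(x[i] for i in sorted(tau))
            marg[key] = marg.get(key, 0.0) + px
        H[tau] = -sum(q * log2(q) for q in marg.values() if q > 0)
    return H

# Illustrative random joint distribution of three binary variables X_0, X_1, X_2.
rng = random.Random(0)
states = list(product((0, 1), repeat=3))
weights = [rng.random() + 0.01 for _ in states]
total = sum(weights)
p = {s: w / total for s, w in zip(states, weights)}

H = entropies(p, 3)
I = mobius_dual(H)       # interaction information of every subset (g with f = H)
H_back = mobius_dual(I)  # Eq. (12b): the same sum applied to g recovers f
print(I[frozenset({0, 1, 2})])
```

Representing a lattice function as a dictionary keyed by frozensets keeps the code close to the set notation of the text, at the cost of exponential enumeration, which is unavoidable for full lattice sums.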

Now consider an inclusion lattice. The Möbius inversion is a convolution of the Möbius function with any function defined on the lattice, over all of its elements (subsets) between the argument subset, $\nu$, of the function and the empty set. The summation in the inversion is over all the elements on all chains between $\nu$ and the empty set, counting each element only once; this set of elements is the down-set of $\nu$ in the inclusion lattice (see Section 2). The empty set, at the limit of the range of the convolution, can be considered as the “reference element”. We use the idea of a reference element in Section 7 in generalizing the inversion relations. The range of the convolution can of course be limited at the top element (largest subset) and the bottom element of the lattice. In defining the Möbius operators below we need to carefully define how the range is determined.

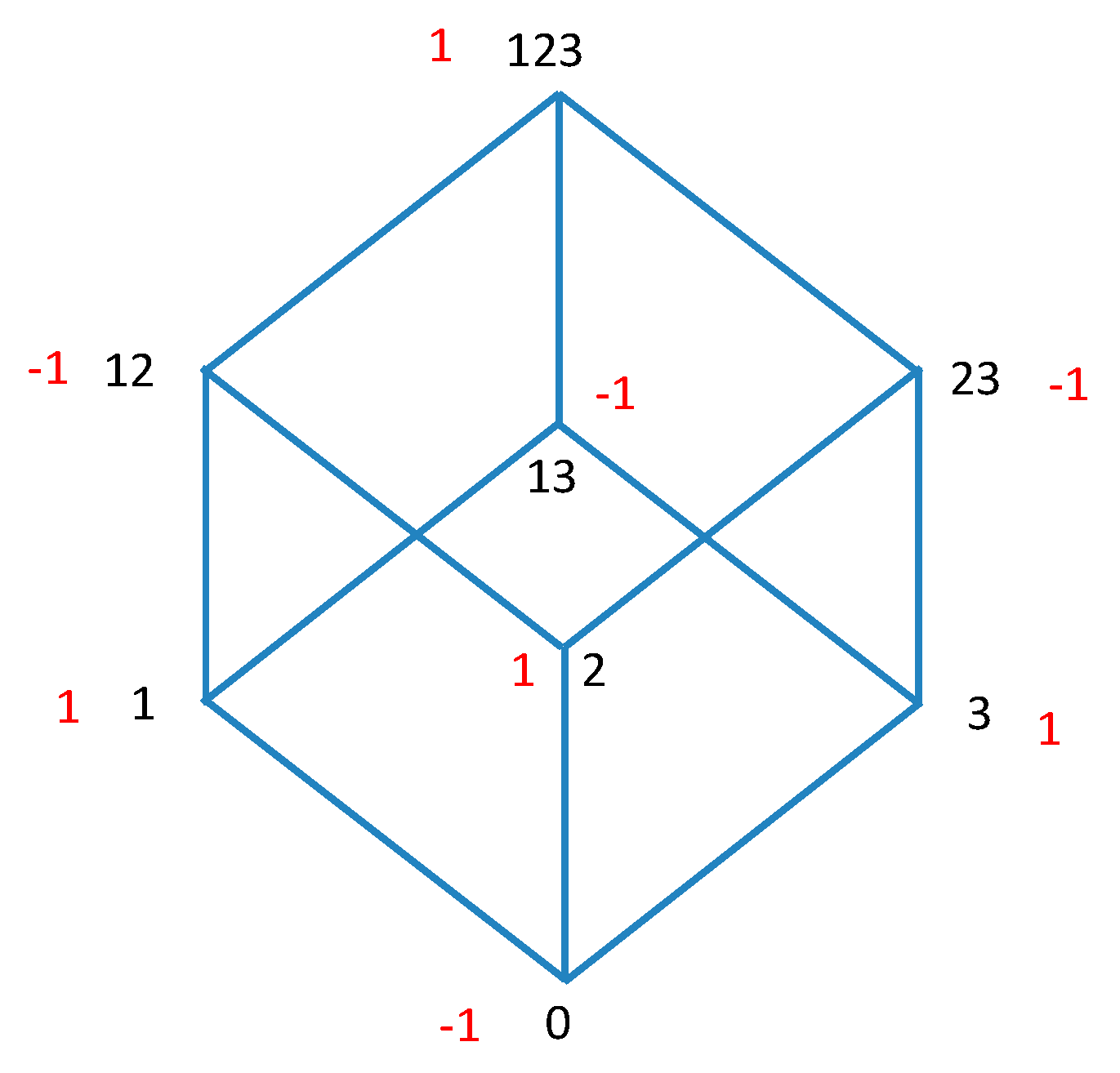

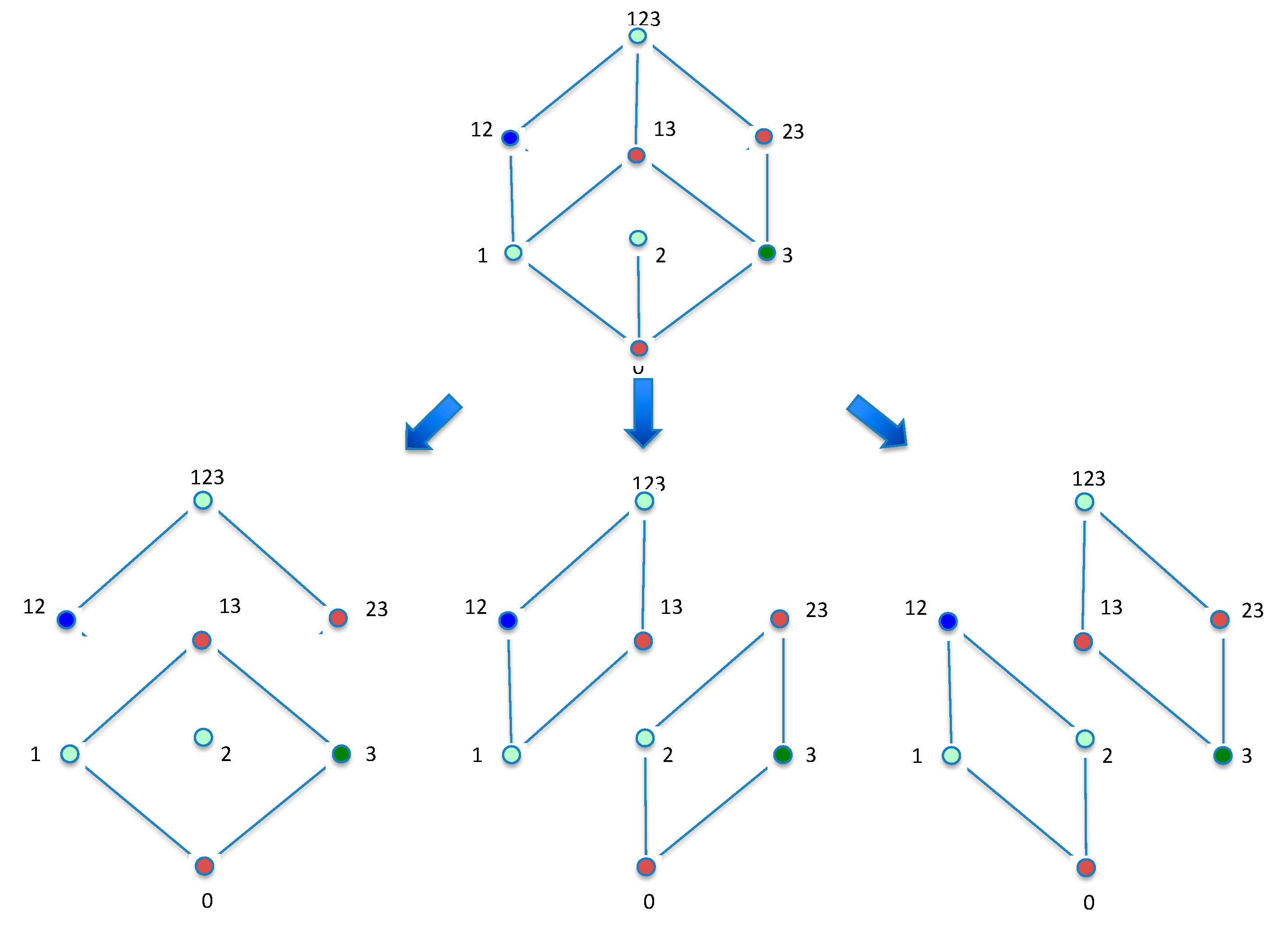

To illustrate the relations concretely, the nodes and the Möbius function are shown graphically for three variables in Figure 1. When the functions in Equation (12) are mapped onto the lattice for three variables, these equations represent the convolution of the lattice functions and the Möbius function over the lattice.

3.2. Möbius Operators

The convolutions with the Möbius function over the lattice in Equation (12) define mappings that can be expressed as operators. The operators can be thought of as mappings of one function on the lattice into another. A function on the lattice, in turn, is a map of the subsets of variables at each node into the real numbers. The operators are linear, so they distribute over sums and differences of functions.

Definition 1. Möbius down-set operator.

Given a set of variables, $\nu$, which is the top element in the inclusion lattice, we define the Möbius down-set operator, $\hat{m}$, that operates on a function on this lattice:

$$\hat{m} f(\nu) = -\sum_{\tau \subseteq \nu} (-1)^{|\tau|} f(\tau) = g(\nu). \qquad (13a)$$

The down-set operator is defined as an operator form of the convolution with the Möbius function: the sum, over the lattice of subsets of $\nu$, of the product of the values of the function and the Möbius function. The upper bound of this convolution is the entire set, $\nu$; the lower bound is the empty set. Likewise, we can define a Möbius up-set operator. The definition differs significantly in that both limits of the up-set convolution (the argument set and the full set) need to be specified, whereas the down-set operator uses the empty set as its other limit unless otherwise specified.

Definition 2. Möbius up-set operator.

Given a set of variables, $\tau \subseteq \nu$, the operator $\hat{M}$ is defined as the convolution operator on a function on the inclusion lattice, where the sum is over the lattice of supersets of $\tau$:

$$\hat{M} f(\tau) = -\sum_{\tau \subseteq \eta \subseteq \nu} (-1)^{|\eta|} f(\eta) = h(\tau). \qquad (13b)$$

The lower bound of this convolution is $\tau$ and the upper bound is the complete set $\nu$.

Given a function, $f$, Equations (13a,b) define the functions $g$ and $h$, respectively: the down-set and up-set inverses, or duals, of $f$. The sum in the expression of Equation (13a) is the same as the symmetric form of the Möbius inversion [4]: $f$ and $g$ in Equation (13a) are interchangeable, dual with respect to the down-set operator (see Equations (12a) and (12b)). Given a subset argument of the function, the up-set operator induces a convolution whose limits are the given subset and the full set, while the limits of the down-set operator's convolution are the given subset and the empty set.

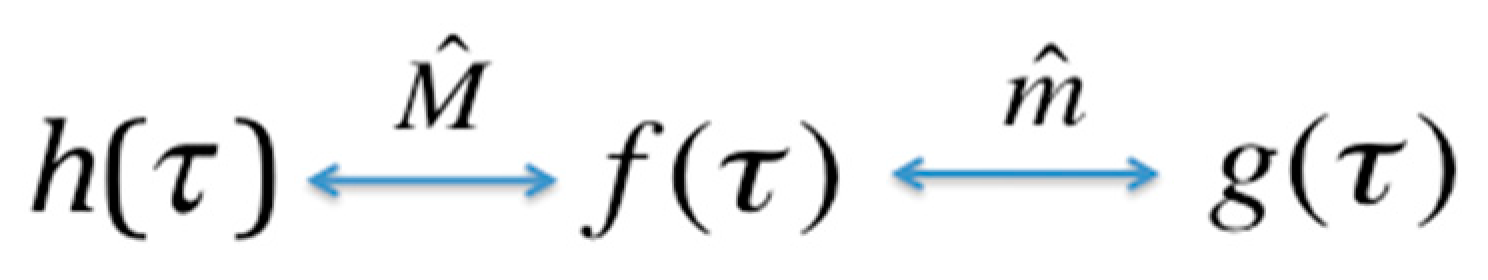

We see from Equation (13a) that the nature of Möbius inversion implies that the down-set operator applied twice yields the identity, $\hat{m}\hat{m} = \hat{I}$. Similarly, using Equation (13b) we see that $\hat{M}\hat{M} = \hat{I}$.

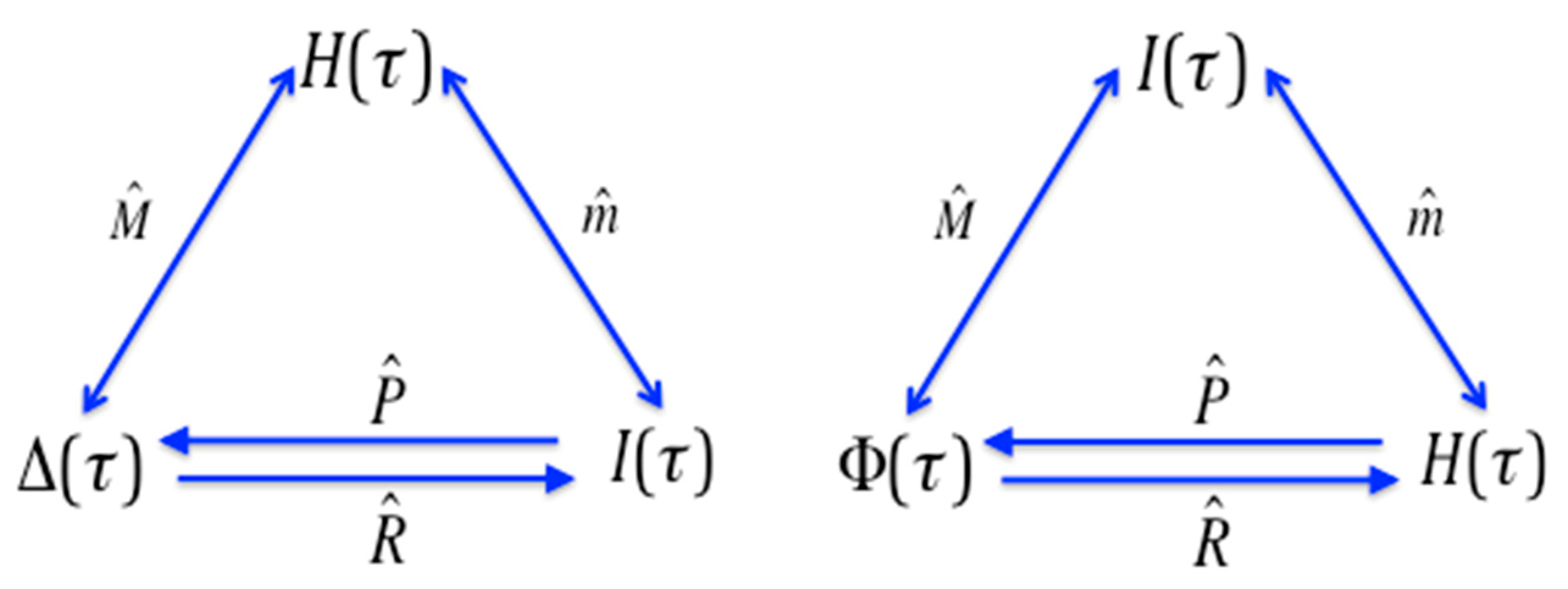

This is an expression of the duality: this involutive (self-inverse) property of the Möbius operators is equivalent to the symmetry in Equation (12); in other words, the exchangeability in these equations, or duality of the functions, is exactly the same property as the involutivity of the operators. The relationships between pairs of the dual functions, generated by the operators, are shown in the diagram in Figure 2. The range of the convolution operators is clear here, but this will not always be true, and where it is ambiguous we use a subscript on the operator to identify the reference set. We will need this subscript in Section 7.

To advance this formalism further we need to define another operator on the inclusion lattice. The complementation operator, $\hat{X}$, has the effect of mapping function values of all elements of the lattice (subsets) into the function values of the corresponding set complement elements. For example, node 1 maps into node 23 in Figure 1, as 23 is the complement of 1 for the three-element set. Viewed in 3D as a geometric object, as shown in Figure 1, the complementation corresponds to an inversion of the lattice, with all 3D coordinates mapping into their opposites through the origin at the geometric center of the cube. We thus define the operator $\hat{X}$, acting on functions whose arguments are subsets $\tau$ of the set $\nu$:

$$\hat{X} f(\tau) = (-1)^{|\nu|} f(\nu \setminus \tau). \qquad (14)$$

The sign change factor is added since inversion of the lattice also has the effect of shifting the Möbius function by a sign for odd numbers of total variables on the lattice.
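The self-inverse behaviour of the operators can be checked numerically. The sketch below assumes the sign conventions $\hat{m} f(\tau) = -\sum_{\eta \subseteq \tau}(-1)^{|\eta|} f(\eta)$, $\hat{M} f(\tau) = -\sum_{\tau \subseteq \eta \subseteq \nu}(-1)^{|\eta|} f(\eta)$ and $\hat{X} f(\tau) = (-1)^{|\nu|} f(\nu \setminus \tau)$; these are our reading of Equations (13a,b) and of the complementation operator, not a verified copy of the authors' definitions. Functions on the lattice are stored as Python dictionaries keyed by frozensets, and all names are hypothetical.

```python
import random
from itertools import combinations

def subsets(s):
    """All subsets of s, as frozensets."""
    s = sorted(s)
    return [frozenset(c) for r in range(len(s) + 1) for c in combinations(s, r)]

def down(f):
    """Down-set operator m-hat, applied at every lattice element."""
    return {s: -sum((-1) ** len(t) * f[t] for t in subsets(s)) for s in f}

def up(f, full):
    """Up-set operator M-hat: sum over the supersets of each element, up to full."""
    return {s: -sum((-1) ** len(t) * f[t] for t in subsets(full) if s <= t) for s in f}

def complement(f, full):
    """Complementation operator X-hat: value at the set complement, times (-1)^|full|."""
    sign = (-1) ** len(full)
    return {s: sign * f[full - s] for s in f}

def same(a, b, tol=1e-12):
    """Two lattice functions agree at every element, up to rounding."""
    return all(abs(a[s] - b[s]) <= tol for s in a)

rng = random.Random(42)
for n in range(1, 5):
    full = frozenset(range(1, n + 1))
    f = {s: rng.uniform(-1, 1) for s in subsets(full)}   # arbitrary lattice function
    print(n, same(down(down(f)), f), same(up(up(f, full), full), f),
          same(complement(complement(f, full), full), f))
```

Each printed triple should read `True True True`: every operator applied twice returns the function it started from.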

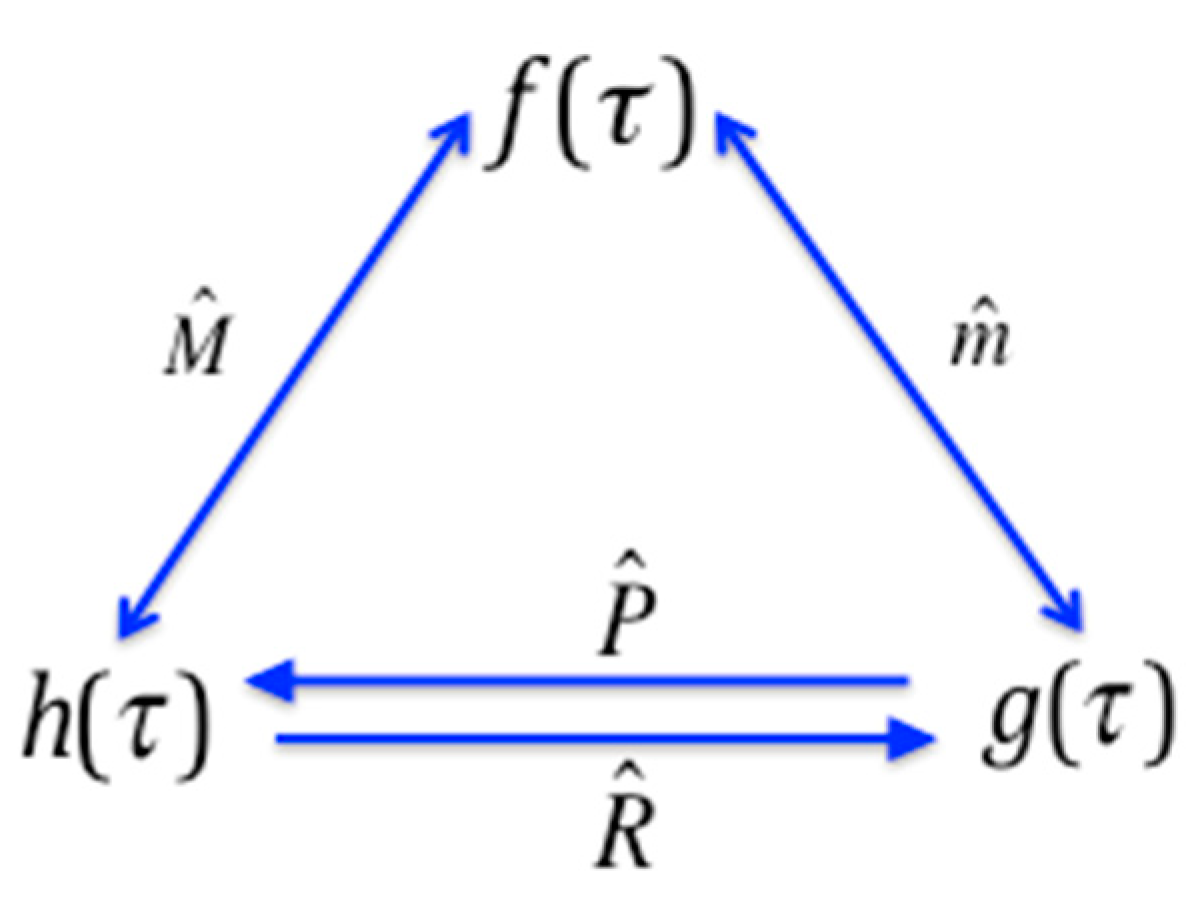

If we define the composite operators,

and

, as:

the pairwise relations among the functions and the operators shown in Figure 3 then follow. The three- and four-variable cases of the relationships in Figure 3 can easily be confirmed by direct calculation, and the general case is also easy to prove. The proofs are direct and follow from the Möbius inversion sums, by keeping track of the effects of each of the inversion and convolution operators, and are not presented here.

Let us now collect the operators of Figure 3, add the identity operator and the two composite operators, and calculate the full product table of the set of operators. This product table of the operators is shown in Table 1.

It is immediately clear that this set of six operators forms a group: the set is closed under composition, it contains the identity element, it contains the inverse of each of its elements, and composition of operators is associative. Furthermore, examination of the table immediately shows that it is isomorphic to the symmetric group $S_3$, the group of permutations of three objects.

Table 2 shows the 3 × 3 matrix representation of the group $S_3$, with the one-line notation of the operator effect, and the correspondence between the Möbius operators and the $S_3$ representation.
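To see the target of the isomorphism concretely, this Python sketch builds the six 3 × 3 permutation matrices of $S_3$, labels them in one-line notation, and computes their product table. It does not reproduce the specific assignment of Möbius operators to permutations given in Table 2; it only exhibits the group facts the argument relies on: closure, inverses, non-commutativity, and the fact that $S_3$ has exactly three self-inverse non-identity elements (the transpositions), so under any isomorphism the self-inverse operators $\hat{m}$ and $\hat{M}$ must correspond to transpositions. The helper names are illustrative.

```python
from itertools import permutations

def perm_matrix(perm):
    """3 x 3 permutation matrix sending basis vector j to basis vector perm[j]."""
    n = len(perm)
    return tuple(tuple(1 if perm[j] == i else 0 for j in range(n)) for i in range(n))

def matmul(a, b):
    """Product of two square integer matrices stored as tuples of tuples."""
    n = len(a)
    return tuple(tuple(sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n))
                 for i in range(n))

# One-line notation labels, e.g. "213" for the permutation swapping the first two objects.
elements = {"".join(str(i + 1) for i in p): perm_matrix(p) for p in permutations(range(3))}
label_of = {m: name for name, m in elements.items()}
identity = elements["123"]

# Product table: entry [a][b] is the label of the matrix product a * b (None if not in S_3).
table = {a: {b: label_of.get(matmul(ma, mb)) for b, mb in elements.items()}
         for a, ma in elements.items()}

closed = all(v is not None for row in table.values() for v in row.values())
involutions = sorted(a for a, m in elements.items() if m != identity and matmul(m, m) == identity)
commutative = all(table[a][b] == table[b][a] for a in elements for b in elements)
for a in elements:
    print(a, [table[a][b] for b in elements])
```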

Note that while the operators themselves, which act on functions, depend on the number of variables since they define convolutions, their relationships do not. Thus, the group structure is independent of the number of variables in the lattice: for any number of variables the structure is simply the symmetric group $S_3$.

4. Connections to the Deltas

The differential interaction information and the symmetric deltas were defined in [8] as overall measures of both dependence and complexity (see definitions in Equations (9) and (10)). We will now show the connection between these deltas and our operator algebra, using the three-variable case to illustrate it. First recall that if the marginal entropies are identified with the function $f$, then by the definitions of the down-set operator (Equation (13a)) and the interaction information (Equation (7)) we have:

$$I(\nu) = \hat{m} H(\nu), \qquad (16a)$$

which for three variables, using the simplified notation $H_{12} = H(X_1, X_2)$ and so on, is:

$$I_{123} = \hat{m} H_{123} = H_1 + H_2 + H_3 - H_{12} - H_{13} - H_{23} + H_{123}. \qquad (16b)$$

If the marginal entropies are identified with the function $f$ in Equation (12), and the interaction informations with $g$, then the differential interaction information is identified with $h$. For the three-variable case, using the same simplified notation:

$$\Delta_3 = I_{123} - I_{12} = H_3 - H_{13} - H_{23} + H_{123}. \qquad (16c)$$

Simplifying the notation further, we can express the relation between these functions using the Möbius up-set operator as:

$$\Delta_3 = \hat{M} H_3. \qquad (16d)$$

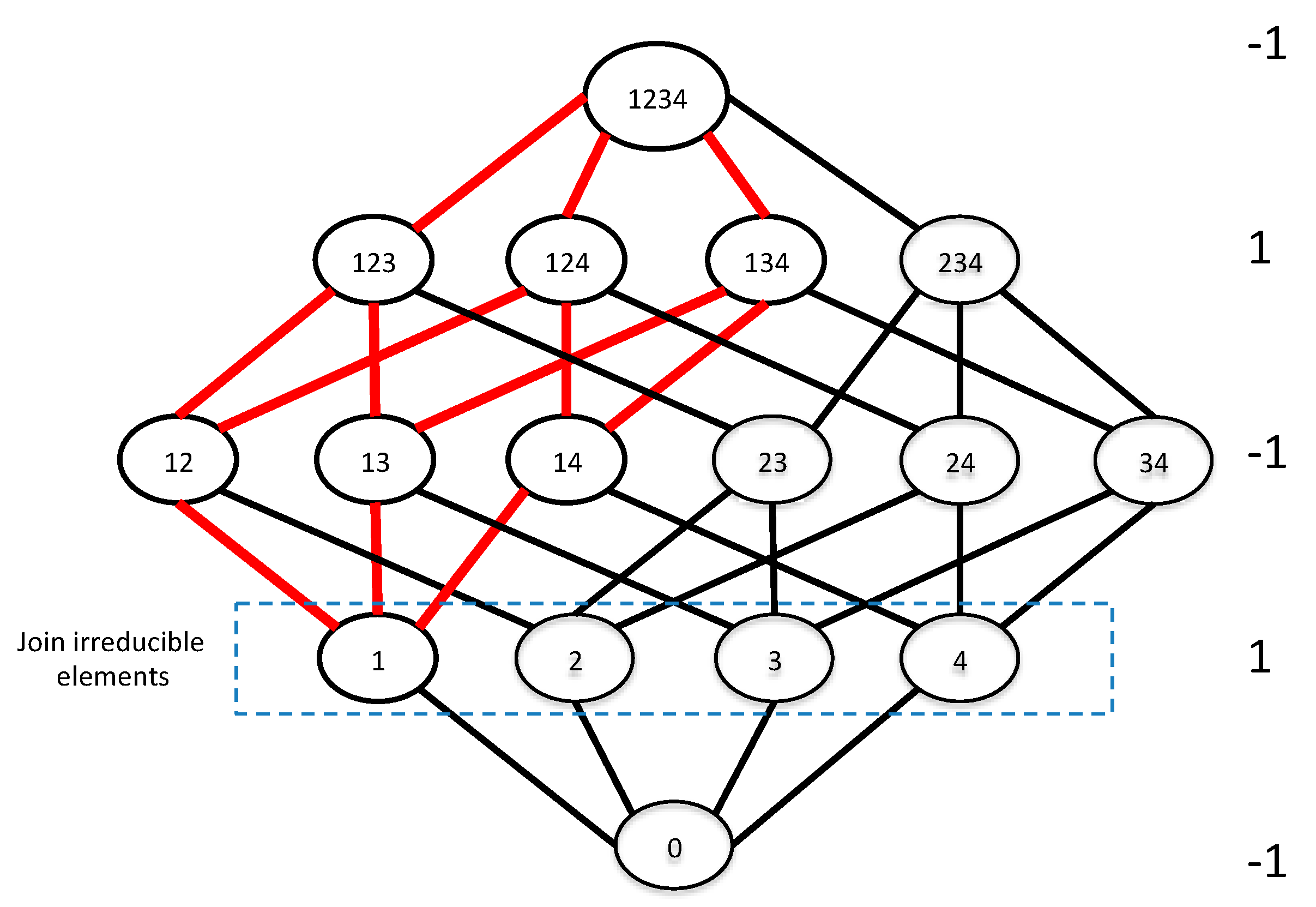

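The full set of the lattice is $\{X_1, X_2, X_3\}$ and the variable $X_3$ is singled out, as in Equation (16c). Furthermore, the convolution can be seen to take place over the up-set of $X_3$, the elements $\{X_3\}$, $\{X_1, X_3\}$, $\{X_2, X_3\}$ and $\{X_1, X_2, X_3\}$, which form a lattice isomorphic to the subset lattice of $\{X_1, X_2\}$. Equation (16d), if interpreted properly, provides a simple connection between the deltas and the Möbius operator algebra, and expresses a key relation (Theorem 1). We have thus proved the following theorem.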

Theorem 1. The Möbius up-set operator acting on the join-irreducible elements of the lattice of marginal entropies generates the conditional interaction informations, the deltas, for the full set of variables of the lattice.

Join-irreducible lattice elements are all those that cannot be expressed as the join, or union, of other elements. In this case they are all the single variables. Since the deltas are differentials of the interaction information at the top of the lattice (the argument of the function is the full set), their expression in terms of the join-irreducible elements is the most fundamental form.
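Theorem 1 can be checked numerically. Assuming the down-set and up-set sums with the sign $(-1)^{|\tau|}$ of Equation (12a), that is $\hat{m} f(\tau) = -\sum_{\eta \subseteq \tau}(-1)^{|\eta|} f(\eta)$ and $\hat{M} f(\tau) = -\sum_{\tau \subseteq \eta \subseteq \nu}(-1)^{|\eta|} f(\eta)$, the following Python sketch draws a random distribution over $n$ binary variables, computes all joint entropies, and compares $\hat{M} H(X_i)$ with the differential interaction information $I(\nu) - I(\nu - X_i)$ for every join-irreducible element $X_i$. The distribution and the function names are illustrative only.

```python
import random
from itertools import combinations, product
from math import isclose, log2, prod

def subsets(s):
    """All subsets of s, as frozensets."""
    s = sorted(s)
    return [frozenset(c) for r in range(len(s) + 1) for c in combinations(s, r)]

def entropies(p, n):
    """Joint entropy H(tau), in bits, for every subset tau of the n variables."""
    H = {}
    for tau in subsets(range(n)):
        marg = {}
        for x, px in p.items():
            key = tuple(x[i] for i in sorted(tau))
            marg[key] = marg.get(key, 0.0) + px
        H[tau] = -sum(q * log2(q) for q in marg.values() if q > 0)
    return H

def down_at(f, s):
    """m-hat f(s) = -sum_{t subset of s} (-1)^{|t|} f(t)."""
    return -sum((-1) ** len(t) * f[t] for t in subsets(s))

def up_at(f, s, full):
    """M-hat f(s) = -sum_{s subset of t subset of full} (-1)^{|t|} f(t)."""
    return -sum((-1) ** len(t) * f[t] for t in subsets(full) if s <= t)

def theorem1_pairs(n, seed=0):
    """For each X_i, return (M-hat H(X_i), I(nu) - I(nu - X_i)) for a random distribution."""
    rng = random.Random(seed)
    states = list(product((0, 1), repeat=n))
    weights = [rng.random() + 0.01 for _ in states]
    total = sum(weights)
    p = {s: w / total for s, w in zip(states, weights)}
    H = entropies(p, n)
    full = frozenset(range(n))
    return [(up_at(H, frozenset({i}), full),             # up-set operator on a join-irreducible element
             down_at(H, full) - down_at(H, full - {i}))  # differential interaction information
            for i in range(n)]

pairs = theorem1_pairs(4, seed=7)
symmetric_delta = prod(lhs for lhs, _ in pairs)  # product over all target variables
print(pairs, symmetric_delta)
```

The product of the left-hand values is the symmetric delta of Equation (10).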

To illustrate the relation more concretely, Figure 4 shows the specific connection between the join-irreducible elements and the deltas for the four-variable lattice. The connection that emerges from this geometric picture reflects a general property of the algebraic structure of the subset lattice:

Corollary 1. The differential of one function on the lattice corresponds to the up-set operator on another function of the join-irreducible elements.

Written in terms of the functions related by the inversions, and using the same set notation as above, with $X_i$ indicating a join-irreducible element, we can state this general result as follows. If $g = \hat{m} f$ and $X_i$ is a join-irreducible element of the lattice, then:

$$\hat{M} f(X_i) = g(\nu) - g(\nu - X_i) = -g(\nu - X_i \mid X_i), \qquad (17)$$

where the final term is a conditional form of the $g$ function in which $X_i$ is instantiated. This is defined as a function over all $\tau$ for which $X_i \in \tau$. These deltas, and delta-like functions more generally, are represented as convolutions over a lattice that is one dimension lower than the full variable set lattice.
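Equation (17) holds for any lattice function, not only for entropies, which the following sketch illustrates with a random real-valued $f$. It assumes the sums $\hat{m} f(\tau) = -\sum_{\eta \subseteq \tau}(-1)^{|\eta|} f(\eta)$ and $\hat{M} f(\tau) = -\sum_{\tau \subseteq \eta \subseteq \nu}(-1)^{|\eta|} f(\eta)$, and takes the conditional form of $f$ to be $f(\sigma \mid X_i) = f(\sigma \cup X_i) - f(X_i)$, the analogue of $H(\sigma \mid X_i) = H(\sigma, X_i) - H(X_i)$. The helper names are hypothetical.

```python
import random
from itertools import combinations

def subsets(s):
    """All subsets of s, as frozensets."""
    s = sorted(s)
    return [frozenset(c) for r in range(len(s) + 1) for c in combinations(s, r)]

def down_at(f, s):
    """g(s) = m-hat f(s) = -sum_{t subset of s} (-1)^{|t|} f(t)."""
    return -sum((-1) ** len(t) * f[t] for t in subsets(s))

def up_at(f, s, full):
    """M-hat f(s) = -sum_{s subset of t subset of full} (-1)^{|t|} f(t)."""
    return -sum((-1) ** len(t) * f[t] for t in subsets(full) if s <= t)

def corollary_terms(f, full, x):
    """The three expressions of Eq. (17) for the join-irreducible element {x}."""
    X = frozenset({x})
    rest = full - X
    # Conditional form of f with X instantiated: f(sigma | X) = f(sigma u X) - f(X).
    f_cond = {s: f[s | X] - f[X] for s in subsets(rest)}
    return (up_at(f, X, full),                      # M-hat f(X_i)
            down_at(f, full) - down_at(f, rest),    # g(nu) - g(nu - X_i)
            -down_at(f_cond, rest))                 # -g(nu - X_i | X_i)

rng = random.Random(3)
full = frozenset(range(4))
f = {s: rng.uniform(-1, 1) for s in subsets(full)}  # an arbitrary lattice function
print([corollary_terms(f, full, x) for x in full])
```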

We have previously proposed the symmetric delta (the product of the delta function over all choices of the target variable, $\bar{\Delta}$) as a measure of complexity, and of collective variable dependence [8]. The symmetric delta, simply the product of the individual deltas, is thus seen to be the product of the results of the up-set operator acting on the functions of all of the join-irreducible elements of the entropy lattice, $\bar{\Delta}(\nu) = \prod_{i=1}^{n} \hat{M} H(X_i)$. By Equation (8), both the conditional entropies and the conditional interaction informations, since they correspond to the differentials, imply a path-independent chain rule. Note that these kinds of differential functions include many more than just those generated by the up-set operator acting on the join-irreducible elements, as shown in the next section.

5. Symmetries Reveal a Wide Range of New Relations

The system of functions and operators defined in the previous section reveals a wide range of relationships. Examination of Equation (8) and comparison with Equation (16d) shows that the delta is also related to the differential entropy (defined by Equation (1)), which measures the change in the entropy of a set when we consider an additional variable. Keep in mind that the differential is defined by the full set and the added variable. Applying the down-set operator to Equation (1), and using the sets $\nu$ and $\nu - X_i$ as the upper bounds, gives us:

Theorem 2. Given the definition of the differential entropy (the difference in joint entropy of sets of variables with and without $X_i$, $\Delta_{X_i} H(\nu) = H(\nu) - H(\nu - X_i)$), and the definitions of the up-set and down-set operators, and their distributive character over functions on the lattice:

$$\hat{m}\, \Delta_{X_i} H(\nu) = \hat{m} H(\nu) - \hat{m} H(\nu - X_i) = \Delta_{X_i} I(\nu) = \hat{M} H(X_i), \qquad (18)$$

where $X_i$ is the element that is the difference between the sets $\nu$ and $\nu - X_i$. Equation (18) is based on the successive application of the differential and the down-set operators (recall from Equations (16a) and (16b) that $\hat{m} H = I$). Each of these acts on and produces a function of a subset of variables on the lattice, so their effects are well defined.

We can consider $\Delta_{X_i}$ as an operator, if we define the additional variable $X_i$ that is added to $\nu - X_i$ to obtain $\nu$, but note that it does not define a convolution over elements of the lattice as the Möbius operators do. Considering $\Delta_{X_i}$ as an operator (recalling that it is defined by two sets differing by a single variable), we note that $\Delta_{X_i}$ and $\hat{m}$ commute. The duality between $\hat{m}$ and $\hat{M}$ implies a dual version of Equation (18) as well, which we will not derive here. If we apply other operators to the expression in Equation (18) we find another set of relations among these marginal entropy functions. For example, another remarkable symmetry emerges:

This can easily be checked for three and four variables by direct calculation, and by referring to the group product table (Table 1). Equations (18) and (19) are seen to relate functions of the higher lattice elements to functions of the join-irreducible elements.

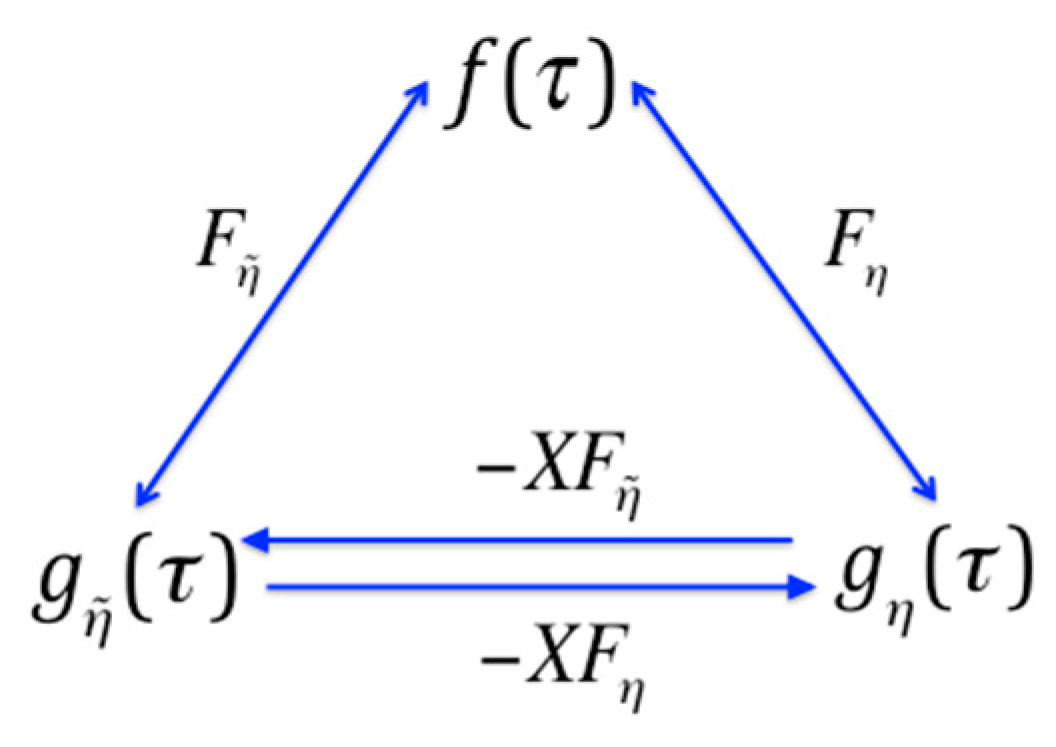

There are further symmetries to be seen in this set of information functions. Consider the mapping diagram of Figure 3. If we define a function $\tilde{h}$ which is simply the delta function with each lattice element mapped into its set complement, that is, acted on by the lattice complementation operator, from Equation (16d) we have (suppressing the argument notation in the functions):

$$\tilde{h} = \hat{X} h = \hat{X} \hat{M} f. \qquad (20)$$

Then these functions occupy different positions in the mapping diagram, as seen in Figure 5. Several other such modifications can be generated by similar operations.

There are a large number of similar relations that can be generated by such considerations. There are also other information-based measures that we can describe using the operator algebra. Because it is a widely used measure of multi-variable dependence, we will now examine the example of multi-information, $\Omega$, defined by Equation (11). In terms of entropy functions on the lattice elements, $\Omega$, as expressed in this equation, can be thought of as the sum of the functions of the join-irreducible elements, minus that of the top element, the join of the inclusion lattice. To apply the down-set operator to the terms in Equation (11) we must carefully define the bounds of the convolutions. If we calculate the convolution over the $\Omega$ function term by term, we have:

$$\hat{m}\, \Omega(\nu) = \sum_{i=1}^{n} \hat{m} H(X_i) - \hat{m} H(\nu) = \sum_{i=1}^{n} H(X_i) - I(\nu). \qquad (21)$$

Since the upper bound of the down-set operator is defined as the argument set of the function, the down-set of a single-variable function is the function itself (since $\hat{m} H(X_i) = -(H(\emptyset) - H(X_i))$ and $H(\emptyset) = 0$). Note that we are using the distributive property of the operator here. The application of the up-set operator to the multi-information function on the lattice, on the other hand, gives us:

Since the multi-information is a composite function the results of the action of the (distributive) Möbius operators are also composite functions.

7. Generalizing the Möbius Operators

The up-set and down-set operators, $\hat{M}$ and $\hat{m}$, defined above, generate convolutions over chains from each element of the inclusion lattice to the top element (full set) or to the bottom element (empty set), respectively. The convolutions are either “down”, towards subset elements, or “up”, toward supersets. The chains over which the convolutions (sums of the product of function and Möbius function) are taken are clear and are defined by the subset lattice for these two operators. No element is included more than once in the sum. Moreover, the sign of the Möbius function is the same across all elements at the same distance from the extreme elements.

We can generalize the Möbius operators by defining the range of the convolution, the end elements of the paths, to be any pair of elements of the lattice, an upper and a lower element, rather than one of them being defined by the bounds of the lattice. Two elements are required: the starting element and an ending element. The starting element is determined by the argument of the function being operated on, but the ending element can be chosen to generalize the operators. We call the ending element a reference element. The specification of both elements is essential here. For example, instead of the up-set operator, with the full set as its natural reference element, we could designate an arbitrary subset element, such as $\{X_1\}$, as the reference and thereby define another operator. Consider now a lattice of the full set $\nu$, where $\rho$ designates a reference element.

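Definition 3. The generalized Möbius operator $\hat{m}_\rho$, acting on a function of a subset, $\tau$, is defined by Equation (27), where the subsets of variables, $\eta$, range over all of the shortest paths between $\tau$ and $\rho$. As for the original operators, each function value occurs only once in the sum, even if its element lies on more than one path:

$$\hat{m}_\rho f(\tau) = -\sum_{\tau \cap \rho \,\subseteq\, \eta \,\subseteq\, \tau \cup \rho} (-1)^{|\eta|} f(\eta). \qquad (27)$$

There are often multiple shortest paths between two elements of the lattice, since the subset lattice is a hypercube; the elements lying on these paths are exactly those between $\tau \cap \rho$ and $\tau \cup \rho$. The range of the convolution is thus determined by the reference element and the argument of the function.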

The two extreme reference elements, the empty set and the full set, then yield the down-set and up-set operators, respectively:

$$\hat{m}_{\emptyset} = \hat{m}, \qquad \hat{m}_{\nu} = \hat{M}. \qquad (28)$$

The reference element $\rho$ establishes a relation between the lattice sums and the Möbius function. It is the juxtaposition of the lattice, anchored at $\rho$, to the Möbius function that defines the symmetries of the generalized Möbius operator algebra. Note that we now have the possibility of including elements that are not ordered along the paths by inclusion, since the reference element can be chosen from any lattice element. For example, the convolution between $\{X_1\}$ and $\{X_2\}$ for the 3D-cube lattice shows this clearly (see Figure 1): it includes $\{X_1, X_2\}$, $\{X_1\}$, $\{X_2\}$ and the empty set, and $\{X_1\}$ and $\{X_2\}$ are not ordered by inclusion.

Definition 4. Given $\rho$, we define the complement generalized Möbius operator as $\hat{M}_\rho = \hat{m}_{\bar{\rho}}$, where $\bar{\rho} = \nu \setminus \rho$.

The products of the generalized operators can easily be calculated for the 3- and 4-element sets. We can identify some similarities of these general operators to the operators $\hat{m}$ and $\hat{M}$. First, we note that the operators $\hat{m}_\rho$ are all involutive: $\hat{m}_\rho \hat{m}_\rho = \hat{I}$. This is easy to calculate for the 3D and 4D cases, and to derive using the relations indicated in Equation (27). The involutive property implies that there are pairs of functions that are related by each generalized Möbius operator: a generalized Möbius inversion on the inclusion lattice, a generalized duality. Furthermore, the products exhibit other familiar symmetries. The notable relationships that involve a subset and its complement are summarized in the following theorem.

Theorem 4. For all $\rho \subseteq \nu$, the following properties of the generalized Möbius operator and its complement, Equations (29a)–(29d), hold, where $\bar{\rho}$ and $\bar{\tau}$ are the set complements of $\rho$ and $\tau$, respectively. Equation (29a) is true since the products of the generalized Möbius operators involve the complementation operator $\hat{X}$, which in the geometric metaphor is like a rotation of the hypercube (inclusion lattice). Applying Equation (29a) to the complementary reference element $\bar{\rho}$ results in Equation (29b). The property shown in Equation (29c) follows directly from the definition of $\hat{m}_\rho$ and its complement. The proof of the last property (Equation (29d)) is direct, as follows. Since the limiting elements of the convolution are a subset and its complement, it encompasses the whole lattice. Thus, for any subset $\tau$, $\hat{m}_{\bar{\tau}} f(\tau)$ is seen to describe the convolution over all subsets of the entire lattice, and therefore Equation (29d) holds.
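A sketch of the generalized operator, under the assumption that Equation (27) keeps the sign $(-1)^{|\eta|}$ of the down-set and up-set sums and runs over the elements between $\tau \cap \rho$ and $\tau \cup \rho$ (exactly the vertices on shortest hypercube paths between $\tau$ and $\rho$). It checks that every $\hat{m}_\rho$ is self-inverse, that the references $\emptyset$ and $\nu$ recover the down-set and up-set sums, and that pairing an argument with its complement as reference gives the convolution over the whole lattice, as described for Equation (29d). All names are hypothetical.

```python
import random
from itertools import combinations

def subsets(s):
    """All subsets of s, as frozensets."""
    s = sorted(s)
    return [frozenset(c) for r in range(len(s) + 1) for c in combinations(s, r)]

def interval(lo, hi):
    """All eta with lo <= eta <= hi in subset order."""
    extra = sorted(hi - lo)
    return [lo | frozenset(c) for r in range(len(extra) + 1) for c in combinations(extra, r)]

def gen_op(f, rho):
    """Generalized operator m-hat_rho applied at every lattice element tau.
    The summation range [tau & rho, tau | rho] is the set of elements on
    shortest hypercube paths between tau and rho."""
    return {tau: -sum((-1) ** len(e) * f[e] for e in interval(tau & rho, tau | rho))
            for tau in f}

def same(a, b, tol=1e-12):
    """Two lattice functions agree at every element, up to rounding."""
    return all(abs(a[s] - b[s]) <= tol for s in a)

full = frozenset({1, 2, 3})
rng = random.Random(5)
f = {s: rng.uniform(-1, 1) for s in subsets(full)}

down = gen_op(f, frozenset())  # reference = empty set: down-set operator
up = gen_op(f, full)           # reference = full set: up-set operator
involutive = all(same(gen_op(gen_op(f, r), r), f) for r in subsets(full))
# Reference = complement of the argument: the sum runs over the whole lattice.
whole = -sum((-1) ** len(e) * f[e] for e in subsets(full))
complement_pairs = all(abs(gen_op(f, full - t)[t] - whole) < 1e-12 for t in subsets(full))
print(involutive, complement_pairs, sorted(map(sorted, interval(frozenset(), frozenset({1, 2})))))
```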

The full group structure of the general operator algebra is more complex than the group defined by the up-set and down-set operators, as there are many more operators, defined by the full range of reference elements. (If $N = 2^n$ is the number of subsets on the lattice, there is one generalized operator for each of the $N$ possible reference elements.) The symmetry of the subgroups determined by pairs of complementary subsets is preserved, these subgroups remaining isomorphic to $S_3$ (seen to be true for the 3D and 4D cases by direct calculation; it appears to be generally true, though we do not yet have a proof of the general case). The relations between these pairs of functions on the lattice are described by the diagram in Figure 6. It appears that the sets of three functions, specific to a reference set $\rho$, with the operators that map one into the other, exhibit the same overall symmetries reflected in the group $S_3$. The pairs of operators identified with a subset and its complement are the key elements of the group. This is because this particular combination of operator and function defines a convolution over the entire set, $\nu$. This identity therefore includes the specific up-set and down-set relations, and is equal to the interaction information if $f$ is the entropy function.

We now ask whether sums of such operator-function pairs can be used to decompose a convolution. This decomposition issue can be addressed by asking a specific question: are there sums of operators acting on functions that add up to a given operator acting on another function? If this is possible, how do we decompose such convolutions and what do they mean? The simple decomposition of the hypercube into sub-lattices can be shown to be equivalent to the process of finding these convolutions, or operator decompositions. We will not deal with the decomposition relations in a general dimension here, but rather demonstrate them for $n = 3$ and $n = 4$. First, let us consider the 3D case. There are three possible ways to decompose the 3D-cube Hasse diagram into two squares (2D hypercubes), which is done by passing a plane through the cube parallel to the faces (see Figure 7).

Considering one of these decompositions (the leftmost decomposition in Figure 7) results in the following:

Each of the two terms on the right-hand side could be expressed in operator terms in eight ways (each of the four elements of the sub-lattice being a reference element). There are thus a total of 192 decompositions of the full 3-set convolution, 64 for each of the three decompositions of the cube into two squares. Note that each decomposition leads to the same set of functions, but each is a distinct operator expression. For the 4-set decomposition, there are four ways of decomposing the 4-hypercube into two cubes, so the total number of possible decompositions is 4 × 192 × 192 = 147,456.
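The face decomposition can be illustrated numerically. The sketch below assumes the signed sum of Equation (27), with sign $(-1)^{|\eta|}$ over a sub-lattice between a lower and an upper element, and splits the full 3-set convolution into the two squares obtained by cutting the cube perpendicular to each axis; it also shows that a face convolution can be written with several (argument, reference) pairs of opposite corners. It does not attempt to reproduce the count of eight expressions per square given in the text; the last line only checks the arithmetic of the totals quoted there. The helper names are hypothetical.

```python
import random
from itertools import combinations

def subsets(s):
    """All subsets of s, as frozensets."""
    s = sorted(s)
    return [frozenset(c) for r in range(len(s) + 1) for c in combinations(s, r)]

def interval(lo, hi):
    """All eta with lo <= eta <= hi in subset order."""
    extra = sorted(hi - lo)
    return [lo | frozenset(c) for r in range(len(extra) + 1) for c in combinations(extra, r)]

def conv(f, lo, hi):
    """-sum of (-1)^{|eta|} f(eta) over the sub-lattice between lo and hi."""
    return -sum((-1) ** len(e) * f[e] for e in interval(lo, hi))

def gen_op_at(f, tau, rho):
    """Generalized operator evaluated at tau with reference element rho."""
    return conv(f, tau & rho, tau | rho)

full = frozenset({1, 2, 3})
rng = random.Random(11)
f = {s: rng.uniform(-1, 1) for s in subsets(full)}
whole = conv(f, frozenset(), full)  # full 3-set convolution, m-hat f(nu)

# Cut the cube by a plane separating elements without x from elements with x.
for x in sorted(full):
    X = frozenset({x})
    bottom_face = conv(f, frozenset(), full - X)  # square of subsets of nu - {x}
    top_face = conv(f, X, full)                   # square of supersets of {x}
    print(x, abs(whole - (bottom_face + top_face)) < 1e-12)

# The same top face (x = 3) written with different (argument, reference) pairs:
# any two opposite corners of the square span the same interval.
X = frozenset({3})
top_corners = [(X, full), (full, X),
               (frozenset({1, 3}), frozenset({2, 3})), (frozenset({2, 3}), frozenset({1, 3}))]
print([round(gen_op_at(f, t, r), 12) for t, r in top_corners])

print(3 * 8 * 8, 4 * 192 * 192)  # totals quoted in the text: 192 and 147456
```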

8. Discussion

Many diverse information measures have been used in descriptions of order and dependence in complex systems and as data analysis tools [3,4,5,6,7,8,9,13,14]. While the mathematical properties and relationships among these information-related measures are of significant interest in several fields, there has been, to our knowledge, no systematic examination of the full range of their relationships and symmetries, and no unification of this diverse range of functions into a single formalism as we have done here. Beginning with the known duality relationships, based on Möbius inversions of functions on lattices, we define a set of operators on functions on subset inclusion lattices that map the functions into one another. We show here that these operators form a group isomorphic to the symmetric group $S_3$. A wide range of relationships among the functions on the lattice can be expressed simply in terms of this operator algebra formalism. Applied to the information-related measures, the formalism expresses many relationships among the various measures, providing a unified picture and new ways to calculate one measure from another using the subset lattice functions. For example, we can express the conditional mutual information in the 4D,

lattice as sums of convolutions of entropy functions with a few terms for multiple 3D and 2D lattices, or create new information functions with specific symmetries and desired properties. Much is left to explore in the full range of implications of this system, including algorithms for prediction from complex data sets, and other ways in which these functions may be used or computed.

This formalism also allows us to make connections with other areas where lattices are useful. Since any distributive lattice is isomorphic to a lattice of sets ordered by inclusion, all the results presented here apply to any system of functions defined on a distributive lattice [11,15]. This unification therefore extends well beyond the information measure functions. Distributive lattices are widespread: every Boolean algebra is a distributive lattice; the Lindenbaum algebra of most logics that support conjunction and disjunction is a distributive lattice; every Heyting algebra is a distributive lattice; and every totally ordered set is a distributive lattice with max as the join and min as the meet. The natural numbers also form a distributive lattice under divisibility, with the greatest common divisor as the meet and the least common multiple as the join (this infinite lattice, however, requires some extension of the equivalence proof).
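The lattice examples above can be checked on a finite piece of the divisibility lattice. The sketch below, in Python with illustrative helper names, verifies the distributive law for the divisors of 360 under gcd and lcm and for a small chain under min and max, and then performs Möbius inversion on the divisor lattice, whose Möbius function is the classical number-theoretic μ(n/d). For a squarefree number such as 30, the divisors form a Boolean lattice isomorphic to the subsets of its prime factors {2, 3, 5}, and μ(n/d) reduces to the familiar subset sign (-1)^(|S| - |T|).

```python
import math
from itertools import product

def divisors(n):
    """Positive divisors of n in increasing order."""
    return [d for d in range(1, n + 1) if n % d == 0]

def lcm(a, b):
    """Least common multiple (the join in the divisibility lattice)."""
    return a * b // math.gcd(a, b)

def is_distributive(elements, meet, join):
    """Check meet(x, join(y, z)) == join(meet(x, y), meet(x, z)) for every triple."""
    return all(meet(x, join(y, z)) == join(meet(x, y), meet(x, z))
               for x, y, z in product(elements, repeat=3))

def mobius(n):
    """Number-theoretic Mobius function mu(n), by trial division."""
    result, p = 1, 2
    while p * p <= n:
        if n % p == 0:
            n //= p
            if n % p == 0:
                return 0          # p**2 divides n
            result = -result
        p += 1
    return -result if n > 1 else result  # account for one leftover prime factor

def summatory(f, n):
    """Zeta transform on the divisor lattice: g(n) = sum of f(d) over d | n."""
    return sum(f(d) for d in divisors(n))

def invert(g, n):
    """Mobius inversion on the divisor lattice: f(n) = sum of mu(n // d) * g(d) over d | n."""
    return sum(mobius(n // d) * g(d) for d in divisors(n))

def f(d):
    """An arbitrary test function on the divisors."""
    return d * d + 1

print(is_distributive(divisors(360), math.gcd, lcm))      # True
print(is_distributive(range(10), min, max))               # True
print(invert(lambda m: summatory(f, m), 360) == f(360))   # True
print(invert(lambda m: m, 12))                            # 4
```

The last line is the classical instance of this inversion: since the Euler totients of the divisors of n sum to n, inverting g(n) = n recovers the totient, and φ(12) = 4.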

The relationships shown here unify and clarify a range of measures, and can serve to guide their use in the theoretical characterization of information and complexity and in the algorithms and estimation methods needed for the computational analysis of multi-variable data. Recently, Bettencourt and colleagues have used the conditional form of the interaction information (Equation (8)) to generate an expansion with which they identified subgraphs in complex networks [16]. This expansion can be viewed as the series of successive delta functions obtained by increasing the number of variables, and hence the size of the lattice. The idea of an expanding lattice (adding variables) that enables such expansions is an interesting connection to our formalism that will be explored in future work.

We have addressed the relationships between the interaction information, the deltas (conditional interaction information), and the underlying probability densities. We found that the deltas can be expressed as Möbius sums of conditional entropies and that the multi-information is simply related by the operators to other information functions, and we made an initial connection to the maximum entropy method. We also note that Knuth has proposed generalizations of the zeta and Möbius functions that define degrees of inclusion on lattices [17,18]. Integrated with ours, Knuth's formalism could lead to a more general set of relations and add another dimension to this theory by incorporating uncertainty or variance in the information-related measures. This could be particularly useful in developing future methods for complexity description and data analysis.
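As a small check of the statement that the deltas are Möbius sums of conditional entropies, the sketch below takes the simplest case, the conditional mutual information I(X;Y|Z), and computes it in two ways: as the alternating sum H(X|Z) + H(Y|Z) - H(X,Y|Z) over the square {∅, X, Y, XY} with every entropy conditioned on Z, and directly from its definition as an expected log-ratio. The function names and the dictionary representation of the distribution are assumptions for illustration; the general n-variable deltas of the text are not implemented here.

```python
import itertools
import math
import random
from collections import defaultdict

def marginal(joint, idx):
    """Marginal distribution of the variables at positions `idx`."""
    m = defaultdict(float)
    for outcome, p in joint.items():
        m[tuple(outcome[i] for i in idx)] += p
    return m

def entropy(joint, idx):
    """Joint entropy in bits of the variables at positions `idx`."""
    return -sum(p * math.log2(p) for p in marginal(joint, idx).values() if p > 0)

def cond_entropy(joint, idx, given):
    """Conditional entropy H(idx | given) = H(idx, given) - H(given)."""
    return entropy(joint, tuple(idx) + tuple(given)) - entropy(joint, tuple(given))

def cmi_mobius(joint, x, y, z):
    """I(X;Y|Z) as an alternating sum of conditional entropies over the square
    {empty, X, Y, XY}; the empty-set term H(empty | Z) is zero and is omitted."""
    return (cond_entropy(joint, (x,), (z,)) + cond_entropy(joint, (y,), (z,))
            - cond_entropy(joint, (x, y), (z,)))

def cmi_direct(joint, x, y, z):
    """I(X;Y|Z) = sum of p(a,b,c) * log2[p(a,b,c) p(c) / (p(a,c) p(b,c))]."""
    pxyz = marginal(joint, (x, y, z))
    pxz, pyz, pz = marginal(joint, (x, z)), marginal(joint, (y, z)), marginal(joint, (z,))
    return sum(p * math.log2(p * pz[(c,)] / (pxz[(a, c)] * pyz[(b, c)]))
               for (a, b, c), p in pxyz.items() if p > 0)

# A random joint distribution over three binary variables (illustrative only).
random.seed(1)
weights = {o: random.random() for o in itertools.product((0, 1), repeat=3)}
total = sum(weights.values())
joint = {o: w / total for o, w in weights.items()}
print(cmi_mobius(joint, 0, 1, 2), cmi_direct(joint, 0, 1, 2))  # agree up to rounding
```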

Since the information-related functions have been directly linked to interpretations in algebraic topology [19], it will also be interesting to explore the topological interpretation of the Möbius operators in future work.

From the simple symmetries of these functions and operators, it is clear that there is more to uncover in this complex of relationships. The information theory-based measures have a surprising richness and internal relatedness in addition to their practical value in data analysis. While we have described here a systematic structure of relationships and symmetries, the full range of possible relationships, insights and applications using Möbius pairs of functions remains to be explored. The practical value of this complex of relationships among information measures will be further evaluated using specific examples in our future publications.