1. Introduction

There are various kinds of entropy describing different systems, e.g., in computation, physics, and dynamical systems. In continuous thermodynamic systems, e.g., ideal gases, the entropy has the precise meaning of a function that provides a foliation of the thermodynamic space of states [1,2], which is the statement of the Carathéodory formulation of the second law of thermodynamics. This approach requires a continuous structure on the space of states of the system [3,4]. Entropy can also be used as a comparison measure between states [3,4], which is the property used in this paper. There is also a point of view that the entropy in a theory can be traced to inaccurate (as it always is) measurement [5], and that the only crucial quantity is the difference in entropy and not the entropy itself. In the theory of dynamical systems, topological entropy is used to measure the level of dynamical complexity of a system [6]. In information theory, the (Shannon) entropy measures the rate at which information is produced by a (stochastic) source [7]. This discussion could be greatly extended; however, it is not the aim of this paper to survey the vast literature on the subject. These various interpretations show that the notion of entropy is still not well understood.

Apart from these different approaches, better insight is possible when systems with entropy are “connected” in the following sense. In 1961, Rolf Landauer introduced a principle of irreversible computing (Landauer’s principle) in which he postulated that every act of erasing information results in expelling at least $k_B T \ln 2$ joules of heat per erased bit to the environment (here, $k_B$ is the Boltzmann constant and $T$ is the temperature of the system) [8], i.e., it increases the thermodynamic entropy. This principle has profound implications for explaining the old thermodynamic paradox of Maxwell’s demon [9,10]. There was a dispute on the validity of this principle; however, a careful derivation [11] and experimental results, e.g., an experimental setup close to the one proposed by Landauer presented in [12] and a recent verification in quantum systems reported in [13], confirm that the principle is correct. The principle is based on the assumption that every computational system (in particular, computer memory) is implemented by means of a physical system, and this is the link between the information and physical realms.
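As a quick numerical illustration of the bound, the short Python sketch below evaluates $k_B T \ln 2$. The helper name `landauer_bound` and the room temperature of 300 K are illustrative assumptions, not values taken from the text.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact value in the 2019 SI)

def landauer_bound(T: float, bits: int = 1) -> float:
    """Minimum heat (in joules) released when erasing `bits` bits at temperature T (in kelvin)."""
    return bits * K_B * T * math.log(2)

print(landauer_bound(300.0))  # about 2.87e-21 J per bit at room temperature
```

The bound is linear in both the temperature and the number of erased bits, which is why erasing a larger memory expels a multiple of this amount.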

This paper is an attempt to generalize this principle to every system that contains entropy. This generalized connection between systems is the minimal that preserves entropy-induced ordering. The kinds of structures from category theory [

14,

15] which are involved in the Landauer’s principle are described in the following. Category theory approach has been used in studying entropy, e.g., in [

16,

17]. In this view, it is an extension of the paper [

11], where this categorical viewpoint is abandoned. It is shown that Table 1 of [

11] (see

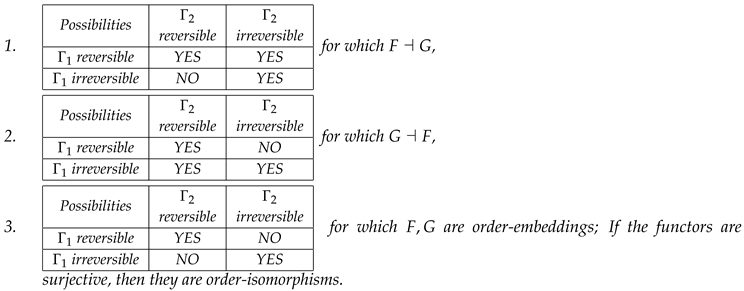

Table 1 in this paper) is an indication of the Galois connection. The mappings (functors) between states of one system (e.g., a logical system) and the second one (a thermodynamic realization of the logical system) preserve entropy properties. These features, when properly defined, are exactly the properties required for the existence of a Galois connection between these two systems seen as ordered sets.

A few remarks are in order before we provide details. Category theory is not an alternative approach to proving Landauer’s principle, in the same way as it is not a tool for proving basic properties of objects in mathematics. Instead, it offers a layer of abstraction (called “abstract nonsense”) that allows promoting some specific features (e.g., Landauer’s principle proven by thermodynamic methods) to “universal properties” that can be observed in any other system sharing the same common features. To use this link between specific phenomena/objects and category theory, these universal properties have to be proven using domain-specific methods. Then, the language of category theory can be used to prove and understand even more at an abstract level. The link, or “bridge”, between the original Landauer’s principle and the Galois connection for systems with entropy is formulated and proven in Theorem 4.

This is a bottom-up approach, and there is a recent trend in applied science to apply such abstract methods to concrete models [18], especially adjoint functors in physics [19], of which the Galois connection is a special and distinguished case. In the words of S. Mac Lane, “Adjoint functors arise everywhere.”

This paper is organized as follows. In Section 2, a brief overview of the mathematical notion of entropy in thermodynamics and of the Galois connection is presented for the reader’s convenience. Section 3 contains the definition of the Landauer’s connection that relates systems with entropy. Then, various examples of the Landauer’s connection, including a description of the classical Landauer’s principle in terms of the Landauer’s connection, are outlined. The paper concludes with a discussion of possible implications. The first part of the paper is strict and precise; however, Section 4 varies in the level of precision, since a description of a complicated system at a high level of generality is in principle impossible or depends on too many details to include them here. Therefore, many conclusions in that section should not be taken too strictly; they are hypotheses or general features rather than strict claims. We nevertheless believe that it is worth including them, as they illustrate the wide range of disciplines in which the generalized Landauer’s principle can possibly be applied.

3. Main Results

In this section, we use the properties of entropy [3,4] and the Galois connection [15] to construct a connection between systems with entropy. We consider general entropy, not necessarily the thermodynamic one.

The plan for deriving the Galois connection from entropy consists of the following steps:

1. state-space (G-Set) + entropy → total ordering;

2. total ordering → poset (G-poset) structure; and

3. two posets → Galois (Landauer’s) connection between them.

Step 1. The main point is to introduce the state-space set Γ. In the case of thermodynamic systems, the scaling of the system is modeled as an action of the multiplicative group $\mathbb{R}_+$ of positive real numbers on the set Γ, which preserves ordering (as described in [3,4] and Section 2.1). Such a set with a group action is an object of the G-Set category [24], namely of the $\mathbb{R}_+$-Set category. However, in non-thermodynamic systems (e.g., information theory), there is usually no group action, and we have the following options: restrict the description of the state-space to the category Set, select the category G-Set with the trivial group $G = \{e\}$, or model the state-space in the $\mathbb{R}_+$-Set category with the trivial group action. We select the last possibility, since it gives a more uniform approach.

Definition 3. A system space is an object of the G-Set category, i.e., a state-space Γ together with an action of the multiplicative group $\mathbb{R}_+$ on Γ.

The second ingredient is an entropy function $S\colon \Gamma \to \mathbb{R}$. For example, for thermodynamic systems, the entropy must satisfy the properties of Definition 1.

It is assumed that every point of Γ is in the domain of the entropy function. In thermodynamic systems, this assumption is called the Comparison Hypothesis, and it does not always hold, as described in [3,4]. However, we assume that it holds (e.g., for thermodynamic systems, systems with chemical reactions are excluded).

The existence of entropy allows us to define the following ordering.

Definition 4. The total ordering ≼ on Γ is defined in the following way:
$$p \preceq q \iff S(p) \le S(q) \quad \text{for } p, q \in \Gamma.$$
Likewise, $p \prec q \iff S(p) < S(q)$.

The above construction from entropy to ordering for thermodynamic systems is the reverse of the argument in [3,4], and it is also sketched in Section 2.1.
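The ordering of Definition 4 can be sketched for a finite state set in a few lines of Python. The three states and their entropy values below are hypothetical, chosen only to show that the induced relation is total and transitive. Distinct states with equal entropy are related in both directions, so, strictly speaking, ≼ is a total preorder that becomes a total order on classes of equal entropy.

```python
def entropy_order(S):
    """Relation p ≼ q  <=>  S(p) <= S(q) induced by an entropy function S (Definition 4)."""
    return lambda p, q: S(p) <= S(q)

# Hypothetical three-state system; the entropy values are illustrative only.
entropy = {"a": 0.0, "b": 1.0, "c": 1.0}
leq = entropy_order(entropy.__getitem__)
states = list(entropy)

# Totality: any two states are comparable.
total = all(leq(p, q) or leq(q, p) for p in states for q in states)
# Transitivity: p ≼ q and q ≼ r imply p ≼ r.
transitive = all(leq(p, r) for p in states for q in states for r in states
                 if leq(p, q) and leq(q, r))
# "b" and "c" share an entropy value, so antisymmetry fails until they are identified.
antisymmetric = all(p == q for p in states for q in states if leq(p, q) and leq(q, p))
print(total, transitive, antisymmetric)  # True True False
```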

Step 2. The existence of the total ordering ≼ on Γ allows us to define a poset structure. However, to account for the additional group structure of scaling, the more general approach is to use the G-Pos category [25], i.e., posets with a group action. The group action is needed only when scaling is present in the system (in particular, in thermodynamics). In other systems without scaling, the group action is trivial. Therefore, we omit the group action/scaling part when it is not important in the context, and we provide the modifications needed in the presence of the G-Pos structure of group action/scaling later.

Definition 5. An entropy system is an object of the G-Pos category, whose objects are posets $(\Gamma, \preceq)$ with an order-preserving group action (if $p \preceq q$ for $p, q \in \Gamma$, then $t \cdot p \preceq t \cdot q$ for every $t \in \mathbb{R}_+$), where the (partial or) total order ≼ is given by the entropy function $S$.

Step 3. The third key ingredient for formulating the Landauer’s connection is the Galois connection from category theory. A short overview of its definition and basic properties is given in Section 2.2 for the reader’s convenience.

The definition of the Galois connection suggests that it can relate two thermodynamic or, more generally, entropy systems with state-spaces $\Gamma_1$ and $\Gamma_2$. In such a case, the existence of entropy imposes a poset structure (for clarity, we omit the group action structure for the moment). This gives our main observation: the following definition is a reformulation of the Galois connection (Definition 2) in terms of entropy, and for historical reasons we call it the Landauer’s connection (or the generalized Landauer’s principle). In the definition below, every poset is treated as a category in its own right.

Definition 6. The Landauer’s connection and the Landauer’s functors are defined as follows.

The entropy system $(\Gamma_1, \preceq_1)$ is implemented/realized/simulated in the entropy system $(\Gamma_2, \preceq_2)$ when there is a Galois connection between them, namely, there is a functor $F\colon \Gamma_1 \to \Gamma_2$ and a functor $G\colon \Gamma_2 \to \Gamma_1$ such that $F \dashv G$, i.e., $F(p) \preceq_2 q \iff p \preceq_1 G(q)$ for all $p \in \Gamma_1$ and $q \in \Gamma_2$.

In terms of the entropies $S_1$ and $S_2$ of the two systems, the condition in Equation (7) reads
$$S_2(F(p)) \le S_2(q) \iff S_1(p) \le S_1(G(q)).$$
We call the functors F and G the Landauer’s functors.
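For finite state sets, the entropy form of the condition can be checked by brute force over all pairs of states. The Python sketch below does this; the function name `is_landauer_connection` and the example systems (half-integer “fine” states, integer “coarse” states, entropy equal to the value, F = ceiling, G = inclusion) are hypothetical choices for illustration.

```python
import math

def is_landauer_connection(F, G, S1, S2, states1, states2):
    """Brute-force check of S2(F(p)) <= S2(q)  <=>  S1(p) <= S1(G(q)) for all p, q."""
    return all(
        (S2(F(p)) <= S2(q)) == (S1(p) <= S1(G(q)))
        for p in states1
        for q in states2
    )

fine = [0.0, 0.5, 1.0, 1.5, 2.0]   # first system (hypothetical)
coarse = [0, 1, 2]                 # second system (hypothetical)
entropy = lambda s: s              # entropy = identity in both systems

# F = ceiling, G = inclusion: a Galois connection.
print(is_landauer_connection(math.ceil, float, entropy, entropy, fine, coarse))   # True
# F = floor, G = inclusion: the condition fails (e.g., p = 0.5, q = 0).
print(is_landauer_connection(math.floor, float, entropy, entropy, fine, coarse))  # False
```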

If the group action on the state-space is nontrivial, i.e., when scaling of states is present, then we operate on G-posets, and in such a situation every functor above, e.g., F, consists of two parts:

a set part of the functor, $F\colon \Gamma_1 \to \Gamma_2$; and

a function ϕ that is a surjective group endomorphism of $\mathbb{R}_+$.

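We now explain intuitively why the functorial properties hold. In the case when there is no scaling (the group action is trivial), if there are arrows $p \to p'$ and $p' \to p''$ in $\Gamma_1$ (i.e., $p \preceq_1 p' \preceq_1 p''$), then they induce the arrows $F(p) \to F(p')$ and $F(p') \to F(p'')$ in the connected system, and their composite $p \to p''$ is mapped to $F(p) \to F(p'')$. In addition, if there is no transition changing the entropy in $\Gamma_1$, it corresponds to the identity mapping on $\Gamma_1$, and this corresponds to the identity mapping on $\Gamma_2$. The argument for the functor G is similar.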

Definition 6 is reasonable because, by the first condition of Theorem 1 in Section 2.2, the realization of $\Gamma_1$ in $\Gamma_2$ preserves ordering. From the second condition, we get that the mappings $G \circ F$ and $F \circ G$ do not give lower and higher entropy states, respectively, than the original states, and the third condition shows that $F \circ G$ and $G \circ F$ preserve the images of F and G, respectively ($F \circ G \circ F = F$ and $G \circ F \circ G = G$). For thermodynamic systems, the Landauer’s functors preserve the entropy properties given in Definition 1. Therefore, such a simulation requires the Landauer’s connection, as it is the minimal order-preserving connection between two posets and, therefore, between two entropy systems.

Surjectivity of ϕ is only a technical assumption that simplifies what follows. It only removes an additional degree of freedom, since the group action can always be chosen to compensate.

Obviously, when one system has scaling and the other does not (trivial action of the group), the mapping ϕ is the trivial map. However, for a nontrivial group action, we have the following result.

Corollary 1. For Landauer’s connected functors in the presence of scaling, if , then , and . Therefore, ϕ is an isomorphism of groups.

Proof. Assume that for the moment. In the relations , and , substitute and , where . Then, one gets and likewise . Since and are surjective (group homomorphisms), therefore . □

The word “realization” or “simulation” expresses that we are usually interested in simulating one, possibly abstract, system that possesses some kind of entropy introducing a poset structure on its state-space (e.g., binary computation) by its physical implementation in terms of an electronic system, a spin system, or any other computing realization in which thermodynamic entropy is given. We can also consider the simulation of a physical system by another physical system. If the Landauer’s connection is present, the simulated system behaves as its connected counterpart, following the second law of thermodynamics. If one of the Galois-connected categories is a physical system, then the connection transfers the second law of thermodynamics to the other category, which does not have to be connected with the physical world (e.g., from electronic circuits to computation described by the Shannon entropy). This issue is described in detail in Section 4.2.

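Corollary 2 reformulates the properties of the Landauer’s functors.

Corollary 2. The following conditions are equivalent to Equation (10):

1. For $p, p' \in \Gamma_1$, if $p \preceq_1 p'$, then $F(p) \preceq_2 F(p')$; analogously for the functor G. In other words, F and G are monotone functors.

2. For all $p \in \Gamma_1$ and $q \in \Gamma_2$, $S_1(p) \le S_1(G(F(p)))$ and $S_2(F(G(q))) \le S_2(q)$.

3. For all $p \in \Gamma_1$ and $q \in \Gamma_2$, $F(G(F(p))) = F(p)$ and $G(F(G(q))) = G(q)$.

The proof repeats that of Theorem 1.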
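The three conditions of Corollary 2 can be verified numerically on a small example. The sketch below reuses a hypothetical pair of finite systems (half-integers and integers, entropy equal to the value) with F = ceiling and G = inclusion; it checks the conditions on these samples only and is not a proof.

```python
import math

fine = [0.0, 0.5, 1.0, 1.5, 2.0]   # first system, entropy = value (illustrative)
coarse = [0, 1, 2]                 # second system, entropy = value (illustrative)
F = math.ceil                      # F: fine -> coarse
G = float                          # G: coarse -> fine (inclusion)

# Condition 1: F and G are monotone.
monotone = (all(F(p) <= F(r) for p in fine for r in fine if p <= r)
            and all(G(q) <= G(s) for q in coarse for s in coarse if q <= s))
# Condition 2: p ≼ G(F(p)) and F(G(q)) ≼ q.
unit_counit = all(p <= G(F(p)) for p in fine) and all(F(G(q)) <= q for q in coarse)
# Condition 3: F∘G∘F = F and G∘F∘G = G.
triangle = (all(F(G(F(p))) == F(p) for p in fine)
            and all(G(F(G(q))) == G(q) for q in coarse))
print(monotone, unit_counit, triangle)  # True True True
```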

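From Theorem 145 of [15], we immediately obtain the transitivity of the Galois connection.

Theorem 2. The Landauer’s connection is transitive, namely, if $(\Gamma_1, \preceq_1)$, $(\Gamma_2, \preceq_2)$, and $(\Gamma_3, \preceq_3)$ are entropy systems with functors $F_1\colon \Gamma_1 \to \Gamma_2$, $G_1\colon \Gamma_2 \to \Gamma_1$, $F_2\colon \Gamma_2 \to \Gamma_3$, and $G_2\colon \Gamma_3 \to \Gamma_2$, then, if $F_1 \dashv G_1$ and $F_2 \dashv G_2$, also $F_2 \circ F_1 \dashv G_1 \circ G_2$.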
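Transitivity can be illustrated by chaining two connections on hypothetical finite systems: quarters are coarse-grained to halves, and halves to integers, each time by rounding up, with inclusions going back. The helper `is_galois` assumes entropy equal to the value, so the order is the usual numerical order.

```python
import math

def is_galois(F, G, X, Y):
    """Brute-force check of F(x) <= y  <=>  x <= G(y) (entropy = value)."""
    return all((F(x) <= y) == (x <= G(y)) for x in X for y in Y)

quarters = [k / 4 for k in range(9)]   # 0, 0.25, ..., 2
halves = [k / 2 for k in range(5)]     # 0, 0.5, ..., 2
integers = [0, 1, 2]

F1 = lambda x: math.ceil(2 * x) / 2    # quarters -> halves (round up)
G1 = lambda y: y                       # halves -> quarters (inclusion)
F2 = math.ceil                         # halves -> integers (round up)
G2 = float                             # integers -> halves (inclusion)

first = is_galois(F1, G1, quarters, halves)
second = is_galois(F2, G2, halves, integers)
# Theorem 2: the composite F2∘F1 is left adjoint to G1∘G2.
composite = is_galois(lambda x: F2(F1(x)), lambda n: G1(G2(n)), quarters, integers)
print(first, second, composite)  # True True True
```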

We also have a kind of uniqueness (see Theorem 144 of [15]).

Theorem 3. The Landauer’s connection is “unique” in the following sense: if $F \dashv G$ and $F \dashv G'$, then $G = G'$, and similarly for the other direction.

From two Landauer-connected entropy systems, we can isolate those parts that are order-isomorphic (see Section 2.2 for the definition). We can define an order isomorphism in the following way (see Definition 118 and Theorem 150 of [15]):

Construction 1. Take the images of the functors $F$ and $G$ and define the new subcategories of posets $F(\Gamma_1) \subseteq \Gamma_2$ and $G(\Gamma_2) \subseteq \Gamma_1$. Then, these two categories are order-isomorphic via (the restrictions of) G and F.
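Construction 1 can be sketched on a hypothetical example in which F is not surjective, so that the images are proper subposets. Here F is the ceiling map from half-integers to the integers 0 to 3, and G is computed as its right adjoint on finite totally ordered sets (the largest state whose image does not exceed q); both the sets and this way of computing G are assumptions of the sketch.

```python
import math

fine = [0.0, 0.5, 1.0, 1.5, 2.0]
coarse = [0, 1, 2, 3]
F = math.ceil

def G(q):
    # Right adjoint of F on these finite totally ordered sets:
    # the largest fine state whose image under F does not exceed q.
    return max(x for x in fine if F(x) <= q)

image_F = sorted({F(x) for x in fine})      # subposet of coarse; 3 is not reached
image_G = sorted({G(q) for q in coarse})    # subposet of fine

# F restricted to image_G and G restricted to image_F are mutually inverse
# monotone bijections, i.e., order isomorphisms.
inverse = all(G(F(x)) == x for x in image_G) and all(F(G(q)) == q for q in image_F)
monotone = all(F(a) <= F(b) for a in image_G for b in image_G if a <= b)
print(image_F, image_G, inverse, monotone)  # [0, 1, 2] [0.0, 1.0, 2.0] True True
```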

The order isomorphism allows us to introduce equivalence classes of entropy systems or of their subcategories, and therefore to introduce, in the category of entropy systems (the set of all entropy systems with no arrows other than the identity arrows), the quotient category whose objects are the equivalence classes under order isomorphism. In addition, order isomorphism allows us to construct a subsystem of Landauer-connected systems that can be used to implement the other entropy system faithfully.

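We can also close the poset $\Gamma_1$ (see Theorem 151 of [15]) using the functor $K = G \circ F$, $K\colon \Gamma_1 \to \Gamma_1$. The closure of $\Gamma_1$ (Definition 119 of [15]) is an endofunctor $K\colon \Gamma_1 \to \Gamma_1$ such that for all $p, p' \in \Gamma_1$ we have: (1) $p \preceq_1 K(p)$; (2) K is monotone, i.e., $p \preceq_1 p'$ implies $K(p) \preceq_1 K(p')$; and (3) K is idempotent, i.e., $K \circ K = K$. This closure gives the biggest subcategory that can be used to simulate/realize one system in the other.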
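The three closure properties can be checked for $K = G \circ F$ on a hypothetical finite example with F = ceiling and G = inclusion; the states fixed by K (the “closed” states) are exactly the image of G.

```python
import math

fine = [0.0, 0.5, 1.0, 1.5, 2.0]
K = lambda x: float(math.ceil(x))   # K = G∘F with F = ceiling and G = inclusion (illustrative)

extensive = all(x <= K(x) for x in fine)                               # (1) p ≼ K(p)
monotone = all(K(x) <= K(y) for x in fine for y in fine if x <= y)     # (2) monotone
idempotent = all(K(K(x)) == K(x) for x in fine)                        # (3) K∘K = K
closed = [x for x in fine if K(x) == x]                                # fixed points of K
print(extensive, monotone, idempotent, closed)  # True True True [0.0, 1.0, 2.0]
```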

The above construction of the Landauer’s connection for entropy systems shows that it is the “weakest” relation between them, in the sense that it only preserves the entropy ordering between them, since the Galois connection is the “weakest” connection between posets.

The final part of this section is devoted to explaining how Table 1 is related to the Landauer’s connection and to providing practical tools for detecting this connection.

Corollary 3. Conditions equivalent to the first two conditions of Corollary 2 for $F \dashv G$ are the following:

1. if the states are ordered as follows: , then and ; and

2. if the states are ordered as follows: , then and .

The corollary says that the Galois connection is, in general, not a symmetric operation, and that the functor $G \circ F$ maps a state to a “higher or equal” state. Likewise, $F \circ G$ maps a state to a “lower or equal” state.

Order-embedding and order-isomorphism conditions are given by the following standard results from Galois connection theory [14,15].

Lemma 1. The following conditions are equivalent for $F \dashv G$:

1. $F \circ G = \mathrm{id}_{\Gamma_2}$;

2. F is surjective;

3. G is injective.

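In general, $G \circ F$ is only a closure functor; however, the following holds.

Corollary 4. If $F \circ G = \mathrm{id}_{\Gamma_2}$ and $G \circ F = \mathrm{id}_{\Gamma_1}$, then $(\Gamma_1, \preceq_1)$ and $(\Gamma_2, \preceq_2)$ are order-isomorphic.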
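The equivalence in Lemma 1 can be observed on a hypothetical pair of examples: the same ceiling map F is surjective onto the integers 0 to 2 but not onto 0 to 3, and all three conditions switch together. The function `lemma1_conditions` is an illustrative name; G is again computed as the right adjoint of F on finite totally ordered sets.

```python
import math

def lemma1_conditions(fine, coarse):
    """Return (F surjective, G injective, F∘G = id) for F = ceiling and its right adjoint G."""
    F = math.ceil
    G = lambda q: max(x for x in fine if F(x) <= q)   # right adjoint of F
    f_surjective = {F(x) for x in fine} == set(coarse)
    g_injective = len({G(q) for q in coarse}) == len(coarse)
    fg_identity = all(F(G(q)) == q for q in coarse)
    return f_surjective, g_injective, fg_identity

fine = [0.0, 0.5, 1.0, 1.5, 2.0]
print(lemma1_conditions(fine, [0, 1, 2]))     # (True, True, True)
print(lemma1_conditions(fine, [0, 1, 2, 3]))  # (False, False, False)
```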

Finally, we need the definition of reversibility, which mimics the thermodynamic reversibility of a process.

Definition 7. An entropy system map, that is, a poset map $f\colon \Gamma \to \Gamma$, is reversible at $p \in \Gamma$ if $p \preceq f(p)$ and $f(p) \preceq p$, that is, $S(f(p)) = S(p)$, i.e., f at p preserves entropy. Otherwise, f is irreversible at p.
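Definition 7 translates directly into a predicate comparing entropies before and after a map is applied. In the sketch below, the function name, the tolerance for floating-point entropies, and the shift process are hypothetical choices; the entropy is the identity, as in the toy example of Section 4.1.

```python
def is_reversible_at(f, p, S, tol=1e-12):
    """Definition 7: f is reversible at p iff S(f(p)) equals S(p) (up to a tolerance)."""
    return abs(S(f(p)) - S(p)) <= tol

S = lambda x: x              # entropy = identity (toy choice)
shift = lambda x: x + 0.5    # a hypothetical process
identity = lambda x: x
print(is_reversible_at(shift, 0.25, S))     # False: entropy increases
print(is_reversible_at(identity, 0.25, S))  # True
```

Note that the predicate takes both the map and the state, reflecting that reversibility is a property of the pair.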

Note that reversibility depends on both the map and the state on which it acts. Such maps are called reversible processes in thermodynamics and reversible operations in logic/computing.

We are now ready to formulate the main theorem, which connects the classical Landauer’s principle given by Table 1 with the Landauer’s connection.

Theorem 4. For two entropy systems $(\Gamma_1, \preceq_1)$ and $(\Gamma_2, \preceq_2)$, and functors $F\colon \Gamma_1 \to \Gamma_2$ and $G\colon \Gamma_2 \to \Gamma_1$, we have the following possibilities for Landauer–Galois connections:

Proof. For the first claim, let us take a state and a reversible map that gives , such that (that is , where is the entropy in ). Using the first claim of Corollary 3, we have and , and and are monotone functors. Therefore, F can map into or , , i.e., into a reversible or an irreversible process. An irreversible process in is always mapped (the functors are monotone) into an irreversible process (see the third row of the table).

A similar argument in the opposite direction holds for the second case.

For the third case, the functors F and G must map reversible maps to reversible maps and irreversible maps to irreversible ones. Therefore, they preserve and reflect the ordering, so they are order embeddings onto their images (see Lemma 1). If, in addition, F and G are surjective functors, then, by Corollary 4, they are order isomorphisms. □

The above theorem is a simple tool that helps to detect the presence of the Landauer’s connection and its direction.

In the next section, examples of the Landauer’s connection, including the original Landauer’s principle, are given.

4. Examples

This section presents a few examples, at various levels of detail, of the interplay between entropy systems and the Galois connection that we call the Landauer’s connection. We start with a simple yet imaginative toy example.

4.1. Toy Example

This example is motivated by a simple example (Example 1.80) of the Galois connection from [18].

Consider two entropy systems $(\mathbb{N}, \le)$ and $(\mathbb{R}, \le)$, where the entropy in both cases is given by the identity function (other functions can be selected, e.g., the floor function $\lfloor \cdot \rfloor$; in this case, a different ordering on the real numbers is used, but the derivations are similar). This choice of entropy agrees with the standard ordering on the natural and real numbers.

One can see that the system $\mathbb{R}$ has a higher cardinality of states than $\mathbb{N}$. As in [18], we consider Galois connections in both directions. In these considerations, the posets are treated as categories in their own right, and therefore the functors are simply monotone functions.

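Case 1. Consider $F\colon \mathbb{R} \to \mathbb{N}$ defined as $F(x) = \lceil x \rceil$ and $G\colon \mathbb{N} \to \mathbb{R}$ given by $G(n) = n$. We have $F \dashv G$, since Equation (7) is fulfilled, i.e.,
$$\lceil x \rceil \le n \iff x \le n \quad \text{for all } x \in \mathbb{R},\ n \in \mathbb{N}.$$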

Let us consider a few processes on $\mathbb{R}$ and the related processes on $\mathbb{N}$ induced by the Galois connection:

Consider a map given by a simple shift, and take an initial point for which the shifted point has the same ceiling. The entropy increases, and therefore the process is irreversible. However, F maps both points to the same natural number, and therefore the irreversible process in $\mathbb{R}$ is mapped by F to a reversible process at the level of $\mathbb{N}$.

Take the same map with an initial point for which the shift crosses an integer. This is again an irreversible process in $\mathbb{R}$, but now the ceilings of the two points differ. Therefore, the irreversible process in $\mathbb{R}$ is mapped by F to an irreversible process in $\mathbb{N}$.

If we take the identity map, then the reversible (trivial) process in $\mathbb{R}$ is mapped to a reversible process in $\mathbb{N}$.

No irreversible process in $\mathbb{N}$ can be realized by a reversible process in $\mathbb{R}$.

In summary, the proposed Galois connection gives Case 1 of Theorem 4.
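The processes of Case 1 can be reproduced numerically. In the sketch below, the shift of 0.5 and the starting points 0.25 and 0.75 are values chosen here for illustration (they are exact binary fractions, so floating-point rounding plays no role); they are not the values of the original example. The helper names are hypothetical.

```python
import math

def classify(before, after):
    """With entropy = identity, a process is reversible iff the entropy value is unchanged."""
    return "reversible" if after == before else "irreversible"

def case1_table(shift=0.5, starts=(0.25, 0.75)):
    """Transport the shift x -> x + shift on R to N via F = ceiling (Case 1)."""
    rows = []
    for x in starts:
        y = x + shift
        fx, fy = math.ceil(x), math.ceil(y)
        rows.append((x, y, classify(x, y), fx, fy, classify(fx, fy)))
    return rows

for row in case1_table():
    print(row)
# (0.25, 0.75, 'irreversible', 1, 1, 'reversible')
# (0.75, 1.25, 'irreversible', 1, 2, 'irreversible')
```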

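Case 2. We now take $F\colon \mathbb{N} \to \mathbb{R}$ defined as $F(n) = n$ and $G\colon \mathbb{R} \to \mathbb{N}$ given by $G(x) = \lfloor x \rfloor$. This also defines a Galois connection, as is easily checked ($n \le x \iff n \le \lfloor x \rfloor$). We have the following examples of processes:

A process in $\mathbb{N}$, e.g., a shift that irreversibly maps a natural number to a larger one, gives, via F, the map between the same numbers regarded as elements of $\mathbb{R}$, which is also irreversible.

For an irreversible shift on $\mathbb{R}$ between two real numbers with the same integer part, G gives the identity process in $\mathbb{N}$, which is obviously reversible.

The identity (reversible) process in one system is trivially mapped to a reversible process in the other.

There is no mapping of an irreversible process on $\mathbb{N}$ to a reversible process in $\mathbb{R}$.

In summary, Case 2 of Theorem 4 is recovered.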
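A corresponding sketch for Case 2 checks the Galois condition for inclusion and floor on a few sample points and then transports one shift in each direction. The sample points and shifts are illustrative choices made here, not values from the original text.

```python
import math

def classify(before, after):
    return "reversible" if after == before else "irreversible"

F = float        # Case 2: F: N -> R, inclusion
G = math.floor   # Case 2: G: R -> N, floor

def galois_holds(naturals, reals):
    """Check F(n) <= x  <=>  n <= G(x) on sample points."""
    return all((F(n) <= x) == (n <= G(x)) for n in naturals for x in reals)

n, m = 1, 2          # irreversible shift on N
x, y = 0.25, 0.75    # irreversible shift on R within one integer part
print(galois_holds(range(4), [0.0, 0.25, 0.75, 1.5, 2.0, 3.25]))  # True
print(classify(n, m), classify(F(n), F(m)))                        # irreversible irreversible
print(classify(x, y), classify(G(x), G(y)))                        # irreversible reversible
```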

Case 3. For an example of Case 3 of Theorem 4, consider the identity mapping between two copies of the same entropy system.

The first two examples show how a system with a “bigger multiplicity of states” is Galois-connected with a system with a “smaller number of states” and fulfills the claims of Theorem 4 relating reversible and irreversible processes between these two systems. A similar principle can be used in the description of a memory chip, where two logical states can be realized by complicated sets of physical states and their internal transitions that realize binary operations. This idea is used in the next subsection.

4.2. Landauer’s Functors and Maxwell’s Demon

In this subsection, we describe how the above abstract language can be applied to the description of the original Landauer’s principle of irreversible computation. Then, the well-known Maxwell’s demon paradox is presented using the Landauer’s connection. This applies a new and more powerful language to the known solution described in detail in [11].

We again stress that this is not a new solution of the problem, which has already been solved. Instead, it is a reformulation of the problem in the new abstract language of the Landauer’s connection, which, in our opinion, gives a clearer and more uniform description. The thermodynamic details are hidden in the Galois connection, and they manifest themselves in the heat emitted during irreversible computation.

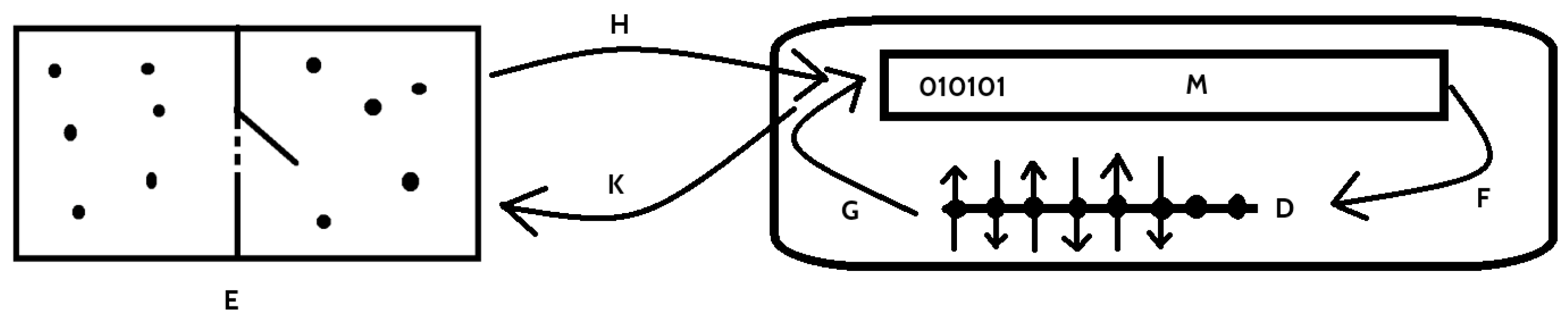

Let us first explain the classical Landauer’s principle in terms of the Landauer’s connection introduced in Section 3. Let us first consider a computer memory M that is based on binary logic, and its implementation using some physical system D. Both are entropy systems (see Figure 1). We can therefore build posets using entropy as the ordering, namely, construct $(M, \preceq_M)$ and $(D, \preceq_D)$, where S and $S_D$ are the corresponding entropies.

Since the relation between the logical part and the physical realization of the memory is described by Table 1, by Theorem 4 there is a Landauer’s connection $F \dashv G$ between the functors $F\colon M \to D$ and $G\colon D \to M$. The details of the functors depend on the implementation; however, they are maps between logical states and the corresponding physical states in the memory implementation, see, e.g., [12,13]. If there is an irreversible operation on the memory M given by a function $f\colon M \to M$, then it induces an irreversible operation on the device D given by $F \circ f \circ G$, and this, by the second law of thermodynamics, generates heat that is expelled to the environment. The amount of emitted heat depends on the realization (i.e., on the properties of F and G); however, Landauer showed a lower bound for it, namely, $k_B T \ln 2$ per erased bit.

In the next part of our considerations this memory

M is used as a Demon’s memory in the Maxwell’s demon “paradox”. In the experiment, there is the thermodynamic system, i.e., the box with an ideal gas, and the partition that can selectively be opened, i.e., part

E. It is connected with the memory

M, which saves informations on separation of gas particles depending of their kinetic energy, e.g., high kinetic energy particles are collected in the left and low energy particles in the right chamber. The functors

and

are relations between information on localization of the particle in

E and its logical description in

M (not in

D). For details, consider

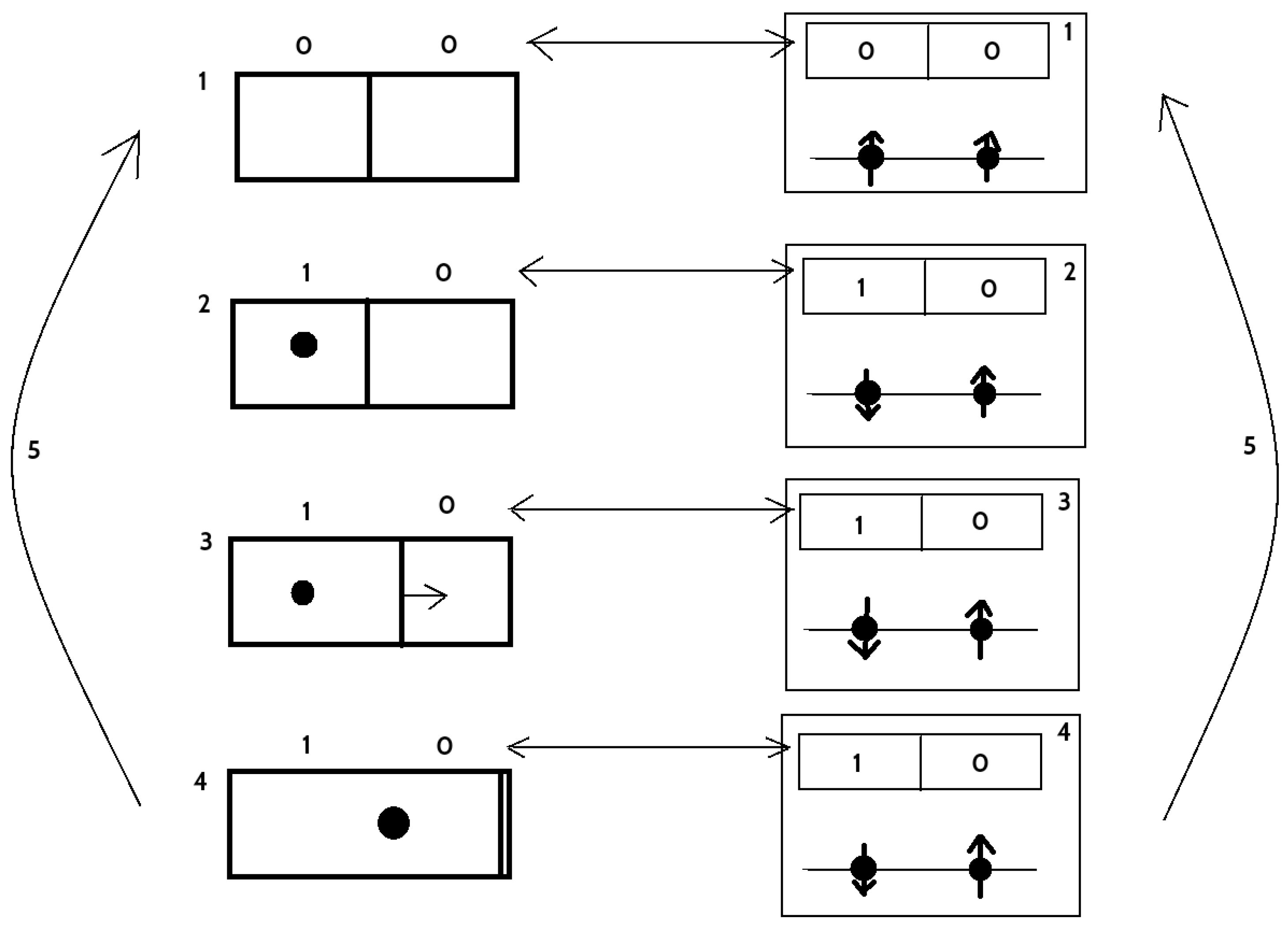

Figure 2 where simplified Maxwell’s demon experiment (due to Leó Szilárd [

26]) with one gas particle is presented. We describe every step in the cycle. Two-bit description of the logical state of the system was selected for better understanding—1 means that the particle is localized in the left (10) or the right (01) part of the box.

In this state, there is no information on the localization of the particle, and therefore the information entropy is $\ln 2$, as the state is a mixture of the two states 01 and 10. This state is associated with the bit description 00 (reset) and transferred to the memory.

The particle was localized (for example) in the left chamber (10), so the information entropy is now 0. This state is transferred into the memory, where a reversible operation is performed that changes 00 into 10.

The partition starts to move freely, and the single-particle gas undergoes adiabatic expansion. The state of the memory is the same as in the previous step.

The partition is pushed maximally to the right. The work done by the particle is $W = \int_{V/2}^{V} \frac{k_B T}{V'}\,\mathrm{d}V' = k_B T \ln 2$, where V is the volume of the box, $k_B$ is the Boltzmann constant, and T is the temperature. Since no heat flow was present, the thermodynamic entropy is still constant and the internal energy of the gas has decreased. A new cycle will start.
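The value $k_B T \ln 2$ of the work integral can be checked numerically. The sketch below assumes the single-particle ideal-gas law $p V' = k_B T$ at a fixed temperature T (the assumption under which the integral evaluates to $k_B T \ln 2$) and uses a midpoint rule; the temperature and box volume are illustrative values, and the result does not depend on V.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K

def szilard_work(T, V, steps=100_000):
    """Midpoint-rule estimate of W = ∫_{V/2}^{V} k_B*T/V' dV' for one particle at fixed T."""
    a, b = V / 2, V
    h = (b - a) / steps
    return h * sum(K_B * T / (a + (i + 0.5) * h) for i in range(steps))

T, V = 300.0, 1e-3  # illustrative temperature (K) and box volume (m^3)
W = szilard_work(T, V)
print(W, K_B * T * math.log(2))  # both about 2.87e-21 J
```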

This transition is the restart of the cycle. The partition is placed in the middle of the box, and therefore the information on the localization of the particle is lost. The information entropy is now $\ln 2$. The state becomes 00, and it is correlated with the state of the memory: an irreversible operation (reset to 00) is performed on the memory, which results in expelling, via Landauer’s principle, at least $k_B T \ln 2$ of heat from its physical part D. The cycle repeats.
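The information entropies quoted in the cycle ($\ln 2$ for the unknown position and 0 after localization) follow from the Shannon entropy in natural units. The sketch below computes them for the two-bit labels used above; the distribution dictionaries are illustrative encodings of the two knowledge states.

```python
import math

def shannon_entropy(dist):
    """Shannon entropy in nats, H = sum p ln(1/p), over outcomes with p > 0."""
    return sum(p * math.log(1 / p) for p in dist.values() if p > 0)

unknown = {"10": 0.5, "01": 0.5}   # position unknown: mixture of 10 and 01
localized = {"10": 1.0}            # particle found in the left chamber
print(shannon_entropy(unknown))    # ln 2, about 0.693
print(shannon_entropy(localized))  # 0.0
```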

One can note that K and H are in fact isomorphisms, and they connect the state of knowledge of the particle position with the state of the memory.

An irreversible operation on the memory is transferred via the Landauer’s functor F into an irreversible operation on its physical implementation. This results in expelling heat and preserves the second law of thermodynamics for the whole system in every cycle.

If a larger memory were used to store information on the particle localization over several cycles, then its erasure would expel at least a multiple of $k_B T \ln 2$ of heat from part D and would preserve the second law of thermodynamics after these cycles. In this case, the end of the cycle is marked by the erasure of the memory and not by the thermodynamic cycle in part E of the system.

It is interesting to note that the irreversible process f can be transferred to E via the functors K and H; however, it produces heat only in part D of the system (although the corresponding changes of entropy appear in E, M, and D). This explains how the second law of thermodynamics and entropy changes can be transferred from D to E, i.e., the irreversible operation f corresponds to an irreversible operation on E.

4.3. DNA Computation

In this and the next subsection, sketches of applications of the Galois connection to biochemical and biological systems are presented. Due to the large complexity of such systems, the description is not detailed, and many statements should be treated as research hypotheses rather than firmly stated claims. We believe, however, that promoting the Galois–Landauer principle to a general principle justifies its formulation in the abstract language of category theory, which makes it possible to trace it also in biochemical systems and living organisms.

Every living cell contains “a computer” that operates on complicated chemical principles using DNA (deoxyribonucleic acid) and controls every aspect of the cell. In recent years, such principles have been used to implement efficiently some computationally difficult algorithms, thanks to the massive parallelism achievable by this approach. For an excellent overview of this subject, see [27] and references therein.

In short, DNA, as the storage of genetic information, consists of four bases: A (adenine), G (guanine), C (cytosine), and T (thymine). A single DNA strand can be considered as a list composed of these four letters. The second strand is attached according to the complementary pairing rules A-T and G-C. Therefore, a single strand contains the same amount of information as a double one. In addition, the direction (polarity) of the strand is marked by its two ends, named 5′ and 3′. An example of a short fragment of a DNA strand (called an oligonucleotide) is ACTGTA.
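The statement that one strand carries the same information as the double strand can be made concrete: the complementary strand is a fixed function of the first one, and complementing twice returns the original. The Python sketch below is illustrative; the function names are hypothetical, and `reverse_complement` assumes the input is written in the 5′ to 3′ direction, so that the partner strand is also returned 5′ to 3′.

```python
COMPLEMENT = {"A": "T", "T": "A", "G": "C", "C": "G"}  # pairing rules A-T and G-C

def complement(strand: str) -> str:
    """Base-by-base complement of a strand."""
    return "".join(COMPLEMENT[base] for base in strand)

def reverse_complement(strand: str) -> str:
    """Partner strand read in the opposite direction (antiparallel strands)."""
    return complement(strand)[::-1]

oligo = "ACTGTA"
print(complement(oligo))          # TGACAT
print(reverse_complement(oligo))  # TACAGT
```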

Operations on such a structure are controlled by changing physical properties of the environment (e.g., temperature, which decides whether the DNA double helix separates into individual strands (melting) or single strands combine into a double one (annealing)) or chemical properties (especially by adding specially designed enzymes that perform various operations on short pieces of DNA [27]). In computation, some external methods that are not present in living organisms, such as gel electrophoresis, are also used [27].

Following [28], the most effective representation of the bases that is unchanged under a change of polarity and respects complementarity is the three-bit association: A-000, C-010, G-101, and T-111. One can then represent basic operations in algebraic form [28].
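One way to read the two properties of this three-bit association is sketched below: flipping every bit of a code word maps each base to its complement, and each code word is a bit palindrome, so reading it in the opposite direction leaves it unchanged. This interpretation and the helper names are assumptions of the sketch; see [28] for the original algebraic treatment.

```python
CODE = {"A": "000", "C": "010", "G": "101", "T": "111"}   # three-bit association from [28]
PAIR = {"A": "T", "T": "A", "C": "G", "G": "C"}           # Watson–Crick complements
DECODE = {bits: base for base, bits in CODE.items()}

def flip(bits: str) -> str:
    """Bitwise NOT of a code word."""
    return "".join("1" if b == "0" else "0" for b in bits)

def encode(strand):
    return [CODE[base] for base in strand]

# Complementarity: flipping every bit maps A<->T and C<->G.
respects_complement = all(DECODE[flip(CODE[b])] == PAIR[b] for b in CODE)
# Polarity: each code word is a palindrome, so reversing its bit order changes nothing.
polarity_invariant = all(bits == bits[::-1] for bits in CODE.values())
print(encode("ACTGTA"), respects_complement, polarity_invariant)
```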

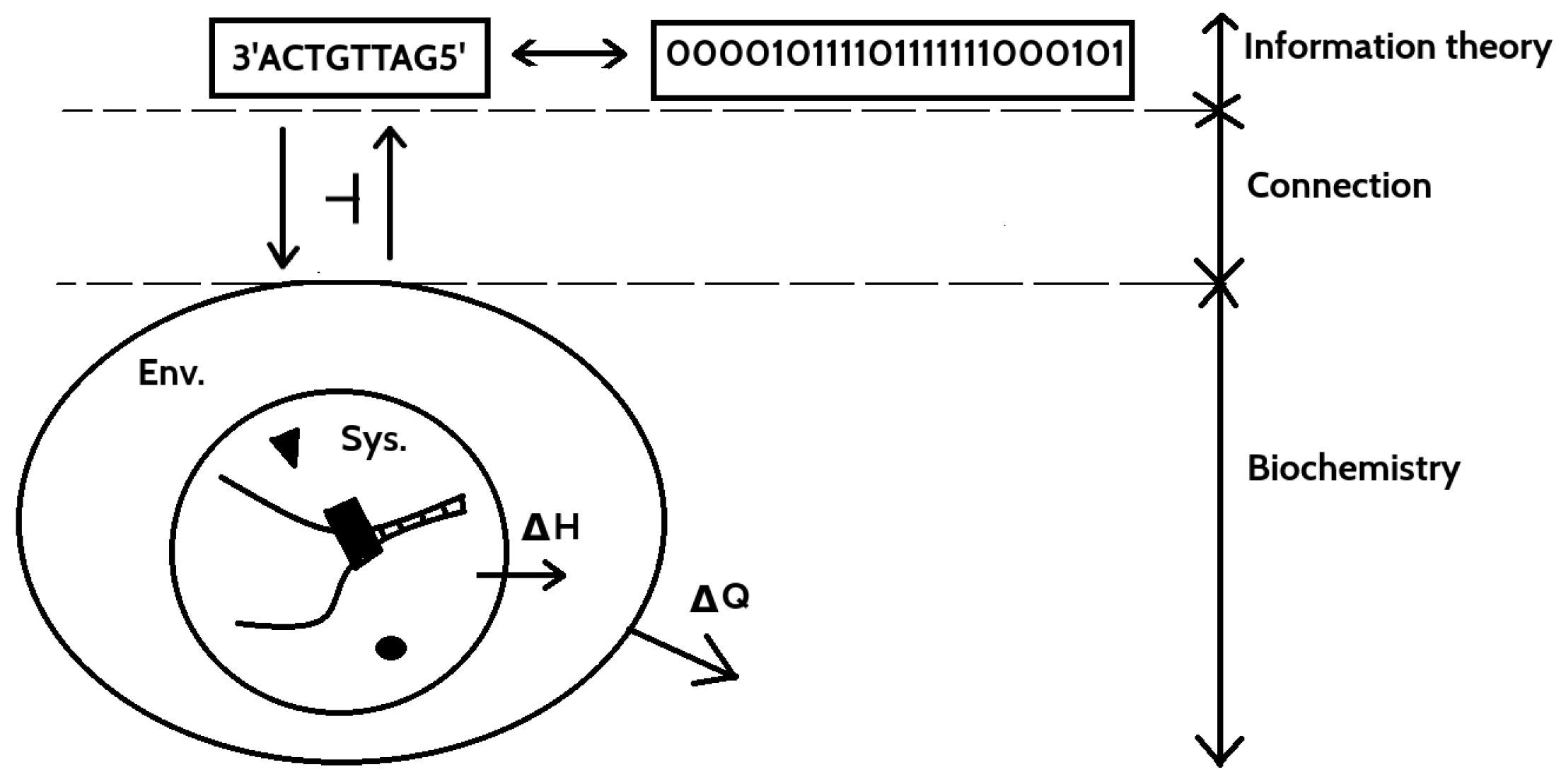

DNA computing and DNA processing in a living cell are a complicated sequence of chemical reactions in an environment that usually has no sharp boundaries, and therefore we present only a rough idea of how they can be connected with the Galois connection.

At the logical level (see Figure 3), as described above and in [27,28], there is a well-formalized set of operations on the logical representation of the DNA state. In this system, various flavors of entropy that capture different levels of computation can be described, from the Shannon entropy [29], through block entropy [30], to topological entropy [31], among others.
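For orientation, the Shannon entropy of the base frequencies and one common form of block entropy (the Shannon entropy of overlapping length-k blocks) can be computed from a sequence as sketched below. Definitions of block entropy vary in the literature (e.g., in normalization), so this is an illustrative choice rather than the definition used in [30]; the example sequence is hypothetical.

```python
import math
from collections import Counter

def block_entropy(seq: str, k: int) -> float:
    """Shannon entropy (bits) of the empirical distribution of overlapping length-k blocks."""
    blocks = [seq[i:i + k] for i in range(len(seq) - k + 1)]
    counts = Counter(blocks)
    n = len(blocks)
    return sum((c / n) * math.log2(n / c) for c in counts.values())

seq = "ACGTACGTACGT"
print(block_entropy(seq, 1))  # 2.0: the four bases are equally frequent
print(block_entropy(seq, 2))  # entropy of the dinucleotide blocks
```

With k = 1 the block entropy reduces to the Shannon entropy of the base frequencies.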

At the chemical (realization) level (see Figure 3), due to the enormous complexity, the reactions [32] in the system with DNA cannot be decoupled from the enclosing environment in which the system is embedded (e.g., a living cell or a biochemical reactor). The boundary of the environment depends on how complex the computation is, e.g., for a “small” computation, it can be the nucleus of the cell or its membrane (or the test tube containing the compounds for in vitro computations). However, for long and complex computations, the environment in which the cell lives, including other cells, would probably be a good choice. Some hints on selecting the boundary of the environment come from thermodynamics: the boundary should be a (natural or artificial) barrier/closed surface such that the total entropy inside it always increases or remains constant during the whole process. In other words, it should be the minimal boundary of a volume in which the second law of thermodynamics is fulfilled throughout the process.

The whole composed system and its environment have to obey the second law of thermodynamics. The interaction between the System and the Environment drives the System with DNA to perform specific reactions by changing its physical and chemical properties. This leads to a change in the Gibbs free energy (see Figure 3), which determines the direction of the reactions in the DNA system.
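For reference, the standard criterion behind this statement, at constant temperature and pressure, is recalled below. The temperature enters through the term $-T\,\Delta S$, which is why, for a transition such as melting with $\Delta H > 0$ and $\Delta S > 0$, raising the temperature can change the sign of $\Delta G$ and thereby reverse the preferred direction (melting versus annealing).

```latex
\Delta G = \Delta H - T\,\Delta S, \qquad
\begin{cases}
\Delta G < 0: & \text{the reaction proceeds spontaneously in the forward direction},\\
\Delta G = 0: & \text{equilibrium},\\
\Delta G > 0: & \text{the reverse reaction is favored}.
\end{cases}
```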

The similarity with the model of physical memory described above suggests that such a relation between the information stored in DNA and the various logical operations on the logical side, and their chemical realization by the system and environment, qualifies to be described in terms of the Galois–Landauer’s connection. If this hypothesis is true, then it can shed some light on the basic principles of life at the elementary level. This is a reasonable hypothesis, since such complicated chemical reactions (however well optimized by evolution) should always increase the total entropy (nontrivial reversible and irreversible computations should be realized by irreversible chemical processes that increase the total entropy). In addition, every irreversible computation on the logical level would, via the hypothetical connection, release the Landauer heat to the environment.

The model of DNA as a memory modeled using the Galois connection is also relevant in view of a recent experiment that showed how to encode a large amount of data (a short movie) in the DNA of a living organism [33].

In this example, we are motivated by the hypothesis that the Galois connection models operations on single genetic bases and their chemical realization. In the next example, we argue that a similar structure should exist at the level of genes (conglomerates of genetic information that encode protein structures) and of animal species in the tree of life.

4.4. Is 42 the Meaning of Life?

The provocative title of this subsection refers to the science fiction novel The Hitchhiker’s Guide to the Galaxy by Douglas Adams, which describes an advanced civilization that designed a planet-sized computer (the Earth) with a “biological component” meant to answer the “Ultimate Question of Life, The Universe, and Everything”. This fictional idea resembles the following construction surprisingly well.

The hypothesis on the existence of the Galois connection in the previous example, if true, shows that, at the basic level, life is a computational process on chemical components. On larger scales, a Galois connection can also relate the expression of the gene pool to the interconnections between animal species. This vague idea is described in [18], Example 1.84, and it is worth citing here to complete the picture. Due to the complexity of biological examples, only a rough idea is presented. Some general ideas on the level of such complexity are presented in [32] and references therein.

Following [18], consider the poset describing possible animal populations, ordered by inclusion: $p \le q$ if the animal species $p$ is also the animal species $q$ in the sense of specificity on the tree of life (see Example 1.51 in [18]). Moreover, the inclusion of species induces some kind of entropy that can be used to measure various changes in the tree of life.

The other poset describes gene pools, and its ordering $a \le b$ means that the gene pool $b$ can be generated by the gene pool $a$. This ordering can also be used to define some kind of entropy that measures ordering or information loss on the level of gene pools.

The Galois connection is defined by two monotone functions (functors, when posets are treated as categories in their own right) [18]. The first one sends each population to the gene pool that defines it. The second one sends each gene pool to the set of animals that can be obtained by recombination of the given gene pool. In this very broad sense, the two maps form a Galois connection between animal populations and gene pools.
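As a hedged illustration of the two monotone maps just described, the following Python sketch builds a hypothetical finite toy model. The animals, genes, and genomes are invented; populations are sets of animals and gene pools are sets of genes, both ordered by inclusion (a simplification of the orderings above); and “obtainable by recombination” is assumed to mean that an animal’s whole genome lies in the pool. Under these assumptions, the script checks the defining condition of a monotone Galois connection, gene_pool(P) ⊆ G exactly when P ⊆ obtainable(G), for every pair of subsets.

```python
from itertools import chain, combinations

# Hypothetical toy data: each animal is reduced to the set of genes it carries.
GENOMES = {
    "wolf": frozenset({"g1", "g2"}),
    "dog": frozenset({"g1", "g2", "g3"}),
    "fox": frozenset({"g1", "g4"}),
}
ANIMALS = frozenset(GENOMES)
GENES = frozenset().union(*GENOMES.values())

def subsets(s):
    """All subsets of a finite set, as frozensets."""
    items = sorted(s)
    return [frozenset(c) for c in chain.from_iterable(
        combinations(items, r) for r in range(len(items) + 1))]

def gene_pool(population):
    """Left adjoint: the gene pool defining a population (union of its genomes)."""
    return frozenset().union(*(GENOMES[a] for a in population))

def obtainable(pool):
    """Right adjoint (toy assumption): animals whose whole genome lies in the pool."""
    return frozenset(a for a in ANIMALS if GENOMES[a] <= pool)

def is_galois_connection():
    """Check gene_pool(P) <= G  <=>  P <= obtainable(G) for all subsets P, G."""
    return all(
        (gene_pool(p) <= g) == (p <= obtainable(g))
        for p in subsets(ANIMALS)
        for g in subsets(GENES)
    )

if __name__ == "__main__":
    print(is_galois_connection())                   # True
    print(sorted(obtainable(gene_pool({"dog"}))))   # ['dog', 'wolf']
```

The composite obtainable(gene_pool(P)) acts as a closure on populations: with the toy data, the population {dog} closes to {dog, wolf}, because the wolf’s genome is contained in the dog’s gene pool.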

This example involves the structure of the whole population of organisms on Earth and their genetic information as “a database” for processes that describe computations (evolution). Since the connected systems are not of thermodynamic origin, no heat is expelled during the irreversible process (it is even difficult to define such a quantity without a definition of temperature in the model). However, at a less strict level of description, the process of evolution can be decomposed into chemical reactions using the hypothesis from the previous subsection. Since evolution is a long-term process, the environment for the computation would involve the whole Earth and all organisms in which biochemical reactions take place. This is an unrealistic model; therefore, focusing on a small piece of the system and its interaction with the environment is usually a more reasonable approach [16,17]. Nevertheless, this abstract level motivates the introduction of “the heat of evolution”: the Landauer’s heat of all chemically realized bio-computations that were (and still are) carried out in the process of evolution.

This idea can serve as a model of life on Earth; however, stating it precisely requires mathematical biology, biochemistry, and taxonomy to be developed on a very detailed and precise level that is not currently available. Only then can an exact definition of the Galois functors be provided. Moreover, this slightly modified example of the Galois connection from [18] gives hints on how to develop entropy measures that are consistent (preserved by the connection) across the biochemical, genetic, and taxonomic levels, provided that the details of the Galois connections are known.

The situation in modeling such a structure is somewhat simpler in evolutionary robotics [34], where a virtual environment that resembles some features of the physical environment in which a robot operates is used to simulate artificial evolution, that is, the optimization of robot shapes. The genetic code in this case is a set of optimized robot parameters (e.g., the length of the robot’s legs or the design of its neural network), and the environment is represented by a multidimensional function called the fitness function [35]. Virtual evolution is in fact a multidimensional optimization process aimed at finding the minimum of the fitness function (which can be globally shifted to have a value equal to 42). This minimum (which can be non-unique) describes the optimal fitness in a given environment for the robot construction with respect to the optimized parameters. Because the environment is more “rigid” (the fitness function usually does not change, or changes very slowly) than in the biological situation (where the environment changes abruptly and contains other interacting specimens), constructing the Galois connection between the robots’ genetic pool and their construction features, together with the related entropies (by analogy with the biological example presented above), should be easier. The possibility and details of such a construction deserve another paper.
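The optimization view of virtual evolution can be illustrated with a minimal (μ + λ) evolution strategy in Python: Gaussian mutation of every parent followed by elitist truncation selection. Everything here is an assumption for illustration: the two robot parameters, the quadratic fitness landscape with its optimum at a made-up point, and the hyperparameters. Following the text, the fitness function is shifted so that its minimum value equals 42.

```python
import random

# Hypothetical optimum of two robot parameters (e.g. leg length, stride frequency).
OPTIMUM = (0.8, 1.5)

def fitness(params):
    """Toy quadratic landscape, shifted so that its minimum value is 42."""
    return 42.0 + sum((x - o) ** 2 for x, o in zip(params, OPTIMUM))

def evolve(generations=200, population_size=20, sigma=0.1, seed=0):
    """(mu + lambda) evolution strategy with mu = lambda = population_size."""
    rng = random.Random(seed)
    population = [[rng.uniform(0.0, 3.0) for _ in OPTIMUM]
                  for _ in range(population_size)]
    for _ in range(generations):
        # Each parent produces one offspring by Gaussian mutation of every parameter.
        offspring = [[x + rng.gauss(0.0, sigma) for x in parent]
                     for parent in population]
        # Elitist selection: keep the best individuals among parents and offspring.
        population = sorted(population + offspring, key=fitness)[:population_size]
    return population[0]

if __name__ == "__main__":
    best = evolve()
    print(best, fitness(best))
```

Because selection is elitist, the best fitness never gets worse from one generation to the next, and with a fixed landscape (the “rigid” environment mentioned above) the population settles near the minimum value of 42.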

Summing up the abstract discussion of the last two subsections: on every level of life, there is a pattern that resembles the Galois connection. This pattern may hint at emergent phenomena in biology.