Exact Probability Distribution versus Entropy

Abstract

1. Introduction

2. Languages

Let $X$ be a discrete random variable with state space $\mathcal{X} = \{x_1, x_2, \ldots, x_n\}$, where the probability distribution is given by $p_X(x) = \Pr(X = x)$. Introduce the short-hand notation $p_i = p_X(x_i)$, where $\sum_{i=1}^{n} p_i = 1$. In the following, the state space $\mathcal{X}$ and its size $n$ are considered as an alphabet with a certain number of symbols. Words are formed by combining symbols into strings. From $n$ symbols, it is possible to form $n^m$ different words of length $m$. Shannon introduced various orders of approximation to a natural language, where the zero-order approximation is obtained by choosing all letters independently and with the same probability. In the first-order approximation, the complexity is increased by choosing the letters according to their probability of occurrence in the natural language. In the zero- and first-order approximations, the strings thus consist of independent and identically distributed (i.i.d.) random variables. For higher-order approximations, the variables are no longer independent [8].
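The zero- and first-order approximations described above can be illustrated with a minimal Python sketch. The three-symbol alphabet, its probabilities, and the function names `count_words`, `sample_word` and `word_probability` are assumptions made for illustration only; they are not taken from the article.

```python
import math
import random
from itertools import product


def count_words(n: int, m: int) -> int:
    """Number of distinct words of length m over an alphabet of n symbols."""
    return n ** m


def sample_word(alphabet, m, probabilities=None, seed=None):
    """Draw m symbols independently (i.i.d.) from the alphabet.

    probabilities=None gives the zero-order approximation (uniform);
    per-symbol probabilities give the first-order approximation.
    """
    rng = random.Random(seed)
    return "".join(rng.choices(alphabet, weights=probabilities, k=m))


def word_probability(word, probabilities):
    """Probability of a word whose symbols are i.i.d."""
    return math.prod(probabilities[symbol] for symbol in word)


# Hypothetical alphabet; the probabilities below are made up.
ALPHABET = "abc"
P_FIRST_ORDER = {"a": 0.5, "b": 0.25, "c": 0.25}

zero_order_word = sample_word(ALPHABET, 8, seed=1)
first_order_word = sample_word(
    ALPHABET, 8, probabilities=[P_FIRST_ORDER[s] for s in ALPHABET], seed=1
)

# Enumerate every word of length 2 to confirm the n**m count.
all_words = ["".join(w) for w in product(ALPHABET, repeat=2)]
```

Because the symbols are independent, the probability of a word is the product of its symbol probabilities, and these probabilities sum to one over all $n^m$ words of a given length.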

2.1. Zero-Order Approximation

2.2. First-Order Approximation

2.3. Second-Order Approximation

For two consecutive symbols $X$ and $Y$, the conditional probability distribution of $Y$ given $X$ is $p_Y(y \mid X = x) = \Pr(Y = y \mid X = x)$. Introduce the short-hand notation $P_{ij} = p_Y(x_j \mid X = x_i)$, the probability that symbol $x_j$ follows symbol $x_i$. $P$ is an $n \times n$ matrix, where $n$ is the size of the alphabet, and the elements in each row sum to one. The probability of occurrence of each symbol in the alphabet, $p_i$, can be obtained from the matrix $P$ using the two equations $(P^{T} - I)p = 0$ and $|p| = 1$ (the elements of $p$ sum to one), where $p$ is a vector of length $n$ with elements $p_i$.
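As a minimal sketch of how the symbol probabilities can be recovered from the transition matrix, the following Python code solves $(P^{T} - I)p = 0$ together with the normalization that the elements of $p$ sum to one. The function names `row_normalize` and `stationary_distribution`, the use of Gaussian elimination, and the toy matrix are assumptions for illustration, not the method used in the article. The sketch also assumes that the solution is unique.

```python
def row_normalize(counts):
    """Turn a matrix of non-negative counts into a row-stochastic matrix."""
    matrix = []
    for row in counts:
        total = sum(row)
        if total == 0:
            raise ValueError("a row with only zeros cannot be normalized")
        matrix.append([value / total for value in row])
    return matrix


def stationary_distribution(P):
    """Solve (P^T - I) p = 0 subject to sum(p) = 1."""
    n = len(P)
    # Row i of A holds row i of P^T - I, i.e. P[j][i] minus the identity.
    A = [[P[j][i] - (1.0 if i == j else 0.0) for j in range(n)]
         for i in range(n)]
    b = [0.0] * n
    # The n equations are linearly dependent because each row of P sums
    # to one, so the last equation is replaced by the normalization.
    A[-1] = [1.0] * n
    b[-1] = 1.0

    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(A[r][col]))
        if abs(A[pivot][col]) < 1e-12:
            raise ValueError("the stationary distribution is not unique")
        A[col], A[pivot] = A[pivot], A[col]
        b[col], b[pivot] = b[pivot], b[col]
        for r in range(col + 1, n):
            factor = A[r][col] / A[col][col]
            for c in range(col, n):
                A[r][c] -= factor * A[col][c]
            b[r] -= factor * b[col]

    # Back substitution.
    p = [0.0] * n
    for i in reversed(range(n)):
        known = sum(A[i][c] * p[c] for c in range(i + 1, n))
        p[i] = (b[i] - known) / A[i][i]
    return p


# Hypothetical two-symbol transition matrix (made-up values).
P_TOY = [[0.9, 0.1],
         [0.5, 0.5]]
p_toy = stationary_distribution(P_TOY)  # approximately [5/6, 1/6]
```

For the toy matrix, the first equation gives $0.1\,p_1 = 0.5\,p_2$, so $p_1 = 5/6$ and $p_2 = 1/6$. A matrix of bigram counts would first be passed through `row_normalize` to obtain $P$.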

3. Numerical Evaluation of Guesswork

3.1. Quantification

3.2. Random Selection

3.3. Normal Distribution

4. Results

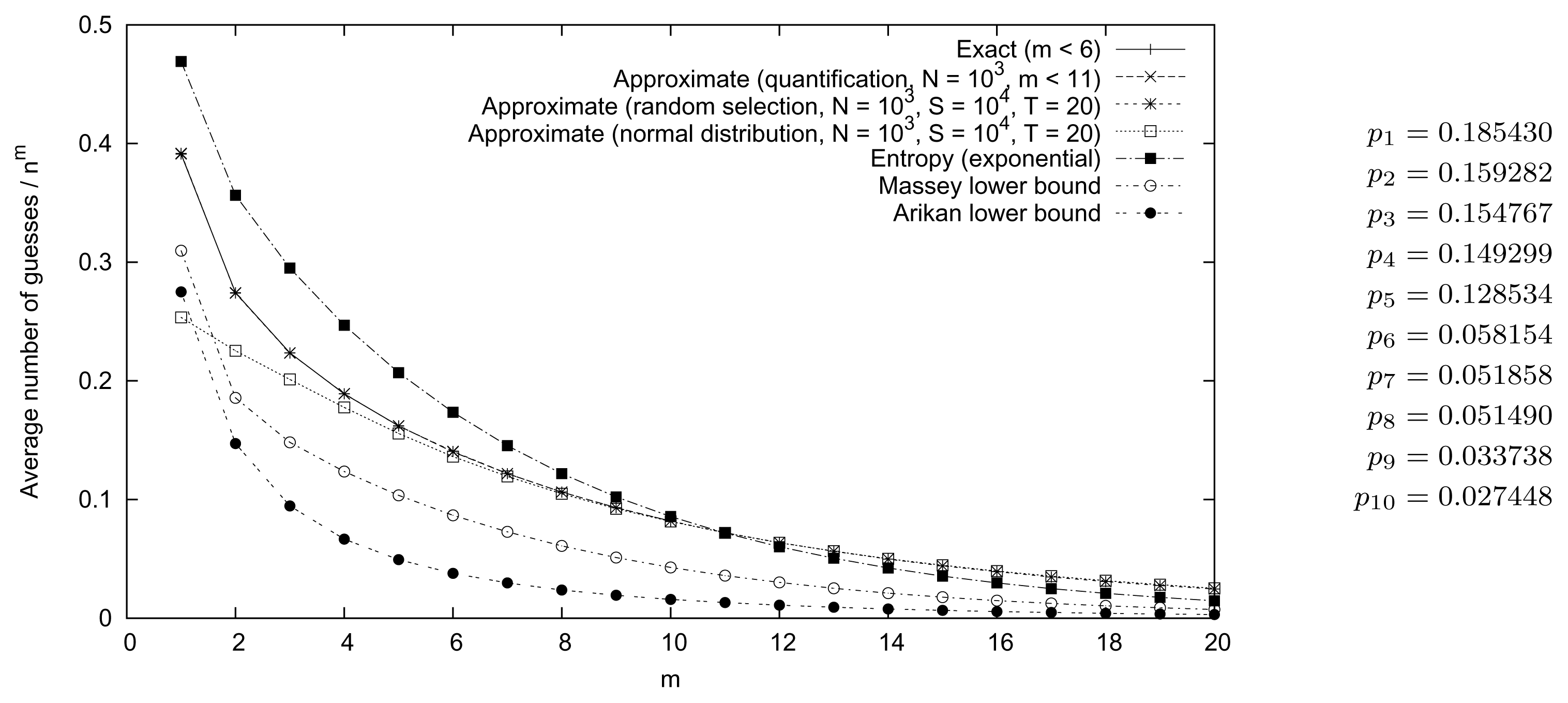

4.1. Random Probability Distribution

Error Estimates

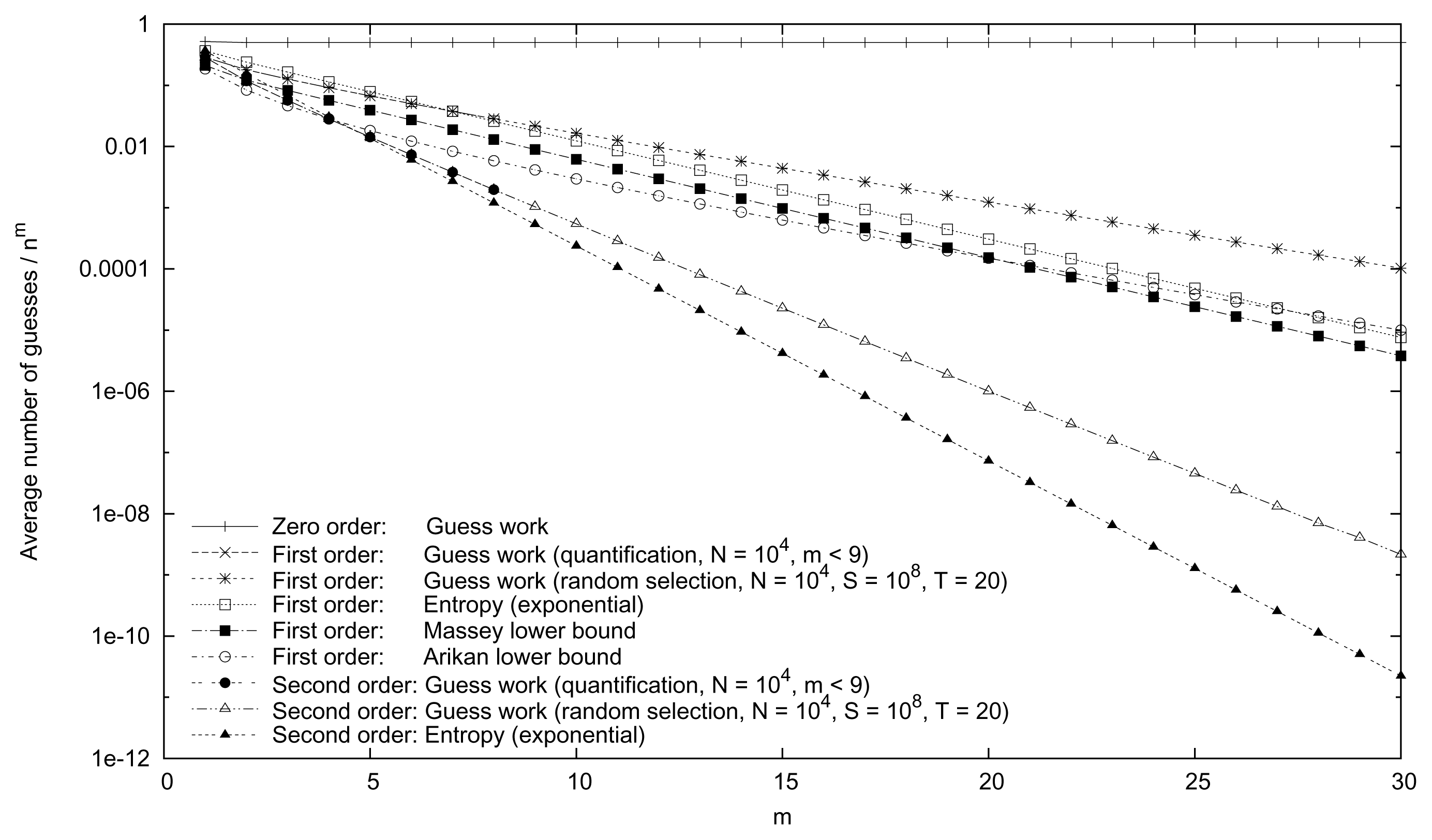

4.2. English Language

Error Estimates

5. Conclusions

Acknowledgments

Appendix: English Bigram Frequencies

| | A | B | C | D | E | F | G | H | I | J | K | L | M | N | O | P | Q | R | S | T | U | V | W | X | Y | Z |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| A | 1 | 32 | 39 | 15 | 0 | 10 | 18 | 0 | 16 | 0 | 10 | 77 | 18 | 172 | 2 | 31 | 1 | 101 | 67 | 124 | 12 | 24 | 7 | 0 | 27 | 1 |
| B | 8 | 0 | 0 | 0 | 58 | 0 | 0 | 0 | 6 | 2 | 0 | 21 | 1 | 0 | 11 | 0 | 0 | 6 | 5 | 0 | 25 | 0 | 0 | 0 | 19 | 0 |
| C | 44 | 0 | 12 | 0 | 55 | 1 | 0 | 46 | 15 | 0 | 8 | 16 | 0 | 0 | 59 | 1 | 0 | 7 | 1 | 38 | 16 | 0 | 1 | 0 | 0 | 0 |
| D | 45 | 18 | 4 | 10 | 39 | 12 | 2 | 3 | 57 | 1 | 0 | 7 | 9 | 5 | 37 | 7 | 1 | 10 | 32 | 39 | 8 | 4 | 9 | 0 | 6 | 0 |
| E | 131 | 11 | 64 | 107 | 39 | 23 | 20 | 15 | 40 | 1 | 2 | 46 | 43 | 120 | 46 | 32 | 14 | 154 | 145 | 80 | 7 | 16 | 41 | 17 | 17 | 0 |
| F | 21 | 2 | 9 | 1 | 25 | 14 | 1 | 6 | 21 | 1 | 0 | 10 | 3 | 2 | 38 | 3 | 0 | 4 | 8 | 42 | 11 | 1 | 4 | 0 | 1 | 0 |
| G | 11 | 2 | 1 | 1 | 32 | 3 | 1 | 16 | 10 | 0 | 0 | 4 | 1 | 3 | 23 | 1 | 0 | 21 | 7 | 13 | 8 | 0 | 2 | 0 | 1 | 0 |
| H | 84 | 1 | 2 | 1 | 251 | 2 | 0 | 5 | 72 | 0 | 0 | 3 | 1 | 2 | 46 | 1 | 0 | 8 | 3 | 22 | 2 | 0 | 7 | 0 | 1 | 0 |
| I | 18 | 7 | 55 | 16 | 37 | 27 | 10 | 0 | 0 | 0 | 8 | 39 | 32 | 169 | 63 | 3 | 0 | 21 | 106 | 88 | 0 | 14 | 1 | 1 | 0 | 4 |
| J | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 4 | 0 | 0 | 0 | 0 | 0 | 4 | 0 | 0 | 0 | 0 | 0 |
| K | 0 | 0 | 0 | 0 | 28 | 0 | 0 | 0 | 8 | 0 | 0 | 0 | 0 | 3 | 3 | 0 | 0 | 0 | 2 | 1 | 0 | 0 | 3 | 0 | 3 | 0 |
| L | 34 | 7 | 8 | 28 | 72 | 5 | 1 | 0 | 57 | 1 | 3 | 55 | 4 | 1 | 28 | 2 | 2 | 2 | 12 | 19 | 8 | 2 | 5 | 0 | 47 | 0 |
| M | 56 | 9 | 1 | 2 | 48 | 0 | 0 | 1 | 26 | 0 | 0 | 0 | 5 | 3 | 28 | 16 | 0 | 0 | 6 | 6 | 13 | 0 | 2 | 0 | 3 | 0 |
| N | 54 | 7 | 31 | 118 | 64 | 8 | 75 | 9 | 37 | 3 | 3 | 10 | 7 | 9 | 65 | 7 | 0 | 5 | 51 | 110 | 12 | 4 | 15 | 1 | 14 | 0 |
| O | 9 | 18 | 18 | 16 | 3 | 94 | 3 | 3 | 13 | 0 | 5 | 17 | 44 | 145 | 23 | 29 | 0 | 113 | 37 | 53 | 96 | 13 | 36 | 0 | 4 | 2 |
| P | 21 | 1 | 0 | 0 | 40 | 0 | 0 | 7 | 8 | 0 | 0 | 29 | 0 | 0 | 28 | 26 | 0 | 42 | 3 | 14 | 7 | 0 | 1 | 0 | 2 | 0 |
| Q | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 20 | 0 | 0 | 0 | 0 | 0 |
| R | 57 | 4 | 14 | 16 | 148 | 6 | 6 | 3 | 77 | 1 | 11 | 12 | 15 | 12 | 54 | 8 | 0 | 18 | 39 | 63 | 6 | 5 | 10 | 0 | 17 | 0 |
| S | 75 | 13 | 21 | 6 | 84 | 13 | 6 | 30 | 42 | 0 | 2 | 6 | 14 | 19 | 71 | 24 | 2 | 6 | 41 | 121 | 30 | 2 | 27 | 0 | 4 | 0 |
| T | 56 | 14 | 6 | 9 | 94 | 5 | 1 | 315 | 128 | 0 | 0 | 12 | 14 | 8 | 111 | 8 | 0 | 30 | 32 | 53 | 22 | 4 | 16 | 0 | 21 | 0 |
| U | 18 | 5 | 17 | 11 | 11 | 1 | 12 | 2 | 5 | 0 | 0 | 28 | 9 | 33 | 2 | 17 | 0 | 49 | 42 | 45 | 0 | 0 | 0 | 1 | 1 | 1 |
| V | 15 | 0 | 0 | 0 | 53 | 0 | 0 | 0 | 19 | 0 | 0 | 0 | 0 | 0 | 6 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| W | 32 | 0 | 3 | 4 | 30 | 1 | 0 | 48 | 37 | 0 | 0 | 4 | 1 | 10 | 17 | 2 | 0 | 1 | 3 | 6 | 1 | 1 | 2 | 0 | 0 | 0 |
| X | 3 | 0 | 5 | 0 | 1 | 0 | 0 | 0 | 4 | 0 | 0 | 0 | 0 | 0 | 1 | 4 | 0 | 0 | 0 | 1 | 1 | 0 | 0 | 0 | 0 | 0 |
| Y | 11 | 11 | 10 | 4 | 12 | 3 | 5 | 5 | 18 | 0 | 0 | 6 | 4 | 3 | 28 | 7 | 0 | 5 | 17 | 21 | 1 | 3 | 14 | 0 | 0 | 0 |
| Z | 0 | 0 | 0 | 0 | 5 | 0 | 0 | 0 | 2 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 |

Conflicts of Interest

References and Notes

1. Christiansen, M.M.; Duffy, K.R. Guesswork, large deviations, and Shannon entropy. IEEE Trans. Inf. Theory 2013, 59, 796–802.

2. Dürmuth, M.; Chaabane, A.; Perito, D.; Castelluccia, C. When privacy meets security: Leveraging personal information for password cracking. 2013, arXiv:1304.6584v1.

3. Pliam, J.O. The disparity between work and entropy in cryptology. Available online: http://eprint.iacr.org/1998/024 (accessed on 5 October 2014).

4. Lundin, R.; Lindskog, S.; Brunström, A.; Fischer-Hübner, S. Using guesswork as a measure for confidentiality of selectively encrypted messages. In Advances in Information Security; Gollmann, D., Massacci, F., Yautsiukhin, A., Eds.; Springer: New York, NY, USA, 2006; Volume 23, pp. 173–184.

5. Arikan, E. An inequality on guessing and its application to sequential decoding. IEEE Trans. Inf. Theory 1996, 42, 99–105.

6. Massey, J.L. Guessing and entropy. In Proceedings of the 1994 IEEE International Symposium on Information Theory, Trondheim, Norway, 27 June–1 July 1994; p. 204.

7. Andersson, K. Numerical evaluation of the average number of successive guesses. In Unconventional Computation and Natural Computation; Proceedings of the 11th International Conference on Unconventional Computation and Natural Computation (UCNC 2012), Orléans, France, 3–7 September 2012; Lecture Notes in Computer Science, Volume 7445; Durand-Lose, J., Jonoska, N., Eds.; Springer: Berlin/Heidelberg, Germany, 2012; p. 234.

8. Shannon, C.E. A mathematical theory of communication. Bell Syst. Tech. J. 1948, 27.

9. Malone, D.; Sullivan, W. Guesswork is not a substitute for entropy. In Proceedings of the Information Technology and Telecommunications Conference, Cork, Ireland, 26–27 October 2005.

10. Cover, T.M.; Thomas, J.A. Elements of Information Theory, 2nd ed.; Wiley: Hoboken, NJ, USA, 2006.

11. Box, G.E.P.; Hunter, J.S.; Hunter, W.G. Statistics for Experimenters: Design, Innovation and Discovery, 2nd ed.; Wiley: Hoboken, NJ, USA, 2005.

12. Erf. Available online: http://mathworld.wolfram.com/Erf.html (accessed on 5 October 2014). From this source the integral has been used.

13. for large x

14. Nicholl, J. Available online: http://jnicholl.org/Cryptanalysis/Data/DigramFrequencies.php (accessed on 5 October 2014).

| Order | Expression |
|---|---|
| 0 | $1/2$ |
| 1 | $0.481 \cdot 0.801^{m} \cdot m^{-1/2}$ |
| 2 | $0.632 \cdot 0.554^{m} \cdot m^{-1/2}$ |

© 2014 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/3.0/).

Andersson, K. Exact Probability Distribution versus Entropy. Entropy 2014, 16, 5198-5210. https://doi.org/10.3390/e16105198