Deep Learning-Based Approach for Weed Detection in Potato Crops

Abstract

1. Introduction

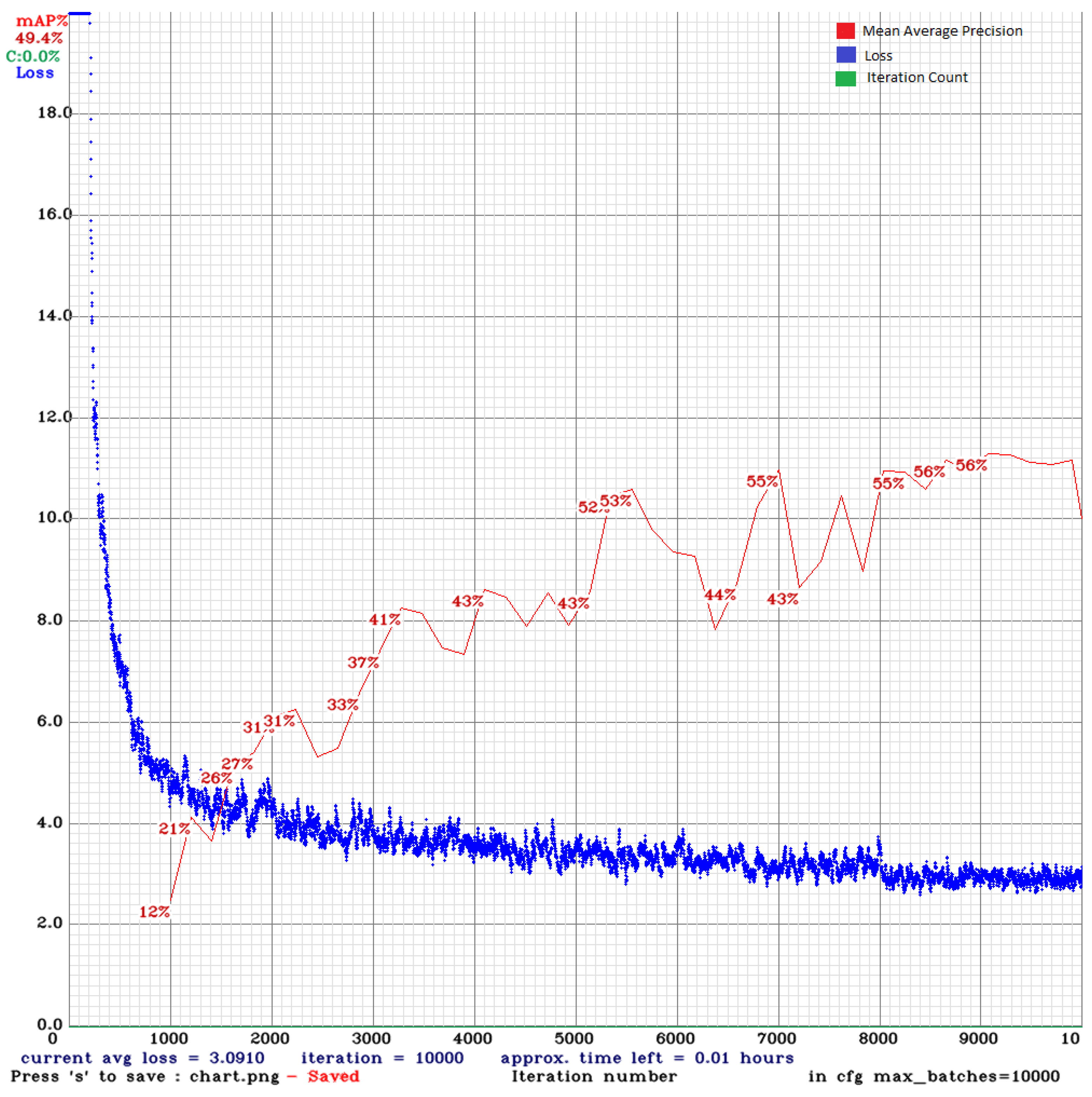

2. Methodology

3. Results

3.1. Evaluation Indicators

3.2. Experimental Results

4. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest



© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Khan, F.; Zafar, N.; Tahir, M.N.; Aqib, M.; Saleem, S.; Haroon, Z. Deep Learning-Based Approach for Weed Detection in Potato Crops. Environ. Sci. Proc. 2022, 23, 6. https://doi.org/10.3390/environsciproc2022023006
