Artificial Intelligence in Smart Farms: Plant Phenotyping for Species Recognition and Health Condition Identification Using Deep Learning

Abstract

1. Introduction

2. Related Work

- There is scope to analyse the performance of DL models trained on an imbalanced dataset, especially when the number of leaf images differs significantly between classes.

- The change in model performance with the size of the leaf image dataset requires analysis.

- Further research is required to map the change in classification accuracy against the depth of the DL classifier.

- Analysis is required to record how a DL model's performance changes as the number of classes, or the number of leaf images in each class, increases.

- Reporting computational time on an affordable experimental setup would give a clearer picture of the application platforms on which the model can be deployed.

| Article | Research Area | Dataset | Methodology | Remarks |
|---|---|---|---|---|
| [17] | Plant species identification with small datasets | 32 species from the FLAVIA dataset and 20 species from the CRLEAVES dataset of leaf images | Convolutional Siamese network (CSN) and a CNN with three convolutional blocks and a convolutional layer with 32 filters | Classifiers were trained with small training samples (5 to 30 per species); the CSN achieved accuracies of 93.7% and 81% in two different experimental scenarios |
| [18] | Plant identification in a natural scenario | BJFU100 dataset of 10,000 images of 100 plant species, collected using mobile devices | Residual network (ResNet) classifier with a 26-layer architecture | An accuracy of 91.78% was achieved |
| [19] | Plant species classification | Dataset of 43 plant species with 30 image samples each | Feature extraction using pre-trained AlexNet, fine-tuned AlexNet, a proposed CNN model (D-leaf), and vein morphometrics; classification using an artificial neural network (ANN), support vector machine (SVM), and k-nearest neighbours (KNN) | The proposed method with the ANN classifier achieved the highest accuracy of 94.88% |
| [20] | Leaf species recognition using DL | Plant leaf dataset of 240 images of 15 different species | AlexNet architecture with fine-tuned hyperparameters | An accuracy of 95.56% was achieved |
| [21] | Grape plant species identification | Two vineyard image datasets of six varieties of grape with 23 and 14 images each | AlexNet architecture with transfer learning | An accuracy of 77.30% was achieved |
| [22] | Plant disease diagnosis | Open dataset of 87,848 images with 25 different plants | Five CNN-based architectures (AlexNet, AlexNetOWTBn, GoogLeNet, Overfeat, and VGG) were used to classify 58 different classes of healthy and diseased plants | An accuracy of 99.53% was achieved |
| [23] | Plant disease recognition | 8 plants and 19 diseases from a dataset of 40,000 leaf images | AlexNet, VGG16, ResNet, and Inception V3 used for feature extraction, with a proposed two-head network for classification | Achieved 98.07% accuracy on plant species recognition and 87.45% accuracy on disease classification |
| [24] | Recognition of diseases and pests of tomato plants | Dataset of 5000 images; the method was applied to 10 different classes | Deep learning meta-architectures such as faster region-based CNN, region-based fully convolutional network (R-FCN), and single shot multibox detector (SSD) used for detection, with VGG net and ResNet based feature extraction | The highest reported average precision is 85.98%, with ResNet50 and R-FCN |
| [25] | Identification of plant disease | 14 plant species with 26 diseases from the PlantVillage dataset; 54,306 images in total | DL classifiers such as VGG net, ResNet, Inception V4, and DenseNet, fine-tuned for disease identification | An accuracy of 99.75% was achieved using DenseNet |

3. Materials and Methods

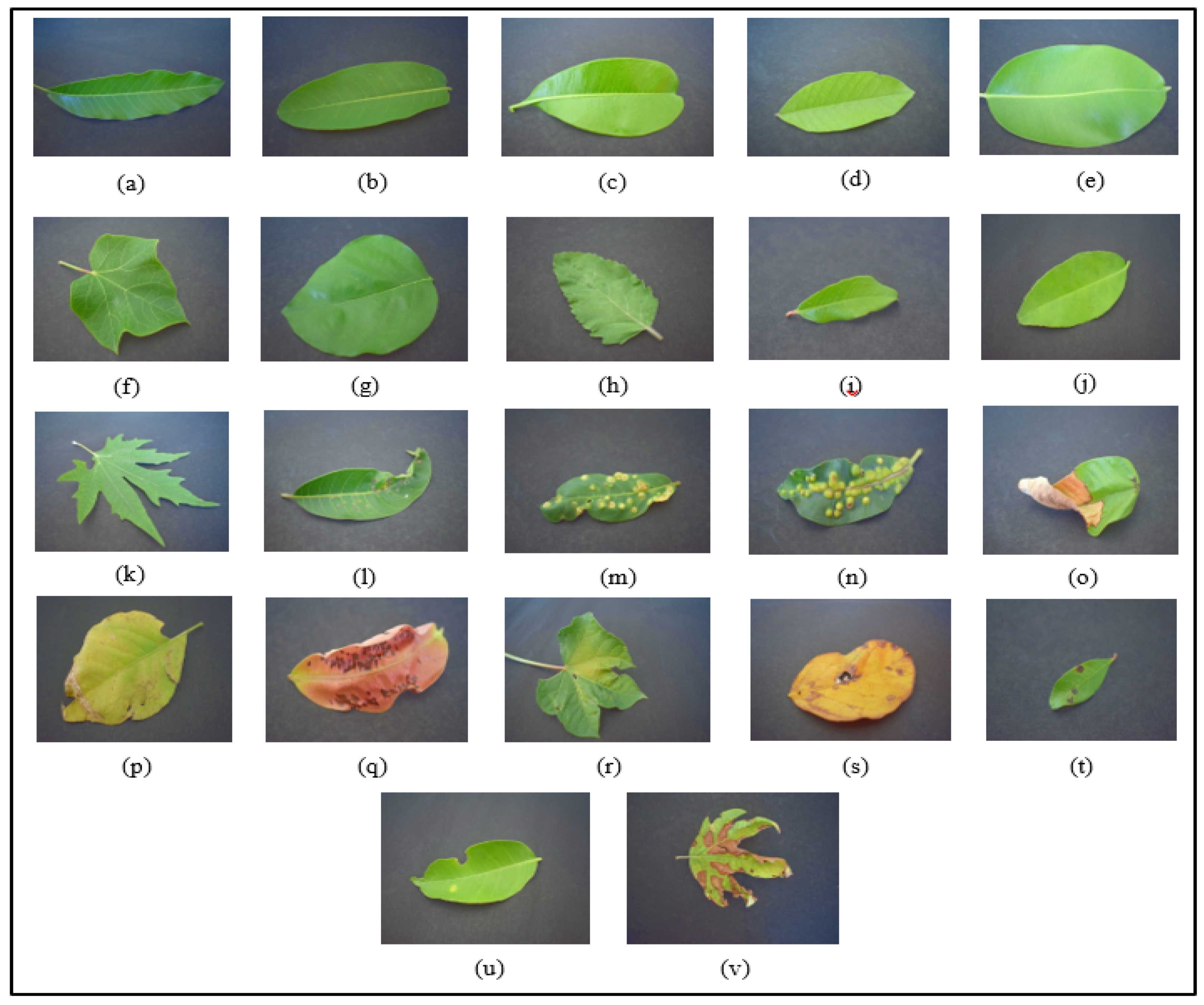

3.1. The Dataset

3.2. Pre-Processing of the Dataset

3.3. Organization of the Dataset for Training

3.4. Classification

3.5. Implementation

4. Results, Analysis, and Comparison

5. Discussion and Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- FAO. The Future of Food and Agriculture—Trends and Challenges; Annual Report; FAO: Rome, Italy, 2017.

- Costa, C.; Schurr, U.; Loreto, F.; Menesatti, P.; Carpentier, S. Plant Phenotyping Research Trends, a Science Mapping Approach. Front. Plant Sci. 2019, 9, 1933.

- Li, L.; Zhang, Q.; Huang, D. A Review of Imaging Techniques for Plant Phenotyping. Sensors 2014, 14, 20078–20111.

- Mahlein, A.-K. Plant Disease Detection by Imaging Sensors—Parallels and Specific Demands for Precision Agriculture and Plant Phenotyping. Plant Dis. 2016, 100, 241–251.

- Jin, X.; Zarco-Tejada, P.J.; Schmidhalter, U.; Reynolds, M.P.; Hawkesford, M.J.; Varshney, R.K.; Yang, T.; Nie, C.; Li, Z.; Ming, B.; et al. High-Throughput Estimation of Crop Traits: A Review of Ground and Aerial Phenotyping Platforms. IEEE Geosci. Remote Sens. Mag. 2021, 9, 200–231.

- Jiang, Y.; Li, C. Convolutional Neural Networks for Image-Based High-Throughput Plant Phenotyping: A Review. Plant Phenomics 2020, 2020, 1–22.

- Mishra, K.B.; Mishra, A.; Klem, K.; Govindjee. Plant phenotyping: A perspective. Indian J. Plant Physiol. 2016, 21, 514–527.

- Fiorani, F.; Schurr, U. Future Scenarios for Plant Phenotyping. Annu. Rev. Plant Biol. 2013, 64, 267–291.

- Kaur, S.; Pandey, S.; Goel, S. Plants Disease Identification and Classification through Leaf Images: A Survey. Arch. Comput. Methods Eng. 2018, 26, 507–530.

- Barré, P.; Stöver, B.C.; Müller, K.F.; Steinhage, V. LeafNet: A computer vision system for automatic plant species identification. Ecol. Inform. 2017, 40, 50–56.

- Owomugisha, G.; Mwebaze, E. Machine Learning for Plant Disease Incidence and Severity Measurements from Leaf Images. In Proceedings of the 2016 15th IEEE International Conference on Machine Learning and Applications (ICMLA), Anaheim, CA, USA, 18 December 2016; pp. 158–163.

- Pawara, P.; Okafor, E.; Surinta, O.; Schomaker, L.; Wiering, M. Comparing Local Descriptors and Bags of Visual Words to Deep Convolutional Neural Networks for Plant Recognition. In Proceedings of the 6th International Conference on Pattern Recognition Applications and Methods, Porto, Portugal, 24 February 2017; SCITEPRESS: Setúbal, Portugal, 2017; Volume 24, pp. 479–486.

- Xiong, J.; Yu, D.; Liu, S.; Shu, L.; Wang, X.; Liu, Z. A Review of Plant Phenotypic Image Recognition Technology Based on Deep Learning. Electronics 2021, 10, 81.

- Lee, S.H.; Chan, C.S.; Mayo, S.J.; Remagnino, P. How deep learning extracts and learns leaf features for plant classification. Pattern Recognit. 2017, 71, 1–13.

- Zhang, S.; Zhang, C. Plant Species Recognition Based on Deep Convolutional Neural Networks. In Proceedings of the International Conference on Intelligent Computing, Liverpool, UK, 7 August 2017; Springer: Cham, Switzerland, 2017; pp. 282–289.

- Wang, G.; Sun, Y.; Wang, J. Automatic Image-Based Plant Disease Severity Estimation Using Deep Learning. Comput. Intell. Neurosci. 2017, 2017, 1–8.

- Figueroa-Mata, G.; Mata-Montero, E. Using a convolutional siamese network for image-based plant species identification with small data-sets. Biomimetics 2020, 5, 8.

- Sun, Y.; Liu, Y.; Wang, G.; Zhang, H. Deep Learning for Plant Identification in Natural Environment. Comput. Intell. Neurosci. 2017, 2017, 1–6.

- Wei Tan, J.; Chang, S.W.; Abdul-Kareem, S.; Yap, H.J.; Yong, K.T. Deep learning for plant species classification using leaf vein morphometric. IEEE/ACM Trans. Comput. Biol. Bioinform. 2018, 7, 82–90.

- Kumar, D.; Verma, C. Automatic Leaf Species Recognition Using Deep Neural Network. In Evolving Technologies for Computing, Communication and Smart World; Springer: Singapore, 2021; pp. 13–22.

- Pereira, C.S.; Morais, R.; Reis, M.J.C.S. Deep Learning Techniques for Grape Plant Species Identification in Natural Images. Sensors 2019, 19, 4850.

- Ferentinos, K. Deep learning models for plant disease detection and diagnosis. Comput. Electron. Agric. 2018, 145, 311–318.

- Huang, S.; Liu, W.; Qi, F.; Yang, K. Development and Validation of a Deep Learning Algorithm for the Recognition of Plant Disease. In Proceedings of the 2019 IEEE 21st International Conference on High Performance Computing and Communications; IEEE 17th International Conference on Smart City; IEEE 5th International Conference on Data Science and Systems (HPCC/SmartCity/DSS), Zhangjiajie, China, 10 August 2019; pp. 1951–1957.

- Fuentes, A.; Yoon, S.; Kim, S.C.; Park, D.S. A Robust Deep-Learning-Based Detector for Real-Time Tomato Plant Diseases and Pests Recognition. Sensors 2017, 17, 2022.

- Too, E.C.; Yujian, L.; Njuki, S.; Yingchun, L. A comparative study of fine-tuning deep learning models for plant disease identification. Comput. Electron. Agric. 2019, 161, 272–279.

- Chouhan, S.S.; Singh, U.P.; Kaul, A.; Jain, S. A data repository of leaf images: Practice towards plant conservation with plant pathology. In Proceedings of the 2019 IEEE 4th International Conference on Information Systems and Computer Networks (ISCON), Mathura, India, 21 November 2019; pp. 700–707.

- Saleem, M.H.; Potgieter, J.; Arif, K.M. Plant Disease Detection and Classification by Deep Learning. Plants 2019, 8, 468.

- Canziani, A.; Paszke, A.; Culurciello, E. An Analysis of Deep Neural Network Models for Practical Applications. arXiv 2017, arXiv:1605.07678.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016.

- Atienza, R. Advanced Deep Learning with Keras: Apply Deep Learning Techniques, Autoencoders, GANs, Variational Autoencoders, Deep Reinforcement Learning, Policy Gradients, and More; Packt Publishing Ltd.: Birmingham, UK, 2018.

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. Imagenet classification with deep convolutional neural networks. Commun. ACM 2012, 60, 1097–1105.

- Chollet, F. Keras. 2015. Available online: https://github.com/fchollet/keras (accessed on 7 July 2020).

- Bradski, G. The opencv library. Dr Dobb’s J. Softw. Tools 2000, 25, 120–125.

- Van der Walt, S.; Schönberger, J.L.; Nunez-Iglesias, J.; Boulogne, F.; Warner, J.D.; Yager, N.; Gouillart, E.; Yu, T. Scikit-image: Image processing in Python. PeerJ 2014, 2, e453.

- Krüger, F. Activity, context, and plan recognition with computational causal behavior models. Ph.D. Thesis, Universität Rostock, Fakultät für Informatik und Elektrotechnik, Rostock, Germany, 2016.

- Sokolova, M.; Lapalme, G. A systematic analysis of performance measures for classification tasks. Inf. Process. Manag. 2009, 45, 427–437.

| Plant Species | Number of Images (H: Healthy, I: Infected) | Plant Species | Number of Images (H: Healthy, I: Infected) |
|---|---|---|---|
| Mango (Mangifera indica) | H:170, I:265 | Jatropha (Jatropha curcas L.) | H:133, I:124 |
| Arjun (Terminalia arjuna) | H:220, I:232 | Sukh chain (Pongamia pinnata L.) | H:322, I:276 |
| Alstonia (Alstonia scholaris) | H:179, I:254 | Basil (Ocimum basilicum) | H:149, I:0 |
| Guava (Psidium guajava) | H:277, I:142 | Pomegranate (Punica granatum L.) | H:287, I:272 |
| Bael (Aegle marmelos) | H:0, I:118 | Lemon (Citrus limon) | H:159, I:77 |
| Jamun (Syzygium cumini) | H:279, I:345 | Chinar (Platanus orientalis) | H:103, I:120 |
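The class structure implied by these counts can be checked with a short script. The following is a minimal sketch, not the authors' code: `leaf_counts` transcribes the table, while `undersample` and its `target` parameter are hypothetical names introduced for illustration, and the per-class size actually used when under-sampling is not stated in this section.

```python
import random

# (healthy, infected) image counts transcribed from the dataset table.
leaf_counts = {
    "Mango": (170, 265),
    "Arjun": (220, 232),
    "Alstonia": (179, 254),
    "Guava": (277, 142),
    "Bael": (0, 118),
    "Jamun": (279, 345),
    "Jatropha": (133, 124),
    "Sukh chain": (322, 276),
    "Basil": (149, 0),
    "Pomegranate": (287, 272),
    "Lemon": (159, 77),
    "Chinar": (103, 120),
}

# Species-level classes: healthy and infected images pooled per species.
species_classes = {name: h + i for name, (h, i) in leaf_counts.items()}

# Health-condition classes: one per non-empty (species, condition) pair.
condition_classes = {}
for name, (h, i) in leaf_counts.items():
    if h > 0:
        condition_classes[(name, "H")] = h
    if i > 0:
        condition_classes[(name, "I")] = i


def undersample(items_by_class, target, seed=0):
    """Randomly keep at most `target` items per class (hypothetical helper)."""
    rng = random.Random(seed)
    return {
        label: rng.sample(items, min(target, len(items)))
        for label, items in items_by_class.items()
    }


total_images = sum(species_classes.values())
smallest_species_class = min(species_classes.values())
smallest_condition_class = min(condition_classes.values())

# Toy demonstration with integer stand-ins for image paths.
demo = undersample({"a": list(range(10)), "b": list(range(4))}, target=4)
```

With these counts the table yields 12 species-level classes and 22 non-empty health-condition classes over 4503 images; the smallest classes hold 118 and 77 images respectively, which shows the imbalance that under-sampling is meant to address.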

| Character/Number | Details |
|---|---|
| U | Dataset generated by under-sampling (i.e., Dataset-I) |
| UA | Dataset generated by under-sampling and augmentation (i.e., Dataset-II, Figure 3) |
| N1 | Number of classes: 12 for SR and 22 for IHIL |
| R | ResNet version 2 based DL classifier |
| Alex | AlexNet based DL classifier |
| N2 | Depth of the residual network based classifier |
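The naming scheme can be made concrete with a small parser. This is a sketch under assumptions: the pattern `<U|UA><N1>_<R<N2>|Alex>` is inferred from test-case names such as `U12_R20` and `U12_Alex`, and `CaseSpec` and `parse_test_case` are hypothetical names, not part of the paper.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Assumed layout: sampling code, class count N1, underscore, classifier code, optional depth N2.
NAME_PATTERN = re.compile(r"^(UA|U)(\d+)_(R|Alex)(\d*)$")

DATASETS = {"U": "Dataset-I", "UA": "Dataset-II"}
TASKS = {12: "SR", 22: "IHIL"}
CLASSIFIERS = {"R": "ResNet v2", "Alex": "AlexNet"}


@dataclass
class CaseSpec:
    dataset: str
    num_classes: int
    task: str
    classifier: str
    depth: Optional[int]  # None for AlexNet, which carries no depth suffix


def parse_test_case(name: str) -> CaseSpec:
    """Split a test-case name into the fields described in the table."""
    match = NAME_PATTERN.match(name)
    if match is None:
        raise ValueError(f"unrecognised test-case name: {name!r}")
    sampling, n1, family, n2 = match.groups()
    num_classes = int(n1)
    if num_classes not in TASKS:
        raise ValueError(f"unexpected class count in {name!r}")
    if family == "R" and not n2:
        raise ValueError(f"ResNet test case without a depth: {name!r}")
    if family == "Alex" and n2:
        raise ValueError(f"AlexNet test case with a depth: {name!r}")
    return CaseSpec(
        dataset=DATASETS[sampling],
        num_classes=num_classes,
        task=TASKS[num_classes],
        classifier=CLASSIFIERS[family],
        depth=int(n2) if n2 else None,
    )


example = parse_test_case("UA22_R20")
```

Here `example` describes a 20-layer ResNet v2 classifier trained on Dataset-II for the 22-class IHIL task.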

| Relevant Parameters | |
|---|---|
| True positive (tp) | The number of class examples that are correctly predicted. |
| True negative (tn) | The number of correctly recognised examples that do not belong to the class. |
| False positive (fp) | The number of predicted class examples that do not truly belong to the class. |
| False negative (fn) | The number of class examples which the classifier fails to recognise. |

| Metrics | Mathematical Expression | Remarks |
|---|---|---|
| Average accuracy | | Average of the per-class ratio of correct predictions to total test samples |
| Precision | | Indicates how accurate the classifier is among those predicted to be class examples |
| Recall | | Indicates how accurate the classifier is for predicting the true class examples |
| F1 Score | | Indicates the balanced average of both precision and recall |
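The mathematical expressions for these metrics are not available in this text, so the following is only a sketch of one common way to compute them from a multi-class confusion matrix using the tp, tn, fp, and fn counts defined above. The function names, the toy matrix, and the choice to average per-class F1 scores are assumptions, not the authors' stated formulas.

```python
def per_class_counts(cm):
    """Return (tp, tn, fp, fn) per class.

    cm[i][j] is the number of test samples of true class i predicted as class j.
    """
    n = len(cm)
    total = sum(sum(row) for row in cm)
    counts = []
    for k in range(n):
        tp = cm[k][k]
        fn = sum(cm[k]) - tp                       # class-k samples missed
        fp = sum(cm[i][k] for i in range(n)) - tp  # other samples labelled k
        tn = total - tp - fn - fp
        counts.append((tp, tn, fp, fn))
    return counts


def safe_div(num, den):
    """Division that returns 0.0 for an empty denominator."""
    return num / den if den else 0.0


def macro_metrics(cm):
    """Macro-averaged metrics; every class contributes equally (assumed averaging)."""
    counts = per_class_counts(cm)
    n = len(counts)
    acc = prec = rec = f1 = 0.0
    for tp, tn, fp, fn in counts:
        p = safe_div(tp, tp + fp)
        r = safe_div(tp, tp + fn)
        acc += safe_div(tp + tn, tp + tn + fp + fn)
        prec += p
        rec += r
        f1 += safe_div(2 * p * r, p + r)  # per-class F1, averaged below
    return {
        "average_accuracy": acc / n,
        "precision_M": prec / n,
        "recall_M": rec / n,
        "f1_M": f1 / n,
    }


# Toy 3-class confusion matrix, invented for illustration only.
toy_cm = [
    [8, 2, 0],
    [1, 7, 2],
    [0, 1, 9],
]
metrics = macro_metrics(toy_cm)
```

Averaging per-class F1 scores is a design choice here. A harmonic mean of macro precision and macro recall always lies between the two, whereas several reported F1 Score_M values in the test-case table fall below both Precision_M and Recall_M, which is at least consistent with per-class averaging.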

| Test Cases | Task | Average Accuracy (in %) | Precision_M (in %) | Recall_M (in %) | F1 Score_M (in %) |
|---|---|---|---|---|---|
| U12_R11 | SR | 85.98 | 85.99 | 86.46 | 85.59 |
| U12_R20 | SR | 90.53 | 92.13 | 90.62 | 90.89 |
| U12_R29 | SR | 87.12 | 87.63 | 86.81 | 86.49 |
| U12_R38 | SR | 89.39 | 89.38 | 89.24 | 89.11 |
| U12_R47 | SR | 86.36 | 86.58 | 86.11 | 85.53 |
| U22_R20 | IHIL | 81.44 | 83.74 | 81.44 | 81.13 |
| UA12_R20 | SR | 91.94 | 91.84 | 91.67 | 91.49 |
| UA22_R20 | IHIL | 83.14 | 84.00 | 83.14 | 83.19 |
| U12_Alex | SR | 81.06 | 76.85 | 75.32 | 74.87 |
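Two comparisons can be read directly off this table: which ResNet depth scores highest on the under-sampled dataset, and how much accuracy changes when augmentation is added. The snippet below is an illustrative sketch; the figures are transcribed from the table, and the variable names are hypothetical.

```python
# (average accuracy, precision_M, recall_M, f1_M) in percent, transcribed from the table.
results = {
    "U12_R11": (85.98, 85.99, 86.46, 85.59),
    "U12_R20": (90.53, 92.13, 90.62, 90.89),
    "U12_R29": (87.12, 87.63, 86.81, 86.49),
    "U12_R38": (89.39, 89.38, 89.24, 89.11),
    "U12_R47": (86.36, 86.58, 86.11, 85.53),
    "U22_R20": (81.44, 83.74, 81.44, 81.13),
    "UA12_R20": (91.94, 91.84, 91.67, 91.49),
    "UA22_R20": (83.14, 84.00, 83.14, 83.19),
    "U12_Alex": (81.06, 76.85, 75.32, 74.87),
}

ACCURACY = 0  # index of average accuracy within each tuple

# Depth sweep: ResNet test cases for SR on the under-sampled dataset.
depth_sweep = ["U12_R11", "U12_R20", "U12_R29", "U12_R38", "U12_R47"]
best_depth_case = max(depth_sweep, key=lambda name: results[name][ACCURACY])

# Accuracy change from augmentation at fixed depth, in percentage points.
gain_sr = round(results["UA12_R20"][ACCURACY] - results["U12_R20"][ACCURACY], 2)
gain_ihil = round(results["UA22_R20"][ACCURACY] - results["U22_R20"][ACCURACY], 2)
```

On these figures the 20-layer configuration has the highest average accuracy of the five depths listed, and the augmented dataset is higher by 1.41 percentage points for SR and 1.70 for IHIL.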

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Hati, A.J.; Singh, R.R. Artificial Intelligence in Smart Farms: Plant Phenotyping for Species Recognition and Health Condition Identification Using Deep Learning. AI 2021, 2, 274-289. https://doi.org/10.3390/ai2020017
