Binaural Range Finding from Synthetic Aperture Computation as the Head is Turned

Abstract

1. Introduction

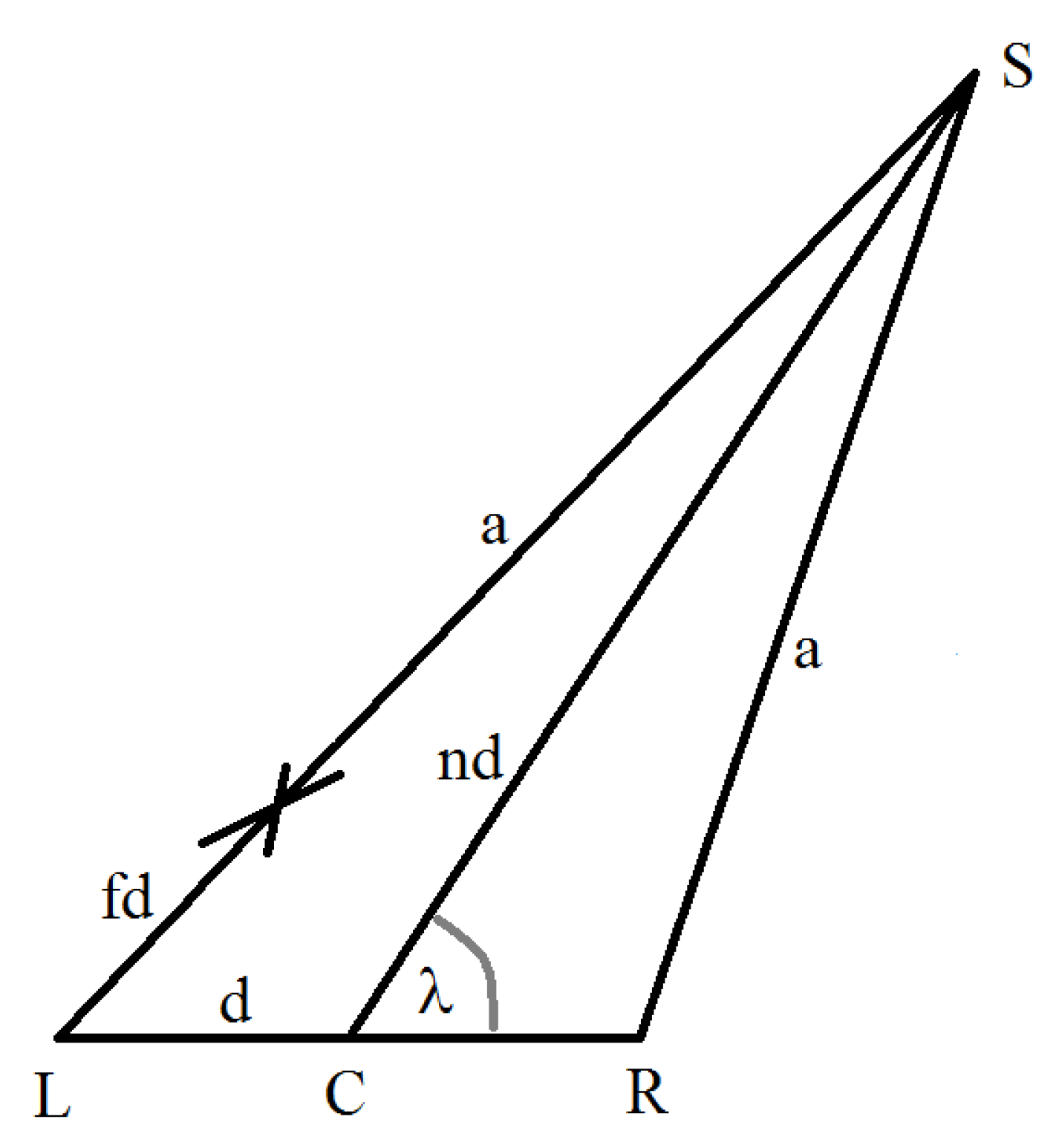

2. Arrival Time Difference, Angle to Acoustic Source, and Range

- L represents the position of the left ear, and R the right ear; the line LR lies on the auditory axis;

- C represents the position of the auditory center;

- S is the position of the acoustic source;

- the angle at the auditory center between the auditory axis and the direction to the acoustic source;

- the distance between the ears (the length of the line LR);

- the distance of the acoustic source from the auditory center (the length of the line CS), expressed as a multiple of the distance between the ears;

- the difference in the acoustic ray path lengths from the source to the two ears, expressed as a proportion of the distance between the ears; and

- the distance from the acoustic source to the right ear (the length of the line SR).

- the acoustic transmission velocity (e.g., 330 m s⁻¹ for air); and

- the difference in the arrival time of sound received at the ears.
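The quantities defined above can be related in a short numerical sketch. The symbols are assumptions introduced here for illustration: `lam` for the angle at the auditory center, `d` for the distance between the ears, `r` for the range as a multiple of `d`, `f` for the path-length difference as a proportion of `d`, and `v` for the acoustic velocity. Applying the cosine rule to the triangles formed by the source, the auditory center, and each ear gives the two ear distances (in units of `d`) as sqrt(r² + 1/4 ± r cos(lam)). Eliminating them yields cos(lam) = f · sqrt(1 + (1 − f²)/(4r²)), which reduces to the far-range relation cos(lam) = f as r grows large. The function names are hypothetical, and the sketch assumes the angle is measured from the side of the nearer ear.

```python
import math


def path_difference_fraction(lam_deg, r):
    """Path-length difference as a proportion of the ear separation.

    lam_deg: angle at the auditory center between the auditory axis and
             the source direction, in degrees (assumed symbol: lam).
    r:       source range in multiples of the ear separation (assumed
             symbol: r); math.inf selects the far-range limit.
    """
    c = math.cos(math.radians(lam_deg))
    if math.isinf(r):
        return c  # far-range approximation: f = cos(lam)
    far = math.sqrt(r * r + 0.25 + r * c)   # distance to the farther ear
    near = math.sqrt(r * r + 0.25 - r * c)  # distance to the nearer ear
    # far - near, written as (far^2 - near^2) / (far + near) for stability
    return 2.0 * r * c / (far + near)


def colatitude_deg(f, r):
    """Invert the geometry: angle (degrees) for a given f and assumed range r."""
    if math.isinf(r):
        c = f
    else:
        c = f * math.sqrt(1.0 + (1.0 - f * f) / (4.0 * r * r))
    c = max(-1.0, min(1.0, c))  # guard against rounding just outside [-1, 1]
    return math.degrees(math.acos(c))


def arrival_time_difference(f, d, v=330.0):
    """Arrival time difference in seconds: path difference f*d over velocity v."""
    return f * d / v


if __name__ == "__main__":
    f = path_difference_fraction(36.860, 3.5)
    print(round(f, 4), round(colatitude_deg(f, 3.5), 3))
```

The inverse function takes the range as an argument, so the same measured path-length difference maps to a slightly different angle for each assumed range.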

3. Synthetic Aperture Calculation Range Finding: Simulated Experimental Results

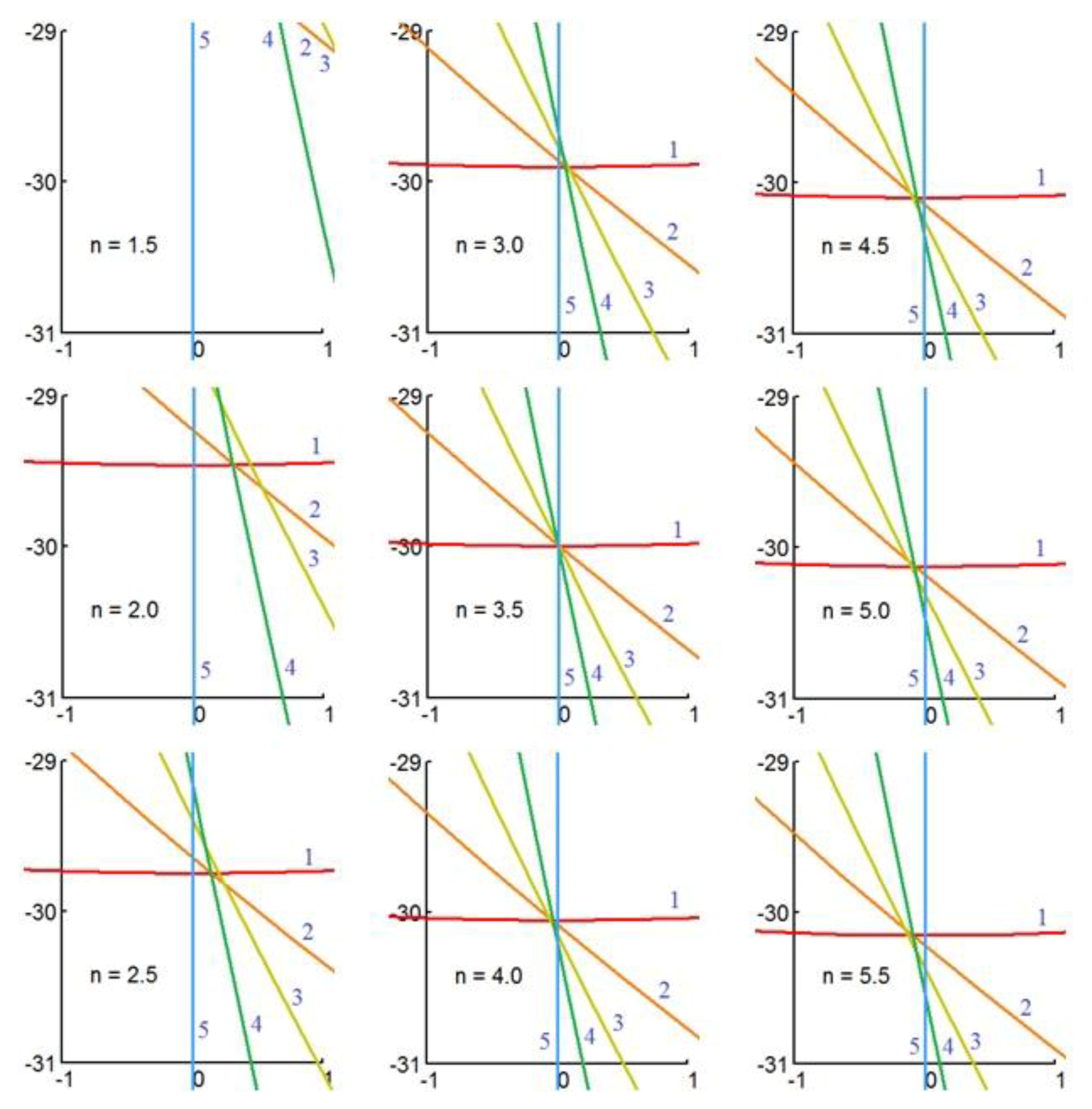

3.1. Horizontal Auditory Axis

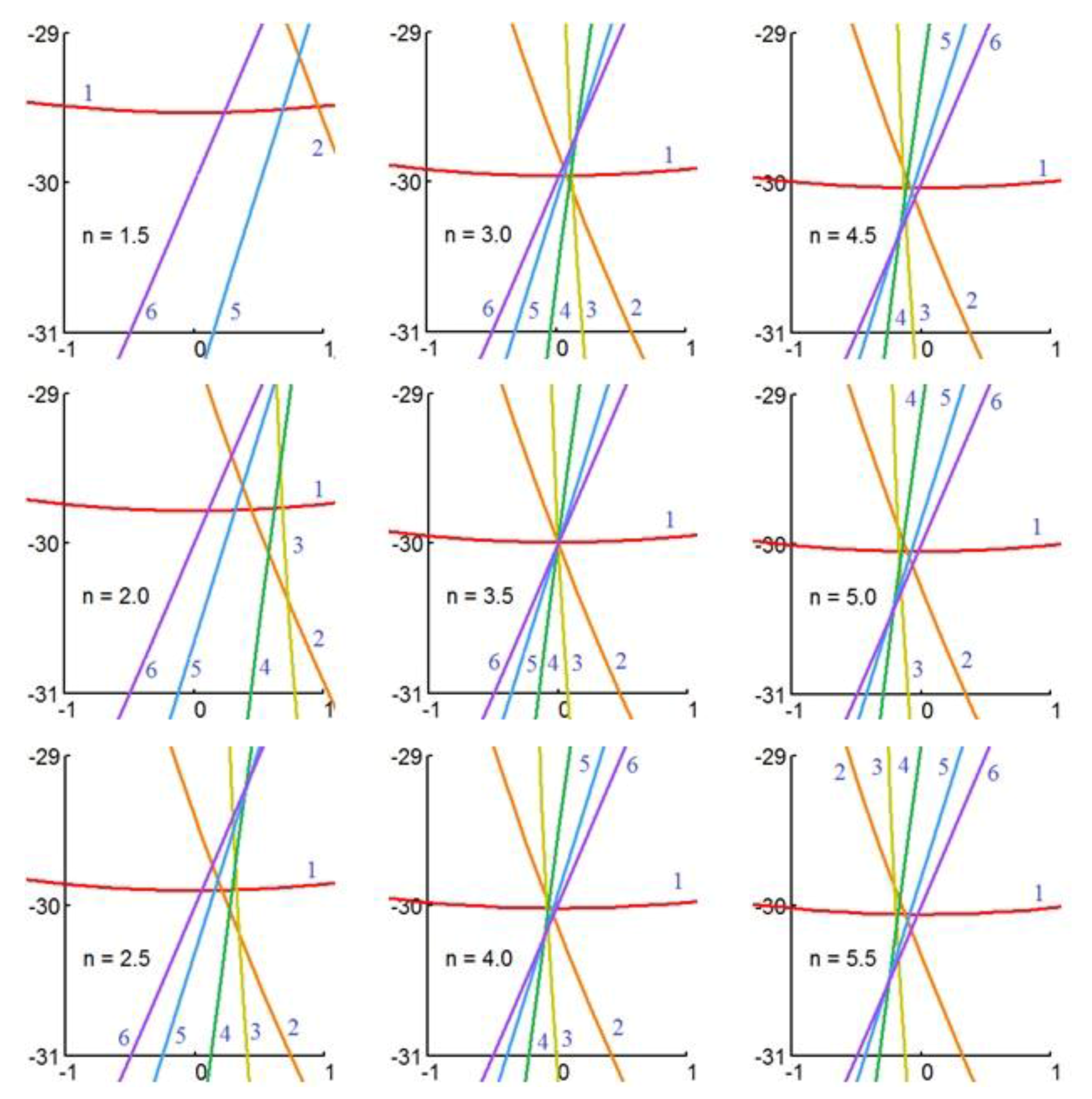

3.2. Inclined Auditory Axis

4. Discussion

5. Summary

Acknowledgments

Conflicts of Interest

| Circle | Longitude (°) | Colatitude (°) | Path-length difference fraction (range = 3.5) | Path-length difference fraction (range = ∞) |
|---|---|---|---|---|
| 1 | 90.000 | 30.000 | 0.8636 | 0.8660 |
| 2 | 67.500 | 36.860 | 0.7971 | 0.8001 |
| 3 | 45.000 | 52.239 | 0.6085 | 0.6124 |
| 4 | 22.500 | 70.645 | 0.3284 | 0.3314 |
| 5 | 00.000 | 90.000 | 0.0000 | 0.0000 |

| Circle | Range = 1.5 | Range = 2.0 | Range = 2.5 | Range = 3.0 | Range = 3.5 | Range = 4.0 | Range = 4.5 | Range = 5.0 | Range = 5.5 |
|---|---|---|---|---|---|---|---|---|---|
| 1 | 28.850 | 29.470 | 29.754 | 29.908 | 30.000 | 30.060 | 30.101 | 30.132 | 30.152 |
| 2 | 35.597 | 36.276 | 36.589 | 36.758 | 36.860 | 36.926 | 36.971 | 37.002 | 37.028 |
| 3 | 50.994 | 51.659 | 51.969 | 52.137 | 52.239 | 52.305 | 52.350 | 52.380 | 52.406 |
| 4 | 69.859 | 70.277 | 70.473 | 70.581 | 70.645 | 70.688 | 70.717 | 70.737 | 70.753 |
| 5 | 90.000 | 90.000 | 90.000 | 90.000 | 90.000 | 90.000 | 90.000 | 90.000 | 90.000 |
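The table above can be read as a range sweep: one measured path-length difference is converted back to a colatitude under each assumed range. The following is a minimal sketch of that reading, not a statement of the author's implementation. It assumes the exact-geometry relation obtained from the cosine rule, cos(colatitude) = f · sqrt(1 + (1 − f²)/(4r²)), with `f` the path-length difference as a proportion of the ear separation and `r` the range in ear separations. Both symbols and the helper name are introduced here for illustration. A measurement is simulated for circle 2 (colatitude 36.860°) at a true range of 3.5 and then inverted at each tabulated range. Under these assumptions, the entries checked by hand for circle 2 (ranges 1.5, 2.0, 3.5 and 5.5) agree with the table to within about 0.002°.

```python
import math


def colatitude_for_assumed_range(lam_true_deg, r_true, r_assumed):
    """Colatitude (degrees) inferred when a source at r_true is assumed at r_assumed.

    Ranges are in multiples of the ear separation (assumed convention).
    """
    c_true = math.cos(math.radians(lam_true_deg))
    # Simulated measurement: exact path-length difference fraction at r_true.
    far = math.sqrt(r_true ** 2 + 0.25 + r_true * c_true)
    near = math.sqrt(r_true ** 2 + 0.25 - r_true * c_true)
    f = far - near
    # Inversion of the same geometry at the assumed range.
    c_assumed = f * math.sqrt(1.0 + (1.0 - f * f) / (4.0 * r_assumed ** 2))
    c_assumed = max(-1.0, min(1.0, c_assumed))  # guard against rounding
    return math.degrees(math.acos(c_assumed))


# Assumed ranges taken from the table header.
ranges = [1.5, 2.0, 2.5, 3.0, 3.5, 4.0, 4.5, 5.0, 5.5]
# Circle 2 of the horizontal-axis case, true range 3.5.
row = [round(colatitude_for_assumed_range(36.860, 3.5, r), 3) for r in ranges]
print(row)
```

Only at the true range does the inferred colatitude return to its true value. Repeating the sweep for every circle gives one set of circles per assumed range, and the set that best converges on a single point indicates the range.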

| Circle | Longitude (°) | Colatitude (°) | Path-length difference fraction (range = 3.5) | Path-length difference fraction (range = ∞) |
|---|---|---|---|---|
| 1 | 90.000 | 10.000 | 0.9845 | 0.9848 |
| 2 | 67.500 | 22.652 | 0.9214 | 0.9229 |
| 3 | 45.000 | 41.716 | 0.7431 | 0.7465 |
| 4 | 22.500 | 61.155 | 0.4787 | 0.4823 |
| 5 | 00.000 | 80.153 | 0.1693 | 0.1710 |
| 6 | −12.130 | 90.000 | 0.0000 | 0.0000 |

| Circle | Range = 1.5 | Range = 2.0 | Range = 2.5 | Range = 3.0 | Range = 3.5 | Range = 4.0 | Range = 4.5 | Range = 5.0 | Range = 5.5 |
|---|---|---|---|---|---|---|---|---|---|
| 1 | 09.537 | 09.788 | 09.902 | 09.963 | 10.000 | 10.024 | 10.040 | 10.052 | 10.060 |
| 2 | 21.699 | 22.214 | 22.449 | 22.576 | 22.652 | 22.701 | 22.735 | 22.759 | 22.777 |
| 3 | 40.416 | 41.115 | 41.436 | 41.611 | 41.716 | 41.784 | 41.831 | 41.864 | 41.889 |
| 4 | 60.080 | 60.654 | 60.921 | 61.067 | 61.155 | 61.213 | 61.252 | 61.280 | 61.301 |
| 5 | 79.733 | 79.956 | 80.061 | 80.119 | 80.153 | 80.176 | 80.192 | 80.203 | 80.211 |
| 6 | 90.000 | 90.000 | 90.000 | 90.000 | 90.000 | 90.000 | 90.000 | 90.000 | 90.000 |

© 2017 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Tamsett, D. Binaural Range Finding from Synthetic Aperture Computation as the Head is Turned. Robotics 2017, 6, 10. https://doi.org/10.3390/robotics6020010
