Utility Perception in System Dynamics Models †

Abstract

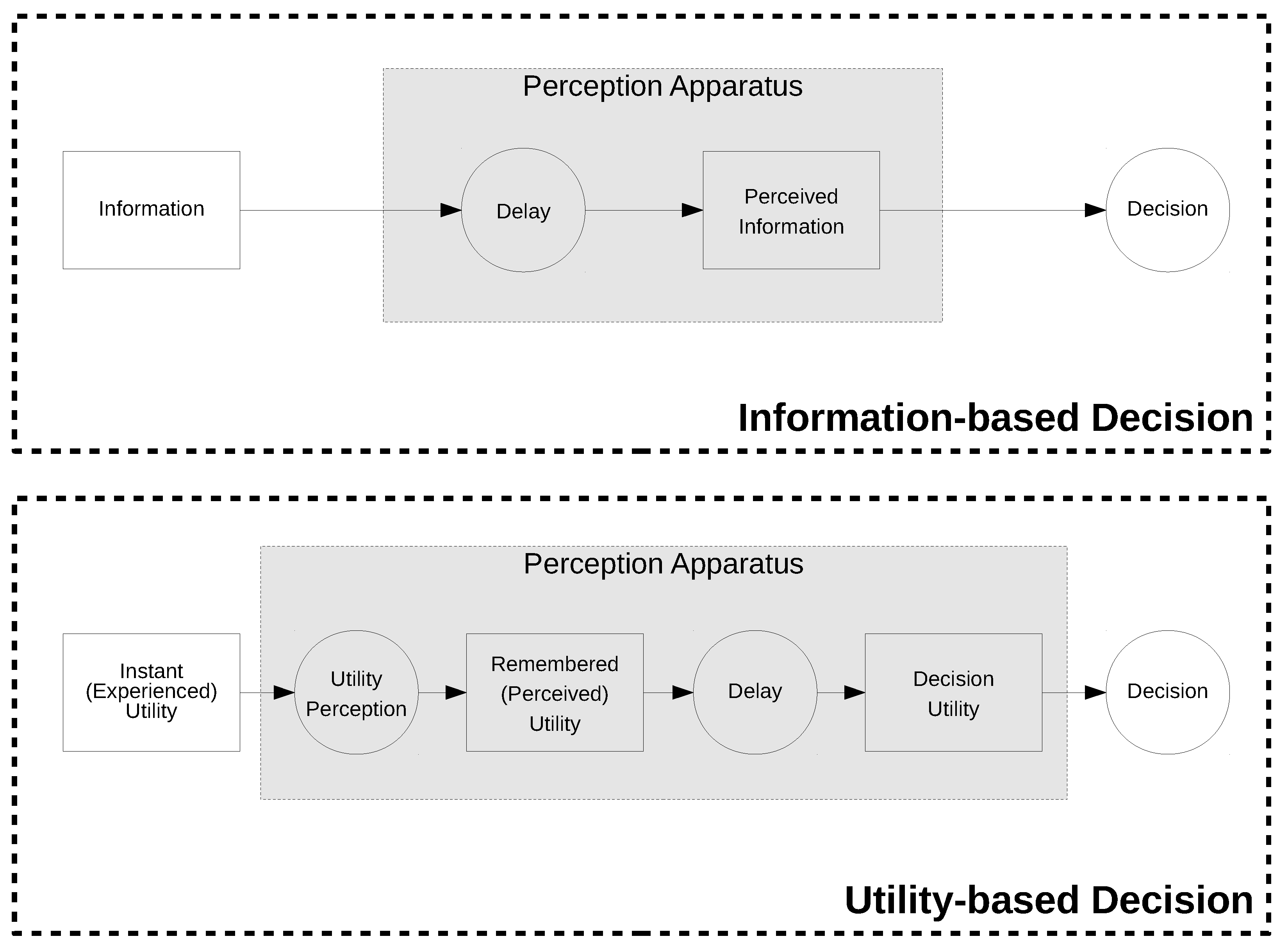

1. Introduction

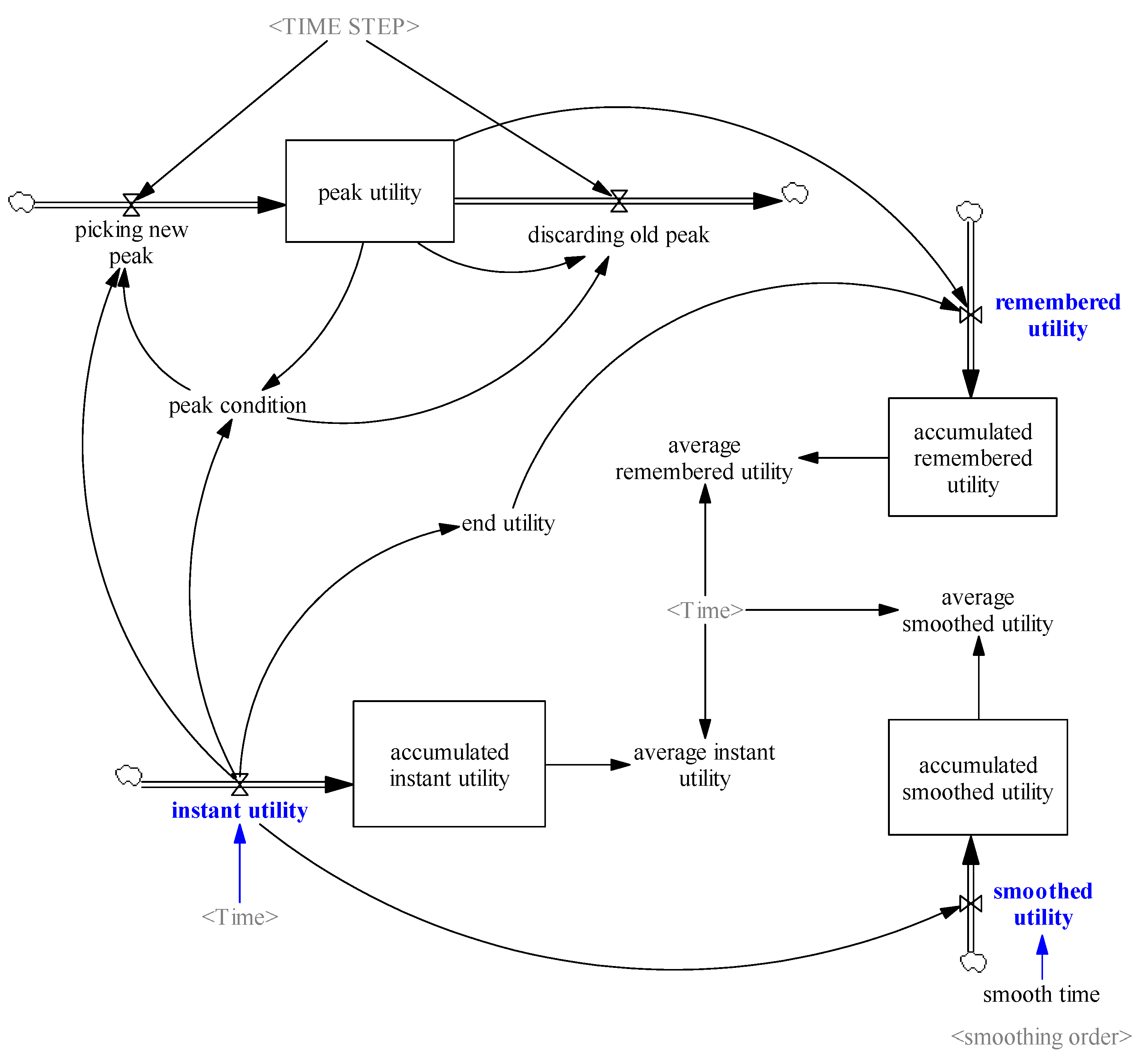

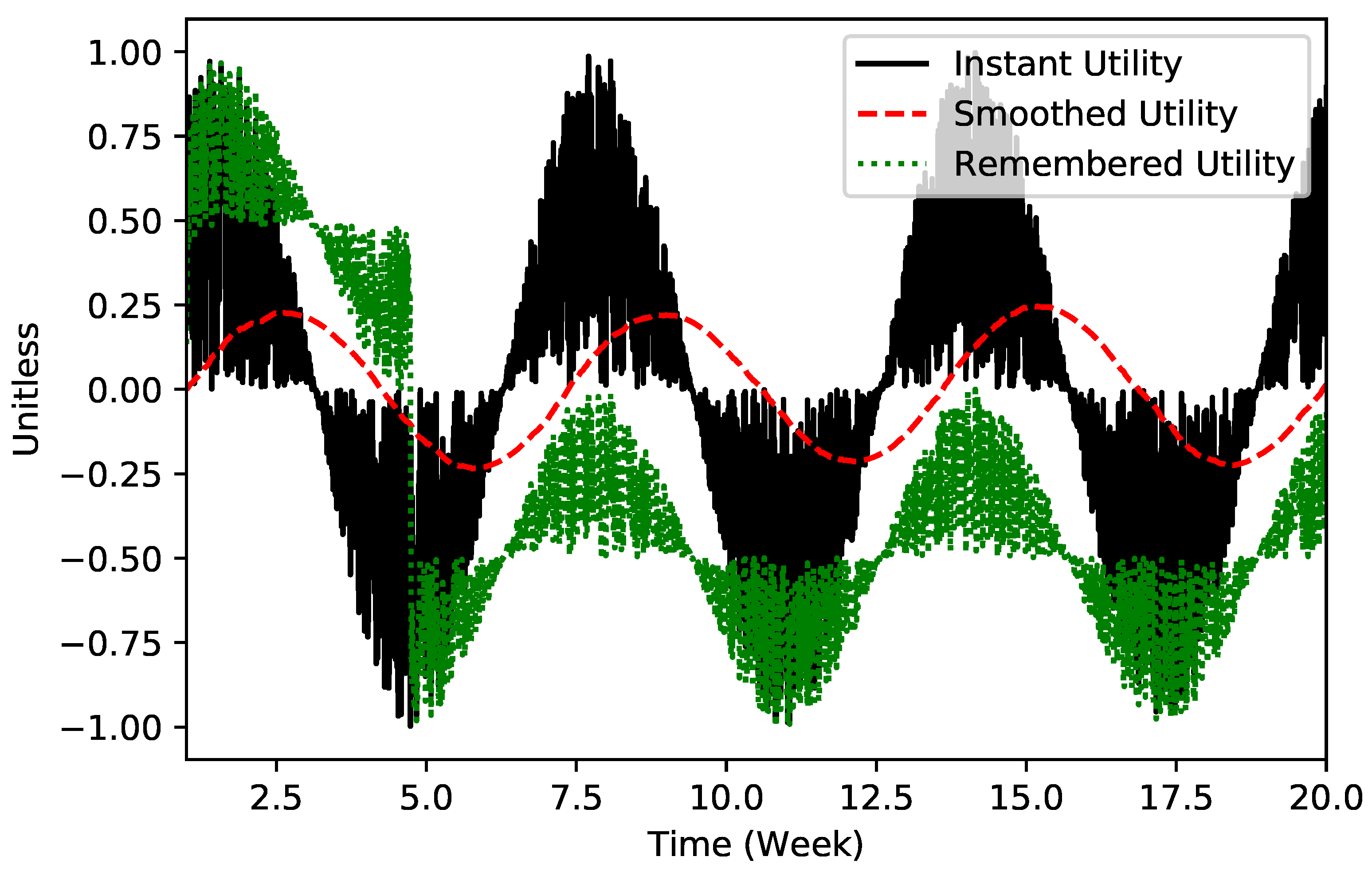

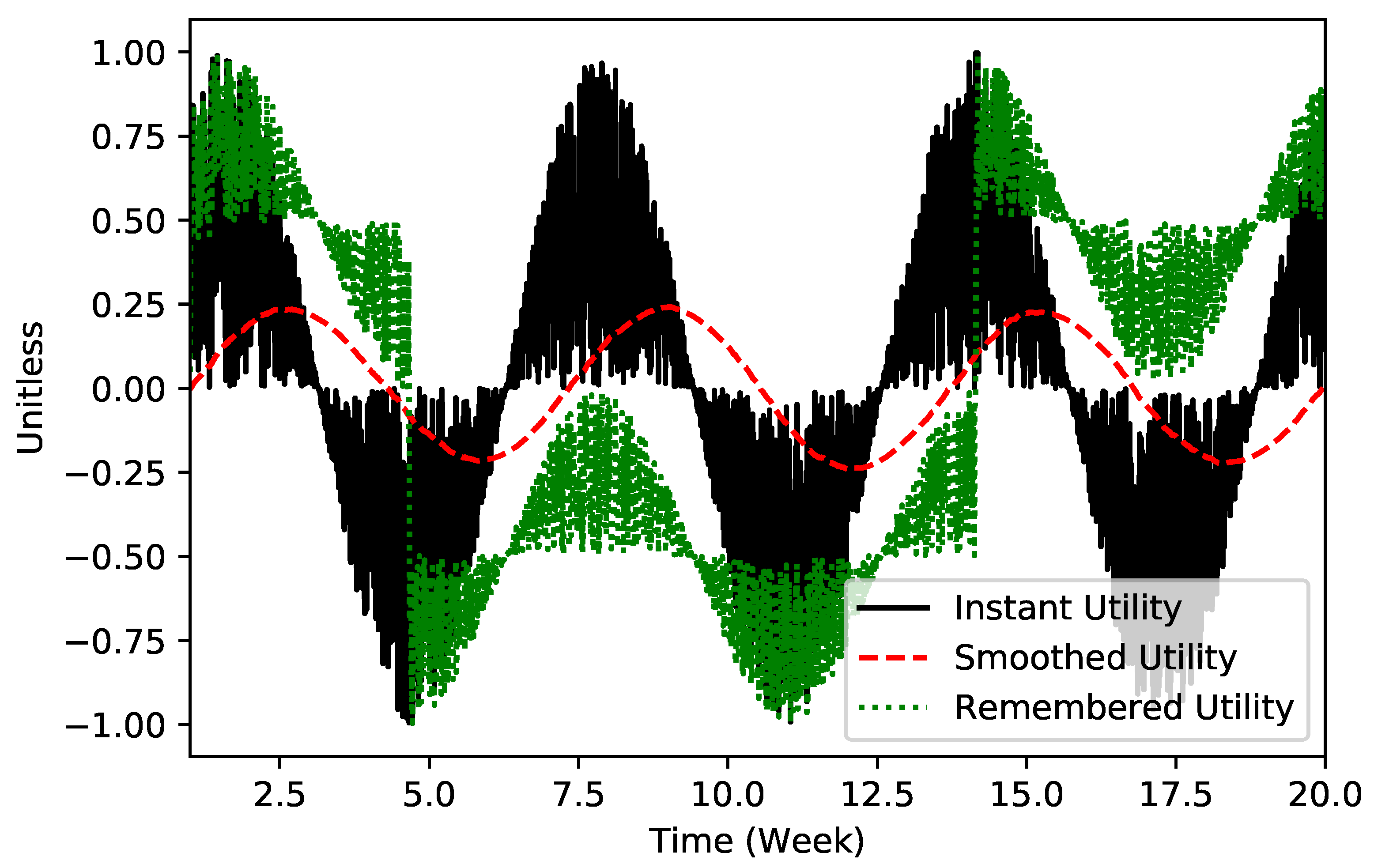

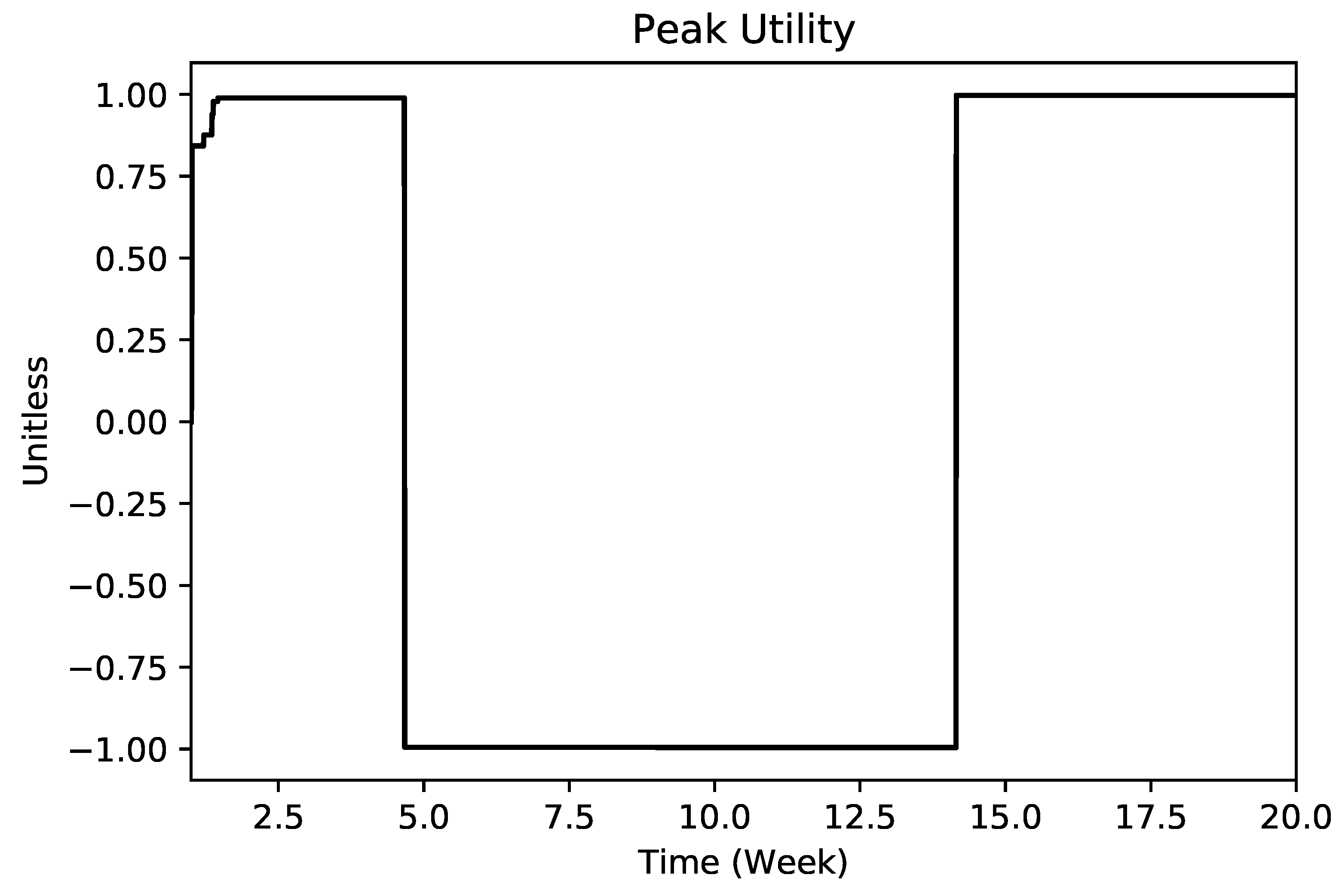

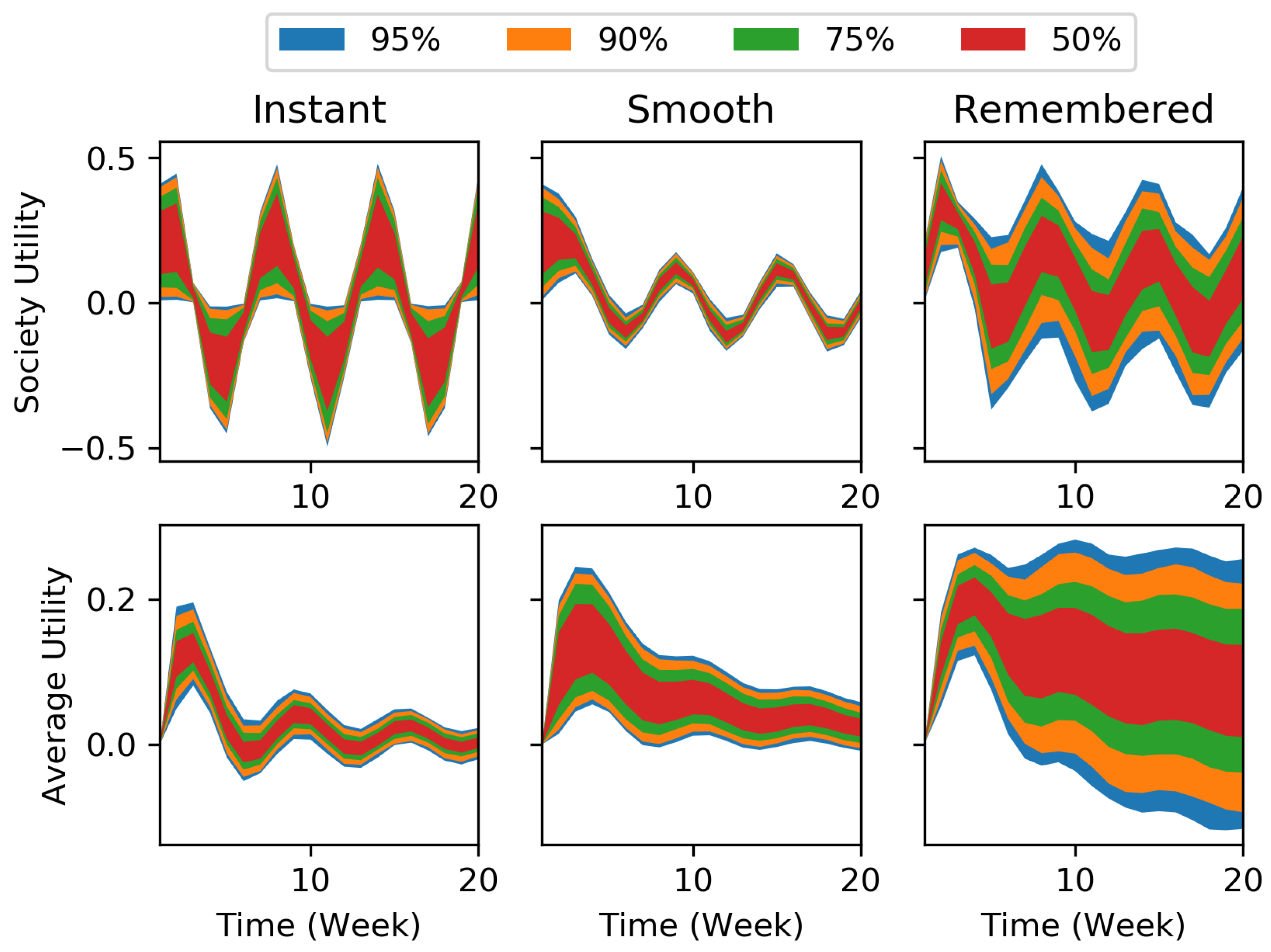

2. Peak–End Rule in a Theoretical Setting

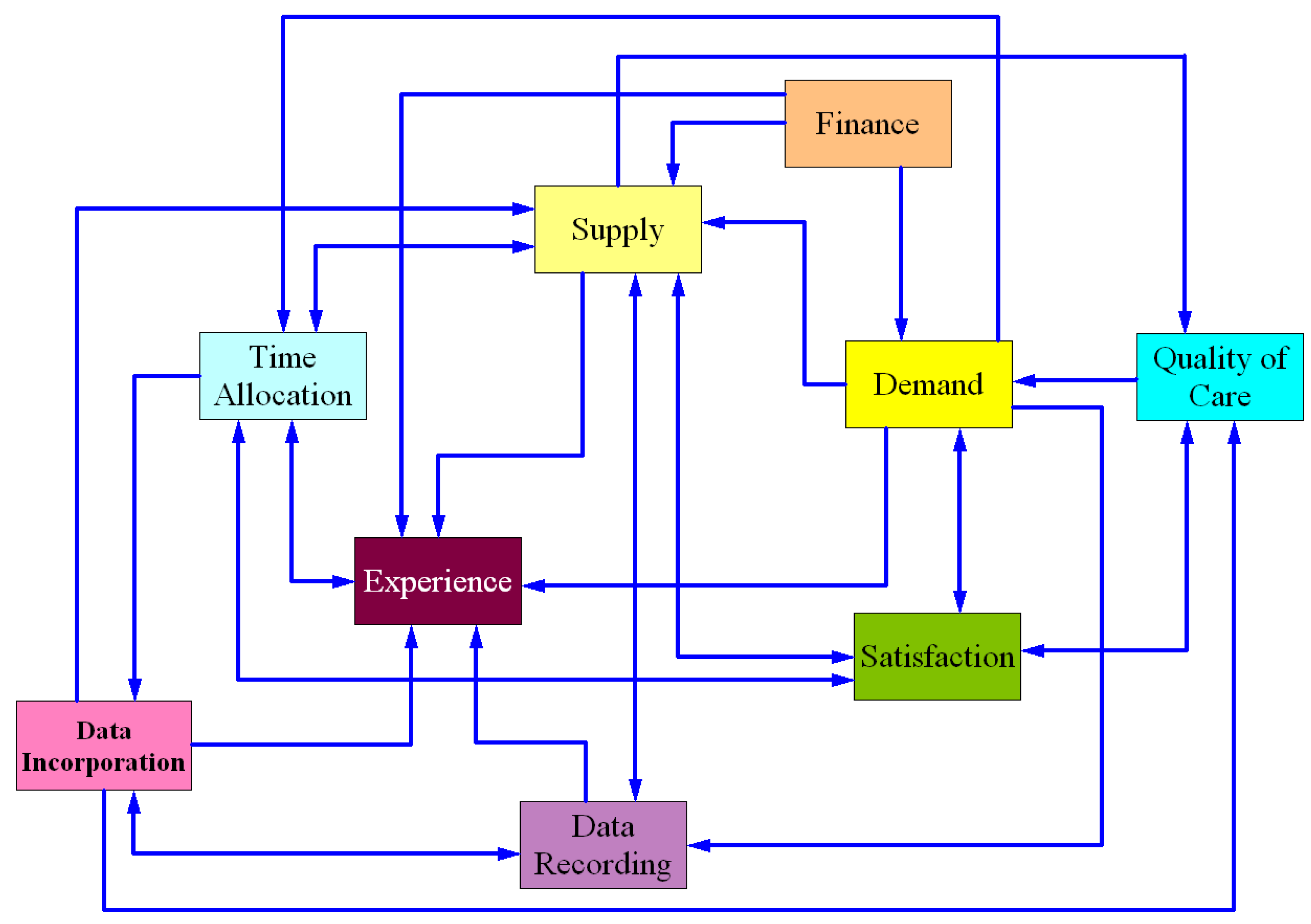

3. Peak–End Value in an Electronic Health Records (EHR) Implementation Model

- Demand calculates the number of patients demanding healthcare services;

- Supply determines the healthcare service capacity of the organization;

- Finance is an accounting module calculating costs, revenues, cash balance, and other financial measures;

- Quality of care accounts for the quality of healthcare services that the organization provides;

- Satisfaction tracks changes in the satisfaction of patients and healthcare providers;

- Time allocation includes the mechanisms by which physicians allocate their time among three major tasks: medical practice, learning and making use of the electronic health record system, and leisure;

- Data recording tracks efforts made by physicians and nurses to capture and record digital medical data;

- Data incorporation represents the level of activities of physicians and nurses in incorporating medical health records in actual medical practice;

- Experience shows the skill and experience of physicians and nurses in working with the EHR system.

- BASE is the payment system used in the default setting of the model. In this case, physicians are reimbursed based on a capitated payment system.

- FLEXPAY is a payment system similar to the BASE case, but it also includes an additional bonus for physicians if their satisfaction declines.

- FIXPAY is a different payment system based on a constant salary. In addition, physicians receive an extra bonus if their satisfaction declines.

- FIXPAY2 is also based on a constant salary, but one that is 20% higher than in the FIXPAY case. An additional bonus is paid to physicians if higher quality of care is observed.

- physician satisfaction from patient satisfaction,

- physician satisfaction from leisure,

- physician satisfaction from income,

- physician satisfaction from quality of care,

- patient satisfaction from quality of care,

- patient satisfaction from wait time, and

- patient satisfaction from visit time.

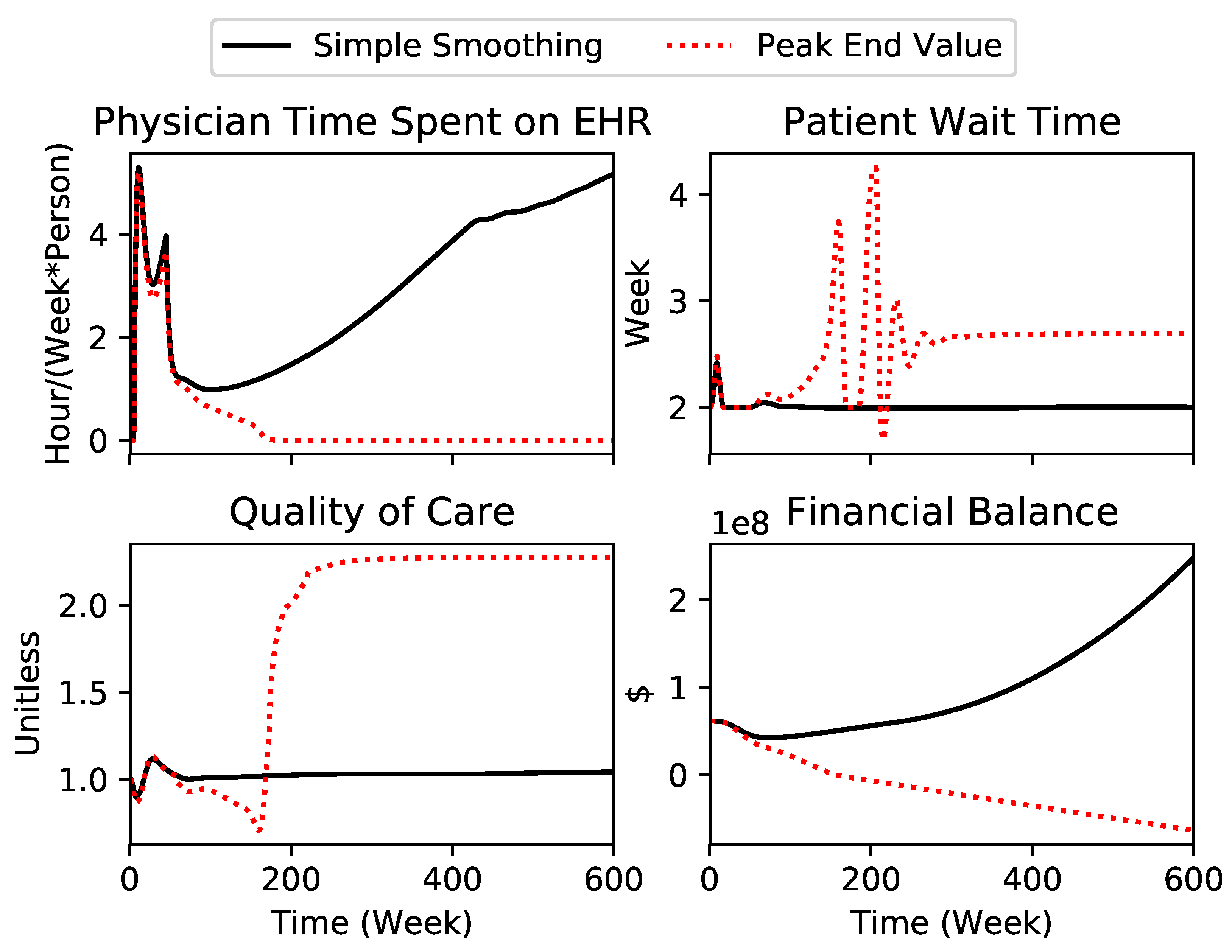

3.1. Number of Runs with Significant Difference in Numerical Results between the Two Formulations

3.2. Number of Runs with Improved Performance in All Four Measures for Each Formulation

3.3. Number of Runs with Similar Policy Implications for Both Formulations

4. Discussion

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Conflicts of Interest

Abbreviation

| EHR: | Electronic Health Records |

Appendix A. Equations of the Peak–End Module

Appendix B. EHR Model’s Parameter Variation Range

References

- Kahneman, D.; Wakker, P.P.; Sarin, R. Back to Bentham? Explorations of Experienced Utility. Q. J. Econ. 1997, 112, 375–405.

- Kahneman, D. Experienced utility and objective happiness: A moment-based approach. Psychol. Econ. Decis. 2003, 1, 187–208.

- Kahneman, D.; Thaler, R.H. Anomalies: Utility Maximization and Experienced Utility. J. Econ. Perspect. 2006, 20, 221–234.

- Samuelson, P.A. Consumption Theory in Terms of Revealed Preference. Economica 1948, 15, 243–253.

- Fredrickson, B.L.; Kahneman, D. Duration neglect in retrospective evaluations of affective episodes. J. Personal. Soc. Psychol. 1993, 65, 45.

- Kahneman, D.; Fredrickson, B.L.; Schreiber, C.A.; Redelmeier, D.A. When More Pain Is Preferred to Less: Adding a Better End. Psychol. Sci. 1993, 4, 401–405.

- Redelmeier, D.A.; Kahneman, D. Patients’ memories of painful medical treatments: Real-time and retrospective evaluations of two minimally invasive procedures. Pain 1996, 66, 3–8.

- Forrester, J.W. Market Growth as Influenced by Capital Investment. Ind. Manag. Rev. 1968, 9, 83.

- Gigerenzer, G.; Gaissmaier, W. Heuristic Decision Making. Annu. Rev. Psychol. 2011, 62, 451–482.

- Evans, J.S.B.T. Dual-Processing Accounts of Reasoning, Judgment, and Social Cognition. Annu. Rev. Psychol. 2008, 59, 255–278.

- Stone, A.A.; Broderick, J.E.; Kaell, A.T.; DelesPaul, P.A.E.G.; Porter, L.E. Does the peak-end phenomenon observed in laboratory pain studies apply to real-world pain in rheumatoid arthritics? J. Pain 2000, 1, 212–217.

- Langer, T.; Sarin, R.; Weber, M. The retrospective evaluation of payment sequences: Duration neglect and peak-and-end effects. J. Econ. Behav. Organ. 2005, 58, 157–175.

- Clark, A.E.; Georgellis, Y. Kahneman meets the quitters: Peak-end behaviour in the labour market. In Proceedings of the BHPS-2007 Conference, Colchester, UK, 5–7 July 2007.

- Langarudi, S.P.; Strong, D.M.; Saeed, K.; Johnson, S.A.; Tulu, B.; Trudel, J.; Volkoff, O.; Pelletier, L.R.; Lawrence, G.; Bar-On, I. Dynamics of EHR Implementations. In Proceedings of the 32nd International Conference of the System Dynamics Society, Delft, The Netherlands, 20–24 July 2014; p. 30.

- Morrison, J.B.; Oliva, R. Integration of Behavioral and Operational Elements Through System Dynamics. In The Handbook of Behavioral Operations; Wiley: New York, NY, USA, 2017.

- Tversky, A.; Kahneman, D. Judgment under Uncertainty: Heuristics and Biases. Science 1974, 185, 1124–1131.

- Kahneman, D.; Slovic, P.; Tversky, A. Judgment under Uncertainty: Heuristics and Biases; Cambridge University Press: New York, NY, USA, 1982.

- Jacowitz, K.E.; Kahneman, D. Measures of Anchoring in Estimation Tasks. Personal. Soc. Psychol. Bull. 1995, 21, 1161–1166.

- Carmon, Z.; Kahneman, D. The Experienced Utility of Queuing: Real Time Affect and Retrospective Evaluations of Simulated Queues; Duke University: Durham, NC, USA, 1996.

- Kemp, S.; Burt, C.D.B.; Furneaux, L. A test of the peak-end rule with extended autobiographical events. Mem. Cogn. 2008, 36, 132–138.

- Miron-Shatz, T. Evaluating multiepisode events: Boundary conditions for the peak-end rule. Emotion 2009, 9, 206–213.

- Bahaddin, B.; Weinberg, S.; Luna-Reyes, L.; Andersen, D. Simulating Lifetime Saving Decisions: The Behavioral Economics of Countervailing Cognitive Biases. In Proceedings of the 35th International Conference of the System Dynamics Society, Cambridge, MA, USA, 16–20 July 2017; p. 21.

- Brunswik, E. Perception and the Representative Design of Psychological Experiments, 2nd ed.; University of California Press: Berkeley, CA, USA, 1956.

| 1 | Note that the customers’ perception of satisfaction (utility) is not discussed explicitly in the market growth model. In the original model, x is the delivery delay, and a separate variable represents the perceived delivery delay. The latter is then used directly to calculate demand. In other words, the mechanism through which customers gain utility from changes in delivery time, and subsequently adjust their demand, is encapsulated in the formulation of the perceived delivery delay. To make the utility consideration explicit, we refer to this formulation as “utility perception”. |

| 2 | A commonly used rule states that the simulation time step must be smaller than 25% of the smallest time constant in the model, both to avoid integration errors and to achieve reasonable precision in the simulation. As a result, the ratio of the time step to any time constant in the model will always be smaller than 0.25. |

| 3 | Recent advancements in the field of behavioral sciences suggest a few variants for decision heuristics as presented by Gigerenzer and Gaissmaier [9]. For example, dual-process theories provide an explanation for how individuals make decisions and how such processes could lead to a bias in decision-making [10]. Due to limited resources, we were unable to examine all these alternatives in a system dynamics setting. However, the interested reader is encouraged to further explore this research area. |

| 4 | We also applied other forms of test input (including pink noise) to the model. The results of these alternative tests are not reported here, but their implications are the same as those presented. |

| 5 | The default random seed of the Vensim model was 1; to produce the alternative experiment, a random seed of 123 was used. |

| 6 | Note that the current analysis assumes no interactions between the agents, although we believe that the agent interactions could lead to more extreme instances, thus augmenting the peak–end manifestation even further (e.g., through word-of-mouth). |
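The time-step rule in note 2 and the idea of a perceived quantity in note 1 can be illustrated with a minimal sketch. It assumes that perception is modeled as first-order exponential smoothing integrated with Euler's method, which is a common system dynamics formulation but is not confirmed by the notes themselves. The function names `check_time_step` and `smooth_perception`, and all parameter names, are hypothetical and chosen only for illustration.

```python
from typing import Iterable, List


def check_time_step(dt: float, time_constants: Iterable[float],
                    max_ratio: float = 0.25) -> bool:
    """Return True if dt is smaller than max_ratio times the smallest time constant.

    max_ratio = 0.25 mirrors the 25% rule of thumb stated in note 2.
    """
    smallest = min(time_constants)
    return dt < max_ratio * smallest


def smooth_perception(inputs: Iterable[float], dt: float, tau: float,
                      initial: float) -> List[float]:
    """First-order exponential smoothing with Euler integration (assumed form).

    Each step moves the perceived value toward the actual input by the
    fraction dt / tau of the current gap.
    """
    if not check_time_step(dt, [tau]):
        raise ValueError("dt must be smaller than 25% of the time constant")
    perceived = initial
    trajectory = []
    for x in inputs:
        trajectory.append(perceived)           # value at the start of the step
        perceived += dt * (x - perceived) / tau  # Euler update
    return trajectory


# Hypothetical usage: a constant actual delay of 10 perceived from an initial 0.
path = smooth_perception([10.0] * 4, dt=0.25, tau=2.0, initial=0.0)
```

Because the ratio `dt / tau` stays below 0.25, each update closes less than a quarter of the gap between the perceived and actual values, so the perceived value approaches the input gradually without overshooting.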

| Criterion | BASE | FLEXPAY | FIXPAY | FIXPAY2 |
|---|---|---|---|---|
| | 68.1% | 65.2% | 61.9% | 89.9% |
| | 13.3% | 13.6% | 12.7% | 62.6% |
| | 16.6% | 10.9% | 8.7% | 48.0% |
| 1 h | 9.1% | 11.4% | 9.7% | 33.1% |

| Utility Perception Method | FLEXPAY | FIXPAY | FIXPAY2 |
|---|---|---|---|
| Simple Smoothing Model | 28.9% | 23.4% | 41.3% |
| Peak–End Value Model | 33.6% | 31.9% | 6.9% |

| Payment System | FLEXPAY | FIXPAY | FIXPAY2 |
|---|---|---|---|
| Similarity rate | 55.5% | 57.1% | 31.6% |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Langarudi, S.P.; Bar-On, I. Utility Perception in System Dynamics Models. Systems 2018, 6, 37. https://doi.org/10.3390/systems6040037
