More is not Always Better: The Relation between Item Response and Item Response Time in Raven’s Matrices

Abstract

1. Introduction

1.1. The Relation of Item Response Time to Item Responses

1.2. The Role of Response Time in Solving Reasoning Items

1.3. Research Goal and Hypotheses

2. Materials and Methods

2.1. Sample

2.2. Instruments

2.3. Statistical Analyses

2.4. Data Preparation

3. Results

3.1. Response Time Effect (Hypothesis 1)

3.2. Response Time Effect Moderated by Person (Hypothesis 2)

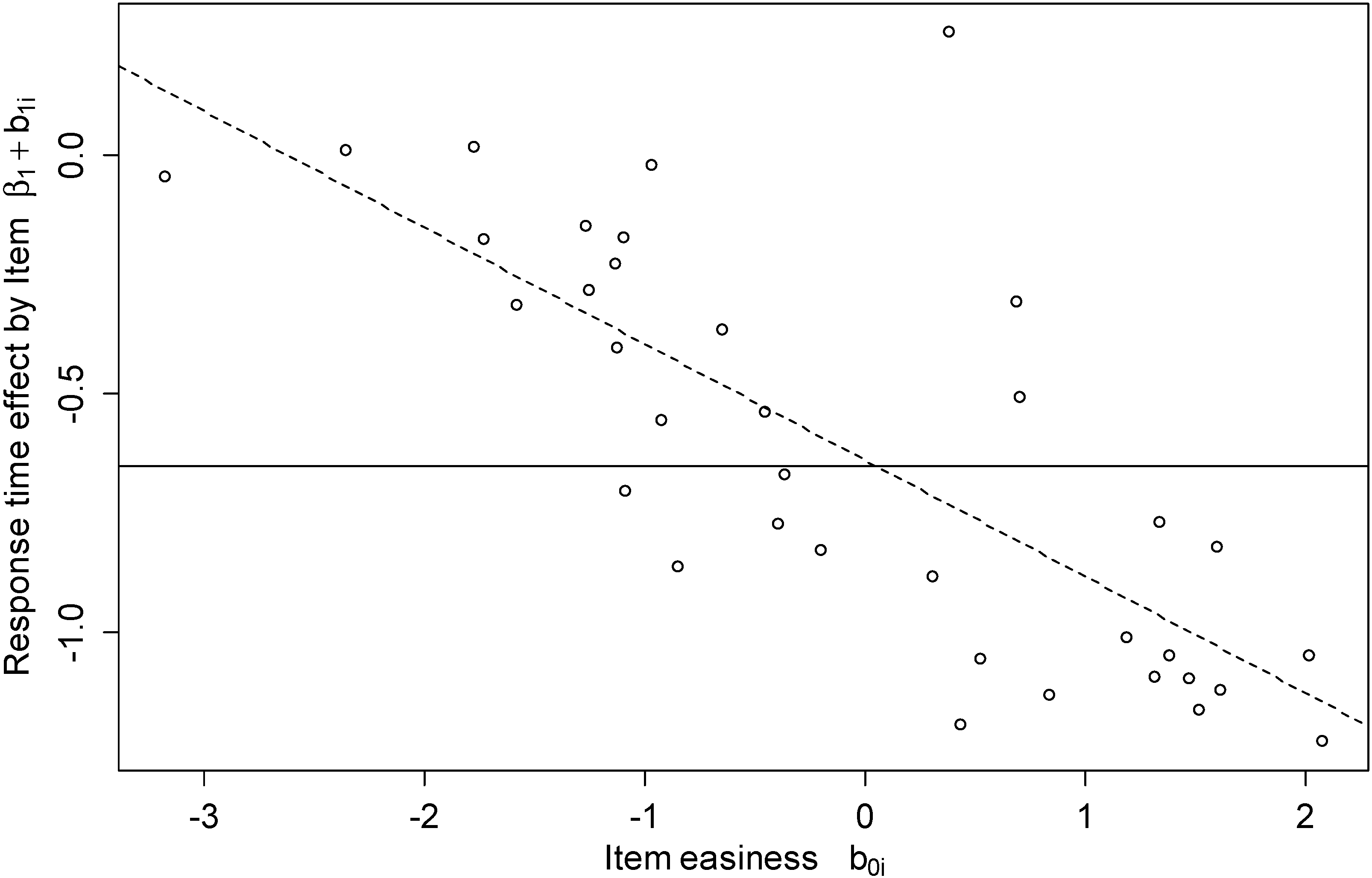

3.3. Response Time Effect Moderated by Item (Hypothesis 3)

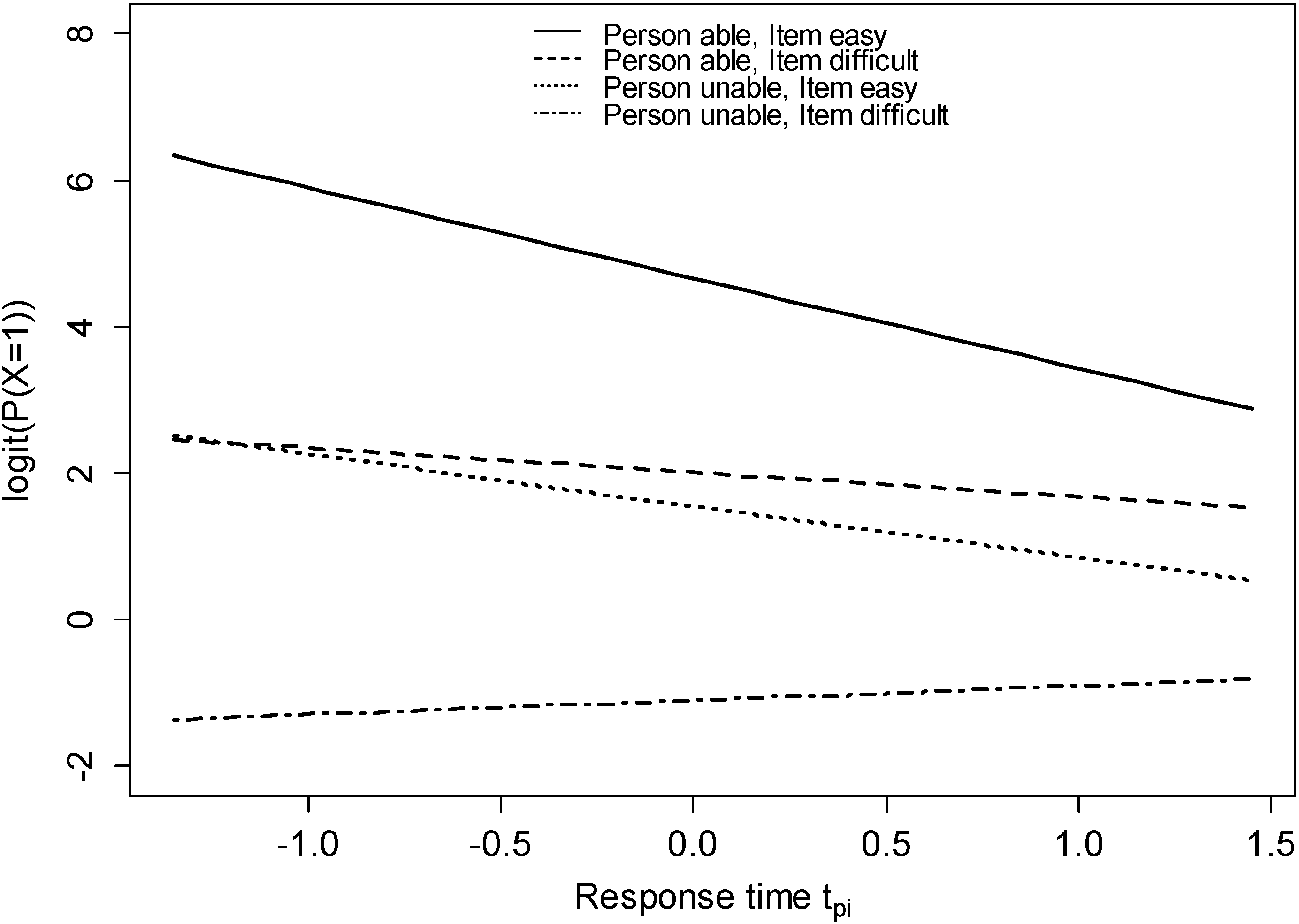

3.4. Response Time Effect Moderated by Item and Person (Integrating Hypotheses 2 and 3)

3.5. Response Time Effect Moderated by Items’ Number of Rules (Hypothesis 4)

3.6. Exploratory Analysis: Response Time Effect Moderated by Error Response Types

4. Discussion

4.1. Negative Response Time Effect

4.2. Limitations

5. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

© 2015 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/4.0/).

Goldhammer, F.; Naumann, J.; Greiff, S. More is not Always Better: The Relation between Item Response and Item Response Time in Raven’s Matrices. J. Intell. 2015, 3, 21-40. https://doi.org/10.3390/jintelligence3010021