Abstract

In human–robot interaction (HRI), sharing emotions between the human and robot is one of the most important elements. However, market trends suggest that being able to perform productive tasks is more important than being able to express emotions in order for robots to be more accepted by society. In this study, we introduce a method of conveying emotions through a robot arm while it simultaneously executes main tasks. This method utilizes the null space control scheme to exploit the kinematic redundancy of a robot manipulator. In addition, the concept of manipulability ellipsoid is used to maximize the motion in the kinematic redundancy. The “Nextage-Open” robot was used to implement the proposed method, and HRI was recorded on video. Using these videos, a questionnaire with Pleasure–Arousal–Dominance (PAD) scale was conducted via the internet to evaluate people’s impressions of the robot’s emotions. The results suggested that even when industrial machines perform emotional behaviors within the safety standards set by the ISO/TS 15066, it is difficult to provide enough variety for each emotion to be perceived differently. However, people’s reactions to the unclear movements yielded useful and interesting results, showing the complementary roles of motion features, interaction content, prejudice toward robots, and facial expressions in understanding emotion.

1. Introduction

As social robots become more integrated into daily life, an important question arises: how can robots become more acceptable to people? In the 2017 Pew Research Center survey “Automation in Everyday Life”, 4135 people in the United States were asked, “Are you interested in using robots in your daily life, including care-giving?” Of the respondents, 64% had negative perceptions of robots, stating that they “can’t read robots’ mood” and that robots are “simply scary” [1]. One approach being explored to gain user acceptance is to incorporate expressive movements. To overcome the negative reputation of robots, researchers have been implementing emotions in robots, which are expressed toward the human user [2,3,4,5]. This can be accomplished by displaying affective content on a screen, through expressive sound, and through movements. One of the main purposes of having robots express emotions is to give social value to interactions between people and robots. In other words, when people empathize and socialize with robots, the robots are no longer recognized as simply a “tool” but as something social, which can help to ease feelings of aversion toward and dislike of robots. We aim to change the perception of robots as mere tools through emotional expression that draws out human empathy in a natural way. This should significantly impact the way robots are used in society in the future. For example, it may prevent robots used in public, particularly in the service industry, from being harassed by passersby [6].

If a robot, such as a robot arm, does not have a display or voice function, it may still be able to communicate through motion. Researchers have been trying to convey certain emotions through robot motions (see Section 2.2). For instance, Knight used a small robot that has only a few degrees of freedom [2], and Beck et al. used a humanoid that utilizes human gesture information to generate expressive robot motions [3]. In these studies, the general tactic for differentiating emotions is to adjust pre-existing gestures/postures and/or the velocity of the motions. These attempts have successfully conveyed arousal and mood through robot motion.

However, most research on emotional robots treats emotion conveyance as the main task, so the robots cannot perform any other productive tasks such as manipulating objects. People’s expectations of robots are becoming clearer, and the impression of usefulness is generally an important element in the public perception of robots. In reality, household robots such as Vector and Kuri, which exist only to share emotions, were forced to withdraw from the market even though their makers were viewed as promising [7]. This trend implies that for a robot to be accepted, it should have both a productive function and an element that appeals to human sensibilities.

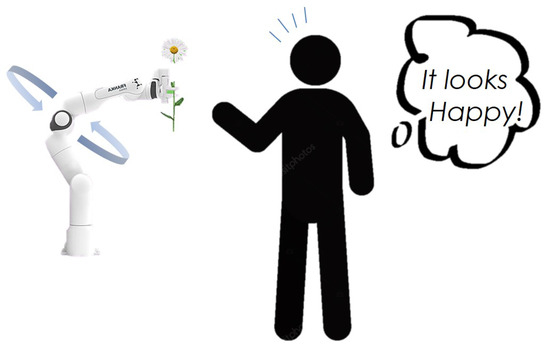

In this research, we develop a motion system that performs tasks while expressing emotions using the kinematic redundancy of robot arms. The underlying idea is shown in Figure 1. A system for performing emotional actions using redundant degrees of freedom while performing industrial tasks has not yet been demonstrated in the social robot field. Although Claret et al. have shown an example of a humanoid (Pepper) that utilizes redundant degrees of freedom to express emotion, its main task was a waving gesture and emotion conveyance, while productive tasks were not implemented [4]. In contrast, the target of our study is a robot arm that is specialized in productive tasks. Furthermore, we discuss the concerns that arise when work-oriented robots operate in the real world while expressing emotions through their motions.

Figure 1.

The main idea is the coexistence of productive tasks and emotional expression behavior. The robot’s mood is communicated to the observer through emotional movement within the range of the redundant degrees of freedom so as not to affect the main (productive) task at hand. The robot in this figure is the Panda arm from Franka Emika [8].

2. Related Work

2.1. Human-Robot-Interaction (HRI) and Social Robotics

Social skills, including the ability to express emotions, are important for robots to become more productive members of society. If robots are to become commonplace and act autonomously in public spaces and homes in the future, human cooperation will be essential [6]. That cooperation may take the form of personal human help or robot-friendly social rules. To elicit this human cooperation, robots need to be socially accepted by humans. As mentioned in [1], robots used in everyday environments are still not considered pleasant and tend to be considered creepy because their emotions are unreadable. In fact, Kulic and Croft point out that robot motions may create anxiety in users, and that there is a need to design robot motions that ease the interaction between the human and the robot [9]. Therefore, research on having robots express emotions is important, and through this research, we can gain more knowledge about how humans perceive the emotions of robots.

In HRI research, robot control and human perception have been studied in two research directions: physical (pHRI) and social (sHRI). Our study falls under sHRI. Much of pHRI research is focused on robots, developing control frameworks that treat humans as extraneous noise, external forces, or another “robot” [10,11,12]. In contrast, sHRI research is focused on human perception. sHRI without physical contact has often been investigated through specially designed experiments. Researchers have been trying to analyze mental states, comfort, sense of safety, sociability, and general perceptions of users. Such mental measures are often acquired using post-experimental questionnaires [13,14,15,16].

Weiss et al. [13] used the HRP-2 humanoid to investigate whether the general attitude of people who are skeptical toward robots changed after interacting with the robot. Our study uses the Negative Attitude Toward Robot Scale (see Section 6.1.3) used in their research. Kamide et al. [15] aimed to discover the basic factors for determining the “Anshin” of humanoids from the viewpoint of potential users. “Anshin” is the Japanese concept of subjective well-being toward life with artificial products. According to the factor analysis in their research, the five factors of Anshin are comfort, performance, peace of mind, controllability, and robot-likeness. Existing sHRI studies have also attempted to give robots emotional intelligence and investigate the human perception of emotional robots as mentioned in the following section.

2.2. Expressive Motion by Robots

Emotional expression through movements in a robotic arm has two important advantages. The first is that there is no cost for additional devices for emotional expression. Certainly, retrofitted devices such as displays, voice devices, and LEDs could be used to communicate the mood and internal state of the robot to the outside world. However, these exposed devices are tricky to adapt to a design that can withstand the harsh general environment, and for public facilities where a more robust design is required, the availability of a simple and plain robot arm is desirable. Emotional expression through movement utilizes the robot’s innate kinematic degrees of freedom, thus keeping the design very simple and minimal. The second important aspect is the ability to emphasize presence. As often mentioned in studies of telepresence robots [17,18], presence plays an important role in gaining respect from humans. A flimsy impression diminishes its importance to the individual, causing him or her to lose interest or to treat it thoughtlessly. Appealing to the robot’s internal information not only through displays and voice, but also through actual actions, can elicit human empathy for the robot in a natural way and provide social value through interaction.

Expressing emotions through body motions has been studied in the fields of animation and computer graphics for decades, although it was not until the beginning of the 21st century that it was studied in robotics. Kulic and Croft [9] presented the first study of this kind, in which they found that robot motions may cause users to feel anxious. They also pointed out the need to design robot motions so that the interaction between the user and the robot is more comfortable. Saerbeck and Bartneck [19] proposed a method that effectively transmits different emotional states to the user by varying the velocity and acceleration of the robot. The proposed solution was implemented on a Roomba robot and further shown to be extendable to other robots.

Beck et al. proposed a method for generating emotional expressions in robots by interpolating between robot configurations which are associated with specific emotional expressions [3]. The emotions are selected from the Circumplex model of emotions [20]. This model plays an important role in mathematically defining emotions as points in a two-dimensional space, allowing each point to correspond to different emotions. The Pleasure–Arousal–Dominance (PAD) model [21] is also widely used as a tool to express emotions numerically (see Section 5.2).

An approach has also been taken to add an offset to the known gesture behavior in terms of position and its velocity. For instance, the method proposed by Lim et al. [5] varies the final position and velocity of the base gesture on the basis of the intensity and type of emotion the humanoid robot wishes to convey. In their study, the amount of offset is varied on the basis of the features extracted from the speech signal. Similarly, Nakagawa et al.’s study [22] showed a correspondence between emotion and offset based on the Circumplex model. By adding this offset to the intermediate configuration and velocity of a given trajectory, emotional nuances are added to the trajectory. Thus, the trajectory can be parameterized in two dimensions to express the intended emotion.

Claret et al. proposed using the extra degrees of freedom left over from the robot’s kinematic redundancy to make the robot express emotions [4]. This concept is based on a technique called null space control [23] (see Section 4.2), which uses the extra degrees of freedom to perform an additional task as a sub-task while the robot is performing its main task. This approach was applied to the humanoid robot Pepper, which was given the gesture of waving as its main task and an emotional movement component as a sub-task. With this method, the robot was able to express emotions while simultaneously performing the main task of hand waving.

Despite the amount of research on emotional motion presented by the robotics community, the available approaches only deal with emotional movement as the main task. These approaches limit the use of robots to situations where the robot is only required to perform this single task. This forces the robotic system to switch frequently between a productive task mode and an emotional expression mode. A smooth transition between those modes might be difficult, since such task switching looks unnatural from a human point of view and could extend the time needed to complete the productive task. In contrast, our study examines emotional expression by manipulators while they perform a productive task. Like the study of Claret et al. [4], this method utilizes null space control to exploit redundant degrees of freedom (DoFs). Furthermore, it applies the concept of the manipulability ellipsoid to the null space to maximize emotional expression in the manipulator’s null space. The coexistence of a productive task and emotional motion is not analyzed in the study of Claret et al. In addition, the method for effectively regulating the emotional movements expressed within the redundant degrees of freedom using the manipulability ellipsoid is newly proposed.

2.3. Exploiting Kinematic Redundancy

Liégeois [24] and Klein and Huang [25] pointed out that if the number of task-required dimensions is lower than the number of robot joints, the robot is considered redundant, and it is possible to program multiple tasks, regardless of whether the robot structure is redundant. The most common method of exploiting robot redundancy uses the null space of the robot’s Jacobian. In this method, the potential function is projected onto the kernel of the main task. In other words, only the space of joint speeds that can be executed without affecting the main task is made available to the sub-task [24,26]. In this approach, the first task is guaranteed to be executed, and the second task is executed only if sufficient degrees of freedom remain in the system, thus avoiding inter-task conflicts.

There are several examples of implementing secondary tasks using the Jacobian null space approach. For instance, Liégeois implemented joint limit avoidance [24], and Nemec and Zlajpah demonstrated singularity avoidance [27]. In addition, Maciejewski and Klein developed a self-collision avoidance system [28]. Hollerbach and Suh implemented torque minimization [29], and Baerlocher and Boulic demonstrated the simultaneous positioning of multiple end-effectors [30]. In a subsequent study, they demonstrated center of mass positioning for humanoids [31]. Peng and Adachi added compliant control to the null space of the 3 DoF robot manipulator [32].

On the other hand, the work of Claret et al. [4] is the only example of a study in which emotional expression was set as a sub-task.

3. Contribution

The contributions of this work are as follows. First, we introduce a novel emotion expression method that allows robotic manipulators to conduct productive tasks and emotional expression simultaneously. This method uses null space control to exploit the kinematic redundancy of the robotic arm. Furthermore, we propose a method that applies the manipulability ellipsoid to the null space to maximize motion in the null space effectively. By adjusting the velocity, jerkiness, and spatial extent of the null space motion, this method enables any redundant manipulator to show its mood while executing the main task at its end-effector. This study is distinct among studies of emotional expression by motion because this method sets a productive task as the first priority and sets emotional motion as a sub-task. This method explores whether robots can be better accepted by the general public by showing both the productive skills and the social skills that are important in human society.

The second contribution is to provide actual examples of emotional expression within the speed limit stated in the guidelines for industrial robots performing cooperative tasks issued by the International Organization for Standardization (ISO/TS 15066 [33]). We discuss the potential problems of emotional expression by robots that can perform productive tasks, such as industrial machines. These examples highlight an important issue that arises when a powerful robot expresses emotions close to people under current rules: the robot system needs to follow strict safety measures, which restrict expressive motion elements such as velocity, jerkiness, and spatial extent. On the other hand, the questionnaire indicates that people still make sense of robot emotion on the basis of the context of the interaction, gaze/facial movements, and prejudices against robots, even when the emotional motion elements are unclear. This points to an interesting possibility of emotion conveyance by heavy/powerful robots through the control of elements other than the motion elements.

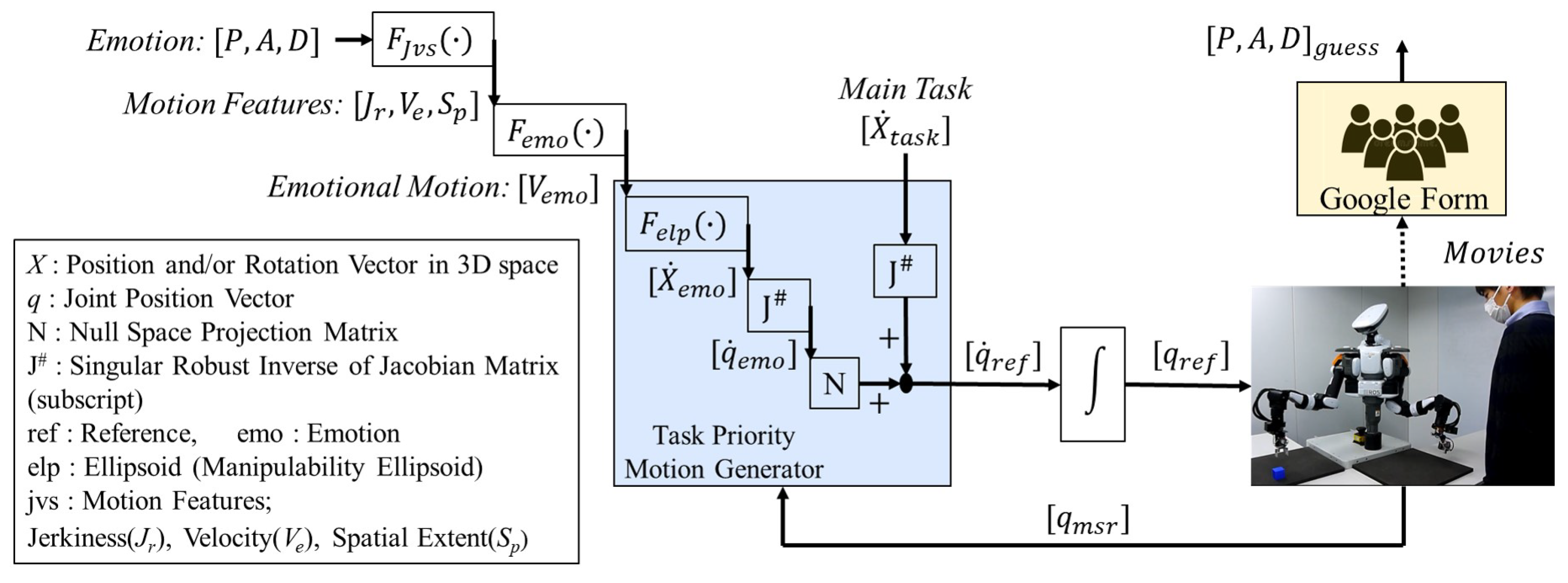

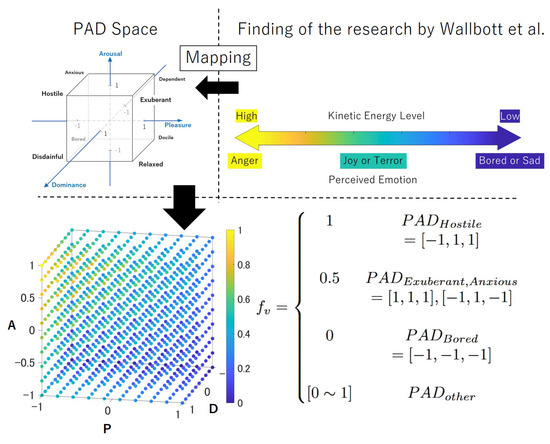

Figure 2 provides an outline of our research. Section 4 describes the kinematics model for redundant robot manipulators. Section 5 introduces the proposed emotion conveyance method. Section 6 describes the implementation of the method on a real robot, questionnaire contents, and experiment. Section 7 and Section 8 present the experimental results and the discussion, respectively. Section 9 concludes this paper.

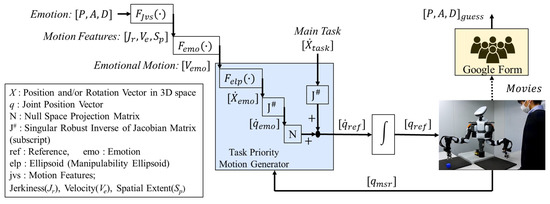

Figure 2.

Emotional information is given to the robot as PAD parameters, which are converted into a set of motion feature parameters with reference to previous studies in cognitive engineering. Then, depending on the values of the motion features, the emotional motion in the null space is generated using its manipulability ellipsoid. This emotional motion was performed by the robot through null space control and recorded on video for an online survey.

4. Robot Manipulator with Kinematic Redundancy

The definition of a redundant manipulator and its kinematics are described here. A redundant manipulator is defined as a manipulator that has more degrees of freedom (DoFs) than a task requires. The Jacobian is related to the DoF of the end-effector. In other words, it indicates the directions in which the end-effector can move at the joint configuration q. The maximum rank of this Jacobian, M, is equal to the DoF of the task in Cartesian space. Here, let us assume that the manipulator has N DoF and that the rank of the Jacobian is r; there are then three cases, as shown below.

- In the case of (N = M): The manipulator has exactly the number of DoF required to complete the main task at its end-effector.

- In the case of (N > M): The manipulator is redundant with degree (N − M). The manipulator can move within its redundant space without moving its end-effector, and that kinematically redundant space is often referred to as the null space in robotic motion control.

- In the case of (r < M): The manipulator is in a singular pose with degree (M − r), and its end-effector cannot move in certain directions (degenerate directions). If the Jacobian is a square matrix, this occurs when its determinant is 0. Otherwise, as for a redundant manipulator, it occurs when the determinant of the product of the Jacobian and its transpose is 0.
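The three cases above can be sketched as a small classification routine. This is only an illustration in the N, M, r notation of this section; the function name and the returned labels are hypothetical and do not come from the original implementation.

```python
def classify_redundancy(n_joints: int, task_dim: int, jacobian_rank: int) -> str:
    """Classify a manipulator pose from N (joints), M (task DoF) and r (Jacobian rank).

    Hypothetical helper for illustration; the labels are not from the paper.
    """
    if jacobian_rank < task_dim:
        # r < M: the Jacobian has lost rank, so the pose is singular.
        return f"singular (degree {task_dim - jacobian_rank})"
    if n_joints > task_dim:
        # N > M: the spare joints span a null space of dimension N - M.
        return f"redundant (degree {n_joints - task_dim})"
    # N = M with full rank: exactly enough DoF for the task.
    return "non-redundant"
```

For example, a 7-DoF arm performing a full 6-DoF pose task away from singularities falls into the redundant case with degree 1, while the same arm performing a position-only task has degree 4.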

4.1. Kinematics of Robotic Manipulators

In this study, the robot’s emotional motion was generated via null space control [23], which is closely related to kinematic quantities such as the Jacobian matrix. We therefore review the basics of manipulator kinematics in this section. First, in the forward kinematics of the robot arm, the relationship between the end-effector position and orientation X in three-dimensional space and the joint angle vector q can be expressed as X = f(q) using a nonlinear function f. The differential form of that relation can then be expressed as a linear equation with the Jacobian as the coefficient matrix.
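For reference, the differential relation cited in the text as Equation (1) has the standard form below. The overdot notation for time derivatives is an assumption of this sketch.

```latex
\dot{X} = J(q)\,\dot{q}, \qquad J(q) = \frac{\partial f(q)}{\partial q}
```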

There are two approaches to inverse kinematics with redundancy: one is to solve the equation X = f(q) using numerical methods such as the Paul method [34], and the other is to solve Equation (1). However, because the Jacobian has no ordinary inverse when it is non-square, as for a redundant manipulator, or in singular postures, the Moore–Penrose pseudo-inverse is often used.
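As a reminder, for a Jacobian with full row rank the Moore–Penrose pseudo-inverse takes the right-inverse form below, which gives the minimum-norm joint velocity for a desired end-effector velocity. The superscript + is notation assumed here.

```latex
J^{+} = J^{T}\left(J J^{T}\right)^{-1}, \qquad \dot{q} = J^{+}\,\dot{X}
```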

However, in singular postures, even pseudo-inverse matrices can deteriorate the accuracy of the computation, and they may generate discontinuous values when incorporated into a closed-loop control system. Therefore, in this study, the SR-Inverse (singularity robust inverse) was used [35].
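The SR-inverse is commonly written in the damped form below, in which a small term is added before inversion. The superscript * is notation assumed here.

```latex
J^{*} = J^{T}\left(J J^{T} + k I\right)^{-1}
```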

where I is the identity matrix and k is a very small, arbitrary positive constant. The SR-inverse avoids inverting an exactly or nearly singular matrix in singular postures and thus prevents discontinuities in the time-series calculation results.

4.2. Null Space Control

Null space control is a method of controlling robot motion in the space of joint angular velocity or joint angular accelerations in degrees of freedom that are not used in the main task [23]. There are many studies that take advantage of the kinematic redundancy of robots as mentioned in Section 2.

The null space projection matrix N can be calculated from the Jacobian.
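In its usual form, the projector is built from the Jacobian and a generalized inverse of it. The symbol J^{#} below is assumed notation that stands for whichever inverse is used (the pseudo-inverse or the SR-inverse).

```latex
N = I - J^{\#} J, \qquad J N \approx 0
```

The second relation holds exactly for the pseudo-inverse of a full-rank Jacobian and approximately for the SR-inverse with small k.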

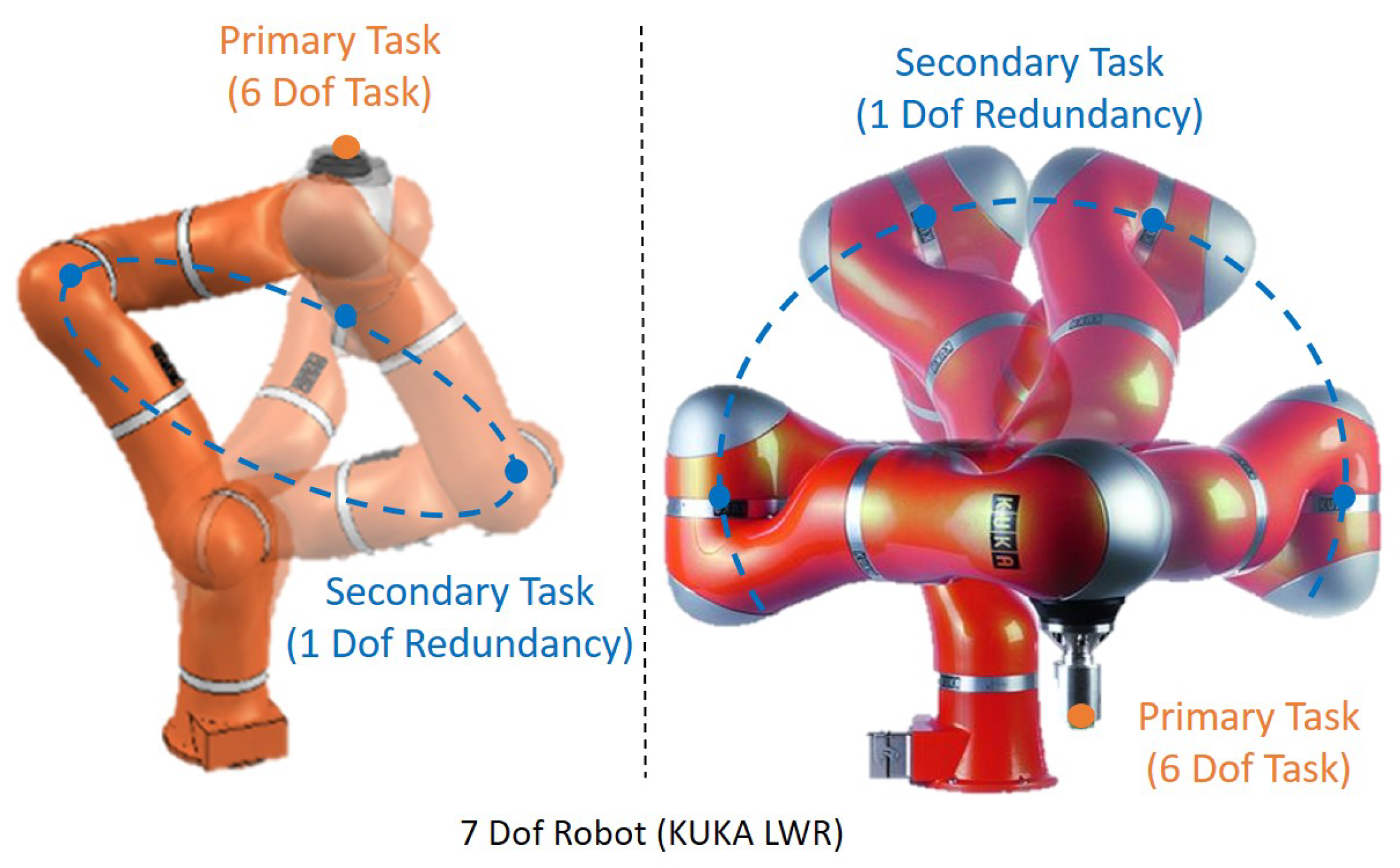

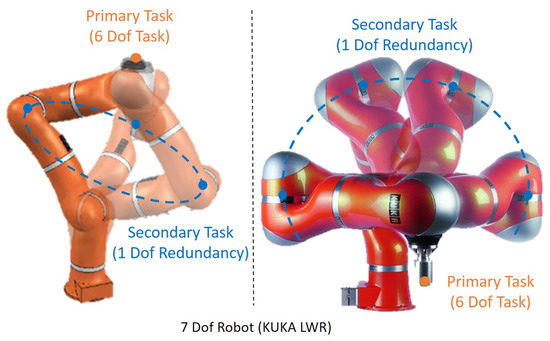

In this research, since a 7-DoF manipulator is used, at least one redundant DoF is generally ensured, as shown in Figure 3, but depending on the type of task, there may be two or more redundant DoFs. For example, if the rotation of the ball is arbitrary, the task of moving a ball along a fixed trajectory is represented by only 3 DoFs (x, y, z) for position, which means that the 7-DoF manipulator has four degrees of redundancy. In order to effectively utilize this redundancy, we devise a new method for the Jacobian that is used to calculate the null space projection matrix N.

Figure 3.

A redundant robotic manipulator can move parts of its body without affecting the position and/or posture of its end-effector. The robot in this figure is the LWR iiwa from KUKA [36].

Using this N, any additional motion can be added to the main task by projecting it in the null space. The new motion takes a form that does not affect the main task at the end-effector.
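The projection described above can be sketched on a toy example. The code below assumes a hypothetical planar 3-link arm with a 2-DoF position task (not the robot used in this study), a damped inverse with an assumed constant k, and hypothetical function names. It combines a main-task term and a projected secondary term into one joint velocity.

```python
import math


def jacobian(q, lengths=(1.0, 1.0, 1.0)):
    """2x3 position Jacobian of a planar 3-link arm (hypothetical example robot)."""
    a1, a2, a3 = q[0], q[0] + q[1], q[0] + q[1] + q[2]
    s = [lengths[0] * math.sin(a1), lengths[1] * math.sin(a2), lengths[2] * math.sin(a3)]
    c = [lengths[0] * math.cos(a1), lengths[1] * math.cos(a2), lengths[2] * math.cos(a3)]
    # Column j is the end-effector velocity caused by unit speed at joint j.
    return [[-(s[0] + s[1] + s[2]), -(s[1] + s[2]), -s[2]],
            [c[0] + c[1] + c[2], c[1] + c[2], c[2]]]


def sr_inverse(J, k=1e-6):
    """3x2 damped inverse J^T (J J^T + k I)^-1, using a closed-form 2x2 inverse."""
    a = sum(v * v for v in J[0]) + k
    b = sum(u * v for u, v in zip(J[0], J[1]))
    d = sum(v * v for v in J[1]) + k
    det = a * d - b * b
    inv = [[d / det, -b / det], [-b / det, a / det]]
    return [[J[0][i] * inv[0][col] + J[1][i] * inv[1][col] for col in range(2)]
            for i in range(3)]


def null_projector(J, J_inv):
    """3x3 projector N = I - J_inv J onto the null space of the main task."""
    return [[(1.0 if i == j else 0.0) - sum(J_inv[i][r] * J[r][j] for r in range(2))
             for j in range(3)] for i in range(3)]


def matvec(A, v):
    """Matrix-vector product for nested lists."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]


def joint_velocity(q, x_dot_task, q_dot_emotion, k=1e-6):
    """Main-task joint velocity plus the secondary motion projected into the null space."""
    J = jacobian(q)
    J_inv = sr_inverse(J, k)
    N = null_projector(J, J_inv)
    main = matvec(J_inv, x_dot_task)
    sub = matvec(N, q_dot_emotion)
    return [m + s for m, s in zip(main, sub)]
```

Calling `joint_velocity` with and without a secondary motion gives different joint velocities, while multiplying either result by the Jacobian reproduces the commanded end-effector velocity up to the small damping error.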

The final reference trajectory, which contains the main task and the emotional motion, is given in the second line of Equation (6), which includes the main task of the end-effector as one of its terms. In this research, it is important to define the emotional component effectively, because it is truncated when projected into the null space and might lose important motion features, such as the spatial extension of the motion that would convey the dominance level of the mood. To address this issue, the manipulability ellipsoid is applied in null space control, as described later in Section 5.4.

5. Emotional Conveyance

5.1. Proposed Method

Our method is for generating a joint space motion for robotic manipulators. The generated joint space motion contains both the main task at the end-effector and the emotional motion in its null space. In order to express the intended emotion correctly, the corresponding motion features jerkiness, velocity and spatial extent are adjusted. The motion features were selected on the basis of state-of-the-art studies in the cognitive engineering field. Finally, the adjusted motion features are effectively executed in the null space by using the concept of manipulability ellipsoid.

Similar to Claret et al.’s work [4], our proposed method converts emotion (PAD) into motion features. However, in contrast to previous work, we concentrate on robotic manipulators that execute productive tasks at the end-effector. While the previous related work focused on humanoids and set gestures (e.g., waving) as the main task, our method enables robots to be productive and expressive at the same time. In addition, our method of converting emotion to motion features differs from that of previous work, which converted emotion (PAD) into motion features by a simple linear formula. We use findings from previous cognitive engineering studies [37,38,39], focusing on the velocity of the motion to convey emotions. Furthermore, instead of the motion feature Gaze that is used in Claret et al.’s work, we introduce Spatial Extent to express the dominance of the emotion, since manipulators do not have eyes. The degree of spatial extent is represented using geometric entropy [40].

5.2. From Emotion (PAD) to the Motion Features

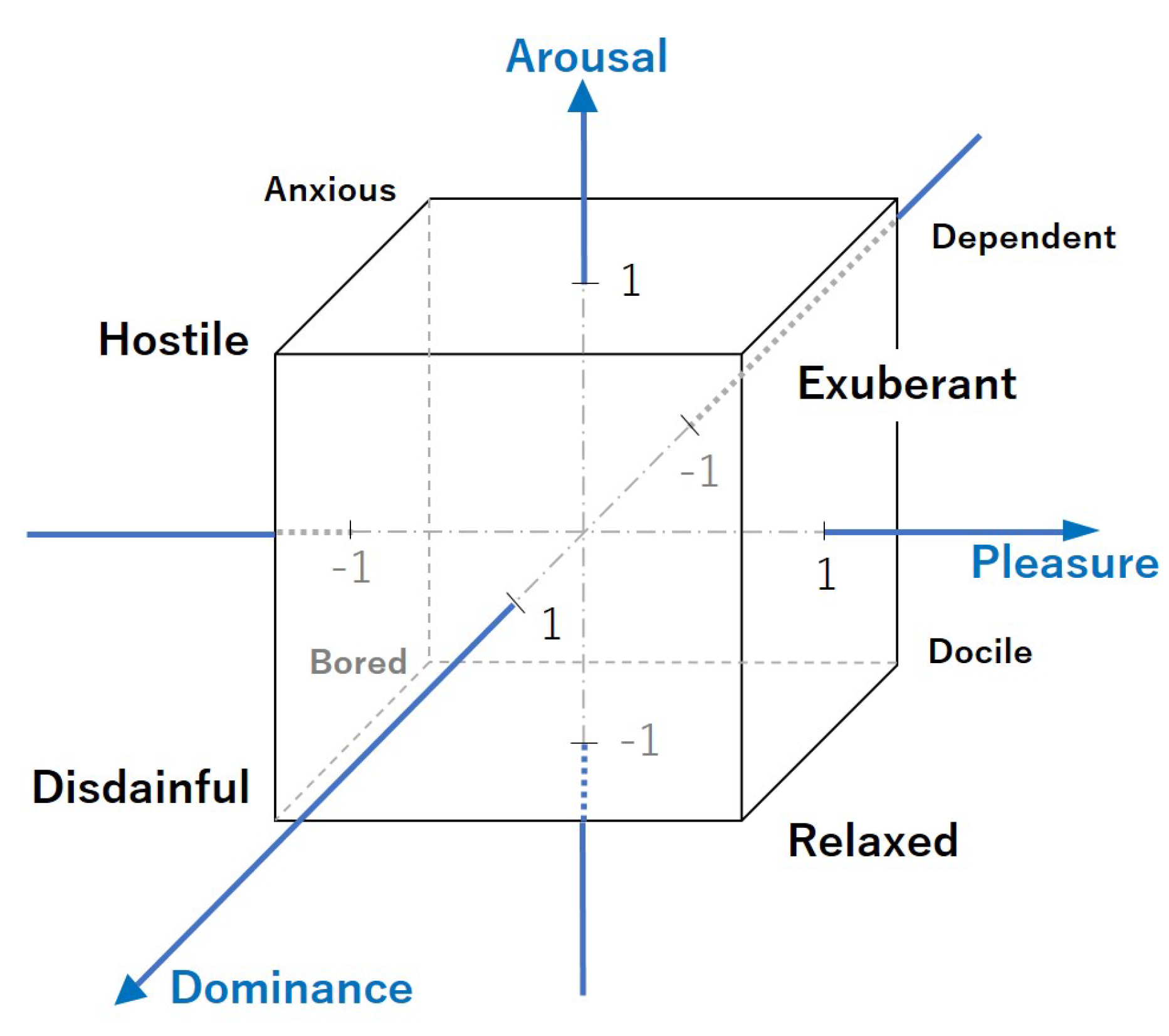

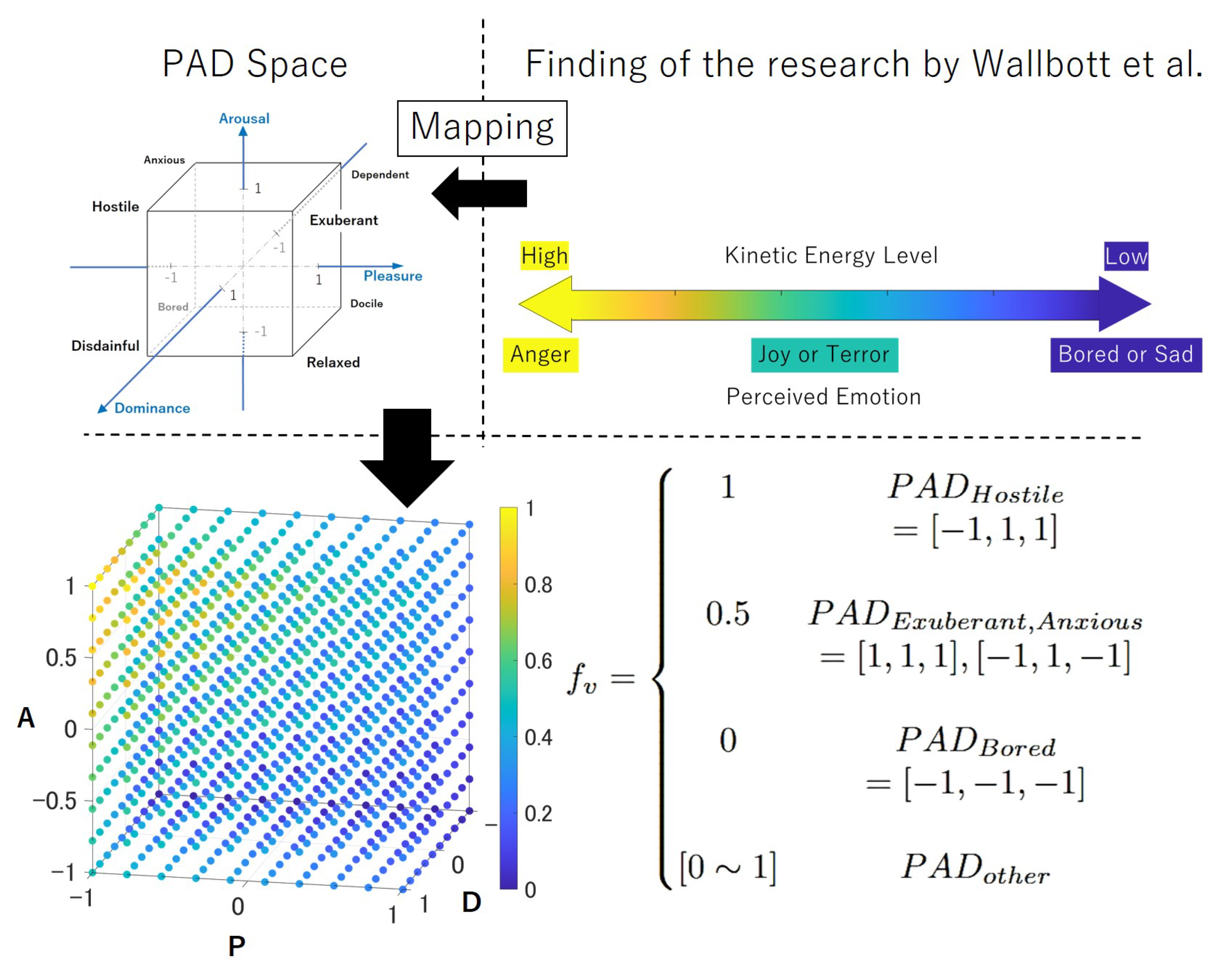

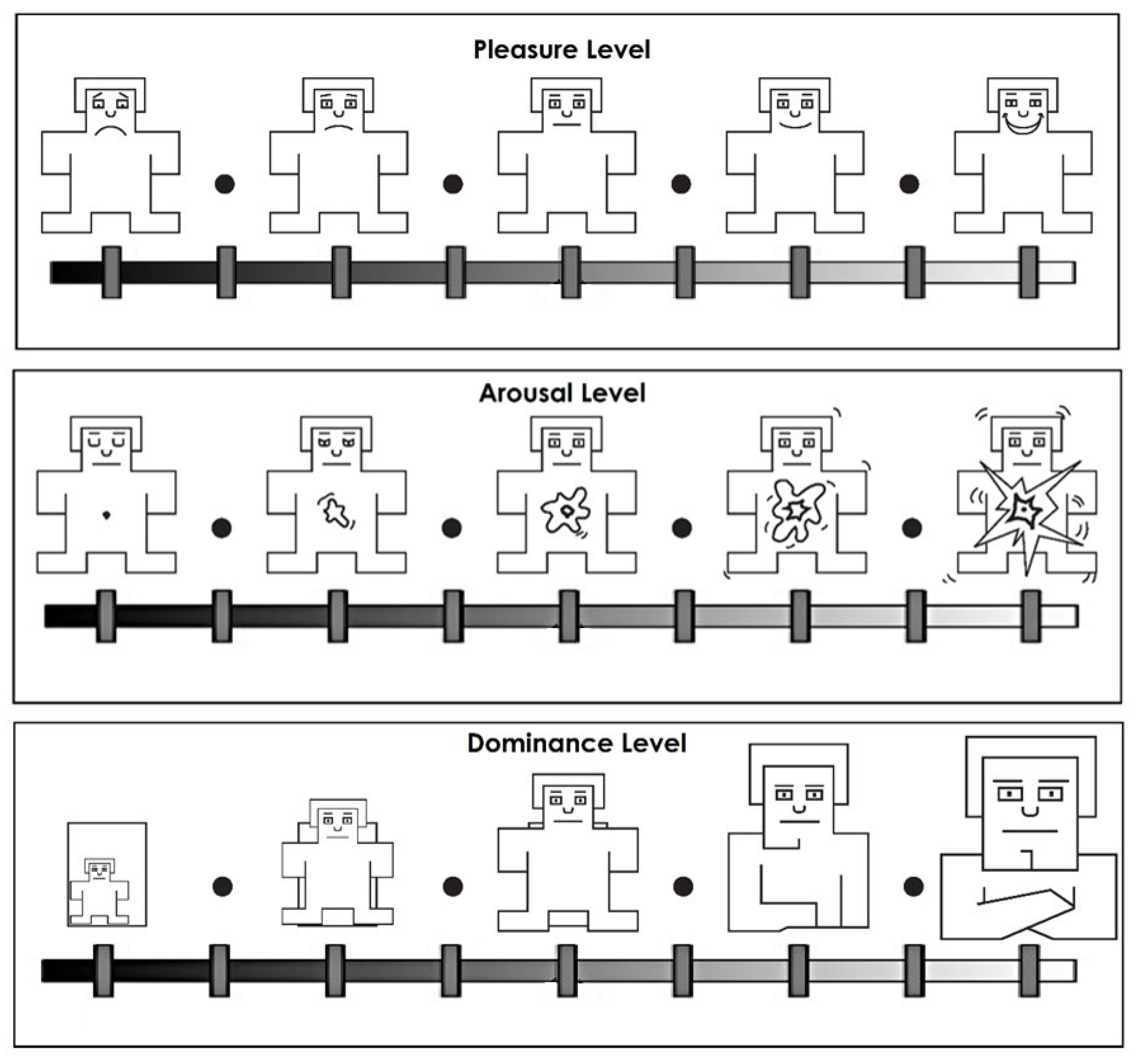

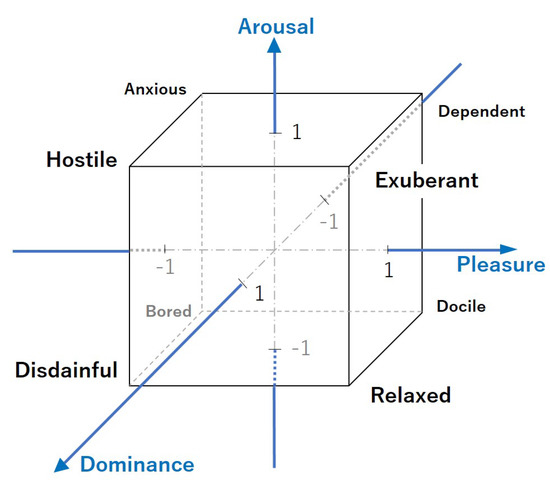

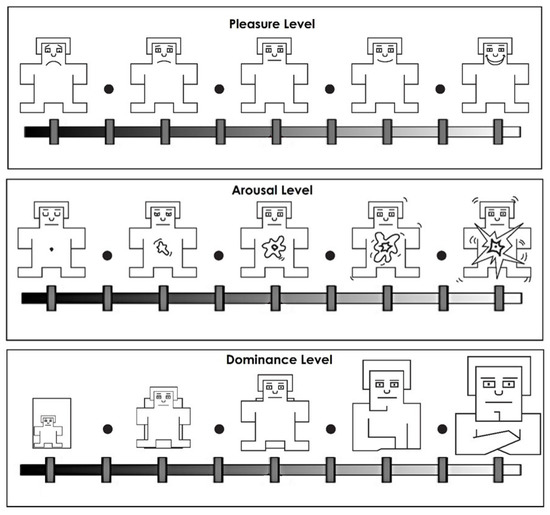

The PAD model, proposed by Mehrabian [21], is used to express emotions numerically. In this model, an emotion is described by three elements: pleasure P, arousal A, and dominance D. The elements are nearly independent, and each takes continuous values from −1 to 1. Thus, different emotions can be expressed as points in a 3D space (PAD space), as shown in Figure 4.

Figure 4.

PAD space model by Mehrabian [21]: A single emotion can be broken down into three components: arousal, pleasure, and dominance. Each component takes a value between −1 and 1.

Several studies have attempted to correlate human gestures with PAD parameters [37,41]. However, as many studies have shown, it is not the meaning of the gesture categories that is important for humans to recognize and distinguish emotions in nonverbal communication but rather the elements of motion contained in the movements [42,43,44,45,46]. There are research studies on mapping the relationship between movement elements and PAD parameters to make robots perform actions that match emotions [4,5]. In the study by Claret et al. [4] using the humanoid robot Pepper, jerkiness and activity were used as adjustment parameters. Following the work of Lim et al. [5], they mapped the correspondence between these movement features and PAD parameters, generated affective movements, and verified the validity of emotion transfer. In the study by Donald et al. [37], 25 movement elements are described as transmitting emotional information, and in particular, four of them are capable of expressing most emotions. These four movement components are Activity, Excursion, Extent, and Jerkiness. Activity corresponds to the energy of movement. Excursion indicates how kinetic energy is distributed throughout the movement, while Extent refers to how large the gestures of the hands and arms are. Jerkiness is the derivative of acceleration.

5.2.1. Kinetic Energy

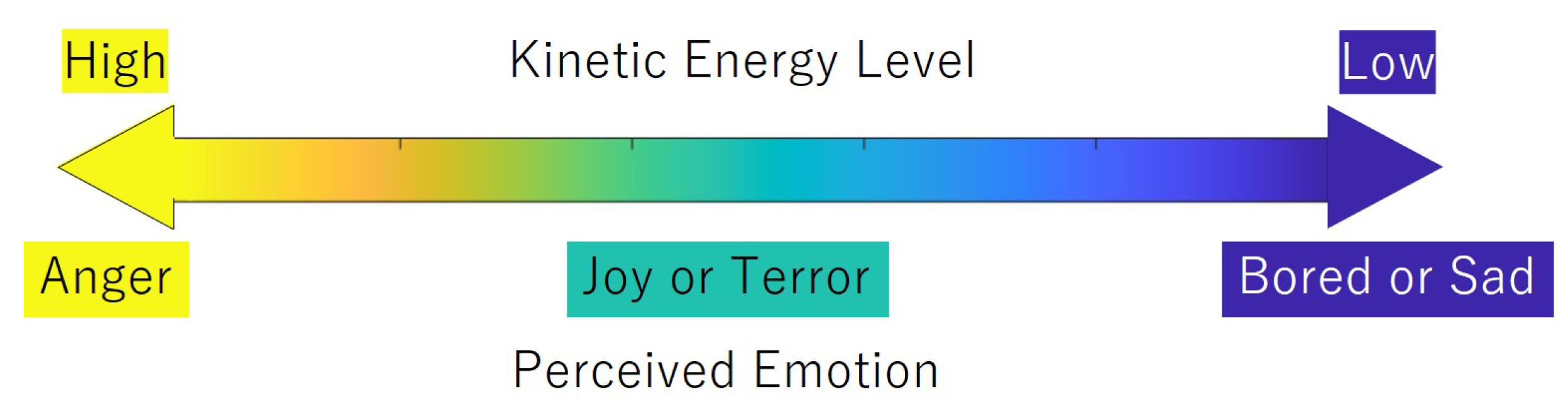

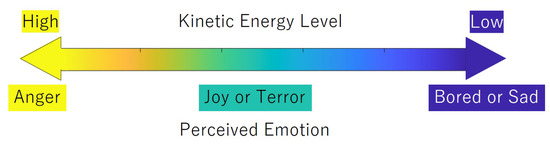

It has been stated that the energy of movement (Activity) is positively correlated with the degree of excitation. Therefore, the movement component corresponding to Arousal in the PAD parameters can be considered to be the kinetic energy of the movement. In addition, in a study by Wallbott et al. [38], the kinetic energy of movement was found to play an important role in identifying the 14 emotions.

For instance, when the kinetic energy was high, the emotion expressed by the movement was perceived as anger, and when it was low, the emotion was perceived as joy or fear (see Figure 5). The kinetic energy in Equation (7) is computed using the inertia matrix of the manipulator.
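For a manipulator, the kinetic energy is conventionally written as the quadratic form below. The symbols E_k for the kinetic energy and M(q) for the inertia matrix are assumed notation.

```latex
E_{k} = \frac{1}{2}\,\dot{q}^{T} M(q)\,\dot{q}
```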

Figure 5.

Findings of the study by Wallbott et al. [38]. When the kinetic energy was high, the emotion expressed by the movement was perceived as anger, and when it was low, the emotion was perceived as joy or fear.

5.2.2. Jerkiness/Smoothness

It was also stated that Jerkiness is negatively correlated with Pleasure in the case of highly excited emotions [37,39]. Therefore, the lower the value of Jerkiness (i.e., the smoother the movement), the more positive the expressed emotion is considered to be. Although the aforementioned kinetic energy can be perceived as either a positive or a negative emotion depending on its magnitude, the addition of Jerkiness information is expected to allow humans to distinguish the positiveness of the emotion, such as between joy and fear.

5.2.3. Spatial Extent

In previous research [4], it is mentioned that the direction of the eyes (gaze) is significantly related to the transmission of dominance D in the PAD parameters. However, since a robot arm in general does not have parts corresponding to a face and eyes, eye movements cannot be introduced into the motion. On the other hand, Wallbott [38] regarded the spatial extension of movements as an indicator for distinguishing between active and passive emotions. Therefore, we treat the range of spatial use as corresponding to the D parameter in the PAD parameters.

In order to represent the spatial extension of the joints’ trajectories, the geometric entropy is used. The measure of geometric entropy provides information on how much the trajectory is spread/dispersed over the available space [40]. Glowinski et al. [47] showed in their study of affective gestures that the geometric entropy index can be used to compare the spatial extension of hand trajectories. In addition, it is stated that the geometric entropy indicates how the available space is explored even in very restrained spaces, including the extreme condition of close trajectories. The joints’ trajectories exhibited in the null space frequently fell into such cases. The geometric entropy is computed by taking the natural logarithm of two times the length of the path traveled by the robot’s links divided by the perimeter of the convex hull around that path (c), as shown in Equation (8). It is computed on the frontal plane relative to the participant’s point of view. The geometric entropy is computed for all links and summed to represent one emotional motion as a whole.
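The verbal definition above, the natural logarithm of twice the path length divided by the convex hull perimeter c, can be sketched as follows. The function names are hypothetical, trajectories are assumed to be given as lists of 2D points already projected onto the frontal plane, and the convex hull uses the monotone chain algorithm as an implementation choice of this sketch.

```python
import math


def path_length(points):
    """Total length of the polyline through the sampled trajectory points."""
    return sum(math.dist(p, q) for p, q in zip(points, points[1:]))


def convex_hull(points):
    """Convex hull vertices in counter-clockwise order (Andrew's monotone chain)."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def hull_perimeter(points):
    """Perimeter c of the convex hull; a straight segment counts both ways."""
    hull = convex_hull(points)
    if len(hull) < 2:
        return 0.0
    return sum(math.dist(hull[i], hull[(i + 1) % len(hull)]) for i in range(len(hull)))


def geometric_entropy(points):
    """Geometric entropy ln(2 L / c) of one planar trajectory."""
    perimeter = hull_perimeter(points)
    if perimeter == 0.0:
        raise ValueError("trajectory has no spatial extent")
    return math.log(2.0 * path_length(points) / perimeter)


def total_geometric_entropy(link_paths):
    """Sum of the per-link entropies, representing one emotional motion as a whole."""
    return sum(geometric_entropy(path) for path in link_paths)
```

With this definition a straight-line path has zero entropy, and a path that covers the same hull more densely, for example by tracing the same square twice, has a higher value.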

5.2.4. From PAD Parameters to Motion Feature Parameters

Based on the information from the references mentioned above, this study communicates a rough image of an emotion (anger, joy, fear, boredom) by regulating the energy of movement (velocity), and makes people perceive positivity/negativity and the dominance level by using the degree of jerkiness and the level of spatial extent.

Here, the mapping from points in the PAD space to points in the motion feature space is defined within the normalized domain (Equation (9)). The three motion feature parameters represent jerkiness, velocity, and spatial extent, respectively. Each motion feature parameter configures the amount of the corresponding motion feature in the emotional motion. The mappings for jerkiness and spatial extent were defined linearly with reference to Claret’s study [4]. In contrast, the mapping for velocity was defined to reflect the findings presented in Wallbott’s study [38], as shown in Figure 6. The actual equation of the mapping is shown in Equation (A1) in Appendix B.

where

Figure 6.

The findings of the study by Wallbott [38], expressed as the velocity mapping.

5.3. From Motion Features to Emotional Motion in Null Space

An effective way to define adjustable emotional motion was presented by Claret et al. [4]. In their work, the motion was defined in joint velocity space. In contrast, in this study the emotional motion is defined in the form of the Cartesian space speed (not velocity) of each joint, since this work focuses on motion in 3D space.

where

By adjusting the amplitude A, the frequency, and the phase disturbance, motion features such as velocity, jerkiness, and spatial extension in Cartesian space can be modified. A is the amplitude of the sinusoidal wave, which regulates the maximum speed of the emotional motion. This coefficient is directly related to the kinetic energy of the motion. It also has a significant impact on the spatial extent, because in continuous motion, the greater the velocity in three-dimensional space, the greater the space used by the motion in general. Therefore, the coefficient A, which defines the maximum value of this velocity, was designed to be proportional to both the velocity parameter and the spatial extent parameter, as shown below.

where the scaling constant is the maximum acceptable velocity of the joint. This bounds the speed of the joint's motion in the redundant space.

Next, the phase disturbance of the sinusoidal wave affects the jerkiness when it is set as below.

where the scaling constant is the maximum design value of the phase shift for the joint, which bounds the magnitude of the phase shift. The two frequencies of the phase disturbance are determined relative to the base frequency in Equation (11) using positive constants.

Finally, the frequency of the periodic velocity shift in 3D space has an effect on the spatial extent of the motion. The frequency was defined to be inversely correlated with the spatial extent parameter. In this way, as the parameter increases, the velocity change in three-dimensional space is expected to be slower, and the spatial utilization of the motion is larger.

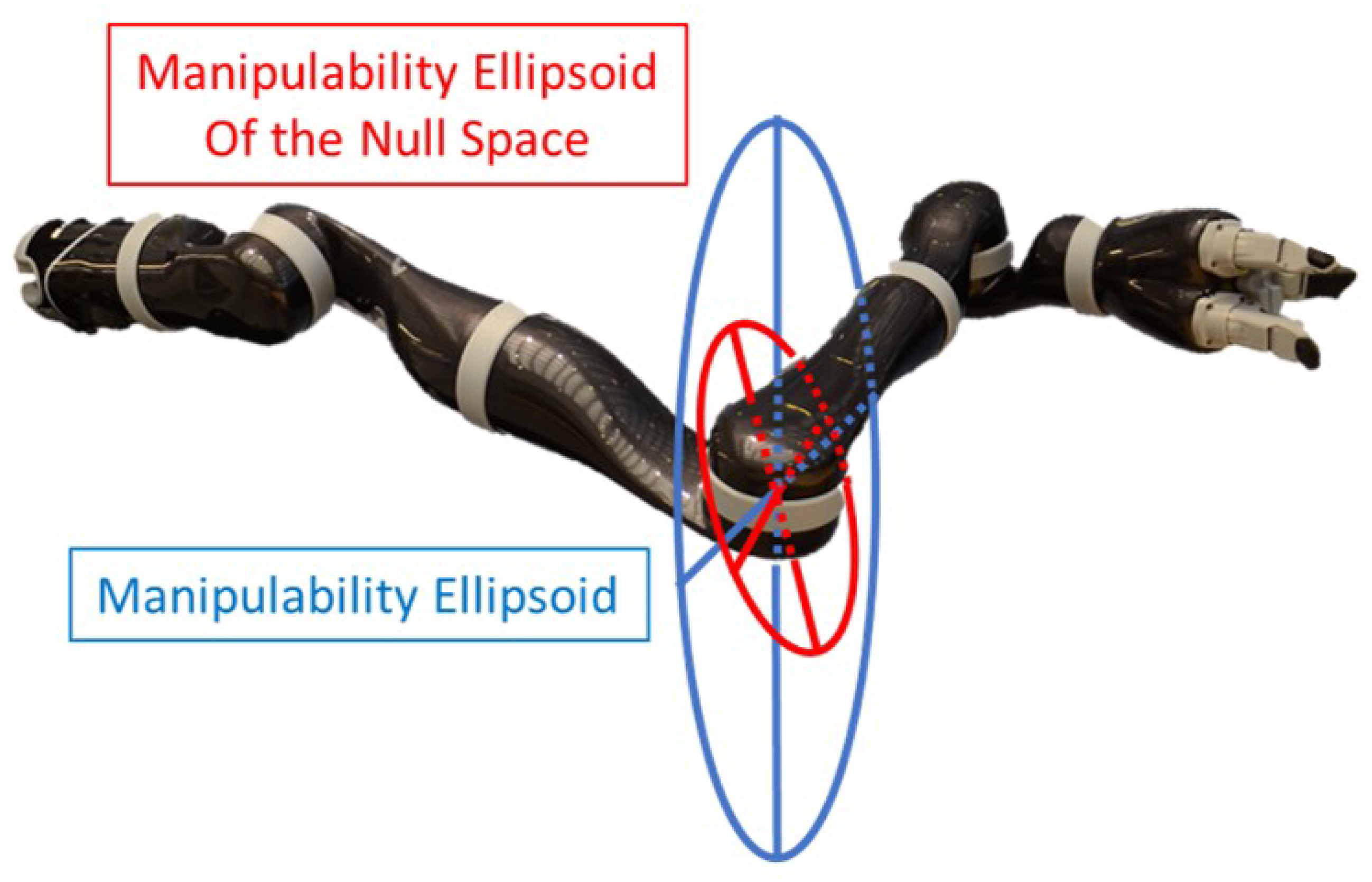

where the two constants are the coefficient of the spatial extent parameter and the offset (maximum value) of the frequency, respectively. A further constant sets the minimum value of the frequency, which is greater than 0.

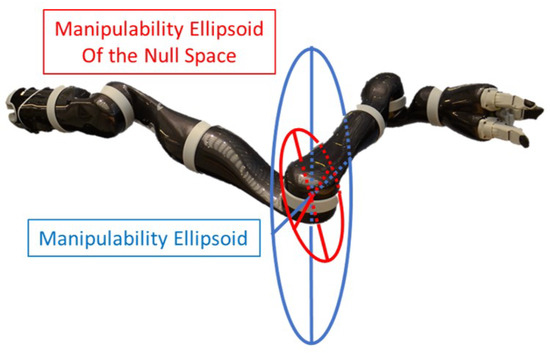

From the above equations, a motion involving the features of jerkiness, velocity and spatial extent specified by the motion feature parameters is defined. However, since this motion is defined in the form of a speed in 3D space, the direction in which this speed is to be achieved must be specified appropriately. For example, if the axis of this speed can always be set in a direction in which the robot can move without affecting the main task, then the designed motion feature can be realized in the null space. On the other hand, if the direction of the speed is set in a direction in which it is structurally impossible to move, most of the motion feature will be lost when it is projected to the null space. Therefore, this study addresses this issue using the manipulability ellipsoid. The manipulability ellipsoid is projected into the null space, and its first principal axis is set as the direction of the speed. This means that the manipulator always moves for emotional expression in the direction in which it can move in the null space. Therefore, the amount of motion feature lost through the null-space projection matrix can be minimized. The speed is converted to the velocity of the 3D-space emotional motion as shown in the following section.
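The speed profile described in this section can be illustrated with a short Python sketch. The exact forms of the amplitude, phase disturbance and frequency equations are not reproduced here, so every formula below is an assumed form that only follows the verbal description: an amplitude proportional to the velocity and spatial extent parameters, a phase disturbance scaled by the jerkiness parameter, and a frequency that decreases with spatial extent but stays above a positive minimum. The function name, the parameter names `p_j`, `p_v`, `p_s` and all constants are hypothetical.

```python
import math


def emotional_speed(t, p_j, p_v, p_s, v_max=0.2, phi_max=math.pi / 2,
                    omega_max=4.0, k_omega=3.0, omega_min=0.5, c1=2.0, c2=3.0):
    """Scalar Cartesian speed of one joint's emotional motion at time t [s].

    p_j, p_v, p_s: jerkiness, velocity and spatial extent parameters in [0, 1].
    All default constants are hypothetical, not values from the study.
    """
    # Assumed amplitude: proportional to both the velocity and spatial extent parameters.
    amplitude = v_max * p_v * p_s
    # Assumed base frequency: decreases as spatial extent grows, bounded below by omega_min > 0.
    omega = max(omega_max - k_omega * p_s, omega_min)
    # Assumed phase disturbance: two faster sinusoids scaled by the jerkiness parameter.
    phase = phi_max * p_j * 0.5 * (math.sin(c1 * omega * t) + math.sin(c2 * omega * t))
    return amplitude * math.sin(omega * t + phase)


# Sample a jerky, fast, wide motion over 7 s at 10 Hz.
samples = [emotional_speed(0.1 * k, p_j=1.0, p_v=1.0, p_s=1.0) for k in range(70)]
```

Because the amplitude multiplies a sine, the speed never exceeds `v_max` in magnitude, which mirrors the role of the maximum acceptable velocity in the text.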

5.4. Manipulability Ellipsoid in the Null Space

Manipulability ellipsoids are effective tools for task-space analysis of robotic manipulators. Generally, they are used to evaluate the capability at the end-effector in terms of velocities, accelerations and forces. There have been many studies on manipulability ellipsoids. For instance, Yoshikawa introduced a manipulability ellipsoid that takes the dynamics of the manipulator into consideration, which is called the dynamic manipulability ellipsoid [48]. In addition, he presented the manipulability ellipsoid for manipulators with redundancy [49]. Chiacchio et al. focused on singular poses of the manipulator and improved the manipulability ellipsoid for manipulators with redundancy in terms of task-space accelerations [50]. However, those works concern end-effectors, and manipulability ellipsoids in the null space of redundant manipulators have been studied only recently, by Kim et al. in 2021 [51]. A simplified image of the manipulability ellipsoid of the robot arm and its projection to its null space is shown in Figure 7.

Figure 7.

Manipulability Ellipsoid for 4th joint. The blue/bigger ellipsoid is for the normal unbounded case. The red/smaller ellipsoid represents the one in the degrees of freedom constrained by the end-effector. The robot in this figure is a Jaco-arm from Kinova [52].

In Kim et al.’s study, it is stated that the primary axis of the manipulability ellipsoid for a joint that is constrained by the end-effector’s motion can be calculated using the null space projection matrix N and the Jacobian J.

where

The null projected Jacobian contains the relationship between the joint and the task motion in the null space. The null projected Jacobian of a link is the product of the Jacobian of that link and the null space projection matrix. The relationship can be obtained from the singular values and vectors given by the singular value decomposition (SVD), as Equation (18) shows. Here, the two outer factors of the decomposition are orthogonal matrices containing the singular vectors of the null projected Jacobian, and the middle factor contains the singular values in its diagonal elements. Thus, the primary axis of the null projected manipulability ellipsoid can be obtained from the first singular value and its corresponding singular vector, as shown in Equation (17).

Finally, the emotional motion speed for each joint from Equation (11) was transformed to a velocity along this principal axis and set as the emotional motion using Equation (20). Then, it is converted to the joint space velocity motion as below.

where,

Here, the sum runs over all joints of the arm. Note that we are adding up the joint displacements that move each joint in the null space. This is because the aim is to allow each joint to move to its maximum extent in its null space. In a redundant robot arm with a serial structure, the joints located in the middle have the largest range of movement in the null space due to the structure of the arm. Therefore, when the joint displacements for each joint to move in the null space are added together, the displacement that moves the joint located in the middle naturally becomes dominant. Although this effect will be small for a robot arm with fewer DoF, this method can be applied to serially structured robots with any number of degrees of freedom, and therefore, the advantage will be significant when applied to a robot arm with more DoF.

Finally, Equation (6) is used to transfer this emotional motion to the null space. As noted above, the direction of this emotional motion is set as the principal axis of the null space manipulability ellipsoid of each joint, so that maximum expressivity can be expected.
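The computation described in this section can be sketched numerically with NumPy. This is a simplified illustration under stated assumptions, not the implementation used in the study: the Jacobians are random stand-ins (a real implementation would obtain them from the robot's kinematics), only a single link is treated rather than the sum over all joints, and the variable names are hypothetical. The projector is the standard form N = I − J⁺J.

```python
import numpy as np

rng = np.random.default_rng(0)
n_joints = 7

# Hypothetical 3x7 position Jacobian of the end-effector (main task).
J_ee = rng.standard_normal((3, n_joints))

# Null-space projector of the main task: N = I - pinv(J) J.
N = np.eye(n_joints) - np.linalg.pinv(J_ee) @ J_ee

# Hypothetical position Jacobian of the 4th link: later joints cannot move it,
# so their columns are zero.
link = 4
J_link = rng.standard_normal((3, n_joints))
J_link[:, link:] = 0.0

# Null projected Jacobian of the link and its singular value decomposition.
J_link_null = J_link @ N
U, S, Vt = np.linalg.svd(J_link_null)

# First principal axis of the null-space manipulability ellipsoid (unit vector)
# and the length of that axis (largest singular value).
principal_axis = U[:, 0]
axis_length = S[0]

# Emotional motion: a scalar speed applied along the principal axis,
# then mapped to joint velocities and projected into the null space.
speed = 0.1  # hypothetical speed value in m/s
v_emotion = speed * principal_axis
q_dot = N @ np.linalg.pinv(J_link_null) @ v_emotion

# The end-effector velocity caused by q_dot should vanish (main task undisturbed).
ee_velocity = J_ee @ q_dot
```

In this sketch the link moves along the chosen axis while the end-effector velocity stays numerically zero, which is the property the principal-axis choice is meant to preserve.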

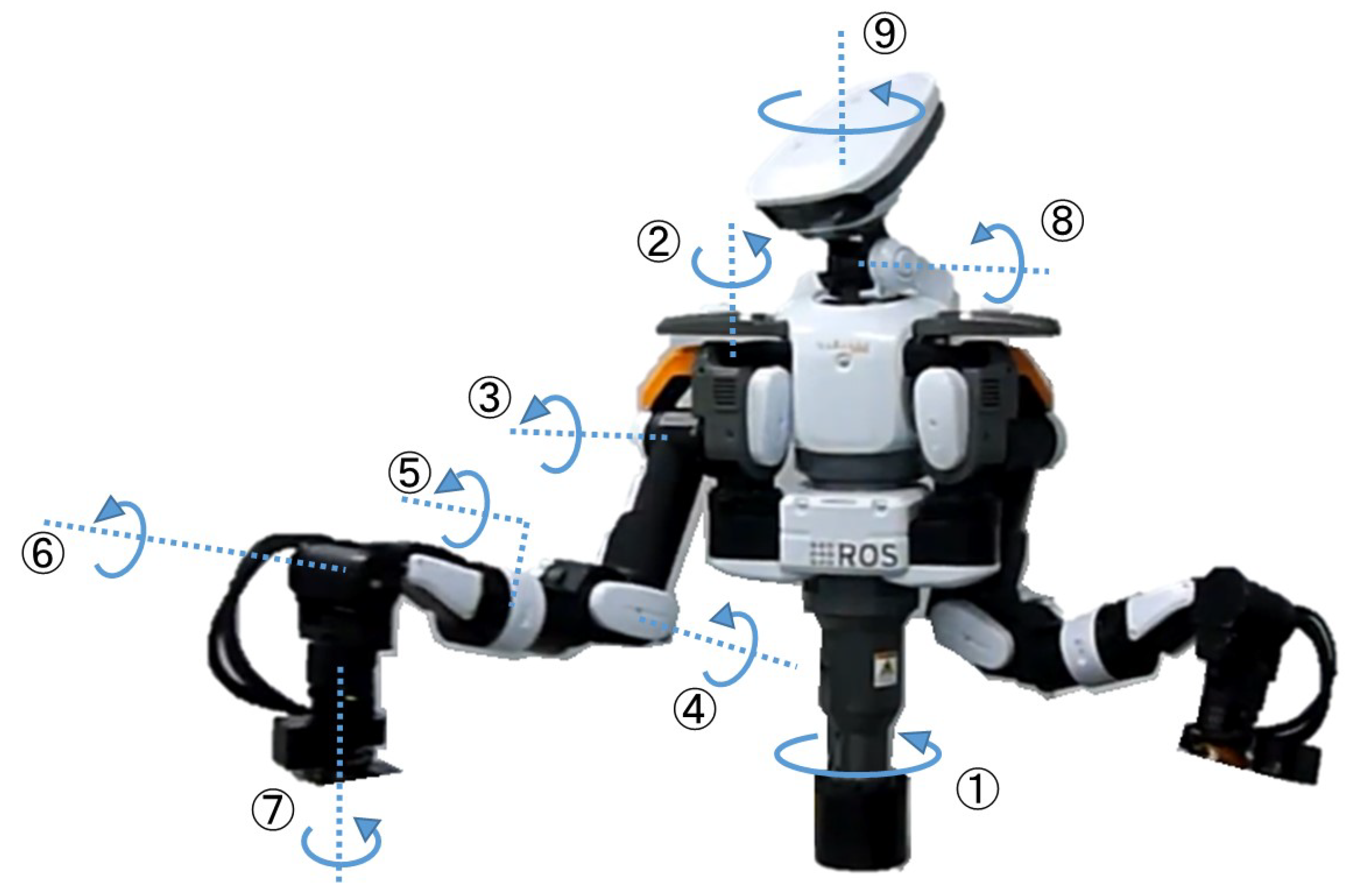

6. Implementation

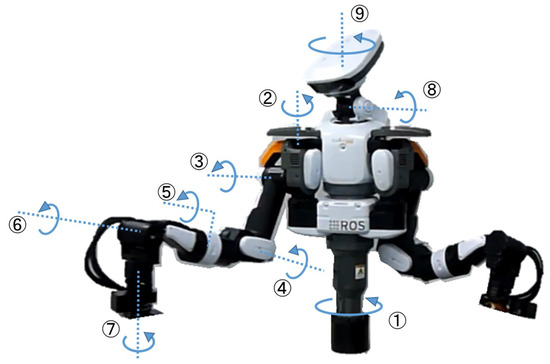

The proposed method was implemented on the upper-body humanoid “Nextage-Open” shown in Figure 8, which is developed by Kawada Robotics. Nextage-Open is an industrial robot with two 6-DoF manipulators. However, each manipulator has 7 DoF when the chest joint is included (1st joint in Figure 8). The main task at the end-effector was set as moving an object along a horizontal semicircular path. Only the position of the object was specified, so that the robot has four degrees of redundancy (3 rotational + 1 structural). The joint position control was performed with high accuracy by the internal controller. The implementation was written in Python 3, and the robot was operated via the Robot Operating System (ROS Melodic) on Ubuntu 18.04.

Figure 8.

Nextage-Open from Kawada Robotics. Its arm has 7 DoF if the chest joint is included. The mass is 36 kg, and height is 0.93 m.

A humanoid robot was chosen for the survey so that the participants could naturally perceive emotion. Nevertheless, the proposed method can be applied to any robot with a single tree structure, allowing it to move emotionally without disturbing the main task.

In this implementation, eight kinds of emotions were used to generate emotional motions: seven extreme emotions located at vertices of the cube formed in the PAD space in Figure 4, namely Hostile (PAD = [−1, 1, 1]), Exuberant (PAD = [1, 1, 1]), Disdainful (PAD = [−1, −1, 1]), Relaxed (PAD = [1, −1, 1]), Dependent (PAD = [1, 1, −1]), Anxious (PAD = [−1, 1, −1]), and Bored (PAD = [−1, −1, −1]), plus an Intermediate motion (PAD = [0, 0, 0]). These emotions were set manually to see whether people can differentiate emotions whose motions ought to be different from each other. The proposed method generates a robot motion that executes the main task with emotional motion as the sub-task in its null space, in which motion features such as velocity, jerkiness and spatial extent are adjusted according to the emotion of the robot (PAD parameters). The method to generate emotional motion from PAD parameters is explained in Section 5.

In order to avoid self-collision, the motor position limit and velocity limit were strictly set for each joint following the regulations based on ISO/TS 15066 and ISO 10218-1 [33]. In addition, although this method can generate emotional null space motion online during the main task execution, the emotional motion was simulated in the Gazebo simulator and saved beforehand; then, it was played back at the right moment in the scenario. This is because the robot was owned by Kawada Robotics, and our team wanted maximum safety for people and the robot. The other arm (left), which does not conduct the main task, was also used to express emotion in the null space while maintaining a safe distance from the right arm. The neck and face simply tracked the object during the interaction, except at the initiation of the interaction and at the moment of handing over the object, when the robot looks at the human.

6.1. Questionnaire

The questionnaire is composed of three parts in the following order: Negative Attitude Toward Robot Scale (NARS), PAD evaluation using SAM scale, and Big Five personality test. The aim for this questionnaire is to find out:

- If the robot was able to convey intended emotion to human using null space;

- If the personality and negative attitude toward the robot affects the interpretation of the emotional motion.

The questionnaire was conducted via the internet using Google Forms. The participants were recruited by a recruiting company (Whateverpartners Co., Ltd.) via its online platform. The participants received 110 Japanese yen after completing the questionnaire. Informed consent was acquired at the beginning of the form by providing information on the content and background of the research and the possible risks of participation. Participants were given the chance to freely comment on their impressions at the end of the questionnaire. The actual questionnaire created by the author is available through the author’s GitHub repository [53] (URL is in Appendix A).

6.1.1. SAM Scale

The Self-Assessment Manikin (SAM) by Lang [54] was used to evaluate people’s interpretation of the robot’s emotion. The SAM scale is a visual questionnaire composed of three scales, each corresponding to a dimension of the PAD space. Lang conducted a comparative analysis of measurement results from both the SAM and Mehrabian and Russell’s PAD scales. The correlation coefficients between the two scales were 0.94 for pleasure, 0.94 for arousal, and 0.66 for dominance [55]. The SAM scale can eliminate the effects related to verbal measures because of its visual nature. The participants can also give their responses quickly and intuitively. The guidelines for a questionnaire with the SAM scale can be found in [56]. The actual SAM scale that was used in this work is shown in Figure 9. After observing the robot’s movements, the participants were asked to evaluate the level of each PAD element in the movements on a 5-point scale, referring to the SAM scale diagram. After watching each video and estimating the PAD, the respondents were asked to rate on a scale of 1 to 5 how confident they were in their PAD answer (1: not confident at all, 5: very much confident). In this research, eight kinds of emotional motion were generated. The order of the questions was randomized for each participant.

Figure 9.

The Self-Assessment Manikin (SAM) by Lang [54]. This scale can eliminate the effects related to verbal measures because of its visual nature and help participants write their responses fast and intuitively.

6.1.2. Big Five Personality Test

The Big Five personality test [57] is based on a taxonomy of personality traits. The five dimensions are defined as: Openness to experience, Conscientiousness, Extroversion, Agreeableness, and Neuroticism. Although not all researchers agree on the Big Five, it is the most powerful descriptive model of personality and is also a well-established basic framework in the psychology field [57]. The Big Five can be measured with several instruments: the International Personality Item Pool (IPIP) [58], the Ten-Item Personality Inventory (TIPI) [59], the Five Item Personality Inventory (FIPI) [59], etc. The most frequently used measures of the Big Five consist of items that are self-descriptive sentences [60]. In this work, a simplified version of the Big Five personality traits questionnaire was used, which consists of 15 questions in Japanese. This simplified version was based on TIPI-J by Oshio et al. [61]. This test was used in Yue’s research [62] to assess the personality of humans interacting with robots. Each dimension of the Big Five taxonomy is covered by three of the 15 questions. In Yue’s work, it is shown that a person who has more openness to experience tends to think robots are physically reliable (tough), independent and emotional (Agency). Thus, personality may affect the perception of the robot. Understanding such tendencies will be helpful for designing robot behavior in the future.

6.1.3. Negative Attitude toward Robots Scale (NARS)

The Negative Attitude Toward Robot Scale (NARS) was developed to measure human attitudes toward communication robots in daily-life [63]. This scale consists of fourteen questionnaire items in Japanese. These 14 items are classified into three sub-scales: S1: “Negative Attitude toward Situations of Interaction with Robots” (6 items), S2: “Negative Attitude toward Social Influence of Robots” (5 items), and S3: “Negative Attitude toward Emotions in Interaction with Robots” (3 items). Each question is answered on a five-point scale (1: I strongly disagree, 2: I disagree, 3: Undecided, 4: I agree, 5: I strongly agree), and the score of a person at each sub-scale is calculated by summing the scores of all the items in the sub-scale. Thus, the minimum score and maximum score are 6 and 30 in S1, 5 and 25 in S2, and 3 and 15 in S3, respectively.
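The sub-scale scoring described above can be sketched as follows. The assignment of item positions to sub-scales is a hypothetical ordering chosen for illustration (the actual NARS item order may differ), and the sketch applies no reverse coding; only the item counts per sub-scale and the summation rule come from the text.

```python
# Hypothetical item-to-sub-scale assignment; only the item counts (6, 5, 3)
# are taken from the text.
SUBSCALE_ITEMS = {
    "S1": range(0, 6),    # 6 items
    "S2": range(6, 11),   # 5 items
    "S3": range(11, 14),  # 3 items
}


def nars_scores(answers):
    """Sum the 1-5 answers of the 14 items within each sub-scale."""
    if len(answers) != 14 or any(a not in (1, 2, 3, 4, 5) for a in answers):
        raise ValueError("expected 14 answers on a 1-5 scale")
    return {name: sum(answers[i] for i in items)
            for name, items in SUBSCALE_ITEMS.items()}


lowest = nars_scores([1] * 14)   # every item answered "I strongly disagree"
highest = nars_scores([5] * 14)  # every item answered "I strongly agree"
```

The two extreme answer sheets reproduce the score ranges stated above for S1, S2 and S3.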

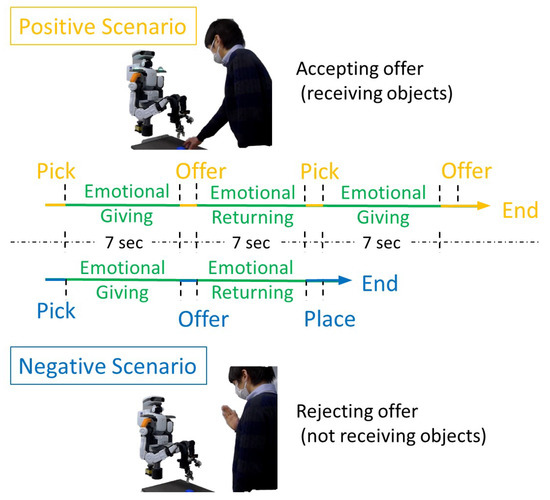

6.2. Experiment Description

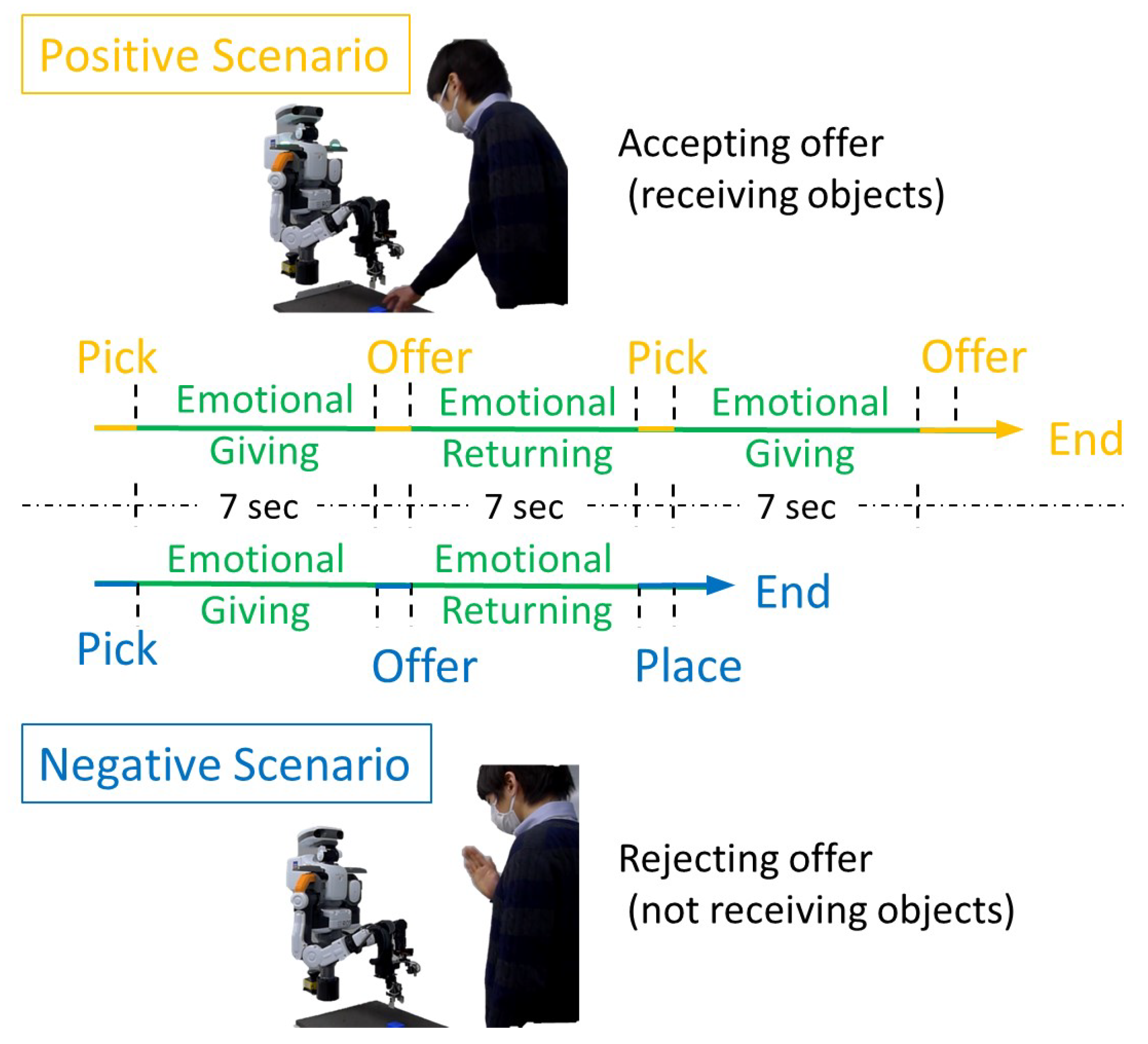

The interaction between a person (actor) and the robot is recorded on a camera. During the interaction, the robot performs an emotion transfer behavior designed by the researcher. There are three different scenarios for the video: a positive scenario (PS), a negative scenario (NS), and a positive scenario from frontal view without the human (PSNH) as shown in Figure 10. The flow of the interactions is described below.

Figure 10.

There are three different scenarios for the video. The first is a positive scenario (PS) where a human makes an affirmative gesture (nodding) and accepts the object offered by the robot. The second is a negative scenario (NS) where the human makes a negative gesture and does not accept the object offered by the robot. Finally, a positive scenario from frontal view without the human (PSNH) was prepared.

<Initializing an Interaction>

- A robot is rearranging objects (Blue Cubes);

- A person stands in front of the robot;

- The robot notices the person and turns to face him;

- The robot offers the object to him (emotion transfer behavior #1).

<Pattern 1 (Positive Scenario)>

- The person accepts the object with an affirmative action (nodding);

- The robot goes to pick up the second object (emotion transfer behavior #2);

- The robot offers the second object to the person (emotion transfer behavior #3);

- The person accepts the object with an affirmative action.

<Pattern 2 (Negative Scenario)>

- The person makes a negative action and does not accept the object;

- The robot returns the object to its original place (emotion transfer behavior #2).

The main task was specified only as a time series of 3D position vectors, without rotation vectors, so that the robot has more redundant degrees of freedom to express motion in the null space. The duration was 7 s, and the joint angular velocity and acceleration at the start and end points were set to zero. Each emotional behavior is pre-generated, and the same emotional expression behavior is used in each scenario. After the carrying task with emotional motion, the robot was switched to position-based control and placed the object using a predefined position command without the null space motion, which took 3 s (see Figure 10).
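A position-only main task with zero velocity and acceleration at both ends can be sketched with a quintic time scaling. This is an assumption made for illustration: the text does not state which interpolation was used, and the boundary conditions are applied here to the Cartesian path rather than to the joints. The semicircle geometry (`center`, `radius`) and the function names are hypothetical.

```python
import math


def quintic_scaling(t, duration=7.0):
    """Return s(t) in [0, 1] with zero velocity and acceleration at both ends."""
    tau = min(max(t / duration, 0.0), 1.0)
    return 10 * tau**3 - 15 * tau**4 + 6 * tau**5


def semicircle_position(t, center=(0.4, 0.0, 0.1), radius=0.15, duration=7.0):
    """End-effector position [m] on a horizontal semicircle (hypothetical geometry)."""
    angle = math.pi * quintic_scaling(t, duration)
    cx, cy, cz = center
    return (cx + radius * math.cos(angle), cy + radius * math.sin(angle), cz)


# Time series of 3D positions sampled at 10 Hz over the 7 s task.
trajectory = [semicircle_position(0.1 * k) for k in range(71)]
```

Only positions are produced, so the orientation of the end-effector remains free for the null space motion.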

7. Result

This section presents the results of the evaluation of the technical aspects of the proposed method and the results of the evaluation related to human cognition. The technical results show whether the proposed method generates motions that actually contain the desired motion features and whether it is able to adjust their magnitude. There are four results on human recognition. The first is the quality of the emotion conveyance. The second is the correspondence between the motion features (jerkiness, velocity, spatial extent) in the movement and the perceived PAD values. The third is a comparison between those who estimated the robot’s emotions through positive scenarios and those who estimated them through negative scenarios. Finally, the PAD values perceived by those with high NARS scores are compared with those perceived by those with low scores.

7.1. Generated Motions and Their Motion Features

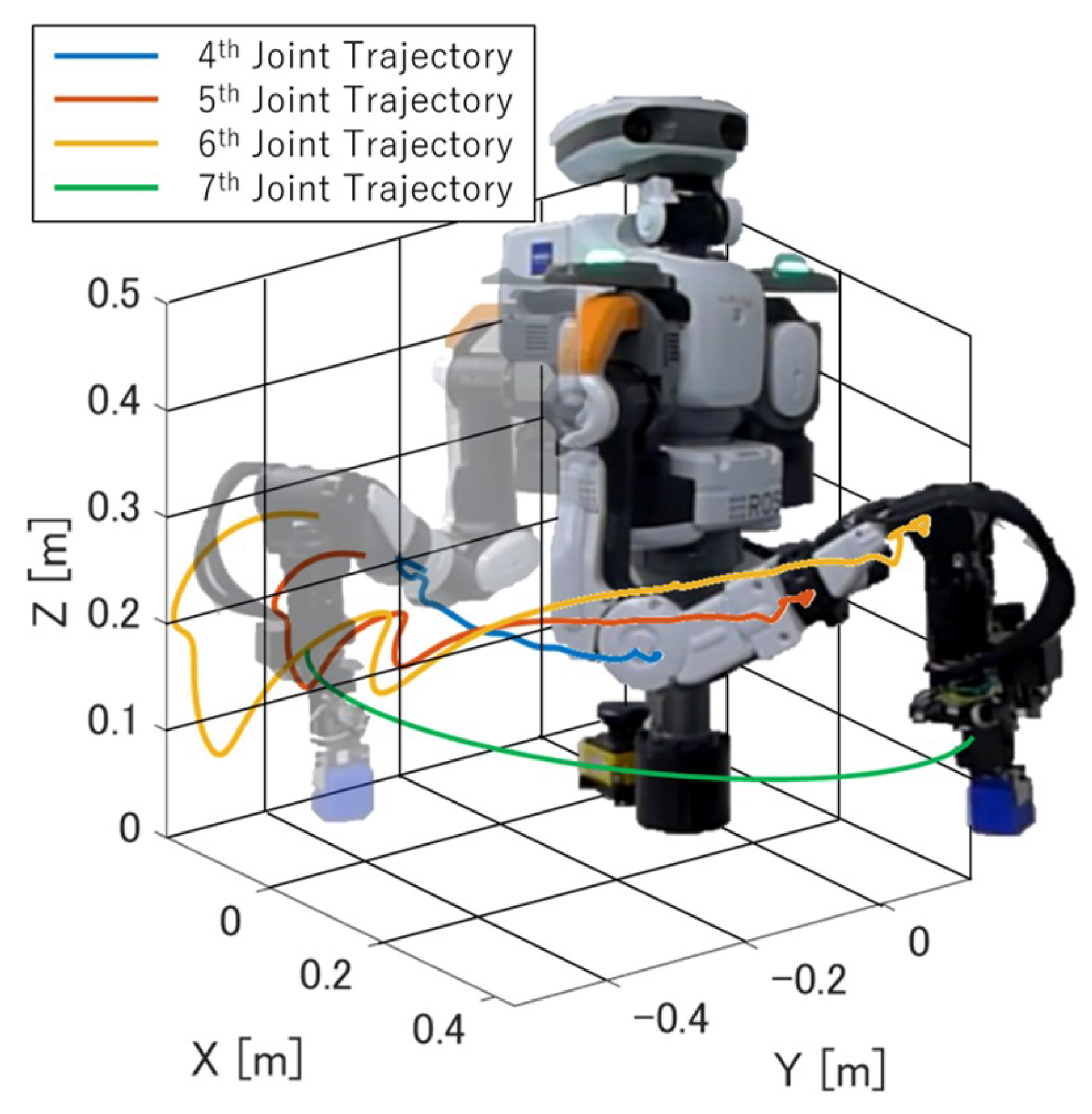

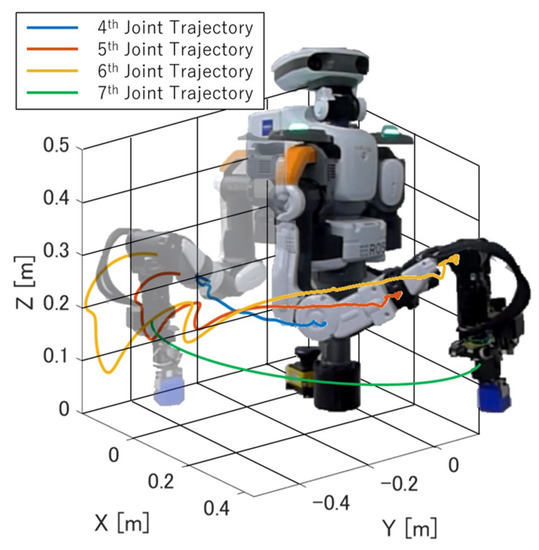

The three-dimensional trajectory of the emotion “Hostile” is shown in Figure 11. As the figure shows, the 4th, 5th, and 6th joints move dynamically, while the end-effector keeps the predefined trajectory.

Figure 11.

The three-dimensional trajectory of the emotion “Hostile”. As the figure shows, the 4th, 5th, and 6th joints move dynamically while the end-effector keeps the predefined semicircle trajectory.

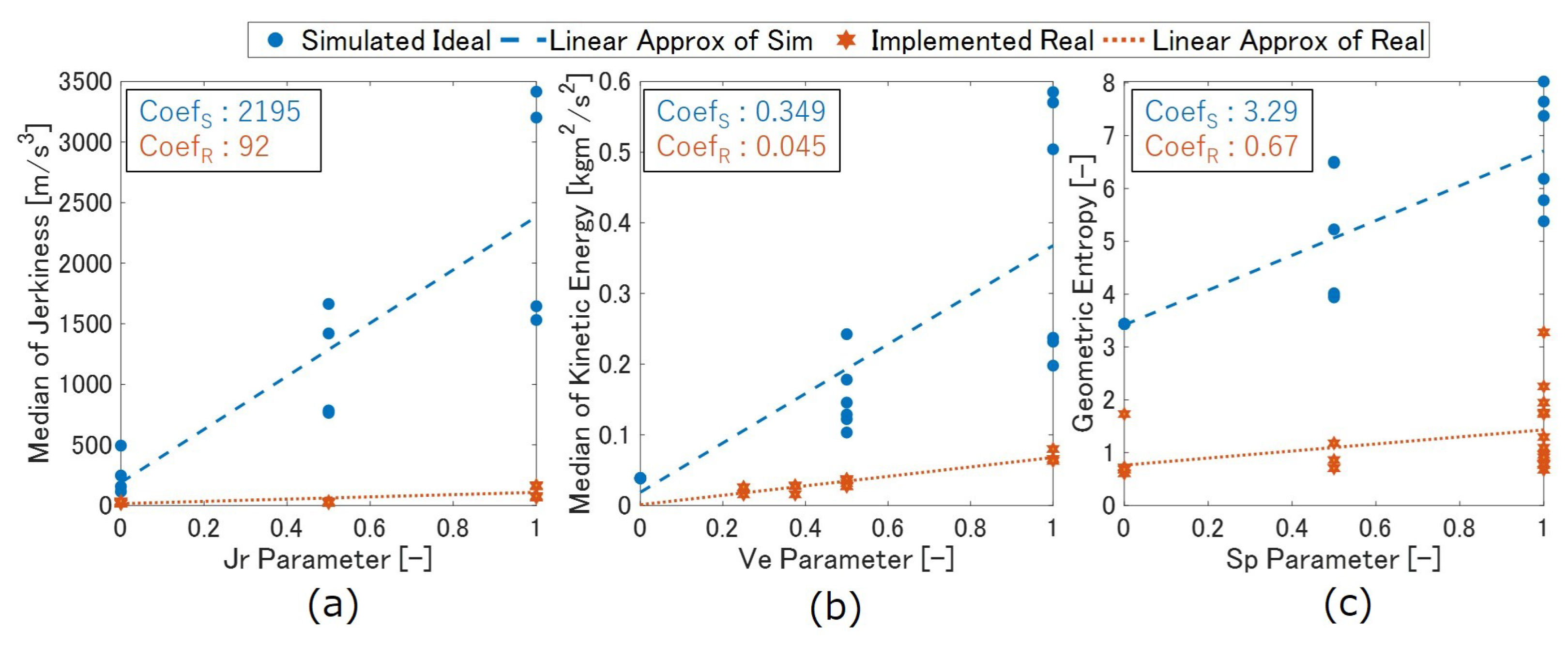

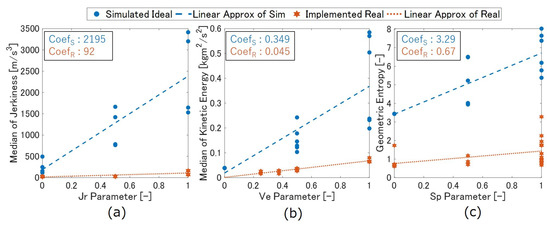

The proposed method is capable of adjusting the degree of jerkiness, kinetic energy, and spatial extent in the null space. To confirm this, the correspondence between the three motion feature parameters (for jerkiness, velocity, and spatial extent) and the amount of the corresponding motion feature in the actually generated movement is presented in Figure 12. The dotted lines are fitted by the least-squares method. In all cases, there was a positive correlation between the motion feature parameter and the amount of the target motion feature in the movements produced with it. In each plot, the two motion feature parameters other than the one of interest take the values 0, 0.5, and 1. For example, the right plot of Figure 12, which concerns the spatial extent parameter, includes motions in which the jerkiness and velocity parameters each take the values 0, 0.5, and 1.

Figure 12.

Motion features of each generated motion. The proposed method is capable of adjusting the degree of jerkiness (a), kinetic energy (b), and spatial extent (c) in the null space, respectively. Those plotted in circles are the motions generated in the simulation environment (blue), and those plotted with star marks are the ones actually implemented to the real robot (orange).

Although the originally simulated motions, which are plotted in blue, show a variety of motion features, the actually implemented motions, which are plotted in orange, show limited variance in the motion features. In particular, jerkiness does not show a wide range even though the parameter varies. This is discussed in Section 8.

7.2. Emotion Conveyance Results

Secondly, the results of the human perception of the robot’s emotions are presented. Table 1 shows the ratings of the robot’s emotions by the participants who viewed the aforementioned emotional expression videos in Section 6.2. The age distribution of the participants was: 20s: 43 (male: 14, female: 29), 30s: 106 (male: 43, female: 63) and 40s: 118 (male: 64, female: 54); the total is 267. They all have a Japanese cultural background.

Table 1.

Results of the emotion conveyance questionnaire 2.

There are three different scenarios for the video: a positive scenario (PS), a negative scenario (NS), and a positive scenario from the frontal view without the human (PSNH). Of the 267 respondents, 70 viewed and answered PS, 87 viewed and answered NS, and 110 viewed and answered PSNH.

The values of the PAD parameters representing each emotion are shown beside the emotion. In the “MEAN” column, values highlighted in bold are those for which the majority of participants guessed the PAD value in the correct direction. “CONF MEAN” is the mean of the confidence values; after watching each video and estimating the PAD, the respondents were asked to rate on a scale of 1 to 5 how confident they were in their PAD answer (1: not confident at all, 5: very much confident). In the row of “Bored” and “Docile”, “x” represents any value between 0 and 1. These two emotions share the same row because the motion feature parameters that determine the amplitude of the motion assigned to them are both 0, which leads to zero amplitude of the emotional motion in Equation (11). This means that for these emotions, there is no null space motion at all.

The following is a summary of the comments that were voluntarily given at the end of the survey. A total of 113 comments were received. Of these, 38 mentioned that it was difficult to read the robot’s emotions. Conversely, 17 expressed that the robot was emotionally expressive. Of the participants who commented that it was difficult to read emotions, eight commented that they could not read emotions due to the lack of facial expression. A total of 41 people left positive comments about the experiment, which were hopeful about the future of robots and humans. On the other hand, seven people commented on their fear of robots and their desire for thoughtful development of intelligent robots.

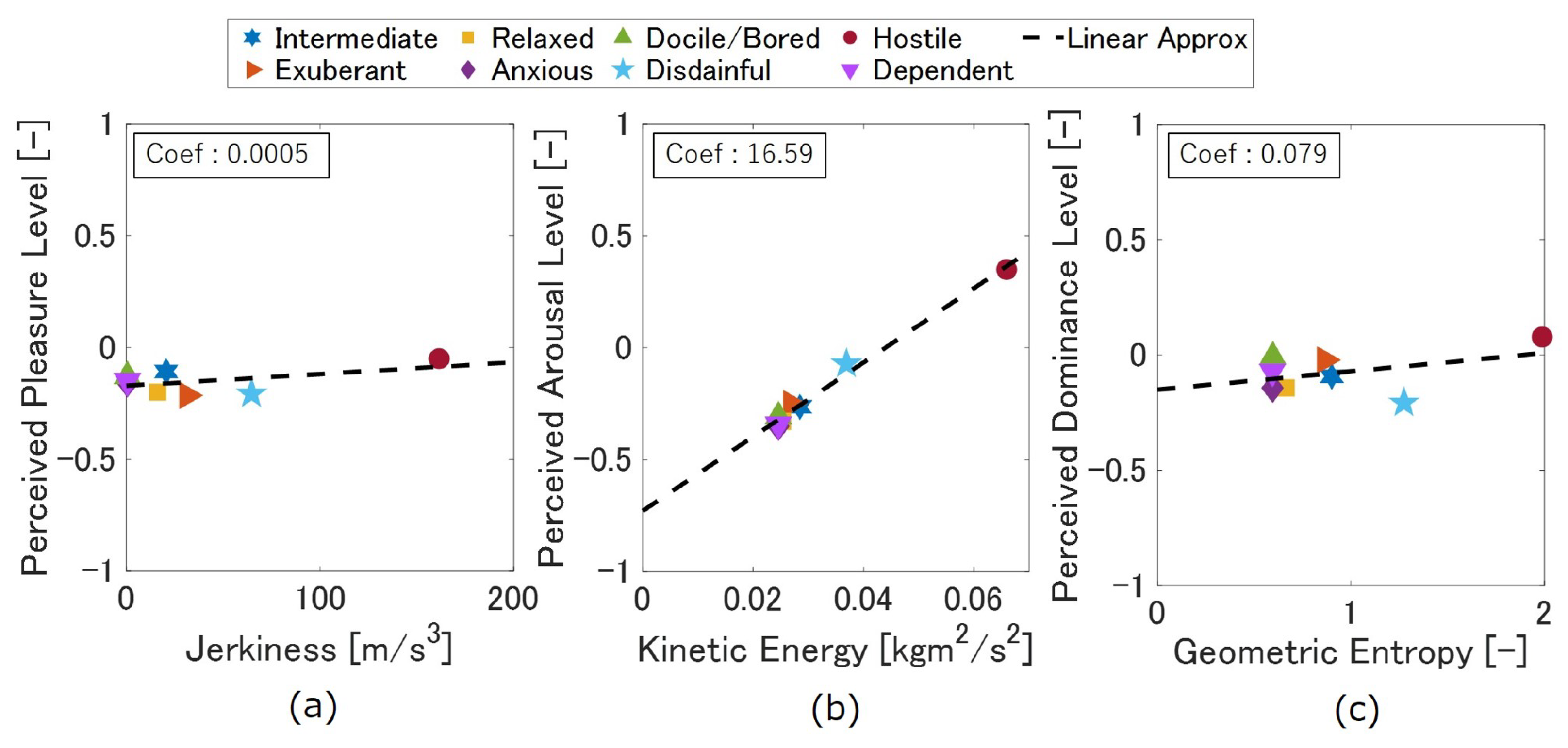

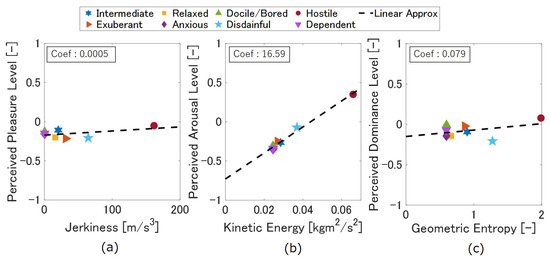

Figure 13 shows the relationship between the magnitude of the motion features in each emotional movement and the perceived PAD values. Each point represents one emotional motion, and the dotted lines are fitted by the least-squares method. While kinetic energy and perceived arousal level have a positive correlation, as other researchers suggest, the other two motion features did not vary much, and no clear correlation could be seen in those results.

Figure 13.

Motion features and perceived emotions. Each point represents a different emotion, and the dotted line is fitted by the least-squares method. The panels are for jerkiness (a), kinetic energy (b), and geometric entropy (c). “Hostile” was the only emotion with distinctive motion features, while the other emotions were similar.

“Hostile” has distinct values in terms of the motion features, as intended. On the other hand, the movements other than Hostile were almost identical in terms of movement characteristics. This is likely the reason why the emotions were not conveyed properly, as seen in Table 1.

Table 2 compares the responses of those who estimated PAD by observing a positive scenario with those who responded by viewing a negative scenario. The p-values are obtained using the Mann–Whitney U test, and elements that are significantly different (p < 0.05) are highlighted in bold.

Table 2.

Comparison between the group who estimated PAD by observing a positive scenario and the one that estimated PAD with the negative scenario.

This evaluation was conducted as a right-tailed test. To clarify, let F(u) and G(u) be the cumulative distribution functions of the distributions underlying x, an answer from PS, and y, an answer from NS, respectively. The alternative hypothesis is that the distribution underlying x is stochastically greater than the distribution underlying y, i.e., F(u) < G(u) for all u. Thus, positive scenarios tended to be perceived with higher pleasure and dominance than negative ones.
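A right-tailed Mann–Whitney U test of this kind can be run with SciPy's `scipy.stats.mannwhitneyu`. The sketch below assumes SciPy as the tool (the text does not name the software used), and the ratings are made-up 5-point values for illustration, not data from the study.

```python
from scipy.stats import mannwhitneyu

# Hypothetical 5-point SAM pleasure ratings, NOT data from the study.
ratings_ps = [4, 5, 4, 3, 5, 4, 4, 5, 3, 4]  # positive scenario group
ratings_ns = [2, 3, 2, 1, 3, 2, 3, 2, 1, 2]  # negative scenario group

# alternative="greater": the distribution underlying the first sample is
# stochastically greater than the one underlying the second sample.
result = mannwhitneyu(ratings_ps, ratings_ns, alternative="greater")
significant = result.pvalue < 0.05
```

Swapping in `alternative="less"` gives the corresponding left-tailed test.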

Finally, Table 3 compares the answers of robot emotion (PAD) estimation tendencies of those with high and low NARS scores. Significant differences (p < 0.1) are highlighted in bold.

Table 3.

Comparison between the group with high and low NARS scores.

This evaluation was conducted as a left-tailed test. To clarify, let F(u) and G(u) be the cumulative distribution functions of the distributions underlying x, an answer from high NARS people, and y, an answer from low NARS people, respectively. The alternative hypothesis is that the distribution underlying x is stochastically less than the distribution underlying y, i.e., F(u) > G(u) for all u. Thus, people who have less negative attitudes toward robots tended to perceive robot emotion with higher pleasure, arousal and dominance.

It was difficult to draw clear-cut conclusions about the relationship between high and low Big Five personality factors and the perception of the robot’s emotion. The observed correlations were limited because the robot’s emotional expressions were not very different from each other, as shown in Figure 13.

8. Discussion

Prior research has shown that motion features such as kinetic energy, jerkiness, and spatial extent affect humans’ perception of emotions. Our approach seeks to change these elements by modulating the emotional parameters (PAD). The relationship between the perceived emotion and the intensity of each movement element follows previous research, as described in Section 5.2.

The movements executed in the simulation environment were highly variable, whereas those that were implemented in the actual robot were relatively unvarying. The gap between the implementation and the simulation was due to safety concerns.

The Nextage-Open is capable of conducting industrial operations with high control accuracy. Therefore, it is equipped with heavy/powerful motors. The actor’s safety was our primary concern, so in the experiment, we limited the range of movements and joint velocity following the regulations based on the ISO/TS 15066 and ISO 10218-1 [33]. In addition, the joint angular jerkiness was limited to prevent any damage to the robot system.

According to ISO/TS 15066, the contact between a robot and a human is classified into two types: transient contact and quasi-static contact. Transient contact is defined as physical contact between the robot and human that lasts less than 0.5 s, i.e., a collision. Quasi-static contact is defined as contact that lasts longer than 0.5 s and is classified as a “pinching” accident.

ISO/TS 15066 also describes the maximum allowable pressure and force, which indicate how much load the human body can take before becoming injured. For example, in quasi-static contact, forces of up to 150 N on the neck, 210 N on the back, and 140 N on the fingers are permissible. However, these numbers are simply indicators of when injury occurs, so they cannot be used as safety measures for motion generation. To avoid extreme cases, ISO/TS 15066 provides safety design measures such as limits on speed, force, and torque. Table 4, which is from the same ISO document, provides a more realistic guide for ensuring the safety of cooperative robots.

Table 4.

Allowable energy transfer for each body part from ISO/TS 15066 [33].

The requirements specified in ISO/TS 15066 and ISO 10218-1 state that the moving speed of an industrial robot that can be used as a cooperative robot must not exceed 250 mm/s. When applied to the robot used in our study (torso–right arm: 12 kg), the maximum kinetic energy that can be exerted is roughly 0.375 J. Therefore, an industrial robot is required to express emotional behavior in the range of 0–0.375 J. Considering that the maximum value of kinetic energy in the implemented motion was 0.72 J, it is clear that our method implements almost the maximum amount of motion that can be realized safely. The presented results show that emotional movements in the range of 0–0.375 J are difficult for people to discriminate. In other words, current industrial robots must exaggerate their movements to a dangerous degree in order to express distinct emotions in a comprehensible manner. Thus, collision avoidance techniques and soft robots are needed to deal with this problem. In order for robots to move freely in human society, it is necessary to take human safety into consideration and abide by relevant laws. In light of this, soft robots and robots made of light materials will have an advantage in entering human society.
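The 0.375 figure follows from the kinetic energy formula E = ½mv², treating the 12 kg torso and right arm as a single lumped mass moving at the 250 mm/s limit. This lumped-mass reading is an assumption made here to reproduce the rough estimate in the text; the function and constant names are hypothetical.

```python
MASS_KG = 12.0           # torso and right arm, as stated in the text
SPEED_LIMIT_M_S = 0.250  # 250 mm/s speed limit converted to m/s


def kinetic_energy(mass_kg, speed_m_s):
    """Translational kinetic energy in joules: E = 0.5 * m * v^2."""
    return 0.5 * mass_kg * speed_m_s ** 2


max_energy_j = kinetic_energy(MASS_KG, SPEED_LIMIT_M_S)
print(max_energy_j)  # 0.375
```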

Another reason that participants were unable to distinguish between emotional motions may be that the conversion from PAD parameters to motion feature parameters was not appropriate. In particular, there is room for improvement in the conversion of the parameter that is responsible for the amplitude of the motion in the null space. As shown in Figure 13, only “Hostile” had a noticeable change in the movement component. This is because, when converting PAD parameters to motion feature parameters through Equation (9), the parameters took values in the range of 0 to 0.5 except in the case of “Hostile”. The parameters were not scattered between 0 and 1 but clustered between 0 and 0.5, resulting in similar movement elements for all behaviors except “Hostile”.

As shown in Table 1, for all three scenarios, the majority correctly estimated the “arousal” level of “bored”, “hostile”, and “relaxed”. However, most of the items in the table have a standard deviation of more than 0.4, which suggests that participants had difficulty discerning emotions.

Between the responses when viewing the robot-only video (PSNH) and when viewing the HRI video (PS, NS), participants were relatively more confident in their estimation of emotion when viewing the HRI. This suggests that the context of the interaction greatly affected the recognition of emotion in the HRI, especially if it was difficult to find differences in emotional behavior. As shown in Table 2, the positive scenarios tended to be perceived with higher pleasure and dominance than the negative ones. The participants who observed the NS provided the following comments referring to the context of the interaction:

“I felt a little sorry for the robots that always got turned down.”

“It was easy to get a negative impression because I felt sad that the robot’s offer was not accepted.”

“The way the robot looked at people when they approached, and the way it seemed to be disappointed after being rejected were very human-like.”

In addition to the context of the interactions, prejudice toward robots also altered the emotional readings. Table 3 shows that those with high NARS were more likely to interpret the robot emotion PAD as low.

These results suggest that emotion judgments are made based on the emotion expression behavior, the content of the interaction (story), and the observer’s impression of the robot. In this case, because it was difficult to read emotions from the robot’s movements, the observers either rationalized the emotions from the scenario or perceived low negative emotions by unconsciously projecting their own prejudices toward the robot.

There were eight comments that referred to the robot’s facial expressions and facial movements, which were not used as emotional expression movements in this study. Those comments indicated that facial expressions and gaze (facial orientation) play an important role in conveying emotional information for humanoid robots. These elements are complementary, so that if one is missing, the others compensate for it, and people then try to make sense of the emotion.

9. Conclusions

Sharing emotions between humans and robots is one of the most important elements of human–robot interaction (HRI). Through sharing emotion, people may find HRI socially valuable, and robots would be able to work at full capacity without aversion or disturbance from people.

We introduced a method of conveying emotions through a robot arm while it simultaneously executes other tasks. Our method utilizes a null space control scheme to exploit the kinematic redundancy of a robot manipulator. The null space control is for adding sub-tasks (additional motion) to the robot without affecting the task at the end-effector. We have also presented an effective motion generating method that combines manipulability ellipsoid and null space control. This method enables the robot to continue to move within the redundant degrees of freedom. Prior research has shown that motion features such as kinetic energy, jerkiness, and spatial extent affect humans’ perception of emotion. Thus, our approach seeks to adjust these features through modulating the emotional parameters (PAD).

Nextage-Open was used to implement the proposed method, and the HRI was recorded on video. Eight different emotions (Bored, Anxious, Dependent, Disdainful, Relaxed, Hostile, Exuberant, and Intermediate) were expressed for each interaction. Then, a questionnaire containing the Self-Assessment Manikin (SAM) scale, the Negative Attitude Toward Robots Scale (NARS), and the Big Five personality test was conducted via the internet using Google Forms.

Responses were collected from 267 people, and the results suggested that even when industrial machines perform emotional behaviors within the safety standards set by ISO/TS 15066, it is difficult to provide enough variety of motion for each emotion to be distinguished. However, the analysis of the generated motions in simulation showed that the proposed method can adjust the important motion features by modulating the motion feature parameters. Furthermore, the results suggest that emotion judgments are influenced by the emotion-expressing behavior, the context of the interaction (scenario), the observer’s impression of the robot, and gaze/facial expression, and that these factors compensate for each other. In our study, because the robot’s emotional motion was difficult to distinguish, the observers either rationalized the emotions from the scenario or perceived low PAD emotions (negative, less active, and passive) by unconsciously projecting their own prejudices toward the robot.

Based on the results of the motion generation and user test in this study, we define the next research direction, in which two specific points should be verified. The first is to update the mapping in the conversion from emotion (PAD parameters) to affective motion elements (motion feature parameters). In order to effectively map the findings from the cognitive engineering and kansei engineering fields, the mapping presented in Figure 6 could be improved so that the degree of each motion feature changes more adequately as the emotion (PAD parameters) changes. The second is the implementation of the presented method using smaller and safer robots. In this study, a large and powerful industrial robot was used, which resulted in the speed of movement being greatly limited for safety reasons. A smaller robot arm, by contrast, would be able to safely realize emotionally expressive movements with rich variations, which would be more visually stimulating for people.

Finally, if a robot unilaterally expresses emotions while ignoring the person’s emotional state, there will be no empathy between the two. A system that observes the person’s reactions and expresses emotions accordingly (human-in-the-loop) is also necessary and will be our next research direction. Moreover, because some robotic arms (e.g., the Kinova Jaco arm) do not have practical joint position limits, another interesting direction for this research would be to explore the possibility of expressing emotions through non-human-like movements.

Author Contributions

Conceptualization, S.H. and G.V.; methodology, S.H. and G.V.; software, S.H.; validation, S.H. and G.V.; formal analysis, S.H.; investigation, S.H.; resources, S.H. and G.V.; data curation, S.H.; writing—original draft preparation, S.H.; writing—review and editing, G.V.; visualization, S.H.; supervision, G.V.; project administration, G.V.; All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

This research passed the ethical review on 11 August 2021 based on Article 10 of the “Regulations about the Conduct of Research Involving Human participants” of Tokyo University of Agriculture and Technology (Approval No. 210703-0319). The review was completed by the “Ethics Review Board for Research Involving Human participants” of Tokyo University of Agriculture and Technology.

Informed Consent Statement

Informed consent was acquired at the beginning of the Google Form by providing information on the content and background of the research and the possible risks of participation. In addition, participants were given the chance to freely comment on their impressions at the end of the questionnaire. Respondent IDs were anonymized so that individuals could not be identified. The actual questionnaire is available through the author’s GitHub repository (the URL is in Appendix A).

Data Availability Statement

Not applicable.

Acknowledgments

This experiment was conducted with the support of Kawada Robotics Corp. The authors would especially like to thank Yuichiro Kawazumi, Victor Leve, and Naoya Rikiishi for providing the knowledge and the opportunity to conduct the experiment.

Conflicts of Interest

The authors declare no conflict of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
|---|---|
| HRI | Human–Robot Interaction |
| PAD | Pleasure–Arousal–Dominance |
| ISO | International Organization for Standardization |
| DoF | Degree of Freedom |
| SVD | Singular Value Decomposition |
| PS | Positive Scenario |
| NS | Negative Scenario |
| PSNH | Positive Scenario with No Human |
| SAM | Self-Assessment Manikin |
| NARS | Negative Attitude Toward Robots Scale |

Appendix A. Actual Questionnaire [53]

https://github.com/shohei1536/Appendix-IJSR (accessed on 31 August 2022).

Appendix B. Mapping Function fv

References

- Smith, A.; Anderson, M. Automation in Everyday Life. Pew Research Center. 4 October 2017. Available online: https://www.pewresearch.org/internet/2017/10/04/automation-in-everyday-life/ (accessed on 7 May 2022).

- Knight, H. Expressive Motion for Low Degree-of-Freedom Robots. Ph.D. Thesis, Carnegie Mellon University, Pittsburgh, PA, USA, 2016.

- Beck, A.; Cañamero, L.; Hiolle, A.; Damiano, L.; Cosi, P.; Tesser, F.; Sommavilla, G. Interpretation of Emotional Body Language Displayed by a Humanoid Robot: A Case Study with Children. Int. J. Soc. Robot. 2013, 5, 325–334.

- Claret, J.; Venture, G.; Basañez, L. Exploiting the Robot Kinematic Redundancy for Emotion Conveyance to Humans as a Lower Priority Task. Int. J. Soc. Robot. 2017, 9, 277–292.

- Lim, A.; Ogata, T.; Okuno, G. Converting emotional voice to motion for robot telepresence. In Proceedings of the 2011 11th IEEE-RAS International Conference on Humanoid Robots, Bled, Slovenia, 26–28 October 2011; pp. 472–479.

- Juang, M. Next-Gen Robots: The Latest Victims of Workplace Abuse. CNBC. 9 August 2017. Available online: https://www.cnbc.com/2017/08/09/as-robots-enter-daily-life-and-workplace-some-face-abuse.html (accessed on 7 May 2022).

- Simon, M. R.I.P., Anki: Yet Another Home Robotics Company Powers Down. WIRED. 29 April 2019. Available online: https://www.wired.com/story/rip-anki-yet-another-home-robotics-company-powers-down/ (accessed on 9 May 2022).

- Franka Emika. Available online: https://www.franka.de/ (accessed on 30 September 2022).

- Kulić, D.; Croft, E. Physiological and subjective responses to articulated robot motion. Robotica 2007, 25, 13–27.

- Khoramshahi, M.; Laurens, A.; Triquet, T.; Billard, A. From Human Physical Interaction To Online Motion Adaptation Using Parameterized Dynamical Systems. In Proceedings of the 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, Spain, 1–5 October 2018; pp. 1361–1366.

- Agravante, D.; Cherubini, A.; Bussy, A.; Gergondet, P.; Kheddar, A. Collaborative human-humanoid carrying using vision and haptic sensing. In Proceedings of the 2014 IEEE International Conference on Robotics and Automation (ICRA), Hong Kong, China, 31 May–7 June 2014; pp. 607–612.

- Erden, M.S.; Tomiyama, T. Human-Intent Detection and Physically Interactive Control of a Robot without Force Sensors. IEEE Trans. Robot. 2010, 26, 370–382.

- Astrid, W.; Regina, B.; Manfred, T.; Eiichi, Y. Addressing user experience and societal impact in a user study with a humanoid robot. In Proceedings of the 23rd Convention of the Society for the Study of Artificial Intelligence and Simulation of Behaviour, AISB 2009, Edinburgh, UK, 6–9 April 2009; pp. 150–157.

- Ivaldi, S.; Anzalone, S.; Rousseau, W.; Sigaud, O.; Chetouani, M. Robot initiative in a team learning task increases the rhythm of interaction but not the perceived engagement. Front. Neurorobot. 2014, 8, 5.

- Kamide, H.; Kawabe, K.; Shigemi, S.; Arai, T. Anshin as a concept of subjective well-being between humans and robots in Japan. Adv. Robot. 2015, 29, 1624–1636.

- Schmidtler, J.; Bengler, K.; Dimeas, F.; Campeau-Lecours, A. A questionnaire for the evaluation of physical assistive devices (QUEAD): Testing usability and acceptance in physical human-robot interaction. In Proceedings of the 2017 IEEE International Conference on Systems, Man, and Cybernetics (SMC), Banff, AB, Canada, 5–8 October 2017; pp. 876–881.

- Björnfot, P.; Kaptelinin, V. Probing the design space of a telepresence robot gesture arm with low fidelity prototypes. In Proceedings of the 2017 ACM/IEEE International Conference on Human-Robot Interaction, Vienna, Austria, 6–9 March 2017; pp. 352–360.

- Koenemann, J.; Burget, F.; Bennewitz, M. Real-time imitation of human whole-body motions by humanoids. In Proceedings of the 2014 IEEE International Conference on Robotics and Automation (ICRA), Hong Kong, China, 31 May–7 June 2014; pp. 2806–2812.

- Saerbeck, M.; Bartneck, C. Perception of affect elicited by robot motion. In Proceedings of the 2010 5th ACM/IEEE International Conference on Human-Robot Interaction (HRI), Osaka, Japan, 2–5 March 2010; pp. 53–60.

- Russell, J. A Circumplex Model of Affect. J. Personal. Soc. Psychol. 1980, 39, 1161–1178.

- Mehrabian, A. Pleasure-Arousal-Dominance: A General Framework for Describing and Measuring Individual Differences in Temperament. J. Curr. Psychol. 1996, 14, 261–292.

- Nakagawa, K.; Shinozawa, K.; Ishiguro, H.; Akimoto, T.; Hagita, N. Motion modification method to control affective nuances for robots. In Proceedings of the 2009 IEEE/RSJ International Conference on Intelligent Robots and Systems, St. Louis, MO, USA, 11–15 October 2009; pp. 5003–5008.

- Corke, P. Dynamics and Control. In Robotics, Vision and Control: Fundamental Algorithms In MATLAB® Second, Completely Revised, Extended And Updated Edition; Springer International Publishing: Cham, Switzerland, 2017; pp. 251–281.

- Liegeois, A. Automatic Supervisory Control of the Configuration and Behavior of Multibody Mechanisms. IEEE Trans. Syst. Man Cybern. 1977, 7, 868–871.

- Klein, C.; Huang, C. Review of pseudoinverse control for use with kinematically redundant manipulators. IEEE Trans. Syst. Man Cybern. 1983, SMC-13, 245–250.

- Dubey, R.; Euler, J.; Babcock, S. An efficient gradient projection optimization scheme for a seven-degree-of-freedom redundant robot with spherical wrist. In Proceedings of the 1988 IEEE International Conference on Robotics and Automation, Philadelphia, PA, USA, 24–29 April 1988; Volume 1, pp. 28–36.

- Nemec, B.; Zlajpah, L. Null space velocity control with dynamically consistent pseudo-inverse. Robotica 2000, 18, 513–518.

- Maciejewski, A.; Klein, C. Obstacle Avoidance for Kinematically Redundant Manipulators in Dynamically Varying Environments. Int. J. Robot. Res. 1985, 4, 109–117.

- Hollerbach, J.; Suh, K. Redundancy resolution of manipulators through torque optimization. IEEE J. Robot. Autom. 1987, 3, 308–316.

- Baerlocher, P.; Boulic, R. Task-priority formulations for the kinematic control of highly redundant articulated structures. In Proceedings of the 1998 IEEE/RSJ International Conference on Intelligent Robots and Systems, Innovations in Theory, Practice and Applications (Cat. No.98CH36190), Victoria, BC, Canada, 17 October 1998; Volume 1, pp. 323–329.

- Baerlocher, P.; Boulic, R. An inverse kinematic architecture enforcing an arbitrary number of strict priority levels. Vis. Comput. 2004, 20, 402–417.

- Peng, Z.; Adachi, N. Compliant motion control of kinematically redundant manipulators. IEEE Trans. Robot. Autom. 1993, 9, 831–836.

- Kirschner, R.; Mansfeld, N.; Abdolshah, S.; Haddadin, S. ISO/TS 15066: How Different Interpretations Affect Risk Assessment. arXiv 2022, arXiv:2203.02706.

- Khalil, W.; Creusot, D. SYMORO: A system for the symbolic modelling of robots. Robotica 1997, 15, 153–161.

- Nakamura, Y.; Hanafusa, H. Inverse Kinematic Solutions with Singularity Robustness for Robot Manipulator Control. J. Dyn. Syst. Meas. Control. 1986, 108, 163–171.

- LBR iiwa. Available online: https://www.kuka.com/ja-jp/%E8%A3%BD%E5%93%81%E3%83%BB%E3%82%B5%E3%83%BC%E3%83%93%E3%82%B9/%E3%83%AD%E3%83%9C%E3%83%83%E3%83%88%E3%82%B7%E3%82%B9%E3%83%86%E3%83%A0/%E7%94%A3%E6%A5%AD%E7%94%A8%E3%83%AD%E3%83%9C%E3%83%83%E3%83%88/lbr-iiwa (accessed on 30 September 2022).

- Glowinski, D.; Dael, N.; Camurri, A.; Volpe, G.; Mortillaro, M.; Scherer, K. Toward a Minimal Representation of Affective Gestures. IEEE Trans. Affect. Comput. 2011, 2, 106–118.

- Wallbott, G.; Scherer, R. Cues and channels in emotion recognition. J. Personal. Soc. Psychol. 1986, 51, 690–699.

- Montepare, M.; Koff, E.; Zaitchik, D.; Albert, S. The Use of Body Movements and Gestures as Cues to Emotions in Younger and Older Adults. J. Nonverbal Behav. 1999, 23, 133–152.

- Cordier, P.; Mendés, F.; Pailhous, J.; Bolon, P. Entropy as a global variable of the learning process. Hum. Mov. Sci. 1994, 13, 745–763.