On the Construction of a Two-Step Sixth-Order Scheme to Find the Drazin Generalized Inverse

Abstract

1. Preliminary Remarks

- Introduction of an iterative approach for computing the Drazin inverse.

- Improvement in CPU time compared to well-known existing methods.

- Analytical investigation of the method’s convergence behavior.

- Superior computational efficiency due to reduced computational complexity.
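One way to make the efficiency claim concrete is the efficiency index often used for matrix iterations: EI = p^(1/θ), where p is the convergence order and θ is the number of matrix–matrix products per iteration. The sketch below is only an illustration under that assumption. It tabulates EI for classical schemes whose costs are standard: Schulz, Chebyshev, and the order-six hyperpower method evaluated in two different ways. The product count of the proposed two-step scheme is not stated in this section, so that scheme is deliberately left out.

```python
import math

# Classical iterations for inverse-type problems: (order p, products theta).
# Illustrative values only; the proposed two-step scheme is NOT listed here.
METHODS = {
    "Schulz, X(2I - AX)": (2, 2),
    "Chebyshev, X(3I - AX(3I - AX))": (3, 3),
    "Hyperpower order 6, Horner form": (6, 6),
    "Hyperpower order 6, factorized": (6, 5),
}


def efficiency_index(p, theta):
    """EI = p**(1/theta): average growth of the convergence order per product."""
    return p ** (1.0 / theta)


for name, (p, theta) in METHODS.items():
    print(f"{name:34s} p={p} theta={theta} EI={efficiency_index(p, theta):.4f}")
```

The same order-six recursion becomes more efficient (6^(1/5) ≈ 1.431 versus 6^(1/6) ≈ 1.348) purely because it needs one matrix product fewer. This is the kind of saving that reduced computational complexity refers to.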

2. Associated Studies

3. Development of a Novel Iterative Approach

4. Theoretical Parts

- The convergence proof shows that the method converges with order six: near the solution, each iteration reduces the error to roughly the sixth power of the previous error (up to a constant factor), which ensures a rapid approximation of the Drazin inverse.

- A key feature is reduced computational effort: fewer matrix–matrix multiplications are needed, while high accuracy is maintained.

- The choice of an appropriate initial value plays a crucial role in ensuring the method’s reliability.

- Together, these results explain why the proposed method outperforms existing iterative schemes in both speed and efficiency, especially when the matrix 2-norm must be computed at every step.
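For reference, let k = ind(A) be the smallest nonnegative integer with rank(A^(k+1)) = rank(A^k). The Drazin inverse A^D is then the unique X satisfying A^(k+1) X = A^k, XAX = X and AX = XA.

The NumPy sketch below illustrates the roles of convergence order and initial value discussed above. It is not the authors' two-step scheme. As a stand-in it uses the classical sixth-order hyperpower iteration X ← X(I + R + ... + R^5), with R = I − AX. The iteration is evaluated in a factorized form that costs five matrix–matrix products and is started from X_0 = αA^l with l ≥ ind(A).

The following are all assumptions for illustration:
- the scaling α = 1/‖A^(l+1)‖_2;
- the tolerance;
- the 4×4 test matrix, which is built so that A^D is known exactly.

```python
import numpy as np


def hyperpower6_step(A, X):
    """One step of the classical order-6 hyperpower iteration
    X <- X (I + R + R^2 + R^3 + R^4 + R^5), with R = I - A X.
    The factorization (I + R)(I + R^2 (I + R^2)) needs 5 matrix-matrix
    products per step instead of the 6 used by plain Horner evaluation."""
    I = np.eye(A.shape[0])
    R = I - A @ X               # product 1
    R2 = R @ R                  # product 2
    T = I + R2 @ (I + R2)       # product 3: I + R^2 + R^4
    S = (I + R) @ T             # product 4: I + R + ... + R^5
    return X @ S                # product 5


def drazin_hyperpower6(A, l, tol=1e-10, max_iter=20):
    """Approximate the Drazin inverse from X0 = alpha * A^l with l >= ind(A).

    Assumed scaling: alpha = 1 / ||A^(l+1)||_2. It guarantees
    |1 - alpha * lam^(l+1)| < 1 only when the nonzero eigenvalues of A are
    real and positive (true for the test matrix below), not in general.
    Returns the final iterate and the number of steps taken."""
    Al = np.linalg.matrix_power(A, l)
    alpha = 1.0 / np.linalg.norm(Al @ A, 2)
    X = alpha * Al
    for k in range(1, max_iter + 1):
        X_new = hyperpower6_step(A, X)
        # Stop on the relative change between consecutive iterates; rounding
        # errors on the nilpotent part grow at each step, so the loop should
        # not run far past convergence.
        if np.linalg.norm(X_new - X) <= tol * np.linalg.norm(X_new):
            return X_new, k
        X = X_new
    return X, max_iter


# Test matrix A = P B P^{-1}: B holds an invertible 2x2 block C and a nilpotent
# 2x2 block N with N^2 = 0, so ind(A) = 2 and A^D = P diag(C^{-1}, 0) P^{-1}.
C = np.array([[2.0, 1.0], [0.0, 3.0]])
B = np.zeros((4, 4))
B[:2, :2] = C
B[2, 3] = 1.0
P = np.eye(4) + np.eye(4, k=1)
Pinv = np.linalg.inv(P)
A = P @ B @ Pinv
BD = np.zeros((4, 4))
BD[:2, :2] = np.linalg.inv(C)
AD_exact = P @ BD @ Pinv

X, steps = drazin_hyperpower6(A, l=2)
print(steps, np.linalg.norm(X - AD_exact))
```

On the invertible part of A, the residual is raised to the sixth power at every step, so only a handful of iterations are needed. On the nilpotent part, rounding errors are amplified rather than damped by this kind of iteration. This is one practical reason why a sound stopping criterion matters.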

5. Efficiency Comparison

6. Experiments

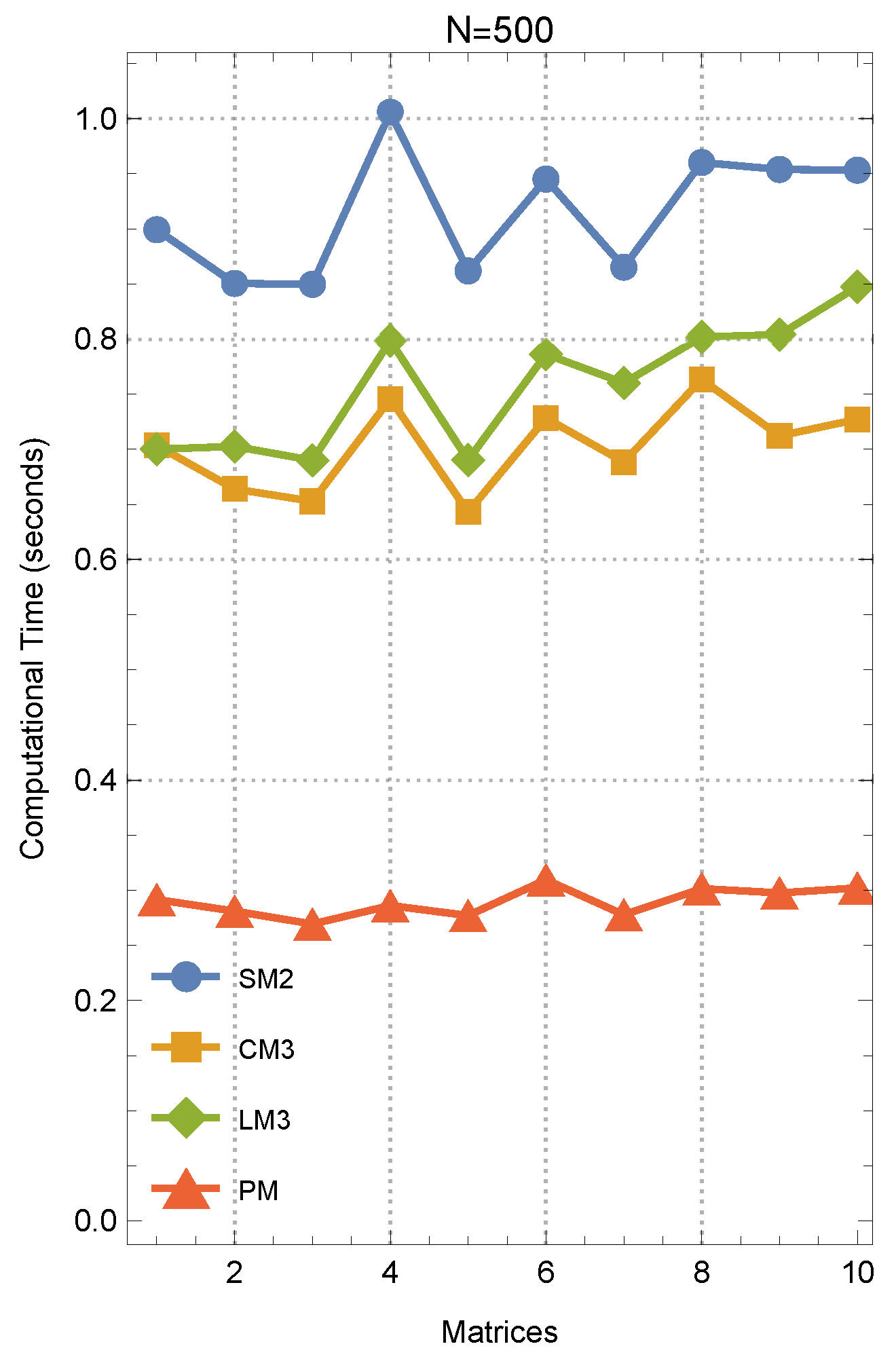

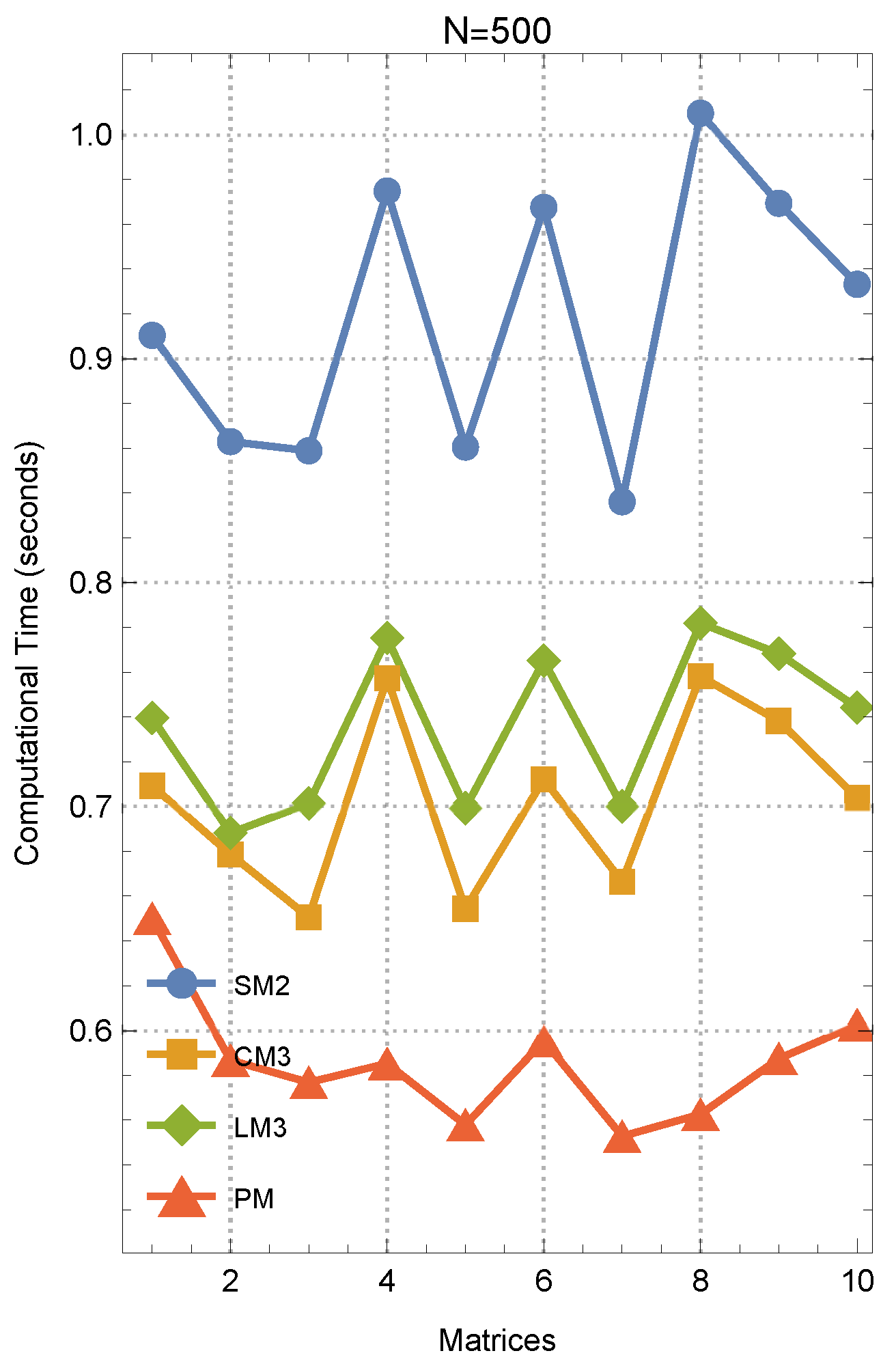

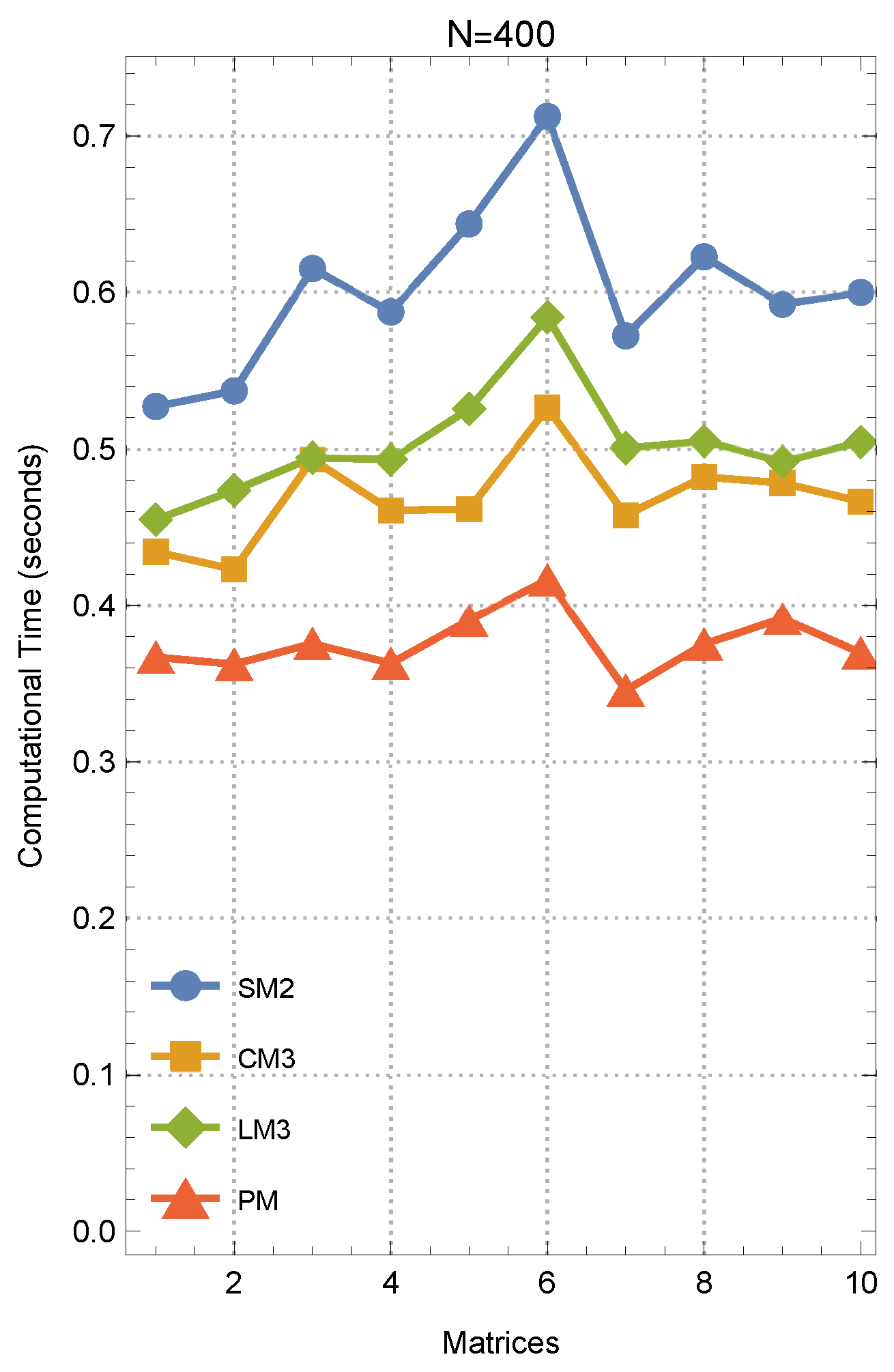

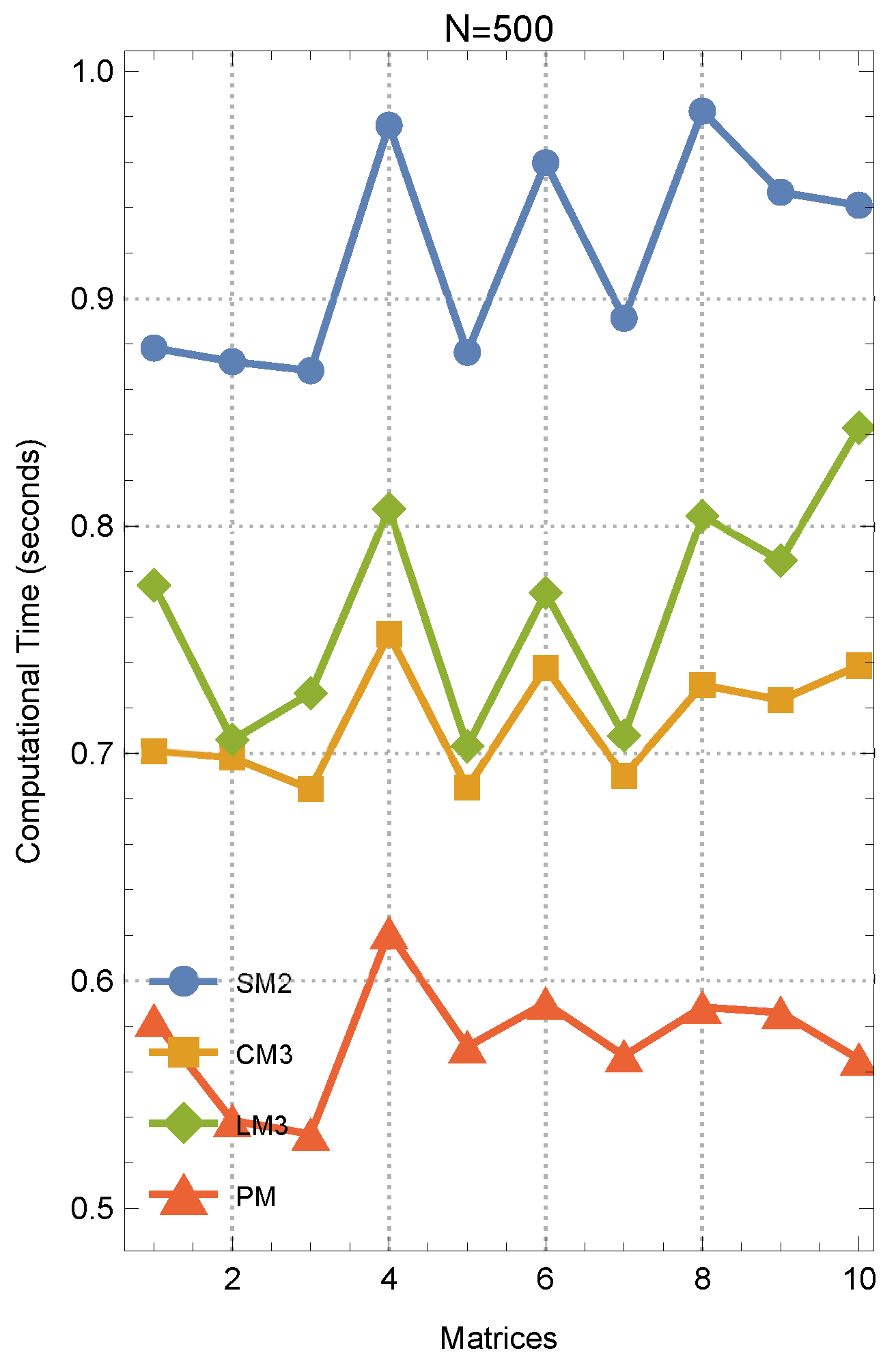

- Computation times for obtaining approximate inverses are measured in seconds.

- All methods under comparison are executed in the same computational environment, so the reported elapsed times are directly comparable.

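```mathematica
(* clear all previously defined global symbols *)
ClearAll["Global`*"]
(* fix the random seed so the test matrices are reproducible *)
SeedRandom[12];
(* n1 x n2: matrix size; number: how many test matrices; max1: presumably the maximum number of iterations *)
n1 = 500; n2 = n1; number = 10; max1 = 75;
(* A[1], ..., A[number]: dense matrices with entries uniform on [0, 1] *)
Table[A[j] = RandomReal[{0, 1}, {n1, n2}], {j, number}];
```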

- In this test, convergence is determined by the stopping criterion . Additionally, the initial value is generated by means of
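The timing protocol above can also be mimicked outside Mathematica. The NumPy sketch below times two classical iterations on the same random matrix in the same process: the second-order Schulz method and the factorized sixth-order hyperpower method, used here only as stand-ins.

The following are assumptions, not the choices made in the article:
- the stopping rule (relative change of consecutive iterates at most 1e-8);
- the initial value X_0 = A^T/(‖A‖_1‖A‖_∞);
- the smaller size n = 200.

NumPy's generator also produces different matrices from SeedRandom[12]. A dense random matrix is nonsingular with probability one, so its Drazin inverse coincides with the ordinary inverse.

```python
import time
import numpy as np


def schulz_step(A, X):
    """Second-order Schulz step X (2I - A X): 2 matrix-matrix products."""
    return X @ (2.0 * np.eye(A.shape[0]) - A @ X)


def hyperpower6_step(A, X):
    """Order-6 hyperpower step X (I + R)(I + R^2 (I + R^2)), R = I - A X:
    5 matrix-matrix products."""
    I = np.eye(A.shape[0])
    R = I - A @ X
    R2 = R @ R
    return X @ ((I + R) @ (I + R2 @ (I + R2)))


def run(step, A, X0, tol=1e-8, max_iter=200):
    """Iterate until the relative change of consecutive iterates is <= tol.
    Returns (approximate inverse, iterations, elapsed wall-clock seconds)."""
    start = time.perf_counter()
    X = X0
    for k in range(1, max_iter + 1):
        X_new = step(A, X)
        if np.linalg.norm(X_new - X) <= tol * np.linalg.norm(X_new):
            return X_new, k, time.perf_counter() - start
        X = X_new
    return X, max_iter, time.perf_counter() - start


rng = np.random.default_rng(12)     # fixed seed, analogous to SeedRandom[12]
n = 200                             # the article's script uses n1 = 500
A = rng.uniform(0.0, 1.0, size=(n, n))
# Assumed initial value for a nonsingular A (not necessarily the article's):
# X0 = A^T / (||A||_1 ||A||_inf), which keeps the eigenvalues of I - A X0 in [0, 1).
X0 = A.T / (np.linalg.norm(A, 1) * np.linalg.norm(A, np.inf))

results = {}
for name, step in [("Schulz", schulz_step), ("hyperpower-6", hyperpower6_step)]:
    X, iters, secs = run(step, A, X0)
    results[name] = (X, iters, secs)
    print(f"{name:12s} iterations = {iters:3d}  time = {secs:.3f} s")
```

Wall-clock times depend on the BLAS library and the hardware. Only comparisons made within one run are therefore meaningful, which is why all methods are executed in an identical environment.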

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Zhang, K.; Soleymani, F.; Shateyi, S. On the Construction of a Two-Step Sixth-Order Scheme to Find the Drazin Generalized Inverse. Axioms 2025, 14, 22. https://doi.org/10.3390/axioms14010022
