1. Introduction

The problem of finding a point in the intersection of closed convex subsets of a real Hilbert space arises in diverse areas of mathematics and the physical sciences. This problem is commonly referred to as the Convex Feasibility Problem (CFP) and has applications in disciplines such as image restoration, computed tomography and radiation therapy treatment planning; see [1]. A generalization of the CFP is the Split Feasibility Problem (SFP), which was introduced by Censor and Elfving [2] and is defined as finding a point in a nonempty closed convex set whose image under a bounded linear operator lies in another set. Mathematically, the SFP can be formulated as:

$$\text{find } x^* \in C \text{ such that } Ax^* \in Q, \tag{1}$$

where $C$ and $Q$ are nonempty closed convex subsets of $\mathbb{R}^N$ and $\mathbb{R}^M$ respectively, and $A$ is a given matrix of dimension $M \times N$.

The SFP also models inverse problems arising from phase retrieval and intensity-modulated radiation therapy [2]. Censor et al. [3] further introduced another generalization of the CFP and SFP called the Multiple Set Split Feasibility Problem (MSSFP), which is formulated as

$$\text{find } x^* \in \bigcap_{i=1}^{t} C_i \text{ such that } Ax^* \in \bigcap_{j=1}^{r} Q_j, \tag{2}$$

where $t \geq 1$ and $r \geq 1$ are given integers, $A$ is a given $M \times N$ real matrix with $A^T$ its transpose, and $\{C_i\}_{i=1}^{t}$ and $\{Q_j\}_{j=1}^{r}$ are nonempty closed convex subsets of $\mathbb{R}^N$ and $\mathbb{R}^M$ respectively. Observe that when $t = r = 1$, the MSSFP reduces to the SFP (1). In this paper, we focus on the MSSFP in a unified framework. We assume throughout that the solution set of (2) is nonempty, i.e., that the MSSFP is consistent. It is well known that the MSSFP is equivalent to the following minimization problem:

where $P_C$ and $P_Q$ are the orthogonal projections onto $C$ and $Q$ respectively. For solving (3), Censor et al. [3] defined a proximity function for measuring the distance of a point to all sets as follows:

where

and

j respectively, and

It is easy to see that

Censor et al. [3] also introduced the following projection method for solving the MSSFP:

where $s$ is a positive scalar. They further proved the weak convergence of (5) under the condition that the stepsize $s$ satisfies

where $L$ is the Lipschitz constant of the gradient of the proximity function. A major drawback of (5) is that the algorithm uses a fixed stepsize which is restricted by this Lipschitz constant (which in turn depends on the largest eigenvalue of the matrix $A^T A$). Computing the largest eigenvalue of $A^T A$ is usually difficult, and a conservative estimate of it results in slow convergence. Moreover, the projections onto the sets $C$ and $Q$ are often difficult to compute when the sets are not simple, which further complicates the implementation of (5). Several efforts have been made to find appropriate modifications of (5) that avoid these drawbacks in infinite dimensional real Hilbert spaces. For instance, Zhao and Yang [4] introduced a new projection method in which the stepsize $s$ is selected via an Armijo line search technique for solving the MSSFP. However, this line search process requires extra inner iterations to obtain a suitable stepsize. The authors in [5] also introduced a self-adaptive projection method which computes the stepsize directly without any inner iteration. In addition, López et al. [6] introduced a relaxed projection method with a fixed stepsize and proved a weak convergence result for solving the MSSFP. He et al. [7] further combined a Halpern iterative scheme with the relaxed projection method and proved a strong convergence result for solving the MSSFP. Recently, Suantai et al. [8] introduced an inertial relaxed projection method with a self-adaptive stepsize for solving the MSSFP. Also, Wen et al. [9] introduced a cyclic-simultaneous projection method and proved a weak convergence result for solving the MSSFP.
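To make the cost of a fixed stepsize concrete, the following is a minimal sketch of a gradient-projection iteration on a small single-set instance (an SFP). It is not the authors' code: the box $C$, the ball $Q$, the matrix `A`, and all function names are illustrative assumptions, and the largest eigenvalue of $A^T A$ is estimated by power iteration only to obtain an admissible stepsize.

```python
import math

def matvec(A, x):
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def rmatvec(A, y):
    # Computes A^T y without forming the transpose.
    return [sum(A[i][j] * y[i] for i in range(len(A))) for j in range(len(A[0]))]

def norm(v):
    return math.sqrt(sum(t * t for t in v))

def proj_box(x, lo=-1.0, hi=1.0):
    # Projection onto the box C = [lo, hi]^n (assumed set).
    return [min(max(t, lo), hi) for t in x]

def proj_ball(y, center, radius):
    # Projection onto the ball Q = {y : ||y - center|| <= radius} (assumed set).
    d = [a - c for a, c in zip(y, center)]
    n = norm(d)
    if n <= radius:
        return list(y)
    return [c + radius * t / n for c, t in zip(center, d)]

def largest_eig_AtA(A, iters=200):
    # Power iteration on A^T A; this is the extra work a fixed stepsize needs.
    v = [1.0] * len(A[0])
    for _ in range(iters):
        w = rmatvec(A, matvec(A, v))
        n = norm(w)
        v = [t / n for t in w]
    return norm(rmatvec(A, matvec(A, v)))

def residual(A, x, center, radius):
    # Distance from Ax to Q.
    y = matvec(A, x)
    return norm([a - b for a, b in zip(y, proj_ball(y, center, radius))])

def cq_fixed_step(A, x0, center, radius, iters=500):
    s = 1.0 / largest_eig_AtA(A)  # fixed stepsize tied to the largest eigenvalue
    x = proj_box(x0)
    for _ in range(iters):
        y = matvec(A, x)
        r = [a - b for a, b in zip(y, proj_ball(y, center, radius))]
        g = rmatvec(A, r)  # gradient of 0.5 * dist(Ax, Q)^2
        x = proj_box([xi - s * gi for xi, gi in zip(x, g)])
    return x

A = [[2.0, -1.0, 0.5], [0.0, 1.0, 3.0]]
center = matvec(A, [0.5, -0.5, 0.25])  # guarantees the instance is consistent
x0 = [1.0, 1.0, 1.0]
x = cq_fixed_step(A, x0, center, radius=0.1)
```

Every run pays for the eigenvalue estimate before the first iteration, which is the overhead that line search and self-adaptive stepsizes are designed to avoid.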

Constructing iterative schemes with a faster rate of convergence is usually of great interest. The inertial-type algorithm, which originated from the equation for an oscillator with damping and a conservative restoring force, has been an important tool for improving the performance of algorithms and has some nice convergence characteristics. In general, the main feature of inertial-type algorithms is that the previous iterates are used to construct the next one. Since the introduction of the inertial-type algorithm, many authors have combined the inertial term with different kinds of iterative algorithms, including the Mann, Krasnoselskii, Halpern and viscosity methods, to mention a few, to approximate solutions of fixed point problems and optimization problems. Most authors proved weak convergence results, while only a few proved strong convergence results.

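Polyak [10] was the first to propose the heavy ball method. Alvarez and Attouch [11] extended it to the setting of a general maximal monotone operator using the Proximal Point Algorithm (PPA); the resulting method, called the inertial PPA, is of the form:

$$\begin{cases} w_n = x_n + \theta_n (x_n - x_{n-1}), \\ x_{n+1} = (I + \lambda_n B)^{-1} w_n, \quad n \geq 1. \end{cases} \tag{6}$$

They proved that if $\{\lambda_n\}$ is non-decreasing and $\{\theta_n\} \subset [0, 1)$ with

$$\sum_{n=1}^{\infty} \theta_n \|x_n - x_{n-1}\|^2 < \infty, \tag{7}$$

then Algorithm (6) converges weakly to a zero of the maximal monotone operator $B$. More precisely, condition (7) is true for $\theta_n < \frac{1}{3}$. Here $\theta_n$ is an extrapolation factor. Other inertial-type algorithms can be found in, for instance, [12,13,14,15,16,17].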
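As an illustration of the inertial idea, here is a minimal sketch of an inertial proximal point iteration, in which the resolvent is applied to a point extrapolated from the two previous iterates. The operator $B(x) = x - b$ (whose only zero is $b$), the constant parameters `lam` and `theta`, and the function name are assumptions chosen so that the resolvent has a closed form; this is not taken from [11].

```python
def inertial_ppa(b, x0, lam=1.0, theta=0.3, iters=60):
    """Inertial proximal point iteration for B(x) = x - b (assumed toy operator)."""
    x_prev, x = list(x0), list(x0)
    for _ in range(iters):
        # Extrapolated point: w = x_n + theta * (x_n - x_{n-1}), with theta < 1/3.
        w = [xi + theta * (xi - pi) for xi, pi in zip(x, x_prev)]
        # Resolvent of B at w: solve y + lam * (y - b) = w for y.
        y = [(wi + lam * bi) / (1.0 + lam) for wi, bi in zip(w, b)]
        x_prev, x = x, y
    return x

b = [2.0, -1.0]
x = inertial_ppa(b, x0=[10.0, 10.0])
```

Setting `theta=0.0` recovers the plain proximal point iteration, which makes it easy to compare the two on the same problem.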

Motivated by the works of Wen et al. [9] and López et al. [6], in this paper we introduce a general viscosity relaxed projection method with an inertial process for solving the MSSFP together with the fixed point problem of strictly pseudo-nonspreading mappings in real Hilbert spaces. The stepsize of our algorithm is selected self-adaptively in each iteration, and its convergence does not require a prior estimate of the matrix norm. Moreover, we define some sublevel sets whose projections can be calculated explicitly using the formula in [18]. The general viscosity approximation method guarantees strong convergence of the sequences generated by the algorithm. This improves the weak convergence results proved in [6,9,19]. We further provide some numerical experiments to illustrate the performance and accuracy of our algorithm. Our results improve and complement the results of [6,7,8,9,19,20,21,22,23,24] and many other results in this direction.
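To illustrate what a self-adaptive stepsize can look like, the sketch below uses a rule that is common in the literature on relaxed projection methods: $\tau_n = \rho f(x_n) / \|\nabla f(x_n)\|^2$ with $0 < \rho < 4$, where $f(x) = \frac{1}{2}\|Ax - P_Q(Ax)\|^2$. This rule is an assumption made for illustration and is not claimed to be the stepsize used in the algorithms of this paper; the sets, the matrix and all names are likewise hypothetical.

```python
import math

def matvec(A, x):
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def rmatvec(A, y):
    # Computes A^T y.
    return [sum(A[i][j] * y[i] for i in range(len(A))) for j in range(len(A[0]))]

def norm(v):
    return math.sqrt(sum(t * t for t in v))

def proj_box(x, lo=-1.0, hi=1.0):
    return [min(max(t, lo), hi) for t in x]

def proj_ball(y, center, radius):
    d = [a - c for a, c in zip(y, center)]
    n = norm(d)
    if n <= radius:
        return list(y)
    return [c + radius * t / n for c, t in zip(center, d)]

def self_adaptive_sfp(A, x0, center, radius, rho=1.0, iters=300):
    x = proj_box(x0)
    for _ in range(iters):
        y = matvec(A, x)
        r = [a - b for a, b in zip(y, proj_ball(y, center, radius))]
        g = rmatvec(A, r)                  # gradient of f at x
        g2 = sum(t * t for t in g)
        if g2 == 0.0:                      # gradient vanishes: stop
            break
        f = 0.5 * sum(t * t for t in r)
        tau = rho * f / g2                 # stepsize from current data only
        x = proj_box([xi - tau * gi for xi, gi in zip(x, g)])
    return x

A = [[2.0, -1.0, 0.5], [0.0, 1.0, 3.0]]
z = [0.5, -0.5, 0.25]                      # a known solution used to build the instance
center = matvec(A, z)
x0 = [1.0, 1.0, 1.0]
x = self_adaptive_sfp(A, x0, center, radius=0.1)
```

No eigenvalue or norm of `A` appears anywhere in the loop; the stepsize is recomputed from the current residual and gradient at every iteration.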

2. Preliminaries

We state some known and useful results which will be needed in the proof of our main theorem. In the sequel, we denote strong and weak convergence by “→” and “⇀”, respectively.

Let $C$ be a nonempty closed convex subset of a real Hilbert space $H$ with inner product $\langle \cdot, \cdot \rangle$ and norm $\|\cdot\|$. Let $S : C \to C$ be a nonlinear mapping and $F(S)$ be the set of all fixed points of $S$.

A mapping is called

quasi-nonexpansive, if

is nonempty, and

$k$-strictly pseudo-nonspreading in the sense of Browder-Petryshyn [26], if there exists $k \in [0, 1)$ such that

Remark 1. - (a)

If is a nonspreading mapping with , then S is quasi-nonexpansive and is closed and convex.

- (b)

It is also clear that every nonspreading mapping is $k$-strictly pseudo-nonspreading with $k = 0$, but the converse is not true; see Example 3 in [27].

Lemma 1. [27] Let be a k-strictly pseudo-nonspreading mapping with . Denote , where , then - (a)

- (b)

the following inequality holds: - (c)

is a quasi-nonexpansive mapping.

Lemma 2. [28] Let be nonempty, closed and convex set. Then, and - 1.

- 2.

- 3.

Lemma 3. [29] Let H be a real Hilbert space and be a bounded sequence in H. For such that , the following identity holds:

More so, from Lemma 3, we get the following result.

Lemma 4. [30] For all the following inequality holds:

where

Lemma 5. [27] Let C be a closed convex subset of H, be a k-strictly pseudo-nonspreading mapping with . If is a sequence in C which converges weakly to p and converges strongly to q, then . In particular, if , then .

Lemma 6. [31] Let be a sequence of nonnegative real numbers, be a sequence of real numbers in with conditions and be a sequence of real numbers. Assume that If for every subsequence of satisfying the condition:

3. Main Results

In this section, we present our iterative algorithm and its convergence result.

Let and be real Hilbert spaces, C be a nonempty, closed and convex subset of a real Hilbert space H and be a sequence of -contractive self maps of H with Suppose that is uniformly convergent to for any where D is a bounded subset of C, let be a countable family of -strictly pseudo-nonspreading mapping with and where and

Before we state our algorithm, we assume that the following conditions hold:

- (A1)

The set is given by where are convex functions. Also, the set is given by are convex functions. In addition, we assume that both and are subdifferentiable on and respectively and and are bounded operators.

- (A2)

For any

and

at least one subgradient

and

can be calculated, where

and

denote the subdifferentials of

and

at

x and

y respectively, i.e.,

and

- (A3)

We set

and

as the half-spaces defined by

where

and

where

- (A4)

We define the proximity function by

where

Then the gradient of

is given by

- (A5)

The control sequences and are chosen such that

- -

- -

- -

with

- -

and
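The practical appeal of the half-spaces in (A3) is that projecting onto a half-space has a closed form. The sketch below assumes the usual relaxed construction, in which a level set $\{x : c(x) \leq 0\}$ is replaced at the current iterate $x_n$ by $\{x : c(x_n) + \langle \xi_n, x - x_n \rangle \leq 0\}$ with $\xi_n$ a subgradient of $c$ at $x_n$. The function name and the test function $c(x) = \|x\|^2 - 1$ are illustrative assumptions.

```python
def project_relaxed_halfspace(u, x_n, c_val, xi):
    """Project u onto {x : c_val + <xi, x - x_n> <= 0}.

    c_val is c(x_n) and xi is a subgradient of c at x_n (both supplied by the caller).
    """
    violation = c_val + sum(g * (a - b) for g, a, b in zip(xi, u, x_n))
    sq = sum(g * g for g in xi)
    if violation <= 0.0 or sq == 0.0:
        # u already lies in the half-space (the degenerate case xi = 0 is not treated here).
        return list(u)
    t = violation / sq
    return [a - t * g for a, g in zip(u, xi)]

# Example: c(x) = ||x||^2 - 1, linearized at x_n = (2, 0).
x_n = [2.0, 0.0]
c_val = sum(t * t for t in x_n) - 1.0   # c(x_n) = 3
xi = [2.0 * t for t in x_n]             # gradient of c at x_n, equal to (4, 0)
p = project_relaxed_halfspace([2.0, 1.0], x_n, c_val, xi)
```

Here the half-space is $\{x : x_1 \leq 1.25\}$, so the point $(2, 1)$ is moved to $(1.25, 1)$ with a single inner product and no inner optimization loop.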

We now present our algorithm as follows:

Algorithm 1: GVA

- Step 0:

Select the initial point and the sequences such that Assumption (A5) is satisfied. Set

- Step 1:

Given the $n$th iterate (i.e., ), if STOP. Otherwise, compute

where the stepsize is defined by

- Step 2:

- Step 3:

Set and return to Step 1.

First we show that the sequence generated by Algorithm 1 is bounded.

Lemma 7. Suppose the solution set and is the sequence generated by Algorithm 1. Then is bounded.

Proof. Let

and

By applying the nonexpansivity property of the projection mapping and Lemma 4, we have

Also from Lemma 2, we obtain

On substituting (

9) into (

8), we have

More so from Lemma 1, we get

Therefore from (

10) and (

11), we have

Since

is uniformly convergent on

D, it follows that

is bounded. Thus, there exists a positive constant

M, such that

. By induction, we obtain

Hence, is bounded. Consequently and are all bounded. □

We now give our main convergence theorem.

Theorem 1. Suppose that and Assumptions (A1)–(A5) hold. Then, the sequence generated by Algorithm 1 converges strongly to a point which is the unique solution of the variational inequality

Proof. From Lemma 1 (c), Lemma 3 and (10), we have

Now, from (

10) and (

12), we have that

Putting

, in view of Lemma 6, we need to prove that

for every

of

satisfying the condition

To show this, suppose that

is a subsequence of

such that (

14) holds. Then

Now, using (

12), we have that

Furthermore, using (

12) and following the same approach as in (

15), we also have that

Since

is Lipschitz continuous and

is bounded, so

is also bounded. Hence from (

18), we can conclude that

which also implies that

Since

is bounded on bounded sets, there exists

such that

Note that

thus we get

Since

is bounded and

C is closed and convex, we can suppose that the subsequence

of

converges weakly to

We now show that

By the weak lower semicontinuity of

and boundedness of

A, we have

Then , . This implies that Next we show that

Let

. Since

and

are bounded, there exist subsequences

and

which all converge to

. Using (

10), we have that

By applying Lemma 2 (iii), we have that

By taking lim sup as

on both sides of (

22) and following the same argument as in (

15), we have that

Also, from the definition of

we have from (

19) that

Using (

23) and (

24), we obtain that

Since

is bounded on bounded sets, there exists

such that

Thus,

By the lower semicontinuity of

, we have

Hence

for

which implies that

Hence

Furthermore, we have from (

23) and (

24) that

Then, from the demiclosedness of

k-strictly pseudo-nonspreading mappings (Lemma 5), (

16) and (

25), we obtain

Therefore,

Next, we prove that

converges strongly to

. Also, from (

16), we have

More so, from (

25) and (

26), we obtain

Next, we prove that

Indeed, take a subsequence

of

such that

. Hence, we have

Since

is uniformly convergent on D, we have that

Now, from (

28) and Lemma 4 (i), we obtain

Using the Cauchy-Schwarz inequality, we have

By the boundedness of

then by (

28) and (

29), we have

Applying (

30) and Lemma 6 in (

13), we obtain that

converges to

z. This completes the proof. □

Next, we give a generalized viscosity approximation method with an inertial term, which can be regarded as a procedure for speeding up the convergence of Algorithm 1. In addition to Assumptions (A1)–(A5), we choose a sequence

with

and

Algorithm 2: IGVA

- Step 0:

Select the initial points and the sequences such that Assumption (A5) and (31) are satisfied. Set

- Step 1:

Given the $(n-1)$th and $n$th iterates (i.e., $x_{n-1}$ and $x_n$). Choose such that , where

where Compute

and

where the stepsize is defined by

- Step 2:

- Step 3:

Set and return to Step 1.

Remark 2. From (31) and Step 1, it is easy to see that Indeed, we have for each , which together with (31) implies that

Lemma 8. Suppose the solution set and is the sequence generated by Algorithm 2. Then is bounded.

Proof. Let

, using Step 1, we get

By the condition

, there exists a constant

such that

,

. Following a similar argument as in the proof of (

10) in Algorithm 1, we have

Also as in (

11), putting

then we get

Then, it follows from (

32), (

33) and (

34) that

Since

is uniformly convergent on $D$, it follows that

is bounded. Thus, there exists a positive constant

such that

. Thus, it follows by induction that

Therefore is bounded. □
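The role of the inertial factor chosen in Step 1 of Algorithm 2 can be illustrated with a rule that is widely used for inertial methods: cap the factor by $\min\{\theta, \epsilon_n / \|x_n - x_{n-1}\|\}$ when $x_n \neq x_{n-1}$, and by $\theta$ otherwise. The sketch below assumes this rule; the names `theta`, `eps_n` and `inertial_bound` are hypothetical and the exact formula of Algorithm 2 may differ.

```python
import math

def inertial_bound(x_n, x_prev, theta, eps_n):
    """Upper bound for the inertial factor under the assumed rule.

    Returns min(theta, eps_n / ||x_n - x_prev||), or theta if the iterates coincide.
    """
    gap = math.sqrt(sum((a - b) ** 2 for a, b in zip(x_n, x_prev)))
    if gap == 0.0:
        return theta
    return min(theta, eps_n / gap)

theta_bar = inertial_bound([3.0, 4.0], [0.0, 0.0], theta=0.5, eps_n=0.1)
```

With this cap, the product of the inertial factor and $\|x_n - x_{n-1}\|$ never exceeds `eps_n`, so the size of the extrapolation term is controlled entirely by the chosen sequence, independently of how the iterates behave.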

Theorem 2. Suppose that and Assumptions (A1)–(A5) with (31) hold. Then, the sequence generated by Algorithm 2 converges strongly to a point which is the unique solution of the variational inequality

Proof. Let

, then we have from Step 1 that

where

Similarly as in (

12), we get

Using Step 1, we have that

Now, from (

36), we have that

Next, we show that

Indeed, take a subsequence

of

such that

. Hence, we have

Since

is uniformly convergent on

D, we have that

Now, from (

28) and Lemma 4 (i), we obtain

By applying the Cauchy-Schwarz inequality, we get

By the boundedness of

then by (

28) and (

39), we have

On substituting (

40) in (

38), we obtain that

converges strongly to

. This completes the proof. □

4. Numerical Example

In this section, we give some numerical experiments to illustrate the performance of our algorithms in comparison with some other algorithms in the literature. All computations were carried out on a Lenovo PC with the following specifications: Intel(R) Core i7-600 CPU at 2.48 GHz, 8.0 GB RAM, and MATLAB version 9.5 (R2019b).

Example 1. We consider the MSSFP where and is given by where is a matrix. The closed convex sets of are given by

where such that where is a positive real number and for each Also, is defined by

where j is a Hessian matrix, and are vectors generated randomly. For each and the subdifferentials are given by

and

Note that the projection

where which is equivalent to the following quadratic programming problem

where The Problem (41) as well as the projection onto can effectively be solved using the Optimization Toolbox solver ‘quadprog’ in MATLAB. We defined the mapping by It is easy to see that is -strictly pseudo-nonspreading. For each and let be defined by

where . For simplicity, we consider the case for which and compare the performance of Algorithm 1, Algorithm 2 and Algorithm (42) of Wen et al. [9] using various dimensions of We choose , . Similarly, for Algorithm (42) of Wen et al. [9], we take and The initial points and the matrices are generated randomly for the following values of N and M:

Case I: and ;

Case II: and

Case III: and

Case IV: and

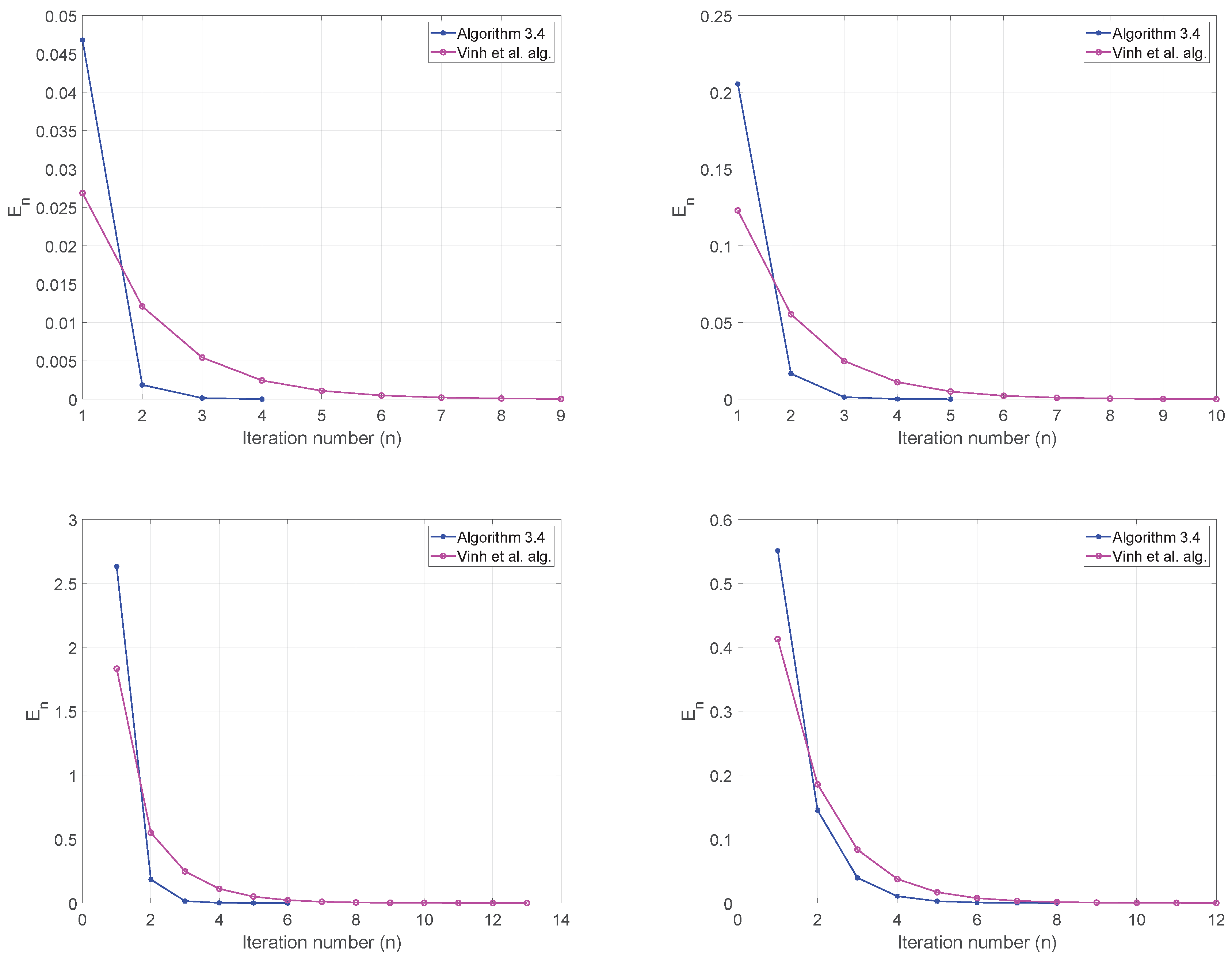

We use as stopping criterion and plot the graphs of against the number of iterations. The numerical results are shown in Table 1 and Figure 1. Finally, we present an example in infinite dimensional Hilbert spaces.

Example 2. Let with norm and the inner product We define the nonempty, closed convex sets and . We define the linear operator by The projections onto C and Q are given by We consider the MSSFP where , (identity mapping) and . We compare our Algorithm 2 with the CQ-type algorithm (Algorithm 3.1) of Vinh et al. [20]. For Algorithm 2, we take , and for Also, for the algorithm of Vinh et al., we take and We use as stopping criterion and test the algorithms for the following initial points:

Case I:

Case II:

Case III:

Case IV: