Automated Mapping of Wetland Ecosystems: A Study Using Google Earth Engine and Machine Learning for Lotus Mapping in Central Vietnam

Abstract

1. Introduction

2. Study Site and Methodology

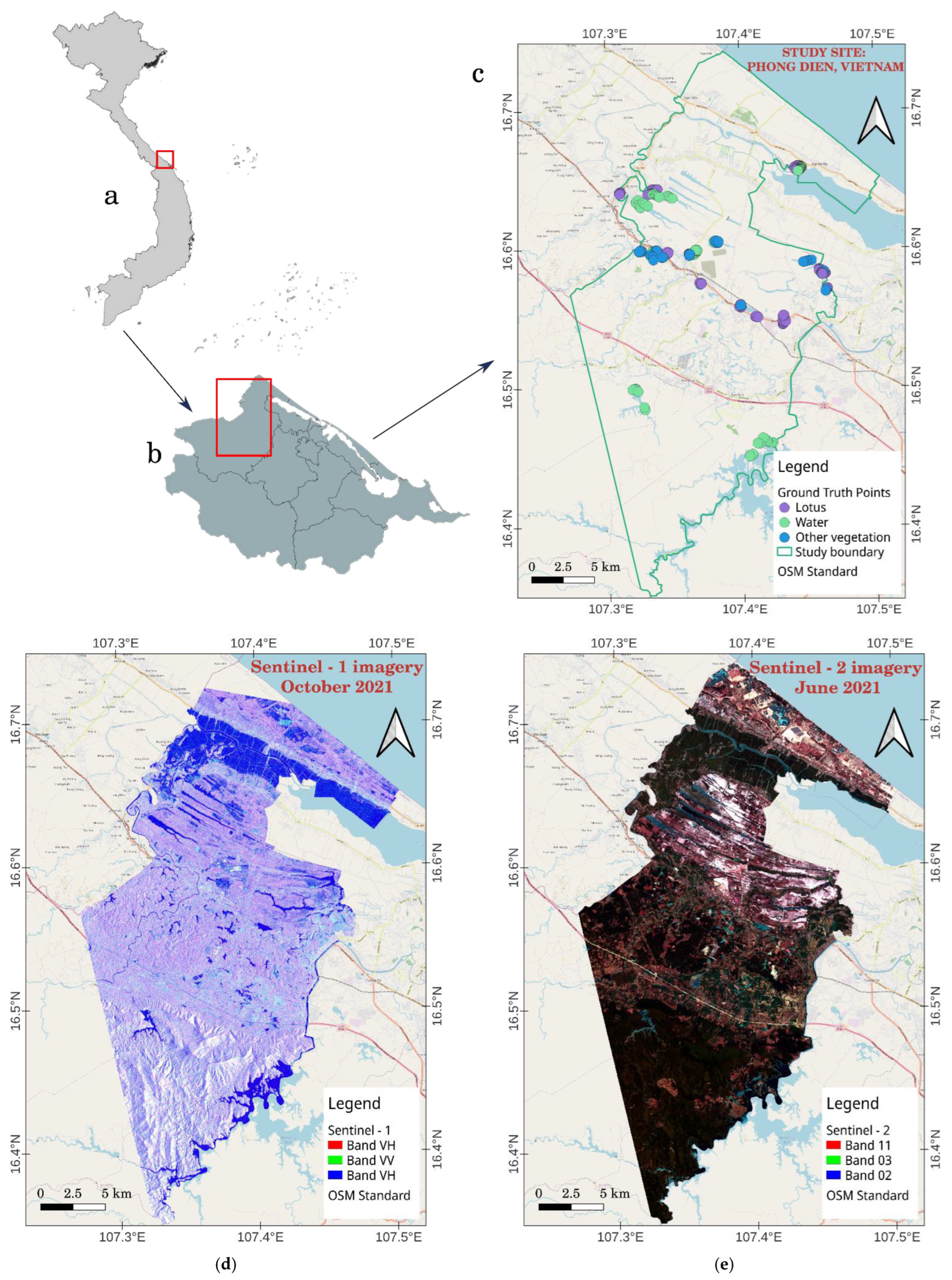

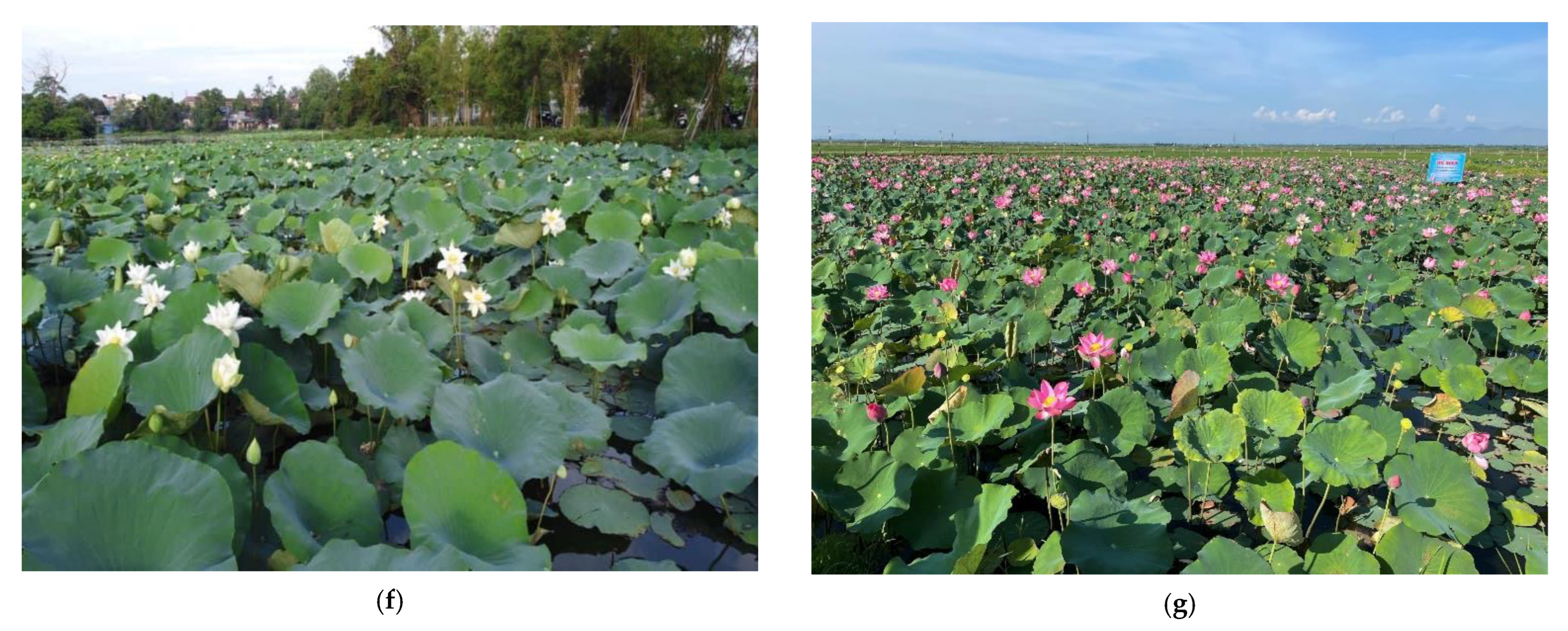

2.1. Study Site

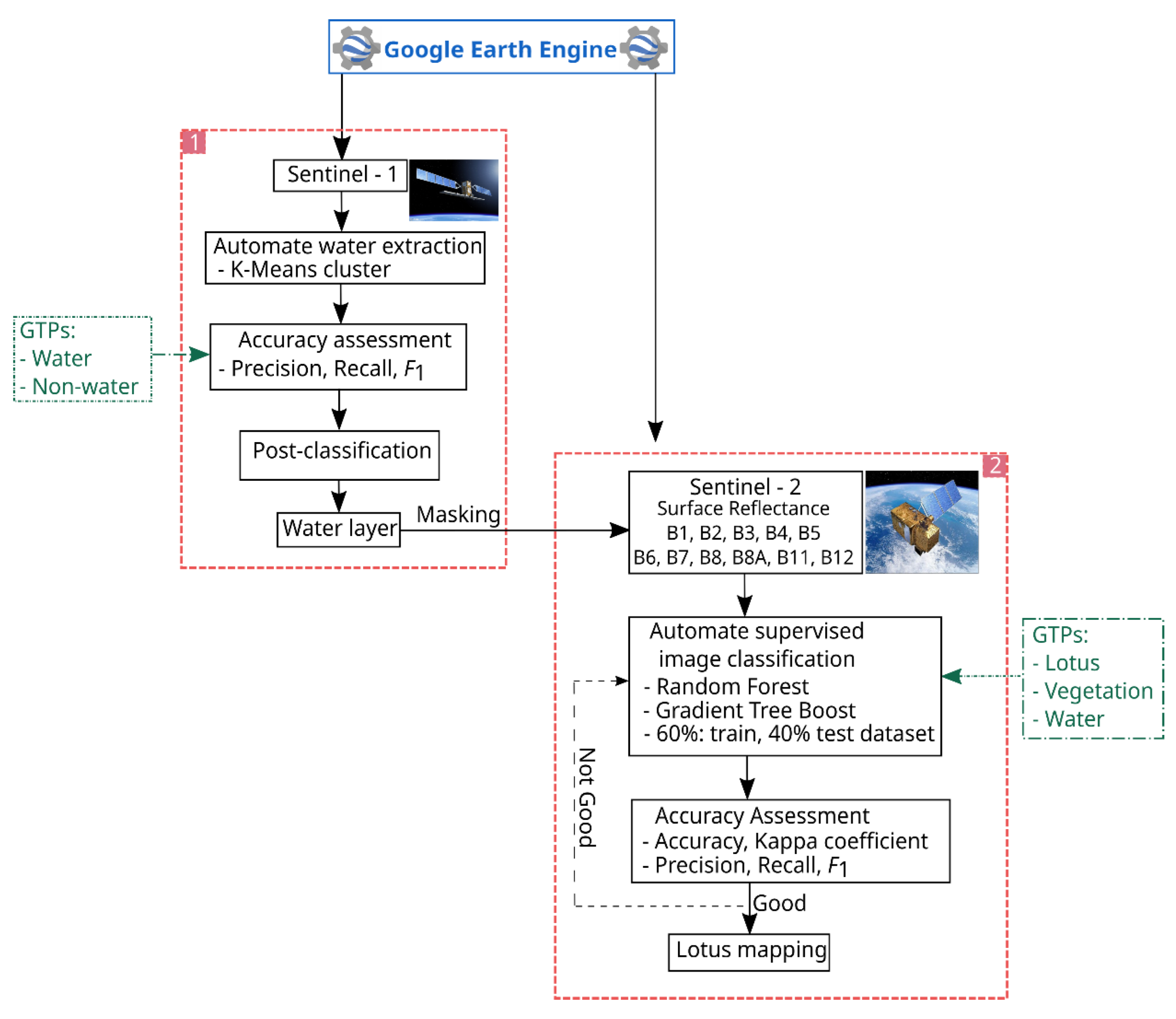

2.2. Methodology

2.2.1. Ground Truth Points (GTPs) Collection

2.2.2. Satellite Image Acquisition

2.2.3. Water Extraction

2.2.4. Lotus Mapping

Random Forest (RF)

Gradient Boosting Tree (GBT)

2.3. Evaluation Metrics

3. Results and Discussion

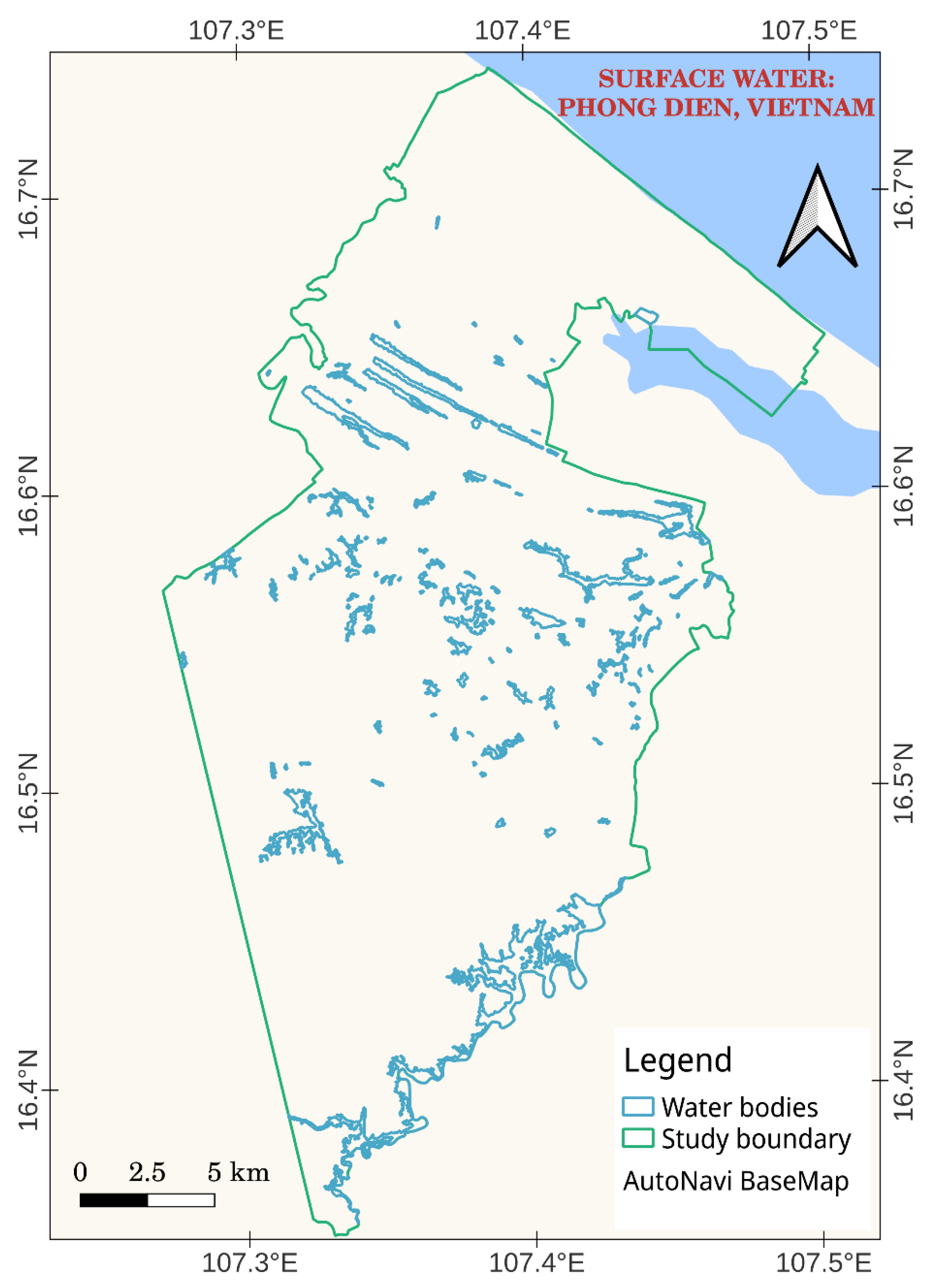

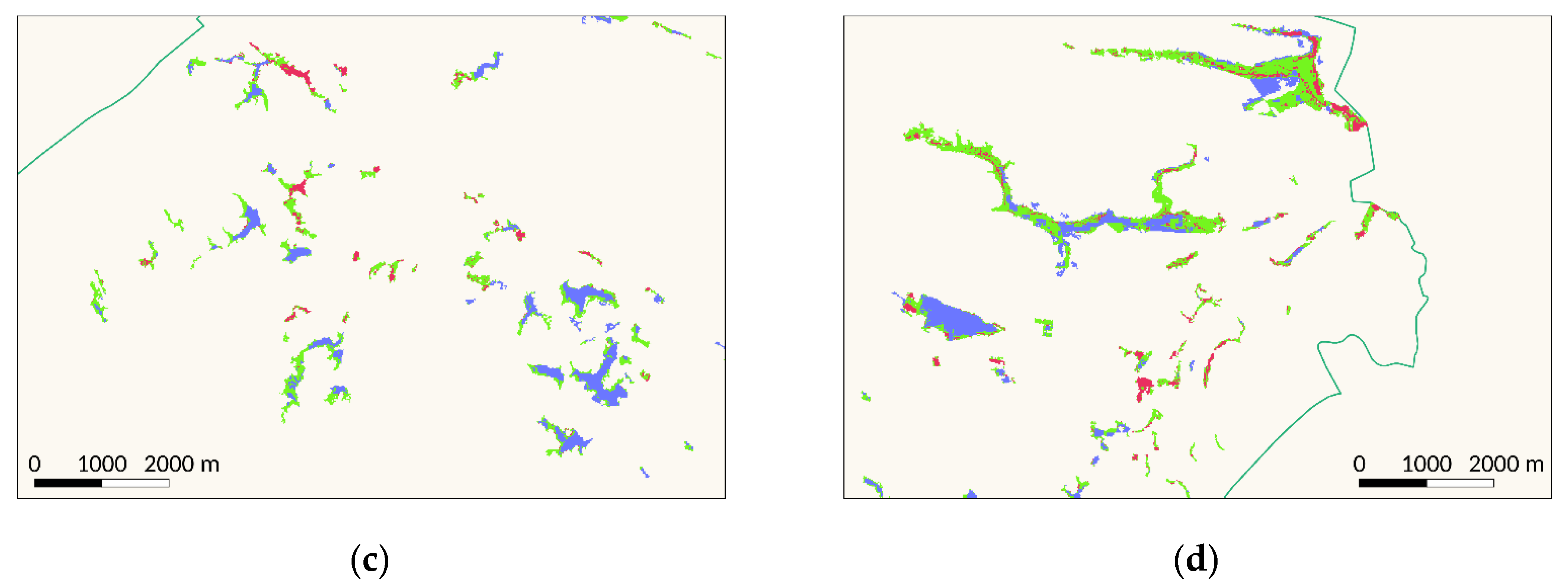

3.1. Automated Water Extraction Using the GEE

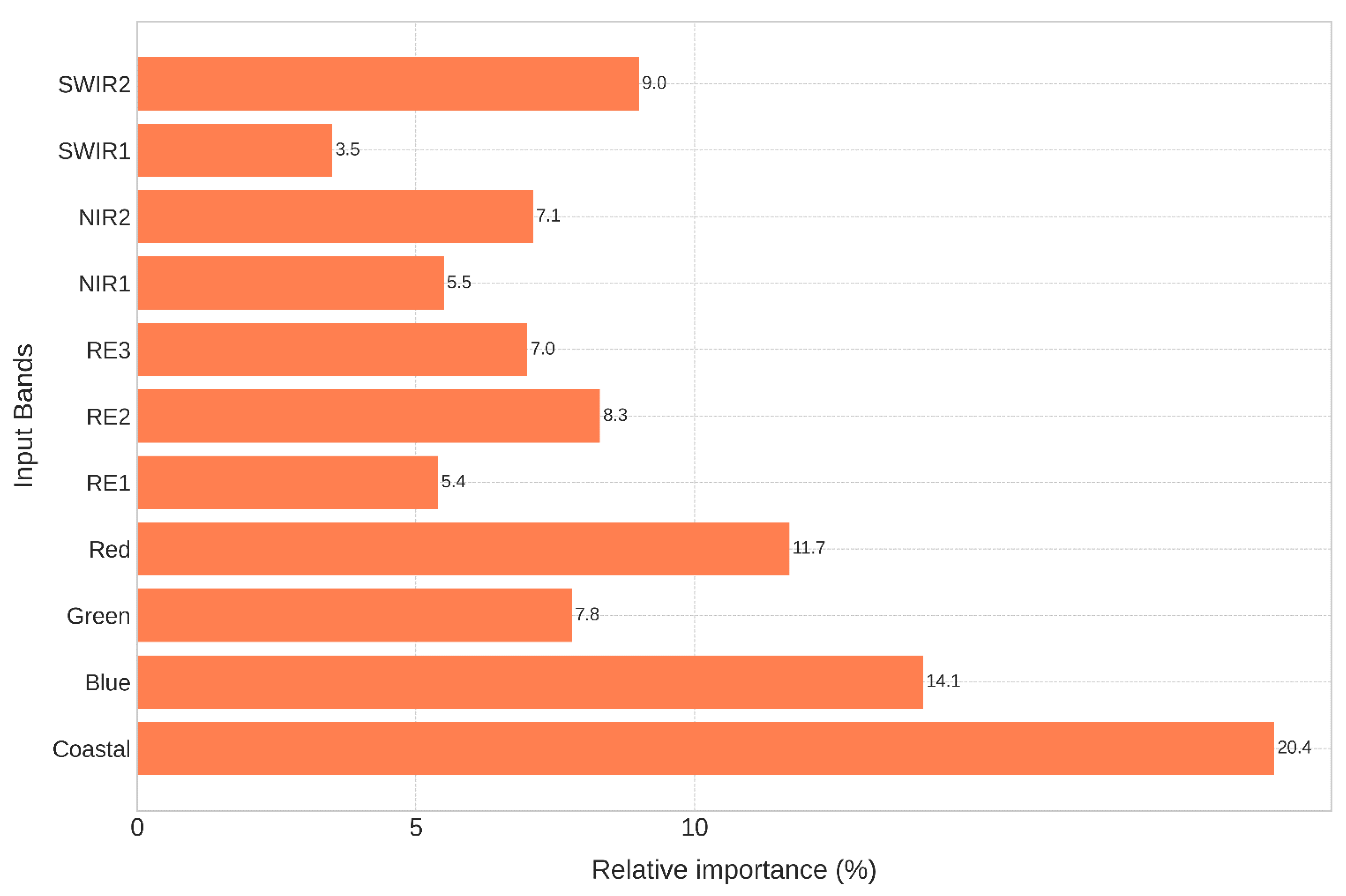

3.2. Automated Lotus Mapping Using the GEE

3.3. Discussion

4. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Sensor | Level of Processing | Acquisition Date | Cloud Coverage (%) | Spatial Resolution (m) | Bands |
|---|---|---|---|---|---|
| Sentinel-1 | Ground range detected (GRD), Level 1; sensor mode: interferometric wide swath (IW) | 10 October 2020 | | 20 × 22, resampled to 10 | VH, VV |
| Sentinel-2 | Level 2A | 19 June 2021 | 0 | 10 | Band 1–Band 12 * |

| Parameter | Value | Parameter | Value |
|---|---|---|---|
| Number of clusters | 5 | Periodic pruning | 1000 |
| Maximum candidates | 100 | Distance function | Euclidean |
| Maximum iterations | 20 | | |
| Smile Random Forest (sRF) | | | |
| Number of trees | 110 | Minimum leaf population | 17 |
| Variables per split | 11 | Maximum nodes | 20 |
| Bag fraction | 0.3 | | |
| Smile Gradient Tree Boost (sGTB) | | | |
| Number of trees | 100 | Shrinkage | 0.05 |
| Sampling rate | 0.7 | Maximum nodes | 20 |
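As a minimal sketch, the sRF and sGTB settings listed above could be passed to the Earth Engine Python API as shown here. The keyword names are those of `ee.Classifier.smileRandomForest` and `ee.Classifier.smileGradientTreeBoost`; the mapping from the table labels to those keywords (for example, "Maximum nodes" to `maxNodes`) is an assumed reading of the table, and `build_classifiers` is a hypothetical helper, not the authors' code.

```python
# Hyperparameter values copied from the table above. The keyword names are the
# Earth Engine API argument names; the label-to-keyword mapping is an assumption.
SRF_PARAMS = {
    "numberOfTrees": 110,
    "variablesPerSplit": 11,
    "minLeafPopulation": 17,
    "bagFraction": 0.3,
    "maxNodes": 20,
}

SGTB_PARAMS = {
    "numberOfTrees": 100,
    "shrinkage": 0.05,
    "samplingRate": 0.7,
    "maxNodes": 20,
}


def build_classifiers(ee):
    """Return (sRF, sGTB) classifiers.

    `ee` is expected to be the already initialized `ee` module from the
    earthengine-api package (hypothetical helper for illustration only).
    """
    srf = ee.Classifier.smileRandomForest(**SRF_PARAMS)
    sgtb = ee.Classifier.smileGradientTreeBoost(**SGTB_PARAMS)
    return srf, sgtb
```

Passing the module in as an argument keeps the parameter dictionaries usable without an authenticated Earth Engine session; in a real script one would call `ee.Initialize()` first and then `build_classifiers(ee)`.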

| Class | Precision | Recall | F1 | OA | |
|---|---|---|---|---|---|
| Non-water | 0.99 | 0.94 | 0.97 | 0.97 | 0.94 |
| Water | 0.96 | 0.99 | 0.97 | | |

| Class | Precision | | Recall | | F1 | | OA | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| | sRF | sGTB | sRF | sGTB | sRF | sGTB | sRF | sGTB | sRF | sGTB |
| Other vegetation | 0.88 | 0.93 | 0.85 | 0.96 | 0.87 | 0.95 | 0.88 | 0.95 | 0.82 | 0.92 |
| Lotus | 0.80 | 0.93 | 0.85 | 0.92 | 0.83 | 0.93 | | | | |
| Water bodies | 0.97 | 0.99 | 0.97 | 0.98 | 0.97 | 0.99 | | | | |
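The F1 column in the table above can be cross-checked against the precision and recall columns with the standard definition, F1 = 2PR / (P + R). The sketch below is illustrative only: `f1_score`, `REPORTED`, and `max_abs_gap` are hypothetical names, and because the inputs are the two-decimal values printed in the table, the recomputed scores can differ from the reported ones in the last digit.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F1) copied from the table above.
REPORTED = {
    ("sRF", "Other vegetation"): (0.88, 0.85, 0.87),
    ("sGTB", "Other vegetation"): (0.93, 0.96, 0.95),
    ("sRF", "Lotus"): (0.80, 0.85, 0.83),
    ("sGTB", "Lotus"): (0.93, 0.92, 0.93),
    ("sRF", "Water bodies"): (0.97, 0.97, 0.97),
    ("sGTB", "Water bodies"): (0.99, 0.98, 0.99),
}


def max_abs_gap(rows) -> float:
    """Largest absolute difference between recomputed and reported F1."""
    return max(abs(f1_score(p, r) - f1) for p, r, f1 in rows.values())


if __name__ == "__main__":
    for (model, cls), (p, r, f1) in REPORTED.items():
        print(f"{model:5s} {cls:17s} recomputed={f1_score(p, r):.3f} reported={f1:.2f}")
```

With these inputs every recomputed score stays within 0.01 of the reported value, which is what one would expect when precision and recall have already been rounded to two decimals.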


© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Pham, H.-T.; Nguyen, H.-Q.; Le, K.-P.; Tran, T.-P.; Ha, N.-T. Automated Mapping of Wetland Ecosystems: A Study Using Google Earth Engine and Machine Learning for Lotus Mapping in Central Vietnam. Water 2023, 15, 854. https://doi.org/10.3390/w15050854
