Improving Teacher Effectiveness: Designing Better Assessment Tools in Learning Management Systems

Abstract

1. Introduction

- What are some of the design limitations in the current LMS options?

- How can learning technology support a metacognitive approach to assessment design?

- How can we reduce the effort of deploying assessments through the online assessment system so that it is advantageous to teachers?

2. Literature Review

2.1. Metacognition and Learning

2.2. Analysis of Current LMS Leaders: Blackboard, Moodle, Canvas

3. LiquiZ Improvements to Teacher and Administrator Workflow

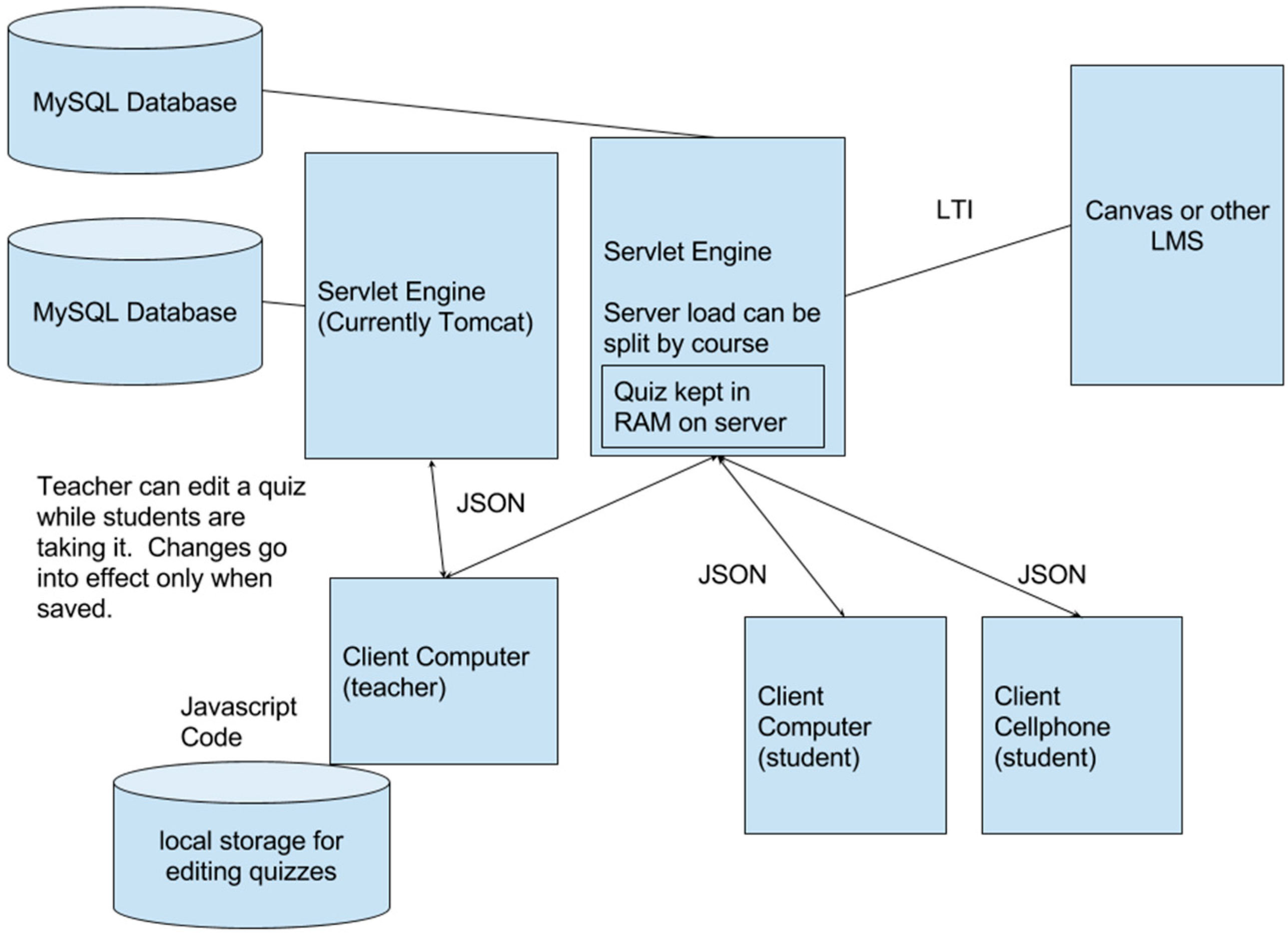

3.1. LiquiZ Architecture Overview

3.2. Optimizing User Interface and Page Traversal

| LMS | Est. page transitions | On-page clicks |
|---|---|---|
| Blackboard | 14 | 59 |
| Moodle | 50 | 0 |
| Canvas | 5 | 50 |

3.3. Re-envisioning Workflow

| Dates | Purpose |
|---|---|
| Visible | The assignment is invisible to students before this date. |
| Open | Assignment cannot be entered before this date. |
| Due | The student should ideally submit the assignment before this date/time. |
| Close | After this date, no student can submit the assignment. |

| Attribute | Purpose |
|---|---|
| VisibleBeforeOpen | The number of days before the open date that the quiz can be seen by the student |
| DaysUntilDue | The number of days after the open date that the assignment is due (days are stored as a decimal, so an exact second can be specified) |
| DaysUntilClose | The number of days after the due date that the assignment remains open |
| PenaltyPerDayLate | The percentage cost for each day late (defaults to zero) |
| LatePenalty | Flat penalty for late submission |
| EarlyPerDayBonus | The opposite of a late penalty: a bonus per day early |
| EarlyBonus | Flat bonus for being early |
| ShowCorrect | Either allow users to see the correct answer right away, or make them wait |
| ShowOwnAnswers | Whether users may see and review their own answers right away, or must wait until the close date, when everyone has already taken the quiz |
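
The relative attributes above can be turned into the four absolute dates of the previous table once an open date is chosen. The following is a minimal sketch of that calculation, not the LiquiZ implementation: the class name `Policy`, the snake_case field names, the method names, and the rule that flat and per-day adjustments simply add together (in percentage points, with fractional days counted) are all assumptions made for illustration. `ShowCorrect` and `ShowOwnAnswers` are left out because they do not affect dates or scores.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Policy:
    # Hypothetical container; fields mirror the attribute table.
    visible_before_open: float = 0.0   # days before open that the quiz is visible
    days_until_due: float = 7.0        # days after open that the quiz is due
    days_until_close: float = 0.0      # days after due that the quiz stays open
    penalty_per_day_late: float = 0.0  # percent per day late
    late_penalty: float = 0.0          # flat percent for any late submission
    early_per_day_bonus: float = 0.0   # percent per day early
    early_bonus: float = 0.0           # flat percent for any early submission

    def dates(self, open_date):
        """Derive the Visible, Open, Due and Close dates from one open date."""
        due = open_date + timedelta(days=self.days_until_due)
        return {
            "visible": open_date - timedelta(days=self.visible_before_open),
            "open": open_date,
            "due": due,
            "close": due + timedelta(days=self.days_until_close),
        }

    def adjustment(self, open_date, submitted):
        """Percent adjustment for a submission (assumed additive rule)."""
        d = self.dates(open_date)
        if not d["open"] <= submitted <= d["close"]:
            raise ValueError("submission outside the open/close window")
        days_late = (submitted - d["due"]).total_seconds() / 86400.0
        if days_late > 0:
            return -(self.late_penalty + self.penalty_per_day_late * days_late)
        if days_late < 0:
            return self.early_bonus + self.early_per_day_bonus * -days_late
        return 0.0


policy = Policy(visible_before_open=2, days_until_due=7, days_until_close=3,
                penalty_per_day_late=10, late_penalty=5)
opened = datetime(2015, 9, 1, 9, 0)
print(policy.dates(opened)["due"])                            # 2015-09-08 09:00:00
print(policy.adjustment(opened, datetime(2015, 9, 9, 21, 0)))  # -20.0
```

Because every date is stored relative to the open date, reusing the same policy for another assignment or another semester only requires supplying a new open date.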

3.4. Sharing Between Users, Departments, and Schools

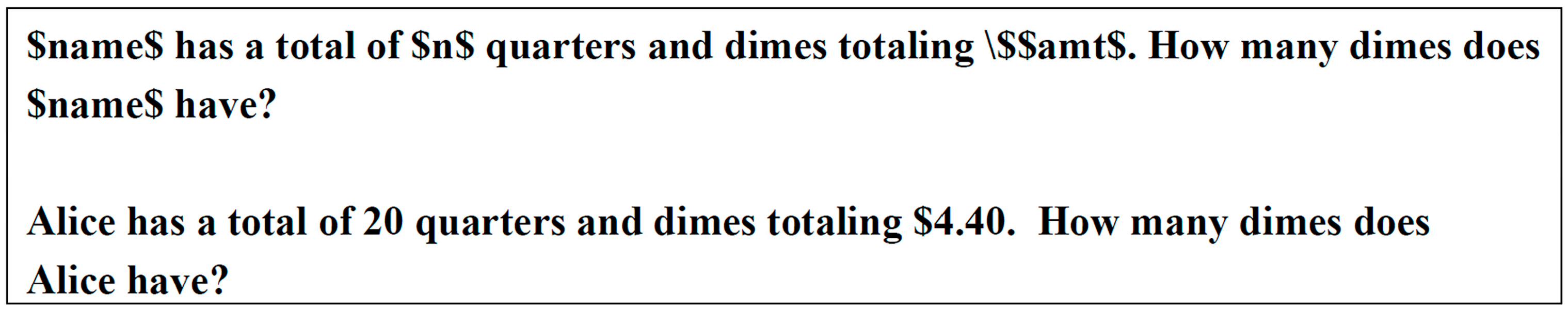

4. New Question Types

4.1. Question Types

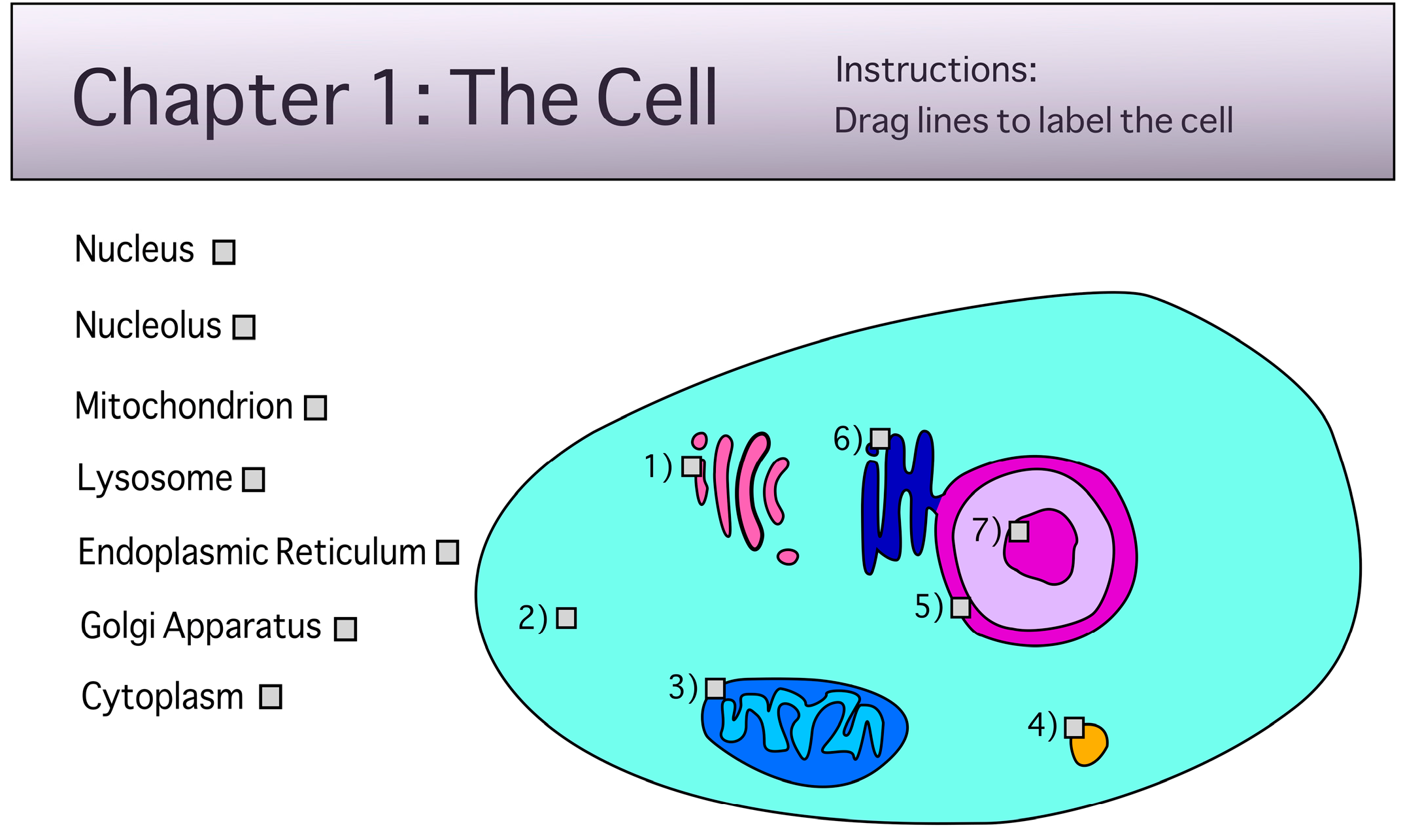

4.1.1. Visual Diagram Entry

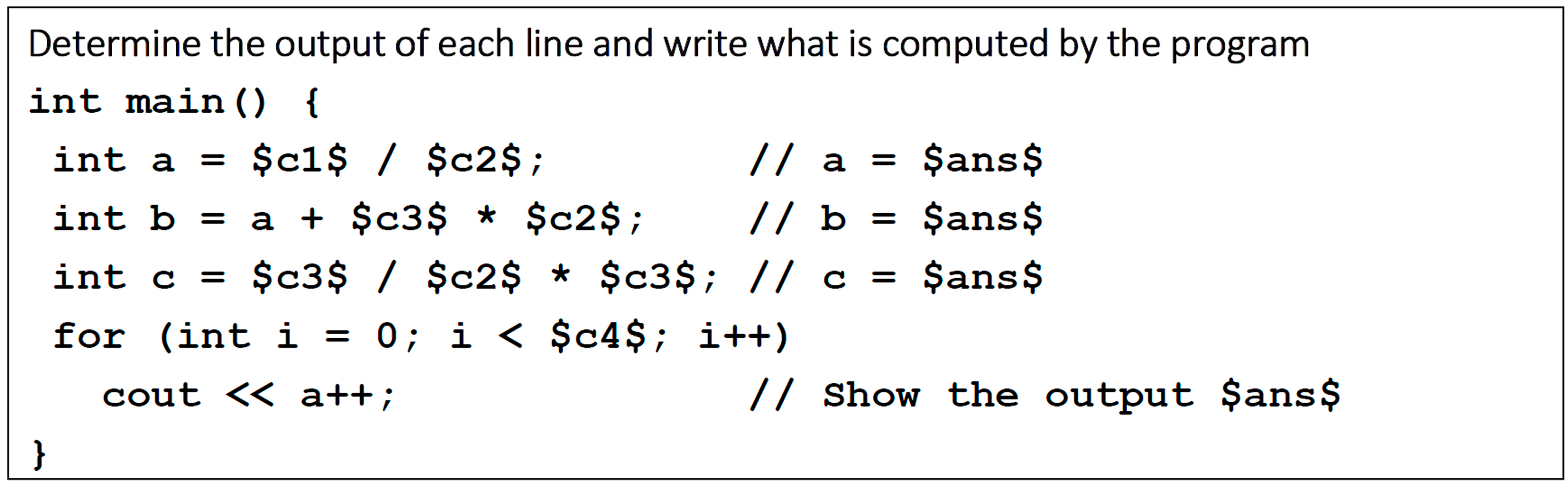

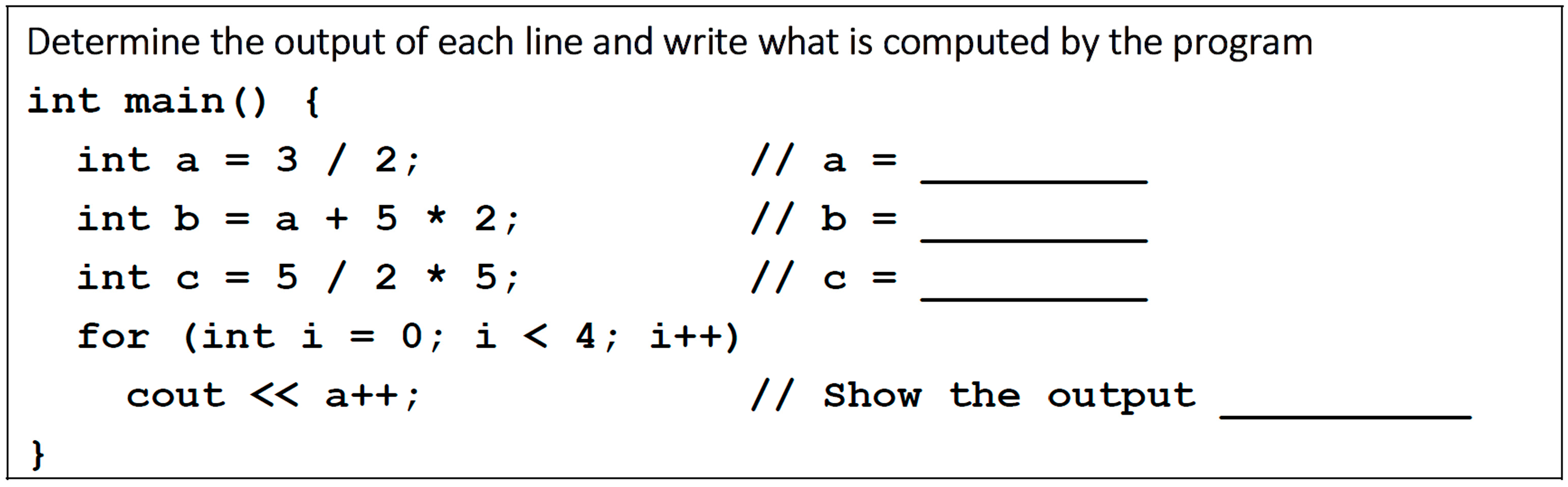

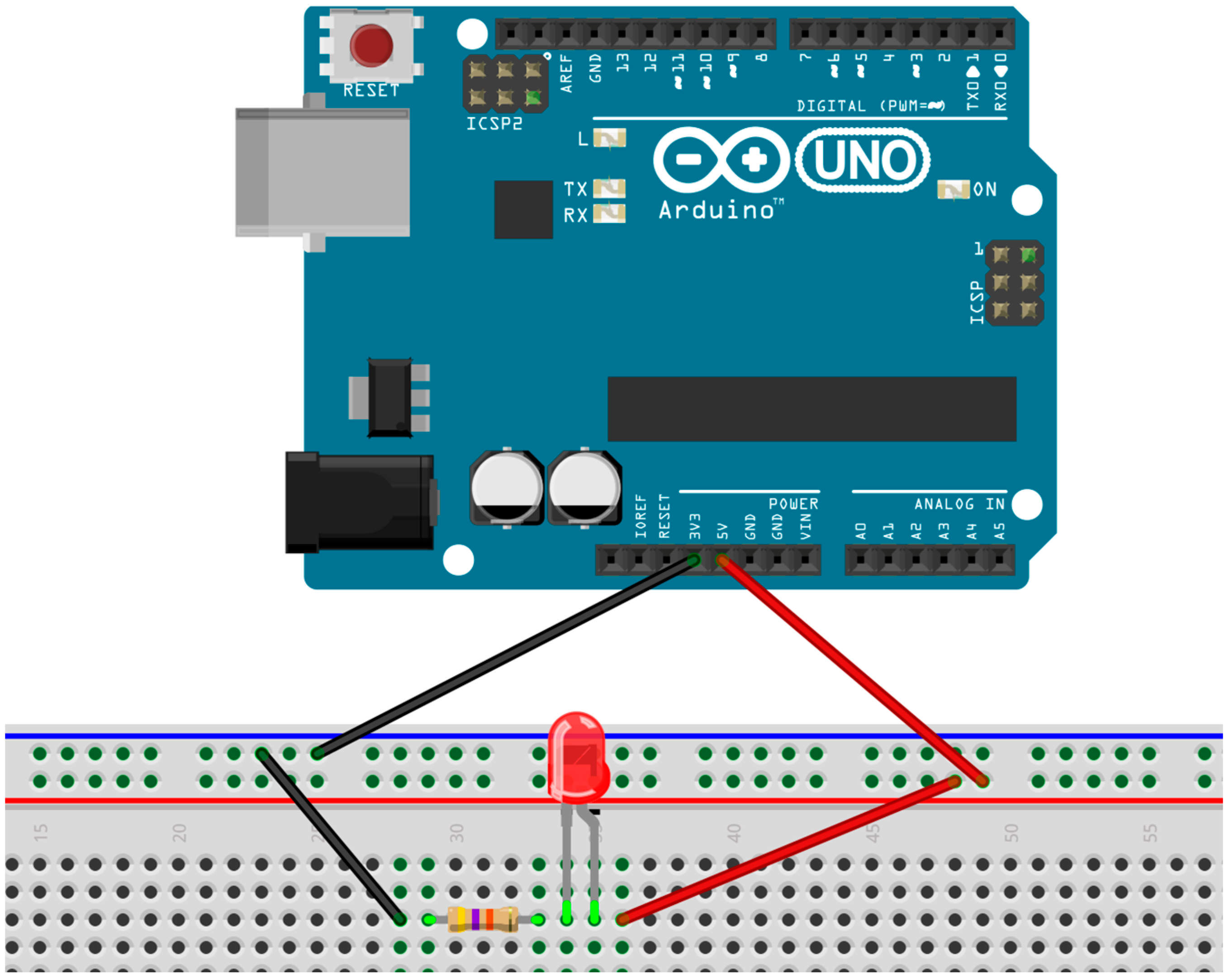

4.2.2. Programming Questions

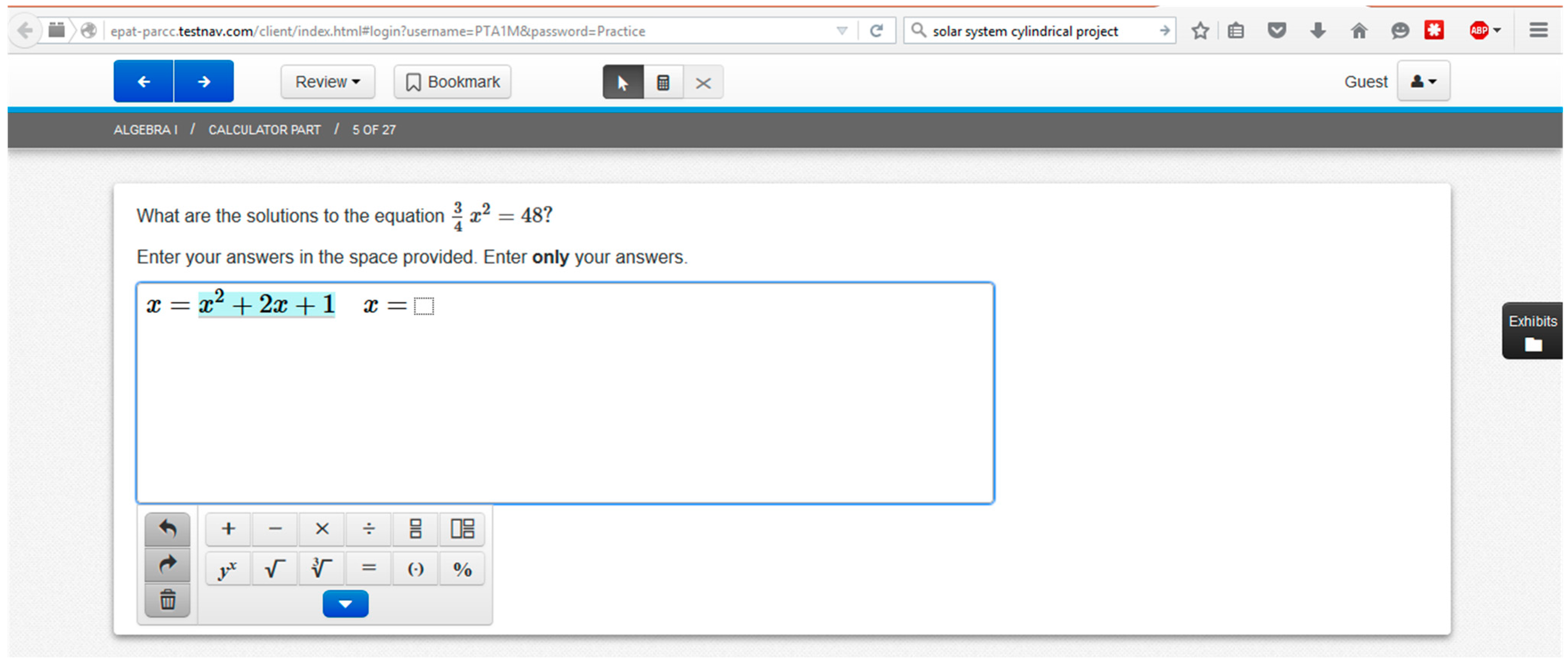

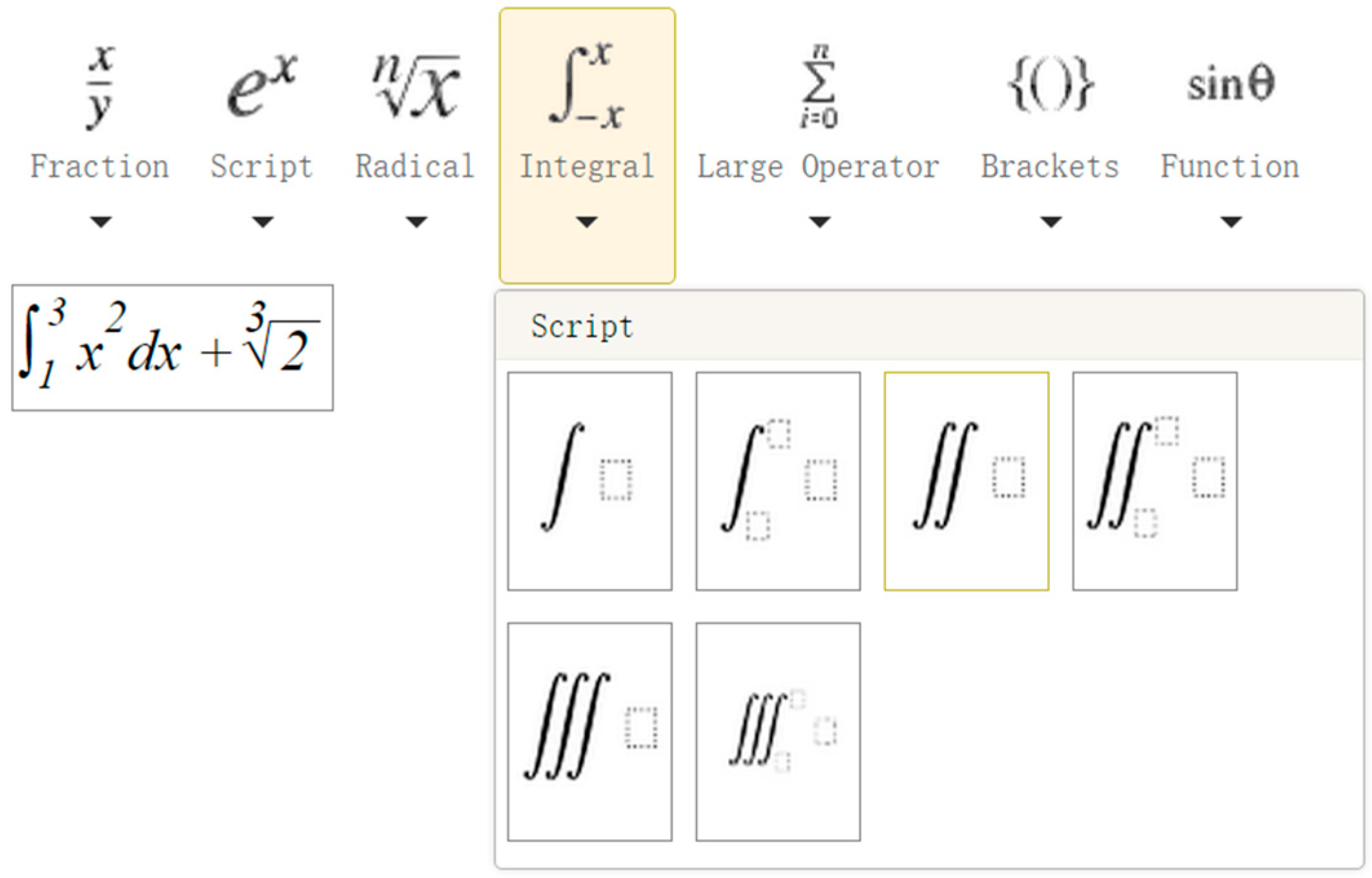

4.2.3. Equation Questions

4.3. Adaptive Quizzing and Real-Time Questions

5. Experimental Section

| Action | Canvas Clicks/Typing | LiquiZ Clicks/Typing |
|---|---|---|
| Create a new quiz with my standard options | 8/2 | 3/1 |
| Create a new multiple choice question | 8/6 | 3/5 |
| Create a new fill-in question | 9/2 | 3/2 |
| Create 10 multiple choice survey questions | 80/60 | 4/14 |
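
The per-action figures can be summed to compare the two systems across all four tasks. The short sketch below does only that arithmetic. It assumes each `a/b` cell means `a` clicks and `b` typing actions, as the column headers suggest, and the names `ACTIONS` and `totals` are illustrative only.

```python
# (clicks, typing) pairs copied from the table: (Canvas, LiquiZ) per action.
ACTIONS = {
    "Create a new quiz with my standard options": ((8, 2), (3, 1)),
    "Create a new multiple choice question": ((8, 6), (3, 5)),
    "Create a new fill-in question": ((9, 2), (3, 2)),
    "Create 10 multiple choice survey questions": ((80, 60), (4, 14)),
}


def totals(system_index):
    """Sum clicks and typing actions for one system (0 = Canvas, 1 = LiquiZ)."""
    clicks = sum(row[system_index][0] for row in ACTIONS.values())
    typing = sum(row[system_index][1] for row in ACTIONS.values())
    return clicks, typing


canvas = totals(0)
liquiz = totals(1)
print("Canvas:", canvas)  # Canvas: (105, 70)
print("LiquiZ:", liquiz)  # LiquiZ: (13, 22)
```

Most of the difference comes from the last row, where creating ten survey questions accounts for 80 of the 105 Canvas clicks.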

6. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

© 2015 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/4.0/).

Kruger, D.; Inman, S.; Ding, Z.; Kang, Y.; Kuna, P.; Liu, Y.; Lu, X.; Oro, S.; Wang, Y. Improving Teacher Effectiveness: Designing Better Assessment Tools in Learning Management Systems. Future Internet 2015, 7, 484-499. https://doi.org/10.3390/fi7040484
