1. Introduction

Machine Learning (ML) models are trained to identify predictive patterns from large-scale datasets and to reuse such patterns for inference on unseen data. In modern Deep Neural Networks (DNNs), however, learned knowledge is not stored in a separable or easily removable form: information can become implicitly memorized and distributed across model parameters. As a consequence, the influence of specific training samples cannot be retroactively removed by simple data deletion. This phenomenon exposes ML systems to concrete risks, including leakage of sensitive information, amplification of dataset biases, memorization of copyright-protected content, and vulnerability to malicious samples such as poisoning or backdoor attacks. Moreover, adversaries may exploit membership inference attacks to infer whether an individual’s data were used during training [1].

The practical relevance of this issue has been amplified by regulatory frameworks such as the General Data Protection Regulation (GDPR), which establishes the Right to be Forgotten (Art. 17) [2]. In the context of AI systems, however, removing a record from the training dataset is insufficient to ensure compliance: a trained model may continue to encode and reveal information about deleted samples through its parameters. The most direct remedy, retraining from scratch on the remaining data, is often impractical for modern architectures, including Transformer-based models and, even more so, Large Language Models (LLMs), due to prohibitive computational costs, energy consumption, and service downtime. To bridge this gap between regulatory obligations and technical feasibility, Machine Unlearning (MU) has emerged as a principled approach for efficiently and effectively removing the influence of specific training data from an already trained model, ideally yielding behavior indistinguishable from a model that has never been exposed to such data [1]. As such, MU represents a key enabling component for responsible, secure, and regulation-compliant AI, offering a practical alternative to full retraining [1,2].

Despite rapid progress, the MU landscape remains heterogeneous: methods differ in the strength of their removal guarantees, the granularity of deletion requests, the extent to which they require design-time constraints, and the way forgetting is evaluated and reported. In particular, empirical claims of “forgetting” are often supported primarily by utility or efficiency metrics, while robust evidence of removal effectiveness and alignment to the retraining gold standard is less consistently assessed across studies [3,4]. This fragmentation complicates both scientific comparison and practical adoption, especially for Transformer-based NLP models where retraining costs are high and information can be diffuse across representations.

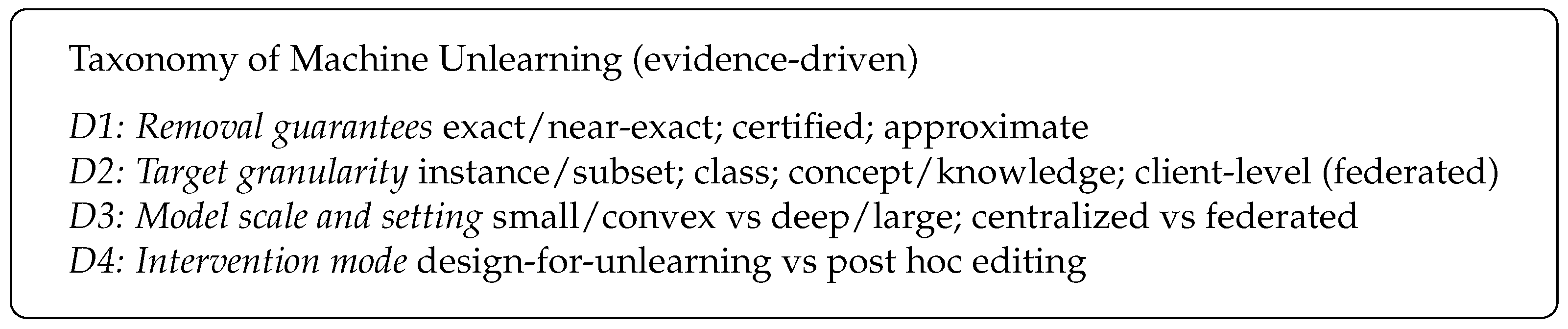

Motivated by this fragmentation, and by the fact that “forgetting” claims are often not evaluated against privacy-relevant threats or the retraining gold standard, this work develops an evidence-driven, deployment-oriented view of supervised MU for Transformer-based NLP. We first consolidate the empirical state of the field through a PRISMA-guided review, extracting recurring design choices, assumptions, and evaluation pitfalls that shape real-world adoptability. Building on these findings, we introduce a multi-level taxonomy and decision framework that organizes MU methods by their operational requirements (e.g., design-time constraints vs. post hoc applicability), expected deletion regime, and strength of removal guarantees, with the explicit goal of helping practitioners match techniques to assurance needs.

We then ground this perspective in a reproducible benchmark study using a standardized unlearning protocol on DistilBERT trained on a public corpus of news headlines for topic classification, where retrain-from-scratch serves as the reference point. We compare representative design-for-unlearning strategies and approximate post hoc approaches, and we assess not only utility but also removal effectiveness under adversarial scrutiny, most notably through membership inference risk, together with calibration and computational cost. To reduce ambiguity in what it means to be “indistinguishable from retraining”, we complement conventional metrics with distributional and structural alignment measures that quantify how closely the unlearned model matches the retrained one. Overall, the results surface consistent trade-offs between accuracy, privacy/forgetting strength, and time-to-unlearn, providing practical guidance on when approximate unlearning is sufficient and when stronger assurances warrant heavier procedures.

The remainder of the paper is organized as follows. Section 2 introduces the essential background, including definitions of supervised Machine Unlearning, Transformer-based models, and the AG News dataset. Section 3 presents the PRISMA-driven systematic review of Machine Unlearning, detailing the search protocol, the classification axes, and a comparative synthesis of existing approaches. Section 4 introduces the proposed taxonomy and decision framework, mapping the main families of MU techniques. Section 5 describes the methods and experimental setup, including the pipeline, the definition of forget and retain sets, the implemented techniques, and the evaluation metrics. Section 6 reports the experimental results and analyzes efficiency aspects. Section 7 discusses the observed trade-offs, practical implications, and limitations. Finally, Section 8 concludes the paper.

2. Background

2.1. Supervised Learning and the Need for Data Removal

Consider a supervised classification model $f_\theta$ parameterized by weights $\theta$, trained on a dataset $D = \{(x_i, y_i)\}_{i=1}^{n}$ of $n$ labeled examples, where $x_i$ denotes the input (e.g., a text) and $y_i$ the corresponding class label. Under the Empirical Risk Minimization (ERM) principle, training seeks parameters that minimize the average per-example loss $\ell$ (e.g., cross-entropy) over the dataset $D$:

$$\theta^{\star} = \arg\min_{\theta} \frac{1}{n} \sum_{i=1}^{n} \ell\bigl(f_\theta(x_i), y_i\bigr).$$

In practice, $\theta$ is optimized via stochastic gradient descent (SGD) and its variants.

After training, information extracted from the data is not stored as a set of separable records but becomes distributed across parameters and internal representations. In deep neural networks, the influence of a single example is entangled with that of many others and propagates across multiple layers, making it non-trivial to retroactively remove the impact of specific training samples. As a result, deleting a subset from the dataset does not imply that the trained model has “forgotten” it: the model may still encode and reveal information through its predictions or through privacy and security vulnerabilities (e.g., membership inference).

The practical need for data removal arises in several settings, including regulatory compliance (e.g., the Right to be Forgotten), mitigation of security threats (poisoning/backdoor), removal of unauthorized or copyright-protected data, correction of biased or erroneous records, and model maintenance in operational pipelines (e.g., rollback, auditing, and data drift management). These scenarios motivate mechanisms that can reduce or eliminate the influence of a designated subset of training data (the forget set $D_f$) while preserving utility on the retained data and keeping computational costs sustainable.

2.2. Problem Formulation and Definitions

Let $D$ denote the training dataset and $D_f \subseteq D$ the forget set, with $D_r = D \setminus D_f$ indicating the retain set. Machine Unlearning (MU) addresses the problem of efficiently removing the influence of $D_f$ from a model $f_{\hat\theta}$ trained on $D$. Formally, the goal is to compute an updated parameterization $\theta_u$ such that the resulting model $f_{\theta_u}$ behaves, as closely as possible, like a model trained from scratch on $D_r$, which represents the gold standard, while preserving predictive performance on retained data and satisfying practical constraints on cost, scalability, and availability [4,5].

Because training is stochastic, the gold standard does not correspond to a single deterministic model. Let $A$ be a (potentially randomized) training algorithm that induces a distribution $A(D)$ over trained models when trained on $D$. A trained model can be viewed as a sample from $A(D)$, while a retrained model corresponds to a sample from $A(D_r)$. In the strongest formulation, MU aims to produce an unlearned model that is statistically indistinguishable from a sample drawn from $A(D_r)$, i.e., it matches (or closely approximates) the distribution induced by retraining on the retained data.

In practice, existing approaches differ in how closely they target the retraining distribution. Exact or near-exact unlearning methods attempt to match the retraining behavior (or an operational approximation thereof), often by controlling the training process and/or reusing intermediate states. While this can be feasible for certain algorithmic classes, it typically introduces substantial overhead for monolithic deep neural networks. Design-for-unlearning strategies, such as SISA-style partitioning, aim to confine the influence of data to localized components (e.g., shards/slices), enabling more targeted removals at the cost of structural constraints and additional training complexity [4,6,7]. By contrast, approximate unlearning relaxes indistinguishability and requires only statistical proximity within an explicit tolerance, often inspired by notions related to Differential Privacy. These methods typically offer better efficiency and scalability, but provide weaker or implicit guarantees about forgetting completeness.
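The “explicit tolerance” mentioned above is often made precise through an $(\varepsilon, \delta)$ condition modeled on differential privacy. The formulation below is one common version from the unlearning literature, given as an illustration rather than as a guarantee targeted by the methods in this paper; $U$ denotes a hypothetical unlearning procedure that maps the trained model, $D$, and $D_f$ to an unlearned model, while $A$, $D$, $D_f$, and $D_r$ are as defined above.

```latex
% (epsilon, delta)-unlearning: for every measurable set T of models,
\Pr\bigl[\,U(A(D), D, D_f) \in T\,\bigr] \le e^{\varepsilon}\,\Pr\bigl[\,A(D_r) \in T\,\bigr] + \delta,
\qquad
\Pr\bigl[\,A(D_r) \in T\,\bigr] \le e^{\varepsilon}\,\Pr\bigl[\,U(A(D), D, D_f) \in T\,\bigr] + \delta .
```

Setting $\varepsilon = \delta = 0$ forces the two output distributions to coincide, which recovers the exact (distributional) notion of unlearning described earlier; larger values trade strength of guarantee for efficiency.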

Evaluating MU is inherently multi-dimensional and typically involves a trade-off among three objectives: (i) efficiency (time/cost relative to full retraining), (ii) retained utility on $D_r$ and on the original task, and (iii) forgetting quality (effective removal of $D_f$’s influence and closeness to the retraining gold standard, including robustness against attacks such as membership inference). In practice, improving one aspect often degrades another, and the desired operating point depends on the application scenario.

2.3. Transformer-Based NLP Models

The Transformer [8] is an attention-based architecture that replaces recurrence with highly parallelizable computation. In its standard form, it consists of stacks of encoder and decoder blocks. Each layer includes multi-head self-attention, a position-wise feed-forward network, and residual connections followed by layer normalization (Add & Norm). Since self-attention is permutation-equivariant (it has no built-in notion of token order), sequence order is incorporated through positional encodings.

Building on this architecture, BERT [9] is an encoder-only Transformer that learns bidirectional contextual representations via self-supervised pre-training, primarily through Masked Language Modeling (and Next Sentence Prediction in the original formulation). A common configuration, BERT$_{\text{BASE}}$, uses 12 layers, hidden size $H = 768$, 12 attention heads, and approximately 110 M parameters.

DistilBERT [10] is a compressed variant obtained via Knowledge Distillation, transferring knowledge from a BERT$_{\text{BASE}}$ teacher to a smaller student trained with a distillation objective (without NSP). DistilBERT preserves the encoder-only structure but reduces depth to six layers (with $H = 768$ and 12 heads), removes segment (token-type) embeddings, and omits the pooler. This yields roughly 66 M parameters (about 40% fewer than BERT$_{\text{BASE}}$) and substantially faster inference, while retaining most of BERT$_{\text{BASE}}$’s downstream performance. These properties make DistilBERT a practical choice for MU benchmarking, where multiple training and unlearning cycles are required under limited computational budgets.
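As a sanity check on the 110 M and 66 M figures, the parameter counts can be recomputed from the architecture. The sketch below is a back-of-the-envelope calculation, not code from this study; it assumes the standard public configurations of `bert-base-uncased` and `distilbert-base-uncased` (vocabulary of 30,522 tokens, 512 positions, feed-forward inner size 3,072) and counts encoder parameters only, excluding any task-specific classification head. Function names are illustrative.

```python
# Back-of-the-envelope parameter counts for BERT-base vs. DistilBERT (uncased).
# Assumed standard configs: vocab 30522, 512 positions, hidden 768, FFN inner size 3072.
VOCAB, MAX_POS, H, FFN = 30522, 512, 768, 3072


def linear(n_in: int, n_out: int) -> int:
    """Weights plus bias of a dense layer."""
    return n_in * n_out + n_out


def layer_norm(dim: int) -> int:
    """Gain and bias of a LayerNorm."""
    return 2 * dim


def encoder_block() -> int:
    attention = 4 * linear(H, H)                # Q, K, V and output projections
    ffn = linear(H, FFN) + linear(FFN, H)       # position-wise feed-forward network
    return attention + ffn + 2 * layer_norm(H)  # two Add & Norm sub-layers


def bert_base() -> int:
    embeddings = (VOCAB + MAX_POS + 2) * H + layer_norm(H)  # word + position + 2 segment types
    pooler = linear(H, H)
    return embeddings + 12 * encoder_block() + pooler


def distilbert() -> int:
    embeddings = (VOCAB + MAX_POS) * H + layer_norm(H)      # no segment embeddings
    return embeddings + 6 * encoder_block()                 # six blocks, no pooler


if __name__ == "__main__":
    b, d = bert_base(), distilbert()
    print(f"BERT-base:  {b:,}")
    print(f"DistilBERT: {d:,}")
    print(f"reduction:  {1 - d / b:.1%}")
```

Under these assumptions the totals are 109,482,240 (BERT$_{\text{BASE}}$, including the pooler) and 66,362,880 (DistilBERT), a reduction of about 39%, consistent with the figures quoted above.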

2.4. AG News Dataset

AG News (AG’s News Topic Classification Dataset) is a widely used benchmark for text classification. It is derived from a larger collection of news articles and focuses on the four most prevalent categories, yielding a compact and balanced corpus suitable for reproducible experimentation.

The task involves four classes (World, Sports, Business, Sci/Tech) and two standard splits: a train set of 120,000 instances and a test set of 7600 instances. Each sample includes a label and a text composed of a title and a short description. A key property for evaluation is the perfect class balance (30,000 training instances per class and 1900 test instances per class), making accuracy particularly informative (chance level: 25%).

AG News is well-suited for unlearning experiments because it combines: (i) broad adoption and integration in standard libraries (facilitating reproducibility and comparisons); (ii) moderate scale enabling repeated training/unlearning runs; and (iii) balanced class structure that supports controlled construction of forget sets (e.g., class-balanced removals), which stabilizes the analysis of trade-offs between forgetting, utility, and efficiency.

5. Benchmark Setup

This section describes the experimental protocol adopted to compare retraining (gold standard) with representative machine unlearning techniques under a controlled and reproducible setup. We use the term benchmark to denote a standardized and repeatable evaluation protocol (including deletion regime, baselines, and metrics) that enables controlled comparison against retrain-from-scratch as the reference behavior. Accordingly, the benchmark is intended as a focused, illustrative case study: its primary contribution is the reproducible evaluation protocol and the associated comparative diagnostics, rather than broad empirical generalization across datasets, architectures, or deletion regimes.

For completeness, we also include two oracle-assisted upper-bound baselines, Knowledge Distillation and Weight Scrubbing. These methods assume access to a clean reference model trained on the retained data. In the runtime tables, we therefore report only their incremental update cost, excluding the cost of producing the clean reference itself. Although our experiments consider a single-shot deletion request, the protocol can be extended to repeated or streaming deletions, as discussed in Section 7.

5.1. Model and Training Setup

We consider a Transformer-based text classifier implemented with DistilBertForSequenceClassification and configured for four classes (AG News). To ensure comparability, all methods share the same backbone architecture, tokenizer, and evaluation protocol. Inputs (title + description) are tokenized with distilbert-base-uncased using truncation to a fixed maximum sequence length, while padding is applied dynamically at the batch level. We adopt this cap as a compute–coverage trade-off, since self-attention has quadratic cost in sequence length.

We fine-tune a baseline model on the training pool (108,000 instances) and treat a retrain-from-scratch model (trained only on retained data) as the reference for successful unlearning. Unless otherwise specified, baseline and retrain share the same fine-tuning configuration: AdamW with a fixed learning rate, weight decay, and linear warmup ratio, trained for three epochs.
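The fine-tuning schedule can be made concrete with a small sketch. It implements the linear warmup-then-decay multiplier commonly paired with AdamW (the same shape as `get_linear_schedule_with_warmup` in Hugging Face Transformers) and derives step counts from the 108,000-example pool and three epochs stated above; the batch size (32) and warmup ratio (0.1) are hypothetical placeholders, not the values used in the experiments.

```python
import math


def linear_warmup_decay(step: int, warmup_steps: int, total_steps: int) -> float:
    """Learning-rate multiplier: linear ramp-up to 1.0, then linear decay to 0.0."""
    if step < warmup_steps:
        return step / max(1, warmup_steps)
    return max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))


def schedule_lengths(n_examples: int, batch_size: int, epochs: int, warmup_ratio: float):
    """Optimizer steps per epoch, total steps, and warmup steps for a given ratio."""
    steps_per_epoch = math.ceil(n_examples / batch_size)
    total = steps_per_epoch * epochs
    return steps_per_epoch, total, math.ceil(warmup_ratio * total)


if __name__ == "__main__":
    # 108,000 pool examples and 3 epochs come from the text;
    # batch size 32 and warmup ratio 0.1 are hypothetical placeholders.
    per_epoch, total, warmup = schedule_lengths(108_000, 32, 3, 0.1)
    print(per_epoch, total, warmup)
    for s in (0, warmup // 2, warmup, total // 2, total):
        print(s, round(linear_warmup_decay(s, warmup, total), 3))
```

With these placeholders, one epoch is 3,375 optimizer steps, the full run is 10,125 steps, and the first 1,013 steps ramp the learning rate up linearly before it decays to zero.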

All experiments were executed in Kaggle Notebooks using a fixed environment (CPU + single NVIDIA Tesla P100 GPU) to ensure fair comparisons across methods. The implementation is based on PyTorch (v2.10.0) and Hugging Face Transformers (v5.3.0).

Wall-clock runtimes (in seconds) for a single unlearning cycle are analyzed in the efficiency results section. Retraining is the most expensive procedure, while post hoc methods offer substantial speedups. Notably, influence/Hessian updates and distillation-based approaches are among the fastest, whereas SISA incurs overhead due to shard management and selective retraining. For Knowledge Distillation and Weight Scrubbing, the reported runtime counts only the student-update/scrub step given an oracle clean teacher/reference model ($f_{\theta_r}$ or $\theta_r$, respectively, i.e., the retrained model); it does not include the cost of producing that clean model (i.e., retraining), which we compute separately as the benchmark target.

5.2. Definition of Forget and Retain Sets

Let $D_{\text{train}}$ denote the original AG News training split (120,000 samples). We first create a disjoint holdout validation set $D_{\text{val}} \subset D_{\text{train}}$, with $|D_{\text{val}}| = 12{,}000$. We define the training pool used for the baseline model as $D = D_{\text{train}} \setminus D_{\text{val}}$ (108,000 samples).

From this pool, we then sample the forget set $D_f \subset D$ (train_deleted) using a class-balanced deletion regime, selecting $|D_f| = 30{,}000$ samples uniformly across the four AG News classes (7500 per class). The retained training set is the remainder:

$$D_r = D \setminus D_f.$$

In our configuration, this yields $|D_r| = 78{,}000$. With this construction, $D_{\text{val}}$ is disjoint from both $D_f$ and $D_r$ by design (hold out first, then sample from the remaining pool).

We additionally construct a small validation subset val_retained (2000 samples) drawn from train_retained to support hyperparameter tuning for the retrained model and for unlearning methods that require validation. The held-out AG News test split (7600 instances) is used as test_full.
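The split construction (hold out first, then sample a class-balanced forget set from the remaining pool) can be sketched as follows. `make_splits` is a hypothetical helper operating on integer labels; it assumes a uniformly random holdout, since the text does not state whether $D_{\text{val}}$ is stratified, and a synthetic balanced label list stands in for AG News.

```python
import random
from collections import defaultdict
from typing import List, Sequence, Tuple


def make_splits(labels: Sequence[int], n_holdout: int = 12_000, per_class_forget: int = 7_500,
                n_val_retained: int = 2_000, seed: int = 0) -> Tuple[List[int], ...]:
    """Return index lists (holdout, forget = train_deleted, retain = train_retained, val_retained)."""
    rng = random.Random(seed)
    idx = list(range(len(labels)))
    rng.shuffle(idx)
    holdout, pool = idx[:n_holdout], idx[n_holdout:]      # 1) hold out D_val first

    by_class = defaultdict(list)                          # 2) class-balanced D_f from the pool D
    for i in pool:
        by_class[labels[i]].append(i)
    forget: List[int] = []
    for c in sorted(by_class):
        forget.extend(rng.sample(by_class[c], per_class_forget))

    forget_set = set(forget)
    retain = [i for i in pool if i not in forget_set]     # 3) D_r = D \ D_f
    val_retained = rng.sample(retain, n_val_retained)     # 4) tuning subset drawn from D_r
    return holdout, forget, retain, val_retained


if __name__ == "__main__":
    labels = [i % 4 for i in range(120_000)]              # balanced stand-in: 30,000 per class
    holdout, forget, retain, val_retained = make_splits(labels)
    print(len(holdout), len(forget), len(retain), len(val_retained))  # 12000 30000 78000 2000
```

Fixing the seed of the `random.Random` instance makes the four index lists reproducible across runs, which is what allows baseline, retrain, and every unlearning method to be evaluated on identical splits.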

5.3. Implemented Unlearning Techniques

We evaluate both design-for-unlearning and post hoc approaches. Post hoc methods start from the baseline model trained on $D$ and apply an update guided by $D_f$. Design-for-unlearning methods instead modify the training procedure so that deletions can be handled efficiently after deployment. We distinguish between methods that can be executed without any clean oracle (SISA, Ascent/Descent-to-Delete, Influence/Hessian) and oracle-assisted upper-bound baselines (Knowledge Distillation and Weight Scrubbing) that require the retrained model $\theta_r$ as a clean teacher/reference; the latter are included to contextualize the best-case alignment to retraining under idealized access to a clean model.

Retrain-from-scratch (gold standard). Given $D_f$, the gold-standard model $f_{\theta_r}$ is trained from scratch on $D_r$ (train_retained) and validated on val_retained. This model defines the target behavior after deletion and serves as the reference for successful unlearning.

SISA (sharded, isolated, sliced, aggregated). SISA can enable near-exact unlearning under appropriate sharding/slicing and stable optimization by retraining only the affected slice(s) after a deletion request. The training data are partitioned into k disjoint shards, each further subdivided into sequential slices. After training, a model checkpoint is stored for each slice, allowing for selective rollback. Upon deletion, only the impacted shard is retrained from the earliest affected slice onward, reducing recomputation relative to full retraining; however, effectiveness and stability can be configuration- and request-regime-dependent in practice.

In our implementation we adopt $k$ shards, each split into a fixed number of slices (one checkpoint per slice), and we aggregate shard-level predictors as an ensemble. We report these settings explicitly because both utility and compute can vary substantially with the shard/slice configuration and with how dispersed deletion requests are across shards/slices (see Section 6 and Section 7).
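The bookkeeping behind SISA’s selective retraining can be illustrated independently of the model. In the sketch below (all names hypothetical), examples are assigned round-robin to $k$ shards and split into sequential slices within each shard; this assignment rule is an assumption, not necessarily the one used here. A deletion request is mapped to the affected shards and the earliest affected slice in each, and shard-level predictions are combined by majority vote as one simple ensemble rule.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple


def sisa_layout(n_examples: int, n_shards: int, n_slices: int) -> Dict[int, Tuple[int, int]]:
    """Map each example index to (shard, slice): round-robin shards, near-equal sequential slices."""
    layout = {}
    for i in range(n_examples):
        shard = i % n_shards                                  # round-robin shard assignment
        pos_in_shard = i // n_shards                          # training order within the shard
        shard_size = len(range(shard, n_examples, n_shards))
        layout[i] = (shard, pos_in_shard * n_slices // shard_size)
    return layout


def retraining_plan(layout: Dict[int, Tuple[int, int]], deleted: Iterable[int]) -> Dict[int, int]:
    """For each affected shard, the earliest slice from which training must resume."""
    plan: Dict[int, int] = {}
    for i in deleted:
        shard, sl = layout[i]
        plan[shard] = min(sl, plan.get(shard, sl))
    return plan  # shards not in the plan keep their stored checkpoints


def aggregate(shard_predictions: List[int]) -> int:
    """Majority vote over shard-level class predictions (ties go to the smallest label)."""
    counts = Counter(shard_predictions)
    best = max(counts.values())
    return min(label for label, c in counts.items() if c == best)
```

In a full pipeline, each entry of the retraining plan would trigger reloading the checkpoint stored just before that slice and resuming training on the shard’s remaining data; shards absent from the plan are reused unchanged, which is where SISA’s savings come from, and also why widely dispersed deletions erode them.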

Gradient-based Ascent/Descent-to-Delete (D2D). D2D alternates (i) gradient ascent on $D_f$ to reduce fit to deleted samples and (ii) repair steps via gradient descent on $D_r$ to preserve utility:

$$\theta \leftarrow \theta + \eta_f \nabla_\theta \mathcal{L}(\theta; B_f), \qquad \theta \leftarrow \theta - \eta_r \nabla_\theta \mathcal{L}(\theta; B_r),$$

where $B_f \subset D_f$ and $B_r \subset D_r$ are mini-batches and $\eta_f$, $\eta_r$ are the forget and repair step sizes. We use one forget step followed by two repair batches per iteration, together with mixed-precision training and gradient clipping. To reduce catastrophic drift, we freeze the first Transformer block in the final configuration. This tractability choice implies that the method performs a constrained parameter edit and therefore targets functional unlearning (behavioral regression toward retraining) rather than exact parameter-level equivalence.
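The alternating update can be illustrated on a deliberately tiny, dependency-free toy: a single scalar parameter with a squared-error loss stands in for DistilBERT and cross-entropy, and the step sizes, clipping threshold, and iteration count are hypothetical. The schedule mirrors the one described above: one ascent step on a forget batch, then two repair steps on retain batches, with gradient clipping.

```python
def clip(g: float, max_norm: float) -> float:
    """Gradient clipping for a single scalar parameter (its norm is |g|)."""
    return max(-max_norm, min(max_norm, g))


def mse_loss(theta: float, batch) -> float:
    return sum((theta - x) ** 2 for x in batch) / len(batch)


def mse_grad(theta: float, batch) -> float:
    return sum(2.0 * (theta - x) for x in batch) / len(batch)


def ascent_descent_to_delete(theta, forget_batches, retain_batches,
                             lr_forget=0.05, lr_repair=0.25, max_norm=1.0, iters=20):
    """Per iteration: one ascent step on a forget batch, then two repair steps on retain batches."""
    for t in range(iters):
        b_f = forget_batches[t % len(forget_batches)]
        theta += lr_forget * clip(mse_grad(theta, b_f), max_norm)      # ascent on D_f
        for j in range(2):
            b_r = retain_batches[(2 * t + j) % len(retain_batches)]
            theta -= lr_repair * clip(mse_grad(theta, b_r), max_norm)  # descent (repair) on D_r
    return theta


if __name__ == "__main__":
    forget = [[5.0] * 5, [5.0] * 5]                       # toy D_f, two batches
    retain = [[-0.5, 0.5] * 5 for _ in range(3)]          # toy D_r, three batches
    all_f = [x for b in forget for x in b]
    all_r = [x for b in retain for x in b]
    theta_hat = sum(all_f + all_r) / len(all_f + all_r)   # ERM solution on D = D_r plus D_f
    theta_u = ascent_descent_to_delete(theta_hat, forget, retain)
    print(f"forget loss {mse_loss(theta_hat, all_f):.2f} -> {mse_loss(theta_u, all_f):.2f}")
    print(f"retain loss {mse_loss(theta_hat, all_r):.2f} -> {mse_loss(theta_u, all_r):.2f}")
```

Starting from the ERM solution on the full toy dataset, the loop raises the loss on the forget set while lowering it on the retain set. In a PyTorch implementation, freezing the first Transformer block would amount to calling `requires_grad_(False)` on its parameters before building the optimizer.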

Influence functions with Hessian approximation. We approximate the parameter change induced by removing $D_f$ using an influence-style update computed from the baseline parameters $\hat\theta$:

$$\theta_u = \hat\theta + \frac{1}{n}\,(H + \lambda I)^{-1} g_f,$$

where a sample $D_H \subset D_r$ is used to estimate curvature, $H = \frac{1}{|D_H|}\sum_{(x,y)\in D_H}\nabla^2_\theta\,\ell\bigl(f_{\hat\theta}(x), y\bigr)$, $g_f = \sum_{(x,y)\in D_f}\nabla_\theta\,\ell\bigl(f_{\hat\theta}(x), y\bigr)$, $n = |D|$, and $\lambda > 0$ is a Tikhonov damping coefficient. To make the method tractable for DistilBERT, updates are restricted to the classification head and the last Transformer blocks. This restriction makes the method an approximate editing baseline: it aims to match retrain behavior under a limited update budget and does not provide exact (parameter-wise) unlearning guarantees. The linear system is solved via conjugate gradient using Hessian–vector products and a diagonal preconditioner. Since second-order derivatives are required, we disable flash/memory-efficient attention kernels when necessary, which can increase wall-clock time; all methods are timed end-to-end under the same environment, so comparisons remain consistent.
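The linear solve at the core of the influence update can be sketched without an autodiff framework. The code below is a minimal, illustrative Jacobi-preconditioned conjugate-gradient solver for $(H + \lambda I)v = g_f$ in which $H$ is accessed only through a Hessian-vector product callback, which is how second-order information is typically obtained in practice (e.g., via double backpropagation). The $1/n$ scaling follows the classic influence-function removal approximation and, like the helper names, is an assumption of this sketch; a 2×2 matrix stands in for the restricted DistilBERT parameters.

```python
import math
from typing import Callable, List

Vec = List[float]


def dot(a: Vec, b: Vec) -> float:
    return sum(x * y for x, y in zip(a, b))


def pcg(hvp: Callable[[Vec], Vec], b: Vec, diag: Vec, damping: float,
        tol: float = 1e-10, max_iter: int = 200) -> Vec:
    """Solve (H + damping * I) v = b by Jacobi-preconditioned conjugate gradient.

    H is only accessed through hvp(v) = H v; diag estimates H's diagonal for the preconditioner.
    Stops when the residual norm drops below tol.
    """
    def apply_a(v: Vec) -> Vec:
        return [hv + damping * vi for hv, vi in zip(hvp(v), v)]

    m_inv = [1.0 / (d + damping) for d in diag]
    x = [0.0] * len(b)
    r = list(b)                                    # residual b - A x for x = 0
    if math.sqrt(dot(r, r)) <= tol:
        return x
    z = [mi * ri for mi, ri in zip(m_inv, r)]
    p = list(z)
    rz = dot(r, z)
    for _ in range(max_iter):
        ap = apply_a(p)
        alpha = rz / dot(p, ap)
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * api for ri, api in zip(r, ap)]
        if math.sqrt(dot(r, r)) <= tol:
            break
        z = [mi * ri for mi, ri in zip(m_inv, r)]
        rz_new = dot(r, z)
        p = [zi + (rz_new / rz) * pi for zi, pi in zip(z, p)]
        rz = rz_new
    return x


def influence_update(theta_hat: Vec, g_forget: Vec, n_train: int,
                     hvp: Callable[[Vec], Vec], diag: Vec, damping: float) -> Vec:
    """theta_u = theta_hat + (1/n) (H + damping * I)^{-1} g_f."""
    v = pcg(hvp, g_forget, diag, damping)
    return [t + vi / n_train for t, vi in zip(theta_hat, v)]
```

The damping term keeps the system positive definite even when the curvature estimate is nearly singular, and the diagonal preconditioner rescales directions with very different curvature, which typically reduces the number of Hessian-vector products (the expensive part) needed for convergence.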

Knowledge distillation (teacher–student unlearning). We implement a teacher–student unlearning procedure following the general paradigm of distillation-based removal. A clean teacher model is obtained via retraining on retained data ($\theta_r$, trained on $D_r$), and a student model is initialized from the baseline ($\hat\theta$). This variant is oracle-assisted, as it assumes access to a clean teacher trained without $D_f$; we use it as an upper-bound reference to quantify the best-case effectiveness of distillation given $\theta_r$.

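The student is then trained to match the teacher’s soft predictions on the retained set, using a KL divergence loss:

$$\mathcal{L}_{\mathrm{KD}} = \mathrm{KL}\Bigl(\mathrm{softmax}(z_t/T)\,\Big\|\,\mathrm{softmax}(z_s/T)\Bigr),$$

where $z_s$ and $z_t$ denote student and teacher logits and $T$ is a temperature parameter. In our experiments, we use a fixed temperature $T$ and perform a single epoch of distillation over train_retained.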
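The distillation objective can be written out for a single example as a small sketch. It computes $\mathrm{KL}(\mathrm{softmax}(z_t/T)\,\|\,\mathrm{softmax}(z_s/T))$ on plain Python lists; the function names are hypothetical, and in a real run the same quantity would be computed batch-wise on tensors over train_retained.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    scaled = [z / temperature for z in logits]
    m = max(scaled)                                   # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    s = sum(exps)
    return [e / s for e in exps]


def kd_loss(student_logits: Sequence[float], teacher_logits: Sequence[float], temperature: float) -> float:
    """KL(teacher || student) between temperature-softened class distributions."""
    p_t = softmax(teacher_logits, temperature)
    p_s = softmax(student_logits, temperature)
    return sum(pt * math.log(pt / ps) for pt, ps in zip(p_t, p_s) if pt > 0)


def batch_kd_loss(student_batch, teacher_batch, temperature: float) -> float:
    """Average distillation loss over a mini-batch drawn from train_retained."""
    losses = [kd_loss(s, t, temperature) for s, t in zip(student_batch, teacher_batch)]
    return sum(losses) / len(losses)
```

Many distillation implementations additionally multiply this loss by $T^2$ so that gradient magnitudes stay comparable across temperatures; whether that scaling is used here is not stated, so the sketch omits it.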

Weight Scrubbing. We implement Weight Scrubbing as a post hoc parameter repair mechanism that nudges the baseline weights toward a clean reference $\theta_r$. Here $\theta_r$ denotes the parameters of a retrained model on retained data ($D_r$), so this configuration should be interpreted as an oracle-assisted/upper-bound variant: it assumes access to a clean reference trained without $D_f$ and uses it to quantify the best-case effect of parameter restoration.

Given baseline parameters $\hat\theta$ and a clean reference $\theta_r$, we apply an exponential moving update:

$$\theta^{(t+1)} = (1 - \alpha)\,\theta^{(t)} + \alpha\,\theta_r, \qquad \theta^{(0)} = \hat\theta,$$

where $\alpha \in (0, 1]$ controls the strength of scrubbing. We use a fixed $\alpha$ and apply 10 scrubbing steps over the full parameter set.
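Because the scrubbing rule is a repeated convex combination, its effect has a closed form: after $t$ steps, $\theta^{(t)} - \theta_r = (1-\alpha)^t(\hat\theta - \theta_r)$. The sketch below applies the update to a dictionary of named parameter lists, a stand-in for a model’s state dict; the helper name and the value of $\alpha$ in the example are hypothetical.

```python
from typing import Dict, List

Params = Dict[str, List[float]]


def scrub(theta: Params, theta_ref: Params, alpha: float, steps: int) -> Params:
    """Repeat theta <- (1 - alpha) * theta + alpha * theta_ref for every reference tensor."""
    out = {name: list(values) for name, values in theta.items()}  # leave the baseline untouched
    for _ in range(steps):
        for name, ref in theta_ref.items():
            out[name] = [(1 - alpha) * w + alpha * r for w, r in zip(out[name], ref)]
    return out
```

For instance, with a hypothetical $\alpha = 0.2$, ten steps leave $0.8^{10} \approx 0.107$ of the original gap, i.e., roughly 89% of the distance to the clean reference is removed; $\alpha = 1$ would simply copy $\theta_r$ in one step.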

5.4. Evaluation Metrics

We evaluate unlearning outcomes on train_deleted, train_retained, and test_full along four dimensions: task utility, functional forgetting, privacy leakage (membership inference), and alignment to the retrain-from-scratch reference in output behavior, representations, and parameters.

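Utility and calibration. We report accuracy and negative log-likelihood (NLL) on each split $S$:

$$\mathrm{Acc}(S) = \frac{1}{|S|}\sum_{(x,y)\in S}\mathbb{1}\bigl[\arg\max_c p_\theta(c\mid x) = y\bigr], \qquad \mathrm{NLL}(S) = -\frac{1}{|S|}\sum_{(x,y)\in S}\log p_\theta(y\mid x).$$

To assess probabilistic quality, we apply temperature scaling on the validation set and compute Expected Calibration Error (ECE) using $M$ confidence bins $B_1, \dots, B_M$:

$$\mathrm{ECE}(S) = \sum_{m=1}^{M}\frac{|B_m|}{|S|}\,\bigl|\mathrm{acc}(B_m) - \mathrm{conf}(B_m)\bigr|,$$

where $\mathrm{acc}(B_m)$ and $\mathrm{conf}(B_m)$ are the accuracy and the mean top-class confidence of the examples whose confidence falls in bin $B_m$.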
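The calibration pipeline (fit a temperature on validation data, then measure ECE) can be sketched on plain Python lists. Two simplifications are assumptions of this sketch rather than details from the paper: the temperature is chosen by grid search over validation NLL instead of gradient-based optimization, and ECE uses $M = 15$ equal-width confidence bins. Helper names are hypothetical.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    m = max(logits)
    exps = [math.exp((z - m) / temperature) for z in logits]
    s = sum(exps)
    return [e / s for e in exps]


def nll(logits_batch, labels, temperature: float = 1.0) -> float:
    """Mean negative log-likelihood of the true labels after temperature scaling."""
    return -sum(math.log(softmax(z, temperature)[y]) for z, y in zip(logits_batch, labels)) / len(labels)


def ece(probs_batch, labels, n_bins: int = 15) -> float:
    """Expected Calibration Error with equal-width bins; bin m covers (m/M, (m+1)/M]."""
    bins = [[0, 0.0, 0.0] for _ in range(n_bins)]  # count, sum(correct), sum(confidence)
    for probs, y in zip(probs_batch, labels):
        conf = max(probs)
        pred = probs.index(conf)
        b = min(n_bins - 1, max(0, math.ceil(conf * n_bins) - 1))
        bins[b][0] += 1
        bins[b][1] += float(pred == y)
        bins[b][2] += conf
    n = len(labels)
    return sum(cnt / n * abs(corr / cnt - conf_sum / cnt) for cnt, corr, conf_sum in bins if cnt)


def fit_temperature(val_logits, val_labels, grid=None) -> float:
    """Temperature minimizing validation NLL, chosen by grid search (0.5 to 5.0 by default)."""
    grid = grid or [0.5 + 0.05 * i for i in range(91)]
    return min(grid, key=lambda t: nll(val_logits, val_labels, t))
```

A temperature above 1 softens overconfident predictions without changing the argmax, so accuracy is unaffected while NLL and ECE can improve; this is why scaled and unscaled ECE are reported separately.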

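Forgetting indicators. As a functional signal of forgetting, we compute the average cross-entropy loss on $D_f$ and $D_r$:

$$\mathcal{L}_f(\theta) = \frac{1}{|D_f|}\sum_{(x,y)\in D_f}\ell\bigl(f_\theta(x), y\bigr), \qquad \mathcal{L}_r(\theta) = \frac{1}{|D_r|}\sum_{(x,y)\in D_r}\ell\bigl(f_\theta(x), y\bigr),$$

and summarize deviations from retraining via $\Delta\mathcal{L}_f = \mathcal{L}_f(\theta_u) - \mathcal{L}_f(\theta_r)$ and $\Delta\mathcal{L}_r = \mathcal{L}_r(\theta_u) - \mathcal{L}_r(\theta_r)$ with respect to the retrained model $\theta_r$.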

Membership inference audit. We use loss-based membership inference as the primary privacy audit (other scores, such as entropy, maxprob, margin, and energy, follow the same trend in our setting). We consider MIA Deleted (members: train_deleted, non-members: test_full) and MIA Retained (members: train_retained, non-members: test_full). We report ROC curves and AUC; leakage is best summarized by how close the AUC is to 0.5, with values closer to 0.5 indicating a weaker membership signal. Because this benchmark operates in a near-chance MIA regime (AUC values close to 0.5), loss-based MIA is best interpreted as a detectability and sanity-check signal rather than as a standalone privacy guarantee. We discuss stronger attacker models and more demanding “stress-test” regimes (e.g., larger models, stronger overfitting, or representation-based MIAs) in Section 7.
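A loss-based attack treats low loss as evidence of membership, so its AUC equals the probability that a randomly chosen member has a lower loss than a randomly chosen non-member. The sketch below computes that probability with the rank-sum (Mann–Whitney) formula, counting ties as one half; the helper name is hypothetical, and the inputs would be per-example cross-entropy losses for, e.g., train_deleted (members) and test_full (non-members).

```python
from typing import List, Sequence


def mia_auc(member_losses: Sequence[float], nonmember_losses: Sequence[float]) -> float:
    """ROC AUC of a loss-threshold attack that flags low-loss examples as members."""
    # Attack score = negative loss; label 1 = member, 0 = non-member.
    scored = [(-l, 1) for l in member_losses] + [(-l, 0) for l in nonmember_losses]
    scored.sort(key=lambda item: item[0])
    ranks: List[float] = [0.0] * len(scored)
    i = 0
    while i < len(scored):                       # assign average 1-based ranks to tied scores
        j = i
        while j + 1 < len(scored) and scored[j + 1][0] == scored[i][0]:
            j += 1
        for k in range(i, j + 1):
            ranks[k] = (i + j) / 2 + 1
        i = j + 1
    n_pos, n_neg = len(member_losses), len(nonmember_losses)
    rank_sum_pos = sum(r for r, (_, is_member) in zip(ranks, scored) if is_member)
    return (rank_sum_pos - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)
```

An AUC of 0.5 means member and non-member losses are indistinguishable under this score, which is the near-chance regime described above; values above 0.5 indicate that members are systematically fitted better.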

Alignment to retraining (distributional and structural). To quantify similarity to the retrained model $f_{\theta_r}$, we measure: (i) Jensen–Shannon (JS) divergence between predicted class distributions, (ii) Top-$k$ behavioral disagreement based on Jaccard overlap, (iii) activation distances (L2 and cosine) on [CLS] hidden states, and (iv) relative weight shift (RSS). These measures are intended for relative comparison and should be interpreted in context. In general, lower values indicate closer alignment to retraining, but what constitutes “good enough” depends on the task and on the inherent variability of independent retraining runs (e.g., across random seeds). A principled acceptance criterion would compare a method’s distance-to-retrain against the retrain-to-retrain variability band; since our benchmark uses fixed seeds to enable controlled comparisons, we use these metrics primarily to rank methods and to flag large deviations, and we discuss seed-sensitivity as a threat to validity in Section 7.

For each input $x$, let $p(x)$ and $q(x)$ denote the softmax class-probability vectors from $f_{\theta_r}$ and the compared model, respectively; split-level values average over examples in the split $S$.

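JS divergence is computed as

$$\mathrm{JS}(p\,\|\,q) = \tfrac{1}{2}\,\mathrm{KL}(p\,\|\,m) + \tfrac{1}{2}\,\mathrm{KL}(q\,\|\,m), \qquad m = \tfrac{1}{2}(p + q),$$

and we report the split-level average $\overline{\mathrm{JS}}(S) = \frac{1}{|S|}\sum_{x\in S}\mathrm{JS}\bigl(p(x)\,\|\,q(x)\bigr)$.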
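The per-example JS divergence and its split-level average translate directly into code. The sketch below uses natural logarithms, so values lie in $[0, \ln 2]$; the logarithm base used in the paper is not stated, and the helper names are hypothetical.

```python
import math
from typing import Sequence


def kl(p: Sequence[float], q: Sequence[float]) -> float:
    """KL(p || q) in nats; terms with p_i = 0 contribute zero."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def js(p: Sequence[float], q: Sequence[float]) -> float:
    """Jensen-Shannon divergence between two class-probability vectors (0 <= JS <= ln 2)."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]   # m_i > 0 whenever p_i > 0 or q_i > 0
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


def mean_js(p_batch, q_batch) -> float:
    """Split-level average of per-example JS divergence (retrain vs. compared model)."""
    values = [js(p, q) for p, q in zip(p_batch, q_batch)]
    return sum(values) / len(values)
```

Unlike plain KL, JS is symmetric and always finite, because each distribution is compared with the mixture $m$ rather than with the other distribution directly; this makes it well suited to ranking methods whose output distributions may put near-zero mass on different classes.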

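For Top-$k$ agreement, let $T_k(x)$ and $\tilde{T}_k(x)$ denote the sets of the $k$ most probable classes under $p(x)$ and $q(x)$, respectively, and define

$$D_k(S) = \frac{1}{|S|}\sum_{x\in S}\left(1 - \frac{|T_k(x)\cap\tilde{T}_k(x)|}{|T_k(x)\cup\tilde{T}_k(x)|}\right).$$

For $k = 1$, $D_k$ reduces to the Top-1 mismatch rate.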
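The Top-$k$ disagreement is a Jaccard distance between two sets of class indices, averaged over a split. In the sketch below (hypothetical names), ties in probability are broken by the lower class index, which is an assumption; for $k = 1$ the result is simply the fraction of inputs whose predicted class differs.

```python
from typing import List, Sequence, Set


def top_k(probs: Sequence[float], k: int) -> Set[int]:
    """Indices of the k largest probabilities (ties broken by lower class index)."""
    order = sorted(range(len(probs)), key=lambda c: (-probs[c], c))
    return set(order[:k])


def topk_disagreement(p_batch: List[Sequence[float]], q_batch: List[Sequence[float]], k: int) -> float:
    """Average Jaccard distance between the top-k class sets of two models."""
    total = 0.0
    for p, q in zip(p_batch, q_batch):
        a, b = top_k(p, k), top_k(q, k)
        total += 1.0 - len(a & b) / len(a | b)
    return total / len(p_batch)
```

With four AG News classes, $k = 2$ already captures whether the two models agree on the plausible alternatives, not only on the winner, which is a finer behavioral signal than Top-1 mismatch.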

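For representation alignment, let $h_\ell(x)$ and $\tilde{h}_\ell(x)$ be the [CLS] hidden states at layer $\ell$ (pre-classifier) of $f_{\theta_r}$ and of the compared model, respectively. We compute

$$d_{L2}(x) = \bigl\|h_\ell(x) - \tilde{h}_\ell(x)\bigr\|_2, \qquad d_{\cos}(x) = 1 - \frac{\langle h_\ell(x), \tilde{h}_\ell(x)\rangle}{\|h_\ell(x)\|_2\,\|\tilde{h}_\ell(x)\|_2},$$

and average over $x \in S$. Unless otherwise stated, we report distances at the last Transformer layer ($\ell = L$, with $L = 6$ for DistilBERT), and optionally provide layer-wise profiles.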
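Both representation distances can be computed per example and then averaged, as in the sketch below. The [CLS] vectors are plain lists here, whereas in practice they would be the layer-$\ell$ hidden states extracted from both models on the same inputs; helper names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def l2_and_cosine(h: Sequence[float], h_tilde: Sequence[float]) -> Tuple[float, float]:
    """L2 distance and cosine distance (1 - cosine similarity) between two [CLS] vectors."""
    diff = math.sqrt(sum((a - b) ** 2 for a, b in zip(h, h_tilde)))
    norm_h = math.sqrt(sum(a * a for a in h))
    norm_t = math.sqrt(sum(b * b for b in h_tilde))
    cos = sum(a * b for a, b in zip(h, h_tilde)) / (norm_h * norm_t)
    return diff, 1.0 - cos


def mean_distances(H: List[Sequence[float]], H_tilde: List[Sequence[float]]) -> Tuple[float, float]:
    """Split-level averages of the L2 and cosine distances."""
    pairs = [l2_and_cosine(a, b) for a, b in zip(H, H_tilde)]
    n = len(pairs)
    return sum(p[0] for p in pairs) / n, sum(p[1] for p in pairs) / n
```

The two distances are complementary: cosine distance ignores a pure rescaling of the representation (the second pair in a rescaled direction has cosine distance 0 but nonzero L2 distance), so reporting both separates changes in direction from changes in magnitude.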

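Finally, parameter drift is quantified via the relative weight shift

$$\mathrm{RSS} = \frac{\|\theta - \theta_r\|_2}{\|\theta_r\|_2}, \qquad \mathrm{RSS}_g = \frac{\|\theta_g - \theta_{r,g}\|_2}{\|\theta_{r,g}\|_2},$$

where $\theta$ and $\theta_r$ denote the full parameter vectors of the compared and retrained models, and $g$ a parameter group (e.g., embeddings, encoder blocks, classifier head).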
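Assuming RSS is the relative L2 norm of the parameter difference as written above, it can be computed over all tensors or restricted to groups. In the sketch below, parameters are dictionaries mapping tensor names to flat lists (a stand-in for flattened `state_dict()` entries), and both the helper names and the grouping of tensor names into embeddings or classifier are hypothetical examples.

```python
import math
from typing import Dict, Iterable, List, Optional

Params = Dict[str, List[float]]


def _norm(values: Iterable[float]) -> float:
    return math.sqrt(sum(v * v for v in values))


def rss(theta: Params, theta_ref: Params, names: Optional[Iterable[str]] = None) -> float:
    """Relative weight shift ||theta - theta_ref|| / ||theta_ref|| over the selected tensors."""
    names = list(theta_ref) if names is None else list(names)
    diff = [a - b for n in names for a, b in zip(theta[n], theta_ref[n])]
    ref = [b for n in names for b in theta_ref[n]]
    return _norm(diff) / _norm(ref)


def rss_by_group(theta: Params, theta_ref: Params, groups: Dict[str, List[str]]) -> Dict[str, float]:
    """RSS restricted to named parameter groups, e.g. embeddings / encoder / classifier."""
    return {g: rss(theta, theta_ref, names) for g, names in groups.items()}
```

Normalizing by $\|\theta_r\|_2$ makes groups of very different sizes comparable, so a large value for the classifier head alongside small values for the embeddings directly reflects the "boundary readjustment" pattern rather than a difference in parameter count.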

6. Benchmark Evaluation

We first compare the baseline model, fine-tuned on the training pool $D$, against the retrained model trained only on retained data ($D_r$). This baseline-vs-retrain comparison establishes the intrinsic impact of removing the forget set on both utility and model behavior. Since baseline training includes $D_f$ (i.e., $D = D_r \cup D_f$), the two models differ only by whether the deleted samples are present during training.

Table 2 reports task performance (accuracy), probabilistic quality (NLL/log-loss), and calibration (ECE with and without temperature scaling) on train_deleted, train_retained, and test_full, together with wall-clock training time and the differences between the retrained and baseline models.

Retraining on $D_r$ is faster than training the baseline on $D$, consistent with the reduced number of training instances after removal. In our setup, retraining requires about 25% less time (Table 2) and therefore provides the exact runtime reference against which the efficiency of unlearning procedures is evaluated.

On train_deleted, the baseline model shows the expected advantage because it has been trained on $D_f$. In contrast, the retrained model exhibits a systematic reduction in predictive fit on deleted samples, with a drop in accuracy and corresponding increases in NLL/log-loss (Table 2). Notably, after temperature scaling, ECE values become nearly indistinguishable, suggesting that deletion mainly reduces task-specific fit on forgotten points rather than broadly degrading probabilistic reliability.

On train_retained, differences remain small: accuracy and NLL change marginally, and temperature-scaled calibration is comparable (or slightly improved) for the retrained model. On test_full, retraining yields negligible variations in accuracy and calibration, indicating that removing $D_f$ does not materially affect out-of-sample generalization in this setting (Table 2).

Figure 4 summarizes the (raw) test accuracy comparison between baseline and retrain on test_full.

Beyond aggregate metrics, distributional and structural indicators confirm that baseline and retrained models remain close overall (Table 3). Output-probability divergence is modest and is more pronounced on train_deleted than on the test set, consistent with the localized impact of removing $D_f$. For representation- and parameter-level indicators (activation distance, RSS weight change, and classifier share), we report a single global value computed on a fixed probe (hence the spanning entries in Table 3). Parameter-level analyses further show that changes concentrate in task-specific components (especially the classification head and upper layers), while early representations remain comparatively stable, a pattern consistent with a boundary readjustment rather than a disruption of general-purpose features.

A per-class inspection does not reveal systematic shifts in confusion patterns or class-specific degradation; observed deviations remain limited and broadly uniform across classes.

6.1. Comparison Across Unlearning Methods

This section compares representative unlearning strategies against the retrain-from-scratch reference, which approximates the desired behavior after removing $D_f$. Results are organized by (i) forgetting/utility on train_deleted and train_retained, (ii) generalization and calibration on test_full, and (iii) privacy-oriented audits via membership inference.

6.1.1. Performance on Deleted and Retained Sets

We evaluate each method on the forget split (train_deleted) and on the retained split (train_retained). Retraining defines a natural target: on train_deleted it reflects the expected degradation once $D_f$ is absent from training, while on train_retained it approximates the utility of a clean model trained only on $D_r$.

Table 4 highlights that the post hoc methods (Ascent/Descent-to-Delete, Influence/Hessian, Knowledge Distillation, Weight Scrubbing) preserve retained utility well, matching or slightly improving upon retraining on train_retained. However, they remain systematically too strong on train_deleted (higher accuracy and lower NLL than retraining), which is consistent with under-forgetting: residual information about $D_f$ still influences the model. SISA behaves qualitatively differently, with a pronounced degradation on both deleted and retained splits and extremely large raw NLL values that are substantially reduced after temperature scaling, suggesting reduced stability and/or overly aggressive removal effects in the adopted configuration. We therefore emphasize that SISA’s utility/forgetting profile can be sensitive to the adopted shard/slice setting and to deletion dispersion.

6.1.2. Performance and Calibration

We next assess generalization and probabilistic quality on the disjoint test_full split. The main goal is to verify whether unlearning preserves task utility (accuracy) and whether it introduces calibration shifts (NLL/log-loss and ECE), relative to retraining as the clean reference.

As shown in Table 5, approximate post hoc methods maintain test accuracy and NLL close to retraining, and calibration remains stable overall. Temperature scaling further compresses ECE into a narrow range across methods, indicating that most differences are limited to confidence re-scaling rather than fundamental changes in predictive behavior. A notable exception is Ascent/Descent-to-Delete, which shows a higher uncalibrated ECE, consistent with targeted parameter updates affecting confidence patterns and benefiting from explicit calibration.

SISA exhibits a markedly different profile: substantially lower accuracy and very large raw NLL, while temperature scaling sharply reduces NLL and restores low ECE. This illustrates that calibration can improve even when predictive performance remains degraded, i.e., calibration and accuracy may decouple under strong distributional or ensemble-induced shifts.

Figure 5 summarizes the raw test accuracy of each unlearning method on test_full relative to the retraining reference.

6.1.3. Membership Inference and Privacy Indicators

To probe privacy leakage, we perform threshold-based membership inference using loss-derived scores and report ROC/AUC, average precision (AP), and FPR at fixed TPR (0.90 and 0.95). We consider two complementary settings: MIA Deleted, where members are train_deleted and non-members are test_full (the core adversarial forgetting audit), and MIA Retained, where members are train_retained and non-members are test_full (a control to detect pathological side effects on retained data).

Table 6 shows that AUC values are close to 0.5 in both settings, indicating weak discriminability overall in this experimental regime. Differences between retraining and post hoc methods are small, and no method produces a clear increase in membership leakage relative to the retraining reference. SISA yields the flattest membership signal (AUC closest to 0.5), consistent with stronger smoothing/removal effects, but this must be interpreted jointly with its lower utility and higher instability observed in task metrics. Given the small absolute AUC differences, these MIA results primarily function as a safety-consistency check: method selection in this setup is more strongly driven by the utility/forgetting trade-off on deleted vs. retained splits and by behavioral/structural alignment to retraining.

Since the ROC curves nearly overlap and AUC differences are within a few thousandths, we inspect the differential signal $\Delta\mathrm{TPR}(\mathrm{FPR}) = \mathrm{TPR}_{\text{method}}(\mathrm{FPR}) - \mathrm{TPR}_{\text{retrain}}(\mathrm{FPR})$, so that retraining lies on the horizontal axis ($\Delta\mathrm{TPR} = 0$). Positive values indicate a slightly more effective attacker than retraining at the same FPR, while negative values indicate a weaker attacker (closer to random).
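The differential signal can be computed directly from the two sets of attack scores. In this sketch, which is an assumption about the exact procedure, TPR at a given FPR is the best TPR over observed thresholds whose FPR does not exceed it. ΔTPR is then the method's value minus the retrain value on the same FPR grid. Scores are attack scores (e.g., negative losses), with higher meaning "more likely a member". The function names are hypothetical.

```python
from typing import List, Sequence


def tpr_at_fpr(pos: Sequence[float], neg: Sequence[float], max_fpr: float) -> float:
    """Best TPR over thresholds t with FPR(t) <= max_fpr; a score >= t flags a member."""
    best = 0.0
    for t in sorted(set(pos) | set(neg)) + [float("inf")]:
        fpr = sum(n >= t for n in neg) / len(neg)
        if fpr <= max_fpr:
            best = max(best, sum(p >= t for p in pos) / len(pos))
    return best


def delta_tpr(
    method_pos: Sequence[float],
    method_neg: Sequence[float],
    retrain_pos: Sequence[float],
    retrain_neg: Sequence[float],
    fpr_grid: Sequence[float],
) -> List[float]:
    """TPR of the unlearned model minus TPR of the retrain reference at equal FPR."""
    return [
        tpr_at_fpr(method_pos, method_neg, a) - tpr_at_fpr(retrain_pos, retrain_neg, a)
        for a in fpr_grid
    ]
```

Plotting these differences against the FPR grid places retraining on the zero line, which makes deviations of a few thousandths visible where raw ROC curves overlap.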

Figure 6 (MIA Deleted) shows that all methods remain confined to a narrow band, with small oscillations around zero across the full FPR range. Baseline, Influence/Hessian, Ascent/Descent-to-Delete, and Weight Scrubbing exhibit mildly positive deviations, especially at medium–high FPR (≳0.5), where small peaks indicate that the attacker can identify deleted points only marginally better than under retraining. Knowledge Distillation stays closest to zero overall, indicating the strongest alignment to retraining in terms of membership signal on the forget set. SISA is predominantly negative, bringing the ROC slightly closer to the random diagonal, i.e., a weaker attacker than retraining on deleted instances. Overall, the deviations confirm that no method introduces a marked increase in membership leakage, and the Influence/Hessian curve closely tracks the baseline, consistent with the absence of measurable forgetting under this MIA audit.

Figure 7 (MIA Retained) provides the complementary control: we seek methods that remain close to retraining without systematically collapsing the membership signal on retained data. Baseline, Ascent/Descent-to-Delete, Influence/Hessian, Weight Scrubbing, and Knowledge Distillation oscillate around zero within a tight range (about ±0.006), with occasional mildly positive deviations at high FPR (≳0.6), suggesting that the attacker’s ability to recognize retained members remains essentially unchanged (or slightly higher) compared to retraining, i.e., informative structure is preserved on legitimate data. In contrast, SISA shows predominantly negative deviations, most pronounced at medium–high FPR, indicating a weaker attacker also on retained points. This is consistent with a stronger but less selective smoothing effect, which aligns with its lower utility profile.

6.1.4. Distributional and Structural Analysis

Beyond utility and privacy metrics, we assess whether unlearned models behaviorally match the retrain-from-scratch reference not only in terms of predictions, but also in terms of (i) distributional similarity of output probabilities and (ii) structural similarity of internal representations and parameters. The goal is to quantify how closely each method realigns to retraining and to identify where this realignment occurs along the model architecture.

Table 7 summarizes the main indicators with respect to retraining. Lower values indicate closer agreement.

Overall, the most effective post hoc approximate methods exhibit a tight distributional alignment to retraining: output-probability divergences remain small on test_full and train_retained, with a consistent (but limited) increase on train_deleted. This pattern indicates that the confidence profile remains close to the clean reference while still reflecting the absence of the deleted samples. In contrast, SISA yields substantially larger distributional shifts, suggesting a broader displacement of the decision geometry that is not confined to the forget set.
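The section does not name the specific divergence behind Table 7. As an illustration only, the sketch below uses the Kullback-Leibler and Jensen-Shannon divergences between the per-example output distributions of an unlearned model and the retrain reference, averaged over a split. JS is the default here because it is symmetric and bounded by ln 2. The function names are hypothetical.

```python
import math
from typing import Callable, Sequence


def kl(p: Sequence[float], q: Sequence[float], eps: float = 1e-12) -> float:
    """KL(p || q) in nats; terms with p_i = 0 contribute nothing."""
    return sum(pi * math.log(pi / max(qi, eps)) for pi, qi in zip(p, q) if pi > 0)


def js(p: Sequence[float], q: Sequence[float]) -> float:
    """Jensen-Shannon divergence (natural log): symmetric and bounded by ln 2."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


def mean_divergence(
    unlearned: Sequence[Sequence[float]],
    retrain: Sequence[Sequence[float]],
    fn: Callable[[Sequence[float], Sequence[float]], float] = js,
) -> float:
    """Average per-example divergence between two models' outputs on the same split."""
    return sum(fn(p, q) for p, q in zip(unlearned, retrain)) / len(unlearned)
```

Evaluating `mean_divergence` separately on test_full, train_retained, and train_deleted yields the per-split comparison described above.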

Structural indicators provide a coherent explanation for this behavior. The behavioral disagreement measure (Top-k overlap with retrain) shows that post hoc methods preserve local decision consistency: predictions largely coincide with the retrain reference, and disagreement increases only mildly as k grows. Representation-level distances further indicate a characteristic layerwise trend: discrepancies are minimal in early layers and increase toward deeper, task-specific layers. For the methods most aligned with retraining, this profile remains stable across splits, consistent with a controlled readjustment rather than split-specific representational drift. SISA preserves the same monotonic depth trend but at uniformly higher distances, consistent with a more global representational shift.
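One plausible reading of the Top-k overlap measure is the fraction of classes shared by the two models' top-k predictions, averaged over examples, with disagreement defined as one minus that fraction. The exact definition is not given here, so this is an assumption, and the function names are hypothetical.

```python
from typing import Sequence, Set


def top_k(probs: Sequence[float], k: int) -> Set[int]:
    """Indices of the k highest-probability classes (ties broken by class index)."""
    return set(sorted(range(len(probs)), key=lambda i: (-probs[i], i))[:k])


def top_k_disagreement(
    unlearned: Sequence[Sequence[float]], retrain: Sequence[Sequence[float]], k: int
) -> float:
    """1 - mean |top-k(unlearned) ∩ top-k(retrain)| / k over a split."""
    overlap = sum(len(top_k(p, k) & top_k(q, k)) / k for p, q in zip(unlearned, retrain))
    return 1.0 - overlap / len(unlearned)
```

With k = 1 this reduces to the plain prediction-disagreement rate. Larger k also compares how the two models rank the less likely classes.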

Finally, weight-space analyses confirm that post hoc updates induce limited overall parameter drift, yet the change is highly concentrated in task-specific components (most notably the classification head and upper layers), while embeddings and early layers remain comparatively stable. This concentration is consistent with a decision-boundary adjustment mechanism that preserves general-purpose representations. Conversely, the larger structural distances observed for SISA are compatible with broader parameter shifts driven by its training and partitioning scheme, rather than targeted and localized forgetting.
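The concentration of change in the classification head and upper layers can be quantified with a per-component relative drift. This sketch assumes that parameters are available as flat lists keyed by name, and that a caller-supplied `group_of` function maps each name to a component. The grouping rule and any parameter names are hypothetical and are not DistilBERT's actual naming scheme.

```python
import math
from collections import defaultdict
from typing import Callable, Dict, List


def relative_drift_by_group(
    before: Dict[str, List[float]],
    after: Dict[str, List[float]],
    group_of: Callable[[str], str],
) -> Dict[str, float]:
    """Relative L2 change ||w_after - w_before|| / ||w_before|| aggregated per parameter group."""
    diff_sq: Dict[str, float] = defaultdict(float)
    ref_sq: Dict[str, float] = defaultdict(float)
    for name, w0 in before.items():
        w1 = after[name]
        g = group_of(name)
        diff_sq[g] += sum((a - b) ** 2 for a, b in zip(w1, w0))
        ref_sq[g] += sum(b * b for b in w0)
    # Guard against all-zero reference groups.
    return {g: math.sqrt(diff_sq[g] / max(ref_sq[g], 1e-24)) for g in ref_sq}
```

Normalizing by the original norm makes the drift of small components (such as a classification head) comparable to that of large ones (such as embeddings).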

6.2. Efficiency Analysis

We evaluate the computational efficiency of unlearning methods in terms of wall-clock time, with the goal of quantifying the practical time-to-unlearn relative to the retrain-from-scratch reference. In operational settings, the feasibility of compliance-driven deletion depends not only on how well a method forgets, but also on how quickly an updated model can be produced after a deletion request.

Under identical architecture, hyperparameters, and hardware, retraining on the retained data (train_retained) is faster than baseline training on the full training set because it operates on a smaller dataset. In our setup, baseline training takes about 710 s, while retraining takes about 540 s (roughly 24% less time). We treat this retraining runtime as the exact time reference for deletion via full retraining, and as the primary benchmark for comparing alternative unlearning strategies.

All approximate methods that reuse the baseline model as a starting point are faster than monolithic retraining (Table 8). The gradient-based update (Ascent/Descent-to-Delete) is the most time-efficient, reducing wall-clock time to roughly 300 s (about 44% less than retraining). Influence/Hessian follows at around 360 s (about 33% less), while Weight Scrubbing and Knowledge Distillation require about 380–395 s (about 27% to 30% less). Overall, these results indicate that the deletion cycle can typically be accelerated by approximately 20–45% relative to retraining, at the cost of potential trade-offs in forgetting effectiveness that must be assessed jointly with utility and privacy metrics.
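The relative savings quoted above follow from simple ratios against the 540 s retraining time. The sketch below recomputes them from the approximate runtimes reported in the text. Weight Scrubbing and Knowledge Distillation are only reported jointly as 380–395 s, so the two ends of that range are listed instead of per-method values. The names in the snippet are illustrative.

```python
RETRAIN_S = 540.0  # approximate retrain-from-scratch wall-clock time reported in the text

# Approximate runtimes from the text; Scrubbing/KD is reported only as a 380-395 s range.
runtimes_s = {
    "Ascent/Descent-to-Delete": 300.0,
    "Influence/Hessian": 360.0,
    "Scrubbing/KD (low end)": 380.0,
    "Scrubbing/KD (high end)": 395.0,
}


def relative_to_retrain(t: float, ref: float = RETRAIN_S) -> dict:
    """Speed-up factor and fractional time saving of a method versus retraining."""
    return {"speedup": ref / t, "time_saved": 1.0 - t / ref}


for name, t in runtimes_s.items():
    r = relative_to_retrain(t)
    print(f"{name:26s} {r['speedup']:.2f}x faster, {r['time_saved']:.0%} less time")
```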

SISA introduces a qualitatively different efficiency profile. By design, it supports exact unlearning through sharding/slicing and selective retraining, but it incurs a substantial upfront overhead in the initial ensemble training phase. In our configuration, SISA training without deletions takes about 1290 s compared to about 710 s for monolithic baseline training (approximately 1.8×). This overhead is driven by multiple training phases across shards/slices and by orchestration costs (repeated checkpointing, data loader reconstruction, and training-loop re-initialization).

At unlearning time, SISA efficiency strongly depends on how many instances are removed and how dispersed they are across shards/slices. For a small, localized request (e.g., deleting 4 instances), SISA remains competitive (about 435 s, i.e., roughly 19% less than retraining). For a large, diffuse request (e.g., deleting ∼30k instances), many components are affected and selective retraining approaches a broad retraining workload: runtime increases to about 980 s (about 1.8× the retraining time), becoming more expensive than monolithic retraining. Operationally, this suggests that SISA is most suitable for continuous, incremental, small-scale deletions, whereas its advantage diminishes (and may reverse) under batch deletions affecting large portions of the training data.

SISA efficiency is also influenced by the configuration. Increasing the number of shards S and/or slices R increases the number of checkpoints approximately linearly, amplifying storage and I/O overhead. With the adopted (S, R) setting, the initial training produces 24 checkpoints (about 6.5 GB), while a worst-case unlearning scenario can require roughly double (about 48 checkpoints, ∼13 GB). More aggressive configurations quickly lead to dozens or hundreds of checkpoints, which can become a practical bottleneck under strict storage/throughput constraints or highly distributed deletion patterns.

From an efficiency standpoint, three conclusions emerge. First, when immediate speed-ups on an already trained model are required, lightweight post hoc editing methods (especially gradient-based updates and scrubbing) are typically the most economical. Second, Influence/Hessian can remain time-competitive with retraining, but comes with higher implementation complexity and stricter computational requirements. Third, SISA can be advantageous in systems engineered for frequent small deletions, yet may become slower than retraining for large-scale removals or overly aggressive shard/slice configurations.
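The dependence of SISA's cost on deletion dispersion can be illustrated with a simplified counting model. This sketch assumes that each deleted sample is identified by its (shard, slice) position, and that an affected shard is retrained from its earliest affected slice onward, resuming from the preceding checkpoint. Every slice step is assigned unit cost. Real SISA slices train on cumulative data and incur the orchestration overhead described above, so the model captures only the counting argument. The function and field names are hypothetical, and the small configurations in any example are illustrative rather than the benchmark's setting.

```python
from typing import Dict, Iterable, Tuple


def sisa_unlearning_cost(
    deleted: Iterable[Tuple[int, int]], num_shards: int, num_slices: int
) -> Dict[str, int]:
    """Count slice-level retraining steps triggered by deletions (unit cost per step).

    An affected shard is retrained from the earliest slice that contained a deleted
    sample, resuming from the checkpoint saved just before it; untouched shards are reused.
    """
    earliest: Dict[int, int] = {}
    for shard, sl in deleted:
        if not (0 <= shard < num_shards and 0 <= sl < num_slices):
            raise ValueError(f"invalid location ({shard}, {sl})")
        earliest[shard] = min(sl, earliest.get(shard, num_slices))
    return {
        "checkpoints": num_shards * num_slices,  # one stored checkpoint per (shard, slice)
        "affected_shards": len(earliest),
        "slice_steps": sum(num_slices - first for first in earliest.values()),
        "full_retrain_steps": num_shards * num_slices,
    }
```

A single deletion in the last slice of one shard costs one slice step. Deletions that reach the first slice of every shard cost as much as retraining the whole ensemble, which mirrors the localized versus diffuse contrast observed above.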

7. Discussion

Our results highlight a fundamental three-way tension in practical machine unlearning: preserving utility on legitimate data, achieving effective forgetting and privacy that approach those of a model trained without the deleted data, and satisfying efficiency constraints such as time-to-unlearn and operational overhead. No evaluated method simultaneously optimizes all three dimensions. Instead, approaches occupy distinct positions in this trade-off space, suggesting that unlearning is better framed as a deployment decision conditioned on the required assurance level and the expected deletion regime, rather than as a single universally best algorithmic choice.

Retraining-from-scratch remains the most reliable behavioral reference for deletion, since the model never observes the deleted samples and therefore provides a natural target response on train_deleted while maintaining stable generalization on test_full (Table 2). This makes retraining a defensible anchor for assessing whether unlearning has succeeded in practice, as it offers a concrete notion of what “forgotten” should look like at the level of model behavior. Its limitation is primarily operational: full retraining can be costly and may become impractical under frequent requests, strict latency constraints, or larger model scales, motivating approximate strategies that aim to approach retrain behavior at reduced cost.

Single-model post hoc methods (gradient-based editing, distillation, scrubbing, influence-style updates) offer substantial runtime advantages (Table 8) and, in many cases, preserve retained and test utility, as well as calibration relative to retraining (Table 4 and Table 5). However, a recurring pattern is that these methods can remain more predictive on train_deleted than the retrain reference, with higher accuracy and lower NLL. This is consistent with under-forgetting: rather than fully removing evidence associated with the deleted data, the update may predominantly readjust decision boundaries while leaving residual information in the representation. Distributional and structural analyses are aligned with this interpretation: the strongest post hoc approaches remain close to retraining overall, yet the induced changes are concentrated in task-specific components (Table 7), suggesting targeted interventions that preserve utility but may offer weaker assurances when judged against retraining.

SISA exhibits a qualitatively different trade-off profile. By sharding and slicing training, it enables composable deletion through selective retraining, which is particularly attractive when deletion requests are small and localized. At the same time, the extent to which SISA approximates the retrain-from-scratch reference is influenced by the sharding/slicing configuration and by the optimization dynamics within each shard. In our setting, we find that some configurations yield outcomes that are less closely aligned with retraining, even though SISA is designed to support near-exact unlearning. This effect is more evident under large, class-balanced deletions dispersed across many shards/slices: more components are affected, selective retraining becomes widespread, and the expected locality benefits are reduced, with additional variability introduced by repeated retraining across multiple components.

This design also introduces non-trivial upfront overhead and sensitivity to deletion granularity. Empirically, SISA can be competitive for limited removals, but becomes more expensive than monolithic retraining when deletions are large or widely distributed (Table 8). In our configuration, it is also associated with larger deviations from the retrain reference and a broader impact on predictive performance (Table 5 and Table 7), suggesting weaker separation between deleted and retained effects under this deletion regime. Finally, while temperature scaling improves calibration, it does not eliminate the utility gap, illustrating that calibration and predictive performance can decouple after substantial procedural changes.

Taken together, these outcomes suggest that deployable unlearning should be evaluated through an acceptance protocol rather than any single metric. Approximate methods are compelling when low latency is critical, but they should be adopted only when they satisfy all of the following: (i) retained and test utility remain within predefined tolerances, (ii) behavior on deleted samples regresses toward retrain-level performance, and (iii) distributional and structural alignment with retraining is preserved. In this context, membership inference provides supporting evidence rather than a standalone guarantee. In our setting, loss-based MIAs are generally weak, with AUC values close to 0.5 across methods (Table 6), and are therefore best interpreted as safety-consistency checks. This is compatible with limited separation between train and test losses on this task and with the attacker being score-only; stronger attackers (e.g., representation-based) may yield a clearer signal. Stronger assurance is more likely to be achieved by combining privacy audits with behavioral regression on deleted data and complementary alignment diagnostics.
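Criteria (i) to (iii) can be expressed as a small acceptance gate. This is a hypothetical sketch. The tolerance values, the field names, and the use of a single test_full divergence as the alignment indicator are illustrative assumptions. MIA results are deliberately kept out of the gate, in line with their role as supporting evidence.

```python
from dataclasses import dataclass


@dataclass
class Metrics:
    acc_retained: float
    acc_test: float
    acc_deleted: float
    div_test: float  # output-distribution divergence to the retrain reference on test_full


@dataclass(frozen=True)
class Tolerances:  # illustrative values, not taken from the benchmark
    utility_drop: float = 0.01
    deleted_excess: float = 0.01
    divergence: float = 0.02


def accept(candidate: Metrics, retrain: Metrics, tol: Tolerances = Tolerances()) -> dict:
    """Apply criteria (i)-(iii); MIA results are reported alongside, not used as a gate."""
    checks = {
        # (i) retained and test utility within tolerance of the retrain reference
        "utility": (
            candidate.acc_retained >= retrain.acc_retained - tol.utility_drop
            and candidate.acc_test >= retrain.acc_test - tol.utility_drop
        ),
        # (ii) deleted-set accuracy has regressed to (roughly) the retrain level
        "forgetting": candidate.acc_deleted <= retrain.acc_deleted + tol.deleted_excess,
        # (iii) distributional alignment with retraining
        "alignment": candidate.div_test <= tol.divergence,
    }
    checks["accepted"] = all(checks.values())
    return checks
```

Criterion (ii) is what flags the under-forgetting pattern: a candidate that is still markedly more accurate than retraining on train_deleted fails it, even when utility and alignment pass.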

Our benchmark is intentionally controlled: it focuses on supervised text classification (DistilBERT on AG News), a single deletion scale and construction (class-balanced ∼30k deletion), and a fixed execution environment. Additionally, some approximate methods in the benchmark (e.g., Influence/Hessian and gradient-based editing) constrain updates to late layers or the classifier head for tractability; this should be read as targeting functional unlearning rather than guaranteeing exact parameter-level “true unlearning”. Consequently, absolute results should not be over-generalized to other regimes. Different deletion schedules (streaming vs. batch, repeated requests), different targets (instance-, class-, concept-, or client-level deletion), and larger architectures and pre-training settings may amplify or shift the observed trade-offs. Moreover, the experiments rely on fixed random seeds to ensure controlled comparisons across methods; however, retraining outcomes in deep models can vary across seeds due to stochastic optimization and initialization. As a result, distances between an unlearned model and the retraining reference should ideally be interpreted relative to the variability observed across independent retraining runs. We therefore treat seed sensitivity as a potential threat to validity and leave systematic multi-seed evaluation as an extension of the benchmark. In addition, a near-random MIA regime limits the discriminative power of privacy auditing in this setup, motivating stronger attacker models and complementary leakage tests. In particular, when baseline leakage is weak (AUC close to 0.5), incremental improvements due to unlearning may be difficult to detect statistically using score-only attacks. 
Future benchmark extensions should therefore include settings that induce more detectable memorization (e.g., stronger overfitting, larger models, or more difficult generalization regimes) together with stronger MIAs (e.g., representation-based or likelihood-ratio-style attacks) to stress-test privacy claims.
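Interpreting a distance to the retraining reference relative to seed variability could look like the following sketch. It assumes that distances between pairs of independently seeded retraining runs are available. The function name and the choice of a ratio plus a z-score are assumptions, not part of the benchmark.

```python
import statistics
from typing import Dict, Sequence


def seed_relative_distance(d_method: float, d_retrain_pairs: Sequence[float]) -> Dict[str, float]:
    """Express a method-to-retrain distance relative to retrain-vs-retrain variability."""
    mu = statistics.mean(d_retrain_pairs)
    if mu <= 0:
        raise ValueError("seed-to-seed distances must have a positive mean")
    sd = statistics.stdev(d_retrain_pairs) if len(d_retrain_pairs) > 1 else 0.0
    return {
        # ratio <= 1: no farther from retraining than a differently seeded retrain
        "ratio": d_method / mu,
        "z": (d_method - mu) / sd if sd > 0 else float("nan"),
    }
```

A method whose ratio stays near or below 1 is indistinguishable from retraining at the resolution allowed by seed noise; a ratio well above 1 indicates a genuine deviation.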

Several concrete directions emerge from the review and the benchmark evidence. First, standardized benchmarks should move beyond single-shot deletion by varying deletion granularity (instance/class/concept), deletion scale, and deletion schedule (single-shot vs. repeated requests), while reporting utility, calibration, and compute budgets under consistent protocols. This is especially important for design-for-unlearning methods, whose efficiency depends on how deletions are dispersed across shards and slices. Second, privacy auditing should be strengthened: when loss-based MIA operates near chance, differences between methods can be masked, so evaluations should include multiple attacker models (black-box vs. white-box; score-based vs. representation-based) and complementary exposure tests to better assess residual leakage on the deleted data. Third, the recurring “too-strong on deleted” pattern motivates representation-level diagnostics that can help distinguish boundary readjustment from deeper representational forgetting, for instance, through layer-wise probes, targeted attribution tests, and similarity analyses that may also inform principled where-to-edit strategies. Fourth, design-for-unlearning may benefit from increased modularity (e.g., adapters, structured heads, or separation of task-specific components) and from principled selection of editable parameters to improve selectivity and alignment to retraining. Finally, scaling toward LLM and federated settings introduces additional requirements: in LLMs, unlearning may manifest as behavioral suppression rather than true removal, requiring concept/knowledge-level tests and careful treatment of auxiliary components (retrieval layers, caches), while in federated learning, client-level removal must account for distributed optimization, limited observability, and communication constraints.
Across settings, operational verifiability (audit logs, deletion registries, and reproducible unlearning transcripts) will be important to translate algorithmic progress into governance-ready practice.

Overall, retraining remains the clearest behavioral target, while approximate methods can be viable under strict acceptance testing; progress will benefit from standardized evaluation, stronger audits, design-time modularity, and verifiable evidence of forgetting.

8. Conclusions

This work addresses machine unlearning through an integrated perspective that combines: (i) a systematic organization of the literature along key dimensions (request granularity, required guarantees, model scale/setting, and design-for-unlearning vs. post hoc operation); (ii) a practical taxonomy and selection guidelines to support method choice under operational and compliance constraints; and (iii) a unified, reproducible experimental pipeline that compares retrain-from-scratch against representative approximate post hoc techniques and a design-for-unlearning baseline (SISA). The evaluation spans complementary axes (utility and probabilistic quality, forgetting/privacy indicators, distributional and structural alignment to retraining, and wall-clock efficiency) to make explicit the trade-offs that arise in real deployments.

Empirically, retrain-from-scratch provides the most direct behavioral reference: it preserves stable generalization on held-out data while exhibiting the expected degradation on the forget split, consistent with removing the influence of the deleted data. In contrast, the strongest approximate post hoc methods remain close to retraining on retained/test utility and calibration and show tight distributional/structural proximity, but they often remain systematically more predictive on deleted samples than retraining, indicating under-forgetting under functional criteria. On the privacy side, loss-based membership inference operates near chance in this setting, with AUC values close to 0.5 across methods; consequently, MIA acts primarily as a consistency check rather than a decisive discriminator, and robust acceptance should rely on a joint view of deleted-set regression toward retraining, retained/test utility, and behavioral alignment measures. From an efficiency standpoint, post hoc methods substantially reduce time-to-unlearn relative to retraining, while SISA exhibits a request-dependent profile: it incurs substantial upfront overhead and becomes advantageous mainly under frequent, small, localized deletions, whereas large or widely distributed deletions can erase its runtime benefits.

Overall, the study supports a pragmatic conclusion: when strong guarantees or straightforward auditability are required, retraining remains the conservative choice; when rapid response is the dominant constraint, approximate post hoc methods can be effective but should be deployed with explicit acceptance criteria that verify both utility preservation and progress toward retrain-level forgetting. The proposed taxonomy, guidelines, and experimental evidence jointly provide a practical basis for building unlearning systems that are implementable, auditable, and aligned with real-world constraints on performance and resources.