Micro-Blog Sentiment Classification Method Based on the Personality and Bagging Algorithm

Abstract

1. Introduction

2. Related Work

3. Related Concepts

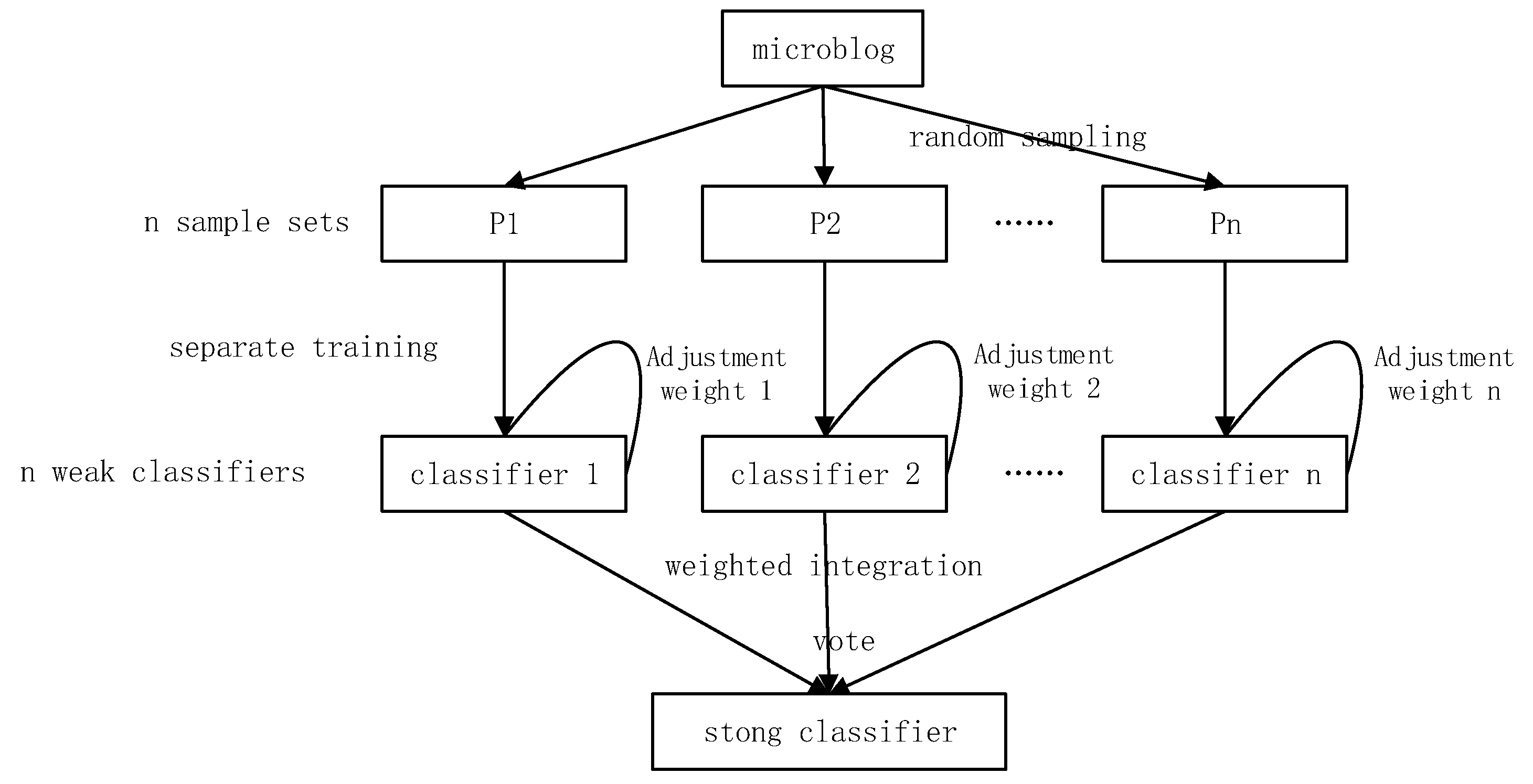

3.1. Ensemble Learning

3.2. Personality Model

3.3. LSTM

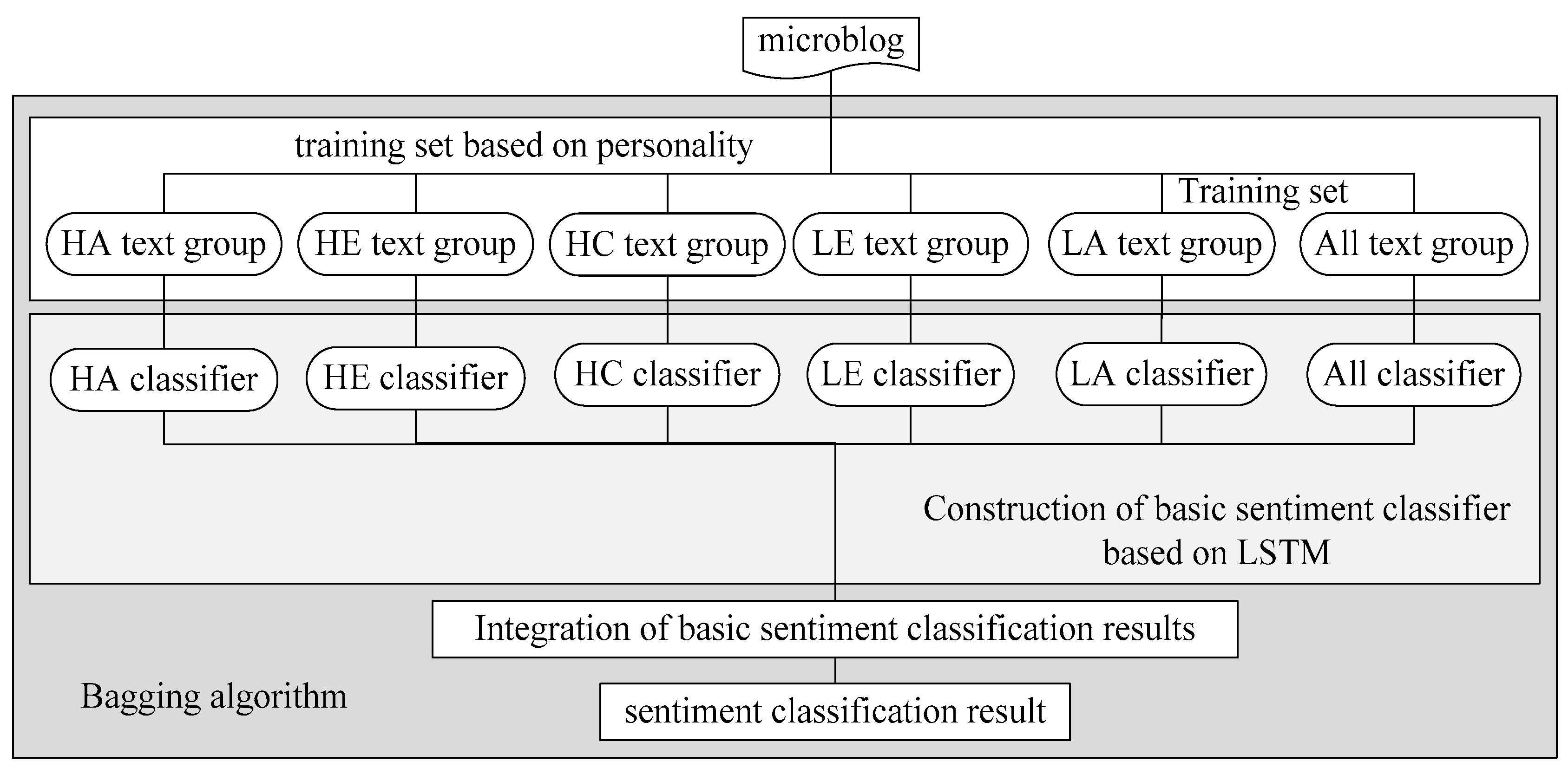

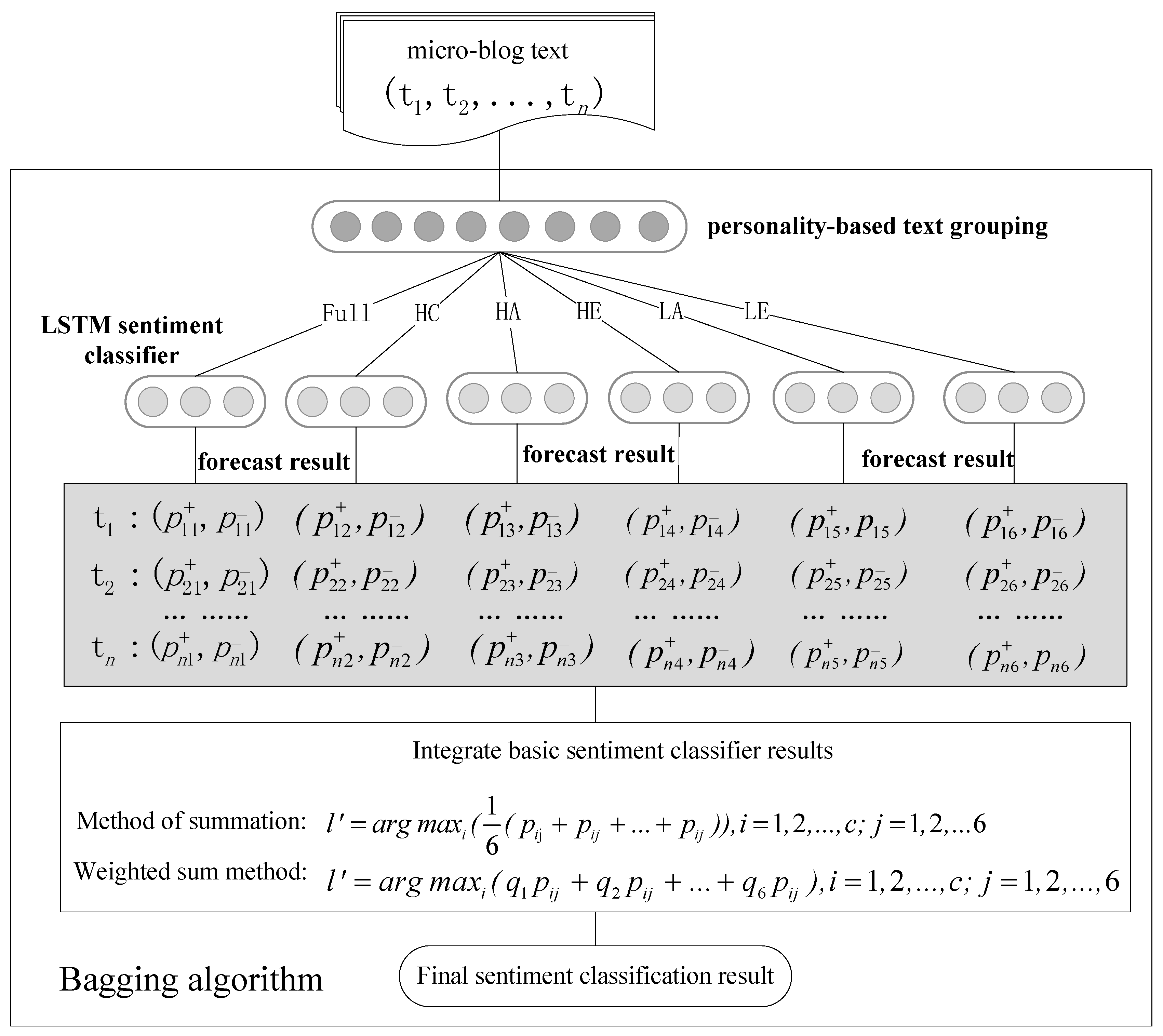

4. Micro-Blog Sentiment Analysis Method Based on Personality and Bagging Algorithm

4.1. Text Personality Classification

4.2. Ensemble Learning of Basic Emotion Classifier

5. Micro-Blog Sentiment Classification Experiment

5.1. Experimental Data

5.2. Basic Sentiment Classifier Comparison

5.3. Comparison of Bagging Algorithm Integration Methods

5.4. Comparative Experiment

- (1) SVM: A Support Vector Machine (SVM) finds the separating hyperplane in the feature space that maximizes the margin between the positive and negative samples of the training set. SVM is a supervised learning algorithm for two-class problems; with the kernel method it can also solve nonlinear problems.

- (2) LSTM: Proposed by Hochreiter and Schmidhuber (1997), LSTM is a special type of RNN that can learn long-term dependencies.

- (3) CNN-rand: Proposed by Kim in 2014 [47]. All word vectors are randomly initialized and then updated during training.

- (4) CNN-static: Also presented by Kim in 2014 [47], CNN-static uses word vectors pre-trained with word2vec. All word vectors, including the randomly initialized vectors of unknown words, are kept static, and only the other parameters of the model are learned.

- (5) CNN-non-static: Similar to CNN-static, except that the pre-trained vectors are fine-tuned for each task.

- (6) PBAL: The proposed method. See Table 6 for experimental parameter settings.
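The difference between the three CNN baselines above lies only in how the word vectors are initialized and whether they are updated. The following is a minimal pure-Python sketch of that distinction, not Kim's implementation: the function name `build_embeddings`, the toy dimension, and the initialization range are assumptions made for illustration.

```python
import random


def build_embeddings(vocab, pretrained, mode, dim=4, seed=0):
    """Return (table, trainable) for one embedding mode.

    mode is "rand", "static", or "non-static" (names taken from the list above).
    `pretrained` maps known words to vectors, e.g. loaded from word2vec.
    The range of the random initialization is an arbitrary illustrative choice.
    """
    rng = random.Random(seed)

    def random_vector():
        return [rng.uniform(-0.25, 0.25) for _ in range(dim)]

    if mode == "rand":
        # Every word starts random; vectors are updated during training.
        table = {word: random_vector() for word in vocab}
        trainable = True
    elif mode in ("static", "non-static"):
        # Known words take their pre-trained vector; unknown words start random.
        table = {
            word: list(pretrained[word]) if word in pretrained else random_vector()
            for word in vocab
        }
        # static: vectors stay frozen; non-static: vectors are fine-tuned.
        trainable = mode == "non-static"
    else:
        raise ValueError(f"unknown mode: {mode}")
    return table, trainable


vocab = ["good", "bad", "meh"]
pretrained = {"good": [1.0, 0.0, 0.0, 0.0], "bad": [0.0, 1.0, 0.0, 0.0]}
for mode in ("rand", "static", "non-static"):
    table, trainable = build_embeddings(vocab, pretrained, mode)
    print(mode, "trainable:", trainable)
```

In a real model the `trainable` flag would decide whether the optimizer receives the embedding table as a parameter.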

6. Conclusion and Future Work

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Bermudezgonzalez, D.; Mirandajiménez, S.; Garcíamoreno, R.; Calderónnepamuceno, D. Generating a Spanish Affective Dictionary with Supervised Learning Techniques. In New Perspectives on Teaching and Working with Languages in the Digital Era; Research-publishing.net: Dublin, Ireland, 2016.

- Cai, Y.; Yang, K.; Huang, D.; Zhou, Z.; Lei, X.; Xie, H.; Wong, T.L. A hybrid model for opinion mining based on domain sentiment dictionary. Int. J. Mach. Learn. Cybern. 2019.

- Xu, G.; Yu, Z.; Yao, H.; Li, F.; Meng, Y.; Wu, X. Chinese Text Sentiment Analysis Based on Extended Sentiment Dictionary. IEEE Access 2019, 7, 43749–43762.

- Yang, Y.; Zhou, F. Microblog Sentiment Analysis Algorithm Research and Implementation Based on Classification. In Proceedings of the 2015 14th International Symposium on Distributed Computing and Applications for Business Engineering and Science (DCABES), Guiyang, China, 18–24 August 2015.

- Pang, B.; Lee, L.; Vaithyanathan, S. Thumbs up? Sentiment Classification using Machine Learning Techniques. In Proceedings of the Empirical Methods in Natural Language Processing, Philadelphia, PA, USA, 6–7 July 2002; pp. 79–86.

- Kamal, A.; Abulaish, M. Statistical Features Identification for Sentiment Analysis Using Machine Learning Techniques. In Proceedings of the 2013 International Symposium on Computational and Business Intelligence, New Delhi, India, 24–26 August 2013; IEEE: Piscataway, NJ, USA, 2014.

- Song, M.; Park, H.; Shin, K. Attention-based long short-term memory network using sentiment lexicon embedding for aspect-level sentiment analysis in Korean. Inf. Process. Manag. 2019, 56, 637–653.

- Sharma, A.; Dey, S. A boosted SVM based sentiment analysis approach for online opinionated text. In Proceedings of the Research in Adaptive and Convergent Systems, Montreal, QC, Canada, 1–4 October 2013; pp. 28–34.

- Sharma, S.; Srivastava, S.; Kumar, A.; Dangi, A. Multi-Class Sentiment Analysis Comparison Using Support Vector Machine (SVM) and BAGGING Technique-An Ensemble Method. In Proceedings of the 2018 International Conference on Smart Computing and Electronic Enterprise (ICSCEE), Shah Alam, Malaysia, 11–12 July 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 1–6.

- Rong, W.; Nie, Y.; Ouyang, Y.; Peng, B.; Zhang, X. Auto-encoder based bagging architecture for sentiment analysis. J. Vis. Lang. Comput. 2014, 25, 840–849.

- Lin, D.; Chen, H.; Li, X. Improving Sentiment Classification Using Feature Highlighting and Feature Bagging. In Proceedings of the 2011 IEEE 11th International Conference on Data Mining Workshops (ICDMW), Vancouver, BC, Canada, 11 December 2011.

- Wang, C.H.; Han, D. Sentiment Analysis of Micro-blog Integrated on Explicit Semantic Analysis Method. Wirel. Pers. Commun. 2018, 102, 1–11.

- Waila, P.; Singh, V.K.; Singh, M.K. Evaluating Machine Learning and Unsupervised Semantic Orientation approaches for sentiment analysis of textual reviews. In Proceedings of the 2012 IEEE International Conference on Computational Intelligence & Computing Research (ICCIC), Coimbatore, India, 18–20 December 2012.

- Mladenovic, M.; Mitrovic, J.; Krstev, C.; Vitas, D. Hybrid sentiment analysis framework for a morphologically rich language. Intell. Inf. Syst. 2016, 46, 599–620.

- Yin, R.; Li, P.; Wang, B. Sentiment Lexical-Augmented Convolutional Neural Networks for Sentiment Analysis. In Proceedings of the IEEE International Conference on Data Science in Cyberspace, Shenzhen, China, 26–29 June 2017; pp. 630–635.

- Dan, L.; Jiang, Q. Text sentiment analysis based on long short-term memory. In Proceedings of the 2016 First IEEE International Conference on Computer Communication and the Internet (ICCCI), Wuhan, China, 13–15 October 2016.

- Lu, C.; Huang, H.; Jian, P.; Wang, D.; Guo, Y. A P-LSTM Neural Network for Sentiment Classification. In Proceedings of the Pacific-Asia Conference on Knowledge Discovery and Data Mining, Jeju, Korea, 23–26 May 2017; pp. 524–533.

- Rezaeinia, S.M.; Ghodsi, A.; Rahmani, R. Text Classification based on Multiple Block Convolutional Highways. arXiv 2018, arXiv:1807.09602.

- Jabreel, M.; Moreno, A. EiTAKA at SemEval-2018 Task 1: An ensemble of n-channels ConvNet and XGboost regressors for emotion analysis of tweets. arXiv 2018, arXiv:1802.09233.

- Abdi, A.; Shamsuddin, S.M.; Hasan, S.; Piran, J. Deep learning-based sentiment classification of evaluative text based on Multi-feature fusion. Inf. Process. Manag. 2019, 56, 1245–1259.

- Liu, Y.; Chen, Y. Research on Chinese Micro-Blog Sentiment Analysis Based on Deep Learning. In Proceedings of the 2015 8th International Symposium on Computational Intelligence and Design (ISCID), Hangzhou, China, 12–13 December 2015.

- Hyun, D.; Park, C.; Yang, M.; Song, I.; Lee, J.; Yu, H. Target-aware convolutional neural network for target-level sentiment analysis. Inf. Sci. 2019, 491, 166–178.

- Chen, T.; Xu, R.; He, Y.; Wang, X. Improving sentiment analysis via sentence type classification using BiLSTM-CRF and CNN. Expert Syst. Appl. 2017, 72, 221–230.

- Rezaeinia, S.M.; Rahmani, R.; Ghodsi, A.; Veisi, H. Sentiment analysis based on improved pre-trained word embeddings. Expert Syst. Appl. 2019, 117, 139–147.

- Sun, X.; Li, C.; Ren, F. Sentiment analysis for Chinese microblog based on deep neural networks with convolutional extension features. Neurocomputing 2016, 210, 227–236.

- Shrestha, N.; Nasoz, F. Deep Learning Sentiment Analysis of Amazon.Com Reviews and Ratings. Int. J. Soft Comput. Artif. Intell. Appl. 2019, 8, 1–15.

- Bijari, K.; Zare, H.; Kebriaei, E.; Veisi, H. Leveraging deep graph-based text representation for sentiment polarity applications. Expert Syst. Appl. 2020, 144, 113090.

- Hassan, A.; Mahmood, A. Deep Learning approach for sentiment analysis of short texts. In Proceedings of the 2017 3rd International Conference on Control, Automation and Robotics (ICCAR), Nagoya, Japan, 24–26 April 2017.

- Poria, S.; Chaturvedi, I.; Cambria, E.; Hussain, A. Convolutional MKL Based Multimodal Emotion Recognition and Sentiment Analysis. In Proceedings of the International Conference on Data Mining, Barcelona, Spain, 12–15 December 2016; pp. 439–448.

- You, Q.; Cao, L.; Jin, H.; Luo, J. Robust Visual-Textual Sentiment Analysis: When Attention meets Tree-structured Recursive Neural Networks. In Proceedings of the 24th ACM International Conference on Multimedia, Amsterdam, The Netherlands, 15–19 October 2016.

- Xu, J.; Zhu, J.; Zhao, R.; Zhang, L.; He, L.; Li, J. Sentiment analysis of aerospace microblog based on SVM algorithm. Res. Inf. Secur. 2017, 12, 75–79.

- Han, K.X.; Ren, W.J. The Application of Support Vector Machine (SVM) on the Sentiment Analysis of Twitter Database Based on an Improved FISHER Kernel Function. Tech. Autom. Appl. 2015, 11, 7.

- Cai, G.; Xia, B. Convolutional Neural Networks for Multimedia Sentiment Analysis. In Natural Language Processing and Chinese Computing; Springer: Cham, Switzerland, 2015.

- Yu, Y.; Lin, H.; Meng, J.; Zhao, Z. Visual and textual sentiment analysis of a microblog using deep convolutional neural networks. Algorithms 2016, 9, 41.

- Huang, F.; Zhang, X.; Zhao, Z.; Xu, J.; Li, Z. Image–text sentiment analysis via deep multimodal attentive fusion. Knowl. Based Syst. 2019, 167, 26–37.

- Xu, J.; Huang, F.; Zhang, X.; Wang, S.; Li, C.; Li, Z.; He, Y. Visual-textual sentiment classification with bi-directional multi-level attention networks. Knowl. Based Syst. 2019, 178, 61–73.

- Poria, S.; Cambria, E.; Howard, N.; Huang, G.-B.; Hussain, A. Fusing Audio, Visual and Textual Clues for Sentiment Analysis from Multimodal Content. Neurocomputing 2016, 174, 50–59.

- Hirsh, J.B.; Peterson, J.B. Personality and language use in self-narratives. J. Res. Personal. 2009, 43, 524–527.

- Golbeck, J.; Robles, C.; Edmondson, M.; Turner, K. Predicting Personality from Twitter. In Proceedings of the Privacy Security Risk and Trust, Boston, MA, USA, 9–11 October 2011; pp. 149–156.

- Bai, S.; Hao, B.; Li, A.; Yuan, S.; Gao, R.; Zhu, T. Predicting Big Five Personality Traits of Microblog Users. In Proceedings of the 2013 IEEE/WIC/ACM International Joint Conferences on Web Intelligence (WI) and Intelligent Agent Technologies (IAT), Atlanta, GA, USA, 17–20 November 2013; pp. 501–508.

- Nowson, S.; Perez, J.; Brun, C.; Mirkin, S.; Roux, C. XRCE Personal Language Analytics Engine for Multilingual Author Profiling: Notebook for PAN at CLEF 2015. CLEF (Working Notes). Available online: http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.703.87&rep=rep1&type=pdf (accessed on 18 April 2020).

- Myers, I.B.; McCaulley, M.H.; Most, R. Manual, a Guide to the Development and Use of the Myers-Briggs Type Indicator; Consulting Psychologists Press: Palo Alto, CA, USA, 1985.

- Qiu, L.; Lin, H.; Ramsay, J.; Yang, F. You are what you tweet: Personality expression and perception on Twitter. J. Res. Personal. 2012, 46, 710–718.

- Mikolov, T.; Karafiat, M.; Burget, L.; Cernocký, J.; Khudanpur, S. Recurrent neural network based language model. In Proceedings of the Conference of the International Speech Communication Association, Makuhari, Chiba, Japan, 26–30 September 2010; pp. 1045–1048.

- Bengio, Y.; Simard, P.; Frasconi, P. Learning Long-term Dependencies with Gradient Descent is Difficult. IEEE Trans. Neural Netw. 1994, 5, 157–166.

- Du, Y.; He, Y.; Tian, Y.; Chen, Q.; Lin, L. Microblog bursty topic detection based on user relationship. In Proceedings of the IEEE Joint International Information Technology and Artificial Intelligence Conference, Chongqing, China, 20–22 August 2011; Volume 1, pp. 260–263.

- Kim, Y. Convolutional Neural Networks for Sentence Classification. In Proceedings of the Empirical Methods in Natural Language Processing, Doha, Qatar, 25–29 October 2014; pp. 1746–1751.

| Feature Symbol | Symbol Meaning |
|---|---|
| HC_Cword | number of high-conscientiousness words in the text |
| HC_Cemoction | number of high-conscientiousness emoji in the text |
| HA_Cword | number of high-agreeableness words in the text |
| HA_Cemoction | number of high-agreeableness emoji in the text |
| LA_Cword | number of low-agreeableness words in the text |
| LA_Cemoction | number of low-agreeableness emoji in the text |
| HE_Cword | number of high-extraversion words in the text |
| HE_Cemoction | number of high-extraversion emoji in the text |
| LE_Cword | number of low-extraversion words in the text |
| LE_Cemoction | number of low-extraversion emoji in the text |

| Rule Name | Rule | Rule Meaning |
|---|---|---|
| High conscientiousness judgment rule | IF HC_Cword ≥ p1 ∨ HC_Cemoction ≥ p2 THEN C = HC | When the number of words in the text that appear in the high-conscientiousness dictionary is at least p1, or the number of high-conscientiousness emoji in the text is at least p2, the text is judged to be high conscientiousness. |
| High agreeableness judgment rule | IF HA_Cword ≥ p3 ∨ HA_Cemoction ≥ p4 THEN A = HA | When the number of words in the text that appear in the high-agreeableness dictionary is at least p3, or the number of high-agreeableness emoji in the text is at least p4, the text is judged to be high agreeableness. |
| Low agreeableness judgment rule | IF LA_Cword ≥ p5 ∨ LA_Cemoction ≥ p6 THEN A = LA | When the number of words in the text that appear in the low-agreeableness dictionary is at least p5, or the number of low-agreeableness emoji in the text is at least p6, the text is judged to be low agreeableness. |
| High extraversion judgment rule | IF HE_Cword ≥ p7 ∨ HE_Cemoction ≥ p8 THEN E = HE | When the number of words in the text that appear in the high-extraversion dictionary is at least p7, or the number of high-extraversion emoji in the text is at least p8, the text is judged to be high extraversion. |
| Low extraversion judgment rule | IF LE_Cword ≥ p9 ∨ LE_Cemoction ≥ p10 THEN E = LE | When the number of words in the text that appear in the low-extraversion dictionary is at least p9, or the number of low-extraversion emoji in the text is at least p10, the text is judged to be low extraversion. |
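The judgment rules in the table above can be sketched as a small table-driven function. This is an illustrative sketch only: the threshold values in `THRESHOLDS` are hypothetical (the source names p1 to p10 but does not give their values here), and returning every label whose rule fires is an assumption, since the table does not say how a text matching several rules is handled.

```python
# Hypothetical thresholds: word counts must reach 2, emoji counts must reach 1.
THRESHOLDS = {
    "p1": 2, "p2": 1,   # high conscientiousness
    "p3": 2, "p4": 1,   # high agreeableness
    "p5": 2, "p6": 1,   # low agreeableness
    "p7": 2, "p8": 1,   # high extraversion
    "p9": 2, "p10": 1,  # low extraversion
}

# (label, word feature, word threshold, emoji feature, emoji threshold)
RULES = [
    ("HC", "HC_Cword", "p1", "HC_Cemoction", "p2"),
    ("HA", "HA_Cword", "p3", "HA_Cemoction", "p4"),
    ("LA", "LA_Cword", "p5", "LA_Cemoction", "p6"),
    ("HE", "HE_Cword", "p7", "HE_Cemoction", "p8"),
    ("LE", "LE_Cword", "p9", "LE_Cemoction", "p10"),
]


def classify_personality(features, thresholds=THRESHOLDS):
    """Return the sorted list of personality labels whose rule fires.

    `features` maps feature symbols (e.g. "HC_Cword") to counts;
    missing features are treated as zero.
    """
    labels = []
    for label, word_key, word_p, emoji_key, emoji_p in RULES:
        word_hit = features.get(word_key, 0) >= thresholds[word_p]
        emoji_hit = features.get(emoji_key, 0) >= thresholds[emoji_p]
        if word_hit or emoji_hit:  # the rule is a disjunction (the ∨ in the table)
            labels.append(label)
    return sorted(labels)


print(classify_personality({"HC_Cword": 3, "LE_Cemoction": 1}))  # ['HC', 'LE']
print(classify_personality({"HA_Cword": 1}))                     # []
```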

| Personality | ALL | HA | HC | HE | LA | LE |
|---|---|---|---|---|---|---|
| F1 score | 88.31 | 89.19 | 89.59 | 87.39 | 72.28 | 83.25 |

| Weights | Precision |
|---|---|
| 0.16,0.23,0.16,0.16,0.14,0.15 | 96.91 |
| 0.15,0.15,0.23,0.16,0.15,0.16 | 96.81 |
| 0.14,0.16,0.25,0.15,0.15,0.15 | 96.81 |
| 0.15,0.15,0.15,0.24,0.15,0.16 | 96.63 |
| 0.15,0.27,0.15,0.14,0.15,0.14 | 96.55 |
| 0.14,0.15,0.15,0.15,0.16,0.25 | 96.45 |
| 0.16,0.16,0.16,0.21,0.15,0.16 | 96.43 |
| 0.2,0.16,0.16,0.16,0.16,0.16 | 96.42 |
| 0.16,0.16,0.15,0.16,0.21,0.16 | 96.41 |
| 0.14,0.16,0.15,0.16,0.24,0.15 | 96.36 |
| 0.14,0.15,0.14,0.15,0.14,0.28 | 96.27 |
| 0.25,0.15,0.15,0.15,0.15,0.15 | 96.27 |

| Method | Precision | Recall | F-Score |
|---|---|---|---|
| Direct sum | 96.58 | 97.00 | 96.78 |
| Weighted sum | 96.91 | 97.00 | 96.95 |
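The two integration methods compared above can be illustrated with a short sketch. It assumes that each base classifier outputs a class-probability vector and that the ensemble picks the class with the largest summed score; the source table names the methods but does not spell out these details, and the probabilities below are made-up toy values. The weight vector is the best-scoring one reported in the weights table, though the mapping of each weight to a specific base classifier is not stated in the source.

```python
def combine(probabilities, weights=None):
    """Combine per-classifier class probabilities by direct or weighted sum.

    probabilities: list of [p_negative, p_positive] lists, one per classifier.
    weights: one weight per classifier; None means a direct (unweighted) sum.
    Returns (predicted_class_index, per_class_scores).
    """
    n_classifiers = len(probabilities)
    if weights is None:
        weights = [1.0] * n_classifiers  # direct sum
    if len(weights) != n_classifiers:
        raise ValueError("need exactly one weight per classifier")
    n_classes = len(probabilities[0])
    scores = [
        sum(w * p[c] for w, p in zip(weights, probabilities))
        for c in range(n_classes)
    ]
    predicted = max(range(n_classes), key=scores.__getitem__)
    return predicted, scores


# Toy outputs of six base classifiers (hypothetical values).
probs = [[0.6, 0.4], [0.1, 0.9], [0.6, 0.4], [0.6, 0.4], [0.6, 0.4], [0.6, 0.4]]
weights = [0.16, 0.23, 0.16, 0.16, 0.14, 0.15]

direct_label, _ = combine(probs)
weighted_label, _ = combine(probs, weights)
print(direct_label, weighted_label)  # 0 1
```

In this toy case the confident second classifier is outvoted under the direct sum but prevails once it carries the largest weight, which is the kind of effect a weighted sum is meant to capture.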

| Parameter Name | Parameter Value |
|---|---|
| word vector dimension | 128 |
| batch_size | 100 |
| learning_rate | 0.01 |
| dropout_prob | 0.5 |
| num_epochs | 750 |
| hidden layer nodes | 75 |

| Method | Precision | Recall | F-Score |
|---|---|---|---|
| SVM | 58.91 | 87.60 | 70.45 |
| CNN-rand | 73.03 | 85.37 | 78.72 |
| CNN-static | 73.52 | 88.49 | 80.31 |
| CNN-non-static | 73.55 | 88.36 | 81.79 |
| LSTM | 88.82 | 87.80 | 88.31 |
| PBAL (proposed) | 96.91 | 97.00 | 96.95 |
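As a reading aid for the table above, the sketch below computes the F-Score as the harmonic mean of precision and recall (the standard F1 definition). That the F-Score column was computed this way is an assumption; the source does not state the formula. The helper name `f_score` is hypothetical.

```python
def f_score(precision, recall):
    """Harmonic mean of precision and recall (F1); both in the same unit."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Precision and recall of the LSTM and CNN-rand rows, in percent.
print(round(f_score(88.82, 87.80), 2))  # 88.31
print(round(f_score(73.03, 85.37), 2))  # 78.72
```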

| Method | Precision | Recall | F-Score |
|---|---|---|---|
| SVM | 61.97 | 85.26 | 79.69 |
| CNN-rand | 67.48 | 82.13 | 74.09 |
| CNN-static | 67.35 | 83.04 | 74.38 |
| CNN-non-static | 69.83 | 86.62 | 77.32 |
| LSTM | 78.41 | 84.67 | 81.42 |
| PBAL (proposed) | 84.54 | 91.87 | 88.05 |

| | PBAL | PBAL-HA | PBAL-HC | PBAL-HE | PBAL-LA | PBAL-LE |
|---|---|---|---|---|---|---|
| Precision | 96.91% | 94.45% | 95.18% | 95.18% | 94.31% | 96.18% |
| Recall | 97.00% | 95.00% | 94.40% | 94.80% | 94.26% | 96.00% |
| F-Score | 96.95% | 94.72% | 94.79% | 94.99% | 94.28% | 96.09% |

| | PBAL | PBAL-HA | PBAL-HC | PBAL-HE | PBAL-LA | PBAL-LE |
|---|---|---|---|---|---|---|
| Precision | 84.54% | 76.95% | 80.00% | 80.58% | 82.70% | 82.34% |
| Recall | 91.87% | 90.80% | 90.13% | 92.40% | 92.40% | 92.00% |
| F-Score | 88.05% | 83.30% | 84.76% | 86.09% | 87.28% | 86.90% |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Yang, W.; Yuan, T.; Wang, L. Micro-Blog Sentiment Classification Method Based on the Personality and Bagging Algorithm. Future Internet 2020, 12, 75. https://doi.org/10.3390/fi12040075
