Using U-Net-Like Deep Convolutional Neural Networks for Precise Tree Recognition in Very High Resolution RGB (Red, Green, Blue) Satellite Images

Abstract

1. Introduction

2. Materials and Methods

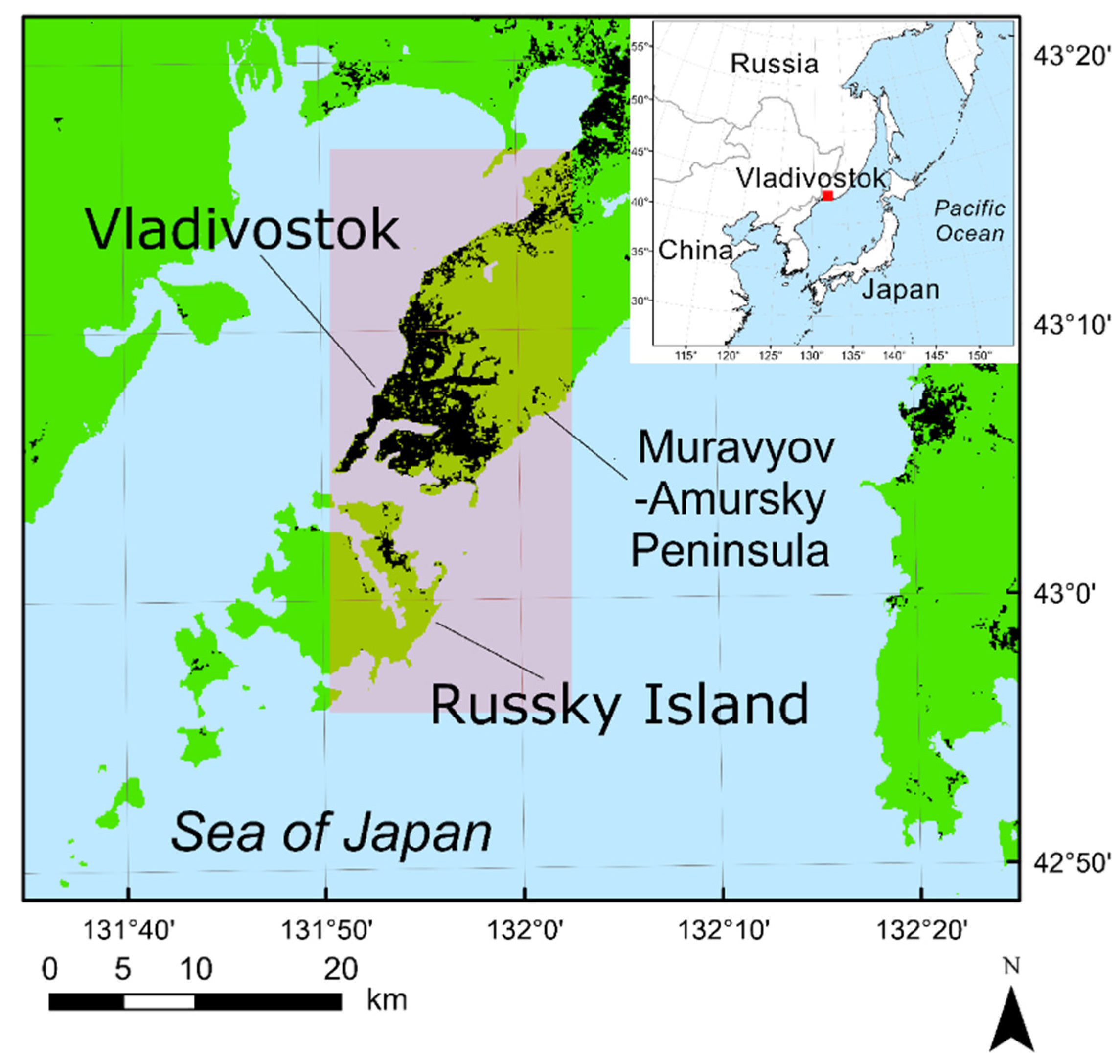

2.1. Study Site and Objects

2.2. Remote Data

2.3. Image Preparation

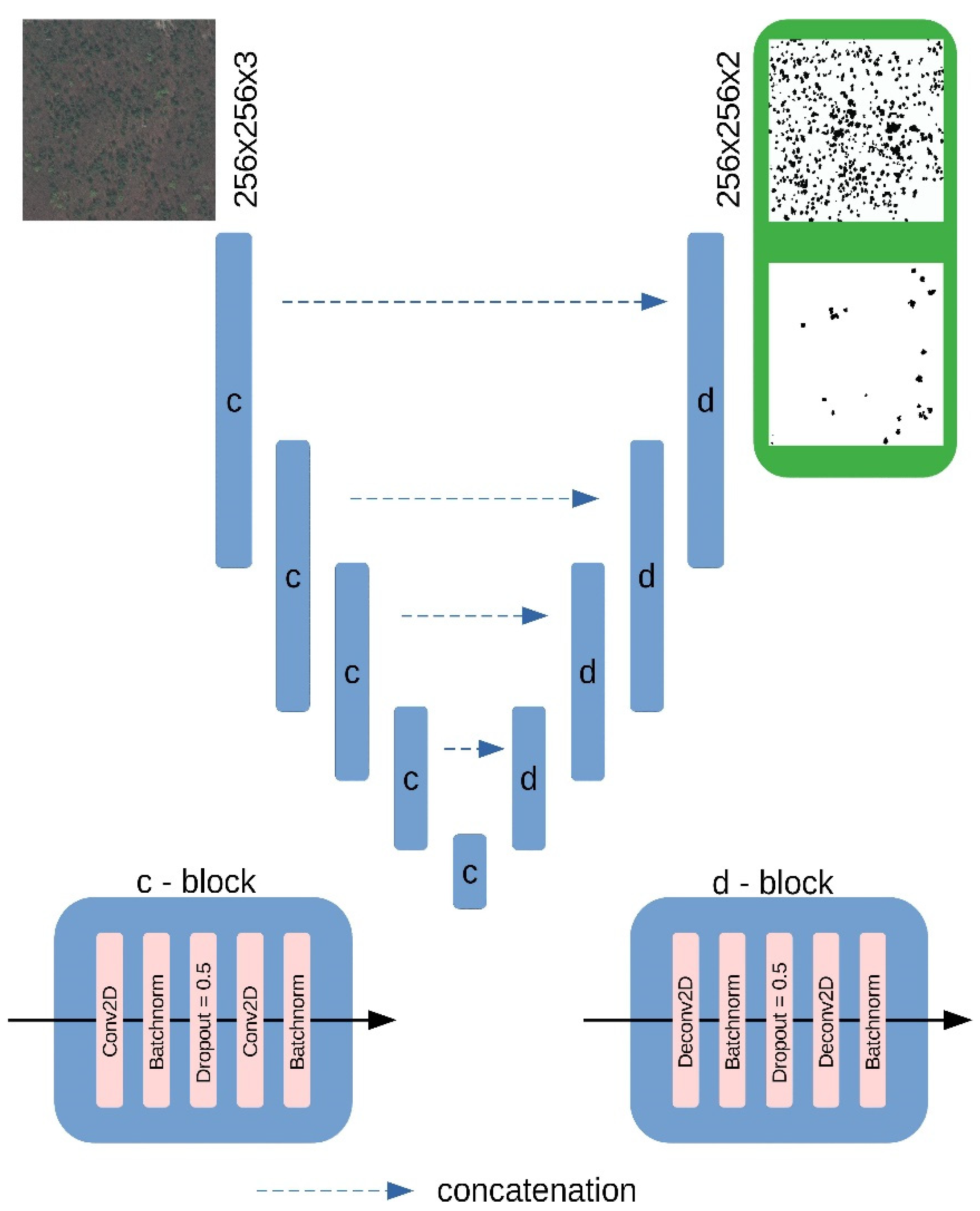

2.4. CNN Design

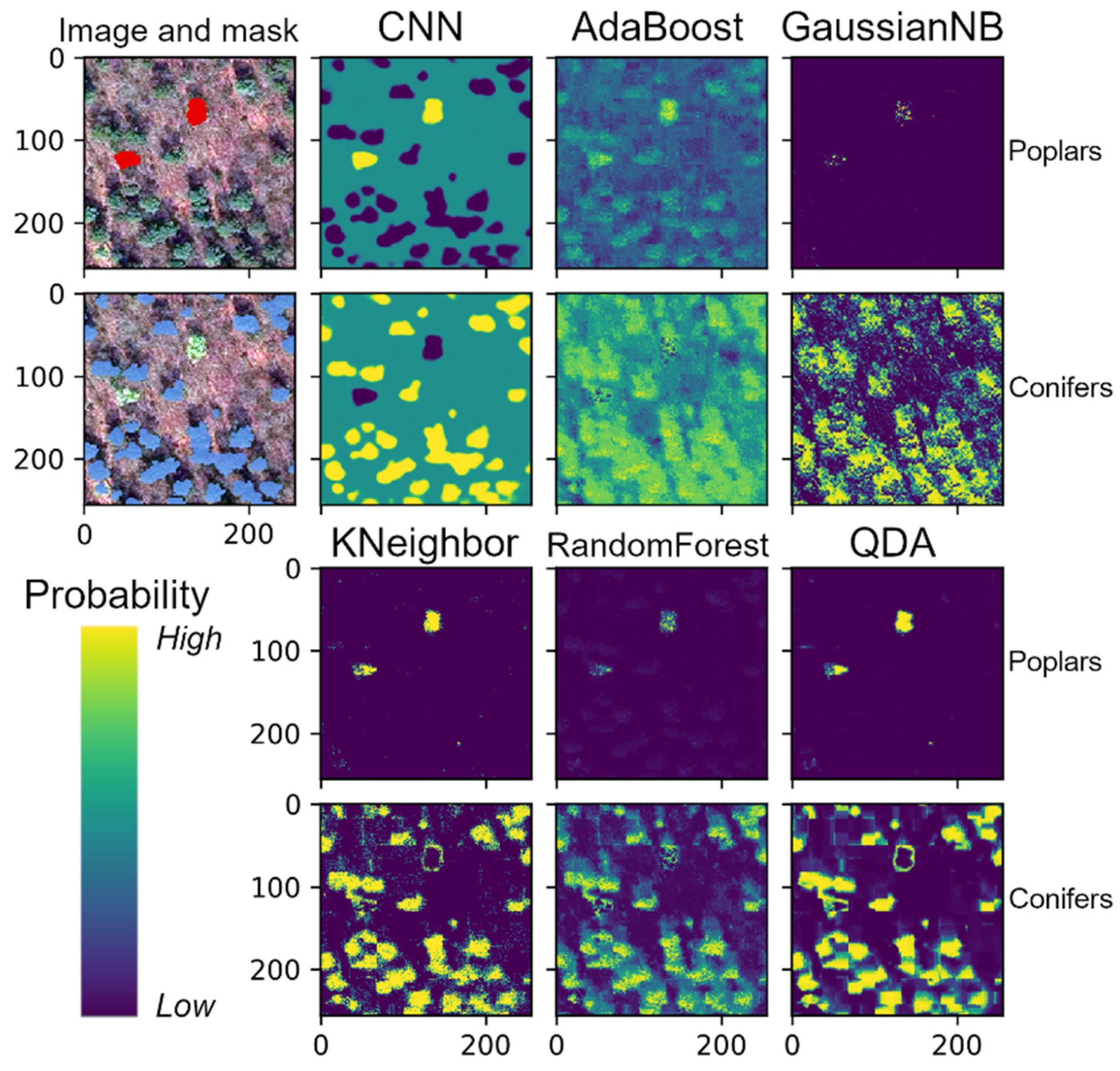

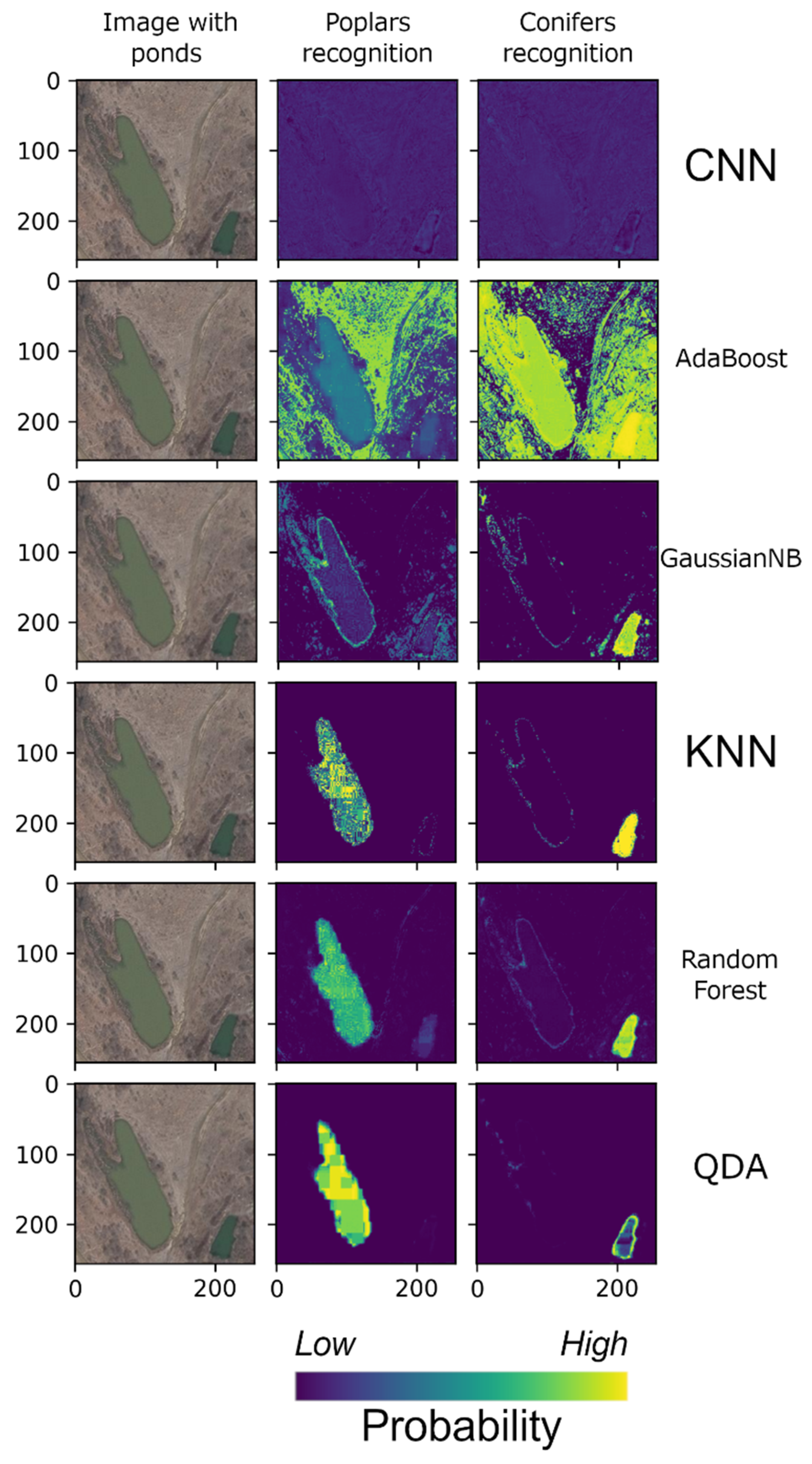

2.5. Comparison with Standard ML Methods

3. Results

4. Discussion

5. Conclusions

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Appendix A

| Species | Date ¹ |
|---|---|
| Betula costata Trautv. | 18 May |
| Fraxinus mandshurica Rupr. | 21 May |
| Juglans mandshurica Maxim. | 17 May |
| Kalopanax septemlobus (Thunb.) Koidz. | 20 May |
| Phellodendron amurense Rupr. | 26 May |
| Populus suaveolens Fisch. ex Loudon | 5 May |
| Tilia amurensis Rupr. | 16 May |
| Quercus mongolica Fisch. ex Ledeb. | 14 May |

Appendix B

| Image name | Image size (pixels) | Main objects |
|---|---|---|
| train 1 | 1280 × 1280 | conifers, poplars, and deciduous leafless trees |
| train 2 | 1280 × 1280 | water surface, bare ground, conifers, poplars, and deciduous leafless trees |
| train 3 | 512 × 512 | roofs, roads, bare ground, conifers, and deciduous leafless trees |
| test 1 | 1280 × 1280 | conifers, poplars, and deciduous trees |
| test 2 | 512 × 512 | ponds, deciduous leafless trees, and bare ground |
| validation | 1280 × 1280 | conifers, poplars, and deciduous leafless trees |


| Classifier | Mean BA 1 | Best Threshold Values | Mean F1 2 | Best Threshold Values | Mean IoU 3 | Best Threshold Values | |||

|---|---|---|---|---|---|---|---|---|---|

| Conifers | Poplars | Conifers | Poplars | Conifers | Poplars | ||||

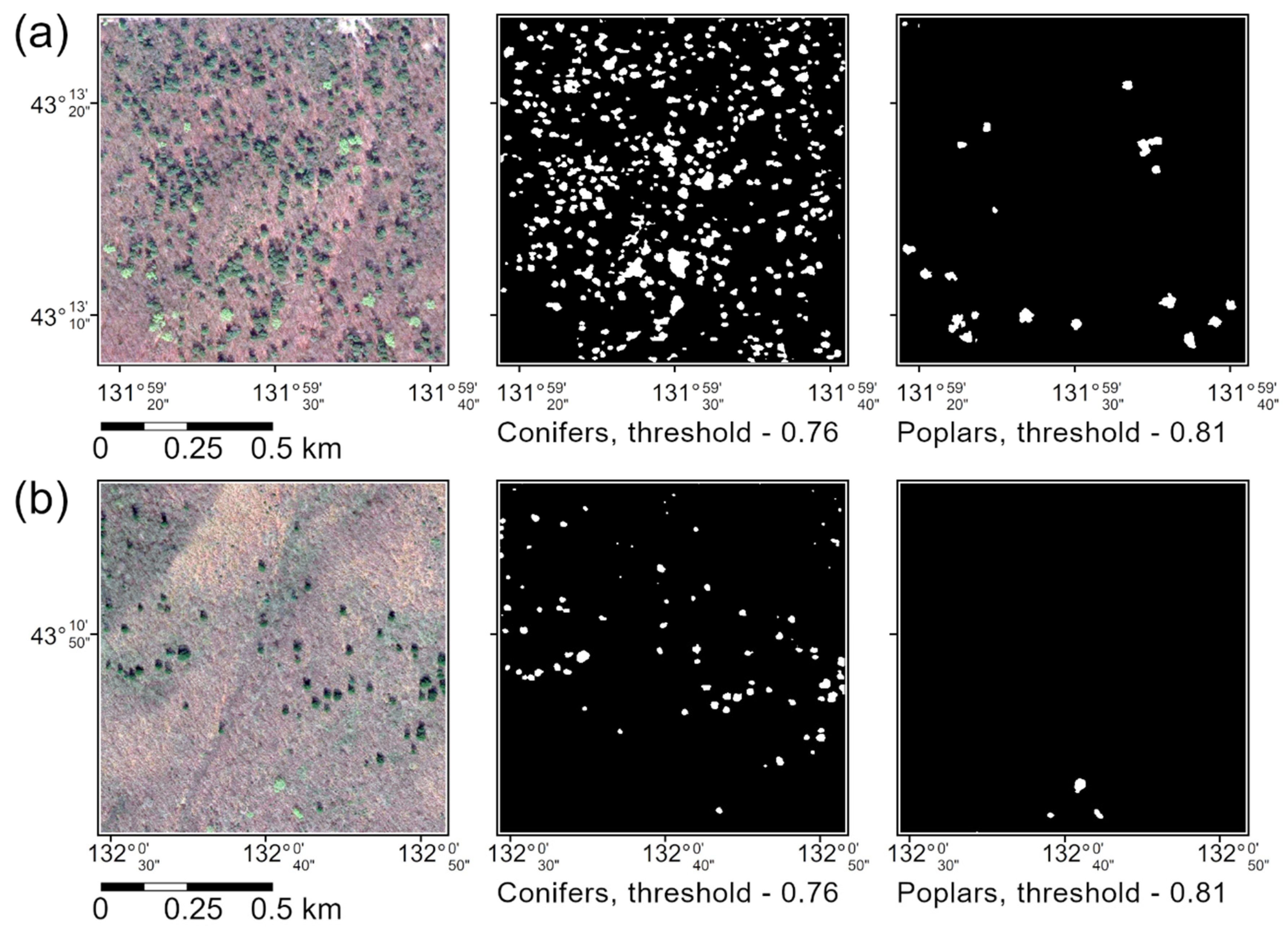

| U-Net-like CNN | 0.96 | 0.61 | 0.61 | 0.97 | 0.76 | 0.81 | 0.94 | 0.76 | 0.81 |

| AdaBoost | 0.69 | 0.31 | 0.36 | 0.84 | 0.41 | 0.36 | 0.79 | 0.41 | 0.36 |

| GaussianNB | 0.75 | 0.01 | 0.61 | 0.85 | 0.06 | 0.86 | 0.80 | 0.60 | 0.91 |

| KNN (k = 3) | 0.88 | 0.01 | 0.01 | 0.93 | 0.36 | 0.36 | 0.88 | 0.36 | 0.36 |

| RandomForest | 0.87 | 0.01 | 0.21 | 0.90 | 0.16 | 0.61 | 0.84 | 0.21 | 0.36 |

| QDA | 0.87 | 0.01 | 0.06 | 0.91 | 0.76 | 0.21 | 0.86 | 0.76 | 0.26 |

| Classifier | Mean BA ¹ | Best threshold (conifers) | Best threshold (poplars) | Mean F1 ² | Best threshold (conifers) | Best threshold (poplars) | Mean IoU ³ | Best threshold (conifers) | Best threshold (poplars) |
|---|---|---|---|---|---|---|---|---|---|
| AdaBoost | 0.69 | 0.40 | 0.36 | 0.84 | 0.41 | 0.36 | 0.79 | 0.41 | 0.36 |
| GaussianNB | 0.75 | 0.96 | 0.95 | 0.85 | 0.96 | 0.96 | 0.80 | 0.95 | 0.96 |
| KNN (k = 3) | 0.89 | 0.71 | 0.71 | 0.93 | 0.71 | 0.71 | 0.88 | 0.36 | 0.36 |
| RandomForest | 0.87 | 0.16 | 0.41 | 0.90 | 0.16 | 0.41 | 0.84 | 0.16 | 0.41 |
| QDA | 0.95 | 0.96 | 0.96 | 0.96 | 0.96 | 0.96 | 0.93 | 0.96 | 0.96 |
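The tables above appear to report, for each classifier, a mean score together with the probability threshold at which the conifer and poplar masks scored best. As a hedged illustration of how such per-threshold scores can be obtained, the sketch below binarizes per-pixel class probabilities at candidate thresholds and scores the resulting mask. It assumes that BA stands for balanced accuracy and that each class is evaluated as a binary mask; the function names, the toy data, and the 0.05-spaced threshold grid are hypothetical and are not taken from the authors' code. Averaging over images, which the "Mean" columns imply, is not shown.

```python
# Hypothetical sketch (not the authors' code): score one binary class mask
# (e.g., "conifers" vs. everything else) at several probability thresholds.
# Assumes BA = balanced accuracy; inputs are flattened per-pixel values.


def confusion_counts(probs, labels, threshold):
    """Binarize probs at threshold; return (TP, FP, TN, FN) against 0/1 labels."""
    tp = fp = tn = fn = 0
    for p, y in zip(probs, labels):
        predicted = p >= threshold
        if predicted and y == 1:
            tp += 1
        elif predicted:
            fp += 1
        elif y == 0:
            tn += 1
        else:
            fn += 1
    return tp, fp, tn, fn


def _ratio(num, den):
    """Division that returns 0.0 when the denominator is zero."""
    return num / den if den else 0.0


def balanced_accuracy(tp, fp, tn, fn):
    """Mean of sensitivity TP/(TP+FN) and specificity TN/(TN+FP)."""
    return 0.5 * (_ratio(tp, tp + fn) + _ratio(tn, tn + fp))


def f1(tp, fp, tn, fn):
    """Harmonic mean of precision and recall, i.e. 2TP / (2TP + FP + FN)."""
    return _ratio(2 * tp, 2 * tp + fp + fn)


def iou(tp, fp, tn, fn):
    """Intersection over union of predicted and reference masks: TP / (TP + FP + FN)."""
    return _ratio(tp, tp + fp + fn)


def best_threshold(probs, labels, metric, thresholds):
    """Return (threshold, score) for the candidate that maximizes metric.

    Candidates are scanned in the given order and only a strictly higher
    score replaces the current best, so ties keep the earliest candidate.
    """
    best_t, best_score = None, float("-inf")
    for t in thresholds:
        score = metric(*confusion_counts(probs, labels, t))
        if score > best_score:
            best_t, best_score = t, score
    return best_t, best_score


# Toy example: six pixels, the first three belong to the class.
probs = [0.9, 0.8, 0.3, 0.6, 0.1, 0.2]
labels = [1, 1, 1, 0, 0, 0]
# Hypothetical candidate grid: 0.01, 0.06, ..., 0.96.
grid = [round(0.01 + 0.05 * i, 2) for i in range(20)]

for name, metric in [("BA", balanced_accuracy), ("F1", f1), ("IoU", iou)]:
    t, score = best_threshold(probs, labels, metric, grid)
    print(f"{name}: best threshold {t}, score {score:.3f}")
```

On this toy input all three metrics peak at a threshold of 0.21 (BA 0.833, F1 0.857, IoU 0.750). Because each metric is maximized independently, the best threshold can differ between BA, F1, and IoU on real data, which is consistent with the separate threshold columns in the tables.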


© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Korznikov, K.A.; Kislov, D.E.; Altman, J.; Doležal, J.; Vozmishcheva, A.S.; Krestov, P.V. Using U-Net-Like Deep Convolutional Neural Networks for Precise Tree Recognition in Very High Resolution RGB (Red, Green, Blue) Satellite Images. Forests 2021, 12, 66. https://doi.org/10.3390/f12010066
