1. Introduction

The importance of mental health care in managing the various types of stress in modern society is increasingly recognized around the world. Stress adversely affects people’s health and mood in daily life, and its accumulation can cause mental and behavioral dysfunction in the long term [1]. Besides their impact on individuals, such disorders impose serious economic costs on society because of their association with reduced lifetime earnings and labor productivity [2,3]. This situation calls for technologies that can easily screen for mental illnesses such as depression, for which early intervention is associated with higher remission rates [4,5,6].

Previously, some researchers focused on identifying biomarkers in saliva and blood for use in depression screening [7,8,9]. For example, Maes et al. proposed interleukin-1 receptor antagonist (IL-1ra) as a diagnostic biomarker for major depressive disorder (MDD), having found its serum concentration to be increased in affected individuals [9]. However, besides being invasive, diagnostic body fluid testing incurs additional costs because it requires special measurement equipment and reagents. Self-report psychological questionnaires such as the Patient Health Questionnaire-9 (PHQ-9), General Health Questionnaire (GHQ), and Beck Depression Inventory (BDI) are non-invasive alternatives commonly used by clinicians [10,11,12]. They are relatively simple to administer but suffer from the inherent drawback of reporting bias, i.e., certain symptoms or behaviors are over- or under-endorsed depending on respondents’ awareness of them (or lack thereof) [13]. This bias can be mitigated by clinician-administered assessments such as the Hamilton Depression Rating Scale (HDRS); however, the extra time they require limits the number of interviews that can be conducted [14].

Feelings are reflected in people’s voices and facial expressions, and this common knowledge has also been scientifically substantiated [15,16]. Such evidence has driven a recent surge of research interest in acoustic biomarkers for predicting depression and stress levels [17,18,19]. Simplicity is a major advantage of such approaches: voice recordings can be collected non-invasively and remotely with no specialized equipment other than a microphone. Furthermore, because recording data are processed algorithmically, these approaches reduce subjectivity in diagnosis and avoid the reporting bias inherent in self-report assessments, holding promise for detecting a variety of mental illnesses. For example, Mundt et al. recorded MDD patients reading a standard script via a telephone interface and calculated a selection of vocal acoustic properties, such as the duration and ratio of vocalizations and silences, fundamental frequency (F0), and the first and second formants (F1, F2). Several of these measures differed markedly between patients who responded to treatment and those who did not [18]. Our research group has also focused on depression’s association with emotional expression. In a previous study, we developed two composite metrics for quantifying mental health, “vitality” and “mental activity”, which combine different emotional components of the voice [19]. In subsequent work, we showed evidence for these metrics’ effectiveness in detecting depression and monitoring stress due to life events [20,21]. A weak correlation between vitality and BDI score was confirmed, suggesting that some voice features are related to depressive symptoms as measured by the BDI. Still, some limitations of these metrics have become apparent. First, since diseases other than depression also affect how emotions are expressed, it is difficult to determine whether abnormal vitality and mental activity truly indicate depression or instead reflect a different condition. Second, since their classification accuracy varied widely across facilities in some cases, vitality and mental activity may depend on the recording environment.

openSMILE is a platform for deriving extensive sets of acoustic features from audio data [22] and has recently been applied in several studies of speech-based diagnostics. Jiang et al. developed a computational method for detecting depression based on vocal acoustic features extracted with openSMILE from speech recorded in three emotional categories (positive, neutral, and negative). Although they obtained high accuracy for depression detection, their development of separate models for men and women somewhat complicates practical application [23]. Faurholt-Jepsen et al. extracted openSMILE features from voice recordings of patients with bipolar disorder and used them to classify depressive and manic symptoms. Their feature-based classification accurately matched manic and depressive symptoms as measured by the Young Mania Rating Scale (YMRS [24]) and HDRS, respectively. Nevertheless, their algorithm uses a very large number of features, which poses a risk of overfitting [25]. Focusing on mel-frequency cepstral coefficients (MFCCs), Taguchi et al. reported a significant difference in the second coefficient (MFCC2), which represents spectral energy in the 2000–3000 Hz band, between the voices of MDD patients and healthy controls. However, their analysis included only one type of feature and did not combine multiple features [26]. In a previous study, we proposed a voice index based on openSMILE features that could accurately differentiate between three groups: patients with major depressive disorder, patients with bipolar disorder, and healthy individuals. Still, the proposed measure requires further validation because our training data were drawn from a small sample [27].

The aim of this paper is to develop a composite index based on vocal acoustic features that can accurately differentiate patients with major depressive disorder from healthy adults. Depressed patients and non-depressed controls were recorded reading a set of fixed phrases, and the recorded data were split into training and test datasets. Features were extracted from the training data using openSMILE, and qualitatively similar features were mathematically aggregated by principal component analysis. The resulting components were used as explanatory variables in logistic regression to classify subjects. The classification performance of the proposed index was then evaluated on the recordings in the test dataset, where it achieved a diagnostic accuracy of approximately 80%.

2. Materials and Methods

2.1. Ethical Considerations

This study was approved by the institutional review board of The University of Tokyo (no. 11572).

2.2. Subjects

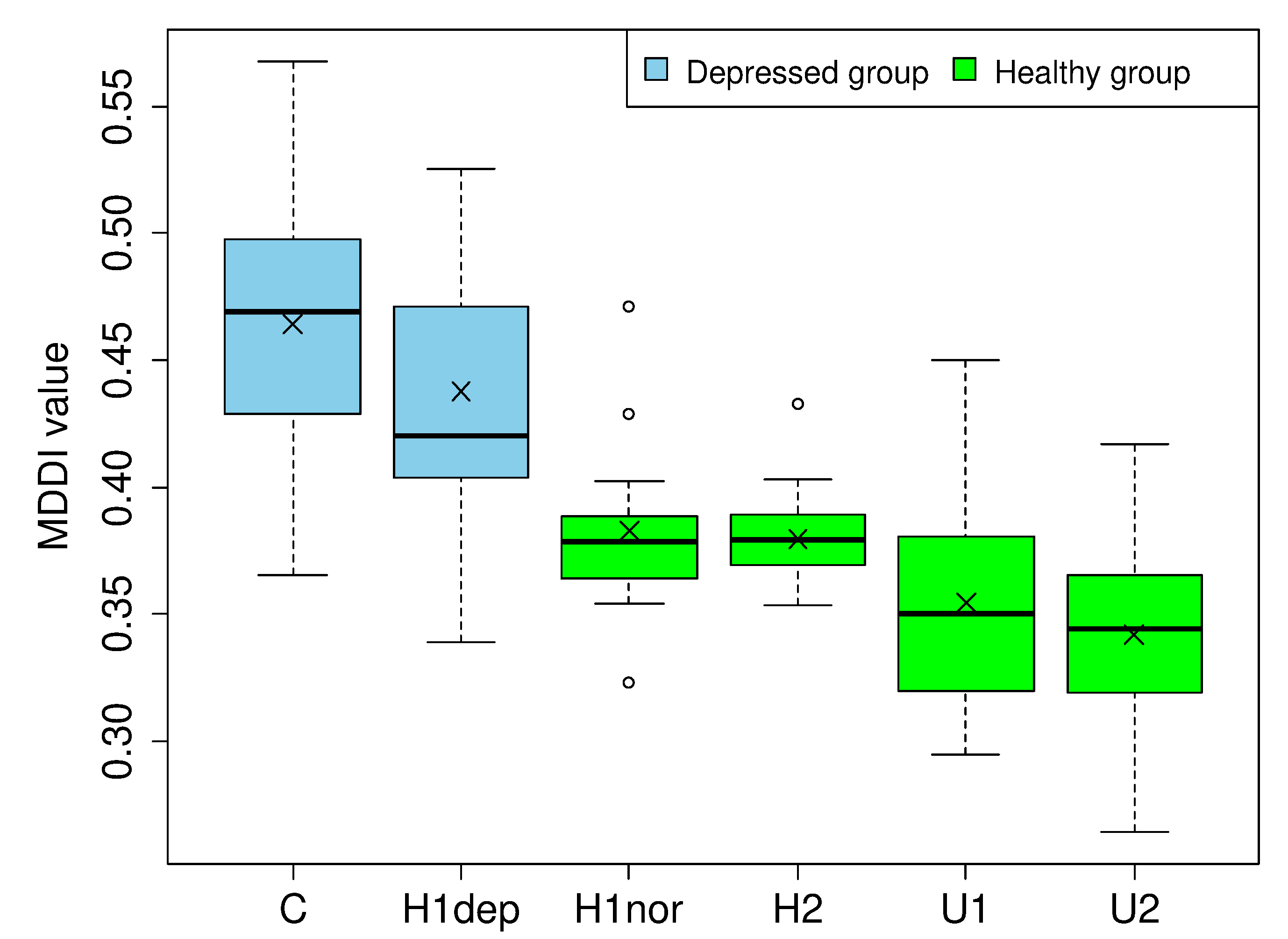

Our study enrolled 306 subjects in total from five institutions. Depressed subjects were recruited from individuals receiving outpatient treatment for major depressive disorder at Ginza Taimei Clinic (“C”: 87) or National Defense Medical College Hospital (“H1”: 90). For the control group, self-reported healthy adults were recruited from National Defense Medical College Hospital (14), Tokyo Medical University Hospital (“H2”: 23), Asahi University (“U1”: 38), and The University of Tokyo (“U2”: 54). Patients gave informed consent after receiving the study information at their first assessment. Controls gave informed consent after receiving the study information at a health workshop held by the authors, either individually or in small groups. Patients aged 20 years or older were enrolled if they met the Diagnostic and Statistical Manual of Mental Disorders, Fourth Edition, Text Revision (DSM-IV-TR) criteria for major depressive disorder [28]. Candidates with severe physical disability or organic brain disease were excluded. Subjects were diagnosed by a psychiatrist using the Mini-International Neuropsychiatric Interview (M.I.N.I.) [29]. Details of the subjects recruited from each facility are summarized in Table 1.

The severity of depression was assessed by physicians using the HDRS. Several standards for HDRS severity rating have been reported [30]; following the precedent of Riedel et al. [31], we regarded an HDRS total score below 8 as indicating remission and excluded such patients from analysis. Our final study population consisted of 102 patients (“depressed group (HDRS ≥ 8)”) and 129 healthy adults (“normal group”). The screening outcomes of patients recruited for the depressed group are summarized in Table 2.

2.3. Voice Data

Voice recordings were made in an examination room (C, H1, H2) or a conference room (U1, U2). Each subject read aloud 10 set phrases in Japanese, which are given along with their English translations in Table 3. Voice recordings were acquired at 24-bit/96 kHz resolution using a Roland R-26 portable digital audio recorder (Hamamatsu, Japan) and an Olympus ME52W lavalier microphone (Tokyo, Japan).

2.4. Voice Analysis

Each audio file was first normalized to minimize differences in volume due to the recording environment and then segmented by phrase. Each phrase was processed independently to extract vocal features. openSMILE provides configuration scripts for automatically computing various feature sets from audio data; our study used “the large openSMILE emotion feature set”, which was developed for emotion recognition. We used 6552 (56 × 3 × 39) audio features computed as follows:

56 types of acoustic/physical quantities were calculated at frame level as low-level descriptors: fast Fourier transform (FFT), MFCCs, voiced speech probability, zero-crossing rate, signal energy, F0, and so on.

3 types of temporal statistics were derived from these descriptors at frame level: moving average, first-order change over time (“delta”), and second-order change over time (“delta-delta”).

39 types of statistical functionals were calculated at file (phrase) level from frame-level values: mean, maximum, minimum, centroid, quartiles, variance, kurtosis, skewness, and so on.
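The feature count is the size of a simple grid, descriptors × temporal variants × functionals. The Python sketch below reproduces that arithmetic; the counts come from the text, while the descriptor and functional names are hypothetical placeholders rather than the identifiers used in the actual openSMILE configuration.

```python
from itertools import product

# Counts taken from the text; the names below are hypothetical placeholders,
# not the identifiers used in the openSMILE emotion configuration.
N_DESCRIPTORS = 56   # frame-level low-level descriptors (FFT, MFCCs, F0, ...)
N_TEMPORAL = 3       # smoothed value, delta, delta-delta
N_FUNCTIONALS = 39   # phrase-level statistics (mean, max, quartiles, ...)

descriptors = [f"lld{i:02d}" for i in range(N_DESCRIPTORS)]
temporal = ["sma", "sma_de", "sma_de_de"]
functionals = [f"func{j:02d}" for j in range(N_FUNCTIONALS)]

# Every (descriptor, temporal variant, functional) triple yields one feature.
feature_names = ["_".join(t) for t in product(descriptors, temporal, functionals)]

print(len(feature_names))  # 6552
```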

Each feature was averaged for every subject across the 10 set phrases to obtain mean values for analysis. The processed data were split by facility of origin into a training set (C, H2, U1, U2) and test set (H1). Next, the classification algorithm was trained on the training data using the derived features.
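The per-subject averaging and the facility-based split can be sketched as follows. The row layout (subject ID, facility code, phrase ID, feature list) and the function names are hypothetical; only the facility codes and the averaging over phrases come from the text.

```python
from collections import defaultdict
from statistics import mean

# Facility codes as given in the text; H1 is held out as the test set.
TRAIN_FACILITIES = {"C", "H2", "U1", "U2"}
TEST_FACILITIES = {"H1"}


def average_over_phrases(rows):
    """Collapse phrase-level feature vectors into one mean vector per subject.

    rows: iterable of (subject_id, facility, phrase_id, features), where
    features is a list of floats of equal length (hypothetical layout).
    Returns {subject_id: (facility, mean_feature_vector)}.
    """
    per_subject = defaultdict(list)
    facility_of = {}
    for subject_id, facility, _phrase_id, features in rows:
        per_subject[subject_id].append(features)
        facility_of[subject_id] = facility
    return {
        sid: (facility_of[sid], [mean(col) for col in zip(*vectors)])
        for sid, vectors in per_subject.items()
    }


def split_by_facility(subject_means):
    """Split subjects into training and test sets by facility of origin."""
    train = {s: v for s, (fac, v) in subject_means.items() if fac in TRAIN_FACILITIES}
    test = {s: v for s, (fac, v) in subject_means.items() if fac in TEST_FACILITIES}
    return train, test


# Toy usage (not study data): two phrases for s1, one for s2.
rows = [
    ("s1", "C", 1, [1.0, 2.0]),
    ("s1", "C", 2, [3.0, 4.0]),
    ("s2", "H1", 1, [5.0, 6.0]),
]
means = average_over_phrases(rows)
train, test = split_by_facility(means)
print(train)  # {'s1': [2.0, 3.0]}
print(test)   # {'s2': [5.0, 6.0]}
```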

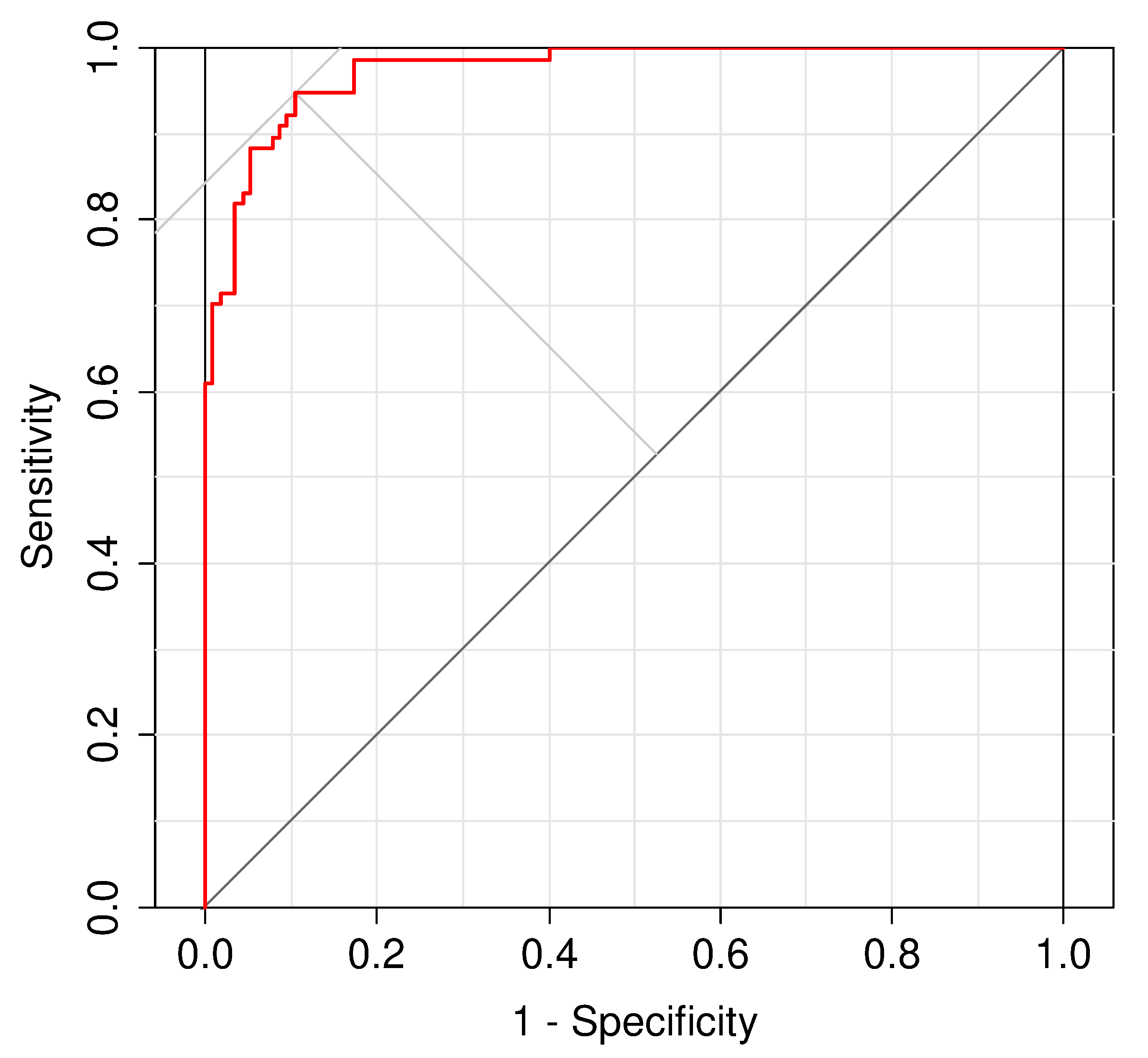

Since models tend to overfit when trained on too many variables, we reduced dimensionality through a combination of receiver operating characteristic (ROC) analysis and principal component analysis (PCA). First, each feature’s ability to distinguish depressed from normal adults on its own was quantified as the area under its ROC curve (AUC), and only features exceeding a given threshold were selected. Next, highly correlated features were transformed into principal components. We deliberately selected fewer features than subjects, because with more features than subjects the correlation matrix underlying PCA is rank-deficient. The ability of these components to predict depression was tested by logistic regression with L2 regularization (ridge regression) [32]. The regularization parameters were optimized by cross-validation within the training set. Because data were split randomly during cross-validation, different values were selected for the regularization parameters each time the algorithm was run, causing downstream variation in the regression coefficients. To stabilize the training results, the model was trained several times, and each coefficient was averaged across runs to obtain the final weights.
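The training pipeline described above can be sketched in Python with scikit-learn; the authors used R, so this is not their code. The AUC cut-off of 0.85 is the value reported in the Discussion, whereas the feature standardization, the folding of AUCs below 0.5, the number of repeated runs, the fold count, and the grid of candidate regularization strengths are assumptions, and `fit_index`/`predict_index` are hypothetical names.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.linear_model import LogisticRegressionCV
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import StratifiedKFold
from sklearn.preprocessing import StandardScaler


def screen_by_auc(X, y, threshold=0.85):
    """Return indices of features whose univariate ROC AUC exceeds threshold.

    AUCs below 0.5 are folded (max(auc, 1 - auc)) so that features that are
    lower in depressed subjects also count; this folding is an assumption.
    """
    aucs = np.array([roc_auc_score(y, X[:, j]) for j in range(X.shape[1])])
    folded = np.maximum(aucs, 1.0 - aucs)
    return np.flatnonzero(folded > threshold)


def fit_index(X, y, n_components=3, n_runs=10, n_folds=5, auc_threshold=0.85):
    """Screen features by AUC, project them onto principal components, and fit
    L2-regularized logistic regression, averaging coefficients over runs that
    use different random cross-validation splits."""
    selected = screen_by_auc(X, y, auc_threshold)
    scaler = StandardScaler().fit(X[:, selected])
    Xs = scaler.transform(X[:, selected])
    pca = PCA(n_components=n_components).fit(Xs)
    Z = pca.transform(Xs)  # principal-component scores, one row per subject

    coefs, intercepts = [], []
    for seed in range(n_runs):
        # A new random fold assignment in each run may select a different C.
        cv = StratifiedKFold(n_splits=n_folds, shuffle=True, random_state=seed)
        # LogisticRegressionCV uses an L2 penalty by default; C = 1 / penalty strength.
        model = LogisticRegressionCV(Cs=20, cv=cv, scoring="roc_auc", max_iter=1000)
        model.fit(Z, y)
        coefs.append(model.coef_.ravel())
        intercepts.append(model.intercept_[0])
    return selected, scaler, pca, np.mean(coefs, axis=0), float(np.mean(intercepts))


def predict_index(X, selected, scaler, pca, coef, intercept):
    """Logistic function of the weighted sum of principal-component scores."""
    Z = pca.transform(scaler.transform(X[:, selected]))
    return 1.0 / (1.0 + np.exp(-(Z @ coef + intercept)))
```

In this sketch the screening, scaling, and PCA are fitted on the training data only and reused unchanged by `predict_index`, so held-out recordings (here, H1) never influence the index; averaging the coefficients over runs mirrors the stabilization step described above.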

The classification model was a logistic function of the weighted sum of the three principal components (PCs), with the averaged regression coefficients as weights. The output of this function was adopted as the classification index for major depressive disorder. Its diagnostic performance was tested on the voice recordings in the test dataset (H1).
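Written out, the index described above has the following form, where $\mathrm{PC}_k$ is a subject's score on the $k$-th principal component and $\bar\beta_k$ is the corresponding coefficient averaged over the $R$ training runs. The intercept $\bar\beta_0$ is an assumption, since the text mentions only the three weighted components.

$$
\mathrm{MDDI} = \frac{1}{1 + \exp\!\left[-\left(\bar\beta_0 + \bar\beta_1\,\mathrm{PC}_1 + \bar\beta_2\,\mathrm{PC}_2 + \bar\beta_3\,\mathrm{PC}_3\right)\right]},
\qquad
\bar\beta_k = \frac{1}{R}\sum_{r=1}^{R} \beta_k^{(r)}
$$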

The data could also have been split into training and test sets without regard to institution. However, with future applications in mind, we held out one institution entirely, so that data from only some of the recording environments were incorporated into the index. This allows us to assess how well the index performs on voices recorded in an environment that was not represented in training. Because H1 comprised both healthy and depressed subjects, we used the speech data of H1 subjects as the test data.

Statistical processing was conducted using the free software R (version 4.0.2) [33].

4. Discussion

Early in model development, the fact that nearly 200 features computed by openSMILE exceeded our high cut-off value for feature selection (AUC > 0.85) led us to expect many major differences in vocal acoustic qualities between depressed patients and normal adults. However, the fact that over 80% of the variance of these features could be explained by just three principal components suggested that the differences could be captured by a limited set of qualities. Nevertheless, the sheer number of features with strong loadings on each component made it difficult to interpret which specific vocal properties each component represents. The nature of the vocal properties influenced by major depressive disorder therefore remains unclear. Deciphering the meaning of these components will require mapping audio features to physiological attributes of the voice, which we leave for future work. The nearly 200 openSMILE features selected did not include any features related to F0 or MFCC2, which Mundt et al. [18] and Taguchi et al. [26] found effective in distinguishing patients with major depressive disorder. One possible reason is the difference in audio format: Mundt et al. analyzed telephone speech, and Taguchi et al. analyzed speech in 16-bit, 22.05 kHz PCM format. In addition, MFCCs depend strongly on speech content, so differences in the spoken material may also have had an effect.
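The statement that three components explain over 80% of the variance refers to the cumulative explained-variance ratio of the PCA. A minimal numpy sketch, assuming PCA on the standardized features (equivalently, on their correlation matrix); `cumulative_explained_variance` is a hypothetical helper name.

```python
import numpy as np


def cumulative_explained_variance(X):
    """Cumulative fraction of total variance captured by successive PCs of
    the standardized feature matrix X (rows = subjects, columns = features).
    Assumes no feature is constant (otherwise its standard deviation is 0)."""
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    # Squared singular values are proportional to the PC variances, i.e. the
    # eigenvalues of the correlation matrix, so their ratios are identical.
    s = np.linalg.svd(Z, compute_uv=False)
    var = s ** 2
    return np.cumsum(var) / var.sum()
```

Applied to the selected training features, the third entry of the returned array is the quantity quoted above.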

The MDDI is computed by regularized logistic regression on three components derived by PCA. This index demonstrated very good classification performance in the training set (AUC > 0.95), distinguishing between depressed and normal subjects with sensitivity, specificity, and accuracy close to 0.9. This finding supports our expectation of major differences in vocal qualities between depressed and non-depressed adults, and suggests that such differences were properly captured by our classification index. Good performance was also observed when the MDDI was used to classify subjects in the test dataset, with sensitivity, specificity, and accuracy close to 0.8, indicating that our algorithm did not severely overfit the training data. However, further validation is necessary because excluding patients considered to be in remission (HDRS score < 8) reduced the size of the test set.
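Sensitivity, specificity, and accuracy follow directly from the confusion matrix of the thresholded index. The sketch below assumes a cut-off of 0.5 on the logistic output, which is not stated in this section; the scores in the example are toy values, not study data, and `classification_metrics` is a hypothetical name.

```python
def classification_metrics(scores, labels, threshold=0.5):
    """Sensitivity, specificity and accuracy of a thresholded index.

    labels: 1 = depressed, 0 = normal. The 0.5 cut-off is an assumption.
    """
    tp = fn = tn = fp = 0
    for s, y in zip(scores, labels):
        predicted = 1 if s >= threshold else 0
        if y == 1 and predicted == 1:
            tp += 1      # depressed, classified as depressed
        elif y == 1:
            fn += 1      # depressed, missed
        elif predicted == 0:
            tn += 1      # normal, classified as normal
        else:
            fp += 1      # normal, flagged as depressed
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    accuracy = (tp + tn) / (tp + tn + fp + fn)
    return sensitivity, specificity, accuracy


# Toy example (not study data): 4 depressed and 4 normal subjects.
scores = [0.9, 0.8, 0.7, 0.3, 0.2, 0.1, 0.4, 0.6]
labels = [1, 1, 1, 1, 0, 0, 0, 0]
print(classification_metrics(scores, labels))  # (0.75, 0.75, 0.75)
```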

Although we normalized audio files before analysis to minimize the effects of the recording environment, there were indications that sources of variability other than volume remained. For normal adults, mean MDDI differed significantly between recordings made in hospital examination rooms and those made in university conference rooms; however, no facility-related differences were observed between comparable environments (i.e., H1nor/H2 and U1/U2). Since none of the depressed patients were recorded in a conference room, it is unclear how environmental differences could affect the proposed model’s ability to distinguish them from controls. Notably, samples recorded in conference rooms tended to have lower MDDI than those recorded in examination rooms; if the same tendency occurred among patients, it could compromise our model’s detection performance. On the other hand, the significantly higher mean MDDI of depressed patients recorded at C compared with H1dep was not attributable to environmental differences per se; instead, it could be explained by the more severe depression (i.e., higher HDRS scores) of patients at C than of those at H1dep. Indeed, the difference in mean MDDI disappeared after adjustment for HDRS score, supporting the conclusion that facility-related differences in MDDI originate from facility-related differences in HDRS score. Furthermore, MDDI may reflect depression severity: although it did not correlate significantly with HDRS score within any individual facility, it did correlate, albeit weakly, across the entire sample.

Almost no elderly patients were included in the depressed group, whereas some elderly subjects were included in the healthy group. Since voice quality generally changes with age, this imbalance may have contributed to the classification. We therefore adjusted the MDDI of the two groups for age. After adjustment, the difference in mean MDDI between the groups remained significant. Hence, we conclude that the difference in age distribution does not account for the group difference in MDDI. We believe this is because subjects of all ages were included in the healthy group, so that features correlating with age were eliminated during training.
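The text does not specify how the HDRS and age adjustments were performed. One common approach is an analysis of covariance, sketched below in Python (numpy and SciPy) as an assumption: the MDDI is regressed on a group indicator (facility or diagnostic group) and the covariate, and the group coefficient is tested. `adjusted_group_difference` is a hypothetical name.

```python
import numpy as np
from scipy import stats


def adjusted_group_difference(index, group, covariate):
    """ANCOVA-style comparison: regress the index on a 0/1 group indicator and
    a numeric covariate (e.g. HDRS score or age), then test the group term.

    Returns (covariate-adjusted group difference, t statistic, two-sided p).
    """
    y = np.asarray(index, dtype=float)
    X = np.column_stack([
        np.ones_like(y),                      # intercept
        np.asarray(group, dtype=float),       # group indicator
        np.asarray(covariate, dtype=float),   # covariate to adjust for
    ])
    beta, _, _, _ = np.linalg.lstsq(X, y, rcond=None)
    residuals = y - X @ beta
    dof = len(y) - X.shape[1]
    sigma2 = residuals @ residuals / dof      # residual variance estimate
    cov_beta = sigma2 * np.linalg.inv(X.T @ X)
    t_stat = beta[1] / np.sqrt(cov_beta[1, 1])
    p_value = 2.0 * stats.t.sf(abs(t_stat), dof)
    return beta[1], t_stat, p_value
```

A group difference that vanishes once the covariate is included (as reported for HDRS across facilities) shows up as an adjusted difference near zero with a large p value, whereas a difference that persists (as reported for the age-adjusted group comparison) keeps a small p value.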

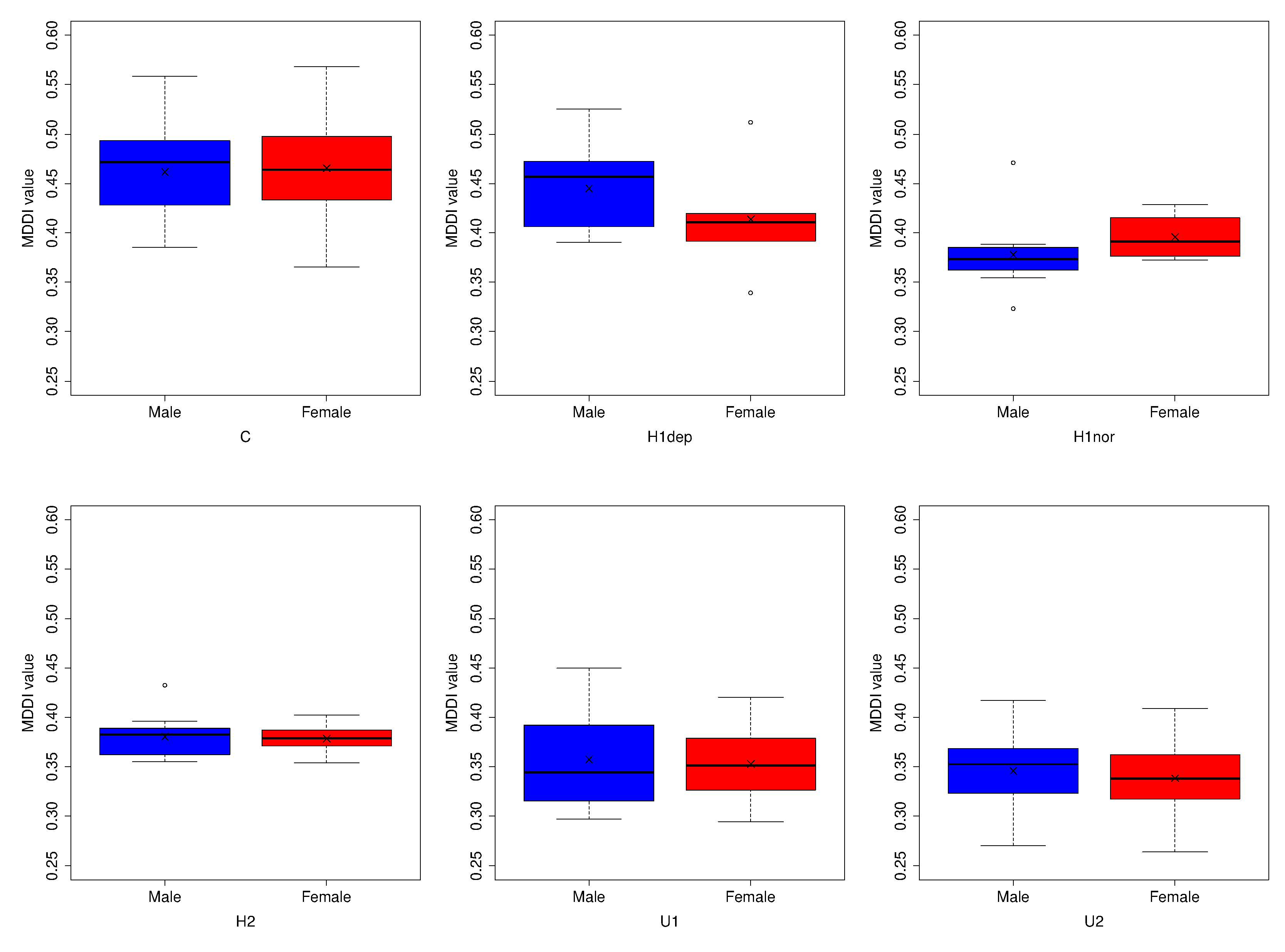

Hormonal changes affect voice characteristics [35] and may therefore affect MDDI-based discrimination, so this phenomenon needs to be considered. Because of the menstrual cycle, women are more susceptible to hormonal changes than men. We therefore compared MDDI values between genders. We found no statistically significant gender difference in MDDI among either depressed or control subjects within any participating facility. Among the selected openSMILE features, 43 and 59 showed a significant male–female difference in the depressed and healthy groups, respectively; after excluding similar feature pairs, 8 and 10 features remained, respectively. Accordingly, the absence of a male–female difference in the MDDI may be because features that differ between males and females contribute little to the MDDI. Hormonal changes during the menstrual cycle have been reported to affect shimmer and jitter [36]; because the MDDI does not include shimmer or jitter features, gender-related hormonal differences were not detected in it. A slight dissimilarity is nevertheless visible between the MDDI distributions of men and women within H1dep and H1nor, which may reflect hormonal effects on features other than shimmer and jitter. Thus, although gender-related hormonal changes need to be considered, only minimal gender-related differences were observed in the MDDI. Since our classifier is gender-neutral, there is no need to switch models according to patient gender, making it easier to implement than the method proposed by Jiang et al. [23]. An association between the sound of the voice and hormonal changes has also been reported [37]; however, it did not appear to be relevant to MDDI-based discrimination.

Differences in experimental conditions strongly affect the accuracy of research in this field. Therefore, subject conditions (age, gender, recording environment, etc.) should be kept as consistent as possible. However, since the health status of healthy subjects was self-reported, its reliability is difficult to verify. Moreover, recordings may be defective because of errors by the recording equipment operator, and comorbidities of depression may be missed if the physician fails to diagnose them. A limitation of this study is that these factors are difficult to eliminate completely.

5. Conclusions

This study aimed to develop a composite index based on vocal acoustic features that can accurately distinguish patients with major depressive disorder from healthy adults. The data were split into a training set and a test set in advance. The voice data in the training set were processed using openSMILE to derive 6552 vocal acoustic features. To prevent overfitting, the full set of features was screened to select those that seemed most useful for classification. Next, dimensionality was further reduced by combining and transforming qualitatively similar features using PCA. Logistic regression with regularization was then applied using the three resulting components as explanatory variables. The proposed index, MDDI, distinguished between depressed and normal subjects with ~90% sensitivity, specificity, and accuracy in the training set, and ~80% sensitivity, specificity, and accuracy in the test set. The near absence of gender-related differences in MDDI provides further support for its potential usefulness in practice.

Still, several topics require further study. Clarification is needed on the nature of vocal properties affected by depression. Differences in recording environments, such as between examination rooms in hospitals and conference rooms in general use buildings, should be eliminated as much as possible. Finally, the MDDI’s diagnostic performance should be tested on larger samples of test data.