Premature Spinal Bone Loss in Women Living with HIV is Associated with Shorter Leukocyte Telomere Length

Abstract

1. Introduction

2. Materials and Methods

2.1. Study Design and Populations

2.2. Areal Bone Mineral Density (BMD) Assessments

2.3. Relative Average Leukocyte Telomere Length (LTL) Assay

2.4. Hepatitis C Virus (HCV) Infection Status

2.6. Statistical Methods

3. Results

3.1. Characteristics of the Study Population

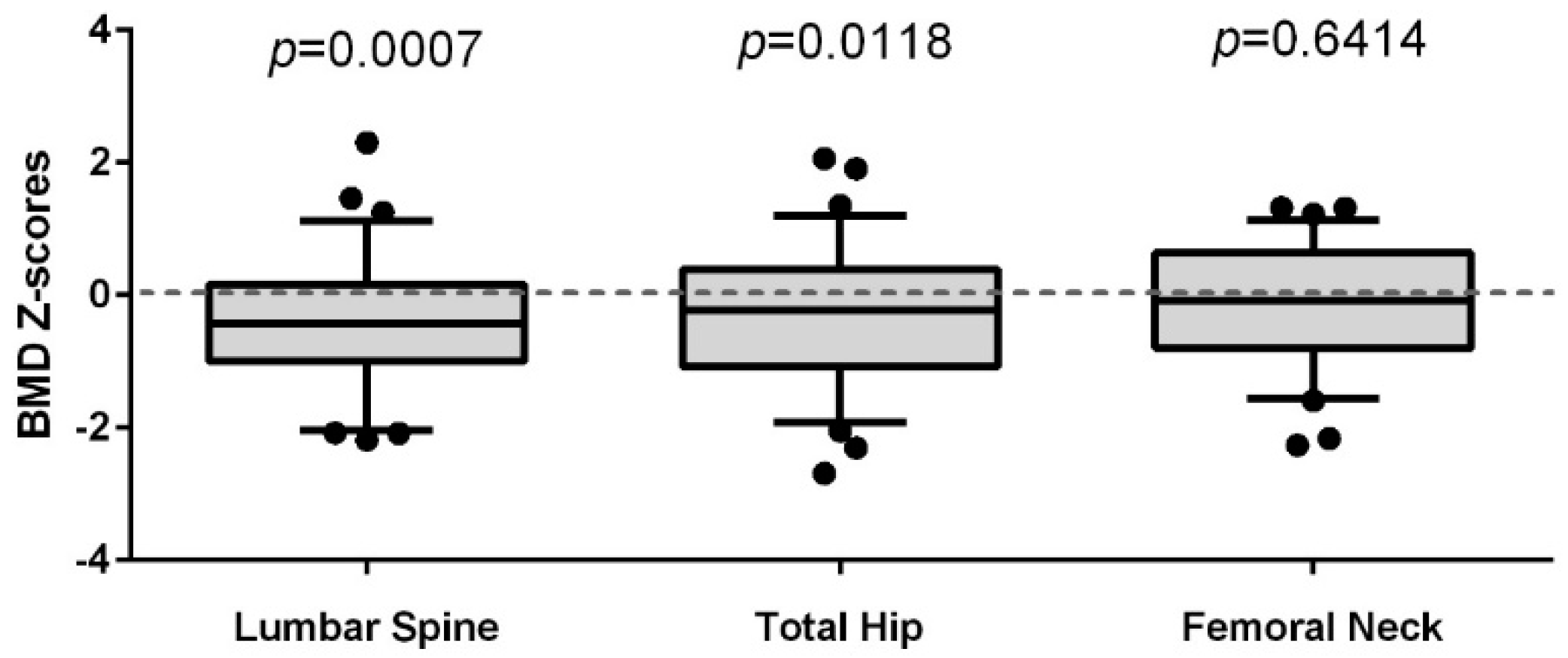

3.2. Comparison of the Lumbar Spine (LS), Total Hip (TH), and Femoral Neck (FN) BMD of Women Living with HIV to a Reference Population of Women (CaMos)

3.3. Predictors of BMD in Women Living with HIV

3.4. Predictors of LTL in Women Living with HIV

4. Discussion

5. Conclusions

Author Contributions

Funding

Conflicts of Interest


| Demographics | WLWH (n = 73) | BC CaMos Cohort (n = 280) |
|---|---|---|
| Mean age at DXA (years) | 43 ± 8.7 | 50 ± 8.1 |
| Mean age of women <50 years | 39 ± 6.3 | 42 ± 6.5 |
| Mean age of women ≥50 years | 55 ± 2.6 | 55 ± 3.1 |
| Mean BMI (kg/m²) at DXA | 25.4 ± 6.4 | 26.0 ± 5.1 |
| Height (cm) | 162.5 ± 7.3 | 161.4 ± 6.3 |
| Weight (kg) | 67.0 ± 17.2 | 68.3 ± 14.2 |
| Median number of live births | 2 [0–6] | 2 [0–7] |
| Mean smoking pack years | 8.6 ± 12.5 | ^ |
| Mean relative leukocyte telomere length | 2.88 ± 0.52 | ─ * |
| Race/Ethnicity | | |
| Caucasian | 32 (44%) | 224 (80%) |
| Aboriginal | 18 (25%) | 1 (<1%) |
| African-Canadian | 12 (16%) | 0 |
| South Asian | 5 (7%) | 16 (6%) |
| Asian | 3 (4%) | 39 (14%) |
| Other | 3 (4.0%) | 0 |
| History of illicit drug use | | |
| Yes | 19 (26%) | — |
| No | 43 (59%) | — |
| Unknown | 11 (15%) | — |
| Combination antiretroviral therapy | | |
| Naïve | 2 (3%) | — |
| Experienced | 71 (97%) | — |
| Mean lifetime protease inhibitor use (months) | 47 ± 43 | — |
| Mean lifetime tenofovir use (months) | 28 ± 25 | — |
| CD4 count at visit (cells/µL) | | |
| ≤200 | 7 (10%) | — |
| >200 | 65 (90%) | — |
| HIV plasma viral load at visit (copies/mL) | | |
| <250 | 57 (80%) | — |
| ≥250 | 14 (20%) | — |
| Active HCV co-infection | | |
| Yes | 17 (23%) | — |
| No | 56 (77%) | — |
| Bone mineral density (g/cm²) | | |
| Lumbar Spine (L1–4) | 0.97 ± 0.1 | 0.99 ± 0.1 |
| Femoral Neck | 0.77 ± 0.1 | 0.76 ± 0.1 |
| Total Hip | 0.89 ± 0.1 | 0.91 ± 0.1 |

| Predictor | Standardized β | 95% CI for β | p-Value |
|---|---|---|---|
| LS BMD overall model: R² = 0.148; F(2, 70) = 6.068, p = 0.004 | | | |
| Leukocyte telomere length | 0.301 | 0.019, 0.125 | 0.008 |
| BMI (kg/m²) | 0.237 | 0.000, 0.009 | 0.035 |
| TH BMD overall model: R² = 0.273; F(2, 70) = 13.128, p < 0.001 | | | |
| BMI (kg/m²) | 0.350 | 0.003, 0.011 | 0.001 |
| Age (years) | −0.317 | −0.007, −0.002 | 0.003 |
| FN BMD overall model: R² = 0.248; F(2, 70) = 11.512, p < 0.001 | | | |
| BMI (kg/m²) | 0.212 | 0.000, 0.007 | 0.049 |
| Age (years) | −0.412 | −0.008, −0.002 | <0.001 |
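
As a quick consistency check on the overall-model statistics reported above, the F statistic of an ordinary least-squares model can be recovered from its R² via F = (R²/k) / ((1 − R²)/df_resid). The Python sketch below applies this identity to the three models, assuming k = 2 predictors and 70 residual degrees of freedom, as implied by the reported F(2, 70). The function name `f_from_r2` is illustrative and not part of the original analysis. Small differences from the reported values are expected because R² is rounded to three decimals.

```python
def f_from_r2(r2, k, df_resid):
    """Overall F statistic implied by R^2 for an OLS model with k predictors.

    df_resid is the residual degrees of freedom (n - k - 1).
    """
    return (r2 / k) / ((1.0 - r2) / df_resid)


# (R^2, reported F) for each skeletal site, taken from the regression table.
models = {
    "LS": (0.148, 6.068),
    "TH": (0.273, 13.128),
    "FN": (0.248, 11.512),
}

# Assumption: two predictors and 70 residual df, read off the reported F(2, 70).
implied = {site: f_from_r2(r2, k=2, df_resid=70) for site, (r2, _) in models.items()}

for site, (r2, f_reported) in models.items():
    print(f"{site}: R2 = {r2}, implied F = {implied[site]:.2f}, reported F = {f_reported}")
```

With n = 73 women and two predictors per model, the residual degrees of freedom are 73 − 2 − 1 = 70, which matches the reported F(2, 70).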

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Kalyan, S.; Pick, N.; Mai, A.; Murray, M.C.M.; Kidson, K.; Chu, J.; Albert, A.Y.K.; Côté, H.C.F.; Maan, E.J.; Goshtasebi, A.; Money, D.M.; Prior, J.C. Premature Spinal Bone Loss in Women Living with HIV is Associated with Shorter Leukocyte Telomere Length. Int. J. Environ. Res. Public Health 2018, 15, 1018. https://doi.org/10.3390/ijerph15051018