19 August 2021

Comment on: 'Journal citation reports and the definition of a predatory journal: The case of the Multidisciplinary Digital Publishing Institute (MDPI)' by Oviedo-García

We read with interest the article ‘Journal citation reports and the definition of a predatory journal: The case of the Multidisciplinary Digital Publishing Institute (MDPI)’ (doi:10.1093/reseval/rvab020) by Oviedo-García. We understand that Gold open access (the immediate, free dissemination of research after publication) is sometimes misunderstood and conflated with predatory publishing practices in ongoing discussions.

We would like to raise several points of concern regarding the analysis presented in the article, including the misrepresentation of MDPI, as well as concerns around the accuracy of the data and validity of the research methodology.

In general, we noted that the article is predicated on the idea that MDPI is ‘predatory’. It begins with this characterisation, before any attempt at evaluation has taken place. The article claims to attempt to ‘elucidate whether these journals are in fact predatory. Their characteristics are therefore examined to see whether they are equatable with certain definitions of predatory journals.’

Different definitions are provided for what a predatory journal may be, but ‘a clear definition for their definitive identification’ is not established. Based on a broad range of criteria from multiple sources, it is stated that ‘[t]he problem is that these criteria, above all if taken in an isolated way, are questionable’, that ‘[i]t is therefore essential to define the concept’. Since the article establishes no clear criteria, and subsequently offers a description of MDPI with minimal context or analysis of other publishers, the resultant implication is that MDPI serves in the article as a de facto definition of predatory publishing. This is a flawed methodology. The argument presented assumes the truth of the conclusion.

The author repeatedly justifies the assessment of MDPI on the basis of a list of predatory journals in which MDPI is not included. The article describes MDPI journals, and then concludes that ‘These results showed that the MDPI-journals under analysis generally fitted the definition of predatory journals’ without providing clear criteria for analysis from the outset, and without offering appropriate contextualisation. This is problematic.

In the abstract, the article states that ‘These journals are analysed, ... with regard to self-citations and the source of those self-citations in 2018 and 2019. The results showed that the self-citation rates increased and was very much higher than those of the leading journals in the JCR category. Besides, an increasingly high rate of citations from other MDPI-journals was observed. The formal criteria together with the analysis of the citation patterns of the 53 journals under analysis all singled them out as predatory journals’. This methodology is flawed. Leading journals in a category cannot be directly compared with other journals differently ranked in the same category. A like-for-like comparison with similarly ranked journals from other publishers would be more appropriate and accurate. Therefore, the conclusion that MDPI journals are predatory does not follow.

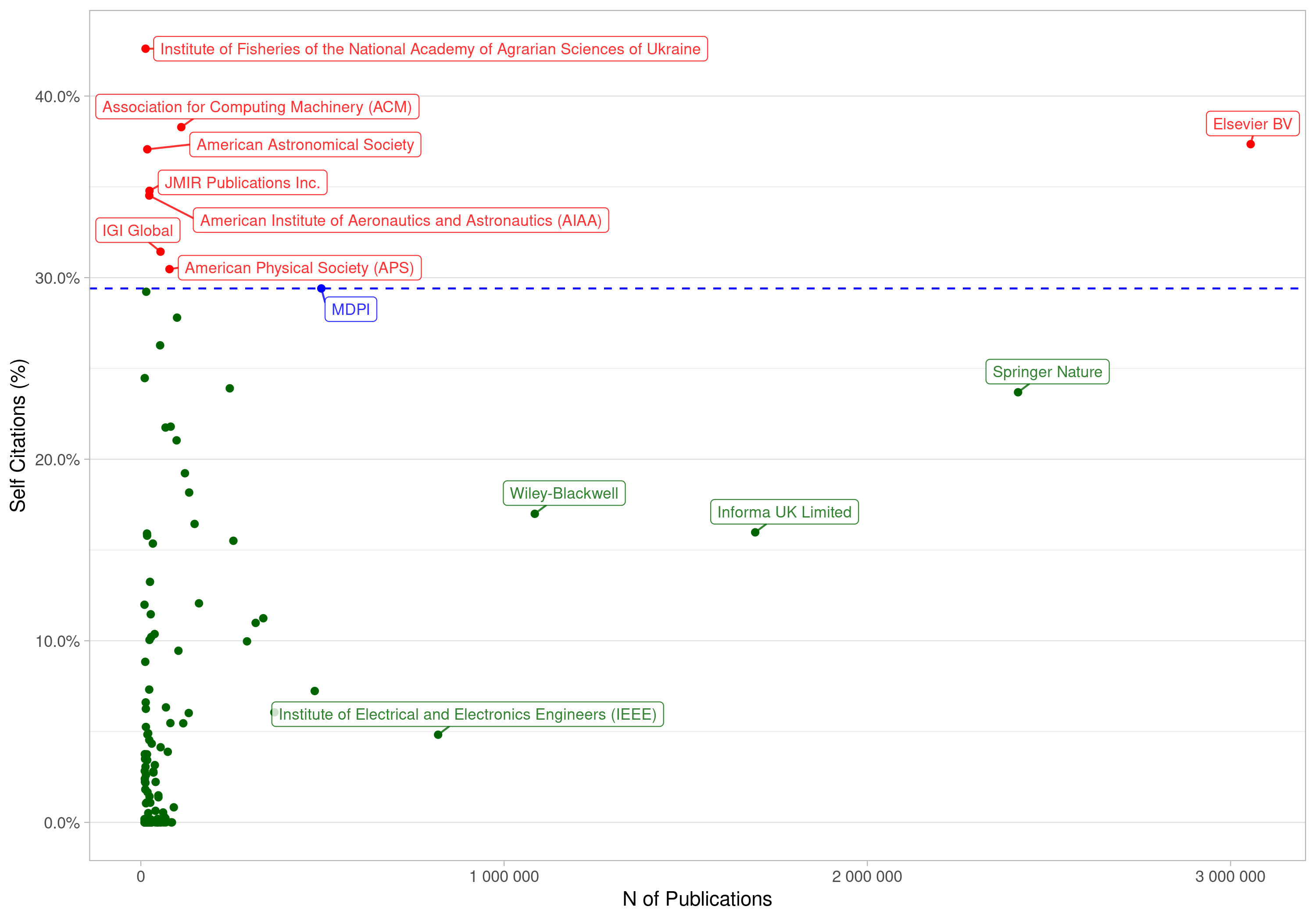

We have taken the opportunity to analyse self-citation data across different publishers. Figure 1 represents publishers with more than 10,000 publications between 2018 and July 2021. The coordinates are the number of articles published in the time interval (X axis) and the percentage of self-citations (Y axis). By percentage of self-citations, we mean the ratio between the number of self-citations and the total number of citations. Self-citation is the sum of self-citation at the publisher level (an author of journal A cites articles published in the same journal A or in any other journal of the same publisher) for all journals of that publisher.

It can be seen that MDPI is in line with other publishers: its percentage of self-citations is lower than that of many others and higher than that of some.

Figure 1. Self-Citation by Publishers, showing publishers with more than 10,000 publications between 2018 and July 2021. (Data source: Scilit citation index, comprising over 1.2 billion citations in total, which are collected and merged from different sources including CrossRef, PubMed Central and XML data of participating publishers.)
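The publisher-level percentage of self-citations defined above can be illustrated with a minimal sketch. It is not the Scilit pipeline behind Figure 1: the function name, the input layout (pairs of citing and cited publisher) and the sample data are all hypothetical, and the sketch assumes that the "total number of citations" means the citations received by a publisher's journals.

```python
from collections import Counter


def self_citation_percentages(citations):
    """Return {publisher: percentage of self-citations}.

    `citations` is an iterable of (citing_publisher, cited_publisher)
    pairs, one pair per citation. This input layout is an assumption
    made for illustration only.
    """
    total = Counter()       # citations received by each publisher
    self_cites = Counter()  # citations where citing == cited publisher
    for citing, cited in citations:
        total[cited] += 1
        if citing == cited:
            # A journal citing itself or a sibling journal of the same
            # publisher both count as publisher-level self-citation.
            self_cites[cited] += 1
    return {pub: 100.0 * self_cites[pub] / total[pub] for pub in total}


# Hypothetical sample data, not taken from any real citation index.
sample = [
    ("A", "A"), ("A", "B"), ("B", "A"), ("B", "B"),
    ("A", "A"), ("C", "A"), ("C", "B"), ("A", "B"),
]
rates = self_citation_percentages(sample)
for publisher, pct in sorted(rates.items()):
    print(f"{publisher}: {pct:.1f}% self-citations")
```

Plotting each publisher's percentage against its number of published articles would give a chart of the same shape as Figure 1. If the denominator were instead taken to be the citations made by a publisher's articles, only the key used for `total` would change.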

The author also considers that a ‘lack of transparency’ may be a factor in considering a journal to be predatory. Any implication that MDPI is not transparent is misleading. The author obtained all the information required for the evaluation from our website regarding editorial processes, peer review and APC policies, and, furthermore, made use of MDPI’s Annual Report. In the past, we have welcomed any discussion or engagement with those who wish to obtain further information from MDPI, as can be seen in articles in blogs such as Scholarly Kitchen (https://scholarlykitchen.sspnet.org/2020/08/10/guest-post-mdpis-remarkable-growth/). This is something we would also welcome in the future.

Another possible criterion highlighted by the author is that ‘journal names may be very similar to prestigious journals’. MDPI has a clear naming strategy, with most of our journals being short names, in the plural, that directly refer to the field. Some of the journals that have been analysed in the article are journals that were previously published by other publishers, and were named by either the owning entity or previous publishers.

The author highlights the ‘exponential growth’ of predatory journals, stating that ‘The alarming increase in the number of predatory journals (from 1,800 to 8,000 over the period 2010–4) and the exponential growth (from 53,000 to 420,000 between 2010 and 2014) of the articles that they publish (Shen and Bjork 2015) have rendered futile any effort to keep white and blacklists updated.’ In the case of MDPI, the author observes that ‘In 1996, 47 articles were published in two journals, since when the number of articles and journals have progressively increased and have undergone exponential growth over recent years.’

The author is correct that MDPI has received a high number of submissions, which has led to growth since our launch in 1996, 25 years ago. MDPI journals’ Editorial Boards take decisions on article acceptance following peer review. Because it publishes online, MDPI is not limited in the number of pages, or in the number of articles in a particular issue. In addition, as of writing, MDPI has over 5000 members of staff, spread across 16 offices globally, who support the editorial process and journal management and offer a round-the-clock service to authors and editors in different time zones. In recent years, open access has come to the fore, is frequently a requirement from funders, and is increasingly embraced by a large number of scholars worldwide. There are also a number of initiatives that support open access, such as Plan S. Our website shows that, as open access has become more widely used as a publishing model for academics and scholars, our portfolio of journals, our staffing, and our submissions have also increased. The share of scholarly literature published in immediate Open Access has in fact almost doubled within the past five years, from 11.0% in the period 2011–15 to 21.9% in 2016–20 (Web of Science Core Collection, accessed August 2021). This rise is an indication of the increasing democratisation of the global research landscape in which we live and work today.

The author states that ‘[e]very MDPI journal publishes 12 regular yearly [=monthly?] issues’. This is incorrect. MDPI journals publish between 4 and 24 issues per year. MDPI journals covered in the JCR typically publish between 12 and 24 issues per year, with a regular schedule, to allow for timely indexing on behalf of authors.

The author highlights the number of Editorial Board Members that several MDPI journals have, and raises concerns about rapid publication and peer review times, and peer review quality.

‘MDPI reports state that the median time from submission to publication for all its 218 journals was 39 days in 2019 (MDPI 2020) as it was in 2018 when MDPI published 203 journals (MDPI 2019).’ It is acknowledged that comparable data from other publishers is not often published as aggregate figures. Although the absence of such data may be indicative of a lack of transparency, without it any comparison is impossible. Equating MDPI’s rapid peer review processes with predatory publishing is therefore misleading. Tables 4 and 5 report on Elsevier journals, but do not include comparable data.

‘The results showed the average time from submission to first decision of JCR-indexed MDPI-journals was 19 days. The increase in the number of journals and articles published had no effect on time from submission to first decision.’ MDPI’s shorter peer review times are enabled by our active Editorial Boards, which support speed and the efficient management of efforts. Our Editorial Boards are, at the time of writing, supported by MDPI’s 5000-strong staff, who are available to provide service to authors and avoid undue delay in the peer review process. Almost 900 editorial and production staff were hired in 2019, and more than 1700 in 2020. Similarly high growth rates in the number of employees have occurred yearly over the last 10 years. This is how MDPI has handled the increased number of articles in a timely manner without delaying the peer review process.

All MDPI articles are peer reviewed and articles are accepted by Academic Editors, who are either Editorial Board members or Guest Editors. We publish the names of the editors who accept the article after peer review. Many of our authors opt for open peer review, which makes the review reports openly available alongside the published article.

‘It is interesting to note that the size of the Editorial Board was, in all cases, larger in the MDPI-journals than in the leading JCR-indexed journals belonging to the same categories’. The comparison is not like-for-like. MDPI journals are compared with leaders in the same JCR category, some of which are observed to have 0 Editorial Board members, and in some cases far lower numbers of published papers annually. The volume of rigorously reviewed papers that MDPI publishes requires many areas of expertise, to ensure that appropriate Editorial Board members are available regularly and not overburdened. The conclusion that having many Editorial Board members means that the journal is predatory does not follow.

‘Editorial Boards of the MDPI-journals are formed of researchers who are not professional editors.’ The implication that the Editorial Boards of MDPI journals are not filled by scientists and academics who are able to make appropriate evaluation of academic articles does not reflect reality. Journal Editorial Boards in academic publishing typically include leading academics who are responsible for making scientific decisions regarding peer review and manuscript acceptance and rejection. The Editorial Boards of MDPI journals include leading academics from a variety of universities and institutions, and they are invited based on clear criteria, for example, expertise in subject area, publication record and personal recommendations.

The author closes their article stating, ‘the 400 or so conferences that MDPI sponsored in 2019 should all be carefully scrutinized.’ MDPI has been a long-time advocate and supporter of conferences within the scientific community, sponsoring esteemed conferences including AGU, AAG Annual Meeting, American Society for Virology's Annual Meeting, European Meteorological Society Annual Meeting, and several conferences organized by the American Chemical Society (ACS National Meeting & Expo (Chemistry & Water), 93rd ACS Colloid & Surface Science Symposium). We are proud to have sponsored more than 400 academic conferences and supported 280 scholars, in particular young academics, to attend scientific events, and we look forward to continuing our support for scientific conferences.

The assertion is made that predatory journals are closely associated with the APC publishing model, ‘exploiting the gold open-access publication model to their upmost’. MDPI’s APCs are stated on our website, and are payable after full peer review, for articles accepted for publication. MDPI’s highest APC is 2400 CHF (approximately 2200 Euro).

The author states that ‘The APCs published on the journal web pages of the 53 journals under analysis imply that the articles published in 2019 could have generated an approximate income of 153,834,500 CHF (no APC-related waiver or discount could be considered in this calculation as no JCR-indexed journal provides relevant detailed information on the topic).’ This estimate is inaccurate, and no comparison with other publishers is offered. MDPI’s website (https://www.mdpi.com/apc) specifies the costs associated with open access publishing and where APCs go, which follow recommendations from the Fair Open Access Alliance. The author also claims to have read MDPI’s Annual Report. The Annual Report details the proportion of APCs waived or discounted each year. Information on the APCs of many publishers is available here: https://treemaps.intact-project.org/apcdata/openapc/#publisher/. MDPI’s average APC is significantly lower than that of other publishers.

MDPI is not alone in its ‘for profit’ status, and other publishers are publicly listed. The author should be well aware that success in academic publishing is built upon robust peer review processes and providing an efficient service for authors. We believe that there is space for open access publishers to be either not-for-profit organisations, or commercial enterprises. We have found that as a commercial publisher we have been able to drive efficiencies in the peer review and publication process, ensure that we have well-resourced teams and adequate staffing, and reinvest into free services for the academic community such as preprints.org and Scilit. As we have previously stated (https://www.frontiersin.org/articles/10.3389/fnbeh.2015.00201/full), we accept that some publishers engage in questionable activities and practices that may be characterised as predatory, but we are confident that authors, readers and academics are able to differentiate.

We believe that assessment of the publishing landscape is highly valuable, and we welcome detailed research that engages with core questions.

Contact: Research Integrity and Publication Ethics team, Head of Publication Ethics, MDPI; Dr. Giulia Stefenelli, Chair of the Board of Scientific Officers, MDPI.