Abstract

The rapid surge of Artificial Internet-of-Things (AIoT) devices has outpaced the deployment of robust, privacy-preserving anomaly detection solutions suitable for resource-constrained edge environments. This paper presents a two-stage hybrid Federated Learning (FL) framework for IoT anomaly detection and classification, validated on the real-world N-BaIoT dataset. In the first stage, each device trains a generative Artificial Intelligence (AI) model on benign traffic only, and in the second stage a Histogram-based Gradient-Boosting (HGB) classifier labels flagged traffic. All models operate under a synchronous, collaborative FL architecture across nine commercial IoT devices, thus preserving data privacy and minimizing communication. Through both inter- and intra-benchmarking against state-of-the-art baselines, the Variational Autoencoder–HGB (VAE-HGB) pipeline emerges as the top performer, achieving an average end-to-end accuracy of 99.14% across all classes. These results demonstrate that reconstruction-driven generative AI models, when combined with federated averaging and efficient classification, deliver a highly scalable, accurate, and privacy-preserving solution for securing resource-constrained IoT environments.

1. Introduction

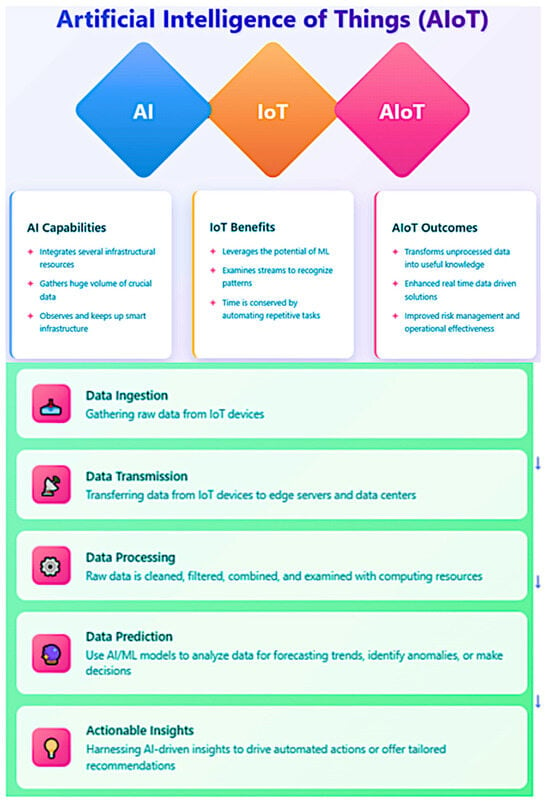

The convergence of AI and the Internet of Things (IoT), commonly referred to as Artificial Intelligence of Things (AIoT), has revolutionized manufacturing processes by enabling intelligent automation, real-time monitoring, and data-driven decision-making. As manufacturing industries increasingly adopt these technologies to achieve sustainability goals, the security of AIoT systems has emerged as a critical factor in ensuring the success of any industrial system [1,2].

Secure Industrial IoT (IIoT) systems play a pivotal role in optimizing resource utilization and minimizing waste in manufacturing environments. When IIoT systems maintain their integrity through robust security measures, they provide accurate and reliable data that forms the foundation for precise resource allocation decisions. Manufacturing facilities equipped with secure sensors and analytics platforms can monitor material consumption with high precision, enabling just-in-time inventory management that prevents overproduction and reduces warehousing requirements [3].

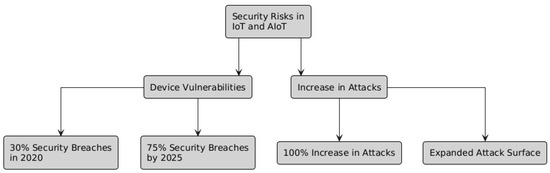

The protection of AIoT systems against malicious tampering is particularly crucial for resource conservation. Unauthorized access to production systems could lead to deliberate or accidental alterations of manufacturing parameters, potentially resulting in significant material waste. For instance, a compromised AIoT system controlling raw material dispensing could cause excessive material usage, directly contradicting sustainability objectives. Secure authentication mechanisms, encryption, and access controls prevent such scenarios by ensuring that only authorized personnel can modify production parameters [4]. Figure 1 shows the relationship between IoT and AIoT.

Figure 1.

AIoT vs. IoT vs. AI.

This study is motivated by two main factors. The swift expansion of IoT-based devices (IIoT/AIoT) across diverse industrial sectors has heightened the dangers of cyberattacks, which may result in substantial operational interruptions. Conventional security solutions frequently fail to adequately handle the dynamic and diverse characteristics of IoT device ecosystems. Secondly, current adaptations of AI present new chances to explore its potential in enhancing cybersecurity measures; however, this necessitates meticulous adaptation to the decentralized and data-sensitive environments of IoT devices. This research provides numerous substantial advances to the domain of cybersecurity in IoT devices through the following contributions:

- Inter-Benchmarking of Hybrid Learning Approaches: A variety of hybrid learning approaches that utilize generative and predictive AI architecture were proposed and internally compared on the N-BaIoT dataset.

- Intra-Benchmarking of Hybrid Learning Approaches: These proposed hybrid learning approaches were compared against external models that were applied on the same dataset in other articles.

- Incorporating a Federated Learning (FL) Framework into a Hybrid Learning Approaches: The Adoption of a synchronous and collaborative FL architecture enhances effective model training while preserving data privacy. This system facilitates incremental and collaborative learning from dispersed data sources, essential for dynamic and scalable IoT environments.

- Validating the Federated Hybrid Learning Approaches with Real-World Data: We validate our hybrid learning approaches utilizing the extensive N-BaIoT dataset derived from real IoT operations, guaranteeing that the models are resilient and efficient across diverse situations and attack vectors. Additionally, the hybrid learning approaches perform empirical evaluation with real traffic data, gathered from nine commercial IoT devices infected by authentic botnets from two families (N-BaIoT dataset).

These contributions seek to deliver a scalable, efficient, and privacy-preserving solution to cybersecurity issues in the IoT sector, utilizing both asynchronous and collaborative learning modalities inside federated learning.

2. The Value of Secured IoT Networks in Manufacturing

Predictive Maintenance (PdM) represents another area where AIoT security significantly contributes to resource conservation. Secure PdM systems analyze equipment performance data to accurately forecast maintenance needs, preventing both premature part replacements and catastrophic failures. When these systems are protected against data manipulation, they maintain prediction accuracy and extend equipment lifespan.

Research demonstrated that secure predictive maintenance systems significantly reduced spare part consumption compared to traditional maintenance approaches, directly contributing to resource conservation and waste reduction [5]. Lampropoulos et al. [6] further highlight that AIoT can optimize processes regarding the production, distribution, consumption, and reuse of renewable resources. Their comprehensive bibliometric analysis of 9182 academic documents confirms that secure AIoT implementations have emerged as important contributors to ensuring sustainability in manufacturing operations.

Energy consumption represents one of the most significant environmental impacts of manufacturing operations. Secure AIoT systems enhance energy efficiency through multiple mechanisms.

Matin et al. [3] demonstrate that AIoT implicitly reduces energy consumption and environmental pollutants through enhanced resource and process scheduling. Their research shows that intelligent systems can analyze operational data to identify energy optimization opportunities that might otherwise remain undetected. Sasikumar et al. [7] present an improved Delegated Proof of Stake (DPoS) algorithm-based IIoT network that combines blockchain and AI for secure real-time data transmission. Their evaluation reveals that this consensus algorithm significantly reduces energy consumption while simultaneously addressing security vulnerabilities. This dual benefit illustrates how security and sustainability can be mutually reinforcing in AIoT implementations.

The architectural design of AIoT systems also impacts energy efficiency. Villar et al. [4] explain that Fog computing creates an intermediate layer between Edge and Cloud where data can be processed locally, reducing both latency and energy consumption associated with data transmission. However, they emphasize that these architectural components must be secured against potential attacks to maintain their energy-saving benefits.

However, there is lack of studies in the literature that show the final net energy savings in IoT-operated manufacturing processes which deploy an AI-based defensive cybersecurity mechanism.

Sustainable manufacturing extends beyond the factory floor to encompass the entire supply chain. Nozari et al. [8] conducted a comprehensive analysis of AIoT challenges in smart supply chains, finding that cybersecurity and lack of proper infrastructure are the most significant barriers to implementation. Their research demonstrates that when these challenges are addressed, AIoT innovations can provide critical information for features such as tracking and instant alerts that improve decision-making throughout the supply chain.

The integration of secure AIoT technologies in supply chains improves information exchange and facilitates monitoring of physical goods. Their study of Fast-Moving Consumer Goods (FMCG) industries revealed that AIoT capabilities such as transparency, agility, and adaptability offer tremendous opportunities to address supply chain management challenges more effectively, but only when properly secured.

Blockchain technology plays a particularly important role in securing supply chain data. Sasikumar et al. [7] explain that blockchain promotes a decentralized architecture for Industrial IoT applications, encouraging secure data exchange among various nodes. This secure exchange is essential for maintaining the integrity of sustainability certifications and enabling manufacturers to verify compliance with environmental standards across their supplier networks.

Manufacturing industries face increasingly stringent environmental regulations that require accurate monitoring and reporting. Secure AIoT systems ensure the integrity of data collection processes for environmental impact reporting, preventing both intentional falsification and accidental corruption of emissions and resource consumption data.

Lampropoulos et al. [6] demonstrate that AIoT can assist in achieving sustainable development goals through optimization of processes and promotion of sustainable practices. Their research shows that AIoT has emerged as an important contributor to ensuring sustainability and achieving sustainable development goals, but emphasizes that security is essential for maintaining the integrity of these systems and the decisions based on them.

Villar et al. [4] highlight that reference architectures based on standards guide developers to create compliant AIoT applications. These architectures incorporate security considerations that help ensure regulatory compliance while also providing the flexibility needed to adapt to evolving requirements.

The standardization of security practices in AIoT implementations helps manufacturers maintain consistent compliance across different operational contexts. Blockchain integration provides transparent and verifiable tracking throughout systems [7]. This transparency benefits both regulatory authorities and consumers increasingly concerned with the environmental impact of products. The immutability of blockchain records, ensured through robust security measures, provides confidence in the validity of sustainability claims.

The concept of product lifecycle management has evolved significantly with the advent of AIoT. Matin et al. [3] observe that AIoT is involved in the complete cycle of sustainable production: product design, process planning, sustainable machining, process scheduling, energy consumption, and supply chain. This comprehensive involvement allows for optimization at each stage of the product lifecycle but requires consistent security measures throughout.

Sasikumar et al. [7] identify three fundamental innovative models that enable long-term digitization of a smart circular economy: IIoT, Edge-based computing, and AI. All of them are features or components of an AIoT. Their research demonstrates that when these technologies are securely integrated, they can significantly increase proper recycling rates for complex products, reducing landfill waste and enabling more effective recovery of valuable materials [9].

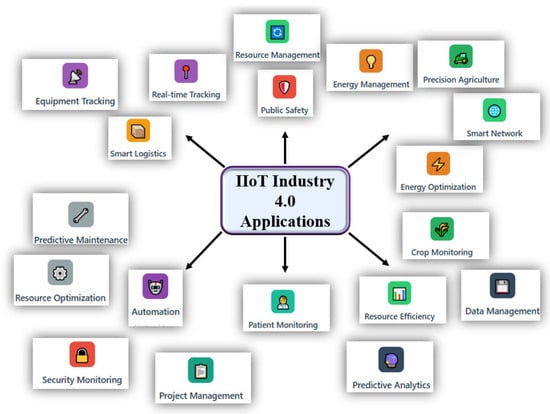

The digitization of industry and the attainment of Industry 4.0 (I4.0) objectives are facilitated by the adoption of emerging technologies, including AI and IoT. Implementing these emerging technologies in industrial manufacturing can enhance product quality, machine efficiency, employee safety, and PdM strategies, while simultaneously reducing overall energy consumption, adverse environmental impacts, and production costs [10,11].

The AIoT infrastructure incorporates cognitive capabilities into IoT devices to improve IoT operations, big data analytics, and human–machine interactions. This exemplifies an IoT system. AIoT-based solutions are crucial in sustainable manufacturing since they facilitate decision-making through extensive data provided by numerous sensors across diverse industrial processes, effectively addressing significant sustainability issues, especially within manufacturing sectors. To achieve the goals of sustainable manufacturing, it is essential to include modern technology [12].

To ensure industrial sustainability, AI and IoT applications such as fuzzy controllers, intelligent scheduling, knowledge-based expert systems and different Machine Learning (ML) and Deep Learning (DL) models have lately been adopted in several manufacturing sectors [13]. Over the past two decades, there has been an increased necessity to employ AI and ML technology for monitoring the risk profiles of supply chain management. Focused on a specific domain Research on AIoT-based sustainable manufacturing is underway, and its integration into manufacturing industries is in the preliminary phase.

The future of manufacturing is progressively accelerating to a data-driven domain. Manufacturers can enhance decision-making and optimize operations by collecting and analyzing data from diverse process segments using many sensors. Nonetheless, it is challenging to integrate them through conventional ML methodologies to achieve real-time forecasting, monitoring, defect or anomaly detection, and decision adjustment. AIoT can evaluate extensive data from many perspectives and extract feature properties with AI approaches to address this issue [14]. Consequently, AIoT encompasses the entire cycle of sustainable production, including product design, process planning, sustainable machining, process scheduling, energy consumption, supply chain management, and cyber security [15,16,17].

AIoT comprises two levels of technology. The first category is computing technology, encompassing Big Data, ML, computer vision, embedded computing, sensors and networks, and Edge computing. The other pertains to certain industrial sectors and addresses PdM tools, process mining, cybersecurity, and optimization. AIoT networks improve the quality of products, maximize machine functionality, minimize expenses, and increase operational efficiency. To obtain optimal efficiency, an AIoT network must perform two fundamental functions: building connections between devices and a centralized system, and simplifying the storage, management, analysis, and effective exploitation of the collected and supplied data. These networks involve various interconnected industrial devices, sensors, and systems that methodically acquire, disseminate, and analyze data to optimize industrial processes and assist informed decision-making. AIoT-based technologies have been integrated into different domains or sectors of the economy, including smart agriculture, smart healthcare, smart homes, smart cities, smart environments, supply chains and circular economies, industrial control units, renewable energy, tourism business, scheduling tasks, and cybersecurity [18].

IoT has focused on the connecting of devices across extensive networks to enable data interchange, management, and aggregation. This fundamental principle has progressed with the emergence of AIoT, which augments IoT by integrating intelligent functionalities into devices. This connection allows devices to independently assess data, make intelligent judgments, and perform actions in real time, thus revolutionizing the operational landscape across multiple industries, including industry, healthcare, smart cities, and cybersecurity [19,20].

IoTs are defined by an extensive network of networked devices that gather and disseminate data, profoundly influencing daily human activities and decision-making processes. The integration of AI with IoT not only improves the capabilities of these devices but also facilitates the processing of substantial amounts of data produced by IoT systems. This feature is essential for acquiring actionable insights and enhancing and securing service delivery in applications like manufacturing, smart healthcare and intelligent transportation systems [21,22]. As the number of sensors continues to increase, the potential of AI in securing these sensors locally at the device Edge layer is increasingly becoming vital.

Furthermore, AIoT promotes the creation of intelligent systems capable of learning from their surroundings and adapting accordingly [23]. This is especially apparent in collaborative decision-making frameworks that utilize AI to enhance resource allocation and operational efficiency in smart cities [24]. The incorporation of AI methodologies allows IoT devices to execute tasks and enhance their performance over time via learning and adaptation, hence augmenting the overall user experience and operational efficiency [25].

AIoT is a transformative technological convergence that enhances the functionalities of IoT devices through the integration of AI. This combination enables real-time data analysis and decision-making while fostering new applications across diverse sectors, ultimately resulting in more intelligent and efficient systems that can substantially enhance operational efficiency while being able to maintain its high levels of cybersecurity [26,27]. By means of local data processing, AIoT infrastructures possess the capability to make rapid decisions autonomously, without the need for ongoing human oversight [28,29,30].

The exponential increase in data created by IoT devices revealed that conventional cloud-based processing models were inadequate for managing the scale, speed, and complexity necessary for real-time decision-making. This resulted in the incorporation of AI functionalities directly into IoT systems. This substantiates the assertion that conventional cloud-based models are inadequate for real-time decision-making [31].

IoT infrastructures were developed to facilitate uninterrupted connectivity and optimize data sharing. As these frameworks evolved, the limitations of centralized cloud computing became increasingly apparent, prompting a transition to more decentralized solutions [32,33]. The integration of AI into IoT systems transforms data management through the implementation of Edge computing, which enables processing at the network’s peripherals.

This transformation diminishes latency, enhances privacy, and equips devices with autonomous capabilities [34,35]. The inherent interoperability of these systems facilitates cohesive integration across diverse sectors, from smart city infrastructures to precision agriculture, creating a dynamic and adaptive network that continuously learns and enhances its operational efficiency [36,37]. For example, IoT technologies facilitate real-time data collection and analysis, which are essential to optimize urban operations and services in smart city initiatives [38].

As device interconnectivity expands, the significance of cybersecurity inside the IoT ecosystem will become increasingly vital. The increase in connected devices expands the attack surface for cybercriminals, necessitating the implementation of stringent security measures to safeguard critical information and uphold consumer trust. Future developments will likely focus on establishing decentralized security frameworks that utilize edge computing and federated learning models.

FL enables the training of ML models across numerous decentralized devices while maintaining data locality, hence enhancing privacy and security by reducing the likelihood of data breaches. As these technologies advance, they will be important in creating a secure and robust IoT infrastructure [39,40]. Table 1 provides a brief description of these layers and their functions.

Table 1.

Brief description of IoT/AIoT layers and their functionalities.

It is worth noting that some components can perform more than one task or role. For example, the Edge components do much more than just data collection, it can perform data processing, analytics, and even run AI models directly at the edge. This is a key aspect of modern IoT architecture that enables reduced latency, bandwidth optimization, and improved privacy.

In IoT architectures, the Security layer is typically represented as a cross-cutting concern that connects with all other layers in the stack. This is because security must be implemented at every level of architecture. Models of Intrusion-Detection Systems (IDS) typically operate at the intersection of the Edge layer and Security layer, but they can also extend to other layers depending on the implementation. At the Edge layer, IDS can perform real-time monitoring and detection of anomalies in device behavior and network traffic.

Within the Security layer, IDS provides security policies, detection algorithms, and response mechanisms. And In the Fog/Cloud layers, more sophisticated IDS models might leverage additional computing resources for deeper analysis and correlation of security events. Thus, AI techniques can be integrated across these layers for data interpretation via IDS. IDS serve a crucial role in protecting IoT networks by recognizing and reducing any anomalies [41]. Some specialized IoT architectures might use a dedicated Processing Layer. Figure 2 shows how physical and digital technologies integrate in IoT devices.

Figure 2.

Technological integration in IoT and AIoT devices.

Benchmarking on IoT-based traffic data also allows for a realistic assessment of a model’s robustness and adaptability to real-world network conditions. This process helps ensure that the models are not just theoretically sound but also practical and effective in diverse and potentially noisy environments. Additionally, since AIoT-specific datasets are still relatively scarce, using IoT data enables ongoing research and development without unnecessary delays, allowing for faster iteration and improvement of security solutions.

Furthermore, insights gained from analyzing IoT traffic can often be transferred to AIoT contexts, especially when the underlying technologies are similar. This approach also helps researchers identify any limitations in their models and highlights areas where further adaptation or additional data might be necessary to address AIoT-specific threats. Overall, leveraging IoT traffic data for benchmarking is a pragmatic and efficient way to drive progress in AIoT cybersecurity, even in the absence of dedicated AIoT datasets.

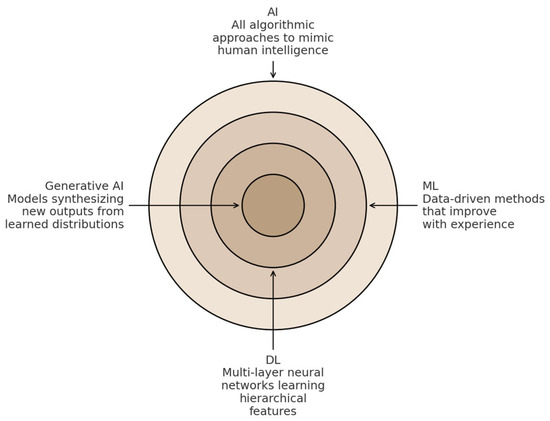

3. AI and Generative AI

Generative AI comprises multiple interconnected components that dictate its functionality and adaptability across various applications. These fundamental components affect the generative AI’s ability to process information, make decisions, and interact with human users [42]. The field of AI is changing quickly, especially with the rise of generative AI, unlike Narrow AI, which is designed to complete a particular cognitive capability and is limited by its inability to learn independently.

Narrow AI can also be called Artificial Narrow Intelligence (ANI) or weak AI. ANI utilizes ML, Natural Language Processing (NLP), and DL via advanced variations in algorithms made up of Neural Networks (NNs) to complete specified tasks [43,44,45]. Some examples of narrow AI include self-driving cars, which rely on computer vision algorithms, and AI virtual assistants. Artificial General Intelligence (AGI), also called general AI or strong AI, refers to a form of AI that can learn independently, think, and perform a wide range of tasks at a human level. The ultimate goal of AGI is to create machines capable of versatile, human-like intelligence, functioning as highly adaptable assistants (AI Agents) in everyday life. Generative AI is a form of AGI [46,47,48]. Figure 3 illustrates generative AI with respect to AI, DL, and ML

Figure 3.

Generative AI within AI, ML, and DL.

Generative AI is a form of AGI capable of producing original content rather than merely analyzing existing data. It employs intricate algorithms, like Generative Adversarial Networks (GANs), to produce diverse outputs, such as text, photos, design prototypes, and music. Generative AI models fundamentally consist of intricate interactions among various software, simulations, algorithms, and statistical models. This encompasses GANs and their expansions (such as multi-agent systems), Variational Autoencoders (VAEs), diffusion models, flow-based models, and transformer neural network architectures [49].

GANs comprise two NNs that collaboratively generate data that mimics real data, offering innovative design alternatives that may have been overlooked. The generator NN, functioning in an unsupervised manner, learns to model the data distribution, while the discriminator neural network assesses the authenticity of the generator’s output. As training advances, both networks enhance their performance, yielding outputs that increasingly resemble the target data. GANs can expedite the prototyping process, reduce costs, and minimize material waste, thereby supporting sustainability goals. Furthermore, employing GANs to produce synthetic datasets can enhance the training of machine learning models, particularly when actual data is scarce or difficult to obtain. Nonetheless, GANs may encounter mode collapse, wherein the generator fails to encapsulate the complete diversity of the data. To mitigate this issue, extensions such as multi-agent GANs, which utilize multiple generators in conjunction with a single discriminator, have been proposed [50].

VAEs employ variational inference to approximate intricate distributions, producing novel data that closely resembles the training samples. A VAE consists of two primary components: an encoder that compresses the input into a latent representation, and a decoder that reconstructs the input from this compressed format. VAEs are utilized in diverse applications, such as text generation in Large Language Models (LLMs), image synthesis, and anomaly detection. Diffusion models are predominantly employed for the generation of optimized images; their outputs typically display a more complex and semantically enriched distribution relative to the original data. Flow-based models operate by transforming data from a basic distribution, such as Gaussian, to a more intricate target distribution via an invertible transformation referred to as a flow. Their computational efficiency and generalizability render them especially advantageous in computer vision, NLP, anomaly detection, and generative design. Transformer models, a category of NNs architecture, are widely employed in signal processing, NLP, computer vision, audio and speech analysis, as well as in multimodal tasks. Transformers utilize an attention mechanism that adeptly captures contextual relationships within sequential data, allowing them to execute a wide array of functions with exceptional accuracy [51].

4. Cybersecurity Threats for IoT-Based Devices

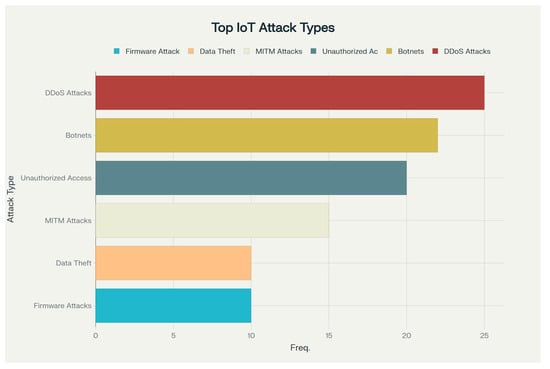

In the current hyper-connected environment, where IoT-based devices are integral to daily life, cybersecurity is of paramount importance. Previously independent devices, like smart homes, wearables, and industrial automation systems, now interact with each other, forming a complex network of interconnectivity. This interconnected society and enterprises offer convenience and efficiency, yet it also introduces significant security dangers [52]. Figure 4 shows the most common types of attacks on IoT-based devices [53]. Table 2 briefly describes these attacks [54].

Figure 4.

Percentage of the most common types of attacks on IoT and AIoT devices.

Table 2.

Brief description of the most common types of attacks on IoT and AIoT devices.

The interdependent characteristics of IoT systems inside IIoT environments imply that a security breach in a single device or component might trigger cascade repercussions, resulting in significant outcomes such as financial losses, reputational harm, legal liabilities, and compromised consumer data [43]. To effectively manage these risks, firms must adhere to best practices for safeguarding their IoT infrastructure in industrial settings. This entails performing comprehensive risk assessments, establishing stringent authentication and access controls, encrypting data both in transit and at rest, employing secure communication protocols, consistently updating software and firmware, and sustaining ongoing surveillance for potential threats and anomalies locally and at end points via the Edge computing layer [55] that exists in AIoT devices or a Cloud layer depending on the type of the IoT device and its setup and protocols. Anomaly detection provides a unifying defense by flagging deviations from “normal” IoT behavior. When an IoT sensor network is instrumented to monitor a physical quantity like temperature, each of the six most attack classes discussed in Table 2 will induce characteristic “anomalies” in the time-series data or associated metadata. Table 3 shows how these deviations could manifest.

Table 3.

Induced characteristic “anomalies” in the time-series data of AIoT instrumented to monitor a physical quantity like temperature as an example.

IoT-based devices are progressively capable of managing a substantial share of their cybersecurity responsibilities directly at the Edge layer, thereby alleviating numerous hazards prior to any data transmission from the device. Table 4 list the most common utilized methods in managing IoT cybersecurity at the Edge layer.

Table 4.

Utilized methods in managing IoT-based devices cybersecurity.

Notwithstanding considerable progress in on-device anomaly detection, IoT security is still impeded by several enduring challenges: resource limitations on small or battery-operated devices restrict their capacity for continuous, computationally intensive inference; the diverse array of IoT hardware complicates the implementation of uniform security toolchains across various edge nodes; and managing timely, secure firmware updates at scale remains one of the most daunting operational obstacles. Thus, the most robust architectures implement a hybrid model wherein AIoT devices establish the initial line of defense locally, addressing immediate threats and optimizing bandwidth, while cloud-based services facilitate centralized policy orchestration, long-term threat correlation, and the extensive analytics necessary for forensic investigations [59]. Figure 5 shows visual summary of security challenges in IoT and AIoT devices [60].

Figure 5.

Security challenges in IoT and AIoT devices.

These security concerns may arise at several levels of the AIoT architecture, including the perception, network, and application layers, each with unique vulnerabilities and consequences.

The application layer of IoT is notably vulnerable to diverse cyberattacks owing to its intricate and linked characteristics. At this stage, assailants can apply advanced strategies, such as fraudulent data injection, to elude detection while manipulating sensor data. These assaults aim at the software and apps operating on IoT devices, potentially resulting in illegal control and data manipulation and hence compromising the entire functionality of industrial systems. Such attacks can hinder operations, impair data integrity, and inflict severe financial and reputational harm.

IoT systems are particularly susceptible to ransomware attacks because of their dependence on networked equipment and protocols. Cascading assaults transpire when the interplay of various devices and services, frequently enabled by platforms, causes vulnerabilities. The escalating utilization of IoT devices, particularly with the emergence of 6G technologies, heightens the potential of data breaches and infringements of privacy. The connected qualities of these devices leave them vulnerable to unauthorized access and data exfiltration. Eavesdropping and spoofing attacks entail the interception and modification of communications between devices [61].

Eavesdropping allows adversaries to acquire unauthorized access to sensitive knowledge, whereas spoofing comprises the impersonation of a device to manipulate data or processes. Creating AI security solutions might ease these vulnerabilities in IoT, thereby lowering the possibility of application-level attacks. Techniques like micro-perturbations uncover concealed intruders by executing little-controlled modifications to sensor readings, simplifying the discovery of illicit data without affecting system operations. Balancing the deployment of AI for security improvement with the mitigation of associated risks is crucial for the sustained advancement of AIoT systems [62,63,64].

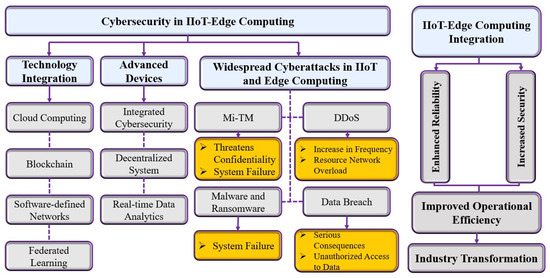

As was mentioned in Section 3 of this paper, IoT architecture typically do not come with a dedicated layer explicitly called “Processing Layer”. Instead, processing functionality is distributed across multiple layers (Edge, Fog, and/or Cloud) in the architecture. Such processes are subject to multiple vulnerabilities that might affect the security and effectiveness of any AIoT-based systems. These attacks exploit vulnerabilities in the network, devices, and data processing systems, requiring thorough detection and mitigation protocols. Figure 6 illustrates cybersecurity threats in the Edge computing layer and how the Edge computing layer helps in mitigating these threats.

Figure 6.

Cybersecurity threats in the Edge computing layer.

Network-level attacks often involve intrusion efforts that can disrupt communication between devices. The network layer is particularly susceptible to intrusion attacks, including DDoS attacks, which can saturate network resources and IoT services by inundating the network with excessive data [65]. Strategies such as Temporary Dynamic Internet Protocol Addressing (TDIP) effectively mitigate such attacks by continuously changing Internet Protocol (IP) addresses, hence improving network security. Malefactors can penetrate IoT devices and create botnets, networks of hacked devices employed for large-scale attacks such as DDoS. These attacks can overwhelm AIoT systems, leading to significant downtime and operational failures [66,67].

The perception layer of IoT is crucial for the acquisition and processing of data from numerous sensors and devices. However, it is susceptible to several types of attacks that can compromise data integrity, confidentiality, and availability. Perception layer attacks target devices and equipment that interact with the physical world, such as sensors, actuators, controllers, and other components within the perception layer. Malefactors may use these limitations to introduce inaccurate data or disrupt data collection processes. Physical layer attacks involve the alteration of hardware components in IoT systems [68]. These assaults may result in data manipulation, unauthorized access, and other security violations, potentially causing significant consequences for industrial systems.

IoT systems that incorporate video are vulnerable to motion-based video assaults. These assaults utilize spatiotemporal attention networks to generate subtle disturbances that are difficult for human observers to detect [69]. In IoT-based smart grids, adversarial attacks can corrupt text data processed by NLP technology. These attacks can alter sentence-level data, misleading classification algorithms without significantly changing the semantic meaning [70]. Figure 7 shows a summary of cybersecurity threats on different layers of AIoT devices [71,72,73,74,75,76,77,78,79,80].

Figure 7.

Common attack on different layers of AIoT devices and their risks.

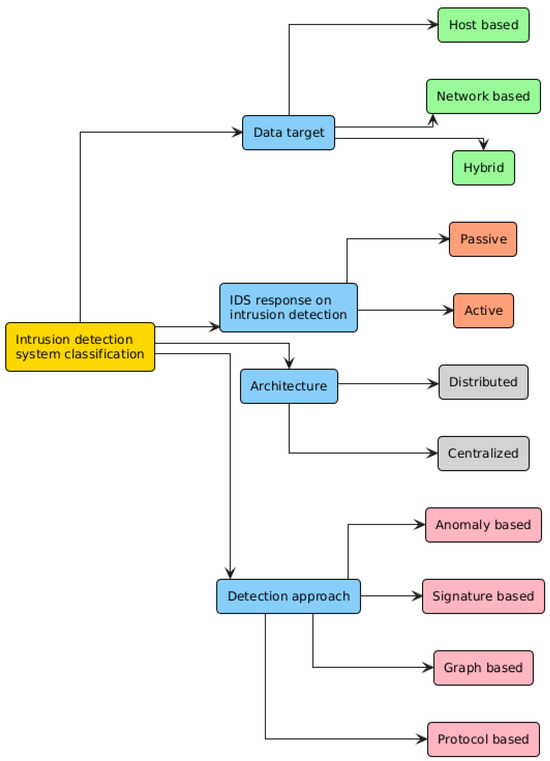

As we enter a future where almost every gadget used by humans is connected to the internet, safeguarding their security is paramount. Network defense systems seek to achieve three fundamental objectives: confidentiality, availability, and integrity. Methods for identifying and mitigating network intrusions can be generally classified according to their emphasis: threat identification, threat neutralization, or a synthesis of both approaches. The research delineates two principal ways to counteract attacks: IDS and Intrusion Prevention Systems (IPS).

IDS functions as a warning system, identifying prospective intrusions without implementing corrective steps, whereas IPS actively engages in countering recognized threats [81]. Nonetheless, IPS has difficulties with false positives, potentially leading to the obstruction of legitimate users. IDSs are frequently highlighted due to apprehensions about false alarm rates, particularly in the context of malware detection. Classification can be further delineated based on the target location of the intrusion detection system: Host-based IDS, Network-based IDS (NIDS), or Hybrid IDS.

Host-based IDS is designed for individual systems, providing robust detection of internal intruders and comprehensive evaluations of compromise severity; yet, it is expensive due to its one-to-one requirement [82]. NIDS is proficient in identifying external threats and provides a protective framework for numerous hosts, yet it encounters difficulties in analyzing high-volume traffic in certain instances [83].

Hybrid IDS merges the advantages of both host- and network-based solutions, hence enhancing security [84]. Active IDS implement actions in response to certain signals, while Passive IDS only generate alarms or reports. From an architecture perspective, Centralized IDS implement distinct monitoring units for each host; nonetheless, they exhibit limited scalability and are susceptible to single points of failure. In contrast, Distributed IDS utilizes a Peer-to-Peer (P2P) design, wherein each monitoring unit concurrently functions as an analysis unit, providing a more adaptable and resilient solution.

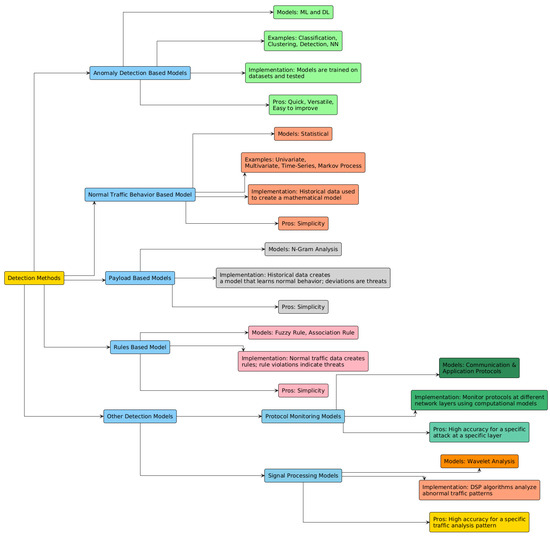

Threat detection primarily utilizes two principal methodologies: Signature-based Detection and Anomaly-based Detection [85]. Signature-based approaches face constraints, especially in the context of Botnets, as these botnets sometimes undergo mutations that modify their identifying signatures. This renders the technique less efficacious for identifying novel Botnet variations in practical situations. Anomaly-based detection approaches are preferred as they operate under the premise that the behavioral patterns of Botnet traffic will diverge from typical network traffic [86]. Alternative approaches, such as Community-Based Anomaly Detection, utilize Communication Graphs to detect Bots. This technique necessitates a complete graph for precise outcomes.

Certain research use specific protocols or frameworks for Botnet identification; however, these methodologies are not universally applicable due to the varying architecture employed by different Botnets [87,88]. The Bad Neighborhood approach is a prevalent tactic employed in Phishing Detection. It entails the identification of clusters of malicious IP addresses that are active throughout a defined period. Nevertheless, the practicality of this strategy is constrained by the pervasive occurrence of DDoS attacks and the complexities involved in establishing such clusters [89,90]. Figure 8 shows different IDS and their relationships with each other. Figure 9 show the categories of threat detection techniques [53,91,92,93,94,95].

Figure 8.

Illustration of the different IDS.

Figure 9.

Categories of threat detection techniques.

Developers must meticulously choose algorithms that are most appropriate for their particular domain while developing intelligent systems. Random Forests (RF) is frequently utilized because of its ensemble learning features, which can enhance the development of more adaptive systems. Random Forest also demonstrates proficiency in managing both categorical and continuous variables, in addition to addressing missing data. Nonetheless, its computational complexity presents difficulties. Support Vector Machines (SVM) and Naive Bayes (NB) serve as alternate options, each possessing distinct advantages and disadvantages.

DL models such as Deep Neural Networks (DNNs) and Recurrent Neural Networks (RNNs), in conjunction with ML models like RF and Decision Trees (DT), have attained accuracy rates above 100%. Recent studies emphasize hybrid or ensemble models that integrate sophisticated techniques like Convolutional Neural Networks (CNN) and Long Short-Term Memory (LSTM) to enhance accuracy. Alternative approaches, such as Gated Recurrent Units (GRU), may also be widely utilized due to their efficiency benefits.

5. The Role of AI in Defensive Cybersecurity of IoT-Based Devices

IoT-based devices generate data at different scales, from bytes to kilobytes per second, contingent upon the application. Data can frequently hold paramount significance, like healthcare records or defense information. DDoS assaults are significant cybersecurity concerns, with IoT devices being especially susceptible to exploitation as conduits for these attacks. This is due to the fact that most IoT devices, such as baby monitors or smart toys, possess restricted user interfaces, hindering people from recognizing that their item has been compromised. With the increasing integration of IoT into many industrial and domestic applications, the pursuit of effective security measures has become paramount. In this section, an overview of scholarly works employing weak AI and generative AI models to flag various types of cyberattacks is provided.

Defensive cybersecurity focuses on preventing attacks with firewalls and monitoring, while offensive cybersecurity proactively seeks vulnerabilities through simulated attacks. Defensive measures are the first line of protection, but offensive tactics reveal weaknesses and improve overall security resilience [96,97].

To protect institutions and individuals from cyberattacks, it is crucial to analyze and classify network data first, facilitating the detection of anomalous and malicious intrusions. Due to the critical importance of categorizing harmful data, several researchers have sought to improve classification techniques by utilizing artificial intelligence. Numerous studies have focused on detecting anomalous and aberrant network activity.

Abu Al-Haija et al. developed a DL-based intelligent detection solution for IoT cybersecurity via convolutional neural networks. They utilized the NSL-KDD dataset to validate their approach, attaining an accuracy rate surpassing 99.3% for binary classification and 98.2% for multiclass identification. Their forthcoming efforts intend to enhance their technology to intercept and examine data packets within the IoT network [98].

Khan et al. propose a blockchain-based security solution for IoT, utilizing the capabilities of Extreme Learning Machine (ELM). Their methodology confirmed the integrity, confidentiality, and availability of blockchain-enabled smart homes. Initial data indicated negligible ELM overheads relative to the resultant cybersecurity advantages. They attained an accuracy of 93.91% utilizing the NSL-KDD dataset, with forthcoming efforts focused on investigating various architectures and datasets [99].

Vanhoenshoven et al. investigated multiple methodologies for identifying malicious URLs. The Malicious URLs Dataset comprises 121 datasets collected over 121 days. The dataset comprises 2.3 million URLs and 3.2 million characteristics. The researchers categorized these URLs into three distinct groups according to particular attributes. Various models, including Multilayer Perceptron (MLP), DT, RF, and KNN, were evaluated using multiple performance criteria, such as accuracy, precision, and recall. The study indicated that all employed approaches demonstrated great accuracy, with the RF model exhibiting exceptional effectiveness at approximately 97% accuracy [100].

Sun and colleagues [101] formulated a model for classifying network traffic, utilizing deep learning techniques with a particular focus on web and data flows. The dataset utilized to train their suggested model was meticulously chosen by the researchers, obtained via intercepting network traffic across many platforms. The Probabilistic Neural Network (PNN) was employed in the analysis, achieving an accuracy of 88.18%, utilizing a 7:3 ratio for training and testing.

Yang et al. [102] initiated a project to develop a system capable of detecting malicious actions within an encrypted network, utilizing deep learning as their tool. The proposed model was based on a Residual Neural Network (ResNet), which has the inherent ability to independently identify unique features while effectively separating the contextual information around encrypted network traffic. Furthermore, their efforts were supported by the utilization of the CTU-13 dataset for model training. In the first data preparation step, the dataset saw multiple modifications. Moreover, they employed Deep-Q-Learning (DQN) to generate adversarial samples of encrypted communication. The result was exceptional, with the model attaining an impressive accuracy rate of 99.94%.

Ongun et al. [103] focused on the CTU-13 dataset, initiating the development of composite models aimed at detecting abnormal network behavior. They utilized Logistic Regression (LR), RF, and GB to develop these models. Their early methodology focused on a connection-level representation, from which features were directly retrieved from the raw connection records. Their research produced an exceptional AUC score of 99%.

5.1. Defensive Strategies Using Generative AI

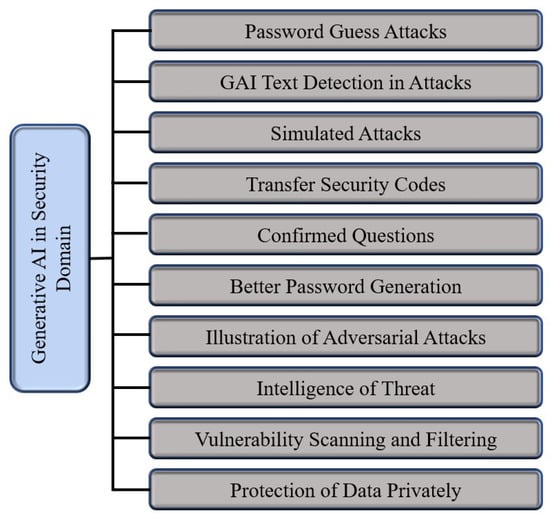

The literature identifies four major primary approaches and five minor approaches of defensive strategies. Major approaches include adversarial training, dataset balance, data augmentation, and data obfuscation. And minor approaches include generative defense for phishing and social engineering, automated honeypot generation, data sanitization and noise injection, synthetic data generation for privacy and robustness, and generative models for anomaly detection [104]. In some cases, these approaches can be combined to produce a more comprehensive enterprise mechanism to defend against various cybersecurity attacks. Figure 10 shows the applications of generative AI in cybersecurity.

Figure 10.

Generative AI applications in cybersecurity.

Generative models such as GANs and VAEs can assimilate the typical patterns of data and thereafter be employed to identify anomalies or outliers. In cybersecurity, generative AI can assist in detecting anomalous network traffic, fraudulent transactions, or malware by highlighting data that deviates from the established standard of “normal” behavior [105,106].

Zavrak and Iskefiyeli [107] demonstrates that VAEs produce analogous Receiver Operating Characteristic (ROC) curves for attackers exhibiting similar tendencies. Hara and Shiomoto [108] utilized Semi-Supervised Adversarial Autoencoders (SSAAE), attaining equivalent outcomes with markedly reduced labeled data, albeit with an extended training duration.

Li et al. [109] introduce a distinctive GAN-based adversarial training architecture for NIDS. By highlighting the interplay between the generator and discriminator components of GANs, their methodology produces resilient and varied adversarial samples, hence enhancing the NIDS against emerging threats. GANs are widely utilized for the production of synthetic data and the enhanced comprehension of minority groups, as demonstrated by Ferdowsi et al. [110].

Certain research concentrates on certain uses of a specific variant of GANs. For example, [111] employs Conditional-based GANs (CGANs) to produce synthetic samples that replicate the distribution of authentic XSS attack situations. The augmented data is subsequently utilized to train a new model to validate the authenticity and dependability of the synthetic samples. Xie et al. introduced a DL-based multi-label detection approach. The methodology employs Wasserstein-based GANs with Gradient Penalty (WGAN-GP) for data augmentation and to mitigate class imbalance concerns [112].

Liu et al. [113] similarly tackle the deficiency of cyber threat data in the space domain by producing synthetic threat data to enhance intrusion detection and defense. Their methodology enhances current data creation techniques, such as GANs and VAEs, to produce data specifically designed for space systems. Le et al. [114] presented an IDS utilizing a CNN and a CGAN to address the deficiency of training data. Experimental findings indicate that their IDS attained elevated detection rates for nine categories of cyberattacks, surpassing rival methodologies and evidencing its efficacy in bolstering IoT security.

A study by [115] introduced a BiLSTM-VAE model with a dynamic loss function to overcome limitations of traditional methods like scalability and false alarms. By capturing temporal dependencies and addressing data imbalance, their model achieved high accuracy and F1 scores on SKAB and TEP datasets, outperforming existing models. This makes it a reliable and scalable solution for anomaly detection in industrial environments via generative models.

5.2. Defensive Strategies with Federated Learning

Recent studies have highlighted the growing importance of FL within the context of IoT. Despite this, much of the existing research in FL has relied on datasets that do not originate from real-world IoT devices, often overlooking the distinctive characteristics and challenges inherent to IoT data [116].

Hamad et al. [117] demonstrated that FL can effectively leverage distributed data to enhance intrusion detection performance while preserving data privacy. The results highlight the potential of FL to address the unique security challenges in heterogeneous IoT environments, offering improved detection accuracy without the need for centralized data collection [118].

Multiple strategies can be used to implement an FL framework. Self-Learning (SL) is an approach where neither data nor model parameters leave the device; instead, each edge device performs training individually and in isolation. This method serves as a baseline for evaluating learning ability in scenarios where no information is shared between devices, making it useful for understanding the limits of purely local learning. Centralized learning (CNL) involves collecting data from different parties and sending it to a centralized computing infrastructure. The central server is responsible for training the model using all the aggregated data. CNL is often used as a benchmark to assess the maximum learning potential when models are built with access to all available data in one place.

Collaborative learning (CL) encompasses custom variants of distributed learning, including federated learning, where multiple agents benefit from jointly training a model. Notably, Paul Vanhaesebrouck et al. [119] introduced a fully decentralized collaborative learning system in which locally learned parameters are shared and averaged across devices in a peer-to-peer (P2P) network, without the need for a centralized authority to orchestrate the process. This approach enables agents to collaborate and improve their models collectively while maintaining a decentralized structure. To the best of our knowledge, Table 5 shows a list of models that were utilized in FL framework on different IoT datasets [117].

Table 5.

Models used in FL-based IDS framework.

6. Dataset

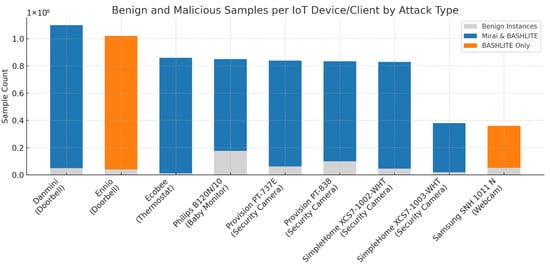

Meidan et al. [120] compiled an extensive network-traffic dataset (N-BaIoT) by instrumenting nine commercially available IoT devices, including doorbells, thermostats, baby monitors, security cameras, and webcams, inside a controlled laboratory setting. For each device, an initial “benign” profile was established by mirroring all incoming and outgoing traffic immediately following installation and operation under standard settings. This benign capture generally lasted several hours per device and was divided into time periods of 100 ms to one minute, resulting in tens of thousands of benign traffic snapshots per device. Figure 11 shows a descriptive summary of the N-BaIoT dataset.

Figure 11.

Benign instances per IoT device with infection status.

Subsequently, each device was deliberately infected, first with the Mirai botnet and then with the BASHLITE botnet, to generate malicious-traffic profiles. During these infection phases, the same port-mirroring process recorded both scanning and attack flows characteristic of each botnet’s propagation and execution stages. By maintaining identical capture durations and windowing parameters, the authors produced parallel malicious datasets for each IoT endpoint. Table 6 shows the different attack vectors that were used to infect the IoT devices.

Table 6.

Attack vectors used to infect devices with BASHLITE and Mirai.

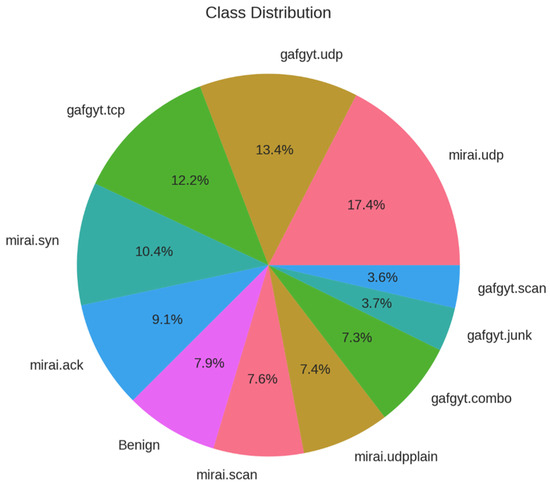

Mirai is a worm-like malware family first identified in late 2016 that systematically scans the Internet for Linux-based IoT devices by exploiting default or weak credentials, integrating them into a substantial DDoS botnet. Its modular, open-source architecture has led to the emergence of numerous variants responsible for some of the largest volumetric attacks recorded [121]. BASHLITE, also referred to as Lizkebab, Gafgyt, or Bashdoor, originated as a lightweight C-based malware that exploits Shellshock vulnerability to infect Unix-like devices. It utilizes straightforward command-and-control protocols to orchestrate various flooding attacks (UDP, TCP, COMBO) and network scans, facilitating high-throughput spam and DDoS operations across extensive networks of compromised IoT devices [122]. Figure 12 shows the target class distributions of these attack vectors.

Figure 12.

Target class distribution (attack vectors and benign).

From every captured snapshot (benign or malicious), the dataset authors computed 115 statistical features, encompassing packet counts, byte volumes, inter-arrival jitters, flow durations, and cross-flow correlations. These features, extracted in a lightweight offline process, form the input vectors for per-device deep autoencoder models. In total, the dataset comprises several hundred thousand labeled snapshots across all nine devices and both botnet families, balancing benign and anomalous examples for robust training and evaluation. Table 7 shows the categories of the captured features based on network traffic analysis.

Table 7.

Features categorical breakdown based on network traffic analysis.

The statistical features employed in the N-BaIoT framework demonstrate considerable high variability, as evidenced by their wide value ranges and pronounced standard deviations, ensuring sensitivity to both subtle and extreme deviations from benign traffic patterns. These features follow diverse distributions, encompassing everything from heavy-tailed to near-Gaussian profiles, which allows the model to capture a broad spectrum of traffic behaviors. To balance the influence of recent versus historical activity, the authors introduce time-based decay through lambda parameters (L5, L3, L1, L0.1, L0.01), each weighting feature values according to different temporal windows and thus enabling the detection mechanism to adapt to both short-lived spikes and longer-term trends. Finally, multiple perspectives are integrated, ranging from host-level aggregates and flow-level statistics to port-level dynamics; so that anomalies can be identified across various granularities of network interaction.

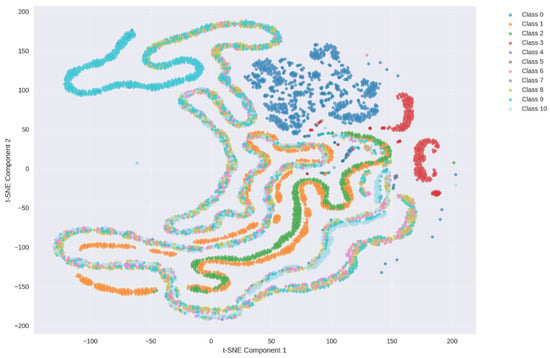

The t-distributed Stochastic Neighbor Embedding (t-SNE) is a nonlinear dimensionality reduction method intended for visualizing high-dimensional data in two or three dimensions. It establishes a probability distribution over pairs of high-dimensional points, ensuring that comparable points exhibit high affinity (represented by a Gaussian kernel), while dissimilar points demonstrate low affinity. A comparable distribution is established over the low-dimensional map with a Student’s t-distribution (with one degree of freedom), which mitigates the “crowding problem” by enabling a more accurate representation of moderate distances between features. Figure 13 shows the exploratory data analysis visualization obtained by t-SNE for reference use only.

Figure 13.

t-SNE visualization for the N-BaIoT dataset.

7. Methodology

7.1. Preprocessing

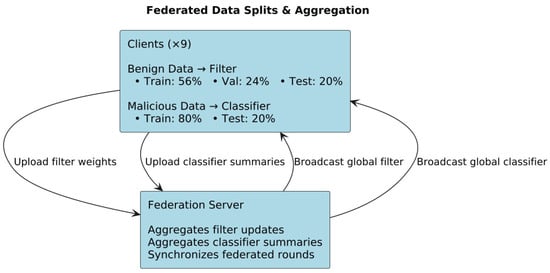

In this pipeline each IoT device (client) holds data of the same feature space (the 115 statistical traffic features) but on different samples, and they jointly train a single global model by exchanging model updates (filter model weights) or aggregated statistics (HGB histograms). That is the hallmark of horizontal (or cross-device) FL where all clients share the same input-space schema, and the privacy-sensitive rows (samples) remain local. Vertical FL, by contrast, would involve different features on the same sample set, which is not the case here. Table 8 shows the preprocessing steps that were implemented for each algorithm. Figure 14 shows the data split and aggregation employed by the horizontal (cross-device) FL framework.

Table 8.

List of all preprocessing steps.

Figure 14.

Federated data split and aggregation.

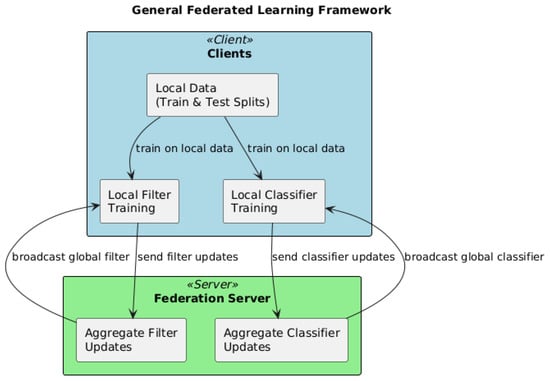

7.2. FL Framework

Each IoT device in the network acts as an independent federated client that retains its own raw traffic data. Before any model training begins, each client partitions its benign (pre-infection) traffic windows into local training, validation, and test sets, while reserving its malicious (post-infection) windows solely for evaluation. To initiate the federated process, the central server initializes a global filter model with random weights and broadcasts these weights to all clients. Each client then refines its local filter by training only on its benign data for a fixed number of local epochs, minimizing reconstruction loss via stochastic optimization (e.g., Adam). Upon completion of these local updates, clients upload their revised filter weights to the server, which aggregates them using the FedAvg algorithm, weighting each client’s contribution by its benign-sample count. This cycle of broadcast, local filtering, and aggregation repeats for a predetermined number of rounds or until convergence on the global validation loss. Once the filter has converged, the server evaluates reconstruction-error distributions across benign and malicious windows on each client to choose an anomaly threshold that balances detection sensitivity against false alarms.

Although it uses a central server to orchestrate weight-averaging (FedAvg for the filter) and histogram-merging (for the HGB classifier), neither raw data nor unaggregated feature vectors ever leave the devices; only model updates or summary statistics are exchanged. This matches CL’s definition as a distributed-training regime in which multiple agents jointly build a model under a (centralized) orchestration authority, rather than moving all data to one place (CNL) or training strictly in isolation (SL). Figure 15 shows the FL framework simulated in this paper.

Figure 15.

General FL framework.

After anomaly thresholds are set, the pipeline proceeds to build a global classifier ensemble (HGB) via a federated histogram-based boosting protocol. Each client computes local histograms of feature gradients and Hessians on its malignant traffic windows and sends only these aggregated statistics to the server. The server then merges all client histograms to identify the optimal feature splits at each node of the boosting trees. It broadcasts the chosen splits and updated leaf weights back to the clients, which incorporate them into their local classifier models. This exchange of histogram summaries and split information iterates for a fixed number of boosting rounds, ultimately producing a global classifier that can distinguish benign from malicious traffic and further differentiate between Mirai and BASHLITE activity. Throughout the entire process, raw network flows and feature vectors remain on-device, ensuring data privacy; only model parameters or aggregated statistics traverse the network, and each client’s influence is naturally scaled to its local data volume. Figure 16 shows the architecture of the federated HGB.

Figure 16.

Federated HGB architecture.

7.3. Filter Algorithms

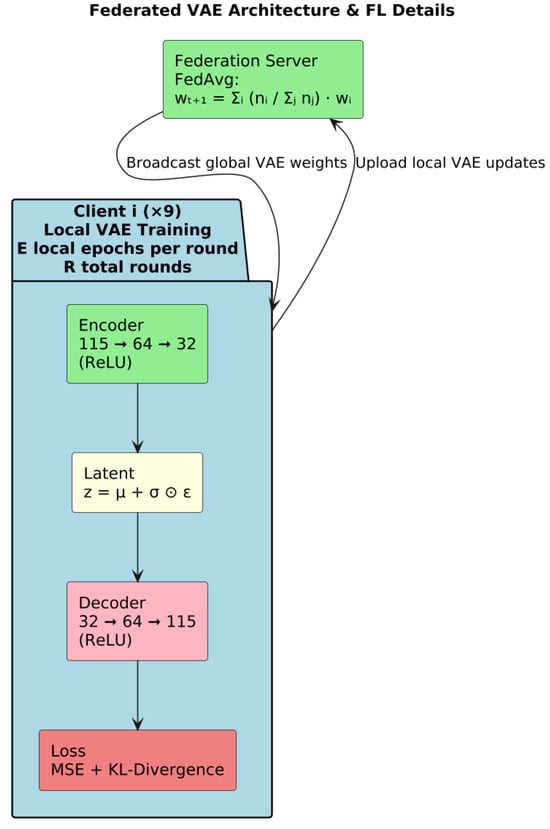

7.3.1. VAEs

The VAE deployed on each IoT client is a compact, fully connected neural “filter” designed to learn a low-dimensional model of normal traffic behavior from 115 statistical features. At its core, the VAE comprises an encoder network that progressively reduces the 115-dimensional input through two hidden layers—first to 64 units, then to 32 units—each equipped with Rectified Linear Unit (ReLU) activations. From this 32-unit representation, two parallel linear transformations generate the parameters of a latent Gaussian distribution (its mean and log-variance), enabling the model to capture uncertainty and variability in benign traffic. Figure 17 shows a summary of VAE federated architecture.

Figure 17.

VAE architecture.

Through the reparameterization, the encoder’s distributional parameters yield a stochastic latent code without interrupting gradient flow, allowing the VAE to learn both the central tendency and dispersion of the data. A mirrored decoder then reconstructs the original feature vector: two hidden layers of 32 and 64 units (again with ReLU activations) expand the latent code back toward the input dimension, and a final linear output layer produces the reconstructed 115-dimensional vector. By training to minimize a combination of reconstruction error—measuring fidelity to the original input—and a regularization term that encourages the latent distribution to remain close to a standard normal, the VAE learns a smooth, continuous model of benign traffic patterns.

This architecture is trained in a federated manner via FedAvg: each client updates its local VAE weights using only its benign samples, then communicates weight updates to the server for aggregation. The result is a global filter that embodies the collective knowledge of all devices without ever centralizing raw network data. Because the VAE’s capacity is modest (32-unit bottleneck) and its layers are narrow, it can be trained efficiently on resource-constrained IoT endpoints while still capturing the salient statistical structure of normal traffic.

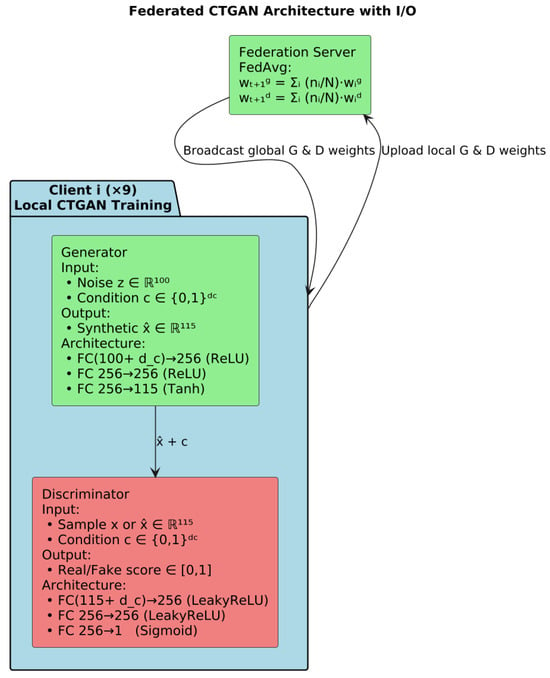

7.3.2. CTGANs

CTGAN is instantiated on each of the nine IoT clients to model the distribution of benign traffic features. CTGAN extends the traditional GAN framework with a generator and discriminator tailored for mixed tabular data: the generator learns to produce synthetic feature vectors conditioned on column distributions, while the discriminator distinguishes generated samples from real benign observations. At initialization, nine independent CTGAN instances are created, one per client, and each with its own network weights and optimization state.

Training proceeds in synchronized federated rounds. In each round, every client trains its local CTGAN for a fixed number of epochs on its private benign windows, reporting both generator and discriminator losses. For example, in Round 1 Client 0 recorded a generator loss of 1.2322 and discriminator loss of 1.0507, while Client 1’s losses were 0.7303 and 1.2427, respectively; similar loss trajectories are logged for all devices before aggregation. These per-client updates capture each device’s unique traffic characteristics without ever sharing raw data. Figure 18 shows the federated CTGAN architecture.

Figure 18.

Architecture of federated CTGAN.

Once all clients finish their local updates, the central server aggregates the CTGAN parameters via a FedAvg-style averaging across clients, weighted by their local benign-sample counts. The merged global model is then evaluated on a held-out validation set of benign windows, with validation loss reported after each aggregation. Training continues until the global validation loss ceases to improve for 10 consecutive rounds, at which point early stopping is triggered (e.g., at Round 51), finalizing the federated CTGAN for downstream anomaly-threshold determination and detection

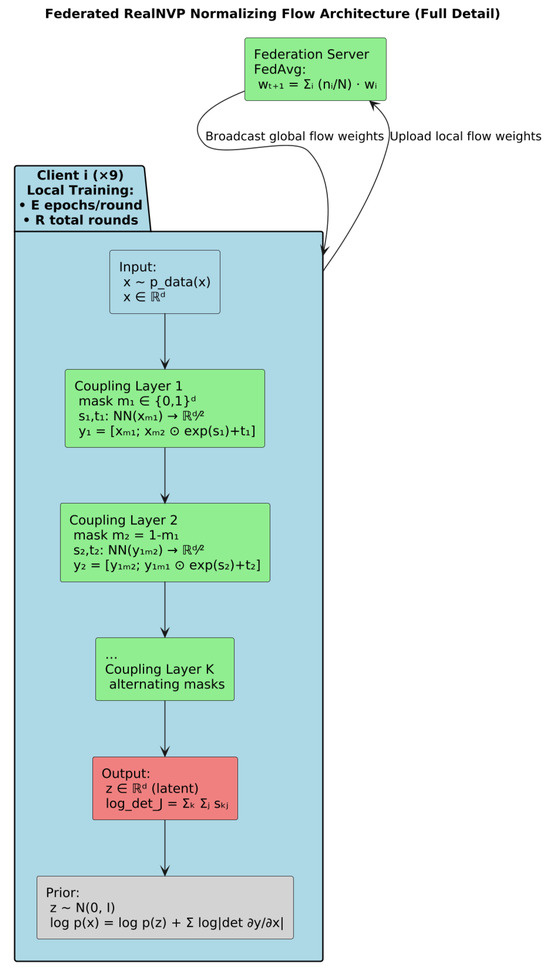

7.3.3. Normalization Flow

The federated NF pipeline is organized around a classical cross-device, parameter-server FL paradigm. The central ServerFlow instantiates a global NormalizingFlow model and nine ClientFlow objects, one per IoT device, each owning a private copy of the same flow architecture and its benign-only training data.

In each federated round, the server first broadcasts its current global flow weights to every client. Each client then loads these weights into its local NormalizingFlow instance and performs exactly Config.FLOW_LOCAL_EPOCHS of training on its own benign windows, producing a local negative-log-likelihood loss and updated parameters. Upon completion, each client uploads only its state_dict() (the tensor-wise parameters) back to the server, no raw features or gradients ever leave the device.

The ServerFlow.aggregate_models() method implements a FedAvg aggregation: for each floating-point parameter key, it stacks the corresponding tensors from all clients and replaces the global parameter with their element-wise mean. Non-floating-point buffers (e.g., masks) are simply carried over from the first client. The server then reloads this averaged state_dict into its global flow and, every five rounds, evaluates it on an aggregated benign validation set to check for improvement. If the global validation loss does not improve for Config.FLOW_PATIENCE = 10 successive checks, early stopping halts further FL rounds.

Internally, the flow itself is a Real Non-Volume Preserving (RNVP)-based model composed of a sequence of affine coupling layers. Each CouplingLayer splits the input vector by an alternating binary mask, passes the masked half through two small neural nets to produce scale and translation vectors, then transforms the unmasked half as accumulating the log-determinant. Stacking Config.FLOW_NUM_LAYERS of these layers with alternating masks yields an invertible mapping to a standard-normal latent z.

The NF is trained in a synchronous, FedAvg-based federated loop across IoT clients, and its backbone coupling-layer structure is RNVP-based, ensuring exact likelihood computation and efficient sampling while preserving client data privacy. Figure 19 shows a summary of the architecture of the federated NF-based RNVP.

Figure 19.

Architecture of the federated NF-based RNVP.

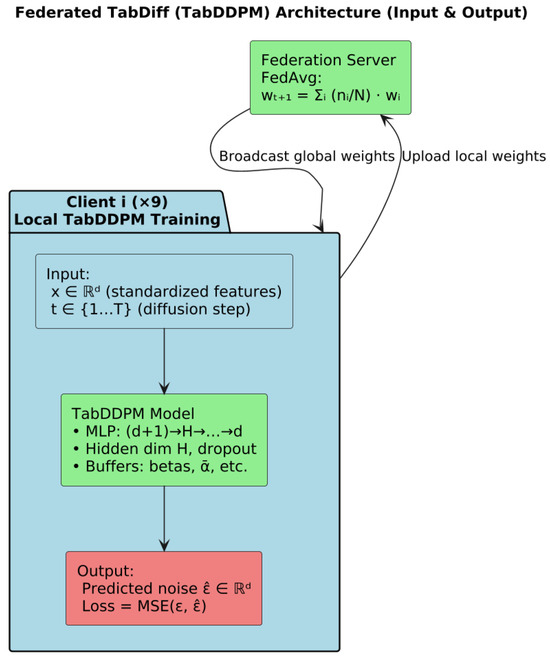

7.3.4. TabDiff

The federated TabDiff implementation follows a classical synchronous, parameter-server paradigm (FedAvg) adapted to a Denoising Diffusion Probabilistic Model (DDPM) for tabular data. The pipeline is organized into two principal components, client-side local training and server-side aggregation, which are executed over multiple FL rounds.

Each IoT client instantiates its own TabDDPM model, parameterized by the global feature dimension, hidden layer size, number of diffusion timesteps, noise schedule, and dropout rate, all drawn from a shared configuration object. Clients convert their benign-only data into NumPy arrays, and employ an AdamW optimizer with weight decay and a learning-rate scheduler. During training the model minimizes mean-squared error between predicted and actual Gaussian noise at randomly sampled diffusion steps.

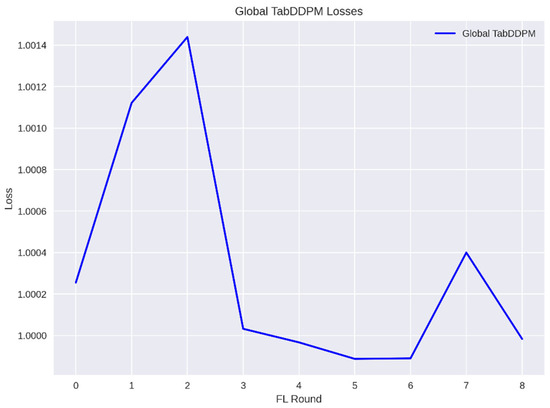

The central server initializes a global TabDDPM model with identical architecture and optimizations. At each FL round, it collects state dictionaries from all clients and aggregates them via FedAvg: for each floating-point tensor, it computes the element-wise mean across client models; for non-floating-point parameters (e.g., embedding indices), it retains the first client’s value. The updated global state is loaded back into the server’s model, which also tracks global loss trajectories and implements early stopping based on a validation patience threshold. Figure 20 shows the architecture of federated TabDiff. Table 9 shows the parameters for all these 4 models.

Figure 20.

Federated TabDiff architecture.

Table 9.

Parameters for all utilized models.

8. Results and Discussion

8.1. VAEs-HGB Federated Pipeline

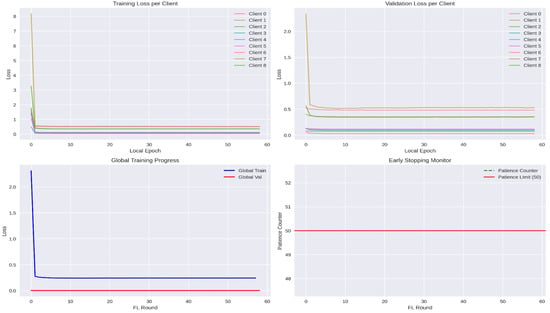

All nine clients exhibit a very steep drop in loss during the first one or two epochs, reflecting rapid initial learning of the bulk of benign traffic patterns. Thereafter, curves flatten out—clients converge to their local minima. Notice that clients with larger datasets (e.g., Client 1) begin with higher initial loss but still reach a plateau comparable to smaller clients, indicating effective normalization by sample count in the later federated aggregation.

The validation curves closely mirror the training curves but start, and settle, at slightly higher values, reflecting a generalization error. As with training loss, most of the decrease happens in the first few epochs, after which validation loss remains almost constant. This consistency across clients demonstrates that none of the local VAEs is grossly overfitting during the local updates. Figure 21 shows the local and global training and validation loss for the VAEs.

Figure 21.

Training and validation loss for VAEs.

The global training loss plunges sharply in the first round, when the model transitions from random initialization to a reasonable filter, and then gradually decreases, approaching a steady state as rounds proceed. The global validation loss remains extremely low (near zero), indicating that the aggregated model generalizes exceedingly well to held-out benign traffic across all clients. Figure 22 shows performance related plots to VAEs.

Figure 22.

Performance measurement during training and testing of VAEs.

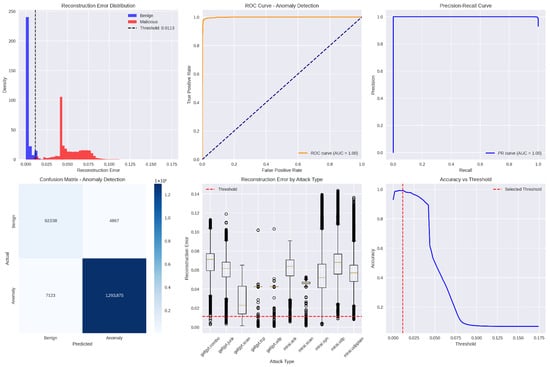

This histogram (with overlaid kernel-density estimates) contrasts the reconstruction-error values produced by the global VAE for benign versus malicious traffic windows. Blue bars and curve represent benign samples, clustered tightly near zero error, while red depicts malicious samples, whose errors are substantially higher. The vertical dashed line marks the chosen anomaly threshold (≈ 0.0113); nearly all benign errors lie to its left, and most malicious errors lie to its right, demonstrating clear separation.

This Receiver Operating Characteristic (ROC) plot shows the true-positive rate (detection sensitivity) versus the false-positive rate as the anomaly threshold varies. The orange curve hugs the top-left corner, and its Area Under the Curve (AUC) is effectively 1.0, indicating that the VAE filter can perfectly distinguish benign from malicious windows over nearly all threshold settings.

This Precision-Recall (PR) graph illustrates the trade-off between detection precision (fraction of flagged windows that are truly malicious) and recall (fraction of all malicious windows correctly flagged) across thresholds. The blue curve remains at or near the top-right, with an AUC of 1.0, signifying that the model simultaneously achieves extremely high precision and recall for anomaly detection.

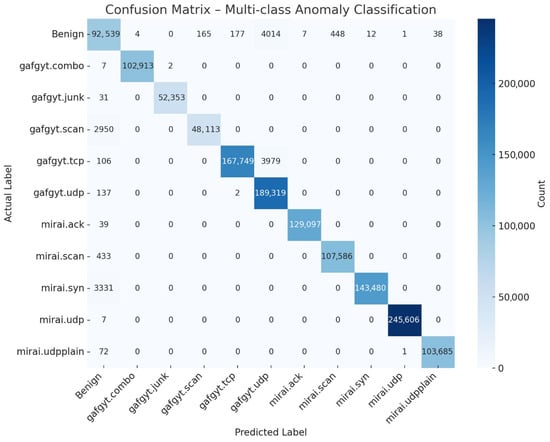

This histogram (with overlaid kernel-density estimates) contrasts the reconstruction-error values produced by the global VAE for benign versus malicious traffic windows. Blue bars and curve represent benign samples, clustered tightly near zero error, while red depicts malicious samples, whose errors are substantially higher. The vertical dashed line Accuracy vs. threshold curve plots the overall classification accuracy (fraction of all windows correctly labeled) as the anomaly threshold is swept from near zero to maximum observed error. Accuracy peaks just above 99% around the selected threshold (marked by the red dashed line) and then declines sharply as the threshold rises, since higher thresholds begin to miss malicious windows. This plot validates the threshold choice as the point of maximal accuracy. Figure 23 shows the confusion matrix for the federated VAE-HGB hybrid approach. Table 10 shows the performance measurements values for this approach.

Figure 23.

End-to-end confusion matrix for the federated VAE-HGB hybrid approach.

Table 10.

End-to-end performance measurements values per class for VAEs-HGB.

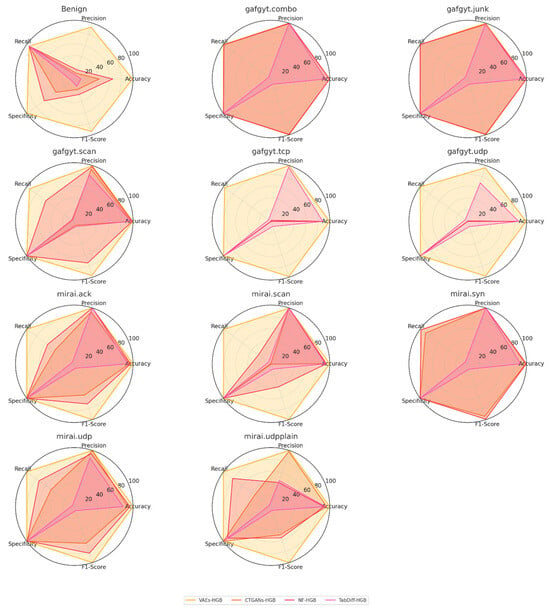

The per-class results in Table 10 demonstrate that the VAEs-HGB pipeline achieves exceptionally high overall classification performance across both benign traffic and diverse botnet attack types. The benign class attains 99.14% accuracy, with a precision of 92.86% and recall of 95.00%, indicating that only a small fraction of benign windows are misclassified as attacks while most true benign instances are correctly preserved. Its specificity of 99.45% further confirms that false-alarm rates are extremely low, and an F1-score of 93.92% reflects a strong balance between precision and recall for normal traffic.

Among the individual attack categories, nearly all achieve accuracies at or above 99.70%, with several (e.g., gafgyt.combo, mirai.udp) reaching a perfect 100%. Precision and recall for these classes likewise hover around 99.5–100%, yielding F1-scores that exceed 97% in the lowest case (gafgyt.udp at 97.90%) and hit 100% for mirai.udp. The smallest performance dip occurs on the gafgyt.scan class, which attains 94.22% recall and 99.66% precision—nonetheless producing a robust F1-score of 96.86%. These results indicate that the hybrid VAE filter plus histogram-based gradient boosting classifier excels at distinguishing even subtle scanning behaviors from benign baselines without confusing them with other flooding or junk-traffic patterns.

Overall, the uniformly high specificity values (≥99.34% for all classes) underscore that the system makes very few false-positive errors across the full eleven-way detection task. Simultaneously, nearly perfect recall on critical flooding attacks (mirai.syn 97.73%, gafgyt.tcp 97.62%) ensures that the most disruptive anomalies are almost never missed. The tight clustering of precision, recall, and F1-scores—each above 93% for all classes and above 99% for the majority—attests to the efficacy of the federated VAEs-HGB architecture in learning both generative and discriminative representations suitable for end-to-end IoT anomaly detection and classification.

8.2. CTGANs-HGB Federated Pipeline

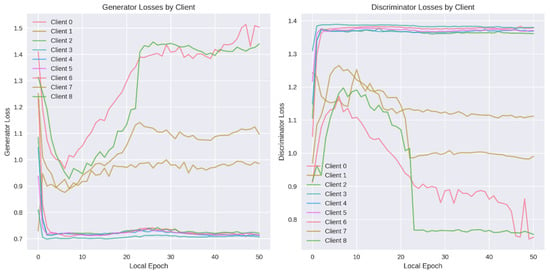

During the training process, the competing generator and discriminator networks evolve on each client during local CTGAN training. During the first few local epochs, every client’s generator network undergoes a dramatic improvement: its loss plunges from the random-initialization level down to around 0.7–1.0, as it quickly learns the coarse patterns of benign traffic. After this “warm-up” phase, the clients bifurcate into two behavioral groups. On Clients 3, 4, and 5, the generator loss stabilizes around 0.70–0.75, indicating that the adversarial contest with their discriminators has reached a steady equilibrium. In contrast, on Clients 0, 1, 2, 6, 7, and 8 the generator loss begins a slow but persistent ascent, reaching values above 1.4 in some cases; thus, suggesting that their discriminators are gradually overpowering the generators and forcing them to struggle to match the discriminator’s increasing discriminative power. This divergence correlates with variations in local data complexity and volume: larger or more heterogeneous benign sets appear to drive more pronounced generator–discriminator imbalance.

The discriminator loss curves mirror these dynamics from the opposite vantage point. Initially, each discriminator’s loss climbs from its random-guess baseline into the 1.30–1.40 band within just two or three epochs, reflecting rapid improvement at distinguishing real from generated samples. For a subset of clients (notably Clients 0 and 2), the discriminator loss subsequently falls steeply, dropping below 1.0 by epoch 25; thus, signaling that these discriminators have established a decisive advantage over their corresponding generators. Client 7 shows a more moderate downward drift in loss, ending near 1.10, whereas Clients 3, 4, 5, 6, and 8 maintain a flat loss curve around 1.35, indicating a sustained balance in their adversarial training. Together, these patterns illustrate substantial heterogeneity in local GAN convergence, underscoring the value of federated parameter aggregation to smooth out individual instabilities and yield a more robust global CTGAN model. Figure 24 shows the generator and discriminator loss. Figure 25 shows the ROC and Precision-Recall (PR) curves for CTGAN.

Figure 24.

Discriminator and generator loss in CTGAN.

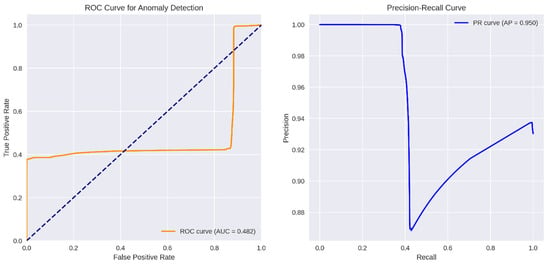

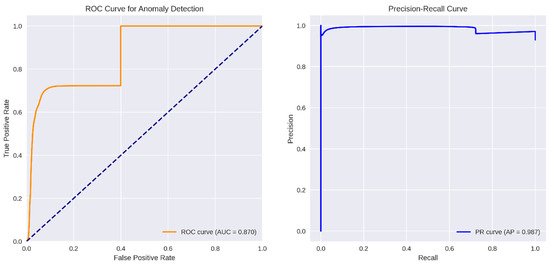

Figure 25.

ROC and PR curves for CTGAN.

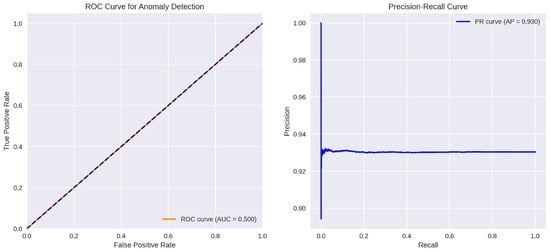

The CTGANs-based anomaly detector’s ROC curve (orange) lies predominantly below the diagonal “chance” line, yielding an AUC of only 0.482. This indicates that, over the full range of decision thresholds, the model’s ability to trade off false positives against true positives is effectively no better than random guessing. Even at very low false-positive rates, the true-positive rate stalls around 0.35–0.40 and only climbs toward 1.0 at the extreme (near 100% false-positive) end. Such a profile demonstrates that CTGANs’ reconstruction or discriminator scores do not reliably separate benign from malicious samples in this setting.

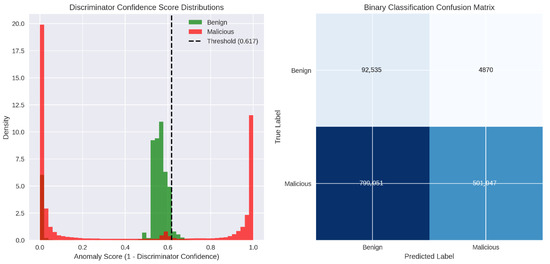

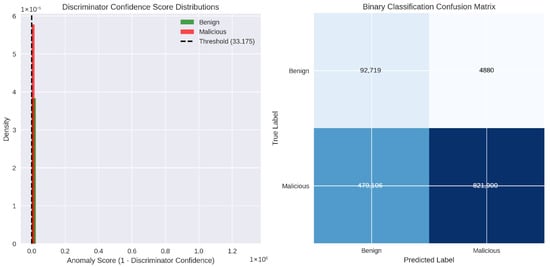

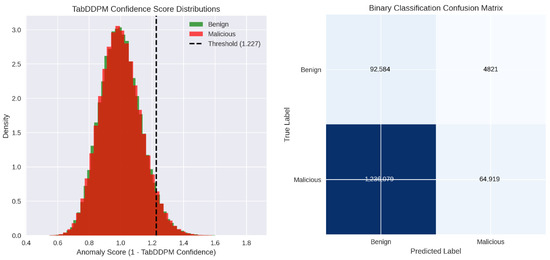

In contrast, the right panel’s PR curve shows an average precision (AP) of 0.950, revealing a markedly different story when focusing on positive (anomalous) detections. At low recall (below roughly 0.4), precision hovers near 1.00—almost every flagged window is truly malicious. Once recall surpasses 40%, precision dips to about 0.87 before gradually rising again toward 0.94 as recall approaches 1.0. This shape implies that CTGANs can indeed identify a small subset of anomalies with very high confidence, but it struggles to detect the full set of malicious windows without incurring a substantial loss in precision. Figure 26 shows the anomaly score threshold and the binary confusion matrix for CTGANs.

Figure 26.

Anomaly score threshold and confusion matrix for CTGAN.

Overall, the CTGANs discriminator does not consistently separate benign from malicious behavior. Although it flags a subset of attacks with high confidence (those in the high-score mode), it misclassifies many other malicious windows as benign, leading to both low recall and a false-alarm burden. Table 11 shows the performance measurements values for this approach.

Table 11.

End-to-end performance measurements values per class for CTGANs-HGB.