1. Introduction

A number of researchers have explored how sensory deprivation in one modality may affect the development of the remaining modalities. When one sense is unavailable, sensory responsibilities shift, and processing in the remaining modalities becomes enhanced. Because this shift appears to be compensatory in nature, it has come to be referred to as compensatory plasticity. For example, because the auditory sense is typically responsible for gathering information and monitoring events in the surrounding environment, deaf individuals tend to compensate with enhanced visual processing of events in the peripheral visual field [1,2,3,4]. Such enhancements appear to reflect changes in the manner in which sensory information is processed rather than decreases in absolute sensory thresholds [5]. The result is a unique sensory experience that influences the manner in which music is perceived.

Frequently cited definitions of music, such as “organized sound” [6] or “an ordered arrangement of sounds and silences…” [7], emphasize the cultural supremacy of sound in the domain of music. These definitions imply that the deaf population has limited access to music, as well as to the emotional aspects of speech, which also tend to be musical (e.g., speech prosody, vocal timbre). However, definitions of music and emotional speech that focus exclusively on sound fail to incorporate multi-modal aspects, such as body movements, facial expressions and vibrotactile stimulation. These multi-modal aspects make music and emotional speech accessible to people of all hearing abilities.

Changes in brain, cognition and behavior following auditory deprivation are typically interpreted from one of two complementary perspectives: neuro-developmental or cognitive. The neuro-developmental perspective focuses on changes due to structural reorganization of pathways in the brain. The cognitive perspective focuses instead on changes due to differences in the recruitment of attention and other available resources. The following two sections review research from the neuro-developmental and cognitive perspectives in turn. They are followed by a consideration of non-auditory elements of music that may be perceived differently following auditory deprivation.

2. Compensatory Plasticity: The Neuro-Developmental Perspective

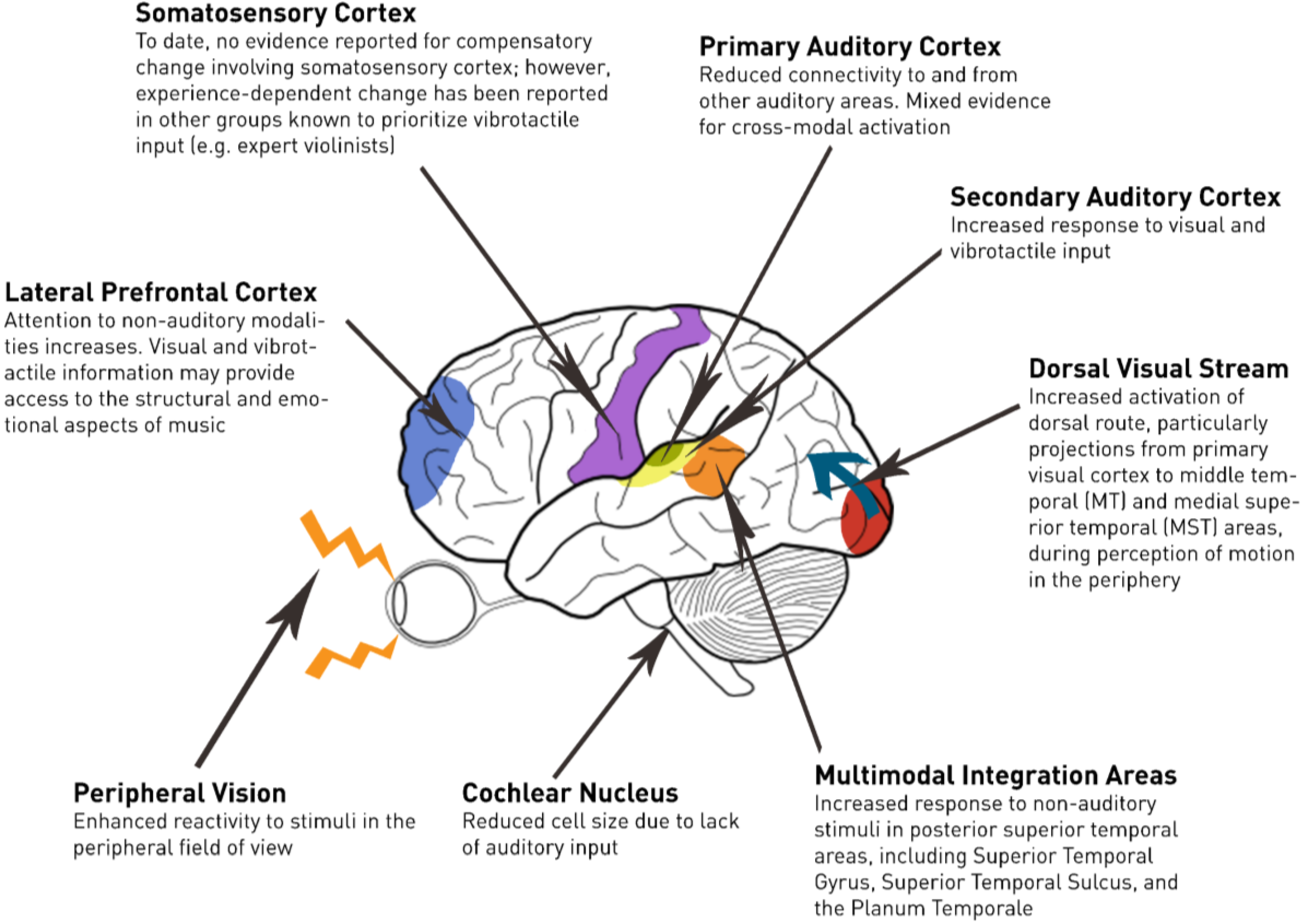

Figure 1 provides a neuro-anatomical view of the subcortical and cortical changes that follow deafness. A subset of these changes has been described as a degeneration of the auditory pathways over time [8,9]. For example, Saada et al. [9] report that the cochlear nucleus of the deaf white cat (a congenitally deaf strain) is half the size of that of typically hearing cats. The deaf white cat is considered an animal model for prelingual deafness, given that it shows no brainstem evoked potentials to clicks presented at levels up to 120 dB SPL [10]. Moore et al. [8] noted a similar pattern in human participants: the size of cells in the cochlear nucleus of individuals with profound hearing loss was reduced by up to fifty percent relative to hearing controls. Moreover, cell size was strongly negatively correlated with the duration of profound deafness.

In contrast to the gross morphological changes observed at the level of the brainstem, the total volume of grey and white matter within the primary auditory cortex does not appear to be affected by deafness ([11]; but see [12]). However, congenitally deaf individuals show higher grey-to-white matter ratios than their hearing counterparts. This difference in ratios is likely the result of reduced myelination and/or fewer fibers projecting to and from the auditory cortex. This finding is consistent with the view that sensory deprivation leads to changes in cortical connectivity due to axonal pruning [13]. These changes may be interpreted as progressive rather than degenerative, i.e., as compensations for sensory deprivation.

Figure 1. Changes in the deaf brain relevant to music perception.


2.1. Auditory System Response Changes Following Deprivation

Most of the evidence for crossmodal activation of the auditory cortices in the deaf brain involves visual activation of the secondary auditory cortex. When profoundly deaf individuals view sign language, activity is observed in the secondary auditory cortex [14]. Hand motions without lexical meaning also activate areas of the secondary auditory cortex in deaf participants [15]. Strikingly, even moving dot patterns activate the secondary auditory cortex in deaf participants [15,16,17]. Sung and Ogawa [18] suggest that some of the reorganization in secondary auditory areas may also be due to practice with visual language.

Tactile stimulation also elicits activation in the secondary auditory cortex of individuals who are deaf. When vibration is presented to the palms and fingers, activation of the secondary auditory cortex is greater and more widespread in deaf participants than in hearing participants [19,20].

Debate exists regarding whether the primary auditory cortex (roughly corresponding to Heschl’s gyrus) adapts to processing information from the non-auditory modalities. Animal research involving the deaf cat has found limited evidence for reorganization in the connectivity of the primary auditory cortex [21]. In human work, some researchers have found non-auditory activation in the primary auditory cortex during visual processing [17,22], while others have shown activation during somatosensory processing [19,23]. In contrast, Hickok et al. [24] found no primary auditory cortex activation in a single deaf participant during visual and somatosensory stimulation, using magnetoencephalography (MEG) and functional magnetic resonance imaging (fMRI).

The reorganization of the auditory brain following deafness has been causally linked to enhanced visual discrimination in an animal model. Congenitally deaf cats are known to have superior visual localization in the peripheral field and lower visual motion detection thresholds. Lomber, Meredith, and Kral [25] showed that deactivation of the posterior auditory cortex selectively eliminates the enhanced visual localization, whereas deactivation of the dorsal auditory cortex eliminates the enhanced visual motion detection.

2.2. Non-Auditory System Changes Following Auditory Deprivation

Behavioral studies have not shown that auditory deprivation enhances the absolute sensitivity of the spared modalities. Deaf and hearing populations demonstrate comparable thresholds for visual brightness and contrast [26,27]. Imaging studies have likewise failed to reveal definitive differences in activation of the primary visual cortex between deaf and hearing participants (e.g., [16]). Nonetheless, deaf individuals do show differences in the visual processing of motion [28], particularly in the periphery [29]. Research involving the deaf cat suggests that extrastriate visual areas, such as those involved in visual motion processing, undergo cross-modal reorganization [30]. In human participants, motion-selective areas of the dorsal visual stream, such as the middle temporal (MT) and medial superior temporal (MST) areas, are more strongly activated in deaf than in hearing individuals when visual motion is presented to the peripheral field of view [31,32].

Given their reliance on tactile input, deaf individuals might be expected to show increased activation of the somatosensory cortex relative to hearing individuals. The somatosensory representation of the digits of the left hand in expert string players has famously been found to be larger than that of controls [33]. Presumably, this increased representation is due to the importance of vibrotactile feedback for string players. However, despite the importance of vibrotactile feedback for deaf individuals, no comparable evidence has been reported in this population to date.

2.3. Multimodal Integration Areas

Many cortical changes occur beyond the primary sensory cortices [34]. There is agreement in the compensatory plasticity literature that the multimodal integration areas of the brain come to prioritize input from the intact modalities [13]. Posterior areas of the superior temporal lobe are well-defined multisensory integration areas [35] that have also been associated with language processing [36]. In deaf individuals, these multimodal integration areas, including the superior temporal gyrus [18,31,37], superior temporal sulcus [38] and planum temporale [15,38], are activated more strongly than in hearing individuals during visual stimulation.

2.4. Limitations of Neuro-Developmental Perspective

The neuro-developmental perspective leaves several questions about compensatory plasticity unanswered. Foremost among these is the evidence for compensatory changes beyond the congenitally deaf population. Cross-modal plasticity following sensory deprivation is subject to a critical period [39]. For example, a functional adjustment in the region of the auditory cortex associated with language processing appears to depend on a critical period that ends as early as two years of age [38]. However, behavioral changes in sensory processing have been observed in individuals whose deafness began much later [40]. This capacity for change beyond the critical period suggests that compensation may also rely to some extent on cognitive strategies, including the recruitment of additional attentional resources and the redirection of attention to non-auditory modalities. The locus of control for such effects on attention, at least during the early stages of deafness, is likely to be the lateral prefrontal cortex [41].

3. Behavioral Compensation: The Cognitive Perspective

Behavioral outcomes are not simply a manifestation of neural plasticity. Compensation may also involve top-down processes, including increased attention directed towards the remaining modalities. Studies that fail to find neural differences between deaf and hearing individuals in response to non-auditory stimuli often use passive processing of visual stimuli [24]. Clearer support for cross-modal activation has been found under attentionally demanding conditions [42].

Complex behavioral tasks that require redirecting attention consistently reveal differences between deaf and hearing individuals (for a review, see [5]). For example, deaf participants complete visual search tasks faster and more efficiently than hearing participants [43]. Deaf participants also appear to engage in more efficient forms of visual information processing. Stivalet et al. [43] asked hearing and deaf participants to detect letter targets among distractors and reported different search patterns for each group. Hearing participants showed search patterns that were asymmetric across conditions, whereas deaf participants showed symmetric search patterns that were more efficient overall.

Interference effects from visual distraction provide additional evidence of altered attention redirection in deaf individuals [44]. Deaf individuals show a shift in the spatial distribution of attention from the center to the periphery [44,45,46]. Parasnis and Samar [46] conducted a stimulus detection task in which cues provided information about target location. Some trials contained a complex, task-irrelevant distractor that participants were asked to ignore. Deaf participants were more adept than hearing participants at redirecting their attention between spatial locations in the periphery.

3.1. Enhanced Visual Attention to the Periphery

Deaf individuals appear to allocate more visual attention to the periphery than hearing individuals do, while the groups do not appear to differ in visual attention to central targets [1,2,3,4,44]. Imaging studies by Bavelier and colleagues [31,32] have revealed enhanced recruitment of motion-selective areas (middle temporal/medial superior temporal) in deaf individuals during tasks requiring the detection of luminance changes in the periphery. Behavioral outcomes are consistent with these imaging results. When asked to attend to visual stimuli in the periphery, deaf participants outperform their hearing counterparts, whereas the two groups do not differ significantly when attending to visual stimuli in the central field of view.

Neville and Lawson [2] found that deaf participants reported motion direction more accurately than hearing participants. Bosworth and Dobkins [47,48] found a similar pattern of performance on a visual motion discrimination task. Interestingly, the latter study showed a strong right visual field advantage for deaf participants, suggesting that the left hemisphere, which classically houses language areas, is preferentially recruited in deaf individuals to support the processing of visual motion. These findings support the view that neural plasticity and attention may both contribute to some of the perceptual advantages observed in deaf participants.

As noted earlier, some of the visual processing advantages of deaf individuals may also stem from frequent practice with visual language [18]. Sign language users must become especially sensitive to hand and body movements, particularly when these occur in peripheral space. Neville and Lawson [3] compared the detection of apparent motion in deaf signers, hearing signers and hearing non-signers. Deaf signers were faster than the other two groups in reporting the direction of motion of targets in the peripheral visual field. In addition, individuals with sign language experience, regardless of hearing ability, may recruit resources from left-hemisphere language regions when processing visual motion [3]. Taken together, these studies suggest that fluency with sign language may be partly responsible for the enhancements in motion perception observed in deaf individuals.

3.2. Enhanced Visual Attention to Facial Features

Individuals who are deaf demonstrate an enhanced capability to understand speech through the visual modality. For example, deaf individuals show higher proficiency in lip reading, or speech reading, than their hearing counterparts [49,50]. Deaf sign language users also show an enhanced ability, compared to non-signers, to detect even subtle differences in facial expressions [51]. This is not surprising, given that facial expressions serve important grammatical and semantic roles in sign language [52]. From the cognitive perspective, the extra practice with facial details should lead to enhanced facial recognition ability. Bettger et al. [40] examined this hypothesis by asking participants to discriminate pictures of human faces under varied conditions of lighting and positioning. Deaf signers performed significantly better than hearing participants on this task, especially under poor lighting. To determine whether these outcomes were due to neural changes following auditory deprivation or to exposure to sign language, Bettger et al. [40] repeated the experiment with hearing participants who were native signers and with deaf participants who had adopted sign language later in life. Both signing groups (deaf and hearing), as well as the deaf late signers, showed trends toward greater facial discrimination than the hearing non-signers. These results suggest that practice with sign language, rather than neural changes following auditory deprivation alone, leads to greater facial discrimination. Because deaf individuals who were exposed to sign language later in life still showed superior facial recognition compared to hearing non-signers, there may be no critical period for developing this aptitude.

3.3. Enhanced Attention to Vibrotactile Stimuli

Deaf individuals show some processing advantages for haptic and vibrotactile stimuli [53,54]. Levänen and Hamdorf [54] explored the vibrotactile sensitivity of congenitally deaf participants using sequences of vibration. They found that congenitally deaf participants had an enhanced ability to detect suprathreshold frequency changes in vibrotactile stimulation. On the basis of this study alone, however, it is not clear whether this enhancement was due to compensatory neural plasticity or to changes in attention resulting from practice.

4. Compensation Summary

Although it is not always clear whether the deaf brain compensates through neuro-developmental changes, changes in attentional focus or some combination of the two, the available evidence suggests that there is a compensatory shift in sensory responsibilities. This shift results in a non-auditory sensory experience that is unique to individuals who are deaf, and this unique sensory experience may enhance the perception of non-auditory elements of music.

5. Non-Auditory Elements of Music and the Deaf Experience

5.1. Visual Elements

The appreciation of music does not rely exclusively on sound. While music is generally focused on the auditory modality, the movements of performers, including hand gestures, body movements and facial expressions, influence an audience’s perceptual and aesthetic assessment of music [55,56,57,58].

Body and facial movements made by performers often provide structural information about music. For example, judgments of musical dissonance can be influenced by visual aspects of a guitar performance [56]. Facial movements made by singers provide information about the size of the pitch interval between notes [56]. Observers accurately scale video-only presentations of melodic intervals, and this ability does not appear to depend on musical or vocal experience [59]. Video-based tracking of sung intervals has shown that larger intervals involve more head movement, eyebrow raising and mouth opening [59].

Musical performers also use facial and body movements to intentionally convey emotional aspects of music. Thompson and Luck [57] used a motion capture system to explore the ability of a musician to convey emotion through movements of the face and body. When musicians were asked to play with exaggerated emotional expression, the amount of bodily movement increased.

The visual aspects of music may be especially exploited by a deaf audience. For example, the enhanced ability of deaf individuals to discriminate faces becomes especially relevant when faces are partially occluded [40], as is often the case in live or recorded music. In addition, enhanced visual discrimination in the periphery may shape how deaf individuals experience visual aspects of music beyond the more obvious aspects in the central field of view.

5.2. Vibrotactile Elements

Sound at its most basic level is airborne vibration [60]. Because of this physical nature, loud sound can elicit vibrations that are transmitted through walls, floors and furniture. In the ear, vibrations of different frequencies reach the hair cells of the cochlea, and each hair cell is most sensitive to a specific frequency, called its characteristic frequency [61]. The auditory cortex receives signals from the frequency-specific nerve fibers of the cochlea, and these auditory frequencies, or pitches, activate specific areas of the auditory cortex [62]. Together, these different auditory pitches create melody, harmony and timbre. The vibrotactile receptors in the skin are biomechanically similar to the cochlear hair cells. However, receptors in the skin do not process the same range of frequencies as the cochlear hair cells; instead, different classes of receptors respond to different frequency ranges [63].

A growing body of empirical research has improved our understanding of the ability to perceive musical information through vibrotactile sources alone. For example, one of the basic elements found to convey emotion in music is tempo [64], the overall rate of presentation, conventionally measured in beats per minute (BPM). Tempo can be conveyed tactilely through the interval between successive pulses, which is readily carried by the naturally occurring or amplified vibrations produced by voices and musical instruments. There are strong associations between tempo and emotion: happy songs tend to be associated with faster tempos, and sad songs with slower tempos [65]. Recent research in our own lab involving vibrotactile reproductions of music has found that deaf individuals show similar associations with tempo [66].
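The link between tempo and the interval between tactile pulses is simple arithmetic: at a tempo of B beats per minute, successive pulses are 60,000/B milliseconds apart. The minimal Python sketch below illustrates this under the assumption of a perfectly steady pulse (real performances vary in timing); the function names are hypothetical and are not taken from any cited study or device.

```python
def inter_pulse_interval_ms(bpm: float) -> float:
    """Interval between successive beats, in milliseconds, for a tempo in BPM."""
    if bpm <= 0:
        raise ValueError("tempo must be positive")
    return 60_000.0 / bpm


def pulse_onsets_ms(bpm: float, n_beats: int) -> list[float]:
    """Onset times (ms) of a steady pulse train at the given tempo (assumes no timing variation)."""
    interval = inter_pulse_interval_ms(bpm)
    return [i * interval for i in range(n_beats)]


# Faster tempos (associated with happy music) give shorter gaps between pulses;
# slower tempos (associated with sad music) give longer gaps.
print(inter_pulse_interval_ms(120))  # 500.0
print(inter_pulse_interval_ms(60))   # 1000.0
print(pulse_onsets_ms(120, 4))       # [0.0, 500.0, 1000.0, 1500.0]
```

Halving the tempo doubles the gap between pulses, which is the cue a vibrotactile reproduction would have to preserve for tempo-emotion associations to carry over.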

Although tempo can be easily accessed through vibration, pitch perception is more limited. Frequency discrimination thresholds are much larger for vibrotactile than for auditory presentation, and discrimination is limited to frequencies below about 1000 Hz [67,68]. However, most of these findings are based on research involving hearing participants. As demonstrated by Levänen and Hamdorf [54], individuals who are deaf have an enhanced ability to discriminate suprathreshold frequency changes in vibrotactile stimuli, which may support an enhanced ability to perceive vibrotactile musical frequencies.
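To give a rough sense of where a limit of about 1000 Hz falls on a musical scale, the sketch below converts MIDI note numbers to equal-tempered fundamental frequencies, f = 440 · 2^((m − 69)/12), and finds the highest note whose fundamental stays under the limit. This is an illustrative assumption rather than an analysis from the cited studies: it assumes A4 = 440 Hz tuning, treats 1000 Hz as a hard cutoff, considers fundamentals only (ignoring harmonics), and does not model the much coarser discrimination thresholds within that range. The names are hypothetical.

```python
A4_HZ = 440.0                   # assumed tuning reference (MIDI note 69 = A4 = 440 Hz)
VIBROTACTILE_LIMIT_HZ = 1000.0  # approximate upper limit mentioned in the text, treated as a hard cutoff


def midi_to_hz(note: int) -> float:
    """Equal-tempered fundamental frequency of a MIDI note number."""
    return A4_HZ * 2 ** ((note - 69) / 12)


def within_vibrotactile_range(note: int, limit_hz: float = VIBROTACTILE_LIMIT_HZ) -> bool:
    """True if the note's fundamental lies below the approximate tactile limit."""
    return midi_to_hz(note) < limit_hz


# Highest MIDI note (0-127) whose fundamental stays under the limit.
highest = max(n for n in range(128) if within_vibrotactile_range(n))
print(highest, round(midi_to_hz(highest), 1))  # 83 987.8
```

Under these assumptions the highest qualifying note is MIDI 83 (B5, about 987.8 Hz), just under two octaves above middle C; C6 (about 1046.5 Hz) already exceeds the limit.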

Several studies have explored the ability to discriminate timbres, such as those of different musical instruments, using vibrotactile stimuli alone. These studies have found that both deaf and hearing individuals are able to distinguish between dull and bright timbres [69]. Furthermore, deaf participants are able to distinguish between different voices singing the same phoneme on the same pitch [70]. This ability appears to depend on cortical integration of activity across the different channels of mechanoreceptors.

5.3. Assistive Technologies

Beyond its naturally occurring non-auditory aspects, music can also be manipulated through assistive technologies designed to enhance the musical experience. Various research groups are exploring the use of multi-modal technologies, including music visualizations and vibrotactile devices, to make music more accessible to the deaf population.

Although numerous visual representations of music have been created, from Walt Disney’s Fantasia to the iTunes visualizer, their effects have not yet been well investigated with deaf participants. Changes in visual processing in the deaf population provide an opportunity for researchers to explore how moving visual patterns representing music might support an informative and enjoyable musical experience in deaf and hearing populations [71]. Music visualizations created for the deaf population might be best received in the periphery, reserving central vision for an unobstructed view of the performer’s facial movements.

Vibrotactile devices can enhance the experience of music for individuals who are deaf. Deaf individuals report that vibrotactile devices, such as haptic chairs, contribute significantly to their musical enjoyment [72]. Vibrotactile technology has also been used to create music in which the vibrotactile aspects supersede the auditory ones [73]. Research has shown that adding vibrotactile stimuli through sensory substitution technology may be an effective way to convey emotional information when experiencing music [74,75].

In summary, a wealth of non-auditory information is available to provide a deaf audience with access to the structural and emotional aspects of music. The evidence that visual and vibrotactile stimuli may be processed in auditory centers of the brain supports the notion that non-auditory elements of music may provide an experience for deaf individuals that is qualitatively comparable to the experiences that a hearing person has while listening to music.

6. Conclusions

There is abundant evidence for compensatory plasticity in individuals who are deaf. Specific enhancements of visual and vibrotactile skills in individuals who are deaf may alter how non-auditory aspects of musical performance are processed, and these enhancements may influence the manner in which music is perceived. Indeed, the definition of music need not focus on sound alone; music incorporates visual and vibrotactile information. The use of non-auditory modalities may be further enhanced through assistive multi-modal technologies. The unique sensory abilities of the deaf brain should be embraced when developing new music that is meant to be inclusive of the deaf community. These new works have the potential to advance the art of music for all.

Acknowledgments

We would like to thank Deborah I. Fels and three anonymous reviewers for comments on earlier versions of this manuscript.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Loke, W.H.; Song, S. Central and peripheral visual processing in hearing and non-hearing individuals. Bull. Psychon. Soc. 1991, 29, 437–440. [Google Scholar]

- Neville, H.J.; Lawson, D. Attention to central and peripheral visual space in a movement detection task: An event-related potential and behavioral study. II. Congenitally deaf adults. Brain Res. 1987, 45, 268–283. [Google Scholar]

- Neville, H.J.; Lawson, D. Attention to central and peripheral visual space in a movement detection task. III. Separate effects of auditory deprivation and acquisition of a visual language. Brain Res. 1987, 405, 284–294. [Google Scholar]

- Stevens, C.; Neville, H. Neuroplasticity as a double-edged sword: Deaf enhancements and dyslexic deficits in motion processing. J. Cogn. Neurosci. 2006, 18, 701–714. [Google Scholar]

- Pavani, F.; Bottari, D. Visual abilities in individuals with profound deafness a critical review. In The Neural Bases of Multisensory Processes; Murray, M.M., Wallace, M.T., Eds.; CRC Press: Boca Raton, FL, USA, 2012; Chapter 22. [Google Scholar]

- Goldman, R.F. Varèse: Ionisation; Density 21.5; Intégrales; Octandre; Hyperprism; Poème Electronique. Instrumentalists, cond. Robert Craft. Columbia MS 6146 (stereo) (in Reviews of Records). Music. Q. 1961, 47, 133–134. [Google Scholar]

- Clifton, T. Music as Heard: A Study in Applied Phenomenology; Yale University Press: New Haven, CN, USA; London, UK, 1983. [Google Scholar]

- Moore, J.K.; Niparko, J.K.; Miller, M.R.; Linthicum, F.H. Effect of profound hearing loss on a central auditory nucleus. Am. J. Otol. 1994, 15, 588–595. [Google Scholar]

- Saada, A.A.; Niparko, J.K.; Ryugo, D.K. Morphological changes in the cochlear nucleus of congenitally deaf white cats. Brain Res. 1996, 736, 315–328. [Google Scholar]

- Heid, S.; Hartmann, R.; Klinke, R. A model for prelingual deafness, the congenitally deaf white cat–population statistics and degenerative changes. Hear. Res. 1998, 115, 101–112. [Google Scholar]

- Emmorey, K.; Allen, J.S.; Bruss, J.; Schenker, N.; Damasio, H. A morphometric analysis of auditory brain regions in congenitally deaf adults. Proc. Natl. Acad. Sci. USA 2003, 100, 10049–10054. [Google Scholar]

- Li, J.; Li, W.; Xian, J.; Li, Y.; Liu, Z.; Liu, S.; Wang, X.; Wang, Z.; He, H. Cortical thickness analysis and optimized voxel-based morphometry in children and adolescents with prelingually profound sensorineural hearing loss. Brain Res. 2012, 1430, 35–42. [Google Scholar]

- Bavelier, D.; Neville, H.J. Cross-modal plasticity: Where and how? Nat. Rev. Neurosci. 2002, 3, 443–452. [Google Scholar]

- Nishimura, H.; Hashikawa, K.; Doi, K.; Iwaki, T.; Watanabe, Y.; Kusuoka, H.; Nishimura, T.; Kubo, T. Sign language “heard” in the auditory cortex. Nature 1999, 397, 116. [Google Scholar] [CrossRef]

- Sadato, N.; Okada, T.; Honda, M.; Matsuki, K.-I.; Yoshida, M.; Kashikura, K.-I.; Takei, W.; Sato, T.; Kochiyama, T.; Yonekura, Y. Cross-modal integration and plastic changes revealed by lip movement, random-dot motion and sign languages in the hearing and deaf. Cereb. Cortex 2005, 15, 1113–1122. [Google Scholar]

- Fine, I.; Finney, E.M.; Boynton, G.M.; Dobkins, K.R. Comparing the effects of auditory deprivation and sign language within the auditory and visual cortex. J. Cogn. Neurosci. 2005, 17, 1621–1637. [Google Scholar]

- Finney, E.M.; Fine, I.; Dobkins, K.R. Visual stimuli activate auditory cortex in the deaf. Nat. Neurosci. 2001, 4, 1171–1173. [Google Scholar]

- Sung, Y.W.; Ogawa, S. Cross-modal connectivity of the secondary auditory cortex with higher visual area in the congenitally deaf—A case study. J. Biomed. Sci. Eng. 2013, 6, 314–318. [Google Scholar]

- Auer, E.T.; Bernstein, L.E.; Sungkarat, W.; Singh, M. Vibrotactile activation of the auditory cortices in deaf versus hearing adults. Neuroreport 2007, 18, 645–648. [Google Scholar]

- Levänen, S.; Jousmäki, V.; Hari, R. Vibration-induced auditory-cortex activation in a congenitally deaf adult. Curr. Biol. 1998, 8, 869–872. [Google Scholar]

- Barone, P.; Lacassagne, L.; Kral, A. Reorganization of the connectivity of cortical field DZ in congenitally deaf cat. PLoS One 2013, 8, e60093. [Google Scholar]

- Lambertz, N.; Gizewski, E.R.; de Greiff, A.; Forsting, M. Cross-modal plasticity in deaf subjects dependent on the extent of hearing loss. Cogn. Brain Res. 2005, 25, 884–890. [Google Scholar]

- Karns, C.M.; Dow, M.W.; Neville, H.J. Altered cross-modal processing in the primary auditory cortex of congenitally deaf adults: A visual-somatosensory fMRI study with a double-flash illusion. J. Neurosci. 2012, 32, 9626–9638. [Google Scholar]

- Hickok, G.; Poeppel, D.; Clark, K.; Buxton, R.B.; Rowley, H.A.; Roberts, T.P.L. Sensory Mapping in a Congenitally Deaf Subject: MEG and fMRI Studies of Cross-Modal Non-Plasticity. Hum. Brain Mapp. 1997, 5, 437–444. [Google Scholar]

- Lomber, S.G.; Meredith, M.A.; Kral, A. Cross-modal plasticity in specific auditory cortices underlies visual compensations in the deaf. Nat. Neurosci. 2010, 13, 1421–1427. [Google Scholar]

- Bross, M. Residual sensory capacities of the deaf: A signal detection analysis of a visual discrimination task. Percept. Mot. Skills 1979, 48, 187–194. [Google Scholar]

- Finney, E.M.; Dobkins, K.R. Visual contrast sensitivity in deaf versus hearing populations: Exploring the perceptual consequences of auditory deprivation and experience with a visual language. Cogn. Brain Res. 2001, 11, 171–183. [Google Scholar]

- Armstrong, B.; Neville, H.J.; Hillyard, S.A.; Mitchell, T.V. Auditory deprivation affects processing of motion, but not color. Cogn. Brain Res. 2002, 14, 422–434. [Google Scholar]

- Bottari, D.; Caclin, A.; Giard, M.H.; Pavani, F. Changes in early cortical visual processing predict enhanced reactivity in deaf individuals. PLoS One 2011, 6, e25607. [Google Scholar]

- Kok, M.A.; Chabot, N.; Lomber, S.G. Cross-modal reorganization of cortical afferents to dorsal auditory cortex following early-and late-onset deafness. J. Comp. Neurol. 2014, 522, 654–675. [Google Scholar]

- Bavelier, D.; Tomann, A.; Hutton, C.; Mitchell, T.; Corina, D.; Liu, G.; Neville, H. Visual attention to the periphery is enhanced in congenitally deaf individuals. J. Neurosci. 2000, 20, RC93:1–RC93:6.

- Bavelier, D.; Brozinsky, C.; Tomann, A.; Mitchell, T.; Neville, H.; Liu, G. Impact of early deafness and early exposure to sign language on the cerebral organization for motion processing. J. Neurosci. 2001, 21, 8931–8942.

- Elbert, T.; Pantev, C.; Wienbruch, C.; Rockstroh, B.; Taub, E. Increased cortical representation of the fingers of the left hand in string players. Science 1995, 270, 305–307.

- Shimojo, S.; Shams, L. Sensory modalities are not separate modalities: Plasticity and interactions. Curr. Opin. Neurobiol. 2001, 11, 505–509.

- Foxe, J.J.; Wylie, G.R.; Martinez, A.; Schroeder, C.E.; Javitt, D.C.; Guilfoyle, D.; Ritter, W.; Murray, M.M. Auditory-somatosensory multisensory processing in auditory association cortex: An fMRI study. J. Neurophysiol. 2002, 88, 540–543.

- Reale, R.A.; Calvert, G.A.; Thesen, T.; Jenison, R.L.; Kawasaki, H.; Oya, H.; Howard, M.A.; Brugge, J.F. Auditory-visual processing represented in the human superior temporal gyrus. Neuroscience 2007, 145, 162–184.

- Petitto, L.A.; Zatorre, R.J.; Gauna, K.; Nikelski, E.J.; Dostie, D.; Evans, A.C. Speech-like cerebral activity in profoundly deaf people processing signed languages: Implications for the neural basis of human language. Proc. Natl. Acad. Sci. USA 2000, 97, 13961–13966.

- Sadato, N.; Yamada, H.; Okada, T.; Yoshida, M.; Hasegawa, T.; Matsuki, K.I.; Itoh, H. Age-dependent plasticity in the superior temporal sulcus in deaf humans: A functional MRI study. BMC Neurosci. 2004, 5, 56.

- Sadato, N.; Okada, T.; Honda, M.; Yonekura, Y. Critical period for cross-modal plasticity in blind humans: A functional MRI study. NeuroImage 2002, 16, 389–400.

- Bettger, J.G.; Emmorey, K.; McCullough, S.H.; Bellugi, U. Enhanced Facial Discrimination: Effects of Experience With American Sign Language. J. Deaf Stud. Deaf Educ. 1997, 2, 223–233.

- Asplund, C.L.; Todd, J.J.; Snyder, A.P.; Marois, R. A central role for the lateral prefrontal cortex in goal-directed and stimulus-driven attention. Nat. Neurosci. 2010, 13, 507–512.

- Bavelier, D.; Dye, M.W.G.; Hauser, P.C. Do deaf individuals see better? Trends Cogn. Sci. 2006, 10, 512–518.

- Stivalet, P.; Moreno, Y.; Richard, J.; Barraud, P.-A.; Raphel, C. Differences in visual search tasks between congenitally deaf and normally hearing adults. Cogn. Brain Res. 1998, 6, 227–232.

- Proksch, J.; Bavelier, D. Changes in the Spatial Distribution of Visual Attention after Early Deafness. J. Cogn. Neurosci. 2002, 14, 687–701.

- Chen, Q.; Zhang, M.; Zhou, X. Effects of spatial distribution of attention during inhibition of return (IOR) on flanker interference in hearing and congenitally deaf people. Brain Res. 2006, 1109, 117–127.

- Parasnis, I.; Samar, V.J. Parafoveal attention in congenitally deaf and hearing young adults. Brain Cogn. 1985, 4, 313–327.

- Bosworth, R.G.; Dobkins, K.R. Left-hemisphere dominance for motion processing in deaf signers. Psychol. Sci. 1999, 10, 256–262.

- Bosworth, R.G.; Dobkins, K.R. The effects of spatial attention on motion processing in deaf signers, hearing signers, and hearing nonsigners. Brain Cogn. 2002, 49, 152–169.

- Bernstein, L.E.; Auer, E.T.; Tucker, P.E. Enhanced Speechreading in Deaf Adults: Can Short-Term Training/Practice Close the Gap for Hearing Adults? J. Speech Lang. Hear. Res. 2001, 44, 5–18.

- Strelnikov, K.; Rouger, J.; Lagleyre, S.; Fraysse, B.; Deguine, O.; Barone, P. Improvement in speech-reading ability by auditory training: Evidence from gender differences in normally hearing, deaf and cochlear implanted subjects. Neuropsychologia 2009, 47, 972–979.

- McCullough, S.; Emmorey, K. Face processing by deaf ASL signers: Evidence for expertise in distinguishing local features. J. Deaf Stud. Deaf Educ. 1997, 2, 212–222.

- Stokoe, W. Sign Language Structure; Linstok Press: Silver Spring, MD, USA, 1978.

- Cranney, J.; Ashton, R. Tactile spatial ability: Lateralized performance of deaf and hearing age groups. J. Exp. Child Psychol. 1982, 34, 123–134.

- Levänen, S.; Hamdorf, D. Feeling vibrations: Enhanced tactile sensitivity in congenitally deaf humans. Neurosci. Lett. 2001, 301, 75–77.

- Juslin, P.N. Five facets of musical expression: A psychologist’s perspective on music performance. Psychol. Music 2003, 31, 273–302.

- Thompson, W.F.; Graham, P.; Russo, F.A. Seeing music performance: Visual influences on perception and experience. Semiotica 2005, 2005, 203–227.

- Thompson, M.R.; Luck, G. Exploring the relationships between pianists’ body movements, their expressive intentions, and structural elements of the music. Music. Sci. 2012, 16, 19–40.

- Thompson, W.F.; Russo, F.A.; Livingstone, S.R. Facial expressions of singers influence perceived pitch relations. Psychon. Bull. Rev. 2010, 17, 317–322.

- Thompson, W.F.; Russo, F.A. Facing the music. Psychol. Sci. 2007, 18, 756–757.

- Morse, P.M.C. Vibration and Sound. In American Institute of Physics for the Acoustical Society of America, 2nd ed.; McGraw-Hill: New York, NY, USA, 1948.

- Russell, I.J.; Sellick, P.M. Tuning properties of cochlear hair cells. Nature 1977, 267, 858–860.

- Schreiner, C.E.; Mendelson, J.R. Functional topography of cat primary auditory cortex: Distribution of integrated excitation. J. Neurophysiol. 1990, 64, 1442–1459.

- Bolanowski, S.J.; Gescheider, G.A.; Verrillo, R.T. Hairy skin: Psychophysical channels and their physiological substrates. Somatosens. Mot. Res. 1994, 11, 279–290.

- Ilie, G.; Thompson, W.F. A comparison of acoustic cues in music and speech for three dimensions of affect. Music Percept. 2006, 23, 319–329.

- Hevner, K. The affective value of pitch and tempo in music. Am. J. Psychol. 1937, 49, 621–630.

- Russo, F.A.; Ammirante, P.; Good, A.; Nespoli, G. Feeling the music: Emotional responses to music presented to the skin. Front. Psychol. 2014. submitted.

- Verrillo, R.T. Vibration sensation in humans. Music Percept. 1992, 9, 281–302.

- Branje, C.J.; Maksimowski, M.; Karam, M.; Fels, D.I.; Russo, F.A. Vibrotactile display of music on the human back. In Proceedings of the 2010 ACHI’10 3rd International Conferences on Advances in Computer-Human Interactions, Saint Maarten, Netherlands Antilles, 10–15 February 2010; pp. 154–159.

- Russo, F.A.; Ammirante, P.; Fels, D.I. Vibrotactile discrimination of musical timbre. J. Exp. Psychol. Hum. Percept. Perform. 2012, 38, 822–826.

- Ammirante, P.; Russo, F.A.; Good, A.; Fels, D.I. Feeling Voices. PLoS One 2013, 8, e53585.

- Pouris, M.; Fels, D.I. Creating an entertaining and informative music visualization. Lect. Notes Comput. Sci. 2012, 7382, 451–458.

- Nanayakkara, S.; Taylor, E.; Wyse, L.; Ong, S.H. An enhanced musical experience for the deaf. In Proceedings of the 27th International Conference on Human Factors in Computing Systems—CHI’09, New York, NY, USA, 2009; ACM Press: New York, NY, USA; pp. 337–346.

- Baijal, A.; Kim, J.; Branje, C.; Russo, F.A.; Fels, D.I. Composing vibrotactile music: A multi-sensory experience with the Emoti-chair. In Proceedings of the 2012 IEEE Haptics Symposium (HAPTICS), Vancouver, BC, Canada, 4–7 March 2012; pp. 509–515.

- Karam, M.; Russo, F.; Branje, C.; Price, E.; Fels, D.I. Towards a model human cochlea: Sensory substitution for crossmodal audio-tactile displays. In Proceedings of Graphics Interface, Windsor, ON, Canada, 28–30 May 2008.

- Karam, M.; Nespoli, G.; Russo, F.; Fels, D.I. Modelling Perceptual Elements of Music in a Vibrotactile Display for Deaf Users: A Field Study. In Proceedings of the 2009 ACHI’09 2nd International Conferences on Advances in Computer-Human Interactions, Cancun, Mexico, 1–7 February 2009; pp. 249–254.

- Darrow, A.A. The Role of Music in Deaf Culture: Implications for Music Educators. J. Res. Music Educ. 1993, 41, 93–110.

- Cleall, C. Notes on a Young Deaf Musician. Psychol. Music 1983, 11, 101–102.

- Timm, L.; Vuust, P.; Brattico, E.; Agrawal, D.; Debener, S.; Büchner, A.; Dengler, R.; Wittfoth, M. Residual neural processing of musical sound features in adult cochlear implant users. Front. Hum. Neurosci. 2014, 8, 181.

- Vongpaisal, T.; Trehub, S.E.; Schellenberg, E.G. Song recognition by children and adolescents with cochlear implants. J. Speech Lang. Hear. Res. 2006, 49, 1091–1103.

- Vongpaisal, T.; Trehub, S.E.; Schellenberg, E.G. Identification of TV tunes by children with cochlear implants. Music Percept. 2009, 27, 17–24.

- Cooper, W.B.; Tobey, E.; Loizou, P.C. Music perception by Cochlear Implant and Normal Hearing Listeners as measured by the Montreal Battery for Evaluation of Amusia. Ear Hear. 2008, 29, 618–626.

- Hopyan, T.; Peretz, I.; Chan, L.P.; Papsin, B.C.; Gordon, K.A. Children using cochlear implants capitalize on acoustical hearing for music perception. Front. Psychol. 2012, 3.

© 2014 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/4.0/).