1. Introduction

The evolution of the Internet has provided a variety of modalities of interaction, allowing a diverse range of artificial software agents to cooperate, negotiate, delegate and so on [1]. These new types of online interaction channels contribute to the growth of the e-marketplace, facilitating the exchange of goods between individual parties. In addition, successful e-business transactions crucially depend on establishing, maintaining and managing trust in an online setting [2,3].

Many computational trust models use reputation information to enhance the level of trust among their members. Reputation information can be used as a guideline for assessing possible interactions and for selecting and understanding the behaviours of trading partners [4]. Most reputation systems derive the reputation of interacting parties from historical information, either reported from each participant’s direct observation or obtained as indirect feedback from others [5,6]. Yet past experience information about a party is sometimes unavailable. For example, when a new agent is deployed for the first time, historical information about its behaviour does not exist. As a result, it is hard to decide whether the newly deployed agent is fraudulent or trustworthy, and it is also difficult for that agent to compete with existing agents. This problem is known as the reputation bootstrapping problem, and many studies propose different approaches to solving it.

For example, a mathematical model based on probability theory is proposed to identify the relationship between trust [7], reputation and reciprocity of agents in dyadic interactions. To initialize the trust value of an agent, the notion of a stereotype reputation is introduced, based on information sharing among agents [8,9]. In [10], an initial trust value is calculated from defective transaction rates submitted by consumer agents. If no consumer agents exist for a specific provider agent, or the collected defective transaction rates are insufficient, then the default trust is the average value of all existing consumer agents’ reputations. A more advanced method is proposed by Skopik et al. [11], in which trust mirroring and trust teleportation based on profile similarities are used to assign an agent’s initial reputation value. In a service-oriented environment, Wu et al. [12] apply an artificial neural network (ANN) technique to find correlations between the features and performance of existing services in order to establish a tentative reputation for an unknown service. Similarly, Yahyaoui et al. [13] bootstrap the trust of web services by matching the observation sequence between a web service and a user, modelled as a hidden Markov model, against pre-defined trust patterns. In a peer-to-peer (P2P) environment, Javanmardi et al. [14] introduce a reputation model based on fuzzy theory to compute a peer trust value in a semantic P2P grid where nodes are grouped according to the semantic similarities between their services.

However, when trust is violated because interactions do not proceed as expected, the question arises whether the broken trust can be recovered in a way similar to the trust initialization of new entrant agents [15]. Clearly, recovering trust is more difficult than bootstrapping trust, as a violation may cause the trust of a transgressor to plunge below its initial level and lead to a negative state of motivation [16]. As suggested in [17,18], trust recovery requires a more complex mechanism, one that explores the different factors causing the decline of trust and can, at the same time, heal the initial negative motivations. Undoubtedly, some trust violations, for example intentional fraud or highly severe offences, do not deserve to be repaired. Violations caused by an unintentional error or a mistake, on the other hand, should be eligible for a trust recovery mechanism. As such, an understanding of the factors that are applicable to trust recovery is essential when dealing with a trust crisis. In addition, in the context of online communities, agents are self-organized and connect to each other to form communities according to their common interests. Therefore, identifying the individuals who are directly and indirectly affected by a trust violation in these communities, in order to obtain sources of trust recovery information, is also an important process.

In this study, we strengthen the concept and computational model of the forgiveness mechanism in our previous work [19] by incorporating two types of incentive mechanisms, namely financial and reputational incentives, to encourage cooperation among the participants involved. The rest of the paper is structured as follows. In Section 2, we present related work on various trust recovery mechanisms. Section 3 is dedicated to our extended framework. A series of experiments and results are provided in Section 4. In the last section, we conclude our study along with possible future work.

2. Related Work

To facilitate the repair of trust breakdowns, the most famous online auction site, eBay, uses a feature called “feedback revision” (formerly mutual feedback withdrawal) [20]. Since buyers leave negative feedback for a variety of reasons, eBay’s feedback revision provides a place for the seller to identify, apologize for, and solve the buyer’s problem. Once the buyer is satisfied with the seller’s response, the seller sends a request asking the buyer to revise their negative feedback. The study of the German eBay website by Abeler et al. [21] confirms that an apology, as the cheapest tool, works more effectively than monetary compensation strategies in inducing consumers to withdraw their neutral and negative evaluations.

To allow untrustworthy agents to rebuild their reputation, two main prosocial motivations are required: forgiveness and regret. A combined framework of trust, reputation and forgiveness, called the DigitalBlush System, is proposed by Vasalou et al. [22]. The system is inspired by human forgiveness and uses expressions of shame and embarrassment to elicit potential forgiveness from others in the society. In more detail, the offender’s natural reactions of shame and embarrassment (i.e., the blush) can prompt sympathy or forgiveness from the victim. However, misinterpreted emotional signals can be more problematic than not applying them at all. In subsequent works by Vasalou et al. [23,24], when trust breaks down, the trustworthiness of the offender is assessed by identifying a number of motivation constituents [23]. If the result is positive, the victim is presented with those motivation constituents to consider before reassigning a reputation value to the offender. In particular, this intervention mechanism is intended to alleviate the victim’s negative attributions while preventing an unintentional or infrequent offender from receiving an unfair judgement. In [24], they investigate trust repair in one-off online interactions through an experiment which shows that systems designed to stimulate forgiveness can restore a victim’s trust in the offender. In [25,26], attribution models are proposed for identifying the causes of trust violation and rebuilding damaged trust through reparative actions based on emotion and motivation.

The concept of regret has been proposed by Marsh et al. [27] as a cognitive inconsistency. Regret can arise in the truster, the trustee, or both. A truster feels regret because a positive trust decision is betrayed by a trustee. In other words, a truster’s regret occurs when their expectations of the interaction with a trustee are violated and the corresponding betrayal produces severe damage to trust. A trustee feels regret because a negative trust decision is erroneous. This means a trustee expresses regret for what they have done, whether it was a wrongdoing or not. Both the truster and the trustee can also feel regret for a missed opportunity, that is, for what they did not do. Forgiveness and regret are treated as implementable properties that can be incorporated into a computational model of trust.

The forgiveness factor has been used as an extended component of classical reputation models [28,29]. It is an optimistic view of reconciliation based on the fact that individuals are more likely to forgive someone who committed an offence that seems distant, rather than close, in time. In other words, an agent should always forgive after a sufficiently long time has passed without any interaction. Moreover, an agent should assign its partner a reputation value that is initially set to, or increases towards, the highest possible value. Choi et al. [18] analyse the implications of different trust violations and reconciliation tactics using an agent-based simulation model. The reconciliation tactics needed vary depending on the severity of the offence. In particular, highly effective reconciliation efforts always result in better outcomes in rebuilding trust, but incur a higher cost. Trust-repairing strategies are proposed by Chen et al. [17] to induce positive moods in consumers (as the victims). E-vendors (as the offenders) should respond to negative events by initiating various trust-repairing strategies, e.g., apology, adequate information and financial compensation, to change the emotional state of the consumers. The results of this study show that informational repair, e.g., clarifying facts and/or updating information, is considered the most effective strategy in dealing with negative feelings, especially for online consumers in Taiwan.

Most approaches in the existing works consider sources of information only from dyadic interactions [24,27]. Our approach combines information from both dyadic and community interactions. In addition, some approaches apply only one condition (e.g., regret or forgetting) when evaluating the transgressor’s forgiveness value [27,28,29]. In contrast, our proposed forgiveness mechanism combines five different factors to ensure that the potential transgressor will engage in future transactions successfully. Moreover, to the best of our knowledge, most existing approaches do not provide mechanisms to encourage future interactions after a reconciliation process, whereas our approach promotes future interactions through an incentive mechanism.

There is also a debate about whether forgetting should be part of a reconciliation process when a transgression occurs. For example, Ambrose et al. [30] state that the social benefits of issuing forgiveness can be fully obtained only if the transgression can be forgotten. This is in line with the study of Bishop et al. [31], which finds a strong relation between forgiving and forgetting. The study explores different approaches to dealing with information that the transgressor wishes to be forgotten on the Web, e.g., controlling information diffusion by revoking access, or hiding the wrong deed by creating false information or flooding with large amounts of similar information. However, as opposed to these studies, Vasalou et al. [32] and Exline et al. [33] adopt the condition that a trust violation cannot be completely forgotten, resulting in a new trust value for the transgressor for which full recovery is impractical. Based on the same perception, our study applies this condition as well.

3. Extended Framework

In our previous work [19], a forgiveness mechanism is defined as a function of five positive motivations: intent, history, apology, severity and importance. Intent refers to the extent to which a trust violation is more or less forgivable depending on a victim’s attribution of a transgressor’s intention. History is one of the key components of trust rebuilding and can increase a victim’s tendency towards forgiveness. An apology is expected from the transgressor him/herself as an interpersonal apology, from his/her organization as a corporate apology, or from both. Severity is similar to intent, i.e., greater severity of the trust violation can lead to less positive judgements. In contrast, forgiveness is more likely to be offered when the trust violation is perceived as less severe. Importance refers to the competence of a transgressor to provide a product or service to a victim or other members of the community. If the need for the product or service provided by a transgressor is high, forgiveness tends to be granted in order to fulfil the transaction’s requirement and avoid a lack of available service providers, even knowing that the outcomes of future transactions may not be maximized.

Table 1 presents a mapping between positive motivations and three sources of forgiveness. As can be seen from the table, our forgiveness mechanism is an aggregation function of both subjective and objective views.

The subjective view is a forgiveness value computed from the point of view of a victimized individual:

The objective views are forgiveness values assessed at community level i.e., from both victim and transgressor communities:

The following subsections describe how we extend our forgiveness mechanism, i.e., by stimulating cooperation between the victim and the potential transgressor through an incentive mechanism, and by defining a range for the new reputation value as the zone of forgivability.

3.1. Incentive Mechanism

In order to encourage a victim and the members of the victim community to cooperate with a transgressor in future transactions, we propose two types of incentives:

Financial incentive. A reparative action in the form of monetary compensation provided by the transgressor can be a primary response in a functional recovery effort in a business environment [34]. In addition, reducing the product or service price can make the transgressor more attractive and more competitive [35]. In this study, the financial incentive takes the form of a one-time discount on the product price offered by the transgressor to the victim and the victim community members. The value of the discount differs depending on the value of forgiveness contributed by the victim and the victim community members. For the victim, the value of the discount can be calculated as follows:

where the quantities involved are the forgiveness value evaluated by the victim x for the violation committed by the transgressor y, who is a member of the community Y; a weight factor applied to the forgiveness value provided by the victim x; and the product or service price offered by the transgressor y.

We calculate the value of the discount for members of the victim community by assuming that each member contributes equally to the forgiveness assessment:

where the quantities involved are the forgiveness value aggregated from members of the victim community X for the violation committed by the transgressor y, who is a member of the community Y; a weight factor applied to the forgiveness value provided by the victim community X; and the number of victim community members.
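The discount equations themselves are not reproduced in this text, so the following is only a minimal sketch of how the two discounts could be computed from the quantities listed above. It assumes a simple multiplicative form (forgiveness value times weight times price) and an equal split across community members; the function names and the multiplicative form are assumptions made for illustration, not the definitions used in the study.

```python
def victim_discount(forgiveness: float, weight: float, price: float) -> float:
    """Discount offered to the victim.

    Assumed form (not taken from the paper): forgiveness * weight * price.
    """
    return forgiveness * weight * price


def community_member_discount(community_forgiveness: float, weight: float,
                              price: float, n_members: int) -> float:
    """Discount offered to each member of the victim community.

    Assumes, as the text states, that every member contributes equally,
    so the aggregated amount is divided by the number of members.
    """
    if n_members <= 0:
        raise ValueError("n_members must be positive")
    return community_forgiveness * weight * price / n_members
```

Under these assumptions, a victim forgiveness value of 0.5 with a weight of 0.2 on a price of 100 gives a discount of about 10.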

Reputational incentive. The transgressor has an obvious incentive to be trustworthy in order to build up its reputation. A one-time discount price as a reparative monetary compensation can motivate cooperation resulting in increasing the transgressor’s reputation. We evaluate reputational incentive from cooperation between the transgressor and the victim according to the expression:

where the two quantities are the maximum price of the product or service offered by the transgressor and the transgressor’s reputation after trust is violated, that is, before forgiveness is considered. The reputational incentive from cooperation between the transgressor and the victim community members can be aggregated as follows:

Therefore, the total reputational incentives the transgressor could obtain from cooperation providing financial incentives to the victim and the victim community members is:

3.2. Zone of Forgivability

Thus far, we have identified the transgressor’s forgiveness value evaluated by the victim, the victim community and the transgressor community, together with the expected reputation value calculated from future transactions with financial incentives as the reparative mechanism. The transgressor’s new reputation value is simply the summation of these two values along with the transgressor’s existing reputation value before considering forgiveness as follows:

The result of Equation (9) should have some limits or boundary values, reflecting the fact that a violation that might be forgiven should not be completely forgotten [32,33]. Marsh et al. [27] introduce the concept of the Limit of Forgivability as a minimum baseline of trust value for determining whether a transgressor is worth entering into redemption strategies. However, the concept does not state what the appropriate boundary values should be after forgiveness is granted, which means it is possible for the transgressor’s trust to be fully reinstated.

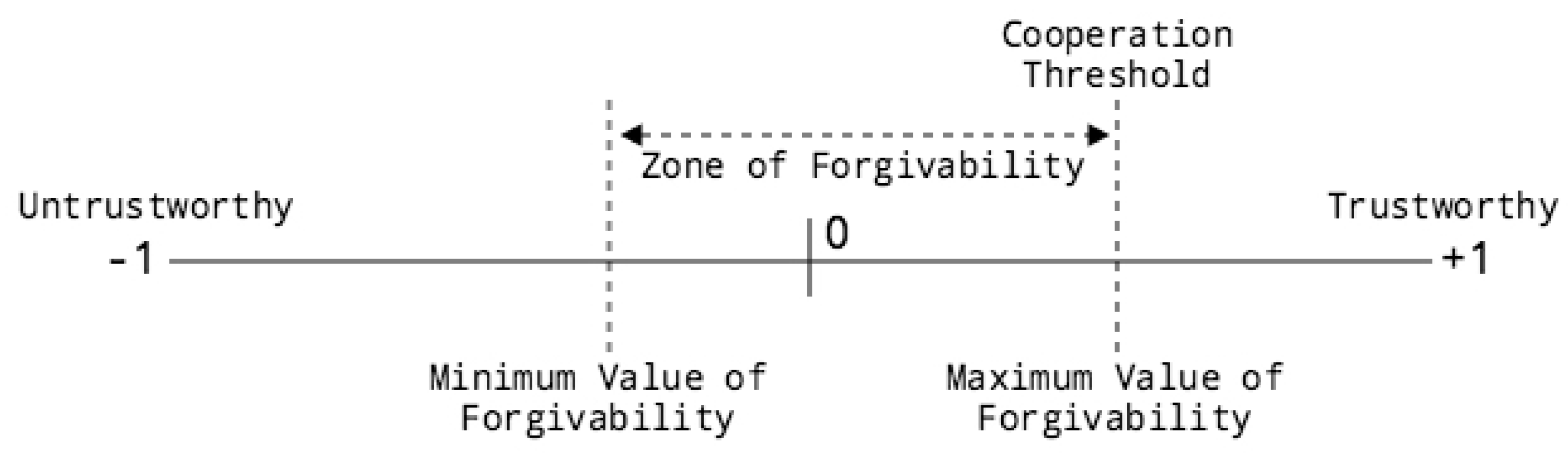

In this study, the boundary values are indicated as the Zone of Forgivability shown in Figure 1. Specifically, the minimum boundary value of forgivability can be determined as:

where the two quantities are the possible maximum value of an untrustworthy service provider (which is −1) and a constant forgiveness threshold. A transgressor is considered a potential transgressor if the sum of its existing reputation value and forgiveness assessment exceeds the minimum value of forgivability. Note that the cooperation threshold is used as the maximum value of forgivability: if the calculated reputation value exceeds this maximum boundary value, the cooperation threshold is used as the new reputation:

where the remaining constant is the cooperation threshold. It is worth noting that the Zone of Forgivability still prevents a potential transgressor from being directly selected for future transactions if its new reputation value is less than the cooperation threshold. However, the transgressor is not completely rejected; rather, the value serves as a baseline for interacting counterparts to incorporate additional information, e.g., cost and quality, into decision-making [36].
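The two rules described in this subsection can be sketched as follows. This is a hedged illustration: the minimum boundary is passed in as a parameter because its defining equation is not reproduced here, and the function names and the threshold values used in the usage note are hypothetical.

```python
def is_potential(existing_reputation: float, forgiveness: float,
                 min_forgivability: float) -> bool:
    """A transgressor is 'potential' if existing reputation plus the
    forgiveness assessment exceeds the minimum value of forgivability."""
    return existing_reputation + forgiveness > min_forgivability


def new_reputation(existing_reputation: float, forgiveness: float,
                   reputational_incentive: float,
                   cooperation_threshold: float) -> float:
    """Sum the existing reputation, the forgiveness value and the expected
    reputational incentive, capped at the cooperation threshold (the upper
    bound of the Zone of Forgivability)."""
    total = existing_reputation + forgiveness + reputational_incentive
    return min(total, cooperation_threshold)
```

With a hypothetical cooperation threshold of 0.5, a transgressor whose three components sum to 0.9 would be assigned 0.5, reflecting the idea that a forgiven violation is not completely forgotten.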

4. Experiments and Results

We have conducted a number of experiments to validate the applicability of the proposed exploration framework based on forgiveness and incentive mechanisms. We use the Java programming environment to simulate the transacting agents and their interactions in e-marketplaces. The main purpose of our experiments is to evaluate the efficiency of simulated e-marketplaces by comparing the implementation of our framework with three other approaches, referred to below as ALLD, ALLC and Griffiths.

4.1. Experimental Setting

In our simulated e-marketplaces, service providers and consumers interact over the same kind of product with homogeneous acceptable quality. At the outset, the numbers of service providers and consumers are 50 and 100, respectively. Each service provider has the same number of products, 1000 units, and only one unit of product is traded in each transaction. Service providers and consumers value the price of the product differently: the value is randomly distributed between 100 and 200 for service providers and between 200 and 250 for consumers.

In each time period, transactions are carried out by random matching between service providers and consumers. In other words, there is a maximum of 100 transactions in each time period, in which some service providers may be chosen for trading more than once and some may not be chosen at all. The reputation value of a service provider lies in the range between −1 and 1 and is updated after each completed transaction as follows:

where the parameter is a service provider’s reputation fading factor, chosen so that the recent reputation value counts for more than the older one. We also define a trustworthy service provider as an agent having a positive reputation value, whereas an untrustworthy service provider is an agent having a negative reputation value. All service providers are bootstrapped by initially assigning a reputation value of 0.5.
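The initial population and the random matching step described in this subsection could be set up roughly as follows. The study uses Java; this Python sketch is only an illustration. The class and function names are hypothetical, and drawing valuations from a uniform distribution is an assumption, since the text says only that they are randomly distributed.

```python
import random
from dataclasses import dataclass


@dataclass
class Provider:
    price: float              # provider's valuation, drawn from [100, 200]
    stock: int = 1000         # units available; one unit per transaction
    reputation: float = 0.5   # bootstrapped initial reputation


@dataclass
class Consumer:
    valuation: float          # consumer's valuation, drawn from [200, 250]


def build_marketplace(rng: random.Random, n_providers: int = 50,
                      n_consumers: int = 100):
    """Create the initial population (uniform draws are an assumption)."""
    providers = [Provider(price=rng.uniform(100, 200)) for _ in range(n_providers)]
    consumers = [Consumer(valuation=rng.uniform(200, 250)) for _ in range(n_consumers)]
    return providers, consumers


def random_matching(rng: random.Random, providers, consumers):
    """Pair every consumer with one randomly chosen provider, so a provider
    may be chosen several times or not at all in a time period."""
    return [(consumer, rng.choice(providers)) for consumer in consumers]
```

Each time period then yields at most one candidate transaction per consumer, which matches the stated maximum of 100 transactions per period.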

After each transaction, we calculate the consumer’s utility according to the law of diminishing marginal utility in economics [37], using an exponential function. The utility function is proportional to the last updated reputation of the interacting service provider and is defined as follows:

where the constant scaling factor, which controls the speed at which utility increases or decreases, is set to 5 in our experiments.

and

are the maximum and minimum utility the consumers can gain for each transaction which we assume to be 1000 and

, respectively. Additionally, there is a maximum of five service providers which are randomly marked as defective for every time period of interaction. In this way, the transaction between a service provider and a consumer is carried out if it satisfies the following conditions:

(1) The service provider is trustworthy and the number of products is greater than 0.

(2) The service provider is defective and the number of products is greater than 0.

(3) The service provider is untrustworthy and the number of products is greater than 0. In addition, the number of forgiveness interventions is not more than 2, which means each untrustworthy service provider can be granted forgiveness only twice.

More specifically, conditions (1) and (2) are applied to all simulated e-marketplaces (our framework, ALLD, ALLC, and Griffiths), while condition (3) is applied only to the e-marketplace incorporating our proposed framework. We summarize all parameter settings used in the experiment in Table 2.
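The three transaction conditions can be read as a single eligibility check, sketched below. Treating the conditions as alternatives combined with a logical OR, and counting the intervention under consideration in `forgiveness_count`, are assumptions made for this illustration; the function and parameter names are hypothetical.

```python
def can_transact(reputation: float, stock: int, defective: bool = False,
                 forgiveness_count: int = 0, forgiveness_enabled: bool = False,
                 max_forgiveness: int = 2) -> bool:
    """Return True if a transaction with this provider may be carried out."""
    if stock <= 0:
        return False          # every condition requires available products
    if reputation > 0:
        return True           # condition (1): trustworthy provider
    if defective:
        return True           # condition (2): defective provider
    if reputation < 0 and forgiveness_enabled:
        # condition (3): only in the marketplace using the forgiveness
        # framework, and at most `max_forgiveness` interventions
        return forgiveness_count <= max_forgiveness
    return False
```

Setting `forgiveness_enabled=False` corresponds to the marketplaces where only conditions (1) and (2) apply.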

4.2. Experiment 1: Robustness Evaluation

The purpose of this experiment is to examine how robustly the e-marketplace can operate as the number of untrustworthy service providers continuously increases over time and new service providers are not allowed to join the marketplace. The number of service providers is initially set to 50 and they are all trustworthy. However, at the end of each time period, some defective service providers become untrustworthy and are forced to leave the marketplace. As a result, the number of untrustworthy service providers continuously increases and ultimately leads to the failure of the e-marketplace due to the lack of trustworthy service providers.

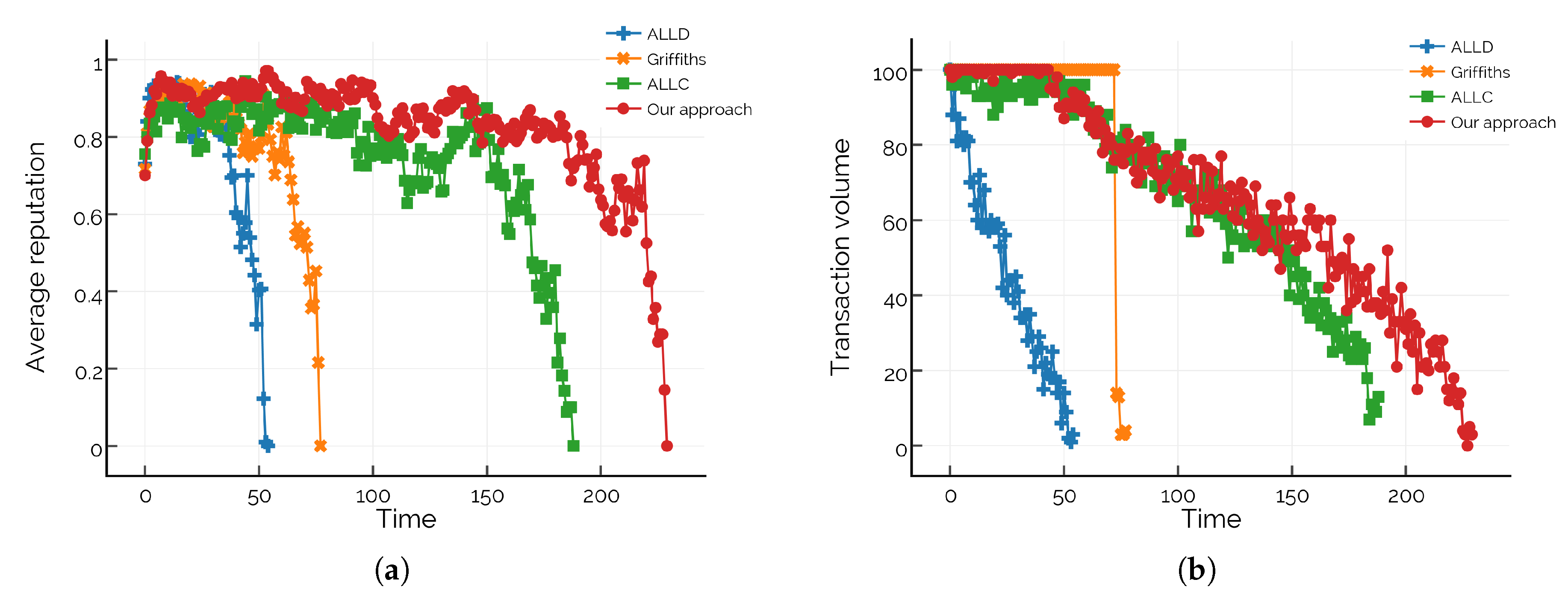

In the first sub-experiment, we compare the average reputation values of service providers in different e-marketplaces. The average reputation value is calculated only from the reputation of trustworthy service providers. Before the collapse of the e-marketplaces, as shown in Figure 2a, the e-marketplace incorporating our framework has a more stable average reputation value and a longer period of interaction time than all other e-marketplaces. The average reputation values in ALLD and Griffiths nosedive to the lowest value much more quickly, about three times faster than in ALLC and four times faster than in our framework.

In the second sub-experiment, we examine the numbers of market transactions generated at different time periods. The results are shown in Figure 2b. At the beginning, all e-marketplaces can successfully generate market transactions, as all service providers are trustworthy. Over time, as the numbers of untrustworthy service providers increase, the transaction volumes in ALLD drop much more quickly, while those in ALLC and the e-marketplace incorporating our framework decrease at a slower pace. A considerably different result can be observed in Griffiths, where the maximum number of market transactions can be generated in each time step as long as there are trustworthy service providers available to consumers. Once there are none, we notice a sudden drop in transaction volumes, as the e-marketplace is served only by defective service providers.

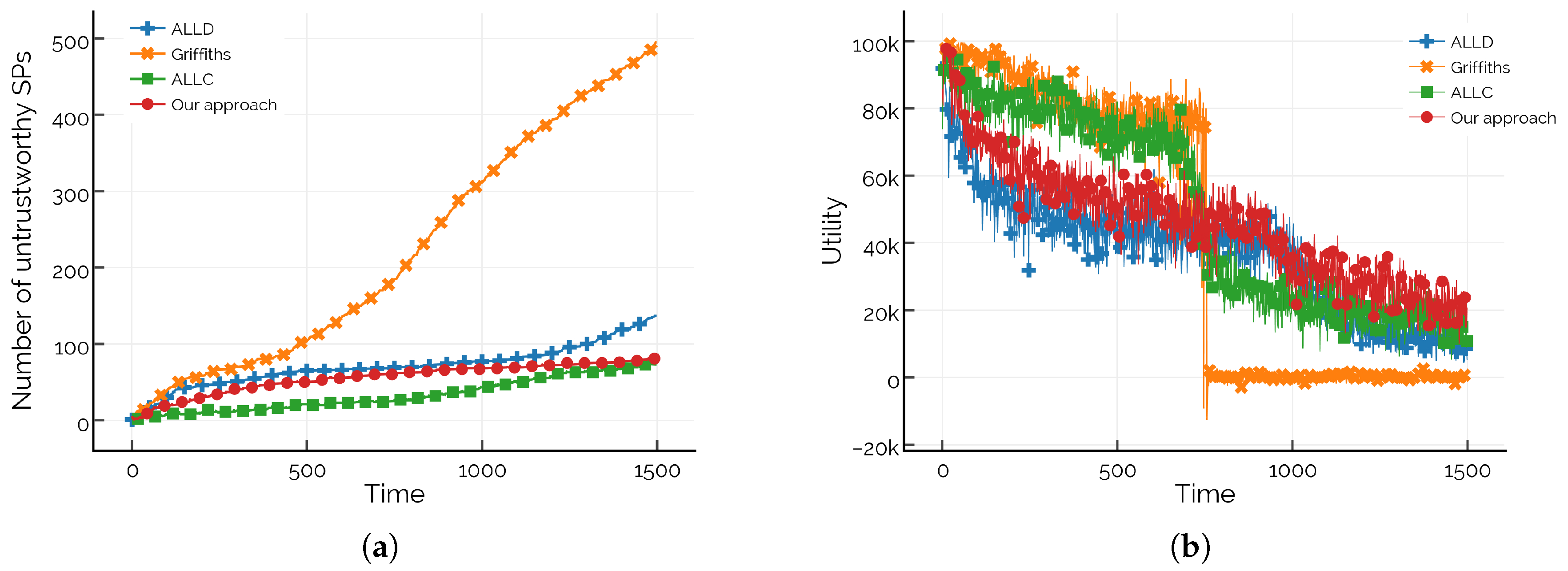

In the third sub-experiment, we compare the numbers of untrustworthy service providers as time progresses. It can be seen in Figure 3a that the numbers of untrustworthy service providers increase constantly, to the point that trustworthy service providers are no longer available to all consumers. However, the rate of increase in the number of untrustworthy service providers in the e-marketplace incorporating our framework is much lower than that in ALLD, Griffiths and ALLC. In other words, for an e-marketplace in which new service providers are not allowed to join, our framework enables the e-marketplace to operate more efficiently than the other approaches.

In the fourth sub-experiment, we compare the utilities that all consumers obtain at different time points, calculated using Equation (13). As depicted in Figure 3b, the consumers in ALLC and in the e-marketplace incorporating our framework gain a higher utility because of a cooperative setting, with conditions (i.e., our framework) and without conditions (i.e., ALLC). The consumers in Griffiths suffer a sudden drop in utilities, similar to the drop in transaction volumes in Figure 2b, due to a rapid decline in the number of trustworthy service providers.

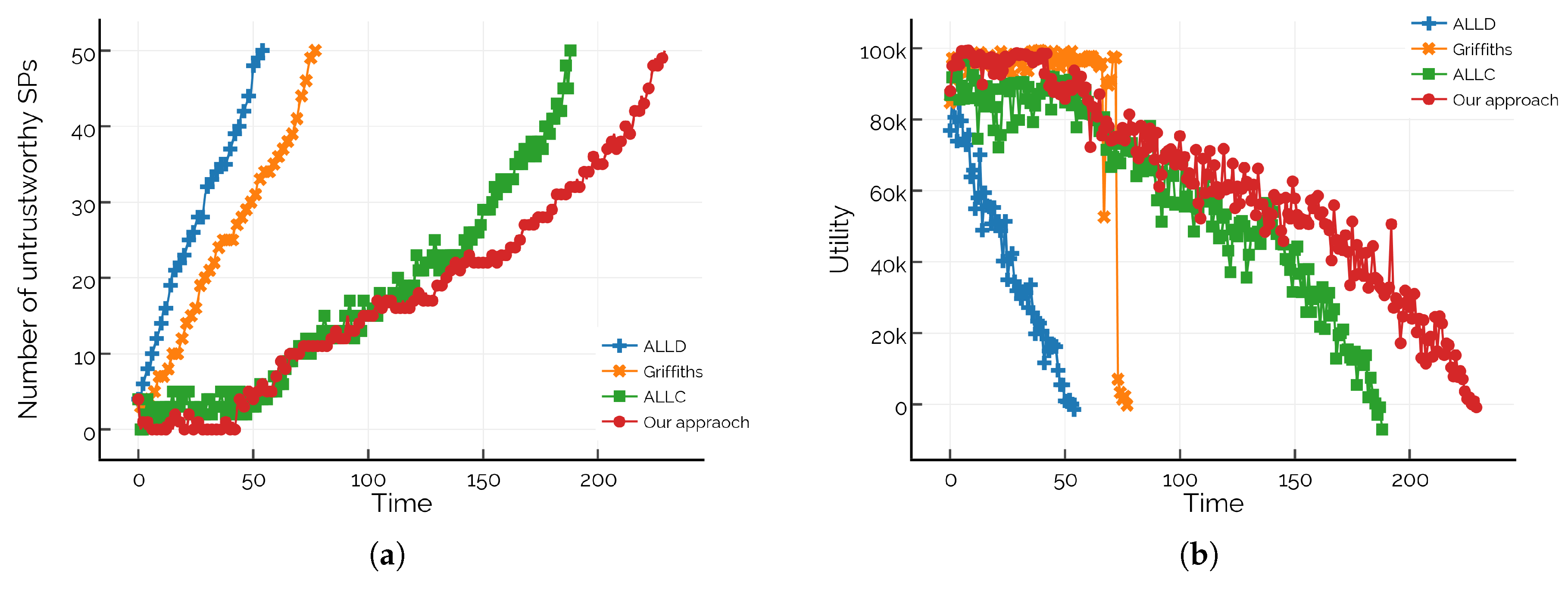

4.3. Experiment 2: Comparison of Dynamic E-Marketplaces

The purpose of this experiment is to investigate the efficiency of the proposed framework by evaluating the average reputation values, total market transactions, market transactions at different time points, number of untrustworthy service providers and utilities obtained at different time points in different dynamic e-marketplaces. Initially, the e-marketplaces have the same number of trustworthy service providers as in Experiment 1 (i.e., 50). However, in this experiment we allow new service providers to join the e-marketplace at the end of each time period. The number of newly joined service providers is equal to the number of untrustworthy service providers leaving the e-marketplace in each time period. As a consequence, the number of trustworthy service providers remains constant over the course of the experiment. For simplicity, the results of each sub-experiment are analysed after 1500 time periods.
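The replacement rule for the dynamic e-marketplace can be illustrated with a short sketch. It is a simplification that tracks reputation values only and removes every provider with a negative reputation at the end of a period; in the marketplace using the forgiveness framework some of those providers may instead be retained. The function name is hypothetical, and newcomers receive the bootstrapped reputation of 0.5 stated in the experimental setting.

```python
def replace_untrustworthy(reputations, initial_reputation=0.5):
    """End-of-period update: providers with a negative reputation leave and
    the same number of new providers join with the bootstrapped reputation,
    so the population size stays constant."""
    survivors = [r for r in reputations if r >= 0]
    n_left = len(reputations) - len(survivors)
    return survivors + [initial_reputation] * n_left
```

For example, a population with reputations 0.8, −0.2, 0.1 and −0.6 would lose two providers and gain two newcomers, keeping four providers in total.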

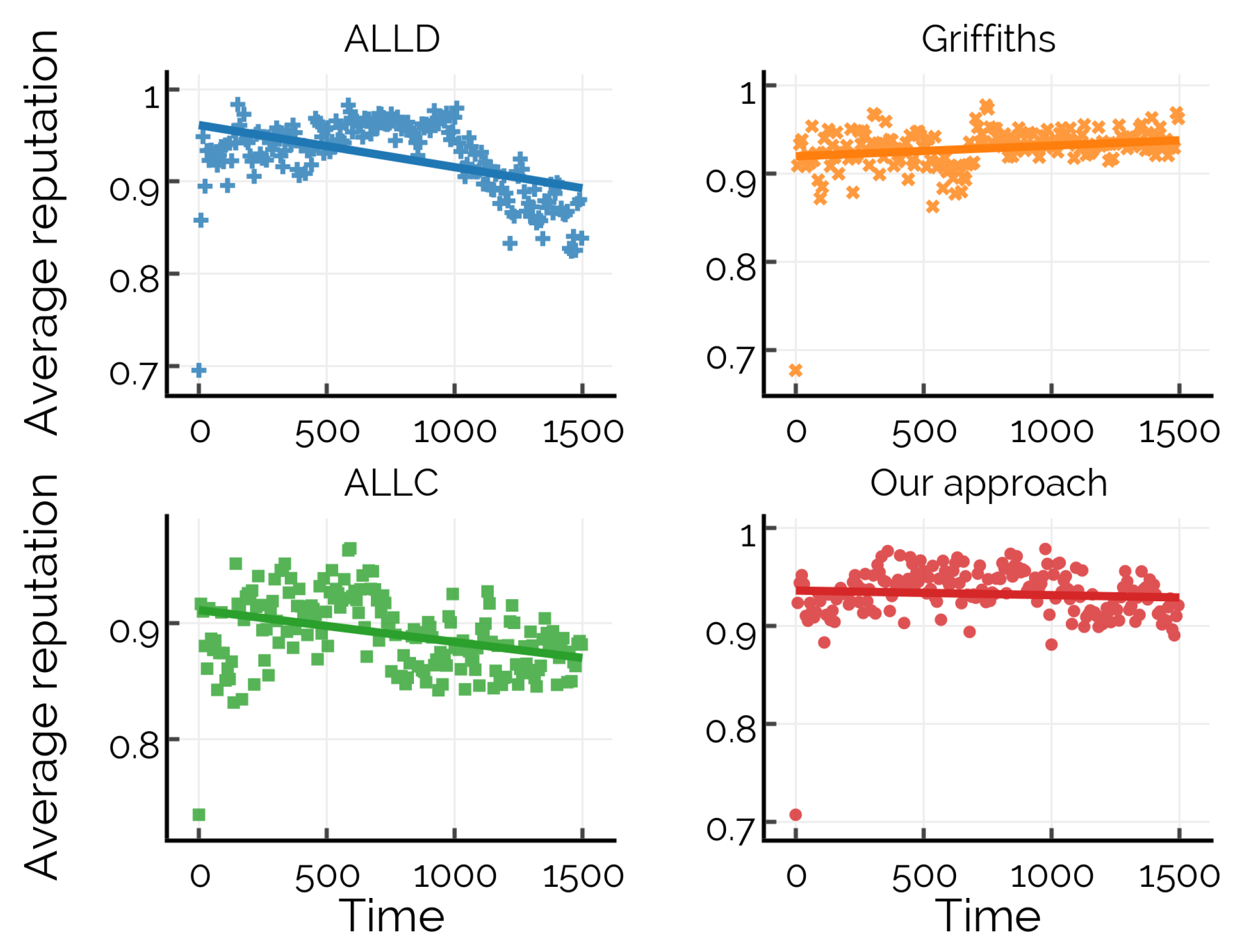

In the first sub-experiment, we compare the average reputation values of service providers in different e-marketplaces. As shown in Figure 4, the average reputation values of service providers in the e-marketplace incorporating our framework are slightly higher than in ALLD and ALLC. Note that the result for Griffiths is almost equivalent to that of our framework. Nevertheless, all e-marketplaces maintain high average reputation values throughout the entire experiment. It is also worth noting that implementing different reputation approaches clearly results in different outcomes.

In the second sub-experiment, total transactions and transaction volumes at different time periods are measured. In Figure 5a, ALLC has the highest total number of transactions compared to the other e-marketplaces as a result of its optimistic setting. Interestingly, the total transaction volume of Griffiths increases sharply and then remains nearly constant after time period 720, as the ability to switch to other trustworthy service providers makes the majority of them run out of products. The rate of increase in the total number of transactions of the e-marketplace incorporating our framework is greater than for ALLD and Griffiths (after time period 1050). In Figure 5b, the transaction volumes generated by different e-marketplaces at different time periods are compared. Again, we notice a sudden drop in the transaction volumes generated in Griffiths, similar to the results in Figure 2b. However, the reason for this drop-off is not the lack of trustworthy service providers, but rather the lack of available products provided by trustworthy service providers. Conversely, the decreasing number of market transactions in ALLD, ALLC and the e-marketplace incorporating our framework is mainly due to the increasing number of untrustworthy service providers. Clearly, for an e-marketplace with limited inventory, our framework is capable of dealing with a constant number of defective service providers, helping the e-marketplace to operate and generate market transactions better than the other approaches.

In the third sub-experiment, the numbers of untrustworthy service providers in different e-marketplaces are compared. As we can see in Figure 6a, the e-marketplace incorporating our framework and ALLC have the lowest numbers of untrustworthy service providers, as these e-marketplaces are driven by the benefits of the forgiveness mechanism. Interestingly, the number of untrustworthy service providers in Griffiths exceeds that in all other e-marketplaces by a huge margin, owing to its capacity for product consumption. In other words, the ability to switch to other trustworthy service providers very quickly reduces the number of service providers with available products and, at the same time, increases the tendency of defective service providers to become untrustworthy.

In the fourth sub-experiment, the utilities of each consumer, calculated according to Equation (13), are aggregated and compared. Figure 6b confirms the results of the second sub-experiment and can be interpreted in the same manner as Figure 5a,b.

5. Conclusions and Future Work

In this study, we present an extended framework of the forgiveness mechanism that incorporates incentive mechanisms to encourage the victim and the members of the victim community to cooperate with the transgressor in future transactions. We propose two types of incentives: a financial incentive as a reparative action provided by the transgressor, and a reputational incentive for the transgressor to cooperate in order to rebuild trust. The outcome of the proposed framework can be used to decide whether there is potential for recovery after a trust violation. However, even though the violation of norms can be forgiven, it should not be completely forgotten. Therefore, the Zone of Forgivability is introduced, indicating the minimum and maximum boundary values of forgivability. We further conduct a series of experiments to evaluate the effectiveness of the proposed framework by comparing different simulated e-marketplaces. Our findings indicate that incorporating the proposed framework into the e-marketplace is efficient, especially in long-term interactions, as a result of collaboration with potential counterparts whose trust is conditionally recovered after a violation.

There are some issues worth addressing further in future work. For example, risk assessment is necessary when allowing the trust of untrustworthy counterparts to be recovered, especially in risky environments. In this regard, incorporating risk assessment within the framework can make the process of trust recovery more robust. Another issue is the incentive mechanism as a reparative action. Apart from monetary compensation, it would be interesting to allow the individuals affected by a trust violation more flexibility in negotiating other attributes such as quality, warranties and delivery time. Moreover, it may be of interest to validate the effectiveness of our approach by extending the experiments to more varied dynamic e-marketplace environments.