Mobile Robot Self-Localization System Using Single Webcam Distance Measurement Technology in Indoor Environments

Abstract

A single-webcam distance measurement technique for indoor robot localization is proposed in this paper. The proposed localization technique uses webcams that are available in an existing surveillance environment. The developed image-based distance measurement system (IBDMS) and parallel lines distance measurement system (PLDMS) have two merits. Firstly, only one webcam is required for estimating the distance. Secondly, the set-up of IBDMS and PLDMS is easy, since only one rectangle pattern of known dimensions is needed, e.g., a ground tile. Some common and simple image processing techniques, e.g., background subtraction, are used to capture the robot in real time. Thus, for the purposes of indoor robot localization, the proposed method does not need expensive high-resolution webcams or complicated pattern recognition methods, only a few simple estimation formulas. The experimental results show that the proposed robot localization method is reliable and effective in an indoor environment.

1. Introduction

Autonomous robots have a wide range of potential applications, such as security patrol, house cleaning and even warfare. Most of them are equipped with position measurement systems (PMSs) for the purpose of precisely locating themselves and navigating in their working fields. Three typical techniques [1] in PMSs are triangulation, scene analysis, and proximity. The triangulation technique uses the geometric properties of triangles to compute object locations. The most well-known example is the Global Positioning System (GPS). However, GPS, being satellite dependent, has an inherent difficulty in accurately determining the locations of objects within a building [2]. A proximity location-sensing technique determines when an object is “near” a known location, with the object's presence sensed via some limited-range physical phenomenon. Well-known examples are detecting physical contact [3,4] or monitoring wireless cellular access points [5,6]. The scene analysis location-sensing technique uses features of a scene observed from a particular vantage point to draw conclusions about the location of the observer or of objects in the scene. Well-known examples are a radar location system [7] or a visual images location system [8].

Among indoor localization techniques, infrared light [6], ultrasonic [9], laser range finder [10,11], RFID [12], and radar [13] are the most popular wireless approaches. Diffuse infrared technology is commonly used for indoor localization, but its short-range signal transmission and line-of-sight requirements limit its growth. Ultrasonic localization [9] uses time-of-flight measurement to provide location information. However, ultrasound requires a great deal of infrastructure to be highly effective and accurate. Laser distance measurement works by measuring the time it takes for laser light to be reflected off a target and returned to the sender. Because the laser range finder is a very accurate and fast measurement device, it is widely used in many applications. In [10,11], Subramanian et al. and Barawid et al. proposed autonomous vehicle guidance systems based on a laser rangefinder. The laser rangefinder was used to acquire distance information about the environment, which can be used to identify and avoid obstacles during navigation. In [14], Thrun et al. provided an autonomous navigation method based on a particle filter algorithm. In that study, the laser rangefinder supplies the measurement information used to compute the likelihood of the particles. These works confirm that laser rangefinders are high-performance, high-accuracy measurement equipment. However, their high performance comes with high hardware costs. RFID-based localization uses RF tags and a reader with an antenna to locate objects, but each tag can only be detected over distances of approximately 4 to 6 m. To improve the low positioning precision, the well-known SpotON [15] technology uses an aggregation algorithm based on radio signal strength analysis for 3D location sensing. However, a complete system is not available yet. An RF-based RADAR system [7,16,17] uses the 802.11 network adapter to measure signal strengths at multiple base stations positioned to provide overlapping coverage for locating and tracking objects inside buildings. Unfortunately, most systems to date cannot provide the desired overall accuracy. In indoor localization for robots, most of these wireless techniques are used to scan the static obstacles around the robot, and the location is calculated by matching those scans with a metric map of the environment [18,19]; in dynamic environments, however, the detected static features are often not enough for a robust localization estimate.

Li et al. [20] proposed a NN-based mobile phone localization technique using Bluetooth connectivity. In this large-scale network, mobile phones equipped with GPS represent beacons, and others could connect to the beacon phones with Bluetooth connectivity. By formulating the Bluetooth network as an optimization problem, a recurrent neural network is developed to distributively find the solutions in real time. However, in general, the sampling rate of Bluetooth is relatively low, and then accurately estimating a moving object in real time is not easy. In [21], a recurrent neural network was proposed to search a desirable solution for a range-free localization of WSNs under the condition that the WSNs can be formed as a class of nonlinear inequalities defined on a graph. Taking advantage of parallel computation of the NN, the proposed approach can effectively solve the WSN localization problem, although the limited transmission bandwidth might cause difficulty in the localization.

Recently, image-based techniques have been preferred over wireless techniques [4,5,9,22], because cameras are passive sensors and are not easily disturbed by other sensors. In [1], a portable PC capable of marker detection, image sequence matching, and location recognition was proposed for an indoor navigation task. Kim and Jun [1] used the augmented reality (AR) technique to achieve an average location recognition success rate of 89%, though the extra cost of this technique must be considered. In [23], Cheok et al. provided a method of localization and navigation in wide indoor areas with a wearable computer for human beings. Though the set-up cost is lower, this method is not easy to implement and set up if users are not familiar with the basic concepts of electronic circuit analysis and design. Furthermore, an image-based method for distance measurement was proposed in [24–29]. According to the transform equations in those papers, the distance can be calculated from the ratio of the size between the pre-defined reference points and the measured object. In recent years, growing importance has been placed on research in two-camera localization systems [30,31]. From two different images, the object distances can be calculated by a triangular relationship. However, to ensure measurement reliability, the photography angle and the distance between the two cameras must be kept fixed. Because two cameras are used for the measuring device, the set-up costs of the experimental environment increase.

Nowadays, surveillance systems exist in most modern buildings, and cameras have been installed around these buildings. In general, one camera covers one specific area. In order to locate an autonomous patrolling robot using the existing cameras in a building, a single-camera localization technique must be developed. This study aims to develop a single-webcam distance measurement technique for indoor robot localization with the purposes of saving set-up costs and increasing the accuracy of distance measurements. In our approach, the working area setting can be kept as simple as possible, because the existing webcams in the surveillance environment can be utilized without any change. For a single webcam in its coverage area, we develop an improved image-based distance measurement system (IBDMS) and a parallel lines distance measurement system (PLDMS) to measure the location of a robot according to a known-size rectangle pattern, e.g., a ground tile. This measurement system uses four points, i.e., the four corners of a ground tile, to form a pair of parallel lines in the webcam image. Referring to the pair of parallel lines, we can measure the location of a robot within the visual range of a webcam. Because the monitoring area of an individual webcam is fixed, a few simple image processing strategies are used to search for the robot before going through IBDMS and PLDMS. First, we use a low-pass filter and an on-line background update method to reduce background noise, and adopt image morphology to complete the foreground information and to remove slight noise. When the mobile robot is located, IBDMS and PLDMS can obtain the real-world coordinates of the mobile robot. Finally, the location of the mobile robot can be shown on the two-dimensional map immediately. Thus, for the purpose of indoor robot localization, the proposed method does not need complicated pattern recognition methods, only a few simple estimation formulas.

2. Photography Methods

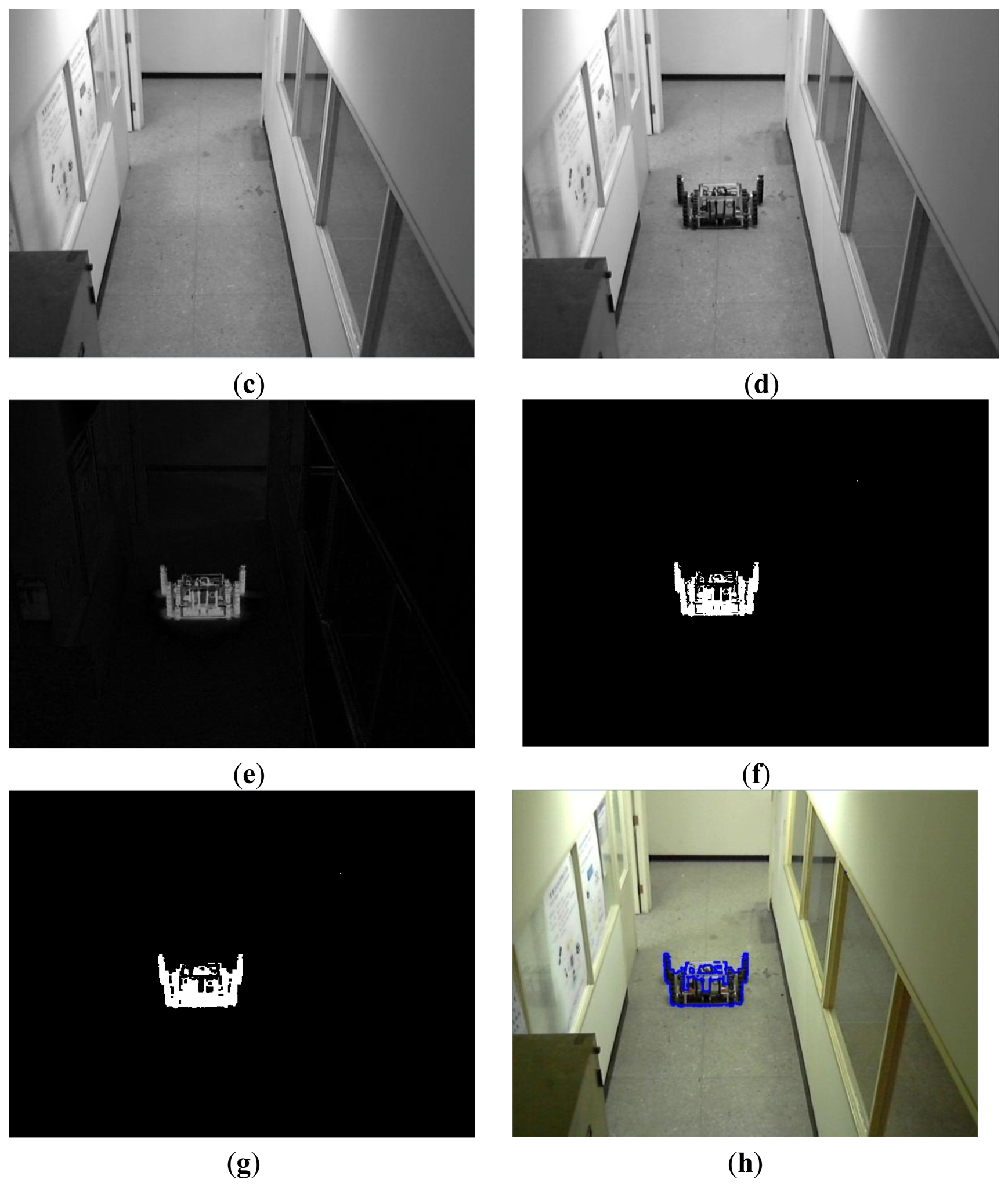

Before a robot is located by the proposed single-webcam localization technique, the acquired images must go through image processing to remove noise and unnecessary information. The techniques include gray-scale conversion, background subtraction, morphological image processing, and connected-components labeling. Next, we briefly discuss the procedures [32] of the image correction techniques used in this paper.

2.1. Camera Calibration

Distortion can occur in captured images, especially if cheap webcams are used. To attenuate the distortion of the captured images and thus increase the accuracy of the robot localization task, camera calibration should be done before localization is attempted. OpenCV takes into account the radial and tangential factors of the image distortion problem. The radial factor can be calculated by the following equations:
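For reference, the radial model given in the OpenCV calibration documentation has the form below, with r² = x² + y² and radial coefficients k1, k2, k3. It is restated here from that documentation, so the equation numbers (1) and (2) are an assumption about how the original equations were numbered.

```latex
\begin{align}
x_{\mathrm{corrected}} &= x\,\bigl(1 + k_1 r^2 + k_2 r^4 + k_3 r^6\bigr) \tag{1}\\
y_{\mathrm{corrected}} &= y\,\bigl(1 + k_1 r^2 + k_2 r^4 + k_3 r^6\bigr) \tag{2}
\end{align}
```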

The tangential distortion can be corrected via the equations as follows:
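For reference, the tangential model given in the OpenCV calibration documentation has the form below, with r² = x² + y² and tangential coefficients p1 and p2. It is restated from that documentation, so the equation numbers (3) and (4) are an assumption about the original numbering.

```latex
\begin{align}
x_{\mathrm{corrected}} &= x + \bigl[\,2 p_1 x y + p_2\,(r^2 + 2x^2)\,\bigr] \tag{3}\\
y_{\mathrm{corrected}} &= y + \bigl[\,p_1\,(r^2 + 2y^2) + 2 p_2 x y\,\bigr] \tag{4}
\end{align}
```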

In Equations (1–4), the pixel (x, y) is the image coordinate in the input image and (xcorrected, ycorrected) is the image coordinate in the corrected output image. The distortion coefficient vector can be represented as cdi = [k1 k2 p1 p2 k3]. Moreover, the unit conversion can be represented as:
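For reference, the unit conversion in the OpenCV calibration documentation is the pinhole camera matrix applied to a 3D point (X, Y, Z). It is restated here from that documentation; labeling it as Equation (5) is an assumption based on the surrounding text.

```latex
\begin{equation}
\begin{bmatrix} x \\ y \\ w \end{bmatrix}
=
\begin{bmatrix} f_x & 0 & c_x \\ 0 & f_y & c_y \\ 0 & 0 & 1 \end{bmatrix}
\begin{bmatrix} X \\ Y \\ Z \end{bmatrix}
\tag{5}
\end{equation}
```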

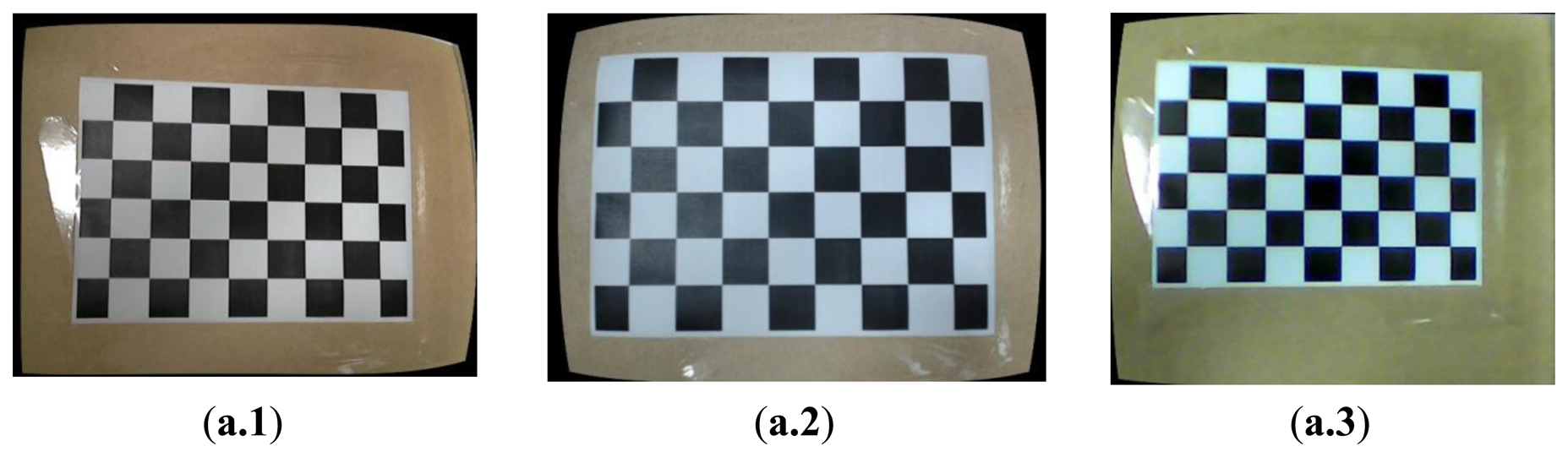

Here, w arises from the use of the homogeneous coordinate system (and w = Z), fx and fy are the camera focal lengths, and cx and cy are the optical centers expressed in pixel coordinates. After the camera matrix in Equation (5) and the distortion coefficients cdi have been calculated, the OpenCV functions initUndistortRectifyMap() and remap() can be used to correct the distorted images. Figure 1a shows the images of the three webcams before calibration, and Figure 1b shows the images after the calibration procedure. For the 1st webcam (HD Webcam C310, Logitech, Lausanne, Switzerland) in our experimental environment, the distortion coefficient vector is:

For the 2nd webcam (HD Pro Webcam C920, Logitech, Lausanne, Switzerland) in our experimental environment, the distortion coefficient vector is:

For the 3rd webcam (HD Webcam PC235, Ronald, Osaka, Japan) in our experimental environment, the distortion coefficient vector is:

2.2. Image Segmentation

In a grayscale image, the value of each pixel carries only intensity information. It is known as a black-and-white image, which is composed exclusively of shades of gray. Black is at the weakest intensity and white is at the strongest one. The gray scale technique can change a color image into a black-and-white image. The luminance f1(x, y) of the pixel(x, y) is described as:

Subtracting a binary acquired image fcurrent(x, y) from a binary background image fbg(x, y), we can obtain a binary foreground image as:
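A minimal sketch of the gray-scale conversion and background subtraction steps follows, assuming images stored as nested Python lists. The BT.601 luminance weights, the function names, and the threshold value are assumptions for illustration; the paper's exact luminance formula and threshold are not given here.

```python
def to_gray(rgb_image):
    """Convert rows of (R, G, B) tuples to luminance.

    The 0.299/0.587/0.114 weights are the common BT.601 choice (an assumption).
    """
    return [[0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row]
            for row in rgb_image]


def foreground_mask(current, background, threshold):
    """Binary foreground: 1 where |current - background| exceeds threshold."""
    return [[1 if abs(c - b) > threshold else 0 for c, b in zip(c_row, b_row)]
            for c_row, b_row in zip(current, background)]


# Hypothetical 2x3 gray images: one pixel changes strongly against the background.
background = [[10, 10, 10], [10, 10, 10]]
current = [[10, 200, 10], [10, 10, 10]]
mask = foreground_mask(current, background, threshold=30)
```

In practice the threshold would be tuned to the lighting of each webcam's coverage area.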

2.3. Morphological Image Processing

After image segmentation, discontinuous edges and noise may appear in the foreground image. These cause wrong judgments during object identification. Therefore, this paper utilizes morphological image processing operations, such as dilation, erosion, opening and closing, so that the underlying shapes can be identified and the image can be reconstructed as well as possible from its noisy precursor.
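As an illustration of these operations, the sketch below implements binary erosion, dilation, and opening with a 3 × 3 square structuring element. The element size, the treatment of pixels outside the image as background, and the function names are assumptions, not details taken from the paper.

```python
def _window(img, y, x):
    """Values of the 3x3 neighbourhood of (y, x); out-of-bounds counts as 0."""
    h, w = len(img), len(img[0])
    return [img[j][i] if 0 <= j < h and 0 <= i < w else 0
            for j in range(y - 1, y + 2) for i in range(x - 1, x + 2)]


def erode(img):
    """A pixel stays 1 only if its whole 3x3 neighbourhood is 1."""
    return [[1 if all(_window(img, y, x)) else 0 for x in range(len(img[0]))]
            for y in range(len(img))]


def dilate(img):
    """A pixel becomes 1 if any pixel in its 3x3 neighbourhood is 1."""
    return [[1 if any(_window(img, y, x)) else 0 for x in range(len(img[0]))]
            for y in range(len(img))]


def opening(img):
    """Erosion followed by dilation: removes specks smaller than the element."""
    return dilate(erode(img))


# A 3x3 blob plus one isolated noise pixel in the top-right corner.
noisy = [
    [0, 0, 0, 0, 0, 0, 1],
    [0, 1, 1, 1, 0, 0, 0],
    [0, 1, 1, 1, 0, 0, 0],
    [0, 1, 1, 1, 0, 0, 0],
    [0, 0, 0, 0, 0, 0, 0],
]
cleaned = opening(noisy)
```

Closing is the same pair of operations in the opposite order (dilation, then erosion) and fills small gaps instead of removing small specks.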

2.4. Connected-Components Labeling

The aim of connected-component labeling is to identify connected-components that share similar pixel intensity values, and then to connect them with each other. The connected-component labeling scans an image and groups pixels into one or more components according to pixel connectivity. Once all groups are determined, each pixel is labeled with a grey level on the basis of the component.
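The labeling step can be sketched as a breadth-first flood fill over a binary mask. The choice of 4-connectivity and the function name are assumptions; the paper does not state which connectivity is used.

```python
from collections import deque


def label_components(mask):
    """Label 4-connected foreground regions of a binary mask.

    Returns (labels, count): labels has 0 for background and 1..count
    for the components, in scan order of their first pixel.
    """
    h, w = len(mask), len(mask[0])
    labels = [[0] * w for _ in range(h)]
    count = 0
    for y in range(h):
        for x in range(w):
            if mask[y][x] and not labels[y][x]:
                count += 1
                labels[y][x] = count
                queue = deque([(y, x)])
                while queue:
                    cy, cx = queue.popleft()
                    for ny, nx in ((cy - 1, cx), (cy + 1, cx),
                                   (cy, cx - 1), (cy, cx + 1)):
                        if (0 <= ny < h and 0 <= nx < w
                                and mask[ny][nx] and not labels[ny][nx]):
                            labels[ny][nx] = count
                            queue.append((ny, nx))
    return labels, count


mask = [
    [1, 1, 0, 0],
    [0, 0, 0, 1],
    [0, 0, 1, 1],
]
labels, count = label_components(mask)
```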

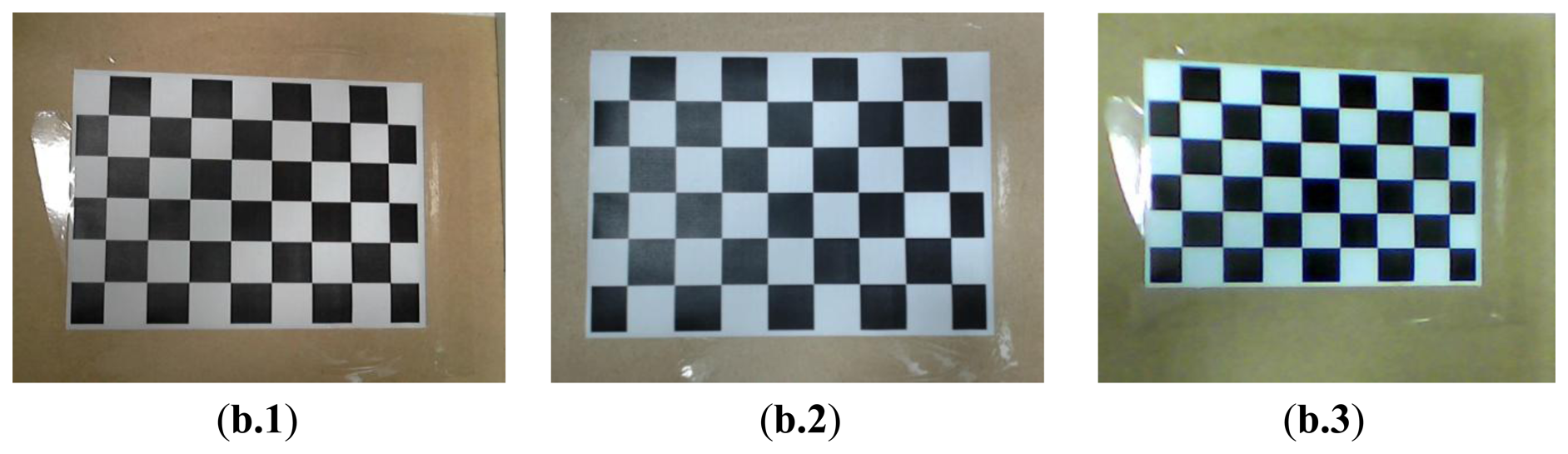

According to the aforementioned discussions, we can locate a robot in the captured image in an image-domain. Figure 2 shows the overall schemes of the image processing, and the experimental results are shown in Figure 3. In Figure 4, Rcenter is the center of the robot in the processed image and can be easily calculated by the simple average method. In this paper, Rcenter stands for the center-coordinate of the robot in the image-domain.
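The "simple average method" for Rcenter can be read as the mean pixel position of the robot's labeled component. The sketch below assumes a label image in which the robot's pixels carry a known label; the function name and that input format are assumptions.

```python
def component_center(labels, target):
    """Mean (x, y) image position of all pixels whose label equals target."""
    sum_x = sum_y = n = 0
    for y, row in enumerate(labels):
        for x, value in enumerate(row):
            if value == target:
                sum_x += x
                sum_y += y
                n += 1
    if n == 0:
        raise ValueError("no pixels carry the requested label")
    return sum_x / n, sum_y / n


# Hypothetical label image: the robot occupies label 1.
r_center = component_center([[0, 1, 1], [0, 1, 1]], target=1)
```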

3. Mobile Robot Localization System with Single Webcam

After the captured images go through image processing, we can locate the robot in the image-domain. Then, we should calculate the coordinates of the robot. That is, two distances, along the x-axis and the y-axis, should be determined: (1) di, the distance between Rcenter and the webcam; and (2) wi, the distance between Rcenter and the wall, as shown in Figure 4. In this paper, the IBDMS is used to calculate the distance di, and the PLDMS is used to calculate the distance wi.

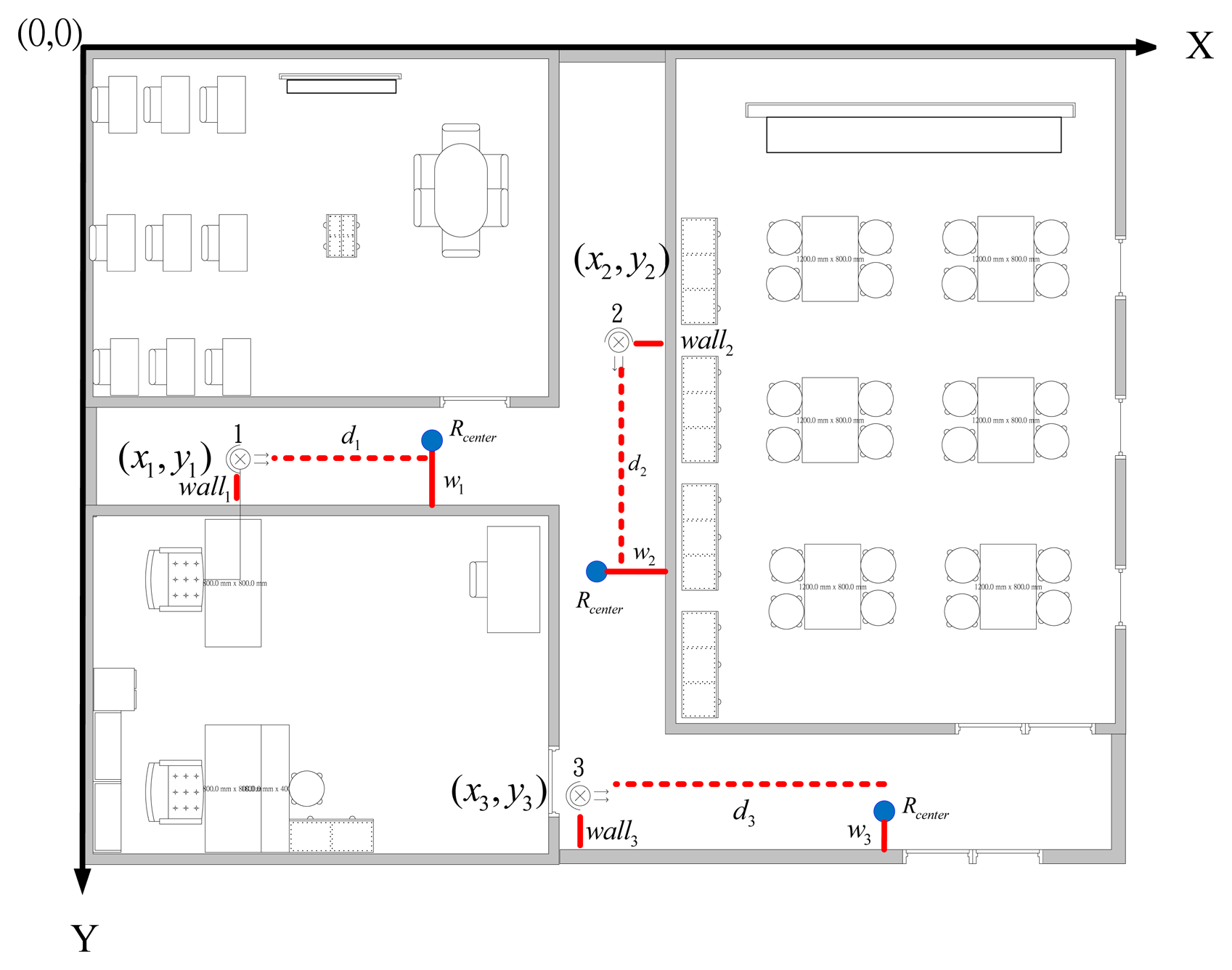

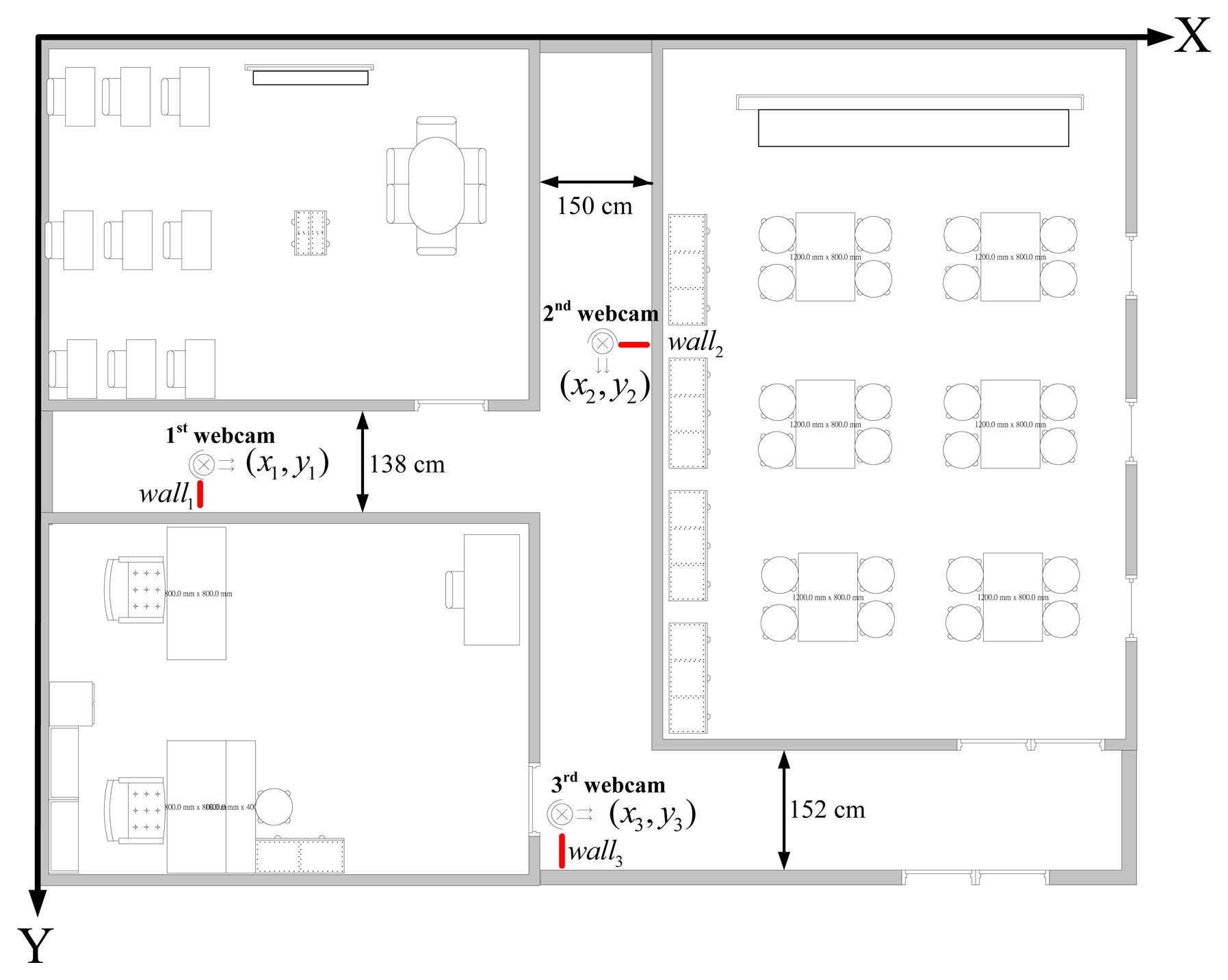

3.1. Experimental Map

Figure 5 shows the map of our experimental environment. In the map, the coordinate of the first webcam is set to (x1, y1), the second one is set to (x2, y2), and the third one is set to (x3, y3). walli (i=1,2,3) represents the distance between the ith webcam and the wall. di (i=1,2,3) represents the distance between the ith webcam and Rcenter. wi (i=1,2,3) is the distance between the wall and Rcenter in the coverage area of the ith webcam. The coordinates of the webcams (xi, yi) and the distances walli are given. Therefore, if the distances di and wi can be calculated, we can easily represent the robot by its coordinate in the area covered by one of the installed webcams. The equations for calculating the coordinate of the robot are defined in Equations (15–17):

where (xi, yi) (i=1,2,3) are the coordinates of the robot in the coverage area of the ith webcam.

3.2. Calculation of Distance di with IBDMS [24–29]

IBDMS is developed in this paper for the purpose of calculating the distances di (i=1,2,3). It works with a single webcam and depends only on a known-dimension rectangle, e.g., a ground tile. The idea of IBDMS comes from the triangular relationship shown in Figure 6: we first capture an image containing a known-dimension rectangle, and then find the proportional relationship between the real dimension and the image dimension of the rectangle. According to this proportional relationship, the distance di can then be easily calculated. Figure 6 shows the IBDMS set-up. It only requires a webcam and two points A and B at given locations, which could be two corners of a ground tile. hs is a constant parameter of the webcam, and O is the intersection of the optical axis and the plane. Targeted objects that lie on the plane and are perpendicular to the optical axis can be measured by simple trigonometric derivations. Hence, ho can be expressed as:
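The proportional relationship can be sketched in code under a simple pinhole assumption: at distance ho the horizontal field of view spans 2·ho·tan(θ) in the real world and n_max pixels in the image, so a segment AB of real length d_ab that covers n_ab pixels satisfies d_ab / (2·ho·tan θ) = n_ab / n_max. This is an illustrative derivation, not necessarily the exact form of the paper's equation; the names n_max, n_ab, d_ab and half_fov, and the numeric values, are assumptions.

```python
import math


def ibdms_distance(n_max, n_ab, d_ab, half_fov):
    """Distance ho to the plane containing A and B (same unit as d_ab).

    n_max    -- pixels in one horizontal scan line of the image
    n_ab     -- pixels between A and B on that scan line
    d_ab     -- real distance between A and B
    half_fov -- half of the horizontal view angle, in radians
    """
    if n_ab <= 0:
        raise ValueError("A and B must be separated by at least one pixel")
    return (n_max * d_ab) / (2.0 * n_ab * math.tan(half_fov))


# Hypothetical values: 640-pixel scan line, a 30 cm tile edge spanning 64 pixels,
# and a 90-degree horizontal field of view.
h_o = ibdms_distance(n_max=640, n_ab=64, d_ab=30.0, half_fov=math.radians(45))
```

The farther the tile edge is from the webcam, the fewer pixels it spans, so the estimated distance grows as n_ab shrinks.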

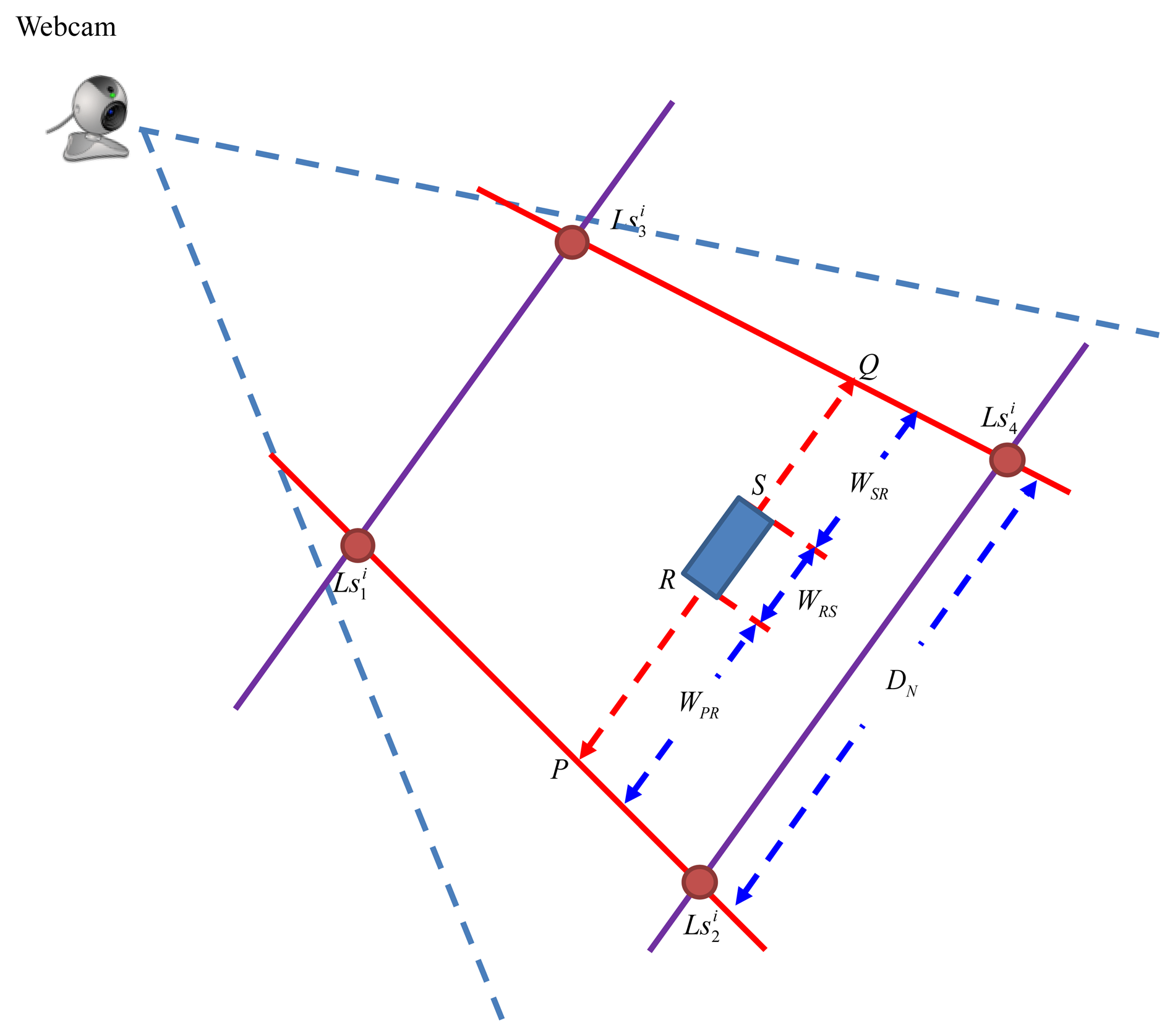

3.3. Calculation of Distance wi with PLDMS

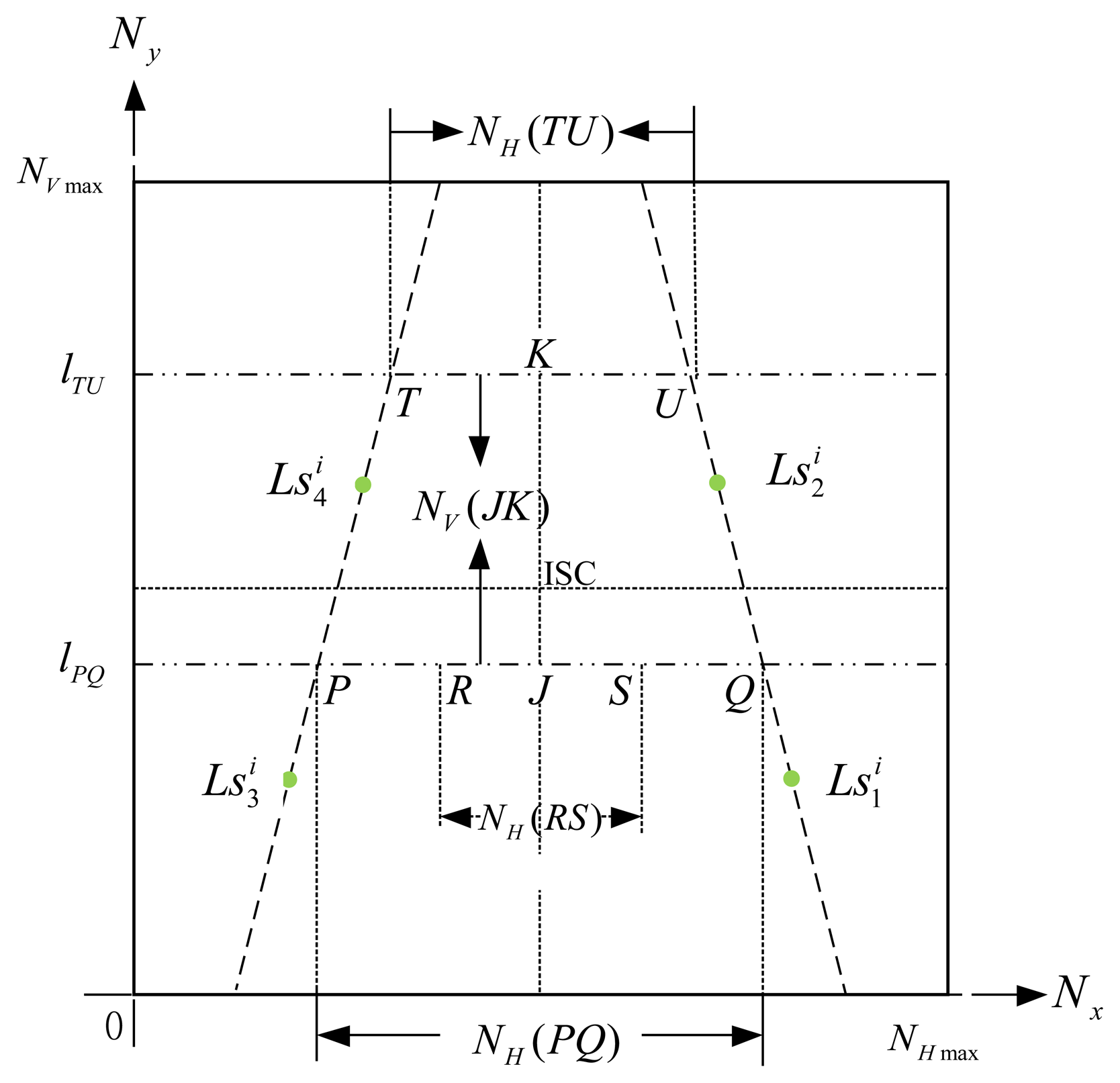

Inheriting the concept of the IBDMS, a parallel-line distance measurement system (PLDMS) for measuring the distances wi is developed. Figure 7 shows the schematic diagram of the PLDMS. In PLDMS, four points are considered as the reference points. In Figure 7, we draw a pair of parallel lines through these points; DN is the width between the two lines. Furthermore, in the image-domain, as in Figure 7, the linear proportion of the two lines can be defined as:

In Figure 8, points R and S could be two corners of a known-dimension rectangle, e.g., a ground tile, in a captured image (image-domain), and P and Q are the intersection points between the scan line lPQ and the two parallel lines. Because P, Q, R, and S lie on the same scan line, we can easily calculate the points P and Q by Equations (19) and (20), where P, S, and the image coordinates of the four reference points (j=1,2,3,4) have been given by the image processing. In our experiments, the width between R and S is the width of the robot. Furthermore, the width WRS can be calculated by the proportional relationship, which is defined as:
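The scan-line proportion can be sketched as follows, assuming each of the two image lines is written as x = a·y + b and that real-world width scales linearly along a horizontal scan line. The function names, the line parameterization, and all numeric values are assumptions for illustration, not the paper's Equations (19)–(22).

```python
def line_through(p1, p2):
    """Return (a, b) of the image line x = a*y + b through two (x, y) points."""
    (x1, y1), (x2, y2) = p1, p2
    a = (x2 - x1) / (y2 - y1)
    return a, x1 - a * y1


def x_on_line(line, y):
    """x position where the line crosses scan line y."""
    a, b = line
    return a * y + b


def real_width(n_pixels, line_left, line_right, y_scan, d_n):
    """Scale a pixel width on scan line y_scan by d_n / N_PQ."""
    n_pq = x_on_line(line_right, y_scan) - x_on_line(line_left, y_scan)
    return n_pixels / n_pq * d_n


def lateral_offset(x_robot, line_left, line_right, y_scan, d_n):
    """Real distance from the left line to x_robot along the scan line."""
    x_p = x_on_line(line_left, y_scan)
    return real_width(x_robot - x_p, line_left, line_right, y_scan, d_n)


# Hypothetical reference corners (image pixels) and a 150 cm line spacing.
left = line_through((100, 400), (220, 100))
right = line_through((540, 400), (420, 100))
w_i = lateral_offset(x_robot=240, line_left=left, line_right=right,
                     y_scan=250, d_n=150.0)
```

Because both the robot and the points P and Q are read from the same scan line, the perspective scale cancels in the ratio.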

3.4. Overall Procedures of the Proposed Localization Method

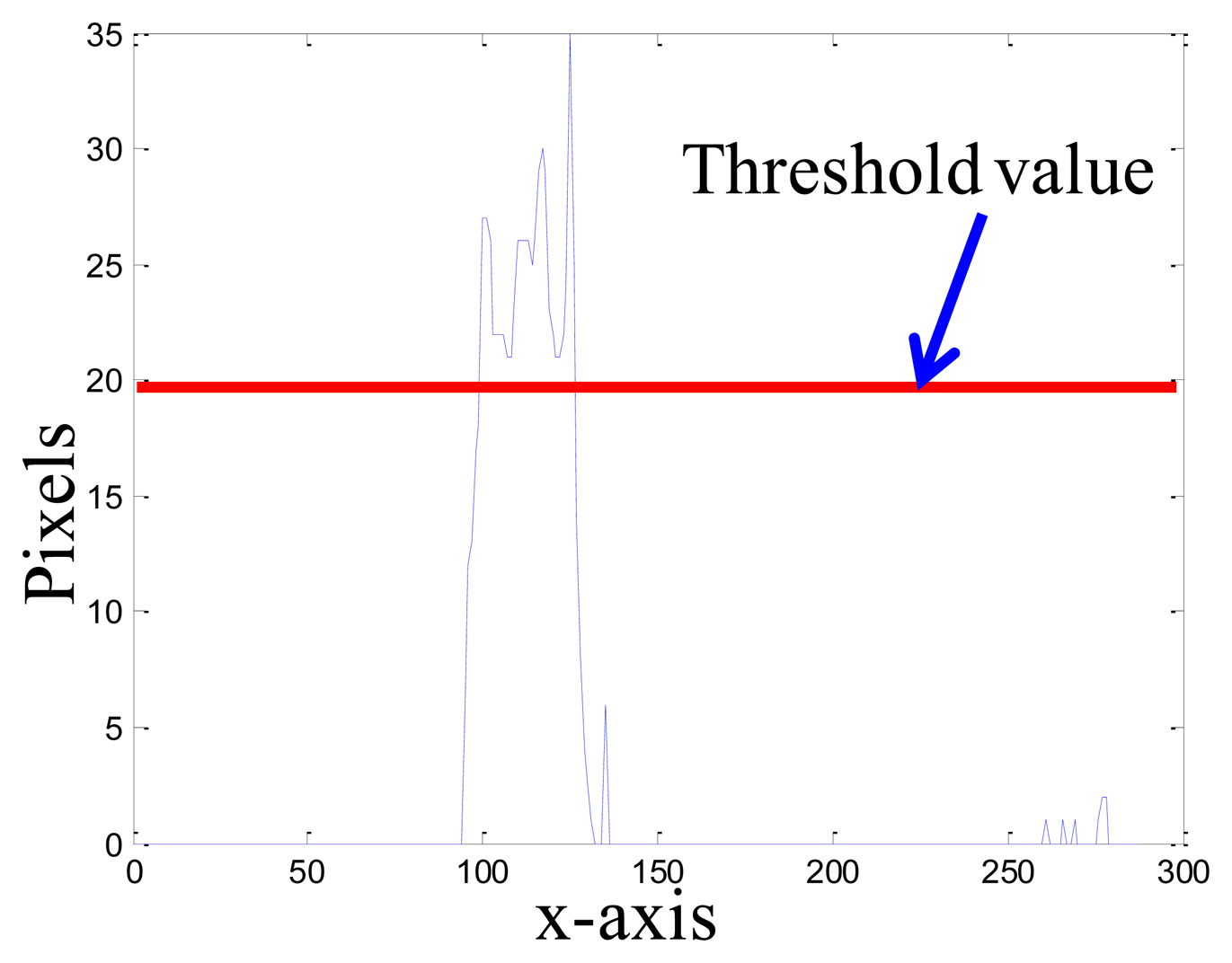

Figure 9 shows the overall scheme of the proposed robot localization method, where ts is the update time-interval for the background image. The background image is updated as follows: if the segmentation horizontal projection of the binary foreground image ffg(x, y) is bigger than the threshold value t1, the captured image fcurrent(x, y) is set as the new background image; otherwise, the original background image is kept. In Equation (23), the function s(·) denotes the segmentation horizontal projection, as shown in Figure 10.

In the image processing stage, the coordinates of the robot are calculated through gray-scale transformation, background subtraction, binarization, morphological processing, connected-components labeling, and averaging. The background image is updated every ts seconds with the update Equation (23). After image processing, the coordinate in the image-domain goes through the IBDMS for calculating the distance di (Equation (18)) and the PLDMS for calculating the width wi (Equation (22)). Then, we can locate the robot in the real-world domain.

4. Experimental Results

4.1. Set-Up of the Experimental Environment

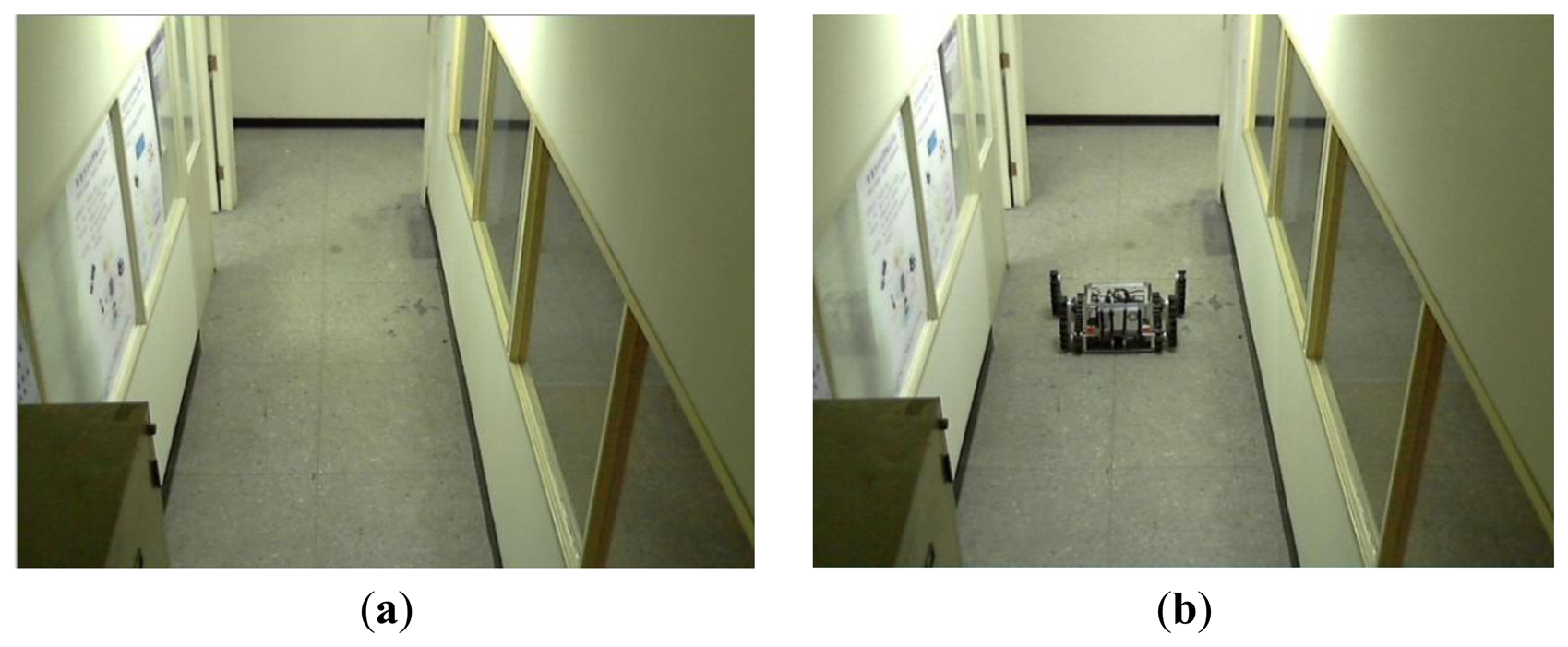

Figure 11 shows the map of our experimental environment. In this map, three webcams, which are described in Section 2.1, are used to cover the possible working area of the robot. The coordinates (x1, y1) of the first webcam are (279, 570); (x2, y2) are (755, 465); and (x3, y3) are (650, 1030). Because of the limited USB 2.0 transmission speed, the resolution of the first webcam is 640 × 480, that of the second webcam is 640 × 480, and that of the third webcam is 480 × 360. The aisle widths are shown in Figure 11 and are 138, 150 and 152 cm, respectively. The background image is updated every 5 s. The threshold value t1 for updating the background image is set to 19.5.

4.2. IBDMS and PLDMS Set-Up

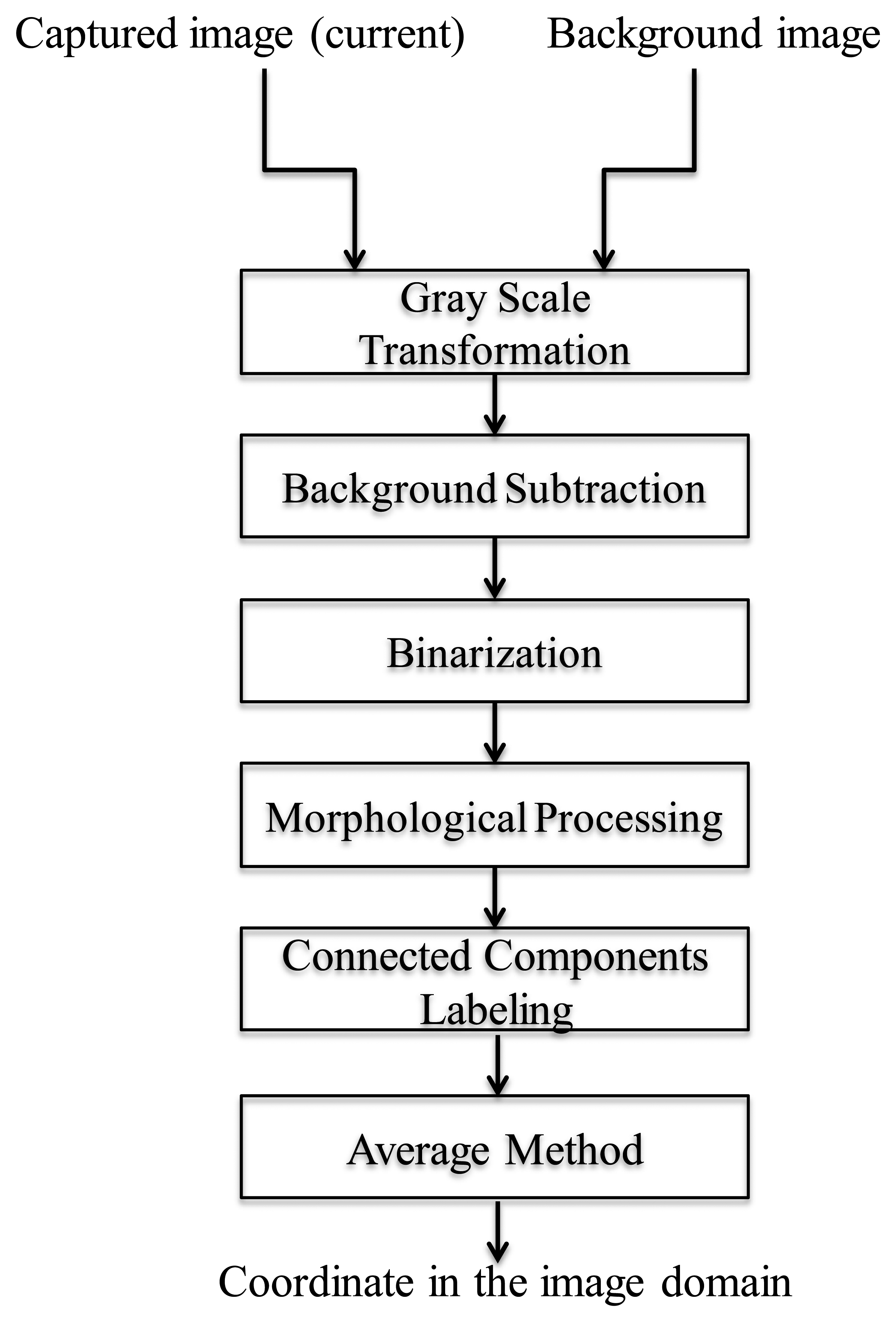

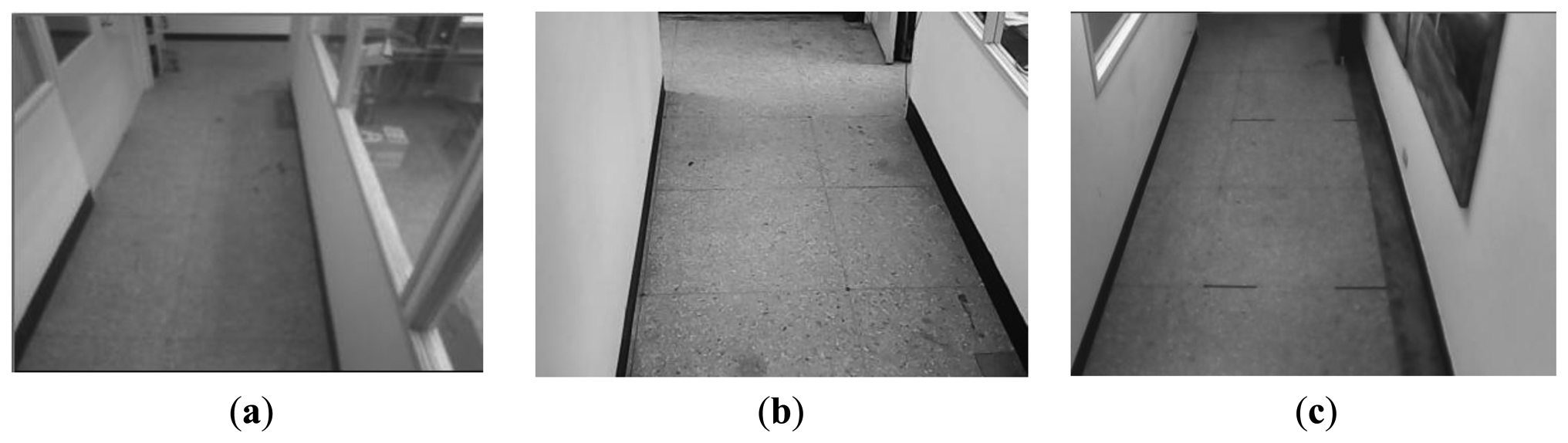

Some basic set-up steps must be performed for the IBDMS and PLDMS before we start the robot localization procedure. In order to alleviate the impact of image noise, the first background image is built by taking the average of 150 consecutive images, so that the background subtraction method can extract the foreground image much more effectively. In addition, a low-pass filter is adopted to further refine the background image. The obtained background images are shown in Figure 12.
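Building the initial background by frame averaging can be sketched as a pixel-wise mean over a list of gray-scale frames. The nested-list image format and the function name are assumptions; in the experiments the list would hold 150 consecutive frames.

```python
def average_background(frames):
    """Pixel-wise mean of equally sized gray-scale frames (nested lists)."""
    if not frames:
        raise ValueError("at least one frame is required")
    n = len(frames)
    height, width = len(frames[0]), len(frames[0][0])
    return [[sum(frame[y][x] for frame in frames) / n for x in range(width)]
            for y in range(height)]


# Two tiny hypothetical 1x2 frames stand in for the 150 captured images.
background = average_background([[[10, 20]], [[30, 40]]])
```

Averaging many frames suppresses zero-mean sensor noise, which is why the subsequent subtraction yields a cleaner foreground.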

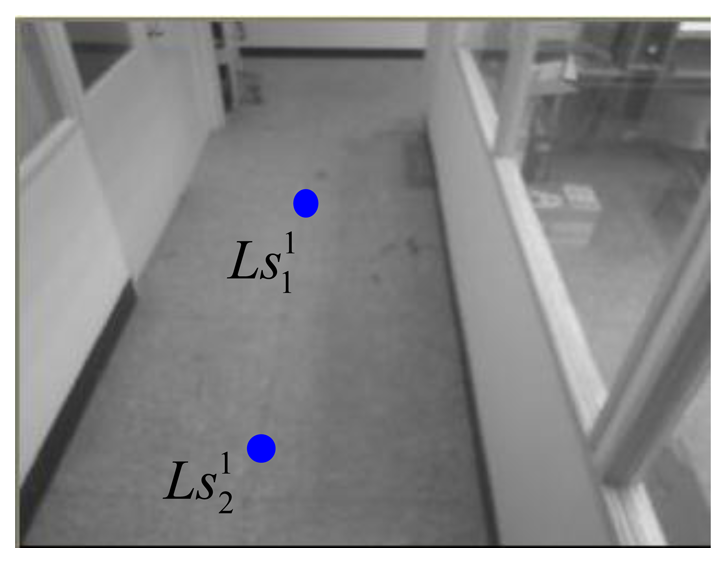

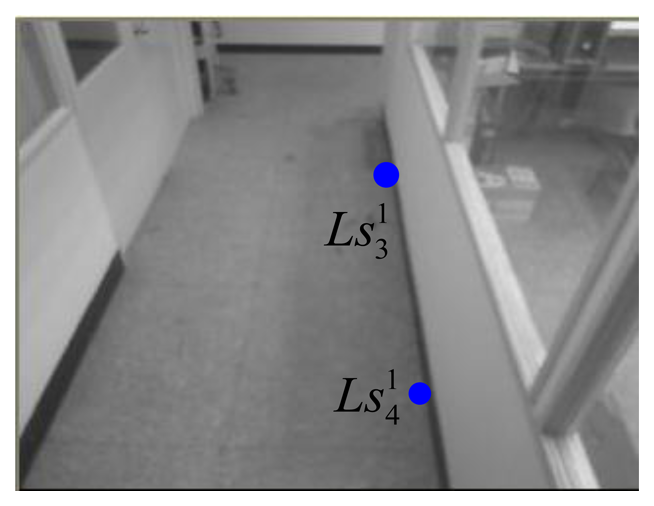

For the 1st webcam, as shown in Figures 13 and 14, the four corners of the known-dimension ground tile are used to draw a pair of virtual parallel lines, whose linear equations can be expressed as:

Similar to the setting procedure of the 1st webcam, the pair of virtual parallel lines for the 2nd webcam can be expressed as:

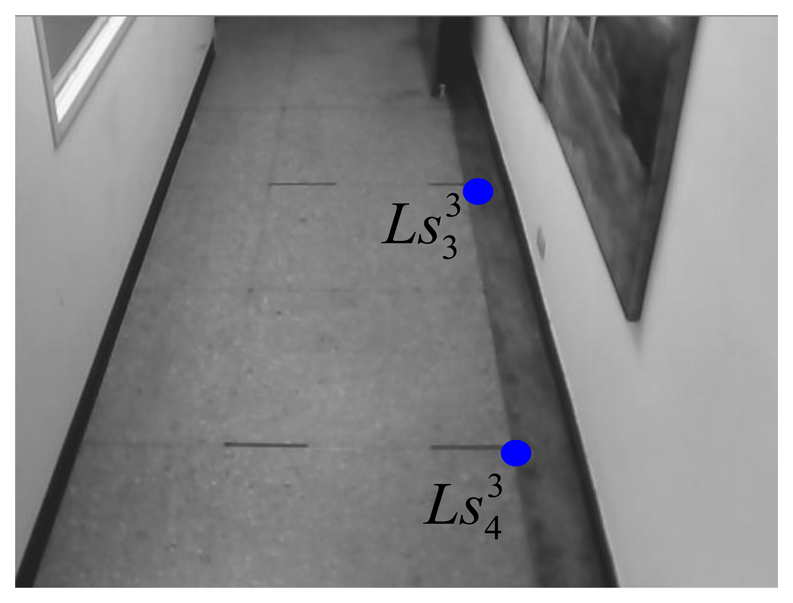

The pair of virtual parallel lines for the 3rd webcam can be expressed as:

4.3. Experimental Results

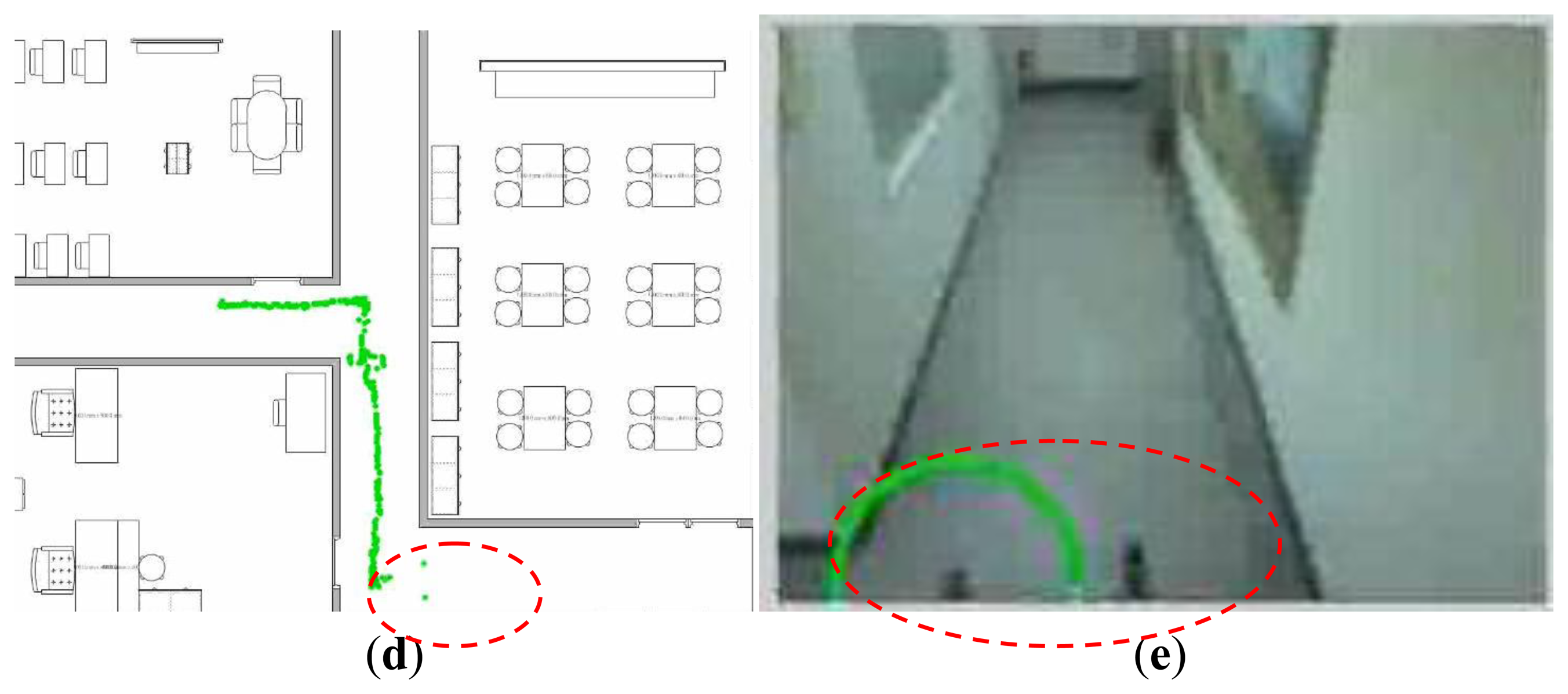

In our experiments, a remote-controlled tracked robot moves through the monitored areas. The path of the robot is shown in Figure 19a. The circled locations in Figure 19a, which cause the larger errors shown in Figure 19b, are the coordinates of the front arms of the robot at the moment the robot moves into the coverage area of the 2nd webcam, as shown in Figure 19c. Similarly, in Figure 19d, larger errors occur at the circled locations, which are the coordinates of the front arms of the mobile robot at the moment the robot moves into the coverage area of the 3rd webcam, as shown in Figure 19e. The error function used to evaluate the proposed localization method is:
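The tabulated errors are consistent with a Euclidean distance between the actual and measured coordinates; for example, the first row of the results table, (610,592) versus (613,588), gives 5.00 cm. A minimal sketch under that reading follows; the function name is an assumption.

```python
import math


def localization_error(actual, measured):
    """Euclidean distance between actual and measured (x, y) coordinates."""
    return math.hypot(measured[0] - actual[0], measured[1] - actual[1])


# First row of the results table.
error_cm = localization_error((610, 592), (613, 588))
```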

For the static monitored area, it is assumed that the light sources and the locations of walls and furniture are known. The influence of lighting can therefore be attenuated by choosing appropriate factors in the image processing techniques. In this paper, we focus on locating the moving robot by using a single webcam and have not yet considered partial occlusions. In this static indoor environment, some image techniques [33–35] could be used to overcome temporary partial occlusion.

5. Conclusions

This paper proposes the use of IBDMS and PLDMS to locate a mobile robot in an indoor environment. Through image processing and with reference to a known-dimension ground tile, the IBDMS and PLDMS can calculate the coordinates of a moving tracked robot. Using this framework, we can quickly estimate the location of the tracked robot. Furthermore, the experimental environment is easy to set up since only three parameters have to be defined, that is, the maximum pixel, the perspective, and the optical distance. Because the locations of the webcams are fixed, we can utilize a simple background subtraction method to extract the data and reduce the computational burden. In addition, we use a low-pass filter and an on-line background updating method to reduce background noise, and we adopt image morphology to acquire the robot's image information. This method does not use expensive high-resolution webcams or complex pattern recognition methods to identify the mobile robot, but rather just uses a simple formula to estimate distance. The experimental results show that the localization method is both reliable and effective.

Acknowledgments

This work was supported by the National Science Council, Taiwan, under Grants NSC 102-2221-E-003-009, NSC 102-2221-E-234-001-, and NSC 102-2811-E-011-019.

Conflicts of Interest

The authors declare no conflict of interest.

References

1. Kim, J.B.; Jun, H.S. Vision-based location positioning using augmented reality for indoor navigation. IEEE Trans. Consum. Electr. 2008, 54, 954–962.

2. Lin, C.H.; King, C.T. Sensor-deployment strategies for indoor robot navigation. IEEE Trans. Syst. Man Cybernet 2010, 40, 388–398.

3. Hinckley, K.; Sinclair, M. Touch-Sensing Input Devices. Proceedings of the Conference on Human Factors in Computing Systems, Pittsburgh, PA, USA, 15–20 May 1999.

4. Partridge, K.; Arnstein, L.; Borriello, G.; Whitted, T. Fast Intrabody Signaling. Demonstration at Wireless and Mobile Computer Systems and Applications, Monterey, CA, USA, 7–8 December 2000.

5. Hills, A. Wireless Andrew. IEEE Spectr. 1999, 36, 49–53.

6. Want, R.; Hopper, A.; Falcao, V.; Gibbons, J. The active badge location system. ACM Trans. Inform. Syst. 1992, 10, 91–102.

7. Bahl, P.; Padmanabhan, V. RADAR: An In-Building RF Based User Location and Tracking System. Proceedings of Nineteenth Annual Joint Conference of the IEEE Computer and Communications Societies, Tel Aviv, Israel, 26–30 March 2000.

8. Starner, T.; Schiele, B.; Pentland, A. Visual Context Awareness via Wearable Computing. Proceedings of the International Symposium on Wearable Computers, Pittsburgh, PA, USA, 19–20 October 1998; pp. 50–57.

9. Ni, L.M.; Lau, Y.C.; Patil, A.P. LANDMARC: Indoor location sensing using active RFID. Wirel. Netw. 2004, 10, 701–710.

10. Subramanian, V.; Burks, T.F.; Arroyo, A.A. Development of machine vision and laser radar based autonomous vehicle guidance systems for citrus grove navigation. Comput. Electr. Agric. 2006, 53, 130–143.

11. Barawid, O.C., Jr.; Mizushima, A.; Ishii, K. Development of an autonomous navigation system using a two-dimensional laser scanner in an orchard application. Biosyst. Eng. 2007, 96, 139–149.

12. Sato, T.; Sugimoto, M.; Hashizume, H. An extension method of phase accordance method for accurate ultrasonic localization of moving node. IEICE Trans. Fundament. Electr. Commun. Comput. Sci. 2010, J92-A, 953–963.

13. Carmer, D.C.; Peterson, L.M. Laser radar in robotics. Proc. IEEE 1996, 84, 299–320.

14. Thrun, S.; Burgard, W.; Fox, D. Probabilistic Robotics; MIT Press: Cambridge, MA, USA, 2005.

15. Hightower, J.; Want, R.; Borriello, G. SpotON: An Indoor 3D Location Sensing Technology Based on RF Signal Strength; UW-CSE00-02-02; University of Washington: Seattle, WA, USA, 2000.

16. Lin, T.H.; Chu, H.H.; You, C.W. Energy-efficient boundary detection for RF-based localization system. IEEE Trans. Mob. Comput. 2009, 8, 29–40.

17. Lorincz, K.; Welsh, M. MoteTrack: A robust, decentralized approach to RF-based location tracking. Pers. Ubiq. Comput. 2007, 11, 489–503.

18. Dellaert, F.; Fox, D.; Burgard, W.; Thrun, S. Monte Carlo Localization for Mobile Robots. Proceedings of the 1999 IEEE International Conference on Robotics and Automation, Detroit, MI, USA, 10–15 May 1999; pp. 1322–1328.

19. Thrun, S.; Bücken, A.; Burgard, W.; Fox, D.; Fröhlinghaus, T.; Henning, D.; Hofmann, T.; Krell, M.; Schmidt, T. Map Learning and High-Speed Navigation in RHINO; MIT Press: Cambridge, MA, USA, 1998.

20. Li, S.; Liu, B.; Chen, B.; Lou, Y. Neural network based mobile phone localization using Bluetooth connectivity. Neural Comput. Appl. 2013, 23, 667–675.

21. Li, S.; Qin, F. A dynamic neural network approach for solving nonlinear inequalities defined on a graph and its application to distributed. Neurocomputing 2013, 117, 72–80.

22. Martins, M.H.T.; Chen, H.; Sezaki, K. Otmcl: Orientation Tracking-Based Monte Carlo Localization for Mobile Sensor Networks. Proceedings of the Sixth International Conference on Networked Sensing Systems, Pittsburgh, PA, USA, 17–19 June 2009; pp. 1–8.

23. Cheok, A.D.; Yue, L. A novel light-sensor-based information transmission system for indoor positioning and navigation. IEEE Trans. Instrum. Meas. 2011, 60, 290–299.

24. Hsu, C.C.; Lu, M.C.; Wang, W.Y.; Lu, Y.Y. Three dimensional measurement of distant objects based on laser-projected CCD images. IET Sci. Meas. Technol. 2009, 3, 197–207.

25. Mertzios, B.G.; Tsirikolias, I.S. Applications of Coordinate Logic Filters in Image Analysis and Pattern Recognition. Proceedings of the 2nd International Symposium on Image and Signal Processing and Analysis, Pula, Croatia, 19–21 June 2001; pp. 125–130.

26. Lu, M.C.; Wang, W.Y.; Chu, C.Y. Image-based distance and area measuring systems. IEEE Sens. J. 2006, 6, 495–503.

27. Wang, W.Y.; Lu, M.C.; Kao, H.L.; Chu, C.Y. Nighttime Vehicle Distance Measuring Systems. IEEE Trans. Circ. Syst. II: Exp. Brief. 2007, 54, 81–85.

28. Wang, W.Y.; Lu, M.C.; Wang, T.H.; Lu, Y.Y. Electro-optical distance and area measuring method. WSEAS Trans. Syst. 2007, 6, 914–919.

29. Hsu, C.C.; Lu, M.C.; Wang, W.Y.; Lu, Y.Y. Distance measurement based on pixel variation of CCD image. ISA Trans. 2009, 40, 389–395.

30. Osugi, K.; Miyauchi, K.; Furui, N.; Miyakoshi, H. Development of the scanning laser radar for ACC system. JSAE Rev. 1999, 20, 549–554.

31. Nakahira, K.; Kodama, T.; Morita, S.; Okuma, S. Distance measurement by an ultrasonic system based on a digital polarity correlator. IEEE Trans. Instrum. Meas. 2001, 50, 1478–1752.

32. Kawaji, H.; Hatada, K.; Yamasaki, T.; Aizawa, K. An Image-Based Indoor Positioning for Digital Museum Applications. Proceedings of the 16th International Conference on Virtual Systems and Multimedia (VSMM), Seoul, Korea, 20–23 October 2010.

33. Cucchiara, R.; Grana, C.; Tardini, G. Probabilistic People Tracking for Occlusion Handling. Proceedings of the 17th International Conference on Pattern Recognition, Cambridge, UK, 23–26 August 2004; pp. 132–135.

34. Eng, H.L.; Wang, J.X.; Kam, A.H. A Bayesian Framework for Robust Human Detection and Occlusion Handling Human Shape Model. Proceedings of the International Conference on Pattern Recognition, Cambridge, UK, 23–26 August 2004; pp. 257–260.

35. Tsai, C.Y.; Song, K.T. Dynamic visual tracking control of a mobile robot with image noise and occlusion robustness. Image Vis. Comput. 2007, 27, 1007–1022.

| Actual Coordinate | Measured Coordinate | Error (cm) | Webcam |
|---|---|---|---|
| (610,592) | (613,588) | 5.00 | Webcam I |
| (627,611) | (626,609) | 2.24 | Webcam I |
| (636,560) | (630,559) | 8.08 | Webcam I |
| (648,591) | (641,594) | 7.62 | Webcam I |
| (684,584) | (676,578) | 10.00 | Webcam I |
| (736,891) | (738,883) | 8.24 | Webcam II |
| (774,912) | (775,901) | 11.04 | Webcam II |
| (759,918) | (756,906) | 12.37 | Webcam II |
| (763,944) | (759,932) | 12.65 | Webcam II |
| (808,966) | (811,952) | 12.37 | Webcam II |
| (1001,779) | (997,779) | 4.00 | Webcam III |
| (1236,1076) | (1225,1074) | 11.18 | Webcam III |
| (1250,1019) | (1241,1021) | 9.49 | Webcam III |
| (1271,1063) | (1262,1065) | 11.18 | Webcam III |
| (1250,1041) | (1241,1039) | 9.22 | Webcam III |

© 2014 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/3.0/).

Share and Cite

Li, I.-H.; Chen, M.-C.; Wang, W.-Y.; Su, S.-F.; Lai, T.-W. Mobile Robot Self-Localization System Using Single Webcam Distance Measurement Technology in Indoor Environments. Sensors 2014, 14, 2089-2109. https://doi.org/10.3390/s140202089