1. Introduction

A third of the world’s worst aquatic invasive species are directly linked to the aquarium and ornamental industry [1]. Once these species are introduced into natural waterways, they extensively modify the biological communities and disrupt many of the important ecological and cultural services that we have come to depend upon from our freshwater inland ecosystems. They also cause significant economic impacts, both in terms of the costs associated with management/eradication and in terms of the overall “devaluation” that is associated with the presence of the invader. Rockwell [2] estimated that over US$100 million is being spent annually in the United States to control and manage invasive aquatic plants.

Water soldier (Stratiotes aloides) (Figure 1) is a perennial aquatic plant species native to Europe and northwest Asia that was introduced into the Trent-Severn Waterway in Ontario, Canada, likely as a result of its popularity as an ornamental plant in water gardens before being banned [3]. It grows most effectively at water depths up to 2.5 m, but has been found at depths up to 5 m. It becomes buoyant during the summer season, with plants reaching above or near the surface, where they can form dense mats that crowd out native vegetation, alter water chemistry, and consequently modify phytoplankton populations and potentially other aquatic organisms. Its sharp serrated leaves can injure swimmers, and generally the plant hinders various other aquatic activities, such as boating and angling [3]. Because the Trent-Severn Waterway is the only place in North America where water soldier is known to occur, it is considered a high priority to prevent it from spreading to new locations, notably the nearby Great Lakes. Since water soldier was discovered in the waterway, resource managers and their partners have been trying to eradicate the plant through repeated large-scale applications of chemical herbicide (diquat), but have been met with mixed results as the plant continues to show up farther downstream.

Current boat-based detection and monitoring methods in the area of infestation and locations downstream include both systematic (whole lake surveys) and opportunistic monitoring (incidental spotting); however, the type of data collected, although helpful, can be limited and unreliable [4]. In particular, the point-based sampling method provides incomplete coverage of surveyed areas, and deeper (>1 m) submerged vegetation can sometimes be difficult to detect. Due to the nature of the Trent-Severn Waterway, many sampling sites are inaccessible, as they are too shallow, blocked by obstacles, or in remote regions, making boat-based monitoring time-consuming and expensive [5].

In recent years, there has been burgeoning use of small drone aircraft systems to conveniently collect timely, very high spatial-resolution (<20 cm) imagery of hard-to-access or -navigate aquatic environments, including wetlands [6,7,8], bogs [9,10,11], lakes [12,13,14], rivers [15,16,17], coasts [18,19,20], and general hydrological and water resource monitoring [21,22,23]. As high-resolution drone imagery tends to be laborious to analyze manually, increasingly sophisticated approaches have been developed and tested to automate such tasks as aquatic vegetation detection and classification, many of them founded on object-based image analysis (OBIA) [24,25,26,27,28]. However, few studies have involved the distinct challenge of automated classification of submerged vegetation [29,30,31]. Moreover, although drones themselves are becoming increasingly accessible and easy to use, there is a need to establish more accessible workflows for efficiently analyzing the imagery they generate.

Building upon a previous pilot trial [32], our aim was to develop an efficient and readily accessible drone imagery acquisition and OBIA workflow for monitoring emergent and submerged water soldier. In this paper, we present and assess our workflow, which we believe could be broadly adapted to other shallow-water aquatic vegetation monitoring operations. The principal elements of the workflow are: (1) collection of radiometrically calibrated multispectral imagery, including a near-infrared (NIR) band; (2) multistage segmentation of the imagery involving an initial separation of above-water from submerged features; and (3) automated classification of image features by means of a supervised machine-learning classifier.

3. Results

Classification performance under varying parameters (texture kernel size and RF parameters) is shown for above-water features in Table 2 and for submerged features in Table 3. Overall higher accuracy was achieved for above-water features (OA = 89–92%, kappa = 84–88%) than submerged features (OA = 74–84%, kappa = 58–75%), with kappa values indicating “almost perfect” agreement between manual and automated classifications for above-water features and “substantial” agreement for submerged features [40]. For above-water features, classifications based on a 5 × 5 pixel texture kernel consistently outperformed those based on a 7 × 7 pixel kernel by a slight margin (Table 2), while for submerged features neither kernel size clearly outperformed the other, although the overall top three submerged classifications were based on a 7 × 7 pixel kernel (Table 3). Varying the “maximum depth of the tree” and “maximum number of trees in the forest” RF parameters generally had little discernible effect, with the exception of a significant boost in submerged classification performance when increasing the former parameter from 5 to 10 (Table 3). Otherwise, classification performance seemed to plateau at a maximum tree depth of 15 for both above-water and submerged features (Table 2 and Table 3). For a given maximum tree depth, there was overall no clear effect of varying the maximum number of trees, although the top classifications of above-water and submerged features had maximums of 200 and 250 trees, respectively, suggesting marginal benefit from increasing this parameter (Table 2 and Table 3).

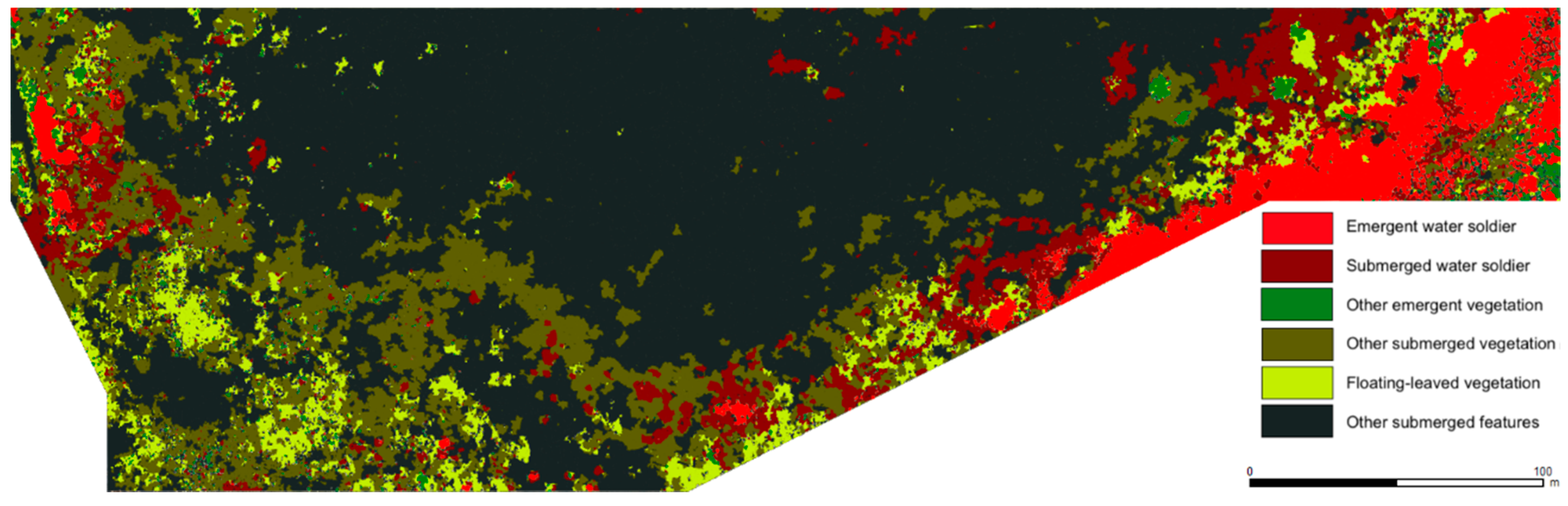

The top above-water and submerged classifications are shown for study site 2 in Figure 6, and their respective confusion matrices for the validation samples distributed across all five study sites are shown in Table 4 and Table 5. For above-water features, user’s and producer’s accuracies were all-around strong (87–98%) for all three classes, with emergent water soldier occasionally misclassified as other emergent vegetation and vice versa, and other emergent vegetation also occasionally misclassified as floating vegetation and vice versa (Table 4). For submerged features, the principal sources of error were misclassification of submerged water soldier (PA = 71%) and especially other submerged vegetation (PA = 48%) as miscellaneous other submerged features, signifying appreciable omission error rates for these two classes. However, commission error rates were relatively low and comparable among all four classes (UA = 82–87%) (Table 5).
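For readers less familiar with the accuracy measures reported above, the following minimal Python sketch shows how overall accuracy (OA), kappa, user’s accuracy (UA), and producer’s accuracy (PA) are conventionally derived from a confusion matrix. The function name and the example counts are hypothetical and are not taken from Table 4 or Table 5; rows are assumed to hold the automated classes and columns the reference classes.

```python
from typing import Dict, List, Union

Metrics = Dict[str, Union[float, List[float]]]


def accuracy_metrics(matrix: List[List[int]]) -> Metrics:
    """Summarize a square confusion matrix.

    Assumed layout: rows = automated (map) classes, columns = reference classes.
    Every class is assumed to have at least one sample in its row and column.
    """
    size = len(matrix)
    total = sum(sum(row) for row in matrix)
    correct = [matrix[i][i] for i in range(size)]
    row_totals = [sum(row) for row in matrix]
    col_totals = [sum(matrix[r][c] for r in range(size)) for c in range(size)]

    overall = sum(correct) / total
    # Chance agreement expected from the row and column marginals.
    expected = sum(row_totals[i] * col_totals[i] for i in range(size)) / total ** 2
    kappa = (overall - expected) / (1 - expected)

    return {
        "overall_accuracy": overall,
        "kappa": kappa,
        # UA = 1 - commission error; PA = 1 - omission error.
        "users_accuracy": [correct[i] / row_totals[i] for i in range(size)],
        "producers_accuracy": [correct[i] / col_totals[i] for i in range(size)],
    }


# Hypothetical counts for three classes (illustration only, not study data).
example = [
    [45, 5, 0],
    [3, 40, 2],
    [2, 5, 48],
]
metrics = accuracy_metrics(example)
print(round(metrics["overall_accuracy"], 3), round(metrics["kappa"], 3))
```

Under this layout, the omission error of a class is 1 − PA and the commission error is 1 − UA, so a PA of 48% corresponds to an omission error of 52%.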

4. Discussion

We developed and executed a relatively simple and accessible object-based image analysis workflow for mapping shallow-water emergent and submerged aquatic vegetation in discrete-band multispectral aerial imagery collected by a small drone. The workflow yielded excellent automated image classification performance (OA > 90%, kappa > 80%) for emergent/above-water features and comparatively lesser, but still fair, performance for submerged features. These results represent a major improvement over those of our pilot trial, which involved uncalibrated imagery of lower spectral resolution and wholesale segmentation and classification of above-water and submerged features combined, yielding an overall accuracy of 78% and kappa value of 61% [32]. They also compare favorably to the few previous implementations of automated image analysis to map submerged vegetation in drone imagery [29,30].

Identification of submerged features in aerial imagery is inherently challenging because of the variable attenuation of tones and contrasts of features depending on their depth below the water surface, which can be compounded by turbidity as well as surface disturbances, such as ripples, waves, or glint. It is therefore desirable to collect imagery in low wind and cloudy or overcast conditions as we did, although we observed a significant reduction of glint in the multispectral imagery compared to the RGB imagery, indicating that sky conditions were only partly responsible for the incidence of glint, and that multispectral image acquisition under sunny conditions may still produce workable imagery. Also, our experience highlights the preferability of collecting imagery around peak daylight hours (perhaps within ±2 h of solar zenith), when submerged features are receiving maximum illumination. Joyce et al. [41] recently published a practical guide to collecting optimal quality drone imagery in marine and freshwater environments. Although our multistage approach to image segmentation enabled much more effective segmentation of submerged features, some trade-off between over-segmentation of shallower, brighter features and under-segmentation of deeper, darker features could not be avoided without venturing into more elaborate analyses that we judged to be out of keeping with the efficient workflow we were aiming for. Even at the classification stage, the varying spectral and textural characteristics of features at varying depths pose a distinct challenge for machine-learning classifiers. Despite selecting an ample number of submerged water soldier and other vegetation training samples at varying depths, relatively high omission error rates for these classes (water soldier = 29%, other vegetation = 52%) mainly resulted from deeper patches of vegetation being misclassified as miscellaneous other submerged features (e.g., bare bottom), presumably due to decreasing distinctness of submerged vegetation with increasing depth. It should be noted again that the Sequoia multispectral camera we employed lacked a blue band, as it was primarily designed for crop monitoring applications, for which there is typically little need to record blue-region radiation. With only two bands to work with (red and green) to characterize submerged features, the object attribute set was relatively limited, and superior performance of both segmentation and classification could likely be achieved in future work by using a camera that additionally records a blue band, as blue-region radiation also has greater water penetration potential. Furthermore, it may be possible to use band-differencing techniques to create a depth-invariant index of bottom reflectance [42].
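As an illustration of the band-differencing idea, the sketch below computes a depth-invariant index from two log-transformed bands. It assumes a simple exponential attenuation model and a known ratio of attenuation coefficients between the two bands; the function names, coefficients, and bottom signal values are hypothetical, and in practice the ratio would have to be estimated from pixels of a uniform bottom type at varying depths. This procedure was not part of the workflow presented here.

```python
import math


def simulate_radiance(bottom: float, k: float, depth_m: float) -> float:
    """Toy model: bottom signal attenuated over the two-way path through water."""
    return bottom * math.exp(-2.0 * k * depth_m)


def depth_invariant_index(l_i: float, l_j: float, k_ratio: float,
                          deep_i: float = 0.0, deep_j: float = 0.0) -> float:
    """Compute ln(L_i - L_deep_i) - (k_i / k_j) * ln(L_j - L_deep_j).

    deep_i and deep_j are optional deep-water signals subtracted beforehand.
    """
    return math.log(l_i - deep_i) - k_ratio * math.log(l_j - deep_j)


# Hypothetical attenuation coefficients (per metre) and bottom signals.
K_GREEN, K_RED = 0.10, 0.40
BOTTOM_GREEN, BOTTOM_RED = 0.30, 0.20

for depth in (0.5, 1.0, 2.0):
    green = simulate_radiance(BOTTOM_GREEN, K_GREEN, depth)
    red = simulate_radiance(BOTTOM_RED, K_RED, depth)
    index = depth_invariant_index(green, red, K_GREEN / K_RED)
    print(f"depth {depth} m: green={green:.4f} red={red:.4f} index={index:.4f}")
```

In this idealized setting the individual band values fall with depth while the index stays constant for a given bottom type, which is the property that could make such an index a useful additional object attribute.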

For this study, we experimented with varying values for a limited number of analysis parameters. It has previously been noted that the numerous customizable steps and parameters encountered over the course of an OBIA workflow can end up consuming a large amount of time in systematic experimentation and refinement [26], and we wanted to keep such exercises to a reasonable minimum so as to present a relatively straightforward workflow and not overwhelm potential adopters with analysis considerations. The size of the texture kernel is a distinctly important consideration in adequately capturing any textural information that may help differentiate feature classes [34], and it is instructive to relate the kernel size in pixels to the real-world area it covers in the imagery as a function of the spatial resolution. In our case, we judged a priori that the minimum kernel size of 3 × 3 pixels (≈40 × 40 cm in our multispectral imagery) would likely be insufficient to capture the distinctive textures of the vegetation of interest, particularly the dense mats of large rosettes formed by water soldier plants. Our results indicated that the optimal texture kernel size for capturing this pattern and those of the other types of vegetation was 5 × 5 pixels (≈65 × 65 cm) for emergent plants, while the 7 × 7 pixel kernel size (≈90 × 90 cm) performed comparatively better for submerged features, which may be regarded as a predictable consequence of the reduced definition of the fine-scale texture of submerged features. We also experimented with varying values of two of the most fundamental RF parameters, the maximum number of trees in the forest and the maximum depth of the tree [38], finding in our case that increasing the former yielded negligible to marginal improvement of classification performance, while increasing the latter yielded an initially significant boost to submerged classification performance before plateauing (little effect was observed on above-water classification performance). We chose the RF classifier on the basis of its well-recognized strong performance for remote sensing image classification and capacity to handle large numbers of variables (i.e., object attributes) as well as multicollinearity among variables, although other popular classifiers such as support vector machines (SVM), decision trees (DT), and artificial neural networks (ANN) have also proven effective in many cases [36].
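The relationship between kernel size and ground footprint noted above can be checked with a few lines of Python. The sketch assumes a ground sampling distance of about 13 cm per pixel, a value inferred here from the approximate footprints quoted in the text rather than stated in it, and the function name is hypothetical.

```python
def kernel_footprint_cm(kernel_px: int, gsd_cm: float) -> float:
    """Side length on the ground (cm) covered by a square kernel of kernel_px pixels."""
    if kernel_px < 1 or kernel_px % 2 == 0:
        raise ValueError("texture kernels are assumed to have an odd, positive size")
    return kernel_px * gsd_cm


ASSUMED_GSD_CM = 13.0  # assumption inferred from the quoted footprints

for size in (3, 5, 7):
    side = kernel_footprint_cm(size, ASSUMED_GSD_CM)
    print(f"{size} x {size} px kernel covers about {side:.0f} x {side:.0f} cm")
```

The same calculation can be repeated for imagery of a different spatial resolution to judge which kernel sizes are likely to span the texture of the vegetation of interest.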

A notable aspect of our analysis workflow is its use of off-the-shelf graphical software tools requiring no programming or coding, therefore making it readily accessible to anyone possessing at least basic GIS and image analysis skills. We opted to use a commercial software package (ENVI) available to us to perform image segmentation because of its convenient previewing feature and automatic calculation of a relatively rich set of object attributes for classification. Object classification can also be performed within ENVI (although it lacks the RF classifier, which is why we opted to use different software for classification) as well as other “all-in-one” commercial OBIA software packages, such as eCognition (Trimble, Sunnyvale, CA, USA). However, it would also be feasible to perform the entire image analysis workflow using free software. For example, the Orfeo ToolBox we used to train and execute the RF classification also includes an image segmentation tool with the watershed algorithm in addition to several alternative algorithms. More time would likely be required to establish optimal segmentation parameters due to the lack of a quick previewing feature, and several further operations and decisions on the part of the user would be required to subsequently compute classification attributes for the objects. A variety of spatial, spectral, and texture (including second-order texture) attributes can be calculated for polygons (i.e., objects) overlaying imagery using an assortment of native QGIS tools and QGIS-integrated third-party tools, including from the Orfeo ToolBox, GRASS GIS tools (GRASS GIS Development Team), and SAGA GIS tools (SAGA User Group Association). Although these tools may be less efficient to use than turnkey commercial OBIA software, they do collectively offer a high degree of workflow and parameter customizability that could potentially benefit analysis performance.

Important questions in the burgeoning use of drones for environmental monitoring revolve around efficiency and scalability. Drones themselves are becoming more efficient to deploy in the field thanks to continuously improving performance and ease-of-use, and decreasing costs [43], as well as streamlining of regulatory frameworks, although regulations remain onerous in some jurisdictions [44,45]. However, the frequent claims of exceptional efficiency of drone-based monitoring often overlook or understate the amount of work that can be involved in fully analyzing the typically large volumes of very high-resolution imagery to extract actionable information. The long-term goal of our research is to establish an efficient drone-based aquatic vegetation monitoring protocol, including an image analysis workflow, that can be readily scaled up to numerous additional sites covering a much larger total area than we surveyed in the present study. Although it is encouraging that we were able to combine imagery collected in five separate flights over five discrete sites and successfully perform common segmentations and classifications on all sites simultaneously, it remains to be seen if it would be feasible to apply a common set of segmentation and classification parameters to a larger number of discrete image sets collected in multiple localities over multiple days under varying weather/sky conditions. Working with radiometrically calibrated imagery at a consistent spatial resolution certainly increases the chances of being able to apply common analysis parameters to multiple image sets, but potentially only to a certain extent. Moreover, there is a long-term desire to identify a larger variety of specific categories and species of vegetation, and increasing the number of classes in a supervised classification generally decreases overall accuracy [36,46].

Although OBIA is regarded as a semi-automated approach to digitizing imagery, a significant amount of manual effort will nevertheless be required if it is necessary to separately process numerous image sets with different segmentation parameters and separately trained classification models. The subjective manner of establishing segmentation parameters and creating a set of object classification attributes also must be recognized: ultimately, a machine-learning classifier is constrained by the ability of the user to input a set of objects that appropriately demarcates target features as well as a set of attributes that contains the necessary information to effectively distinguish among feature classes. A promising recent development in this regard is the emergence of so-called “deep learning” (DL) algorithms [47], which can automatically pick up on whatever visual information distinguishes objects and features of interest, thus freeing the user from the burden of subjectively selecting a finite set of attributes to “propose” to the classifier. Once thoroughly trained, DL classifiers have also shown an exceptional capacity to recognize target features in new image sets without requiring additional training, or segmentation of the input imagery. Promising groundwork has been accomplished in the use of DL algorithms to classify drone imagery, using standard OBIA segmentation methods to initially delineate training objects [28]. However, a current drawback of DL techniques is that they are still very far from achieving the same level of accessibility and ease-of-use for non-experts as the off-the-shelf OBIA tools we employed in our workflow [47].