Response Time Reduction Due to Retesting in Mental Speed Tests: A Meta-Analysis

Abstract

1. Introduction

1.1. Retest Effects in Cognitive Ability Tests

1.2. Mental Speed

1.3. RT Reduction Due to Retesting in Mental Speed Tasks
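The reference list cites both the power law of practice (Newell and Rosenbloom; Hagemeister) and the exponential alternative proposed by Heathcote and Brown as descriptions of how RTs decline over repeated administrations. The sketch below contrasts the two functional forms using hypothetical parameter values (asymptote A, gain B, and rate c or α, in milliseconds). It only illustrates the shapes of the curves and is not a model fitted to the data in this meta-analysis. All function names are hypothetical.

```python
import math


def power_law_rt(n: int, asymptote: float, gain: float, rate: float) -> float:
    """Power law of practice: RT(n) = A + B * n**(-c), for administration n >= 1."""
    return asymptote + gain * n ** (-rate)


def exponential_law_rt(n: int, asymptote: float, gain: float, rate: float) -> float:
    """Exponential law of practice: RT(n) = A + B * exp(-alpha * (n - 1)).

    The (n - 1) offset is an illustrative choice so that both curves start at A + B.
    """
    return asymptote + gain * math.exp(-rate * (n - 1))


def relative_gain(curve, n: int, **params) -> float:
    """Ratio of remaining improvement before and after one more administration.

    Computes (RT(n) - A) / (RT(n + 1) - A). It is constant (exp(alpha)) for the
    exponential law and shrinks toward 1 with practice for the power law.
    """
    a = params["asymptote"]
    return (curve(n, **params) - a) / (curve(n + 1, **params) - a)


# Hypothetical parameters in milliseconds
params = dict(asymptote=400.0, gain=200.0, rate=0.5)
for n in range(1, 5):
    print(n, round(power_law_rt(n, **params), 1), round(exponential_law_rt(n, **params), 1))
```

Both curves predict the largest RT reduction between the first and second administrations. They differ in how the relative rate of improvement evolves: under the exponential form, each additional administration removes a constant proportion of the remaining improvement, whereas under the power form that proportion shrinks as practice accumulates.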

1.4. Moderators of RT Reduction Due to Retesting in Mental Speed Tasks

1.4.1. Test Form

1.4.2. Task Complexity

1.4.3. Test-Retest Interval

1.4.4. Age

2. Methods

2.1. Inclusion and Exclusion Criteria

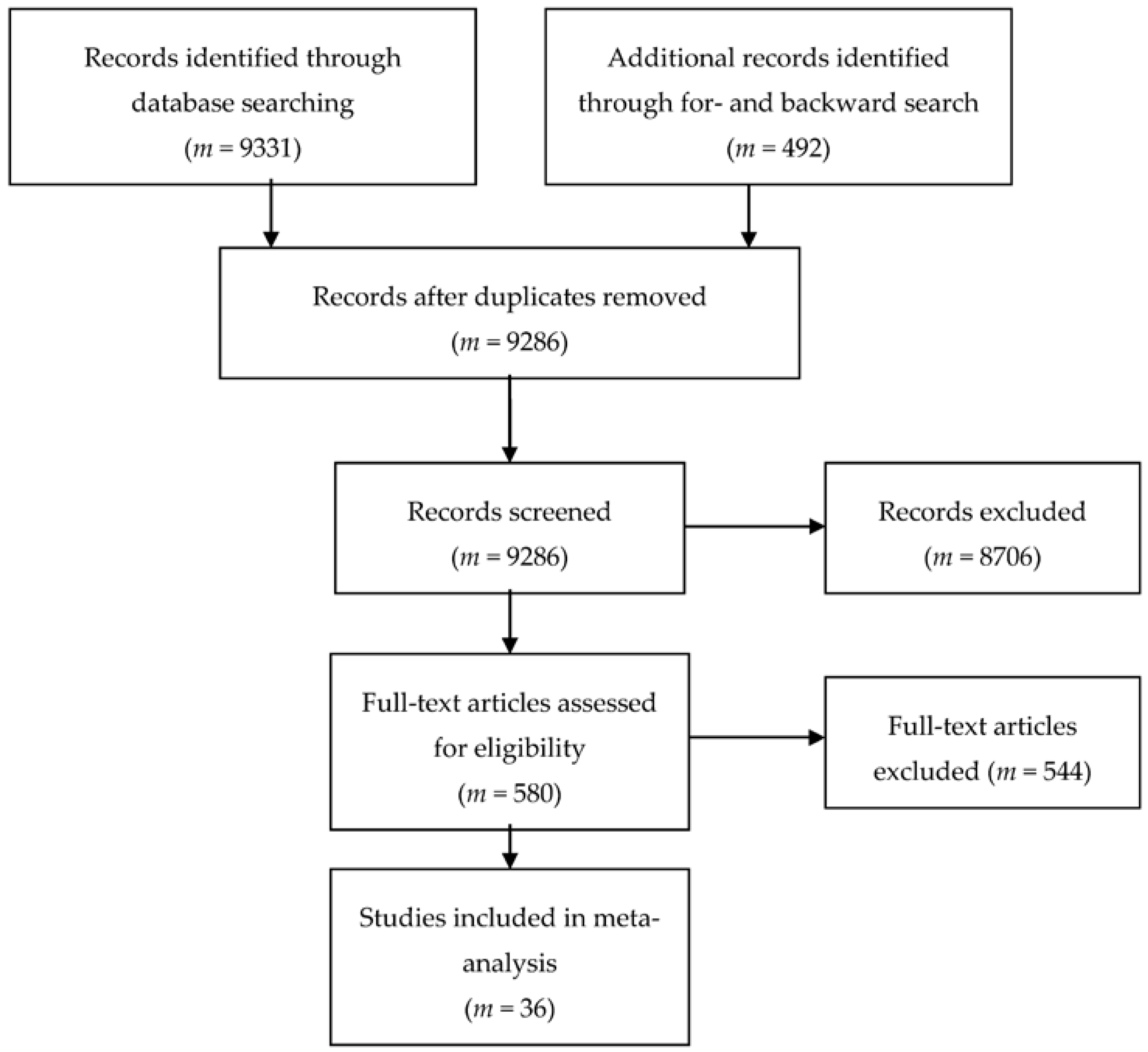

2.2. Literature Search

2.3. Coding

2.4. Effect Size Calculation
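The table in Appendix A reports effect sizes as SMCR, the label the metafor package cited in the reference list uses for the standardized mean change with raw-score standardization described by Becker (1988). Below is a minimal sketch of that computation. It assumes that the change is standardized by the SD of the first administration, that the common approximate small-sample correction is applied, and that a large-sample variance approximation requiring the test-retest correlation is used. The function names are hypothetical, and the exact formulas used in this meta-analysis may differ. Because the outcome is response time, a negative value indicates faster responses at retest.

```python
def small_sample_correction(df: int) -> float:
    """Approximate bias-correction factor c(df) = 1 - 3 / (4 * df - 1)."""
    return 1.0 - 3.0 / (4.0 * df - 1.0)


def smcr(mean_t1: float, mean_t2: float, sd_t1: float, n: int, r: float) -> tuple[float, float]:
    """Standardized mean change with raw-score standardization (after Becker, 1988).

    mean_t1, sd_t1: mean and SD of RTs at the first administration
    mean_t2: mean RT at the retest
    n: number of participants tested on both occasions
    r: test-retest correlation (used only for the sampling variance)
    Returns (effect size, approximate sampling variance); negative = faster at retest.
    """
    d = small_sample_correction(n - 1) * (mean_t2 - mean_t1) / sd_t1
    variance = 2.0 * (1.0 - r) / n + d ** 2 / (2.0 * n)
    return d, variance


# Hypothetical example: 20 participants, 500 ms -> 470 ms, SD 60 ms, r = 0.7
effect, var = smcr(500.0, 470.0, 60.0, 20, 0.7)
print(round(effect, 3), round(var, 5))  # -0.48 0.03576
```

Standardizing by the first-administration SD keeps the effect size in the metric of between-person differences at baseline. The test-retest correlation affects only the precision of the estimate, not the estimate itself.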

2.5. Meta-Analytic Strategy
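Pooling such effect sizes requires weighting each one by its precision while allowing for heterogeneity between studies. Because several effect sizes in Appendix A come from the same sample, the reference list points to multilevel (three-level) models and robust variance estimation for dependent effect sizes. The sketch below deliberately omits that dependency structure. It shows only the basic univariate random-effects step, using the DerSimonian-Laird moment estimator of the between-study variance τ². It is a simplified illustration rather than the model used in this meta-analysis, and the function name is hypothetical.

```python
import math


def random_effects_dl(effects: list[float], variances: list[float]) -> tuple[float, float, float]:
    """Univariate random-effects pooling with the DerSimonian-Laird estimator of tau^2.

    effects: effect sizes (e.g., SMCR values); variances: their sampling variances.
    Returns (pooled estimate, its standard error, tau^2). Assumes independent effect sizes.
    """
    k = len(effects)
    if k < 2 or k != len(variances):
        raise ValueError("need at least two effect sizes with matching variances")
    w = [1.0 / v for v in variances]  # inverse-variance (fixed-effect) weights
    sum_w = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))  # Cochran's Q
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    tau2 = max(0.0, (q - (k - 1)) / c)  # moment estimate, truncated at zero
    w_re = [1.0 / (v + tau2) for v in variances]  # random-effects weights
    pooled = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    se = math.sqrt(1.0 / sum(w_re))
    return pooled, se, tau2


# Hypothetical input: three effect sizes, each with sampling variance 0.01
est, se, tau2 = random_effects_dl([-0.6, -0.3, 0.0], [0.01, 0.01, 0.01])
print(round(est, 3), round(se, 4), round(tau2, 3))  # -0.3 0.1732 0.08
```

Adding τ² to every sampling variance makes the weights more equal across studies than in a fixed-effect analysis, which widens the standard error of the pooled estimate when effect sizes disagree.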

3. Results

3.1. Study, Sample and Test Characteristics

3.2. RT Reduction

3.3. Moderators

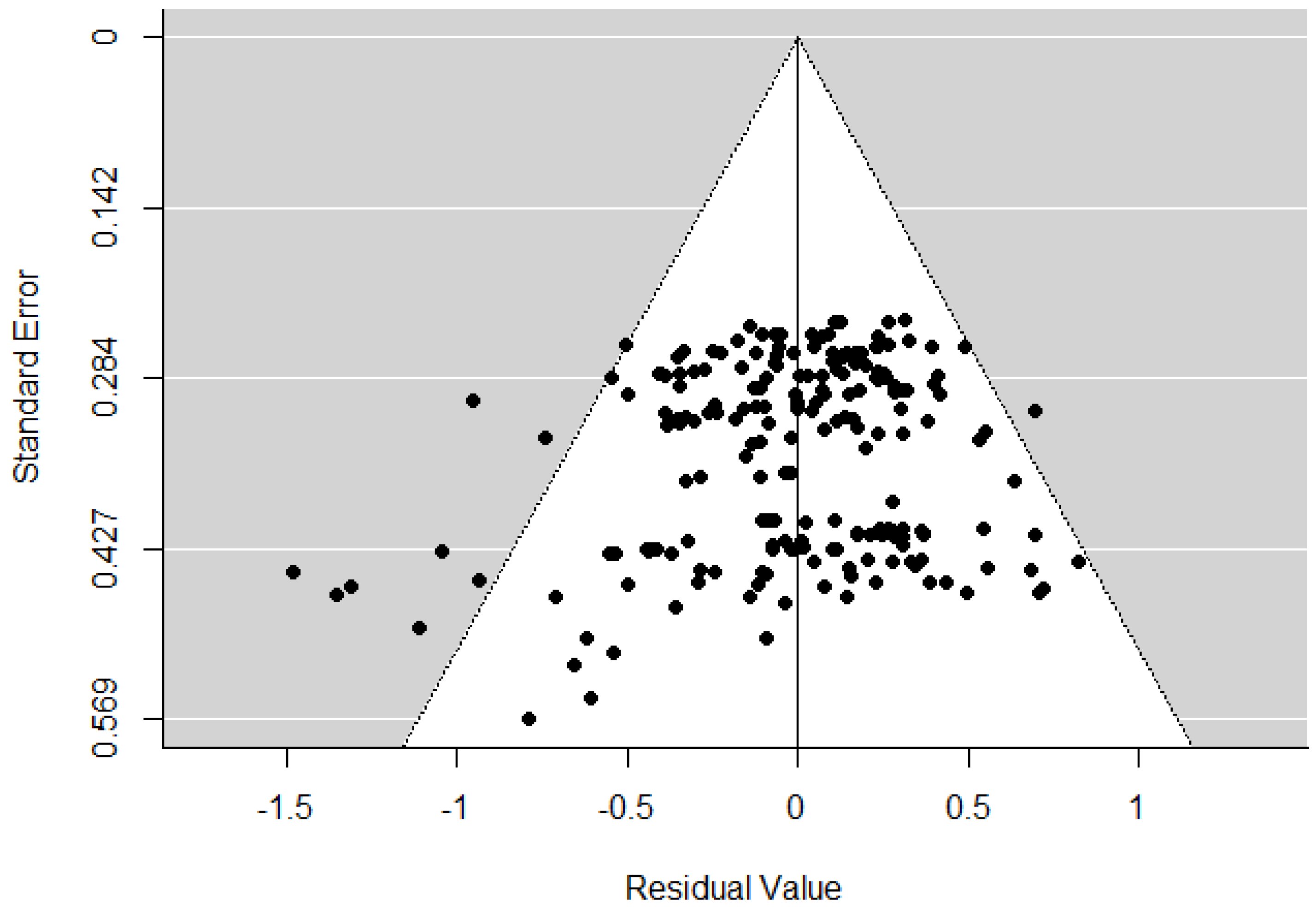

3.4. Publication Bias

4. Discussion

4.1. Summary of Results and Theoretical Implications

4.2. Limitations

4.3. Future Research

4.4. Practical Implications

Supplementary Materials

Acknowledgments

Author Contributions

Conflicts of Interest

Appendix A

| Study | Goal of Study | Sample No. | n | Age (M) | TR Interval (Weeks) | Test | Subtest | Complexity | SMCR 1.2 | SMCR 1.3 | SMCR 1.4 |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 1. Anastasopoulou et al., 1999 [118] | retest effects | 1 | 17 | 21.3 | 0.00, 0.03, 0.03 | Response Time Task | | complex | −0.54 | −0.66 | −1.12 |
| | | 2 | 17 | 21.3 | 0.00, 0.03, 0.03 | Response Time Task | | complex | −0.24 | −0.21 | −0.36 |
| 2. Baird et al., 2007 [119] | retest effects | 3 | 13 | 17.9 | 0.00 | Computerized Test of Information Processing | choice RT | complex | 0.30 | 0.07 | |
| | | | | | 0.00 | Computerized Test of Information Processing | simple RT | simple | 0.04 | −0.29 | |
| | | | | | 0.00 | Computerized Test of Information Processing | semantic RT | complex | −0.35 | −0.73 | |
| | | 4 | 13 | 19.5 | 1.00 | Computerized Test of Information Processing | choice RT | complex | 0.07 | 0.35 | |
| | | | | | 1.00 | Computerized Test of Information Processing | simple RT | simple | −0.04 | 0.02 | |
| | | | | | 1.00 | Computerized Test of Information Processing | semantic RT | complex | −0.25 | −0.14 | |
| | | 5 | 13 | 20.5 | 12.86 | Computerized Test of Information Processing | choice RT | complex | 0.00 | | |
| | | | | | 12.86 | Computerized Test of Information Processing | simple RT | simple | −0.24 | | |
| | | | | | 12.86 | Computerized Test of Information Processing | semantic RT | complex | −0.37 | | |
| 3. Baniqued et al., 2014 [120] | intervention: passive CG | 6 | 61 | 20.7 | 2.50 | Attention Network Test | no cue trials | simple | 0.16 | | |
| | | | | | 2.50 | Attention Network Test | incongruent-congruent trials | complex | −0.29 | | |
| | | | | | 2.50 | Attention Network Test | location trials | complex | 0.03 | | |
| | | | | | 2.50 | Stroop test | incongruent-neutral trials | simple | 0.00 | | |
| | | | | | 2.50 | Stroop test | incongruent-congruent trials | complex | −0.01 | | |
| | | | | | 2.50 | Trail Making Test | numbers | complex | 0.25 | | |
| | | | | | 2.50 | Trail Making Test | letters | complex | −0.57 | | |
| | | | | | 2.50 | Trail Making Test | numbers and letters | complex | −0.19 | | |
| 4. Bartels et al., 2010 [107] | retest effects | 7 | 36 | 47.3 | 2.29, 6.00, 9.00 | Attention Test Battery | alertness | simple | −0.07 | −0.09 | −0.15 |
| | | | | | 2.29, 6.00, 9.00 | Attention Test Battery | visual scanning | simple | −0.58 | −0.80 | −1.00 |
| | | | | | 2.29, 6.00, 9.00 | Trail Making Test | part B | complex | −0.30 | −0.36 | −0.60 |
| | | | | | 2.29, 6.00, 9.00 | Trail Making Test | part A | complex | −0.40 | −0.44 | −0.59 |
| 5. Buck et al., 2008 [121] | retest effects | 8 | 48 | 20 | 1.00, 2.00 | Trail Making Test | part A | complex | −0.09 | −0.13 | |
| | | | | | 1.00, 2.00 | Trail Making Test | part B | complex | −0.13 | −0.19 | |
| | | 9 | 44 | 20 | 1.00, 2.00 | Delis-Kaplan Trail Making Test | numbers task | complex | −0.13 | −0.16 | |
| | | | | | 1.00, 2.00 | Delis-Kaplan Trail Making Test | letters task | complex | −0.04 | −0.11 | |
| | | | | | 1.00, 2.00 | Delis-Kaplan Trail Making Test | number-letter task | complex | −0.11 | −0.13 | |
| | | 10 | 42 | 20 | 1.00, 2.00 | Comprehensive Trail Making Test | trial 1 | complex | −0.10 | −0.19 | |
| | | | | | 1.00, 2.00 | Comprehensive Trail Making Test | trial 5 | complex | −0.07 | −0.12 | |
| | | 11 | 48 | 20 | 1.00, 2.00 | Planned Connections | trial 6 | complex | −0.07 | −0.09 | |
| | | | | | 1.00, 2.00 | Planned Connections | trial 8 | complex | −0.07 | −0.10 | |
| 6. Bühner et al., 2006 [52] | retest effects | 12 | 25 | 22.2 | 0.00, 0.00, 0.00 | Attention Test Battery | audio | complex | 0.09 | 0.00 | −0.14 |
| | | 13 | 23 | 22.2 | 0.00, 0.00, 0.00 | Attention Test Battery | squares | complex | −0.08 | −0.61 | −0.62 |
| | | 14 | 24 | 22.2 | 0.00, 0.00, 0.00 | Attention Test Battery | squares and audio | complex | 0.05 | −0.06 | −0.34 |
| | | 15 | 24 | 22.2 | 0.00, 0.00, 0.00 | Attention Test Battery | Go/Nogo | complex | −0.05 | −0.35 | −0.64 |
| 7. Bürki et al., 2014 [3] | intervention: passive CG | 16 | 21 | 25.52 | 3.00 | letter comparison task | | complex | 0.08 | | |
| | | | | | 3.00 | simple reaction time task | | simple | 0.07 | | |
| | | | | | 3.00 | pattern comparison task | | complex | −0.06 | | |
| 8. Collie et al., 2003 [53] | retest effects | 17 | 113 | 63.68 | 0.03, 0.09, 0.18 | simple reaction time test | | simple | −0.15 | −0.26 | −0.57 |
| | | | | | 0.18, 0.71, 0.89 | choice reaction time test | | complex | −0.30 | −0.47 | −0.52 |
| | | | | | 0.18, 0.71, 0.89 | complex reaction time test | | complex | −0.34 | −0.44 | −0.51 |
| | | | | | 0.18, 0.71, 0.89 | continuous performance test | | simple | −0.29 | −0.34 | −0.39 |
| | | | | | 0.21, 0.71, 0.89 | matching test | | complex | −0.42 | −0.45 | −0.39 |
| 9. Colom et al., 2013 [122] | retest effects | 18 | 28 | 18.2 | 21.43 | odd-even Flanker task | | complex | −0.17 | | |
| | | | | | 21.43 | right-left Simon task | | complex | 0.18 | | |
| | | | | | 21.43 | vowel-consonant Flanker task | | complex | −0.20 | | |
| 10. Dingwall et al., 2009 [123] | test-retest reliability | 19 | 17 | 15.41 | 2.14, 4.29, 6.29 | CogState Battery | identification | simple | 0.32 | 0.32 | 0.21 |
| | | | | | 2.14, 4.29, 6.29 | CogState Battery | detection | simple | 0.06 | 0.19 | 0.00 |
| 11. Dolan et al., 2013 [124] | test-retest reliability | 20 | 9 | 22 | 0.29 | choice reaction time test | | complex | −0.35 | | |
| | | | | | 0.29 | rapid visual information processing test | | simple | 0.40 | | |
| | | | | | 0.29 | simple reaction time test | | simple | −0.26 | | |
| | | | | | 0.29 | Stroop test | baseline trials | simple | −0.35 | | |
| | | | | | 0.29 | Stroop test | interference trials | complex | −0.27 | | |
| 12. Elbin et al., 2011 [125] | test-retest reliability | 21 | 369 | 14.8 | 62.57 | ImPACT | reaction time subscale | complex | −0.37 | | |
| 13. Enge et al., 2014 [126] | intervention: passive CG | 22 | 38 | 21.3 | 3.00, 17.43 | Go/No-go task | | complex | −1.19 | −1.68 | |
| | | | | | 3.00, 17.43 | Stop Signal | | complex | −0.78 | −0.80 | |
| | | | | | 3.00, 17.43 | Stroop test | | complex | −0.63 | −0.74 | |
| 14. Falleti et al., 2006 [127] | retest effects | 23 | 45 | 21.64 | 0.00, 0.00, 0.00 | CogState Battery | choice reaction time | complex | 0.16 | 0.33 | 0.33 |
| | | | | | 0.00, 0.00, 0.00 | CogState Battery | simple reaction time | simple | 0.00 | 0.00 | −0.16 |
| | | | | | 0.00, 0.00, 0.00 | CogState Battery | complex reaction time | complex | 0.00 | 0.00 | −0.22 |
| | | | | | 0.00, 0.00, 0.00 | CogState Battery | matching | complex | −0.59 | −0.69 | −0.79 |
| | | 24 | 55 | 32.69 | 0.00, 4.29 | CogState Battery | choice reaction time | complex | 0.00 | 0.18 | |
| | | | | | 0.00, 4.29 | CogState Battery | continuous associate learning | complex | −0.06 | −0.06 | |
| | | | | | 0.00, 4.29 | CogState Battery | complex reaction time | complex | −0.12 | −0.12 | |
| | | | | | 0.00, 4.29 | CogState Battery | simple reaction time | simple | −0.20 | −0.10 | |
| 15. Gil-Gouveia et al., 2016 [128] | longitudinal change in migraineurs: healthy CG | 25 | 24 | 33.3 | 6.43 | Trail Making Test | part A | complex | −0.37 | | |
| | | | | | 6.43 | Trail Making Test | part B | complex | −0.35 | | |
| 16. Hagemeister, 2007 [1] | retest effects | 26 | 60 | 28 | 0.01, 0.50, 0.51 | attention task | | complex | −0.48 | −1.41 | −1.44 |
| | | 27 | 59 | 23 | 0.01, 0.50, 0.51 | attention task | | complex | −0.46 | −1.85 | −1.85 |
| 17. Iuliano et al., 2015 [129] | intervention: passive CG | 28 | 20 | 66.47 | 12.00 | Attentive Matrices Test | | complex | −0.09 | | |
| | | | | | 12.00 | Stroop test | | complex | 0.18 | | |
| | | | | | 12.00 | Trail Making Test | part A | complex | −0.16 | | |
| | | | | | 12.00 | Trail Making Test | part B | complex | 0.05 | | |
| 18. Langenecker et al., 2007 [130] | test-retest reliability | 29 | 28 | 18.9 | 3.00 | Go/No-go task | level 1 | complex | −0.33 | | |
| | | | | | 3.00 | Trail Making Test | | complex | −0.58 | | |
| | | | | | 3.00 | Trail Making Test | | complex | −0.73 | | |
| 19. Lemay et al., 2004 [131] | retest effects, test-retest reliability | 30 | 37 | 67.35 | 2.00, 4.00 | Stroop test | reading subtest | simple | −0.06 | −0.06 | |
| | | | | | 2.00, 4.00 | Stroop test | naming subtest | simple | −0.30 | −0.41 | |
| | | | | | 2.00, 4.00 | Stroop test | interference subtest | complex | −0.64 | −0.90 | |
| | | | | | 2.00, 4.00 | Stroop test | flexibility subtest | complex | −0.54 | −0.81 | |
| | | | | | 2.00, 4.00 | Stroop test | inter-naming subtest | complex | −0.64 | −0.92 | |
| | | | | | 2.00, 4.00 | Stroop test | flex-naming subtest | complex | −0.51 | −0.78 | |
| 20. Levine et al., 2004 [132] | retest effects | 31 | 605 | 40.2 | 35.86 | California Computerized Assessment Package | simple reaction time | simple | −0.13 | | |
| | | | | | 35.86 | California Computerized Assessment Package | choice reaction time trials 1 | complex | 0.03 | | |
| | | | | | 35.86 | California Computerized Assessment Package | choice reaction time trials 2 | complex | −0.11 | | |
| 21. Lyall et al., 2016 [102] | longitudinal change | 32 | 19327 | 54.5 | 225.78 | reaction time test | | simple | 0.08 | | |
| 22. Mehlsen et al., 2008 [133] | longitudinal change in breast cancer patients: healthy CG | 33 | 17 | 39.3 | 14.00 | Trail Making Test | part A | complex | −0.62 | | |
| | | | | | 14.00 | Trail Making Test | part B | complex | −0.59 | | |
| 23. Mora et al., 2013 [134] | longitudinal change in bipolar patients: healthy CG | 34 | 26 | 41.38 | 312.86 | Continuous Performance Test II | hit reaction time | complex | 0.03 | | |
| | | | | | 312.86 | Trail Making Test | part B | complex | −0.01 | | |
| 24. Oelhafen et al., 2013 [135] | intervention: passive CG | 35 | 16 | 25.2 | 21.00 | Attention Network Test | congruent trials | simple | −0.19 | | |
| | | | | | 21.00 | Attention Network Test | incongruent trials | complex | −0.42 | | |
| 25. Ownby et al., 2016 [136] | retest effects | 36 | 51 | 28.92 | 55.33 | Colored Trails | test 1 | complex | −0.36 | | |
| | | | | | 55.33 | Colored Trails | test 2 | complex | −0.25 | | |
| 26. Register-Mihalik et al., 2012 [137] | test-retest reliability | 37 | 20 | 16 | 0.26, 0.49 | Trail Making Test | part B | complex | −0.98 | −1.48 | |
| | | | | | 0.26, 0.49 | ImPACT | reaction time subscale | complex | −0.48 | −0.32 | |
| | | 38 | 20 | 20 | 0.26, 0.49 | Trail Making Test | part B | complex | −0.62 | −1.08 | |
| | | | | | 0.26, 0.49 | ImPACT | reaction time subscale | complex | −0.16 | −0.16 | |
| 27. Richmond et al., 2014 [138] | intervention: passive CG | 39 | 18 | 21.6 | 2.29 | psychomotor vigilance task | | simple | 0.46 | | |
| | | | | | 2.29 | sustained attention response task | | simple | −0.50 | | |
| | | | | | 2.29 | Stroop test | | complex | −0.19 | | |
| 28. Salminen et al., 2012 [139] | intervention: passive CG | 40 | 18 | 24.5 | 3.00 | auditory discrimination task | SOA 100 | complex | −0.40 | | |
| | | | | | 3.00 | auditory discrimination task | SOA 400 | complex | −0.24 | | |
| | | | | | 3.00 | auditory discrimination task | SOA 50 | complex | −0.34 | | |
| | | | | | 3.00 | visual discrimination task | SOA 100 | complex | −0.59 | | |
| | | | | | 3.00 | visual discrimination task | SOA 400 | complex | −0.57 | | |
| | | | | | 3.00 | visual discrimination task | SOA 50 | complex | −0.57 | | |
| | | | | | 3.00 | task switching | repetition trials | complex | −0.47 | | |
| | | | | | 3.00 | task switching | single-task trials | complex | −0.36 | | |
| 29. Sandberg et al., 2014 [140] | intervention: passive CG | 41 | 13 | 24.62 | 5.00 | Flanker task | | complex | −0.06 | | |
| | | | | | 5.00 | Stroop test | | complex | −0.16 | | |
| | | 42 | 15 | 68.8 | 5.00 | Flanker task | | complex | −0.32 | | |
| | | | | | 5.00 | Stroop test | | complex | −0.10 | | |
| 30. Schatz, 2010 [141] | test-retest reliability | 43 | 95 | 18.8 | 104.29 | ImPACT | reaction time subscale | complex | −0.17 | | |
| 31. Schmidt et al., 2013 [142] | intervention: passive CG | 44 | 11 | 33.8 | 6.00, 13.50, 20.00 | Trail Making Test | part A | complex | −0.39 | −0.40 | −0.59 |
| 32. Schranz & Osterode, 2009 [143] | retest effects | 45 | 10 | 42.13 | 1.14, 2.29, 3.43 | Determination Test | Action | complex | −0.52 | −1.02 | −1.29 |
| | | | | | 1.14, 2.29, 3.43 | Determination Test | Reaction | complex | −0.56 | −0.91 | −1.10 |
| 33. Sharma et al., 2013 [144] | intervention: passive CG | 46 | 28 | 19 | 12.00 | auditory reaction time test | | complex | 0.01 | | |
| | | | | | 12.00 | Letter Cancellation Test | | complex | −0.10 | | |
| | | | | | 12.00 | Trail Making Test | part A | complex | −0.17 | | |
| | | | | | 12.00 | Trail Making Test | part B | complex | −0.23 | | |
| | | | | | 12.00 | visual reaction time test | | complex | 0.02 | | |
| | | | | | 12.00 | visual reaction time test | | complex | 0.00 | | |
| 34. Soveri et al., 2013 [145] | intervention: passive CG | 47 | 14 | 23.14 | 2.50 | Simon task | congruent trials | simple | 0.14 | | |
| | | | | | 2.50 | Simon task | incongruent trials | complex | −0.12 | | |
| 35. Steinborn et al., 2008 [146] | retest effects | 48 | 89 | 24.5 | 0.43 | Serial Mental Addition and Comparison Task | | complex | −0.74 | | |
| 36. Weglage et al., 2013 [147] | longitudinal change in clinical sample: healthy CG | 49 | 46 | 34.2 | 260.71 | Connecting Numbers | | complex | −0.30 | | |

References

- Bürki, C.N.; Ludwig, C.; Chicherio, C.; de Ribaupierre, A. Individual differences in cognitive plasticity: An investigation of training curves in younger and older adults. Psychol. Res. 2014, 78, 821–835. [Google Scholar] [CrossRef] [PubMed]

- Hagemeister, C. How useful is the Power Law of Practice for recognizing practice in concentration tests? Eur. J. Psychol. Assess. 2007, 23, 157–165. [Google Scholar] [CrossRef]

- Hausknecht, J.P.; Halpert, J.A.; Di Paolo, N.T.; Moriarty Gerrard, M.O. Retesting in selection: A meta-analysis of coaching and practice effects for tests of cognitive ability. J. Appl. Psychol. 2007, 92, 373–385. [Google Scholar] [CrossRef] [PubMed]

- Verhaeghen, P. The Elements of Cognitive Aging: Meta-Analyses of Age-Related Differences in Processing Speed and Their Consequences; Oxford University Press: Oxford, UK, 2015. [Google Scholar]

- Kulik, J.A.; Kulik, C.-L.C.; Bangert, R.L. Effects of practice on aptitude and achievement test scores. Am. Educ. Res. J. 1984, 21, 435–447. [Google Scholar] [CrossRef]

- Calamia, M.; Markon, K.; Tranel, D. Scoring higher the second time around: Meta-analyses of practice effects in neuropsychological assessment. Clin. Neuropsychol. 2012, 26, 543–570. [Google Scholar] [CrossRef] [PubMed]

- Scharfen, J.; Peters, J.M.; Holling, H. Retest effects in cognitive ability tests: A meta-analysis. Intelligence 2018, 67, 44–66. [Google Scholar] [CrossRef]

- Randall, J.G.; Villado, A.J. Take two: Sources and deterrents of score change in employment retesting. Hum. Resour. Manag. Rev. 2017, 27, 536–553. [Google Scholar] [CrossRef]

- Kyllonen, P.C.; Zu, J. Use of response time for measuring cognitive ability. J. Intell. 2016, 4, 14. [Google Scholar] [CrossRef]

- Van der Linden, W.J. A hierarchical framework for modeling speed and accuracy on test items. Psychometrika 2007, 72, 297–308. [Google Scholar] [CrossRef]

- Lievens, F.; Reeve, C.L.; Heggestad, E.D. An examination of psychometric bias due to retesting on cognitive ability tests in selection settings. J. Appl. Psychol. 2007, 92, 1672–1682. [Google Scholar] [CrossRef] [PubMed]

- Roediger, H.L.; Butler, A.C. The critical role of retrieval practice in long-term retention. Trends Cogn. Sci. 2011, 15, 20–27. [Google Scholar] [CrossRef] [PubMed]

- Reeve, C.L.; Lam, H. The psychometric paradox of practice effects due to retesting: Measurement invariance and stable ability estimates in the face of observed score changes. Intelligence 2005, 33, 535–549. [Google Scholar] [CrossRef]

- Roediger, H.L.; Karpicke, J.D. Test-enhanced learning: Taking memory tests improves long-term retention. Psychol. Sci. 2006, 17, 249–255. [Google Scholar] [CrossRef] [PubMed]

- Racsmány, M.; Szőllősi, Á.; Bencze, D. Retrieval practice makes procedure from remembering: An automatization account for the testing effect. J. Exp. Psychol. Learn. Mem. Cogn. 2017. [Google Scholar] [CrossRef] [PubMed]

- Finkel, D.; Reynolds, C.A.; McArdle, J.J.; Pedersen, N.L. Age changes in processing speed as a leading indicator of cognitive aging. Psychol. Aging 2007, 22, 558–568. [Google Scholar] [CrossRef] [PubMed]

- Hausknecht, J.P.; Trevor, C.O.; Farr, J.L. Retaking ability tests in a selection setting: Implications for practice effects, training performance, and turnover. J. Appl. Psychol. 2002, 87, 243–254. [Google Scholar] [CrossRef] [PubMed]

- Lievens, F.; Buyse, T.; Sackett, P.R. Retest effects in operational selection settings: Development and test of a framework. J. Pers. Psychol. 2005, 58, 981–1007. [Google Scholar] [CrossRef]

- Te Nijenhuis, J.; van Vianen, A.E.M.; van der Flier, H. Score gains on g-loaded tests: No g. Intelligence 2007, 35, 283–300. [Google Scholar] [CrossRef]

- Matton, N.; Vautier, S.; Raufaste, É. Situational effects may account for gain scores in cognitive ability testing: A longitudinal SEM approach. Intelligence 2009, 37, 412–421. [Google Scholar] [CrossRef]

- Freund, P.A.; Holling, H. How to get really smart: Modeling retest and training effects in ability testing using computer-generated figural matrix items. Intelligence 2011, 39, 233–243. [Google Scholar] [CrossRef]

- Reeve, C.L.; Heggestad, E.D.; Lievens, F. Modeling the impact of test anxiety and test familiarity on the criterion-related validity of cognitive ability tests. Intelligence 2009, 37, 34–41. [Google Scholar] [CrossRef]

- Allalouf, A.; Ben-Shakhar, G. The effect of coaching on the predictive validity of scholastic aptitude tests. J. Educ. Meas. 1998, 35, 31–47. [Google Scholar] [CrossRef]

- Arendasy, M.E.; Sommer, M. Reducing the effect size of the retest effect: Examining different approaches. Intelligence 2017, 62, 89–98. [Google Scholar] [CrossRef]

- Hayes, T.R.; Petrov, A.A.; Sederberg, P.B. Do we really become smarter when our fluid intelligence test scores improve? Intelligence 2015, 48, 1–14. [Google Scholar] [CrossRef] [PubMed]

- Messick, S.; Jungeblut, A. Time and method in coaching for the SAT. Psychol. Bull. 1981, 89, 191–216. [Google Scholar] [CrossRef]

- Danthiir, V.; Roberts, R.D.; Schulze, R.; Wilhelm, O. Mental speed: On frameworks, paradigms, and a platform for the future. In Handbook of Understanding and Measuring Intelligence; Wilhelm, O., Engle, R.W., Eds.; Sage: London, UK, 2005; pp. 27–46. [Google Scholar]

- Jäger, A.O. Mehrmodale Klassifikation von Intelligenzleistungen: Experimentell kontrollierte Weiterentwicklung eines deskriptiven Intelligenzstrukturmodells [Multi-modal classification of intelligence performances: Further development of a descriptive model of intelligence based on experiments]. Diagnostica 1982, 28, 195–225. [Google Scholar]

- Kubinger, K.D.; Jäger, R.S. Schlüsselbegriffe der Psychologischen Diagnostik [Key Concepts of Psychological Diagnostics]; Beltz: Weinheim, Germany, 2003. [Google Scholar]

- Conway, A.R.A.; Cowan, N.; Bunting, M.F.; Therriault, D.J.; Minkoff, S.R.B. A latent variable analysis of working memory capacity, short-term memory capacity, processing speed, and general fluid intelligence. Intelligence 2002, 30, 163–183. [Google Scholar] [CrossRef]

- Bühner, M.; Krumm, S.; Ziegler, M.; Pluecken, T. Cognitive abilities and their interplay: Reasoning, crystallized intelligence, working memory components, and sustained attention. J. Individ. Differ. 2006, 27, 57–72. [Google Scholar] [CrossRef]

- Danthiir, V.; Wilhelm, O.; Schulze, R.; Roberts, R.D. Factor structure and validity of paper-and-pencil measures of mental speed: Evidence for a higher-order model? Intelligence 2005, 33, 491–514. [Google Scholar] [CrossRef]

- Wilhelm, O.; Schulze, R. The relation of speeded and unspeeded reasoning with mental speed. Intelligence 2002, 30, 537–554. [Google Scholar] [CrossRef]

- Ackerman, P.L. Individual differences in skill learning: An integration of psychometric and information processing perspectives. Psychol. Bull. 1987, 102, 3–27. [Google Scholar] [CrossRef]

- Goldhammer, F.; Rauch, W.A.; Schweizer, K.; Moosbrugger, H. Differential effects of intelligence, perceptual speed and age on growth in attentional speed and accuracy. Intelligence 2010, 38, 83–92. [Google Scholar] [CrossRef]

- Becker, N.; Schmitz, F.; Göritz, A.S.; Spinath, F.M. Sometimes more is better, and sometimes less is better: Task complexity moderates the response time accuracy correlation. J. Intell. 2016, 4, 11. [Google Scholar] [CrossRef]

- Davidson, W.M.; Carroll, J.B. Speed and level components of time limit scores: A factor analysis. Educ. Psychol. Meas. 1945, 5, 411–427. [Google Scholar] [CrossRef]

- Kyllonen, P.C.; Tirre, W.C.; Christal, R.E. Knowledge and processing speed as determinants of associative learning. J. Exp. Psychol. Gen. 1991, 120, 89–108. [Google Scholar] [CrossRef]

- Villado, A.J.; Randall, J.G.; Zimmer, C.U. The effect of method characteristics on retest score gains and criterion-related validity. J. Bus. Psychol. 2016, 31, 233–248. [Google Scholar] [CrossRef]

- Baltes, P.B.; Dittmann-Kohli, F.; Kliegl, R. Reserve capacity of the elderly in aging-sensitive tests of fluid intelligence: Replication and extension. Psychol. Aging 1986, 1, 172–177. [Google Scholar] [CrossRef]

- Cohen, J.D.; Dunbar, K.; McClelland, J.L. On the control of automatic processes: A parallel distributed processing account of the Stroop effect. Psychol. Rev. 1990, 97, 332–361. [Google Scholar] [CrossRef] [PubMed]

- LaBerge, D.; Samuels, S.J. Toward a theory of automatic information processing in reading. Cogn. Psychol. 1974, 6, 293–323. [Google Scholar] [CrossRef]

- Logan, G.D. Toward an instance theory of automatization. Psychol. Rev. 1988, 95, 492–527. [Google Scholar] [CrossRef]

- Ruthruff, E.; Van Selst, M.; Johnston, J.C.; Remington, R. How does practice reduce dual-task interference: Integration, automatization, or just stage-shortening? Psychol. Res. 2006, 70, 125–142. [Google Scholar] [CrossRef] [PubMed]

- Shiffrin, R.M.; Schneider, W. Controlled and automatic human information processing: II. Perceptual learning, automatic attending, and a general theory. Psychol. Rev. 1977, 84, 127–190. [Google Scholar] [CrossRef]

- Shiffrin, R.M.; Dumais, S.T. Characteristics of automatism. In Attention and Performance; Long, J.B., Baddeley, A., Eds.; Erlbaum: Hillsdale, NJ, USA, 1981; Volume 9, pp. 223–238. [Google Scholar]

- Logan, G.D. Attention and automaticity in Stroop and priming tasks: Theory and data. Cogn. Psychol. 1980, 12, 523–553. [Google Scholar] [CrossRef]

- Newell, A.; Rosenbloom, P.S. Mechanisms of skill acquisition and the law of practice. In Cognitive Skills and Their Acquisition; Anderson, J.R., Ed.; Erlbaum: Hillsdale, NJ, USA, 1981; pp. 1–55. [Google Scholar]

- Donner, Y.; Hardy, J.L. Piecewise power laws in individual learning curves. Psychon. Bull. Rev. 2015, 22, 1308–1319. [Google Scholar] [CrossRef] [PubMed]

- Jaber, M.Y.; Glock, C.J. A learning curve for tasks with cognitive and motor elements. Comput. Ind. Eng. 2013, 64, 866–871. [Google Scholar] [CrossRef]

- Heathcote, A.; Brown, S. The power law repealed: The case for an exponential law of practice. Psychon. Bull. Rev. 2000, 7, 185–207. [Google Scholar] [CrossRef] [PubMed]

- Bühner, M.; Ziegler, M.; Bohnes, B.; Lauterbach, K. Übungseffekte in den TAP Untertests Test Go/Nogo und Geteilte Aufmerksamkeit sowie dem Aufmerksamkeits-Belastungstest (d2) [Practice effects in TAP subtests Go/Nogo and shared attention and the attention capacity test (d2)]. Z. Neuropsychol. 2006, 17, 191–199. [Google Scholar] [CrossRef]

- Collie, A.; Maruff, P.; Darby, D.G.; McStephen, M. The effects of practice on the cognitive test performance of neurologically normal individuals assessed at brief test-retest intervals. J. Int. Neuropsychol. Soc. 2003, 9, 419–428. [Google Scholar] [CrossRef] [PubMed]

- Rockstroh, S.; Schweizer, K. The effects of retest practice on the speed-ability relationship. Eur. Psychol. 2004, 9, 24–31. [Google Scholar] [CrossRef]

- Rockstroh, S.; Schweizer, K. An investigation on the effect of retest practice on the relationship between speed and ability in attention, memory and working memory tasks. Psychol. Sci. Q. 2009, 4, 420–431. [Google Scholar]

- Soldan, A.; Clarke, B.; Colleran, C.; Kuras, Y. Priming and stimulus-response learning in perceptual classification tasks. Memory 2012, 20, 400–413. [Google Scholar] [CrossRef] [PubMed]

- Westhoff, K.; Dewald, D. Effekte der Übung in der Bearbeitung von Konzentrationstests [Practice effects in attention tests]. Diagnostica 1990, 36, 1–15. [Google Scholar]

- Wöstmann, N.M.; Aichert, D.S.; Costa, A.; Rubia, K.; Möller, H.-J.; Ettinger, U. Reliability and plasticity of response inhibition and interference control. Brain Cogn. 2013, 81, 82–94. [Google Scholar] [CrossRef] [PubMed]

- Druey, M.D. Response-repetition costs in choice-RT tasks: Biased expectancies or response inhibition? Acta Psychol. 2014, 145, 21–32. [Google Scholar] [CrossRef] [PubMed]

- Melzer, I.; Oddsson, L.I.E. The effect of a cognitive task on voluntary step execution in healthy elderly and young individuals. J. Am. Geriatr. Soc. 2004, 52, 1255–1262. [Google Scholar] [CrossRef] [PubMed]

- Shanks, D.R.; Johnstone, T. Evaluating the relationship between explicit and implicit knowledge in a sequential reaction time task. J. Exp. Psychol. Learn. Mem. Cogn. 1999, 25, 1435–1451. [Google Scholar] [CrossRef] [PubMed]

- Cook, T.D.; Campbell, D.T. Quasi-Experimentation: Design and Analysis Issues for Field Settings; Houghton Mifflin: Boston, MA, USA, 1979. [Google Scholar]

- Maerlender, A.C.; Masterson, C.J.; James, T.D.; Beckwith, J.; Brolinson, P.G. Test-retest, retest, and retest: Growth curve models of repeat testing with Immediate Post-Concussion Assessment and Cognitive Testing (ImPACT). J. Clin. Exp. Neuropsychol. 2016, 38, 869–874. [Google Scholar] [CrossRef] [PubMed]

- Salthouse, T.A.; Schroeder, D.H.; Ferrer, E. Estimating retest effects in longitudinal assessments of cognitive functioning in adults between 18 and 60 years of age. Dev. Psychol. 2004, 40, 813–822. [Google Scholar] [CrossRef] [PubMed]

- Howard, D.V.; Howard, J.H.; Japikse, K.; DiYanni, C.; Thompson, A.; Somberg, R. Implicit sequence learning: Effects of level of structure, adult age, and extended practice. Psychol. Aging 2004, 19, 79–92. [Google Scholar] [CrossRef] [PubMed]

- Van Iddekinge, C.H.; Morgeson, F.P.; Schleicher, D.J.; Campion, M.A. Can I retake it? Exploring subgroup differences and criterion-related validity in promotion retesting. J. Appl. Psychol. 2011, 96, 941–955. [Google Scholar] [CrossRef] [PubMed]

- Cattell, R.B. Intelligence: Its Structure, Growth and Action; North-Holland: Amsterdam, The Netherlands, 1987. [Google Scholar]

- Braver, T.S.; Barch, D.M. A theory of cognitive control, aging cognition, and neuromodulation. Neurosci. Biobehav. Rev. 2002, 26, 809–817. [Google Scholar] [CrossRef]

- Maquestiaux, F.; Laguë-Beauvais, M.; Ruthruff, E.; Hartley, A.; Bherer, L. Learning to bypass the central bottleneck: Declining automaticity with advancing age. Psychol. Aging 2010, 25, 177–192. [Google Scholar] [CrossRef] [PubMed]

- Maquestiaux, F.; Didierjean, A.; Ruthruff, E.; Chauvel, G.; Hartley, A. Lost ability to automatize task performance in old age. Psychon. Bull. Rev. 2013, 20, 1206–1212. [Google Scholar] [CrossRef] [PubMed]

- Holling, H.; Preckel, F.; Vock, M. Intelligenzdiagnostik [Intelligence Diagnostics]; Hogrefe: Göttingen, Germany, 2004. [Google Scholar]

- Shaffer, D.R.; Kipp, K. Developmental Psychology: Childhood and Adolescence, 8th ed.; Thomson Brooks/Cole Publishing Co.: Belmont, CA, USA, 2010. [Google Scholar]

- Au, J.; Sheehan, E.; Tsai, N.; Duncan, G.J.; Buschkühl, M.; Jaeggi, S.M. Improving fluid intelligence with training on working memory: A meta-analysis. Psychon. Bull. Rev. 2015, 22, 366–377. [Google Scholar] [CrossRef] [PubMed]

- Ball, K.; Edwards, J.D.; Ross, L.A. Impact of speed of processing training on cognitive and everyday functions. J. Gerontol. B Psychol. Sci. Soc. Sci. 2007, 62, 19–31. [Google Scholar] [CrossRef] [PubMed]

- Becker, B.J. Coaching for the Scholastic Aptitude Test: Further synthesis and appraisal. Rev. Educ. Res. 1990, 60, 373–417. [Google Scholar] [CrossRef]

- DerSimonian, R.; Laird, N.M. Evaluating the effect of coaching on SAT scores: A meta-analysis. Harv. Educ. Rev. 1983, 53, 1–15. [Google Scholar] [CrossRef]

- Karch, D.; Albers, L.; Renner, G.; Lichtenauer, N.; von Kries, R. The efficacy of cognitive training programs in children and adolescents: A meta-analysis. Dtsch. Arztebl. Int. 2013, 110, 643–652. [Google Scholar] [CrossRef] [PubMed]

- Kelly, M.; Loughrey, D.; Lawlor, B.A.; Robertson, I.H.; Walsh, C.; Brennan, S. The impact of cognitive training and mental stimulation on cognitive and everyday functioning of healthy older adults: A systematic review and meta-analysis. Ageing Res. Rev. 2014, 15, 28–43. [Google Scholar] [CrossRef] [PubMed]

- Klauer, K.J. Training des induktiven Denkens—Fortschreibung der Metaanalyse von 2008 [Training inductive thinking—Continuation of the 2008 meta-analysis]. Z. Padagog. Psychol. 2014, 28, 5–19. [Google Scholar] [CrossRef]

- Klauer, K.J.; Phye, G.D. Inductive reasoning: A training approach. Rev. Educ. Res. 2008, 78, 85–123. [Google Scholar] [CrossRef]

- Lampit, A.; Hallock, H.; Valenzuela, M. Computerized cognitive training in cognitively healthy older adults: A systematic review and meta-analysis of effect modifiers. PLoS Med. 2014, 11, 1–18. [Google Scholar] [CrossRef] [PubMed]

- Powers, K.L.; Brooks, P.J.; Aldrich, N.J.; Palladino, M.A.; Alfieri, L. Effects of video-game play on information processing: A meta-analytic investigation. Psychon. Bull. Rev. 2013, 20, 1055–1079. [Google Scholar] [CrossRef] [PubMed]

- Schuerger, J.M.; Witt, A.C. The temporal stability of individually tested intelligence. J. Clin. Psychol. 1989, 45, 294–301. [Google Scholar] [CrossRef]

- Scott, G.; Leritz, L.E.; Mumford, M.D. The effectiveness of creativity training: A quantitative review. Creat. Res. J. 2004, 16, 361–388. [Google Scholar] [CrossRef]

- Toril, P.; Reales, J.M.; Ballesteros, S. Video game training enhances cognition of older adults: A meta-analytic study. Psychol. Aging 2014, 29, 706–716. [Google Scholar] [CrossRef] [PubMed]

- Uttal, D.H.; Meadow, N.G.; Tipton, E.; Hand, L.L.; Alden, A.R.; Warren, C.; Newcombe, N.S. The malleability of spatial skills: A meta-analysis of training studies. Psychol. Bull. 2013, 139, 352–402. [Google Scholar] [CrossRef] [PubMed]

- Wang, P.; Liu, H.-H.; Zhu, X.-T.; Meng, T.; Li, H.-J.; Zuo, X.-N. Action video game training for healthy adults: A meta-analytic study. Front. Psychol. 2016, 7, 907. [Google Scholar] [CrossRef] [PubMed]

- Zehnder, F.; Martin, M.; Altgassen, M.; Clare, L. Memory training effects in old age as markers of plasticity: A meta-analysis. Restor. Neurol. Neurosci. 2009, 27, 507–520. [Google Scholar] [CrossRef] [PubMed]

- Moher, D.; Liberati, A.; Tetzlaff, J.; Altman, D.G.; The PRISMA Group. Preferred reporting items for systematic reviews and meta-analyses: The PRISMA statement. J. Clin. Epidemiol. 2009, 62, 1006–1012. [Google Scholar] [CrossRef] [PubMed]

- Viechtbauer, W. Conducting meta-analysis in R with the metafor package. J. Stat. Softw. 2010, 36, 1–48. [Google Scholar] [CrossRef]

- R Core Team. R: A Language and Environment for Statistical Computing; R Foundation for Statistical Computing: Vienna, Austria, 2015. [Google Scholar]

- Becker, B.J. Synthesizing standardized mean-change measures. Br. J. Math. Stat. Psychol. 1988, 41, 257–278. [Google Scholar] [CrossRef]

- Gibbons, R.D.; Hedeker, D.R.; Davis, J.M. Estimation of effect size from a series of experiment involving paired comparisons. J. Educ. Stat. 1993, 18, 271–279. [Google Scholar] [CrossRef]

- Morris, S.B.; DeShon, R.P. Combining effect size estimates in meta-analysis with repeated measures and independent-group designs. Psychol. Methods 2002, 7, 105–125. [Google Scholar] [CrossRef] [PubMed]

- Calamia, M.; Markon, K.; Tranel, D. The robust reliability of neuropsychological measures: Meta-analysis of test-retest correlations. Clin. Neuropsychol. 2013, 27, 1077–1105. [Google Scholar] [CrossRef] [PubMed]

- Salanti, G.; Higgins, J.P.T.; Ades, A.E.; Ioannidis, J.P.A. Evaluation of networks of randomized trials. Stat. Methods Med. Res. 2008, 17, 279–301. [Google Scholar] [CrossRef] [PubMed]

- Konstantopoulos, S. Fixed effects and variance components estimation in three-level meta-analysis. Res. Synth. Methods 2011, 2, 61–76. [Google Scholar] [CrossRef] [PubMed]

- Ishak, K.J.; Platt, R.W.; Joseph, L.; Hanley, J.A.; Caro, J.J. Meta-analysis of longitudinal studies. Clin. Trials 2007, 4, 525–539. [Google Scholar] [CrossRef] [PubMed]

- Musekiwa, A.; Manda, S.O.M.; Mwambi, H.G.; Chen, D.-G. Meta-analysis of effect sizes reported at multiple time points using general linear mixed model. PLoS ONE 2016, 11. [Google Scholar] [CrossRef] [PubMed]

- Trikalinos, T.A.; Olkin, I. Meta-analysis of effect sizes reported at multiple time points: A multivariate approach. Clin. Trials 2012, 9, 610–620. [Google Scholar] [CrossRef] [PubMed]

- Hedges, L.V.; Tipton, E.; Johnson, M.C. Robust variance estimation in meta-regression with dependent effect size estimates. Res. Synth. Methods 2010, 1, 39–65. [Google Scholar] [CrossRef] [PubMed]

- Lyall, D.M.; Cullen, B.; Allerhand, M.; Smith, D.J.; Mackay, D.; Evans, J.; Anderson, J.; Fawns-Ritchie, C.; McIntosh, A.M.; Deary, I.J.; et al. Cognitive tests scores in UK Biobank: Data reduction in 480,416 participants and longitudinal stability in 20,346 participants. PLoS ONE 2016. [Google Scholar] [CrossRef]

- Reitan, R.M. Trail Making Test: Manual for Administration and Scoring; Reitan Neuropsychological Laboratory: Tucson, AZ, USA, 1986. [Google Scholar]

- Stroop, J.R. Studies of interference in serial verbal reactions. J. Exp. Psychol. 1935, 18, 643–662. [Google Scholar] [CrossRef]

- Macleod, C.M. Half a century of research on the Stroop effect: An integrative review. Psychol. Bull. 1991, 109, 163–203. [Google Scholar] [CrossRef] [PubMed]

- Sterne, J.A.C.; Egger, M. Funnel plots for detecting bias in meta-analysis: Guidelines on choice of axis. J. Clin. Epidemiol. 2001, 54, 1046–1055. [Google Scholar] [CrossRef]

- Bartels, C.; Wegrzyn, M.; Wiedl, A.; Ackermann, V.; Ehrenreich, H. Practice effects in healthy adults: A longitudinal study on frequent repetitive cognitive testing. BMC Neurosci. 2010, 11, 118–129. [Google Scholar] [CrossRef] [PubMed]

- Puddey, I.B.; Mercer, A.; Andrich, D.; Styles, I. Practice effects in medical school entrance testing with the undergraduate medicine and health sciences admission test (UMAT). Med. Educ. 2014, 14, 48–62. [Google Scholar] [CrossRef] [PubMed]

- Albers, F.; Hoeft, S. Do it again and again. And again-Übungseffekte bei einem computergestützten Test zum räumlichen Vorstellungsvermögen [Do it again and again. And again—Practice effects in a computer-based spatial ability test]. Diagnostica 2019, 55, 71–83. [Google Scholar] [CrossRef]

- Dunlop, P.D.; Morrison, D.L.; Cordery, J.L. Investigating retesting effects in a personnel selection context. IJSA 2011, 19, 217–221. [Google Scholar] [CrossRef]

- Lo, A.Y.; Humphreys, M.; Byrne, G.J.; Pachana, N.A. Test-Retest reliability and practice effects of the Wechsler Memory Scale-III. J. Neuropsychol. 2012, 6, 212–231. [Google Scholar] [CrossRef] [PubMed]

- Schleicher, D.J.; Van Iddekinge, C.H.; Morgeson, F.P.; Campion, M.A. If at first you don’t succeed, try, try again: Understanding race, age, and gender differences in retesting score improvement. J. Appl. Psychol. 2010, 95, 603–627. [Google Scholar] [CrossRef] [PubMed]

- Strobach, T.; Schubert, T. No evidence for task automatization after dual-task training in younger and older adults. Psychol. Aging 2017, 32, 28–41. [Google Scholar] [CrossRef] [PubMed]

- Redick, T.S. Working memory training and interpreting interactions in intelligence interventions. Intelligence 2015, 50, 14–20. [Google Scholar] [CrossRef]

- Hunter, J.E.; Schmidt, F.L. Methods of Meta-Analysis; Sage: London, UK, 1990. [Google Scholar]

- Hausknecht, J.P. Candidate persistence and personality test practice effects: Implications for staffing system management. Pers. Psychol. 2010, 63, 299–324. [Google Scholar] [CrossRef]

- Barron, L.G.; Randall, J.G.; Trent, J.D.; Johnson, J.F.; Villado, A.J. Big five traits: Predictors of retesting propensity and score improvement. Int. J. Sel. Assess. 2017, 25, 138–148. [Google Scholar] [CrossRef]

- Anastasopoulou, T.; Harvey, N. Assessing sequential knowledge through performance measures: The influence of short-term sequential effects. Q. J. Exp. Psychol. 1999, 52, 423–448. [Google Scholar] [CrossRef]

- Baird, B.J.; Tombaugh, T.N.; Francis, M. The effects of practice on speed of information processing using the Adjusting-Paced Serical Addition Test (Adjusting-PSAT) and the Computerized Tests of Information Processing (CTIP). Appl. Neuropsychol. 2007, 14, 88–100. [Google Scholar] [CrossRef] [PubMed]

- Baniqued, P.L.; Kranz, M.B.; Voss, M.W.; Lee, H.; Cosman, J.D.; Severson, J.; Kramer, A.F. Cognitive training with casual video games: Point to consider. Front. Psychol. 2014, 4, 1010. [Google Scholar] [CrossRef] [PubMed]

- Buck, K.K.; Atkinson, T.M.; Ryan, J.P. Evidence of practice effects in variants of the Trail Making Test during serial assessment. J. Clin. Exp. Neuropsychol. 2008, 30, 312–318. [Google Scholar] [CrossRef] [PubMed]

- Colom, R.; Román, F.J.; Abad, F.J.; Shih, P.C.; Privado, J.; Froufe, M.; Escorial, S.; Martínez, K.; Burgaleta, M.; Quiroga, M.A.; et al. Adaptive n-back training does not improve fluid intelligence at the construct level: Gains on individual tests suggest that training may enhance visuospatial processing. Intelligence 2013, 41, 712–727. [Google Scholar] [CrossRef]

- Dingwall, K.M.; Lewis, M.S.; Maruff, P.; Cairney, S. Reliability of repeated cognitive testing in healthy Indigenous Australian adolescents. Aust. Psychol. 2009, 44, 224–234. [Google Scholar] [CrossRef]

- Dolan, E.; Cullen, S.J.; McGoldrick, A.; Warrington, G.D. The impact of making weight on physiological and cognitive processes in elite jockeys. Int. J. Sport Nutr. Exerc. Metab. 2013, 23, 399–408. [Google Scholar] [CrossRef] [PubMed]

- Elbin, R.J.; Schatz, P.; Covassin, T. One-year test-retest reliability of the online version of ImPACT in high school athletes. Am. J. Sport Med. 2011, 39, 2319–2324. [Google Scholar] [CrossRef] [PubMed]

- Enge, S.; Behnke, A.; Fleischhauer, M.; Küttler, L.; Kliegel, M.; Strobel, A. No evidence for true training and transfer effects after inhibitory control training in young healthy adults. J. Exp. Psychol. Learn. Mem. Cogn. 2014, 40, 987–1001. [Google Scholar] [CrossRef] [PubMed]

- Falleti, M.G.; Maruff, P.; Collie, A.; Darby, D.G. Practice effects associated with the repeated assessment of cognitive function using the CogState Battery at 10-minute, one week and one month test-retest intervals. J. Clin. Exp. Neuropsychol. 2006, 28, 1095–1112. [Google Scholar] [CrossRef] [PubMed]

- Gil-Gouveia, R.; Oliveira, A.G.; Martin, I.P. Sequential brief neuropsychological evaluation of migraineurs is identical to controls. Acta Neurol. Scand. 2016, 134, 197–204. [Google Scholar] [CrossRef] [PubMed]

- Iuliano, E.; di Cagno, A.; Aquino, G.; Fiorilli, G.; Mignogna, P.; Calcagno, G.; di Costanzo, A. Effects of different types of physical activity on the cognitive functions and attention in older people: A randomized controlled study. Exp. Gerontol. 2015, 70, 105–110. [Google Scholar] [CrossRef] [PubMed]

- Langenecker, S.A.; Zubieta, J.-K.; Young, E.A.; Akil, H.; Nielson, K.A. A task to manipulate attentional load, set-shifting, and inhibitory control: Convergent validity and test-retest reliability of the Parametric Go/No-Go Test. J. Clin. Exp. Neuropsychol. 2007, 29, 842–853. [Google Scholar] [CrossRef] [PubMed]

- Lemay, S.; Bédard, M.-A.; Rouleau, I.; Tremblay, P.-L.G. Practice effect and test-retest reliability of attentional and executive tests in middle-aged to elderly subjects. Clin. Neuropsychol. 2004, 18, 1–19. [Google Scholar] [CrossRef] [PubMed]

- Levine, A.J.; Miller, E.N.; Becker, J.T.; Selnes, O.A.; Cohen, B.A. Normative data for determining significane of test-retest differences on eight common neuropsychological instruments. Clin. Neuropsychol. 2004, 18, 373–384. [Google Scholar] [CrossRef] [PubMed]

- Mehlsen, M.; Pedersen, A.D.; Jensen, A.B.; Zachariae, R. No indications of cognitive side-effects in a prospective study of breast cancer patients receiving adjuvant chemotherapy. Psychooncology 2009, 18, 248–257. [Google Scholar] [CrossRef] [PubMed]

- Mora, E.; Portella, M.J.; Forcada, I.; Vieta, E.; Mur, M. Persistence of cognitive impairment and its negative impact on psychosocial functioning in lithium-treated, euthymic bipolar patients: A 6-year follow-up study. Psychol. Med. 2013, 43, 1187–1196. [Google Scholar] [CrossRef] [PubMed]

- Oelhafen, S.; Nikolaidis, A.; Padovani, T.; Blaser, D.; Koenig, T.; Perrig, W.J. Increased parietal activity after training of interference control. Neuropsychologia 2013, 2781–2890. [Google Scholar] [CrossRef] [PubMed]

- Ownby, R.L.; Waldrop-Valverde, D.; Jones, D.L.; Sharma, S.; Nehra, R.; Kumar, A.M.; Prabhakar, S.; Acevedo, A.; Kumar, M. Evaluation of practice effect on neuropsychological measures among persons with and without HIV infection in northern India. J. Neurvirol. 2016, 23, 134–140. [Google Scholar] [CrossRef] [PubMed]

- Register-Mihalik, J.K.; Kontos, D.L.; Guskiewicz, K.M.; Mihalik, J.P.; Conder, R.; Shields, E.W. Age-related differences and reliability on computerized and paper-and-pencil neurocognitive assessment batteries. J. Athl. Train. 2012, 47, 297–305. [Google Scholar] [CrossRef] [PubMed]

- Richmond, L.L.; Wolk, D.; Chein, J.; Olson, I.R. Transcranial direct stimulation enhances verbal working memory training performance over time and near transfer outcomes. J. Cogn. Neurosci. 2014, 26, 2443–2454. [Google Scholar] [CrossRef] [PubMed]

- Salminen, T.; Strobach, T.; Schubert, T. On the impacts of working memory training on executive functioning. Front. Hum. Neurosci. 2012, 6, 166. [Google Scholar] [CrossRef] [PubMed]

- Sandberg, P.; Rönnlund, M.; Nyberg, L.; Stigsdotter Neely, A. Executive process training in young and old adults. Aging Neuropsychol. Cogn. 2014, 21, 577–605. [Google Scholar] [CrossRef] [PubMed]

- Schatz, P. Long-term test-retest reliability of baseline cognitive assessments using ImPACT. Am. J. Sports Med. 2010, 38, 47–53. [Google Scholar] [CrossRef] [PubMed]

- Schmidt, P.J.; Keenan, P.A.; Schenkel, L.A.; Berlin, K.; Gibson, C.; Rubinow, D.R. Cognitive performance in healthy women during induced hypogonadism and ovarian steroid addback. Arch. Womens Ment. Health 2013, 16, 47–58. [Google Scholar] [CrossRef] [PubMed]

- Schranz, S.; Osterode, W. Übungseffekte bei computergestützten psychologischen Leistungstests [Practice effects in a computer-based psychological aptitude test]. Wien. Klien. Wochenschr. 2009, 121, 405–412. [Google Scholar] [CrossRef] [PubMed]

- Sharma, V.K.; Rajajeyakumar, M.R.; Velkumary, S.; Subramanian, S.K.; Bhavanani, A.B.; Madanmohan, A.S.; Sahai, A.; Thangavel, D. Effect of fast and slow pranayama practice on cognitive functions in healthy volunteers. J. Clin. Diagn. Res. 2013, 8, 10–13. [Google Scholar] [CrossRef] [PubMed]

- Soveri, A.; Waris, O.; Laine, M. Set shifting training with categorization tasks. PLoS ONE 2013, 8. [Google Scholar] [CrossRef] [PubMed]

- Steinborn, M.B.; Flehmig, H.C.; Westhoff, K.; Langner, R. Predicting school achievement from self-paced continuous performance: Examining the contributions of response speed, accuracy, and response speed variability. Psychol. Sci. Q. 2008, 50, 613–634. [Google Scholar]

- Weglage, J.; Fromm, J.; van Teeffelen-Heithoff, A.; Moeller, H.; Koletzko, B.; Marquardt, T.; Rutsch, F.; Feldmann, R. Neurocognitive functioning in adults with phenylketonuria: Results of a long term study. Mol. Genet. Metab. 2013, 110, 44–48. [Google Scholar] [CrossRef] [PubMed]

| No. of Administrations | Level | Characteristic | M | SD | Mdn | Min | Max | % NA |
|---|---|---|---|---|---|---|---|---|
| 2 | Study (m = 36) | year of publication | 2010.00 | 4.13 | 2012.00 | 1999.00 | 2016.00 | 0.00 |
| | | no. of administrations | 3.028 | 1.96 | 2.00 | 2.00 | 12.00 | 0.00 |
| | | TR interval (weeks) | 32.89 | 75.32 | 3.00 | 0.00 | 312.90 | 0.00 |
| | | % control groups | 36.73 | | | | | 0.00 |
| | Sample (k = 49) | N | 445.10 | 2755.30 | 25.00 | 9.00 | 19,330 | 0.00 |
| | | age | 51.70 | 19.74 | 54.50 | 14.8 | 68.80 | 0.00 |
| | | % male | 41.31 | 17.65 | 45.50 | 0.00 | 100.00 | 12.25 |
| | Test (o = 128) | % alternate test forms | 3.18 | | | | | 1.56 |
| | | SMCR1.2 | −0.22 | 0.28 | −0.19 | −1.19 | 0.46 | 0.00 |
| | | SE(SMCR1.2) | 0.17 | 0.06 | 0.16 | 0.01 | 0.31 | 0.00 |
| 3 | Study (m = 14) | year of publication | 2008.00 | 4.05 | 2008.00 | 1999.00 | 2014.00 | 0.00 |
| | | no. of administrations | 4.64 | 2.40 | 4.00 | 3.00 | 12.00 | 0.00 |
| | | TR interval (weeks) | 4.53 | 5.84 | 2.14 | 0.00 | 17.43 | 0.00 |
| | | % control groups | 12.00 | | | | | 0.00 |
| | Sample (k = 25) | N | 34.36 | 22.67 | 25.00 | 10.00 | 113.00 | 0.00 |
| | | age | 31.16 | 17.21 | 22.20 | 15.41 | 67.35 | 0.00 |
| | | % male | 39.63 | 19.37 | 33.63 | 0.00 | 100.00 | 12.00 |
| | Test (o = 58) | % alternate test forms | 3.57 | | | | | 3.44 |
| | | SMCR1.3 | −0.40 | 0.48 | −0.27 | −1.85 | 0.35 | 0.00 |
| | | SE(SMCR1.3) | 0.17 | 0.06 | 0.16 | 0.08 | 0.34 | 0.00 |
| 4 | Study (m = 9) | year of publication | 2007.00 | 4.11 | 2007.00 | 1999.00 | 2013.00 | 0.00 |
| | | no. of administrations | 5.56 | 2.60 | 5.00 | 4.00 | 12.00 | 0.00 |
| | | TR interval (weeks) | 4.38 | 6.71 | 0.51 | 0.00 | 20.00 | 0.00 |
| | | % control groups | 14.29 | | | | | 0.00 |
| | Sample (k = 14) | N | 34.36 | 27.79 | 24.00 | 10.00 | 113.00 | 0.00 |
| | | age | 34.97 | 18.58 | 23.00 | 15.41 | 63.68 | 0.00 |
| | | % male | 40.58 | 13.20 | 41.18 | 0.00 | 60.00 | 7.14 |
| | Test (o = 26) | % alternate test forms | 8.33 | | | | | 7.69 |
| | | SMCR1.4 | −0.57 | 0.50 | −0.55 | −1.85 | 0.33 | 0.00 |
| | | SE(SMCR1.4) | 0.17 | 0.08 | 0.16 | 0.08 | 0.39 | 0.00 |
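
The SMCR rows above summarize standardized mean changes in response time. The following is a minimal sketch of how one such value and its standard error can be computed. It assumes Becker's (1988) raw-score standardization (mean change divided by the SD at the first administration), the common approximate small-sample correction, and a large-sample variance that needs the test-retest correlation r. The function names and the example inputs are hypothetical, and this is not necessarily the exact procedure behind the tables.

```python
import math


def bias_correction(df: int) -> float:
    """Approximate small-sample correction factor c(df) = 1 - 3 / (4 * df - 1)."""
    return 1.0 - 3.0 / (4.0 * df - 1.0)


def smcr(mean_pre: float, mean_post: float, sd_pre: float, n: int, r: float) -> tuple[float, float]:
    """Standardized mean change with raw-score standardization (Becker-style sketch).

    The change (later minus first administration) is divided by the SD at the
    first administration and multiplied by c(n - 1). For response times a
    negative value therefore means faster responding at retest.
    The variance uses the large-sample approximation 2(1 - r)/n + g^2/(2n),
    where r is the test-retest correlation (assumed known or imputed).
    Returns (g, standard error).
    """
    if n < 3:
        raise ValueError("need at least 3 participants for this approximation")
    g = bias_correction(n - 1) * (mean_post - mean_pre) / sd_pre
    variance = 2.0 * (1.0 - r) / n + g ** 2 / (2.0 * n)
    return g, math.sqrt(variance)


# Hypothetical example: 25 participants, mean RT falls from 500 ms to 450 ms.
g, se = smcr(mean_pre=500.0, mean_post=450.0, sd_pre=100.0, n=25, r=0.7)
print(f"SMCR = {g:.3f}, SE = {se:.3f}")
```

With these made-up inputs (n = 25, pretest SD of 100 ms, r = 0.7) the sketch gives an SMCR of about −0.48 with an SE of about 0.17. The negative sign marks faster responses at retest, which matches the sign convention the negative SMCR values in these tables appear to follow.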

| Comparison | m | k | o | N | SMCR | SE | 95% CI | p | τ |
|---|---|---|---|---|---|---|---|---|---|
| 1.2 | 36 | 49 | 128 | 21,810 | −0.237 | 0.040 | [−0.318, −0.155] | <0.001 | 0.238 |
| 1.3 | 14 | 25 | 58 | 859 | −0.367 | 0.075 | [−0.519, −0.215] | <0.001 | 0.399 |
| 1.4 | 9 | 14 | 26 | 481 | −0.499 | 0.082 | [−0.666, −0.333] | <0.001 | 0.424 |
| 2.3 | | | | | −0.131 | 0.054 | [−0.241, −0.021] | 0.021 | |
| 3.4 | | | | | −0.132 | 0.026 | [−0.186, −0.079] | <0.001 | |
| 1.2 vs. 2.3 | | | | | −0.106 | 0.059 | [−0.226, 0.014] | 0.041 | |
| 2.3 vs. 3.4 | | | | | 0.002 | 0.055 | [−0.111, 0.114] | 0.979 | |
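
The m, k, and o counts indicate that tests are nested in samples, which are nested in studies, so the pooled estimates above presumably come from a multilevel model (the reference list cites three-level approaches). The sketch below deliberately simplifies this to an ordinary single-level random-effects model with the DerSimonian-Laird estimator of τ², treating every effect size as independent. It illustrates the idea of pooling with between-effect heterogeneity but will not reproduce the table; the function name and the input values are hypothetical.

```python
import math
from typing import Sequence


def random_effects_dl(y: Sequence[float], v: Sequence[float]) -> dict:
    """Pool independent effect sizes y with sampling variances v.

    Uses the DerSimonian-Laird moment estimator of tau^2 and a Wald
    interval with the normal quantile 1.96. This is a single-level
    simplification: it ignores nesting of tests within samples and studies.
    """
    if len(y) != len(v) or len(y) < 2:
        raise ValueError("need at least two effect sizes with matching variances")
    w = [1.0 / vi for vi in v]                                  # fixed-effect weights
    sum_w = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, y)) / sum_w
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, y))     # Cochran's Q
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    tau2 = max(0.0, (q - (len(y) - 1)) / c)                     # truncated at zero
    w_re = [1.0 / (vi + tau2) for vi in v]                      # random-effects weights
    sum_w_re = sum(w_re)
    estimate = sum(wi * yi for wi, yi in zip(w_re, y)) / sum_w_re
    se = math.sqrt(1.0 / sum_w_re)
    return {
        "estimate": estimate,
        "se": se,
        "ci": (estimate - 1.96 * se, estimate + 1.96 * se),
        "tau": math.sqrt(tau2),
    }


# Hypothetical SMCR values and sampling variances, not data from the tables.
result = random_effects_dl([-0.5, 0.1, -0.3], [0.01, 0.01, 0.01])
print(result)
```

The sketch uses estimate ± 1.96 SE. The intervals reported above are slightly wider (for comparison 1.2, −0.237 ± 1.96 × 0.040 gives about [−0.315, −0.159] versus the reported [−0.318, −0.155]), which suggests that a t-based interval was used there.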

| Test | Comparison | m | k | o | N | SMCR | SE | 95% CI | p |
|---|---|---|---|---|---|---|---|---|---|
| TMT | 1.2 | 11 | 14 | 28 | 445 | −0.331 | 0.063 | [−0.460, −0.201] | <0.001 |
| | 1.3 | 4 | 7 | 11 | 225 | −0.448 | 0.120 | [−0.693, −0.203] | <0.001 |
| | 2.3 | | | | | −0.117 | 0.065 | [−0.250, 0.016] | 0.082 |
| | 1.2 vs. 2.3 | | | | | −0.214 | 0.046 | [−0.307, −0.120] | <0.001 |
| Stroop | 1.2 | 7 | 8 | 15 | 211 | −0.211 | 0.065 | [−0.344, −0.078] | 0.002 |
| | 1.3 | 2 | 2 | 7 | 75 | −0.399 | 0.075 | [−0.552, −0.247] | <0.001 |
| | 2.3 | | | | | −0.189 | 0.017 | [−0.223, −0.154] | <0.001 |
| | 1.2 vs. 2.3 | | | | | −0.022 | 0.059 | [−0.142, 0.098] | 0.352 |
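
The "1.2 vs. 2.3" rows are differences between two estimates: for the TMT, −0.331 − (−0.117) = −0.214, and for the Stroop test, −0.211 − (−0.189) = −0.022. A minimal sketch of such a contrast with a normal-approximation test follows. The sampling covariance of the two estimates is a parameter that has to be supplied, because the tables do not state how it was handled; the function name is hypothetical.

```python
import math


def compare_estimates(a: float, se_a: float, b: float, se_b: float,
                      cov_ab: float = 0.0) -> tuple[float, float, float]:
    """Contrast two pooled estimates: returns (a - b, SE, two-sided p).

    cov_ab is the sampling covariance between the estimates. The default of
    0 assumes independence, which does not hold when both estimates come
    from the same samples (e.g. gains 1.2 and 2.3).
    The p value uses the standard normal distribution.
    """
    diff = a - b
    variance = se_a ** 2 + se_b ** 2 - 2.0 * cov_ab
    if variance <= 0:
        raise ValueError("variance of the difference must be positive")
    se = math.sqrt(variance)
    z = diff / se
    p = math.erfc(abs(z) / math.sqrt(2.0))  # two-sided p for a standard normal z
    return diff, se, p


# TMT values from the table above: SMCR 1.2 versus SMCR 2.3.
diff, se, p = compare_estimates(-0.331, 0.063, -0.117, 0.065)
print(f"difference = {diff:.3f}, SE under independence = {se:.3f}, p = {p:.3f}")
```

Treating the two TMT estimates as independent (cov_ab = 0) gives an SE of about 0.091, roughly twice the reported 0.046. This suggests that the reported comparison accounts for the dependence between gains measured in the same samples, and that the covariance term matters in practice.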

| Comparison | Complexity | o | SMCR | SE | 95% CI | p | ∆SMCR (simple − complex) | p (∆SMCR) | τ | ∆τ² |
|---|---|---|---|---|---|---|---|---|---|---|
| 1.2 | simple | 25 | −0.108 | 0.044 | [−0.199, 0.018] | 0.021 | 0.159 | 0.001 | 0.224 | 0.117 |
| | complex | 103 | −0.268 | 0.044 | [−0.357, −0.179] | <0.001 | | | | |
| 1.3 | simple | 12 | −0.130 | 0.070 | [−0.272, 0.012] | 0.071 | 0.295 | 0.004 | 0.386 | 0.063 |
| | complex | 46 | −0.425 | 0.091 | [−0.611, −0.239] | <0.001 | | | | |
| 2.3 | simple | | −0.022 | 0.047 | [−0.117, 0.074] | 0.645 | 0.135 | 0.029 | | |
| | complex | | −0.157 | 0.068 | [−0.296, −0.018] | 0.028 | | | | |
| 1.2 vs. 2.3 | simple | | −0.087 | 0.059 | [−0.207, 0.034] | 0.152 | 0.024 | 0.347 | | |
| | complex | | −0.157 | 0.068 | [−0.296, −0.018] | 0.122 | | | | |
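
The ∆τ² column appears to be the proportional reduction in between-effect variance once the moderator is added. This is an inference from the tables rather than a stated definition: for comparison 1.2, τ drops from 0.238 without moderators to 0.224 with complexity, and (0.238² − 0.224²)/0.238² ≈ 0.114, close to the reported 0.117 given that τ is rounded to three decimals. A small sketch, with a hypothetical function name:

```python
def tau2_reduction(tau_without: float, tau_with: float) -> float:
    """Proportional reduction in tau^2 after adding a moderator, truncated at 0."""
    if tau_without <= 0:
        raise ValueError("tau without moderator must be positive")
    return max(0.0, (tau_without ** 2 - tau_with ** 2) / tau_without ** 2)


# tau values taken from the tables (rounded to three decimals there).
examples = {
    "1.2, complexity": (0.238, 0.224),
    "1.3, complexity": (0.399, 0.386),
    "1.2, test-retest interval": (0.238, 0.235),
    "1.2, age": (0.238, 0.241),
}
for label, (t0, t1) in examples.items():
    print(f"{label}: {tau2_reduction(t0, t1):.3f}")
```

These give roughly 0.114, 0.064, 0.025, and 0.000, close to the reported 0.117, 0.063, 0.026, and 0.000. In the age model τ is slightly larger than without the moderator, so the reduction is truncated at zero.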

| Moderator | Comparison | Coefficient | SMCR | SE | 95% CI | p | τ | ∆τ² |
|---|---|---|---|---|---|---|---|---|
| Test-Retest Interval (weeks) | 1.2 | Int | −0.258 | 0.046 | [−0.353, −0.164] | <0.001 | 0.235 | 0.026 |
| | | b | 0.001 | 0.001 | [−0.000, 0.002] | 0.038 | | |
| | 1.3 | Int | −0.414 | 0.096 | [−0.611, −0.217] | 0.001 | 0.399 | 0.000 |
| | | b | 0.007 | 0.008 | [−0.010, 0.024] | 0.198 | | |
| | 1.4 | Int | −0.543 | 0.106 | [−0.759, −0.327] | <0.001 | 0.425 | 0.000 |
| | | b | 0.004 | 0.007 | [−0.010, 0.017] | 0.280 | | |
| | 2.3 | Int | −0.156 | 0.078 | [−0.316, 0.004] | 0.056 | | |
| | | b | 0.006 | 0.008 | [−0.011, 0.024] | 0.224 | | |
| | 3.4 | Int | −0.129 | 0.028 | [−0.187, −0.071] | <0.001 | | |
| | | b | −0.003 | 0.004 | [−0.011, 0.004] | 0.384 | | |
| Age (yrs) | 1.2 | Int | −0.220 | 0.861 | [−0.396, −0.044] | 0.016 | 0.241 | 0.000 |
| | | b | −0.001 | 0.002 | [−0.631, −0.021] | 0.803 | | |
| | 1.3 | Int | −0.326 | 0.149 | [−0.631, −0.021] | 0.037 | 0.401 | 0.000 |
| | | b | −0.001 | 0.003 | [−0.005, 0.004] | 0.717 | | |
| | 1.4 | Int | −0.553 | 0.176 | [−0.912, −0.195] | 0.004 | 0.426 | 0.000 |
| | | b | 0.001 | 0.004 | [−0.006, 0.008] | 0.392 | | |
| | 2.3 | Int | −0.106 | 0.103 | [−0.317, 0.105] | 0.312 | | |
| | | b | −0.001 | 0.002 | [−0.004, 0.003] | 0.723 | | |
| | 3.4 | Int | −0.227 | 0.043 | [−0.314, −0.141] | <0.001 | | |
| | | b | 0.002 | 0.007 | [0.006, 0.004] | 0.004 | | |
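
Each Int/b row pair defines a linear meta-regression, predicted SMCR = Int + b × moderator. The small sketch below evaluates it, assuming the moderator enters uncentered (the tables do not say whether it was centered) and keeping in mind that the coefficients are rounded to three decimals. The function name is hypothetical.

```python
def predicted_smcr(intercept: float, slope: float, moderator: float) -> float:
    """Predicted SMCR from a one-moderator meta-regression: intercept + slope * moderator."""
    return intercept + slope * moderator


# Comparison 1.2, test-retest interval in weeks (Int = -0.258, b = 0.001 per week).
for weeks in (0, 4, 52, 104):
    print(f"{weeks:>3} weeks: predicted SMCR = {predicted_smcr(-0.258, 0.001, weeks):.3f}")
```

Under these assumptions, the predicted RT reduction for comparison 1.2 shrinks only modestly, from −0.258 at an immediate retest to about −0.206 after one year. The slope's confidence interval reaches zero, so this trend is small.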

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Scharfen, J.; Blum, D.; Holling, H. Response Time Reduction Due to Retesting in Mental Speed Tests: A Meta-Analysis. J. Intell. 2018, 6, 6. https://doi.org/10.3390/jintelligence6010006
