1. Introduction

Advanced robotic technologies add convenience to human life, not only in industrial applications but also in education and entertainment. Researchers have developed numerous types of robots to meet different demands, so that human-robot interaction is simplified and robots can carry out more complex functions. There are many kinds of robotic systems, but the robot arm is the most widely used [1]. In many factories, such as car assembly plants, robot arms are an important tool in the manufacturing process. For a robot arm to be driven to its target positions, its movement must be designed with respect to stability and precision. As recognition technology has improved, robots have become more human-like; robots that resemble humans in appearance and imitate their behavior are called humanoid robots. Robots now offer valuable assistance to humans in everyday life.

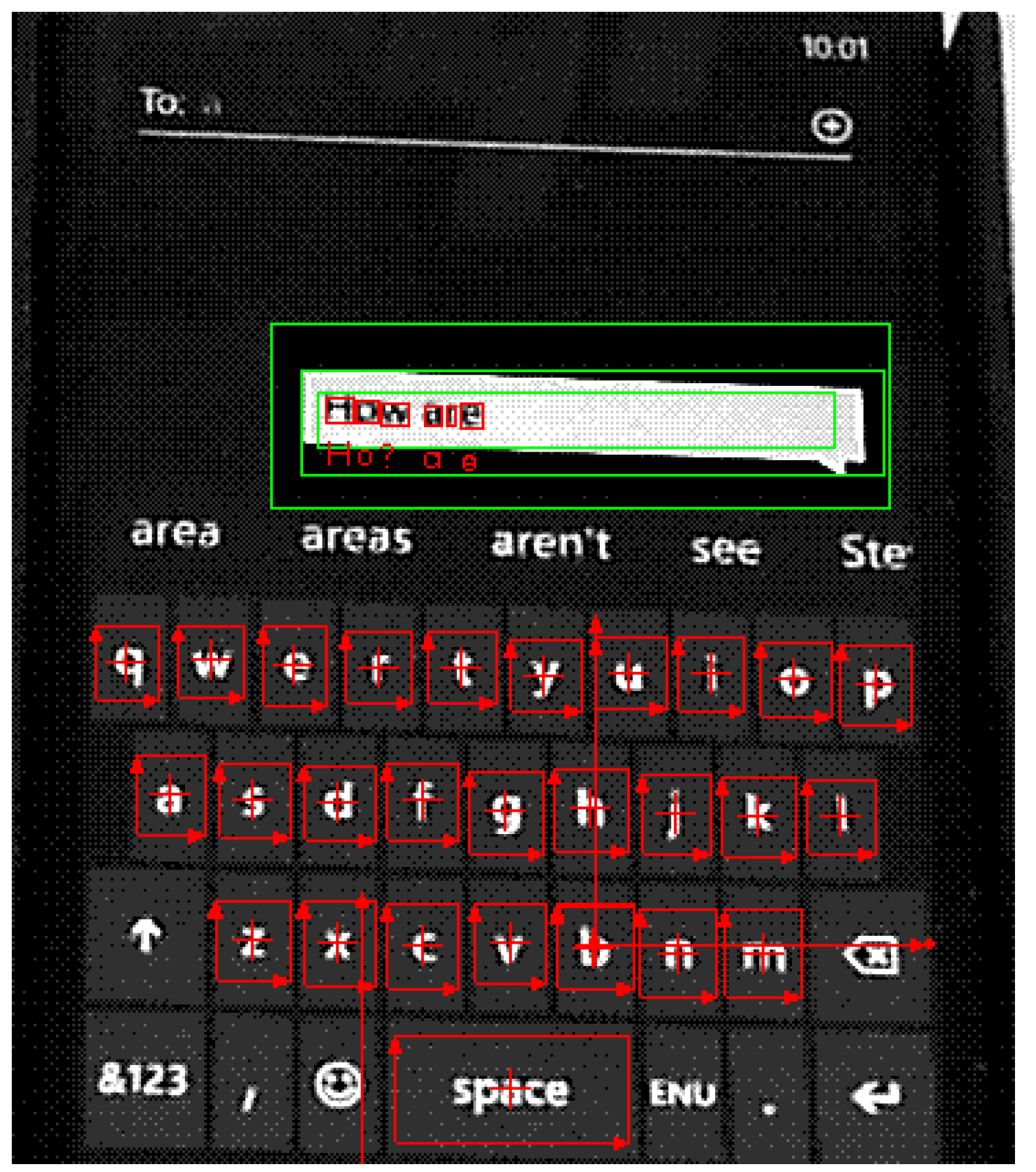

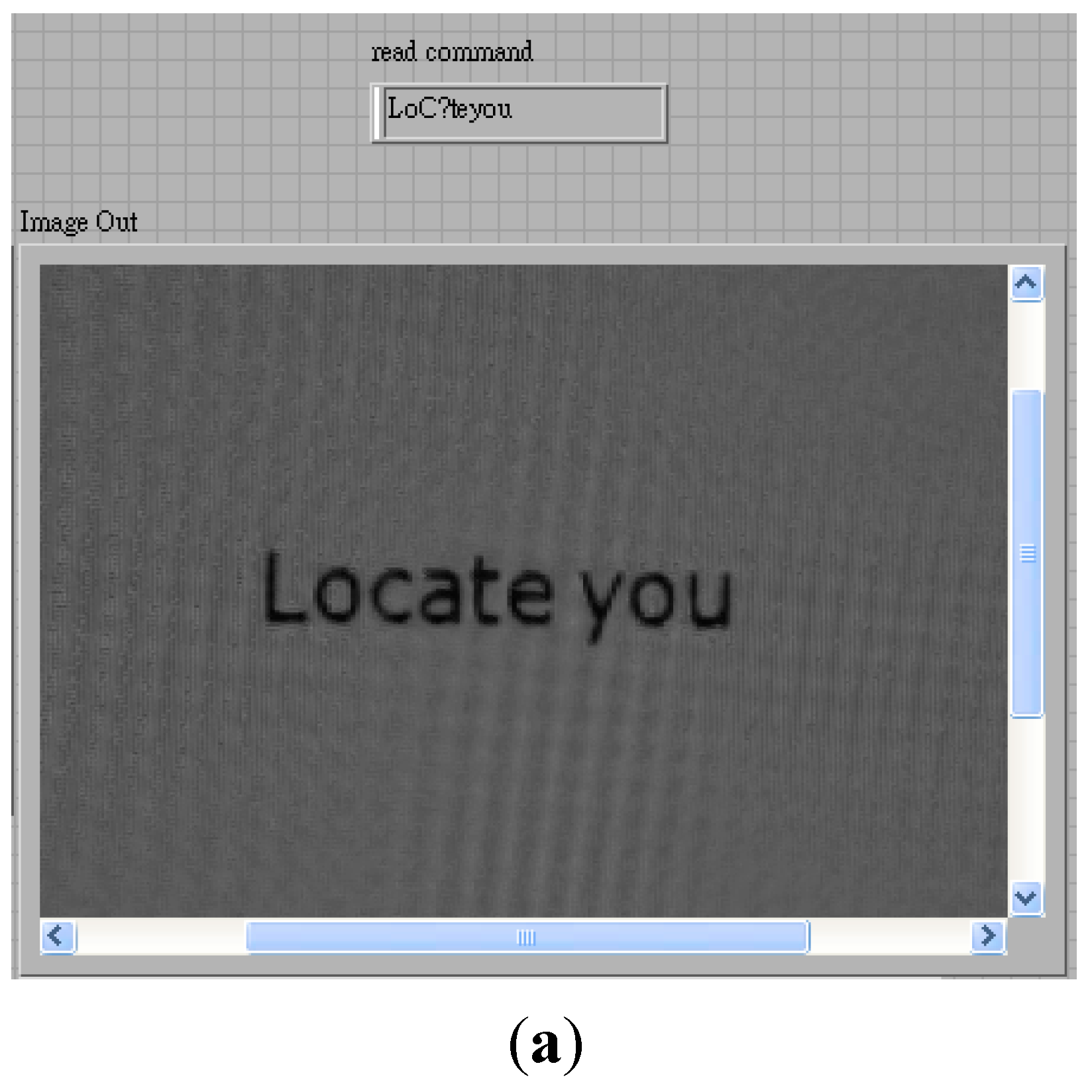

There are now many smartphones on the market, which means that thousands of touch panels must be tested by quality assurance engineers, a tedious job. We therefore designed an automatic smartphone test system that uses a robot arm to reduce human operating time and human resources. Traditional mechanical arm designs generally use fixed path planning and fixed strategies to control the entire procedure, so that the robot reaches a specific target position and completes the task, as with industrial robot arms. Although such an arm can finish tasks accurately, if the target position changes, the arm cannot reach the new point until the whole control process has been redesigned. In this study, we applied a two-camera vision system to recognize characters and locate the targets. One video camera captured the commands on the screen of the control panel, and the other read characters and recognized the screen image of the smartphone. The proposed system identifies the object position, the control scheme calculates the relative position along the moving path through image processing and fuzzy control, and the robot arm is then driven to the desired position. The effectiveness of the proposed control design was verified by combined hardware and software tests.

A number of researchers have studied the motion control of robot arms over the past decades. Furuta [2] considered the dynamic equation of a robot arm. Based on sensor signal feedback, a PID control was designed for the arm to achieve the desired position. Munasinghe [3] established a simulation system of the robot arm so that the coordinates of each joint could be computed by the simulation system; thus, the arm could be controlled to track an assigned trajectory. Koga [4] calculated the magnitude of the torque for each joint of the robot arm when it grabs an object. Using the PA-10 robot arm made by Mitsubishi Company as a platform, the paper [5] proposed the concept of a harmonic drive model to investigate the influence of gravity and material on the robot arm. Moreover, the robot arm was controlled so as to track a desired trajectory, and the motion error was analyzed. In [6], a two-link robot arm was controlled by a fuzzy sliding mode controller, in which the parameters were adjusted by fuzzy-neural techniques.

In general, the kinematics of robot arms involves two basic problems: the forward kinematics problem and the inverse kinematics problem. Usually, forward kinematics is solved by building a D-H model [1,7] and deriving the kinematics and differential kinematics formulas of the robot, which are then used to control the robot arm and change its position. The D-H model makes it evident that the kinematics of robot manipulators are nonlinear functions, specifically combinations of trigonometric functions. Because of this complexity, inverse kinematics solutions are usually more difficult to find than forward kinematics solutions; therefore, researchers have developed different approaches to inverse kinematics. The robot arm system in [8] was designed to implement elevator button-pushing behavior by using an inverse kinematics technique. Recently, some research efforts have been directed at solving the inverse kinematics problem using fuzzy logic [9,10]. Regarding the combination of image processing with the robot arm, some papers [11,12] presented the use of a camera mounted on the robot arm to identify the target and find its location, so that the arm can reach the target successfully.

The aim of this study was to provide vision ability to the robot arm through the use of character recognition techniques, as the robot arm must be able to recognize characters on the control panel and the smartphone. Lettoumeau et al. [13] presented an approach that makes it possible for an autonomous mobile robot to read characters, using characters printed on a colored sheet and a neural network trained to identify characters under different conditions so as to account for the various possible viewpoints. Qadri et al. [14] proposed an image processing technology that identifies a vehicle by its license plate number, called Automatic Number Plate Recognition (ANPR). The ANPR system robustly detects and recognizes the vehicle using the license plate under different lighting conditions, and it can be implemented at the entrance of highly restricted areas. Finally, template matching and probability classifications can be used to recognize the characters. In this study, a multi-degree-of-freedom manipulator, a vision sensor, image processing and fuzzy theory were applied to the control scheme design. Through position control and image processing, the proposed control scheme proved capable of recognizing characters and driving the robot arm to press the desired button of the tested smartphone. Besides the Introduction section, this paper is organized as follows.

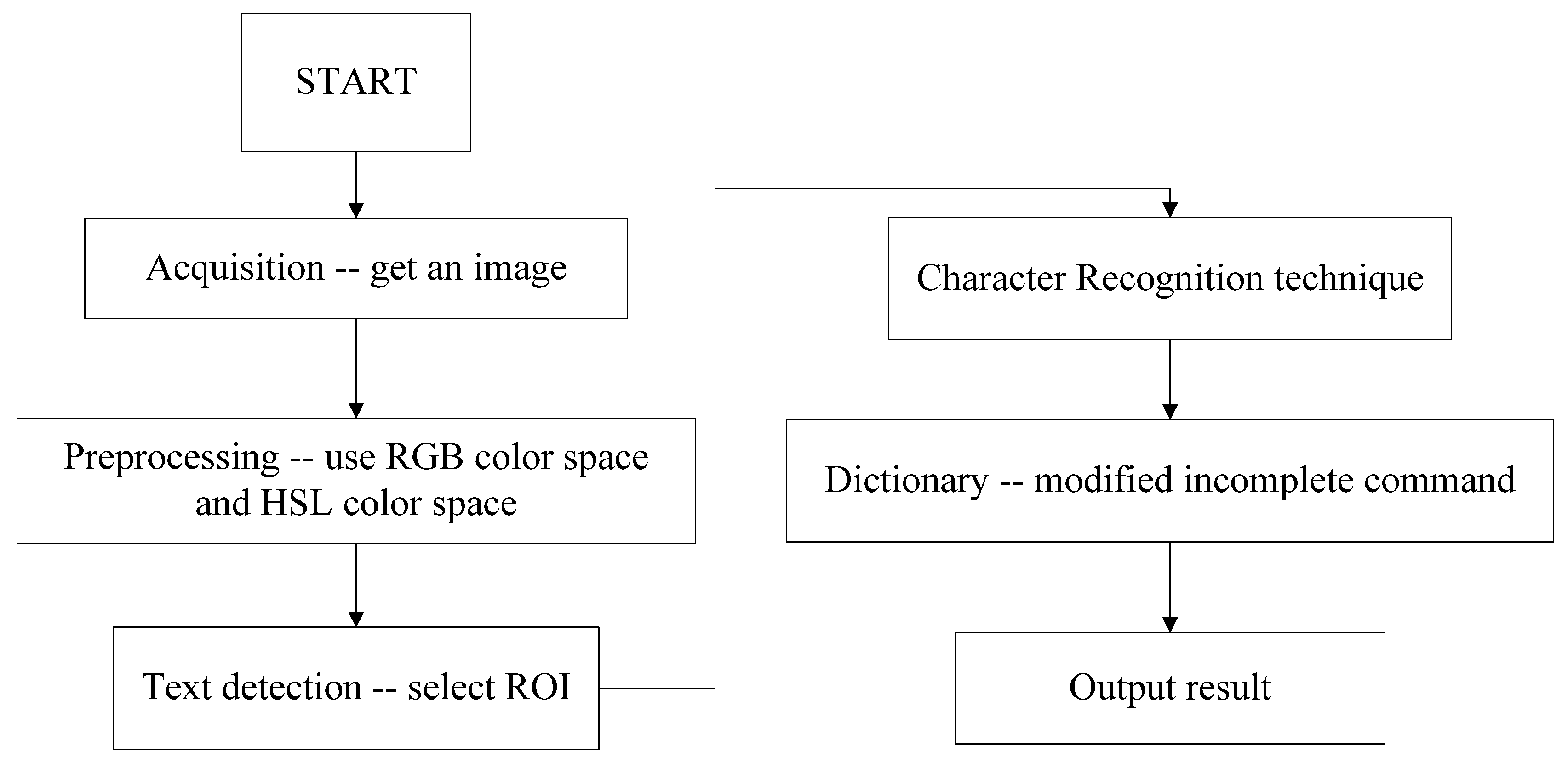

Section 2 gives the experimental setup and the kinematic model of the robot that are used in this study.

Section 3 describes the image processing methods used for character recognition.

Section 4 proposes the control sequence and a fuzzy controller for the robot arm control.

Section 5 presents two experiments to confirm the proposed control scheme.

Section 6 concludes this research.

2. System Setup

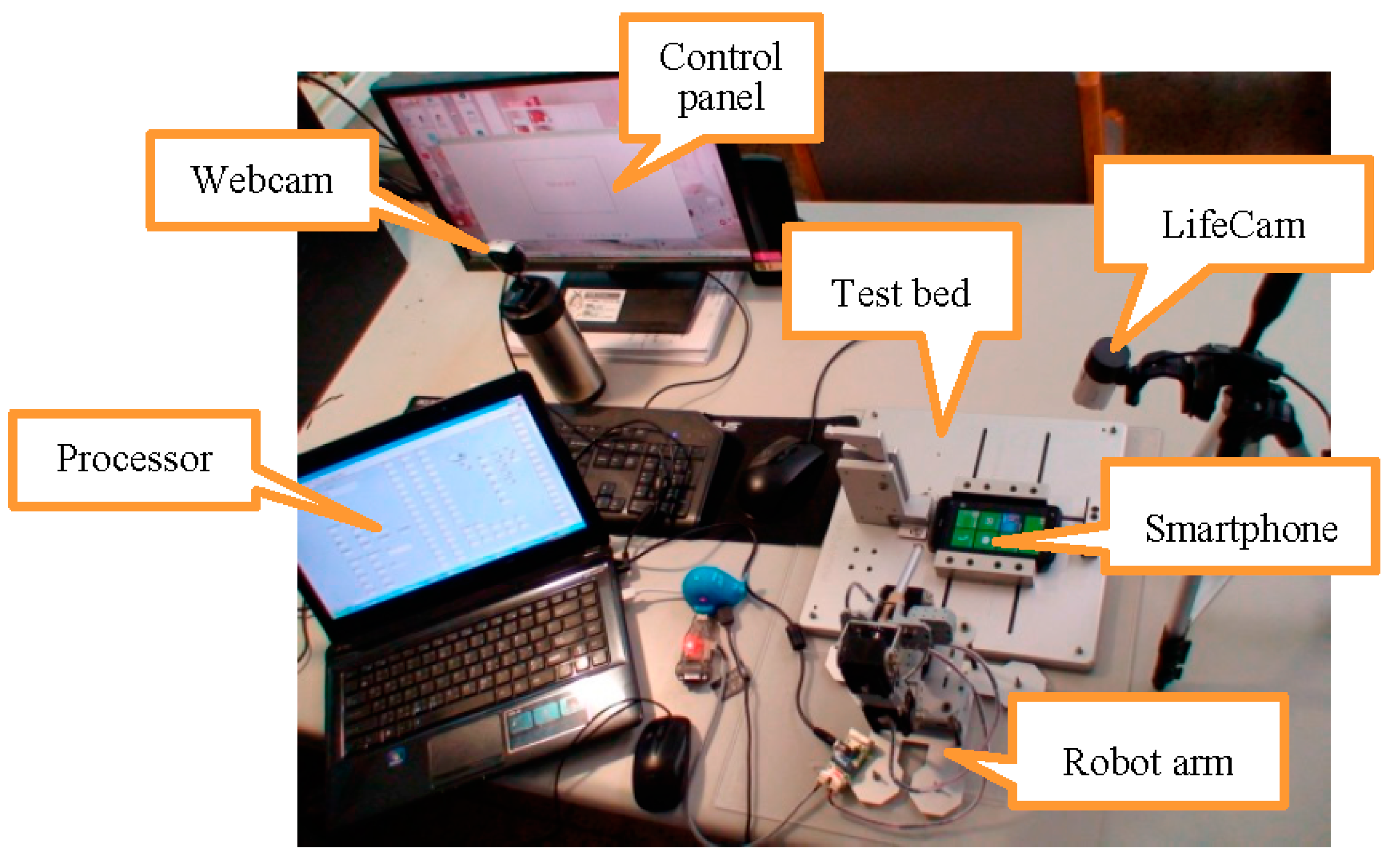

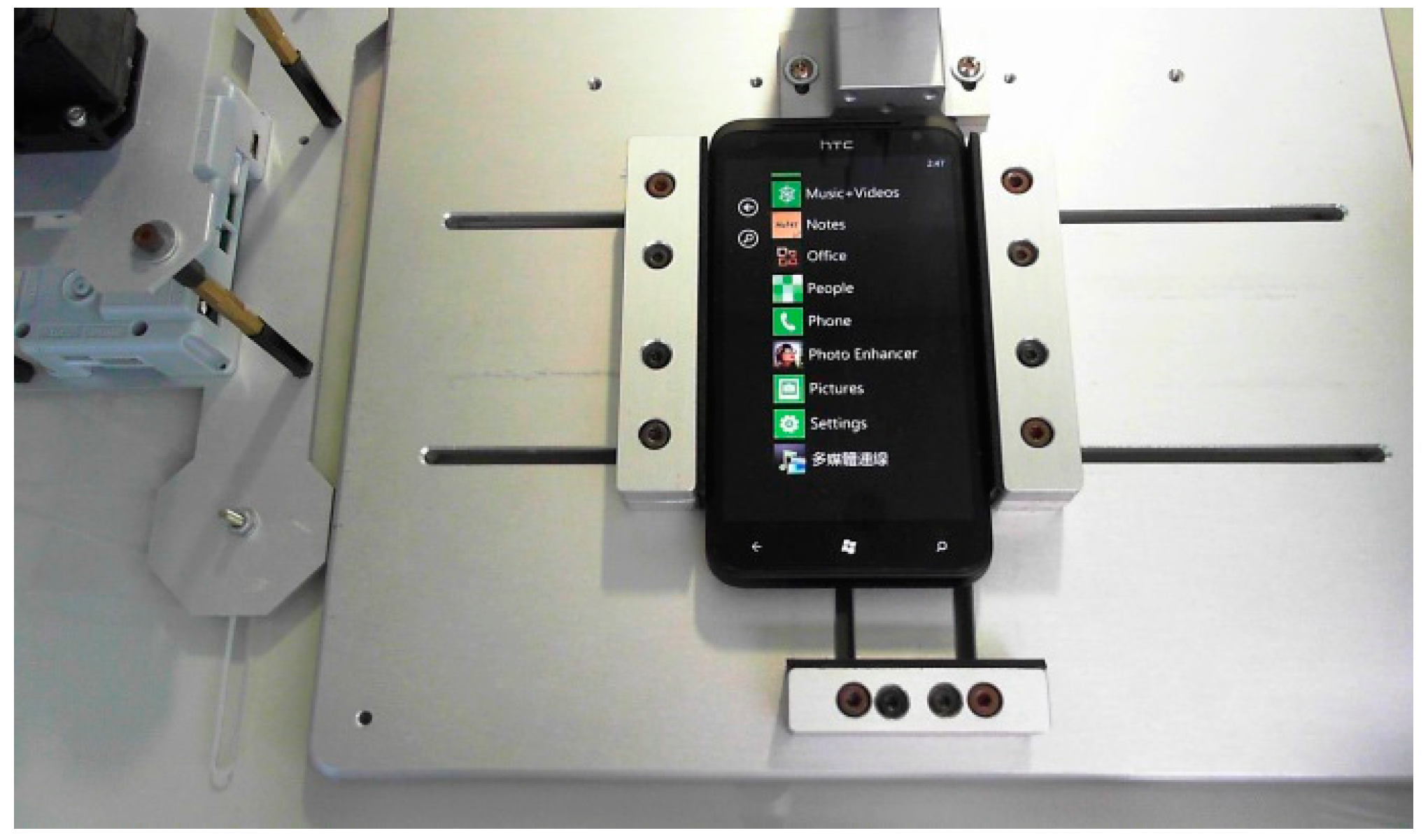

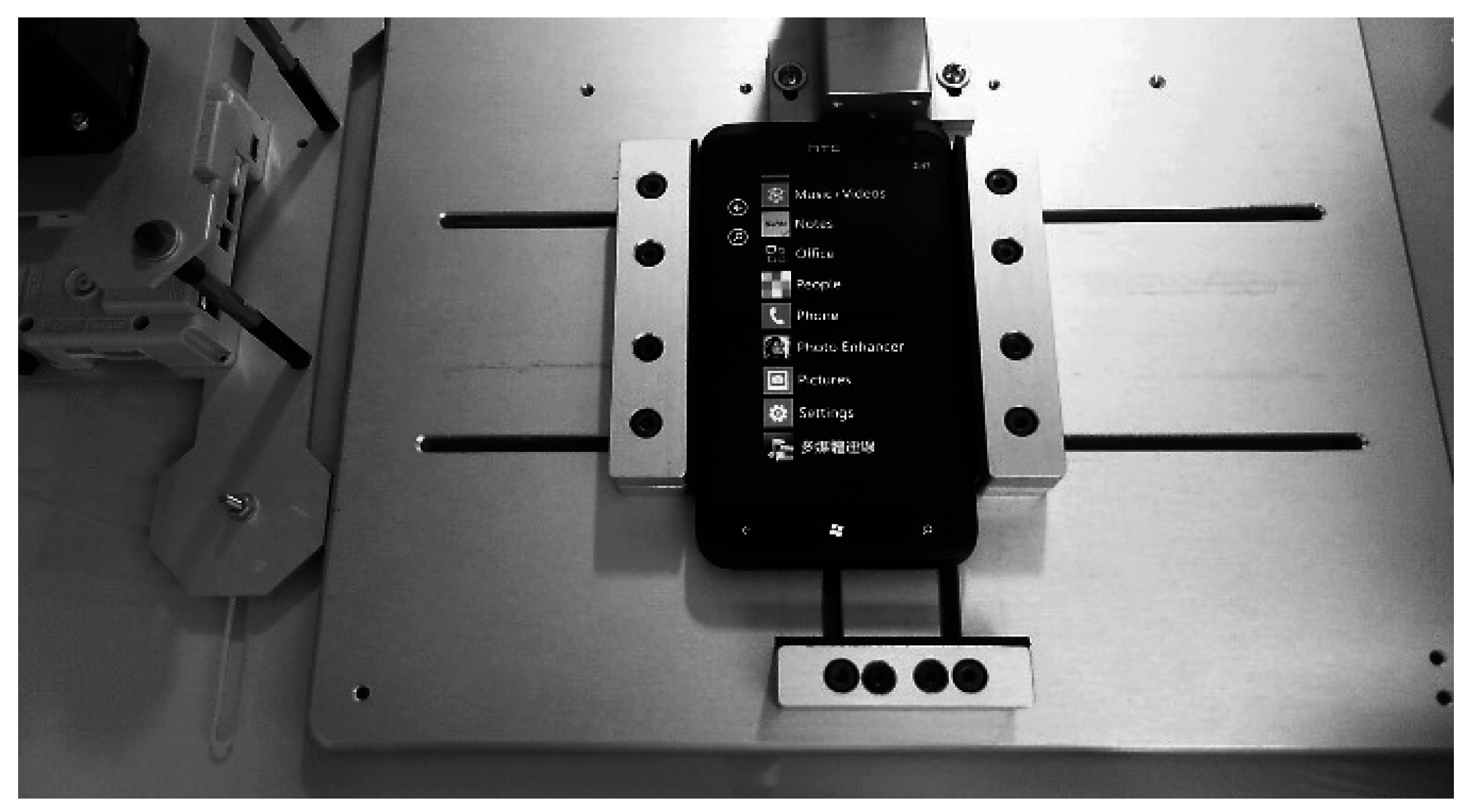

In this study, an embedded computer was used as the main controller. A network camera, the Microsoft LifeCam Studio 1080P Full-HD (Microsoft Taiwan Corporation, Taipei, Taiwan), and an E-books W6 PC Webcam (Chung Ching Technical Co., Taichung, Taiwan) were used for the image processing, as shown in Figure 1. The Dynamixel MX-28 servo motor produced by Robotis Company (Seoul, Korea) was the main motive force of the robot arm, as shown in Figure 2. The motor has a weight of 72 g, dimensions of 35.6 × 50.6 × 35.5 mm, a resolution of 0.088°, an operating range of 0°–360°, a gear reduction ratio of 193:1, a stall torque of 24 kgf-cm (at 12 V, 1.5 A) and a no-load speed of 54 rpm (at 12 V). Figure 3 shows the experimental setup with a smartphone on the test board.
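The quoted resolution of 0.088° limits how finely a joint angle can be commanded. The following minimal Python sketch illustrates that quantization. It assumes the resolution corresponds to 4096 discrete steps per revolution (360°/4096 ≈ 0.088°), which the text does not state explicitly; the function names are hypothetical and are not part of any vendor library.

```python
# Assumption: 0.088 deg resolution corresponds to 4096 steps per 360 deg.
STEPS_PER_REV = 4096
DEG_PER_STEP = 360.0 / STEPS_PER_REV  # about 0.0879 deg


def angle_to_steps(angle_deg: float) -> int:
    """Round a joint angle in [0, 360] degrees to the nearest step index."""
    if not 0.0 <= angle_deg <= 360.0:
        raise ValueError("angle must lie in the 0-360 degree operating range")
    # Clamp to the last valid index so angles near 360 deg do not overflow.
    return min(round(angle_deg / DEG_PER_STEP), STEPS_PER_REV - 1)


def steps_to_angle(steps: int) -> float:
    """Convert a step index back to degrees."""
    return steps * DEG_PER_STEP


def quantization_error(angle_deg: float) -> float:
    """Absolute difference between a requested angle and the nearest step."""
    return abs(steps_to_angle(angle_to_steps(angle_deg)) - angle_deg)


if __name__ == "__main__":
    for angle in (90.03, 180.0, 271.5):
        print(angle, angle_to_steps(angle), quantization_error(angle))
```

Under this assumption, a commanded angle away from the end of the range can differ from the requested one by at most half a step, about 0.044°.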

Figure 1. (a) Microsoft LifeCam Studio 1080P Full-HD and (b) E-books W6 PC Webcam.

Figure 2. (a) Dynamixel MX-28 servo motor and (b) robot arm.

Figure 3. Experimental setup, including the control panel (PC screen), main processor (notebook), Microsoft LifeCam, E-books Webcam, robot arm, tested smartphone, and test bed.

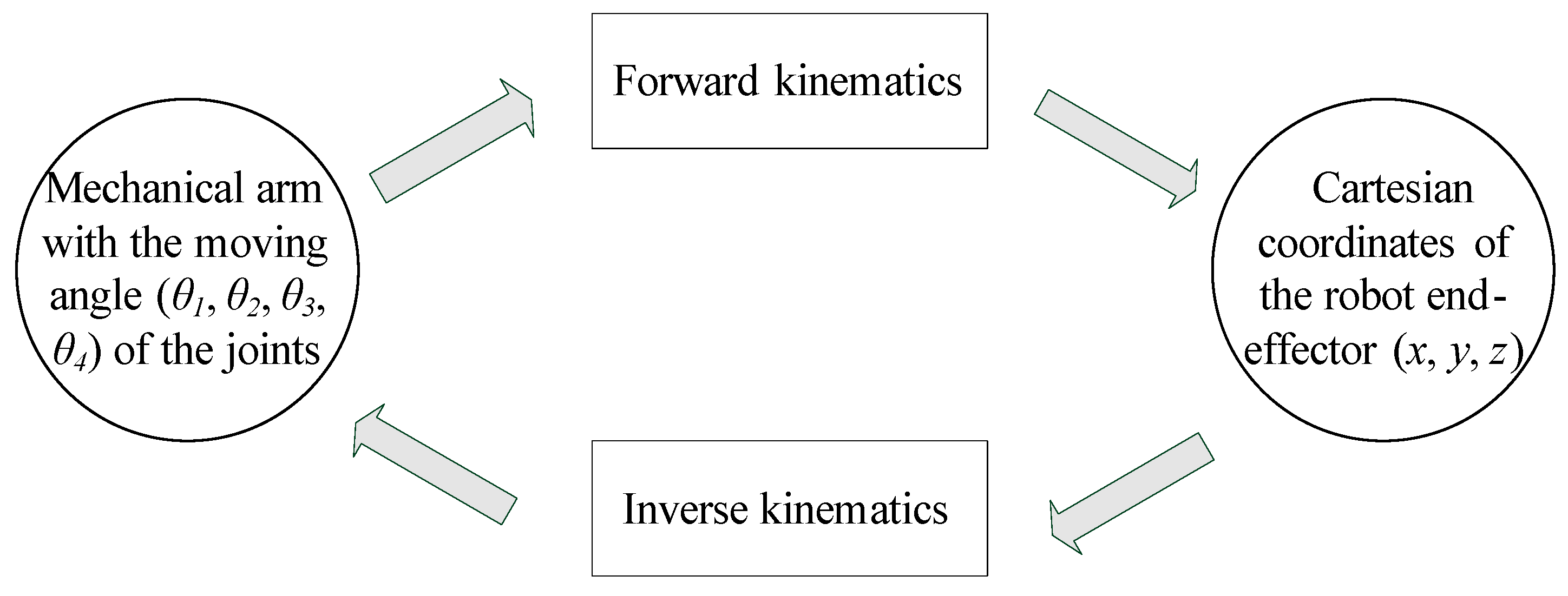

Kinematics [15] mainly defines the conversion between the Cartesian coordinates (x, y, z) and the joint angles (θ1, θ2, θ3, θ4) of the mechanical arm. Forward kinematics can be considered as a mapping from the joint space to the operational space, expressed in the coordinates of the robot end-effector. Inverse kinematics is a mapping from the operational space, expressed in the coordinates of the robot end-effector, to the joint space, so it can be considered as the inverse of forward kinematics.

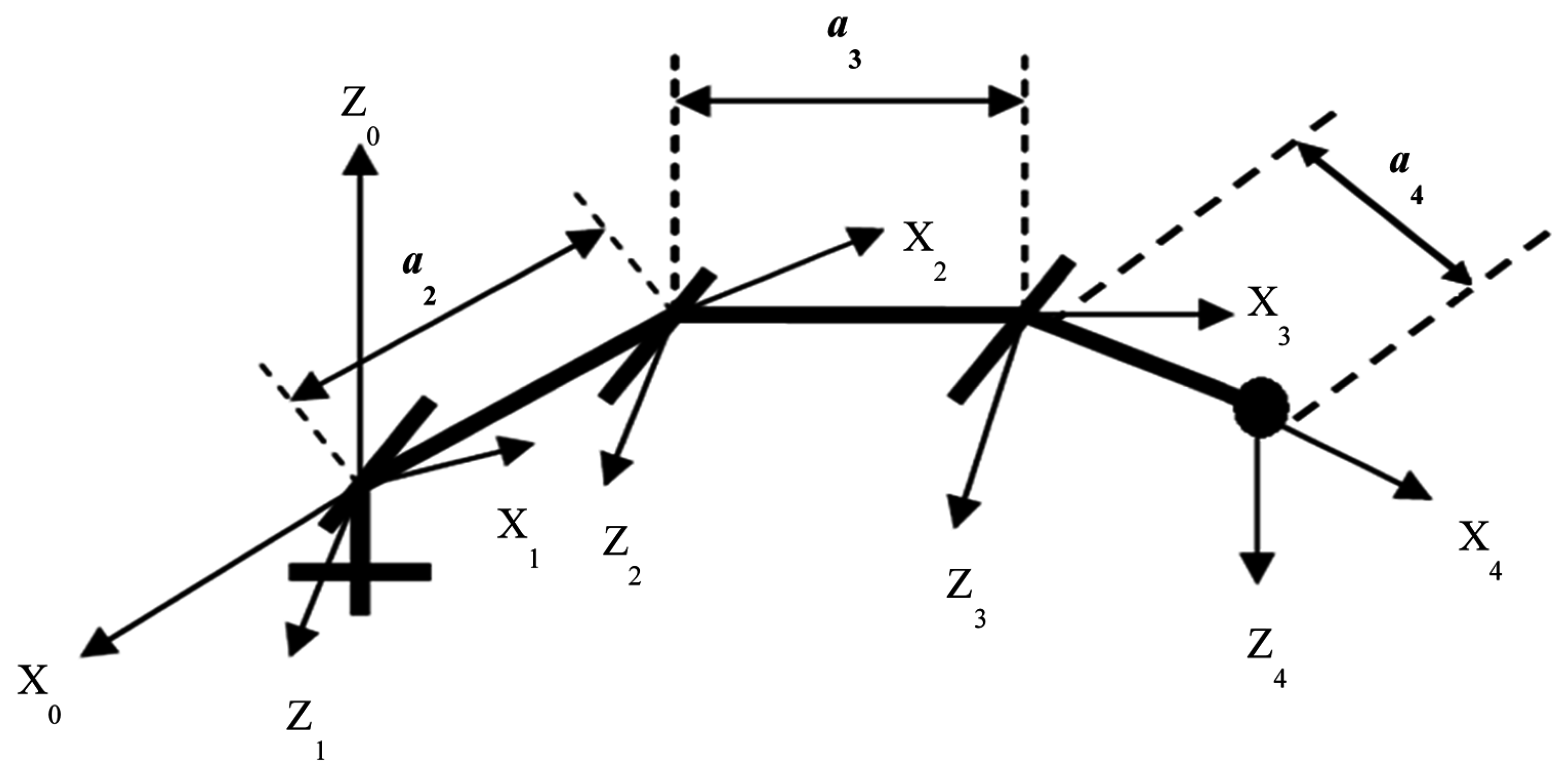

Figure 4 explains the relationship between forward kinematics and inverse kinematics. The presented kinematic model of the arm has four degrees of freedom. The model of the robotic arm is presented in Figure 5. Four parameters fully describe the kinematic relationship between every neighboring joint and link in a manipulator. In the standard D-H convention, these four parameters are the link length, the link twist, the link offset and the joint angle.

These parameters are derived from the D-H model of the robot arm, as analyzed in Appendix A.
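As an illustration of how D-H parameters yield the forward kinematics, the sketch below chains one homogeneous transform per joint using the standard D-H convention. The link table `EXAMPLE_LINKS` is a hypothetical 4-DOF geometry chosen only for demonstration; it is not the parameter set of Appendix A, and the function names are assumptions.

```python
import math


def dh_transform(theta, d, a, alpha):
    """Standard D-H transform: Rot_z(theta) Trans_z(d) Trans_x(a) Rot_x(alpha)."""
    ct, st = math.cos(theta), math.sin(theta)
    ca, sa = math.cos(alpha), math.sin(alpha)
    return [
        [ct, -st * ca, st * sa, a * ct],
        [st, ct * ca, -ct * sa, a * st],
        [0.0, sa, ca, d],
        [0.0, 0.0, 0.0, 1.0],
    ]


def matmul4(A, B):
    """Multiply two 4x4 matrices stored as nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def forward_kinematics(links, joint_angles):
    """Return the end-effector position (x, y, z) for the given joint angles.

    links is a list of (d, a, alpha) tuples, one per joint; angles in radians.
    """
    T = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
    for (d, a, alpha), theta in zip(links, joint_angles):
        T = matmul4(T, dh_transform(theta, d, a, alpha))
    return T[0][3], T[1][3], T[2][3]


# Hypothetical link table in mm (link offset d, link length a, link twist alpha).
EXAMPLE_LINKS = [
    (50.0, 0.0, math.pi / 2),  # base rotation joint
    (0.0, 100.0, 0.0),         # shoulder
    (0.0, 100.0, 0.0),         # elbow
    (0.0, 50.0, 0.0),          # wrist
]

if __name__ == "__main__":
    print(forward_kinematics(EXAMPLE_LINKS, [0.0, 0.0, 0.0, 0.0]))
```

With all joint angles at zero, this example geometry places the end-effector at (250, 0, 50) mm; the entries of the resulting matrix are products of sines and cosines of the joint angles, which is why the mapping is nonlinear.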

Figure 4. Relationship of forward kinematics and inverse kinematics.

Figure 5. Kinematic model of robotic arm with four degrees of freedom.

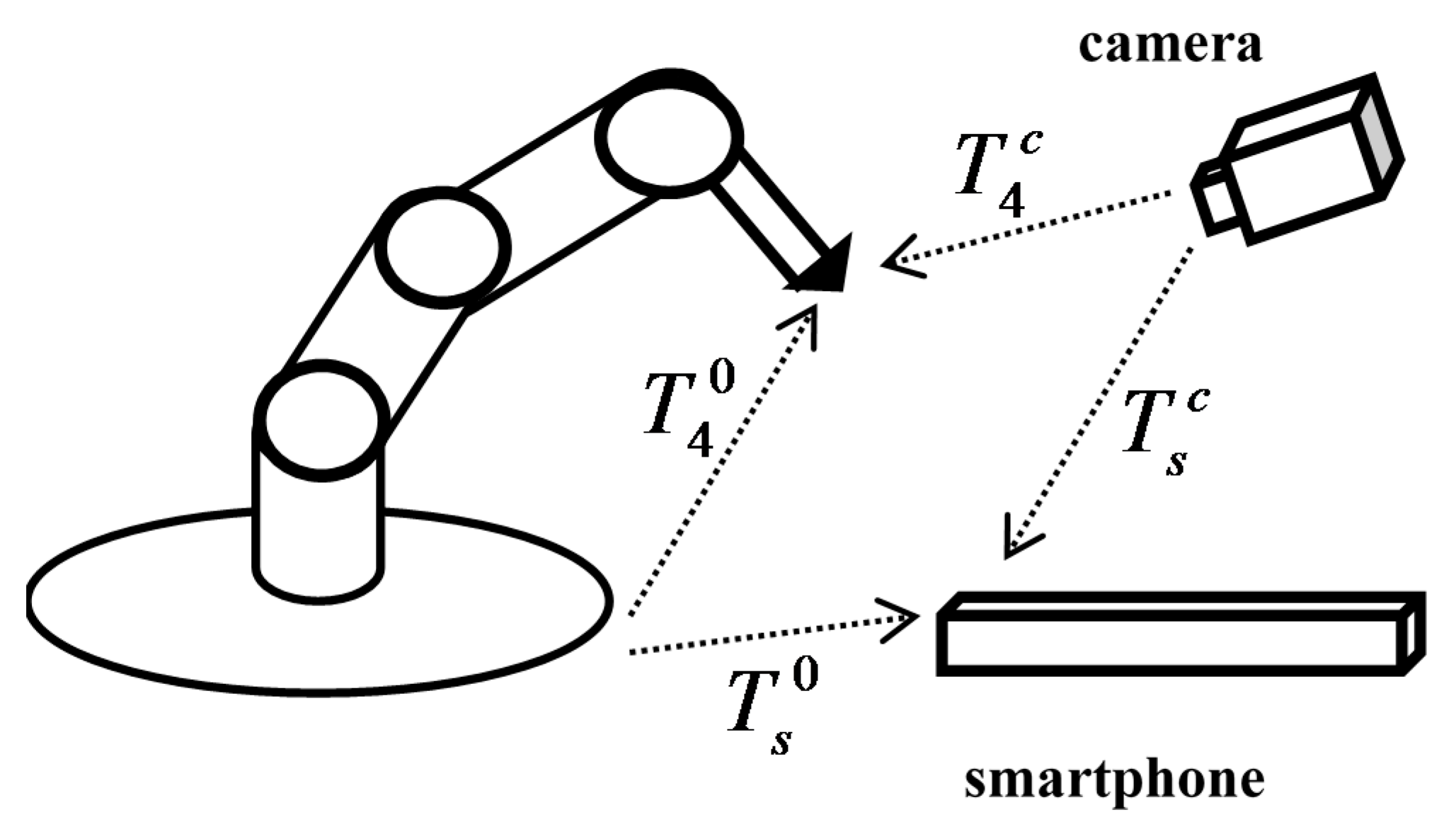

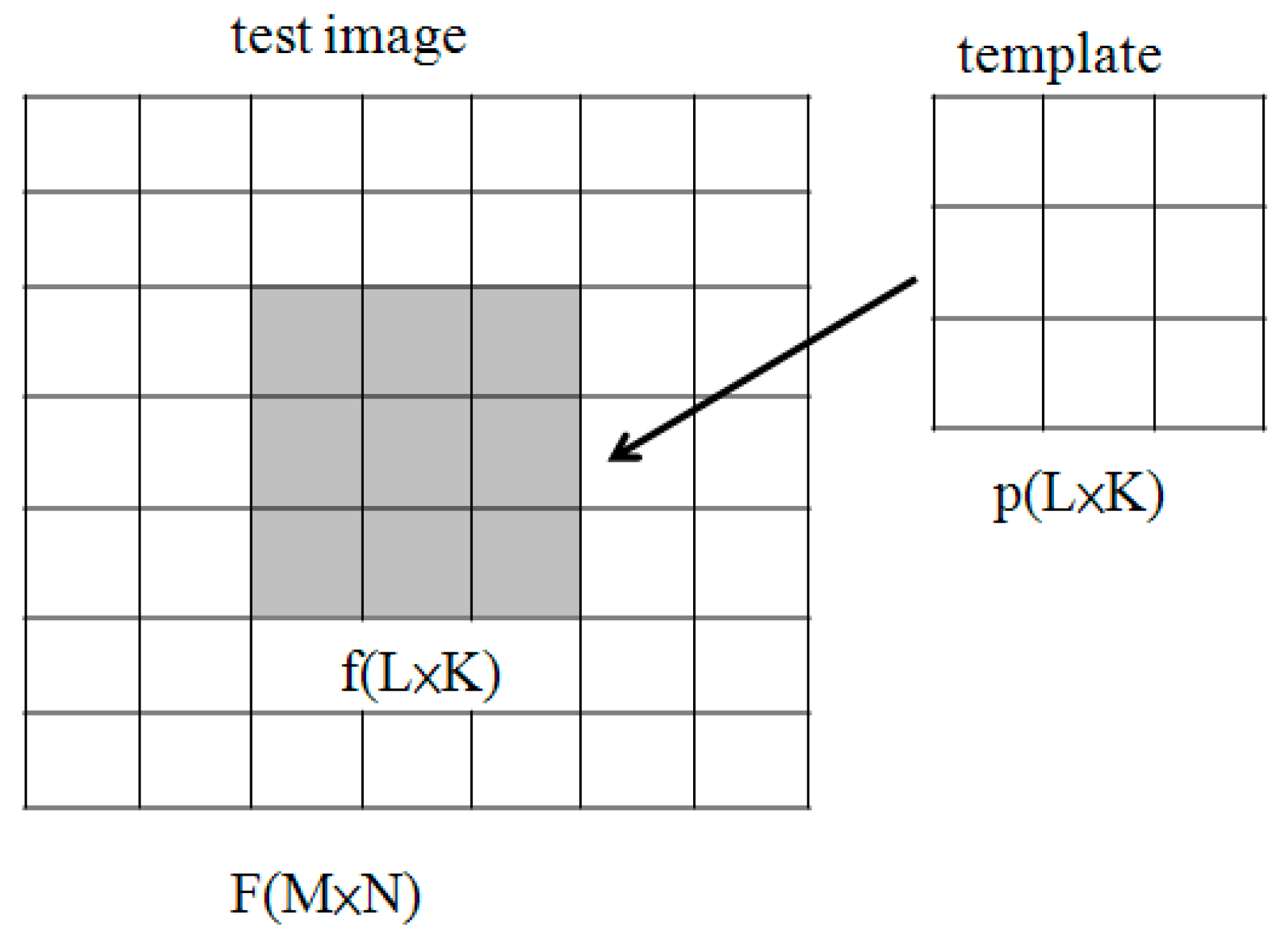

Because the views of the camera and robot arm are different, a coordinate transformation process is needed. The coordinate transformation relationship is shown in Figure 6, where T40 is the coordinate transformation between the base frame and the end-effector, T4c is the coordinate transformation between the camera and the end-effector, Ts0 is the coordinate transformation between the base frame and the smartphone, and Tsc is the coordinate transformation between the camera and the smartphone. We needed to collect more than three data points and then used the least squares method to obtain the transformation matrix. Because the z-axis coordinates are the same, we only needed the x and y coordinates, where xi and yi are the pattern matching coordinates, and xr and yr are the robot end-effector coordinates.

where r11, r12, r21 and r22 are the rotation terms, and r13 and r23 are the translation terms. From Equation (1), we have:

From Equations (2) and (3), we have:

From Equations (4) and (5), we have:

From Equations (6) and (7), we use the least squares method to obtain ψ1 and ψ2:

Figure 6. Coordinate transformation relationship.
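The least squares calibration between pattern matching coordinates and robot coordinates can be sketched as follows. The sketch assumes a planar affine relation, xr = r11·xi + r12·yi + r13 and yr = r21·xi + r22·yi + r23, and assumes that ψ1 = (r11, r12, r13) and ψ2 = (r21, r22, r23); this matches the symbol definitions in the text, but the exact form of the original equations is not reproduced here. All function names are hypothetical.

```python
def solve_linear(A, b):
    """Solve A x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    M = [list(row) + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        if abs(M[pivot][col]) < 1e-12:
            raise ValueError("calibration points are collinear or too few")
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(n):
            if r != col:
                factor = M[r][col] / M[col][col]
                M[r] = [mr - factor * mc for mr, mc in zip(M[r], M[col])]
    return [M[i][n] / M[i][i] for i in range(n)]


def fit_affine_2d(image_pts, robot_pts):
    """Least squares estimate of psi1 and psi2 from point correspondences."""
    if len(image_pts) != len(robot_pts) or len(image_pts) < 3:
        raise ValueError("need at least three matched points")
    phi = [[xi, yi, 1.0] for xi, yi in image_pts]  # one regressor row per point
    # Normal equations: (Phi^T Phi) psi = Phi^T target
    gram = [[sum(row[i] * row[j] for row in phi) for j in range(3)]
            for i in range(3)]

    def moment(values):
        return [sum(row[i] * v for row, v in zip(phi, values)) for i in range(3)]

    psi1 = solve_linear(gram, moment([x for x, _ in robot_pts]))
    psi2 = solve_linear(gram, moment([y for _, y in robot_pts]))
    return psi1, psi2


def image_to_robot(psi1, psi2, xi, yi):
    """Map a pattern matching coordinate to robot end-effector coordinates."""
    return (psi1[0] * xi + psi1[1] * yi + psi1[2],
            psi2[0] * xi + psi2[1] * yi + psi2[2])


if __name__ == "__main__":
    # Synthetic data: 90 degree rotation plus a translation of (10, 20).
    image = [(0, 0), (1, 0), (0, 1), (2, 3)]
    robot = [(10, 20), (10, 21), (9, 20), (7, 22)]
    print(fit_affine_2d(image, robot))
```

Using more than the minimum of three non-collinear points, as the text describes, lets the least squares fit average out measurement noise in the individual correspondences.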

4. Control Scheme

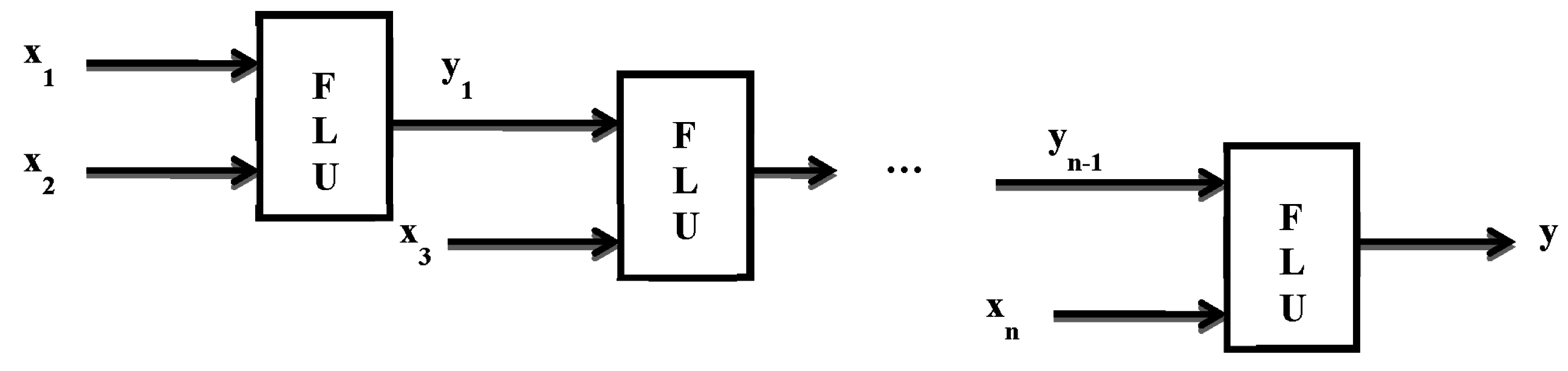

For a robotic manipulator modeled with link structural flexibility, executing the task requires the end-effector to contact the smartphone. The controller uses the target position obtained as described in the previous section, which makes position control of the end point possible as the end-effector moves to the desired position. In a conventional fuzzy system, the number of fuzzy rules grows exponentially with the number of input variables. To overcome this problem, Raju and Zhou [21] proposed a hierarchical structure for fuzzy system design, in which the input variables are distributed over a collection of low-dimensional fuzzy logic units (FLUs) and the outputs of the FLUs in one layer serve as input variables for the FLUs in the next layer, as shown in Figure 22. According to their findings, the number of fuzzy rules in a hierarchical fuzzy system (HFS) grows only linearly with the number of input variables. A hierarchical fuzzy controller and a conventional fuzzy controller have different input and output architectures, and this difference determines the size of the fuzzy rule base. Using hierarchical fuzzy theory in the controller design therefore effectively reduces the number of fuzzy rules that must be established. In Figure 22, xi are the inputs and yi are the outputs of the fuzzy logic controllers.
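The difference between exponential and linear growth can be made concrete with a short calculation. The sketch below assumes that every variable, including intermediate FLU outputs, is described by the same number of fuzzy sets, and that the hierarchy chains two-input FLUs so that n inputs require n − 1 units; the function names are hypothetical.

```python
def conventional_rule_count(n_inputs: int, n_sets: int) -> int:
    """Complete rule base of a single fuzzy system: one rule per combination."""
    return n_sets ** n_inputs


def hierarchical_rule_count(n_inputs: int, n_sets: int) -> int:
    """Chain of (n_inputs - 1) two-input FLUs, each with n_sets**2 rules."""
    if n_inputs < 2:
        raise ValueError("a two-input hierarchy needs at least two inputs")
    return (n_inputs - 1) * n_sets ** 2


if __name__ == "__main__":
    for n in (2, 3, 4, 6):
        print(n, conventional_rule_count(n, 5), hierarchical_rule_count(n, 5))
```

For four inputs with five fuzzy sets each, a complete conventional rule base would need 625 rules, whereas the chained hierarchy needs 75.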

Figure 22. Typical structure of hierarchical fuzzy system.

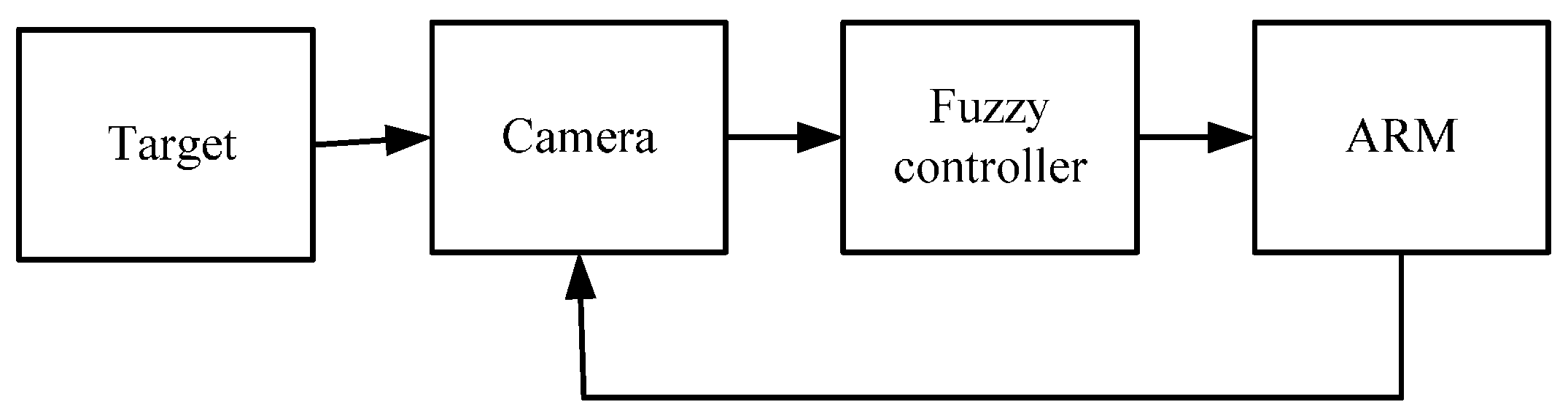

Figure 23 shows the position control scheme with the fuzzy controller: the target coordinate obtained from the camera is sent to the fuzzy controller, which determines the angle of each of the four joints so that the robot arm can move. The entire position control process is shown in Figure 24.

Figure 23. Position control scheme with fuzzy controller.

Figure 24. Flow chart of the control sequence.

From Figure 25a–c, θ3 and θ4 together determine the length r; point A is the current coordinate of the arm’s end-effector, and point B is the desired target. When r equals rd, θ2 equals θd2 and θ1 equals θd1, the end-effector has moved successfully from point A to the desired point B (xd, yd, zd).

Figure 25. Relationship of the robot arm’s joints. (a) Joints 3 and 4; (b) Joint 2; (c) Joint 1.

Fuzzy theory was used in the position control of the four servo motors, so a complex mathematical model of the robot arm was not required. The joint angles were computed in the order θ3 → θ4 → θ2 → θ1. The hierarchical fuzzy system effectively reduces the number of fuzzy inputs and outputs, layer by layer, with each layer having only one input and one output. The fuzzy rules are given in Appendix B. The fuzzy control scheme is shown in Figure 26.
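A single-input, single-output fuzzy layer of this kind can be sketched as below. This is only an illustration under stated assumptions: the three triangular membership functions (N, Z, P), the 30° span, the 15° maximum step, the singleton rule consequents and the stopping tolerance are all hypothetical and are not the rules of Appendix B. Only the processing order θ3 → θ4 → θ2 → θ1 is taken from the text.

```python
# Hypothetical rule consequents: negative error -> decrease, positive -> increase.
RULES = {"N": -1.0, "Z": 0.0, "P": 1.0}


def memberships(error, span):
    """Triangular memberships of the saturated, normalised error."""
    e = max(-span, min(span, error)) / span  # normalise to [-1, 1]
    return {"N": max(0.0, -e), "Z": 1.0 - abs(e), "P": max(0.0, e)}


def fuzzy_step(error, span=30.0, max_step=15.0):
    """Weighted-average defuzzification over the three rules (degrees)."""
    mu = memberships(error, span)
    total = sum(mu.values())
    return max_step * sum(mu[k] * RULES[k] for k in mu) / total


def drive_joint(current, target, tol=0.088, max_iter=100):
    """Apply fuzzy corrections until the angle error is within tol degrees."""
    for _ in range(max_iter):
        error = target - current
        if abs(error) <= tol:
            break
        current += fuzzy_step(error)
    return current


def move_arm(current, targets, order=("theta3", "theta4", "theta2", "theta1")):
    """Update the joints one layer at a time in the order given in the text."""
    result = dict(current)
    for name in order:
        result[name] = drive_joint(result[name], targets[name])
    return result


if __name__ == "__main__":
    start = {"theta1": 0.0, "theta2": 0.0, "theta3": 0.0, "theta4": 0.0}
    goal = {"theta1": 40.0, "theta2": -100.0, "theta3": 10.0, "theta4": 75.0}
    print(move_arm(start, goal))
```

Because each layer sees only one error signal, its rule base stays at three rules in this sketch regardless of how many joints the arm has.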

Figure 26. Fuzzy control scheme.

Table 1 shows the position control results for one test point using three rules and five rules. As shown in Table 2, the error of each joint angle is less than 1°. Because the error is within the preset threshold, the robot arm still moves to the correct position.

Table 1. Simulation results of position control: test point (173, −101, 25).

| Values | x (mm) | y (mm) | z (mm) |
|---|---|---|---|
| Expected value | 173 | −101 | 25 |
| Actual value with 5 rules | 173.272 | −100.986 | 25.0894 |
| Actual value with 3 rules | 173.076 | −100.872 | 25.1421 |
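The position errors implied by Table 1 can be checked with a few lines of Python. The sketch below computes the Euclidean distance between the expected and actual end-effector positions; the variable and function names are hypothetical, and the numbers are copied from the table.

```python
import math

EXPECTED = (173.0, -101.0, 25.0)  # test point in mm
ACTUAL = {
    "5 rules": (173.272, -100.986, 25.0894),
    "3 rules": (173.076, -100.872, 25.1421),
}


def position_error(actual, expected=EXPECTED):
    """Euclidean distance in mm between actual and expected positions."""
    return math.dist(actual, expected)


if __name__ == "__main__":
    for label, point in ACTUAL.items():
        print(f"{label}: {position_error(point):.3f} mm")
```

For this single test point, the distance is roughly 0.29 mm with five rules and 0.21 mm with three rules, so both rule sets place the end-effector well within 1 mm of the target.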

Table 2. Angle errors of 4 joints: test point (173, −101, 25).

| Angles | θ1 (deg) | θ2 (deg) | θ3 (deg) | θ4 (deg) |
|---|---|---|---|---|
| Error value | 0 | 0.264 | 0.44 | 0.264 |

6. Conclusions

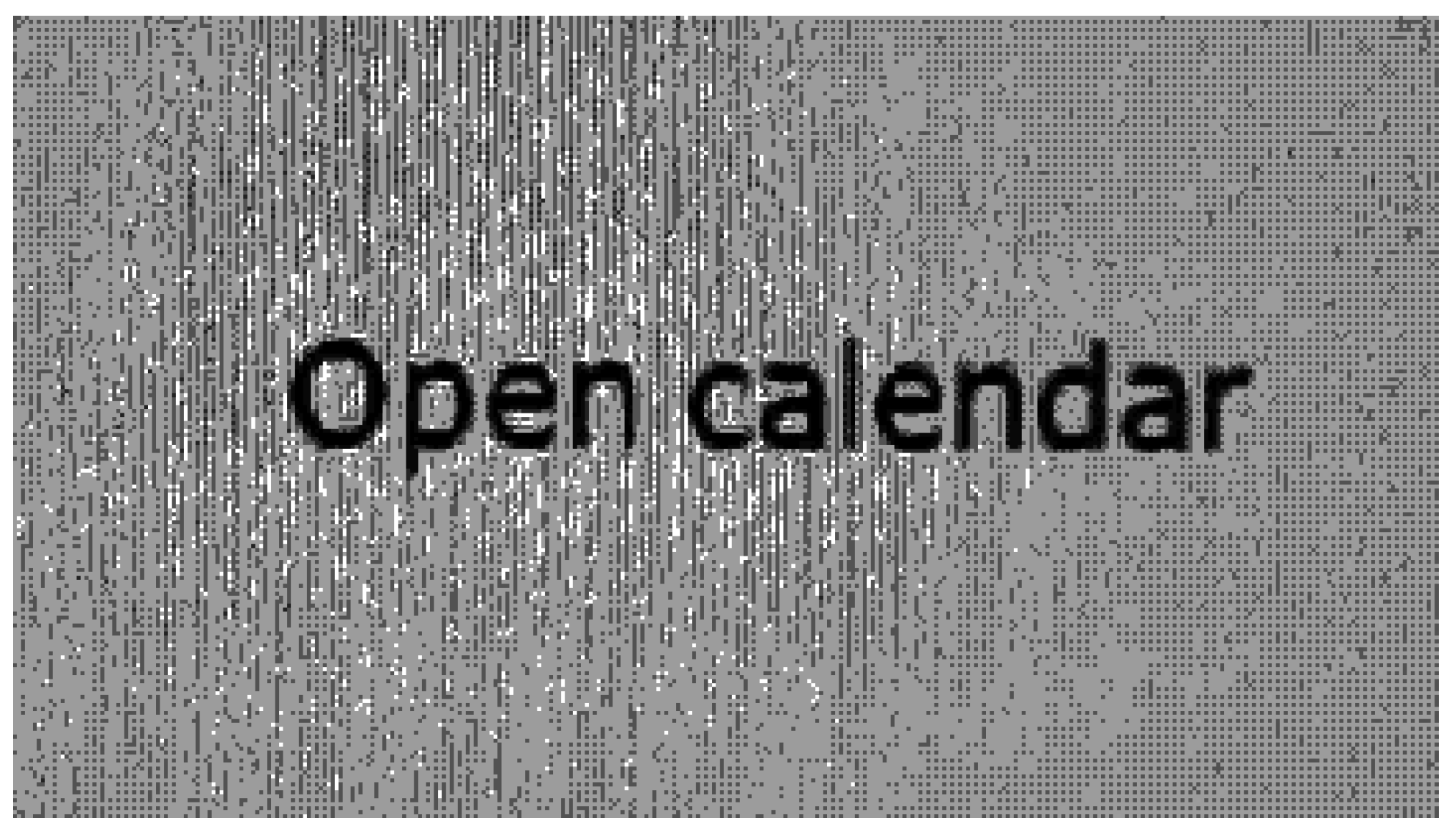

In this paper, an intelligent scheme based on image processing, pattern recognition, character recognition and fuzzy control was proposed to control a robot arm and realize position control. Forward kinematics was applied to identify the relationships between the joints of the robot arm, and its solution was obtained using the Denavit-Hartenberg (D-H) model. In the image processing, we transformed the RGB color space to the HSL color space, which significantly reduced the impact of lighting. Vision Builder for Automated Inspection (VBAI) allowed us to easily configure and benchmark a sequence of visual inspection steps, as well as to deploy the visual inspection system for automated inspection. An optical character recognition program was implemented using VBAI, with the characters corrected by a dictionary process. The character recognition process was performed using 43 features in the training data. The VBAI program is capable of distinguishing the 52 characters of the English alphabet (both uppercase and lowercase letters). In addition, characters could be checked by the use of image processing techniques, and the program also proved capable of recognizing characters in incomplete images. In the control scheme, the control system could obtain the desired position in real time by means of the fuzzy controller. To solve the rule explosion problem in a multi-input fuzzy logic system, a hierarchical fuzzy system was applied in the control design. The human-machine interface was handled by LabVIEW 2010 (National Instruments, Austin, TX, USA), and MATLAB code was used by the controller. Recognition accuracy was 92.4% for images taken from the webcam and 99% with the dictionary process. The experimental results showed that, with the proposed control scheme, the robot arm performed the different assigned functions successfully.