A Hyperspectral Survey of New York City Lighting Technology

Abstract

1. Introduction

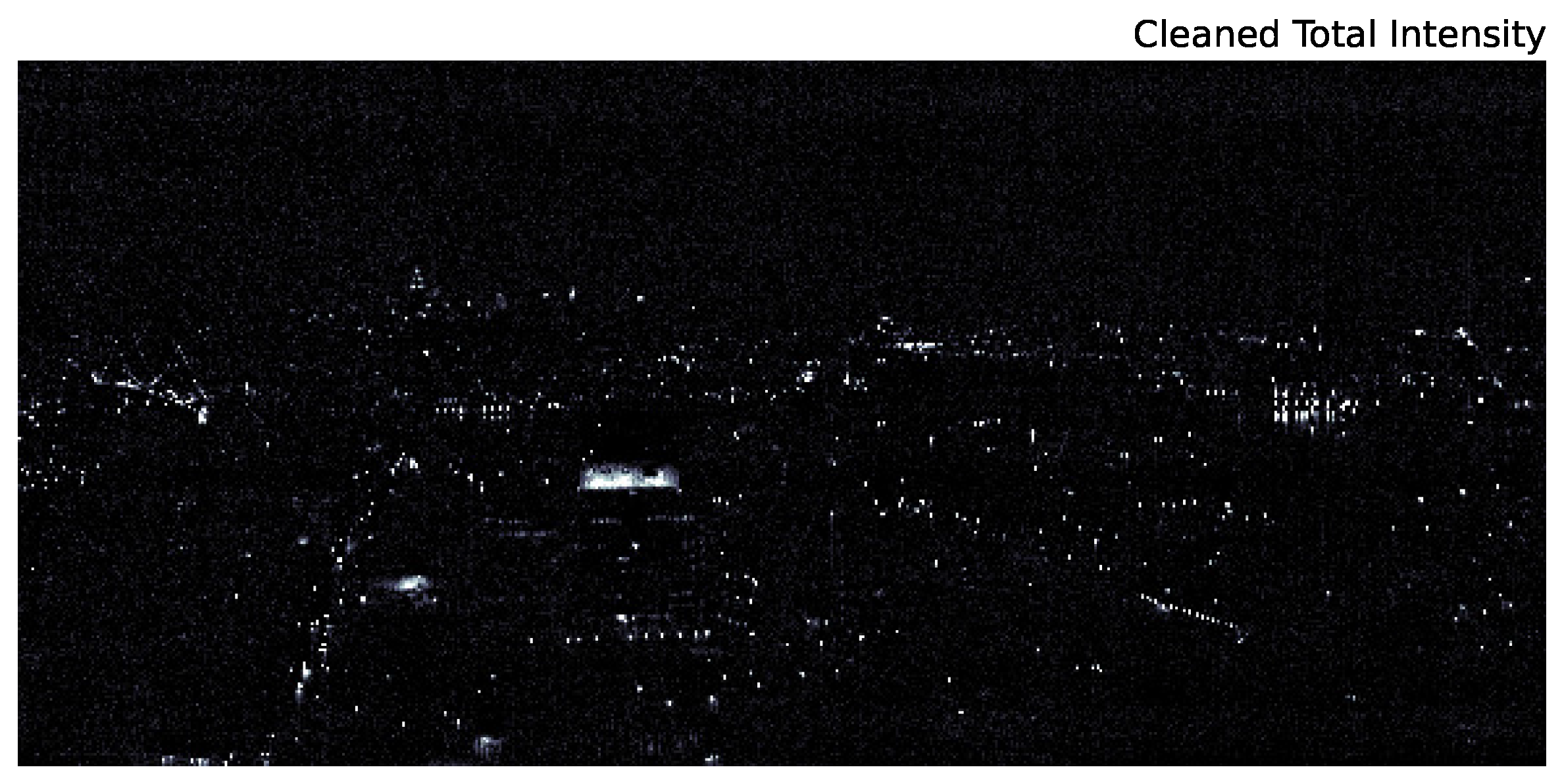

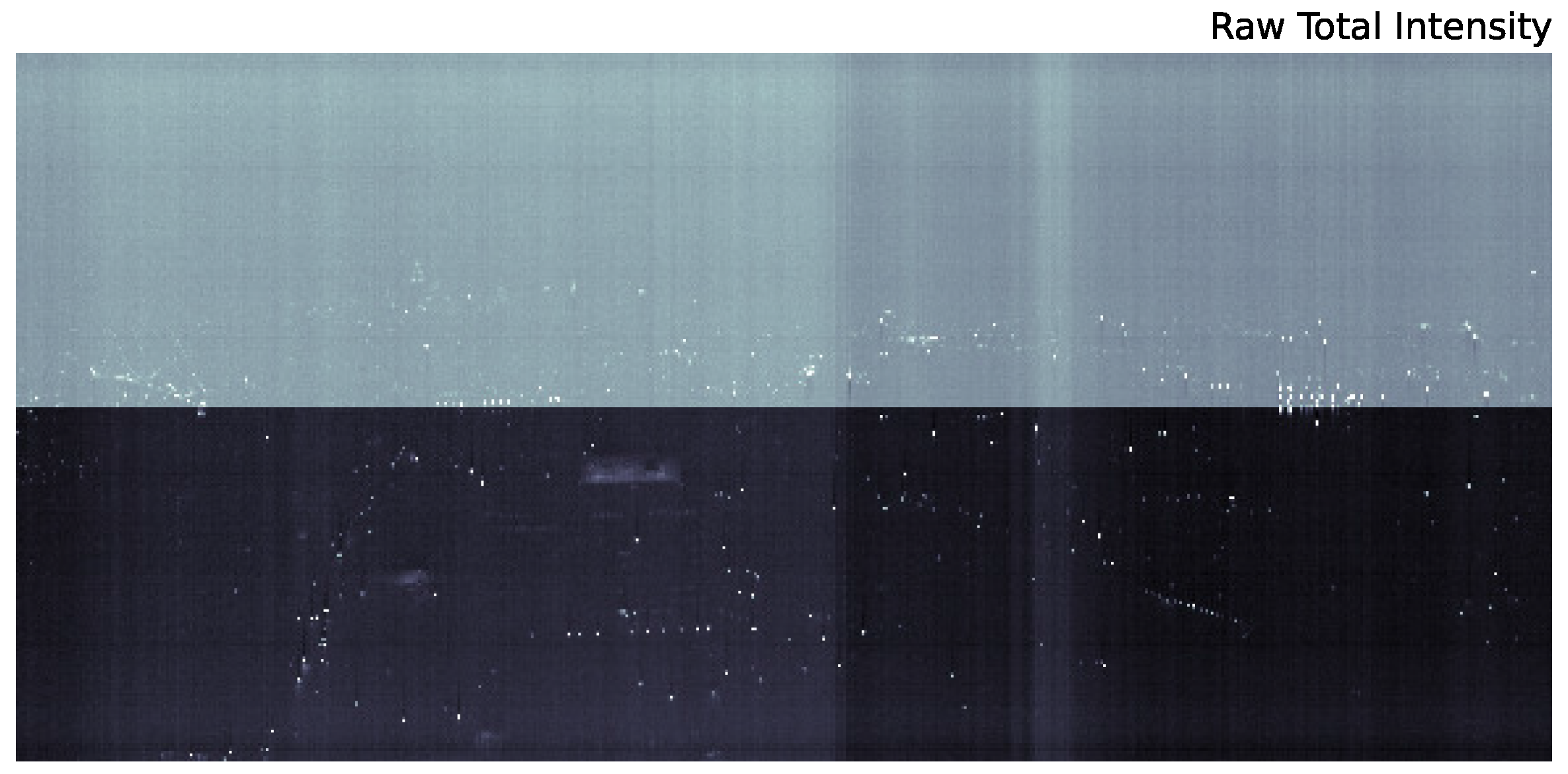

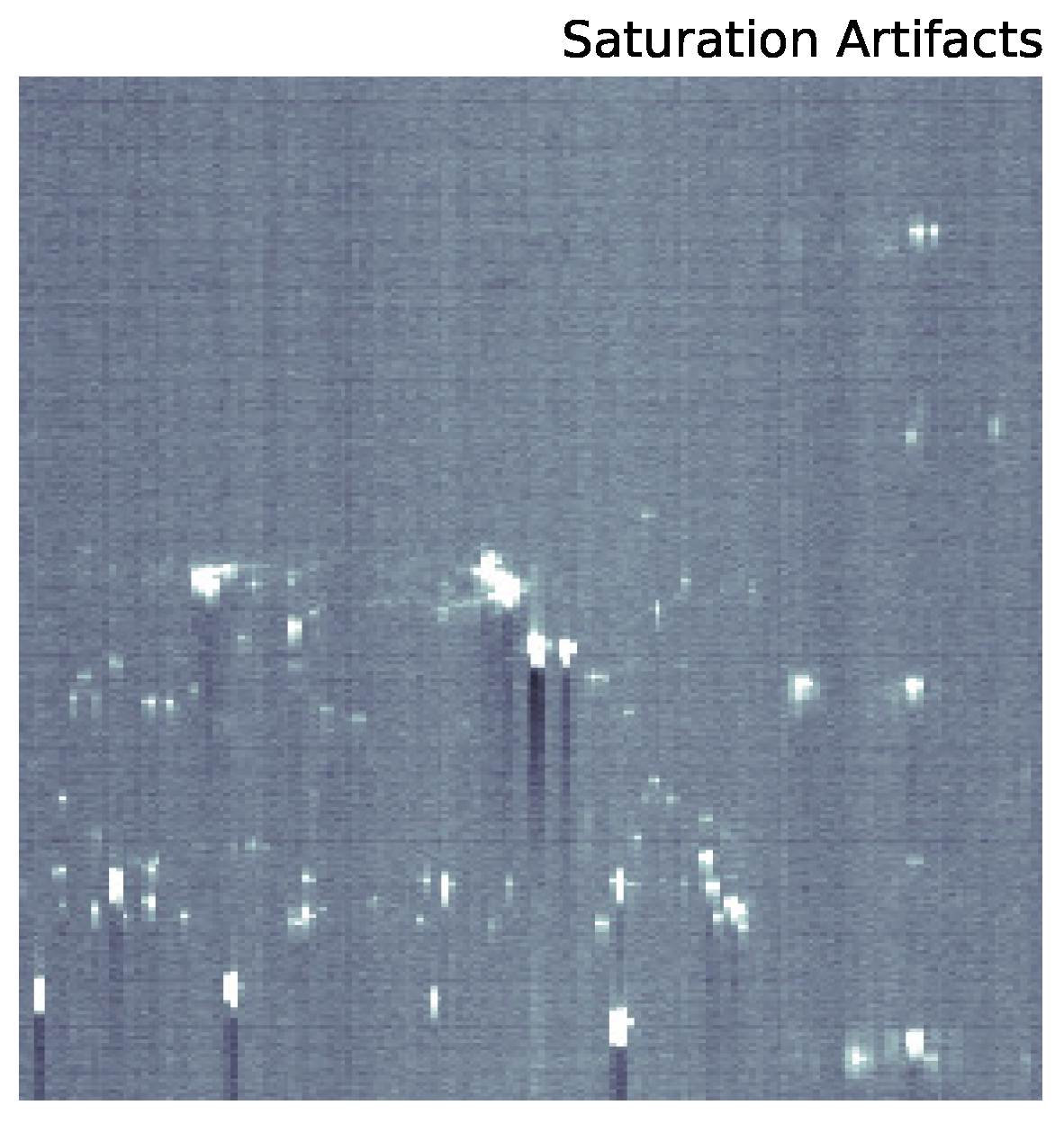

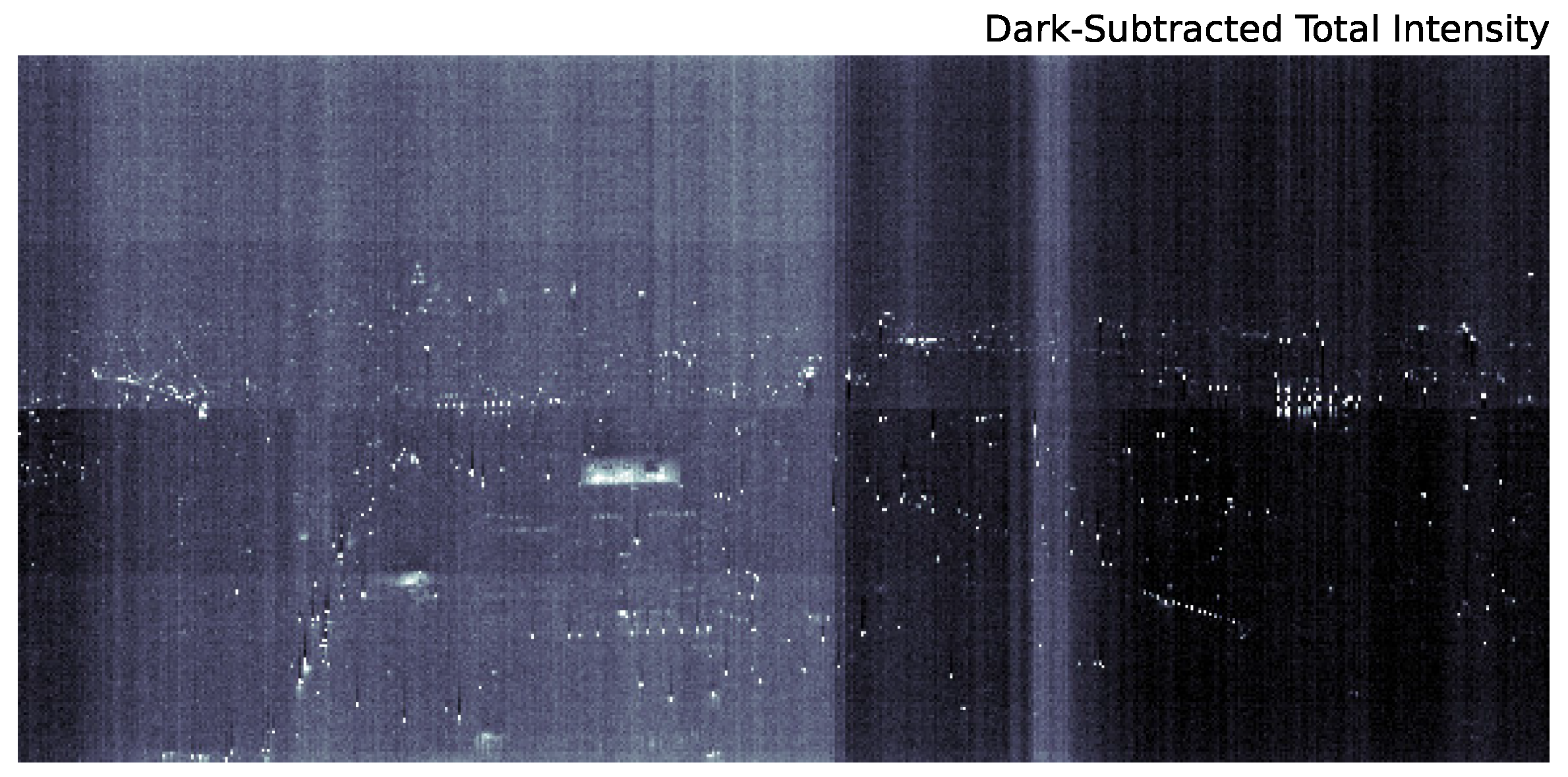

2. Data Acquisition and Reduction

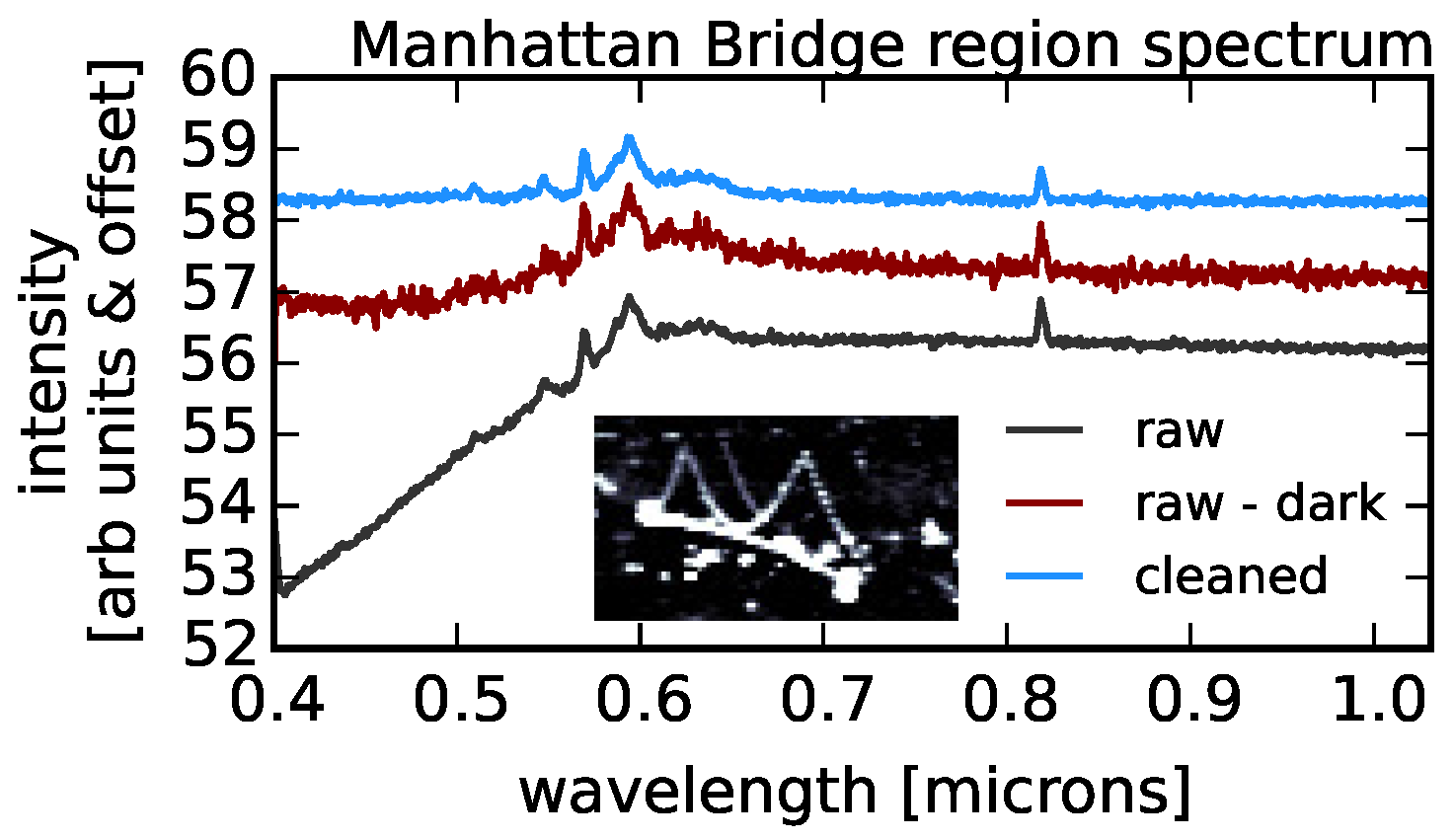

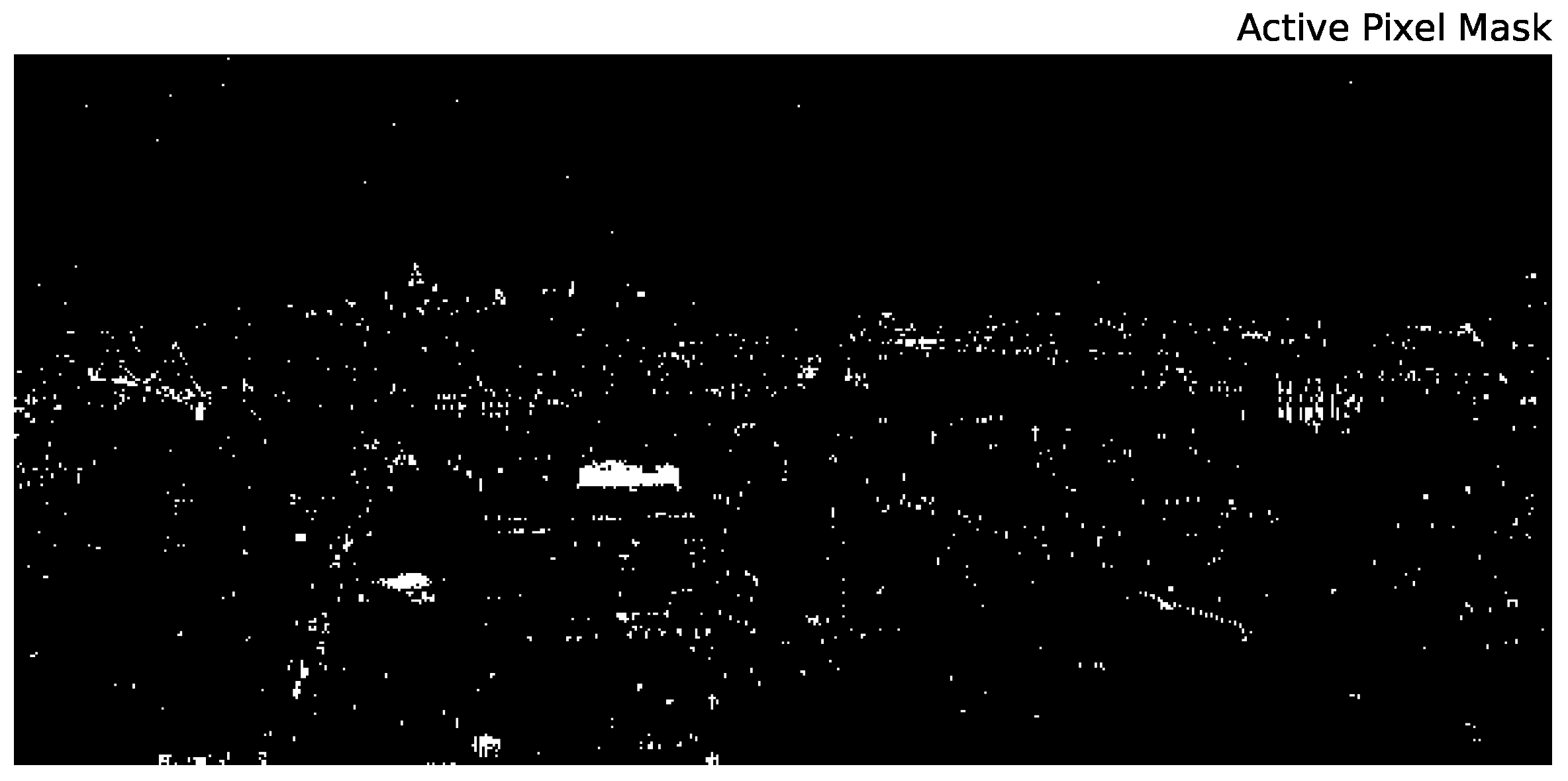

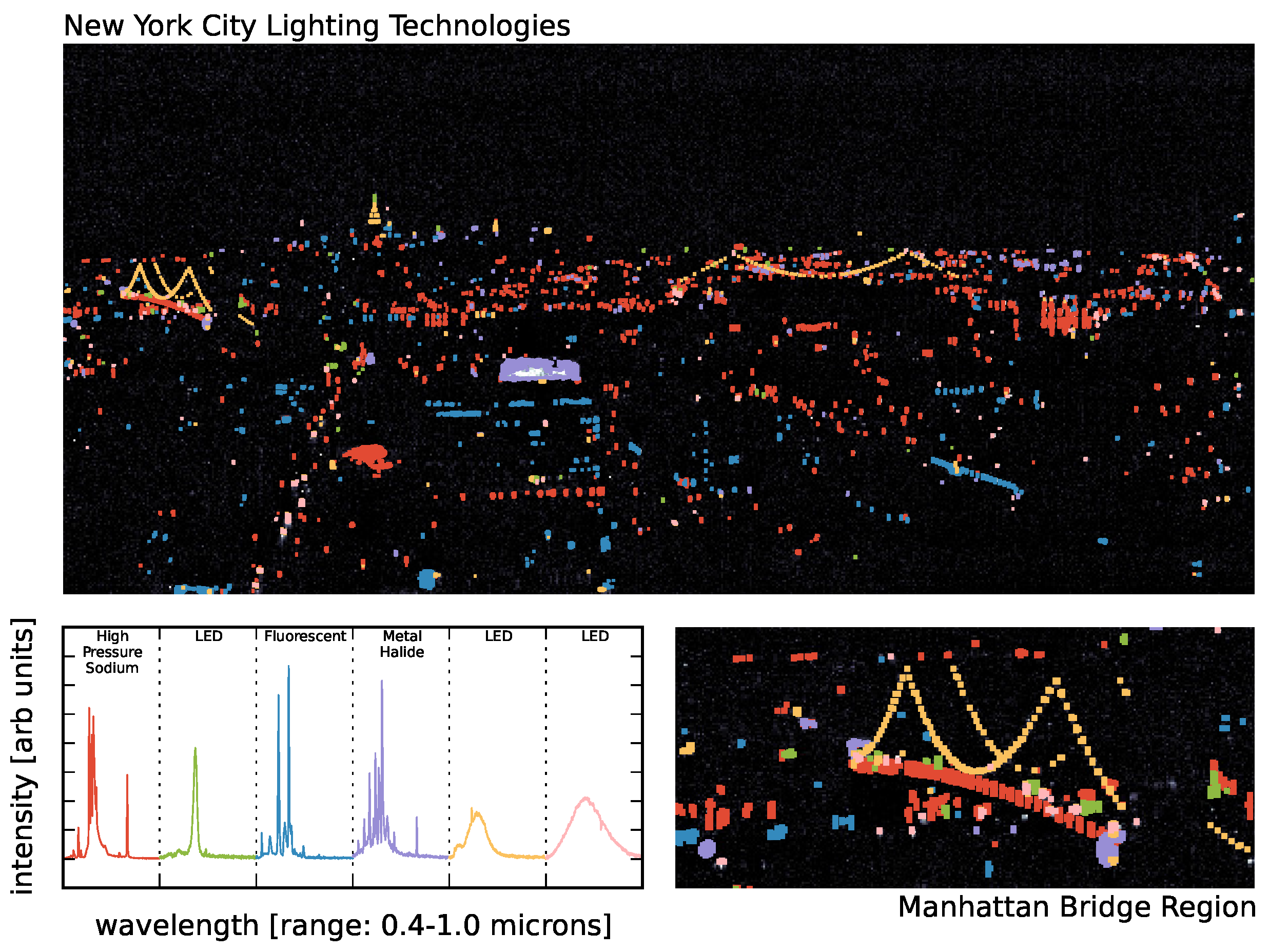

2.1. Data Reduction

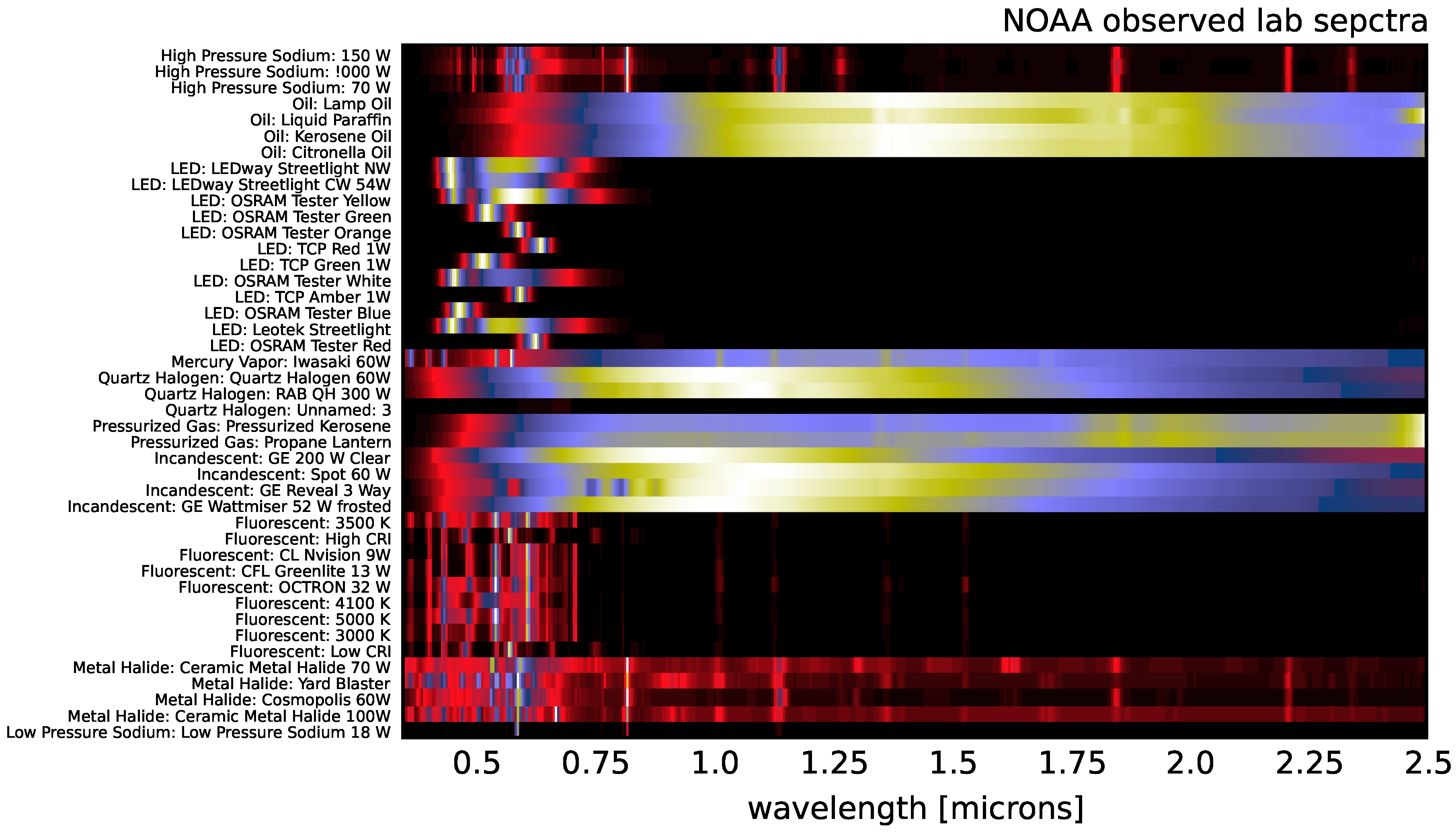

2.2. Supplementary Data

3. Methodology

3.1. k-Means Clustering

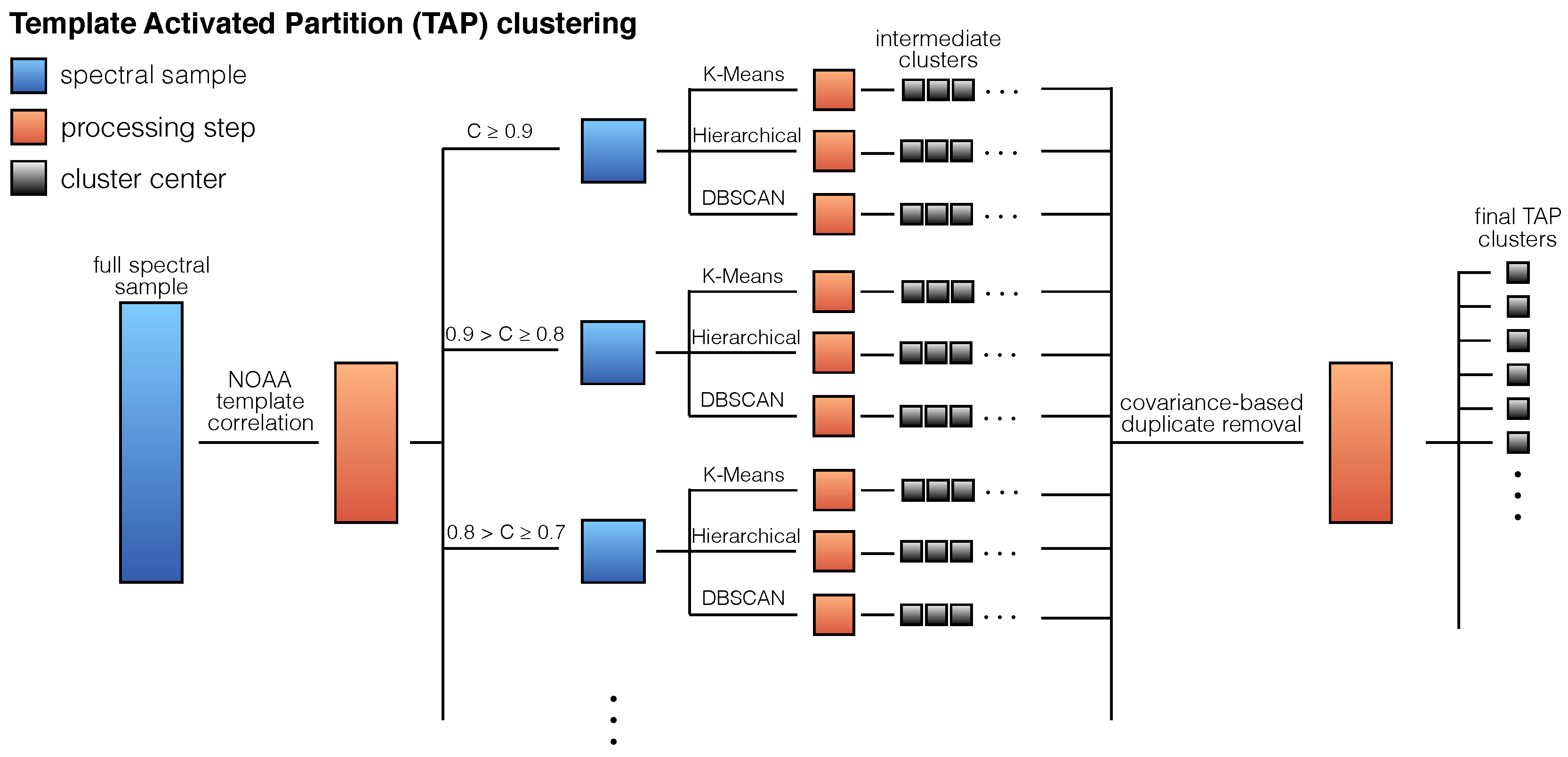

3.2. Template-Activated Partition Clustering

4. Results

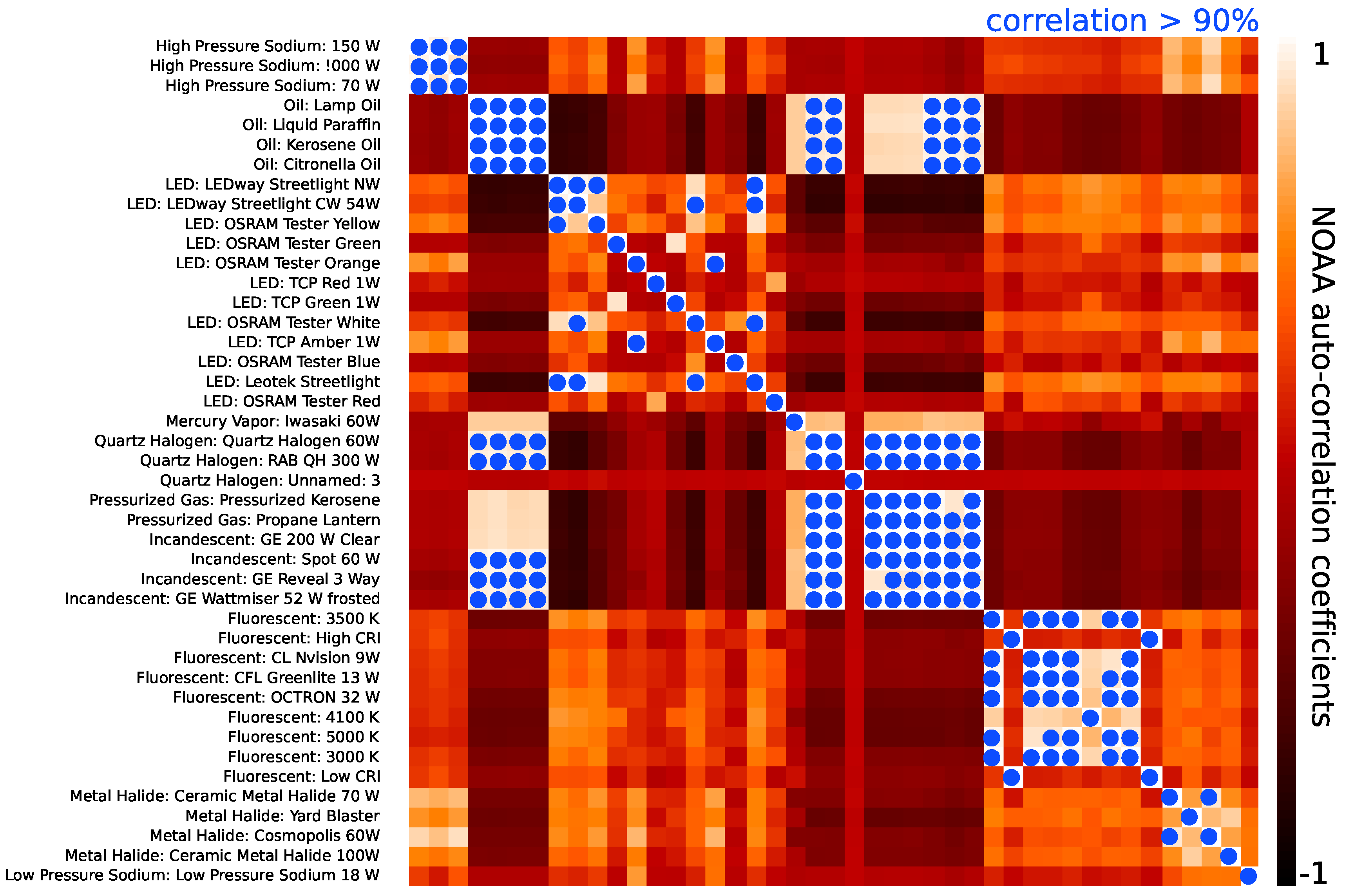

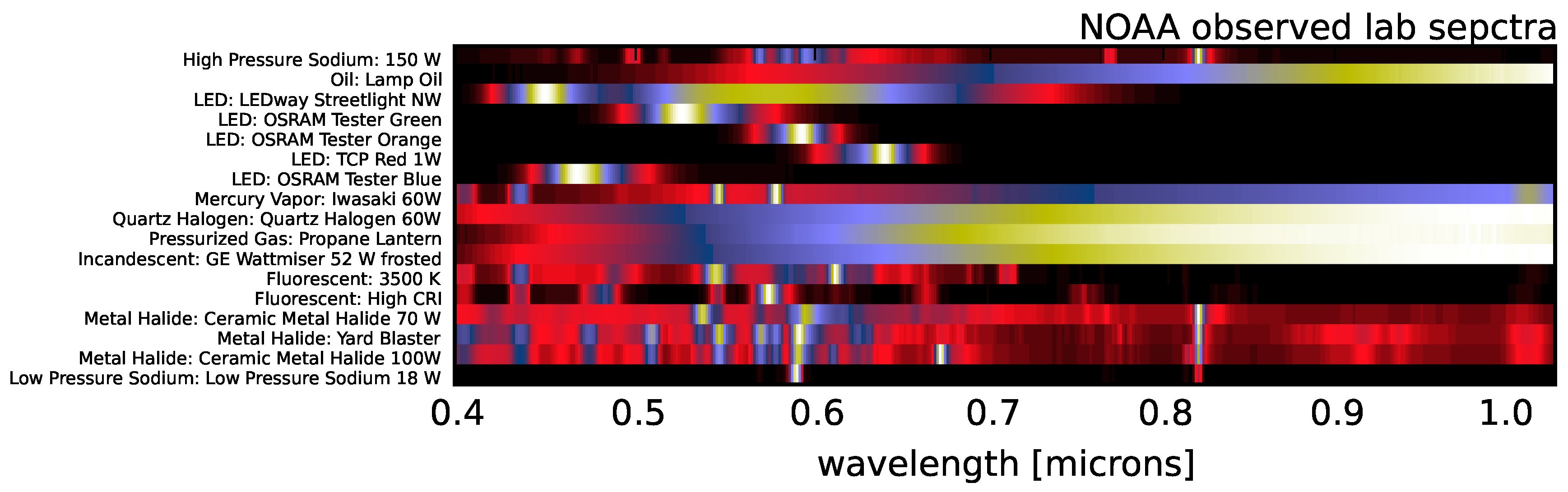

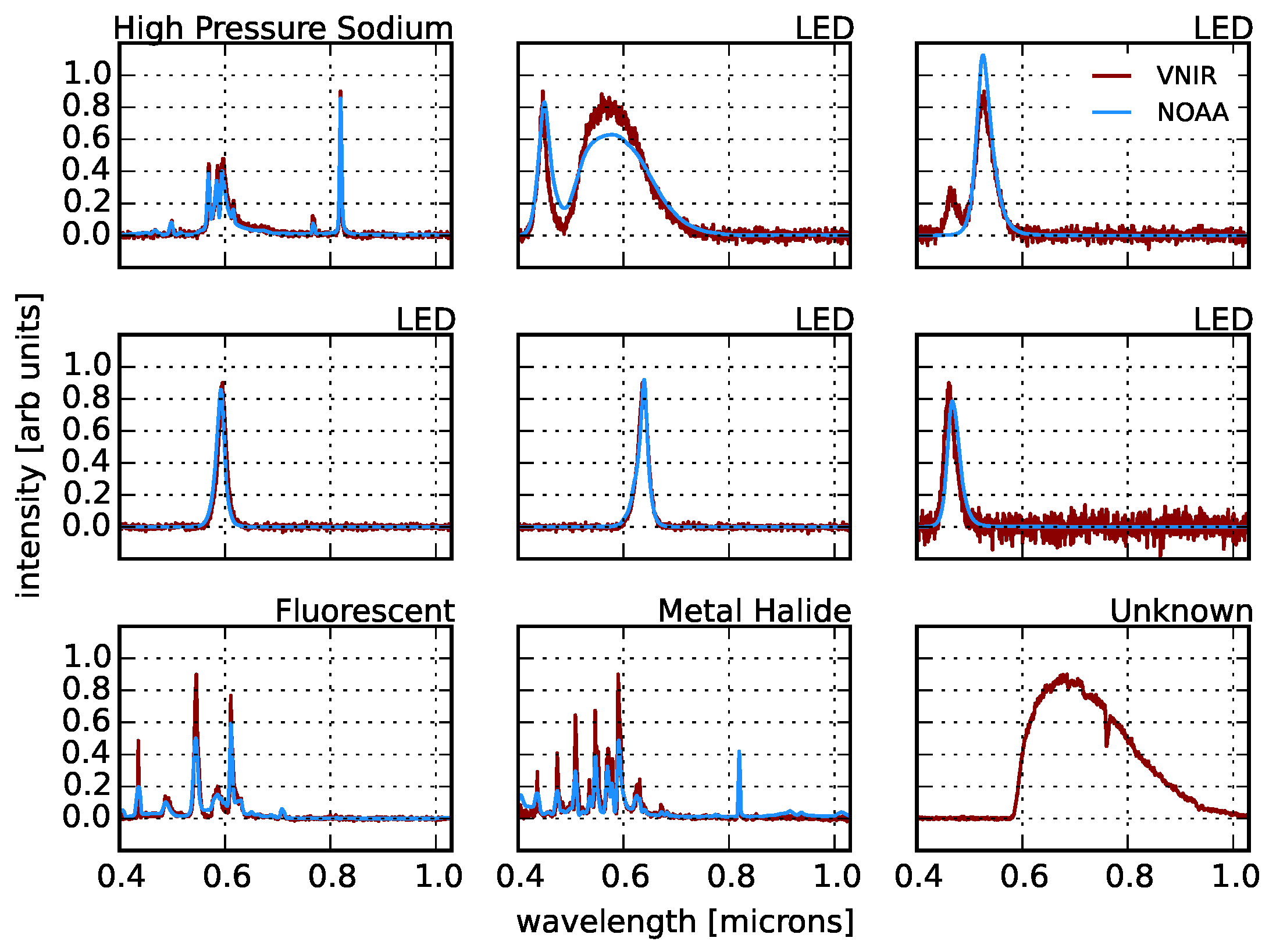

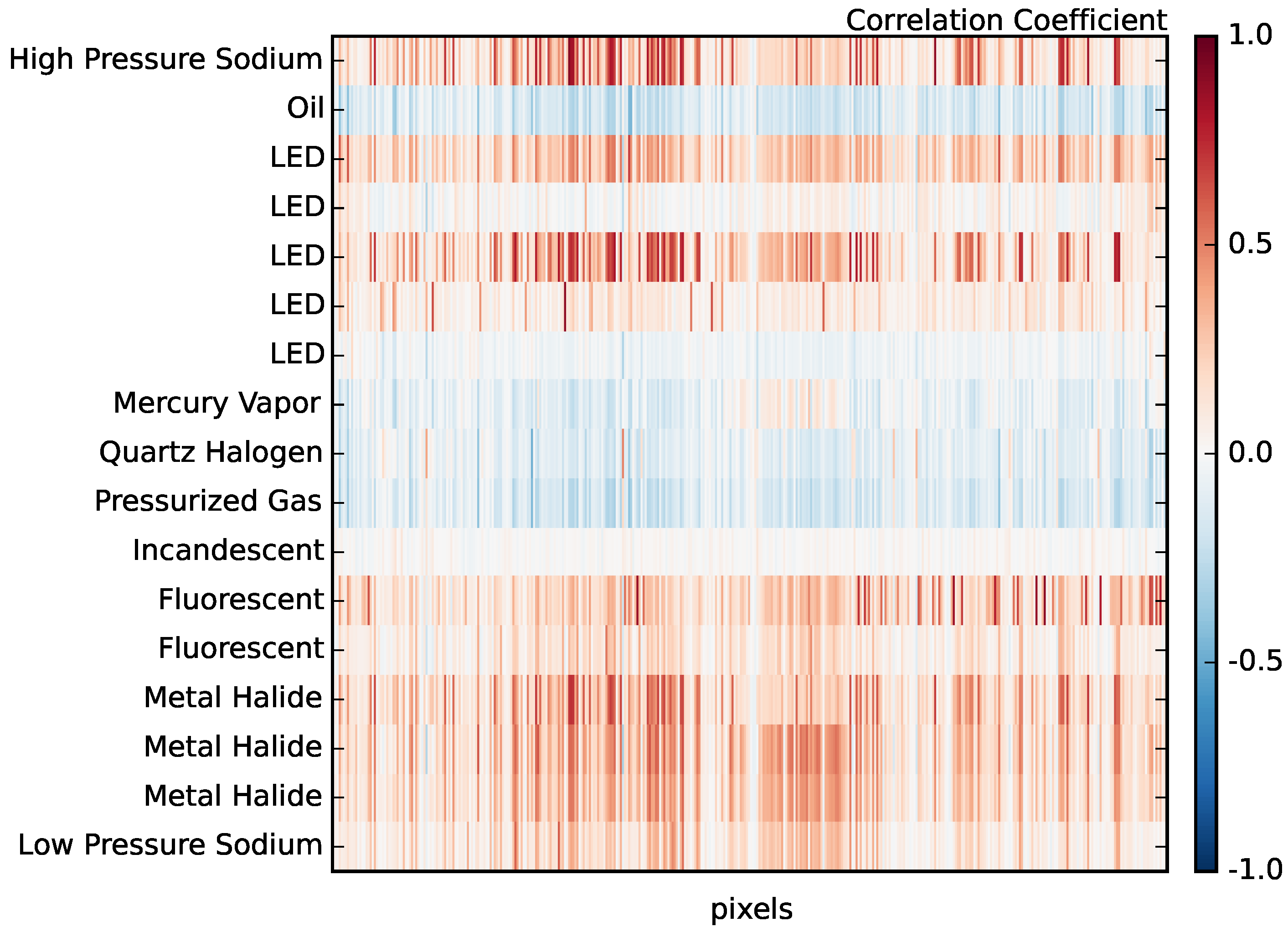

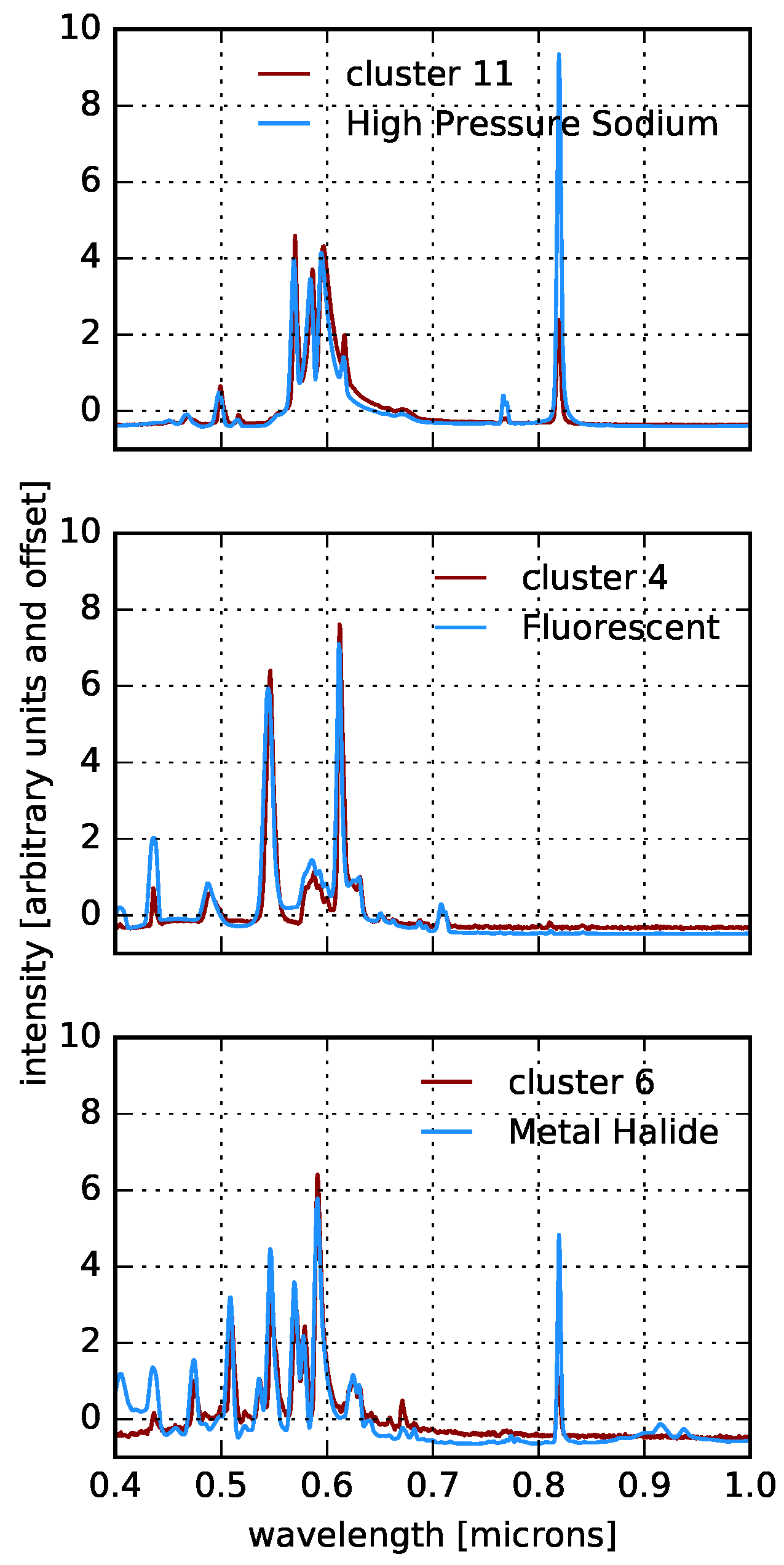

4.1. Correlation with Known Templates

4.2. Unsupervised Learning

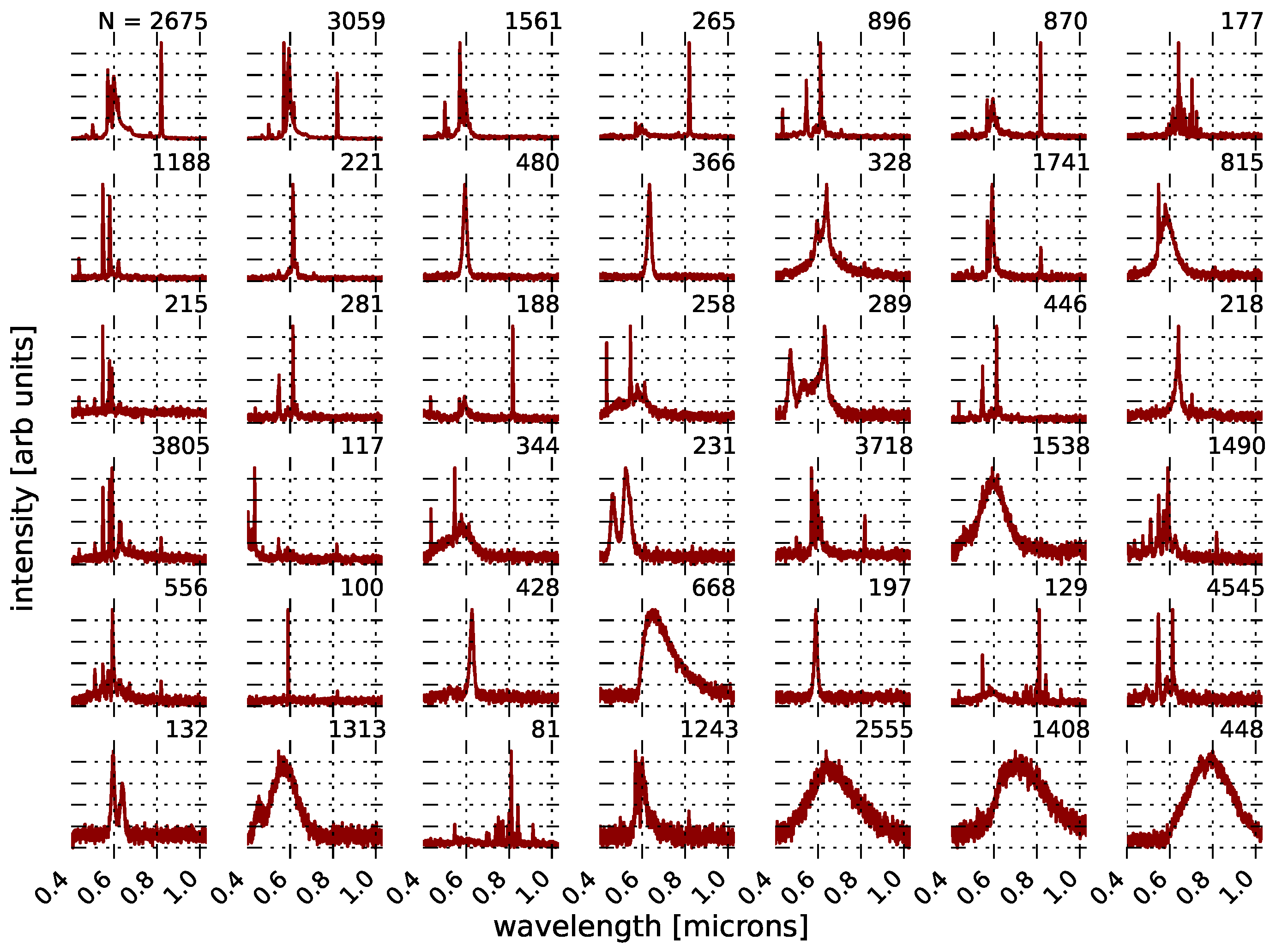

4.2.1. k-Means Clustering

4.2.2. Template-Activated Partition Clustering

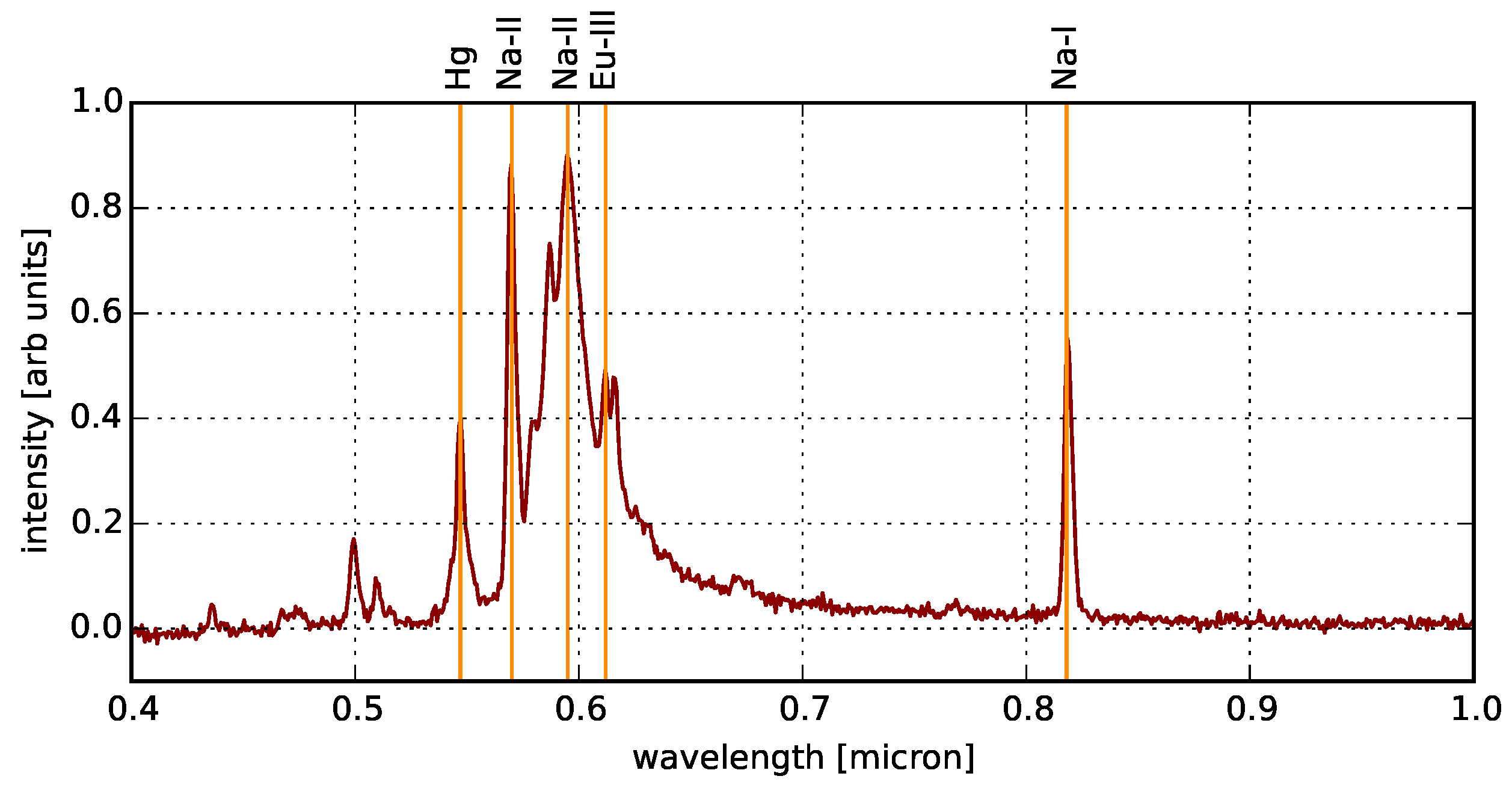

4.3. Aggregate Spectrum

4.4. Applications

4.5. Discussion

5. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest


© 2016 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC-BY) license (http://creativecommons.org/licenses/by/4.0/).


Dobler, G.; Ghandehari, M.; Koonin, S.E.; Sharma, M.S. A Hyperspectral Survey of New York City Lighting Technology. Sensors 2016, 16, 2047. https://doi.org/10.3390/s16122047
