Anchor Node Localization for Wireless Sensor Networks Using Video and Compass Information Fusion

Abstract

Distributed sensing, computing and communication capabilities of wireless sensor networks require, in most situations, an efficient node localization procedure. In the case of random deployments in harsh or hostile environments, a general localization process within global coordinates is based on a set of anchor nodes able to determine their own position using GPS receivers. In this paper we propose another anchor node localization technique that can be used when GPS devices cannot accomplish their mission or are considered to be too expensive. This novel technique is based on the fusion of video and compass data acquired by the anchor nodes and is especially suitable for video- or multimedia-based wireless sensor networks. For these types of wireless networks the presence of video cameras is intrinsic, while the presence of digital compasses is also required for identifying the cameras' orientations.

1. Introduction

A wireless sensor network (WSN) is a collection of small and inexpensive embedded devices, with limited sensing, computing and communication capabilities, acting together to provide measurements of physical parameters or to identify events in known or unknown environments. Their real-life applications are rapidly emerging in a wide variety of domains, ranging from smart battlefields to natural habitat monitoring, precision agriculture, industrial process control or intelligent traffic management.

A large majority of the algorithms developed for WSNs are based on the assumption that all sensor nodes are aware of their position and, furthermore, of the position of nearby neighbor nodes. Since every measurement provided is strictly linked with the sensor node position in the field, a localization process with respect to a local/global coordinate system for each node must be carefully performed. Moreover, other WSN functions that rely on location information (e.g., geographic routing, sensing coverage estimation or nodes' sleep/wake-up procedures) further increase the need for node localization.

The aim of localization is to supply the physical coordinates for all sensor nodes [1,2]. In the case of manually deployed WSNs, this localization process is almost straightforward. For random deployments in hostile terrain or dangerous battlefields, often done through aerial scattering from airplanes, guided missiles or balloons, the node localization problem becomes more complicated and relies on special nodes that can detect their location automatically. These particular nodes are known as anchor or beacon nodes [3–8] and are the cornerstones of every localization technique within global coordinates.

In order to identify their exact location, an almost general solution is to equip the beacon nodes with a Global Positioning System (GPS) receiver. This approach, even though it is based on a mature technology, has some drawbacks that make it impractical for many applications involving random deployments: (a) the GPS receivers are relatively expensive, energy demanding and bulky; (b) the GPS receivers may be confused by environmental obstacles, tall buildings, dense foliage, etc. [9–11]; (c) the GPS receivers cannot work when an insufficient number of satellites are directly visible [9,10,12]; and (d) the GPS receivers cannot completely solve the localization problem in the case of WSNs based on non-isotropic sensors (e.g., video-based sensor networks or multimedia-based sensor networks) because they cannot provide the orientation of the sensor camera.

In this paper we propose a new method to obtain the anchor node location that uses a digital compass (magnetometer), an image taken by a video camera and the exact location data for some geographically-located referential objects (e.g., solitary trees, electricity transmission towers, furnace chimneys, etc.) situated in the deployment area. This method, due to the low price of digital compasses, is particularly suitable for video- or multimedia-based wireless sensor networks [13–17], where nodes already equipped with digital compasses (needed to estimate the cameras' fields of view) can simply become anchor nodes, and more generally whenever a GPS receiver is not considered an appropriate solution.

The remainder of the paper is organized as follows: Section 2 is a brief overview of anchor localization techniques. In Section 3 we describe the three-step methodology underlining the types of information needed. Section 4 presents the kernel of our approach—the triangulation-based procedure that fuses a valid photo image with the related compass information to obtain the beacon node position within global coordinates. In Section 5, an illustrative case study is depicted, while the conclusions are drawn in the last section.

2. Related Work

Due to cost and power consumption constraints, not every node of a randomly deployed WSN can be equipped with localization components; only a small number of nodes, named anchors, carry them. The anchor node localization procedure is basically done through triangulation or trilateration based on a number of known locations. The classical solution used in practice involves GPS receivers that use satellites to obtain the anchors' positions, but this approach is not generally viable due to some known shortcomings of GPS [18].

Reference [3] proposes a GPS-free approach designed for outdoor environments where a group of landmarks with known locations can interact with the wireless nodes through radio signals. The method extends the basic connectivity notion by adding an ideal theoretical model of radio behavior. Unfortunately, the solution cannot be applied in randomly deployed WSNs in harsh environments, where the grid infrastructure of pre-localized landmarks needed to support localization cannot be set up.

Another solution that uses prior known positions for anchor nodes is presented in [2]. The authors developed a method that aims to calculate the best placement for anchors in order to minimize the localization error and to reduce the number of required anchors, but it still requires pre-calculated deployment.

The anchor localization solution described in this paper does not rely on GPS receivers and does not require manual pre-calculated node placement. Instead, it uses a video camera and a compass to estimate the anchor position based on images captured by the camera and the compass information. It is very cost-effective, especially in the case of video- or multimedia-based wireless sensor networks, where only a compass is needed as supplementary node equipment.

3. Methodology Description

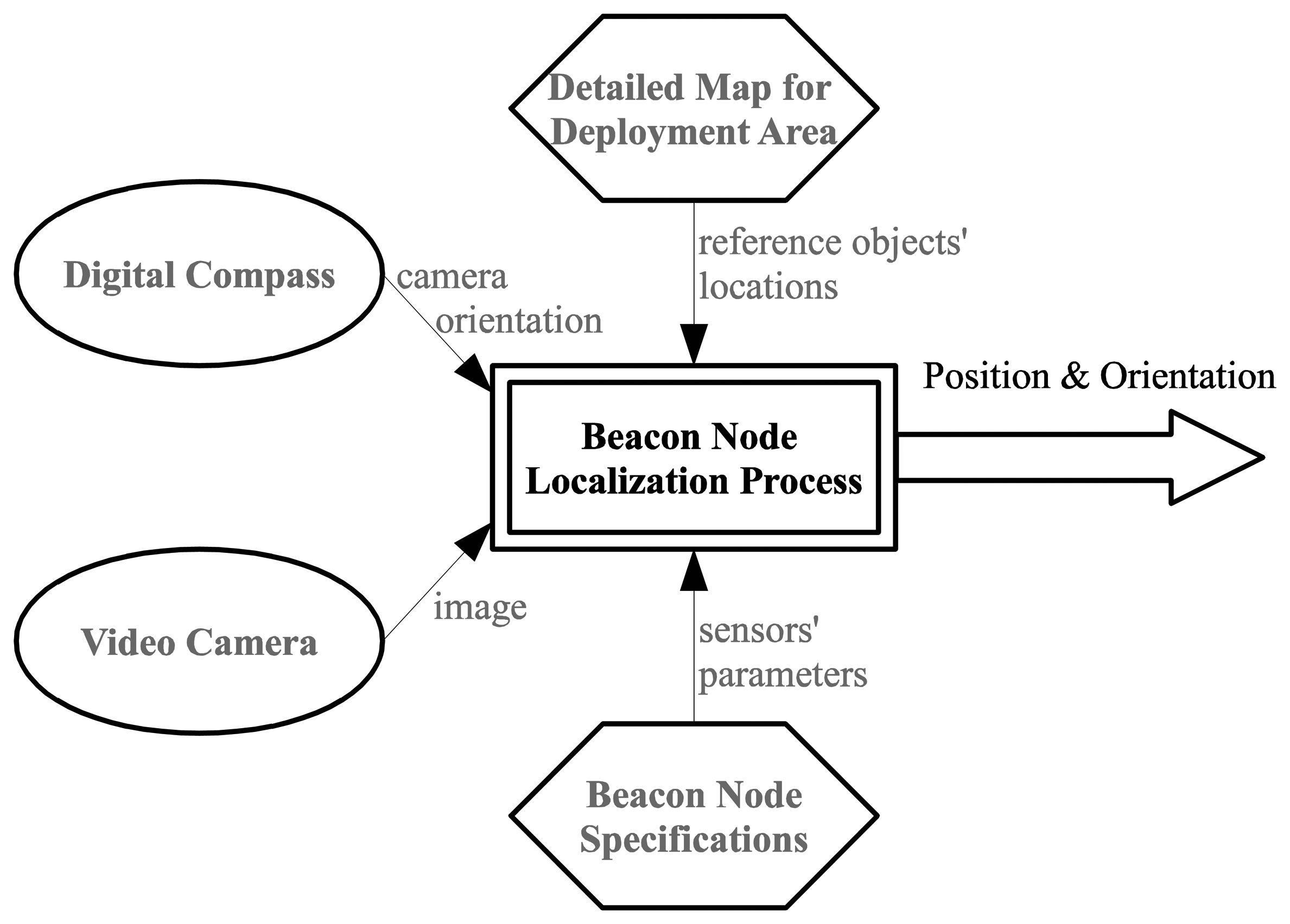

Anchor node localization is a key prerequisite for every localization technique in wireless sensor networks within global coordinates. Our method to determine the exact position of this special type of node is an alternative to using GPS devices in areas where the Earth's magnetic field is not disturbed by structures containing ferrous metals or by electronic interference. It requires information captured by two sensors that equip the node (video and compass), the exact positions of a few reference objects in the deployment area and some constructive parameters of the mentioned sensors, as follows (Figure 1):

- A video image: a valid snapshot provided by the anchor node's camera immediately after its random deployment in the field;
- Compass information: the orientation of the anchor node's camera provided by a digital compass module;
- A set of reference objects' locations in the field of deployment: we have to choose some referential objects (towers, lonely trees, electricity transmission towers, furnace chimneys, etc.) inside the area where the deployment is done and identify their precise locations using detailed maps (e.g., Google maps or military maps). To increase the efficiency of our proposed method, the reference objects have to be easily identifiable in the landscape when using automated object recognition algorithms [19,20];
- Constructive parameters of the video camera and digital compass (camera angle of view, camera depth of view, compass heading accuracy, etc.): unlike the straightforward GPS-based localization, our approach involves some computations that require knowing the constructive parameters of sensors.

Based on the information described above, a three-step methodology is performed after the random deployment in the field:

- (1) Take a valid photo: the camera takes a valid photo and sends it to the base station (e.g., situated on the airplane which made the deployment), where enough computing and energy power is available;
- (2) Identify reference objects within the photo: this step is done using an object recognition procedure applied to the video image;
- (3) Obtain the exact location through a triangulation-based procedure: the global coordinates of the anchor node are obtained by analyzing the video image in conjunction with the angle value provided by the compass using a triangulation technique. This procedure is presented in detail in the following section.

4. Video/Compass Based Node Localization Using Triangulation

After anchor node deployment, a startup image is gathered by its video sensor and sent to the central point where an automatic shape recognition algorithm or even a human operator may identify objects from a given reference object set ℜ:

$$\Re = \{\, Obj_i \mid Obj_i \in Obj(Map),\; i = 1, \ldots, N_{RO} \,\} \qquad (1)$$

This set is created prior to the sensor deployment and is based on an analysis of the known information about the deployment field. It will contain NRO representative objects Obji selected from the set Obj(Map) of all identifiable objects with known geographical positions from the deployment map. They have to be static (with fixed position) and easy to identify visually in images. They should also preferably be thin in order to reduce the processing error. For object localization we should use a military or a highly detailed topographic map if available, but even Google Maps in conjunction with GPS localization offers a good estimation, as demonstrated by the case study presented in Section 5.

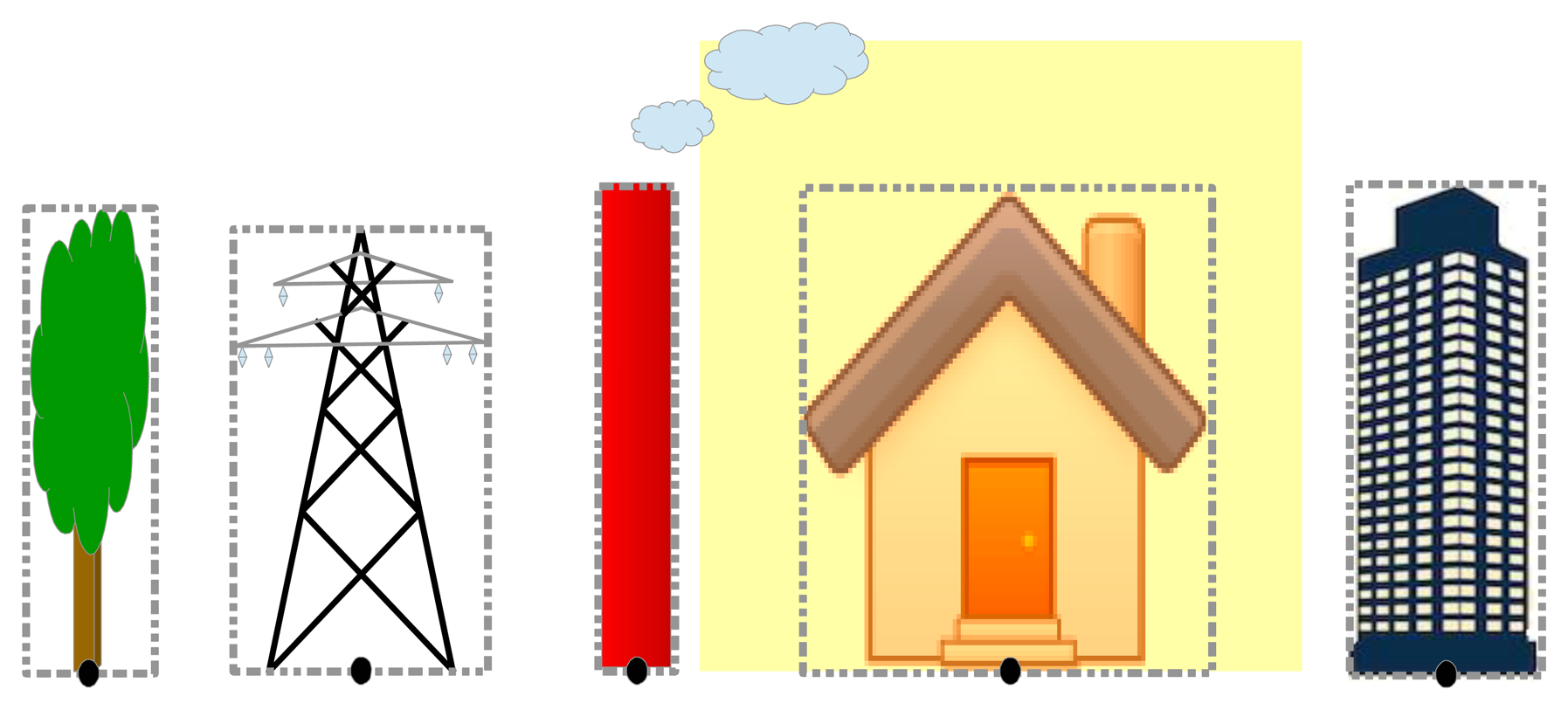

All objects that belong to ℜ will be marked with a reference point calculated as the midpoint of the lower side of the bounding box framing the object. The position of the reference point is selected so as to be invariant to the height of the objects. However, if the dimensions of some of the objects from ℜ are known, we can use this information to estimate the distance between the camera and these objects and therefore validate, or apply small corrections to, the result once the main localization algorithm has finished. Examples for choosing the reference point are depicted in Figure 2.

During setup processing, all reference objects from ℜ that could be identified in the setup images gathered from the anchors' sensors should be marked. Considering K deployed anchors, this procedure results in Γk subsets of ℜ, one for each anchor node k ∈ [1…K]. A supplementary validation has to be applied on subsets in order to eliminate reference objects placed in positions inappropriate for localization procedure. This includes cases of obstructed objects, overlapping objects, and objects intersecting image edges. The further processing considers only the node set Σ containing anchors having card(Γk) ≥ 2. To validate a candidate anchor the following algorithm is proposed, where σi represents the image gathered in the setup phase from the anchor i:

| Step | Operation |
|---|---|
| 1 | set Γi ≔ { } |
| 2 | foreach oj ∈ ℜ do |
| 3 | if σi *contains* oj then Γi ≔ Γi ∪ {oj} |
| 4 | foreach ok ∈ Γi do |
| 5 | if *obstructed or partially visible* (ok, σi) then |
| 6 | Γi ≔ Γi ∖ {ok} |
| 7 | foreach om ∈ Γi do |
| 8 | foreach on ∈ Γi do |
| 9 | if (m ≠ n) and (om ∩ on ≠ { }) then |
| 10 | Γi ≔ Γi ∖ {on, om} |
| 11 | if card(Γi) ≥ 2 then |
| 12 | validate anchor i |
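The validation procedure above can be sketched in Python as follows. This is only an illustrative sketch: the three predicates `contains`, `badly_visible` and `overlap` are hypothetical placeholders for the object recognition step, which the text does not specify, and the function names are our own.

```python
from typing import Callable, Hashable, Iterable, Set


def usable_reference_objects(
    reference_set: Iterable[Hashable],
    contains: Callable[[Hashable], bool],
    badly_visible: Callable[[Hashable], bool],
    overlap: Callable[[Hashable, Hashable], bool],
) -> Set[Hashable]:
    """Build the subset of reference objects usable for one setup image.

    The predicates are assumed to be already bound to that image:
    contains(o)      -> object o was recognised in the image
    badly_visible(o) -> o is obstructed, cut by the image edge, etc.
    overlap(a, b)    -> a and b overlap in the image
    """
    # Steps 1-3: keep the reference objects recognised in the image.
    gamma = {o for o in reference_set if contains(o)}
    # Steps 4-6: drop obstructed or partially visible objects.
    gamma = {o for o in gamma if not badly_visible(o)}
    # Steps 7-10: drop both members of every overlapping pair.
    overlapping = set()
    for a in gamma:
        for b in gamma:
            if a != b and overlap(a, b):
                overlapping.update((a, b))
    return gamma - overlapping


def is_valid_anchor(gamma: Set[Hashable]) -> bool:
    # Steps 11-12: at least two reference objects are needed.
    return len(gamma) >= 2
```

Collecting the overlapping objects first and removing them afterwards avoids modifying the set while it is being iterated, which the tabular form leaves implicit.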

The next step of the localization algorithm is based on the well-known triangulation method [21,22]. Triangulation is the process of determining the location of a point by measuring angles to it from two other points whose positions on a map are known. When more than two reference points are available, triangulation can be applied repeatedly to all possible pairs of distinct points, and the results averaged in order to decrease the error.

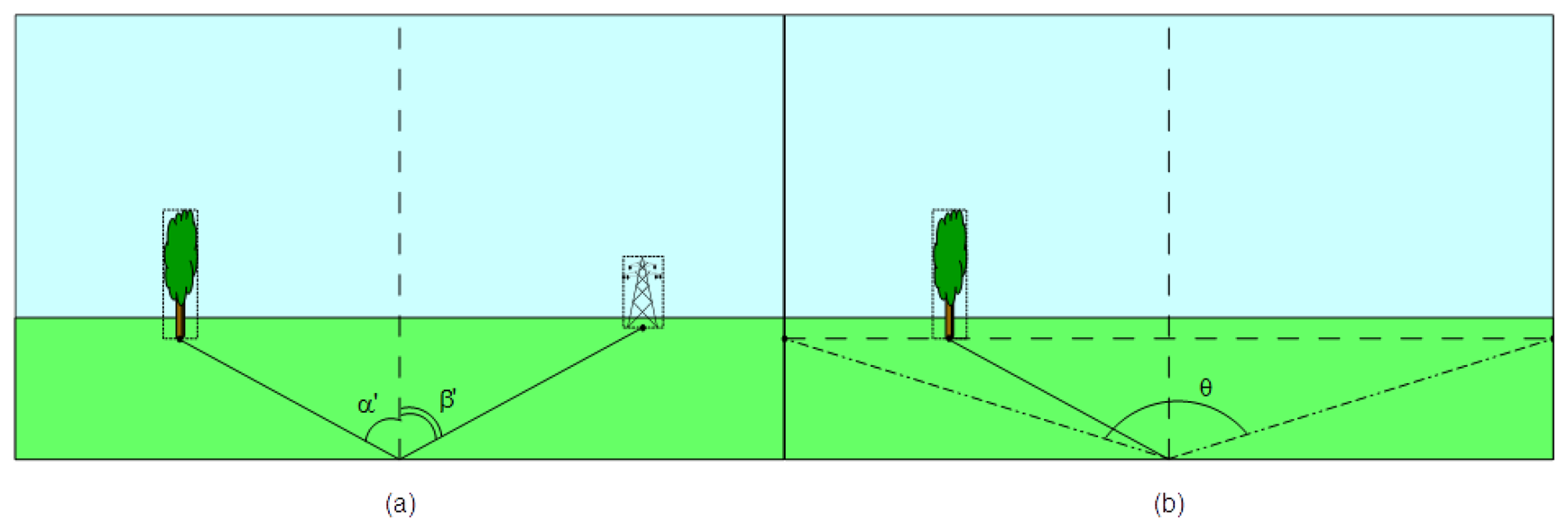

The first step consists in determining the angles formed at the camera between its axis and the two reference points. For this we consider the camera model presented in Figure 3. The model is expressed by the parameters of its Field of View (FOV): the angle of view ω, the minimum (Dmin) and the maximum depth of field (Dmax). For an ideal camera, the field of view represents the volume of a 3D pyramid containing the part of the environment visible to the camera. The angle of view is considered on a horizontal plane projection of the FOV. All points from this volume are visible in the captured image as long as no obstacle obstructs the projection ray from the point to the optical center of the camera. However, the depth of field of a real camera is constrained due to limited resolution and distortion of its lenses. Some objects that are too close or too far from the lens will appear blurred. Therefore, the field of view depth will be limited to a range described by a [Dmin, Dmax] interval.
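As a minimal sketch of this camera model, the function below tests whether a point lies inside the horizontal projection of the FOV. It assumes a local planar frame with x pointing East and y pointing North, a camera heading given as a bearing in degrees clockwise from North, and it ignores obstacles; the function name and signature are illustrative, not taken from the paper.

```python
import math


def in_field_of_view(cam_xy, heading_deg, point_xy, omega_deg, d_min, d_max):
    """Return True if point_xy falls inside the horizontal FOV sector."""
    dx = point_xy[0] - cam_xy[0]
    dy = point_xy[1] - cam_xy[1]
    dist = math.hypot(dx, dy)
    # Depth constraint: the point must lie within [d_min, d_max].
    if not (d_min <= dist <= d_max):
        return False
    # Bearing of the point, clockwise from North (the y-axis).
    bearing = math.degrees(math.atan2(dx, dy)) % 360.0
    # Signed offset from the camera axis, wrapped into [-180, 180).
    off_axis = (bearing - heading_deg + 180.0) % 360.0 - 180.0
    # Angular constraint: within half the angle of view on either side.
    return abs(off_axis) <= omega_deg / 2.0
```

For instance, with ω = 39° and a depth range of [0.6 m, 450 m], a point 100 m straight ahead of the camera is inside the sector, while a point 500 m ahead or 90° off-axis is not.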

To determine the absolute camera position using two reference objects we first define a Cartesian reference system. Considering the map of the deployment area, we choose and mark an arbitrary point situated near the lower-left corner of the area. Then we measure on the map the position of this point in geographic coordinates as a (latitude, longitude) pair. Next, we consider this point as the origin of a Cartesian reference system having the y-axis oriented along the North direction on the map, and the x-axis pointing toward East.

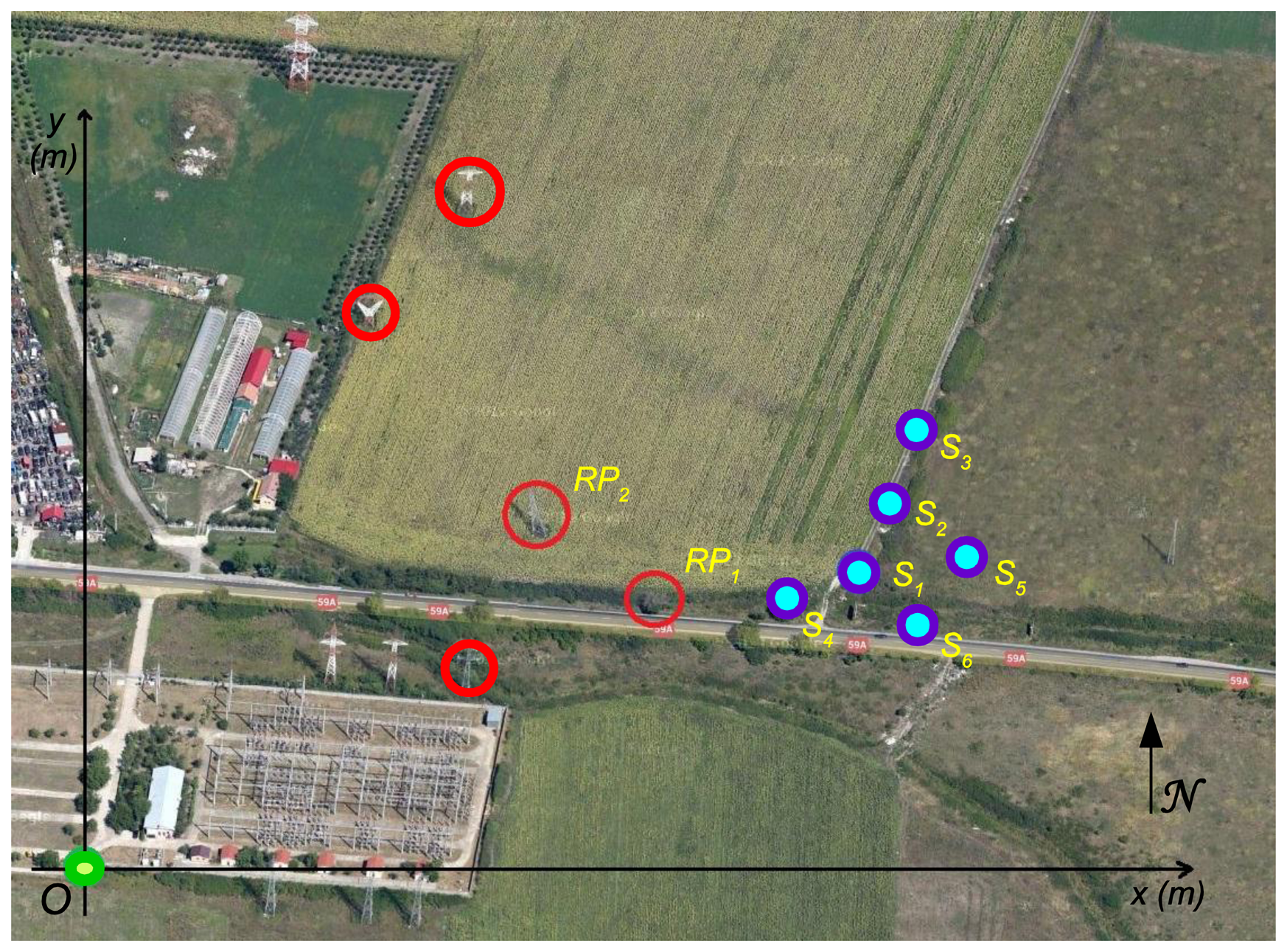

Figure 4 depicts an example of a referential system defined on a topographical map. For easy identification, the reference objects are marked with red circles on the map, while the chosen origin is highlighted in green.

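Then we can express the absolute locations of the reference objects in this reference system. For this, we use the haversine Equation (2), based on the inverse tangent function, to calculate the great circle distance between two points on the Earth's surface [23]:

$$d = 2R \cdot \operatorname{atan2}\!\left(\sqrt{a},\ \sqrt{1-a}\right), \qquad a = \sin^2\!\left(\frac{\Delta\varphi}{2}\right) + \cos\varphi_1 \cos\varphi_2 \sin^2\!\left(\frac{\Delta\lambda}{2}\right) \qquad (2)$$

where φ1 and φ2 are the latitudes of the two points, Δφ and Δλ are their differences in latitude and longitude (in radians), and R is the mean Earth radius.

Therefore, to calculate the absolute coordinates of the reference objects in the proposed Cartesian reference system, we can consider the distances between the origin point and the projections of the corresponding objects' reference points on the two axes. These projections will have Δφ = 0 on the x-axis and Δλ = 0 on the y-axis. Considering (φo, λo) and (φRP1, λRP1) as the geographical coordinates of the origin and the coordinates of the first reference point, we can use Equations (3) and (4) for absolute position computation:

$$x_{RP1} = 2R \cdot \operatorname{atan2}\!\left(\sqrt{a_x},\ \sqrt{1-a_x}\right), \qquad a_x = \cos^2\varphi_o \, \sin^2\!\left(\frac{\lambda_{RP1}-\lambda_o}{2}\right) \qquad (3)$$

$$y_{RP1} = 2R \cdot \operatorname{atan2}\!\left(\sqrt{a_y},\ \sqrt{1-a_y}\right), \qquad a_y = \sin^2\!\left(\frac{\varphi_{RP1}-\varphi_o}{2}\right) \qquad (4)$$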

Then, the position of the second reference point (xRP2, yRP2) will be computed using a similar set of formulas.
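The conversion from geographic coordinates to the local Cartesian system can be sketched as below. The code uses the standard atan2 form of the haversine formula; the mean Earth radius of 6,371 km and the function names are assumptions made for illustration. Because haversine distances are unsigned, the sketch also assumes that every point lies north-east of the origin, which matches the choice of an origin near the lower-left corner of the map.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # assumed mean Earth radius, in metres


def haversine_m(lat1, lon1, lat2, lon2, radius=EARTH_RADIUS_M):
    """Great-circle distance in metres between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * radius * math.atan2(math.sqrt(a), math.sqrt(max(0.0, 1 - a)))


def to_local_xy(origin, point):
    """Project a (lat, lon) point onto the local axes through the origin.

    x is the distance to the projection on the East axis (same latitude
    as the origin), y the distance to the projection on the North axis
    (same longitude as the origin).
    """
    lat_o, lon_o = origin
    lat_p, lon_p = point
    x = haversine_m(lat_o, lon_o, lat_o, lon_p)  # latitude difference is 0
    y = haversine_m(lat_o, lon_o, lat_p, lon_o)  # longitude difference is 0
    return x, y
```

With the origin of the case study, `to_local_xy((45.759941, 21.158411), (lat, lon))` would give the (x, y) position in metres of any reference object whose geographic coordinates are read from the map.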

To finalize the first step we use the image captured by the sensor camera where the two reference objects were identified. The aim is to determine the angles α′ and β′ formed by the camera axis and the two reference points. A vertical line drawn through the center of the image, and therefore aligned with the camera's direction of sight, is used as reference. Angles are measured counterclockwise relative to this direction, as depicted in Figure 5a. However, these values are strongly influenced by the camera's tilt, perspective and angle of view. To eliminate this influence we have to consider the ratio between the angle of view ω and the measured angle of view θ corresponding to the reference point depth. The measured angle of view is obtained from the triangle formed in the image by the center of the bottom margin and the two vertices that correspond to the reference point perspective. These vertices are determined by the intersection of a horizontal line traversing the reference point with the left and right margins, as presented in Figure 5b.

Starting from these values, the next step consists in computing the values of the reference angles relative to the geographic North. First we use the compass measurement to obtain the orientation of the camera axis, denoted here by the angle γ. In addition we should consider the magnetic declination at the geographical position of the deployment field. Magnetic declination represents the angle between geographic and magnetic North at a specific location and can be positive or negative [24]. Despite the relative vicinity of the geomagnetic North Pole and the geographic North Pole, the error could be significant. The correction consists in adding this magnetic declination mag_decl to the measured angle. The final values of the reference points' angles α and β are given by Equation (5), where θRP1 and θRP2 are the measured angles of view at the depth of each reference point:

$$\alpha = \gamma + mag\_decl + \alpha' \cdot \frac{\omega}{\theta_{RP1}}, \qquad \beta = \gamma + mag\_decl + \beta' \cdot \frac{\omega}{\theta_{RP2}} \qquad (5)$$
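The angle correction can be illustrated with the values reported for node S1 in Section 5. The formula used here (compass bearing plus magnetic declination plus the image angle scaled by ω/θ) is inferred from the description above and from those reported numbers, so it should be read as a sketch rather than as the authors' exact expression; the function name is our own.

```python
def reference_bearing(gamma_deg, mag_decl_deg, image_angle_deg, omega_deg, theta_deg):
    """Bearing of a reference point relative to geographic North, in degrees.

    gamma_deg       -- compass bearing of the camera axis
    mag_decl_deg    -- local magnetic declination
    image_angle_deg -- angle measured in the image relative to the camera axis
    omega_deg       -- camera angle of view
    theta_deg       -- measured angle of view at the reference point depth
    """
    corrected = image_angle_deg * omega_deg / theta_deg
    return (gamma_deg + mag_decl_deg + corrected) % 360.0


# Values reported for sensor S1 in the case study.
alpha = reference_bearing(282.2, 4.39984, -27.38, 39.0, 92.39)
beta = reference_bearing(282.2, 4.39984, 13.25, 39.0, 92.19)
```

Under these assumptions the sketch gives values that agree with the reported α = 275.0471° and β = 292.2051° to within about 0.01°.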

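The last step is the triangulation process itself, presented in Figure 6. It implies the computation of the position (xc, yc) of the sensor camera in the Cartesian reference system defined on the map. Since α and β are measured from the North direction (the y-axis), we use the following equations:

$$\tan\alpha = \frac{x_{RP1} - x_c}{y_{RP1} - y_c}, \qquad \tan\beta = \frac{x_{RP2} - x_c}{y_{RP2} - y_c} \qquad (6)$$

Therefore, the position is calculated as:

$$y_c = \frac{y_{RP1}\tan\alpha - y_{RP2}\tan\beta - x_{RP1} + x_{RP2}}{\tan\alpha - \tan\beta}, \qquad x_c = x_{RP1} - (y_{RP1} - y_c)\tan\alpha \qquad (7)$$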
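The triangulation step can be sketched in Python as the intersection of two bearing lines. The sketch assumes that α and β are bearings measured clockwise from North (the y-axis) toward East (the x-axis), so that a reference point at (x, y) seen under bearing α satisfies (x − xc)·cos α = (y − yc)·sin α. This sine/cosine form is mathematically equivalent to a tangent-based formulation but avoids the singularity at 90° and 270°. Function names are illustrative.

```python
import math
from itertools import combinations


def triangulate(rp1, alpha_deg, rp2, beta_deg):
    """Camera position from two reference points and their bearings."""
    x1, y1 = rp1
    x2, y2 = rp2
    a, b = math.radians(alpha_deg), math.radians(beta_deg)
    # Each bearing gives one linear equation:
    #   cos(t) * xc - sin(t) * yc = x * cos(t) - y * sin(t)
    c1 = x1 * math.cos(a) - y1 * math.sin(a)
    c2 = x2 * math.cos(b) - y2 * math.sin(b)
    det = math.sin(a - b)
    if abs(det) < 1e-9:
        raise ValueError("bearings are (nearly) parallel; no unique intersection")
    # Cramer's rule on the 2x2 system.
    xc = (-c1 * math.sin(b) + c2 * math.sin(a)) / det
    yc = (c2 * math.cos(a) - c1 * math.cos(b)) / det
    return xc, yc


def triangulate_all_pairs(observations):
    """Average the estimates from every pair of ((x, y), bearing_deg) observations."""
    estimates = [
        triangulate(p, a, q, b)
        for (p, a), (q, b) in combinations(observations, 2)
    ]
    n = len(estimates)
    return (sum(x for x, _ in estimates) / n, sum(y for _, y in estimates) / n)
```

As a synthetic check, a camera at (100, 50) sees a point at (100, 150) under bearing 0° and a point at (200, 150) under bearing 45°, and `triangulate` recovers (100, 50) from those two observations.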

The final result of the entire localization process is expressed by the triplet {xc, yc, γ}. Together with the existing FOV information {ω, Dmin, Dmax}, it allows the implementation of complex applications based on localized anchor nodes. The accuracy of the proposed localization algorithm is influenced by several factors:

- The approximation of the Earth's radius by its average value. However, the variation from the average radius to the meridian (6,367.45 km) or equator (6,378.14 km) radius is less than 0.08%.
- The precision of the localization of reference objects. If we use a GPS for estimation we can rely on a precision of around 10 m, depending on the number and position of available satellites [25].
- The precision of the digital compass measurement, which in general is better than 1.0 degree. For example, the three-axis HMR3000 Digital Compass Module (Honeywell, Plymouth, MN, USA) provides 0.5° accuracy [26].
- The precision of the reference points' angle estimation, which depends on the method used to identify reference objects in the setup images. However, by favoring very thin objects during the creation of ℜ we can greatly increase the accuracy.

5. Case Study

To demonstrate the effectiveness of the presented algorithm we consider six anchor nodes, deployed in an area near km-2 of route RO DN59A. The deployment configuration is presented in Figure 7 using a Google map [27], where the reference objects are marked with red circles while the sensors Si are marked in blue.

The chosen origin O is highlighted in green and has the geographical coordinates of 45.759941° North latitude and 21.158411° East longitude. The magnetic declination in this area is 4.39984°. All anchor nodes are equipped with VGA sensors characterized by a measured angle of view ω = 39° and an approximate FOV depth in the range [0.6 m, 450 m].

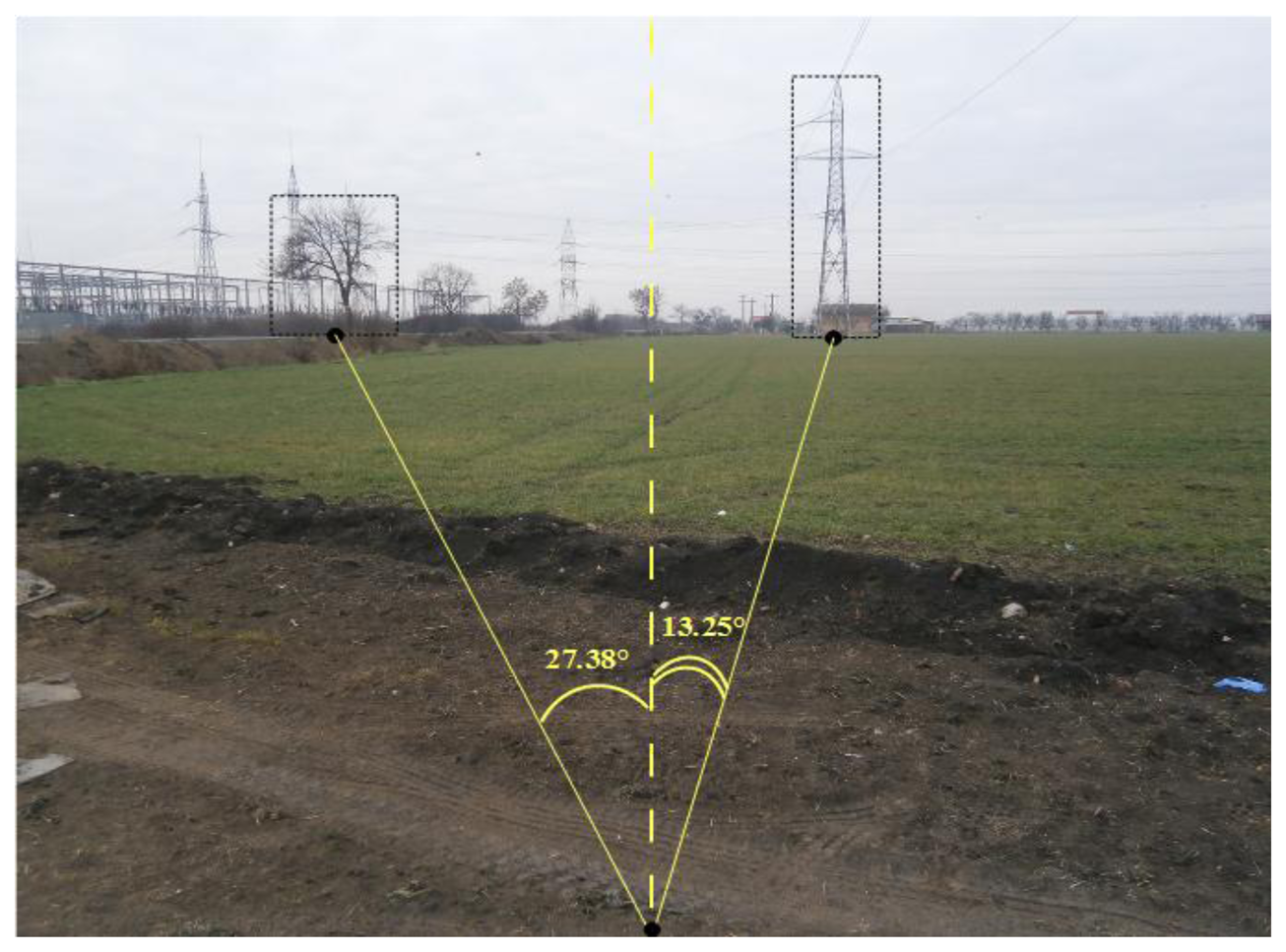

In the following, we present in detail the localization steps for the first node, the results for all nodes being summarized in Table 1. In the image taken by the S1 node (Figure 8), a tree and an electricity pole, used further as reference objects RP1 and RP2, had been identified.

For S1, the bearing γ, measured by the digital compass, was 282.2° (WNW). The reference points' angles were measured as presented in Figure 8 and have the values α′ = −27.38° and β′ = 13.25°. The angles of view θRP1 and θRP2 corresponding to the depth of the reference points are 92.39° and 92.19°, respectively. With ω = 39°, the corresponding ratios θ/ω are 2.3689 and 2.3638, so the measured angles are scaled by the inverse factors ω/θ. Using Equation (5) we computed the corrected reference points' angles as α = 275.0471° and β = 292.2051°.

Using Equations (6) and (7) we obtained the localization coordinates in the local reference system (see Table 1):

$$x_C = 276.55\ \text{m}, \qquad y_C = 131.65\ \text{m}$$

In order to estimate the error we used a supplementary GPS measurement of the sensor S1 position and, after applying Equations (2) and (3), we obtained:

$$x_{GPS} = 277.18\ \text{m}, \qquad y_{GPS} = 139.14\ \text{m}$$

Table 1 summarizes the results obtained after repeating this procedure for all six nodes deployed. It presents the measured and estimated positions for all nodes and the absolute position errors, relative to the GPS-provided location, defined as:

$$\Delta d = \sqrt{(x_{GPS} - x_C)^2 + (y_{GPS} - y_C)^2}$$

As Table 1 shows, in our experiment the maximum absolute position error is around 11 m. The mean absolute position error for all six nodes is 7.12 m, with a standard deviation of 2.36 m. Moreover, ignoring the compass error and considering a GPS positioning error of around 10 m [25], we can reasonably estimate a maximum localization error of less than 25 m for the mentioned experimental deployment using the presented method.
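The figures quoted above can be checked directly from the values in Table 1. The short sketch below takes the absolute position error as the Euclidean distance between the GPS and the estimated positions, and uses the population standard deviation; that choice is an assumption on our part, made because it reproduces the reported 2.36 m.

```python
import math
import statistics

# (xGPS, yGPS, xC, yC) in metres for sensors 1..6, copied from Table 1.
TABLE_1 = [
    (277.18, 139.14, 276.55, 131.65),
    (296.43, 189.31, 298.63, 192.47),
    (310.16, 238.80, 299.87, 234.54),
    (258.95, 131.58, 261.49, 137.25),
    (336.31, 156.49, 332.28, 152.99),
    (319.71, 124.90, 312.85, 119.61),
]

# Absolute position error: distance between GPS and estimated positions.
errors = [math.hypot(xg - xc, yg - yc) for xg, yg, xc, yc in TABLE_1]

mean_error = statistics.fmean(errors)
std_error = statistics.pstdev(errors)  # population standard deviation
max_error = max(errors)

print([round(e, 2) for e in errors])
print(round(mean_error, 2), round(std_error, 2), round(max_error, 2))
```

Rounded to two decimals, the per-sensor errors match the last column of Table 1, and the mean, standard deviation and maximum come out as 7.12 m, 2.36 m and 11.14 m.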

6. Conclusions

Localization is a crucial procedure for randomly deployed wireless sensor networks. It is generally based on anchor nodes that can automatically and efficiently acquire their position in global coordinates. In this paper, we proposed a new anchor node localization technique meant for special cases where GPS receivers are unavailable or too expensive. Using a triangulation technique based on a video image and compass information, our method achieves reasonable precision. Moreover, our method is especially appropriate for non-isotropic sensor networks, where magnetometers providing the orientation of the sensor cones are mandatory.

Author Contributions

The authors contributed equally to this work.

Conflicts of Interest

The authors declare no conflict of interest.

References

1. Bachrach, J.; Taylor, C. Localization in Sensor Networks. In Handbook of Sensor Networks: Algorithms and Architectures; John Wiley & Sons, Inc.: Hoboken, NJ, USA, 2005; Volume 9, pp. 277–310.
2. Kunz, T.; Tatham, B. Localization in wireless sensor networks and anchor placement. J. Sens. Actuator Netw. 2012, 1, 36–58.
3. Bulusu, N.; Heidemann, J.; Estrin, D. GPS-less low-cost outdoor localization for very small devices. IEEE Pers. Commun. Mag. 2000, 7, 28–34.
4. Cota-Ruiz, J.; Rosiles, J.-G.; Sifuentes, E.; Rivas-Perea, P. A low-complexity geometric bilateration method for localization in wireless sensor networks and its comparison with least-squares methods. Sensors 2012, 12, 839–862.
5. Paul, A.K.; Sato, T. Detour path angular information based range-free localization in wireless sensor network. J. Sens. Actuator Netw. 2013, 2, 25–45.
6. Sahoo, P.K.; Hwang, I. Collaborative localization algorithms for wireless sensor networks with reduced localization error. Sensors 2011, 11, 9989–10009.
7. Chen, X.; Chen, J.; He, J.; Chen, C. The selection of reference anchor nodes and benchmark anchor node in the localization algorithm of wireless sensor network. Intell. Autom. Soft Comput. 2012, 18, 659–669.
8. Kim, T.; Shon, M.; Kim, M.; Kim, D.S.; Choo, H. Anchor-node-based distributed localization with error correction in wireless sensor networks. Int. J. Distrib. Sens. Netw. 2012, 2012, 975147:1–975147:4.
9. Williams, B. Intelligent Transport Systems Standardization; Artech House: Norwood, MA, USA, 2008.
10. Feiner, S.; MacIntyre, B.; Höllerer, T.; Webster, A. A touring machine: Prototyping 3D mobile augmented reality systems for exploring the urban environment. Pers. Technol. 1997, 1, 208–217.
11. Vecchio, M.; López-Valcarce, R.; Marcelloni, F. A two-objective evolutionary approach based on topological constraints for node localization in wireless sensor networks. Appl. Soft Comput. 2012, 12, 1891–1901.
12. Rodríguez, A.; Bergasa, L.M.; Alcantarilla, P.F.; Yebes, J.; Cela, A. Obstacle Avoidance System for Assisting Visually Impaired People. Proceedings of the IEEE Intelligent Vehicles Symposium Workshops, Madrid, Spain, 3–5 June 2012; pp. 1–6.
13. Costa, D.G.; Guedes, L.A.; Vasques, F.; Portugal, P. Energy-efficient packet relaying in wireless image sensor networks exploiting the sensing relevancies of source nodes and DWT coding. J. Sens. Actuator Netw. 2013, 2, 424–448.
14. Costa, D.G.; Guedes, L.A. The coverage problem in video-based wireless sensor networks: A survey. Sensors 2010, 10, 8215–8247.
15. Akyildiz, I.F.; Melodia, T.; Chowdury, K.R. Wireless multimedia sensor networks: A survey. IEEE Wirel. Commun. 2007, 14, 32–39.
16. Pescaru, D.; Istin, C.; Naghiu, F.; Gavrilescu, M.; Curiac, D. Scalable Metric for Coverage Evaluation in Video-Based Wireless Sensor Networks. Proceedings of the 5th International Symposium on Applied Computational Intelligence and Informatics, Timisoara, Romania, 28–29 May 2009; pp. 323–328.
17. Farooq, M.O.; Kunz, T. Wireless sensor networks testbeds and state-of-the-art multimedia sensor nodes. Appl. Math. Inf. Sci. 2014, 8, 935–940.
18. Rizos, C. Trends in Geopositioning for LBS, Navigation and Mapping. Proceedings of the International Symposium & Exhibition on Geoinformation, Penang, Malaysia, 27–29 September 2005; pp. 27–29.
19. Bennamoun, M.; Mamic, G.J. Object Recognition. Fundamentals and Case Studies. In Advances in Computer Vision and Pattern Recognition; Springer: London, UK, 2002.
20. Campbell, R.J.; Flynn, P.J. A survey of free-form object representation and recognition techniques. Comput. Vis. Image Underst. 2001, 81, 166–210.
21. Zhou, H.; Wu, H.; Xia, S.; Jin, M.; Ding, N. A Distributed Triangulation Algorithm for Wireless Sensor Networks on 2D and 3D Surface. Proceedings of the 30th IEEE International Conference on Computer Communications (INFOCOM), Shanghai, China, 10–15 April 2011; pp. 1053–1061.
22. Bal, M.; Liu, M.; Shen, W.; Ghenniwa, H. Localization in Cooperative Wireless Sensor Networks: A Review. Proceedings of the 13th International Conference on Computer Supported Cooperative Work in Design (CSCWD), Santiago, Chile, 22–24 April 2009; pp. 438–443.
23. Sinnott, R.W. Virtues of the haversine. Sky Telesc. 1984, 68, 159.
24. Easa, S. Analytical solution of magnetic declination problem. J. Surv. Eng. 1989, 115, 324–329.
25. Hofmann-Wellenhof, B.; Lichtenegger, H.; Collins, J. Global Positioning System: Theory and Practice, 5th ed.; Springer: Vienna, Austria, 2001.
26. Honeywell International Inc. Honeywell Magnetic Sensors: HMR3000. Available online: http://www.magneticsensors.com/magnetometers-compasses.php (accessed on 07 January 2014).
27. Google. Google Maps. Available online: https://maps.google.com/ (accessed on 07 January 2014).

Table 1. Measured (GPS) and estimated positions of the six anchor nodes in the local reference system, and the absolute position errors.

| Sensor # | xGPS (m) | yGPS (m) | xC (m) | yC (m) | Absolute Position Error Δd (m) |
|---|---|---|---|---|---|
| 1 | 277.18 | 139.14 | 276.55 | 131.65 | 7.52 |
| 2 | 296.43 | 189.31 | 298.63 | 192.47 | 3.85 |
| 3 | 310.16 | 238.80 | 299.87 | 234.54 | 11.14 |
| 4 | 258.95 | 131.58 | 261.49 | 137.25 | 6.21 |
| 5 | 336.31 | 156.49 | 332.28 | 152.99 | 5.34 |
| 6 | 319.71 | 124.90 | 312.85 | 119.61 | 8.66 |

© 2014 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license ( http://creativecommons.org/licenses/by/3.0/).


Pescaru, D.; Curiac, D.-I. Anchor Node Localization for Wireless Sensor Networks Using Video and Compass Information Fusion. Sensors 2014, 14, 4211-4224. https://doi.org/10.3390/s140304211
