Control Framework for Dexterous Manipulation Using Dynamic Visual Servoing and Tactile Sensors’ Feedback

Abstract

: Tactile sensors play an important role in robotics manipulation to perform dexterous and complex tasks. This paper presents a novel control framework to perform dexterous manipulation with multi-fingered robotic hands using feedback data from tactile and visual sensors. This control framework permits the definition of new visual controllers which allow the path tracking of the object motion taking into account both the dynamics model of the robot hand and the grasping force of the fingertips under a hybrid control scheme. In addition, the proposed general method employs optimal control to obtain the desired behaviour in the joint space of the fingers based on an indicated cost function which determines how the control effort is distributed over the joints of the robotic hand. Finally, authors show experimental verifications on a real robotic manipulation system for some of the controllers derived from the control framework.1. Introduction

Multi-fingered robotic hands allow both the execution of robust grasping tasks and dexterous manipulation. These are two of the skills that human beings have: dexterity and anthropomorphism [1]. These features enable multi-fingered hands to be controlled not only for holding the object with a firm grasp, but also for generating trajectories of the object with the movements of the fingers. This last form of motion control of the grasped object is usually known as “in-hand manipulation” or “dexterous manipulation”. In these cases, the kinematic redundancy of the fingers is used to change the object from an initial to a final configuration, while maintaining fingertip contacts [2,3]. Therefore, fingertip force control plays a very important role for dexterous manipulation in order to give the object the desired motion. For that end, tactile sensors are usually employed to measure the force exerted by the fingertips in order to maintain a desired value and to compute the contact points. This paper presents a new control framework to perform in-hand manipulation with multi-fingered robotic hands using feedback data from tactile and visual sensors.

Tactile sensors share a common property in robotics: they analyze the direct contact between the robot and the objects of the environment in order to adapt the robot’s reaction to the manipulated object. Tactile information is processed according to two different aims: object identification and manipulation control. On the one hand, the properties of the objects extracted from the robot’s tactile sensors can be used to categorize the objects into different classes. On the other hand, the measurements obtained from the tactile sensors can also be applied to control the interaction force [4]. In this paper, a hybrid scheme is proposed for manipulation control which takes into account both the robot hand dynamic model and the fingertips’ forces.

Several approaches to solve the motion planning associated with dexterous manipulation problem have been proposed during the last decade. They have been mainly focused in three specific lines: graph representations [5–7], probabilistic trees [8,9] and hybrid control schemes [10,11]. Some of the previous planners (such as [5,6]) do not take into account the contact forces which are applied to the object and they are usually only based on the maintenance of the geometric contact between the surfaces of the fingers and the object (using surface models [12,13]), kinematic constraints and/or using manipulability analysis [14]. Fingertip force control is usually employed for planning in-hand manipulation algorithms based on hybrid control schemes [11]. The main objective of these algorithms is to control the grasping force to a desired value (satisfying friction conditions) besides controlling the position of the object along a desired trajectory given in the Cartesian space [4]. None of these previous works employs visual and tactile feedback to perform the manipulation task taking into account the robotic hand dynamics. This paper proposes a control framework that allows the definition of new controllers where the manipulated object motion is controlled using visual and tactile servoing algorithms.

Visual servo control techniques [15] allow the guidance of a robotic system using visual information usually in two types of configuration: eye-in-hand (camera fixed to the robot end) and eye-to-hand (external camera). This type of control is frequently applied in robot positioning tasks for a wide range of applications [16,17]. In robotic manipulation, visual techniques are usually applied as only computer vision algorithms for grasping [18,19]. Classical techniques of visual servo control do not take into account the system dynamics because they assume that the robot is a perfect positioning device. This assumption is not appropriate when the robot executes fast and/or accurate movements such as dexterous manipulation with multi-fingered robotic hands. By means of direct or dynamic visual servoing, the internal control loop of servo motors is removed and the visual servo control is used to stabilize the robotic system [20]. This paper uses direct visual servoing in order to perform a visual tracking of the manipulated object and the guidance of the robotic manipulation system. Therefore, in contrast with other control schemes for dexterous manipulation [10–19], the proposed approach takes into account the dynamics model of the robot hand employing visual and tactile sensory information.

In addition, the proposed control approach is based on an optimal control framework. This approach is used to visual servo control a robotic hand during a manipulation task taking into account the hand dynamics. From that framework, several new controllers are derived, which offers a useful unification methodology for direct control any robotic manipulation system using visual and tactile sensors feedback, an approach which has not been implemented in previous research projects. The proposed approach considers the optimization of the motor signals or torques sent to the robotic hand during visual control tasks. Moreover, the general method presented in the paper is based on the formulation of the tracking problem in terms of constraints, which was suggested in [21] inspired by results from analytical dynamics with constrained motion.

Summarizing, the proposed optimal control framework, from which several new dynamic controllers can be derived, is considered as the main contribution of this paper in comparison with other previous approaches for dexterous manipulation [5–14]. In addition, another contribution of this framework is that it uses direct visual servoing to perform the visual tracking of the manipulated object taking into account the dynamics model of the robot hand. In this sense, the presented optimal framework provides a useful unification methodology for the direct control of any robotic manipulation system using visual and tactile sensors feedback.

The paper is organized as follows: Section 2 describes the system architecture of the robotic manipulation system. Afterwards, the kinematics and dynamics formulation of the overall robotic system are described in Section 3. Section 4 explains the theoretical concepts about the employed optimal controller. In Section 5, the image trajectory to be tracked by the robotic manipulation system is described as a task constrain. In Section 6, the general dynamic visual servoing framework and the required modifications to perform a hybrid force control are presented. In Section 7, some new controllers are derived from the optimal control framework. Section 8 describes the experimental results illustrating the proposed controllers. The final section reports some important conclusions.

2. System Architecture

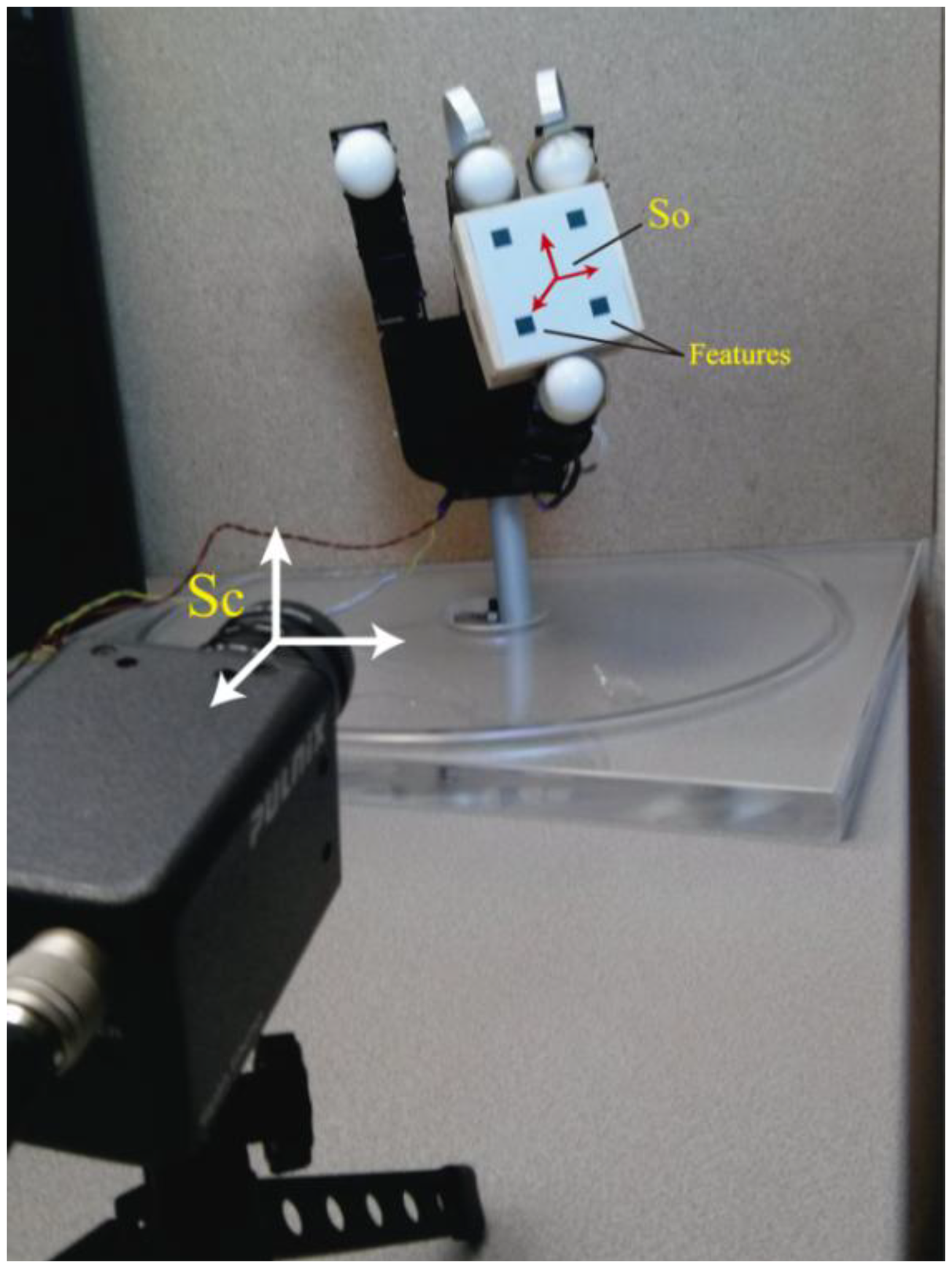

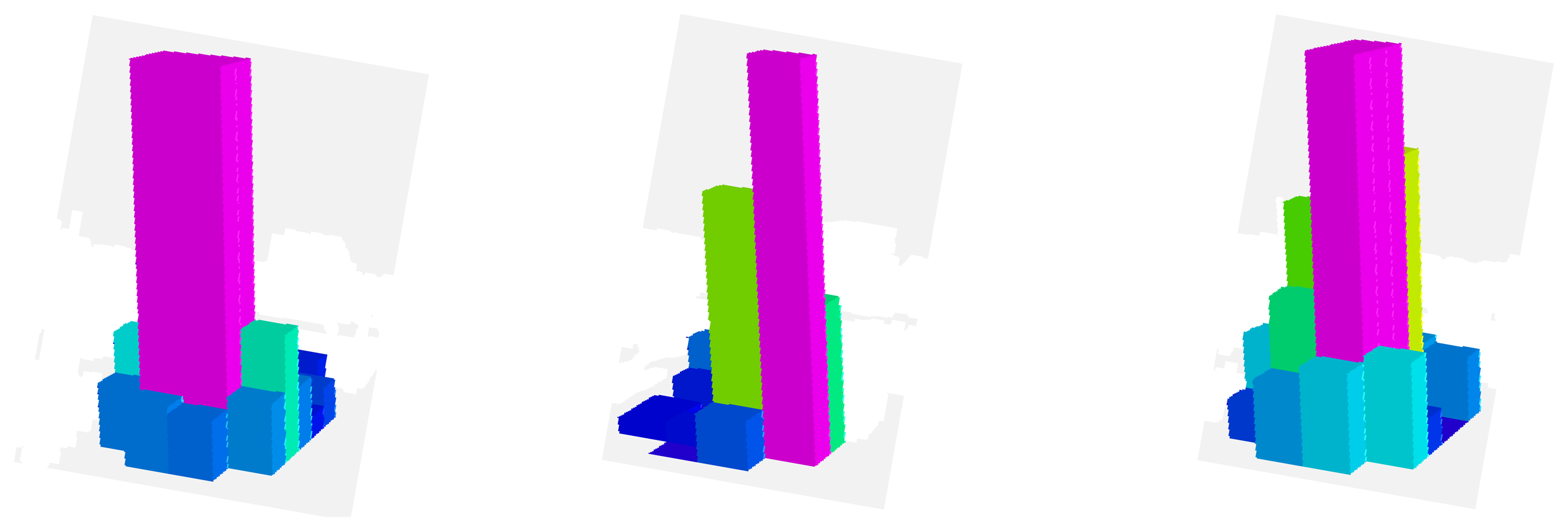

The robotic manipulation system is composed of the Allegro robotic hand (SimLab Co., Seoul, Korea) (see Figure 1a,b). This hand has four fingers and sixteen independent torque-controlled joints (four dof per each finger). This robotic hand has a lightweight and portable anthropomorphic design very suitable for low-cost dexterous manipulation in research. It is capable of holding up to 5 kg and it has support for real-time control and online simulation. In addition, a set of tactile sensors is employed as additional tool in the manipulation system (see Figure 1a). These sensors are installed in an extrinsic configuration [22] on the Allegro hand. These sensors are located at the three hand fingertips that will be used during the manipulation (furthermore, only the three last degrees of freedom of each finger will be controlled, performing a non-redundant system). The tactile sensors are pressure sensing arrays, type PPS RoboTouch (Pressure Profile Systems, Inc., Los Angeles, CA, USA). All these tactels can register pressure vales in the range 0–140 kPa with a frequency of 30 Hz and a sensitivity of 0.7 kPa. Figure 1c shows a 3D representation of the pressure measurements registered by these sensors during a manipulation task. The force exerted by the fingertip is computed using the pressure measurements of the tactile sensors. These measurements are multiplied by the area of each tactel, 25 mm2, so the forces are obtained. The mean of these forces is considered as the force applied by the fingertip. Moreover, the contact points are supposed to be in the tactel of maximum pressure exerted.

Figure 2 represents the experimental setup employed. For the visual servo control, a Gigabit Ethernet TM6740GEV camera (JAI-Pulnix, Shanghai, China) is used, which acquires 200 images every second with a resolution of 640 × 480 pixels. The camera is supposed to be previously calibrated and the camera intrinsic parameters are (u0, v0) = (298, 225) px, and (fu, fv) = (1,082.3, 1,073.7) px (position of the optical center (u0, v0) and the focal length in the x and y directions, respectively). The manipulated object has four marks which will be the extracted visual features.

3. Kinematics and Dynamics Formulation

3.1. Kinematics Equations

We consider the robotic hand as a set of k fingers with three degrees of freedom. Each finger holds an object considering contact points with friction and without slippage. In order to firmly grasp and manipulate the object, the grasp is considered to be an active form closure. Thus, each fingertip i is exerting a fingertip force fCi ∈ ℜ3 within the friction cone at the contact point. The grasping constrain between the robot and the object is done by the grasp matrix [23] which relates the contact forces fC = [fClT … fCkT] T at the fingertips to the resultant force and moment τo ∈ ℜ6 on the object:

As Figure 2 shows, a camera is fixed at the workspace in an eye-to-hand configuration in order to observe a set of features located at the surface of the manipulated object. The vector s = [f1x, f1y, f2x, f2y, …, fnx, fny]T ∈ ℜ2n defines the image coordinates of the extracted features. From the interaction matrix Ls(s) [24] it can be obtained the following relation:

The finger Jacobian denoted by JHi ∈ ℜ3×3 relates the joint velocities of the ith finger (q̇ ∈ ℜ3) with the fingertip velocities (vFi ∈ ℜ3) referenced in camera coordinate frame SC:

This finger Jacobian can be easily obtained from the typical robot Jacobian matrix and applying the known mapping from robot coordinate frame and camera coordinate frame SC. Extending Equation (5) to all the k fingers of the robotic hand, this relation yields:

3.2. Manipulation System Dynamics

The dynamic model of the manipulation system can be divided into the dynamics description both the grasped object and the multi-fingered hand with the contact forces constrain. In this subsection, both dynamics equations will be given.

The motion equation for the object based on the simple case of moving it in free space without any external force, can be described as:

With regard to the multi-fingered hand, we can assume its dynamics as the set of serial three-link rigid mechanism which correspond to the fingers. In this case, the dynamics equation of finger i can be described as:

4. Optimal Control Framework

As stated, Equation (12) represents the dynamics of a multi-fingered robot hand in a robotic manipulation system. If we do not take into account the kinematic constraint between hand-object (Equation (7)), the dynamic model of the multi-fingered robot hand becomes the following expression:

The dynamic model of a serial-link robot has been used in different approaches to control a robotic system for tracking [25]. Following this idea, the approach proposed in [21] gave a new perspective about tracking based on optimal control for nonlinear mechanical systems. This approach will be used in this paper in order to perform a visual tracking of the grasped object in a robotic manipulation system.

Basically, the control approach suggested by [21] supposes a system with m constraints given by:

This equation may contain holonomic and/or non-holonomic constraints, and represents the task for the robotic system to be described in form of m constraints description. Differentiating these constraints with respect to time (assuming that φ is sufficiently smooth), the following equation can be obtained:

Although the optimal control is based on the robotic hand dynamic model of Equation (14), it can be applied to the robotic manipulation system model presented in Equation (12). This fact can be done considering the kinematic constrain between the robotic hand and the object (term of Equation (12)) as a desired value in a hybrid control force of the robotic manipulation system (see Section 6).

5. Constrained Task Description in the Robotic Manipulation System

The main objective of the robotic manipulation system proposed is to control the grasping force to a desired value such that the friction condition is satisfied besides controlling the position of the object along a desired trajectory in the image space. Therefore, the task description as constraint is given by the following equation in the image space:

From this equation, it can be possible to express the image tracking or task in the form of the constraints description of Equation (16) with:

With this definition of A and b, the optimal control will minimize the torques of robot hand while performing a tracking in the image space. With regard to the manipulated object, the motion equation is determined by Equation (10). Using the relation described by Equation (1), the object motion yields:

Solving the motion acceleration of the object from Equation (26) and replacing it in Equation (25), the following expression which relates the reference image acceleration and the desired behaviour of the object taken into account the contact forces is obtained:

6. Dynamic Visual Control Framework

This section describes a new optimal control framework for a robotic manipulation system using direct visual servoing. This control framework is based on the task description in the image space explained in the section above.

6.1. Visual Controller

As stated, the control function that minimizes the motor signals of a robot hand while performing the task described in Equation (14) is given by Equation (18). Replacing the variables concerned on the task description, A and b from Equation (24), the control law is set by:

As it can be seen, the control law (28) depends implicitly on the weighting matrix W and different values of this matrix can simplify the product (JT+MH−1WT−1/2)+ and consequently, the control law. Different control laws will be presented in the next section with different values of W.

In order to demonstrate the stability of the control law, the closed loop is computed from Equations (14) and (28) as:

This Equation can be simplified by pre-multiplying its left and right side by the term (JT+MH−1WT−1/2)W1/2:

Using Equations (20) and (22), from Equation (30) it can be concluded that:

Therefore, when JT+is full rank, an asymptotic tracking is achieved. This way, the convergence of the visual servo control law is demonstrated.

6.2. Force Fingertip Control

The manipulation of an object by a multi-fingered robot hand with fixed contact points alters the robot’s dynamics with a generalized contact force fc acting on the end effector of the fingers (see Equation (12)). In this framework, dynamic control of a robotic manipulation system is considered as a hybrid control produced from the interaction between a robot mechanism (robotic hand) and the environment (objet to be manipulated). This way, in this paper an approach for intending both to control the desired position of the object using visual features and the desired contact force exerted by the fingertips is presented.

In order to incorporate a control which in practice keeps constant the contact forces without affecting the task achievement or image tracking, a modification of the control law defined in Equation (28) is performed. As it is shown in this equation, the task space is defined by the expression W−1/2(JT+MH−1W−1/2)+. Therefore, the term expressed as [I − W−1/2(JT+MH−1W−1/2)+ (JT+MH−1W−1/2)W1/2] · τ1 can be used to project the motor command τ1 onto the null space of the dynamic visual servo task. The fulfillment of the task is not affected by the choice of the control law τ1. Setting τ1 = CH + gH + τ0, the Coriolis, centrifugal and gravitational forces can be compensated. Using this modification, the control law yields as follows:

7. Derived Visual Servo Controllers

One of the main contributions of the proposed framework is the possibility to generate different controllers to perform robotic manipulation tasks taking into account the robot dynamics and using visual-tactile information. Up to now, the control law has been written depending on the weighting matrix W. As stated, the choice of W plays an important role in the controller because it determines how the torques are distributed over the joints. In the next subsections, new control laws are obtained from the choice of different values of W.

7.1. Using W=

Considering as weighting matrix, the control law from Equation (32) yields:

Simplifying this equation, the final control law gives the following expression:

As it can be seen in the Equation (35), this choice of the W matrix has allowed the decoupling of kinematics and dynamics of the robot hand in the control law. This is because the null-space term is based on JT, a matrix with only kinematic contain.

7.2. Using W=

An important value for the control law due to its physical interpretation is since it is consistent with the principle of d’Alembert [25]. Using this value for W, the control law expressed in the Equation (32) results as follows:

Applying the pseudo inverse as Q+ = QT(Q˙QT)−1 and simplifying this equation, the control law yields:

7.3. Using W= DMH−2

In this subsection, a new value of , where D is a diagonal positive matrix, will be employed. This matrix allows distributing the torques to the joints of the robot hand, therefore, large weights in this matrix causes small torques. Using this value for W, the controller from Equation (37) results as follows:

Simplifying the equation as before, the control law yields:

8. Experimental Results

In the previous section, three visual controllers have been obtained using the proposed control framework. In this section, different results are described in order to evaluate the obtained controllers during manipulation tasks using the system architecture presented in Section 2. To do this, four visual features are extracted from the grasped object by using the eye-to-hand camera system. It is assumed that all the visual features are visible during the manipulation experiment. As stated, only three fingers of the robot hand and its three last degrees of freedom are employed in the manipulation task.

8.1. Behavior in the Joint and Image Spaces

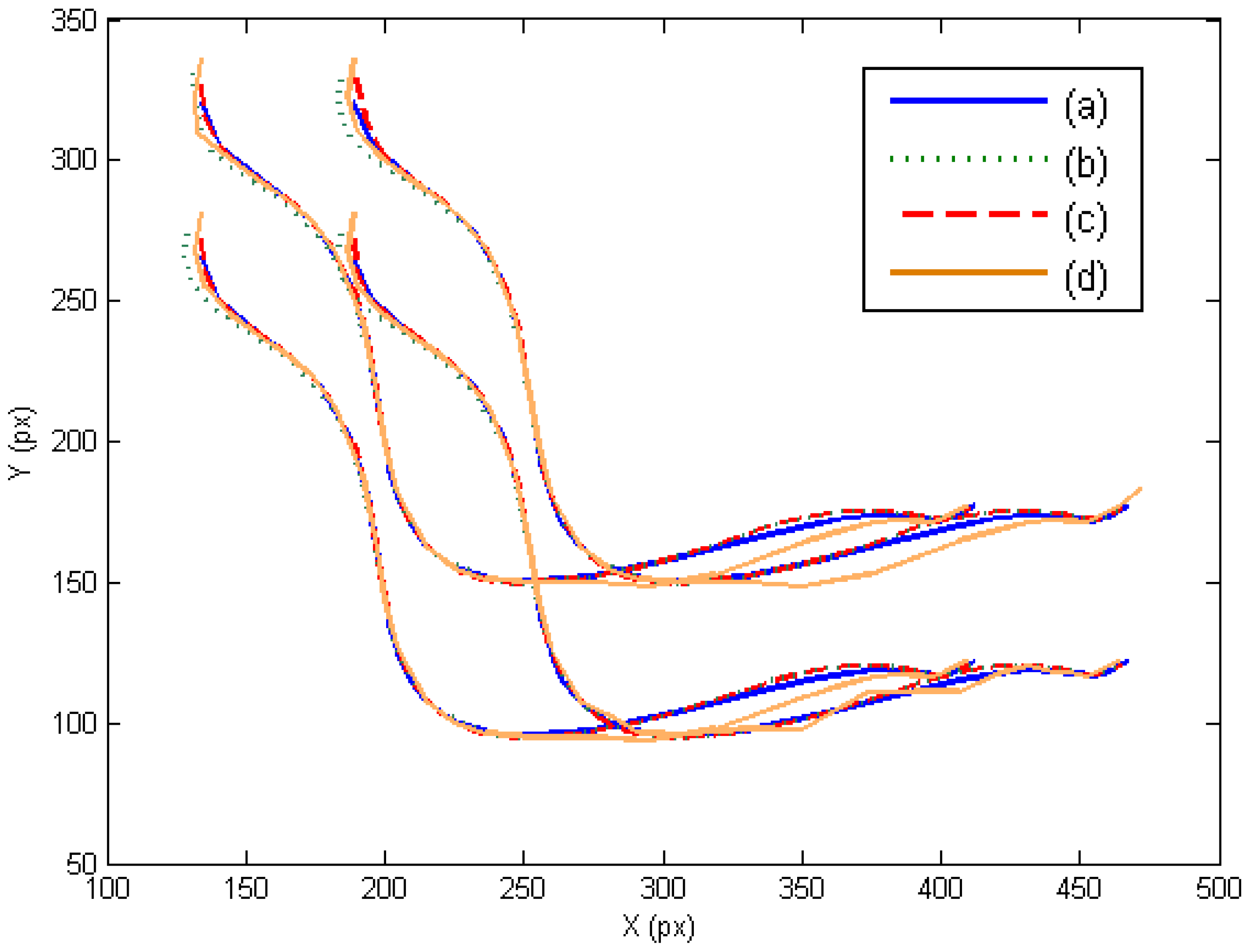

In this section, the three controllers derived in Section 7 are evaluated in the joint and image space. The presented experiments consist of a manipulation task where the extracted image features must track the desired image trajectory represented in Figure 3a. This trajectory has been previously defined like a time-dependent function. In this case, the external eye-to-hand camera is located at a distance of 50 cm observing the trajectory described by the four points located at the object. Figure 3b,c,d represents the obtained image trajectory when W = M−2, W = DM−2 and W = M−1, respectively. As this last figure shows, the tracking is correctly developed in the image space and the image error remains low (Table 1).

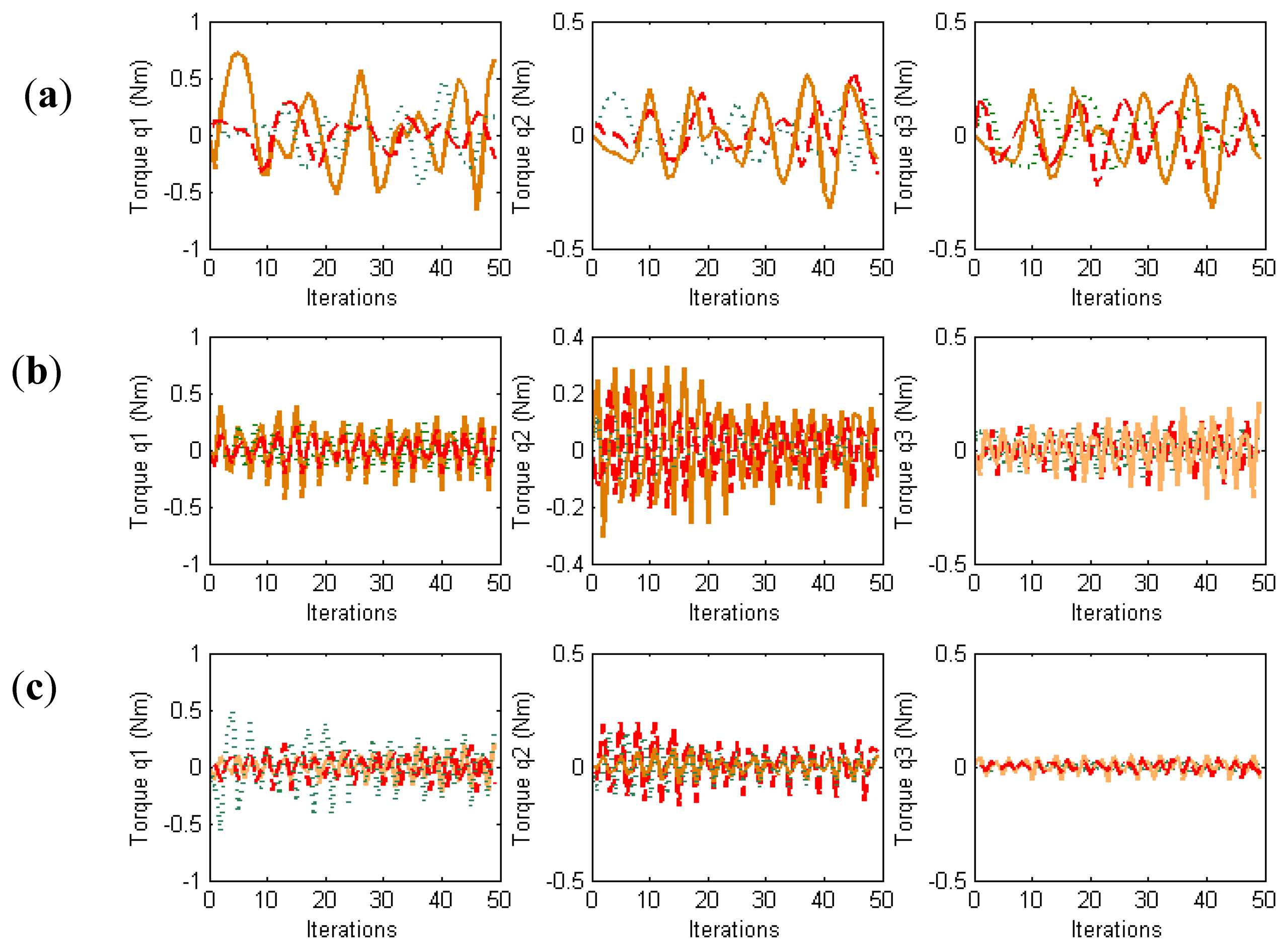

In order to evaluate the behavior in the joint space during these manipulation experiments, the obtained torques are represented in Figure 4. For each joint of each finger, the torques when W = M−2, W = DM−2 and W = M−1 are indicated. When W = DM−2, the value of D allows us to indicate which joints will support high loads. In this experiment, the weight value corresponding to the first joint is twice the weight corresponding to the second and third joints. Comparing the torques of the first joints (first column in Figure 4) and the torques of the second joints (second column in Figure 4), it can be observed that when W = DM−2 (in red) lower torques in the first joints are obtained. Therefore, this diagonal matrix can be employed to distribute the torques and to diminish the effort in the desired joints. When W = M−1 a correct image tracking is also observed (see Figure 2), however a more oscillating behaviour is obtained in the joint space.

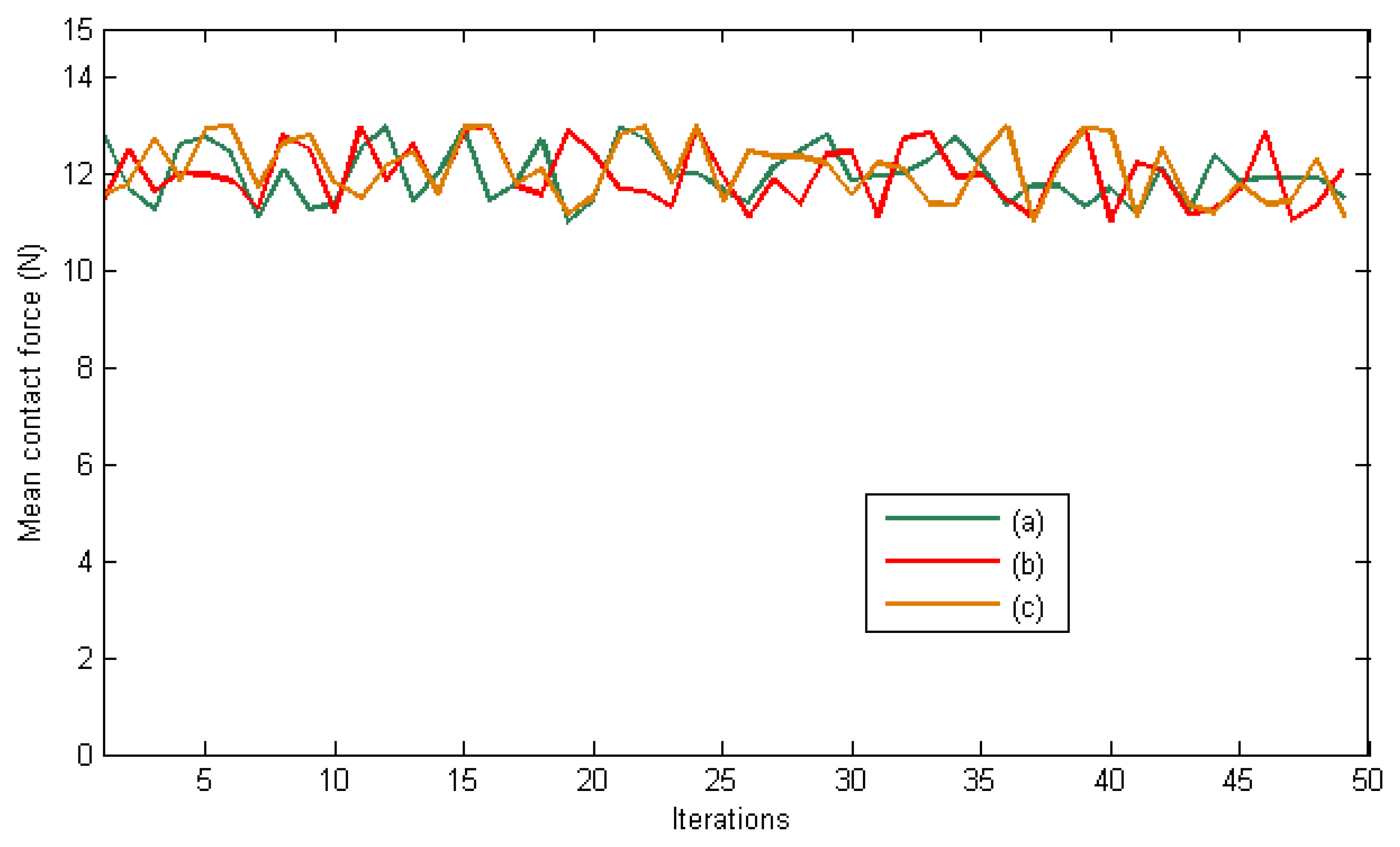

The desired contact forces for the fingertips are regulated to 12 N during the experiment. Figure 5 represents the mean total contact force during the experiment. Furthermore, Figure 6 indicates the distribution of the pressure measurements registered by the arrays of tactile sensors of the three fingers in an intermediate iteration during the manipulation task. The force contact error also remains low and the mean error when W = M−2, W = DM−2 and W = M−1 are 0.87 N, 1.01 N and 0.91 N respectively.

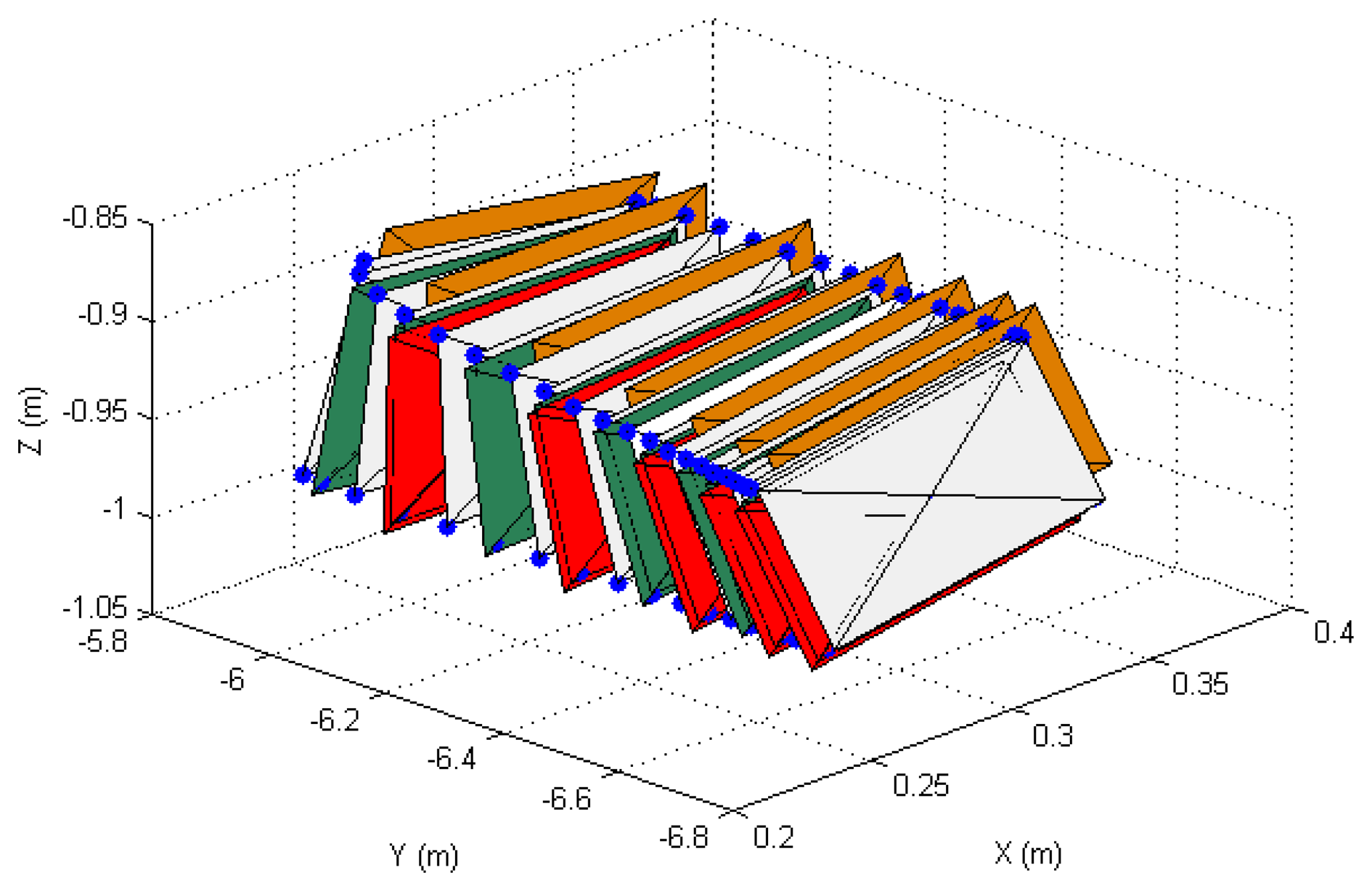

8.2. Behavior in the 3D Space

In order to show the 3D behavior of the proposed controllers, this section presents a manipulation task where the grasped object must perform a rotation while the robot is doing a displacement. The image trajectories described by the four extracted marks using the three controllers are represented in Figure 7. As it was noted in the previous section, the three controllers guide correctly the object in the image space and the best behavior is obtained when W = M−2. The 3D trajectories described by the manipulated object are shown in Figure 8. This last figure only represents the face of the manipulated object observed by the eye-to-hand camera. The desired 3D trajectory is indicated by white rectangles and the desired 3D positions of the extracted features are indicated in blue. The robot is performing a desplacement in the Y axis direction while the manipulation does a rotation of the object. As in the previous cases, the 3D trajectories obtained when W = M−2, W = DM−2 and W = M−1 are indicated in green, red and orange respectively. As it is shown in Figure 8, a correct 3D behaviour is observed and the manipulation is correctly developed by using the proposed visual controllers. In this case, lower errors are obtained setting W = M−2.

Finally, Figures 9 and 10 represent the evolution of the mean contact force and the distribuition of the pressure over the tactile sensors respectivley. As it can be seen, the desired contact force in this case is regulated to 12.5 N. Although the proposed visual controllers have shown a correct behavior to guide a robotic hand during manipulation tasks, the proposed framework can be employed to define new ones by modifying the value of the matrix W.

9. Conclusions

This paper presents a novel optimal control framework which allows defining new dynamic visual controllers in order to carry out the dexterous manipulation of a robotic manipulation system. Making use of both the dynamics of the robotic hand and the definition of the image trajectory as task description of the object motion, different image-based dynamic visual servoing systems are defined to dexterous manipulation with multi-fingered robotic hands. Force fingertip control has been proposed as an additional control command which acts in the null-space of the task which manages the object tracking. This way, the desired contact force can be set independently and accomplished with any of the control laws derived from optimal control framework. For that end, a set of tactile sensors has been used in the real experiments in order to verify the proposed control law.

The approach has been successfully verified with the implementation of some derived controllers on a real robotic manipulation system. As shown, the behavior in task space is very similar and the image error remains low using different values of the weighting matrix. The fingertip interaction force is also regulated and low error is obtained during the manipulation tasks.

Acknowledgments

This work was funded by the Spanish Ministry of Economy, the European FEDER funds and the Valencia Regional Government, through the research projects DPI2012-32390 and PROMETEO/2013/085.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Melchiorri, C.; Kaneko, M. Robot Hands. In Handbook of Robotics; Siciliano, B., Oussama, K., Eds.; Springer-Verlag: Berlin/Heidelberg, Germany, 2008; pp. 345–360. [Google Scholar]

- Cherif, M.; Gupta, K. 3D In-hand Manipulation Planning. Proceedings of IEEE/RSJ International Conference on Intelligent Robots and Systems, Victoria, BC, Canada, 13–17 October 1998; pp. 146–151.

- Han, L.; Trinkle, J. Dexterous Manipulation by Rolling and Finger Gaiting. Proceedings of IEEE International Conference on Robotics and Automatio, Leuven, Belgium, 16–20 May 1998; pp. 730–735.

- Yoshikawa, T. Multifingered robot hands: Control for grasping and manipulation. Ann. Rev. Control. 2010, 34, 199–208. [Google Scholar]

- Trinkle, J.; Hunter, J. A Framework for Planning Dexterous Manipulation. Proceedings of IEEE International Conference on Robotics and Automation, Sacramento, CA, USA, 9–11 April 1991; pp. 1245–1251.

- Zhang, H.; Tanie, K.; Maekawa, H. Dexterous Manipulation Planning by Grasp Transformation. Proceedings of IEEE International Conference on Robotics and Automation, Minneapolis, MN, USA, 22–28 April 1996; pp. 3055–3060.

- Rosell, J.; Suárez, R.; Pérez, A. Path planning for grasping operations using an adaptive PCA-based sampling method. Auton. Robot. 2013, 35, 27–36. [Google Scholar]

- Cherif, M.; Gupta, K. Planning quasi-static fingertip manipulations for reconfiguring objects. IEEE Trans. Robotic. Autom. 1999, 15, 837–848. [Google Scholar]

- Goodwine, B. Stratified Motion Planning with Application to Robotic Finger Gaiting. Proceedings of 14th IFAC World Congress, Beijing China, 23 June 1999; pp. 128–132.

- Perdereau, V.; Drouin, M. Hybrid external control for two robot coordinated motion. Robotica 1996, 14, 141–153. [Google Scholar]

- Perdereau, V.; Drouin, M. A new scheme for hybrid force-position control. Robotica 1993, 11, 453–464. [Google Scholar]

- Corrales, J.A.; Jara, C.A.; Torres, F. Modelling and Simulation of a Multi-Fingered Robotic Hand for Grasping Tasks. Proceedings of 11th International Conference on Control Automation, Robotics and Vision, Singapore, 7–10 December 2010; pp. 1577–1582.

- Cretu, A.M.; Payeur, P.; Petriu, E.M. Soft object deformation monitoring and learning for model-based robotic hand manipulation. IEEE Trans. Syst. Man Cybern. Part B 2012, 42, 740–753. [Google Scholar]

- Yoshikawa, T. Manipulability of robotic mechanisms. Int. J. Robot. Res. 1985, 4, 3–9. [Google Scholar]

- Chaumette, F.; Hutchinson, S. Visual servo control, part I: Basic approaches. IEEE Robot. Autom. Mag. 2006, 13, 82–90. [Google Scholar]

- Jean, J.; Lian, F. Robust visual servo control of a mobile robot for object tracking using shape parameters. IEEE Trans. Control Syst. Technol. 2012, 20, 1461–1471. [Google Scholar]

- Onal, C.; Sitti, M. Visual servoing-based autonomous 2-D manipulation of microparticles using a nanoprobe. IEEE Trans. Control Syst. Technol. 2007, 15, 842–852. [Google Scholar]

- Foresti, G.L.; Pellegrino, F.A. Automatic visual recognition of deformable objects for grasping and manipulation. IEEE Trans. Syst. Man Cybern. Part C 2004, 34, 325–333. [Google Scholar]

- Lipiello, V.; Ruggiero, F.; Siciliano, B.; Villani, L. Visual grasp planning for unknown objects using a multifingered robotic hand. IEEE/ASME Trans. Mechatron. 2013, 18, 1050–1059. [Google Scholar]

- Pomares, J.; Perea, I.; Torres, F. Dynamic visual servoing with chaos control for redundant robots. IEEE/ASME Trans. Mechatron. 2013, pp, 1–9. [Google Scholar]

- Udwadia, F.E. A new perspective on tracking control of nonlinear structural and mechanical systems. Proc. Royal Soc. London Ser. A 2003, 459, 1783–1800. [Google Scholar]

- Tegin, J.; Wikander, J. Tactile sensing in intelligent robotic manipulation—A review. Ind. Robot 2005, 32, 64–70. [Google Scholar]

- Murray, R.; Li, Z.; Sastry, S. A Mathematical Introduction to Robotic Manipulation; CRC Press: Boca Raton, FL, USA, 1994; pp. 211–265. [Google Scholar]

- Flandin, G.; Chaumette, F.; Marchand, E. Eye-in-hand/Eye-to-hand Cooperation for Visual Servoing. Proceedings of IEEE International Conference on Robotics and Automation, San Francisco, CA, USA, 23–28 April 2000; pp. 2741–2746.

- Nakanishi, J.; Cory, R.; Mistry, M.; Peters, J.; Schaal, S. Operational space control: A theoretical and empirical comparison. Int. J. Robot. Res. 2008, 27, 737–757. [Google Scholar]

| W | Image Error (Px) | Force Contact Error (N) |

|---|---|---|

| M−2 | 2.487 | 0.87 |

| DM−2 | 2.621 | 1.01 |

| M−1 | 4.010 | 0.91 |

© 2014 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/3.0/).

Share and Cite

Jara, C.A.; Pomares, J.; Candelas, F.A.; Torres, F. Control Framework for Dexterous Manipulation Using Dynamic Visual Servoing and Tactile Sensors’ Feedback. Sensors 2014, 14, 1787-1804. https://doi.org/10.3390/s140101787

Jara CA, Pomares J, Candelas FA, Torres F. Control Framework for Dexterous Manipulation Using Dynamic Visual Servoing and Tactile Sensors’ Feedback. Sensors. 2014; 14(1):1787-1804. https://doi.org/10.3390/s140101787

Chicago/Turabian StyleJara, Carlos A., Jorge Pomares, Francisco A. Candelas, and Fernando Torres. 2014. "Control Framework for Dexterous Manipulation Using Dynamic Visual Servoing and Tactile Sensors’ Feedback" Sensors 14, no. 1: 1787-1804. https://doi.org/10.3390/s140101787