Hand Biometric Recognition Based on Fused Hand Geometry and Vascular Patterns

Abstract

A hand biometric authentication method based on measurements of the user's hand geometry and vascular pattern is proposed. The hand geometry is described by the thickness of the side view of the hand, the K-curvature computed from a chain code of the hand shape, the lengths and angles of the finger valleys, and the lengths and profiles of the fingers. The vascular pattern is obtained with the direction-based vascular-pattern extraction method. Together, these form a new multimodal biometric approach. The proposed system uses only one image to extract the feature points, so it can be configured for low-cost devices. The hand-geometry (the side view of the hand and the back of the hand) and vascular-pattern recognition results are fused at the score level. The results of our study showed that the equal error rate of the proposed system was 0.06%.

1. Introduction

The rapidly growing biometric recognition industry [1] requires systems that deliver high security in applications such as computer systems and limited-access control areas. Biometrics is the term used in computer science for the mathematical analysis of unique human features. Hand biometrics is a relatively new type of biometric system. Various biometric features can be extracted from the hand: hand geometry [2–6], finger knuckle [7], vascular pattern of the fingers [8,9], and vascular pattern of the hand [10–17]. Unimodal biometrics have many limitations, such as variation in an individual biometric feature. To overcome these limitations, multimodal biometrics that combine several features [18–21] are being widely developed.

Table 1 summarizes, for previous work and for ours, the features used for identification, the population size, and the reported performance (FAR: False Acceptance Rate, FRR: False Rejection Rate, EER: Equal Error Rate). The best results in Table 1 are achieved by [21] and by our work, both with multimodal biometrics. Our work presents a new approach that achieves improved performance (EER = 0.06%).

Our study proposes a multimodal biometric approach that integrates hand geometry and vascular patterns. Because the system uses only one image to extract the feature points, it can be constructed as a low-cost device. We fuse the modalities at the score level with z-score normalization, which improves recognition performance over each unimodal biometric taken alone, namely hand geometry (the side view of the hand and the back of the hand) and the vascular pattern.

The rest of this paper is organized as follows. Section 2 describes the hand biometric recognition system and the proposed hand biometric recognition technique. Section 3 discusses the experimental results. Section 4 concludes the paper.

2. Experimental Section

2.1. Hand Biometric Recognition System

In this section, we discuss the hand biometric recognition system, a user-authentication system that uses the side and back views of the hand. The implemented system is outlined in Section 2.1.1. Details of the acquisition device are provided in Section 2.1.2. The image segmentation and preprocessing are described in Section 2.1.3.

2.1.1. Overview

The block diagram of the implemented system is shown in Figure 1. First, a hand image is obtained from an acquisition device consisting of a camera equipped with an infrared (IR) light-emitting diode (LED), an IR filter, a mirror, and a support for the hand, as shown in Figure 2. The analog video signal from the camera is converted into a digital image by a grabber board. To extract the hand geometric features and the hand vascular pattern from the acquired image, we segment the image along a predetermined boundary between the side view of the hand and the back of the hand. The next step is to locate the region of interest (ROI) for the vascular pattern, which is separated from the back of the hand. This yields three sub-images: the side view of the hand, the back of the hand, and the vascular pattern. Feature points are then extracted after preprocessing. Matching compares the feature points of the sub-images with those stored in the database (DB). The matching scores of the side view of the hand, the back of the hand, and the vascular pattern are calculated using the Euclidean distance, the distance measure for polygonal curves, and template matching. Finally, we combine these three scores using score-level fusion based on z-score normalization.

2.1.2. Acquisition Device

An acquisition device, shown in Figure 2, was developed to capture the side view of the hand, the back of the hand, and the vascular pattern of the hand in a single image. The hand is illuminated by a fixed light source located above it. The resolution of the acquired image is 640 × 480 pixels.

The acquisition of a sample image is shown in Figure 2. For hand-based biometric identification, an IR LED (840–850 nm) was used, and the input image is captured in an IR environment to acquire the hand vascular pattern. A fixed support was used to prevent movement of the hand, and a mirror was installed to capture the side view of the hand. A camera with a charge-coupled device (CCD) sensor (1/3 type B/W) converts light signals into electrical signals. The light signals contain visible light (400–700 nm) and near-infrared light. An IR filter (850 nm) removes the unwanted wavelengths and is used to extract vein patterns.

2.1.3. Image Segmentation and Preprocessing

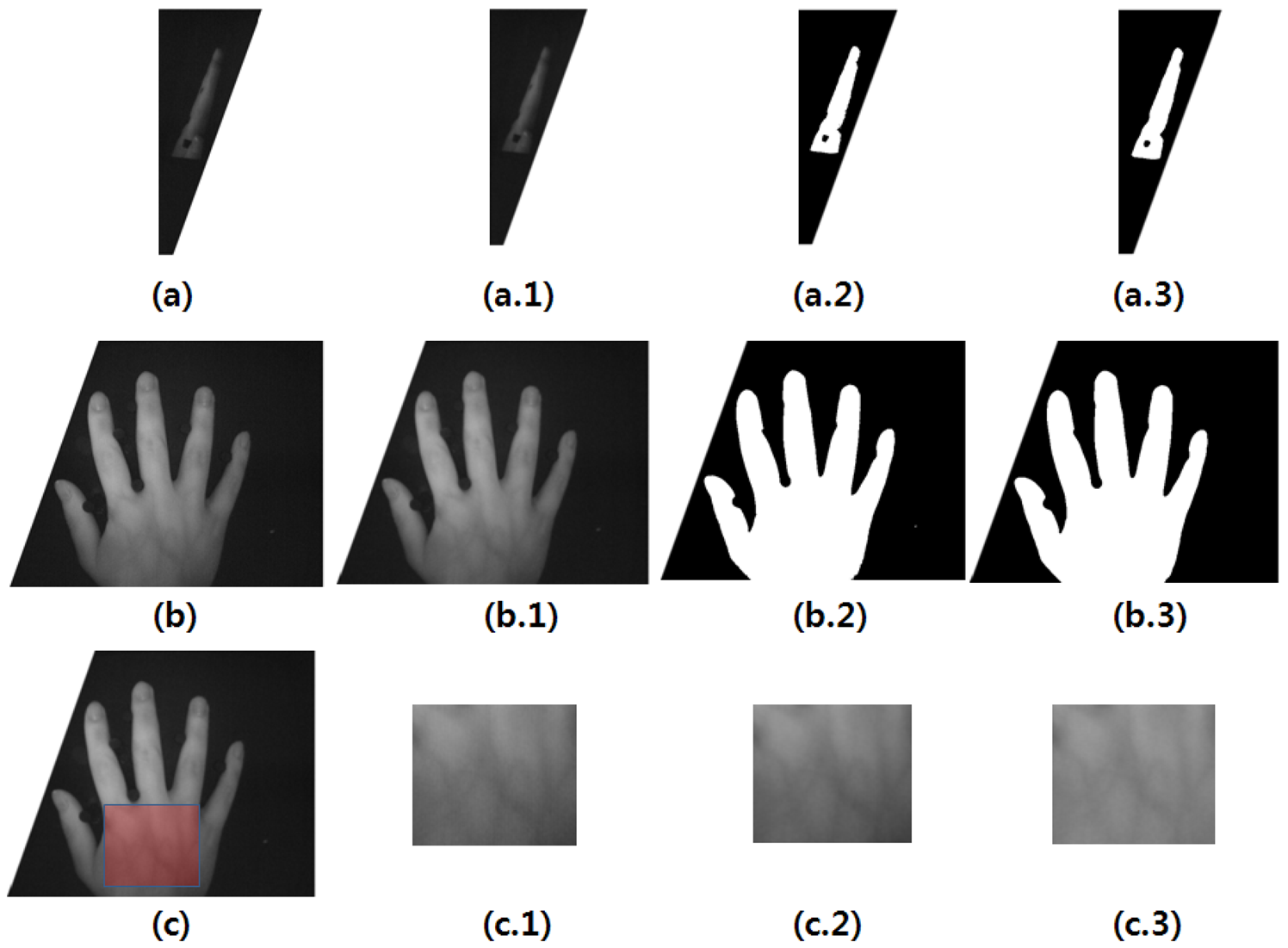

For hand recognition, the hand image is first captured and then preprocessed. Preprocessing is conducted in two steps. (1) The gray image is transformed into a black-and-white image in which the background is eliminated; this step is shown for the side view of the hand in Figure 3(a) and for the back of the hand in Figure 3(b). (2) The noise is removed before the vascular-pattern extraction (VPE) algorithm is applied, as shown in Figure 3(c). Figure 3(a.1),(b.1),(c.2) show the Gaussian filter for noise removal. Figure 3(a.2),(b.2) show the thresholding. Figure 3(a.3),(b.3) show the median filter for noise reduction of the thresholded image. Figure 3(c.3) shows the high-pass filter for emphasizing the vascular patterns.

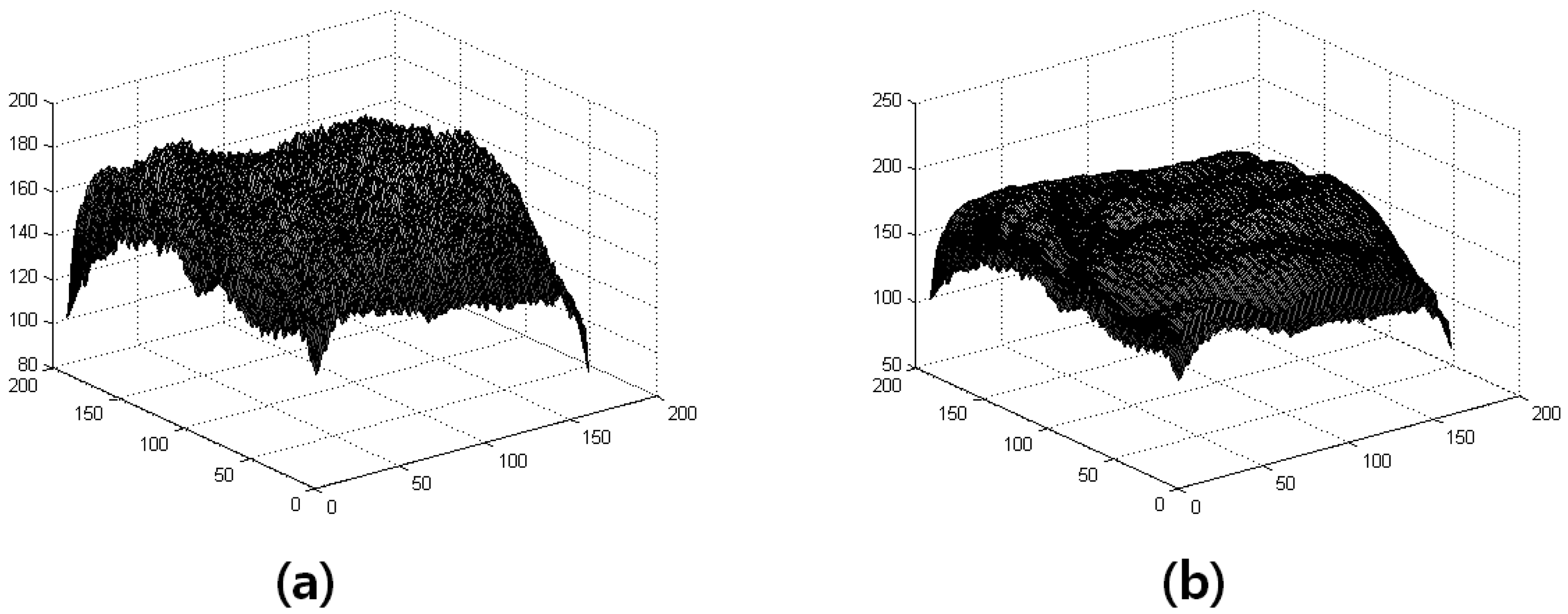

The Gaussian smoothing can be performed using standard convolution methods. The image has M rows and N columns, and the kernel has m rows and n columns. We use a suitable integer-valued convolution kernel that approximates a Gaussian with a σ of 1. Gaussian filtering is shown in Figure 4.

The 2D Gaussian is expressed as:

$$G(x, y) = \frac{1}{2\pi\sigma^{2}} \exp\left(-\frac{x^{2} + y^{2}}{2\sigma^{2}}\right)$$

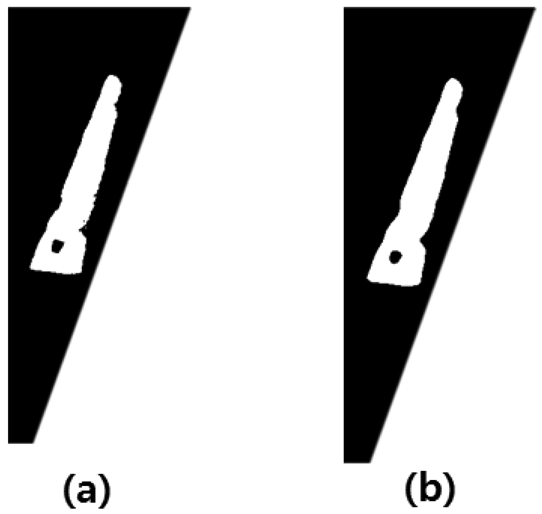
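As an illustration of the smoothing step, the sketch below convolves a grayscale image with an integer-valued kernel. The paper does not list its kernel values, so the 5 × 5 kernel used here (a widely used integer approximation of a Gaussian with σ = 1, normalized by its sum of 273) is an assumption, as are the function name `gaussian_smooth` and the border handling.

```python
# Widely used 5x5 integer approximation of a Gaussian with sigma = 1.
# Assumption: the paper does not state which integer kernel it uses.
KERNEL = [
    [1, 4, 7, 4, 1],
    [4, 16, 26, 16, 4],
    [7, 26, 41, 26, 7],
    [4, 16, 26, 16, 4],
    [1, 4, 7, 4, 1],
]
KERNEL_SUM = sum(sum(row) for row in KERNEL)  # 273


def gaussian_smooth(image):
    """Convolve an M x N grayscale image (list of rows) with KERNEL.

    Border pixels are handled by clamping coordinates to the image edge
    (an assumption; the paper does not describe border handling).
    """
    rows, cols = len(image), len(image[0])
    half = len(KERNEL) // 2
    out = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            acc = 0
            for i, kernel_row in enumerate(KERNEL):
                for j, weight in enumerate(kernel_row):
                    rr = min(max(r + i - half, 0), rows - 1)
                    cc = min(max(c + j - half, 0), cols - 1)
                    acc += weight * image[rr][cc]
            # Integer division keeps the result in the input range.
            out[r][c] = acc // KERNEL_SUM
    return out
```

Because the kernel is symmetric, convolution and correlation give the same result, so the kernel does not need to be flipped.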

After Gaussian filtering, the results are compared to a threshold value, which converts the input data to binary values (0, 1). A median filter then reduces the noise in the thresholded image. The vascular image and the median-filtered image are shown in Figure 5.
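The thresholding and median-filtering steps can be sketched as follows. The threshold level and the 3 × 3 window size are assumptions chosen for illustration, since the paper does not state them; `threshold` and `median_filter` are hypothetical helper names.

```python
from statistics import median


def threshold(image, level):
    """Convert a grayscale image (list of rows) to binary values 0/1."""
    return [[1 if px > level else 0 for px in row] for row in image]


def median_filter(image, size=3):
    """Replace each pixel by the median of its size x size neighbourhood.

    Coordinates outside the image are clamped to the nearest edge pixel.
    """
    rows, cols = len(image), len(image[0])
    half = size // 2
    out = [row[:] for row in image]
    for r in range(rows):
        for c in range(cols):
            window = [
                image[min(max(r + dr, 0), rows - 1)][min(max(c + dc, 0), cols - 1)]
                for dr in range(-half, half + 1)
                for dc in range(-half, half + 1)
            ]
            out[r][c] = int(median(window))
    return out
```

On a binary image the median acts as a majority vote over the window, which removes isolated noise pixels while keeping larger regions intact.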

The median filter is expressed as:

The next step after preprocessing is the extraction of the feature points. The extraction of the feature-points process includes the thickness of the side view of the hand, the K-curvature [22,23], and the vascular pattern.

2.2. Proposed Hand Biometric Recognition Technique

This section addresses the algorithms used for hand biometric recognition. We detail the feature extraction and the verifier. The side view of the hand is detailed in Section 2.2.1. The back-of-the-hand view is covered in Section 2.2.2. The VPE is described in Section 2.2.3.

2.2.1. The Side View of the Hand

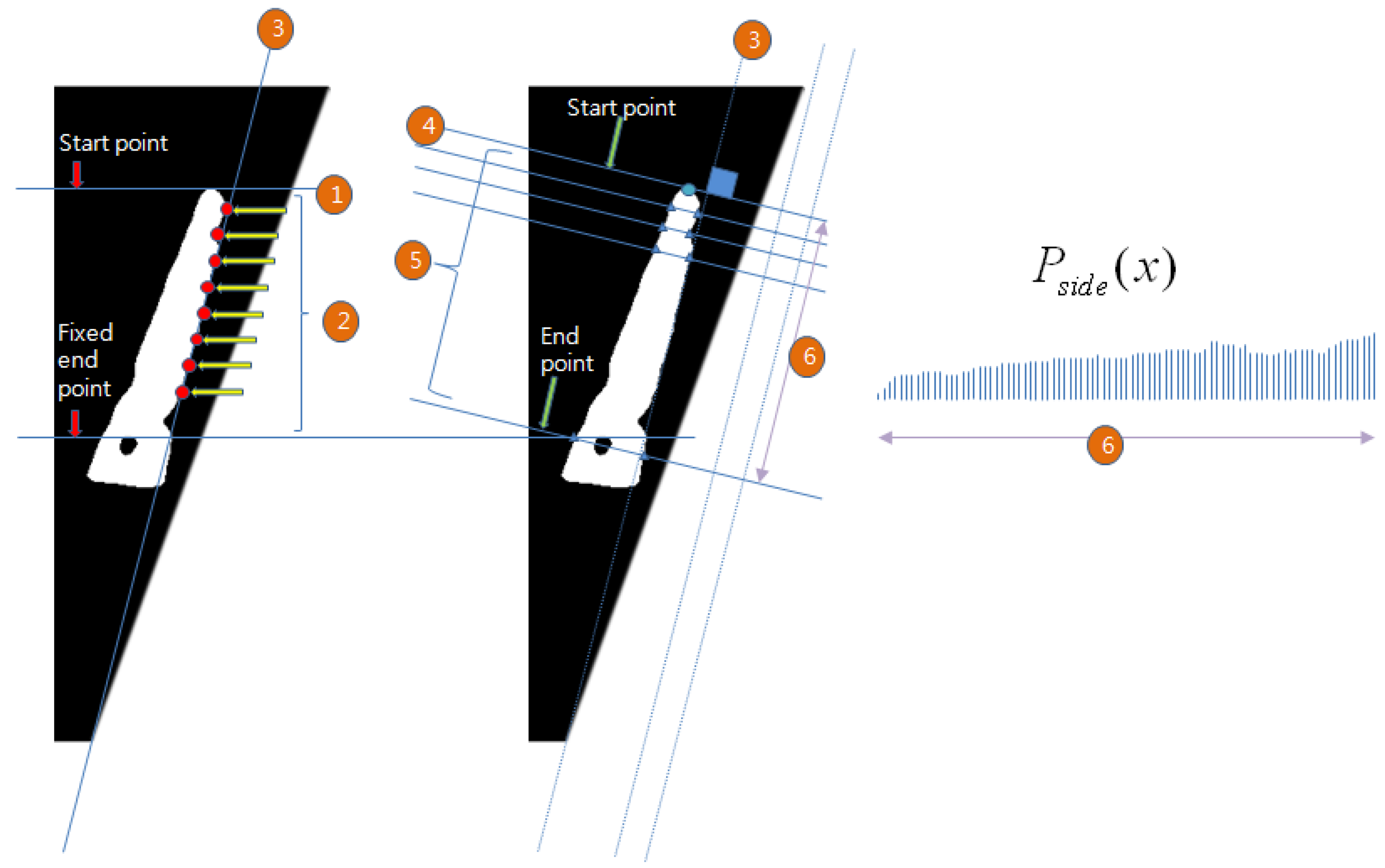

To establish the thickness of the side view of the hand, the heights of the middle finger, the index finger, and the palm are measured in the following order: (1) find a line at the base of the palm; (2) find the starting point perpendicular to the palm base line; (3) calculate the thickness of the side view of the hand from the starting point to the end point. The location of the end point is predetermined by the acquisition device. The thickness profile is Pside(x), as shown in Figure 6.
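A minimal sketch of how a thickness profile such as Pside(x) could be computed is given below. It assumes a binary side-view mask (1 = hand, 0 = background) in which the palm base line is horizontal, so the thickness at column x is simply the number of hand pixels in that column; the function name and the column-counting rule are assumptions, not the paper's exact procedure.

```python
def thickness_profile(mask, x_start, x_end):
    """Return the hand thickness (in pixels) for columns x_start..x_end.

    mask is a list of rows of 0/1 values. Assumption: the palm base line is
    horizontal, so thickness is measured along each image column.
    """
    if not 0 <= x_start <= x_end < len(mask[0]):
        raise ValueError("column range lies outside the image")
    return [sum(row[x] for row in mask) for x in range(x_start, x_end + 1)]
```

For example, with a mask whose columns contain 1, 2, and 3 hand pixels, `thickness_profile(mask, 0, 2)` yields the profile `[1, 2, 3]`.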

2.2.2. The Back of the Hand

Curvature characterizes a curve in two-dimensional space. For the discrete data of a digital image, the curvature is obtained using a suitable approximation. The K-curvature represents a continuous curvature by a discrete function.

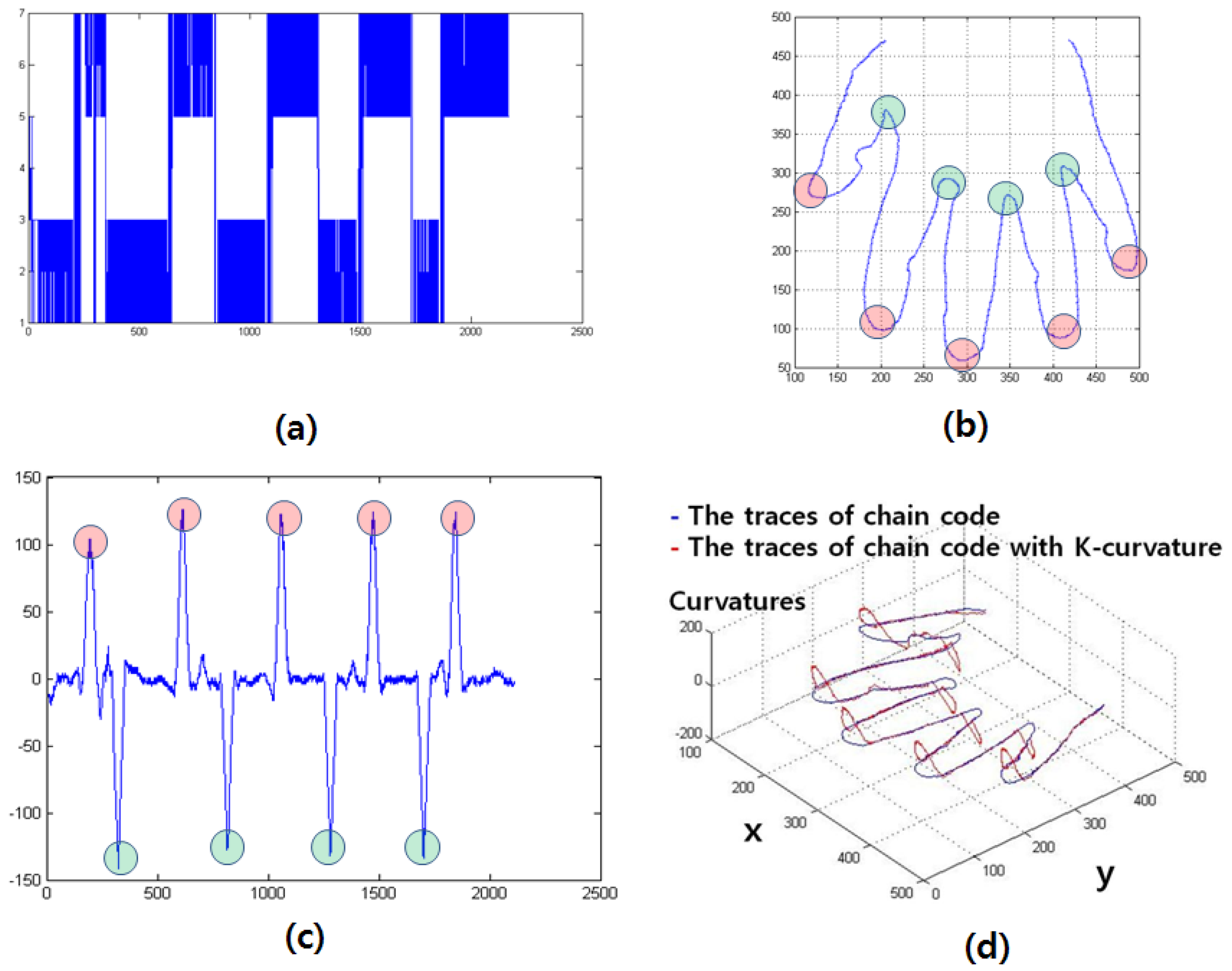

In this study, the K-curvature uses the curvature of the boundaries of the hands and the background as feature vectors. The K-curvature is calculated in the following order: (1) The chain code representation of the hand surface pattern is obtained. The traces of chain code are represented by blue in Figure 7(b),(d); (2) Then, the K-curvature is calculated using data from the trace of chain code.

The K-curvature is expressed as:

The curvature at a point pi is taken as the difference between the mean angular direction of the K vectors on the leading curve segment of pi and that of the K vectors on the trailing curve segment of pi, where fi is the ith component of the chain code. The K-curvature trace begins at the start of the thumb.

The traces of the K-curvature are represented by red in Figure 7(d) (K = 30). A “maximum peak” marks the end of a finger [marked in red in Figure 7(b),(c)]. A “minimum peak” marks the contact point between two fingers [marked in blue in Figure 7(b),(c)].
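The verbal definition above can be sketched in code as follows. The sketch assumes an 8-direction Freeman chain code in which code c points in direction 45·c degrees and `chain[i]` is the step leaving point i; the mean direction is taken by averaging unit vectors to avoid wrap-around problems. These choices and the name `k_curvature` are assumptions and may differ from the exact formula used in the paper.

```python
import math


def k_curvature(chain, k):
    """Return the K-curvature (degrees) for each point with k codes on both sides.

    chain is a list of Freeman codes 0..7. The result covers the points
    i = k .. len(chain) - k, in that order.
    """
    def mean_direction(codes):
        # Average the unit vectors so that directions 7 and 0 are neighbours.
        x = sum(math.cos(math.radians(45 * c)) for c in codes)
        y = sum(math.sin(math.radians(45 * c)) for c in codes)
        return math.degrees(math.atan2(y, x))

    curvature = []
    for i in range(k, len(chain) - k + 1):
        trailing = chain[i - k:i]   # k steps arriving at point i
        leading = chain[i:i + k]    # k steps leaving point i
        diff = mean_direction(leading) - mean_direction(trailing)
        # Wrap the difference into the range [-180, 180).
        curvature.append((diff + 180.0) % 360.0 - 180.0)
    return curvature
```

A straight contour gives zero curvature everywhere, while a right-angle turn gives a value of about 90 degrees at the corner; large K smooths out pixel-level jitter at the cost of localization.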

The first feature of the hand geometry is the divided K-curvature. The original K-curvature is split into components that can be characterized separately: K1(x) for the valley between the thumb and index finger; K2(x) for the valley between the index and middle fingers; K3(x) for the valley between the middle and ring fingers; and K4(x) for the valley between the ring and little fingers. The features at the end of each finger were removed by discarding K-curvature values above 50. Figure 8 shows the feature extraction for the K-curvature.

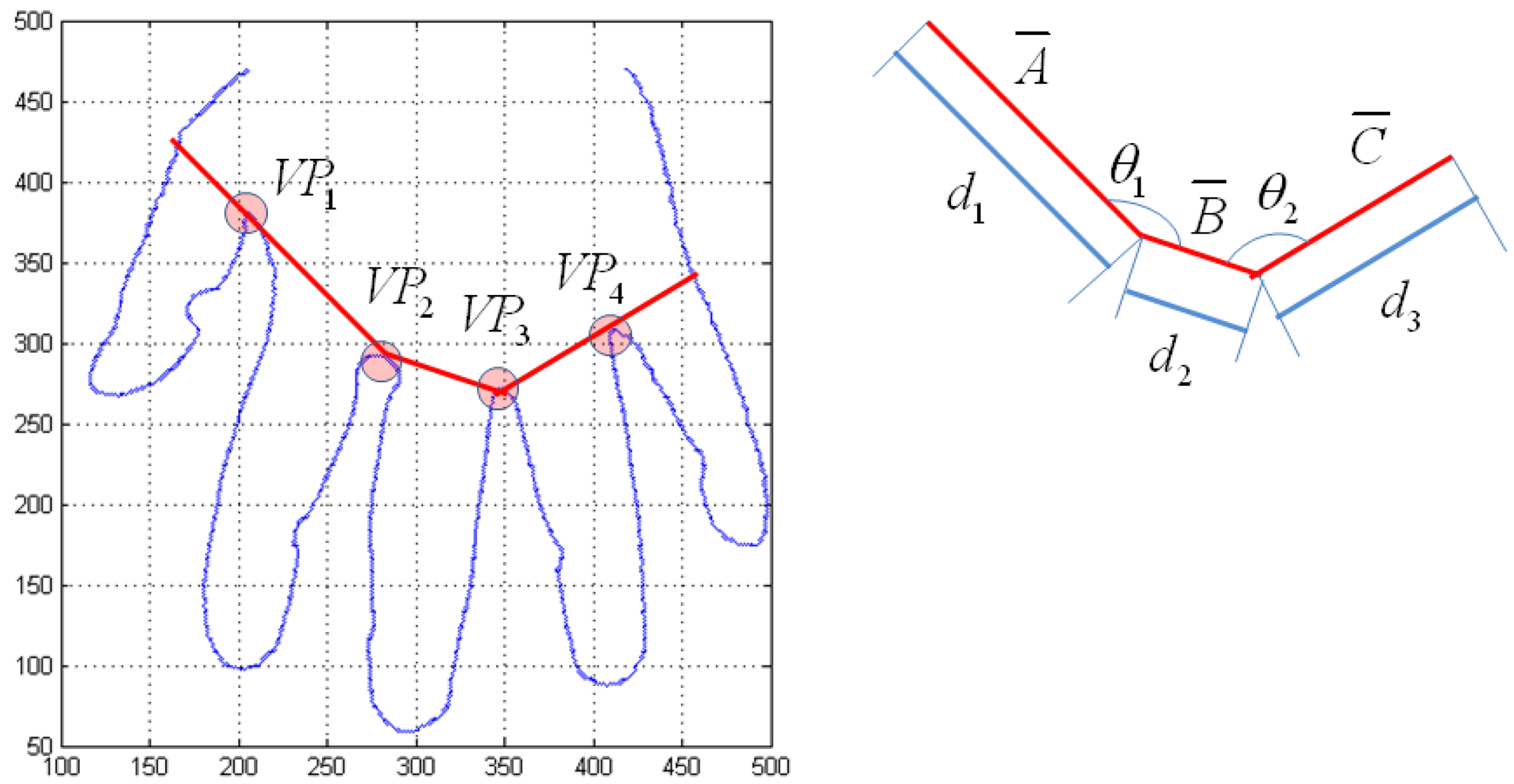

The second feature of the hand geometry is the lengths and angles of the finger valleys, which are calculated from the K-curvature. The valley points are VP1, VP2, VP3, and VP4. The d1 is the length of a̅, the line from VP2 through VP1 to the outer edge of the hand. The d2 is the length of b̅, the line from VP2 to VP3. The d3 is the length of c̅, the line from VP3 through VP4 to the outer edge of the hand. The θ1 is the angle between VP1 and VP3 with its vertex at VP2. The θ2 is the angle between VP2 and VP4 with its vertex at VP3. Figure 9 shows the feature extraction for the lengths and angles of the finger valleys.

The third feature of the hand geometry is the length of the fingers. The peak points of the K-curvature are PP1, PP2, PP3, PP4, and PP5. The d4 is the length of the line from PP1 to a̅. The d5 is the length of the line from PP2 to a̅. The d6 is the length of the line from PP3 to b̅. The d7 is the length of the line from PP4 to c̅. The d8 is the length of the line from PP5 to c̅. Figure 10 shows the feature extraction for the lengths of the fingers.
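As a small worked example of the valley features, the sketch below computes a point-to-point length such as d2 and an angle such as θ1 from valley-point coordinates. The coordinates in the usage note are made up for illustration, and d1 and d3 are not covered because they require intersecting a line with the hand outline.

```python
import math


def distance(p, q):
    """Length of the segment between points p and q, each given as (x, y)."""
    return math.hypot(q[0] - p[0], q[1] - p[1])


def angle_at(vertex, a, b):
    """Angle in degrees (0..180) between rays vertex->a and vertex->b."""
    ang_a = math.atan2(a[1] - vertex[1], a[0] - vertex[0])
    ang_b = math.atan2(b[1] - vertex[1], b[0] - vertex[0])
    diff = abs(math.degrees(ang_a - ang_b)) % 360.0
    return 360.0 - diff if diff > 180.0 else diff
```

With hypothetical valley points VP1 = (0, 4), VP2 = (0, 0), and VP3 = (3, 0), `distance(VP2, VP3)` gives d2 = 3 and `angle_at(VP2, VP1, VP3)` gives θ1 = 90 degrees.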

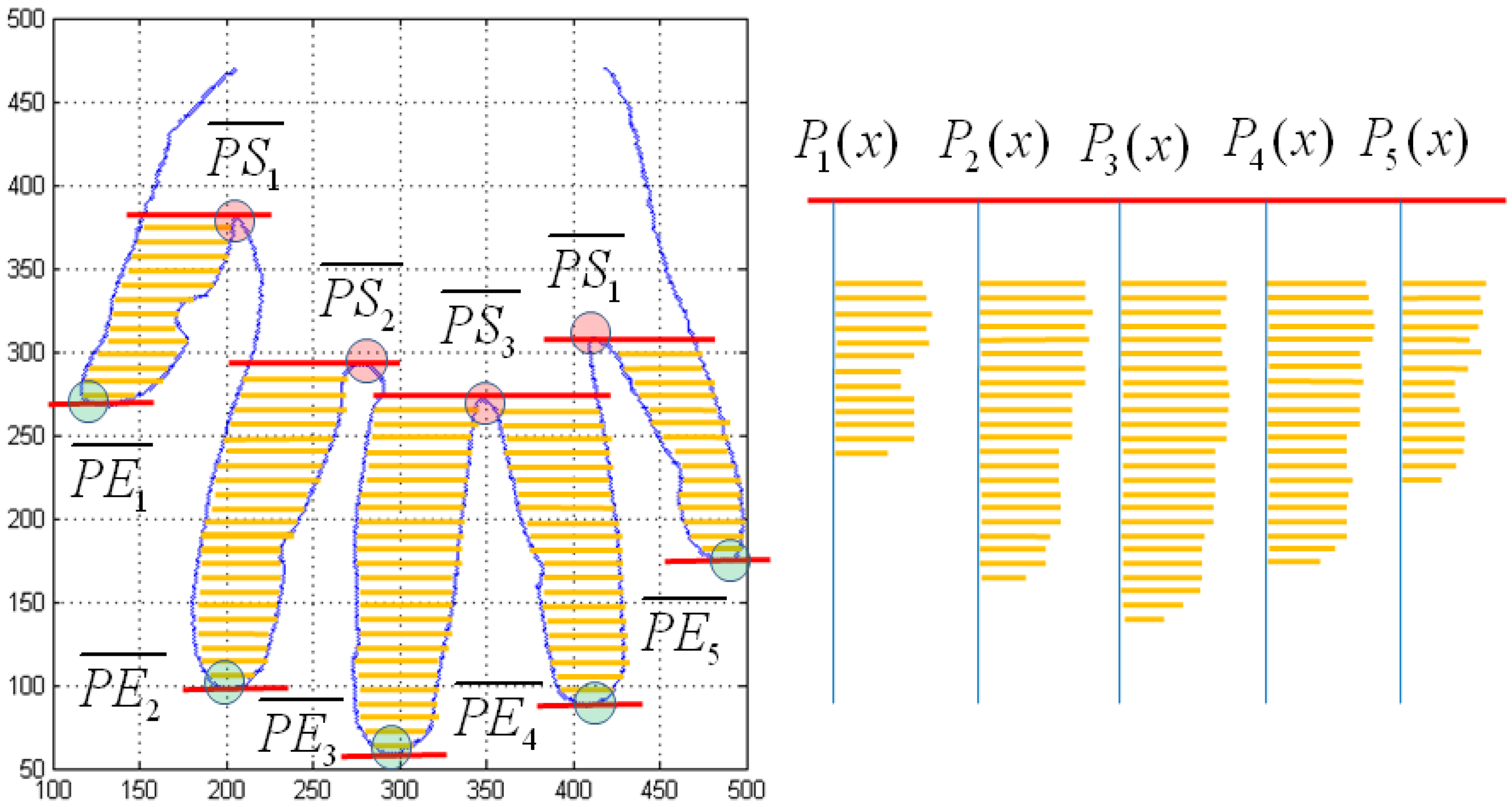

The fourth feature of hand geometry is the profile of the fingers. The starting points of the profile are the y-axis coordinates at the valley points. The end points of the profile are the y-axis coordinates at peak points. The starting point of the baseline consists of , , , and . The end point of the baseline consists of , , , , and . The profile of the fingers consists of P1(x), P2(x), P3(x), P4(x), and P5(x). Figure 11 shows the feature extraction for the profile of fingers.

2.2.3. VPE

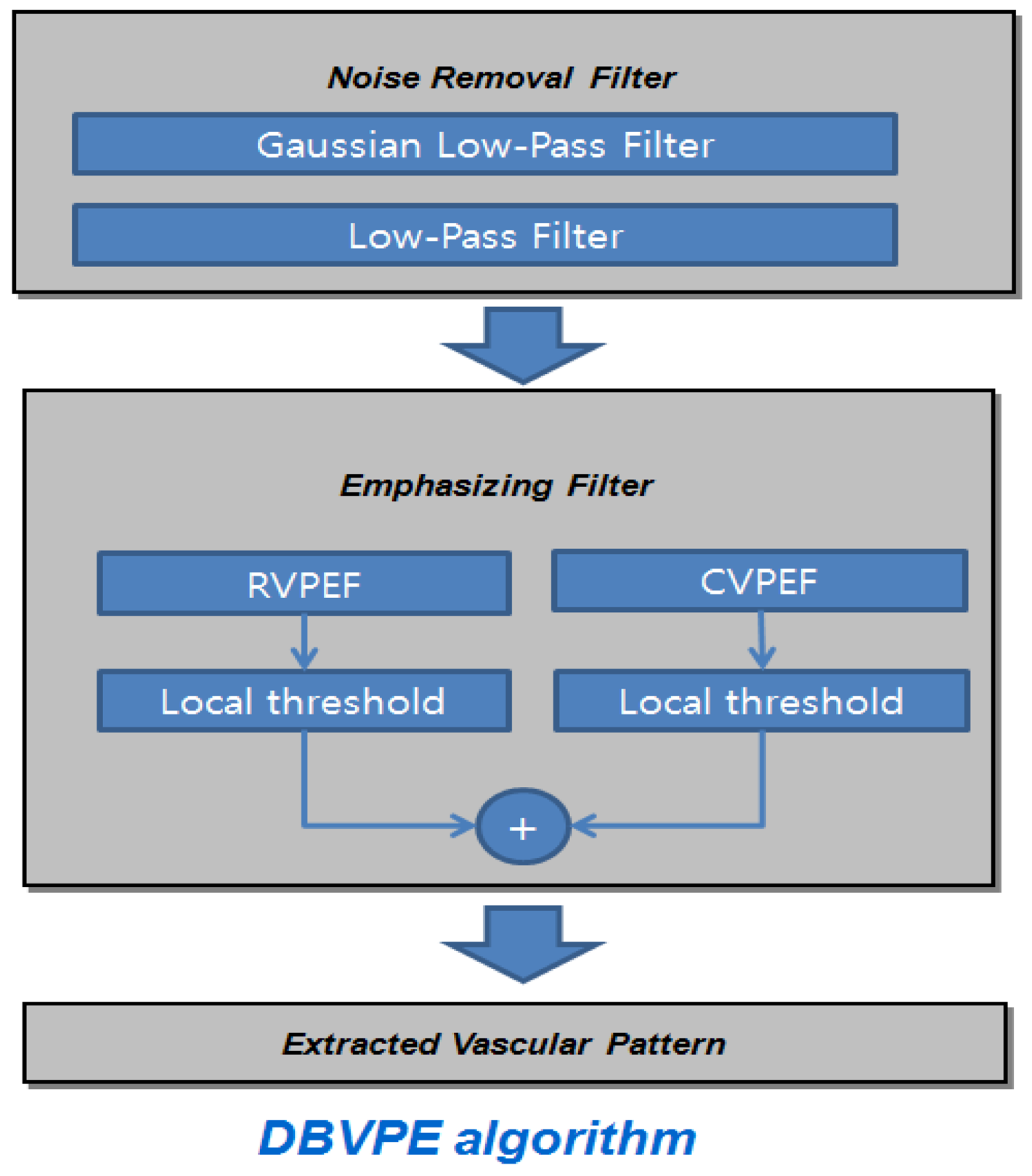

The VPE algorithm is implemented using the direction-based vascular-pattern extraction (DBVPE) method [11]. The DBVPE uses a noise-removal filter that consists of a Gaussian low-pass filter and a smoothing low-pass filter, and an emphasizing filter that combines the output of the row vascular-pattern extraction filter (RVPEF) and the column vascular-pattern extraction filter (CVPEF). The VPE processing is illustrated in Figure 12.

The VPE algorithm uses a noise-removal filter and an emphasizing filter, as shown in Figure 13(c–f). The VPE is calculated in the following order: (1) the noise-removal filter, a Gaussian low-pass filter, is applied, as shown in Figure 13(b); (2) the RVPEF is applied, as shown in Figure 13(c); (3) the CVPEF is applied, as shown in Figure 13(d); (4) the RVPEF and CVPEF outputs are added together, as shown in Figure 13(e); (5) finally, a median filter is applied for noise reduction, as shown in Figure 13(f).

The emphasizing filter is expressed as:

2.2.4. The Features of Hand Recognition

The features used for hand recognition are listed in Table 2. These features are the length, angle, profile, and vascular pattern, and they are used as data for verification.

2.2.5. Matching

To compare the different features of hand recognition, three kinds of verifier algorithms are used. The first algorithm is the Euclidean distance, which is used for the angle and length features. It performs its measurements with the following equation:
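For reference, the Euclidean distance between a source feature vector and a target feature vector is the square root of the sum of squared component differences. The sketch below is a generic implementation; the argument names and the example values are illustrative assumptions, not data from the paper.

```python
import math


def euclidean_distance(source, target):
    """Euclidean distance between two equal-length feature vectors."""
    if len(source) != len(target):
        raise ValueError("feature vectors must have the same length")
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(source, target)))
```

Since the angles and lengths are grouped into a single matching score, one plausible usage is to concatenate the two angles and eight lengths into one ten-component vector per template before calling `euclidean_distance`. In that case the features would usually need a common scale, which the sketch does not address.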

The second algorithm is a distance measure for polygonal curves, which is used for the K-curvature and the profiles. This approach compares the original curves with the target curves with the objective of minimizing some property under specific constraints on the possible mappings. It performs its measurements with the following equation:

Qi is the ith component of the target feature vector:

For the K-curvature and profile, the number of scores is 10.

The third algorithm is template matching. It is used for the vascular pattern and obtains the maximum matching value between the source patterns and the target patterns. The patterns consist of the vascular pattern and the background pattern. The matching of patterns is calculated by giving a weight of 1/4. The third algorithm performs its measurements with the following equation:
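The idea of taking the maximum matching value over candidate alignments can be sketched as below. This is a simplified stand-in, not the paper's equation: it assumes binary patterns of equal size (1 = vascular, 0 = background), searches small translations only, and scores each alignment by the fraction of overlapping pixels that agree. The 1/4 weighting mentioned above is not modelled, and `max_shift` is a hypothetical parameter.

```python
def match_score(source, target, max_shift=2):
    """Best agreement ratio (0..1) between two binary patterns over small shifts."""
    rows, cols = len(source), len(source[0])
    best = 0.0
    for dy in range(-max_shift, max_shift + 1):
        for dx in range(-max_shift, max_shift + 1):
            agree = 0
            total = 0
            for r in range(rows):
                for c in range(cols):
                    rr, cc = r + dy, c + dx
                    # Only pixels that overlap after shifting are compared.
                    if 0 <= rr < rows and 0 <= cc < cols:
                        total += 1
                        if source[r][c] == target[rr][cc]:
                            agree += 1
            if total:
                best = max(best, agree / total)
    return best
```

Searching over shifts makes the score tolerant of small hand displacements between enrollment and verification, at the cost of a larger search for larger `max_shift`.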

Three kinds of verifier algorithms compute 12 matching scores. The 12 matching scores are illustrated in Table 3.

At the verification stage, the source templates are compared with the target template. A source or target template is represented by 21 feature vectors: one profile of thickness, four K-curvatures, two angles, eight lengths, five profiles of fingers, and one vascular pattern. The angles and lengths are grouped into a single matching score. Verification between the source templates and the target template therefore consists of computing 12 matching scores between them.

For hand geometry recognition, we used a weighted sum of the Euclidean distance and the distance measurement for polygonal curves. The weights W1 and W2 are varied over the range [0,1] in steps of 0.01, such that the constraint W1 + W2 = 1 is satisfied. The best weights for the Euclidean distance and the distance measurement are 0.37 and 0.63, respectively. Measurements are performed using the following equation:
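The weight search described above can be sketched as a simple grid sweep. The selection criterion is not stated in the text, so the sketch takes it as a caller-supplied function `error_fn` (a hypothetical name) that maps a list of fused scores to an error value to be minimized, for example an EER measured on training data.

```python
def sweep_weights(euclid_scores, curve_scores, error_fn, steps=100):
    """Try W1 = 0, 1/steps, ..., 1 with W2 = 1 - W1 and keep the best pair.

    euclid_scores and curve_scores are parallel lists, one entry per
    comparison. error_fn is an assumption: any callable that returns a
    number to minimize for a list of fused scores.
    """
    best_w1 = None
    best_err = float("inf")
    for n in range(steps + 1):
        w1 = n / steps
        w2 = 1.0 - w1
        fused = [w1 * e + w2 * c for e, c in zip(euclid_scores, curve_scores)]
        err = error_fn(fused)
        if err < best_err:
            best_w1, best_err = w1, err
    return best_w1, 1.0 - best_w1, best_err
```

With `steps=100` the sweep evaluates 101 weight pairs, matching the step size of 0.01. Using integer counters and dividing by `steps` avoids the drift that repeated addition of 0.01 would introduce.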

The VPE recognition performs its measurements with the following equation:

The false acceptance rate (FAR) is the rate at which an impostor is wrongly accepted; the false rejection rate (FRR) is the rate at which a genuine user is wrongly rejected; the genuine acceptance rate (GAR) is 1 − FRR; and the equal error rate (EER) is the error rate at which the FRR equals the FAR.
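These definitions can be made concrete with a short sketch. It assumes distance-like scores, where a comparison is accepted when its score is at or below the threshold, and it approximates the EER by scanning the observed scores as candidate thresholds and picking the one where FAR and FRR are closest. The function names and the scanning strategy are assumptions for illustration.

```python
def far_frr(genuine, impostor, threshold):
    """Return (FAR, FRR) when scores <= threshold are accepted."""
    far = sum(1 for s in impostor if s <= threshold) / len(impostor)
    frr = sum(1 for s in genuine if s > threshold) / len(genuine)
    return far, frr


def equal_error_rate(genuine, impostor):
    """Approximate the EER and return (eer, threshold)."""
    best = None
    for t in sorted(set(genuine) | set(impostor)):
        far, frr = far_frr(genuine, impostor, t)
        gap = abs(far - frr)
        if best is None or gap < best[0]:
            # Report the midpoint of FAR and FRR at the closest crossing.
            best = (gap, (far + frr) / 2.0, t)
    return best[1], best[2]
```

For genuine scores [0.1, 0.2, 0.3, 0.6] and impostor scores [0.4, 0.7, 0.8, 0.9], a threshold of 0.4 accepts one of four impostors and rejects one of four genuine users, so FAR = FRR = 0.25 and the EER is 25%.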

2.2.6. Score-Level Fusion

Multimodal biometric systems can fuse information at several levels: the feature-extraction level, the matching-score level, and the decision level. In this paper, we used integration at the matching-score level. Fusion at the matching-score level follows one of two approaches: the classification approach or the combination approach. Because the combination approach performs better than some classification approaches [24], we select the combination approach, which combines the individual matching scores to generate a single scalar score.

The matching-score level needs normalization to transform the score into a common domain before combining it. In this paper, normalization uses a z-score [25]. The z-score is calculated using the arithmetic mean and standard deviation of the given data.

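The normalized scores are expressed as:

$$s' = \frac{s - \mu}{\sigma}$$

where s is a raw matching score, and μ and σ are the arithmetic mean and standard deviation of the given scores.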

The distributions of the matching scores of the two modalities after z-score normalization are shown in Figure 14.

Once normalized, the scores obtained from the hand geometry and the vascular pattern are combined using a simple weighted summation. The weighted-summation method is given by:
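A minimal sketch of z-score normalization followed by weighted summation is shown below. The fusion weights are not given in the text, so the default `w_geometry=0.5` is a placeholder assumption. The mean and standard deviation are computed from the scores passed in, whereas a deployed system would more likely estimate them once from training data.

```python
from statistics import mean, pstdev


def z_normalize(scores):
    """Map scores to zero mean and unit (population) standard deviation."""
    mu = mean(scores)
    sigma = pstdev(scores)
    if sigma == 0:
        raise ValueError("scores have zero variance")
    return [(s - mu) / sigma for s in scores]


def fuse(geometry_scores, vascular_scores, w_geometry=0.5):
    """Weighted sum of z-normalized scores from the two modalities.

    w_geometry is a placeholder weight; the vascular weight is 1 - w_geometry.
    """
    zg = z_normalize(geometry_scores)
    zv = z_normalize(vascular_scores)
    return [w_geometry * g + (1.0 - w_geometry) * v for g, v in zip(zg, zv)]
```

Normalization matters here because the hand-geometry and vascular scores come from different verifiers with different ranges; without a common scale, the modality with the larger numeric range would dominate the sum.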

3. Results

The experimental database contains a total of 1,300 images (side-view-of-the-hand, back-of-the-hand, and vascular-pattern-of-the-hand images) for 100 subjects, i.e., 13 images per individual. We use three images per individual (a total of 300 images) for training. To test the proposed recognition method, we use 10 images per hand from the 100 subjects. Each biometric data set is therefore partitioned into a training set of 3 × 100 samples and a test set of 10 × 100 samples. The users' ages ranged from 20 to 50. Approximately 73% were men and 27% were women.

In our experiments, we use the sum of the scores from each unimodal matching as the final matching score. As unimodal biometrics, hand geometry and VPE achieved EERs of 1.81% and 1.19%, respectively. Our proposed approach applies score-level fusion to these unimodal biometrics, with the scores normalized by the z-score. The fusion of hand geometry and the VPE obtains the best EER of 0.06%. Figure 15 shows the ROC curves for unimodal and multimodal biometrics.

We measured the speed of the proposed algorithm on a desktop computer with an Intel Pentium (R) Dual CPU 2.00 GHz processor and 2.00 GB of RAM. The computational cost of each processing stage is summarized in Table 4.

4. Conclusions

In this article, we have proposed a new multimodal biometric verification method based on the fusion of the hand geometry and the vascular pattern from a single hand image. The proposed hand recognition method was based on K-curvature, thickness of the side view of the hand, and VPE. The accuracy of the proposed multimodal biometrics method is better than that obtained using unimodal biometrics.

Acknowledgments

This work was supported by the Nano IP/SoC Promotion Group of Seoul R&BD Program (10920) and the Converging Research Center Program through the Ministry of Education, Science and Technology (2012K001313).

References

1. Jain, A.K.; Bolle, R.; Pankanti, S. Biometrics: Personal Identification in Networked Society; Kluwer: Norwell, MA, USA, 1999.

2. Park, G.T.; Im, S.K.; Choi, H.S. A person identification algorithm utilizing hand vein pattern. Proceedings of Korea Signal Processing Conference, Busan, Korea, 27 September 1997; Volume 10, pp. 1107–1110.

3. Jain, A.; Ross, A.; Pankanti, S. A prototype hand geometry-based verification system. Proceedings of 2nd International Conference on Audio and Video-Based Biometric Person Authentication, Washington, DC, USA, 22–24 March 1999; pp. 166–171.

4. Sanchez-Reillo, R.; Sanchez-Avila, C.; Gonzalez-Marcos, A. Biometric identification through hand geometry measurements. IEEE Trans. Pattern Anal. Mach. Intell. 2000, 22, 1168–1171.

5. Malassiotis, S.; Aifanti, N.; Strintzis, M.G. Personal authentication using 3-D finger geometry. IEEE Trans. Inf. Forensics Secur. 2006, 1, 12–21.

6. Amayeh, G.; Bebis, G.; Nicolescu, M. Improving hand-based verification through online finger template update based on fused confidences. Proceedings of the IEEE 3rd International Conference on Biometrics: Theory, Applications, and Systems, Arlington, VA, USA, 28–30 September 2009; pp. 1–6.

7. Kumar, A.; Ravikanth, C. Personal authentication using finger knuckle surface. IEEE Trans. Inf. Forensics Secur. 2009, 4, 98–110.

8. Lee, E.C.; Lee, H.C.; Park, K.R. Finger vein recognition using minutia-based alignment and local binary pattern-based feature extraction. Int. J. Imaging Syst. Technol. 2009, 19, 179–186.

9. Mulyono, D.; Jinn, H.S. A study of finger vein biometric for personal identification. Proceedings of International Symposium on Biometrics and Security Technologies, Islamabad, Pakistan, 23–24 April 2008; pp. 1–8.

10. Im, S.K.; Park, H.M.; Kim, S.W.; Chung, C.K.; Choi, H.S. Improved vein pattern extracting algorithm and its implementation. Proceedings of Digest of Technical Papers, International Conference on Consumer Electronics, Los Angeles, CA, USA, 13–15 June 2000; pp. 2–3.

11. Im, S.K.; Choi, H.S.; Kim, S.W. A direction-based vascular pattern extraction algorithm for hand vascular pattern verification. ETRI J. 2003, 25, 101–108.

12. Shimizu, K.; Yamamoto, K. Imaging of physiological functions by laser transillumination. In Advances in Optical Imaging and Photon Migration; Alfano, R.R., Fujimoto, J.G., Eds.; OSA: Orlando, FL, USA, 1996; pp. 348–352.

13. Mehnert, A.J.; Cross, J.M.; Smith, C.L. Thermalgraphic Imaging: Segmentation of the Subcutaneous Vascular Network of the Back of the Hand (Research Report); Edith Cowan University, Australian Institute of Security and Applied Technology: Perth, Australia, 1993.

14. Cross, J.M.; Smith, C.L. Thermographic imaging of the subcutaneous vascular network of the back of the hand for biometric identification. Proceedings of Institute of Electrical and Electronics Engineers 29th Annual 1995 International Carnahan Conference on Security Technology, Sanderstead, UK, 18–20 October 1995; pp. 20–35.

15. Lin, C.L.; Fan, K.C. Biometric verification using thermal images of palm-dorsa vein patterns. IEEE Trans. Circuits Syst. Video Technol. 2004, 14, 199–213.

16. Tanaka, T.; Kubo, N. Biometric authentication by hand vein patterns. Proceedings of SICE 2004 Annual Conference, Yokohama, Japan, 4–6 August 2004; pp. 249–253.

17. Ding, Y.; Zhuang, D.; Wang, K. A study of hand vein recognition method. Proceedings of 2005 IEEE International Conference Mechatronics and Automation, Niagara Falls, Canada, 29 July–1 August 2005; pp. 2106–2110.

18. Kumar, A.; Prathyusha, K.V. Personal authentication using hand vein triangulation and knuckle shape. IEEE Trans. Image Process. 2009, 38, 2127–2136.

19. Kumar, A.; Wong, D.; Shen, H.; Jain, A. Personal verification using palmprint and hand geometry biometric. In Audio- and Video-Based Biometric Person Authentication; Lecture Notes in Computer Science; 2003; Volume 2688, pp. 668–678.

20. Ong, M.G.K.; Connie, T.; Jin, A.T.B.; Ling, D.N.C. A single-sensor hand geometry and palmprint verification system. Proceedings of WBMA 2003 ACM SIGMM Workshop on Biometrics Methods and Applications, New York, NY, USA, 2–8 November 2003; pp. 100–106.

21. Kang, B.J.; Park, K.R. Multimodal biometric method based on vein and geometry of a single finger. Comput. Vis. IET 2010, 4, 209–217.

22. Groan, F.C.; Verbeek, P.W. Freeman-code probabilities of object boundary quantized contours. Comput. Vis. Graph. Image Process. 1978, 7, 391–402.

23. Anderson, I.M.; Bezdek, J.C. Curvature and tangential deflection of discrete arcs. IEEE Trans. Pattern Anal. Mach. Intell. 1984, 6, 27–40.

24. Ross, A.; Jain, A.K. Information fusion in biometrics. Pattern Recogn. Lett. 2003, 24, 2115–2125.

25. Jain, A.; Nandakumar, K.; Ross, A. Score normalization in multimodal biometric systems. Pattern Recogn. 2005, 38, 1043–1048.

Table 1. Features, population size, and performance of hand biometric systems.

| Year [Ref.] | Type | Features | Population size | Performance (%) |
|---|---|---|---|---|
| 1999 [2] | H | Contour coordinates | 53 | FAR = 1, FRR = 6 |
| 1999 [3] | H | Length, width, thickness and deviation | 20 | EER = 5 |
| 2006 [4] | H | Width and curvature | 73 | EER = 3.6 |
| 2009 [5] | H | Fusion SVDD | 86 | EER = 1.5 |
| 1995 [14] | V | Sequential correlation | 20 | FAR = 0, FRR = 7.9 |
| 2004 [15] | V | Feature points of the vein patterns | 32 | EER = 2.3 |
| 2004 [16] | V | FFT-based phase correlation | 25 | FAR = 0.73, FRR = 4 |
| 2005 [17] | V | Distance between feature points | 48 | FAR = 0, FRR = 0.9 |
| 2009 [18] | VK | Vascular structures and knuckle shape | 100 | EER = 1.14 |
| 2003 [19] | PH | Palm-print and hand geometry | 100 | FAR = 0, FRR = 1.41 |
| 2003 [20] | PH | Palm-print and hand geometry | 50 | FAR = 0.1818, FRR = 1 |
| 2010 [21] | VF | Vascular and geometry of finger | 102 | EER = 0.075 |
| Our work | VH | Vascular and geometry of hand | 100 | EER = 0.06 |

H: Hand geometry, V: Vascular, K: Knuckle shape, P: Palm-print, F: Finger geometry.

Table 2. Features of hand recognition.

| Source | Features |
|---|---|
| The side view of the hand | Profile of thickness: Pside(x) |
| The back-of-the-hand view | K-curvature: K1(x), K2(x), K3(x), K4(x) |
| | Angle: θ1, θ2 |
| | Length: d1, d2, d3, d4, d5, d6, d7, d8 |
| | Profile of fingers: P1(x), P2(x), P3(x), P4(x), P5(x) |
| VPE | Vascular pattern |

Table 3. The 12 matching scores.

| Verifier algorithm | Matching score |
|---|---|
| Euclidean distance | D |
| Distance measurement for polygonal curves | δ1, δ2, δ3, δ4, δ5, δ6, δ7, δ8, δ9, δ10 |
| Template matching | C |

Table 4. Processing time.

| Processing | Time (ms) |
|---|---|
| Image preprocessing | 112 |
| Hand geometric processing | 11 |
| VPE processing | 16 |

© 2013 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/3.0/).

Park, G.; Kim, S. Hand Biometric Recognition Based on Fused Hand Geometry and Vascular Patterns. Sensors 2013, 13, 2895-2910. https://doi.org/10.3390/s130302895