3D LIDAR-Camera Extrinsic Calibration Using an Arbitrary Trihedron

Abstract

: This paper presents a novel way to address the extrinsic calibration problem for a system composed of a 3D LIDAR and a camera. The relative transformation between the two sensors is calibrated via a nonlinear least squares (NLS) problem, which is formulated in terms of the geometric constraints associated with a trihedral object. Precise initial estimates of NLS are obtained by dividing it into two sub-problems that are solved individually. With the precise initializations, the calibration parameters are further refined by iteratively optimizing the NLS problem. The algorithm is validated on both simulated and real data, as well as a 3D reconstruction application. Moreover, since the trihedral target used for calibration can be either orthogonal or not, it is very often present in structured environments, making the calibration convenient.1. Introduction

Multi-sensors are commonly equipped on mobile robots for navigation tasks. Currently, for instance, ranging sensors such as high-speed 3D LIDARs are often used in conjunction with cameras for a robot to detect objects [1,2] and reconstruct scenes [3–5]. In these sensor fusion-based applications, a prerequisite is to extrinsically calibrate the relative transformation between the sensors. The result of extrinsic calibration highly impacts subsequent fusion processes.

A variety of methods have been developed to address the LIDAR-camera extrinsic calibration problem. Among them, early interest focuses on systems consisting of a 2D LIDAR and a camera [6-9]. Wasielewski and Strauss [6] and Naroditsky et al. [7] calibrate a 2D laser scanner with respect to a camera by making use of special calibration rigs, such as a white planar board covered with a black line. The work of Zhang and Pless [8] relies on a planar checkerboard pattern. Corners of the pattern [10] are first detected in images and used to determine the poses of planes in camera frames. Meanwhile, 3D points falling on the checkerboard are taken into consideration to estimate the planes' poses in LIDAR frames. Using the geometric constraint of the planar target in a couple of LIDAR-camera observations, the extrinsic calibration problem is formulated as a nonlinear least squares (NLS) problem [11] and solved iteratively.

In recent years, with the development of 3D laser ranging techniques, several methods were proposed to calibrate 3D LIDAR-camera systems [12–18]. Unnikrishnan [12] and Pandey et al. [13] extend the checkerboard pattern-based method [8] from 2D to 3D LIDARs. Mirzaei et al. [19] utilizes a planar board covered with fiducial markers for calibration, which, in essence, is of the same rationale as the checkerboard-based approaches. They further divide the NLS optimization problem into two least-square sub-problems and solve them analytically. The checkerboard pattern is also used in the work of Geiger et al. [20]. They calibrate a 3D LIDAR-camera system using a single shot containing such multiple patterns. Instead of using planar checkerboard patterns, there are several alternative methods that rely on correspondences of points [21], lines [22] or circles [14], or employ inertial sensors [23,24]. Compared to plane-based approaches, most of these methods need to build point- or line-wise correspondences between images and LIDAR points. However, due to the lower and non-uniform resolution of LIDAR measurements, it is difficult to achieve high accuracy.

In this work, we propose a novel way to conduct the extrinsic calibration between a 3D LIDAR and a camera. In contrast to most of the published techniques, our method distinguishes itself in two aspects:

It takes advantage of a trihedron—which may or may not be orthogonal—for calibration. Such trihedral targets are ubiquitous in both indoor and outdoor structured environments, such as two adjacent walls of a building together with the floor. Hence, it is quite convenient for a robot to collect data for calibration. Compared to the aforementioned calibration rigs, the trihedral configuration is less likely to be perturbed even under severe weather conditions, and is easier to be captured.

In contrast to these calibration-rig-based methods that require a user to specify both the region of a plane in 3D LIDAR and the corners in images, our method requires fewer user inputs. Only the region of each plane of the trihedron in the sensors' data is needed. Moreover, the precision of the manual inputs does not make much of a difference.

To present the proposed method, we organize the remainder of this paper as follows. In Section 2, we first describe the extrinsic calibration problem via taking advantage of an trihedral calibration rig, and introduce the associated geometric and motion constraints. Section 3 presents the entire calibration procedure. Experiments conducted on both simulations and real data are exhibited in Section 4, followed with conclusions in Section 5.

2. Problem Description

Let us formally define the problem of 3D LIDAR-camera extrinsic calibration. We are given a camera and a 3D LIDAR that are rigidly mounted with respect to each other. Both sensors are assumed to be pre-calibrated, meaning that their intrinsic parameters are known. A trihedron is observed synchronously by them. Our objective is to determine the relative transformation between the two sensors, by taking advantage of the constraints associated with the trihedron.

For the sake of clarity, in the remaining of this section, we introduce the related definitions and notations, together with the geometric and motion constraints established between the measurements of the two sensors.

2.1. Definitions and Notations

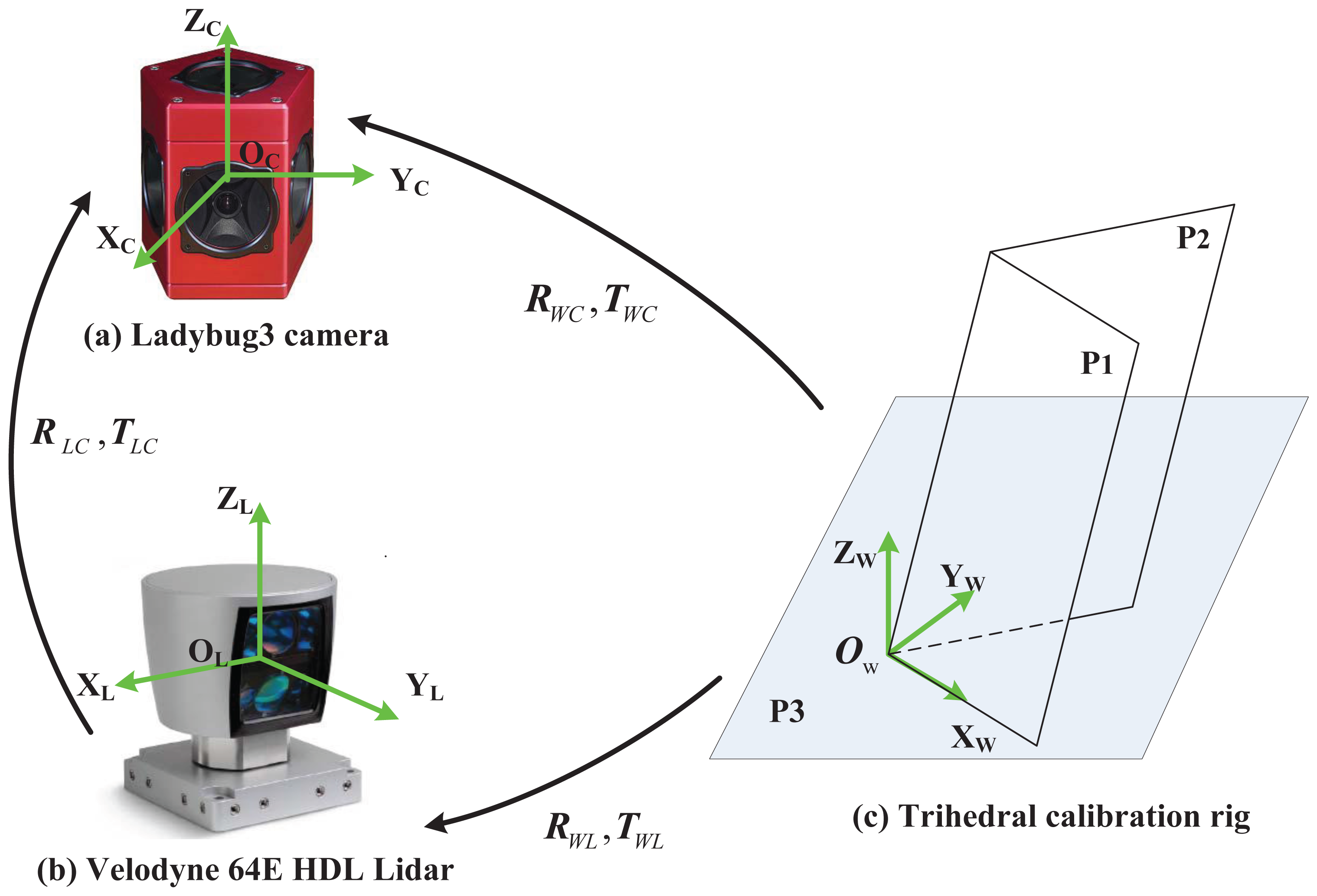

Figure 1 demonstrates a typical calibration configuration. It includes a system composed of a Ladybug3 omnidirectional camera [25] and a commercially available high-speed Velodyne HDL-64E 3D LIDAR [26], as well as a trihedral target viewed by both sensors. In experiments, the trihedron is fixed and the sensor system moves to obtain multiple configurations. In such configurations, several reference frames are defined:

Camera frame: The proposed method is not restricted to a specific camera type, as long as the camera is of a single viewpoint [27] and pre-calibrated. The camera frame is set up to be coincident with the one defined in its projection model. The frame is represented by {Ci}, in which i = 1 ⋯ N indicates the ith configuration.

LIDAR frame: The LIDAR frame is also defined to be coincident with the one in its own projection model, and is denoted by {Li}.

World reference frame: The world reference frame is fixed on the trihedron. Since the trihedral object can be either orthogonal or not, the reference frame is set up in such a way that the origin is at the common vertex and the axis X aligns with one intersection line. The axis Z is aligned with the direction of the plane P3's normal vector, and Y is further determined following the right-hand rule, as illustrated in Figure 1(c). The world frame is denoted by {W}.

Once the frames are defined, we represent the relative rotation and translation from one frame A to another frame B by RAB and TAB, where A, B ∈ {Ci, Li, W}. Then, given a 3D point PA in the frame A, the corresponding point PB in B is computed via PB = RABPA + TAB. In practice, the sensors are rigidly mounted on a mobile robot, and the transformations from the LIDAR to the camera, i.e. RLiCi and TLiCi, are fixed in all configurations even when the robot moves. Hence, they are simply denoted by RLC and TLC, which are the parameters we aim to estimate in the calibration task.

In addition, we know that a plane in a frame is specified by NTP − d = 0, where P is an arbitrary 3D point lying on the plane, and N and d are, respectively, the normal vector and the distance. Hence, we use to describe the jth plane of the trihedron with respect to the frame A, j = 1 ⋯ 3, and specifies the kth point on the plane.

2.2. Geometric and Motion Constraints

The proposed method makes use of a trihedron as a calibration rig. Hence, in order to address the extrinsic calibration problem, several constraints are taken into consideration. They are summarized as follows.

Trihedral constraint: Let us consider the trihedron with respect to a sensor frame A. If its three planes, , are estimated, then the relative rotation RWA and translation TWA from the world frame to the sensor frame are uniquely determined. We represent RWA = [rWA1 rWA2 rWA3], where rWA1, rWA2 and rWA3 are column vectors. Then, we have

and the translation isPlanarity constraint between two frames: This constraint implies that, if points in a frame A are coplanar, then they must lie on a plane when transformed to another frame B. It means that, in the absence of noise, we have a plane {NB, dB} such that

for all coplanar points in the frame A.Planarity constraint between two images: This constraint describes the relationship between a set of coplanar feature points and their correspondences in two images. Given a single-viewpoint camera, for the purpose of generality, we represent its projection model as p = F(P), where P is a 3D point in space and p is the projected image point. The inverse projection model is specified by P = γF−1 (p), in which γ is an unknown scalar, meaning that P lies on a ray determined by p, but its distance stays unknown. Now, we consider two camera frames C1 and C2. In the first frame, the plane on which all the features lie is defined by {NC1, dC1}. Then, pair-wise corresponding image features pC1 and pC2 satisfy

Note that this constraint is also known as the homography constraint [28] when F is a pinhole camera projection model.Motion constraint: When a robot platform moves from one location to another, the translation of the camera and that of the LIDAR are equal to each other, as the sensors are fixed rigidly. Hence, we have

3. Algorithm Description

In order to estimate the relative transformation between a 3D LIDAR and a camera, we capture N (N ≥ 2) observations of a trihedron by both sensors. The sensors are individually calibrated in each configuration first to get their extrinsic parameters with respect to the world reference. Then, the LIDAR-camera extrinsic calibration is formulated as a nonlinear least squares problem in terms of the constraints introduced above. It is further solved by the Levenberg-Marquardt (LM) method [11] after properly estimating the initializations. An overview of the entire calibration procedure is presented in Algorithm 1. The details are subsequently introduced below.

| Algorithm 1: 3D LIDAR-camera extrinsic calibration procedure. |

| Input: N LIDAR-camera observations of a trihedron |

| Manually selected regions of the trihedron's planes in the observations. |

| Output: RLC and TLC |

| for i = 1 → Ndo |

| for j = 1 → 3 do |

| Estimate the jth plane w.r.t the ith LIDAR frame; |

| end |

| Estimate the transformation {RWLi, TWLi}; |

| end |

| Refine based on all observations; |

| Detect features on the first image; |

| for i = 2 → Ndo |

| Detect and match features on the ith image; |

| Estimate the transformation {RC1Ci, TC1Ci}; |

| Estimate ; |

| Estimate the transformation {RWC1, TWC1, RWCi, TWCi}; |

| end |

| Initialize RLC and TLC; |

| Refine the estimates of RLC and TLC. |

3.1. 3D LIDAR Extrinsic Calibration

Given the ith LIDAR observation, this step is to estimate the transformation, RWLi and TWLi, from the world to the ith LIDAR frame. To this end, we first estimate the trihedron's planes according to the LIDAR observation. When a user specifies a set of 3D points that mostly lie on the trihedron's jth plane, the plane's parameters are estimated by minimizing the following linear least squares problem:

When more than one observation is available, we can further refine the results by using the planarity constraints established between each pair of the LIDAR frames. Thus, we get

Let be the parameters estimated in Equation (7), and f be the function that is optimized. Then, the Levenberg-Marquardt method starts from a given initial guess θ0 and iteratively updates the parameters via

3.2. Camera Extrinsic Calibration

This step is to determine the transformations, {RWCi, TWCi}, from the world to the camera frames, together with the planes . In contrast to the LIDAR sensor, it is incapable of recovering all parameters from one image since no metric information is available. Hence, two LIDAR-camera observations are needed.

Given two LIDAR-camera observations, we first estimate RC1C2 and TC1C2 between the two camera frames. Once a user delimits the regions of the planes on two images, a set of point features are detected by SIFT [29] within the regions in the first image and then matched to the correspondences in the second one. The two sets of features are represented by and , which satisfy the epipolar constraint [28]

Once the relative motion between two views is determined, we are able to determine the planes by taking advantage of the planarity constraint established between two images, as defined in Equation (4). Hence, in the presence of noise, we estimate the pose of a plane by

3.3. 3D LIDAR-Camera Extrinsic Calibration

With the above-estimated parameters, we now formulate the LIDAR-camera extrinsic calibration task as a nonlinear least squares problem. In terms of the planarity constraints established between the LIDAR and the camera frames, we get the form

4. Experiments

We implement the proposed method in MATLAB. The running time of our algorithm is coarsely measured on a laptop with an Intel Core2Duo 2.26 GHz processor and 3 GB memory. Except for the manual input procedure, it takes about 20 seconds in average to perform the entire calibration when 9 LIDAR-camera observations are considered. Each contains 5,000 LIDAR points and 100 registered image points. In order to evaluate the proposed method, a series of experiments have been carried out. The algorithm is first tested on simulated data to validate its correctness and explore its sensitivity with respect to noise. Then, it is used to calibrate a real system composed of a 3D LIDAR and a camera. The calibration results are subsequently used for 3D reconstruction.

4.1. Simulations

The first experiment validates the correctness and numerical stability of our algorithm. We hereby generate sets of data to simulate multiple observations of a trihedron obtained by a 3D LIDAR-camera system. The system is of the following properties. The rotation and translation from the LIDAR to the camera are set, respectively, as E(RLC) = [11.46°, 5.73°, 85.94°]T and TLC = [0.4,−0.08, 0.2]T m, where E(RLC) is the Euler angle of RLC. An unorthogonal trihedron is synthesized, whose planes are defined by , , , and , , with respect to the first camera frame. Each plane contains 5,000 LIDAR points and 100 registered image points. The simulated camera uses the following Mercator projection model:

4.1.1. Performance w.r.t. the Number of Observations

The extrinsic calibration can be conducted with two or more LIDAR-camera observations. In this experiment, we investigate the impact of the observation number on the calibration performance. Nine LIDAR-camera observation pairs are generated, in which Gaussian noise with zero mean and σ standard deviation is added. We randomly select σ from the range of [0, 0.2] m for LIDAR points and from the range of [0, 1] pixels for image features. We vary the number of observations from 2 to 9. For each number, 200 independent trials are carried out. The estimated parameters RLC and TLC in each trial are compared with the ground truth and measured, respectively, by the displaced Euler angle of the rotation and the absolute error of the translation. Figure 2 plots the mean and standard deviation of the errors.

Figure 2 shows no obvious benefits achieved when the number of observations increases. The reason is that, even when we use two observations, in total there are already six planes taken into consideration. In our simulations, there is even a peak on the error corresponding to 3 observations, partly because the impact of noise is larger than the benefit achieved from the increase of observations. Hence, on the leverage of complexity and performance, throughout all the following experiments, we continue using two LIDAR–camera observations.

4.1.2. Performance w.r.t. the Noise on LIDAR Points

Real ranging sensors produce noisy measurements. Hence, this experiment explores the sensitivity with respect to noise on LIDAR points. We conduct the experiment on the first two simulated observations. Zero mean Gaussian noise is added to points of the LIDAR observations, with σ varying from 0.02 to 0.2 m. Analogous to the previous case, we conduct 200 independent trials for each noise level. The errors on the translation and rotation are evaluated and plotted in Figure 3.

Figure 3 shows that the errors increase linearly with the noise level. When σ = 0.1 m, which is a noise level of a practical LIDAR, the translation errors are around 0.005 m in Y and Z directions, and 0.01 m in X direction. The rotation errors are about 0.01°. In our simulated configurations, X represents the direction of the optical axis, along which depth information degenerates so that larger errors are resulted in [30].

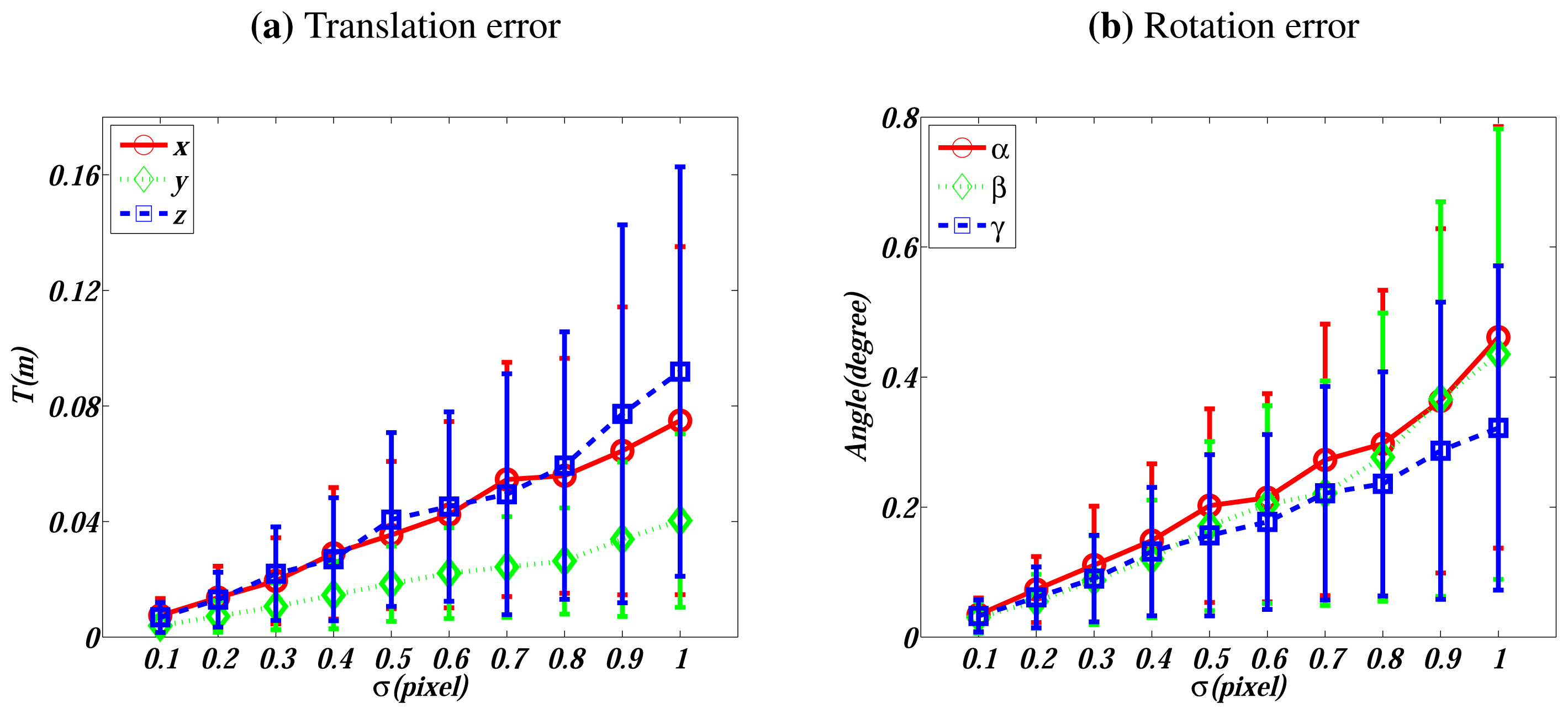

4.1.3. Performance w.r.t. the Noise on Image Points

The feature detection and matching algorithm we use in this work is SIFT [29], which is of sub-pixel accuracy. In this experiment, we investigate the sensitivity with respect to the noise on matched image features. Zero mean Gaussian noise with σ ∈ [0.1, 1] pixels is added to each feature point on the first two simulated image data. Analogously to above cases, 200 trials are conducted for each noise level. The performance is evaluated and plotted in Figure 4.

Figure 4 also presents a linear relationship between the errors and the noise level. When σ = 0.5 pixels, which is a noise level higher than the normal noise, the translation errors are smaller than 0.04 m and the rotation errors are around 0.2°.

4.2. Real Data

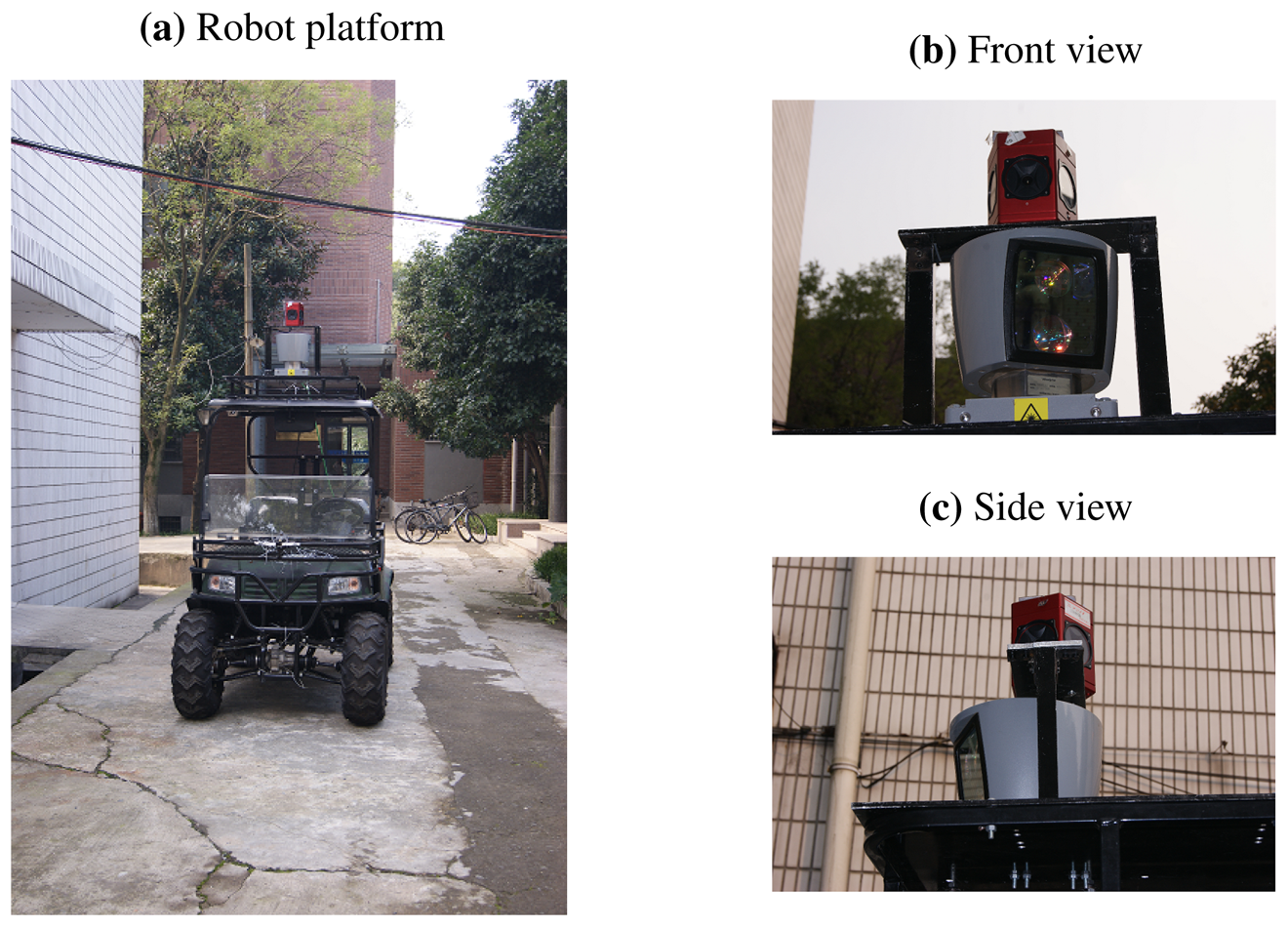

To further evaluate the proposed algorithm, we employ it to calibrate a real system and use the calibration results to reconstruct 3D scenes. The system is composed of a 3D Velodyne HDL-64E LIDAR [26] and a Ladybug3 spherical vision system [25], which are rigidly mounted on the roof of a vehicle, as shown in Figure 5. Both sensors produce omnidirectional measurements.

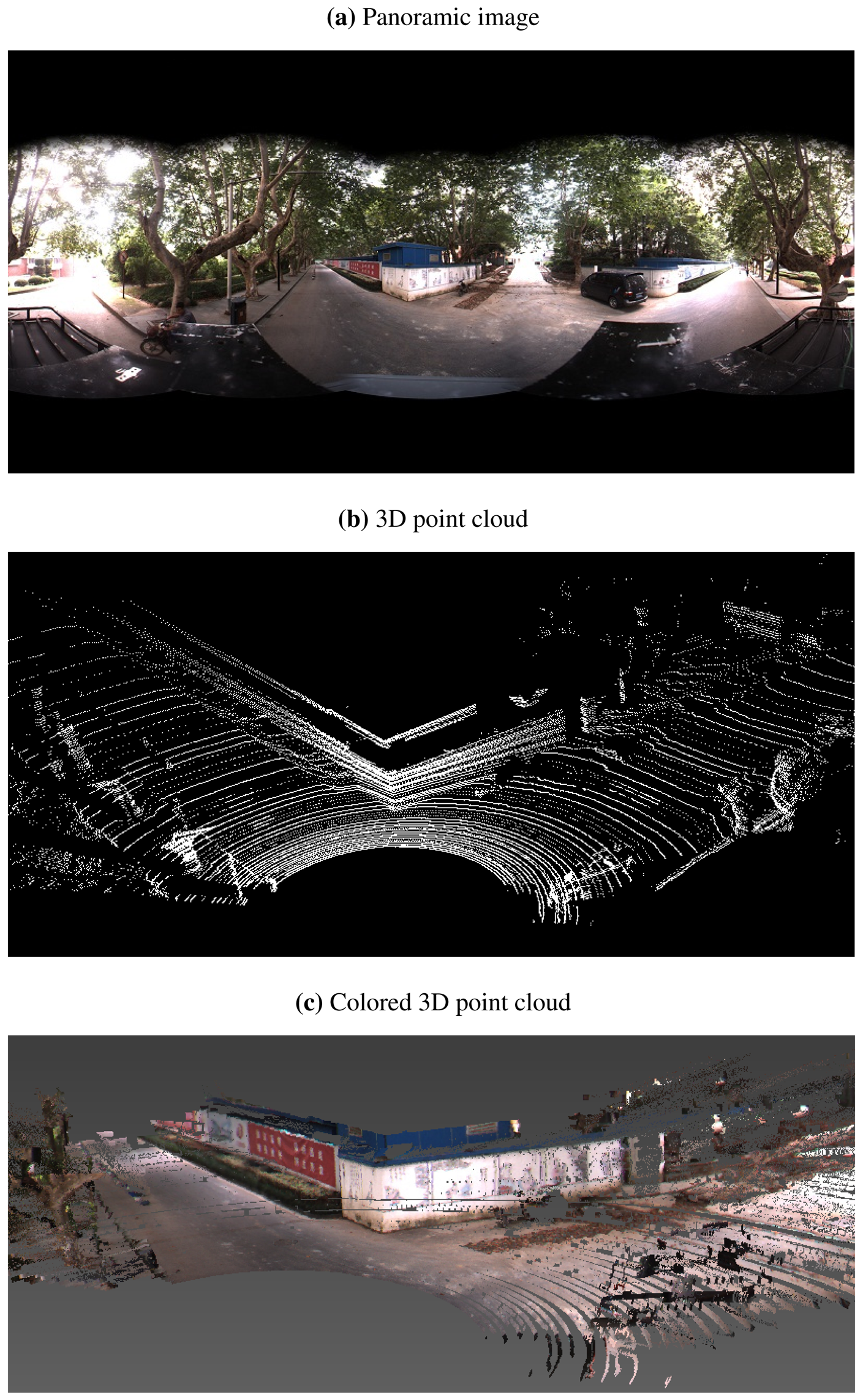

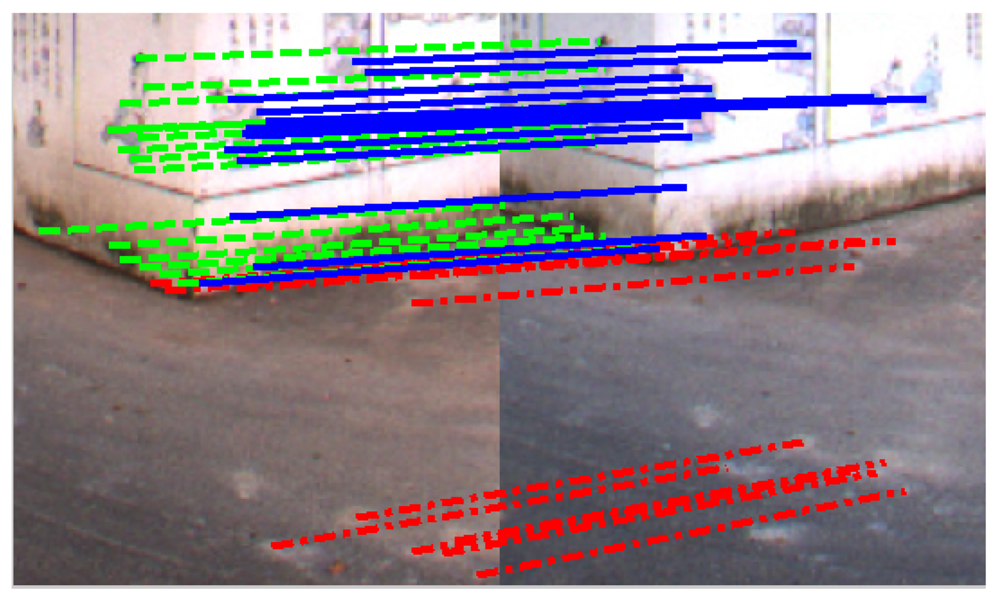

In the experiments, we collect two LIDAR-camera measurements of a scenario containing a trihedral object. The trihedron consists of two adjacent walls of a building, together with the ground plane, as shown in Figures 6 and 7. Due to imperfect construction techniques and noise, the planes of the trihedron are not strictly orthogonal to each other. During the calibration procedure, regions of the planes are manually marked out on both LIDAR and image data, and features on the imaged trihedrons are detected and matched by SIFT [29]. A portion of the matched feature points are shown in Figure 8. Table 1 lists the calibration results of our method. For the purpose of comparison, we also include the results obtained by the checkerboard pattern-based method [12] using six observations. From the results, it is difficult to determine which one is more accurate, since no ground truth is available. Our proposed method, however, is more convenient, as it is easier to collect calibration data and requires less manual input.

In order to validate the calibration results, the determined extrinsic parameters are further used for 3D reconstruction. With the calibrated RLC and TLC, 3D LIDAR points in a view are first transformed into the camera frame and then registered to the image. The colors of registered image pixels are taken to render the corresponding upsampled LIDAR points. Figure 6(c) and Figure 7(c) demonstrate the colored 3D scenes of the two calibration views (only the data within a 180° field of view are shown for a better visibility), from which we see that the walls and the bushes are reconstructed well.

5. Conclusions

In this paper, we have presented a new method of conducting the extrinsic calibration for a 3D LIDAR-camera system. Specifically, instead of using planar checkerboard patterns, we take advantage of arbitrary trihedral objects, which might be either orthogonal or not, for calibration. This kind of configuration is ubiquitous in structured environments, so that it is very convenient for a mobile robot to collect data. We have validated the algorithm on both simulated and real scenarios. Although the experimental results are presented from 3D LIDAR and omnidirectional camera systems, the algorithm is applicable to systems composed of any kind of 3D LIDARs and cameras. Our method is interesting for both indoor or outdoor mobile robots equipped with such sensors. The calibration results can be further used for data fusion applications.

Acknowledgments

This research work is partially supported by the National Natural Science Foundation of China via grants 61001171, 60534070, 90820306, and Chinese Universities Scientific Fund.

References

- Ess, A.; Leibe, B.; Schindler, K.; Van, G.L. Moving Obstacle Detection in Highly Dynamic Scenes. Proceeding of IEEE International Conference Robotics and Automation, Kobe, Japan, 12–17 May 2009.

- Geiger, A.; Lenz, P.; Urtasun, R. Are We Ready for Autonomous Driving? The KITTI Vision Benchmark Suite. Proceeding of 2012 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Providence, RI, USA, 16–21 June 2012.

- Wulf, O.; Arras, K.; Christensen, H.; Wagner, B. 2D Mapping of Cluttered Indoor Environments by Means of 3D Perception. Proceeding of IEEE International Conference on Robotics and Automation, Barcelona, Spain, 18–22 April 2004; 4, pp. 4204–4209.

- Pandey, G.; McBride, J.R.; Eustice, R. Ford campus vision and lidar data set. Int. J. Robot. Res. 2011, 30, 1543–1552. [Google Scholar]

- Zhao, G.; Xiao, X.; Yuan, J. Fusion of Velodyne and Camera Data for Scene Parsing. Proceeding of 15th International Conference on Information Fusion (FUSION), Seattle, WA, USA, 6–9 July 2009.

- Wasielewski, S.; Strauss, O. Calibration of a Multi-Sensor System Laser Rangefinder/Camera. Proceedings of the Intelligent Vehicles Symposium, Detroit, MI, USA, 25–26 September 1995; pp. 472–477.

- Naroditsky, O.; Iv, E.P.; Daniilidis, K. Automatic Alignment of a Camera with a Line Scan Lidar System. Proceedings of IEEE International Conference Robotics and Automation, Shanghai, China, 9–13 May 2011.

- Zhang, Q.; Pless, R. Extrinsic Calibration of a Camera and Laser Range Finder (Improve Camera Calibration). Proceedings of IEEE International Conference on Intelligent Robots and Systems, Sendai, Japan, 28–30 September 2004; pp. 2301–2306.

- Mei, C.; Rives, P. Calibration between a Central Catadioptric Camera and a Laser Range Finder for Robotic Applications. Proceedings of IEEE International Conference on Robotics and Automation, Orlando, FL, USA, 15–19 May 2006; pp. 532–537.

- Zhang, Z. A flexible new technique for camera calibration. IEEE Tran. Pattern Anal. Mach. Intell. 2000, 22, 1330–1334. [Google Scholar]

- Madsen, K.; Nielsen, H.; Tingleff, O. Methods for Non-Linear Least Squares Problems. In Informatics and Mathematical Modelling; Technical University of Denmark: Lyngby, Denmark, 2004. [Google Scholar]

- Unnikrishnan, R.; Hebert, M. Fast Extrinsic Calibration of a Laser Rangefinder to a Camera; Carnegie Mellon University, Rotbotics Institute: Pittsburgh, PA, USA, 2005. [Google Scholar]

- Pandey, G.; McBride, J.; Savarese, S.; Eustice, R. Extrinsic Calibration of a 3D Laser Scanner and an Omnidirectional Camera. Proceedings of 7th IFAC Symposium on Intelligent Autonomous Vehicles, Lecce, Italy, 6–8 September 2010.

- Rodriguez, F.S.; Fremont, V.; Bonnifait, P. Extrinsic Calibration between a Multi-Layer Lidar and a Camera. Proceedings of IEEE International Conference on Multisensor Fusion and Integration for Intelligent Systems, Seoul, Korea, 20–22 August 2008; pp. 214–219.

- Klimentjew, D.; Hendrich, N.; Zhang, J. Mulit Sensor Fusion of Camera and 3D Laser Range Finder for Object Recognition. Proceedings of IEEE International Conference on Multisensor Fusion and Integration for Intelligent Systems, Salt Lake City, UT, USA, 5–7 September 2010; pp. 236–241.

- Aliakbarpour, H.; Nuez, P.; Prado, J.; Khoshhal, K.; Dias, J. An Efficient Algorithm for Extrinsic Calibration between a 3D Laser Range Finder and a Stereo Camera for Surveillance. Proceedings of International Conference on Advanced Robotics, Munich, Germany, 22–26 June 2009; pp. 1–6.

- Pandey, G.; McBride, J.R.; Savarese, S.; Eustice, R.M. Automatic Targetless Extrinsic Calibration of a 3D Lidar and Camera by Maximizing Mutual Information. Proceedings of the AAAI National Conference on Artifical Intelligence, Toronto, Canada, 22–26 July 2012.

- Stamos, I.; Liu, L.; Chen, C.; Wolberg, G.; Yu, G.; Zokai, S. Integrating automated range registration with multiview geometry for the photorealistic modeling of large-scale scenes. Int. J. Comput. Vis. 2008, 78, 237–260. [Google Scholar]

- Mirzaei, F.M.; Kottas, D.G.; Roumeliotis, S.I. 3D lidar-camera intrinsic and extrinsic calibration: Observability analysis and analytical least squares-based initialization. Int. J. Robot. Res. 2012. in press. [Google Scholar]

- Geiger, A.; Moosmann, F.; Car, O.; Schuster, B. A Toolbox for Automatic Calibration of Range and Camera Sensors Using a Single Shot. Proceedings of International Conference on Robotics and Automation (ICRA), St. Paul, MN, USA, 14–18 May 2012.

- Scaramuzza, D.; Harati, A.; Siegwart, R. Extrinsic Self Calibration of a Camera and a 3D Laser Range Finder from Natural Scenes. Proceedings of IEEE International Conference on Intelligent Robots and Systems, San Diego, CA, USA, 29 October–2 November 2007; pp. 4164–4169.

- Li, G.; Liu, Y.; Dong, L.; Cai, X.; Zhou, D. An Algorithm for Extrinsic Parameters Calibration of a Camera and a Laser Range Finder Using Line Features. Proceedings of IEEE International Conference on Intelligent Robots and Systems, San Diego, CA, USA, 29 October–2 November 2007; pp. 3854–3859.

- Mirisola, L.G.B.; Lobo, J.; Dias, J. 3D Map Registration using Vision/Laser and Inertial Sensing. Proceedings of European Conference on Mobile Robots (EMCR), Freiburg, Germany, 19–21 September 2007.

- Nnez, P., Jr.; D., P.; Rocha, R.; Dias, J. Data Fusion Calibration for a 3D Laser Range Finder and a Camera Using Inertial Data. Proceedings of European Conference on Mobile Robots (ECMR), Dubrovnik, Croatia, 23–25 September 2009; pp. 31–36.

- Grey, P. Ladybug3 Getting Started Manual. 2012. Available online: http://www.ptgrey.com/ (accessed on 25 Jannuary 2013). [Google Scholar]

- Velodyne. Velodyne HDL-64E. 2012. Available online: http://velodynelidar.com/lidar (accessed on 25 Jannuary 2013). [Google Scholar]

- Ly, S.; Demonceaux, C.; Vasseur, P. Translation Estimation for Single Viewpoint Cameras Using Lines. Proceedings of International Conference on Robotics and Automation (ICRA), Anchorage, AK, USA, 3–8 May 2010; pp. 1928–1933.

- Hartley, R.I.; Zisserman, A. Multiple View Geometry in Computer Vision, 2nd ed; Cambridge University Press: Cambridge, UK, 2004. [Google Scholar]

- Lowe, D.G. Distinctive image features from scale-invariant keypoints. Int. J. Comput. Vis. 2004, 60, 91–110. [Google Scholar]

- Kinoshita, K. 3D Accuracy Improvement from an Image—Evaluation and Viewpoint Dependency. Proceedings of Australia-Japan Advanced Workshop on Computer Vision, Adelaide, Australia, 9–11 September 2003.

| TX (m) | TY (m) | TZ (m) | α (deg) | β (deg) | γ (deg) | |

|---|---|---|---|---|---|---|

| The proposed method | 0.257 | 0.007 | −0.323 | −1.788 | 1.446 | −88.542 |

| The method in [12] | 0.203 | 0.036 | −0.285 | −1.358 | 1.799 | −88.996 |

© 2013 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/3.0/).

Share and Cite

Gong, X.; Lin, Y.; Liu, J. 3D LIDAR-Camera Extrinsic Calibration Using an Arbitrary Trihedron. Sensors 2013, 13, 1902-1918. https://doi.org/10.3390/s130201902

Gong X, Lin Y, Liu J. 3D LIDAR-Camera Extrinsic Calibration Using an Arbitrary Trihedron. Sensors. 2013; 13(2):1902-1918. https://doi.org/10.3390/s130201902

Chicago/Turabian StyleGong, Xiaojin, Ying Lin, and Jilin Liu. 2013. "3D LIDAR-Camera Extrinsic Calibration Using an Arbitrary Trihedron" Sensors 13, no. 2: 1902-1918. https://doi.org/10.3390/s130201902