Online Least Squares One-Class Support Vector Machines-Based Abnormal Visual Event Detection

Abstract

: The abnormal event detection problem is an important subject in real-time video surveillance. In this paper, we propose a novel online one-class classification algorithm, online least squares one-class support vector machine (online LS-OC-SVM), combined with its sparsified version (sparse online LS-OC-SVM). LS-OC-SVM extracts a hyperplane as an optimal description of training objects in a regularized least squares sense. The online LS-OC-SVM learns a training set with a limited number of samples to provide a basic normal model, then updates the model through remaining data. In the sparse online scheme, the model complexity is controlled by the coherence criterion. The online LS-OC-SVM is adopted to handle the abnormal event detection problem. Each frame of the video is characterized by the covariance matrix descriptor encoding the moving information, then is classified into a normal or an abnormal frame. Experiments are conducted, on a two-dimensional synthetic distribution dataset and a benchmark video surveillance dataset, to demonstrate the promising results of the proposed online LS-OC-SVM method.1. Introduction

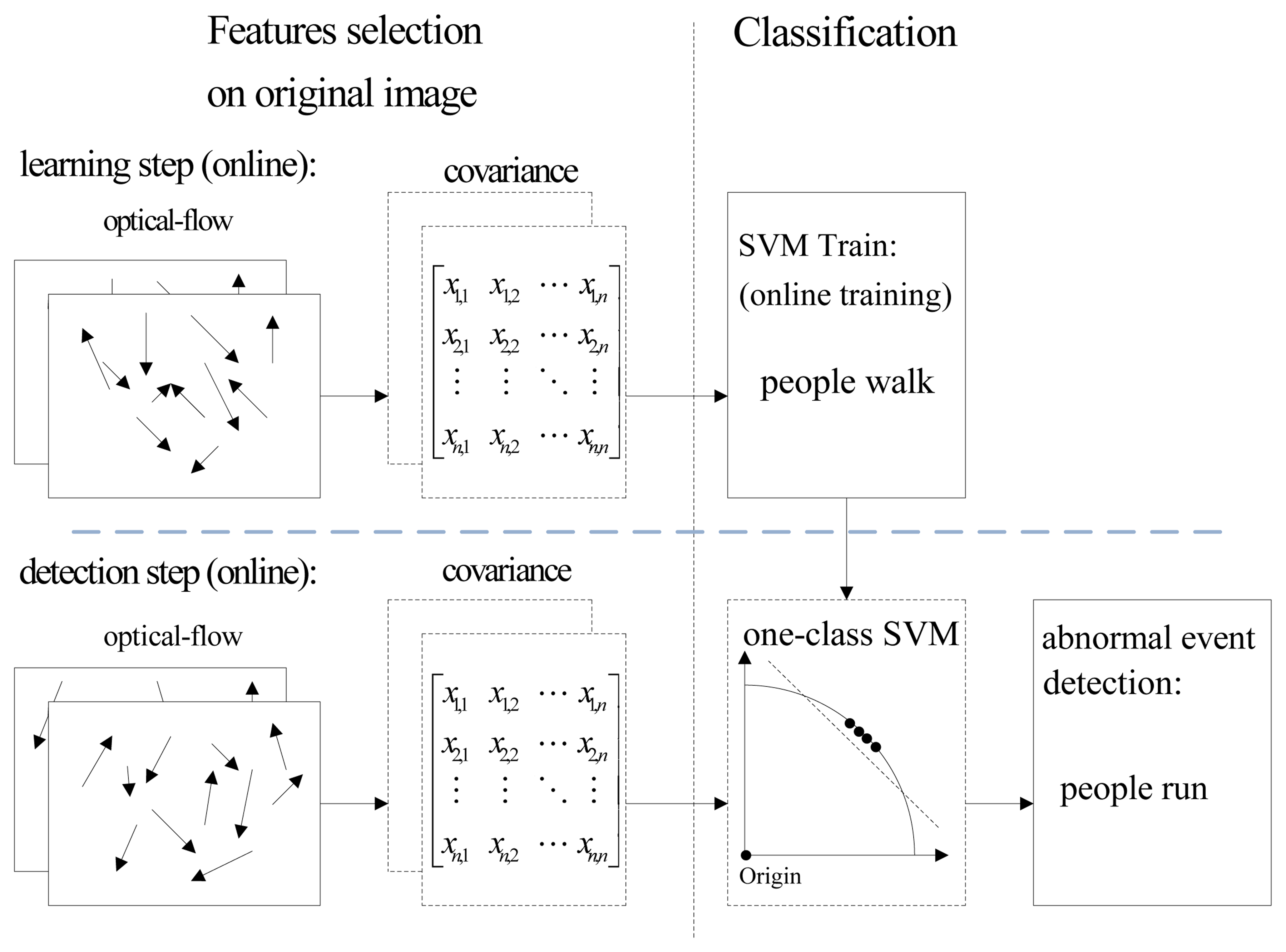

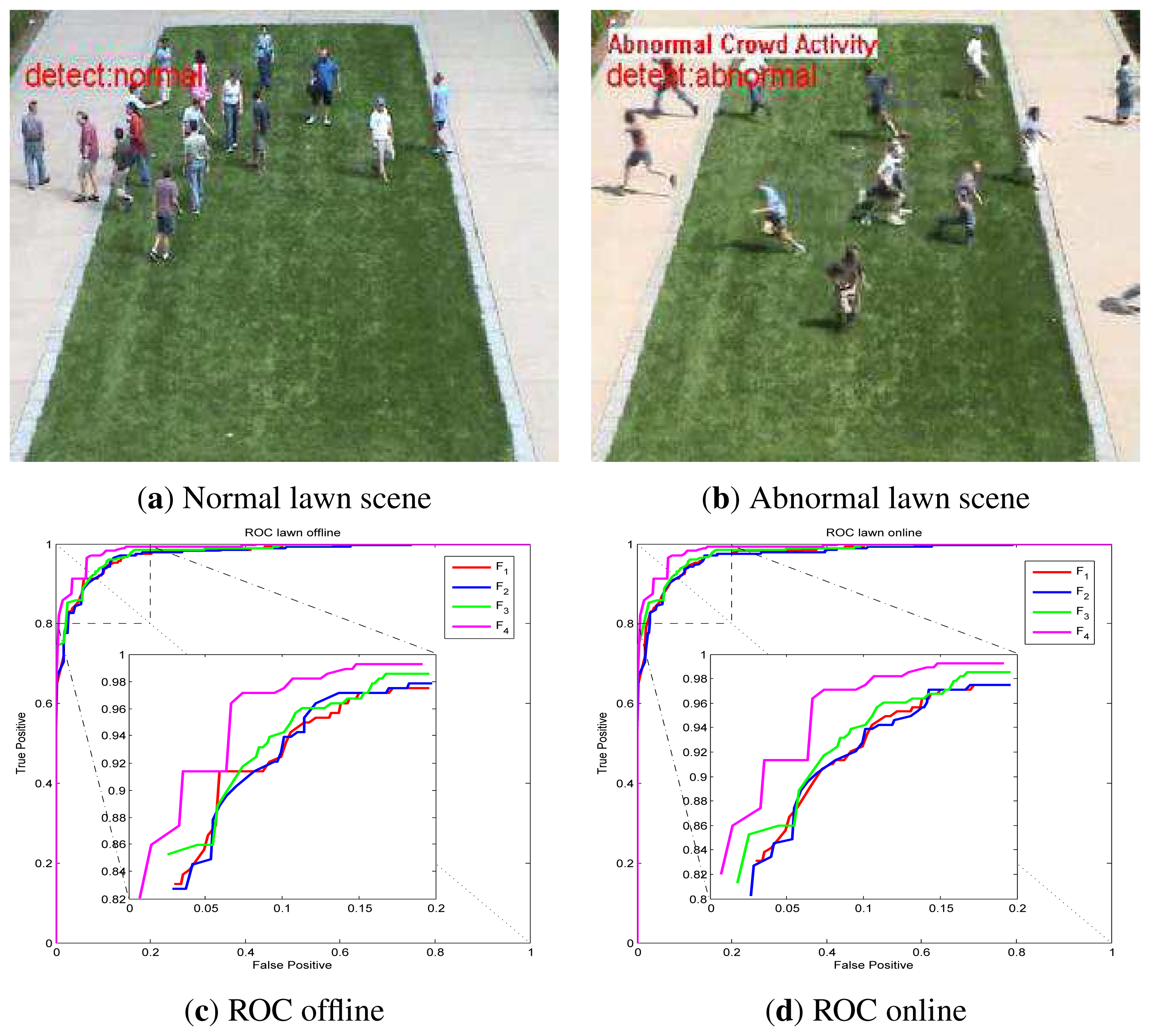

Visual surveillance is one of the major research areas in computer vision. After recording events by a visual sensor, such as a camera, obtaining detailed information of individual or crowd behavior is a challenging object in this area; automatic abnormal event detection is required to provide convenience, safety and an efficient lifestyle for humanity [1]. An abnormal event is defined as behavior deviating from what one expects. For example, a pedestrian panic in a public region: the people are running in the plaza, where people are usually strolling. As shown in Figure 1a, a normal scene is illustrated, the people are walking. In Figure 1b, the people are suddenly running in different directions; this scene is considered abnormal. If a system can detect this event, which might imply a safety risk, security staff can be alerted to take emergency response procedures. The abnormal event detection is a content-based video analysis problem; it includes two technologies: a feature representation of an event model and an abnormal event detection approach.

In [2–4], abnormal detection approaches with behavioral models were introduced. The behavior pattern modeled by adopting optical flow or pixel change history (PCH) was represented by Bayesian models. In [5], the motion feature, including the position, direction and velocity, was modeled by latent Dirichlet allocation. In [6], the abnormal vehicle behavior at intersections was detected via a stochastic graph model based on the Markovian approach. The behavior was labeled as abnormal when the current motion pattern cannot be recognized as any state of the system or a particular sequence of states cannot be parsed with the stochastic model. These works relied on an explicit signal statistical model, and the abnormal events were the ones interpreted as statistical model abrupt changes, maximum likelihood or Bayesian estimation theory [7]. The signal model together with probabilistic assumption techniques are usually extremely powerful insofar as an accurate model ex0ists; these methods are effective in several scenarios. However, there are various situations where a robust and tractable model cannot be obtained. This raises the need for model-free methods.

On the other hand, low-level motion features were employed. In [8], the authors presented an algorithm monitoring optical flow in a set of fixed spatial positions. The similarity of the model was computed to detect abnormal patches. In [9], the irregular behavior of images and videos was detected by comparing the likelihood of patches via a probabilistic graphical model. These methods based on separated patches, benefiting from the partial knowledge of the image, do not exploit the global information of the frame.

Trajectory information is also adopted to detect abnormal events. In [10,11], the authors presented a method for anomalous event detection by means of trajectory analysis. The trajectories were subsampled to a fixed-dimension vector representation and clustered with an one-class support vector machine (SVM). In [12], alarm detection of traffic was performed on the basis of the parameters of the moving objects and their trajectories by using semantic reasoning and ontologies. In [13], vision-based abnormal events for home healthcare systems were detected by using shape feature variation and 3D trajectory. Tracking-based algorithms are likely to fail in crowded scenes.

We consider the model-free approach, which does not require an explicit statistical model. To be accurate, the support vector machine (SVM) classification method is relied on in this paper. Inspired by the satisfactory performance of a covariance feature descriptor representing object in a tracking problem, a covariance descriptor characterizes the moving information of a global frame. In a tracking problem, the covariance descriptor is constructed of the blob intensity or color for template matching. In this paper, covariance encodes the optical flow of the global frame.

The rest of the paper is organized as follows. In Section 2, related works are briefly reviewed. In Section 3, the online least squares one-class support vector machine (online LS-OC-SVM) classification method is originally derived. In Section 4, a covariance matrix descriptor is described to provide feature vectors for the classification algorithm. In Section 5, we propose abnormal detection methods based on the online LS-OC-SVM. In Section 6, we present the results on synthetic data and real-world video scenes. Finally, Section 7 concludes the paper.

2. Related Work

SVM is usually trained in a batch model, i.e., all training data are given a priori and are learned together. If additional training data arrive afterward, the SVM must be retrained from scratch [14]. In the problem of abnormal event detection in video surveillance, the normal sequence for training may last for a long time. It is impractical to train the big training set of normal samples as one batch together. If a new datum is added to a large training set, it will likely have only a minimal effect on the previous decision surface. Resolving the problem from scratch seems computationally wasteful. Considering these two aspects, the online strategy is considered in our work to adapt to the computational and the memory requirement.

Some online learning algorithms for SVM were derived based on analyzing the change of Karush-Kuhn-Tucker (KKT) conditions while updating the classifier. In [15], new arrival data along with the data violating the KKT conditions, and the support vectors from the last iteration, were considered as a new training dataset to train the classifier at the current step. The iteration will be stopped when all data satisfy the KKT conditions. In [16,17], the authors analyzed the change of the KKT conditions when one datum was included into, or removed from, the training set; then, a so-called bookkeeping step was used to compute the new coefficients of the classifier to achieve an online update for a two-class SVM. Useful implementation issues on incremental SVM were presented in [18].

In [7], it was argued that the binary classification algorithm in [16] cannot be directly implemented for a one-class problem. In [7,19], the authors considered the change of the normal model over time and online identified outliers using previous data vectors in a sliding time window. Two one-class SVM classifiers, which preceded and followed the present instant, were compared. A change in the statistics of the time series was likely to occur when the resulting machines were different. The sliding time window approach was considered in [20], with an application on wireless sensor networks. This method, adopting sliding window formulation, is not inherently online, since it requires repeated batch training of new machines.

In [21], an online one-class SVM was presented following the idea of [22]: an exponential window was applied to the data to suit it to an adaptive scenario where the solution was able to track the changes of the data distribution and to forget old patterns. This algorithm is based on the slow-varying assumption.

Some online one-class SVM classification methods were proposed based on support vector data description (SVDD) [23,24], the hypersphere one-class SVM formulation. In [25], an online one-class classification method was proposed, a least squares optimization problem was considered and the model complexity was controlled by the coherence criterion. In [26], a method was proposed to reduce space and time complexities. It reduced the training set size during the training procedure by removing data having a high probability of becoming non-support-vectors.

In order to sidestep the difficulty in the nature of the constrained quadratic optimization problem, we derive an online version of the hyperplane one-class SVM [27] based on the least squares regularization. In the least squares SVM version, one finds the solution by solving a linear system instead of a quadratic programming problem. This advantage comes from the use of equality instead of inequality constraints in the problem formulation [28]. Least squares one-class SVM (LS-OC-SVM) was proposed in [29], without considering the sparsity of the hyperplane representation. It is thus inappropriate to detect abnormal events online. In the following, we shall derive an online version of the least squares one-class SVM, then propose a sparsification representation of the detector.

3. Classification

In this section, we introduce the derivation of the proposed online least square one-class support vector machine (online LS-OC-SVM). In abnormal detection problems, it is supposed that the samples from a positive class are obtainable. A density will only exist if the underlying probability measure possesses an absolutely continuous distribution function, but the general problem of estimating the measure for a large class of sets is not solvable [27,30]. The one-class SVM framework is then suitable to the specificity of the abnormal event detection where only normal scene data are available. Support vector machine (SVM) was initially proposed by Vapnik and Lerner [31], attempting to find a compromise between the minimization of empirical risk and the prevention of overfitting. By applying a kernel trick, SVM can handle nonlinear classification problems [10,32–34]. Based on the theoretical foundation of SVM and the soft-margin trick [35,36], one-class SVM is proposed to address the problem where only one-category (the positive) samples with a few outliers are available. In this section, after a brief review of one-class SVM and least squares one-class SVM on a batch model, an online training algorithm is proposed. A sparsified version of the algorithm will then be provided for further adapting to critical online requirements.

3.1. One-Class SVM

One-class SVM (OC-SVM) aims to determine a suitable region in the input data space, χ, which includes most of the samples drawn from an unknown probability distribution, P. It detects objects that resemble training samples. The hypersphere one-class SVM was proposed in [23,24]. It identified outliers by fitting a hypersphere with a minimal radius. The hyperplane one-class SVM was an extended version of the original SVM to one-class problems [27,36]. It identified outliers by fitting a hyperplane from the origin. In our work, we adopt the hyperplane one-class SVM, which is formulated as a constrained minimization optimization problem:

, maps datum xi from the input space, χ, into the feature space,

, maps datum xi from the input space, χ, into the feature space,  , which allows us to solve a nonlinear classification problem by designing a linear classifier in the feature space. w defines a hyperplane in the feature space separating the projections of training data from the origin. A positive definite kernel function, κ, is defined as κ(x, x′) = <Φ(x), Φ(x′)〉, which implicitly maps the training or testing data, x, into a higher (possibly infinite) dimensional feature space. Introducing the Lagrangian multipliers, αi, the decision function in the input data space, χ, is given by:

, which allows us to solve a nonlinear classification problem by designing a linear classifier in the feature space. w defines a hyperplane in the feature space separating the projections of training data from the origin. A positive definite kernel function, κ, is defined as κ(x, x′) = <Φ(x), Φ(x′)〉, which implicitly maps the training or testing data, x, into a higher (possibly infinite) dimensional feature space. Introducing the Lagrangian multipliers, αi, the decision function in the input data space, χ, is given by:

3.2. Least Squares One-Class SVM

Least squares SVM (LS-SVM) was proposed by Suykens in [37,38]. By using the quadratic loss function, Choi proposed least squares one-class SVM (LS-OC-SVM) [29]. LS-OC-SVM extracts a hyperplane as an optimal description of training objects in a regularized least squares sense. It can be written as the following objective function:

The condition for the slack variables in OC-SVM, ξi ≥ 0, is no longer in need. The variable, ξi, represents an error caused by a training object, xi, with respect to the hyperplane. The definitions of the other parameters in Equation (4) are the same as the ones in OC-SVM. The associated Lagrange is:

Setting derivatives of Equation (5) with respective to primal variables, w, ξi, ρ and αi, to zero, we have the following stationarity conditions:

3.3. Online Least Squares One-Class SVM

In an online learning scheme, the training data continuously arrive. We thus need to tune hyperparameters in the objective function and the hypothesis class in an online manner [17]. Let αn, Kn and In denote the coefficient, Gram matrix and identity matrix at the time step, n, respectively. The parameters of LS-OC-SVM [αn – ρn ]T at the time step, n, could be calculated as:

3.4. Sparse Online Least Squares One-Class SVM

The procedures for calculating the parameters, α and ρ, of LS-OC-SVM in Section 3.3 lose sparseness, due to the quadratic loss function in the objective function Equation (4). This formulation is inappropriate for large-scale data and unsuitable for online learning, as the number of training samples grows infinitely [25]. We propose a sparse solution to provide a robust formulation. A dictionary is adopted to address the sparse approximation problem [40].

Instead of Equation (6), where w is expressed with all available data, we intend to approximate it by adopting a dictionary in a sparse way. Consider a dictionary, x

(x), of a datum, x, to the hyperplane is:

(x), of a datum, x, to the hyperplane is:

After providing these relations with the dictionary, we now discuss the dictionary construction. The coherence criterion is adopted to characterize a dictionary in sparse approximation problems. It provides an elegant model reduction criterion with a less computationally-demanding procedure [25,40,41]. The coherence of a dictionary is defined as the largest correlation between the elements in the dictionary, i.e.,

In the online case, the coherence between a new datum and the current dictionary is calculated by:

. Presetting a threshold, μ0, the new arrival sample, xt, at the time step, t, is tested with the coherence criterion to judge whether the dictionary remains unchanged or is incremented by including the new element. For n training samples, the subset, which includes m (1 ≤ m ≪ n) samples, is considered the initial dictionary. Then, each remaining sample is tested with Equation (32) to determine the relation between itself and the previous dictionary. If ϵt ≤ μ0, it will be included into the dictionary. Concretely, the algorithm is performed with two cases described herein below.

. Presetting a threshold, μ0, the new arrival sample, xt, at the time step, t, is tested with the coherence criterion to judge whether the dictionary remains unchanged or is incremented by including the new element. For n training samples, the subset, which includes m (1 ≤ m ≪ n) samples, is considered the initial dictionary. Then, each remaining sample is tested with Equation (32) to determine the relation between itself and the previous dictionary. If ϵt ≤ μ0, it will be included into the dictionary. Concretely, the algorithm is performed with two cases described herein below.First case:ϵt > μ0

In this case, at time step n + 1, the new data, xn+1, is not included into the dictionary. The Gram matrix, K

, with the entries, κ(xi, xj), i, j ∈ {1, 2, …, D}, is unchanged. When a new sample, x, arrives, we need to compute:

where at time step n + 1, κ is the column vector with entries κ(xn+1,xwj), j ∈ {1, 2, …, D}. K(x) is the matrix with the (i, j)-th entry κ(xi, xwj), i ∈ {1, 2, …, n}, j ∈ {1, 2, …, D}.

Second case: ϵt ≤ μ0

In this case, the new data, xn+1, is added into the dictionary, x

. Then, the Gram matrix should be changed by:

is the Gram matrix of the dictionary, including the new arrival dictionary sample, xn+1, and K

is the Gram matrix of the dictionary, including the new arrival dictionary sample, xn+1, and K  is the Gram matrix of the dictionary at the last time step, n. Let x

is the Gram matrix of the dictionary at the last time step, n. Let x  = {xw1, xw2, …, xwD } denote the dictionary at time step n; d is the column vector with entries dj= κ(x,xwj), j ∈ {1, 2, …, D}, and d = κ(xn+1, xn+1). By adopting the matrix inverse identity Equation (16), we have:

= {xw1, xw2, …, xwD } denote the dictionary at time step n; d is the column vector with entries dj= κ(x,xwj), j ∈ {1, 2, …, D}, and d = κ(xn+1, xn+1). By adopting the matrix inverse identity Equation (16), we have:

(x) and also

should be updated. Let the S denote the updated

at time step n + 1; we have:

(x) and also

should be updated. Let the S denote the updated

at time step n + 1; we have:

with proper choices of matrices A, U, C and V, such that U and V should be chosen as two vectors, and A should be chosen as a scaler. Thus, the inverse, (C−1 + V A−1U), is a scaler; Equation (39) can be calculated very efficiently. For instance, for computing the inverse, including the term, (K

Once knowing S, using Equation (33) to add the new κ with entries κ(xn+1, xwj), j ∈ {1, 2, …,D, D + 1}, xwj is an element of the dictionary.

4. Covariance Descriptor of Frame Behavior

The optical flow is chosen as the basic low-level feature to represent the movement direction and amplitude. We apply the Horn-Schunck (HS) method to compute optical flow in this paper. The optical flow can provide important information about the spatial arrangement of the object and the change rate of this arrangement [42]. The optical flow of a gray image is formulated as the minimization of the following global energy functional:

The covariance feature descriptor was originally proposed by Tuzel [43] for pattern matching in a target tracking problem. Owing to its good performance, the covariance descriptor encoding the optical flow is introduced to represent the global movement of the frame. A feature is defined as:

If proper parameters are given, classical kernels, such as Gaussian, polynomial and sigmoidal kernels, have similar performances [44]. In our work, the Gaussian kernel is used. The covariance matrix is an element in the Lie group; the Gaussian kernel in Euclidean spaces is not suitable. The Gaussian kernel in the Lie group is defined as [45,46]:

5. Abnormal Event Detection

In an abnormal event detection problem, it is assumed that a set of training frames, {I1, I2,…, In} (the positive class), describing the normal behavior is obtained. The abnormal detection strategies relative to the online algorithms proposed in Section 3.3 and Section 3.4 are introduced below.

5.1. Online LS-OC-SVM Strategy

The general architecture of the abnormal event detection method via online least squares one-class SVM (online LS-OC-SVM) proposed in Section 3.3 is summarized in Algorithm 1; the flowchart is shown in Figure 3 and explained below.

| Algorithm 1: Visual abnormal event detection via online least squares one-class support vector machine (LS-OC-SVM) and sparse online LS-OC-SVM. |

| Require |

| n training frames and the corresponding optical flow . |

Compute the covariance matrix of each frame.

|

Step 1: The first step consists of calculating the covariance matrix descriptor of the training frames. The features could be chosen as any form shown in Table 1. This step can be generalized as:

where {OP1,OP2,…, OPn} are the image optical flows of the 1st to n – th frames; {C1,C2,…, Cn} are the covariance matrix descriptors.

Step 2: The second step is applying LS-OC-SVM on a small subset of the training samples to calculate the coefficient parameters, α and ρ, in Equation (11). Consider a subset , 1 ≤ m ≪ n of data selected from the training set . Without loss of generality, assume that the first m frames are chosen. These m samples are trained offline. This step can be described in the following equation:

where [K ] and [α − ρ ]T are defined in Equation (11).Step 3: After learning the first m samples, the coefficient matrices, K and [α − ρ ]T, are obtained. The online LS-OC-SVM method (Section 3.3) is applied to learn the remaining n – m samples {Cm+1,Cm+2…Cn}. This step can be expressed as:

Step 4: Based on the coefficient matrix, [α − ρ ]T, the distance of the training samples and the incoming test sample, Cn+l, with respect to the decision plane is computed. By comparing the distances of the samples, an abnormal event is detected:

where Cn+l is the covariance matrix descriptor of the (n + l) – th frame needed to be classified, and Ci is the sample of the training data. “1” corresponds to an abnormal frame; “ − 1” corresponds to a normal frame. Tdis is the threshold of the distance, it is the maximum distance of the training samples to the hyperplane.

5.2. Sparse Online LS-OC-SVM Strategy

The abnormal event detection via sparse online least squares one-class SVM (sparse online LS-OC-SVM) is introduced below. A subset of the samples is chosen to form the dictionary, C

Step 2-sparse: The second step is applying LS-OC-SVM to train the initial dictionary offline. The first m samples are the initial dictionary denoted as C

Step 3-sparse: After learning the initial dictionary, C

is the dictionary and Ck is a new incoming remaining sample in the training dataset. According to the coherence criterion introduced in Section 3.4, if the new sample, Ck, satisfies the dictionary updated condition, it will be included into the dictionary, C

is the dictionary and Ck is a new incoming remaining sample in the training dataset. According to the coherence criterion introduced in Section 3.4, if the new sample, Ck, satisfies the dictionary updated condition, it will be included into the dictionary, C  .

.6. Abnormal Event Detection Results

This section presents the results of experiments conducted to illustrate the performance of the two proposed classification algorithms, online least square one-class SVM (online LS-OC-SVM) and sparse online least square one-class SVM (sparse online LS-OC-SVM). The two-dimensional synthetic distribution dataset and the University of Minnesota (UMN) [47] dataset are used.

6.1. Synthetic Dataset via Online LS-OC-SVM and Sparse Online LS-OC-SVM

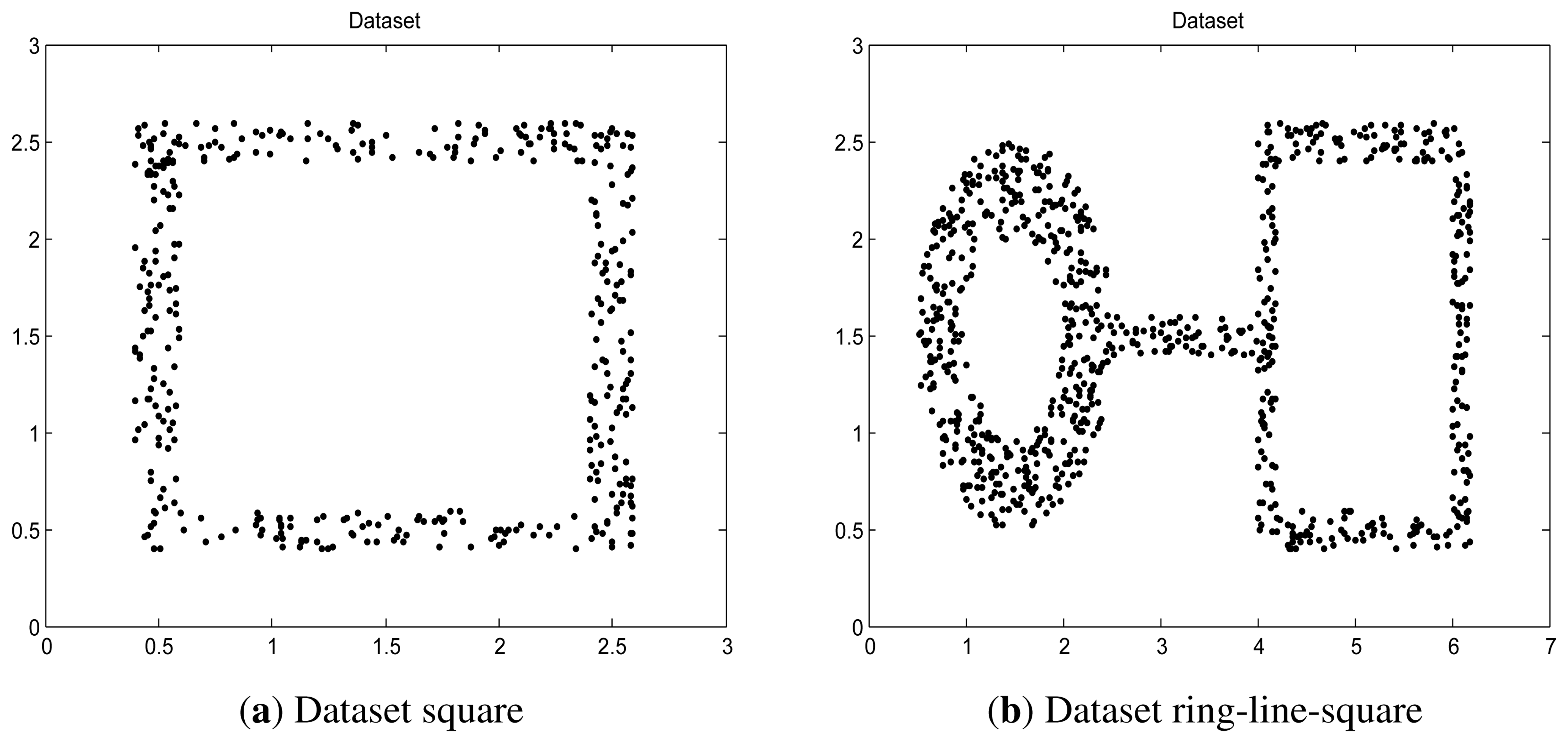

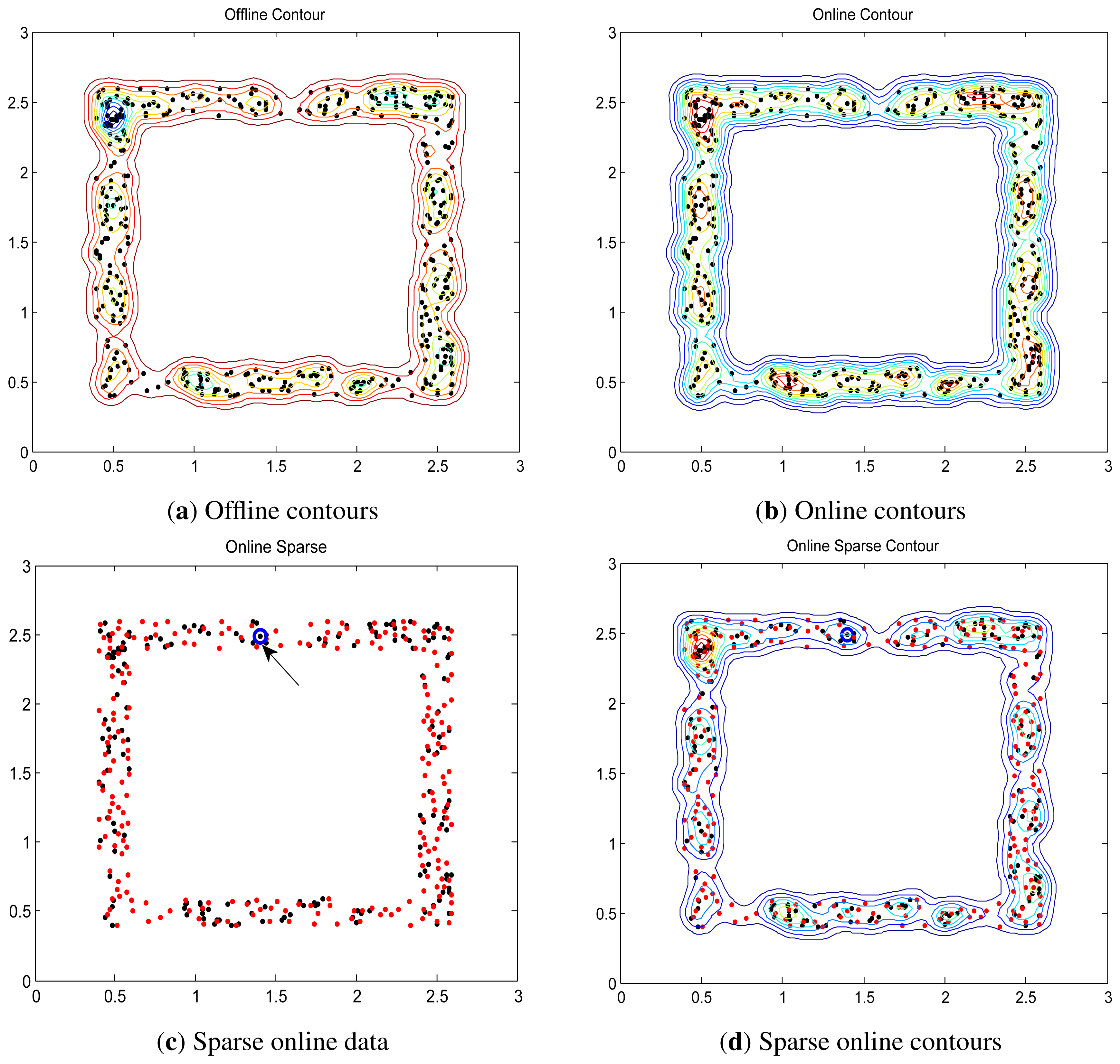

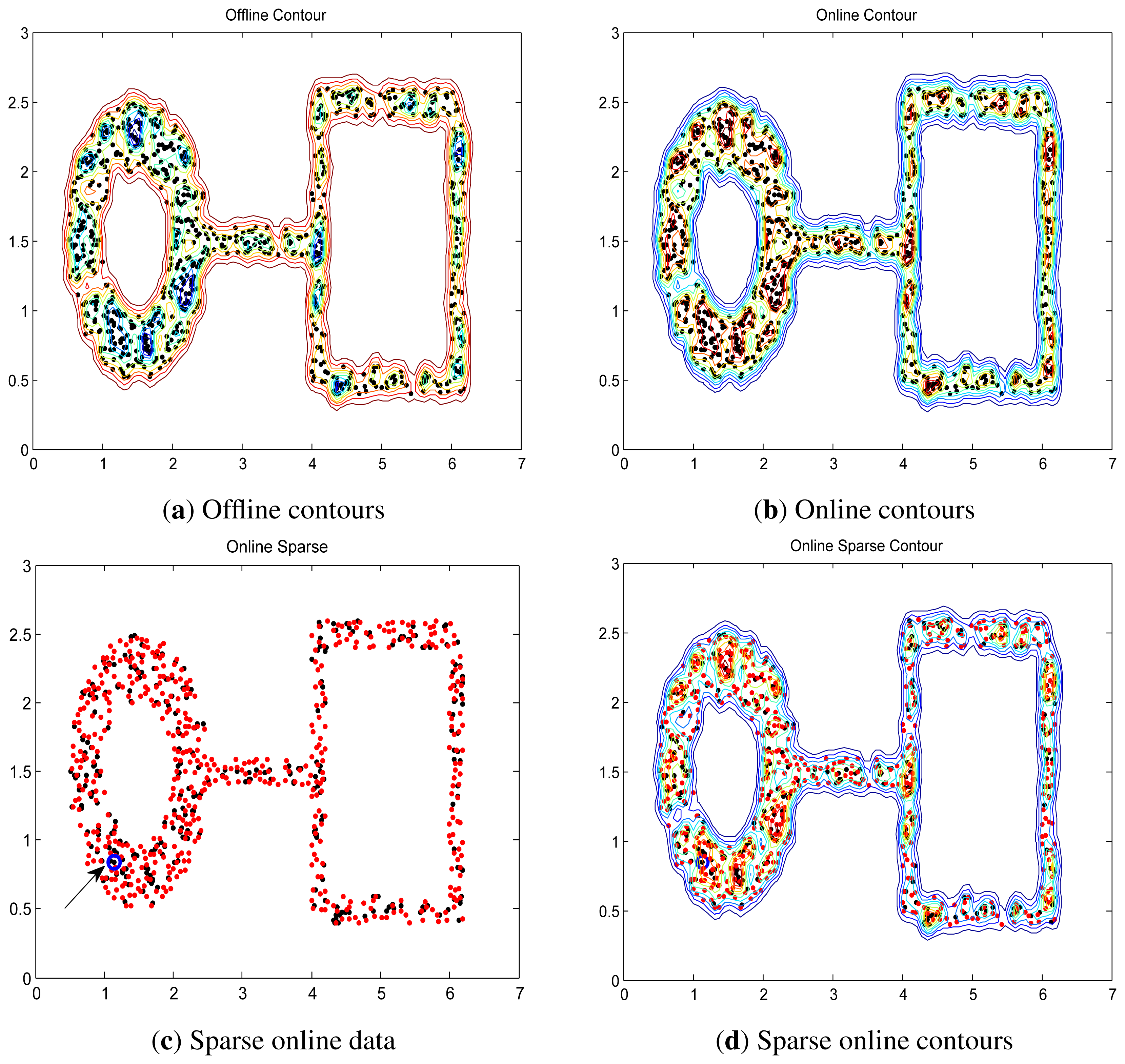

Two synthetic data, “square” and “ring-line-square” [48], are used. The “square” consists of four lines, 2.2 in length and 0.2 in width. In the area of these lines, 400 points were randomly dispersed with a uniform distribution. The “ring-line-square” distribution is composed of three parts: a ring with an inner diameter of 1.0 and an outer diameter of 2.0, a line of 1.6 in length and 0.2 in width, and a square the same as dataset “square” introduced above. 850 points are randomly dispersed with a uniform distribution. These two data are shown in Figure 4.

The first sample is used for initializing the online LS-OC-SVM proposed in Section 3.3; the 399 remaining samples in “square” and 849 remaining samples in “ring-ling-square” are learned in the online manner.

Via the sparse online LS-OC-SVM method proposed in Section 3.4, the first sample is trained offline, and this sample is considered the initial dictionary. Then, each arrival sample in 399 remaining samples in “square” and 849 remaining samples in “ring-ling-square” are checked by the coherence criterion to determine whether the dictionary should be retained or updated by including the new element.

The distances are shown in contours illustrating the boundary. The contours of “square” and “ring-line-square” are shown in Figures 5 and 6, respectively. Gaussian kernel was used in these two data, with bandwidth σ = 0.065. The preset threshold of the coherence criterion is μ0 = 0.08. The detection results obtained by these two online training algorithms are the same as the ones when training data were learned in a batch model.

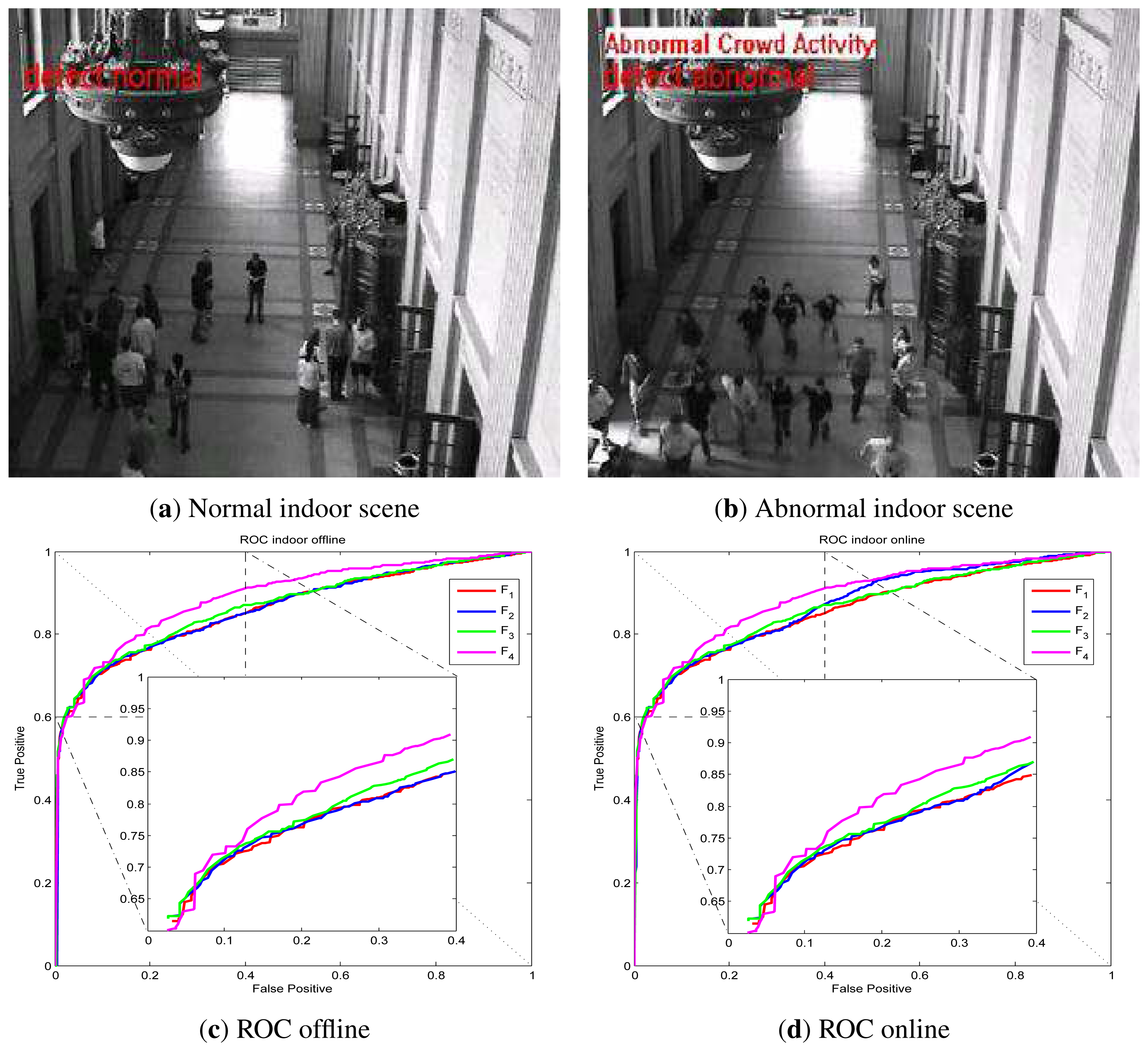

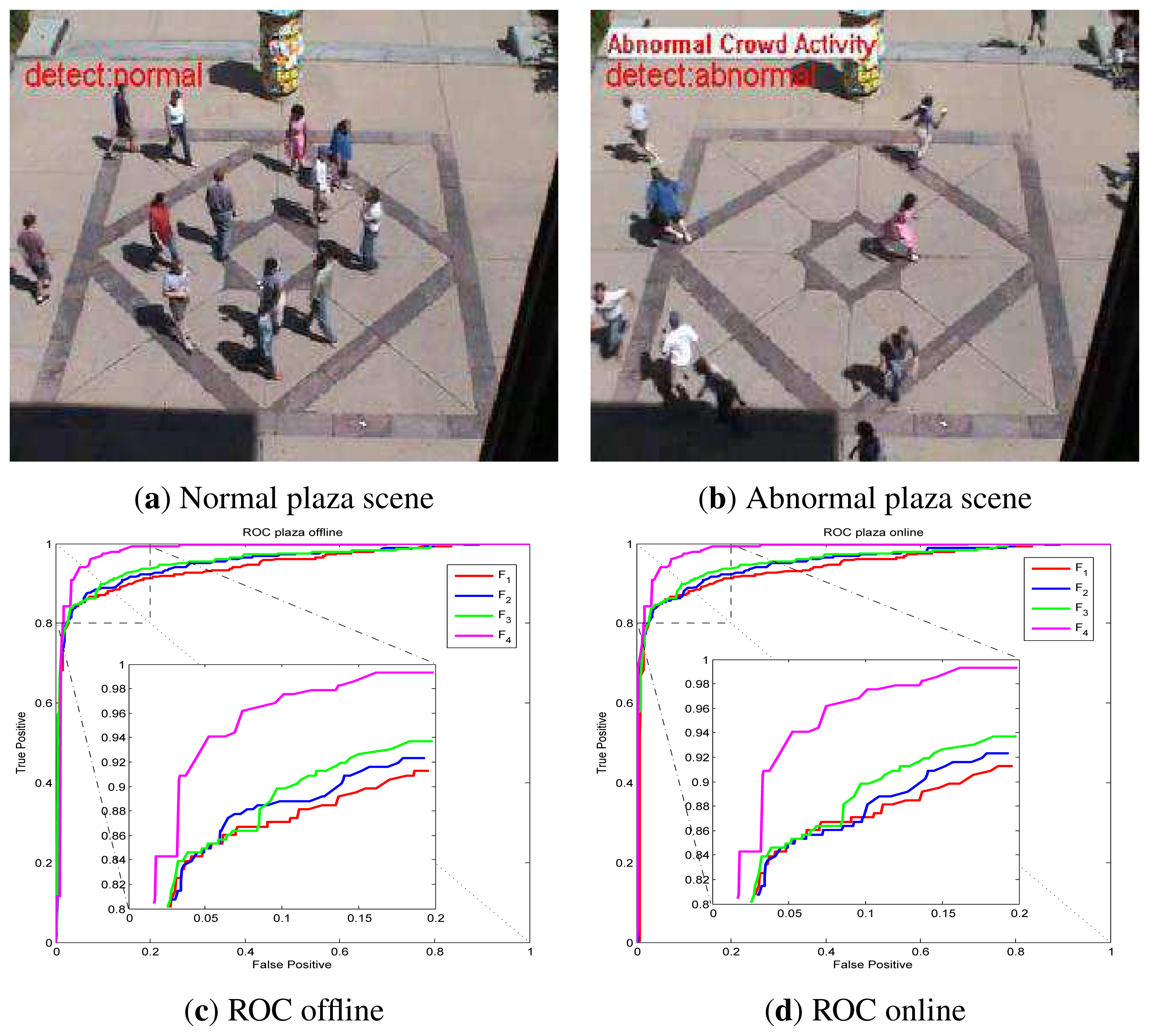

6.2. Abnormal Visual Event Detection via Online LS-OC-SVM

UMN dataset detection results via online LS-OC-SVM proposed in Section 3.3 are shown below. The UMN dataset consists of eleven sequences of crowded panic escape events, which are recorded in a lawn, an indoor and a plaza scene. A frame where the people are walking in different directions is considered as a normal sample for training or for normal testing. A scene where the people are running is taken as an abnormal sample for testing. The detection results of the lawn scene, the indoor scene and the plaza scene are shown in Figures 7, Figure 8 and Figure 9, respectively. A Gaussian kernel for the covariance matrix in the Lie group is used. Various values of the variance, σ, in the Gaussian function and the penalty factor, C, are chosen to form the receiver operating characteristic (ROC) curve. In the indoor scene, time lags of the frame labels lead to the lower area under the ROC curve (AUC) value. In the last few frames, labeled as abnormal of abnormal sequences, there are no people, while, in the training samples, there are no people in the upper half of the image. The covariance of the training frame is similar to the covariance of the abnormal frame without people. Our covariance feature descriptor-based classification method cannot distinguish between these two situations. However, this issue can be resolved by utilizing the foreground information. For example, if there are no moving objects in the frame, this frame is immediately classified as abnormal. The results of these three scenes show that the covariance descriptor can distinguish between normal and abnormal events. The performance of online LS-OC-SVM is almost the same as that of the offline method.

6.3. Abnormal Visual Event Detection via Sparse Online LS-OC-SVM

UMN dataset abnormal event detection results via sparse online LS-OC-SVM proposed in Section 3.4 are presented. Taking the lawn scene as an example, the first normal covariance matrix descriptor from the training samples is included into the dictionary firstly; then, the remaining training covariance descriptors are learned online by the sparse online LS-OC-SVM method. The ROC curve of the detection results of the lawn scene, the indoor scene and the plaza scene are shown in Figure l0a–c, respectively.

The resulting performances when all training samples are learned offline via one-class SVM (OC-SVM), learned via least squares one-class SVM (LS-OC-SVM), learned via online least squares one-class SVM (online LS-OC-SVM) and learned via sparse online least squares one-class SVM (sparse LS-OC-SVM), are shown in Table 2. The LS-OC-SVM algorithm obtains better performance than the original OC-SVM. The performances of online and sparse online strategy results are similar to the resulting performances when all training samples are learned offline. The sparse online strategy can be computed efficiently and can adapt to the memory requirement.

The resulting performances of the covariance matrix descriptor-based online least squares one-class SVM method, and of state-of-the-art methods, are shown in Table 3. The covariance matrix-based online abnormal frame detection method obtains competitive performance. In generally, our sparse online LS-OC-SVM method is better than others, except sparse reconstruction cost (SRC) [49]. In that paper, multi-scale histogram of optical flow (HOF) was taken as a feature and a testing sample was classified by its sparse reconstruction cost, through a weighted linear reconstruction of the over-complete normal basis set. However, the computation of the HOF takes more time than the computation of covariance. By adopting the integral image [43], the covariance matrix descriptor of the subimage can be computed conveniently. The covariance descriptor can appropriately be used to analyze partial image movement. In [49], the whole training dataset was saved in the memory in advance; then, the dictionary was chosen as an optimal subset for reconstructing. Our sparse online LS-OC-SVM strategy enables one to train the classifier with sequential inputs. This property makes our proposed method extremely suitable to handle large volumes of training data, while the method in [49] fails to work due to lack of memory.

7. Conclusions

In this paper, we proposed a method to detect abnormal events via online least squares one-class SVM (online LS-OC-SVM) and sparse online least squares one-class SVM (sparse online LS-OC-SVM). Online LS-OC-SVM learns training samples sequentially; sparse online LS-OC-SVM incorporates the coherence criterion to form the dictionary for a sparse representation of the detector. The covariance matrix descriptor encodes the movement feature of the frame to distinguish between normal and abnormal events. The proposed detection algorithms have been tested on a synthetic dataset and a real-world video dataset yielding successful results in detecting abnormal events.

Acknowledgments

This work is partially supported by the China Scholarship Council of Chinese Government and the SURECAP CPER project (fonction de surveillance dans les réseaux de capteurs sans fil via contrat de plan Etat-Région) and the Platform CAPSEC (capteurs pour la sécurité) funded by Région Champagne-Ardenne and FEDER (fonds européen de développement régional).

Conflicts of Interest

The authors declare no conflicts of interest.

References

- Suriani, N.S.; Hussain, A.; Zulkifley, M.A. Sudden event recognition: A survey. Sensors 2013, 13, 9966–9998. [Google Scholar]

- Kosmopoulos, D.; Chatzis, S.P. Robust visual behavior recognition. IEEE Signal Process. Mag. 2010, 27, 34–45. [Google Scholar]

- Utasi, Á.; Czúni, L. Detection of unusual optical flow patterns by multilevel hidden Markov models. Opt. Eng. 2010. [Google Scholar] [CrossRef]

- Xiang, T.; Gong, S. Incremental and adaptive abnormal behaviour detection. Comput. Vis. Image Underst. 2008, 111, 59–73. [Google Scholar]

- Kwak, S.; Byun, H. Detection of dominant flow and abnormal events in surveillance video. Opt. Eng. 2011. [Google Scholar] [CrossRef]

- Jiménez-Hernández, H.; González-Barbosa, J.J.; Garcia-Ramírez, T. Detecting abnormal vehicular dynamics at intersections based on an unsupervised learning approach and a stochastic model. Sensors 2010, 10, 7576–7601. [Google Scholar]

- Davy, M.; Desobry, F.; Gretton, A.; Doncarli, C. An online support vector machine for abnormal events detection. Signal Process. 2006, 86, 2009–2025. [Google Scholar]

- Adam, A.; Rivlin, E.; Shimshoni, I.; Reinitz, D. Robust real-time unusual event detection using multiple fixed-location monitors. IEEE Trans. Pattern Anal. Mach. Intell. 2008, 30, 555–560. [Google Scholar]

- Boiman, O.; Irani, M. Detecting irregularities in images and in video. Int. J. Comput. Vis. 2007, 74, 17–31. [Google Scholar]

- Piciarelli, C.; Micheloni, C.; Foresti, G.L. Trajectory-based anomalous event detection. IEEE Trans. Circuits Syst. Video Technol. 2008, 18, 1544–1554. [Google Scholar]

- Piciarelli, C.; Foresti, G.L. On-line trajectory clustering for anomalous events detection. Pattern Recognit. Lett. 2006, 27, 1835–1842. [Google Scholar]

- Calavia, L.; Baladrón, C.; Aguiar, J.M.; Carro, B.; Sánchez-Esguevillas, A. A semantic autonomous video surveillance system for dense camera networks in smart cities. Sensors 2012, 12, 10407–10429. [Google Scholar]

- Lee, Y.S.; Chung, W.Y. Visual sensor based abnormal event detection with moving shadow removal in home healthcare applications. Sensors 2012, 12, 573–584. [Google Scholar]

- Shilton, A.; Palaniswami, M.; Ralph, D.; Tsoi, A.C. Incremental training of support vector machines. IEEE Trans. Neural Netw. 2005, 16, 114–131. [Google Scholar]

- Lau, K.; Wu, Q. Online training of support vector classifier. Pattern Recognit. 2003, 36, 1913–1920. [Google Scholar]

- Cauwenberghs, G.; Poggio, T. Incremental and decremental support vector machine learning. Adv. Neural Inf. Process. Syst. 2000, 1, 409–415. [Google Scholar]

- Diehl, C.; Cauwenberghs, G. SVM Incremental Learning, Adaptation and Optimization. Proceedings of International Joint Conference on Neural Networks (IJCNN), Portland, OR, USA, 20–24 July 2003; pp. 2685–2690.

- Laskov, P.; Gehl, C.; Krüger, S.; Müller, K.R. Incremental support vector learning: Analysis, implementation and applications. J. Mach. Learn. Res. 2006. [Google Scholar]

- Desobry, F.; Davy, M.; Doncarli, C. An online kernel change detection algorithm. IEEE Trans. Signal Process. 2005. [Google Scholar]

- Zhang, Y.; Meratnia, N.; Havinga, P. Adaptive and Online One-Class Support Vector Machine-Based Outlier Detection Techniques for Wireless Sensor Networks. Proceedings of International Conference on Advanced Information Networking and Applications Workshops (WAINA), Bradford, UK, 26–29 May 2009; pp. 990–995.

- Gómez-Verdejo, V.; Arenas-García, J.; Lazaro-Gredilla, M.; Navia-Vazquez, A. Adaptive one-class support vector machine. IEEE Trans. Signal Process. 2011. [Google Scholar]

- Kivinen, J.; Smola, A.J.; Williamson, R.C. Online learning with kernels. IEEE Trans. Signal Process. 2004, 52, 2165–2176. [Google Scholar]

- Tax, D. One-Class Classification. Ph.D. Thesis, Delft University of Technology, Delft, The Netherlands, 2001. [Google Scholar]

- Tax, D.M.; Duin, R.P. Support vector data description. Mach. Learn. 2004, 54, 45–66. [Google Scholar]

- Noumir, Z.; Honeine, P.; Richard, C. Online One-Class Machines Based on the Coherence Criterion. Proceedings of the 20th European Signal Processing Conference (EUSIPCO), Bucharest, Romania, 27–31 August 2012; pp. 664–668.

- Kim, P.J.; Chang, H.J.; Choi, J.Y. Fast Incremental Learning for One-Class Support Vector Classifier Using Sample Margin Information. Proceedings of the 19th International Conference on Pattern Recognition (ICPR), Tampa, FL, USA, 8–11 December 2008; pp. 1–4.

- Schölkopf, B.; Platt, J.C.; Shawe-Taylor, J.; Smola, A.J.; Williamson, R.C. Estimating the support of a high-dimensional distribution. Neural Comput. 2001, 13, 1443–1471. [Google Scholar]

- Suykens, J.; Lukas, L.; van Dooren, P.; de Moor, B.; Vandewalle, J. Least Squares Support Vector Machine Classifiers: A Large Scale Algorithm. Proceedings of European Conference on Circuit Theory and Design (ECCTD), Stresa, Italy, 29 August-2 September 1999; pp. 839–842.

- Choi, Y.S. Least squares one-class support vector machine. Pattern Recognit. Lett. 2009, 30, 1236–1240. [Google Scholar]

- Vapnik, V.N. Statistical Learning Theory; Wiley: New York, NY, USA, 1998. [Google Scholar]

- Vapnik, V.N.; Lerner, A. Pattern recognition using generalized portrait method. Autom. Remote Control 1963, 24, 774–780. [Google Scholar]

- Boser, B.E.; Guyon, I.M.; Vapnik, V.N. A Training Algorithm for Optimal Margin Classifiers. Proceedings of ACM the 5th Annual Workshop on Computational Learning Theory (COLT), Pittsburgh, PA, USA, 27–29 July 1992; pp. 144–152.

- Cristianini, N.; Shawe-Taylor, J. An Introduction to Support Vector Machines and other Kernel-Based Learning Methods; Cambridge University Press: Chambridge, UK, 2000. [Google Scholar]

- Canu, S.; Grandvalet, Y.; Guigue, V.; Rakotomamonjy, A. SVM and Kernel Methods Matlab Toolbox, updated on 20 February 2008; A SVM Toolbox fully written in Matlab, even the QP solver. In Perception Systemes et Information; INSA de Rouen: Rouen, France, 2008. [Google Scholar]

- Scholkopf, B.; Smola, A.J.; Williamson, R.C.; Bartlett, P.L. New support vector algorithms. Neural Comput. 2000, 12, 1207–1245. [Google Scholar]

- Ben-Hur, A.; Horn, D.; Siegelmann, H.T.; Vapnik, V. Support vector clustering. J. Mach. Learn. Res. 2002, 2, 125–137. [Google Scholar]

- Suykens, J.A.; Vandewalle, J. Least squares support vector machine classifiers. Neural Process. Lett. 1999, 9, 293–300. [Google Scholar]

- Suykens, J.; Gestel, T.V.; Brabanter, J.D.; Moor, B.D.; Vandewalle, J. Least Squares Support Vector Machines; World Scientific: Singapore, 2002. [Google Scholar]

- Honeine, P. Online kernel principal component analysis: A reduced-order model. IEEE Trans. Pattern Anal. Mack Intell. 2012, 34, 1814–1826. [Google Scholar]

- Tropp, J.A. Greed is good: Algorithmic results for sparse approximation. IEEE Trans. Inf. Theory 2004, 50, 2231–2242. [Google Scholar]

- Richard, C.; Bermudez, J.C.M.; Honeine, P. Online prediction of time series data with kernels. IEEE Trans. Signal Process. 2009, 57, 1058–1067. [Google Scholar]

- Horn, B.K.; Schunck, B.G. Determining optical flow. Artif. Intell. 1981, 17, 185–203. [Google Scholar]

- Tuzel, O.; Porikli, F.; Meer, P. Region covariance: A fast descriptor for detection and classification. Lect. Notes Comput. Sci. 2006, 3952, 589–600. [Google Scholar]

- Schölkopf, B.; Smola, A.J. Learning with Kernels: Support Vector Machines, Regularization, Optimization and Beyond; MIT Press: Cambridge, MA, USA, 2002. [Google Scholar]

- Hall, B. Lie Groups, Lie Algebras, and Representations: An Elementary Introduction; Springer: Berlin, Germany, 2003. [Google Scholar]

- Cong, G.; Fanzhang, L.; Cheng, S. Research on Lie Group kernel learning algorithm. J. Front. Comput. Sci. Technol. 2012, 6, 1026–1038. [Google Scholar]

- Detection of Events—Detection of Unusual Crowd Activity. Available online: http://mha.cs.umn.edu/Movies/Crowd-Activity-All.avi (accessed on 12 Dcember 2013).

- Hoffmann, H. Kernel PCA for novelty detection. Pattern Recognit. 2007, 40, 863–874. [Google Scholar]

- Cong, Y.; Yuan, J.; Liu, J. Sparse Reconstruction Cost for Abnormal Event Detection. Proceedings of IEEE Computer Vision and Pattern Recognition (CVPR), Providence, RI, USA, 20–25 June 2011; pp. 3449–3456.

- Mehran, R.; Oyama, A.; Shah, M. Abnormal Crowd Behavior Detection Using Social Force Model. Proceedings of IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Miami, FL, USA, 20–25 June 2009; pp. 935–942.

- Shi, Y.; Gao, Y.; Wang, R. Real-Time Abnormal Event Detection in Complicated Scenes. Proceedings of the 20th International Conference on Pattern Recognition (ICPR), Istanbul, Turkey, 23–26 August 2010; pp. 3653–3656.

| Feature VectorF | |

|---|---|

| F1(6 × 6) | [y x uυuxuy ] |

| F2(6 × 6) | [yxuυ υxυy ] |

| F3(8 × 8) | [y xuυuxuyυxυy ] |

| F4(12 × 12) | [y xuυuxuyυxυyuxxuyyυxxυyy ] |

| Features | Area under ROC | ||

| lawn | indoor | plaza | |

| offline OC-SVM | |||

| F1(6 × 6) | 0.9474 | 0.8381 | 0.9148 |

| F2(6 × 6) | 0.9583 | 0.8410 | 0.9192 |

| F3(8 × 8) | 0.9656 | 0.8483 | 0.9367 |

| F4(12 × 12) | 0.9798 | 0.8744 | 0.9782 |

| offline LS-OC-SVM | |||

| F1(6 × 6) | 0.9755 | 0.8605 | 0.9422 |

| F2(6 × 6) | 0.9738 | 0.8603 | 0.9489 |

| F3(8 × 8) | 0.9788 | 0.8662 | 0.9538 |

| F4(12 × 12) | 0.9874 | 0.8900 | 0.9800 |

| Online LS-OC-SVM | |||

| F1(6 × 6) | 0.9755 | 0.8616 | 0.9403 |

| F2(6 × 6) | 0.9720 | 0.8730 | 0.9517 |

| F3(8 × 8) | 0.9795 | 0.8670 | 0.9563 |

| F4(12 × 12) | 0.9874 | 0.8904 | 0.9839 |

| Sparse Online LS-OC-SV | M | ||

| F1(6 × 6) | 0.8840 | 0.8077 | 0.9245 |

| F2(6 × 6) | 0.9435 | 0.8886 | 0.9515 |

| F3(8 × 8) | 0.9269 | 0.8266 | 0.9428 |

| F4(12 × 12) | 0.9510 | 0.8223 | 0.9501 |

| Method | Area under ROC | ||

|---|---|---|---|

| lawn | indoor | plaza | |

| Social Force [50] | 0.96 | ||

| Optical Flow [50] | 0.84 | ||

| NN [49] | 0.93 | ||

| SRC [49] | 0.995 | 0.975 | 0.964 |

| STCOG [51] | 0.9362 | 0.7759 | 0.9661 |

| LS-SVM (Ours) | 0.9874 | 0.8900 | 0.9800 |

| Online (Ours) | 0.9874 | 0.8904 | 0.9839 |

| Sparse Online(Ours) | 0.9510 | 0.8886 | 0.9515 |

© 2013 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/3.0/).

Share and Cite

Wang, T.; Chen, J.; Zhou, Y.; Snoussi, H. Online Least Squares One-Class Support Vector Machines-Based Abnormal Visual Event Detection. Sensors 2013, 13, 17130-17155. https://doi.org/10.3390/s131217130

Wang T, Chen J, Zhou Y, Snoussi H. Online Least Squares One-Class Support Vector Machines-Based Abnormal Visual Event Detection. Sensors. 2013; 13(12):17130-17155. https://doi.org/10.3390/s131217130

Chicago/Turabian StyleWang, Tian, Jie Chen, Yi Zhou, and Hichem Snoussi. 2013. "Online Least Squares One-Class Support Vector Machines-Based Abnormal Visual Event Detection" Sensors 13, no. 12: 17130-17155. https://doi.org/10.3390/s131217130